4.1. Dataset

This study uses the Microsoft Azure Telemetry Dataset as the primary data source. The dataset consists of multidimensional operational logs and performance monitoring metrics collected from a cloud service platform. It covers components such as virtual machines, container services, load balancers, and database instances. The metrics include CPU utilization, memory usage, disk I/O, network latency, throughput, and request error rate. They are sampled continuously at one-minute intervals, allowing fine-grained observation of the platform's dynamic behavior. Unlike traditional static logs, the dataset keeps different components temporally synchronized through a unified timestamp alignment mechanism, which provides a reliable foundation for modeling cross-node dependencies and analyzing anomaly propagation.
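The unified one-minute timestamp alignment can be illustrated with a minimal sketch. The section does not describe the alignment mechanism itself, so the hypothetical helper `align_to_minute_grid` below simply assumes raw samples arrive as `(unix_seconds, value)` pairs, averages samples that fall in the same minute, and marks minutes with no sample as missing (`None`).

```python
from collections import defaultdict
from typing import Dict, List, Optional, Tuple


def align_to_minute_grid(
    samples: List[Tuple[int, float]], start: int, end: int
) -> List[Optional[float]]:
    """Map (unix_seconds, value) samples onto one-minute slots covering [start, end).

    Hypothetical helper: samples in the same minute are averaged and minutes with
    no sample become None, so every component shares the same time index.
    """
    buckets: Dict[int, List[float]] = defaultdict(list)
    for ts, value in samples:
        if start <= ts < end:
            buckets[(ts - start) // 60].append(value)
    n_slots = (end - start) // 60
    return [
        sum(buckets[i]) / len(buckets[i]) if i in buckets else None
        for i in range(n_slots)
    ]


# Two components sampled at different moments end up on one shared index.
cpu = align_to_minute_grid([(0, 10.0), (30, 20.0), (125, 50.0)], start=0, end=180)
mem = align_to_minute_grid([(61, 0.4)], start=0, end=180)
aligned = list(zip(cpu, mem))  # one (cpu, mem) row per minute
```

Averaging within a minute is only one possible choice; taking the last sample or the maximum would fit the same grid.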

Structurally, the dataset also contains service call topologies and node dependency relationships; dynamic service dependency graphs are constructed from its call-chain logs. These graphs reveal the interaction patterns and dependency directions among service modules, helping the model capture anomaly propagation paths in the spatial dimension. For example, when an upstream database or cache node experiences a performance bottleneck, downstream services may show request timeouts or response delays. Traditional single-node monitoring cannot directly reflect such cascading effects. By incorporating graph structural information, the model can learn both local node features and global topological correlations during training, supporting multilevel anomaly detection and fault prediction.
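The following sketch shows one way call-chain records could be turned into per-window dependency graphs, and how a bottleneck at a callee such as a database maps to the services that may feel it. The record schema `(caller, callee, unix_seconds)`, the fixed-length windows, and both function names are assumptions for illustration; the dataset's actual topology format is not specified in this section.

```python
from collections import defaultdict, deque
from typing import DefaultDict, Dict, Iterable, List, Set, Tuple

Call = Tuple[str, str, int]  # (caller, callee, unix_seconds): hypothetical log schema
Edges = Dict[Tuple[str, str], int]


def build_dependency_graphs(calls: Iterable[Call], window_seconds: int) -> Dict[int, Edges]:
    """One directed caller->callee graph per time window, weighted by call count."""
    graphs: DefaultDict[int, DefaultDict[Tuple[str, str], int]] = defaultdict(
        lambda: defaultdict(int)
    )
    for caller, callee, ts in calls:
        graphs[ts // window_seconds][(caller, callee)] += 1
    return {w: dict(edges) for w, edges in graphs.items()}


def impacted_services(edges: Edges, faulty: str) -> Set[str]:
    """Services that call `faulty` directly or transitively, and so may see timeouts."""
    callers: DefaultDict[str, List[str]] = defaultdict(list)
    for caller, callee in edges:
        callers[callee].append(caller)
    seen: Set[str] = set()
    queue = deque([faulty])
    while queue:  # breadth-first walk against the call direction
        node = queue.popleft()
        for parent in callers[node]:
            if parent not in seen:
                seen.add(parent)
                queue.append(parent)
    seen.discard(faulty)  # ignore cycles that lead back to the faulty node
    return seen


calls = [("web", "api", 5), ("api", "db", 6), ("api", "cache", 7), ("batch", "db", 70)]
graphs = build_dependency_graphs(calls, window_seconds=60)
affected = impacted_services(graphs[0], "db")  # a slow db may surface in api and web
```

Because the graphs are rebuilt per window, `batch` only appears as a dependent of `db` in the second window, which is what makes the graph dynamic rather than a fixed topology.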

The dataset is characterized by high dimensionality, a long temporal span, and multi-source heterogeneity, and it is applicable to cloud operations, AIOps, and anomaly diagnosis tasks. It contains millions of monitoring records and thousands of node entities, with rich annotations of anomaly events such as service interruptions, performance degradation, network congestion, and resource contention. Its realism and complexity make it well suited for evaluating the robustness and generalization ability of models under high-noise conditions, and it provides a solid experimental basis for the joint modeling of graph and temporal dependencies that follows.

4.2. Experimental Results

This paper first conducts a comparative experiment; the results are shown in Table 1.

Overall, the proposed cloud service fault prediction model, which integrates graph structure and temporal dependence, outperforms all comparison methods across multiple metrics, especially accuracy and F1-Score. The MLP baseline lacks both temporal modeling and awareness of structural dependencies: it maps each static feature vector to a prediction without using preceding time steps or the service topology. Its detection capability is therefore limited in complex, dynamic environments. BiLSTM and 1D-CNN introduce temporal modeling and local feature extraction mechanisms, which make the model more sensitive to the temporal patterns of anomalies. Their clear improvement over the static MLP baseline demonstrates the importance of modeling temporal dependence when recognizing abnormal behavior in cloud platforms.

The Transformer model further validates the effectiveness of global dependency modeling. Through the self-attention mechanism, it captures long-range dependencies in the temporal dimension, allowing stable detection under complex workload fluctuations and multi-scale variations. However, this approach still focuses mainly on single-node sequential features and does not fully model the topological relationships and fault propagation paths between different service nodes. As a result, when cross-node anomaly propagation occurs, time-only modeling struggles to achieve high precision, and the model's recall and F1 remain limited.
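To make the contrast concrete, here is a minimal scaled dot-product self-attention over one node's metric window, written in plain Python. It assumes identity query/key/value projections (a real Transformer learns these, uses multiple heads, and adds positional encodings), and, as the paragraph notes, nothing in it looks at any other service node.

```python
import math
from typing import List

Matrix = List[List[float]]


def softmax(row: List[float]) -> List[float]:
    m = max(row)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(x: Matrix) -> Matrix:
    """Scaled dot-product self-attention with identity projections (Q = K = V = x).

    x is a (T, d) window of one node's metrics. Each output step is a convex
    combination of all T input steps, so distant steps influence each other directly.
    """
    d = len(x[0])
    out: Matrix = []
    for q in x:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in x]
        weights = softmax(scores)  # attention of this step over every step in the window
        out.append([sum(w * v[j] for w, v in zip(weights, x)) for j in range(d)])
    return out


window = [[0.2, 0.1], [0.3, 0.1], [0.9, 0.8]]  # e.g. (cpu, error rate) over 3 minutes
attended = self_attention(window)
```

The window has shape `(T, d)` for a single node; extending the sketch to capture cross-node propagation would require an additional graph component, which is the gap the following models address.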

Introducing graph neural structures, such as GNN and GAT, further improves all metrics, indicating the significant value of structural information for anomaly detection. GNN propagates and aggregates features among nodes, capturing latent dependencies and interactions between services. GAT adaptively weights neighbors through an attention mechanism, giving the model stronger representational power in heterogeneous topologies. This structural awareness in the spatial dimension allows the model to identify anomaly propagation chains, improving system-level detection capability and robustness, and shows how well graph structures adapt to cloud service scenarios.
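The neighbor weighting that distinguishes GAT from plain neighborhood averaging can be sketched for a single node and a single attention head. It follows the standard GAT scoring rule, LeakyReLU of `a · [W h_i || W h_j]` normalized by a softmax over the node and its neighbors. The service names, feature values, `W`, and `a` are placeholders, not the configuration used in these experiments.

```python
import math
from typing import Dict, List, Tuple

Vector = List[float]


def leaky_relu(x: float, slope: float = 0.2) -> float:
    return x if x > 0 else slope * x


def matvec(w: List[Vector], h: Vector) -> Vector:
    return [sum(wij * hj for wij, hj in zip(row, h)) for row in w]


def gat_aggregate(
    i: str,
    neighbors: List[str],
    h: Dict[str, Vector],
    w: List[Vector],
    a: Vector,
) -> Tuple[Dict[str, float], Vector]:
    """Single-head GAT update for node i; returns (attention weights, new feature).

    The output nonlinearity is omitted so the weighted sum is visible directly.
    """
    nodes = [i] + [j for j in neighbors if j != i]  # GAT includes a self-loop
    wh = {n: matvec(w, h[n]) for n in nodes}  # shared linear transform W h
    # e_ij = LeakyReLU(a . [W h_i || W h_j]); list + list concatenates the two vectors
    scores = [leaky_relu(sum(ak * zk for ak, zk in zip(a, wh[i] + wh[j]))) for j in nodes]
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]  # softmax over the neighborhood
    total = sum(exps)
    alpha = {n: e / total for n, e in zip(nodes, exps)}
    out = [sum(alpha[n] * wh[n][d] for n in nodes) for d in range(len(w))]
    return alpha, out


h = {"api": [1.0, 0.0], "db": [0.0, 1.0], "cache": [1.0, 1.0]}
identity = [[1.0, 0.0], [0.0, 1.0]]
alpha, out = gat_aggregate("api", ["db", "cache"], h, identity, a=[0.0, 0.0, 1.0, 0.0])
```

With an all-zero `a`, every neighbor receives the same weight and the update reduces to mean aggregation, similar to simpler GNN layers. A nonzero `a` lets the layer favor some neighbors; here, `api` and `cache` are weighted above `db`.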

Finally, the proposed model integrating graph structure and temporal dependence achieves the best performance across all metrics, with an accuracy of 0.9215 and an F1-Score of 0.8879. These results show that joint spatiotemporal modeling effectively combines inter-node dependencies with temporal evolution features, overcoming the limitations of modeling either dimension alone. The model can identify both local transient anomalies and anomalies that propagate across nodes. In complex cloud service environments, this fusion strategy not only improves fault prediction accuracy but also enhances stability and interpretability under dynamic topologies, providing a more robust solution for intelligent operation and anomaly prediction in cloud platforms.
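The metrics in Table 1 can be computed from a binary confusion matrix, as sketched below. The section does not state whether F1 is computed for the anomaly class only or averaged over classes; this sketch assumes the anomaly class is positive (label 1) and reports binary F1. The hypothetical `binary_metrics` helper is for illustration only.

```python
from typing import Dict, Sequence


def binary_metrics(y_true: Sequence[int], y_pred: Sequence[int]) -> Dict[str, float]:
    """Accuracy, precision, recall and F1 with 1 = anomaly, 0 = normal."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {
        "accuracy": (tp + tn) / len(pairs),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


# Most normal steps are classified correctly, so accuracy (0.75) sits above F1 (about 0.667).
m = binary_metrics([1, 1, 1, 0, 0, 0, 0, 0], [1, 1, 0, 1, 0, 0, 0, 0])
```

Because normal samples usually dominate monitoring data, correctly rejected normal steps raise accuracy without affecting F1. This is one reason the two metrics can diverge and are reported side by side.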

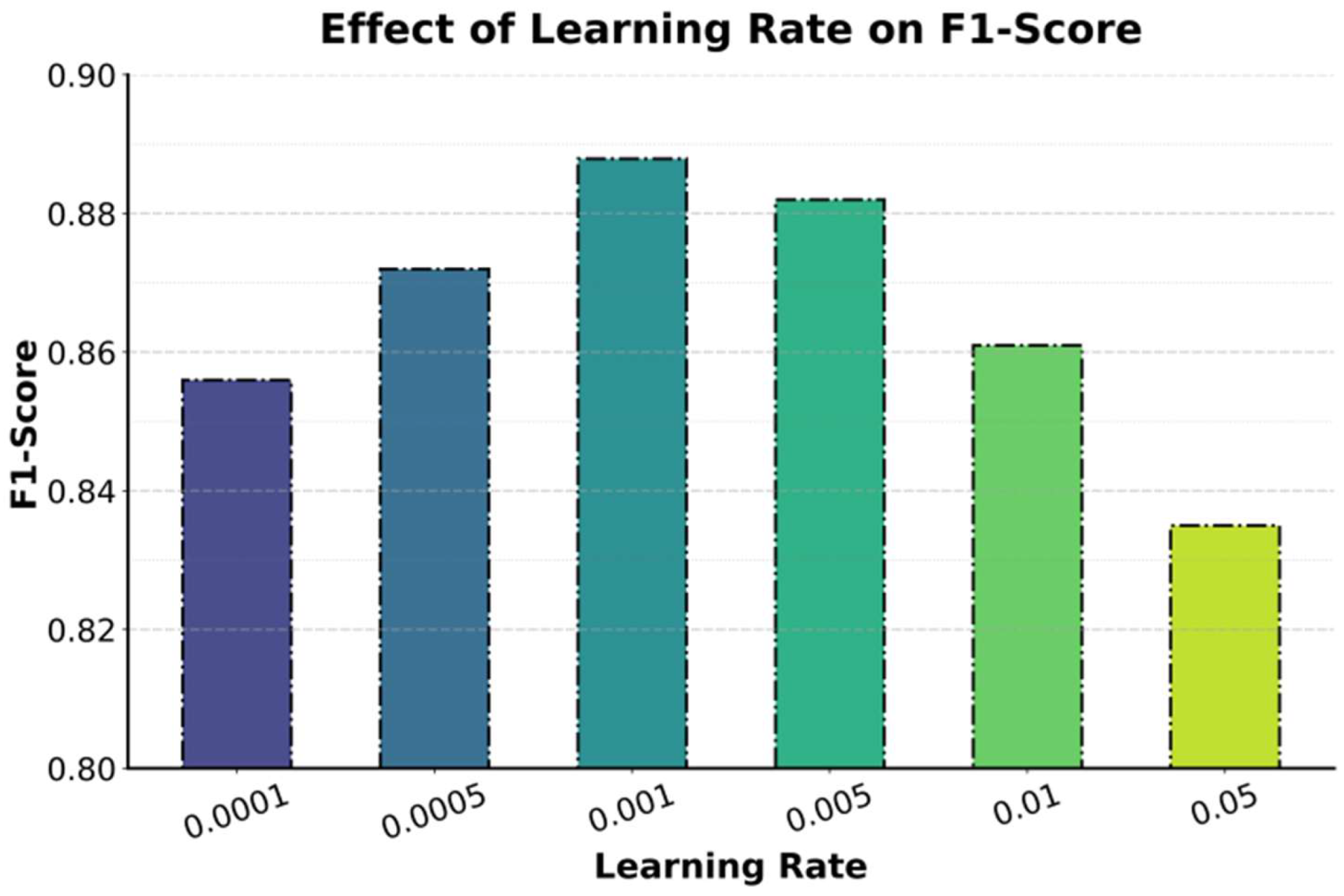

This paper also examines the effect of the learning rate on F1-Score; the results are shown in Figure 2.

The experimental results show that the learning rate has a significant impact on the model's F1-Score and follows an inverted-U pattern: performance peaks at an intermediate value and declines toward both ends. When the learning rate is low (for example, 0.0001 or 0.0005), parameters are updated slowly and convergence is gradual. The model then struggles to capture the complex correlations among cloud service anomaly features, which results in a relatively low F1-Score. Although training is stable under this setting, the model responds too weakly to sudden anomalies to identify rapidly evolving service faults effectively.

When the learning rate increases to 0.001, the model achieves its best performance, with an F1-Score of about 0.888. At this setting, the update step size is well balanced against gradient variation, so graph structural information and temporal dependency features are integrated effectively, enabling precise anomaly perception and fault prediction in complex cloud service topologies. Training converges quickly enough to track changes in system state without excessive oscillation, reflecting the stable learning behavior of spatiotemporal joint modeling in dynamic cloud environments.

However, as the learning rate increases further (for example, from 0.005 to 0.05), performance begins to decline. An excessively high learning rate produces overly large parameter updates, which can overshoot the optimal region and cause unstable oscillations in parameter space. This instability is more pronounced in cloud environments, which are noisy and non-stationary. Such irregular updates disrupt the continuity of temporal features and the consistency of topological constraints, blurring the anomaly detection boundary. Choosing an appropriate learning rate is therefore essential for stable convergence and accurate prediction in complex, dynamic topologies.
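The overshoot argument can be seen on a toy problem. For plain gradient descent on a one-dimensional quadratic loss `f(theta) = 0.5 * c * theta**2`, each step multiplies `theta` by `(1 - lr * c)`. As a result, iterates shrink slowly when `lr` is small, alternate sign once `lr > 1/c`, and grow without bound once `lr > 2/c`. The curvature `c = 1000` is a hypothetical value chosen only so that the stability threshold `2/c = 0.002` falls inside the range of learning rates tested in Figure 2. The proposed model's loss is non-convex, so this illustrates the mechanism rather than reproducing the measured curve.

```python
from typing import List


def gd_trajectory(lr: float, curvature: float, theta0: float = 1.0, steps: int = 20) -> List[float]:
    """Plain gradient descent on f(theta) = 0.5 * curvature * theta**2."""
    theta = theta0
    path = [theta]
    for _ in range(steps):
        grad = curvature * theta  # f'(theta)
        theta -= lr * grad  # same as theta *= (1 - lr * curvature)
        path.append(theta)
    return path


CURVATURE = 1000.0  # hypothetical; stable only for lr < 2 / CURVATURE = 0.002
for lr in (0.0001, 0.0005, 0.001, 0.005, 0.01, 0.05):
    path = gd_trajectory(lr, CURVATURE)
    print(f"lr={lr:<7} |theta| after 20 steps = {abs(path[-1]):.3g}")
```

Small rates converge monotonically but slowly. Above `1/c`, the iterate flips sign at every step, which is the oscillation described above. Above `2/c`, the oscillation amplitude grows with every step.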

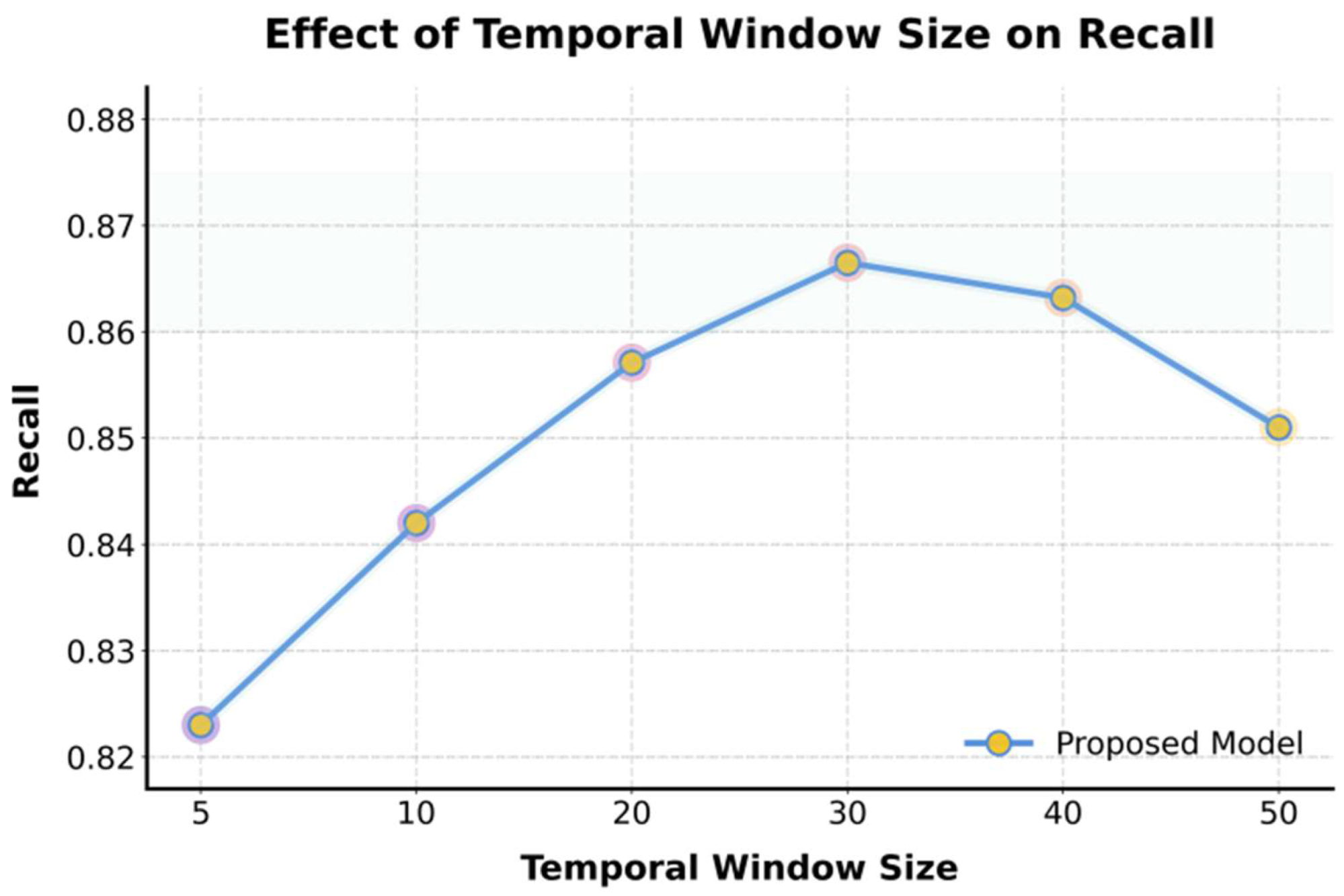

This paper also examines the impact of time window length on prediction recall; the results are shown in Figure 3.

The experimental results show that time window length has a clear nonlinear effect on recall. When the window is short (for example, 5 or 10 time steps), the model has limited ability to capture temporal variation patterns, so anomalies are recognized incompletely. A short window cannot fully exploit the historical information of service states and fails to extract the long-term dependencies along which anomalies evolve. As a result, the model's perception range for sudden faults is restricted, which is reflected in lower recall.

As the window increases to 20 and 30, recall improves significantly, peaking at a window length of 30. Within this range, the model balances short-term dynamics against long-term trends, effectively capturing cross-temporal dependencies in cloud service systems. A longer temporal context helps the model account for periodic variations and delayed effects in service performance, making anomaly detection more comprehensive and stable. These findings underline the role of temporal features in dynamic cloud environments and the benefit of the proposed method's long-term dependency modeling.
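The window length studied in Figure 3 controls how training samples are cut from each node's metric stream. The sketch below assumes a forecasting setup that pairs each window of `window` consecutive steps with the anomaly label `horizon` steps after it ends. The section does not say whether labels are attached to the window itself or to a future step, and `make_windows` is a hypothetical helper. If one step corresponds to one one-minute sample, the best-performing window of 30 covers half an hour of history.

```python
from typing import List, Sequence, Tuple

Window = List[List[float]]


def make_windows(
    series: Sequence[Sequence[float]],
    labels: Sequence[int],
    window: int,
    horizon: int = 1,
) -> Tuple[List[Window], List[int]]:
    """Cut (history, future label) pairs from one node's multivariate series.

    series[t] is the metric vector at step t; labels[t] is 1 if step t is anomalous.
    """
    xs: List[Window] = []
    ys: List[int] = []
    for end in range(window, len(series) - horizon + 1):
        xs.append([list(step) for step in series[end - window:end]])  # steps end-window .. end-1
        ys.append(labels[end + horizon - 1])  # label `horizon` steps past the window
    return xs, ys


series = [[float(t), 0.5] for t in range(10)]  # e.g. (cpu, memory) per minute
labels = [0] * 9 + [1]
xs, ys = make_windows(series, labels, window=3)
```

A series of length `N` yields `N - window - horizon + 1` samples. Lengthening the window therefore makes every input longer, adds older and possibly stale context, and leaves fewer training windows per node.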

When the time window continues to increase (for example, 40 or 50), the recall slightly decreases. This suggests that overly long sequences introduce redundant or outdated information, which increases noise interference and computational complexity. In such cases, the model's attention may become dispersed, reducing its focus on key anomalous segments and weakening detection sensitivity. Therefore, an appropriate time window design is crucial for cloud service fault prediction. It should cover sufficient temporal information to reflect evolutionary patterns while avoiding the performance degradation caused by redundancy and dynamic drift.

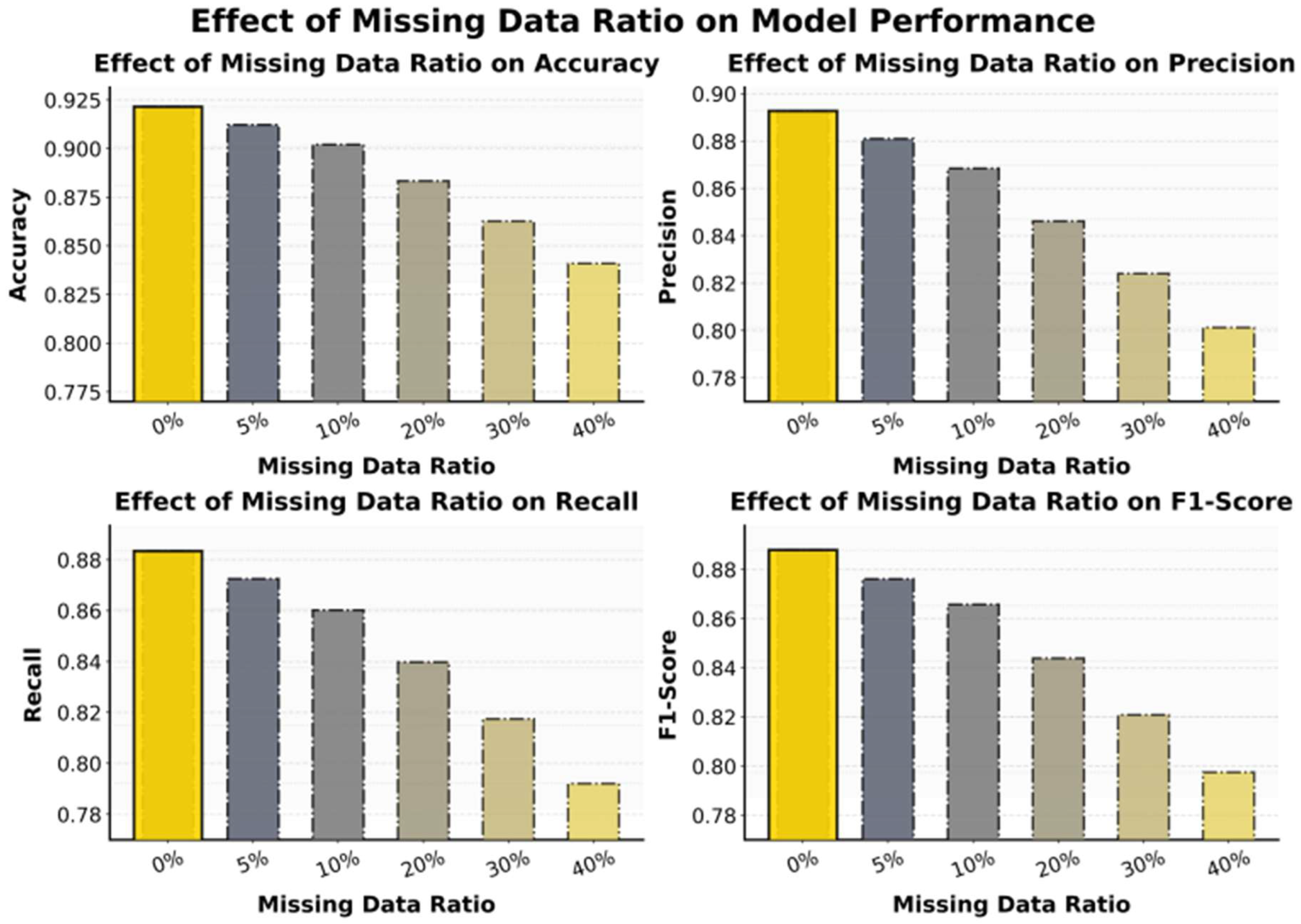

This paper also examines the impact of the missing data ratio on model performance; the results are shown in Figure 4.

The experimental results show that as the missing data rate increases, performance declines gradually across all evaluation metrics, with the largest drops in accuracy and F1-Score. When the missing rate is low (0%–10%), the model maintains a high level of performance, indicating that the proposed graph-temporal fusion structure has a degree of robustness and fault tolerance. In this regime, graph structure modeling and temporal context compensation offset the information lost to partially missing data, keeping predictions stable and reliable in complex cloud service environments.
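The missing-rate experiment requires a method for removing data and another for compensating for it. The section describes neither. The sketch below assumes that values are dropped completely at random (MCAR) at a given ratio. For compensation, it uses a deliberately simple stand-in: forward-fill from the node's own last observation (temporal context) and, before any observation exists, the mean of graph neighbors at the same step (structural context). It does not reproduce the proposed model's learned compensation, and `inject_missing` and `impute` are hypothetical helpers.

```python
import random
from typing import Dict, List, Optional

Series = List[Optional[float]]


def inject_missing(values: List[float], ratio: float, seed: int = 0) -> Series:
    """Drop round(ratio * n) entries uniformly at random (MCAR assumption)."""
    rng = random.Random(seed)
    n_missing = round(ratio * len(values))
    drop = set(rng.sample(range(len(values)), n_missing))
    return [None if i in drop else v for i, v in enumerate(values)]


def impute(series: Dict[str, Series], neighbors: Dict[str, List[str]]) -> Dict[str, List[float]]:
    """Fill gaps: last observed value of the same node, else mean of observed neighbors, else 0.0."""
    filled: Dict[str, List[float]] = {}
    for node, values in series.items():
        out: List[float] = []
        last: Optional[float] = None  # most recent *observed* value of this node
        for t, v in enumerate(values):
            if v is not None:
                last = v
                out.append(v)
            elif last is not None:
                out.append(last)  # temporal compensation
            else:
                nbr = [series[m][t] for m in neighbors.get(node, []) if series[m][t] is not None]
                out.append(sum(nbr) / len(nbr) if nbr else 0.0)  # structural compensation
        filled[node] = out
    return filled


metrics = {"api": [0.2, 0.3, 0.4, 0.5], "db": [0.6, 0.7, 0.8, 0.9]}
masked = {node: inject_missing(v, ratio=0.25, seed=i) for i, (node, v) in enumerate(metrics.items())}
filled = impute(masked, {"api": ["db"], "db": ["api"]})
```

As the ratio grows, more gaps fall back to stale or neighbor-derived values, which mirrors in simplified form why compensation stops being effective at high missing rates.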

When the missing rate reaches 20% to 30%, the model performance decreases significantly. Missing samples lead to incomplete node feature representations and disrupt the continuity of temporal dependencies. This interference affects the model's ability to identify anomaly propagation paths and capture dynamic features. Feature loss also causes insufficient information transmission within the graph structure, leading to imbalanced weight updates for some critical nodes and a noticeable decline in recall. In multi-node dependency scenarios of cloud services, missing data amplify local errors and propagate them through the topology, resulting in cumulative effects on global perception.

When the missing rate exceeds 40%, the model's predictions become unstable, indicating that large-scale data loss exceeds the model's capacity for adaptive compensation. Although some fault tolerance remains, the spatiotemporal fusion module cannot maintain effective representations under highly sparse inputs, which reduces the discriminative power of anomaly features. These results show that data completeness is crucial for cloud service anomaly perception. Even so, the proposed method maintains high detection accuracy at low to moderate missing rates, demonstrating its robustness to the data gaps common in real-world cloud platforms.

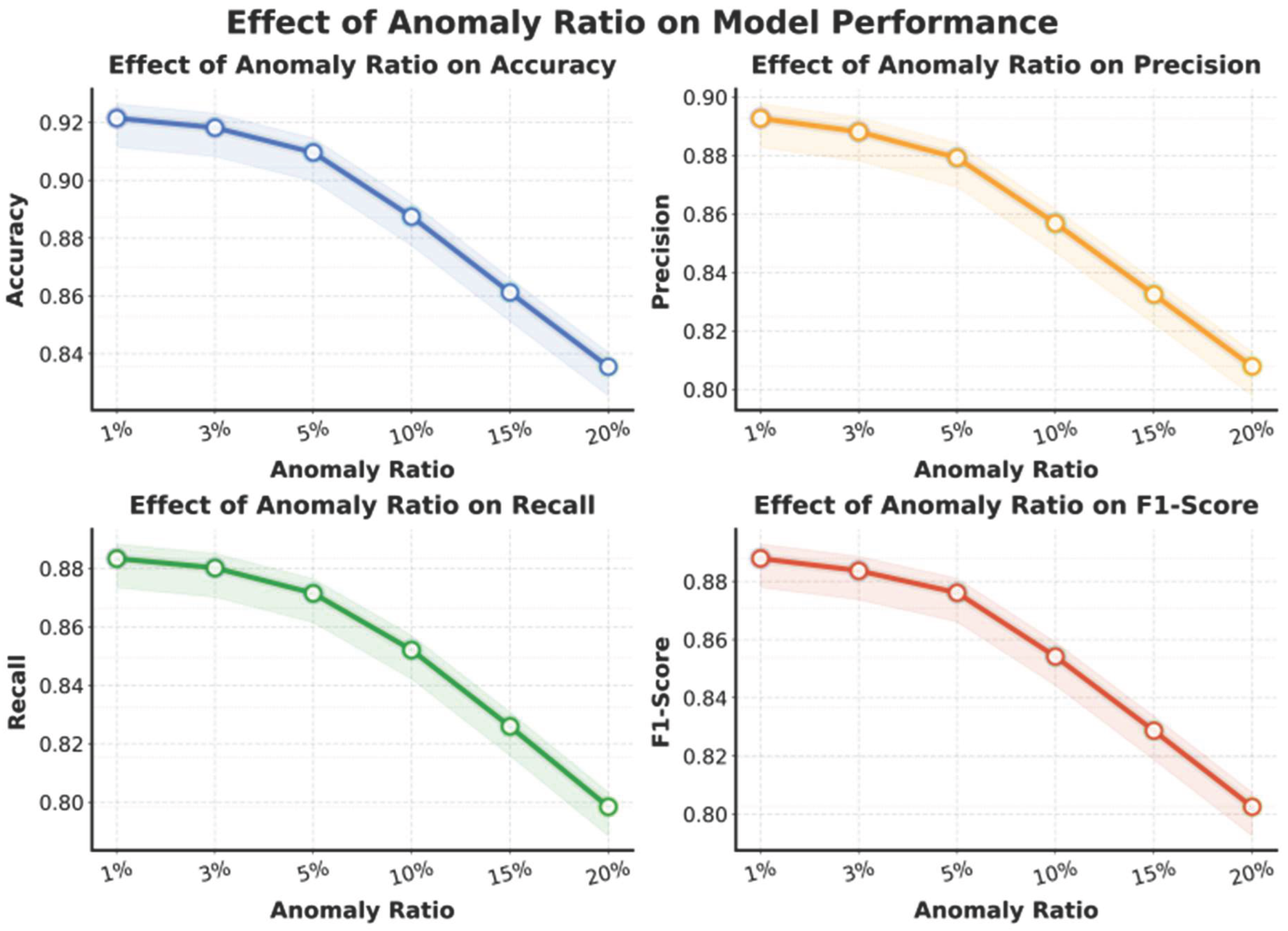

This paper also examines the impact of the anomaly ratio on model performance; the results are shown in Figure 5.

The experimental results show that as the proportion of anomalous samples increases, performance declines gradually across all metrics, with the largest decreases in accuracy and F1-Score. When the anomaly proportion is low (1%–5%), overall performance remains stable. This indicates that the proposed graph-temporal fusion structure recognizes anomalies effectively when normal behavior dominates the data and anomalies are rare. In this regime, the model exploits structural dependencies and temporal correlations to detect subtle fluctuations and potential risks in cloud service systems, demonstrating robustness and sensitivity in low-anomaly environments.

When the anomaly proportion rises to around 10%, the model performance begins to decline noticeably. The main reason is that the increased proportion of anomalous samples in the training set causes a shift in the decision boundary, which interferes with the learning of normal patterns. Since anomalies in cloud services often exhibit nonlinear propagation and complex cross-node dependencies, a higher anomaly proportion leads to feature noise accumulation when aggregating graph structural information, weakening the temporal consistency of the model. In addition, some anomaly patterns overlap semantically, further increasing classification difficulty and causing simultaneous drops in recall and precision.

When the anomaly proportion exceeds 15%, the model performance deteriorates rapidly. This indicates that in high-anomaly scenarios, the extreme distribution shift severely affects the model's discriminative capability. The local smoothness assumption of the graph structure is no longer valid, resulting in inaccurate feature propagation among nodes and reduced temporal prediction ability. These results suggest that although the proposed method demonstrates strong structural modeling and temporal learning capabilities, additional strategies such as data resampling or anomaly-weight balancing are needed in high-anomaly environments. Such strategies can help maintain model robustness and generalization performance, enabling better adaptation to the imbalanced data distributions commonly found in complex cloud platforms.
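Anomaly-weight balancing, one of the strategies suggested above, can be as simple as weighting the loss by inverse class frequency so that both classes contribute equal total weight. The sketch below assumes a binary anomaly/normal objective, and the function names are hypothetical. Frameworks offer a closely related setting, for example the `pos_weight` argument of PyTorch's `BCEWithLogitsLoss`.

```python
import math
from typing import Sequence, Tuple


def inverse_frequency_weights(labels: Sequence[int]) -> Tuple[float, float]:
    """Return (w_normal, w_anomaly) so both classes contribute equal total weight."""
    n = len(labels)
    n_pos = sum(labels)
    n_neg = n - n_pos
    if n_pos == 0 or n_neg == 0:
        return 1.0, 1.0  # only one class present: nothing to balance
    return n / (2 * n_neg), n / (2 * n_pos)


def weighted_bce(
    probs: Sequence[float], labels: Sequence[int], w_neg: float, w_pos: float, eps: float = 1e-7
) -> float:
    """Mean binary cross-entropy with a separate weight per class."""
    total = 0.0
    for p, y in zip(probs, labels):
        p = min(max(p, eps), 1 - eps)  # clip so log() never sees 0
        total += -(w_pos * y * math.log(p) + w_neg * (1 - y) * math.log(1 - p))
    return total / len(labels)


labels = [1] * 10 + [0] * 90  # 10% anomalous samples
w_neg, w_pos = inverse_frequency_weights(labels)
loss = weighted_bce([0.1] * 100, labels, w_neg, w_pos)
```

Resampling achieves a similar effect without changing the loss, either by duplicating anomalous windows or subsampling normal ones. The weights should be recomputed whenever the anomaly proportion changes.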