Submitted: 28 February 2026

Posted: 03 March 2026


Abstract

Keywords:

1. Introduction

1.1. The Core Problem: Evolving Data Distributions in Real-World PHM

1.2. Limitations of Conventional Deep Learning Models for RUL Prediction

1.3. Motivation for Integrating Domain Adaptation and Continual Learning

1.4. Scope and Objectives of this Review

- analyze the sources and characteristics of evolving data distributions in bearing prognostics,

- review DA techniques relevant to cross-condition and cross-domain RUL prediction,

- review CL strategies applicable to sequential and long-term prognostic scenarios,

- examine existing studies that combine or bridge DA and CL concepts, and

- identify open challenges and future research directions toward reliable lifelong prognostic systems.

2. Background and Related Work

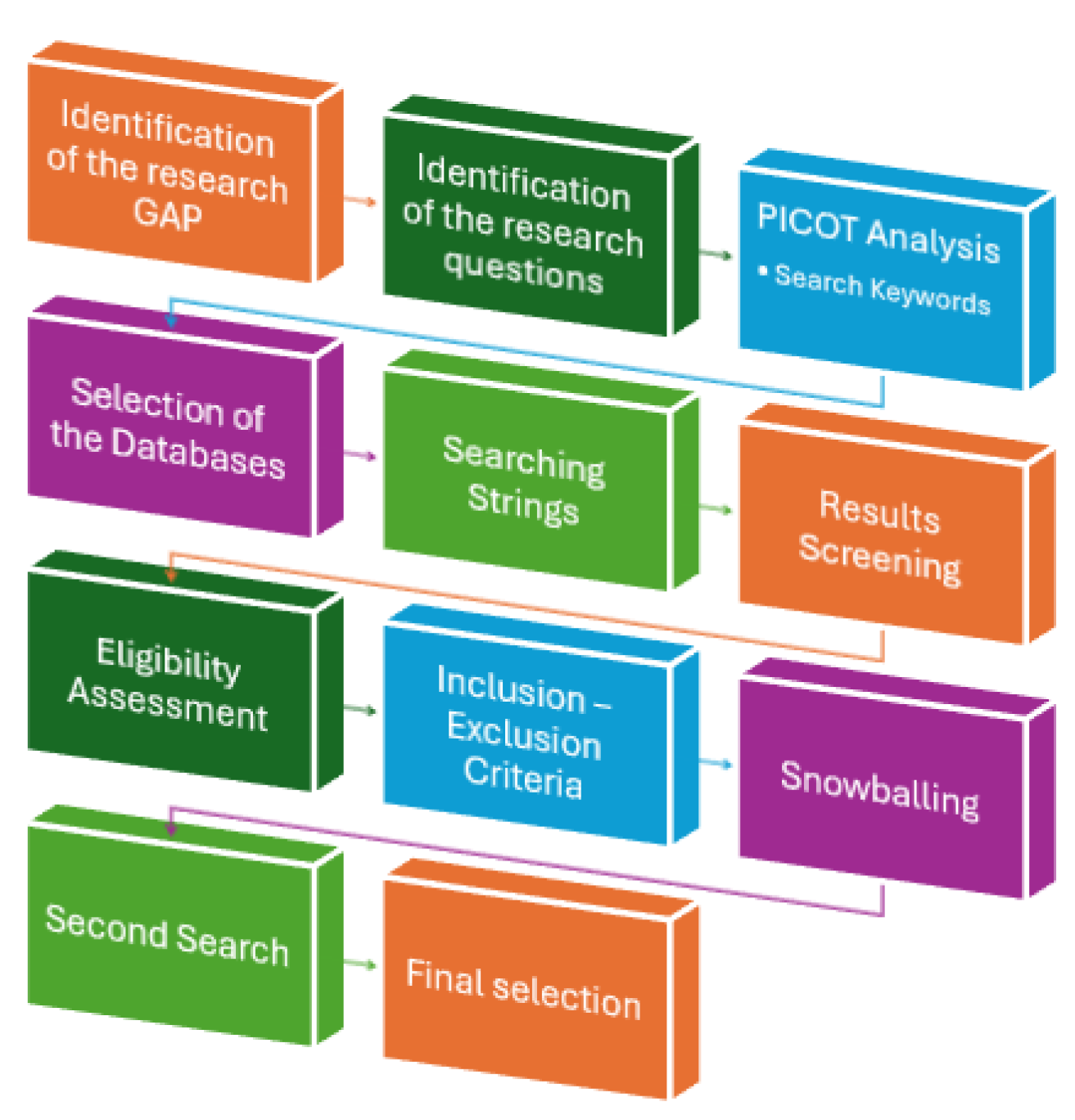

3. Methodology

3.1. Research Objectives and Review Scope

3.2. PICOT Framework

3.3. P (Population / Problem):

3.4. I (Intervention):

3.5. C (Comparison):

3.6. O (Outcomes):

- Reduced catastrophic forgetting in continual learning scenarios

- Real-time adaptability for online condition monitoring

- Improved reliability and safety of industrial predictive maintenance strategies

| Search concept | Keywords |
| --- | --- |
| Application Domain (P) | bearing; rotating machinery |
| Prognostics Task (O) | RUL prediction; fault prognosis; health degradation; predictive maintenance |
| Learning Paradigm (I/C) | deep learning; data-driven models; domain adaptation; incremental learning; continual learning |
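The search strings tabulated under Section 3.11 follow a common pattern: terms within one concept group are joined with OR, and the groups are joined with AND. As a minimal sketch of that pattern (the helper names `quote` and `build_query` are hypothetical, and database-specific field tags such as Scopus `TITLE-ABS-KEY` or IEEE Xplore `"All Metadata":` are not modelled), the following Python reproduces the first-stage ScienceDirect string from its three concept groups.

```python
def quote(term: str) -> str:
    """Wrap a term in double quotes so the database treats it as a phrase."""
    return f'"{term}"'


def build_query(groups: list[list[str]]) -> str:
    """OR the terms inside each concept group, then AND the groups together."""
    blocks = []
    for group in groups:
        joined = " OR ".join(quote(t) for t in group)
        # Single-term groups are emitted without parentheses.
        blocks.append(f"({joined})" if len(group) > 1 else joined)
    return " AND ".join(blocks)


# Concept groups taken from the first-stage ScienceDirect string.
learning_paradigm = [
    "transfer learning", "domain shift", "domain adaptation",
    "adaptive learning", "Incremental learning", "Few-shot learning",
    "variable operating condition",
]
task = ["remaining useful life"]
application = ["bearing"]

query = build_query([learning_paradigm, task, application])
print(query)
```

Keeping each concept group as a separate list makes it easy to see which terms were added or dropped between databases and between the two search rounds.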

- Initial search: conducted in August 2025

- Update search: conducted five months later in January 2026, prior to manuscript submission.

3.7. Eligibility Criteria

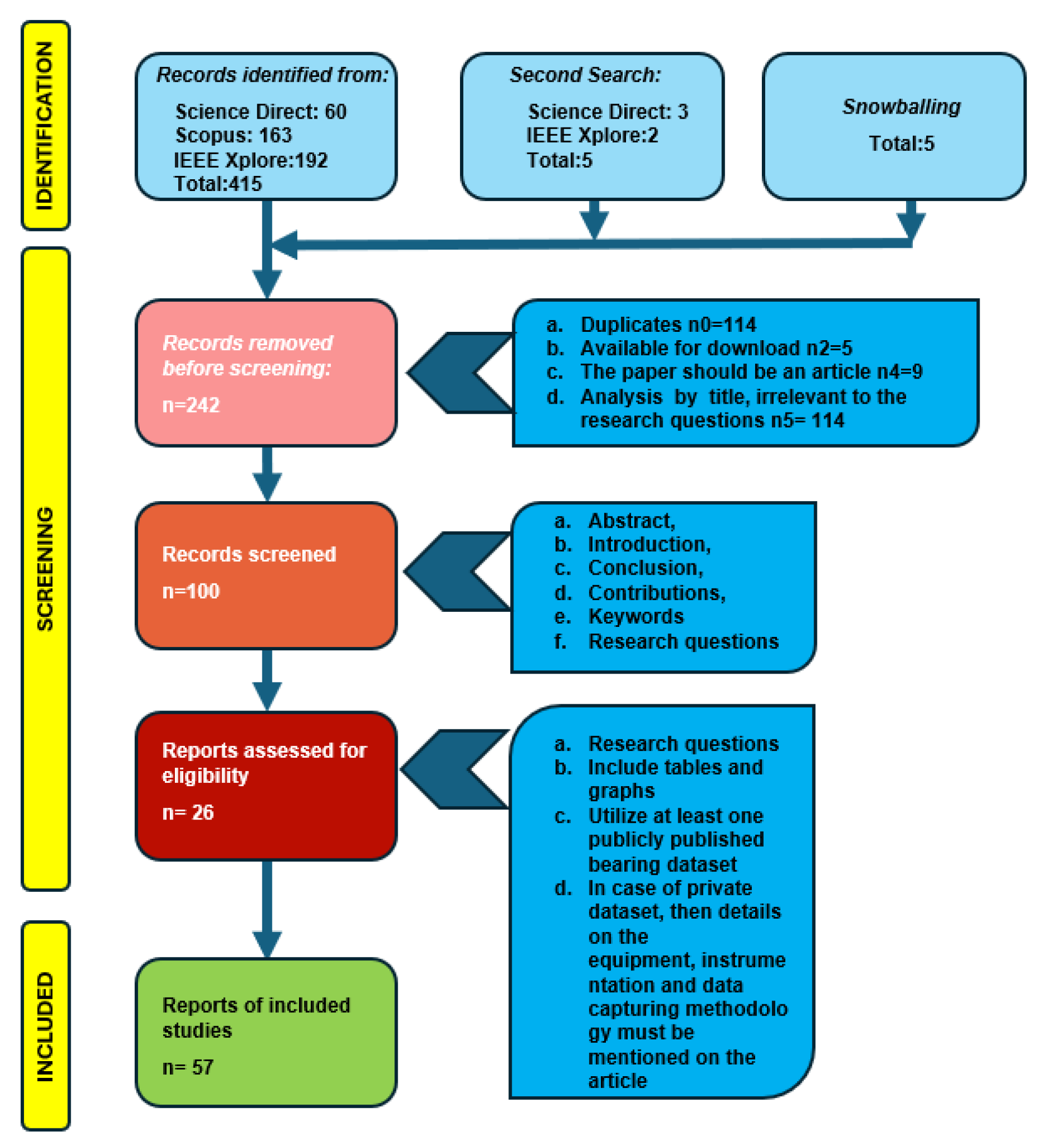

3.8. First Stage – Initial Selection

- Articles should be published after 2020.

- Duplicates (excluded: n0 = 114)

- Language: English (excluded: n1 = 0)

- Available for download (excluded: n2 = 5)

- Articles should be published in peer-reviewed journals and papers (excluded: n3 = 0)

- The paper should be an article (excluded: n4 = 9)

- Irrelevant to the research questions based on title analysis (excluded: n5 = 114)

3.9. Second Stage – Analysis by Abstract, Introduction, Conclusion, Contributions, and Keywords

- Empirical, theoretical, or methodological contributions

- Relevant to the research questions

- Keywords such as adaptive learning, few-shot learning, incremental (continual) learning, and domain adaptation should all appear in the paper

- Contribution to the specific fields of domain adaptation, continual learning, and their integration in a unified paradigm

- Excluded: n6 = 100

3.10. Third Stage – Selection of Papers by Full-Text Reading and Extraction of Answers to the Research Questions

- Relevant to the research questions

- The papers must include tables and graphs with experimental results and conclusions.

- The research should utilise at least one well-known, publicly available university ball bearing dataset, such as NASA's bearing dataset, the Intelligent Maintenance Systems (IMS) dataset, or IEEE PHM2012.

- If the researchers used a private dataset, the article must describe the equipment, instrumentation, and data acquisition methodology.

- Excluded: n7 = 26

3.11. Snowballing

| Database | Search string | Results | Results per year |
| --- | --- | --- | --- |
| First Stage – Aug 2025 | | | |
| Science Direct | ("transfer learning" OR "domain shift" OR "domain adaptation" OR "adaptive learning" OR "Incremental learning" OR "Few-shot learning" OR "variable operating condition") AND "remaining useful life" AND "bearing" | 60 | 2020: 1; 2021: 6; 2022: 8; 2023: 10; 2024: 16; 2025: 18; 2026: 1 |
| IEEE Xplore | (("All Metadata": transfer learning OR "All Metadata": domain shift OR "All Metadata": domain adaptation OR "All Metadata": adaptive learning OR "All Metadata": Incremental learning OR "All Metadata": few-shot learning OR "All Metadata": variable operating condition*) AND ("All Metadata": remaining useful life) AND ("All Metadata": bearing*)) | 192 | 2020: 7; 2021: 23; 2022: 30; 2023: 40; 2024: 57; 2025: 35; 2026: 3 |
| Scopus | TITLE-ABS-KEY (("transfer learning" OR "domain shift" OR "domain adaptation" OR "adaptive learning" OR "incremental learning" OR "Continual learning" OR "Few-shot learning" OR "variable operating condition") AND "bearing*" AND "remaining useful life") | 163 | 2020: 4; 2021: 19; 2022: 26; 2023: 31; 2024: 50; 2025: 33; 2026: 0 |
| TOTAL | | 415 | |
| Second Stage – Jan 2026 | | | |
| Science Direct | ("transfer learning" OR "domain shift" OR "domain adaptation") AND ("Incremental learning" OR "Continual Learning" AND "Catastrophic forgetting") AND "remaining useful life" | 3 | |
| IEEE Xplore | (("All Metadata": transfer learning OR "All Metadata": domain shift OR "All Metadata": domain adaptation) AND ("All Metadata": Incremental learning OR "All Metadata": Continual Learning OR "All Metadata": Catastrophic Forgetting) AND ("All Metadata": remaining useful life) AND ("All Metadata": bearing*)) | 2 | |
| Total | | 5 | |
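The exclusion counts reported in Sections 3.8 to 3.10 can be cross-checked against the 415 first-stage records. The sketch below assumes, since the text does not state it explicitly, that each exclusion is subtracted in sequence from that total and that the five records from the January 2026 update search are tallied separately; under that assumption it prints the number of records retained after each stage. The stage labels are paraphrased.

```python
# Reported exclusion counts per screening stage (Sections 3.8 to 3.10).
FIRST_STAGE_TOTAL = 415  # records retrieved in the August 2025 search

exclusions = {
    "first stage (n0 to n5)": [114, 0, 5, 0, 9, 114],
    "second stage (n6)": [100],
    "third stage (n7)": [26],
}

remaining = FIRST_STAGE_TOTAL
retained = []  # records left after each stage, in order
for stage, counts in exclusions.items():
    remaining -= sum(counts)
    retained.append(remaining)
    print(f"after {stage}: {remaining} records retained")
```

Under these assumptions the retained counts are 173, 73, and 47 after the first, second, and third stages, respectively.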

4. Tackling Domain Shift: A Review of Domain Adaptation in PHM

4.1. Domain Shift in PHM Applications

- Variations in the rotational speed, applied load, and duty cycles [8].

- Changes in ambient conditions such as temperature, humidity, and noise [48].

- Sensor replacement, recalibration, reconfiguration, or drift [21].

- Differences in machinery configurations or manufacturing tolerances [47].

- Progressive component degradation and maintenance interventions [8].

- Incomplete or imbalanced data [23].
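Whatever its cause, a shift can be quantified by comparing feature distributions collected under two conditions. A minimal sketch, assuming synthetic Gaussian features in place of real vibration features, computes the biased estimate of the squared maximum mean discrepancy (MMD) with a Gaussian (RBF) kernel, a statistic that many feature-based DA methods minimise as an alignment loss. The kernel width `gamma = 0.5`, the 4-D feature space, and the simulated "speed/load change" are arbitrary illustrative choices, not values from the reviewed studies.

```python
import numpy as np


def rbf_kernel(x: np.ndarray, y: np.ndarray, gamma: float) -> np.ndarray:
    """Gaussian kernel k(a, b) = exp(-gamma * ||a - b||^2) for all row pairs."""
    sq_dists = (
        np.sum(x**2, axis=1)[:, None]
        + np.sum(y**2, axis=1)[None, :]
        - 2.0 * x @ y.T
    )
    return np.exp(-gamma * np.maximum(sq_dists, 0.0))


def mmd2(source: np.ndarray, target: np.ndarray, gamma: float = 0.5) -> float:
    """Biased estimate of squared MMD: mean k(s,s') + mean k(t,t') - 2 mean k(s,t)."""
    k_ss = rbf_kernel(source, source, gamma).mean()
    k_tt = rbf_kernel(target, target, gamma).mean()
    k_st = rbf_kernel(source, target, gamma).mean()
    return float(k_ss + k_tt - 2.0 * k_st)


rng = np.random.default_rng(0)
# Hypothetical 4-D health features recorded under operating condition A.
source = rng.normal(loc=0.0, scale=1.0, size=(200, 4))
# Fresh sample from the same condition: only finite-sample bias remains.
same_condition = rng.normal(loc=0.0, scale=1.0, size=(200, 4))
# Simulated speed/load change: feature means shift and spread increases.
shifted_condition = rng.normal(loc=1.0, scale=1.5, size=(200, 4))

print("same condition   :", round(mmd2(source, same_condition), 4))
print("shifted condition:", round(mmd2(source, shifted_condition), 4))
```

A larger value indicates a larger discrepancy between the two conditions. In practice the kernel width is usually chosen with a median-distance heuristic or replaced by a combination of several kernels, rather than fixed by hand as here.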

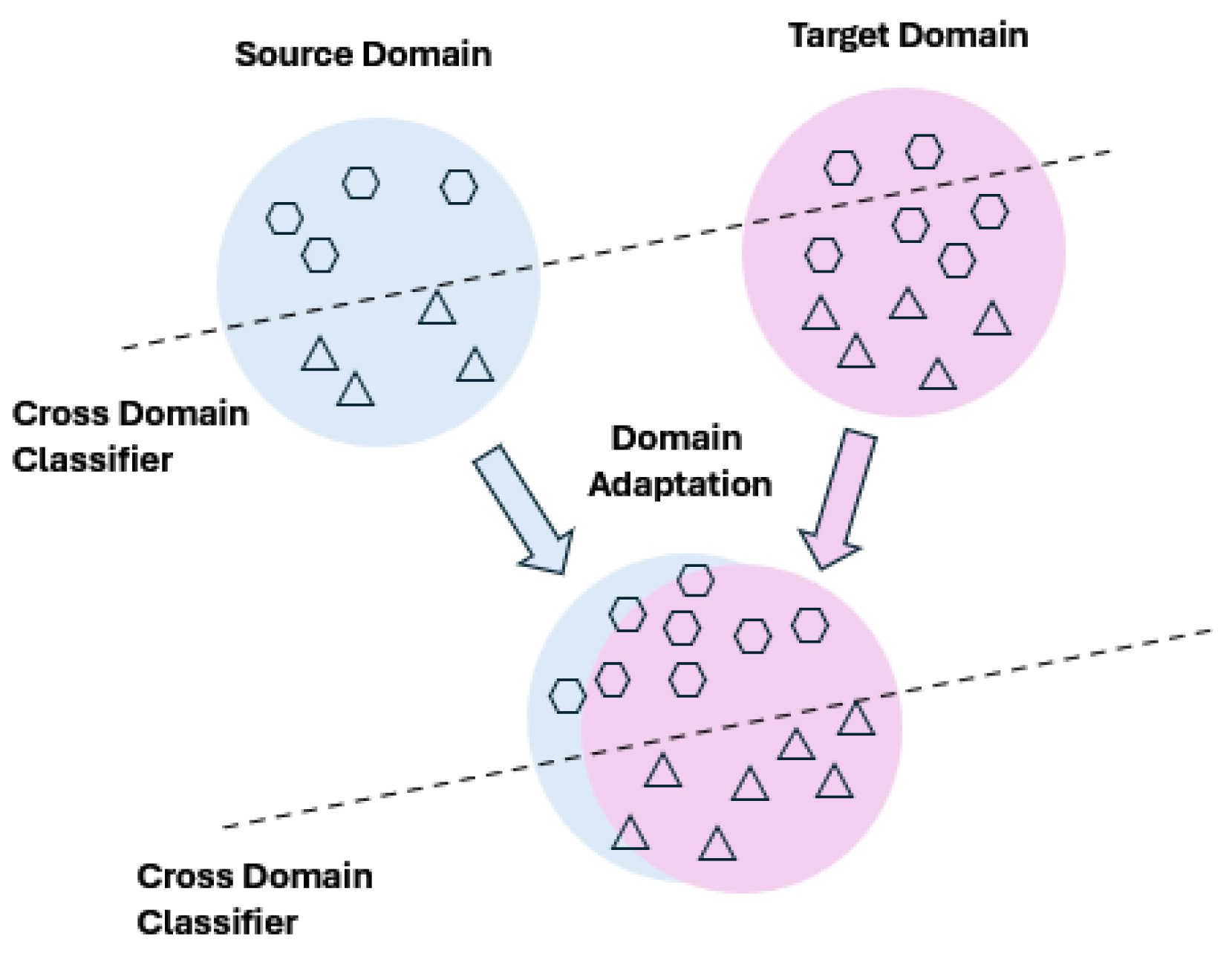

4.2. Fundamentals of Domain Adaptation

4.3. Categories of Domain Adaptation Methods in PHM

4.4. Feature-Based Domain Adaptation

4.5. Parameter-Based Domain Adaptation

4.6. Hybrid Domain Adaptation Approaches

4.7. Representative DA Applications in Bearing RUL Prediction

4.8. Open-Set and Unsupervised Domain Adaptation: Challenges and Limitations in Dynamic Environments

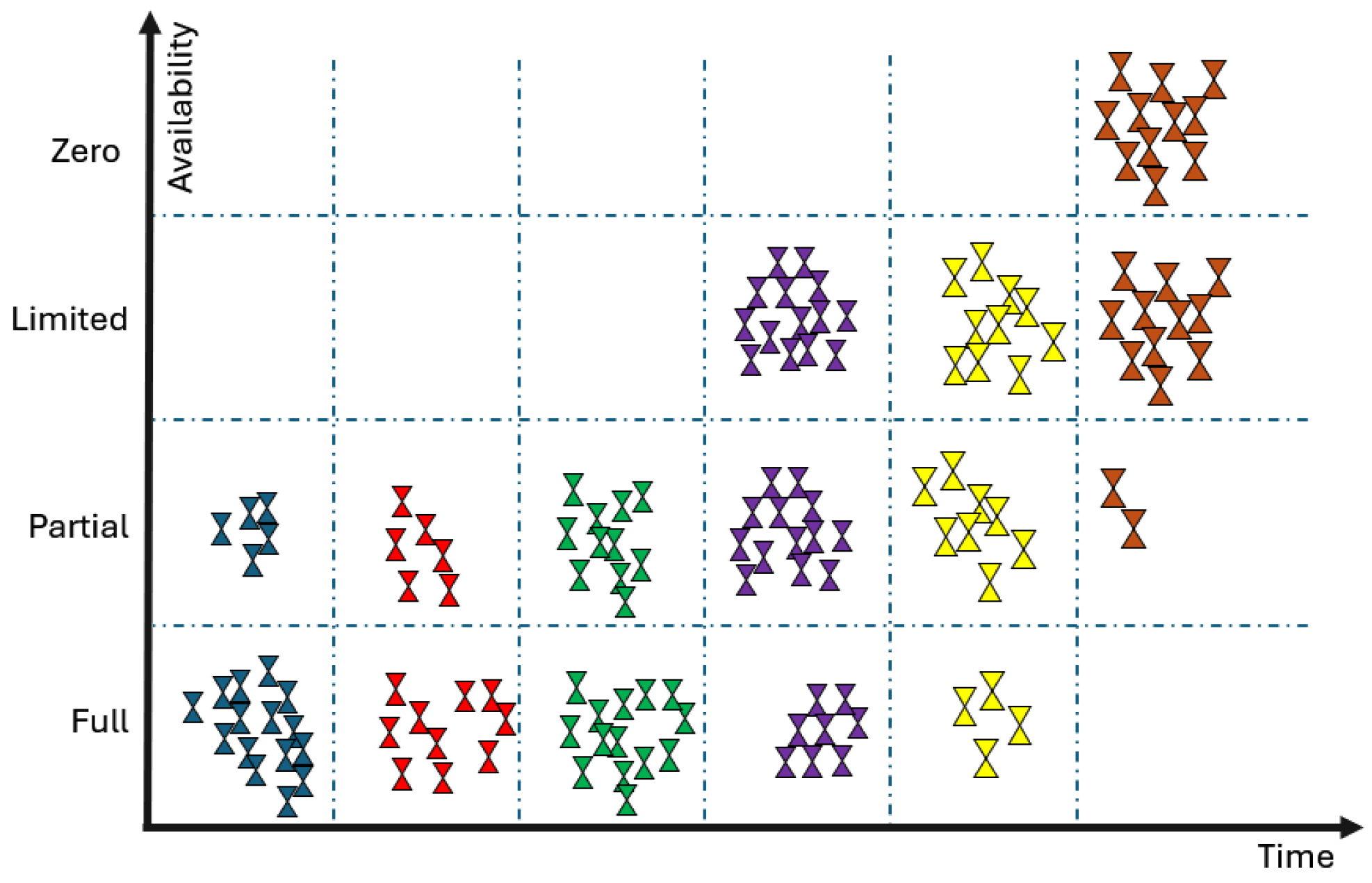

- A primary limitation of traditional DA methods is the assumption that the source and target data are balanced and complete, that is, that all fault categories are present. In real-world online monitoring, however, target data are acquired sequentially: at the beginning of monitoring only healthy data may be available, because fault data have not yet been generated [8].

- Adaptation is typically performed once per target domain, which precludes lifelong learning. Most DA techniques assume that the target-domain data distribution is fixed and fully available during training, which prevents the model from learning continually as the machinery's condition evolves over time [8], [11], [39].

- Multitask learning and knowledge accumulation across sequential domains are neglected, which leads to catastrophic forgetting. Moreover, in practical applications, machinery may operate under new working conditions or equipment configurations that were not available during training [14], [16].

- Most deep-learning-based DA methods focus solely on improving point-prediction accuracy and do not account for the uncertainty inherent in the stochastic degradation of machinery components. The resulting predictions have unknown credibility, which undermines predictive maintenance decision-making [23].
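The forgetting effect noted in the points above can be seen even in a toy model. The sketch below uses synthetic data and a hypothetical linear mapping from three features to a RUL-like target (it is not any of the cited methods): it fits the model by gradient descent on condition A, then fine-tunes it on condition B only, with no replay or regularisation, and compares the error on A before and after. This naive sequential fine-tuning is the baseline that the CL strategies reviewed in Section 5 aim to improve on.

```python
import numpy as np

rng = np.random.default_rng(42)


def make_condition(weights: np.ndarray, n: int = 500) -> tuple[np.ndarray, np.ndarray]:
    """Synthetic condition: features x and targets y = x @ weights + small noise."""
    x = rng.normal(size=(n, weights.size))
    y = x @ weights + 0.05 * rng.normal(size=n)
    return x, y


def mse(w: np.ndarray, x: np.ndarray, y: np.ndarray) -> float:
    return float(np.mean((x @ w - y) ** 2))


def fine_tune(w: np.ndarray, x: np.ndarray, y: np.ndarray,
              lr: float = 0.05, epochs: int = 200) -> np.ndarray:
    """Full-batch gradient descent on the MSE of the current condition only."""
    w = w.copy()
    for _ in range(epochs):
        grad = 2.0 * x.T @ (x @ w - y) / len(y)
        w -= lr * grad
    return w


# Two hypothetical operating conditions with different feature-to-target relations.
x_a, y_a = make_condition(np.array([1.0, -2.0, 0.5]))
x_b, y_b = make_condition(np.array([-1.0, 0.5, 2.0]))

w = fine_tune(np.zeros(3), x_a, y_a)   # learn condition A
err_a_before = mse(w, x_a, y_a)
w = fine_tune(w, x_b, y_b)             # then condition B, no replay or penalty
err_a_after = mse(w, x_a, y_a)
err_b_after = mse(w, x_b, y_b)

print(f"condition A error after learning A: {err_a_before:.4f}")
print(f"condition A error after learning B: {err_a_after:.4f}")
print(f"condition B error after learning B: {err_b_after:.4f}")
```

Because nothing constrains the weights to stay near the solution for condition A, fitting condition B overwrites it. Replay buffers and penalties such as EWC are two ways of adding that constraint.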

5. Learning Sequentially: A Review of Continual Learning in PHM

5.1. Motivation for Continual Learning in PHM

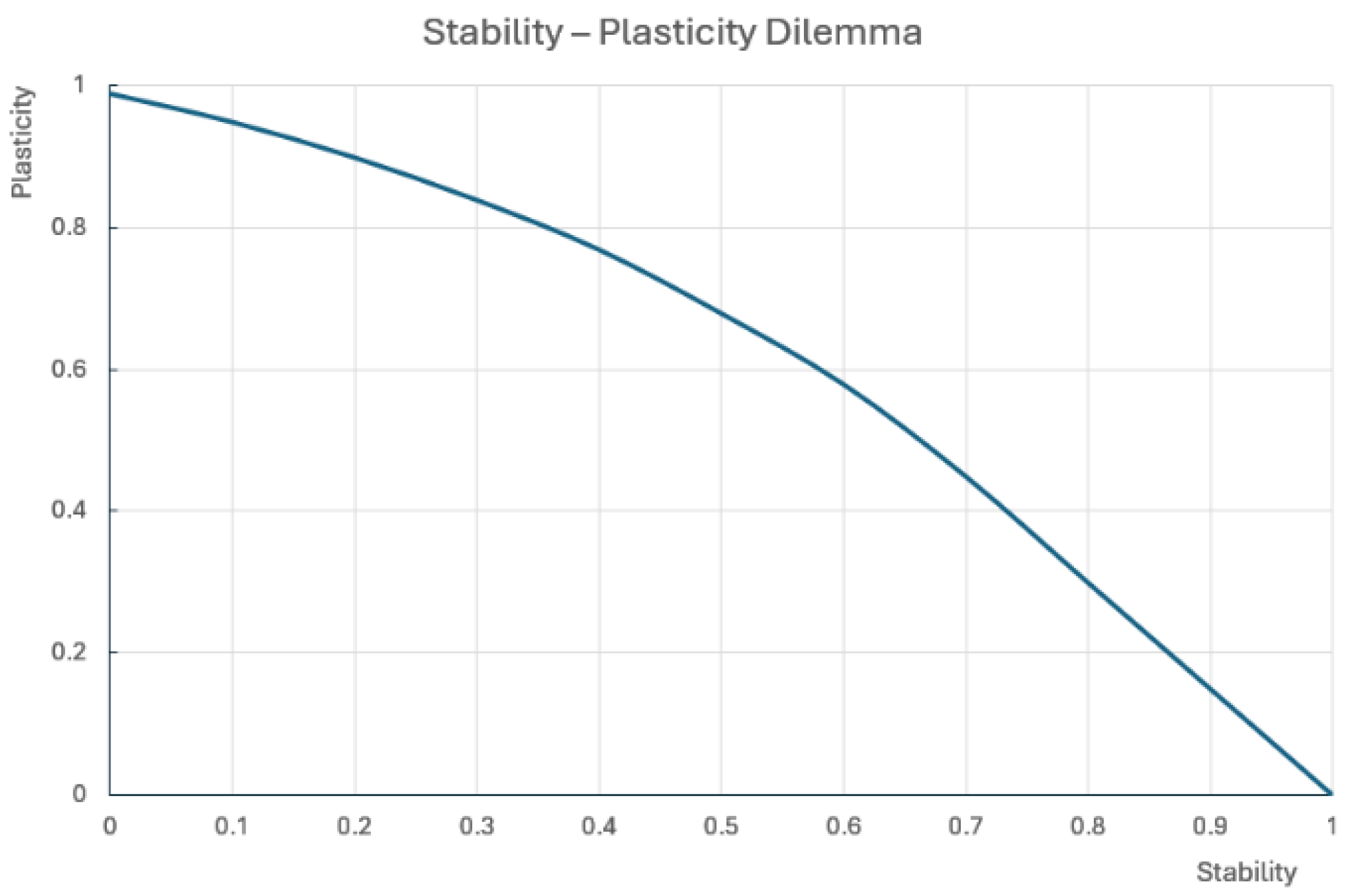

5.2. The Plasticity–Stability Dilemma and Catastrophic Forgetting

5.3. Core Continual Learning Strategies

5.4. Regularization-Based Methods

5.5. Replay-Based Methods

5.6. Architecture-Based and Parameter Isolation Methods

5.7. Continual Learning Scenarios in PHM Applications

5.8. Challenges and Limitations of Continual Learning for PHM

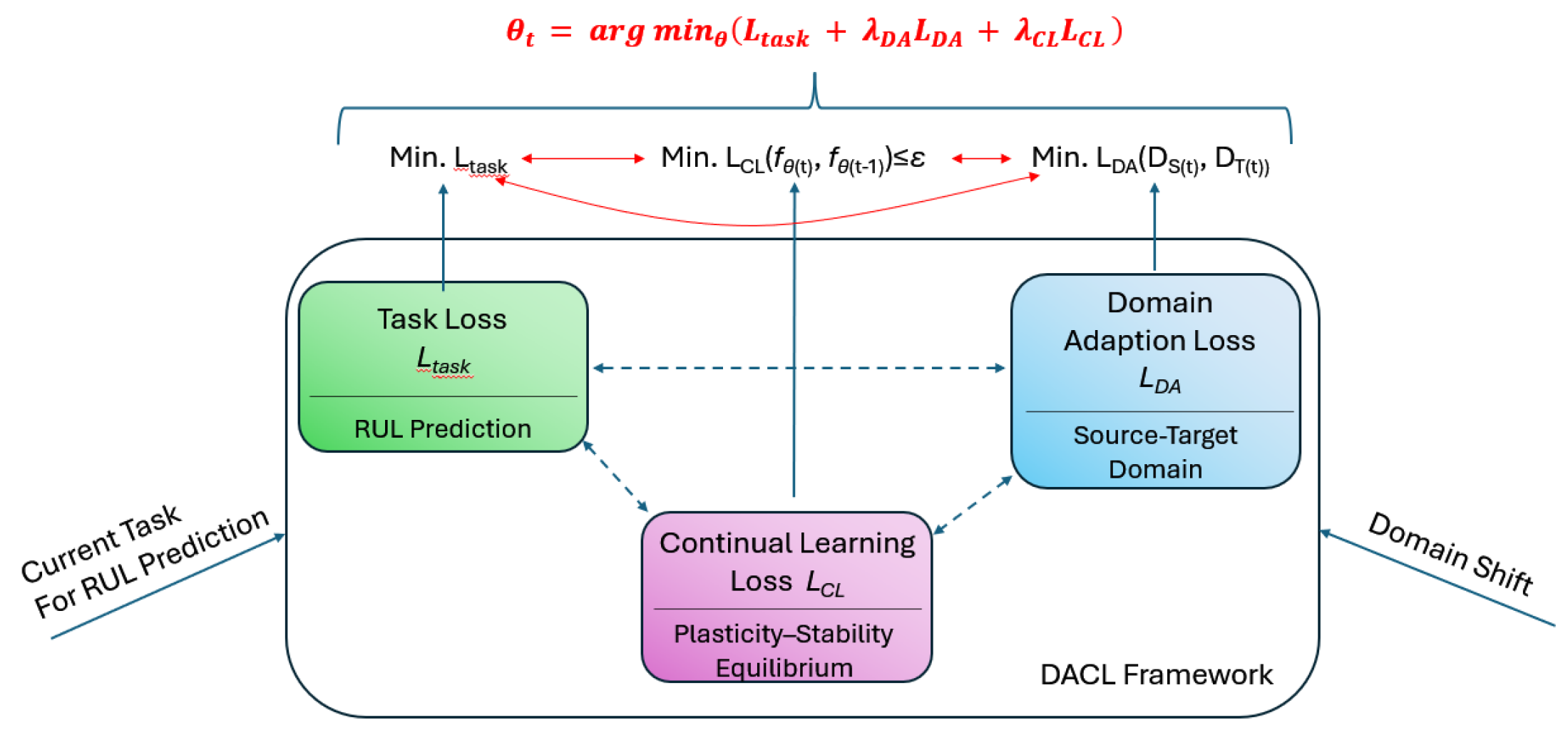

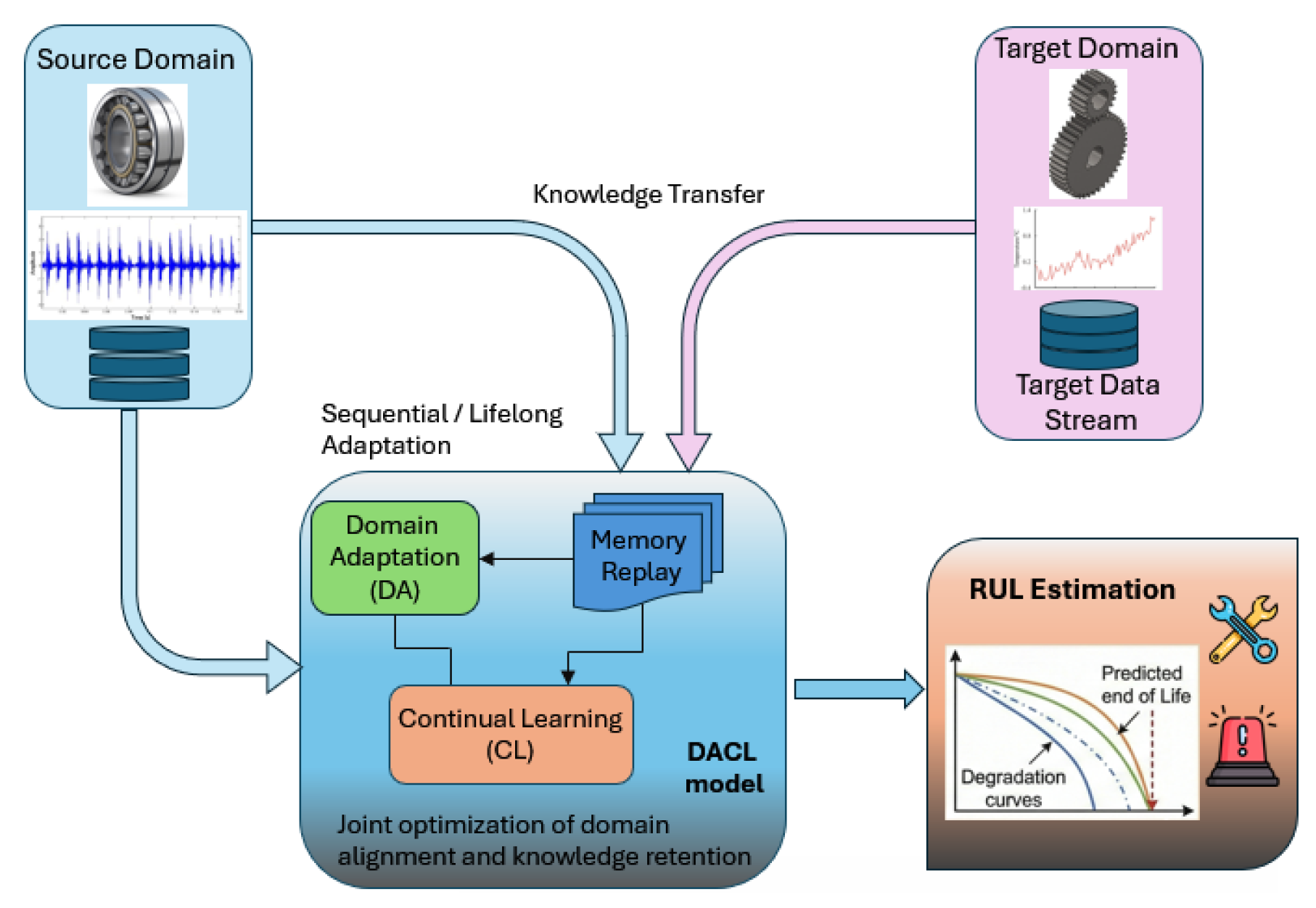

6. The Convergence: Domain-Adaptive Continual Deep Learning for Bearing Prognosis and RUL Estimation

6.1. Motivation for Integrating DA and CL in Real-World PHM Applications

6.2. Problem Formulation

6.3. Integrated Learning Frameworks

6.4. Inter–Intra Task Feature Alignment and Attention Mechanisms [7], [41], [52], [60]

6.5. Pseudo-Labelling and Center-Aware Adaptation Strategies [25], [36], [41], [52]

6.6. Rehearsal-Based Memory Mechanisms [5], [16], [35], [41], [52], [53]

6.7. A Review of Domain-Adaptive Continual Learning Approaches

| Author | Application / Dataset | Model and Method | Integrated Learning Framework | Key Contributions | Limitations |
| --- | --- | --- | --- | --- | --- |
| Mao et al. (2025) [36] | Online RUL prediction for rolling element bearings under varying and unknown operating conditions (XJTU-SY dataset, IEEE PHM2012 PRONOSTIA platform dataset, UNSW bearing dataset) | Deep Incremental Regression TL method: Denoising Autoencoder (DAE) for vibration signal preprocessing; multi-layer LSTM feature extractor; fully connected regression head for RUL prediction; Wiener process-assisted incremental update mechanism for online deep transfer learning (DTL) | Built around Wiener process-assisted pseudo-labelling of online data to mitigate drift in operating conditions | Data–model interactive prognostics framework: essential degradation information is extracted from offline data, and online dynamic tendency information is provided by the Wiener process. Full-life data of the target machine are not required. Robust under unknown and varying operating conditions. | Initially requires historical full-life degradation data from similar types of machines. The Wiener process formulation assumes consistent degradation evolution. LSTM pre-training and feature adaptation increase offline training costs. The authors did not test the model on real industrial data. |
| Xuan et al. (2025) [61] | Fault prognostics and RUL prediction for predictive maintenance of aircraft turbofan engines under evolving operating contexts (NASA C-MAPSS dataset) | Bayesian Neural Network (BNN) with variational inference for probabilistic RUL regression | Regularization-based lifelong learning with variance-based importance weighting, stability loss, variance boosting, and decoupled optimization (no memory, no replay) | Introduces a Bayesian lifelong learning framework for fault prognostics. Training with variance boosting and decoupled optimization yields a better stability–plasticity trade-off. Enables privacy-preserving continual prognostics, since previous datasets are not stored. | Higher computational cost, because the BNN trains more slowly than deterministic networks. The models were updated in sequential batches rather than on online streaming data. The method has not been validated on real industrial data. |
| Guo & Sun (2025) [26] | Gas-path fault diagnosis of aero-engines under dynamic and non-stationary operating conditions (high-fidelity physics-based turbofan simulation dataset from Xi'an Jiaotong University) | Continual domain adaptation framework (RCDAF): 1D CNN-based fault classifier with continuous unsupervised domain adaptation; Controllable Batch Normalization (CBN) mechanism | Memory-assisted continual domain adaptation with pseudo-labelling, supervised and pseudo-supervised contrastive learning (SCL + PSC), dual knowledge distillation, a rehearsal buffer, controllable batch normalisation, and restraining-past regularisation | Robust continual domain adaptation framework for aero-engine PHM. Introduces SCL plus PSC for robust semantic consistency under domain shift. Demonstrates forgetting-resistant knowledge retention. Robust under noise and sensor drift. | Relies on pseudo-labels, so performance may degrade when they become unreliable. Multi-objective optimisation and replay introduce additional training costs. Large, sudden data shifts can temporarily destabilise adaptation. |
| Zhao et al. (2025) [5] | Rotating machinery fault diagnosis (HUST, WT gearbox, experimental, and rolling mill experimental datasets) | Dual shared space-driven domain continuous learning network (DSS-DCL), integrating a shared parameter space constraint with parameter distribution uniformity, and a shared feature space constraint with multi-perspective knowledge distillation (LMPKD) | Rehearsal through experience replay with a compact memory | Proposes DSS-DCL, a unified model for domain-incremental fault diagnosis in dynamic environments. Introduces the parameter distribution uniformity loss (LPD) to expand the shared feasible parameter region and improve the stability–plasticity trade-off. Designs multi-perspective knowledge distillation (LMPKD) to preserve multiscale representations and logits across domains. Employs a focal loss to mitigate the imbalance between new-domain data and replayed samples. | Limited by memory storage. Joint training over the evolving working conditions remains the performance upper bound. Although the memory is compact, the model depends directly on fine-tuning multiple hyperparameters for LPD, LMPKD, and the focal loss. |
| Kim et al. (2025) [16] | RUL prediction under varying and time-varying operating conditions (real-world machine: Robot Milling Dataset) | Fisher-informed continual learning (FICL); Sharpness-Aware Minimization (SAM) | Regularisation-based continual learning that preserves old knowledge without explicitly storing old data; this is not classic rehearsal. | A single predictive model for RUL prediction under varying operating conditions, without the need to save previously trained models. Retains RUL prediction performance for previous operating conditions. | Currently validated only for single-tool RUL prediction. Assumes that RUL decreases linearly with time. The theoretical relationship between FICL's loss-landscape analysis and "mode connectivity" in deep learning optimization has not yet been fully explored. |
| Li et al. (2025) [41] | Bearing RUL prediction, specifically addressing wind turbines, where working conditions are harsh and variable (XJTU-SY dataset, IEEE PHM2012 PRONOSTIA platform dataset, bearings from on-site wind turbine high-speed shafts) | Trend-Constrained Incremental Transfer Prognosis (TCITP): Demodulation Feature Fusion (DFF)-based health indicator construction; trend-constrained transfer learning | Rehearsal: experience replay-based incremental learning, in which previously learned knowledge is retained and replayed during incremental updates | Proposes a novel feature-fusion health indicator to predict bearing aging. Presents a trend-constrained transfer learning method for state estimation. Develops an incremental prognosis method that updates the prognostic model with newly acquired transfer pairs and reviews previous knowledge through experience replay. | Cannot handle abrupt degradation. Generalisation to unlabelled target domains is limited. Assumes that degradation trends across domains are comparable and monotonic. |
| Guo et al. (2025) [15] | RUL prediction for rolling element bearings under varying operating conditions and degradation trends (PHM2012 PRONOSTIA platform dataset) | Stage-related Online Incremental Adversarial Domain Adaptation (SR-OIADA) transfer learning algorithm based on DANN: 1D CNN feature extractor (TCNN), domain discriminator with a gradient reversal layer, RUL regression predictor, and unsupervised stacked autoencoder (SAE) for online degradation stage detection | Buffer rehearsal: historical online samples are retained and reused at each checkpoint via incremental learning to prevent forgetting. Stage-related adversarial domain adaptation aligns source and target features (inter-domain) and stage-to-stage degradation representations (intra-process). | Proposes an online degradation stage division algorithm that adaptively detects the health status of bearings monitored online. Proposes SR-OIADA, which integrates incremental learning, transfer learning, and adversarial domain adaptation. | Update times were defined empirically; no adaptive checkpoint-triggering mechanism is proposed. The HI stage division is fixed at three stages. |
| Zeng et al. (2024) [24] | Online self-driven RUL prediction for rolling bearings under cross-condition and cross-machine scenarios with large distribution divergence (PHM2012 PRONOSTIA platform dataset, XJTU-SY bearing dataset) | Bayesian Domain-Adversarial Regression Adaptation (BDRA): Deep Autoencoder (DAE) feature extractor, tensorized domain discriminator (DANN-style), Bayesian LSTM regressor with Variational Inference (VI), and Tucker tensor decomposition for degradation representation | Pseudo-labelling: online target data chunks receive pseudo-labels generated by the Bayesian pre-trained network, which are used for incremental regression updates. Domain-adversarial training aligns source and target (inter-domain) features using a tensorized discriminator, and monotonicity-aware self-supervised learning aligns intra-degradation trends between successive online chunks. | Proposes a bidirectional information transfer mechanism for regression transfer learning in an online scenario, using the core tensor for self-supervised information and Bayesian VI for pseudo-label information. Introduces a framework for self-driven RUL transfer prediction in open environments. Enables lightweight prediction and reduces error accumulation without run-to-failure data from the target machines. Provides confidence intervals for the predictions, which is of clear practical value. | High model complexity. Because a Gaussian distribution is used to estimate the regressor output, the prediction intervals may not be sufficiently reasonable; the authors note that Weibull modelling better represents failure behaviour. Performance depends on selecting an appropriate online chunk size. |
| Zeng et al. (2024) [62] | Online RUL prediction of rotating machinery (rolling bearings) under unknown and drifting working conditions (PHM2012 PRONOSTIA platform dataset, XJTU-SY bearing dataset) | Tensor Domain-Adversarial Regression Adaptation with Interpretability (TDARA): Stacked Denoising Autoencoder (SDAE) for feature extraction; tensor representation module that captures degradation structure and temporal correlations; tensor domain discriminator that aligns source and target feature distributions using an adversarial loss; LSTM regression predictor trained on source RUL labels | Pseudo RUL labels generated by a pre-trained model are assigned to online target data blocks and used for incremental regression updates. Tensor domain-adversarial training aligns source and target (inter-domain) features, and trend regularisation aligns the intra-degradation temporal structure across blocks. | Integrates a DANN with Tucker tensor decomposition to preserve the degradation structure while aligning the domains. Uses Tucker decomposition to identify key features and analyse interpretability at the geometric level. Extracts degradation trend information from the core tensor and establishes multi-scale evaluation criteria for transferability. | Uses an incremental continual learning method that is not evaluated on multitask CL benchmarks. Risks gradual drift, because knowledge is not explicitly retained through replay or memory mechanisms. Trend modelling assumes monotonic degradation, which may not generalise to all assets. |
| Xie et al. (2024) [60] | Online RUL prediction with uncertainty quantification for continuously running machinery (XJTU-SY bearing dataset, NCWP journal-bearing dataset, C-MAPSS turbofan engine dataset) | Incremental Contrast Hybrid Model (ICHM), combining a Contrastive Learning Transformer (CLformer) that predicts the degradation trend and increments online with an Enhanced Generalized Wiener Process (EGWP) that calculates the RUL probability density function | Incremental contrastive learning that aligns future latent features with actual future latent features (positive pairs), enforcing temporal, intra-trajectory consistency under distribution drift | ICHM is updated in real time to mitigate prediction offset and to align past and future latent representations. The model adapts online in real time without run-to-failure retraining. | Uses RMS as the HI and a monotonic trend for RUL thresholding, which may be less suitable for non-monotonic degradation. Appears sensitive to the choice of incremental learning (IL) and sliding-window hyperparameters. The authors note that incremental data from very different domains may harm the final prediction in cross-domain deployment. |
| Liu et al. (2024) [27] | Online industrial fault prognosis and RUL prediction under dynamic operating conditions using task-free continual learning, focusing on real-time predictive maintenance with non-stationary sensor data streams (C-MAPSS, N-CMAPSS) | Online Task-Free Continual Fault Prognosis (OTFCFP) framework based on a Continual Neural Dirichlet Process Mixture (CN-DPM) model: Bayesian nonparametric Dirichlet process mixture; attention-based Temporal Convolutional Network (TCN) for RUL regression; GRU-based Variational Autoencoder (VAE) for task identification and density estimation | Expansion-based task-free continual learning via a Bayesian mixture of experts (CN-DPM) | Proposes an online task-free continual fault prognosis paradigm for practical industrial scenarios. Introduces a CN-DPM-based continual learning framework that automatically identifies task boundaries and allocates new experts to them. Integrates a mixture of experts with Bayesian nonparametric learning to avoid catastrophic forgetting. | Assumes the same sensor space, so distribution shifts are handled only through expert expansion. No domain adaptation or feature alignment is used. Requires labelled RUL data for training. |
| Li et al. (2024) [57] | Digital Twin (DT) for rolling bearings with online dynamic evolution and RUL prediction under varying working conditions (PHM2012 PRONOSTIA) | End-edge-cloud DT architecture with a Condition-Adaptive Dynamic Continual Learning Digital Twin Model (CADCL-DTM) | Regularisation-based continual learning using EWC with a condition-adaptive penalty | Proposes a realistic end-edge-cloud DT architecture for rolling bearings. Introduces CADCL-DTM, a condition-adaptive continual learning DT model. Designs different regularisation penalties for intra-condition and inter-condition task transitions, enabling the DT to continuously learn new working conditions while mitigating catastrophic forgetting. | No standard domain adaptation is performed; condition changes are handled via regularisation rather than explicit distribution alignment. Requires manual hyperparameter tuning: different α values were selected manually for the intra-condition (α = 500) and inter-condition (α = 150) scenarios. |
| Li et al. (2023) [32] | Industrial fault diagnosis of rotating machinery using industrial streaming data under varying working conditions (private industrial dataset collected from a transmission test rig) | Deep Continual Transfer Learning with Dynamic Weight Aggregation (DCTL-DWA); Adversarial Continual Domain Adaptation (ACDA) | Continual transfer learning with rehearsal memory, DWA stability–plasticity control, DANN-based domain adaptation, and triplet metric alignment | Proposes the DCTL-DWA framework for processing industrial streaming data. Learns the optimal stability–plasticity trade-off in each phase using DWA. Maintains diagnostic performance across various speeds and loads owing to the proposed ACDA. | Tested only on private test-rig data. Does not perform real-time online learning; instead, it uses a session-based approach. |
| Zhuang et al. (2023) [7] | Online RUL prediction of rolling bearings under unknown online operating conditions (PHM2012 PRONOSTIA, auxiliary ABLT platform) | Multi-source Adversarial Online Regression (MAOR) with a three-stage pipeline: pseudo-domain extension via an encoder–decoder that adaptively generates multiple pseudo-domains from a single source; two-stage Multi-source Adversarial Domain Adaptation (MADA); offline–online prediction framework | Pseudo-labels are predicted to generate pseudo-domains and to guide adaptive weighting and regression training. Domain-level and feature-level adaptations embedded in MADA reduce the marginal divergence, and adversarial multi-source alignment yields domain-invariant features. | Proposes MAOR for RUL prediction under unknown online conditions. Introduces pseudo-label-guided pseudo-domain extension with adaptive weighting and domain-level adaptation. Designs a two-stage MADA that embeds feature-level adaptation to mitigate marginal feature divergence, which is often ignored in global adversarial alignment. Develops an offline–online prediction scheme with dynamic adaptive weighting for real-time updates. | Performance degrades and training becomes unstable as the number of pseudo-domains increases. Multiple hyperparameters require careful tuning. The model is complex, combining pseudo-domain generation, two-stage adversarial training, and online updating, which increases training and inference costs. |
| Mao et al. (2023) [25] | Online cross-machine and cross-condition RUL prediction with unlabelled streaming target data and significant divergence in degradation characteristics (PHM2012 PRONOSTIA, XJTU-SY, in-house roller-bearing test rig) | Self-Supervised Deep Tensor Domain-Adversarial Regression Adaptation (SD-TDA-RA) in two stages: (1) a pretrained tensor domain-adversarial network with a feature extractor, tensorized domain discriminator, Tucker decomposition, source regression predictor, and tendency regularizer; (2) online self-supervised fine-tuning with pseudo-labels and a self-supervised monotonicity loss | Pseudo-labels generated by the pretrained network for each online block are used for fine-tuning. Features are aligned through domain-adversarial (DANN) alignment on tensor core representations and tendency regularization via MMD. | Proposes a tensor-based regression domain-adversarial adaptation for online RUL prediction across machines. Introduces a tensorized domain discriminator to capture temporal degradation. Designs bidirectional information transfer: horizontal (pseudo-supervised across machines) and vertical (self-supervised from target monotonicity). Adds a tendency regularizer to maintain degradation trends while aligning the domains. Develops a lightweight online fine-tuning strategy. | Complex, since it incorporates tensor decomposition (MDT + Tucker) and alternating minimisation. Multiple hyperparameters require careful fine-tuning. Knowledge retention relies on alignment and self-supervision rather than memory replay. |
| Li and Jha (2024) [56] | Smart-healthcare disease detection using wearable medical sensors (WMS) under domain-incremental adaptation (CovidDeep, DiabDeep, MHDDeep) | Past-Agnostic Generative Replay (PAGE), comprising a Synthetic Data Generation (SDG) module; pseudo-labelling of the new real-data domain; a multi-dimensional Gaussian Mixture Model (GMM) for probability density estimation in the SDG; and an Extended Inductive Conformal Prediction (EICP) method that generates confidence scores and credibility values for disease detection results | Generative replay with synthetic samples generated from the GMM, combined with pseudo-labelling to create synthetic samples from the new real data | Proposes PAGE, a past-agnostic generative replay framework for domain-incremental adaptation that enables continual learning without storing past domain data. Introduces SDG with GMM density estimation for high-quality synthetic tabular data generation. Integrates EICP for confidence and credibility estimation. | Performance relies on the GMM's ability to capture the new domain distribution. Targets only domain-incremental adaptation, not class- or task-incremental scenarios. |
| de Carvalho et al. (2024) [52] | Vision-based unsupervised cross-domain task-incremental learning (MNIST ↔ USPS, VisDA-2017, Office-31, Office-Home, DomainNet) | Cross-Domain Continual Learning (CDCL), a transformer-based framework that unifies continual learning and UDA: inter–intra-task cross-attention that aligns category-level features between source and target and retains past alignment; intra-task center-aware pseudo-labelling; rehearsal with logit replay that stores selected source–target pairs; a backbone consisting of a compact convolutional tokenizer and transformer encoder | Rehearsal through logit replay to maintain task boundaries; pseudo-labelling of unlabelled target data to form confident cross-domain pairs; inter/intra-task feature alignment that consolidates prior alignment by freezing the core parameters while learning new tasks | Introduces CDCL, a unified framework for unsupervised cross-domain task-incremental learning. Proposes inter–intra-task cross-attention to maintain and extend category-level domain alignment over time, reducing catastrophic forgetting of feature alignment. Designs an intra-task centre-aware pseudo-labelling pipeline to select accurate cross-domain pairs and suppress noise. Incorporates sample rehearsal and logit replay to consolidate past knowledge. | Relies on a fixed rehearsal buffer. Focuses on vision UDA and was not evaluated on non-visual applications. Task-specific hyperparameters accumulate over time. |
| Rakshit et al. (2024) [35] | Lifelong visual recognition under domain shift with unknown classes (Office-Home, DomainNet, UPRN-RSDA remote sensing dataset) | Incremental Open-Set Domain Adaptation (IOSDA); Multi-Domain and Class-guided GAN (MDCGAN); Multi-output Ensemble Open-set DA (MEOSDA) | Previous domains are reconstructed from synthetic data (rehearsal), and pseudo-labelled data are used to train the next iteration of the MDCGAN. | Introduces the novel problem formulation of Incremental Open-Set Domain Adaptation (IOSDA). Integrates replay, pseudo-labelling, and open-set adversarial DA in a unified lifelong framework. Releases UPRN-RSDA, a new IOSDA benchmark for remote sensing. | Performance relies on the MDCGAN's ability to accurately model prior domains; poor generation may propagate errors. Feature alignment is implicit via adversarial training rather than explicit inter/intra-class metric learning. Multi-head classifiers grow linearly with the number of domains, which can eventually cause memory and inference overheads. |
| Nguyen-Meidine et al. (2022) [53] |

Incremental multi-target unsupervised domain adaptation (MTDA) for object detection, motivated by real-world scenarios such as multi-camera video surveillance and adverse weather conditions (PascalVOC, Cityscapes, and Wildtrack multi-camera datasets) | Multi-target Domain Adaptation with Domain Transfer Module (MTDA-DTM). Instead of storing past target data or duplicating detectors, MTDA-DTM uses a lightweight Domain Transfer Module (DTM) that transfers source images into a joint representation space of all previously learned target domains. | Generative replay is performed via the DTM: past target distributions are approximated by transforming source images into pseudo-target images. | Introduces MTDA-DTM, a cost-efficient incremental MTDA framework for object detection. Proposes a novel Domain Transfer Module (DTM) that maps source images into a joint representation of all previous target domains, enabling incremental adaptation without storing previous target data or duplicating detectors. Prevents catastrophic forgetting via pseudo-target replay. |

The proposed model is not directly applicable to time-series or prognostic domains. It requires careful tuning of the replay weight α for different domain shifts. Image-space pseudo-samples are not visually realistic. |

| Truong et al. (2024) [49] |

Continual unsupervised domain adaptation (UDA) for semantic scene segmentation in self-driving cars (GTA5 (synthetic, labelled source), Cityscapes (real, unlabelled target), IDD (Indian Driving Dataset), Mapillary Vistas) | Continual Unsupervised Domain Adaptation (CONDA), Bijective Maximum Likelihood (BML) loss, Bijective Network for Structured Output Modelling | Pseudo-labels generated by an EMA teacher model are used for the unsupervised segmentation loss. Moreover, alignment is performed in the prediction-distribution space using the Bijective Maximum Likelihood loss. | Presents CONDA, a novel continual UDA approach for semantic scene segmentation. It formulates continual domain adaptation as regularising the shift between source and target prediction distributions, introducing the Bijective Maximum Likelihood loss to avoid catastrophic forgetting. The bijective network captures global structural information and represents segmentation distributions. |

The model was validated only on semantic segmentation datasets. Training the invertible networks is computationally demanding. Its performance depends on the pseudo-labels generated by the EMA teacher. No explicit feature-space alignment is performed; alignment is conducted solely in the output-distribution space. |
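Several rows in the table above rely on a bounded rehearsal memory combined with replayed model outputs (for example, CDCL's sample rehearsal and logit replay, whose fixed rehearsal buffer is listed as a limitation). The sketch below illustrates that general idea under explicit assumptions: reservoir sampling is assumed as the buffer-update rule, the replay loss is assumed to be the mean squared error between stored and current outputs (similar in spirit to dark experience replay), and a hypothetical weight `alpha` combines it with the current-domain loss. None of these choices are taken from the cited papers. For RUL regression, the stored "logits" would simply be the model's predicted health indicator or RUL values. `ReservoirBuffer`, `logit_replay_loss`, `total_loss` and the two toy models are hypothetical names.

```python
import random
from dataclasses import dataclass, field


@dataclass
class ReservoirBuffer:
    """Fixed-capacity rehearsal buffer filled by reservoir sampling (assumed rule).

    Each entry stores an input sample together with the outputs ("logits")
    the model produced when the sample was first seen, so that those outputs
    can later be replayed as soft targets.
    """
    capacity: int
    seed: int = 0
    items: list = field(default_factory=list)
    n_seen: int = 0

    def __post_init__(self):
        self._rng = random.Random(self.seed)

    def add(self, x, logits):
        self.n_seen += 1
        if len(self.items) < self.capacity:
            self.items.append((x, list(logits)))
        else:
            # Keep the new sample with probability capacity / n_seen, so every
            # sample seen so far is equally likely to remain in the buffer.
            j = self._rng.randrange(self.n_seen)
            if j < self.capacity:
                self.items[j] = (x, list(logits))

    def sample(self, k):
        """Draw up to k stored entries without replacement."""
        return self._rng.sample(self.items, min(k, len(self.items)))


def logit_replay_loss(model, replayed):
    """Mean squared error between current outputs and stored (old) outputs."""
    if not replayed:
        return 0.0
    total = 0.0
    for x, old_logits in replayed:
        new_logits = model(x)
        total += sum((a - b) ** 2 for a, b in zip(new_logits, old_logits)) / len(old_logits)
    return total / len(replayed)


def total_loss(task_loss, replay_loss, alpha=0.5):
    """Current-domain loss plus a weighted replay term (alpha is hypothetical)."""
    return task_loss + alpha * replay_loss


# Toy stand-in models (hypothetical): the "old" model at the end of an earlier
# domain, and a "new" model whose first output has drifted by 0.1.
def old_model(x):
    return [x, 2.0 * x]


def new_model(x):
    return [x + 0.1, 2.0 * x]


buf = ReservoirBuffer(capacity=100, seed=42)
for step in range(1000):
    x = step / 1000
    buf.add(x, old_model(x))

replayed = buf.sample(32)
replay = logit_replay_loss(new_model, replayed)
loss = total_loss(task_loss=0.2, replay_loss=replay, alpha=0.5)
```

Because the buffer size is fixed, each additional domain dilutes the share of stored exemplars available for earlier domains, and the weight given to the replay term must be balanced against the current-domain loss, which echoes the sensitivity to the replay weight α noted for MTDA-DTM.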

6.8. Advantages of Integrated DA–CL Approaches for Bearing RUL Prediction

6.9. Limitations and Practical Implementation Challenges


- Scalability and memory constraints. Replay-based methods mitigate forgetting more effectively but face limitations owing to their memory and computational demands, which become more pronounced over long operational lifetimes with many sequential domains.


- Moreover, task-incremental learning approaches face a theoretical limitation: their parameter count grows without bound as new tasks are added.

- Data quality and labelling challenges. Online frameworks must operate on imperfect data without ground-truth labels for the target domain. Moreover, current models focus primarily on progressive run-to-failure degradation and cannot effectively handle abrupt degradation [5], [25], [36], [62].

- Limited interpretability of adaptive and memory-based mechanisms. The models reviewed above are adaptive, and their memory-based strategies substantially improve continual and domain-adaptive learning performance. However, they often operate as black boxes, offering limited insight into how past knowledge is preserved and how new domain information is integrated [41], [62].

- Lack of standardised benchmarks. Finally, although previous studies have explored the integration of DA and CL for PHM, there is currently no standardised benchmark that jointly evaluates both capabilities under a unified experimental protocol.

Addressing these challenges is essential for translating integrated DA–CL approaches from academic studies into industrial applications.
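The memory and parameter-growth limitations listed above can be made concrete with simple arithmetic. The sketch below is a hypothetical illustration, not drawn from any cited study: it assumes float32 storage, a 2560-point two-channel vibration window per stored exemplar, a quota of 200 exemplars per domain, a fixed global budget of 2000 exemplars, and a 10,000-parameter head per task on a 1,000,000-parameter shared backbone. It compares (i) a replay memory that keeps a fixed quota per domain, whose size grows linearly with the number of domains, (ii) a fixed global budget, whose size stays constant while each domain's share shrinks, and (iii) a multi-head model whose parameter count grows with every new task. All function names are hypothetical.

```python
def replay_memory_bytes(n_domains, per_domain, window_len, n_channels, bytes_per_value=4):
    """Storage for a buffer that keeps a fixed quota of raw windows per domain."""
    bytes_per_sample = window_len * n_channels * bytes_per_value
    return n_domains * per_domain * bytes_per_sample


def per_domain_share(budget_samples, n_domains):
    """Exemplars left per domain when a fixed global budget is split evenly."""
    return budget_samples // n_domains


def multihead_params(backbone_params, head_params, n_tasks):
    """Parameter count of a shared backbone plus one output head per task."""
    return backbone_params + n_tasks * head_params


# Illustrative (assumed) settings, not taken from any cited study.
WINDOW, CHANNELS, QUOTA, BUDGET = 2560, 2, 200, 2000
for t in (1, 10, 50):
    mib = replay_memory_bytes(t, QUOTA, WINDOW, CHANNELS) / 2**20
    share = per_domain_share(BUDGET, t)
    params = multihead_params(1_000_000, 10_000, t)
    print(f"domains={t:3d}  quota buffer={mib:8.1f} MiB  "
          f"budget share={share:5d}/domain  multi-head params={params:,}")
```

Under these assumptions, going from 1 to 50 domains grows the quota-based buffer from about 3.9 MiB to about 195 MiB, while the fixed-budget alternative keeps memory constant but leaves only 40 exemplars per domain instead of 2000. This is the trade-off between memory cost and forgetting mitigation that lifelong deployment has to resolve.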

7. Research Gaps - Challenges and Future Research Directions

7.1. Assumptions of Monotonicity and Progressive Degradation Remain Restrictive

7.2. Robust Learning with Severely Limited or Noisy Supervision Is Challenging

7.3. Scalability and Memory Efficiency Remain Major Bottlenecks for Lifelong Deployment

7.4. Dynamic Domain Discovery and Task-Free Adaptation Remain Largely Unsolved Problems

7.5. Model Interpretability Remains Limited

7.6. The Lack of Standardized Benchmarks and Evaluation Protocols Hinders Reproducible Assessment

8. Conclusions

References

- Wang, T.; Guo, D.; Sun, X.-M. ‘Contrastive Generative Replay Method of Remaining Useful Life Prediction for Rolling Bearings’. IEEE Sensors Journal 2023, vol. 23(no. 19), 23893–23902.

- Ren, X.; Qin, Y.; Li, B.; Wang, B.; Yi, X.; Jia, L. ‘A core space gradient projection-based continual learning framework for remaining useful life prediction of machinery under variable operating conditions’. Reliability Engineering and System Safety 2024, vol. 252.

- Apeiranthitis, S.; Zacharia, P.; Chatzopoulos, A.; Papoutsidakis, M. ‘Predictive Maintenance of Machinery with Rotating Parts Using Convolutional Neural Networks’. Electronics 2024, vol. 13(no. 2), 460.

- Apeiranthitis, S.; Drosos, C.; Papoutsidakis, M.; Chatzopoulos, A. ‘The Importance of Proper Planning and Machinery Maintenance of Merchant Vessels’. Hellenic Institute of Marine Technology 2025, no. 19th.

- Zhao, S.; Bai, Y.; Hou, C. ‘A dual shared space-driven domain continuous learning network for rotating machinery fault diagnosis in dynamic environments’. Meas. Sci. Technol. 2025, vol. 36(no. 9), 096140.

- Sun, W.; Wang, H.; Liu, Z.; Qu, R. ‘Method for Predicting RUL of Rolling Bearings under Different Operating Conditions Based on Transfer Learning and Few Labeled Data’. Sensors 2023, vol. 23(no. 1).

- Zhuang, J.; Cao, Y.; Jia, M.; Zhao, X.; Peng, Q. ‘Remaining useful life prediction of bearings using multi-source adversarial online regression under online unknown conditions’. Expert Systems with Applications 2023, vol. 227.

- Chou, C.-B.; Lee, C.-H. ‘Generative Neural Network-Based Online Domain Adaptation (GNN-ODA) Approach for Incomplete Target Domain Data’. IEEE Transactions on Instrumentation and Measurement 2023, vol. 72, 1–10.

- Kumar et al. ‘Entropy-based domain adaption strategy for predicting remaining useful life of rolling element bearing’. Engineering Applications of Artificial Intelligence 2024, vol. 133.

- Cao, Y.; Jia, M.; Ding, P.; Zhao, X.; Ding, Y. ‘Incremental Learning for Remaining Useful Life Prediction via Temporal Cascade Broad Learning System With Newly Acquired Data’. IEEE Transactions on Industrial Informatics 2023, vol. 19(no. 4), 6234–6245.

- Mao, W.; Wang, J.; Feng, K.; Zhong, Z.; Zuo, M. ‘Dynamic modeling-assisted tensor regression transfer learning for online remaining useful life prediction under open environment’. Reliability Engineering and System Safety 2025, vol. 263.

- Wang, T.; Liu, H.; Guo, D.; Sun, X.-M. ‘Continual Residual Reservoir Computing for Remaining Useful Life Prediction’. IEEE Trans. Ind. Inf. 2024, vol. 20(no. 1), 931–940.

- Zhou, J.; Qin, Y. ‘A Continuous Remaining Useful Life Prediction Method With Multistage Attention Convolutional Neural Network and Knowledge Weight Constraint’. IEEE Transactions on Neural Networks and Learning Systems 2025, vol. 36(no. 7), 11847–11860.

- Ding, N.; Li, H.; Xin, Q.; Wu, B.; Jiang, D. ‘Multi-source domain generalization for degradation monitoring of journal bearings under unseen conditions’. Reliability Engineering and System Safety 2023, vol. 230.

- Guo, W.; Li, F.; Zhang, P.; Luo, L. ‘A stage-related online incremental transfer learning-based remaining useful life prediction method of bearings’. Applied Soft Computing 2025, vol. 169.

- Kim, G.; et al. ‘Fisher-informed continual learning for remaining useful life prediction of machining tools under varying operating conditions’. Reliability Engineering and System Safety 2025, vol. 253, 110549.

- Lu, X.; Yao, X.; Jiang, Q.; Shen, Y.; Xu, F.; Zhu, Q. ‘Remaining useful life prediction model of cross-domain rolling bearing via dynamic hybrid domain adaptation and attention contrastive learning’. Computers in Industry 2025, vol. 164.

- ‘1-s2.0-S0031320322002527-main.pdf’.

- Chen, Z.; Chen, J.; Liu, Z.; Liu, Y. ‘Mutual-learning based self-supervised knowledge distillation framework for remaining useful life prediction under variable working condition-induced domain shift scenarios’. Reliability Engineering and System Safety 2025, vol. 264.

- Benatia, M. A.; Hafsi, M.; Ben Ayed, S. ‘A continual learning approach for failure prediction under non-stationary conditions: Application to condition monitoring data streams’. Computers & Industrial Engineering 2025, vol. 204, 111049.

- Shang, J.; Xu, D.; Li, M.; Qiu, H.; Jiang, C.; Gao, L. ‘Remaining useful life prediction of rotating equipment under multiple operating conditions via multi-source adversarial distillation domain adaptation’. Reliability Engineering and System Safety 2025, vol. 256.

- Hurtado, J.; Salvati, D.; Semola, R.; Bosio, M.; Lomonaco, V. ‘Continual Learning for Predictive Maintenance: Overview and Challenges’. Intelligent Systems with Applications 2023, vol. 19, 200251.

- Zhang, T.; Wang, H. ‘Quantile regression network-based cross-domain prediction model for rolling bearing remaining useful life’. Applied Soft Computing 2024, vol. 159.

- Zeng, P.; Mao, W.; Li, Y.; Wang, N.; Zhong, Z. ‘Bayesian Domain-Adversarial Regression Adaptation: A New Self-Driven Remaining Useful Life Prediction Method Across Different Machines With Uncertainty Quantification’. IEEE Sensors Journal 2024, vol. 24(no. 20), 32673–32683.

- Mao, W.; Liu, K.; Zhang, Y.; Liang, X.; Wang, Z. ‘Self-Supervised Deep Tensor Domain-Adversarial Regression Adaptation for Online Remaining Useful Life Prediction Across Machines’. IEEE Transactions on Instrumentation and Measurement 2023, vol. 72.

- Guo, C.; Sun, Y. ‘A Robust Continual Domain Adaptation Framework for Gas Path Fault Diagnosis of Aero-Engine under Dynamic Operating Conditions’. IEEE Trans. Aerosp. Electron. Syst. 2025, 1–14.

- Liu, C.; Zhang, L.; Zheng, Y.; Jiang, Z.; Zheng, J.; Wu, C. ‘Online industrial fault prognosis in dynamic environments via task-free continual learning’. Neurocomputing 2024, vol. 598, 127930.

- Lin, T.; Song, L.; Cui, L.; Wang, H. ‘Continual learning for unknown domain fault diagnosis in rotating machinery via Diffusion-Integrated Dynamic Mixture Experts’. Engineering Applications of Artificial Intelligence 2025, vol. 156, 111056.

- Zhang, X.; Li, Z.; Wang, J. ‘Joint domain-adaptive transformer model for bearing remaining useful life prediction across different domains’. Engineering Applications of Artificial Intelligence 2025, vol. 159.

- Ren, X.; Qin, Y.; Wang, B.; Cheng, X.; Jia, L. ‘A Complementary Continual Learning Framework Using Incremental Samples for Remaining Useful Life Prediction of Machinery’. IEEE Transactions on Industrial Informatics 2024, vol. 20(no. 12), 14330–14340.

- He, X.; Ding, C.; Qiao, F.; Shi, J. ‘An Incremental Remaining Useful Life Prediction Method Based on Wasserstein GAN and Knowledge Distillation’. In Proceedings of the IEEE International Conference on Systems, Man and Cybernetics, 2024; pp. 3857–3862.

- Li, J.; Huang, R.; Chen, Z.; He, G.; Gryllias, K. C.; Li, W. ‘Deep continual transfer learning with dynamic weight aggregation for fault diagnosis of industrial streaming data under varying working conditions’. Advanced Engineering Informatics 2023, vol. 55, 101883.

- Bidaki, S. A. ‘Online Continual Learning: A Systematic Literature Review of Approaches, Challenges, and Benchmarks’. arXiv 2025, arXiv:2501.04897.

- Wang, L.; Zhang, X.; Su, H.; Zhu, J. ‘A Comprehensive Survey of Continual Learning: Theory, Method and Application’. arXiv 2024, arXiv:2302.00487.

- Rakshit, S.; Bandyopadhyay, H.; Das, N.; Banerjee, B. ‘Incremental Open-set Domain Adaptation’. arXiv 2024, arXiv:2409.00530.

- Mao, W.; Guo, R.; Wang, J.; Zuo, M.; Zhong, Z. ‘Wiener process-assisted online remaining useful life prediction with deep incremental regression transfer learning’. Reliability Engineering and System Safety 2026, vol. 267, 111867.

- Ren, X.; Qiu, H.; Chen, D.; Peng, C.; Qin, Y.; Wang, B. ‘A Continual Learning Framework with Adaptive Synapses for Remaining Useful Life Prediction’. In Proceedings of the 2023 Global Reliability and Prognostics and Health Management Conference (PHM-Hangzhou 2023), 2023.

- She, D.; Luo, Y.; Wang, Y.; Gan, S.; Yan, X.; Pecht, M. G. ‘A meta-transfer-driven method for predicting the remaining useful life of rolling bearing with few shot data’. Measurement: Journal of the International Measurement Confederation 2025, vol. 254.

- Yang, J.; Sun, D.; Wang, L.; Zhang, W.; Wang, X. ‘DPMA: Self-Supervised Dual-Path Meta Alignment Network for Remaining Useful Life Prediction with Limited Data and Unknown Working Conditions’. IEEE Transactions on Instrumentation and Measurement 2025, 1–1.

- Zhou, J.; Luo, J.; Pu, H.; Qin, Y. ‘Multibranch Horizontal Augmentation Network for Continuous Remaining Useful Life Prediction’. IEEE Transactions on Systems, Man, and Cybernetics: Systems 2025, vol. 55(no. 3), 2237–2249.

- Li, X. ‘Trend-constrained pairing based incremental transfer learning for remaining useful life prediction of bearings in wind turbines’. Expert Systems with Applications 2025, vol. 263.

- Page, M. J.; et al. ‘PRISMA 2020 explanation and elaboration: updated guidance and exemplars for reporting systematic reviews’. BMJ 2021, n160.

- Page, M. J.; et al. ‘The PRISMA 2020 statement: an updated guideline for reporting systematic reviews’. BMJ 2021, n71.

- Rethlefsen, M. L.; et al. ‘PRISMA-S: an extension to the PRISMA Statement for Reporting Literature Searches in Systematic Reviews’. Syst Rev 2021, vol. 10(no. 1), 39.

- Milner, K. A.; Hays, D.; Farus-Brown, S.; Zonsius, M. C.; Saska, E.; Fineout-Overholt, E. ‘National evaluation of DNP students’ use of the PICOT method for formulating clinical questions’. Worldviews Evid Based Nurs 2024, vol. 21(no. 2), 216–222.

- Riva, J. J.; Malik, K. M. P.; Burnie, S. J.; Endicott, A. R.; Busse, J. W. ‘What is your research question? An introduction to the PICOT format for clinicians’. J Can Chiropr Assoc 2012, vol. 56(no. 3), 167–171.

- Guo, J.; Song, Y.; Wang, Z.; Chen, Q. ‘A dual-channel transferable model for cross-domain remaining useful life prediction of rolling bearings under uncertainty’. Measurement Science and Technology 2025, vol. 36(no. 3).

- Ye, Y.; Wang, J.; Yang, J.; Yao, D.; Zhou, T. ‘Adaptive MAGNN-TCN: An Innovative Approach for Bearings Remaining Useful Life Prediction’. IEEE Sensors Journal 2025, vol. 25(no. 4), 7467–7481.

- Truong, T.-D.; Helton, P.; Moustafa, A.; Cothren, J. D.; Luu, K. ‘CONDA: Continual Unsupervised Domain Adaptation Learning in Visual Perception for Self-Driving Cars’. 2024 IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops (CVPRW), Seattle, WA, USA, Jun. 2024; IEEE; pp. 5642–5650.

- Han, Y.; Hu, A.; Huang, Q.; Zhang, Y.; Lin, Z.; Ma, J. ‘Sinkhorn divergence-based contrast domain adaptation for remaining useful life prediction of rolling bearings under multiple operating conditions’. Reliability Engineering and System Safety 2025, vol. 253.

- Shang, X.; Qiu, H.; Jiang, C.; Liang, P.; Ding, S.; Gao, L. ‘A remaining useful life estimation method based on meta contrastive learning’. Reliability Engineering and System Safety 2026, vol. 268, 111972.

- De Carvalho, M.; Pratama, M.; Zhang, J.; Haoyan, C.; Yapp, E. ‘Towards Cross-Domain Continual Learning’. 2024 IEEE 40th International Conference on Data Engineering (ICDE), May 2024; IEEE: Utrecht, Netherlands; pp. 1131–1142.

- Nguyen-Meidine, L. T.; Kiran, M.; Pedersoli, M.; Dolz, J.; Blais-Morin, L.-A.; Granger, E. ‘Incremental Multi-Target Domain Adaptation for Object Detection with Efficient Domain Transfer’. arXiv 2022, arXiv:2104.06476.

- Lai, S.; Zhao, Z.; Zhu, F.; Lin, X.; Zhang, Q.; Meng, G. ‘Pareto Continual Learning: Preference-Conditioned Learning and Adaption for Dynamic Stability-Plasticity Trade-off’. arXiv 2025.

- Rudroff, T.; Rainio, O.; Klén, R. ‘Neuroplasticity Meets Artificial Intelligence: A Hippocampus-Inspired Approach to the Stability–Plasticity Dilemma’. Brain Sciences 2024, vol. 14(no. 11), 1111.

- Li, C.-H.; Jha, N. K. ‘PAGE: Domain-Incremental Adaptation with Past-Agnostic Generative Replay for Smart Healthcare’. arXiv 2024, arXiv:2403.08197.

- Li, X.; Ma, X.; Zhang, H.; Yuan, D. ‘Condition-Adaptive Dynamic Evolution Method for Digital Twin of Rolling Bearings Based on Continual Learning’. 2024 China Automation Congress (CAC), Nov. 2024; pp. 5666–5671.

- Van De Ven, G. M.; Tuytelaars, T.; Tolias, A. S. ‘Three types of incremental learning’. Nat Mach Intell 2022, vol. 4(no. 12), 1185–1197.

- Que, Z.; Jin, X.; Xu, Z.; Hu, C. ‘Remaining Useful Life Prediction Based on Incremental Learning’. IEEE Transactions on Reliability 2024, vol. 73(no. 2), 876–884.

- Xie, S.; et al. ‘Incremental Contrast Hybrid Model for Online Remaining Useful Life Prediction With Uncertainty Quantification in Machines’. IEEE Transactions on Industrial Informatics 2024, vol. 20(no. 12), 14308–14320.

- Xuan, Q. L.; Munderloh, M.; Ostermann, J. ‘Lifelong Learning for Fault Prognostics in Predictive Maintenance with Bayesian Neural Networks’. In 2025 25th International Conference on Software Quality, Reliability, and Security Companion (QRS-C), Jul. 2025; IEEE: Hangzhou, China; pp. 483–491.

- Zeng, P.; Mao, W.; Zhang, W. ‘Interpretability Analysis and Transferability Evaluation of Domain-Adversarial Regression Adaptation Model based on Tensor Representation’. In Proceedings of the 36th Chinese Control and Decision Conference (CCDC), 2024; pp. 1919–1925.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).