Arrows of Explanation

In the case of physicists discussing physical theories and experiments, considering the arrows of explanation is equivalent to examining the connections between conceptual models at different levels of organization. In this case, theories are compared with each other at the level of mathematical formalism. Within Eddington’s metaphor, the world of shadows of continuum mechanics and the world of shadows of statistical mechanics are both related to the burning of a real candle, but the discussion is limited to comparing the two worlds of shadows with each other.

This section examines several arrows of explanation. First, it examines the thriving research program of determining the properties of a substance from molecular constants. In this case, the equations of continuum mechanics remain unchanged, but it is assumed that the numerical values of the properties of a substance can be determined from atomic-molecular concepts. The success of statistical mechanics is largely due to this development. In some cases, first-principles calculations have been carried out with good accuracy, but in general, there is a hierarchy of approximations, which is connected to ongoing experimental research.

The next arrow of explanation is related to the research program of deriving the equations of continuum mechanics from the fundamental equations of quantum and statistical mechanics. In this case, success has been limited, and the connection to experimental research is rather indirect.

After that, concepts from popular science literature are considered. There it is assumed that, in the burning candle example, the entire process can be represented as follows: the system moves from one state to the next one according to the fundamental laws of physics. Such concepts translate into qualitative explanations, in which the emergence of new properties at the level of continuum mechanics is discussed. A look at the concept of emergence from the viewpoint of the mathematical formalism of theories at different levels of organization reveals the problematic nature of this concept.

Determining Properties of a Substance from Molecular Constants

In continuum mechanics, each substance requires a set of properties that must be determined experimentally. The theory defines a conceptual model of an ideal experiment, based on which the properties of a substance are measured in real experiments. These experimentally determined properties are then used to solve practical problems. Statistical mechanics offers a way to estimate the properties of a substance from its molecular properties and, in this sense, explains their relationship with molecular constants. This was the main point in Einstein’s quote [2] in the introduction. The use of atomic-molecular concepts allowed us to unify the various properties of a substance.

This arrow of explanation initially appeared in kinetic theory, where it was possible to relate the interaction potential between atoms with the equation of state. However, kinetic theory lacked conceptual models that could be used for the experimental study of interaction potentials. The interaction potentials must be known, but the theory was unable to provide experimental means for their determination.
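The link between an interaction potential and the equation of state can be illustrated with the second virial coefficient, the first correction to the ideal gas law. The sketch below is an illustration only: it assumes a Lennard-Jones pair potential in reduced units (energy in units of the well depth, distance in units of the atomic diameter), and the function names and integration settings are hypothetical choices made for this example.

```python
import math


def lj_potential(r):
    """Lennard-Jones pair potential in reduced units (epsilon = sigma = 1)."""
    sr6 = (1.0 / r) ** 6
    return 4.0 * (sr6 * sr6 - sr6)


def second_virial(t_reduced, r_max=20.0, n=20000):
    """Second virial coefficient B2 in units of sigma^3.

    Evaluates B2(T) = -2*pi * integral_0^inf (exp(-u(r)/T) - 1) r^2 dr
    with the trapezoidal rule, truncated at r_max (an assumption of
    this sketch; the neglected tail decays as 1/r_max^3).
    """
    h = r_max / n
    total = 0.0
    for i in range(1, n + 1):
        r = i * h
        mayer = math.exp(-lj_potential(r) / t_reduced) - 1.0
        weight = 0.5 if i == n else 1.0  # the r = 0 endpoint contributes zero
        total += weight * mayer * r * r
    return -2.0 * math.pi * total * h


if __name__ == "__main__":
    for t in (1.0, 3.418, 10.0):
        print(f"T* = {t:6.3f}  B2* = {second_virial(t):8.4f}")
```

In this model, attraction dominates at low temperature (negative coefficient) and repulsion at high temperature (positive coefficient), with a sign change at a reduced temperature of about 3.4. The calculation runs only in the forward direction: the potential has to be supplied from elsewhere.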

In practice, interaction potentials could only be found from the values of macroproperties by solving the inverse problem. This led to the development of a corresponding research program. A functional form of the interaction potential with unknown parameters was chosen, and the parameters were then determined from experimental values of macroproperties by means of the inverse problem. A good description of the history of such research is given in Rowlinson’s book, ‘Cohesion: A Scientific History of Intermolecular Forces’ [19].

The development of quantum mechanics changed the situation, since the interaction potential could be determined by solving the electron Schrödinger equation under the Born-Oppenheimer approximation. This led to a wave of enthusiasm among physicists; for example, in 1929, Paul Dirac said [20]:

‘The underlying physical laws necessary for the mathematical theory of a large part of physics and the whole of chemistry are thus completely known, and the difficulty is only that the exact application of these laws leads to equations much too complicated to be soluble.’

Extrapolationism in this context means extending a solution to universality after initial successes. Physicists’ optimism led to a successful research program that developed algorithms for the numerical solution of the electron Schrödinger equation and thus created computational chemistry [21]. Reasonable extrapolationism in this case is associated with an understanding of the limits of what is possible in this field.

Estimating the properties of a substance involves two steps. The first is solving the Schrödinger equation and finding the interaction potential. This step relates to experimental spectroscopy studies, which allow us to verify the reliability of the calculations. Spectra and interaction potentials are used in the second step to estimate the properties of a substance. In general, the intermolecular interaction potential leads to the appearance of a configuration integral, but in the case of a polyatomic ideal gas, only an intramolecular interaction potential is sufficient.

For relatively simple systems, ab initio calculations can achieve an accuracy exceeding that of experiment [22], but for more complex substances, a hierarchy of approximations appears [23]. Ab initio calculations are replaced by semi-empirical methods, in which simplifications are introduced; as a result, some quantities are determined from experiments. Next come molecular mechanics and molecular dynamics methods with an empirical force field when, figuratively speaking, as the system becomes more complex, one approximation is adjusted by another approximation. The use of approximations is combined with parallel experimental studies, which allow us to select the appropriate level of approximation for solving a given problem.

Estimation of the configuration integral generally involves molecular dynamics and Monte Carlo simulations. A good example in this respect is the 2019 paper, ‘Thermophysical properties of the Lennard-Jones fluid: Database and data assessment’ [24]. This paper deals with a hypothetical substance consisting of point masses with the Lennard-Jones potential between them. This thought model is fully expressed by the mathematical equations of classical mechanics; therefore, it must correspond to a well-defined equation of state for gas and liquid. The paper compares the results of many studies to determine the equation of state and other thermodynamic properties:

‘The mutual agreement of these data sets is approximately ±1% for the vapor pressure, ±0.2% for the saturated liquid density, ±1% for the saturated vapor density, and ±0.75% for the enthalpy of vaporization – excluding the region close to the critical point.’

This is not a comparison with experimental results, but rather a comparison between the computer simulation results of different groups. Hence, it demonstrates the accuracy of existing numerical algorithms for a relatively simple system.
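To give an idea of what such a simulation involves, here is a minimal sketch of a Metropolis Monte Carlo run for a small Lennard-Jones system in reduced units. It is not the method of any study assessed in the cited paper: the system size, the simple cutoff at half the box length without tail corrections, the step size, and all function names are assumptions chosen to keep the example short.

```python
import math
import random


def pair_energy(r2):
    """Lennard-Jones energy (reduced units) for a squared distance r2."""
    inv6 = 1.0 / (r2 * r2 * r2)
    return 4.0 * (inv6 * inv6 - inv6)


def particle_energy(i, pos, box, r_cut2):
    """Energy of particle i with all others (minimum-image convention)."""
    energy = 0.0
    for j, other in enumerate(pos):
        if j == i:
            continue
        r2 = 0.0
        for a in range(3):
            d = pos[i][a] - other[a]
            d -= box * round(d / box)  # nearest periodic image
            r2 += d * d
        if r2 < r_cut2:
            energy += pair_energy(r2)
    return energy


def run_mc(n_side=3, density=0.5, temperature=2.0,
           sweeps=200, max_step=0.2, seed=1):
    """Return (mean potential energy per particle, acceptance ratio)."""
    rng = random.Random(seed)
    n = n_side ** 3
    box = (n / density) ** (1.0 / 3.0)
    spacing = box / n_side
    # Start from a simple cubic lattice to avoid overlapping particles.
    pos = [[(ix + 0.5) * spacing, (iy + 0.5) * spacing, (iz + 0.5) * spacing]
           for ix in range(n_side)
           for iy in range(n_side)
           for iz in range(n_side)]
    r_cut2 = (0.5 * box) ** 2
    accepted = 0
    samples = []
    for sweep in range(sweeps):
        for _ in range(n):
            i = rng.randrange(n)
            old = pos[i][:]
            e_old = particle_energy(i, pos, box, r_cut2)
            pos[i] = [(c + max_step * (2.0 * rng.random() - 1.0)) % box
                      for c in old]
            delta = particle_energy(i, pos, box, r_cut2) - e_old
            # Metropolis criterion: always accept downhill moves,
            # accept uphill moves with probability exp(-delta / T).
            if delta <= 0.0 or rng.random() < math.exp(-delta / temperature):
                accepted += 1
            else:
                pos[i] = old
        if sweep >= sweeps // 2:  # discard the first half as equilibration
            total = 0.5 * sum(particle_energy(k, pos, box, r_cut2)
                              for k in range(n))
            samples.append(total / n)
    return sum(samples) / len(samples), accepted / (sweeps * n)


if __name__ == "__main__":
    energy, acceptance = run_mc()
    print(f"U/N = {energy:.3f}, acceptance = {acceptance:.2f}")
```

Production studies use far larger systems, long-range corrections, and careful error analysis; the spread between such independent calculations is what the quoted percentages describe.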

Thus, reasonable extrapolationism consists of establishing a realistic border for the current capabilities to estimate the properties of a substance from molecular constants and making realistic forecasts for future development. No doubt, since Dirac’s statement, significant progress has been made in the development of numerical algorithms and in the advancement of computing power. Hence, the border of what is possible moves, but many open problems remain. First and foremost, the transition to more complex systems is impossible due to the exponential growth of computing power requirements. It should also be noted that for a few properties, such as rate constants, the very principles of ab initio calculations are in their infancy.

Now let me return to Dirac’s statement in a literal sense. In this case, the claim that the theory is complete for absolutely all chemical systems is radical extrapolationism. Verifying this assertion requires calculations and comparison of the results with experiments. Without this, it is impossible to claim that all problems are due solely to a lack of computing power. However, such verification is impossible at present and in the foreseeable future. In other words, such an assertion goes far beyond experimental research and does nothing to advance it.

Deriving the Equations of Continuum Mechanics from Statistical Mechanics

Mathematics allows us to prove general theorems even in cases where equations cannot be solved, and thus new arrows of explanation appear. The task is to derive the equations of continuum mechanics from fundamental theories of physics in a general form. Interestingly, such a program is the essence of Hilbert’s sixth problem [25], ‘Mathematical treatment of the axioms of physics’:

‘The investigations on the foundations of geometry suggest the problem: To treat in the same manner, by means of axioms, those physical sciences in which mathematics plays an important part; in the first rank are the theory of probabilities and mechanics.’

‘As to the axioms of the theory of probabilities, it seems to me desirable that their logical investigation should be accompanied by a rigorous and satisfactory development of the method of mean values in mathematical physics, and in particular in the kinetic theory of gases.’

‘Important investigations by physicists on the foundations of mechanics are at hand; I refer to the writings of Mach, Hertz, Boltzmann and Volkmann. It is therefore very desirable that the discussion of the foundations of mechanics be taken up by mathematicians also. Thus Boltzmann’s work on the principles of mechanics suggests the problem of developing mathematically the limiting processes, there merely indicated, which lead from the atomistic view to the laws of motion of continua.’

In equilibrium statistical mechanics, there is a derivation of the fundamental equation of classical thermodynamics [26]. It is used, among other things, to prove the relationship between changes in the Helmholtz energy and the partition function. This allows us to speak of a certain arrow of explanation of thermodynamics based on statistical mechanics. However, this derivation falls short of Hilbert’s ideals, since it uses a number of additional assumptions not contained in the original equations of classical mechanics, namely: the equal a priori probability of microstates in the microcanonical ensemble, the postulate of the arrow of time, and the requirement to determine the numerical value of the Boltzmann constant from experiments [27]. Moreover, this derivation is limited to considering only the expansion work.
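In a commonly used textbook notation (the symbols below are assumptions of this sketch and are not taken from the cited derivation), the relationship between the Helmholtz energy $F$ and the canonical partition function $Q$ reads:

```latex
F(T, V, N) = -k_{\mathrm{B}} T \ln Q(T, V, N),
\qquad
\Delta F = F_2 - F_1 = -k_{\mathrm{B}} T \ln \frac{Q_2}{Q_1}
\quad (T = \text{const}),
\qquad
dF = -S\,dT - p\,dV
\quad (N = \text{const}).
```

The only work term in the last expression is $-p\,dV$, which is where the restriction to expansion work shows up, and the Boltzmann constant $k_{\mathrm{B}}$ enters as a number that has to be supplied from outside.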

The situation is significantly worse in the case of proving the Clausius inequality and finding the arrow of time in non-equilibrium statistical mechanics. A reasonable arrow of explanation exists only when considering a monatomic ideal gas in Boltzmann’s statistical interpretation of the second law. Gibbs’s generalization of the entropy of a system in the general case is connected with the peculiarities of Liouville’s theorem, when the entropy of a system formally remains constant during an irreversible process. Attempts to transfer Boltzmann’s ideas to Γ-space remain at the level of qualitative explanations [28].

There is no time in classical thermodynamics, but the equations of continuum mechanics explicitly include time, and they are time asymmetric. Practical work in non-equilibrium statistical mechanics always contains additional postulates that lead to the arrow of time. The question of the arrow of time in statistical mechanics has been discussed for a long time, but a satisfactory solution remains elusive. Moreover, this discussion has no connection with experimental research.

Burning Candle at the Level of Fundamental Equations of Physics

Certain advances in statistical mechanics in estimating the properties of a substance from molecular constants lead to radical extrapolationism, in the form of assertions that the entire process of candle burning can be described directly at the level of fundamental theories of physics. Let me take Sean Carroll’s book, ‘From Eternity to Here: The Quest for the Ultimate Theory of Time’ [29] as an example. It states that the world passes from one state to the next one according to the laws of physics. A couple of quotes from the book:

‘The laws of physics can be thought of as a machine that tells us, given what the world is like right now, what it will evolve into a moment later.’

‘That’s a standard way of thinking about the laws of physics ... You tell me what is going on in the world (say, the position and velocity of every single particle in the universe) at one moment of time, and the laws of physics are a black box that tells us what the world will evolve into just one moment later.’

Carroll speaks of the world, but I limit myself to the process of candle burning, for the sake of clarity, in an isolated system. Let us consider the concept of a local Laplace demon to describe such a system.

The development of numerical methods based on finite elements and finite volumes, coupled with increased computing power, has led to the creation of software that makes continuum mechanics accessible to engineers. The modeling process is conducted in a user-friendly graphical interface, where a 3D model is discretized using mesh generators, and thus the job is converted to a computational problem. A complete calculation of a burning candle at this level is already possible, although engineers prefer to break the problem down into smaller parts to find more efficient solutions to practical problems.

In this case, Carroll’s statement accurately conveys the essence of such software, but Carroll was referring to the fundamental laws of physics. In this case, there is a system of equations that cannot be solved in principle, since including so many particles in the analysis is unthinkable. Even a complete notation of such a system of equations becomes impossible, let alone its solution. This type of discussion is an unmistakable sign of radical extrapolationism, in the spirit of the discussion of Laplace’s demon. In this form, the connection with experimental research is completely lost.
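A back-of-the-envelope estimate gives a feeling for the scale involved. The sketch below counts the atoms in the candle alone and the storage needed just to write down one classical state (positions and velocities). The candle mass of 20 g and the composition C25H52 are assumptions made purely for illustration, and the air in the isolated system is ignored.

```python
AVOGADRO = 6.02214076e23  # 1/mol, exact SI value

# Illustrative assumptions, not data from the text:
CANDLE_MASS_G = 20.0                              # mass of the candle
MOLAR_MASS_G_PER_MOL = 25 * 12.011 + 52 * 1.008   # paraffin taken as C25H52
ATOMS_PER_MOLECULE = 25 + 52


def count_atoms(mass_g=CANDLE_MASS_G):
    """Number of atoms in a candle of the given mass."""
    molecules = mass_g / MOLAR_MASS_G_PER_MOL * AVOGADRO
    return molecules * ATOMS_PER_MOLECULE


def classical_state_bytes(n_atoms, bytes_per_number=8):
    """Bytes needed to store three coordinates and three velocities per atom."""
    return n_atoms * 6 * bytes_per_number


if __name__ == "__main__":
    atoms = count_atoms()
    print(f"atoms in the candle:      {atoms:.2e}")
    print(f"bytes for a single state: {classical_state_bytes(atoms):.2e}")
```

Under these assumptions the candle contains on the order of 10^24 atoms, and a single classical snapshot requires on the order of 10^26 bytes, before a single time step has been computed and before any quantum-mechanical description is attempted.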

Moreover, there are serious problems even at the conceptual level, since it becomes unclear which laws should be used to estimate the transition of an isolated system with a burning candle from one state to the next one. Carroll’s statement applies to classical statistical mechanics, which underlies numerical algorithms for molecular dynamics. However, this level of approximation is too rough. The process requires considering chemical reactions, but the conceptual problem can be illustrated by a much simpler problem: accounting for vibrational motions in polyatomic molecules.

As already mentioned, in the 19th century, the discrepancy between the experimental heat capacities of diatomic gases and the predictions of kinetic theory was one of the first signs of the inapplicability of classical mechanics to describing molecular motion. Including vibrational motion in the analysis led to an overestimated heat capacity of diatomic gases, and subsequent experiments showed that heat capacity depends on temperature.

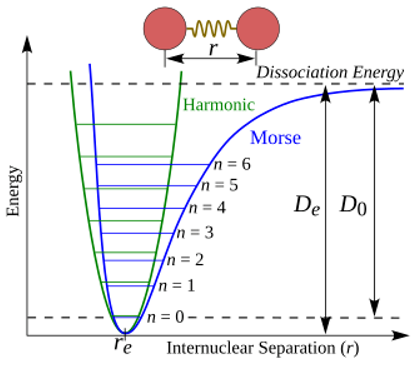

A figure from Wikipedia (Morse potential) [

30] helps to better understand the problem of vibrational motion:

It shows potential energy as a function of the distance between atoms. The green line represents the harmonic oscillator approximation, and the blue line represents the actual curve, where increasing the distance leads to dissociation of the molecule. Such a potential curve can be calculated by solving the electron Schrödinger equation. Vibrational energy is discrete, and the difference between vibrational levels at room temperature exceeds the available thermal energy.

As the temperature increases, a sufficient number of vibrational levels are excited, corresponding to the activation of a vibrational degree of freedom in classical theory. At low temperatures, practically all molecules are at the ground vibrational level, and vibrational motion is effectively switched off. This explains how the term ‘frozen degree of freedom’ originated. The molecule has degrees of freedom that cease to be active at low temperatures. This behavior cannot be described in classical mechanics.
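The freezing of the vibrational degree of freedom can be made quantitative in the harmonic oscillator approximation. The following sketch computes the vibrational contribution to the molar heat capacity of a diatomic gas, in units of the gas constant R, from the standard harmonic oscillator formula. The wavenumber of about 2359 cm⁻¹ for N2 is an approximate value used as an assumption, anharmonicity (the difference between the green and blue curves) is ignored, and the function name is hypothetical.

```python
import math

PLANCK = 6.62607015e-34      # J s
LIGHT_SPEED = 2.99792458e10  # cm/s, so wavenumbers can be given in 1/cm
BOLTZMANN = 1.380649e-23     # J/K


def vibrational_heat_capacity(temperature_k, wavenumber_cm=2359.0):
    """Vibrational heat capacity C_vib / R of a harmonic diatomic molecule.

    Uses C_vib / R = x^2 exp(-x) / (1 - exp(-x))^2 with
    x = h c nu / (k T). The default wavenumber is an approximate
    value for N2 and is an assumption of this sketch.
    """
    x = PLANCK * LIGHT_SPEED * wavenumber_cm / (BOLTZMANN * temperature_k)
    one_minus_exp = -math.expm1(-x)  # 1 - exp(-x), accurate for small x
    return x * x * math.exp(-x) / (one_minus_exp * one_minus_exp)


if __name__ == "__main__":
    for t in (100.0, 300.0, 1000.0, 3000.0, 10000.0):
        print(f"T = {t:8.1f} K   C_vib/R = {vibrational_heat_capacity(t):.4f}")
```

At room temperature the result is close to zero, the ‘frozen’ regime, while at several thousand kelvin it approaches the classical value of one R per vibrational mode.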

It is unclear which laws should be used to correctly account for the vibrational motions of molecules during the transition of the entire system from one state to the next one. Quantum mechanics of vibrational states deals with the wave function, but it is unclear how to combine the vibrational wave function with classical mechanics to make it consistent with Carroll’s description. Taking into account the evolution of the wave function of the entire system, like a burning candle, makes the situation even worse, since it becomes unclear how to obtain the combustion process itself. This is one of the problems with quantum mechanics: it lacks a smooth transition from quantum-mechanical phenomena to classical ones.

As already mentioned, a hierarchy of approximations is used in practice, but in this case, Carroll’s coherent picture is disrupted. Selecting the correct level of approximation requires understanding the specific conditions of the problem in question. A return to experimental research is only possible by rejecting the language of radical extrapolationism.

Arrows of Explanation and Emergence

Let us consider emergence, which is often discussed when different levels of organization are at play. Typically, the topic of emergence arises in the qualitative discussion of the previous section about the transition of the world from one state to the next one according to the laws of physics. In such a discussion, emergence concerns the macroproperties of a substance with respect to the properties of atoms, but the discussion is exclusively qualitative. It is argued that the properties of a substance must somehow emerge from the movement of atoms, and then the question is considered whether the new emerging entity at a higher level of organization can influence the behavior of a lower level.

Below is a brief overview of this problem within the framework adopted in this paper. Let us imagine physicists discussing the mathematical formalism of physical theories at the macro and micro levels. In this case, it becomes unclear how qualitative discussion of emergence can be connected to physical theories. Let us consider several examples.

Currently, there are debates in philosophy of chemistry about the Born-Oppenheimer approximation as an example of emergence in chemistry [31]. The idea is that chemistry is based on a concept of molecular structure that cannot be found before the Born-Oppenheimer approximation. This discussion of the emergent nature of molecular structure raises many questions, since the statement ‘the Born-Oppenheimer approximation emerges’ does not seem meaningful. In this case, it is more accurate to say that there is no rigorous transition from the equations of fundamental physics to molecular structures in chemistry.

The relationship between the properties of a substance in continuum mechanics and molecular constants was discussed in the first section. Typically, the Born-Oppenheimer approximation is employed, but the relationship remains valid even without the Born-Oppenheimer approximation. This significantly complicates the calculations, making them currently only practical for extremely simple systems. Thus, from this perspective, the typical question arises again about the limits of applicability of the original equations during quantum mechanical calculations.

Similarly, it is impossible to speak about emergence in the case of the arrow of time in continuum mechanics. A more accurate statement is that certain elements of continuum mechanics cannot be found in statistical mechanics without additional assumptions. In other words, one should not say that the arrow of time emerges when considering mathematical equations; it is more accurate to speak of the limitations of the mathematical proofs available.

The emergence of temperature should be considered in the same way. In equilibrium statistical mechanics, there is an equivalent of thermodynamic temperature in the form of a parameter of the canonical Gibbs ensemble distribution. In non-equilibrium statistical mechanics, there are states in which there is no temperature, but in these cases, there are relaxation processes that lead to the establishment of local thermal equilibrium. Thus, temperature is related to relaxation processes, not to emergence.