Submitted:

25 February 2026

Posted:

02 March 2026

Abstract

Keywords:

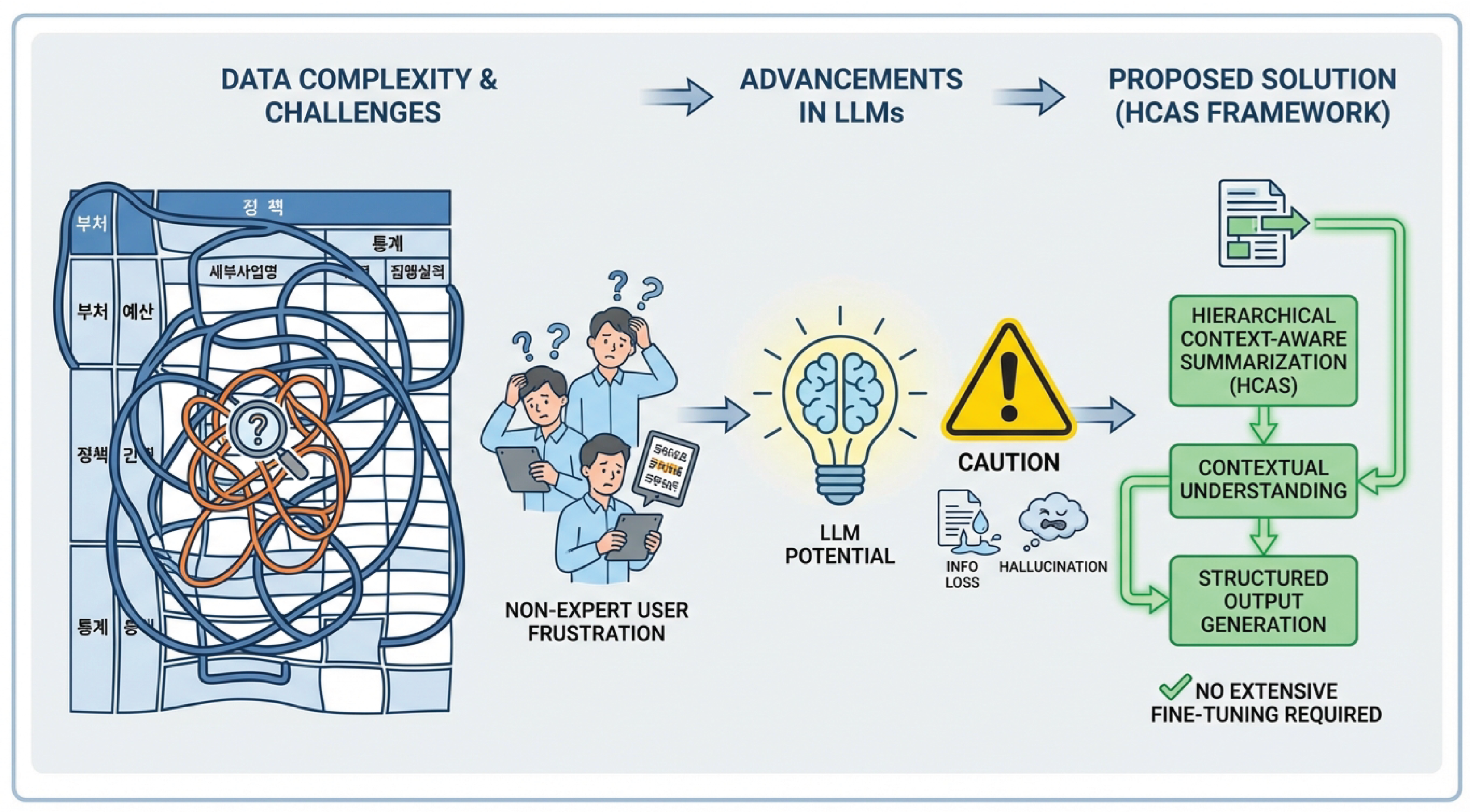

1. Introduction

- We propose Hierarchical Context-Aware Summarization (HCAS), a novel multi-stage framework that leverages sophisticated prompt engineering to guide Large Language Models in generating high-quality, explanatory summaries for complex tabular data.

- We demonstrate the effectiveness of HCAS in the challenging domain of Korean administrative tables, showing that our method can significantly enhance LLM performance without the need for extensive model fine-tuning.

- We achieve state-of-the-art performance on the NIKL Korean Table Explanation Benchmark, surpassing existing In-Context Learning (ICL) methods and traditional fine-tuning approaches, which highlights the potential of carefully designed prompt engineering for complex domain-specific tasks.

2. Related Work

2.1. Table Understanding and Summarization

2.2. Large Language Models and Advanced Prompt Engineering

3. Method

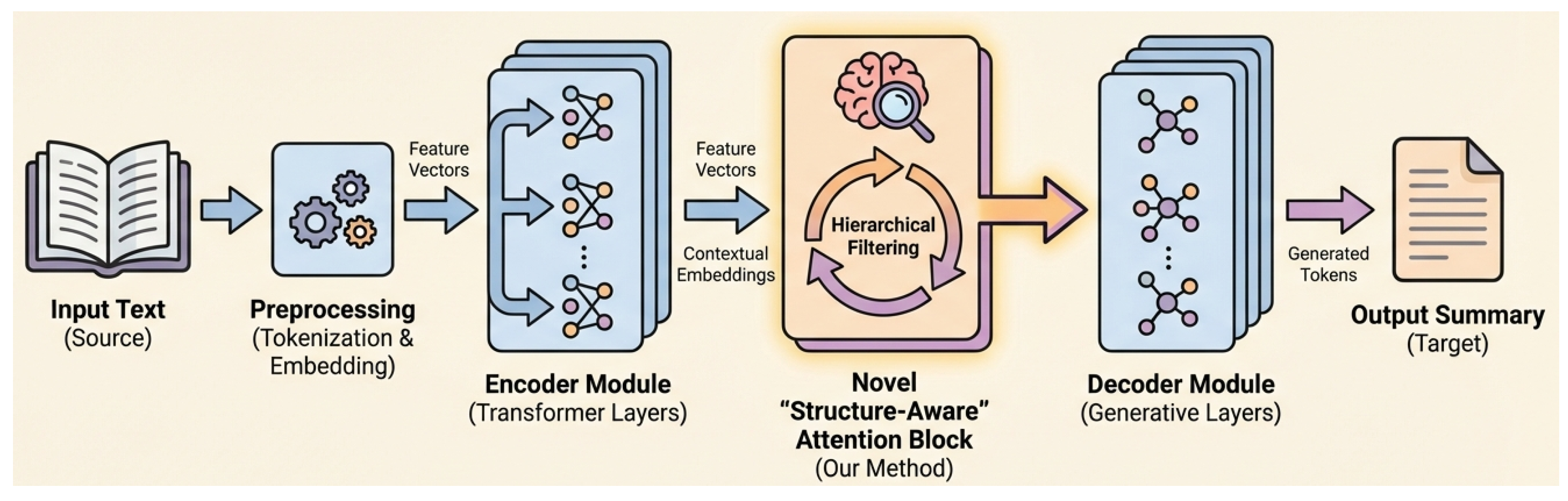

3.1. Overview of Hierarchical Context-Aware Summarization (HCAS)

3.2. Contextual Key Information Extraction (CKIE)

1. Direct Header Identification: Identifying the immediate row and column headers that directly categorize or label the highlighted cell. This typically involves finding the first non-empty cells in the same row to the left of the highlighted cell and in the same column above it.

2. Hierarchical Context Tracing: Tracing upwards and sideways through the table structure to identify higher-level, hierarchical headers. These headers define broader categories, timeframes, or classifications that encompass the highlighted cell and are crucial for understanding the data’s scope and context. This involves navigating parent-child relationships within the table’s implicit tree structure and inferring the logical grouping of cells.

3. Global Metadata Integration: Incorporating the global metadata (such as the table’s overall title, the issuing agency, and the date of publication) to establish the overarching administrative context and purpose of the table.
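To make the three CKIE steps concrete, the following is a minimal sketch rather than the authors' implementation. It assumes the table is a rectangular grid of strings, that the number of header rows and header columns is known, and that a merged header is written once in its top-left cell with the remaining spanned cells left blank. The function names, the toy grid, and the metadata values are all hypothetical.

```python
from typing import Dict, List, Optional

Grid = List[List[str]]


def nearest_non_empty(cells: List[str]) -> Optional[str]:
    """Return the last non-empty entry of `cells` (the one closest to the target)."""
    for value in reversed(cells):
        if value.strip():
            return value
    return None


def column_header_path(grid: Grid, col: int, n_header_rows: int, n_header_cols: int) -> List[str]:
    """Header labels above column `col`, outermost level first."""
    path = []
    for r in range(n_header_rows):
        # Assumption: a merged header is written once, in its leftmost cell.
        label = nearest_non_empty(grid[r][n_header_cols:col + 1])
        if label is not None:
            path.append(label)
    return path


def row_header_path(grid: Grid, row: int, n_header_rows: int, n_header_cols: int) -> List[str]:
    """Header labels to the left of row `row`, outermost level first."""
    path = []
    for c in range(n_header_cols):
        # Assumption: a merged header is written once, in its topmost cell.
        label = nearest_non_empty([grid[r][c] for r in range(n_header_rows, row + 1)])
        if label is not None:
            path.append(label)
    return path


def extract_key_information(grid: Grid, row: int, col: int, n_header_rows: int,
                            n_header_cols: int, metadata: Dict[str, str]) -> Dict[str, object]:
    """Collect direct headers, hierarchical headers, and global metadata for one cell."""
    rows = row_header_path(grid, row, n_header_rows, n_header_cols)
    cols = column_header_path(grid, col, n_header_rows, n_header_cols)
    return {
        "value": grid[row][col],
        # Step 1: the innermost header on each axis is the direct header.
        "direct_headers": {"row": rows[-1] if rows else None,
                           "column": cols[-1] if cols else None},
        # Step 2: everything above the innermost level is hierarchical context.
        "hierarchical_headers": {"row": rows[:-1], "column": cols[:-1]},
        # Step 3: global metadata is attached unchanged.
        "metadata": dict(metadata),
    }


# Toy table (hypothetical data): two header rows and two header columns.
toy_grid = [
    ["Region", "Dept",  "2023",   "",      "2024",   ""],
    ["",       "",      "Budget", "Spent", "Budget", "Spent"],
    ["Seoul",  "R&D",   "1,200",  "1,100", "1,500",  "1,450"],
    ["",       "Admin", "300",    "280",   "320",    "310"],
]
toy_metadata = {"title": "Illustrative budget table", "agency": "Example agency"}
key_info = extract_key_information(toy_grid, 2, 4, 2, 2, toy_metadata)
print(key_info)
```

In the source method these steps are carried out by the LLM under prompt guidance; the deterministic version here only illustrates what the extracted structure could look like.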

3.3. Explanatory Narrative Skeleton Construction (ENSC)

- Trends: Detecting changes over time (e.g., increase, decrease, stability) if temporal data is available.

- Causal or Consequential Links: Inferring potential causes or effects related to specific values, based on common knowledge or implicit administrative context.

- Comparative Insights: Highlighting differences or similarities if multiple related values (e.g., across different regions, departments, or categories) are present in the extracted key information K.

- Significance: Identifying the importance or implications of a particular value within its administrative context.
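As a rough illustration of what the trend and comparative entries of a narrative skeleton might contain, the sketch below uses simple rules in place of the LLM. It is not the ENSC procedure itself: the 1% tolerance, the function names, and the sample figures are assumptions made for this example, and causal links and significance are left out because they depend on background knowledge that a rule cannot supply.

```python
from typing import Dict, List


def classify_trend(series: List[float], tolerance: float = 0.01) -> str:
    """Label the change from the first to the last value of a time series."""
    if len(series) < 2:
        return "unknown"
    first, last = series[0], series[-1]
    if first == 0:
        # Relative change is undefined at zero, so fall back to the sign of the last value.
        if last > 0:
            return "increase"
        return "decrease" if last < 0 else "stability"
    change = (last - first) / abs(first)
    if change > tolerance:
        return "increase"
    if change < -tolerance:
        return "decrease"
    return "stability"


def build_skeleton(values_by_category: Dict[str, List[float]]) -> List[str]:
    """Produce short skeleton phrases: one trend per category plus one comparison."""
    skeleton = [f"Trend[{name}]: {classify_trend(series)}"
                for name, series in values_by_category.items()]
    latest = {name: series[-1] for name, series in values_by_category.items() if series}
    if len(latest) >= 2:
        top = max(latest, key=latest.get)
        low = min(latest, key=latest.get)
        skeleton.append(f"Comparison: highest latest value is {top}, lowest is {low}")
    return skeleton


# Hypothetical yearly figures for two regions.
for line in build_skeleton({"Seoul": [1200, 1500], "Busan": [700, 800]}):
    print(line)
```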

3.4. Fluency and Readability Optimization (FRO)

1. Synthesizing Elements: Consolidating the discrete phrases and sentences of the narrative skeleton into flowing, grammatically correct paragraphs and cohesive text blocks.

2. Rephrasing for Clarity and Conciseness: Revising complex or awkward phrasing, eliminating jargon where appropriate, and simplifying sentence structures to enhance overall clarity and ease of understanding for the administrative audience.

3. Eliminating Redundancy: Identifying and removing any repetitive information or expressions, ensuring that the summary is as succinct and informative as possible without sacrificing essential details.

4. Enhancing Coherence and Logical Flow: Ensuring smooth transitions between sentences and paragraphs, maintaining a consistent narrative voice, and establishing a clear and logical progression of ideas throughout the summary.

5. Domain-Specific Linguistic Adaptation: Incorporating appropriate Korean administrative terminology, formal honorifics, and the complex sentence structures commonly used in official documents. This ensures the summary maintains a professional, authoritative tone and is easily understood by the intended audience within the Korean administrative context.
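The three stages can be read as a sequential prompt chain in which each stage consumes the previous stage's output. The sketch below shows that chaining only; the prompt wording, the `run_hcas` name, and the choice to pass just the previous output to each stage are assumptions, and `llm` stands for any callable that maps a prompt string to a completion string.

```python
from typing import Callable, Dict

LLM = Callable[[str], str]

# Hypothetical prompt headers; the actual prompts are not reproduced here.
CKIE_PROMPT = ("Stage 1 (CKIE): List the highlighted value, its direct headers, "
               "its hierarchical headers, and the table metadata.")
ENSC_PROMPT = ("Stage 2 (ENSC): From the key information, outline trends, causal or "
               "consequential links, comparative insights, and significance.")
FRO_PROMPT = ("Stage 3 (FRO): Rewrite the outline as fluent, non-redundant, coherent "
              "text in formal Korean administrative style.")


def run_hcas(table_context: str, llm: LLM) -> Dict[str, str]:
    """Run the three stages in order, feeding each output into the next prompt."""
    key_info = llm(f"{CKIE_PROMPT}\n\n{table_context}")
    skeleton = llm(f"{ENSC_PROMPT}\n\n{key_info}")
    summary = llm(f"{FRO_PROMPT}\n\n{skeleton}")
    return {"key_info": key_info, "skeleton": skeleton, "summary": summary}


def echo_llm(prompt: str) -> str:
    """Stand-in model for a dry run: returns the first line of the prompt."""
    return prompt.splitlines()[0]


result = run_hcas("Seoul / R&D | 2024 / Budget = 1,500", echo_llm)
print(result["summary"])
```

Keeping the model behind a plain callable makes it straightforward to drop a stage, for instance by skipping the FRO call and returning the skeleton directly.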

4. Experiments

4.1. Experimental Setup

4.1.1. Dataset

4.1.2. Base Models and Baselines

- EXAONE 3.0 7.8B: A robust 7.8 billion parameter model known for its strong performance in Korean language tasks, including tabular question answering.

- llama-3-Korean-Bllossom-8B: A highly capable sub-10 billion parameter model recognized for its advanced Korean multi-domain reasoning abilities.

- KoBART – Fine-tuned: A traditional encoder-decoder model with 124 million parameters, representing the established full-model fine-tuning paradigm for text generation.

- EXAONE 3.0 7.8B with Basic ICL (In-Context Learning): This baseline employs a straightforward, single-shot or few-shot prompting approach, where the LLM is given the preprocessed table context and directly asked to generate a summary, without the multi-stage reasoning of HCAS.

- EXAONE 3.0 7.8B – LoRA: Demonstrates a parameter-efficient fine-tuning approach applied to the EXAONE model, providing a comparison against methods that involve some degree of model adaptation.

- EXAONE 3.0 7.8B – Tabular-TX: A leading baseline representing advanced prompt engineering or structured input methods for tabular data summarization, serving as a direct competitor to our HCAS framework in non-fine-tuning scenarios.

- llama-3-Korean-Bllossom-8B with Basic ICL: Similar to its EXAONE counterpart, this baseline applies basic ICL to the llama-3 model.

- llama-3-Korean-Bllossom-8B – Tabular-TX: An advanced prompting baseline for the llama-3 model, mirroring the setup for EXAONE.

4.1.3. Evaluation Metrics

- ROUGE-1: Measures the overlap of unigram words between the generated summary and the expert reference summary.

- ROUGE-L: Quantifies the overlap based on the longest common subsequence (LCS) between the generated and reference summaries, capturing fluency and sentence-level similarity.

- BLEU: Evaluates the precision of n-grams (up to 4-grams) in the generated summary compared to the reference, focusing on word choice and grammatical structure.
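For readers who want to see how the two ROUGE variants are computed, here is a small self-contained sketch. It reports F1 scores over whitespace tokens, both of which are assumptions: the text above does not state whether precision, recall, or F1 is used, and Korean text would normally be tokenized with a morphological analyzer. BLEU is omitted to keep the example short.

```python
from collections import Counter
from typing import List


def f1(matches: int, cand_len: int, ref_len: int) -> float:
    """Harmonic mean of precision (matches/cand_len) and recall (matches/ref_len)."""
    if matches == 0 or cand_len == 0 or ref_len == 0:
        return 0.0
    precision = matches / cand_len
    recall = matches / ref_len
    return 2 * precision * recall / (precision + recall)


def rouge_1(candidate: List[str], reference: List[str]) -> float:
    """Unigram overlap, with each token counted at most as often as it appears in both."""
    overlap = sum((Counter(candidate) & Counter(reference)).values())
    return f1(overlap, len(candidate), len(reference))


def lcs_length(a: List[str], b: List[str]) -> int:
    """Length of the longest common subsequence, using one rolling DP row."""
    prev = [0] * (len(b) + 1)
    for x in a:
        curr = [0]
        for j, y in enumerate(b, start=1):
            curr.append(prev[j - 1] + 1 if x == y else max(prev[j], curr[j - 1]))
        prev = curr
    return prev[-1]


def rouge_l(candidate: List[str], reference: List[str]) -> float:
    """LCS-based score, which rewards tokens that appear in the same order."""
    return f1(lcs_length(candidate, reference), len(candidate), len(reference))


reference = "the R&D budget rose sharply in 2024".split()
candidate = "budget the rose in 2024".split()
print(round(rouge_1(candidate, reference), 3))  # all 5 candidate tokens match: 0.833
print(round(rouge_l(candidate, reference), 3))  # swapped word order shortens the LCS to 4: 0.667
```

The example shows why the two metrics can diverge: the candidate contains only reference words, yet its reordered opening lowers ROUGE-L.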

4.1.4. Data Preprocessing

- Structured Table Representation: Raw tabular data is transformed into a structured key-value pair dictionary format. This conversion helps LLMs to parse and understand the semantic relationships within the table more effectively than raw text or CSV representations.

- Merged Cell Resolution: Complexities arising from merged cells (common in administrative tables) are systematically addressed. The content of a merged cell is replicated across the cells it spans, so that each logical data point has a clear and unambiguous association with its corresponding headers.

- Contextual Information Integration: In alignment with the HCAS framework, our preprocessing goes beyond merely including highlighted cells and their direct headers. We extract and integrate multi-level hierarchical headers and essential table metadata (such as the global title, issuing agency, and publication date). This enriched contextual information forms the comprehensive input necessary for the LLM to perform accurate and nuanced summarization.
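The first two preprocessing steps can be sketched as follows. This is an illustrative reading, not the authors' pipeline: it assumes a rectangular grid in which a merged header keeps its text in the top-left cell and leaves the rest blank, and the key format (`row path | column path`) and function names are invented for the example.

```python
from typing import Dict, List

Grid = List[List[str]]


def fill_merged_headers(grid: Grid, n_header_rows: int, n_header_cols: int) -> Grid:
    """Replicate merged header text into the blank cells it spans."""
    filled = [row[:] for row in grid]
    # Horizontal merges in header rows: copy from the left neighbour.
    for r in range(n_header_rows):
        for c in range(n_header_cols + 1, len(filled[r])):
            if not filled[r][c].strip():
                filled[r][c] = filled[r][c - 1]
    # Vertical merges in header columns: copy from the cell above.
    for c in range(n_header_cols):
        for r in range(n_header_rows + 1, len(filled)):
            if not filled[r][c].strip():
                filled[r][c] = filled[r - 1][c]
    return filled


def to_key_value(grid: Grid, n_header_rows: int, n_header_cols: int) -> Dict[str, str]:
    """Flatten the data cells into a dictionary keyed by their full header paths."""
    filled = fill_merged_headers(grid, n_header_rows, n_header_cols)
    records = {}
    for r in range(n_header_rows, len(filled)):
        row_key = " / ".join(filled[r][:n_header_cols])
        for c in range(n_header_cols, len(filled[r])):
            col_key = " / ".join(filled[h][c] for h in range(n_header_rows))
            records[f"{row_key} | {col_key}"] = filled[r][c]
    return records


# Toy table (hypothetical data) with merged year and region headers.
toy_grid = [
    ["Region", "Dept",  "2023",   "",      "2024",   ""],
    ["",       "",      "Budget", "Spent", "Budget", "Spent"],
    ["Seoul",  "R&D",   "1,200",  "1,100", "1,500",  "1,450"],
    ["",       "Admin", "300",    "280",   "320",    "310"],
]
for key, value in to_key_value(toy_grid, 2, 2).items():
    print(key, "=", value)
```

Each data cell ends up with a self-describing key such as `Seoul / R&D | 2024 / Budget`, so the model does not have to infer which header a value belongs to.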

4.2. Main Results

HCAS Performance Leadership

Superiority of Non-Fine-tuning Approaches with Advanced Prompting

Importance of Contextualized Prompting

Impact of LLM Scale

4.3. Ablation Study of HCAS Stages

Importance of Fluency and Readability Optimization (FRO)

Criticality of Explanatory Narrative Skeleton Construction (ENSC)

Foundation of Contextual Key Information Extraction (CKIE)

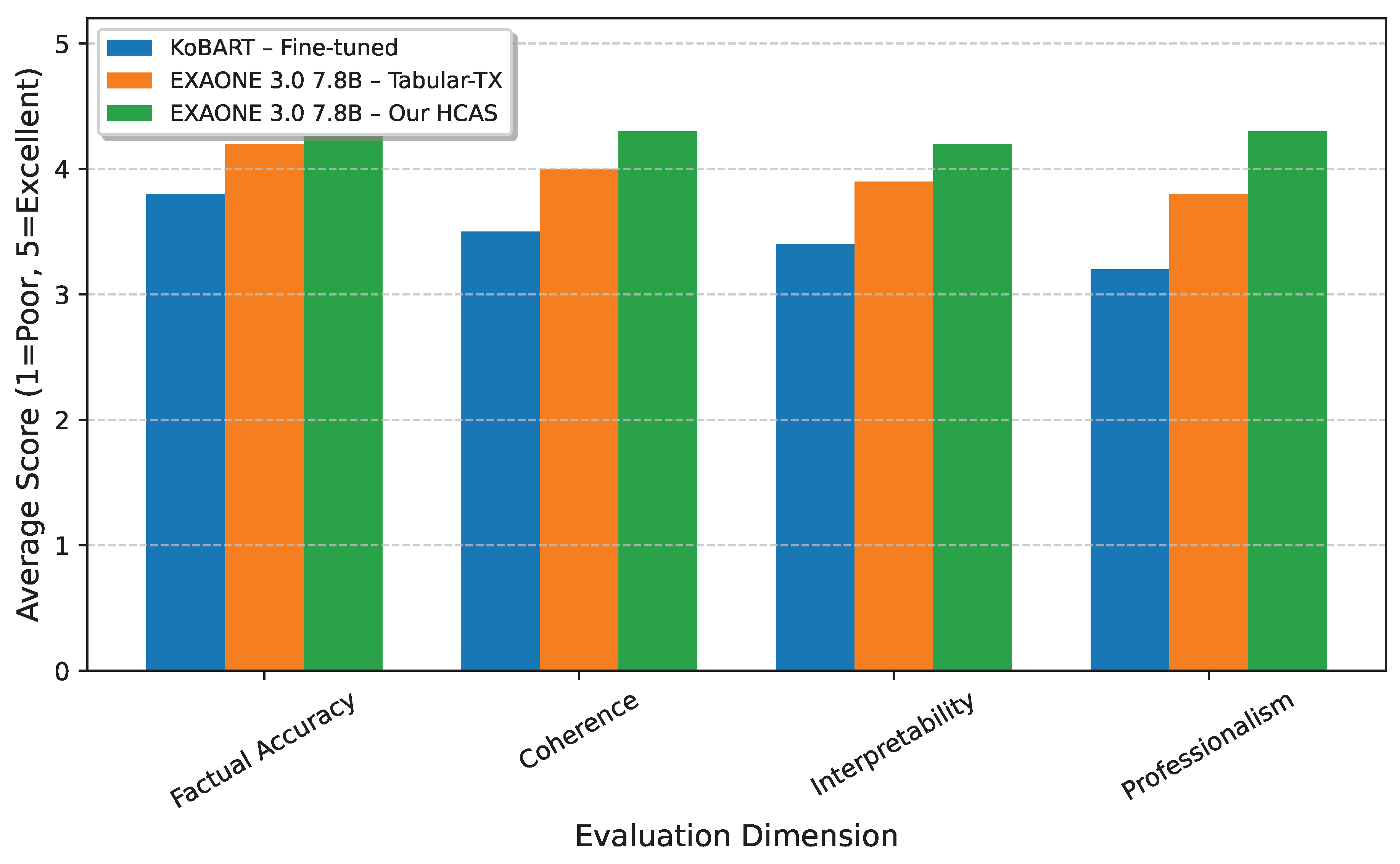

4.4. Human Evaluation

- Factual Accuracy: How well the summary reflects the data in the table, avoiding hallucinations or misinterpretations.

- Coherence: The logical flow and organizational structure of the summary.

- Interpretability: How easily a non-expert user can understand the meaning and implications of the highlighted data.

- Professionalism: The adherence to formal Korean administrative language conventions, tone, and appropriate terminology.

Overall Perceptual Quality

Enhanced Interpretability and Professionalism

Superior Factual Accuracy and Coherence

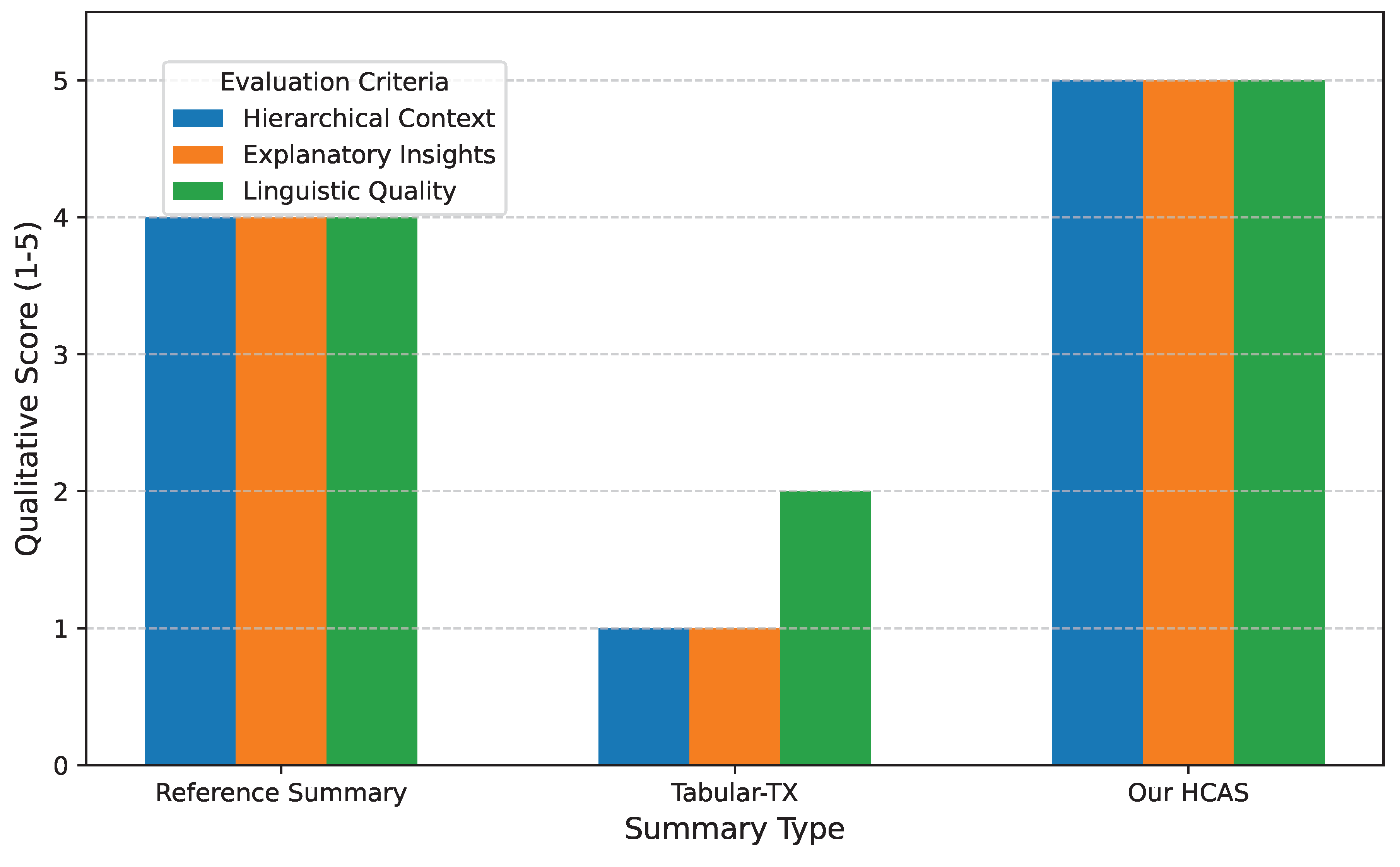

4.5. Qualitative Analysis and Case Studies

Detailed Analysis

1. Contextual Integration (CKIE Impact): HCAS successfully integrates not only the direct headers but also the hierarchical context and global metadata. The Tabular-TX baseline, while accurate, often misses these higher-level contextual elements, leading to a less complete summary. The full administrative entity is present in the HCAS summary, providing essential organizational context.

2. Explanatory Narrative (ENSC Impact): A key differentiator is the explanatory power of HCAS. While the raw data is "1,500," HCAS goes further and interprets this value in terms of its administrative implications. This aligns with the ENSC stage’s objective of building a narrative skeleton that includes trends, causal links, and significance. Even though no explicit numerical comparisons are available in the prompt for this specific example, HCAS still infers the implication of the value. Tabular-TX, in contrast, largely provides a factual restatement without deeper interpretation.

3. Linguistic Professionalism (FRO Impact): The language used by HCAS is notably more formal and aligned with Korean administrative discourse than the simpler phrasing of Tabular-TX. The inclusion of both the full term and the abbreviation (Research & Development (R&D)) demonstrates a sophisticated understanding of professional writing conventions. This reflects the successful application of the FRO stage, which focuses on domain-specific linguistic adaptation and synthesis.

4.6. Error Analysis

1. Factual Inaccuracy/Hallucination: The summary contains information that contradicts the table data or invents details not present in the table.

2. Incomplete Context: The summary fails to integrate essential direct or hierarchical contextual information, leading to an ambiguous or less informative statement.

3. Poor Coherence/Flow: The sentences or phrases within the summary do not connect logically, or the overall structure is disjointed.

4. Linguistic Awkwardness/Informality: The language used is grammatically incorrect, unnatural, overly simplistic, or lacks the formal tone expected in administrative documents.
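Per-category error rates of this kind can be tallied from annotated summaries as sketched below. The sketch assumes that one summary may carry several error labels at once, which would explain per-category percentages that add up to more than 100% for a single system. The short label names and the sample annotations are hypothetical.

```python
from collections import Counter
from typing import Dict, List, Set

# Hypothetical short labels for the four error categories.
CATEGORIES = ["factual", "context", "coherence", "linguistic"]


def error_rates(annotations: List[Set[str]]) -> Dict[str, float]:
    """Percentage of summaries that exhibit each error category."""
    total = len(annotations)
    counts = Counter(label for labels in annotations for label in labels)
    return {cat: (100.0 * counts[cat] / total if total else 0.0) for cat in CATEGORIES}


# Four annotated summaries: two are error-free, one has two error types.
sample = [{"factual"}, set(), {"context", "linguistic"}, set()]
print(error_rates(sample))
```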

Reduced Factual Inaccuracies

Comprehensive Contextual Understanding

Improved Coherence and Linguistic Quality

4.7. Efficiency and Resource Utilization

Reduced Data Requirements

Elimination of Training Time and Costs

Simplified Deployment and Maintenance

Inference Overhead

5. Conclusions

| Model / Setting | ROUGE-1 | ROUGE-L | BLEU | Avg Score |
|---|---|---|---|---|
| KoBART – Fine-tuned | 0.37 | 0.28 | 0.35 | 0.33 |
| EXAONE 3.0 7.8B – Basic ICL | 0.21 | 0.14 | 0.01 | 0.12 |
| EXAONE 3.0 7.8B – LoRA | 0.27 | 0.21 | 0.05 | 0.17 |
| EXAONE 3.0 7.8B – Tabular-TX | 0.51 | 0.39 | 0.44 | 0.45 |
| EXAONE 3.0 7.8B – Our HCAS | 0.52 | 0.40 | 0.46 | 0.46 |
| llama-3-Korean-Bllossom-8B – Basic ICL | 0.33 | 0.25 | 0.27 | 0.28 |
| llama-3-Korean-Bllossom-8B – Tabular-TX | 0.48 | 0.37 | 0.42 | 0.43 |
| llama-3-Korean-Bllossom-8B – Our HCAS | 0.49 | 0.38 | 0.43 | 0.44 |

| HCAS Configuration | ROUGE-1 | ROUGE-L | BLEU | Avg Score |
|---|---|---|---|---|
| HCAS (Full) | 0.52 | 0.40 | 0.46 | 0.46 |
| HCAS w/o FRO (Output from ENSC) | 0.49 | 0.38 | 0.42 | 0.43 |
| HCAS w/o ENSC (CKIE directly to FRO) | 0.45 | 0.35 | 0.38 | 0.39 |
| HCAS w/o CKIE (Simplified context) | 0.41 | 0.31 | 0.33 | 0.35 |

| Model / Setting | Factual Inaccuracy/Hallucination | Incomplete Context | Poor Coherence/Flow | Linguistic Awkwardness/Informality |
|---|---|---|---|---|
| KoBART – Fine-tuned | 18% | 35% | 22% | 30% |
| EXAONE 3.0 7.8B – Tabular-TX | 8% | 15% | 10% | 12% |
| EXAONE 3.0 7.8B – Our HCAS | 4% | 8% | 6% | 5% |

| Criterion | KoBART | LoRA | HCAS |
|---|---|---|---|
| Training Data Requirement | High | Medium | Low |
| Training Time | Days to Weeks (GPU) | Hours to Days (GPU) | None |
| GPU Memory (Training) | Very High (e.g., 24GB+) | Medium (e.g., 12-24GB) | None |
| GPU Memory (Inference) | Medium | Medium | Medium |
| Model Size (Adapted) | Full model parameters | Base model + LoRA adapters | Base model |
| Deployment Complexity | Requires adapted model | Requires base + adapters | Standard LLM API/inference |
| Cost (Development) | Very High | High | Low to Medium (API calls) |
| Cost (Inference) | Standard per token | Standard per token | Standard per token |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).