1. Motivation, positioning, and scope

“Essentially, all models are wrong, but some are useful.”

This manuscript does not propose a new physical theory, nor does it claim to unify quantum mechanics, classical dynamics, relativity, and thermodynamics at the level of a single state space. Instead, it proposes an operational meta-model that assembles predictions in a shared observable space. The contribution is therefore methodological: a disciplined way to combine existing regime-specific models, quantify confidence through soft weights, and detect when the available model catalog is insufficient.

Contributions (Incremental, Operational)

The contributions are modest and practical:

A common observable space is formalized via a feature map , and regime transitions are treated as decisions in (rather than forcing incompatible state spaces into a single master model).

A standard entropically-regularized soft selection rule (softmax/Gibbs form) is used to obtain convex weights from losses, augmented with a complexity penalty to mitigate overfitting.

A small set of physics-inspired knobs (decoherence/classicality, relativistic severity, thermodynamicity) is used as operational priors that bias weights toward plausible regimes.

A simple out-of-catalog diagnostic (surprise/residual monitoring) is proposed to flag when the chosen and the candidate model set fail to explain data.

Relation to Prior Work

The weighting mechanism is closely related to well-known ideas such as Bayesian model averaging, Gibbs posteriors/PAC-Bayes methods, and mixture-of-experts gating. The goal is not to replace these frameworks, but to provide an explicit physics-facing operational interface: (i) a shared observable space tailored to measurement constraints, (ii) regime priors encoded by calibrated knobs, and (iii) a simple diagnostic for model-set inadequacy. In this sense, the novelty is primarily in the packaging and operationalization for micro–macro and regime-transition problems.

Scope and Limitations

This framework is only as good as (a) the chosen observable map and (b) the candidate model catalog. The knobs are not fundamental constants; they are operational summaries that must be calibrated for each experimental context. Accordingly, the method should be read as a principled decision layer on top of existing models, not as a substitute for domain-specific mechanistic modeling.

2. Level of Unification: the Output Space and the Map

Let

X be the vector of observables to be predicted. Each formalism

produces a natural object

(trajectory, distribution, spectrum, quantum channel, etc.). A feature map is defined as

Typical examples:

for a distribution P.

for a sampled spectrum.

for sampled correlations.

In practice, .

2.1. The Choice of is not Neutral (an Explicit Operational Hypothesis)

Selecting and encodes which degrees of freedom are considered relevant in the micro–macro transition. In that sense, the coarse-graining implicit in is an operational hypothesis: it assumes that certain functionals (moments, spectra, correlations) capture what is essential for the regime of interest. This choice should be guided by invariances, scales, and measurement constraints.

3. Assembly: Convex Combination and Structure-Preserving Operators

3.1. Base Meta-Predictor (Convex)

A minimal assembler is defined by

3.2. Structured assembly (when constraints exist)

When the observable has structure (e.g., normalized distributions, PSD matrices, density matrices), a naive linear combination may violate constraints (positivity, normalization, etc.). In such cases, a structure-preserving assembly operator is used:

where

is chosen to preserve structure. Examples:

Distributions: convex mixture in the probability simplex (preserves normalization and non-negativity).

Density matrices: convex mixture of states (preserves positivity and trace 1).

Constrained observables: projection onto a valid convex set: .

Equation (

1) is used as the minimal reference, and (

2) as its natural extension.

4. Weights from Loss: Soft Selection with Regularization and Complexity

Let

be a total loss associated with model

i. Define:

4.1. Convex Interpretation (Minimum Loss + Entropy)

Rule (

3) solves

where

and the second term is entropic regularization.

4.2. Loss Definition in

If a reference

exists (measurement or “fine” simulation),

4.3. Complexity Penalty (AIC/BIC/MDL)

To reduce bias toward overly complex models,

where

can be AIC/BIC/MDL [

2,

3,

4].

4.4. Knobs as Calibrated Parametrizations

The functional forms used for are chosen for monotonicity, boundedness, and numerical stability. Other monotone parametrizations would be equally valid. In applications, these mappings should be calibrated (or learned) from validity data, measurement noise levels, and known scale separations.

5. Physics-Facing Priors from Knobs

Define dimensionless knobs (examples; operational use only):

Let

. A minimal factorized prior is:

with

.

5.1. Combining Prior + Loss

6. Hardening: Knob Coupling and Out-of-Catalog Monitoring

6.1. Coupling Among Knobs (Validity Covariance)

Knobs

are often correlated in real systems. Let

and introduce a covariance matrix

. A Mahalanobis-type penalty is defined by

where

is an operational center (possibly context-dependent).

6.3. Surprise as a Diagnostic (Not a Physical Law)

Define residual loss

and

The surprise indicator is intended as an engineering diagnostic summarizing persistent mismatch between assembled predictions and reference observations. No claim is made that it is, by itself, a thermodynamic entropy production law; any thermodynamic reading should be treated as a hypothesis to be tested in open-system settings.

7. Minimal Case Study Template and a NISQ Acid Test

7.1. Minimal Reproducibility Checklist

Any case study should report: (i) the definition of and the dimension of , (ii) the metric d used for loss, (iii) the candidate models compared, (iv) the calibration procedure for and for knobs, and (v) weight trajectories with residual monitoring.

7.2. NISQ Acid Test: Critical Decoherence

For a qubit with dephasing characterized by

,

and

depends on effective mixing/noise. The operational critical time is defined by

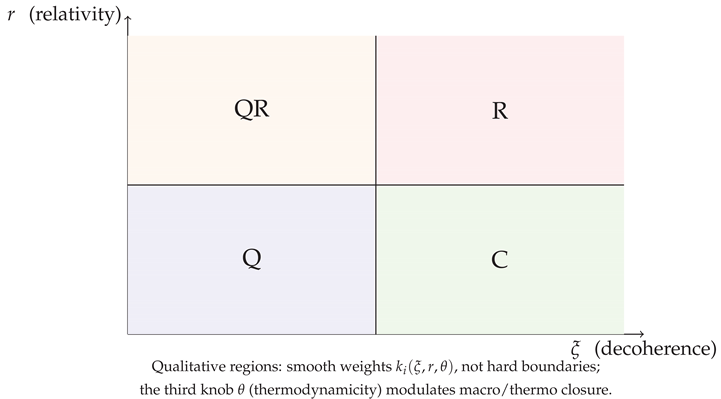

8. Qualitative Regime Figure

9. Conclusions

An operational meta-model for regime-aware prediction assembly has been presented. The framework combines (i) a shared observable space, (ii) soft loss-based weighting with regularization and complexity control, (iii) physics-facing regime priors via calibrated knobs, and (iv) a diagnostic for persistent out-of-catalog mismatch. The proposal is deliberately incremental: it is intended as a practical decision layer on top of existing mechanistic models, with clear calibration and validation hooks.

References

- Box, G. E. P.; Draper, N. R. Empirical Model-Building and Response Surfaces; John Wiley & Sons, 1987. [Google Scholar]

- Akaike, H.

A new look at the statistical model identification

. IEEE Transactions on Automatic Control 1974, 19(6), 716–723. [Google Scholar] [CrossRef]

- Schwarz, G.

Estimating the dimension of a model

. Annals of Statistics 1978, 6(2), 461–464. [Google Scholar] [CrossRef]

- Rissanen, J.

Modeling by shortest data description

. Automatica 1978, 14(5), 465–471. [Google Scholar] [CrossRef]

- Hoeting, J. A.; Madigan, D.; Raftery, A. E.; Volinsky, C. T.

Bayesian model averaging: A tutorial

. Statistical Science 1999, 14(4), 382–417. [Google Scholar] [CrossRef]

- Catoni, O. PAC-Bayesian Supervised Classification: The Thermodynamics of Statistical Learning, IMS Lecture Notes–Monograph Series, 2007.

- Amari, S.-I. Information Geometry and Its Applications; Springer, 2016. [Google Scholar]

- Jacobs, R. A.; Jordan, M. I.; Nowlan, S. J.; Hinton, G. E.

Adaptive mixtures of local experts

. Neural Computation 1991, 3(1), 79–87. [Google Scholar] [CrossRef] [PubMed]

- Zurek, W. H. Decoherence, einselection, and the quantum origins of the classical. Rev. Mod. Phys. 2003, 75, 715–775. [Google Scholar] [CrossRef]

- Breuer, H.-P.; Petruccione, F. The Theory of Open Quantum Systems; Oxford University Press, 2002. [Google Scholar]

- Jaynes, E. T. Information Theory and Statistical Mechanics. Phys. Rev. 1957, 106, 620–630. [Google Scholar] [CrossRef]

- Zwanzig, R. Nonequilibrium Statistical Mechanics; Oxford University Press, 2001. [Google Scholar]

|

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content. |

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).