A. Dataset

This study adopts the ALFRED dataset as the primary evaluation benchmark. The dataset is built on an interactive indoor simulation environment and provides complex interaction sequences across multiple scenes, tasks, and action chains. It covers navigation, object manipulation, task planning, and other types of instruction execution. The layout, object states, and interaction outcomes in the environment are highly variable. Agents must handle significant uncertainty and long-term dependencies during execution. These characteristics align well with the research objectives of adaptive task decomposition and strategy updating in dynamic environments.

Tasks in ALFRED consist of high-level goals and multi-step subtasks. Agents must understand the semantics of the instructions and perform long-horizon reasoning, state tracking, and behavioral adjustment in a dynamic environment. The dataset includes rich scene states, visual observations, action sequences, and natural language descriptions. These elements provide strong supervision for building context understanding modules driven by large models. In addition, the interactivity and variability of objects, together with the diversity of task chains, make ALFRED a suitable platform for evaluating structured decision making.

The dataset is fully open source and offers a unified simulation interface and a reproducible environment. This design enables fair comparison under the same task configurations and uncertain conditions. Its dynamic properties, cross-task transfer settings, and high-level semantic structures required for task decomposition match the focus of this study. Research based on this dataset can verify the reasoning consistency of agents in complex environments. It can also evaluate their sensitivity to environmental changes and their ability to evolve strategies in long-horizon tasks.

B. Experimental Results

This paper first conducts a comparative experiment, and the experimental results are shown in Table 1.

Table 1 shows a clear stratification of performance across methods under dynamic and uncertain environments. Traditional methods face evident limitations when handling changing environments, long task chains, and complex interactions. Their task success rate and path efficiency remain low because they are insufficiently sensitive to environmental variations, have limited global semantic understanding, and cannot reconstruct task structures in real time. Overall performance improves from V2xpnp and Muma tom to SciAgents and Mapcoder. However, these methods still struggle to maintain stable task completion when environmental disturbances are frequent and task structures change dynamically. This trend reflects the weaknesses of traditional architectures in long-horizon reasoning and structured planning.

In terms of path execution efficiency, Path Efficiency increases as model complexity and semantic understanding improve. Yet all methods still show delayed responses to environmental changes and limited understanding of task chain structures. V2xpnp and Muma tom often fail to adjust their trajectories when the environment shifts, which leads to low path efficiency. Even SciAgents and Mapcoder exhibit trajectory drift, redundant actions, and inconsistent strategies in tasks with long-term dependencies. These observations indicate that strategies relying only on static planning or local adjustments cannot maintain globally consistent behavior in complex scenarios.
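The text does not give a formula for Path Efficiency. As a hedged illustration, the sketch below assumes it follows the path-length-weighted score used in the ALFRED benchmark, where a successful episode is discounted by how much longer the agent's trajectory is than the expert demonstration. The function and parameter names are hypothetical and may not match the metric used in this paper.

```python
from typing import Iterable, Tuple


def path_weighted_score(success: float, expert_len: int, agent_len: int) -> float:
    """Success discounted by length: s * L_expert / max(L_expert, L_agent)."""
    if expert_len <= 0 or agent_len < 0:
        raise ValueError("expert_len must be positive and agent_len non-negative")
    return success * expert_len / max(expert_len, agent_len)


def mean_path_efficiency(episodes: Iterable[Tuple[float, int, int]]) -> float:
    """Average the score over (success, expert_len, agent_len) tuples."""
    scores = [path_weighted_score(s, e, a) for s, e, a in episodes]
    if not scores:
        raise ValueError("at least one episode is required")
    return sum(scores) / len(scores)
```

Under this assumption, an agent that succeeds but takes twice as many steps as the expert scores 0.5, so redundant actions and trajectory drift lower the score even when the task is completed.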

The Error Recovery Rate provides further insight into differences in adaptive strategy capability. Most baseline methods do not include an explicit strategy updating mechanism. When deviations occur during execution or when object states or environmental conditions change, the agents often fail to recover the task sequence effectively. As a result, their recovery rates remain low. In contrast, models with adaptive strategy updating can rapidly reconstruct task understanding and adjust action sequences when facing errors or environmental shifts. Their recovery rates are significantly higher than those of traditional methods. This pattern highlights the importance of strategy updating modules for improving long-term robustness.
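The Error Recovery Rate is not formally defined in this section. One plausible reading, shown here only as an assumption, is the fraction of episodes containing at least one execution deviation that the agent still completes successfully. The `Episode` record and its fields are hypothetical.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class Episode:
    deviated: bool   # assumed flag: at least one failed or off-plan action occurred
    succeeded: bool  # the task goal was eventually satisfied


def error_recovery_rate(episodes: Sequence[Episode]) -> float:
    """Share of deviated episodes that still end in success."""
    deviated = [e for e in episodes if e.deviated]
    if not deviated:
        return float("nan")  # undefined when no deviation was observed
    return sum(e.succeeded for e in deviated) / len(deviated)
```

Episodes without any deviation are excluded from the denominator, so the metric isolates recovery behavior from plain task success.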

The comparison of Action Prediction Entropy further illustrates differences in decision stability among methods. Baseline methods often show dispersed action selection distributions, indicating high uncertainty in their strategies under dynamic environments. As environmental complexity increases, the randomness and inconsistency of their action outputs become more pronounced. The entropy score of the proposed method is the lowest, which shows that its strategy maintains a more stable action distribution under environmental disturbances. It relies less on random search and more on structured reasoning and feedback-driven policy adaptation. Overall, the results demonstrate that adaptive task decomposition and dynamic strategy updating can significantly enhance task completion, decision stability, and recovery capability in uncertain environments. These improvements align closely with the core objectives of the proposed model.
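For reference, the entropy of an action distribution can be computed as the Shannon entropy of the policy's per-step action probabilities, averaged over an episode. The sketch below assumes that reading of Action Prediction Entropy. The helper names and the choice of natural-log units are assumptions.

```python
import math
from typing import Sequence


def action_entropy(probs: Sequence[float]) -> float:
    """Shannon entropy (in nats) of one action distribution."""
    total = sum(probs)
    if total <= 0 or any(p < 0 for p in probs):
        raise ValueError("probabilities must be non-negative and not all zero")
    # Normalize so that unnormalized scores are also accepted.
    return -sum((p / total) * math.log(p / total) for p in probs if p > 0)


def mean_action_entropy(step_distributions: Sequence[Sequence[float]]) -> float:
    """Average entropy over the per-step distributions of an episode."""
    if not step_distributions:
        raise ValueError("at least one step is required")
    return sum(action_entropy(p) for p in step_distributions) / len(step_distributions)
```

A policy that always commits to one action has entropy 0, while a uniform policy over k actions has entropy ln k, so lower values correspond to the more concentrated action distributions described above.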

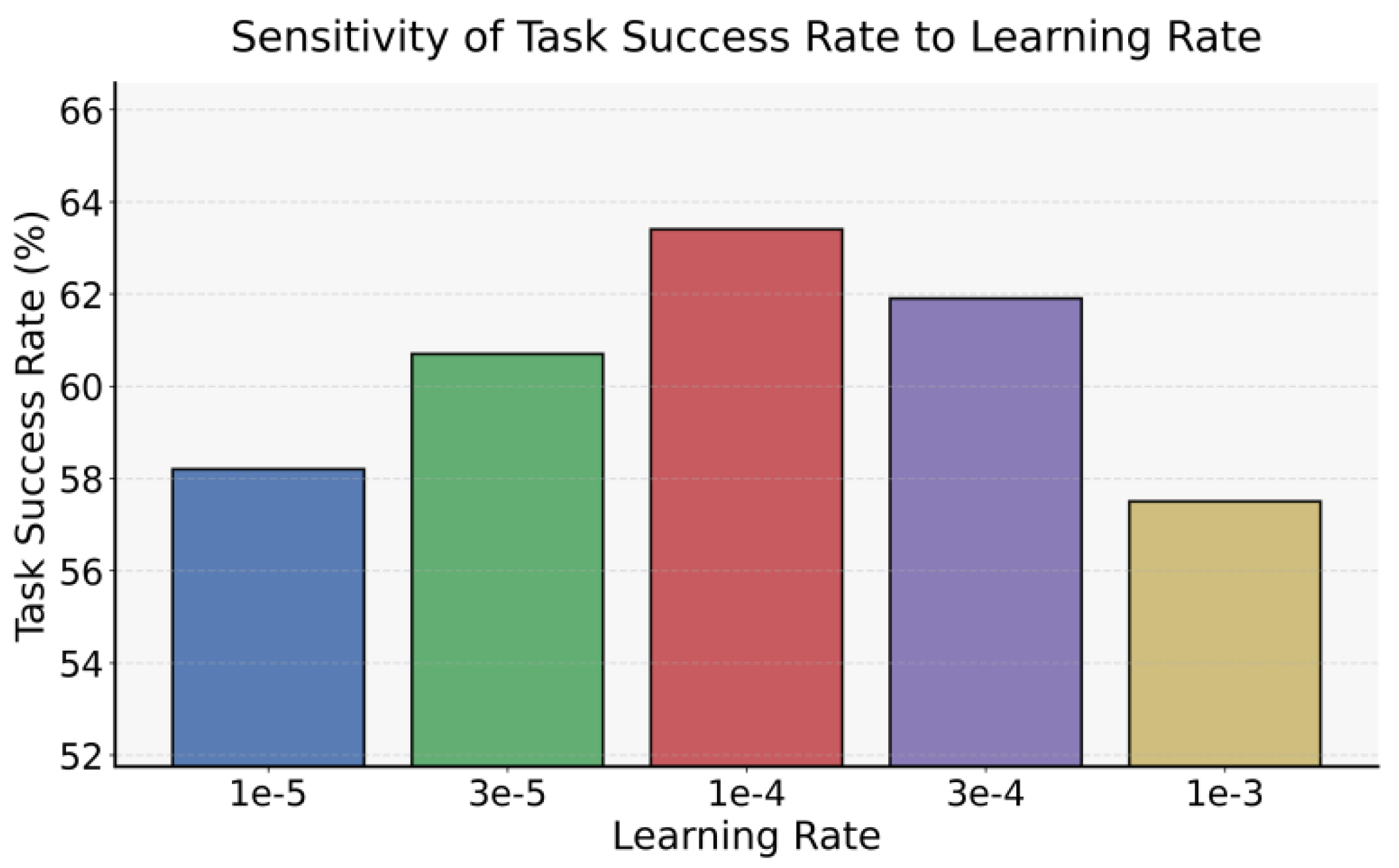

In the adaptive task decomposition and strategy update framework, the learning rate is a crucial hyperparameter that directly affects the convergence speed of the strategy and the stability of task execution. When the agent continuously iterates its strategy in a dynamic and uncertain environment, a learning rate that is too large or too small impairs its ability to respond to environmental changes and maintain the task chain. A systematic sensitivity analysis over different learning rate settings is therefore conducted to clarify their impact on task success rate and to guide hyperparameter selection. The experimental results are shown in Figure 2.

The figure illustrates that the choice of learning rate has a clear impact on the stability of task execution in dynamic and uncertain environments. A very small learning rate slows the update process. The agent cannot adapt to ongoing changes in the environment. A very large learning rate causes unstable updates and fluctuating behavior, which prevents the formation of a reliable policy. A moderate learning rate achieves a balance between update speed and stability. This balance allows the agent to remain responsive to environmental variation while maintaining consistent behavior, which results in higher task success rates.
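The three regimes described above can be illustrated with a toy problem that is unrelated to the paper's actual training setup: plain gradient descent on f(x) = x². The learning rate values below are arbitrary choices for the illustration, not the settings used in the experiments.

```python
def final_distance(lr: float, steps: int = 50, x0: float = 1.0) -> float:
    """Run gradient descent on f(x) = x**2 and return |x| after `steps` updates."""
    x = x0
    for _ in range(steps):
        grad = 2.0 * x  # derivative of x**2
        x -= lr * grad
    return abs(x)


if __name__ == "__main__":
    # Too small, moderate, and too large step sizes (illustrative values only).
    for lr in (0.001, 0.3, 1.1):
        print(f"lr={lr}: distance from optimum = {final_distance(lr):.3g}")
```

In this toy setting the smallest rate barely moves from the starting point, the moderate rate converges, and the largest rate overshoots on every step and diverges, which mirrors the qualitative pattern reported for the agent.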

The changes in path efficiency further reveal how learning rate influences long sequence execution. In scenarios with long task chains and frequent environmental shifts, insufficient policy updates cause redundant actions and increasing trajectory deviation. Excessively rapid updates can repeatedly overwrite the execution path and weaken global planning consistency. When the learning rate falls within a suitable range, the agent preserves stable behavior while correcting local errors. This leads to improved path efficiency and reflects the positive effect of adaptive task decomposition and strategy updating on execution quality.

The results for error recovery and action prediction entropy show that learning rate directly affects recoverability and decision stability. Under disturbances or task drift, a low learning rate prevents timely reconstruction of the task structure. A high learning rate makes unnecessary resets more likely, which disrupts the recovery process. With an appropriate learning rate, the model adjusts its policy based on feedback in a more structured and controlled manner. Recovery performance improves. At the same time, lower action entropy indicates more stable and consistent decision-making. These characteristics help the agent maintain reliable performance in complex and changing environments.

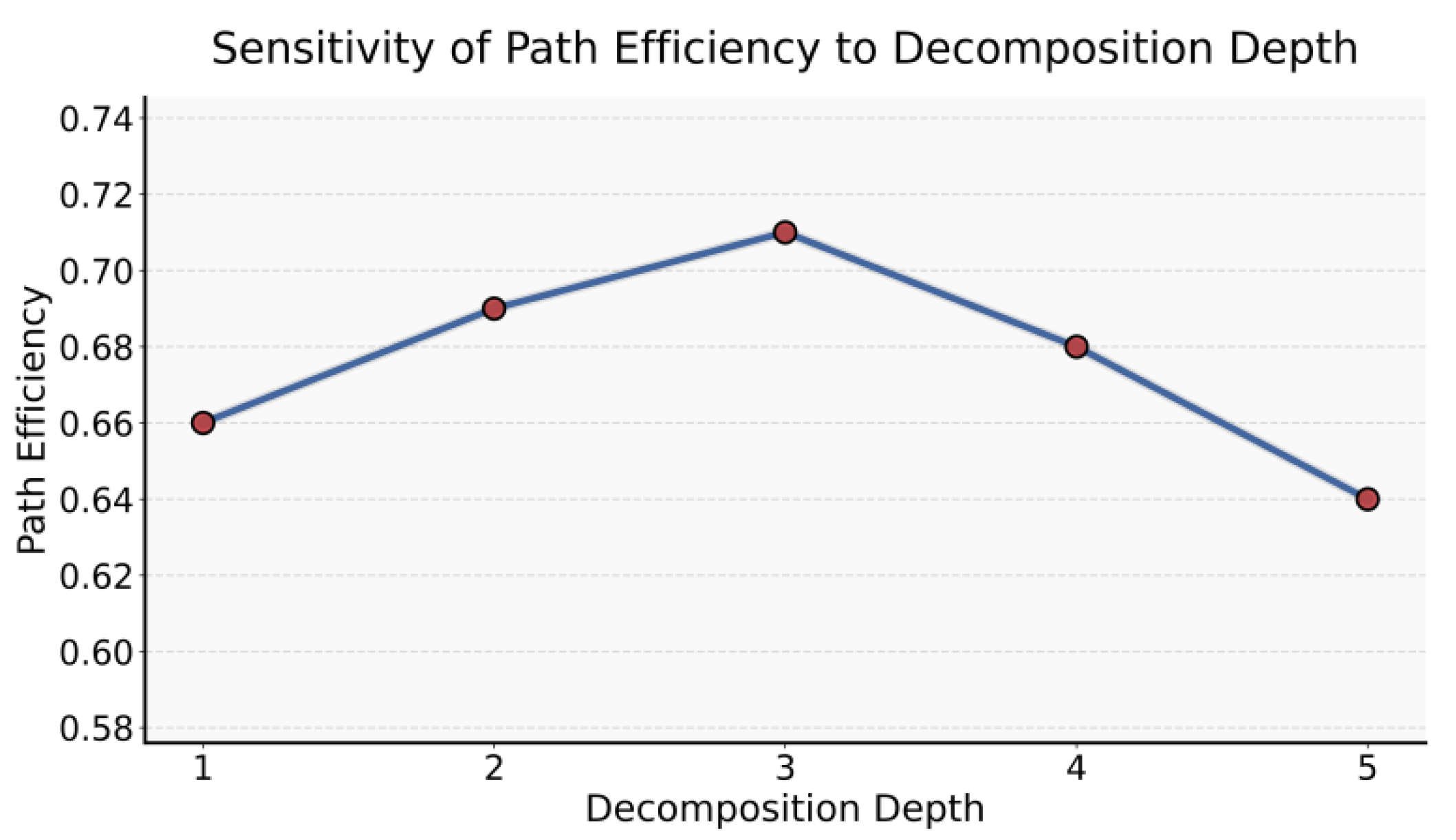

The number of task decomposition layers determines the granularity at which the agent structures complex tasks, and this granularity affects the accuracy of path planning and the redundancy of action sequences. With too few decomposition layers, the agent struggles to capture fine-grained operational logic, while too many layers increase the computational burden and reduce path execution efficiency. It is therefore necessary to examine systematically how the number of decomposition layers affects path efficiency, in order to determine a suitable decomposition depth for dynamic and uncertain environments. The experimental results are shown in Figure 3.

The curve in the figure shows that the impact of task decomposition depth on path efficiency follows a clear structural pattern. As the decomposition granularity shifts from coarse to more refined levels, path efficiency first increases and then decreases. This indicates that deeper decomposition is not always better, and shallow decomposition is not always preferable. There exists an optimal range that matches the dynamics of the environment and the complexity of the task. When the decomposition depth is low, the structural guidance available to the agent is limited. As a result, the agent struggles to maintain a stable decision sequence under complex environmental changes. This leads to redundant actions and frequent turning in path execution.

Increasing the decomposition depth provides the agent with more complete hierarchical cues. This allows the agent to inherit contextual information more accurately during reasoning and to reduce ineffective actions. Path efficiency reaches its peak within this range. However, the improvement does not continue indefinitely. When the decomposition depth exceeds a reasonable threshold, the task structure becomes overly fragmented. The amount of structural information that must be processed at each step increases. This slows the agent’s response to dynamic and uncertain conditions and adds unnecessary reasoning overhead during execution.
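To make the notion of decomposition depth concrete, the following minimal sketch represents a task as a tree and returns the subtasks the agent would track when decomposition stops at a given depth. The `Task` structure, the `plan_at_depth` helper, and the example household task are hypothetical and are not taken from the paper's implementation.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Task:
    name: str
    children: list[Task] = field(default_factory=list)


def plan_at_depth(task: Task, max_depth: int) -> list[str]:
    """Names of the subtasks tracked when decomposition stops at max_depth."""
    if max_depth == 0 or not task.children:
        return [task.name]
    plan: list[str] = []
    for child in task.children:
        plan.extend(plan_at_depth(child, max_depth - 1))
    return plan


# Illustrative task tree (an assumption, not an ALFRED annotation).
goal = Task("put a clean mug in the coffee maker", [
    Task("clean the mug", [Task("pick up mug"), Task("rinse mug in sink")]),
    Task("place mug in coffee maker", [Task("go to coffee maker"), Task("put mug down")]),
])
```

Calling `plan_at_depth(goal, d)` for increasing `d` returns progressively longer plans. The number of nodes the agent must track grows with depth, which is the structural load the paragraph above refers to.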

At deeper decomposition levels, excessive structural load may reduce the agent’s ability to adjust its strategy when abrupt environmental changes occur. For example, when object positions shift or interference events appear, too many structural nodes may delay the triggering of policy updates. This delay causes the planning process to lag behind the environment, which decreases path efficiency. This observation suggests that task decomposition and strategy updating must operate together to maintain efficient behavior in dynamic scenarios.

Overall, the results show that an appropriate decomposition depth is essential for building large model agents with adaptive capabilities. A moderate number of decomposition layers achieves a balance between structural guidance and reasoning burden. It allows the agent to capture fine-grained task logic while avoiding the reaction slowdown caused by structural overload. These findings further support the importance of the adaptive task decomposition framework proposed in this work. Dynamically adjusting structural granularity based on environmental variation and task demands helps maintain stable and efficient path execution under uncertainty.

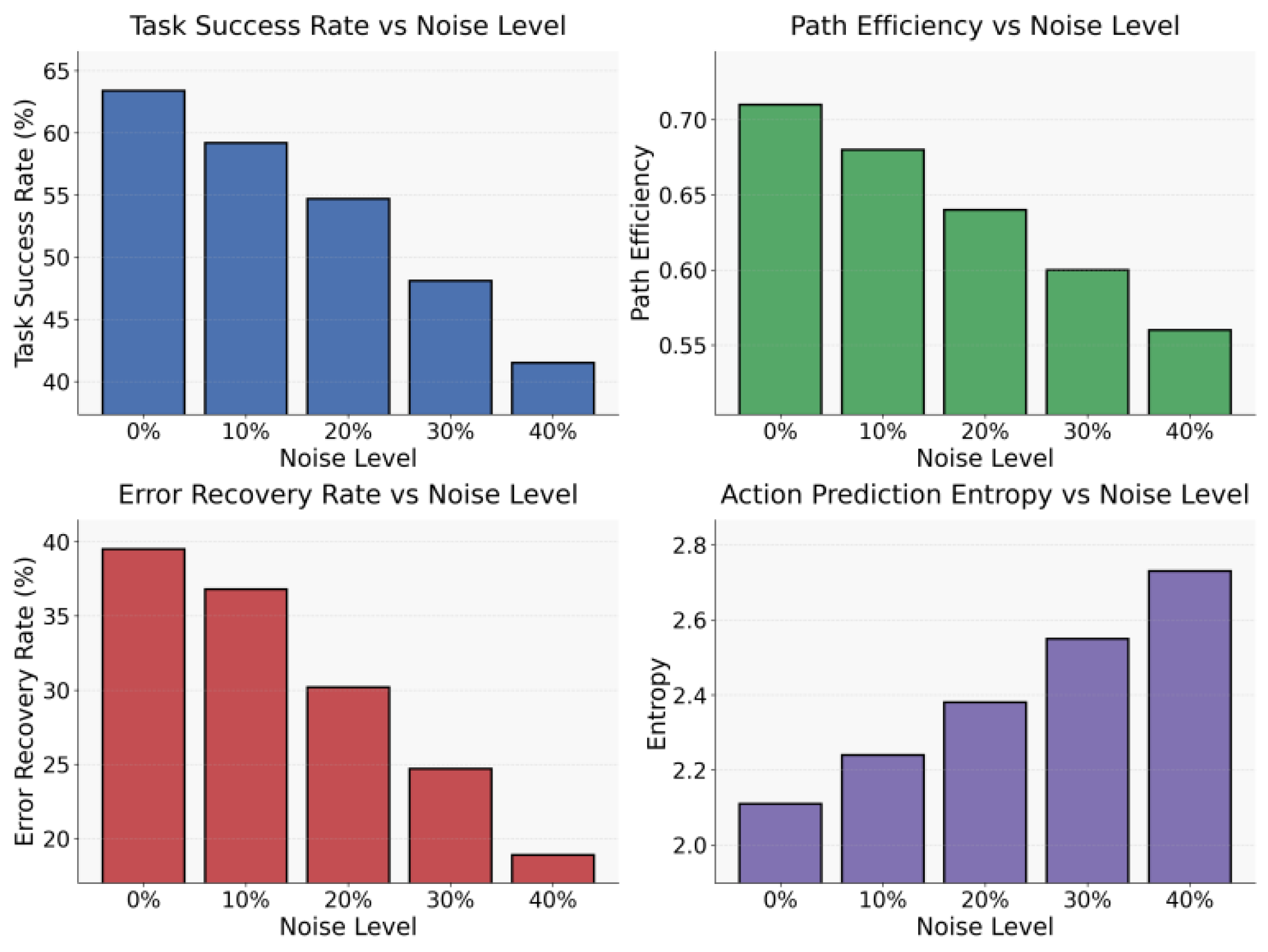

This paper also presents the impact of different levels of perceptual noise on the experimental results, which are shown in Figure 4.

The four subplots together show that increasing perceptual noise significantly weakens task execution quality in dynamic and uncertain environments. As noise levels rise, state recognition, scene understanding, and action feedback all become disturbed. These disruptions lead to biased environmental representations. Since the perception module provides the input foundation for task decomposition and policy generation, corrupted input signals affect both structural inference and subsequent decision updates. As a result, task success rate and path efficiency decline steadily.
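The section does not specify how perceptual noise is injected. A common choice, assumed here purely for illustration, is additive zero-mean Gaussian noise on the observation features, with the standard deviation `sigma` acting as the noise level. All names below are hypothetical.

```python
import random
from typing import List, Sequence


def add_gaussian_noise(features: Sequence[float], sigma: float,
                       rng: random.Random) -> List[float]:
    """Return a copy of `features` with zero-mean Gaussian noise of std `sigma`."""
    return [x + rng.gauss(0.0, sigma) for x in features]


def mean_abs_deviation(clean: Sequence[float], noisy: Sequence[float]) -> float:
    """Average absolute difference between clean and perturbed features."""
    return sum(abs(c - n) for c, n in zip(clean, noisy)) / len(clean)
```

Passing a seeded `random.Random` instance keeps the perturbation reproducible across noise levels, so differences in downstream metrics can be attributed to `sigma` rather than to the random draw.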

The changes in path efficiency further reveal how noise damages structured planning. When environmental features received by the agent are inaccurate or distorted, the agent is more likely to deviate from planned routes and produce redundant or repeated actions. Even with a certain level of reasoning capability, the agent cannot maintain consistent planning under misleading perceptual inputs. Therefore, path efficiency decreases as noise increases, which reflects the difficulty of sustaining stable execution strategies under uncertain observations.

The variation in error recovery ability highlights the fragility of strategy updating mechanisms in high noise settings. With higher noise injection, the agent deviates from the correct action path more easily. Its ability to adjust strategies through environmental feedback also weakens. This indicates that under heavy noise, the agent becomes slower at identifying errors during reasoning. Strategy updates fail to trigger in time, and the recovery rate shows a persistent downward trend. This finding underscores the importance of strengthening policy updating modules to enhance robustness.

The rising trend of action prediction entropy reflects increasing decision uncertainty. Higher noise creates greater ambiguity in environmental interpretation. The action distribution becomes more dispersed and unstable. The agent exhibits stronger randomness when selecting actions and struggles to maintain a stable reasoning chain about the task structure. Higher entropy values indicate weaker operational preferences and greater sensitivity to momentary disturbances. Overall, these results suggest that perceptual quality is critical for maintaining accurate task decomposition and effective strategy updating. Improving perceptual robustness is therefore essential for building highly stable large model agents.