1. Introduction

The rapid advancement of artificial intelligence technologies, particularly large language models (LLMs) such as OpenAI’s GPT series, Google’s Gemini, and Anthropic’s Claude, has fundamentally transformed human–machine interaction across numerous domains [1,2,3]. In education, these models offer capabilities ranging from automated code generation and real-time tutoring to personalized feedback and adaptive content delivery [4,5]. Concurrently, blockchain technology has emerged as a transformative force in educational settings, enabling secure credential verification, decentralized certificate management, and tamper-proof academic records [6,7]. The European Commission’s 2017 report indicated that blockchain-based credential verification systems could reduce diploma fraud by 78% [8], and pioneering initiatives such as MIT’s Blockcerts [9] and Sony Global Education’s decentralized certification platform [10] have demonstrated the practical viability of these approaches. More recently, Centeno Cuya and Palaoag [11] confirmed blockchain’s potential as a universal solution against credential fraud, and Cardenas-Quispe and Pacheco [12] experimentally validated a hybrid blockchain prototype for academic credential authenticity.

Developing blockchain-based educational systems demands proficiency across two fundamentally distinct domains: technical expertise, encompassing blockchain protocol knowledge (Ethereum, Hyperledger), smart contract development (Solidity, Vyper), cryptography, gas optimization, and Web3 integration [13,14]; and pedagogical expertise, involving educational theory (constructivism, cognitivism, connectivism), instructional design, learner needs analysis, accessibility, and assessment [15,16]. A systematic literature review following PRISMA guidelines (N = 247 articles, Web of Science and Scopus, 2018–2025) revealed that 73% of blockchain-education research is technically oriented, only 12% adopts a pedagogical design perspective, and the remaining 15% addresses general conceptual frameworks. According to OECD TALIS 2018 data, merely 23% of teachers possess all three TPACK knowledge types simultaneously [17], a proportion presumably even lower in the blockchain context. This competency gap carries tangible consequences: a survey of 50 EdTech companies in Turkey reported that 68% of blockchain projects experienced delays averaging 4.2 months due to competency mismatches, resulting in a mean 35% cost overrun [18].

Despite the growing importance of human–AI collaboration, the existing literature overwhelmingly adopts a model-centric perspective, focusing on LLM capabilities rather than the human factors that shape interaction quality [19,20]. Four fundamental gaps can be identified. First, LLM performance research predominantly examines model abilities [1,21], and prompt engineering studies offer general principles [22,23] without empirically investigating how user competency levels affect outcomes; although the Dreyfus and Dreyfus [24] five-stage competency model has been validated in nursing [25] and teaching [26], it has not been applied to AI-assisted task performance. Second, current AI recommendation systems, whether collaborative filtering, content-based, or hybrid [27,28], predominantly employ similarity-based approaches, neglecting the insight from Vygotsky’s [29] Zone of Proximal Development (ZPD) that optimal learning occurs slightly above the learner’s current level, and from Nonaka and Takeuchi’s [30] knowledge transfer theory that complementary expertise is central to knowledge creation. Third, studies at the blockchain–education intersection typically treat technical and pedagogical expertise in isolation [13,31,32], leaving the integration prescribed by Mishra and Koehler’s [16] TPACK framework insufficiently explored. Fourth, mathematical modeling in human–AI collaboration research remains limited, with studies relying primarily on qualitative methods [33] or basic statistical analyses. A systematic intersection analysis confirms the scope of these gaps: blockchain ∩ education yielded N = 247 studies; blockchain ∩ AI, N = 29; blockchain ∩ competency, N = 12; education ∩ AI ∩ competency, N = 9; and blockchain ∩ education ∩ AI ∩ competency, N = 0.

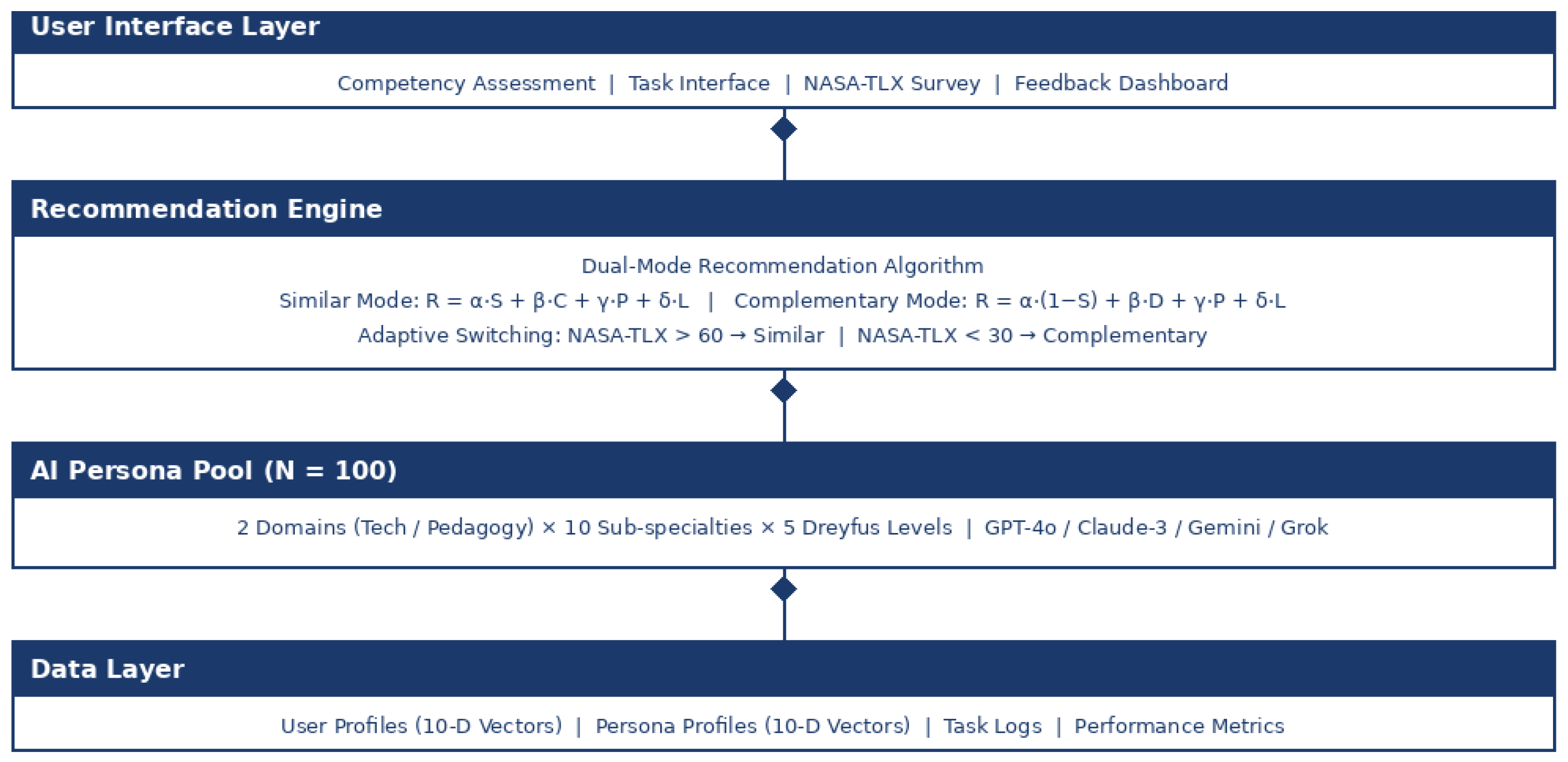

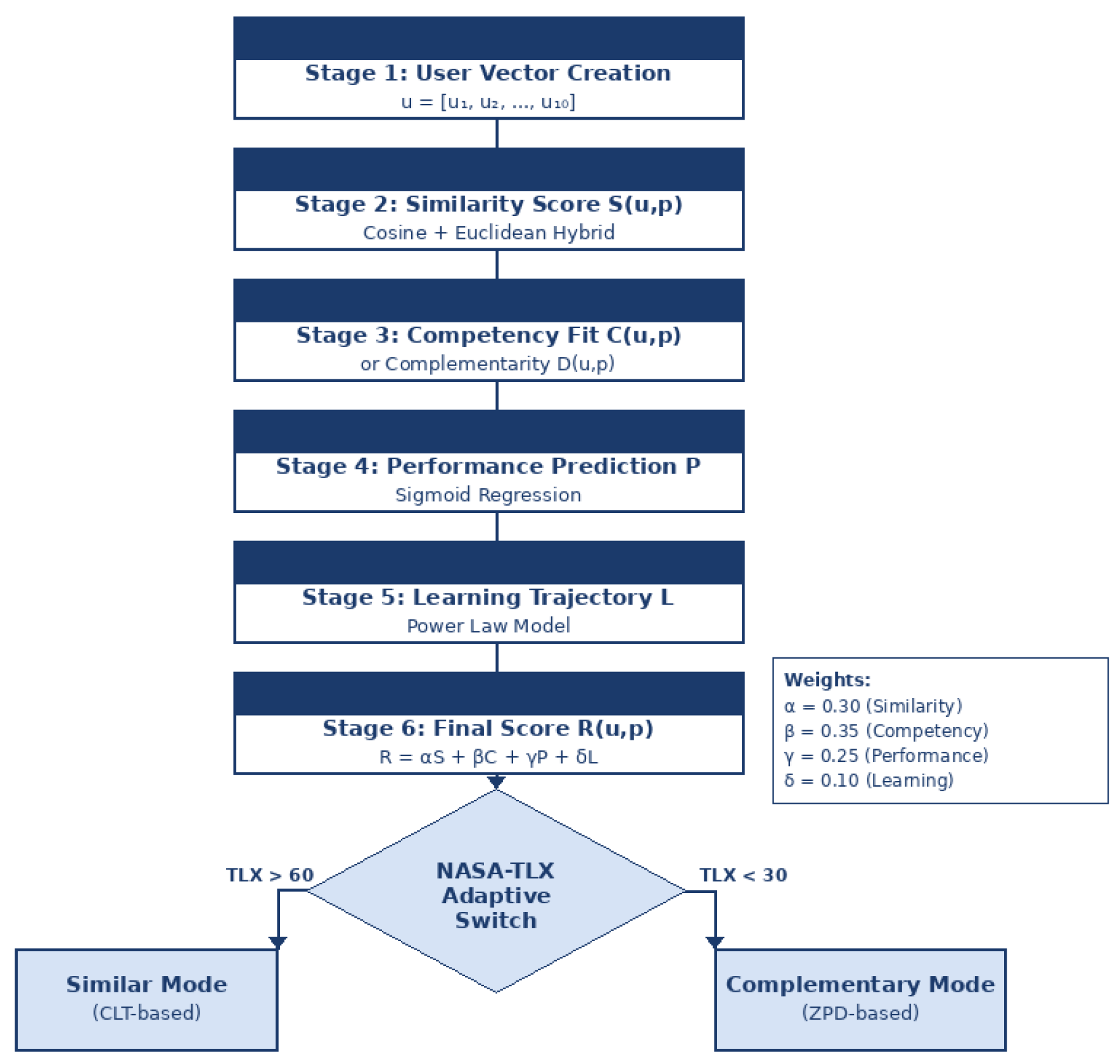

To address these gaps, this study develops, implements, and evaluates the Persona-In-The-Loop (PITL) system, a web-based adaptive AI persona recommendation framework integrating five theoretical foundations. The Dreyfus and Dreyfus [24] five-stage model (Novice, Advanced Beginner, Competent, Proficient, Expert) provides the taxonomy for classifying both user and persona competency levels. Vygotsky’s [29] ZPD furnishes the theoretical basis for a complementary recommendation mode, in which AI personas are assigned one to two Dreyfus levels above the user to facilitate learning within the ZPD, operationalized through a Gaussian match function [35,36]. Sweller’s [37] Cognitive Load Theory (CLT) underpins a similarity-based mode, in which matching users with comparable-level personas minimizes extraneous cognitive load; the expertise reversal effect [40] further supports this differentiated approach, as detailed guidance beneficial for novices can generate redundant load for experts [38,39]. Nonaka and Takeuchi’s [30] SECI model guides knowledge decomposition in the vector space, enabling nuanced competency profiling across procedural, declarative, and conditional knowledge. Finally, the TPACK framework [16] determines the dual-domain structure of the 100-persona pool, comprising 50 technology-oriented and 50 pedagogy-oriented personas organized across ten sub-specialties and five Dreyfus levels. These five theories function as an integrated system: Dreyfus provides classification, ZPD and CLT supply opposing but complementary matching strategies, SECI guides knowledge representation, and TPACK structures the domain architecture.
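The Gaussian match function mentioned above can be illustrated with a minimal sketch. The exact formulation used in PITL is not given in this section, so the target offsets (0 levels for similar mode, 1.5 levels for complementary mode, i.e., midway between "one to two levels above") and the width `sigma` are assumptions chosen only for illustration, as are the function names.

```python
import math

# Dreyfus levels indexed 1..5, as named in the text.
DREYFUS_LEVELS = ["Novice", "Advanced Beginner", "Competent", "Proficient", "Expert"]

# ASSUMPTION: target gap (persona level minus user level) per mode.
# 0.0 means "same level"; 1.5 sits between one and two levels above the user.
TARGET_OFFSET = {"similar": 0.0, "complementary": 1.5}


def gaussian_match(user_level: int, persona_level: int,
                   target_offset: float, sigma: float = 0.75) -> float:
    """Return a score in (0, 1] that peaks when the level gap equals target_offset.

    sigma is a hypothetical width parameter, not a value from the study.
    """
    gap = persona_level - user_level
    return math.exp(-((gap - target_offset) ** 2) / (2 * sigma ** 2))


def rank_personas(user_level: int, mode: str) -> list[tuple[str, float]]:
    """Rank the five persona levels for a user, best match first."""
    offset = TARGET_OFFSET[mode]
    scored = [
        (name, gaussian_match(user_level, level, offset))
        for level, name in enumerate(DREYFUS_LEVELS, start=1)
    ]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)
```

Under these assumptions, a Novice user in complementary mode is matched most strongly with Advanced Beginner and Competent personas (which tie), whereas in similar mode the Novice persona ranks first. An Expert user has no higher level available, so the Expert persona ranks first in both modes.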

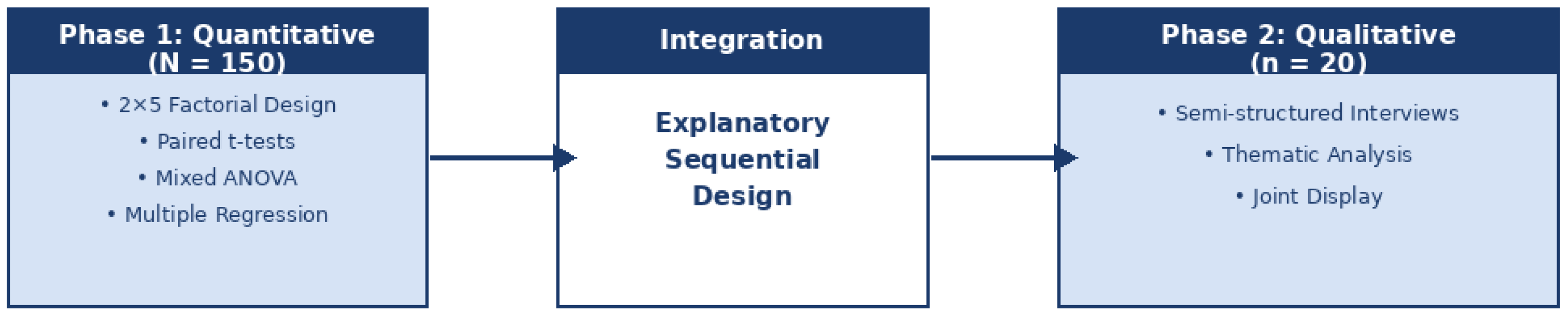

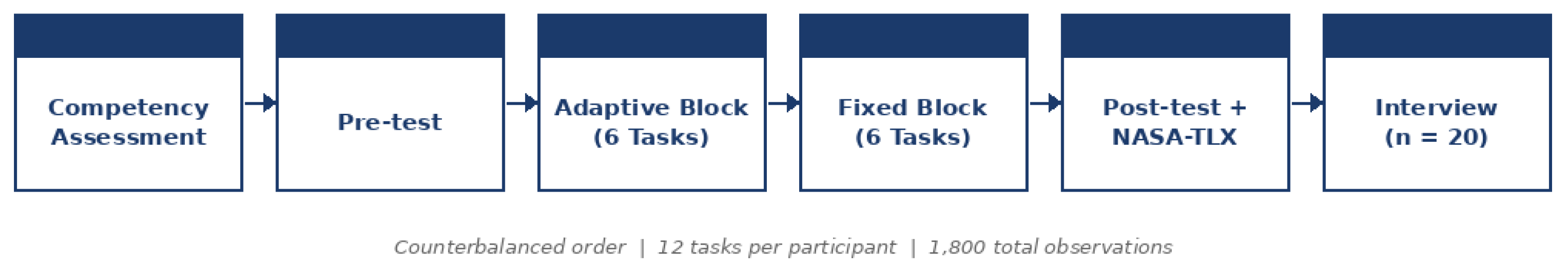

PITL operates in two modes whose recommendation scores are governed by a unified mathematical formulation. An adaptive switching mechanism driven by NASA-TLX [41] cognitive load feedback dynamically transitions between modes: when total cognitive load exceeds a defined threshold, the system shifts to similarity mode to reduce burden; when load falls below a lower threshold, it switches to complementary mode to promote learning. The principal aim of this study is to empirically evaluate PITL through a mixed-methods design with 150 participants, examining the differential effects of the two modes on learning gain, cognitive load, code quality, and task duration; the moderating role of Dreyfus competency level; and the superiority of the adaptive mechanism over fixed-mode assignment. Quantitative findings are corroborated through phenomenological interviews with 20 participants. The results demonstrate that complementary mode produces significantly higher learning gains, whereas similarity mode yields lower cognitive load and higher code quality, and that the adaptive mechanism outperforms both fixed-mode conditions, together providing the first empirically validated dual-mode competency-based AI persona recommendation system for blockchain education.
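The two-threshold switching rule described above behaves like a hysteresis controller: between the two thresholds the current mode is retained, which avoids rapid oscillation. The sketch below illustrates this logic only; the threshold values (60 and 40 on a 0–100 NASA-TLX scale), the starting mode, and the class name `ModeSwitcher` are hypothetical, since the actual parameters are not stated in this section.

```python
from dataclasses import dataclass


@dataclass
class ModeSwitcher:
    """Two-threshold (hysteresis) mode switch driven by a cognitive load score."""

    upper: float = 60.0  # ASSUMPTION: above this load, shift to similar mode
    lower: float = 40.0  # ASSUMPTION: below this load, shift to complementary mode
    mode: str = "complementary"  # ASSUMPTION: initial mode

    def update(self, tlx_score: float) -> str:
        """Update and return the active mode for the latest NASA-TLX total score."""
        if tlx_score > self.upper:
            self.mode = "similar"        # reduce burden
        elif tlx_score < self.lower:
            self.mode = "complementary"  # promote learning
        # Scores between the thresholds leave the mode unchanged.
        return self.mode
```

For example, a sequence of scores 75, 50, 30, 50 would yield similar, similar, complementary, complementary: the mid-range score of 50 never triggers a switch by itself.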

4. Results

4.1. Assumption Checks

Normality was assessed via Shapiro-Wilk tests on participant-level mode averages. Significant violations were detected for learning gain in similar mode (W = .965, p = .001), cognitive load in both modes (similar: W = .974, p = .006; complementary: W = .977, p = .012), and code quality in both modes (both p < .001). Consequently, all paired comparisons were validated with Wilcoxon signed-rank tests, which yielded results consistent with parametric tests (all p < .001). Levene’s tests confirmed homogeneity of variance across Dreyfus levels for all dependent variables: learning gain (F(4, 145) = 0.80, p = .530), cognitive load (F(4, 145) = 1.13, p = .343), code quality (F(4, 145) = 0.97, p = .426), and task duration (F(4, 145) = 0.24, p = .914). Sphericity was automatically satisfied as the within-subject factor (mode) had only two levels.

4.2. Mode Effect: Similar vs. Complementary (H1, H2)

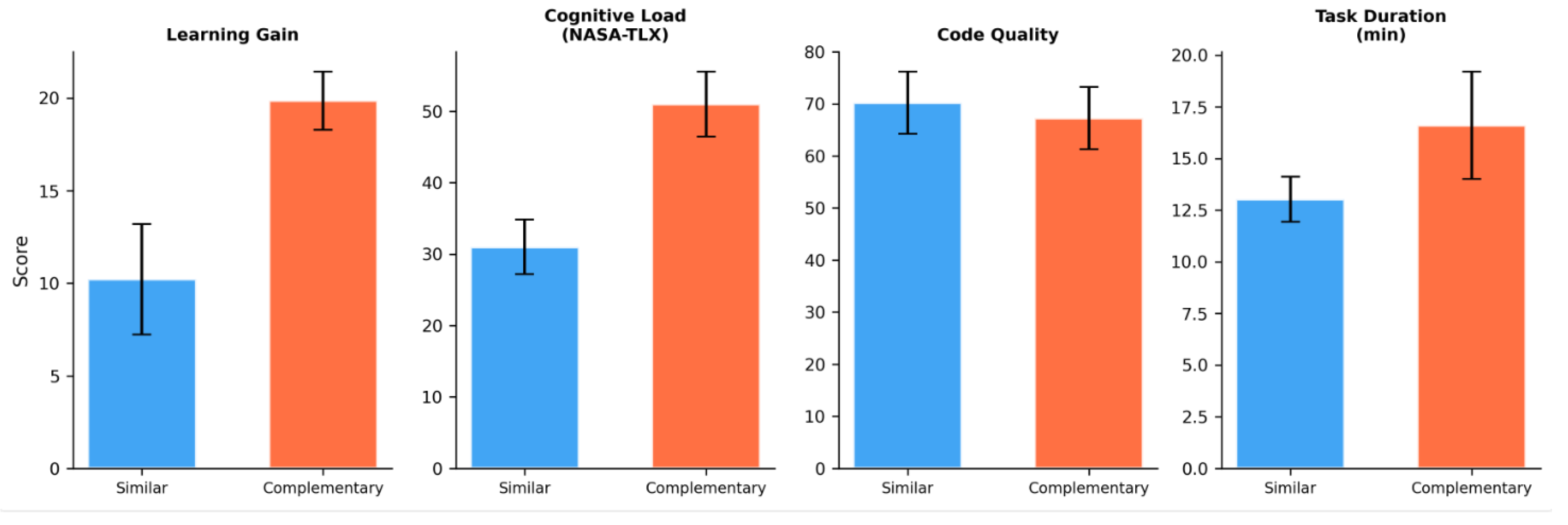

Paired-samples t-tests comparing participant-level mode averages across all four dependent variables are reported in Table 4 and visualized in Figure 5.

All four dependent variables showed statistically significant differences between modes. Complementary mode produced substantially higher learning gains (d = 5.13), while similar mode yielded lower cognitive load (d = 9.02) and higher code quality (d = −1.70). Task duration was longer in complementary mode (d = 1.71). Both H1 and H2 were supported.
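Because effect sizes of this magnitude depend heavily on how d is standardized in a paired design, a brief sketch may help readers interpret them. This section does not state which variant of Cohen's d was computed; the code below simply contrasts two common options, d_z (mean difference divided by the standard deviation of the differences) and d_av (mean difference divided by the average of the two condition standard deviations). The data are invented for illustration and are not study data.

```python
from statistics import mean, stdev


def cohens_dz(cond_a: list[float], cond_b: list[float]) -> float:
    """Paired d_z: mean of the differences over the SD of the differences."""
    diffs = [b - a for a, b in zip(cond_a, cond_b)]
    return mean(diffs) / stdev(diffs)


def cohens_dav(cond_a: list[float], cond_b: list[float]) -> float:
    """Paired d_av: mean difference over the average of the two condition SDs."""
    return (mean(cond_b) - mean(cond_a)) / ((stdev(cond_a) + stdev(cond_b)) / 2)


# HYPOTHETICAL scores for four participants in each mode (not study data).
similar = [10.0, 12.0, 11.0, 13.0]
complementary = [15.0, 18.0, 16.0, 19.0]

dz = cohens_dz(similar, complementary)
dav = cohens_dav(similar, complementary)
print(f"d_z = {dz:.2f}, d_av = {dav:.2f}")
```

When every participant shifts by a similar amount, the differences have little spread and d_z becomes much larger than d_av, even though the raw mean difference is identical. This is one reason very large paired effect sizes call for careful interpretation.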

4.3. Mode × Dreyfus Level Interaction (H3)

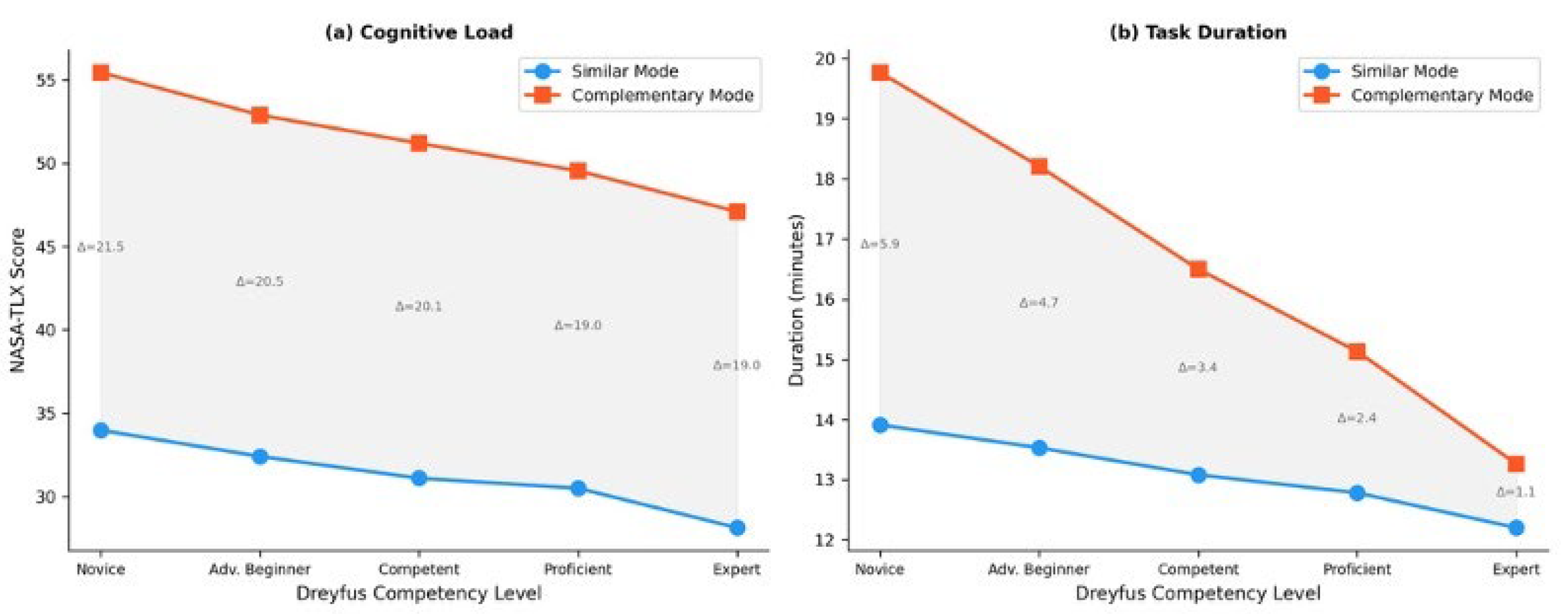

Two-way mixed ANOVAs (Mode [within] × Dreyfus level [between]) were conducted for each dependent variable. Results are summarized in Table 5.

The Mode × Dreyfus interaction was significant for cognitive load (F(4, 145) = 5.86, p < .001, η²p = .139) and task duration (F(4, 145) = 101.33, p < .001, η²p = .737), but not for learning gain (p = .255) or code quality (p = .440). H3 was therefore partially supported. Interaction profiles are displayed in Figure 6.

Simple effects analyses (Table 6) revealed that, for cognitive load, the mode difference was largest at the novice level (Δ = 21.46, d = 9.68) and smallest at the expert level (Δ = 18.97, d = 8.53). For task duration, the pattern was more pronounced: novices required 5.86 extra minutes in complementary mode (d = 5.38), whereas experts required only 1.06 extra minutes (d = 0.87). The non-significant interactions for learning gain and code quality indicate that mode effects on these outcomes are robust across all competency levels, a finding with important practical implications.

4.4. Adaptive Mode-Switching Mechanism (H4)

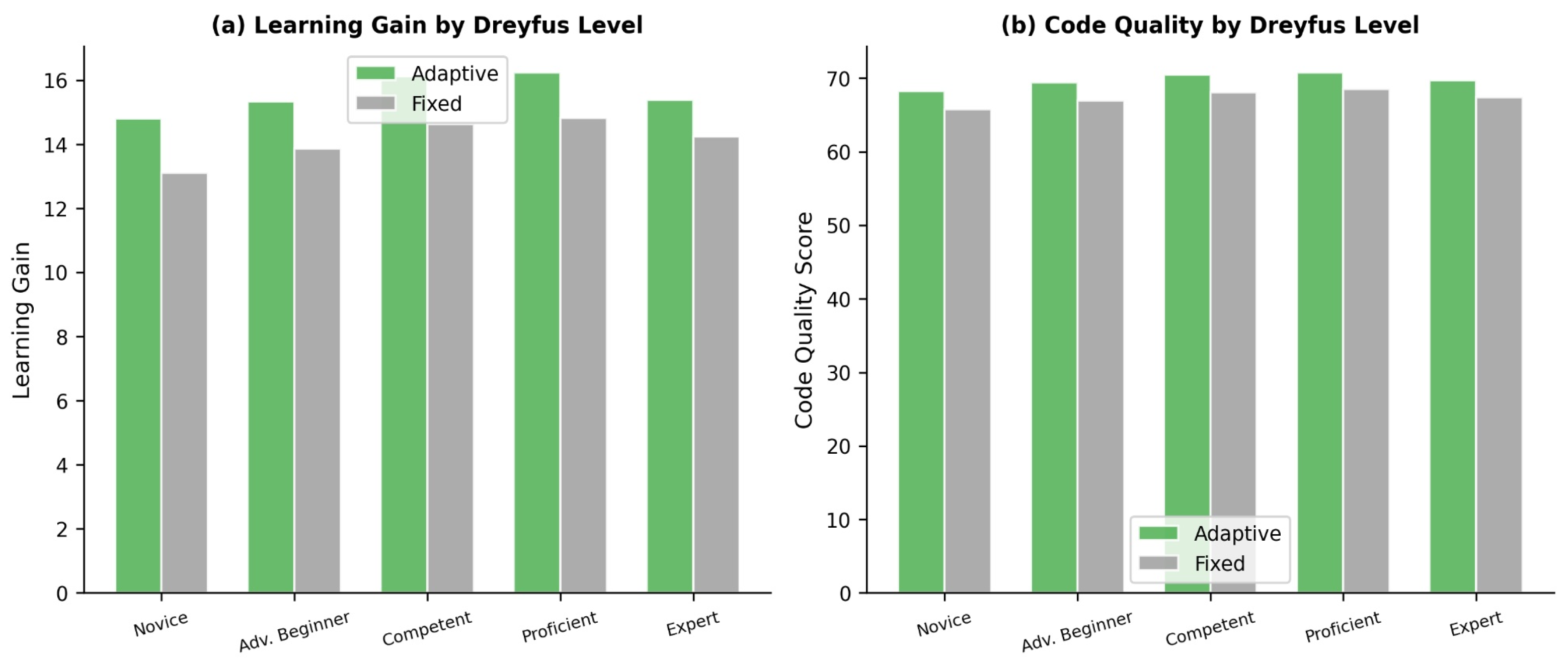

Each participant completed tasks in both an adaptive block (mode dynamically assigned) and a fixed block (mode held constant). Results are presented in Table 7 and Figure 7.

The adaptive block outperformed the fixed block for both learning gain (d = 1.23) and code quality (d = 1.37). At the participant level, 134 of 150 (89.3%) showed higher composite performance in the adaptive block; a binomial test confirmed this proportion was significantly above chance (p < .001). A one-way ANOVA on adaptive advantage scores revealed no significant differences across Dreyfus levels for learning gain (F(4, 145) = 1.33, p = .263) or code quality (F(4, 145) = 0.74, p = .564), indicating that the adaptive mechanism was consistently effective regardless of competency level. H4 was supported.
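The binomial test on the 134 of 150 participants can be checked with a short exact calculation. The sketch below assumes a one-sided test against a chance proportion of 0.5, since the sidedness is not stated in the text; the function name is illustrative.

```python
from math import comb


def binomial_upper_tail(successes: int, n: int, p: float = 0.5) -> float:
    """Exact P(X >= successes) for X ~ Binomial(n, p)."""
    return sum(
        comb(n, k) * p**k * (1 - p) ** (n - k)
        for k in range(successes, n + 1)
    )


# 134 of 150 participants performed better in the adaptive block.
p_value = binomial_upper_tail(134, 150)
print(f"proportion = {134 / 150:.3f}, one-sided p = {p_value:.3e}")
```

Doubling the one-sided value gives the two-sided p-value under a symmetric null of 0.5; either way the result falls far below .001, in line with the reported test.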

4.5. Regression Models

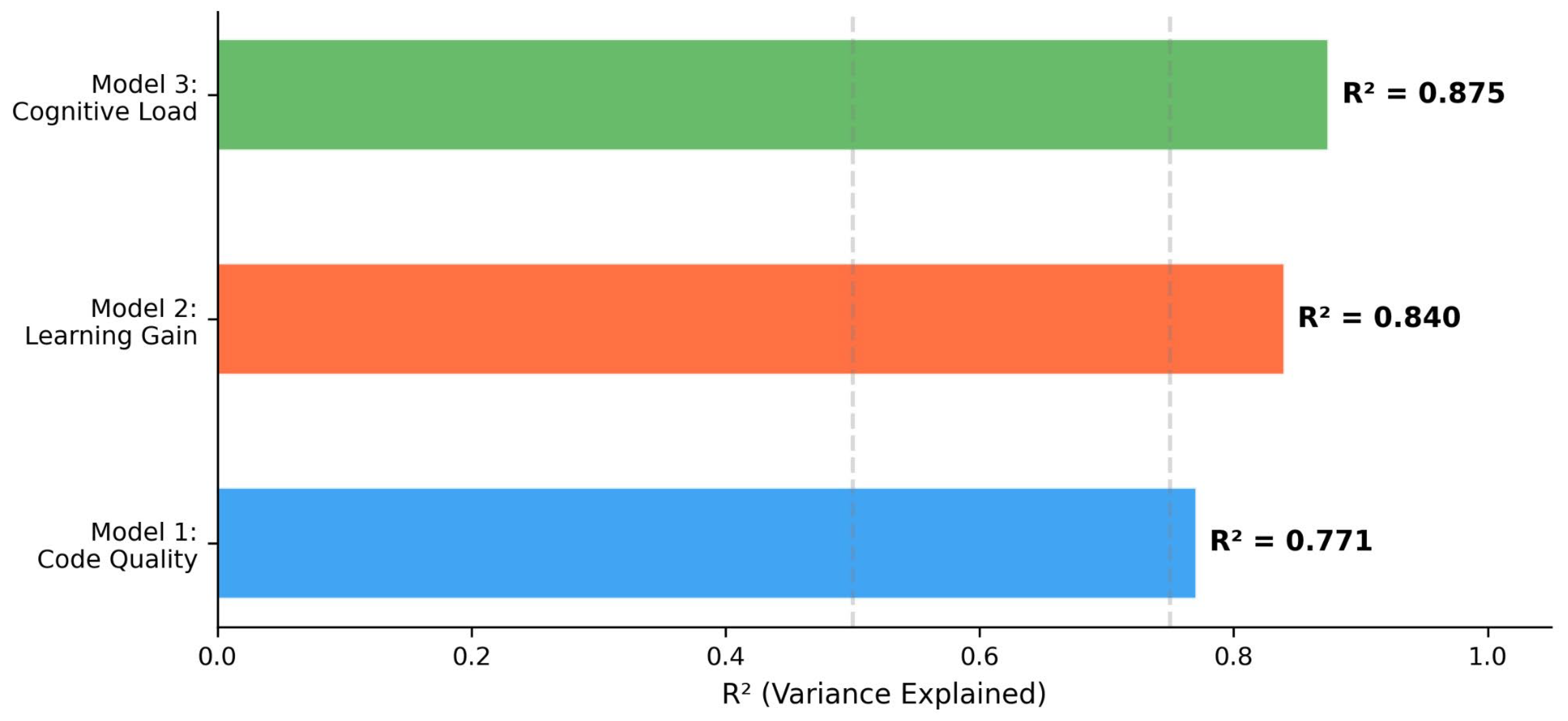

Three multiple linear regression models were tested on 1800 task-level observations. Results are summarized in Table 8 and Figure 8.

Model 1 explained 77.1% of variance in code quality; Dreyfus level was the strongest predictor (β = .86, p < .001), while mode had a significant negative effect (β = −.21, p < .001), reflecting lower code quality in complementary mode. Model 2 explained 84.0% of variance in learning gain; mode was the dominant predictor (β = .82, p < .001), with cognitive load non-significant (β = .02, p = .452). Model 3 explained 87.5% of variance in cognitive load; mode was dominant (β = .85, p < .001), and Dreyfus level had a significant negative coefficient (β = −.32, p < .001), confirming that cognitive load decreases with increasing competency.

4.6. Qualitative Findings

Semi-structured interviews with 20 participants (4 per Dreyfus level, balanced across domains) yielded four themes and twelve sub-themes via thematic analysis (κ = .84).
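For readers unfamiliar with the agreement statistic reported above, the following sketch shows how a kappa coefficient corrects raw agreement for chance. It assumes Cohen's two-rater kappa, which the text does not explicitly specify, and the coded segments are invented placeholders rather than interview data.

```python
from collections import Counter


def cohens_kappa(rater_a: list[str], rater_b: list[str]) -> float:
    """Cohen's kappa for two raters assigning one label per item."""
    n = len(rater_a)
    # Observed proportion of items on which both raters agree.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Agreement expected by chance from each rater's label frequencies.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / n**2
    return (observed - expected) / (1 - expected)


# HYPOTHETICAL theme codes assigned to four interview segments by two coders.
coder_1 = ["mode", "mode", "learning", "learning"]
coder_2 = ["mode", "mode", "learning", "mode"]
print(cohens_kappa(coder_1, coder_2))  # 0.5
```

In this toy example the coders agree on 75% of segments, but half of that agreement is expected by chance, giving κ = 0.5. A value of .84, as reported for the thematic analysis, therefore reflects agreement well beyond chance.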

Persona Experience: Participants overwhelmingly perceived persona interactions as realistic and contextually appropriate. A novice participant (P3) noted: “The persona started with basics rather than overwhelming me with complex code, which was reassuring.” An expert participant (P17) reported: “The persona responded at an appropriate depth, no superficial explanations wasting time.” Four participants noted occasional repetition or context loss.

Mode Perception: Thirteen of 20 participants found complementary mode more instructive. An advanced beginner (P5) stated: “The complementary persona filled gaps I did not know I had.” Conversely, seven participants preferred similar mode for efficiency. A proficient participant (P14) observed: “In similar mode, the persona spoke my language and we reached results quickly.”

Learning Process: Participants reported that persona-driven question-answer dynamics accelerated learning (P11: “Asking questions to the persona also revealed my own knowledge gaps—it was a bidirectional process”). Smart contract security and gas optimization were the most frequently cited challenging topics.

System Evaluation: Sixteen of 20 participants rated the platform’s usability positively. Regarding adaptive switching, 11 participants reported perceiving mode transitions in the persona’s communication style, while 9 did not notice distinct changes. The most frequently suggested improvements were: integrated code execution environment (n = 13), more visual content (n = 11), interaction history display (n = 10), and additional real-world scenarios (n = 8).

Table 9. Summary of qualitative themes and convergence with quantitative findings.


| Quantitative Finding | Qualitative Theme | Convergence |
| --- | --- | --- |
| Comp. mode: higher learning gain (d = 5.13) | 13/20 found Comp. more instructive | Confirmed |
| Sim. mode: lower cognitive load (d = 9.02) | Participants described Sim. as “smoother, more efficient” | Confirmed |
| Mode × Dreyfus interaction (CL, duration) | Novices reported more difficulty in Comp. mode | Confirmed |
| Sim. mode: higher code quality (d = 1.70) | Participants reported more productive work in Sim. mode | Confirmed |
| Adaptive > Fixed (d = 1.23, 1.37) | 11/20 noticed mode transitions; some reported relief | Partially confirmed |

5. Discussion

5.1. Mode Effects and Theoretical Implications

The most prominent finding of this study is the strong differential effect of recommendation modes on learning versus performance outcomes. Complementary mode produced substantially higher learning gains (d = 5.13), while similar mode yielded lower cognitive load (d = 9.02) and higher code quality (d = 1.70). This pattern is theoretically coherent: each mode operates according to the predictions of its foundational theory.

The complementary mode’s superiority in learning gain aligns directly with Vygotsky’s [29] ZPD theory. By presenting users with AI personas who possess strengths in the user’s weaker areas, the system functions as a More Knowledgeable Other, positioning the learner within their zone of proximal development. This finding is consistent with Ferguson, Van den Broek, and Van Oostendorp [57], who demonstrated that AI-guided adaptive systems can maintain learners within an optimal ZPD without imposing excessive cognitive load. Bjork and Bjork’s [58] “desirable difficulties” framework provides additional theoretical support: conditions that temporarily slow immediate performance can enhance long-term retention and transfer. The higher cognitive load and longer task durations observed in complementary mode are thus not merely costs but potentially indicators of deeper cognitive processing conducive to durable learning.

The similar mode’s advantage in code quality and cognitive load is equally well-grounded in CLT. When users interact with personas at comparable proficiency levels, the extraneous cognitive load associated with processing unfamiliar approaches is minimized [37,39]. Feng [59] similarly found that personalized AI feedback reduced extraneous cognitive load while preserving productive cognitive engagement in a study of 484 learners. Gkintoni et al. [60] confirmed through a systematic review of 103 studies that AI-assisted systems can dynamically monitor and manage the three types of cognitive load. However, the exceptionally large effect sizes (d = 5.13 for learning gain, d = 9.02 for cognitive load) warrant cautious interpretation. Regression analyses confirmed that mode was the strongest predictor of learning gain (β = .82) and cognitive load (β = .85), a dominance that may partly reflect the within-subjects design amplifying between-condition contrasts. The ecological validity of these effects in less controlled settings remains to be established.

5.2. Dreyfus Level Interactions

The partial support for H3 reveals an important theoretical distinction. The Mode × Dreyfus interaction was significant for cognitive load (η²p = .139) and task duration (η²p = .737) but not for learning gain or code quality. This pattern indicates that competency level moderates the process (how effortful and time-consuming mode transitions are) but not the outcome (what learners ultimately achieve).

For cognitive load, the interaction profile shows that novices experienced a larger mode-related increase (Δ = 21.46 points) compared to experts (Δ = 18.97). This is consistent with the expertise reversal effect [40]: novice learners lack the schema structures needed to efficiently process complementary information, resulting in higher extraneous cognitive load. For task duration, the interaction was dramatic (η²p = .737): novices required 5.86 additional minutes in complementary mode, while experts needed only 1.06 additional minutes. These findings mirror Christodoulou and Angeli’s [51] work on adaptive learning environments where novice learners benefited more from scaffolded support but also required more processing time. The non-significant interaction for learning gain and code quality carries an important practical implication: the mode-driven advantages (complementary for learning, similar for quality) are consistent across all five Dreyfus levels. This universality simplifies system deployment, as the same dual-mode architecture can serve the entire competency spectrum without level-specific algorithmic modifications.

5.3. Adaptive Mechanism Effectiveness

The NASA-TLX-based adaptive switching mechanism outperformed fixed-mode assignment for both learning gain (d = 1.23) and code quality (d = 1.37), with 89.3% of participants performing better in the adaptive block. Crucially, this advantage was consistent across all Dreyfus levels (no significant level × block interaction), suggesting that real-time cognitive load monitoring provides universal benefits.

The adaptive mechanism’s effectiveness can be attributed to its ability to dynamically balance the learning-performance trade-off. When a participant’s cognitive load exceeds the threshold, the switch to similar mode provides immediate relief; when cognitive load drops, the switch to complementary mode capitalizes on available cognitive capacity for learning. This dynamic calibration mirrors the theoretical prescription of CLT—optimizing the allocation of cognitive resources across intrinsic, extraneous, and germane processing.

5.4. Qualitative Insights and Convergence

The mixed-methods integration revealed strong convergence between quantitative and qualitative findings. The 13 of 20 participants who found complementary mode “more instructive” directly corroborate the significantly higher learning gains. The 7 who preferred similar mode for “efficiency” align with the lower cognitive load and higher code quality findings. Novice participants’ reports of greater difficulty in complementary mode echo the significant Mode × Dreyfus interaction for cognitive load. The qualitative data also revealed phenomena not captured by quantitative instruments. Several participants described a metacognitive awareness triggered by complementary mode—the realization of previously unrecognized knowledge gaps (P11: “Asking questions to the persona also revealed my own knowledge gaps”). This metacognitive dimension, while not directly measured, may partially explain the superior learning gains and warrants further investigation. The varying awareness of adaptive mode transitions (11 of 20 noticed them) suggests that the switching mechanism operates effectively regardless of whether users are consciously aware of the transition, a finding with important implications for transparent AI design.

5.5. Limitations

Several limitations should be acknowledged. First, this study employed a cross-sectional design; the long-term retention and transfer effects of dual-mode instruction were not assessed. Bjork and Bjork’s [58] desirable difficulties framework predicts that complementary mode’s initial difficulties should yield long-term benefits, but this remains to be empirically confirmed through longitudinal studies. Second, the study focused exclusively on Ethereum blockchain and Solidity smart contract development. Generalization to other programming domains, blockchain platforms (Solana, Cardano), or non-technical educational contexts requires replication. Third, participant competency levels were partially determined through self-assessment, which is susceptible to the Dunning-Kruger effect [61] and social desirability bias [62]. Although self-report data were triangulated with objective measures, some classification error is inevitable. Fourth, the sample was drawn from Turkish universities and EdTech companies, limiting cultural generalizability. Hofstede’s [63] cultural dimensions suggest that attitudes toward AI-assisted learning may vary across cultural contexts. Fifth, the exceptionally large effect sizes observed, while statistically robust, should be interpreted with caution. The controlled within-subject experimental design may have amplified between-condition differences that would be attenuated in naturalistic settings. Replication studies with larger, more diverse samples in real classroom environments are necessary. Finally, the AI personas are powered by commercial LLMs (GPT-4o, Claude-3.5 Sonnet, Gemini 3, Grok 4) whose behaviors may change with model updates. The stability and reproducibility of persona interactions over time represent an ongoing challenge for AI-based educational systems.

5.6. Practical Implications

The findings offer several implications for the design of intelligent tutoring systems. First, educational AI platforms should implement dual-mode architectures rather than single-mode approaches. The consistent finding that complementary mode optimizes learning while similar mode optimizes immediate performance suggests that both modes serve distinct and valuable pedagogical purposes. Second, NASA-TLX or comparable real-time cognitive load assessment should be integrated into adaptive learning systems to drive mode-switching decisions. The consistent superiority of the adaptive mechanism across all Dreyfus levels argues strongly for dynamic over static mode assignment. Third, the Dreyfus competency model provides a practical framework for structuring AI persona pools. The five-level classification, combined with dual-domain (technology/pedagogy) structuring, offers a tractable yet nuanced approach to persona design that can be extended to other educational domains. Fourth, the significant interaction for cognitive load and task duration, not for learning gain, implies that support mechanisms should be competency-sensitive for process optimization (providing novices additional time, scaffolding, and simpler interfaces) even when the same dual-mode algorithm can serve all levels for outcome optimization.

6. Conclusions

This study introduced and evaluated the Persona-In-The-Loop (PITL) system, a dual-mode adaptive AI persona recommendation framework for blockchain education. The system integrates five theoretical frameworks (the Dreyfus competency model, the Zone of Proximal Development, Cognitive Load Theory, the SECI knowledge creation model, and TPACK) into a coherent multi-theoretical architecture with 100 AI personas structured across two domains, ten sub-specialties, and five competency levels.

A mixed-methods evaluation with 150 participants confirmed that complementary mode significantly enhanced learning gains (d = 5.13), while similar mode reduced cognitive load (d = 9.02) and improved code quality (d = 1.70). The Mode × Dreyfus interaction was significant for process variables (cognitive load, task duration) but not for outcome variables (learning gain, code quality), indicating that mode effects on learning are robust across competency levels while the experiential costs vary. The NASA-TLX-based adaptive switching mechanism consistently outperformed fixed-mode assignment (d = 1.23 for learning gain, d = 1.37 for code quality), with 89.3% of participants benefiting. Qualitative findings from 20 interviews corroborated the quantitative results and revealed additional metacognitive benefits of complementary mode.

Future research should conduct longitudinal studies to assess the long-term retention and transfer effects of dual-mode instruction, replicate the study across different educational domains and cultural contexts, integrate neurophysiological cognitive load measures (EEG, eye-tracking), optimize the adaptive mechanism’s threshold parameters, and investigate multi-persona interaction scenarios. The PITL framework provides a theoretically grounded, empirically validated foundation for the next generation of competency-aware, adaptive AI-assisted educational systems.