Submitted:

14 February 2026

Posted:

27 February 2026


Abstract

Keywords:

1. Introduction

2. Theoretical Background

2.1. Competence-Oriented ESD and the Problem of Detecting Change

2.2. Place-Based Learning, Mobile AR Affordances, and Field Constraints

2.3. Why Gamification Can Be an Applied Design Layer for Competence-Oriented AR Initiatives

2.4. Why Four-Wave Repeated Cross-Sectional Evidence, and Why Robustness Is Required

- Third, composition sensitivity strategies, including weighting when harmonized covariates are available and resampling-based perturbations when they are not, help bound interpretation to patterns that remain stable under reasonable changes in sample composition and scoring choices [45].
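The weighting branch of the composition sensitivity strategy can be illustrated with a minimal post-stratification sketch. It assumes a single harmonized covariate (a hypothetical grade-band label per respondent) and a hypothetical set of reference shares; the function name, data, and shares are illustrative and are not taken from the study.

```python
from collections import Counter


def poststratified_mean(scores, strata, reference_shares):
    """Mean of `scores` reweighted so stratum shares match `reference_shares`.

    `strata` holds one label per respondent; every label must appear in
    `reference_shares` (a KeyError is raised otherwise).
    """
    if len(scores) != len(strata) or not scores:
        raise ValueError("scores and strata must be non-empty and equally long")
    counts = Counter(strata)
    n = len(scores)
    weighted_sum = 0.0
    weight_total = 0.0
    for score, label in zip(scores, strata):
        # Weight = target share of the stratum / observed share in this wave.
        weight = reference_shares[label] / (counts[label] / n)
        weighted_sum += weight * score
        weight_total += weight
    return weighted_sum / weight_total


# Hypothetical wave: two respondents in band "a", one in band "b".
wave_scores = [4.0, 4.0, 2.0]
wave_bands = ["a", "a", "b"]
target = {"a": 0.5, "b": 0.5}  # hypothetical reference composition
print(poststratified_mean(wave_scores, wave_bands, target))
```

Comparing the weighted and unweighted wave means gives a quick indication of how much of a between-wave difference could be attributable to composition alone.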

2.5. Bridges to the Present Study

3. Materials and Methods

3.1. Study Design and Reporting Scope

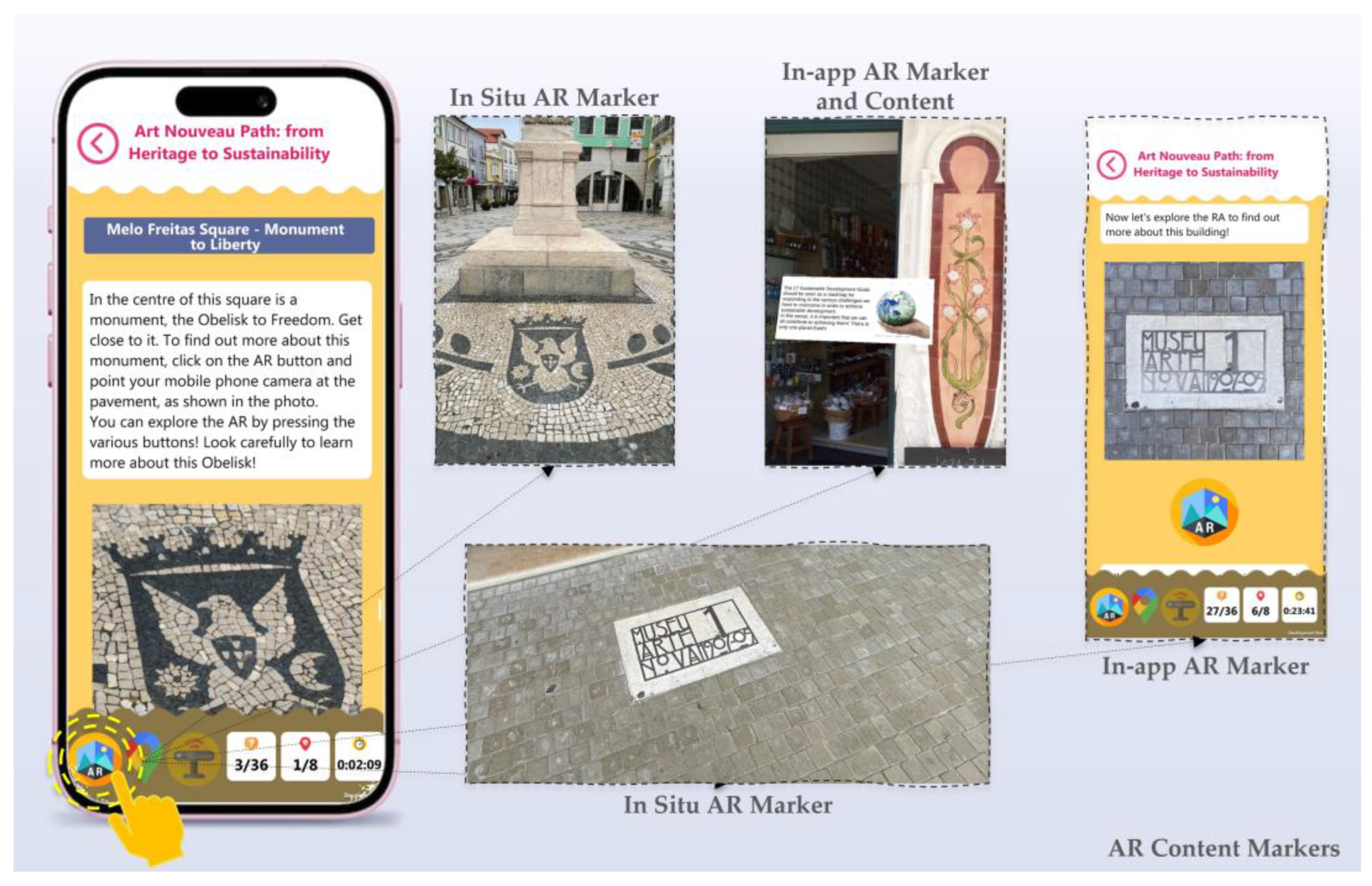

3.2. Educational Intervention and Context

3.3. Participants and Recruitment

3.4. Instruments and Measures

3.4.1. Students’ Questionnaire Series, Wave Nomenclature, and Instrument Foundations

3.4.2. Wave Instruments and Comparability Principle

3.4.3. Primary Outcome: GCQuest and the ESV Composite Score

3.4.4. S4-Specific Indicators for Mechanisms and Interpretability

3.5. Data Ingestion, Freezing, and Quality Control

3.6. Quantitative Analysis

3.6.1. Descriptive and Distribution-Aware Summaries

3.6.2. Cross-Wave Trend Inference (S1–S4)

3.6.3. Threshold Prevalence Indicators

3.6.4. S4-Only Exploratory Associations (Mechanisms)

3.7. Analysis Governance, Risk Controls, and Reproducibility

3.8. Ethics and Data Availability

4. Results

4.1. Data Completeness and Analytic Sample (ESV Block, Q1–Q25)

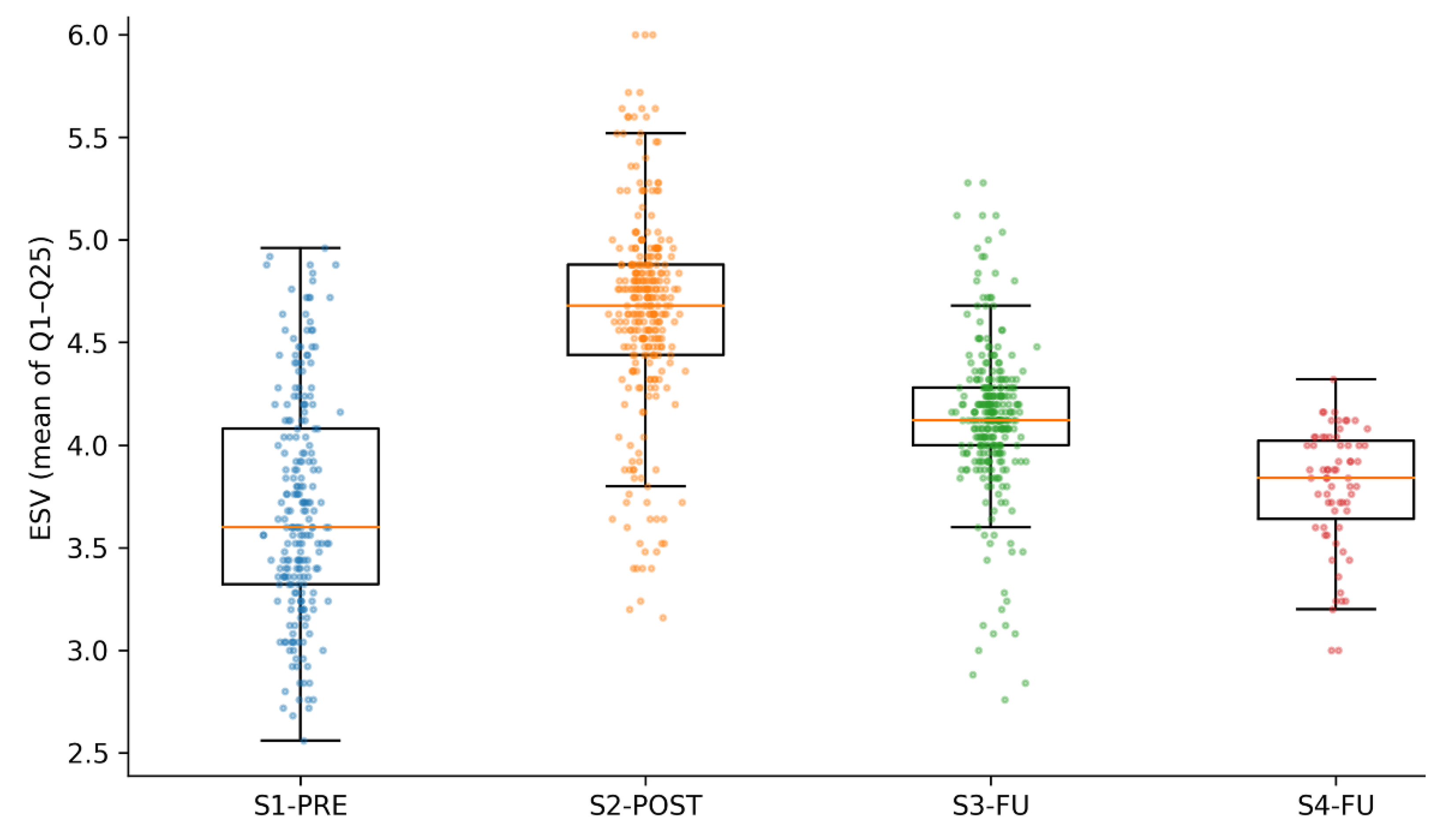

4.2. Does Adding S4-DFU Change the Global ESV Trend Across S1–S4?

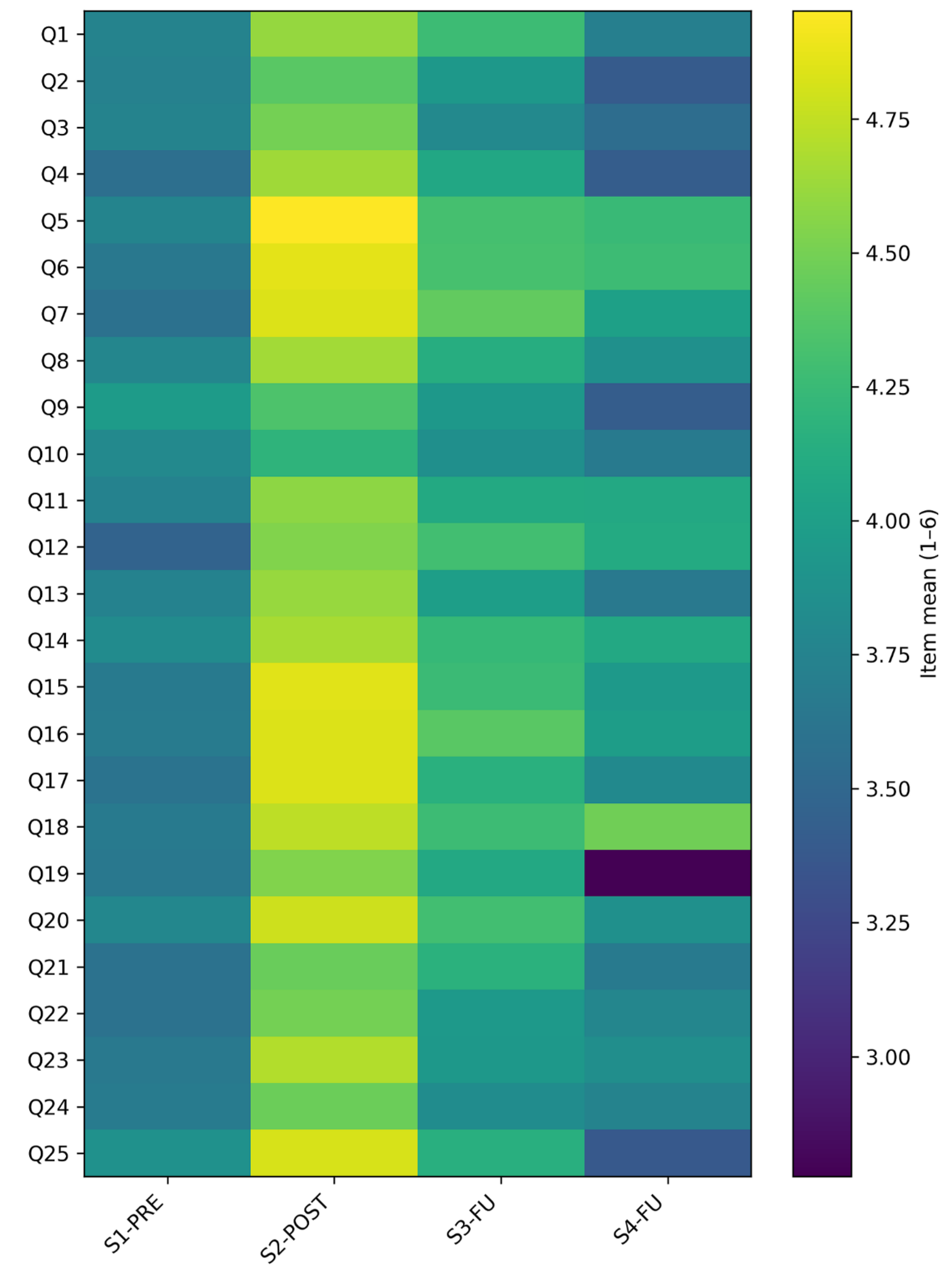

4.3. Is the Item-Level Pattern in S4 Consistent with S1–S3 and Which Items Are Most Temporally Sensitive?

4.4. Are Trend Inferences Robust to Plausible Analytic Choices?

4.5. Exploratory S4-DFU Contextual Indicators (Regarded as Not Primary Outcomes)

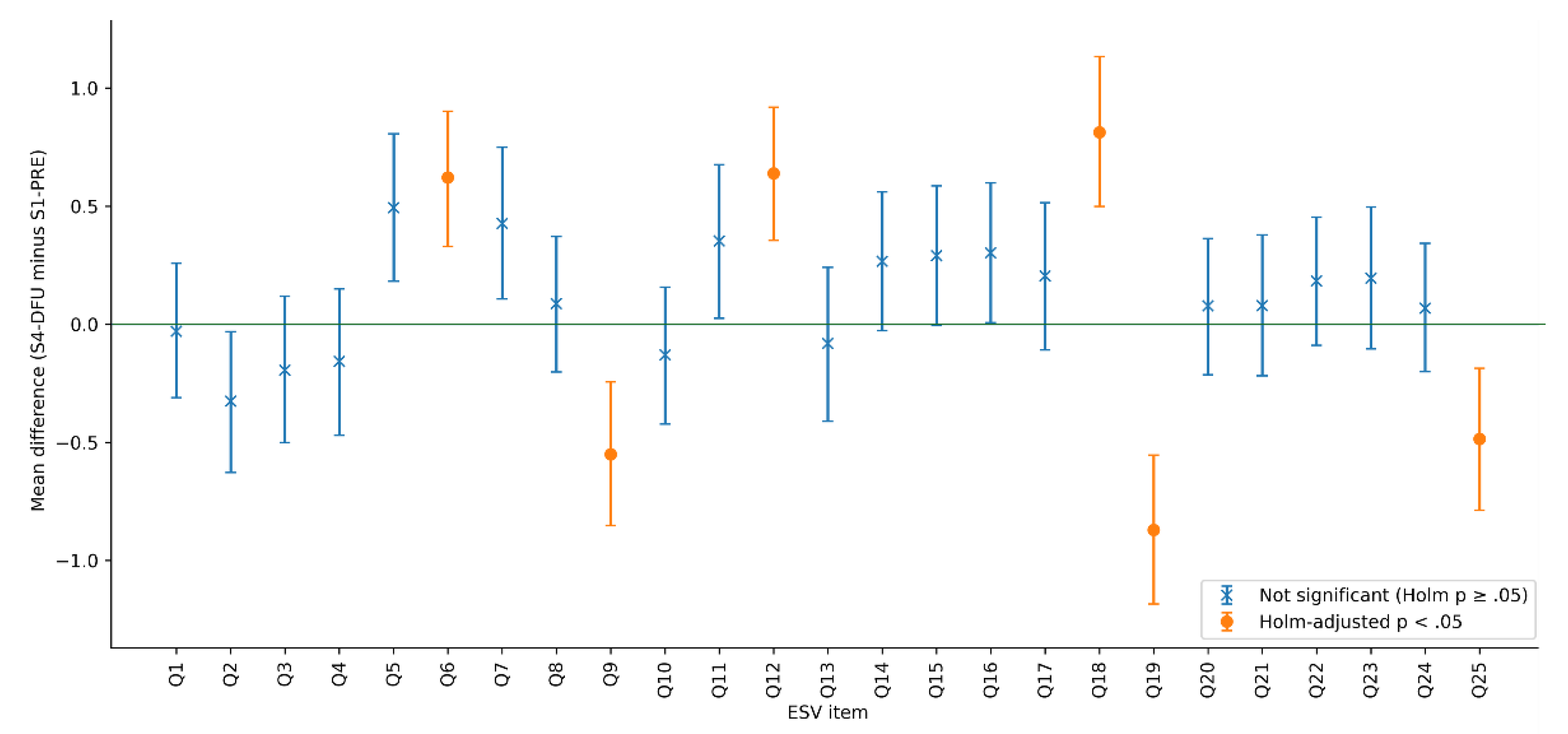

4.6. Baseline-to-Latest Contrast: S1-PRE vs S4-DFU (Complete-Case Q1–Q25)

4.6.1. Descriptives and 95% Confidence Intervals

4.6.2. Two-Sample Contrast (S1 vs S4), Effect Sizes, and 95% Intervals

4.6.3. Differences in High-Agreement Prevalence (S4 − S1) with 95% CI

4.6.4. Distributional Shift Across Quantiles (Shift Function)

5. Discussion

5.1. What the Four-Wave Evidence Adds to Competence-Oriented ESD Evaluation

5.2. Interpreting the S2-POST Peak Under Mobile AR and Gamification as a Socio-Technical System

5.3. Why Attenuation and Convergence Toward Baseline Are Not Anomalous in Competence-Oriented ESD Measurement

5.4. Item-Level Meaning: What Changes Appear Fragile at Distant Follow-Up

5.5. Implications for Applied XR Evidence Standards and for Interpreting Transfer-Relevant Results

- First, multiwave evidence strengthens claims when it is paired with construct-aligned outcomes and robustness checks, rather than relying on single deployments or short pre-post windows [7,18,20]. The inclusion of S4-DFU functions as a stress test: the S2-POST peak remains a robust feature of the evidence, but the longer-horizon pattern is clarified as attenuation and convergence toward baseline.

- Second, field constraints should be treated as first-order explanatory variables when interpreting outcome trajectories in outdoor mobile AR. Under the socio-technical framing established in Section 2.2, usability friction, technical disruption, attentional switching, and orchestration demands can systematically divert processing away from competence-relevant reasoning, thereby shaping when self-report signals peak and how they attenuate across cohorts [7,34,36,37]. In this perspective, the pronounced S2-POST elevation and later attenuation are compatible with a high-salience field encounter that is difficult to sustain without reinforcement cycles beyond the path, rather than with a monotonic competence trajectory.

- Third, S4-DFU contextual indicators suggest that XR/UX frictions were not negligible in the field setting: 20/67 participants (29.85%) reported map-navigation difficulty or did not use the map. These indicators are not treated as outcomes, but as deployment-relevant bounds that qualify transfer-oriented interpretations and inform refinement priorities for gamified XR systems deployed in authentic settings [20]. Such navigation and interface frictions are also a known moderating factor in immersive experiences more broadly, including VR, where interaction design choices can shape cognitive load, persistence, and the interpretability of learning signals [20].

- Fourth, transparency about the intervention as a socio-technical system remains central for transfer-relevant interpretation. The Art Nouveau Path has been documented as a competence-oriented design anchored in GreenComp [4], providing the design and construct-alignment basis for outcome interpretation [23,24,49,50]. Other related studies extend the adoption and resilience lenses through a city-scale transfer focus and a curriculum-aligned urban resilience framing.
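As a worked illustration of the 20/67 contextual indicator discussed above, the following sketch computes the share and a 95% interval. The Wilson score method is an assumption chosen for small samples; the text does not state which interval method, if any, was applied to this indicator.

```python
import math


def wilson_interval(successes: int, n: int, z: float = 1.959964) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z defaults to 95%)."""
    if n <= 0 or not 0 <= successes <= n:
        raise ValueError("need 0 <= successes <= n and n > 0")
    p = successes / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return centre - half_width, centre + half_width


share = 20 / 67  # counts taken from the text
low, high = wilson_interval(20, 67)
print(f"share = {share:.2%}, 95% Wilson interval = {low:.1%} to {high:.1%}")
```

With only 67 respondents the interval is wide, which supports reading the indicator as a deployment-relevant bound rather than a precise prevalence estimate.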

6. Conclusions, Limitations, and Future Paths

6.1. Conclusions

- First, the aggregate trajectory is non-monotonic. ESV shows a pronounced post-activity elevation (S2-POST), partial attenuation at follow-up (S3-FU), and convergence toward baseline levels in the latest wave (S4-DFU). The addition of S4-DFU therefore strengthens interpretive discipline by moving the narrative away from monotonic trend expectations and toward a time-sensitive pattern in which proximal signals are strongest;

- Second, change is not uniform within the ESV construct. Item-level analyses indicate that the recent decline from S3-FU to S4-DFU is concentrated in a subset of items, most notably the item targeting longer-term resource management through questioning personal needs (Q19). In the baseline-to-latest contrast (S1-PRE versus S4-DFU), reliable item-level differences show a mixed pattern, with increases in some values-consistent appraisal and cultural appreciation items alongside decreases in maintenance-sensitive self-regulation and critique items. This reinforces the value of reporting item-level concentration of effects when interpreting competence-oriented measures;

- Third, the core pattern is robust to plausible analytic choices. The main conclusions were preserved under alternative scoring (median-based ESV), equal-n sensitivity through repeated downsampling, and distribution-aware analyses (shift functions).
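The robustness checks named above (median-based scoring and equal-n repeated downsampling) can be sketched as follows. This is a minimal illustration on synthetic data: the wave sizes, response distributions, number of draws, and function names are all hypothetical and do not reproduce the study's pipeline.

```python
import random
import statistics


def composite(responses, scorer=statistics.mean):
    """Score one respondent's 25 item answers; None unless all 25 are present."""
    if len(responses) != 25 or any(r is None for r in responses):
        return None  # complete-case rule
    return scorer(responses)


def equal_n_wave_means(waves, n_draws=500, seed=0):
    """Downsample every wave to the smallest wave size, repeatedly, and
    return the average of the resampled wave means."""
    rng = random.Random(seed)
    target = min(len(scores) for scores in waves.values())
    result = {}
    for name, scores in waves.items():
        draws = [statistics.mean(rng.sample(scores, target)) for _ in range(n_draws)]
        result[name] = statistics.mean(draws)
    return result


def fake_wave(rng, n, centre):
    """Synthetic 6-point Likert answers (illustration only)."""
    return [[min(6, max(1, round(rng.gauss(centre, 1)))) for _ in range(25)]
            for _ in range(n)]


data_rng = random.Random(42)
raw = {
    "S1-PRE": fake_wave(data_rng, 200, 4.0),
    "S2-POST": fake_wave(data_rng, 180, 4.8),
    "S4-DFU": fake_wave(data_rng, 60, 4.1),
}
for scorer in (statistics.mean, statistics.median):
    scored = {
        wave: [s for s in (composite(row, scorer) for row in rows) if s is not None]
        for wave, rows in raw.items()
    }
    print(scorer.__name__, equal_n_wave_means(scored))
```

If the ordering of wave-level summaries is the same under both scorers and under equal-n resampling, the trend is unlikely to be an artifact of scoring choice or unequal wave sizes.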

6.2. Limitations

- First, the four-wave evidence is repeated cross-sectional rather than individually longitudinal, so observed differences can reflect cohort composition and contextual variation as well as intervention-consistent development. Even when measurement is held constant, the design cannot directly separate within-person change from between-cohort differences, and causal attribution remains constrained in the absence of random assignment or individual tracking [55,56];

- Second, sampling is necessarily opportunistic within school-based field conditions, and the distant follow-up wave is affected by reachability and participation constraints, increasing the plausibility of selection effects and limiting the ability to fully characterize nonresponse mechanisms [74];

- Third, outcomes rely on self-report Likert-type responses, which can be influenced by social desirability, shifting internal standards, and appraisal recalibration over time. These mechanisms are consistent with response shift and reference bias, which can distort between-group or between-wave comparisons even when instrument wording is unchanged [25,26];

- Fourth, the analytic sample size in the latest wave is comparatively small, so the study is less sensitive to small effects and estimates may be more sample-dependent than in earlier waves; this limitation is structural in long-horizon school follow-ups and should temper claims about subtle item-level changes [59];

- Fifth, item-wise inference entails multiple comparisons; although familywise control was applied, statistical significance should not be treated as a binary proxy for educational importance, and interpretation should remain anchored in effect magnitudes, uncertainty, and the plausibility of mechanisms [64];

- Sixth, field deployment of mobile AR introduces variability in device heterogeneity, outdoor lighting, tracking stability, and usability frictions, which can influence experience quality and potentially downstream reporting; these conditions are difficult to standardize across cohorts and academic years in authentic settings [7,34,37];

- Seventh, this study operationalizes sustainability competence through a single GreenComp [4] domain and self-assessed endorsement, so generalization to broader competence domains, behavioral enactment, or performance-based outcomes should be treated as out of scope;

- Eighth, additional S4-DFU blocks and open-ended prompts were collected to support transfer-oriented and planning-facing analyses, but they are not exhaustively analyzed in this article and are reserved for follow-on work.
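To make the familywise control mentioned in the fifth limitation concrete, the sketch below implements the Holm step-down adjustment. Holm is an assumption used for illustration, since the list above does not name the procedure that was applied; the item labels and p-values are hypothetical.

```python
def holm_adjust(p_values):
    """Return Holm step-down adjusted p-values, in the original order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_max = 0.0
    for rank, idx in enumerate(order):
        # The k-th smallest p-value is multiplied by (m - k + 1), capped at 1.
        candidate = min(1.0, (m - rank) * p_values[idx])
        # Enforce monotonicity so adjusted values never decrease with rank.
        running_max = max(running_max, candidate)
        adjusted[idx] = running_max
    return adjusted


# Hypothetical item-wise p-values (not results from the study).
item_p = {"item_a": 0.001, "item_b": 0.012, "item_c": 0.030, "item_d": 0.200}
adjusted = dict(zip(item_p, holm_adjust(list(item_p.values()))))
for item, p_adj in adjusted.items():
    print(item, round(p_adj, 3))
```

Adjusted p-values of this kind are best reported next to effect sizes and intervals, in line with the caution above against treating significance as a proxy for educational importance.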

6.3. Future Paths

- First, the observed pattern motivates designs that treat the path as a competence catalyst that benefits from planned reinforcement cycles. Lightweight booster routines aligned with the curriculum (brief consolidation prompts, short classroom revisits, or periodic micro-tasks connected to selected POIs) are plausible next steps to test whether maintenance-sensitive items can be stabilized across later cohorts;

- Second, formal tests of measurement invariance for the ordinal ESV block across waves would strengthen comparability claims and help separate outcome shifts from response-style shifts. Complementary indicators, such as short behavioral intention checks, trace-derived proxies, or teacher-reported corroboration, could also improve triangulation without increasing student burden;

- Third, within repeated cross-sectional constraints, future analyses can use trend-focused quasi-experimental approaches, such as cohort-aligned sampling, matched school comparisons, or interrupted time-series style logic if implementation timing and exposure intensity can be documented more precisely;

- Fourth, S4-DFU included mechanism- and transfer-oriented blocks beyond the invariant ESV core, while the present article intentionally treats these as contextual bounds rather than outcomes. A dedicated continuation paper can therefore exploit the unused S4-DFU extensions to examine transfer-facing indicators, including everyday noticing and planning-facing micro-interventions for public space, thereby extending the research program from competence trend monitoring to human-centered urban design and climate-adaptation cue legibility;

- Fifth, replication should be treated as a primary research path. EduCITY provides authoring tools that support the creation of AR-based, place-based learning experiences, and its web-based workflow enables location-based games across multiple urban settings. This makes it feasible to replicate the Art Nouveau Path logic in other Art Nouveau cities or to translate it to other heritage typologies, while testing how local morphology, POI density, and municipal routines moderate both usability envelopes and learning-relevant signals;

- Sixth, scalable deployment requires explicit governance, stewardship, and maintenance routines rather than ad hoc pilots. Future work should operationalize a municipal-ready replication package, including content rights and update cycles, safety and accessibility procedures, device and connectivity contingencies, and privacy-proportionate monitoring, so that cross-city evidence becomes comparable and cumulative.

6.4. Synthesis

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| AR | Augmented Reality |

| S1-PRE | Students’ Pre-activity Questionnaire |

| S2-POST | Students’ Post-activity Questionnaire |

| S3-FU | Students’ Follow-up Questionnaire |

| S4-DFU | Students’ Distant Follow-up Questionnaire |

| GCQuest | GreenComp-Based Questionnaire |

| ESV | ‘Embodying Sustainability Values’ |

| XR | Extended Reality |

| HCI | Human–Computer Interaction |

| UX | User Experience |

| ESD | Education for Sustainable Development |

| UNESCO | United Nations Educational, Scientific and Cultural Organization |

| GreenComp | European Sustainability Competence Framework |

| MARG | Mobile Augmented Reality Game |

| RQ | Research Question |

| Q | GCQuest item |

| MR | Mixed Reality |

| DTLE | Digital Teaching and Learning Environment |

| POI | Point of Interest |

| M | Mean |

| K | Knowledge |

| S | Skills |

| A | Attitudes |

| SD | Standard Deviation |

| CI | Confidence Interval |

| MDN | Median |

| IQR | Interquartile Range |

| SOP | Standard Operating Procedure |

| GDPR | General Data Protection Regulation |

| R | Range |

| MWU | Mann–Whitney U test |

| pp | Percentage Point |

Appendix A

Appendix A.1. S4-DFU (Distant Follow-Up) Questionnaire: Blocks, Response Types, and Coding Rules

| Item code | Indicator / construct (brief) | Response format | Notes (skip logic, coding) |
|---|---|---|---|

| A.0 | Grade band (composition metadata) | Categorical | Self-reported grade band at the time of S4-DFU. |

| A.1.1 | Everyday noticing / retention prompt (open recall) | Open-ended text | Short free-text statement; analyzed qualitatively. |

| A.1.2 | Sustainability-related behavior change since the path | Yes/No | Binary self-report indicator. |

| A.1.2.1 | Example of sustainability-related action adopted | Open-ended text | Conditional follow-up to A.1.2 (if “Yes”); qualitative. |

| A.1.3 | Additional transfer indicator (binary) | Yes/No | Binary self-report indicator (as administered on the S4-DFU form). |

| A.1.4 | Additional transfer indicator (binary) | Yes/No | Binary self-report indicator (as administered on the S4-DFU form). |

| A.1.5.1 | Perceived value of learning outdoors / in situ (binary) | Yes/No | Binary preference/valuation indicator. |

| A.1.5.2 | Justification for A.1.5.1 | Open-ended text | Conditional follow-up to A.1.5.1; qualitative. |

| A.1.6 | Personal definition of “sustainability” | Open-ended text | Short free-text definition; qualitative. |

| A.1.7 (1-6) | Global perceived influence of the path on daily life | 6-point Likert | Higher values indicate higher perceived influence. |

| A.2.1 | Heritage recall / engagement trigger (binary) | Yes/No | Binary indicator. |

| A.2.1.1 | Example of recalled heritage element | Open-ended text | Conditional follow-up to A.2.1; qualitative. |

| A.2.2 | Heritage-related engagement indicator (binary) | Yes/No | Binary indicator (as administered on the S4-DFU form). |

| A.2.3 | Heritage-related engagement indicator (binary) | Yes/No | Binary indicator (as administered on the S4-DFU form). |

| A.2.4 | Civic responsibility for local heritage (binary) | Yes/No | Binary indicator. |

| A.2.5 | City as a shared resource (binary) | Yes/No | Binary indicator. |

| A.2.6.1 | Perceived narrative coherence (binary) | Yes/No | Binary indicator. |

| A.2.6.2 | Explanation for A.2.6.1 | Open-ended text | Conditional follow-up to A.2.6.1; qualitative. |

| A.3.1 | Recalled usability / ease indicator (binary) | Yes/No | Binary indicator (as administered on the S4-DFU form). |

| A.3.2 | Recalled usability / ease indicator (binary) | Yes/No | Binary indicator (as administered on the S4-DFU form). |

| A.3.3 | Use of the in-app map feature | Yes/No/Did not use | Feature-use item; “did not use” enables explicit structural non-use. |

| A.3.4 | Recalled usability / ease indicator (binary) | Yes/No | Binary indicator (as administered on the S4-DFU form). |

| A.3.5 | Recalled usability / ease indicator (binary) | Yes/No | Binary indicator (as administered on the S4-DFU form). |

| A.3.6 | Recalled usability / ease indicator (binary) | Yes/No | Binary indicator (as administered on the S4-DFU form). |

| A.3.7 | Recalled usability / ease indicator (binary) | Yes/No | Binary indicator (as administered on the S4-DFU form). |

| A.3.8 | Recalled usability / ease indicator (binary) | Yes/No | Binary indicator (as administered on the S4-DFU form). |

| A.3.9 | Most salient facilitator or barrier (e.g., weather, glare, signal) | Open-ended text | Free-text; analyzed qualitatively; supports interpretation of A.3.1–A.3.8. |

| A.3.10 | Suggested improvement to the path/app | Open-ended text (optional) | Free-text; may be blank/NA; analyzed qualitatively. |

| A.4.1.1 (1-6) | Cue literacy: perceived salience of public-space domain 1 | 6-point Likert | Six parallel items (A.4.1.1–A.4.1.6) administered in the order presented on the form. |

| A.4.1.2 (1-6) | Cue literacy: perceived salience of public-space domain 2 | 6-point Likert | See note for A.4.1.1. |

| A.4.1.3 (1-6) | Cue literacy: perceived salience of public-space domain 3 | 6-point Likert | See note for A.4.1.1. |

| A.4.1.4 (1-6) | Cue literacy: perceived salience of public-space domain 4 | 6-point Likert | See note for A.4.1.1. |

| A.4.1.5 (1-6) | Cue literacy: perceived salience of public-space domain 5 | 6-point Likert | See note for A.4.1.1. |

| A.4.1.6 (1-6) | Cue literacy: perceived salience of public-space domain 6 | 6-point Likert | See note for A.4.1.1. |

| A.4.2 | Micro-intervention proposal for improving a local public space | Open-ended text | Free-text proposal; analyzed qualitatively. |

| A.4.3 | Everyday noticing indicator aligned with A.4 domains (binary) | Yes/No | Binary indicator. |

| A.4.4 | Everyday noticing indicator aligned with A.4 domains (binary) | Yes/No | Binary indicator. |

| A.4.5 | Everyday noticing indicator aligned with A.4 domains (binary) | Yes/No | Binary indicator. |

| A.4.6 | Everyday noticing indicator aligned with A.4 domains (binary) | Yes/No | Binary indicator. |

| A.4.7 | Everyday noticing indicator aligned with A.4 domains (binary) | Yes/No | Binary indicator. |

| A.4.5 | Sustainability values block aligned with GreenComp (ESV domain) | 6-point Likert (forced-choice) | Items Q1–Q25. |

| Q at GCQuest | Item core | KSA | GreenComp competence | GreenComp KSA ID |
|---|---|---|---|---|

| Q1 | … be prone to act in line with values and principles for sustainability. | A | 1.1 Valuing sustainability | 1.1.A1 |

| Q2 | … articulate and negotiate sustainability values, principles and objectives while recognising different viewpoints. | S | 1.1 Valuing sustainability | 1.1.S4 |

| Q3 | … identify processes or action that avoid or reduce the use of natural resources. | S | 1.3 Promoting nature | 1.3.S5 |

| Q4 | … know about the main parts of the natural environment (geosphere, biosphere, hydrosphere, cryosphere and atmosphere) and that living organisms and non-living components are closely linked and depend on each other. | K | 1.3 Promoting nature | 1.3.K1 |

| Q5 | … be open-minded to others and their world-views. | A | 1.1 Valuing sustainability | 1.1.A3 |

| Q6 | … bring personal choices and action in line with sustainability values and principles. | S | 1.1 Valuing sustainability | 1.1.S3 |

| Q7 | … acknowledge cultural diversity within planetary limits. | S | 1.3 Promoting nature | 1.3.S3 |

| Q8 | … apply equity and justice for current and future generations as criteria for environmental preservation and the use of natural resources. | S | 1.2 Supporting fairness | 1.2.S1 |

| Q9 | … know about environmental justice, namely considering the interests and capabilities of other species and environmental ecosystems. | K | 1.2 Supporting fairness | 1.2.K2 |

| Q10 | … know the main views on sustainability: anthropocentrism (human-centric), technocentrism (technological solutions to ecological problems) and ecocentrism (nature-centred), and how they influence assumptions and arguments. | K | 1.1 Valuing sustainability | 1.1.K1 |

| Q11 | … be critical towards the notion that humans are more important than other life forms. | A | 1.3 Promoting nature | 1.3.A2 |

| Q12 | … evaluate issues and action based on sustainability values and principles. | S | 1.1 Valuing sustainability | 1.1.S2 |

| Q13 | … be willing to share and clarify views on sustainability values. | A | 1.1 Valuing sustainability | 1.1.A2 |

| Q14 | … find opportunities to spend time in nature and helps to restore it. | S | 1.3 Promoting nature | 1.3.S4 |

| Q15 | … show empathy with all life forms. | A | 1.3 Promoting nature | 1.3.A3 |

| Q16 | … know that values and principles influence action that can damage, does not harm, restores or regenerates the environment. | K | 1.1 Valuing sustainability | 1.1.K3 |

| Q17 | … assess own impact on nature and consider the protection of nature an essential task for every individual. | S | 1.3 Promoting nature | 1.3.S1 |

| Q18 | … respect, understand and appreciate various cultures in relation to sustainability, including minority cultures, local and indigenous traditions and knowledge systems. | S | 1.2 Supporting fairness | 1.2.S3 |

| Q19 | … know that humans shape ecosystems and that human activities can rapidly and irreversibly damage ecosystems. | K | 1.3 Promoting nature | 1.3.K4 |

| Q20 | … continuously strive to restore nature. | A | 1.3 Promoting nature | 1.3.A5 |

| Q21 | … know that individuals and communities differ in how and how much they can promote sustainability. | K | 1.2 Supporting fairness | 1.2.K4 |

| Q22 | … help build consensus on sustainability in an inclusive manner. | S | 1.2 Supporting fairness | 1.2.S4 |

| Q23 | … identify and include values of communities, including minorities, in problem framing and decision making on sustainability. | S | 1.1 Valuing sustainability | 1.1.S5 |

| Q24 | … know that people are part of nature and that the divide between human and ecological systems is arbitrary. | K | 1.3 Promoting nature | 1.3.K3 |

| Q25 | … be ready to critique and value various cultural contexts depending on their impact on sustainability. | A | 1.1 Valuing sustainability | 1.1.A4 |

References

- UNESCO Education for Sustainable Development in Action; UNESCO, Ed.; Paris; UNESCO, 2012; ISBN 9789230010638. [Google Scholar]

- Cebrián, G.; Junyent, M.; Mulà, I. Current Practices and Future Pathways towards Competencies in Education for Sustainable Development. Sustain. 2021, 13, 8733. [Google Scholar] [CrossRef]

- UNESCO Education for Sustainable Development: A Roadmap; UNESCO, 2020; ISBN 978-92-3-100394-3.

- Bianchi, G.; Pisiotis, U.; Cabrera, M.; Punie, Y.; Bacigalupo, M. The European Sustainability Competence Framework; 2022; ISBN 9789276464853. [Google Scholar]

- Redman, A.; Wiek, A. Competencies for Advancing Transformations Towards Sustainability. Front. Educ. 2021, 6, 1–11. [Google Scholar] [CrossRef]

- Wiek, A.; Withycombe, L.; Redman, C. Key Competencies in Sustainability: A Reference Framework for Academic Program Development. Sustain. Sci. 2011, 6, 203–218. [Google Scholar] [CrossRef]

- Akçayır, M.; Akçayır, G. Advantages and Challenges Associated with Augmented Reality for Education: A Systematic Review of the Literature. Educ. Res. Rev. 2017, 20, 1–11. [Google Scholar] [CrossRef]

- Chang, H.-Y.; Binali, T.; Liang, J.-C.; Chiou, G.-L.; Cheng, K.-H.; Lee, S.W.-Y.; Tsai, C.-C. Ten Years of Augmented Reality in Education: A Meta-Analysis of (Quasi-) Experimental Studies to Investigate the Impact. Comput. Educ. 2022, 191, 104641. [Google Scholar] [CrossRef]

- Deterding, S.; Dixon, D.; Khaled, R.; Nacke, L. From Game Design Elements to Gamefulness. In Proceedings of the Proceedings of the 15th International Academic MindTrek Conference: Envisioning Future Media Environments, New York, NY, USA, September 28 2011; ACM; pp. 9–15. [Google Scholar]

- Ryan, R.M.; Deci, E.L. Self-Determination Theory and the Facilitation of Intrinsic Motivation, Social Development, and Well-Being. Am. Psychol. 2000, 55, 68–78. [Google Scholar] [CrossRef]

- Caffrey, L.; Browne, F. Understanding the Social Worker–Family Relationship through Self-determination Theory: A Realist Synthesis of Signs of Safety. Child Fam. Soc. Work 2022, 27, 513–525. [Google Scholar] [CrossRef]

- Bai, S.; Hew, K.F.; Huang, B. Does Gamification Improve Student Learning Outcome? Evidence from a Meta-Analysis and Synthesis of Qualitative Data in Educational Contexts. Educ. Res. Rev. 2020, 30, 100322. [Google Scholar] [CrossRef]

- Sailer, M.; Homner, L. The Gamification of Learning: A Meta-Analysis. Educ. Psychol. Rev. 2020, 32, 77–112. [Google Scholar] [CrossRef]

- Wang, K.; Tekler, Z.D.; Cheah, L.; Herremans, D.; Blessing, L. Evaluating the Effectiveness of an Augmented Reality Game Promoting Environmental Action. Sustainability 2021, 13, 13912. [Google Scholar] [CrossRef]

- Kleftodimos, A.; Moustaka, M.; Evagelou, A. Location-Based Augmented Reality for Cultural Heritage Education: Creating Educational, Gamified Location-Based AR Applications for the Prehistoric Lake Settlement of Dispilio. Digital 2023, 3, 18–45. [Google Scholar] [CrossRef]

- Li, M.; Ma, S.; Shi, Y. Examining the Effectiveness of Gamification as a Tool Promoting Teaching and Learning in Educational Settings: A Meta-Analysis. Front. Psychol. 2023, 14. [Google Scholar] [CrossRef]

- Zeng, J.; Sun, D.; Looi, C.; Fan, A.C.W. Exploring the Impact of Gamification on Students’ Academic Performance: A Comprehensive Meta-analysis of Studies from the Year 2008 to 2023. Br. J. Educ. Technol. 2024, 55, 2478–2502. [Google Scholar] [CrossRef]

- Koivisto, J.; Hamari, J. The Rise of Motivational Information Systems: A Review of Gamification Research. Int. J. Inf. Manage. 2019, 45, 191–210. [Google Scholar] [CrossRef]

- Lampropoulos, G.; Keramopoulos, E.; Diamantaras, K.; Evangelidis, G. Augmented Reality and Gamification in Education: A Systematic Literature Review of Research, Applications, and Empirical Studies. Appl. Sci. 2022, 12, 6809. [Google Scholar] [CrossRef]

- Lampropoulos, G.; Kinshuk. Virtual Reality and Gamification in Education: A Systematic Review. Educ. Technol. Res. Dev. 2024, 72, 1691–1785. [Google Scholar] [CrossRef]

- Morgan, S.L.; Lee, J. A Rolling Panel Model of Cohort, Period, and Aging Effects for the Analysis of the General Social Survey. Sociol. Methods Res. 2024, 53, 369–420. [Google Scholar] [CrossRef]

- Lebo, M.J.; Weber, C. An Effective Approach to the Repeated Cross-Sectional Design. Am. J. Pol. Sci. 2015, 59, 242–258. [Google Scholar] [CrossRef]

- Ferreira-Santos, J.; Pombo, L. The Art Nouveau Path: Promoting Sustainability Competences Through a Mobile Augmented Reality Game. Multimodal Technol. Interact. 2025, 9, 77. [Google Scholar] [CrossRef]

- Ferreira-Santos, J.; Pombo, L. The. Art Nouveau Path: Valuing Urban Heritage Through Mobile Augmented Reality and Sustainability Education 2025, 44. [Google Scholar] [CrossRef]

- Sprangers, M.A..; Schwartz, C.E. Integrating Response Shift into Health-Related Quality of Life Research: A Theoretical Model. Soc. Sci. Med. 1999, 48, 1507–1515. [Google Scholar] [CrossRef] [PubMed]

- Lira, B.; O’Brien, J.M.; Peña, P.A.; Galla, B.M.; D’Mello, S.; Yeager, D.S.; Defnet, A.; Kautz, T.; Munkacsy, K.; Duckworth, A.L. Large Studies Reveal How Reference Bias Limits Policy Applications of Self-Report Measures. Sci. Rep. 2022, 12, 19189. [Google Scholar] [CrossRef]

- Gruenewald, D.A. The Best of Both Worlds: A Critical Pedagogy of Place. Educ. Res. 2003, 32, 3–12. [Google Scholar] [CrossRef]

- Ho, S.-J.; Hsu, Y.-S.; Lai, C.-H.; Chen, F.-H.; Yang, M.-H. Applying Game-Based Experiential Learning to Comprehensive Sustainable Development-Based Education. Sustainability 2022, 14, 1172. [Google Scholar] [CrossRef]

- Kolb, D.A. Experiential Learning: Experience as the Source of Learning and Development, 2nd ed.; Pearson Education Inc.: Upper Saddle River, New Jersey, 2015; ISBN 9780133892505. [Google Scholar]

- Lave, J.; Wenger, E. Situated Learning: Legitimate Peripheral Participation; Cambridge University Press: Cambridge, 1991; ISBN 978-0521423748. [Google Scholar]

- Semken, S.; Freeman, C.B. Sense of Place in the Practice and Assessment of Place-based Science Teaching. Sci. Educ. 2008, 92, 1042–1057. [Google Scholar] [CrossRef]

- Sobel, D. Place-Based Education, Connecting Classrooms and Communities Closing the Achievement Gap: The SEER Report. NAMTA J. 2014, 39, 61–78. [Google Scholar]

- Ibañez-Etxeberria, A.; Gómez-Carrasco, C.J.; Fontal, O.; García-Ceballos, S. Virtual Environments and Augmented Reality Applied to Heritage Education. An Evaluative Study. Appl. Sci. 2020, 10. [Google Scholar] [CrossRef]

- Dunleavy, M.; Dede, C. Augmented Reality Teaching and Learning. In Handbook of Research on Educational Communications and Technology; Spector, J.M., Merrill, M.D., Elen, J., M. J., B., Eds.; Springer: New York, 2014; pp. 735–745. [Google Scholar]

- Wu, H.K.; Lee, S.W.Y.; Chang, H.Y.; Liang, J.C. Current Status, Opportunities and Challenges of Augmented Reality in Education. Comput. Educ. 2013, 62, 41–49. [Google Scholar] [CrossRef]

- Kamarainen, A.M.; Metcalf, S.; Grotzer, T.; Browne, A.; Mazzuca, D.; Tutwiler, M.S.; Dede, C. EcoMOBILE: Integrating Augmented Reality and Probeware with Environmental Education Field Trips. Comput. Educ. 2013, 68, 545–556. [Google Scholar] [CrossRef]

- Radu, I. Augmented Reality in Education: A Meta-Review and Cross-Media Analysis. Pers Ubiquit Comput 2014, 18, 1533–1543. [Google Scholar] [CrossRef]

- Garzón, J.; Acevedo, J. Meta-Analysis of the Impact of Augmented Reality on Students ’ Learning Gains. Educ. Res. Rev. 2019, 27, 244–260. [Google Scholar] [CrossRef]

- Mayer, R. Multimedia Learning; Cambridge University Press, 2020; ISBN 9781316941355. [Google Scholar]

- Sweller, J. Cognitive Load During Problem Solving: Effects on Learning. Cogn. Sci. 1988, 12, 257–285. [Google Scholar] [CrossRef]

- Sweller, J.; Ayres, P.; Kalyuga, S. Cognitive Load Theory; Springer, 2011. [Google Scholar]

- Strada, F.; Lopez, M.X.; Fabricatore, C.; Diniz dos Santos, A.; Gyaurov, D.; Battegazzorre, E.; Bottino, A. Leveraging a Collaborative Augmented Reality Serious Game to Promote Sustainability Awareness, Commitment and Adaptive Problem-Management. Int. J. Hum. Comput. Stud. 2023, 172, 102984. [Google Scholar] [CrossRef]

- Wilhelm, S.; Förster, R.; Zimmermann, A.B. Implementing Competence Orientation: Towards Constructively Aligned Education for Sustainable Development in University-Level Teaching-And-Learning. Sustainability 2019, 11, 1891. [Google Scholar] [CrossRef]

- Landers, R.N. Developing a Theory of Gamified Learning. Simul. Gaming 2014, 45, 752–768. [Google Scholar] [CrossRef]

- Ye, K.; Bilinski, A.; Lee, Y. Difference-in-Differences Analysis with Repeated Cross-Sectional Survey Data. Heal. Serv. Outcomes Res. Methodol. 2025. [Google Scholar] [CrossRef]

- Ho, A.D. A Nonparametric Framework for Comparing Trends and Gaps Across Tests. J. Educ. Behav. Stat. 2009, 34, 201–228. [Google Scholar] [CrossRef]

- Putnick, D.L.; Bornstein, M.H. Measurement Invariance Conventions and Reporting: The State of the Art and Future Directions for Psychological Research. Dev. Rev. 2016, 41, 71–90. [Google Scholar] [CrossRef]

- Vandenberg, R.J.; Lance, C.E. A Review and Synthesis of the Measurement Invariance Literature: Suggestions, Practices, and Recommendations for Organizational Research. Organ. Res. Methods 2000, 3, 4–70. [Google Scholar] [CrossRef]

- Ferreira-Santos, J.; Pombo, L. The Art Nouveau Path: From Gameplay Logs to Learning Analytics in a Mobile Augmented Reality Game for Sustainability Education. Information 2026, 17(1). [Google Scholar] [CrossRef]

- Ferreira-Santos, J.; Pombo, L. The Art Nouveau Path: Longitudinal Analysis of Students’ Perceptions of Sustainability Competence Development Through a Mobile Augmented Reality Game. Computers 2026, 15, 86. [Google Scholar] [CrossRef]

- “S1-PRE Questionnaire”. Available online: https://zenodo.org/records/16540741 (accessed on 12 February 2026).

- “S2-POST Questionnaire”. Available online: https://zenodo.org/records/17738943 (accessed on 12 February 2026).

- “S3-FU Questionnaire”. Available online: https://zenodo.org/records/17739015 (accessed on 12 February 2026).

- “S4-DFU Questionnaire”. Available online. (accessed on 12 February 2026). [CrossRef]

- Brady, H.E.; Johnston, R. Repeated Cross-Sections in Survey Data. In Emerging Trends in the Social and Behavioral Sciences; Wiley, 2015; pp. 1–18. [Google Scholar]

- Deaton, A. Panel Data from Time Series of Cross-Sections. J. Econom. 1985, 30, 109–126. [Google Scholar] [CrossRef]

- Pelzer, B.; Eisinga, R.; Franses, P.H. “Panelizing” Repeated Cross Sections. Qual. Quant. 2005, 39, 155–174. [Google Scholar] [CrossRef]

- Ferreira-Santos, J.; Marques, M.M.; Pombo, L. GreenComp-Based Questionnaire (GCQuest): Questionnaire Development and Validation. Unpublished work, 2024. [Google Scholar]

- Button, K.S.; Ioannidis, J.P.A.; Mokrysz, C.; Nosek, B.A.; Flint, J.; Robinson, E.S.J.; Munafò, M.R. Power Failure: Why Small Sample Size Undermines the Reliability of Neuroscience. Nat. Rev. Neurosci. 2013, 14, 365–376. [Google Scholar] [CrossRef]

- Nosek, B.A.; Alter, G.; Banks, G.C.; Borsboom, D.; Bowman, S.D.; Breckler, S.J.; Buck, S.; Chambers, C.D.; Chin, G.; Christensen, G.; et al. Promoting an Open Research Culture. Science 2015, 348, 1422–1425. [Google Scholar] [CrossRef]

- Nosek, B.A.; Ebersole, C.R.; DeHaven, A.C.; Mellor, D.T. The Preregistration Revolution. Proc. Natl. Acad. Sci. 2018, 115, 2600–2606. [Google Scholar] [CrossRef] [PubMed]

- Simmons, J.P.; Nelson, L.D.; Simonsohn, U. False-Positive Psychology. Psychol. Sci. 2011, 22, 1359–1366. [Google Scholar] [CrossRef] [PubMed]

- Municipal Educational Action Program of Aveiro 2024–2025 (PAEMA). Available online: https://tinyurl.com/PAEMAveiro (accessed on 12 February 2026).

- Wasserstein, R.L.; Lazar, N.A. The ASA Statement on p-Values: Context, Process, and Purpose. Am. Stat. 2016, 70, 129–133. [Google Scholar] [CrossRef]

- Upsher, R.; Dommett, E.; Carlisle, S.; Conner, S.; Codina, G.; Nobili, A.; Byrom, N.C. Improving Reporting Standards in Quantitative Educational Intervention Research: Introducing the CLOSER and CIDER Checklists. J. New Approaches Educ. Res. 2025, 14, 2. [Google Scholar] [CrossRef]

- Garland, R. The Mid-Point on a Rating Scale: Is It Desirable? Mark. Bull. 1991, 2, 66–70. [Google Scholar]

- Beglar, D.; Nemoto, T. Developing Likert-Scale Questionnaires. In JALT2013 Conference Proceedings, 2014; pp. 1–8. [Google Scholar]

- South, L.; Saffo, D.; Vitek, O.; Dunne, C.; Borkin, M.A. Effective Use of Likert Scales in Visualization Evaluations: A Systematic Review. Comput. Graph. Forum 2022, 41, 43–55. [Google Scholar] [CrossRef]

- Regulation (European Union) 2016/679 of the European Parliament and of the Council of 27 April 2016 on the protection of natural persons with regard to the processing of personal data and on the free movement of such data (General Data Protection Regulation). Official Journal of the European Union L119, 1–88. Available online: https://zenodo.org/records/16540741 (accessed on 12 February 2026).

- Boone, H.; Boone, D. Analyzing Likert Data. J. Ext. 2012, 50. [Google Scholar] [CrossRef]

- Jamieson, S. Likert Scales: How to (Ab)Use Them. Med. Educ. 2004, 38, 1217–1218. [Google Scholar] [CrossRef]

- Norman, G. Likert Scales, Levels of Measurement and the “Laws” of Statistics. Adv. Health Sci. Educ. 2010, 15, 625–632. [Google Scholar] [CrossRef]

- Little, R.J.A.; Rubin, D.B. Statistical Analysis with Missing Data, 3rd ed.; Wiley, 2019; ISBN 978-0-470-52679-8. [Google Scholar]

- Peng, R.D. Reproducible Research in Computational Science. Science 2011, 334, 1226–1227. [Google Scholar] [CrossRef]

- Wilkinson, M.D.; Dumontier, M.; Aalbersberg, Ij.J.; Appleton, G.; Axton, M.; Baak, A.; Blomberg, N.; Boiten, J.-W.; da Silva Santos, L.B.; Bourne, P.E.; et al. The FAIR Guiding Principles for Scientific Data Management and Stewardship. Sci. Data 2016, 3, 160018. [Google Scholar] [CrossRef]

- Van den Broeck, J.; Argeseanu Cunningham, S.; Eeckels, R.; Herbst, K. Data Cleaning: Detecting, Diagnosing, and Editing Data Abnormalities. PLoS Med. 2005, 2, e267. [Google Scholar] [CrossRef]

- Taber, K.S. The Use of Cronbach’s Alpha When Developing and Reporting Research Instruments in Science Education. Res. Sci. Educ. 2018, 48, 1273–1296. [Google Scholar] [CrossRef]

- Zinbarg, R.E.; Revelle, W.; Yovel, I.; Li, W. Cronbach’s α, Revelle’s β, and McDonald’s ωH: Their Relations with Each Other and Two Alternative Conceptualizations of Reliability. Psychometrika 2005, 70, 123–133. [Google Scholar] [CrossRef]

- Dunn, T.J.; Baguley, T.; Brunsden, V. From Alpha to Omega: A Practical Solution to the Pervasive Problem of Internal Consistency Estimation. Br. J. Psychol. 2014, 105, 399–412. [Google Scholar] [CrossRef]

- Dunn, O.J. Multiple Comparisons Using Rank Sums. Technometrics 1964, 6, 241–252. [Google Scholar] [CrossRef]

- Holm, S. A Simple Sequentially Rejective Multiple Test Procedure. Scand. J. Stat. 1979, 6, 65–70. [Google Scholar] [CrossRef]

- Kruskal, W.H.; Wallis, W.A. Use of Ranks in One-Criterion Variance Analysis. J. Am. Stat. Assoc. 1952, 47, 583–621. [Google Scholar] [CrossRef]

- Kerby, D.S. The Simple Difference Formula: An Approach to Teaching Nonparametric Correlation. Compr. Psychol. 2014, 3(11), IT.3.1. [Google Scholar] [CrossRef]

- Cureton, E.E. Rank-Biserial Correlation. Psychometrika 1956, 21, 287–290. [Google Scholar] [CrossRef]

- Delacre, M.; Leys, C.; Mora, Y.L.; Lakens, D. Taking Parametric Assumptions Seriously: Arguments for the Use of Welch’s F-Test Instead of the Classical F-Test in One-Way ANOVA. Int. Rev. Soc. Psychol. 2019, 32, 13. [Google Scholar] [CrossRef]

- Welch, B.L. On the Comparison of Several Mean Values: An Alternative Approach. Biometrika 1951, 38, 330–336. [Google Scholar] [CrossRef]

- Demssie, Y.N.; Biemans, H.J.A.; Wesselink, R.; Mulder, M. Fostering Students’ Systems Thinking Competence for Sustainability by Using Multiple Real-World Learning Approaches. Environ. Educ. Res. 2023, 29, 261–286. [Google Scholar] [CrossRef]

- Olsson, D.; Gericke, N.; Boeve-de Pauw, J. The Effectiveness of Education for Sustainable Development Revisited – a Longitudinal Study on Secondary Students’ Action Competence for Sustainability. Environ. Educ. Res. 2022, 28, 405–429. [Google Scholar] [CrossRef]

| Wave | Timing (relative) | Questionnaire form (version) | Raw N | Analytic n (complete case Q1–Q25) | Shared comparable block used for S1–S4 trend |
|---|---|---|---|---|---|
| S1-PRE | Pre-intervention baseline | GCQuest-S1PRE | 221 | 221 | GCQuest ESV (Q1–Q25; 1–6) |
| S2-POST | Immediate post-intervention | GCQuest-S2POST | 439 | 438 | GCQuest ESV (Q1–Q25; 1–6) |
| S3-FU | Follow-up | GCQuest-S3FU | 434 | 434 | GCQuest ESV (Q1–Q25; 1–6) |
| S4-DFU | Distant follow-up | GCQuest-S4DFU | 69 | 67 | GCQuest ESV (Q1–Q25; 1–6) |

| Wave | N total | N analytic | ESV M (95% CI) | SD | MDN [Q1, Q3] | % ESV ≥ 4.00 (95% CI) | % ESV ≥ 4.50 (95% CI) |
|---|---|---|---|---|---|---|---|
| S1-PRE | 221 | 221 | 3.70 (3.63, 3.77) | 0.54 | 3.60 [3.32, 4.08] | 29.00% (23.40%, 35.30%) | 9.00% (5.90%, 13.60%) |
| S2-POST | 439 | 438 | 4.64 (4.59, 4.68) | 0.50 | 4.68 [4.44, 4.88] | 88.60% (85.30%, 91.20%) | 70.80% (66.40%, 74.80%) |
| S3-FU | 434 | 434 | 4.13 (4.09, 4.16) | 0.36 | 4.12 [4.00, 4.28] | 75.10% (70.80%, 79.00%) | 9.90% (7.40%, 13.10%) |
| S4-DFU | 69 | 67 | 3.79 (3.72, 3.86) | 0.30 | 3.84 [3.64, 4.02] | 34.30% (24.10%, 46.30%) | 0.00% (0.00%, 5.40%) |
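The threshold prevalence intervals in the table above can be checked with a short calculation. The following is a minimal sketch, not the authors' code. It assumes the intervals are Wilson score intervals, which this section does not state, and it uses counts back-calculated from the reported percentages and analytic sample sizes (64/221, 23/67, and 0/67). The function name `wilson_interval` is hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def wilson_interval(successes: int, n: int, level: float = 0.95) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (illustrative helper)."""
    if n <= 0:
        raise ValueError("n must be positive")
    if not 0 <= successes <= n:
        raise ValueError("successes must lie between 0 and n")
    # Two-sided normal quantile, about 1.96 for a 95% interval.
    z = NormalDist().inv_cdf(0.5 + level / 2.0)
    p = successes / n
    denom = 1.0 + z * z / n
    centre = (p + z * z / (2.0 * n)) / denom
    half = z * sqrt(p * (1.0 - p) / n + z * z / (4.0 * n * n)) / denom
    # Clamp to [0, 1] to absorb floating-point noise at the boundaries.
    return max(0.0, centre - half), min(1.0, centre + half)


# Assumption: counts are back-calculated from the reported percentages.
examples = {
    "S1-PRE, ESV >= 4.00": (64, 221),
    "S4-DFU, ESV >= 4.00": (23, 67),
    "S4-DFU, ESV >= 4.50": (0, 67),
}

for label, (k, n) in examples.items():
    lo, hi = wilson_interval(k, n)
    print(f"{label}: {100 * k / n:.1f}% ({100 * lo:.1f}%, {100 * hi:.1f}%)")
```

Under these assumed counts, the bounds round to the tabulated values for all three cells, including the non-zero upper bound for the 0/67 case. A simple Wald interval would collapse to zero width there.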

| Comparison | Z | pHolm | Cliff’s delta |
|---|---|---|---|
| S1-PRE vs S2-POST | -19.44 | < 1e-82 | -0.783 |
| S1-PRE vs S3-FU | -7.86 | < 1e-13 | -0.490 |
| S1-PRE vs S4-DFU | -2.11 | 0.0345 | -0.171 |
| S2-POST vs S3-FU | 14.10 | < 1e-43 | 0.641 |
| S2-POST vs S4-DFU | 12.47 | < 1e-34 | 0.833 |
| S3-FU vs S4-DFU | 5.19 | < 1e-6 | 0.602 |

| Item | M S3 | M S4 | Delta M (S4 − S3) | pHolm | Cliff’s delta (S3 − S4) |
|---|---|---|---|---|---|
| Q19 | 4.09 | 2.78 | -1.32 | < 1e-15 | 0.614 |
| Q25 | 4.15 | 3.39 | -0.76 | < 1e-7 | 0.421 |
| Q4 | 4.08 | 3.42 | -0.67 | < 1e-4 | 0.355 |
| Q1 | 4.26 | 3.72 | -0.55 | < 1e-4 | 0.340 |
| Q2 | 3.93 | 3.40 | -0.53 | < 1e-3 | 0.296 |
| Q20 | 4.29 | 3.87 | -0.43 | 0.002 | 0.277 |
| Q21 | 4.16 | 3.67 | -0.49 | 0.003 | 0.272 |
| Q9 | 3.94 | 3.42 | -0.52 | 0.004 | 0.268 |
| Q16 | 4.40 | 3.99 | -0.41 | 0.013 | 0.242 |
| Q17 | 4.16 | 3.81 | -0.35 | 0.021 | 0.232 |
| Q7 | 4.43 | 4.01 | -0.41 | 0.032 | 0.221 |

| Measure | Statistic or category | Value |
|---|---|---|
| Heritage Engagement index (A.2.1 to A.2.5) | M (SD) | 3.78 (0.98) |
| Heritage Engagement index (A.2.1 to A.2.5) | MDN | 4 |
| Heritage Engagement index (A.2.1 to A.2.5) | Range (R) | 1 to 5 |
| Technology Usability index (TechUsability) | M (SD) | 3.03 (0.87) |
| Technology Usability index (TechUsability) | MDN | 3 |
| Technology Usability index (TechUsability) | R | 1 to 4 |
| Path map use (A.3.3) | Easy | 47/67 (70.10%) |
| Path map use (A.3.3) | Not easy | 12/67 (17.90%) |
| Path map use (A.3.3) | Not used | 8/67 (11.90%) |
| Path map use (A.3.3) | Difficulty or non-use | 20/67 (29.90%) |
| TechUsability distribution | Score 1 | 4/67 (6.00%) |
| TechUsability distribution | Score 2 | 12/67 (17.90%) |
| TechUsability distribution | Score 3 | 29/67 (43.30%) |
| TechUsability distribution | Score 4 | 22/67 (32.80%) |

| Wave | Analytic N | ESV M (95% CI) | SD | MDN (95% CI) | IQR | % ESV ≥ 4.00 (95% CI) | % ESV ≥ 4.50 (95% CI) |
|---|---|---|---|---|---|---|---|
| S1-PRE | 221 | 3.70 [3.63, 3.77] | 0.54 | 3.60 [3.56, 3.72] | 3.32–4.08 | 29.00% [23.40%, 35.30%] | 9.00% [5.90%, 13.60%] |
| S4-DFU | 67 | 3.79 [3.72, 3.86] | 0.30 | 3.84 [3.76, 3.92] | 3.64–4.02 | 34.30% [24.10%, 46.30%] | 0.00% [0.00%, 5.40%] |

| Metric | Estimate |
|---|---|
| Mean difference (S4 − S1) | 0.091 |
| 95% CI (bootstrap) for mean difference | [-0.009, 0.189] |
| 95% CI (Welch) for mean difference | [-0.010, 0.192] |
| Median difference (S4 − S1) | 0.240 |
| 95% CI (bootstrap) for median difference | [0.080, 0.320] |
| Hodges–Lehmann (S4 − S1) | 0.160 |
| 95% CI (bootstrap) Hodges–Lehmann | [0.040, 0.240] |
| Mann–Whitney U (two-sided) | U = 6141, p = 0.0345 |
| Cliff’s delta (S4 − S1) | 0.171 |
| 95% CI (bootstrap) Cliff’s delta | [0.034, 0.301] |
| Welch t-test (sensitivity) | t = 1.776, p = 0.077 |
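The reported Mann–Whitney U and Cliff’s delta are linked by a simple identity, delta = 2U / (n1 · n2) − 1, which gives a quick consistency check on the table above. The sketch below is illustrative only. It assumes that U = 6141 is the statistic for the S1-PRE group (pairs in which the S1 score exceeds the S4 score, with ties counted as one half) and that the group sizes are the analytic samples of 221 and 67. The function name `cliffs_delta_from_u` is hypothetical.

```python
def cliffs_delta_from_u(u: float, n1: int, n2: int) -> float:
    """Cliff's delta (group 1 minus group 2) from group 1's Mann-Whitney U.

    U is taken as the number of (x1, x2) pairs with x1 > x2, ties counted as 0.5,
    so U / (n1 * n2) estimates P(X1 > X2) + 0.5 * P(X1 = X2).
    """
    if n1 <= 0 or n2 <= 0:
        raise ValueError("group sizes must be positive")
    if not 0 <= u <= n1 * n2:
        raise ValueError("U must lie between 0 and n1 * n2")
    return 2.0 * u / (n1 * n2) - 1.0


# Assumption: U = 6141 belongs to S1-PRE (n = 221) compared with S4-DFU (n = 67).
delta_s1_minus_s4 = cliffs_delta_from_u(6141, 221, 67)
delta_s4_minus_s1 = -delta_s1_minus_s4  # reversing the direction flips the sign

print(f"Cliff's delta (S1 - S4): {delta_s1_minus_s4:.3f}")
print(f"Cliff's delta (S4 - S1): {delta_s4_minus_s1:.3f}")
```

Under this assumption the result rounds to 0.171 in the S4 − S1 direction, so the tabulated U and delta agree. The sign depends only on which group is taken as the reference.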

| Threshold | S1-PRE | S4-DFU | Difference (S4 − S1), 95% CI |
|---|---|---|---|
| ESV ≥ 4.00 | 29.00% | 34.30% | 5.40 pp [-6.70 pp, 18.50 pp] |
| ESV ≥ 4.50 | 9.00% | 0.00% | -9.00 pp [-13.60 pp, -2.80 pp] |

| Item | Mean S1 | Mean S4 | Delta (S4 − S1) | pHolm | Cliff’s delta (S4 − S1) |
|---|---|---|---|---|---|
| Q6 | 3.65 | 4.27 | 0.622 | 0.047073 | 0.241 |
| Q9 | 3.97 | 3.42 | -0.550 | 0.035528 | -0.248 |
| Q12 | 3.47 | 4.10 | 0.638 | 0.017545 | 0.266 |
| Q18 | 3.67 | 4.48 | 0.812 | 0.002052 | 0.310 |
| Q19 | 3.65 | 2.78 | -0.871 | 0.000174 | -0.355 |
| Q25 | 3.87 | 3.39 | -0.485 | 0.047073 | -0.239 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.