Submitted: 12 February 2026

Posted: 13 February 2026


Abstract

Keywords:

1. Introduction

1.1. Variability in LLM-Generated Outputs

1.2. Natural Language Processing Application as an Evaluation Method

2. Experiment 1: Short-Term Model Consistency

2.1. Introduction Experiment 1

2.2. Method Experiment 1

2.2.1. Model Selection

2.2.2. Text Corpus

2.2.3. Procedure

2.3. Results Experiment 1

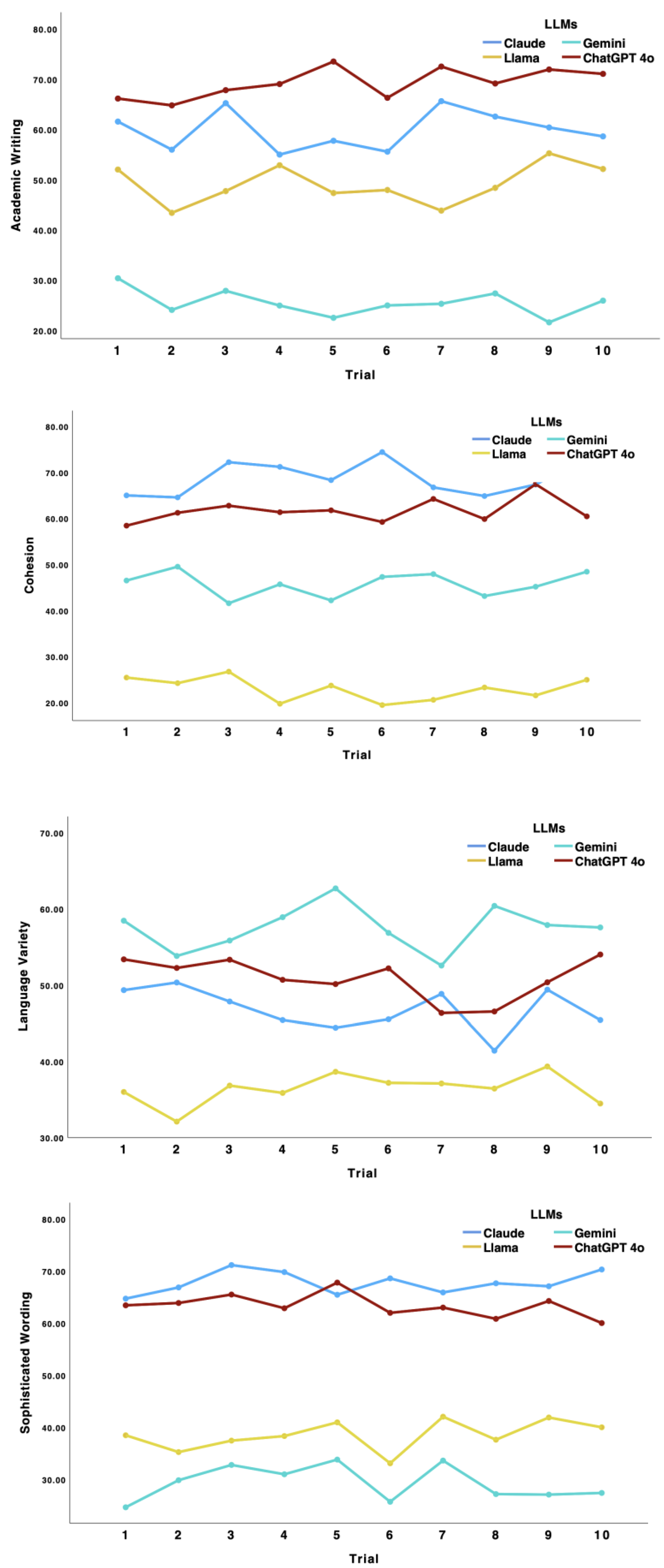

2.3.1. RQ1: Do Linguistic Features of Generated Outputs Shift Across Repetitions?

2.3.2. Linguistic Variations in Generated Outputs by Different LLMs

2.4. Discussion Experiment 1

3. Experiment 2: Linguistic Variability Across Model Versions

3.1. Introduction Experiment 2

3.2. Method Experiment 2

3.2.1. Model Versions & Deployment Details

3.2.2. Task Setup & NLP-Based Evaluation

3.3. Results Experiment 2

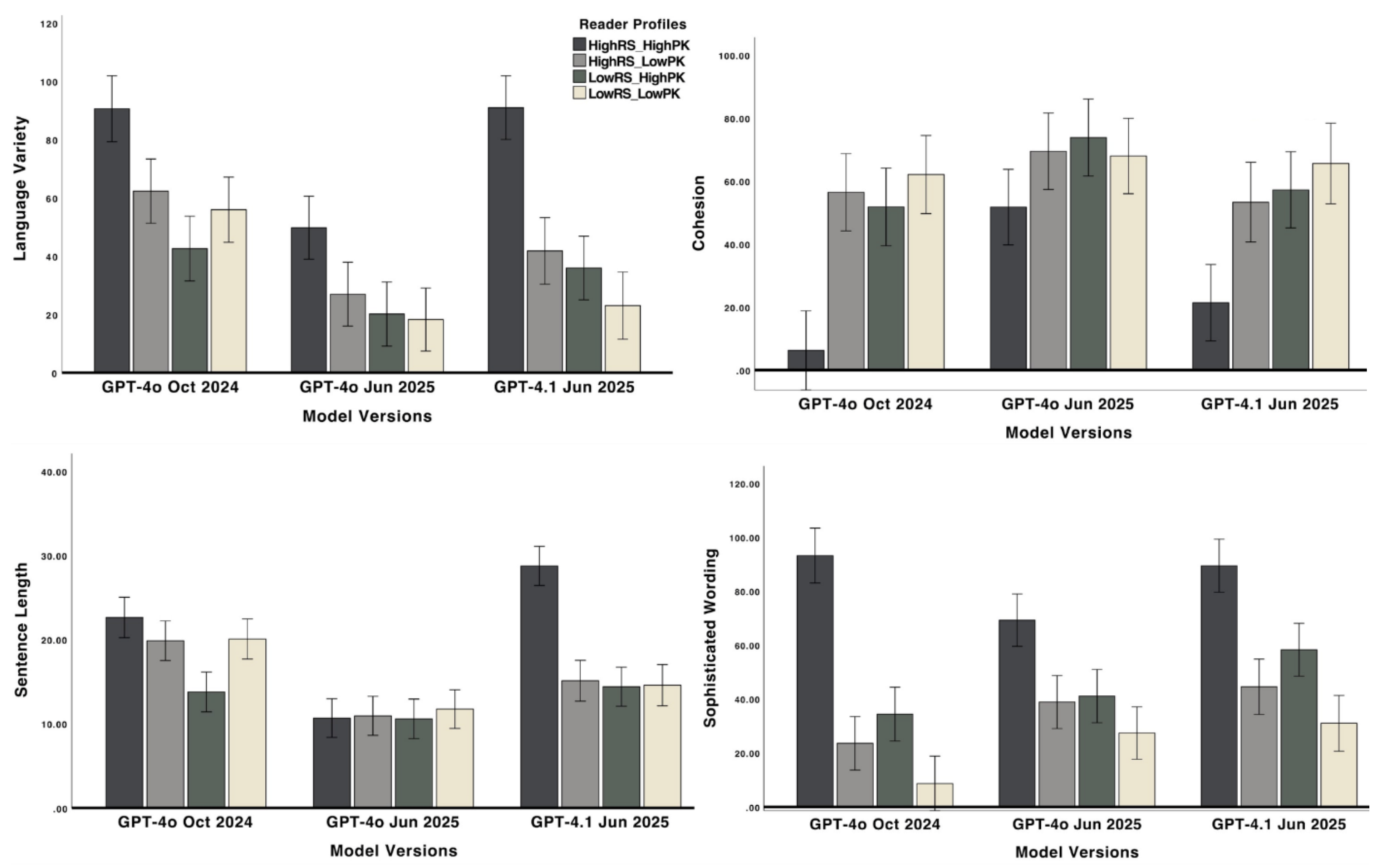

3.3.1. RQ2: Do Linguistic Features in Outputs Shift Due to Model Updates over Time?

3.3.2. RQ3: How Do Newer Models Respond Differently to Personalization Tasks?

4. Discussion

Limitations and Future Directions

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Abbreviations

| PK | Prior knowledge |

| RS | Reading skills |

| GenAI | Generative AI |

| NLP | Natural Language Processing |

| LLM | Large Language Model |

| WAT | Writing Analytics Tool |

Appendix A

| Model | Owned by | Release Date | Capabilities | Context Capacities |

| ChatGPT-4o | OpenAI | May 2024 | Better reasoning than earlier GPT models, with stronger multimodal understanding and generative abilities | Not publicly disclosed, but estimated to be smaller than 1 million tokens |

| ChatGPT-4.1 | OpenAI | April 2025 | Faster and more cost-efficient; benchmarks show reduced latency and improved inference vs. older models in several tasks. Designed for better instruction-following, coding, and long-context comprehension | Not publicly disclosed, but estimated at up to 1 million tokens |

| Claude 3.5 | Anthropic | June 2024 | Strong reasoning and instruction-following, optimized for analytical writing, summarization, and code understanding with high factual coherence | Up to 200,000 tokens |

| Llama 3.1 | Meta | July 2024 | Open-weight LLM optimized for multilingual generation, reasoning, and instruction-tuned tasks, used widely for research and deployment | Up to 128,000 tokens |

| Gemini Pro 1.5 | Google DeepMind | February 2024 | Advanced multimodal reasoning with strong long-context performance, effective for document understanding, summarization, and cross-modal tasks | Up to 1 million tokens |

Appendix B

Appendix C

| Domain | Topic | Text Titles | Word Count | FKGL |

| Science | Biology | Bacteria | 468 | 12.10 |

| Science | Biology | The Cells | 426 | 11.61 |

| Science | Biology | Microbes | 407 | 14.38 |

| Science | Biology | Genetic Equilibrium | 441 | 12.61 |

| Science | Biology | Food Webs | 492 | 12.06 |

| Science | Biology | Patterns of Evolution | 341 | 15.09 |

| Science | Biology | Causes and Effects of Mutations | 318 | 11.35 |

| Science | Biochemistry | Photosynthesis | 427 | 11.44 |

| Science | Chemistry | Chemistry of Life | 436 | 12.71 |

| Science | Physics | What are Gravitational Waves? | 359 | 16.51 |
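The FKGL column above is a Flesch-Kincaid Grade Level score. As a minimal sketch of how such a score is computed, the standard formula is 0.39 × (words / sentences) + 11.8 × (syllables / words) − 15.59. The syllable counter below is a rough vowel-group heuristic and the sentence splitter is a simple regular expression; both are assumptions made for illustration, so the output will not exactly reproduce the values in the table, which come from the authors' own tooling.

```python
import re


def count_syllables(word: str) -> int:
    """Rough vowel-group heuristic (an assumption, not the study's tool)."""
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    # Treat a trailing silent "e" as non-syllabic, e.g. "make" -> 1 syllable.
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)


def fkgl(text: str) -> float:
    """Flesch-Kincaid Grade Level from raw text, using naive tokenization."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z]+", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    return 0.39 * len(words) / len(sentences) + 11.8 * syllables / len(words) - 15.59


if __name__ == "__main__":
    sample = "The cat sat. The dog ran."
    print(round(fkgl(sample), 2))
```

Very short, simple passages can yield negative grade levels; the science texts listed above are longer and denser, which is consistent with their scores of 11 and above.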

Appendix D

| Features | Metrics and Descriptions |

| Writing Style | Academic writing: The extent to which texts include academic wordings and sophisticated sentence structures, typical properties of scientific texts |

| Conceptual Density | Development of ideas: The extent to which ideas and concepts are elaborated in a text; higher values also indicate complex sentences that require cognitive effort to comprehend |

| Conceptual Density | Noun-to-verb ratio: A high noun-to-verb ratio indicates densely packed information in a text |

| Conceptual Density | Discourse-level cohesion: The extent to which the text contains connectives and cohesion cues (e.g., repeating ideas and concepts) |

| Syntax Structure | Sentence length: Longer sentences indicate more complex syntactic structure |

| Syntax Structure | Language variety: The extent to which the text contains varied lexical and syntactic structures |

| Lexical Features | Complex vocabulary: Lower measures indicate that a text contains wording that is familiar and easy to recognize, whereas higher measures indicate advanced vocabulary |
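Two of the metrics described above, sentence length and noun-to-verb ratio, have simple surface definitions that can be sketched directly. The functions below are illustrative only: they assume a naive sentence splitter and tokens that have already been part-of-speech tagged with Universal POS labels (counting `NOUN` and `PROPN` as nouns and `VERB` as verbs is an assumption). The scores reported in this paper come from the authors' NLP tool and are not reproduced by this sketch.

```python
import re
from typing import Iterable, Tuple


def mean_sentence_length(text: str) -> float:
    """Average number of whitespace-separated words per sentence."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    if not sentences:
        raise ValueError("text must contain at least one sentence")
    return sum(len(s.split()) for s in sentences) / len(sentences)


def noun_to_verb_ratio(tagged: Iterable[Tuple[str, str]]) -> float:
    """Ratio of noun tokens to verb tokens in pre-tagged (token, tag) pairs."""
    nouns = 0
    verbs = 0
    for _token, tag in tagged:
        if tag in ("NOUN", "PROPN"):  # assumption: proper nouns count as nouns
            nouns += 1
        elif tag == "VERB":  # assumption: auxiliaries (AUX) are not counted
            verbs += 1
    if verbs == 0:
        raise ValueError("no verbs found; ratio is undefined")
    return nouns / verbs


if __name__ == "__main__":
    text = "Cells divide. Bacteria are small organisms."
    tagged = [
        ("Cells", "NOUN"), ("divide", "VERB"),
        ("Bacteria", "NOUN"), ("are", "AUX"),
        ("small", "ADJ"), ("organisms", "NOUN"),
    ]
    print(mean_sentence_length(text), noun_to_verb_ratio(tagged))
```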


| Linguistic Features | Claude M | Claude SD | Llama M | Llama SD | ChatGPT M | ChatGPT SD | Gemini M | Gemini SD | F | p |

| Academic Writing | 59.98 | 25.10 | 52.02 | 27.76 | 27.38 | 19.35 | 63.98 | 24.19 | 8.44 | <0.001 |

| Idea Development | 64.52 | 15.54 | 27.35 | 14.21 | 26.32 | 12.47 | 79.08 | 17.21 | 84.15 | <0.001 |

| Sentence Cohesion | 13.33 | 12.95 | 62.38 | 24.14 | 80.24 | 17.25 | 37.01 | 20.55 | 6.41 | <0.001 |

| Noun-to-Verb ratio | 6.08 | 8.29 | 0.72 | 1.26 | 0.71 | 0.61 | 2.36 | 2.24 | 8.61 | <0.001 |

| Sentence Length | 11.11 | 2.86 | 19.09 | 4.29 | 29.12 | 7.72 | 17.00 | 3.25 | 71.34 | <0.001 |

| Language Variety | 46.35 | 24.87 | 30.78 | 17.27 | 53.83 | 26.00 | 60.53 | 20.38 | 6.76 | <0.001 |

| Sophisticated Word | 67.86 | 33.84 | 40.66 | 26.42 | 30.67 | 16.66 | 59.46 | 23.48 | 9.29 | <0.001 |

| Linguistic Features | Component 1 | Component 2 | Component 3 | Component 4 |

| Sentence Length | -0.84 | -0.28 | -0.17 | 0.20 |

| Idea Development | 0.76 | 0.23 | 0.16 | 0.44 |

| Academic Writing | 0.34 | 0.81 | -0.15 | -0.20 |

| Sophisticated Wording | 0.12 | 0.85 | 0.16 | 0.30 |

| Sentence Cohesion | -0.01 | 0.16 | 0.89 | -0.21 |

| Noun-to-Verb | 0.41 | -0.17 | 0.79 | 0.04 |

| Language Variety | 0.01 | 0.05 | -0.18 | 0.94 |

| Eigenvalue | 2.52 | 1.65 | 1.13 | 0.69 |

| Variance Explained | 35.99 | 23.61 | 16.09 | 9.85 |

| Cumulative Variance | 35.99 | 59.61 | 75.70 | 85.54 |
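The variance rows in the component table above can be checked with simple arithmetic. Assuming the principal component analysis was run on standardized variables (an assumption; the table itself does not say so), each component's share of variance is its eigenvalue divided by the number of input features, which is seven here. The sketch below recomputes the percentages from the rounded eigenvalues, so small differences in the second decimal place relative to the table are expected.

```python
from typing import List

# Eigenvalues as printed in the table (rounded to two decimals).
EIGENVALUES = [2.52, 1.65, 1.13, 0.69]
N_FEATURES = 7  # seven linguistic features are listed in the loading rows


def variance_explained(eigenvalues: List[float], n_features: int) -> List[float]:
    """Percentage of total variance per component for standardized inputs."""
    return [100.0 * e / n_features for e in eigenvalues]


def cumulative(values: List[float]) -> List[float]:
    """Running total of a list of percentages."""
    totals = []
    running = 0.0
    for v in values:
        running += v
        totals.append(running)
    return totals


if __name__ == "__main__":
    shares = variance_explained(EIGENVALUES, N_FEATURES)
    for share, total in zip(shares, cumulative(shares)):
        print(f"{share:.2f}  {total:.2f}")
```

With these inputs the four retained components together account for roughly 85.6% of the variance, in line with the cumulative figure reported in the table.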

| Linguistic Features | ChatGPT 4o Oct 2024 M | ChatGPT 4o Oct 2024 SD | ChatGPT 4o June 2025 M | ChatGPT 4o June 2025 SD | ChatGPT 4.1 June 2025 M | ChatGPT 4.1 June 2025 SD | F (2, 212) | p | η² |

| Source Similarity | 0.79 | 0.12 | 0.90 | 0.04 | 0.88 | 0.06 | 41.04 | <0.001 | 0.43 |

| Academic Writing | 33.72 | 30.55 | 61.84 | 19.72 | 57.83 | 22.10 | 24.83 | <0.001 | 0.32 |

| Idea Development | 41.88 | 26.08 | 50.15 | 17.76 | 58.98 | 21.15 | 6.21 | 0.003 | 0.10 |

| Language Variety | 62.52 | 24.22 | 28.32 | 20.50 | 48.69 | 31.19 | 39.46 | <0.001 | 0.42 |

| Sentence Cohesion | 44.27 | 26.99 | 65.94 | 22.28 | 48.97 | 25.34 | 13.44 | <0.001 | 0.20 |

| Noun-to-Verb Ratio | 2.07 | 0.42 | 2.10 | 0.35 | 2.26 | 0.45 | 8.39 | <0.001 | 0.14 |

| Sentence Length | 19.05 | 4.80 | 10.94 | 2.34 | 18.22 | 7.56 | 54.80 | <0.001 | 0.51 |

| Sophisticated Wording | 40.81 | 33.06 | 45.35 | 20.11 | 53.56 | 29.14 | 8.11 | <0.001 | 0.13 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).