Submitted: 11 February 2026

Posted: 12 February 2026

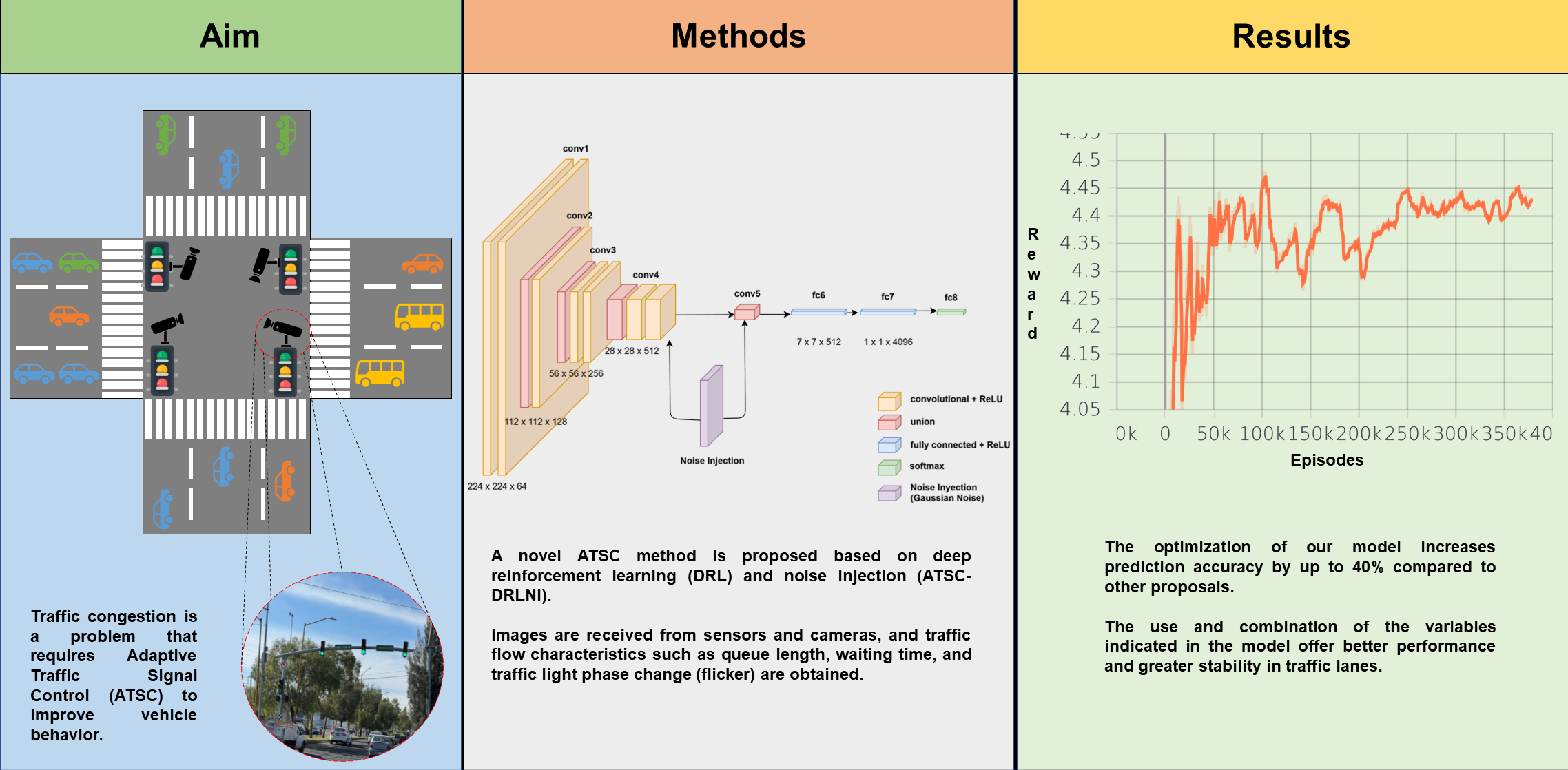

Abstract

Keywords:

1. Introduction

1. A decentralized DRL framework with NI (ATSC-DRLNI) for single-intersection ATSC, in which each traffic light operates as an independent agent that adapts to local traffic conditions.

2. A stability-oriented reward function that integrates queue length, flickers (traffic light changes), and A2C learning feedback to mitigate premature phase changes and support smoother convergence.

3. A comparative evaluation against seven baseline methods on the Simulation of Urban MObility (SUMO) platform, calibrated and validated with real traffic data from a Mexican city, which suggests improvements in queue reduction and phase stability.

4. A step toward narrowing the gap between simulation-based ATSC research and potential real-world applications, emphasizing the role of stability-related variables in decentralized DRL settings.

2. Preliminaries on Machine Learning

2.1. Artificial Neural Networks (ANNs)

2.2. Reinforcement Learning (RL)

2.3. Q-Learning (DQN)

2.4. Advantage Actor-Critic (A2C)

2.5. Neural Networks in Reinforcement Learning

3. Materials and Methods

3.1. System Description

3.2. Actions

3.3. States

3.4. Reward

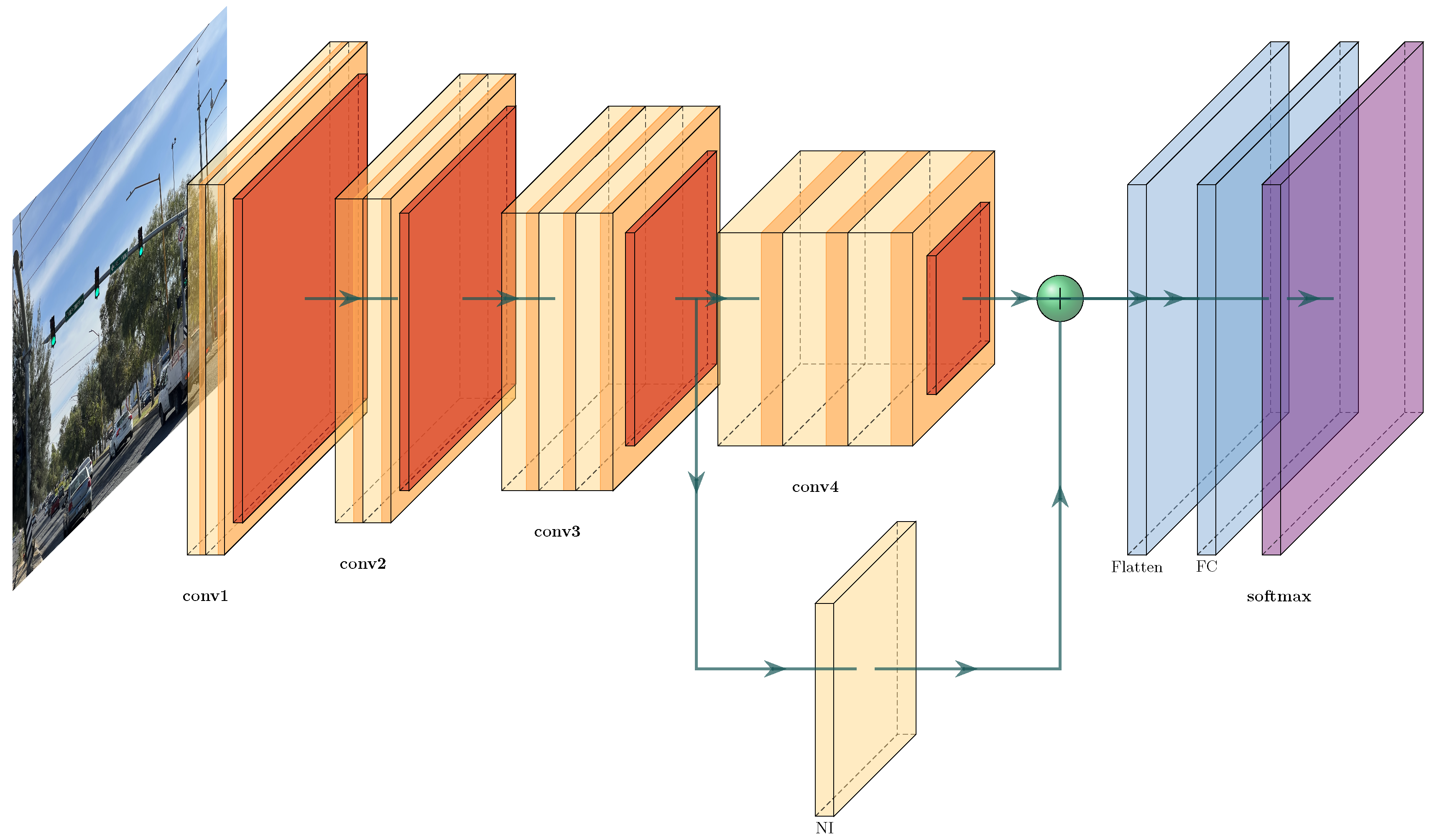

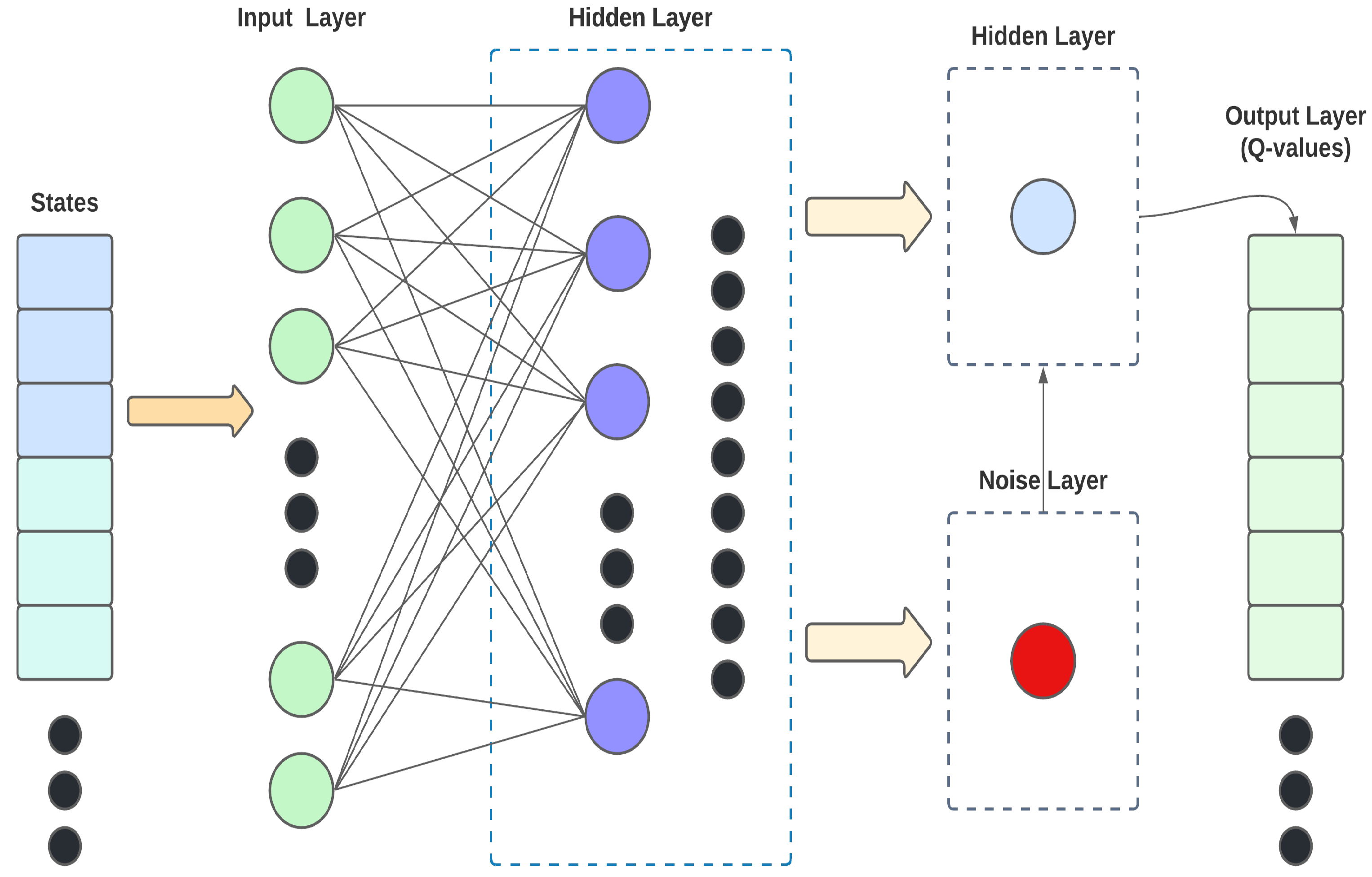

3.5. The Neural Network Structure

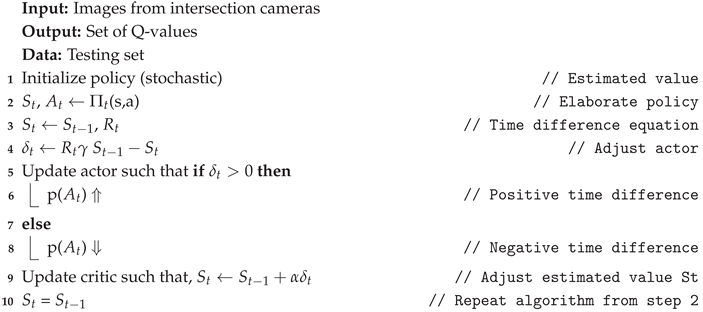

Algorithm 1: ATSC-DRLNI policy optimization

4. Results

4.1. Setup

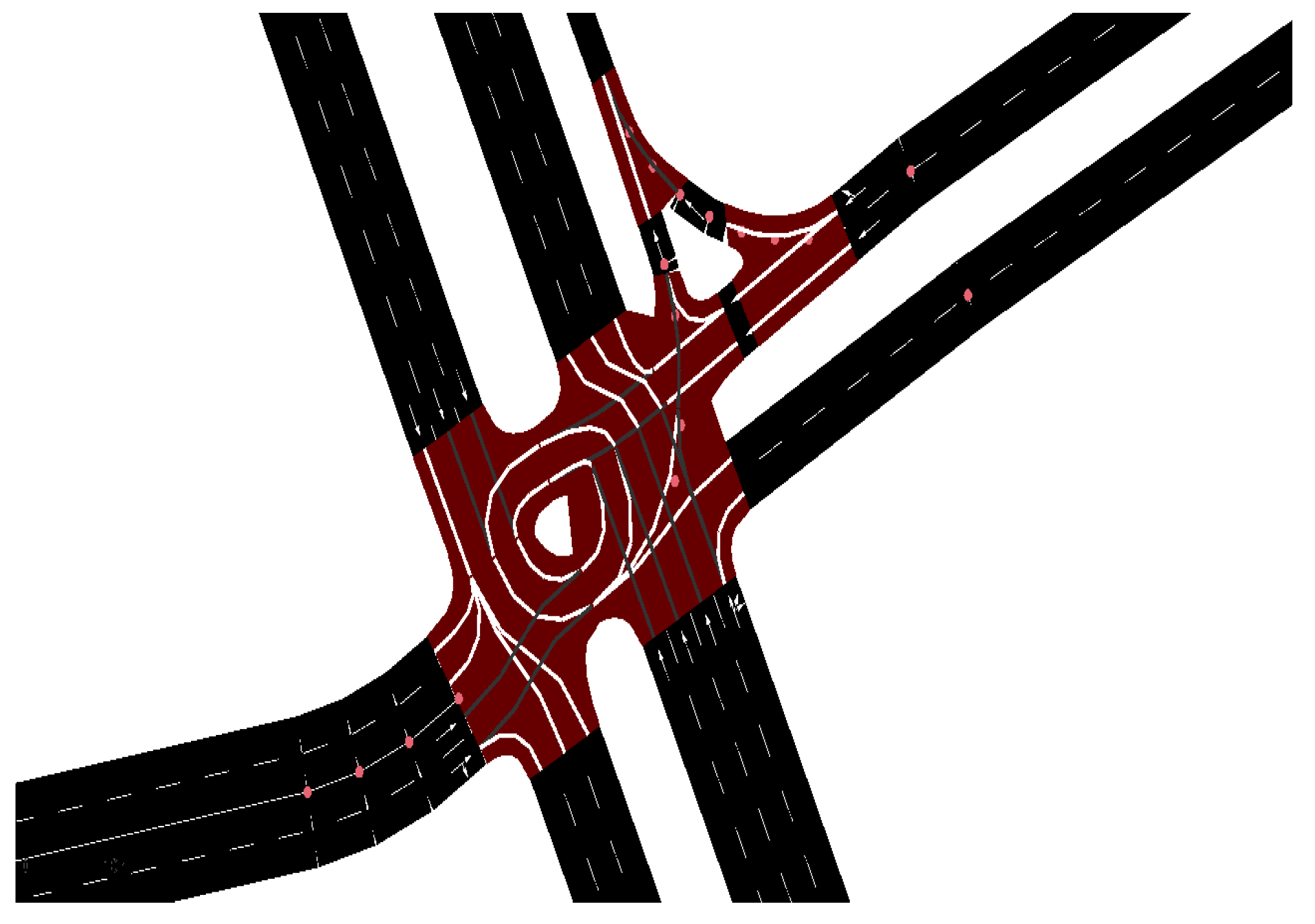

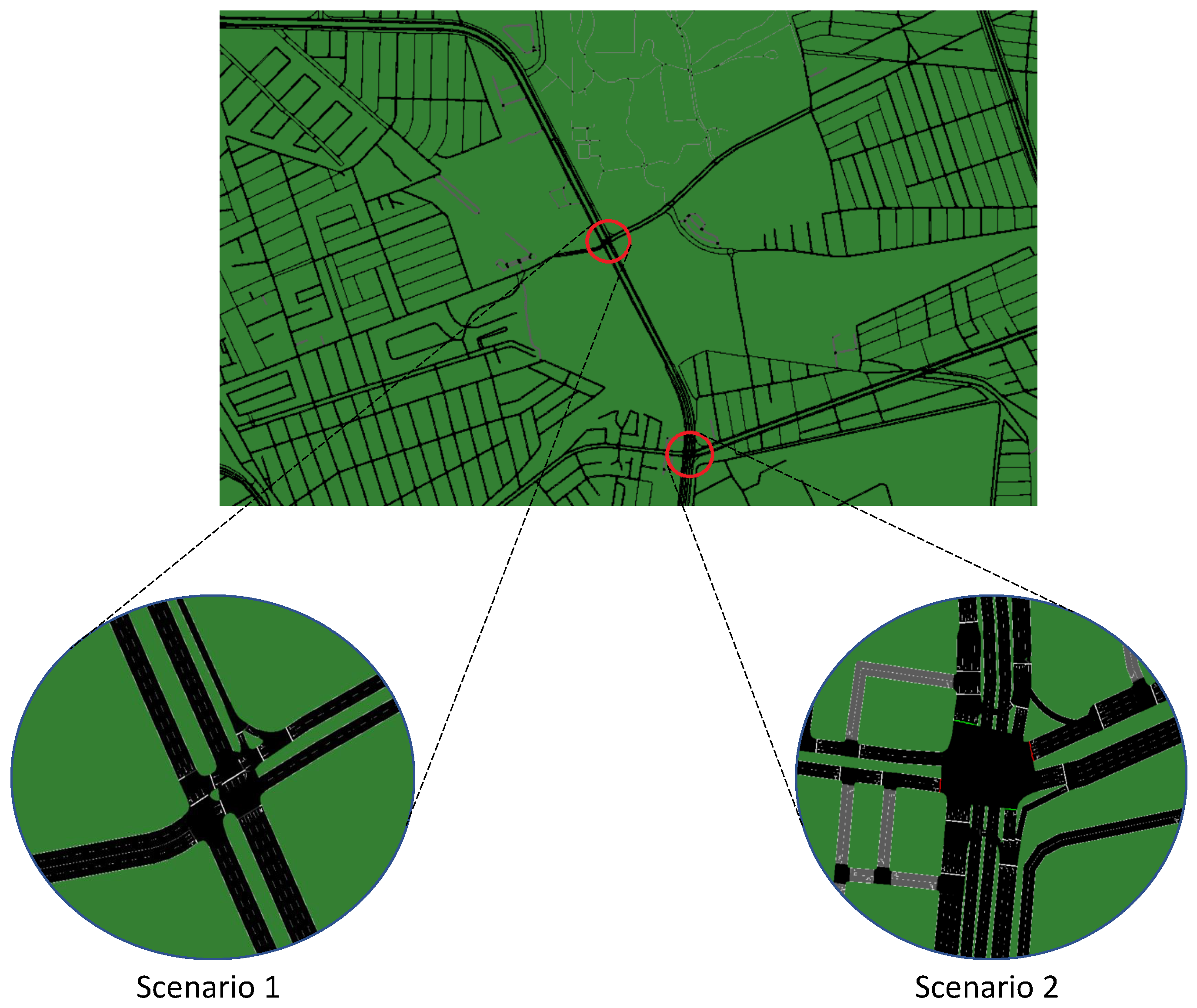

4.2. Simulation Environment and Dataset

| Parameter | Value |

|---|---|

| Number of lanes | 10 |

| Traffic Flow | Random trips |

| Simulation platform | SUMO |

| Yellow phase duration | 5 s |

| Initial Green phase duration | 30 s |

| Distance D to intersection to detect vehicles | 800 m |

| Value of | 5,000 vehicles |

| Value of | 100 flicker |

| Value of | 0.2 flicker |

| Parameter | Value |

|---|---|

| Number of lanes | 14 |

| Traffic Flow | Random trips |

| Simulation platform | SUMO |

| Yellow phase duration | 5 s |

| Initial Green phase duration | 30 s |

| Distance D to intersection to detect vehicles | 800 m |

| Value of | 6,500 vehicles |

| Value of | 100 flicker |

| Value of | 0.2 flicker |

4.3. Selected Methods for Comparison

- Fixed-Time (FT): This is a classical non-adaptive method used for traffic light control that serves as a baseline for comparisons.

- Multi-Agent Deep Reinforcement Learning (MADRL): A multi-agent algorithm based on DRL. It uses policy gradients and trains agents in a centralized manner, with collective decision-making based on real-time information fed back to the agents. Decentralized versions also exist, in which agents are autonomous and their decisions do not depend on other agents.

- Multi-Agent Recurrent Deep Deterministic Policy Gradient (MARDDPG): A decentralized algorithm for agent training in which each agent shares policies and information with the other agents. Individual agents are able to execute decisions independently.

- Single-Agent Based Algorithm (SABA): A DQN-based decentralized algorithm in which agents do not communicate with each other, although each tries to predict what the other agents may decide.

- Longest Queue First (LQF): This method favors lane selection where the green light is already active and gives priority to vehicles close to the intersection, a situation that may produce inconsistent phase cycles.

- Q-Learning (DQN): An RL-based method for action learning that uses a predefined number of Q-values tied to the number of actions. Actions are stored in a table whose entries include elements such as the vehicle queue, the waiting time of vehicles in lanes close to the intersection, and the current phase.

- Self-Organizing Traffic Lights (SOTL): This method uses a self-organizing algorithm to determine the phases of the traffic lights.
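Two of the simpler baselines above, FT and LQF, can be sketched in a few lines. The following Python sketch is only illustrative and is not the implementation used in the experiments: the lane names, the two-phase layout, and the tie-breaking rule are assumptions made for the example, while the 30 s green and 5 s yellow durations follow the simulation parameters listed in Section 4.2.

```python
from typing import Dict, List


def fixed_time_phase(t_seconds: int, green_s: int = 30, yellow_s: int = 5,
                     n_phases: int = 2) -> int:
    """Fixed-Time: return the index of the phase slot active at time t.

    Each phase occupies one slot of green_s + yellow_s seconds and the
    slots repeat cyclically, regardless of traffic conditions.
    """
    slot = green_s + yellow_s
    cycle = n_phases * slot
    return (t_seconds % cycle) // slot


def longest_queue_first(queues: Dict[str, int],
                        phases: Dict[str, List[str]],
                        current_phase: str) -> str:
    """LQF: pick the phase whose served lanes hold the most queued vehicles.

    Assumption: ties are resolved in favour of the currently active phase,
    which avoids an unnecessary phase change.
    """
    def served(phase: str) -> int:
        return sum(queues.get(lane, 0) for lane in phases[phase])

    best = max(phases, key=served)
    if served(best) == served(current_phase):
        return current_phase
    return best


# Hypothetical two-phase intersection (lane names are illustrative only).
PHASES = {"NS": ["n_in", "s_in"], "EW": ["e_in", "w_in"]}
```

For instance, `longest_queue_first({"n_in": 4, "s_in": 3, "e_in": 9, "w_in": 1}, PHASES, "NS")` selects `"EW"`, because the east-west lanes hold ten queued vehicles against seven on the north-south lanes.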

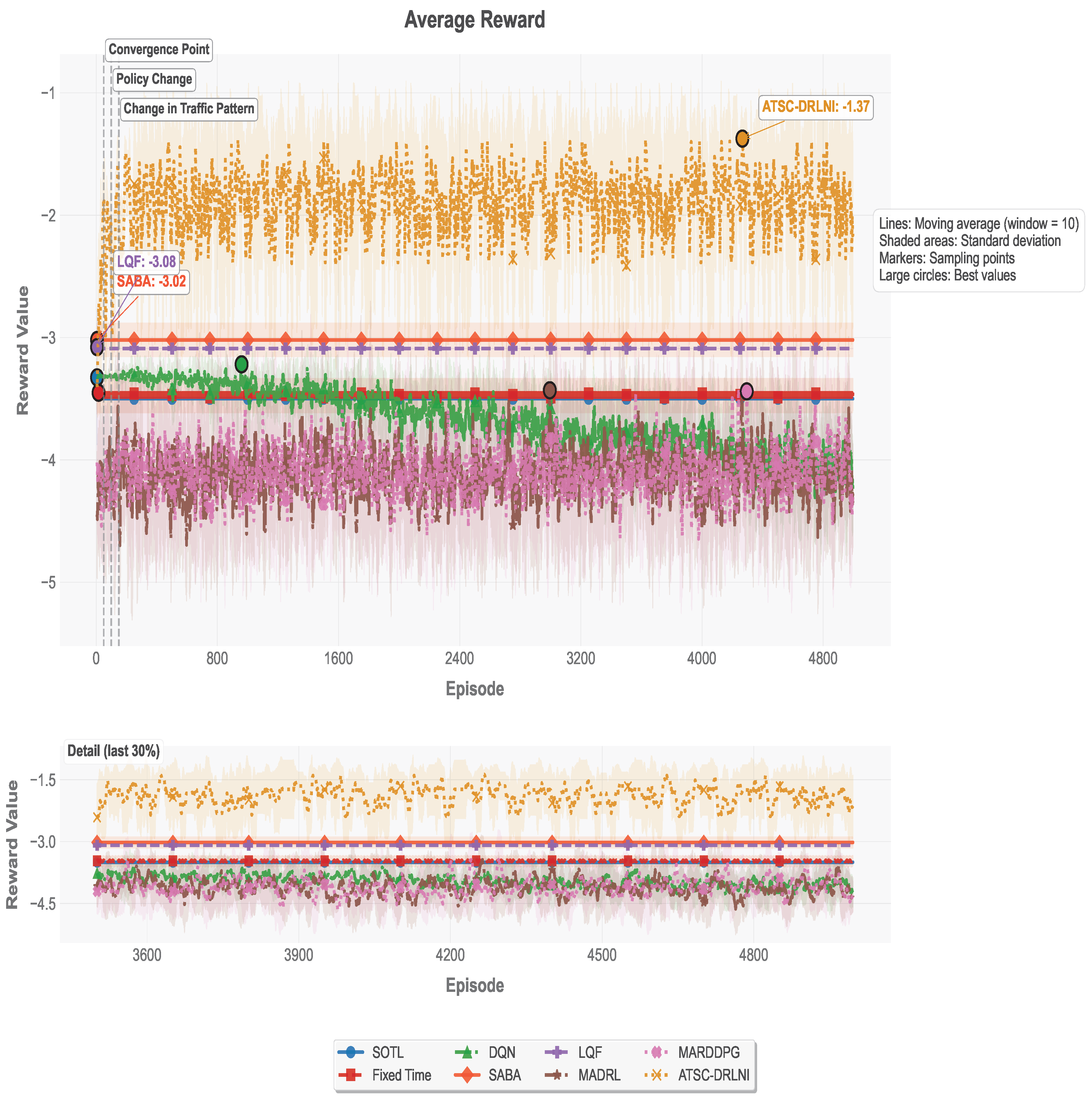

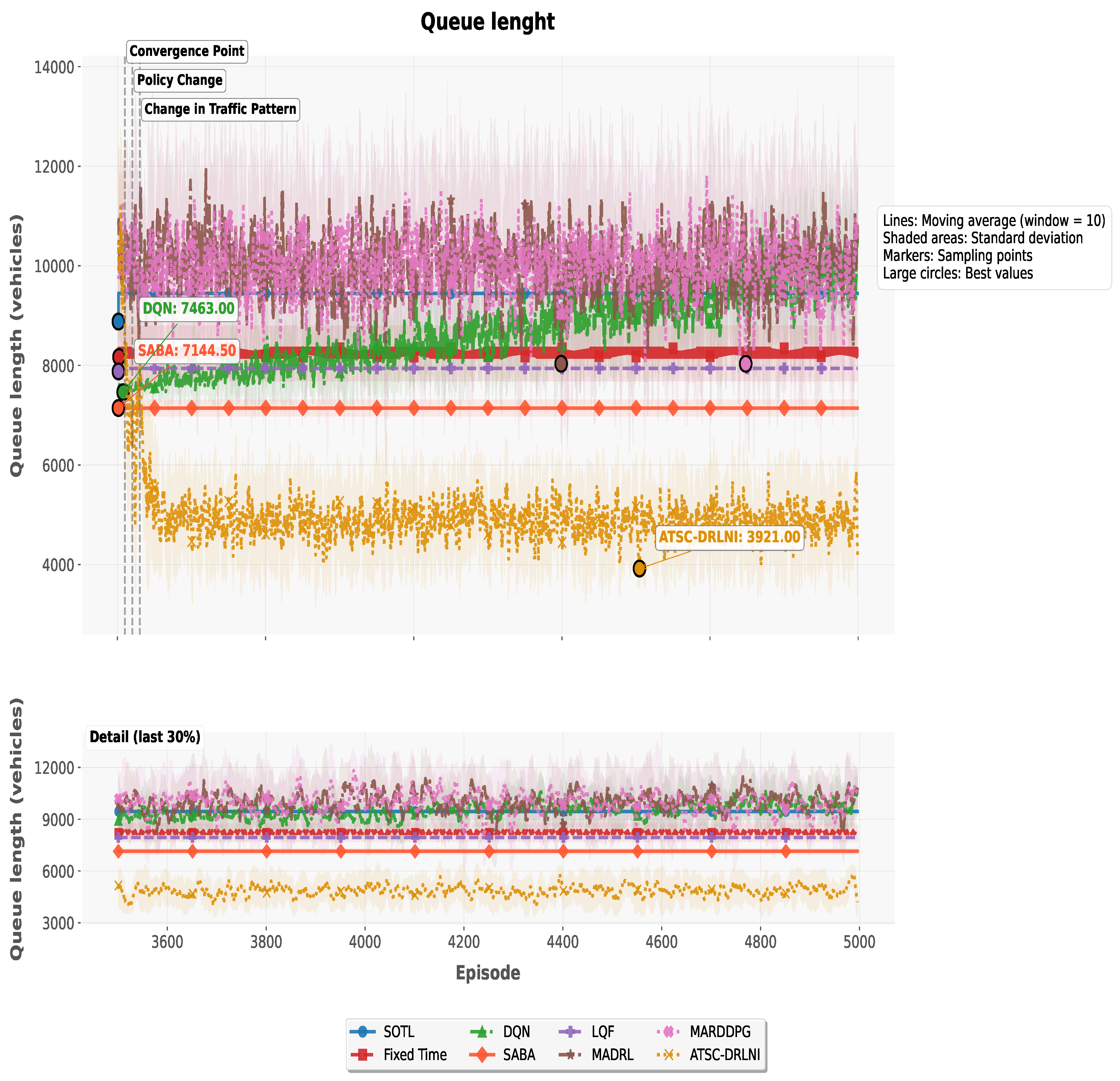

4.4. Simulation Results

5. Discussion

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| AI | Artificial Intelligence |

| ML | Machine Learning |

| DL | Deep Learning |

| DRL | Deep Reinforcement Learning |

| MDP | Markov Decision Process |

| ANN | Artificial Neural Network |

| NN | Neural Network |

| CNN | Convolutional Neural Network |

| DQN | Deep Q-Network |

| ER | Experience Replay |

| ReLU | Rectified Linear Unit |

| GN | Gaussian Noise |

| NI | Noise Injection |

| GAs | Genetic Algorithms |

| cGAs | Cellular Genetic Algorithms |

| FL | Fuzzy Logic |

| TD | Temporal Difference |

| A2C | Advantage Actor Critic |

| SUMO | Simulation of Urban MObility |

| ATSC | Adaptive Traffic Signal Control |

| FT | Fixed-Time |

| SCOOT | Split Cycle Offset Optimization Technique |

| SOTL | Self-Organizing Traffic Lights |

| RHODES | Real-Time Hierarchical Optimized Distributed Effective System |

| SCATS | Sydney Coordinated Adaptive Traffic System |

| MARDDPG | Multi-Agent Recurrent Deep Deterministic Policy Gradient |

| MARL | Multi-Agent Reinforcement Learning |

| MADRL | Multi-Agent Deep Reinforcement Learning |

| SABA | Single-Agent Based Algorithm |

| LQF | Longest Queue First |

| CI | Continuous Integration |

| IoT | Internet of Things |

| CAVs | Connected and Automated Vehicles |

| AR | Average Reward |

| AQL | Average Queue Length |

| Flicker | Traffic Light Changes |

| HIL | Hardware-In-Loop |

| Symbol | Meaning |

|---|---|

| s | State |

| a | Action |

| | Reward |

| E | Environment |

| A | Agent |

| N | North |

| S | South |

| E | East |

| W | West |

| QL | Deep Q-Network (DQN) |

| T | Time frame |

| | Green Light |

| | Yellow Light |

| | Red Light |

| conv | Convolutional neural network |

| Parameter | Description |
|---|---|
| Batch size | Number of samples used at each iteration to compute the NN error. |
| Neural network layers | Number of ANN layers used to identify patterns or characteristics of the input data. |
| Hidden layers | Number of intermediate layers that process information between the input and the output. |
| Noise injection layer | Layer that introduces Gaussian noise to improve model generalization. |
| Activation function | Nonlinear function that determines the output of each neuron. ReLU is the most widely used because of its computational efficiency and its capacity to mitigate the vanishing gradient problem. |
| Learning rate | Rate controlling the size of the weight adjustments during NN training, based on previous reward values. |
| Discount factor | Factor that determines the importance of future rewards relative to immediate rewards. |
| Optimizer | Algorithm that adjusts the NN parameters and controls convergence speed during training. |
| Episodes | Total number of simulation episodes executed during training. |
| Epsilon starting | Initial value of the exploration parameter for the ε-greedy strategy. |
| Epsilon ending | Final value of the exploration parameter for the ε-greedy strategy, after exponential decay. |
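The last two rows describe an exploration parameter that decays exponentially from a starting to an ending value. One common way to realize such a schedule is sketched below in Python. The default bounds (1.0 and 0.05) match the values reported for the model, but the decay constant `decay_episodes` and the helper names are hypothetical, since the exact schedule is not given here.

```python
import math
import random
from typing import Sequence


def epsilon_at(episode: int, eps_start: float = 1.0, eps_end: float = 0.05,
               decay_episodes: float = 1000.0) -> float:
    """Exponentially decay epsilon from eps_start toward eps_end.

    decay_episodes is an assumed time constant: after that many episodes
    the gap between epsilon and eps_end has shrunk by a factor of e.
    """
    return eps_end + (eps_start - eps_end) * math.exp(-episode / decay_episodes)


def select_action(q_values: Sequence[float], epsilon: float,
                  rng: random.Random) -> int:
    """Epsilon-greedy choice: explore with probability epsilon, else exploit."""
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))  # random action (exploration)
    # Greedy action: index of the largest Q-value (exploitation).
    return max(range(len(q_values)), key=lambda i: q_values[i])
```

Early in training almost every action is random, while late in training the agent follows its Q-value estimates about 95% of the time.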

| DQN Model Simulation Parameters | |

|---|---|

| Parameter | Value |

| Batch size | 32 |

| Neural network layers | 4 |

| Number of Hidden Layers | 3 |

| Noise injection layers (Gaussian) | 1 |

| Activation function | ReLU |

| Learning rate | |

| Discount factor | 0.99 MSE |

| Optimizer | Adam |

| Episodes | 5,000 |

| Epsilon starting | 1.0 |

| Epsilon ending | 0.05 |
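The table lists three hidden layers, ReLU activations, and one Gaussian noise injection layer. A minimal pure-Python sketch of a forward pass with that shape is given below. It is an illustration under stated assumptions, not the authors' network: the layer widths, the noise standard deviation `sigma`, the He-style initialization, and the decision to inject the noise on the input state are all hypothetical.

```python
import random
from typing import List, Optional, Tuple

Vector = List[float]
Matrix = List[List[float]]
Layer = Tuple[Matrix, Vector]  # (weights with shape n_out x n_in, bias)


def relu(v: Vector) -> Vector:
    return [max(0.0, x) for x in v]


def dense(v: Vector, weights: Matrix, bias: Vector) -> Vector:
    """Affine map: one output per weight row."""
    return [sum(w * x for w, x in zip(row, v)) + b
            for row, b in zip(weights, bias)]


def gaussian_noise(v: Vector, sigma: float, training: bool,
                   rng: random.Random) -> Vector:
    """Noise injection: add zero-mean Gaussian noise during training only."""
    if not training or sigma == 0.0:
        return list(v)
    return [x + rng.gauss(0.0, sigma) for x in v]


def build_network(sizes: List[int], rng: random.Random) -> List[Layer]:
    """Create one (weights, bias) pair per consecutive pair of layer sizes."""
    layers = []
    for n_in, n_out in zip(sizes, sizes[1:]):
        scale = (2.0 / n_in) ** 0.5  # He-style scale, an assumed choice
        weights = [[rng.gauss(0.0, scale) for _ in range(n_in)]
                   for _ in range(n_out)]
        layers.append((weights, [0.0] * n_out))
    return layers


def forward(state: Vector, layers: List[Layer], sigma: float = 0.1,
            training: bool = False,
            rng: Optional[random.Random] = None) -> Vector:
    """Noise on the input, ReLU hidden layers, linear output (one Q-value per action)."""
    rng = rng or random.Random()
    h = gaussian_noise(state, sigma, training, rng)
    for weights, bias in layers[:-1]:
        h = relu(dense(h, weights, bias))
    weights, bias = layers[-1]
    return dense(h, weights, bias)
```

With `build_network([10, 16, 16, 16, 4], random.Random(0))` the sketch has three hidden layers plus one output layer, which is one possible reading of "4 layers, 3 hidden". Noise is disabled at evaluation time, so the outputs are deterministic once training ends.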

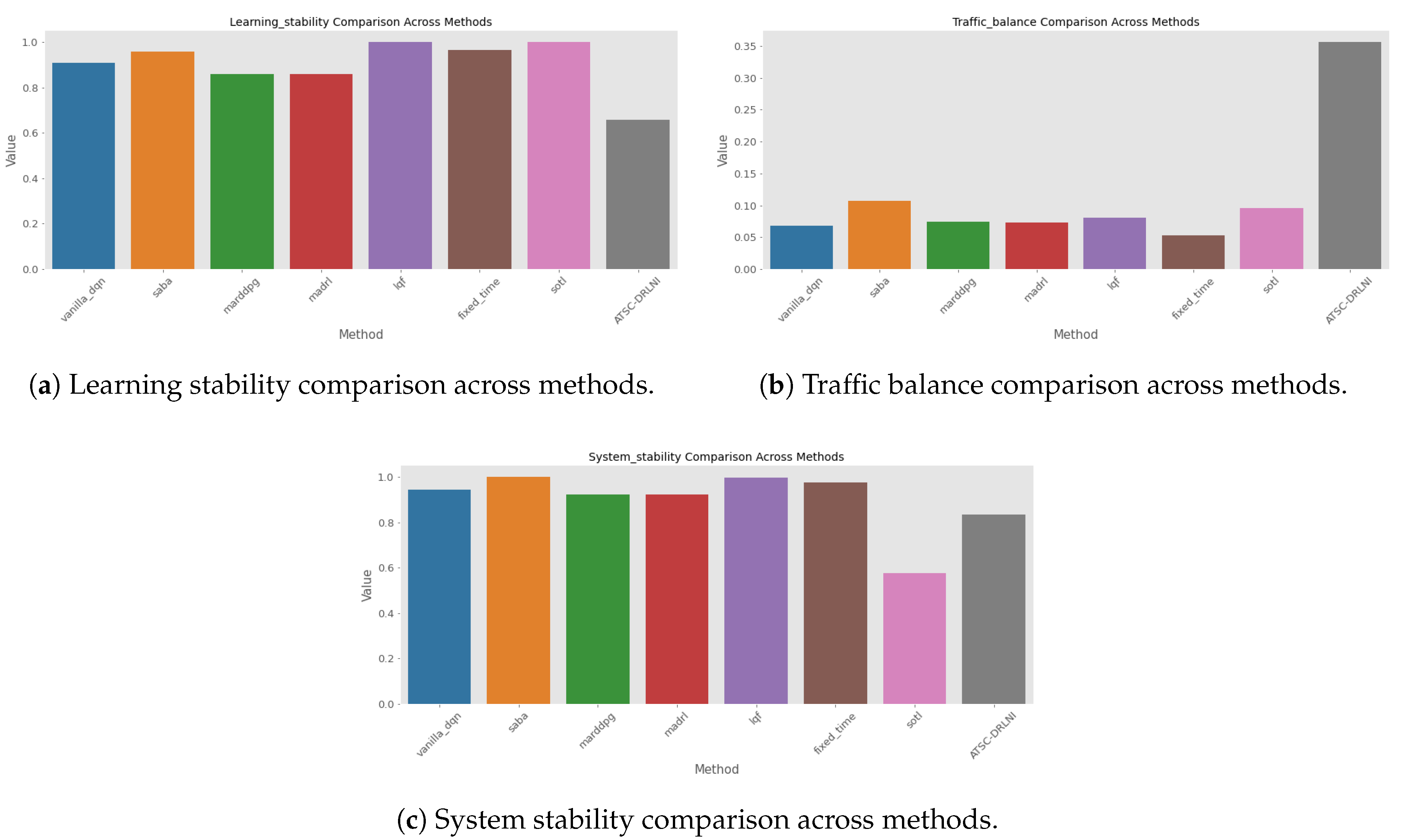

| Methods | ||||||||

|---|---|---|---|---|---|---|---|---|

| Metrics | vanilla_dqn | saba | marddpg | madrl | lqf | fixed_time | sotl | ATSC-DRLNI |

| AQL_mean | 4341.726 | 3572.250 | 4994.691 | 5004.292 | 3970.439 | 4117.582 | 4723.433 | 2497.479 |

| AQL_std | 596.119 | 164.266 | 983.944 | 993.988 | 6.435 | 242.440 | 55.359 | 675.804 |

| AQL_final | 4708.643 | 3572.250 | 4992.671 | 4986.901 | 3970.500 | 4117.548 | 4724.000 | 2426.285 |

| AWT_mean | 2170.863 | 1786.125 | 2497.345 | 2502.146 | 1985.220 | 2058.791 | 2361.716 | 1248.739 |

| AWT_std | 298.059 | 82.133 | 491.972 | 496.994 | 3.217 | 121.220 | 27.680 | 337.902 |

| Reward_mean | -3.661 | -3.020 | -4.103 | -4.112 | -3.090 | -3.472 | -3.500 | -1.902 |

| Reward_std | 0.368 | 0.130 | 0.577 | 0.583 | 0.002 | 0.118 | 0.025 | 0.648 |

| Reward_final | -3.912 | -3.020 | -4.100 | -4.101 | -3.090 | -3.472 | -3.500 | -1.874 |

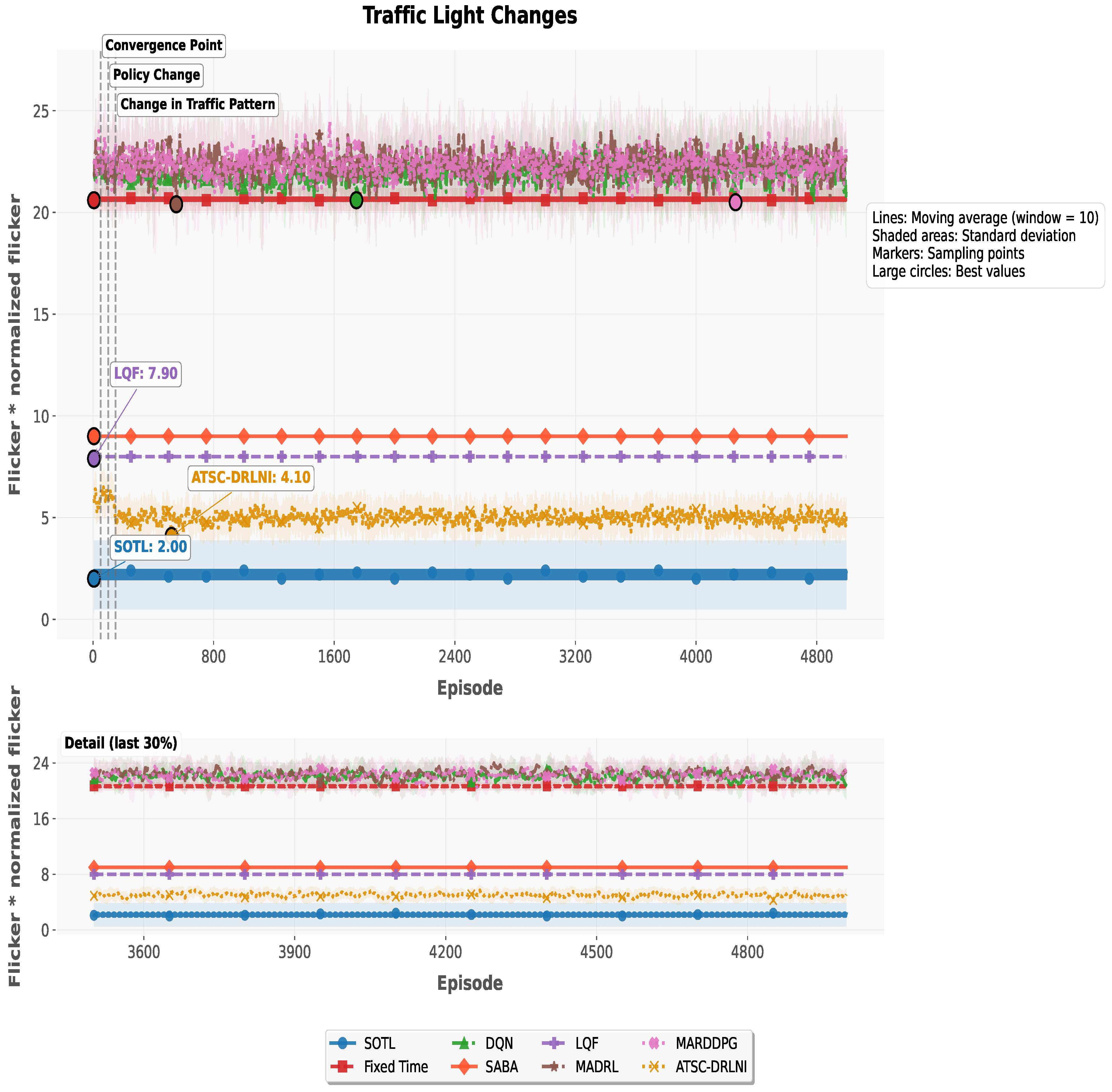

| Flicker_mean | 21.885 | 9.000 | 22.328 | 22.340 | 8.000 | 20.666 | 0.001 | 5.024 |

| Flicker_std | 1.199 | 0.000 | 1.738 | 1.757 | 0.014 | 0.472 | 0.042 | 0.832 |
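To read the AQL_mean row as a relative improvement, the reduction of ATSC-DRLNI with respect to a baseline can be computed directly from the tabulated values. The short Python sketch below does this. The helper names are hypothetical and the numbers are copied from the table; nothing here is additional experimental data.

```python
from typing import Dict, Tuple

# AQL_mean values copied from the results table.
AQL_MEAN: Dict[str, float] = {
    "vanilla_dqn": 4341.726, "saba": 3572.250, "marddpg": 4994.691,
    "madrl": 5004.292, "lqf": 3970.439, "fixed_time": 4117.582,
    "sotl": 4723.433, "ATSC-DRLNI": 2497.479,
}


def relative_reduction(baseline: float, proposed: float) -> float:
    """Percentage by which `proposed` is lower than `baseline`."""
    return 100.0 * (baseline - proposed) / baseline


def best_baseline(metric: Dict[str, float],
                  proposed_key: str = "ATSC-DRLNI") -> Tuple[str, float]:
    """Return the baseline (excluding the proposed method) with the lowest value."""
    baselines = {k: v for k, v in metric.items() if k != proposed_key}
    name = min(baselines, key=lambda k: baselines[k])
    return name, baselines[name]


name, value = best_baseline(AQL_MEAN)
print(f"{name}: {relative_reduction(value, AQL_MEAN['ATSC-DRLNI']):.2f}% lower AQL_mean")
```

With these values, the reduction is roughly 30% relative to the best-performing baseline on this metric (saba) and roughly 39% relative to fixed_time. The same helpers apply to any other row of the table.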

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).