Submitted: 11 February 2026

Posted: 11 February 2026


Abstract

Keywords:

1. Introduction

1.1. High Level Overview

- Input Encoders
  - Text Tokenizer / Embeddings
  - Vision Encoder (ViT, CNN)
- Core Network (Transformer Stack)
  - Transformer Blocks (repeated N times)
    - Multi-Head Attention
      - QKV Projections
      - Attention Score Computation
      - Softmax
      - Weighted Sum (Attention Output)
    - Feed-Forward Network (MLP)
      - Linear Projection (Up)
      - Activation (GELU / SwiGLU)
      - Linear Projection (Down)
    - Layer Normalization
    - Residual Connections
- Output Heads
  - Language Modeling Head
  - Classification / Regression Heads

1.2. Training vs Inference

| Component | Training | Inference |
|---|---|---|
| Tokenization / Input Encoding | ✓ | ✓ |
| Embedding Layers | ✓ | ✓ |
| Self-Attention | ✓ | ✓ |
| Cross-Attention (Multimodal) | ✓ | ✓ |
| Feed-Forward Networks (MLP) | ✓ | ✓ |
| Layer Normalization | ✓ | ✓ |
| Residual Connections | ✓ | ✓ |
| Output Projection / LM Head | ✓ | ✓ |
| Loss Computation | ✓ | – |
| Backpropagation | ✓ | – |
| Optimizer Updates | ✓ | – |
| Learning Rate Scheduling | ✓ | – |
| Regularization Objectives | ✓ | – |
| Key–Value Cache | – | ✓ |
| Decoding / Sampling Strategy | – | ✓ |

1.3. The Transformer Backbone: Architecture and Components

1.3.1. Core Computational Blocks

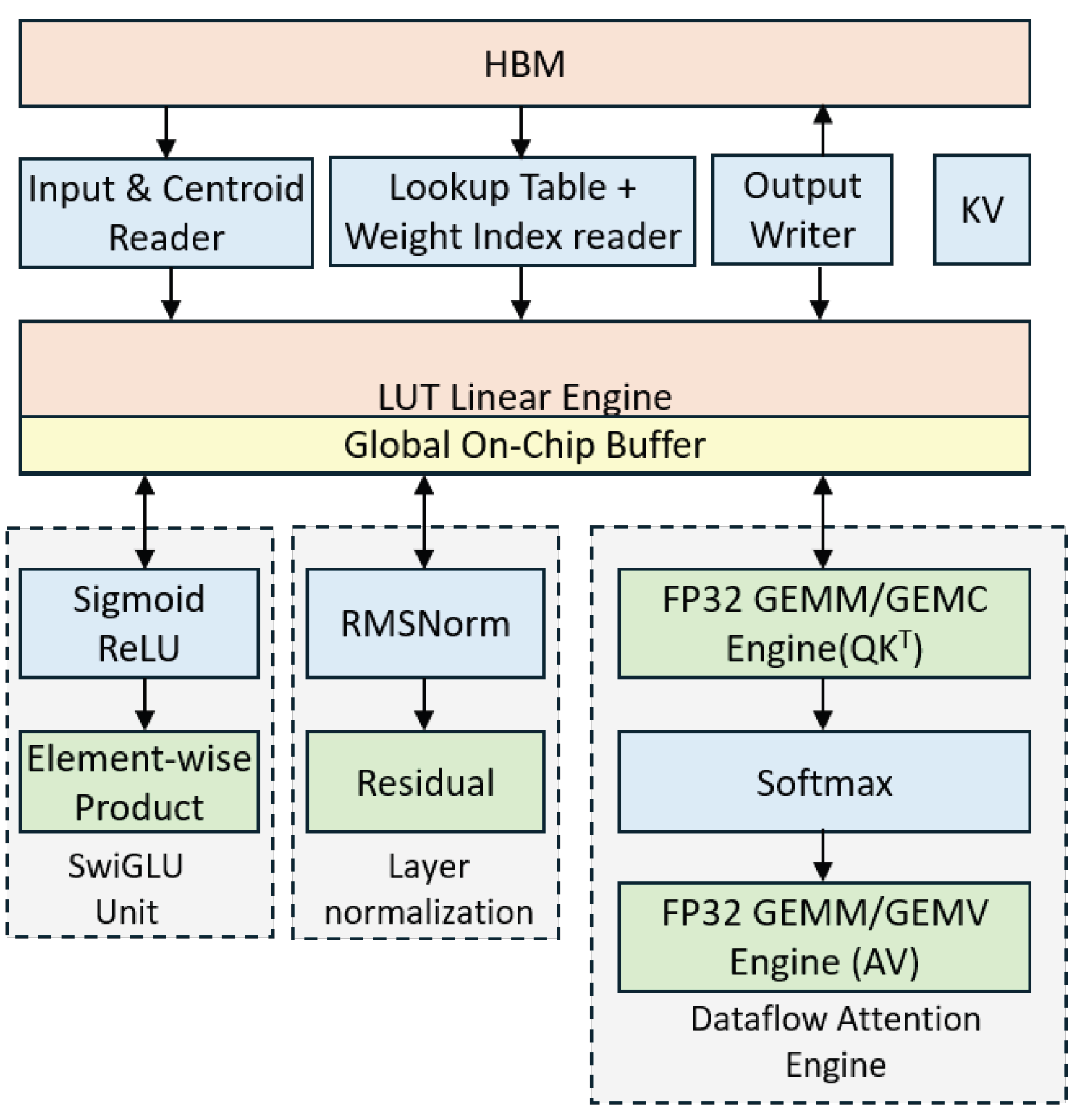

- Input/Output Embedding: Converting discrete tokens into high-dimensional vectors. In hardware, this is typically a large look-up table (LUT), indexed by token ID and stored in high-bandwidth memory (HBM).

- Multi-Head Attention (MHA): Often the most computationally intensive part, especially for long sequences; it relies on large-scale matrix-matrix multiplications (GEMM) to compute the relationships between different parts of the input sequence.

- Position-wise Feed-Forward Network (FFN): A set of fully connected layers (MLPs) that process each token independently.

- Normalization and Activation: Normalization layers such as LayerNorm or RMSNorm, and non-linearities such as GELU or SwiGLU, which require specialized arithmetic units (for exponentials, division, and square roots) beyond plain multiply-accumulate arrays.
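Normalization is the clearest case where plain multiply-accumulate hardware is not enough. The minimal Python sketch below (the names `layer_norm` and `rms_norm` are illustrative, not taken from any library) shows that both layers must reduce over the entire hidden vector before producing any output, and that both need one reciprocal square root per token. RMSNorm drops the mean subtraction and the bias, so it needs one reduction instead of two, which is one reason it is attractive in silicon.

```python
import math

def layer_norm(x, gamma, beta, eps=1e-5):
    """LayerNorm over one token's hidden vector x (a list of floats)."""
    n = len(x)
    mean = sum(x) / n                              # reduction 1: mean
    var = sum((v - mean) ** 2 for v in x) / n      # reduction 2: variance
    inv_std = 1.0 / math.sqrt(var + eps)           # one reciprocal square root per token
    return [g * (v - mean) * inv_std + b for v, g, b in zip(x, gamma, beta)]

def rms_norm(x, gamma, eps=1e-6):
    """RMSNorm: no mean subtraction and no bias, so a single reduction suffices."""
    n = len(x)
    inv_rms = 1.0 / math.sqrt(sum(v * v for v in x) / n + eps)
    return [g * v * inv_rms for v, g in zip(x, gamma)]

x = [1.0, 2.0, 3.0, 4.0]
print(layer_norm(x, [1.0] * 4, [0.0] * 4))
print(rms_norm(x, [1.0] * 4))
```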

1.3.2. The Attention Mechanism

- $Q$ (Query matrix): represents the set of queries, where each query encodes what information is being sought. In self-attention, queries correspond to the current tokens attending to other tokens.

- $K$ (Key matrix): represents the set of keys, where each key encodes what information is available. Keys are compared with queries to compute attention scores.

- $V$ (Value matrix): represents the set of values, containing the actual information to be aggregated. Values are weighted by the attention scores to produce the final output.
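As a reference point for the roles of the query, key, and value matrices above, scaled dot-product attention as defined by Vaswani et al. [2] is $\mathrm{Attention}(Q, K, V) = \mathrm{softmax}(QK^\top / \sqrt{d_k})\,V$, where $d_k$ is the key dimension. The plain-Python sketch below is a single-head illustration on lists of rows (no batching, masking, or projections), intended only to make the data flow explicit.

```python
import math

def softmax_row(row):
    m = max(row)                          # subtract the row max for numerical stability
    e = [math.exp(v - m) for v in row]
    s = sum(e)
    return [v / s for v in e]

def attention(Q, K, V):
    """Single-head scaled dot-product attention.

    Q: n_q x d_k, K: n_kv x d_k, V: n_kv x d_v (lists of rows). Returns n_q x d_v.
    """
    d_k = len(Q[0])
    out = []
    for q in Q:
        # Compare what this query seeks with what every key offers.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in K]
        w = softmax_row(scores)
        # Aggregate the value rows, weighted by the normalized scores.
        out.append([sum(w[j] * V[j][c] for j in range(len(V))) for c in range(len(V[0]))])
    return out

Q = [[1.0, 0.0], [0.0, 1.0]]
K = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
V = [[10.0], [20.0], [30.0]]
print(attention(Q, K, V))
```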

1.4. Why Efficient Hardware Implementation Is Critical

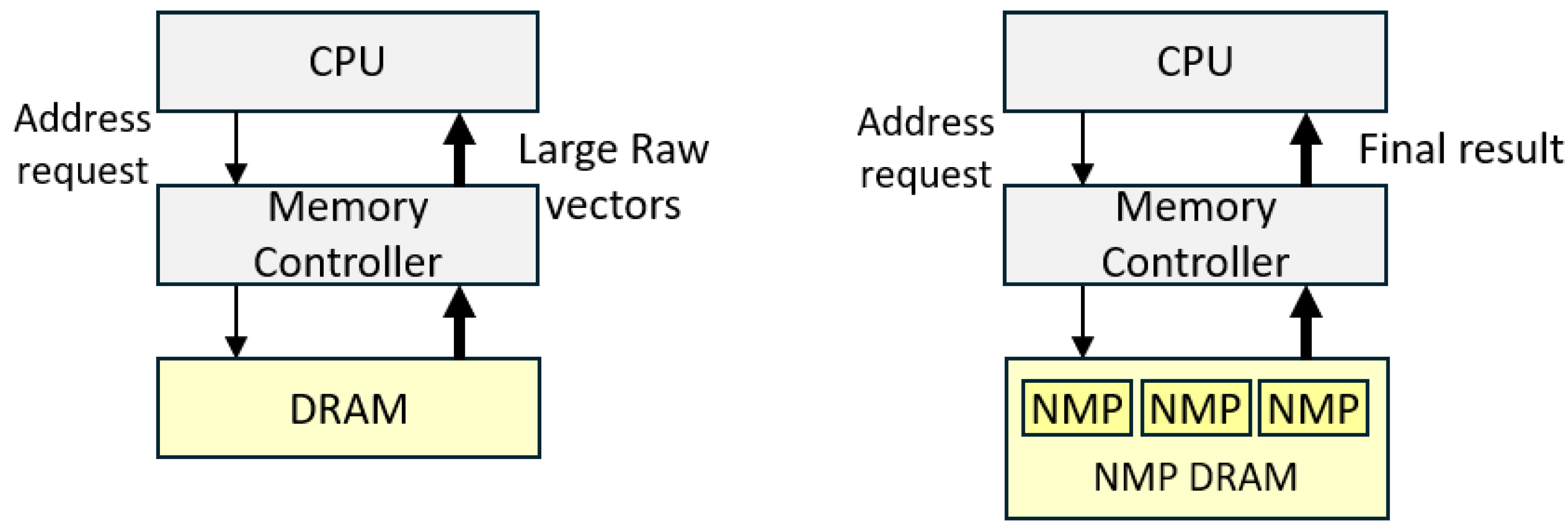

- The Memory Wall: LLM inference is often memory-bandwidth bound rather than compute-bound. Moving weights from external DRAM to the processing elements (PEs) consumes significantly more energy than the computation itself. Custom hardware enables **Near-Memory Computing** and advanced **Weight Compression (INT4/FP8)**.

- Latency for Real-time Interaction: Applications such as real-time translation and autonomous agents require "sub-perceptual" latency (often <10 ms per token). Standard CPU/GPU pipelines introduce overhead that custom RTL pipelines can reduce through asynchronous data transfer and fused operations.

- Energy Efficiency: Training a single state-of-the-art LLM can consume over . By implementing components such as the Softmax or FFN in dedicated silicon, we can achieve up to a 50× improvement in energy efficiency (TOPS/W) compared to general-purpose processors.

The contributions of this work are:

- A description of the main components and the hierarchy of Transformer networks.

- A tutorial on how the main components of Transformer networks are implemented in real hardware.

- A short survey of the most recent and most efficient hardware implementations of each component.
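To make the Memory Wall argument concrete, the sketch below estimates the arithmetic intensity (FLOPs per byte of DRAM traffic) of one linear layer. It assumes ideal on-chip reuse, so every weight and activation crosses the DRAM interface exactly once, and it compares against a hypothetical accelerator with 1000 TFLOP/s of peak compute and 8 TB/s of memory bandwidth; these machine numbers are round illustrative values, not figures for any specific product.

```python
def linear_layer_intensity(tokens, d_in, d_out, weight_bytes=2.0, act_bytes=2.0):
    """FLOPs per byte of off-chip traffic for Y = X W, X: tokens x d_in, W: d_in x d_out.

    Assumes each weight and activation crosses the DRAM interface exactly once
    (ideal reuse); real kernels move at least this much data.
    """
    flops = 2 * tokens * d_in * d_out                  # one multiply + one add per MAC
    weight_traffic = d_in * d_out * weight_bytes
    act_traffic = tokens * (d_in + d_out) * act_bytes  # read X, write Y
    return flops / (weight_traffic + act_traffic)

# Hypothetical accelerator: 1000 TFLOP/s peak, 8 TB/s DRAM bandwidth.
RIDGE = 1000e12 / 8e12   # FLOP/byte needed to stay compute-bound (125)

for label, tokens, wbytes in [("decode, FP16", 1, 2.0),
                              ("decode, INT4", 1, 0.5),
                              ("prefill, FP16", 4096, 2.0)]:
    ai = linear_layer_intensity(tokens, 8192, 8192, weight_bytes=wbytes)
    bound = "memory-bound" if ai < RIDGE else "compute-bound"
    print(f"{label:14s} {ai:8.1f} FLOP/B -> {bound}")
```

For an 8192 × 8192 layer, single-token decoding reaches only about 1 FLOP/byte (about 4 with INT4 weights), far below the assumed ridge point of 125, while a 4096-token prefill reaches 2048 FLOP/byte. This is why weight compression and near-memory computing matter most in the decode phase.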

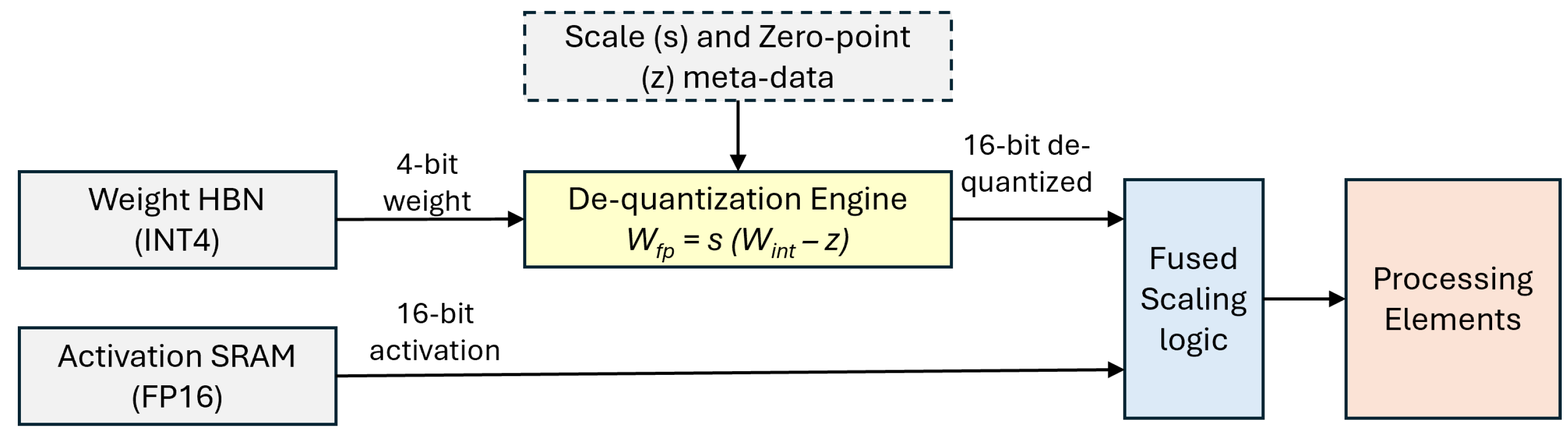

2. Hardware De-Quantization and Numeric Formats

2.1. The De-quantization Engine

2.2. Comparison of Modern Numeric Formats

| Metric | INT4 | FP8 | MXFP8 |
|---|---|---|---|
| Precision Bits | 4 | 8 | 8 (shared exp) |
| Compression Ratio | | | |
| Hardware Logic | Simple LUT/Shift | Standard FMA | Specialized MX-Unit |
| Dynamic Range | Low | Medium | High |
| Scaling Overhead | Per-tensor/block | Per-tensor | Per-vector (Block) |
| Relative Efficiency | | | |
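The sketch below illustrates what a de-quantization engine computes for block-scaled formats. It assumes symmetric group-wise INT4 with one full-precision scale per group of 32 weights (the group size and scale width are assumptions, and FP8 element encoding is not modeled). It also computes the storage cost of a shared scale: the OCP MX specification [4] uses blocks of 32 elements that share one 8-bit power-of-two scale, so MXFP8 costs 8 + 8/32 = 8.25 bits per element.

```python
def quantize_int4_groups(w, group_size=32):
    """Symmetric group-wise INT4: 4-bit codes in [-8, 7] plus one scale per group."""
    codes, scales = [], []
    for start in range(0, len(w), group_size):
        group = w[start:start + group_size]
        amax = max(abs(v) for v in group)
        scale = amax / 7 if amax > 0 else 1.0   # map the largest magnitude to code +/-7
        scales.append(scale)
        codes.extend(max(-8, min(7, round(v / scale))) for v in group)
    return codes, scales

def dequantize_int4_groups(codes, scales, group_size=32):
    """The de-quantization step: one multiply per element by its group's scale."""
    return [c * scales[i // group_size] for i, c in enumerate(codes)]

def storage_bits_per_element(elem_bits, scale_bits, block_size):
    """Element width plus the shared scale amortized over its block."""
    return elem_bits + scale_bits / block_size

w = [0.01 * ((i * 37) % 23 - 11) for i in range(64)]
codes, scales = quantize_int4_groups(w)
w_hat = dequantize_int4_groups(codes, scales)
print(max(abs(a - b) for a, b in zip(w, w_hat)))
print(storage_bits_per_element(8, 8, 32))    # 8.25: MX-style 8-bit elements, 8-bit scale per 32
print(storage_bits_per_element(4, 16, 32))   # 4.5: hypothetical INT4 with an FP16 scale per 32
```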

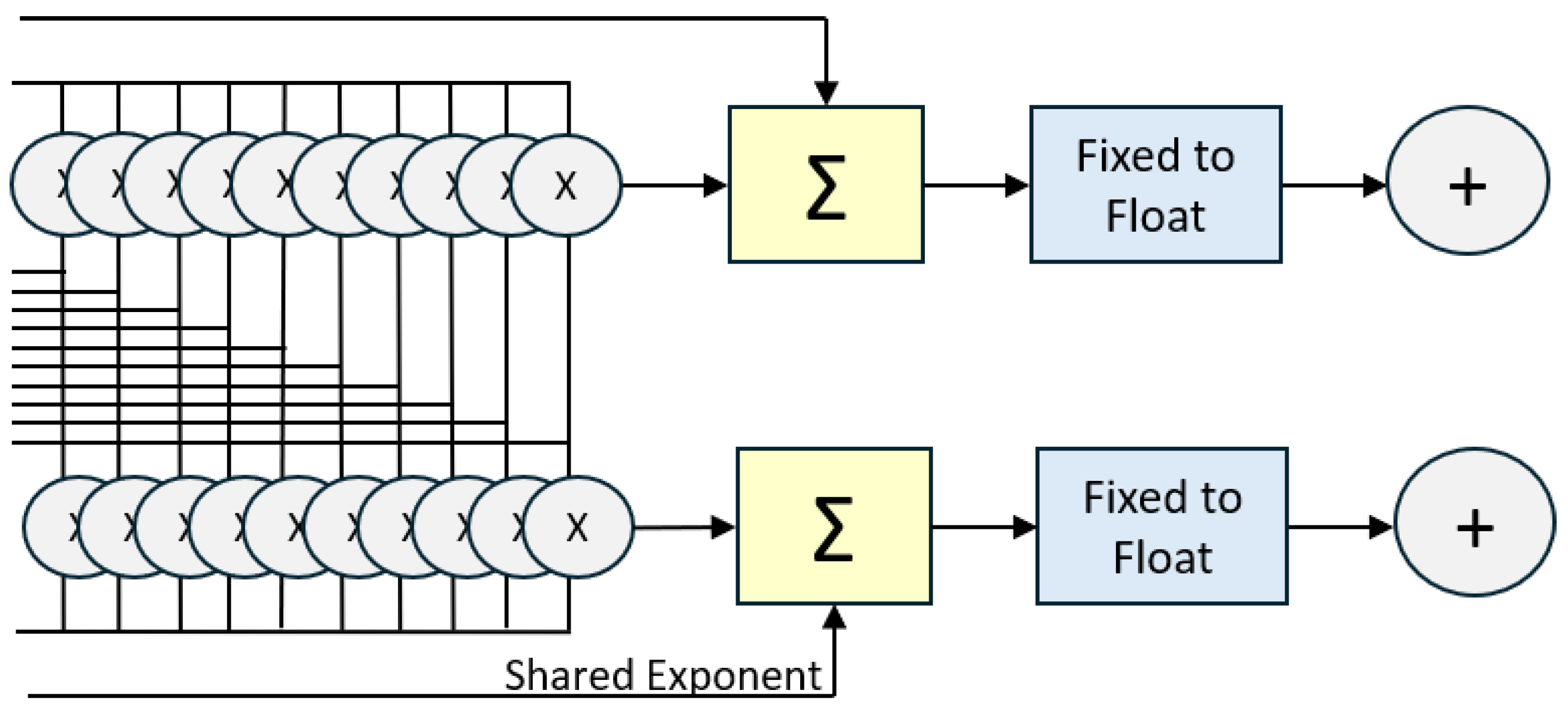

2.3. The Rise of Microscaling (MX) Formats

3. Input Encoder and Embedding Layer Hardware

3.1. Token Embedding: The Memory Bandwidth Challenge

3.1.1. Near-Memory Processing (NMP) and AxDIMM

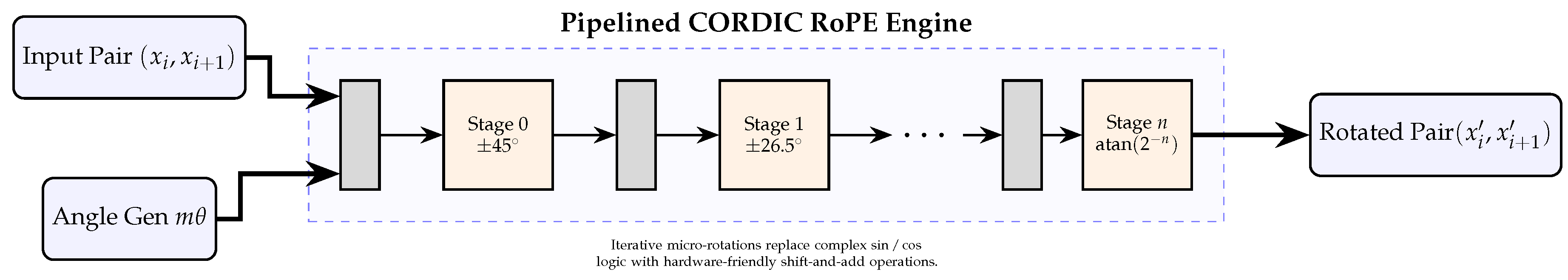

3.2. Positional Encoding: Implementing RoPE in Silicon

3.3. Output Decoders and De-Quantization

3.3.1. Hardware-Aware De-Quantization

4. Multi-Head Attention (MHA) Architecture

4.1. Mathematical Formulation

4.2. Hardware Operational Flow

- 1.

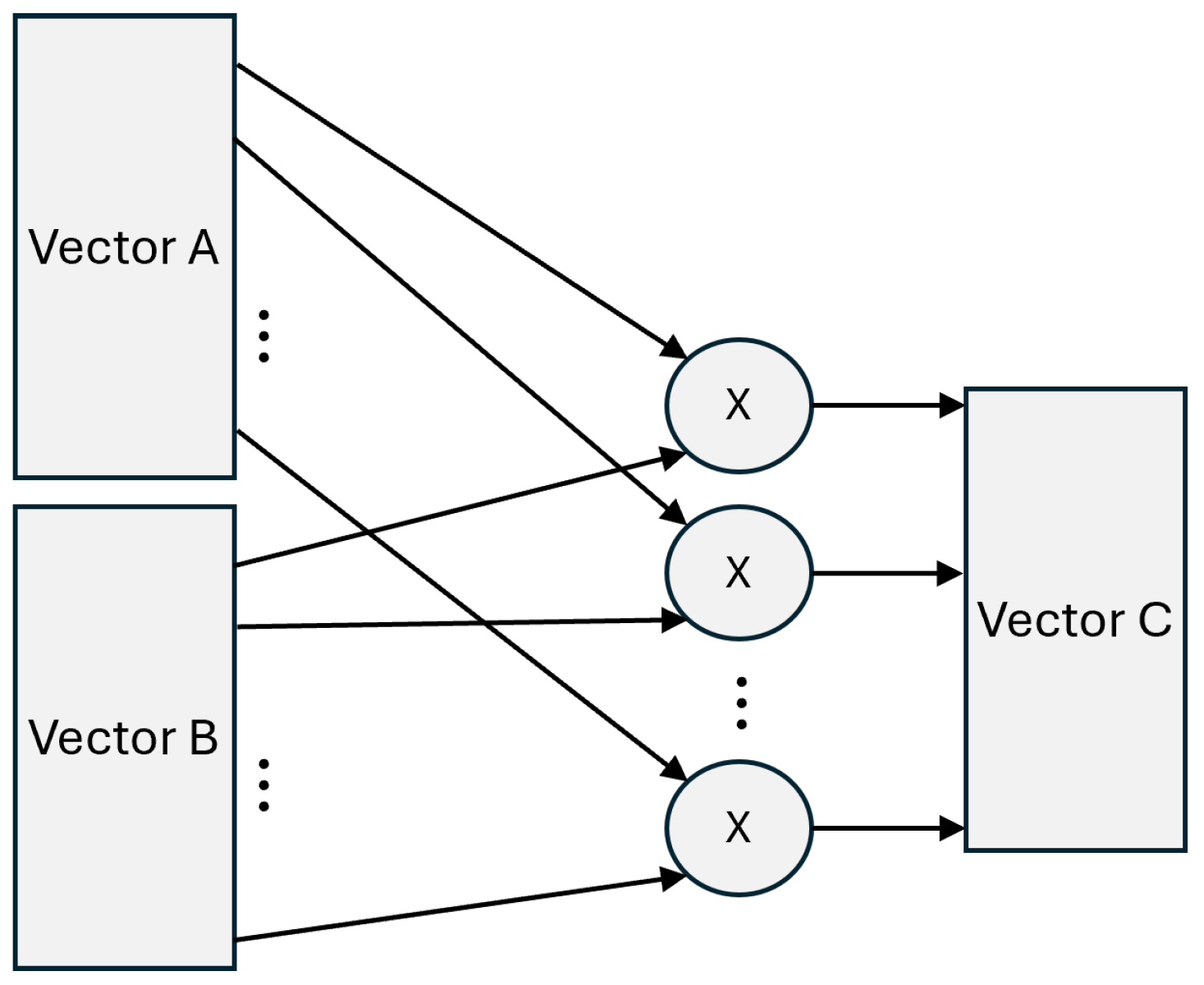

- Linear Projection: Input vectors are multiplied by weight matrices (). In hardware, this is implemented using Systolic Arrays or General Matrix Multiply (GEMM) engines optimized for the target precision (e.g., FP16, INT8, or Microscaling formats like MXFP8 [4]).

- 2.

- MatMul Score Calculation (): This stage computes the similarity scores. For long sequences, this creates a massive intermediate "Score Matrix." Hardware accelerators often use tiling strategies to keep these scores in on-chip SRAM to avoid costly DRAM access.

- 3.

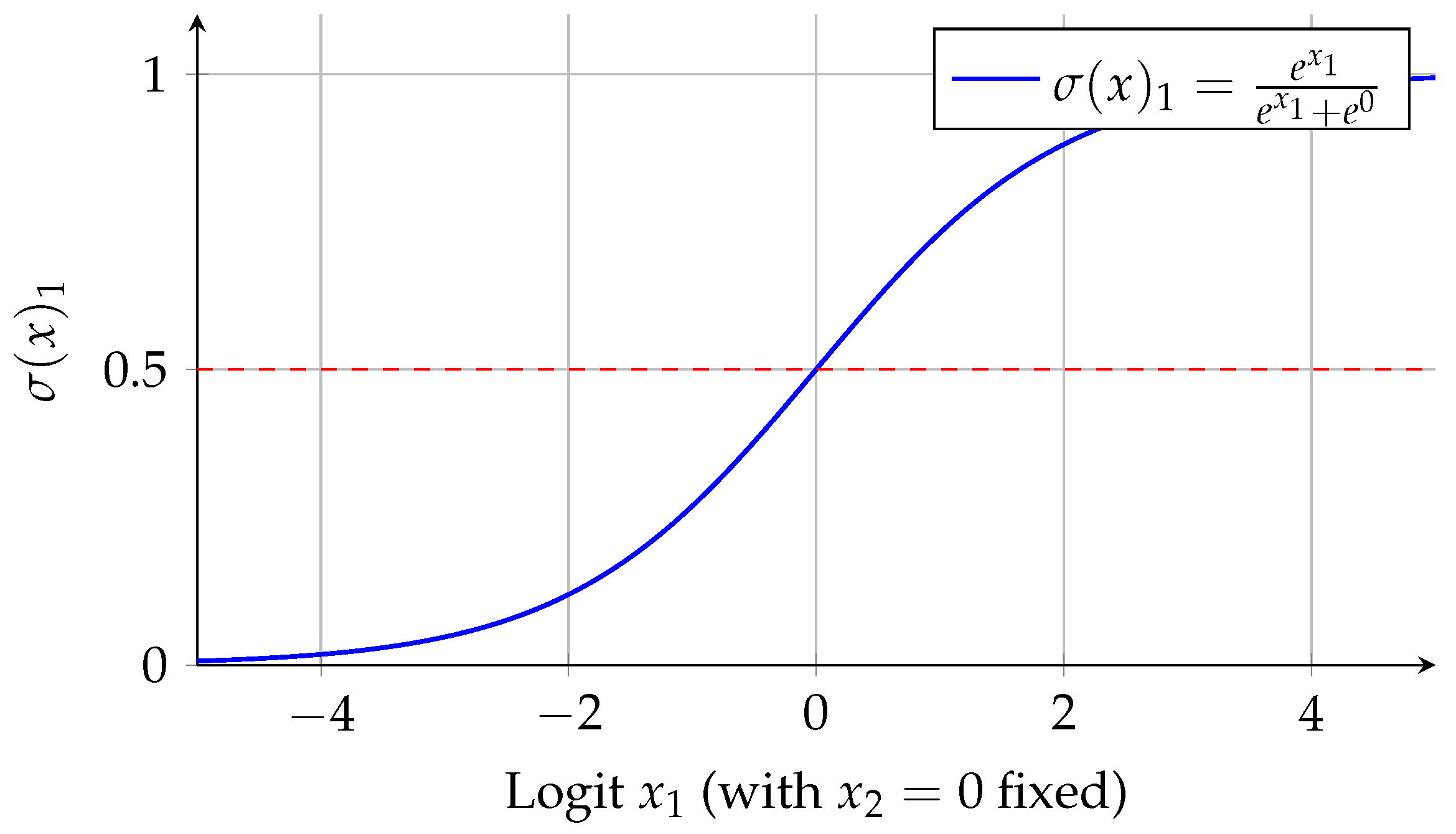

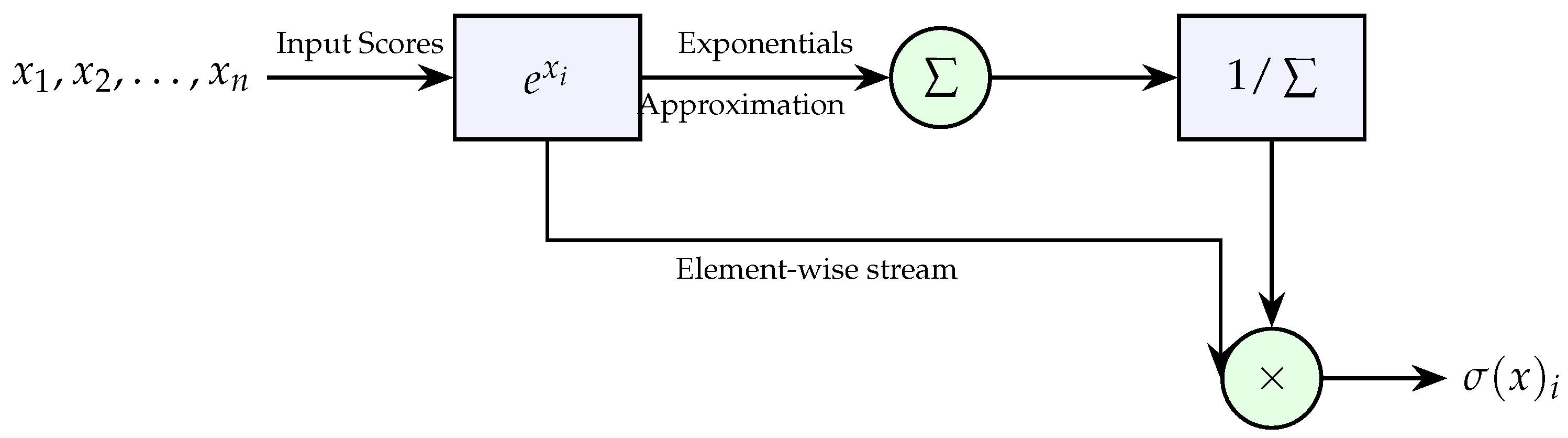

- Softmax Normalization: This is a non-linear operation involving exponentiation and division. Digital implementation typically utilizes CORDIC algorithms or Taylor series expansions, often combined with a "Streaming Softmax" approach to reduce latency [8].

- 4.

- Weighted Sum (): The normalized scores are used to weight the Value vectors. This requires another GEMM operation, often pipelined directly after the Softmax unit.
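The size of the intermediate score matrix in step 2 is easy to estimate, and the tiling idea fits in a few lines. The sketch below assumes FP16 scores (2 bytes each); `score_tiles` is a hypothetical helper that yields one block of $QK^\top/\sqrt{d_k}$ at a time, which is the property that lets an accelerator hold the working set in SRAM.

```python
import math

def score_matrix_bytes(seq_len, n_heads=1, bytes_per_score=2):
    """Size of the full n x n score matrix S = Q K^T if it were materialized."""
    return seq_len * seq_len * n_heads * bytes_per_score

def score_tiles(Q, K, tile_q, tile_k):
    """Yield (row0, col0, tile) blocks of S = Q K^T / sqrt(d_k), one tile at a time.

    Only a tile_q x tile_k block exists at any moment, so it can live in
    on-chip SRAM instead of spilling the whole of S to DRAM.
    """
    scale = 1.0 / math.sqrt(len(Q[0]))
    for r0 in range(0, len(Q), tile_q):
        for c0 in range(0, len(K), tile_k):
            tile = [[scale * sum(q * k for q, k in zip(Q[r], K[c]))
                     for c in range(c0, min(c0 + tile_k, len(K)))]
                    for r in range(r0, min(r0 + tile_q, len(Q)))]
            yield r0, c0, tile

print(score_matrix_bytes(32768) / 2**30)   # 2.0 GiB for one FP16 head at 32K tokens
print(score_matrix_bytes(64) // 1024)      # 8 KiB for a 64 x 64 FP16 tile
```

Tiling alone does not remove the dependency of the softmax on the whole score row, which is the problem the streaming softmax of step 3 addresses.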

4.3. Hardware Design of Linear Projections

4.3.1. Architectural Implementation: Systolic Arrays

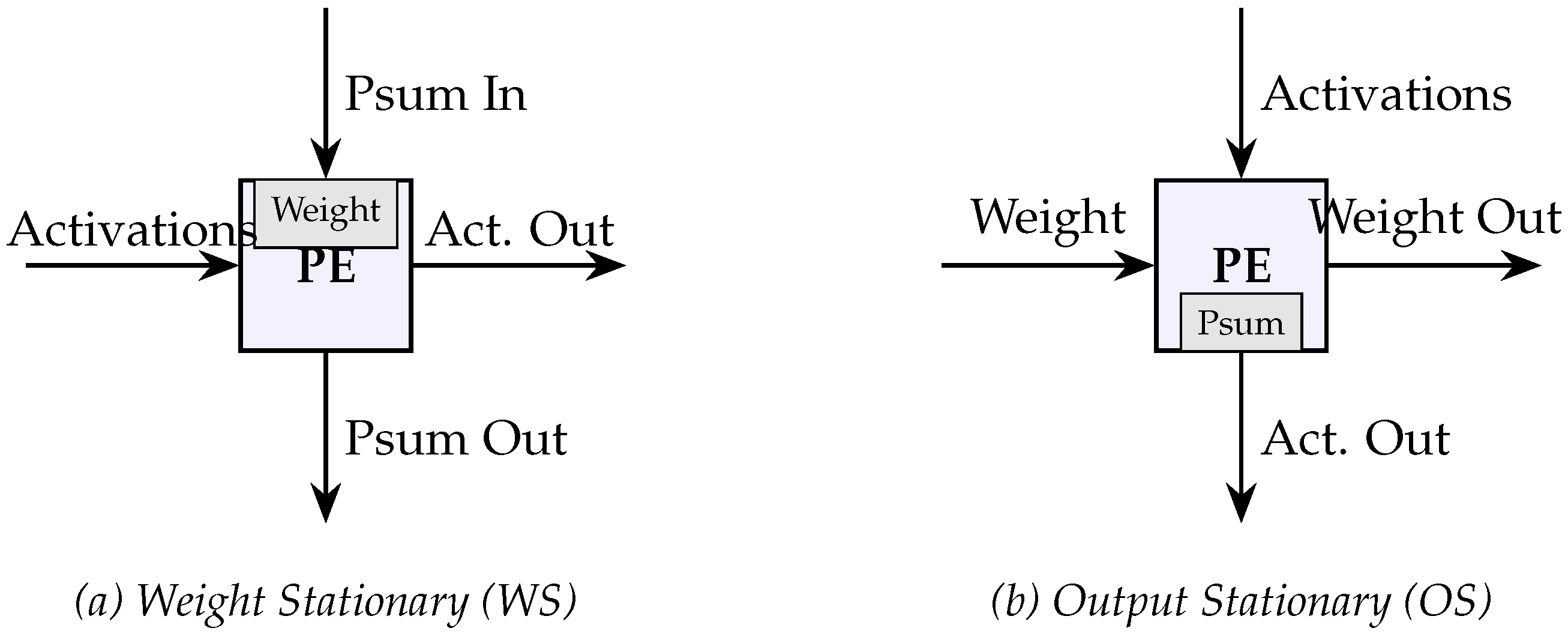

- Weight Stationary (WS): In a weight-stationary architecture, each processing element (PE) pre-loads a weight value and holds it in an internal register for the duration of a tile computation. Input activations are streamed horizontally through the array, while partial sums (psums) are accumulated vertically. This dataflow pays off when each loaded weight is reused across many input activations, as in the prompt-processing (prefill) stage, where the same weight matrix is applied to every token. Its main advantage is that it minimizes the energy-intensive fetching of weights from the Global Buffer (GB) or DRAM.

- Output Stationary (OS): In an output-stationary architecture, weights and activations move through the array, while each partial sum stays in its PE's accumulator until the final output value is complete. Because intermediate results are never written back to the scratchpad memory, this reduces traffic on the accumulation bus and the number of scratchpad writes. It suits Transformer decoding (token generation) and other small-batch scenarios, where minimizing the write-back of intermediate partial sums is critical.
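The output-stationary dataflow can be checked with a small cycle-level simulation. The sketch below assumes an idealized array in which every PE performs one multiply-accumulate per cycle and the input streams are skewed by one cycle per row and per column; it does not model any specific accelerator.

```python
def systolic_os_matmul(A, B):
    """Cycle-level sketch of an output-stationary M x N systolic array computing C = A B.

    A is M x K (activations, streamed left to right); B is K x N (weights, streamed
    top to bottom). Row i of A and column j of B enter with an i- and j-cycle skew,
    so PE(i, j) sees the matching pair A[i][k], B[k][j] at cycle t = i + j + k.
    """
    M, K, N = len(A), len(B), len(B[0])
    acc = [[0] * N for _ in range(M)]     # stationary partial sums, one per PE
    a_reg = [[0] * N for _ in range(M)]   # activation register in each PE
    b_reg = [[0] * N for _ in range(M)]   # weight register in each PE
    cycles = M + N + K - 2                # fill + compute + drain
    for t in range(cycles):
        # Each PE latches its left/upper neighbour's register from the previous cycle;
        # edge PEs latch the next skewed input (0 when the stream is idle).
        new_a = [[0] * N for _ in range(M)]
        new_b = [[0] * N for _ in range(M)]
        for i in range(M):
            for j in range(N):
                if j == 0:
                    k = t - i
                    new_a[i][j] = A[i][k] if 0 <= k < K else 0
                else:
                    new_a[i][j] = a_reg[i][j - 1]
                if i == 0:
                    k = t - j
                    new_b[i][j] = B[k][j] if 0 <= k < K else 0
                else:
                    new_b[i][j] = b_reg[i - 1][j]
        a_reg, b_reg = new_a, new_b
        # MAC step: partial sums never leave their PE.
        for i in range(M):
            for j in range(N):
                acc[i][j] += a_reg[i][j] * b_reg[i][j]
    return acc, cycles

A = [[1, 2, 3], [4, 5, 6]]          # 2 x 3 activations
B = [[7, 8], [9, 10], [11, 12]]     # 3 x 2 weights
C, cycles = systolic_os_matmul(A, B)
print(C, cycles)   # [[58, 64], [139, 154]] 5
```

A weight-stationary variant would instead preload B into the PEs and pass partial sums downward; the arithmetic is identical, and only the operand that stays in place changes. The M + N + K - 2 cycle count includes the pipeline fill and drain, which is what makes small matrices inefficient on large arrays.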

4.3.2. Low-Precision and Microscaling Formats

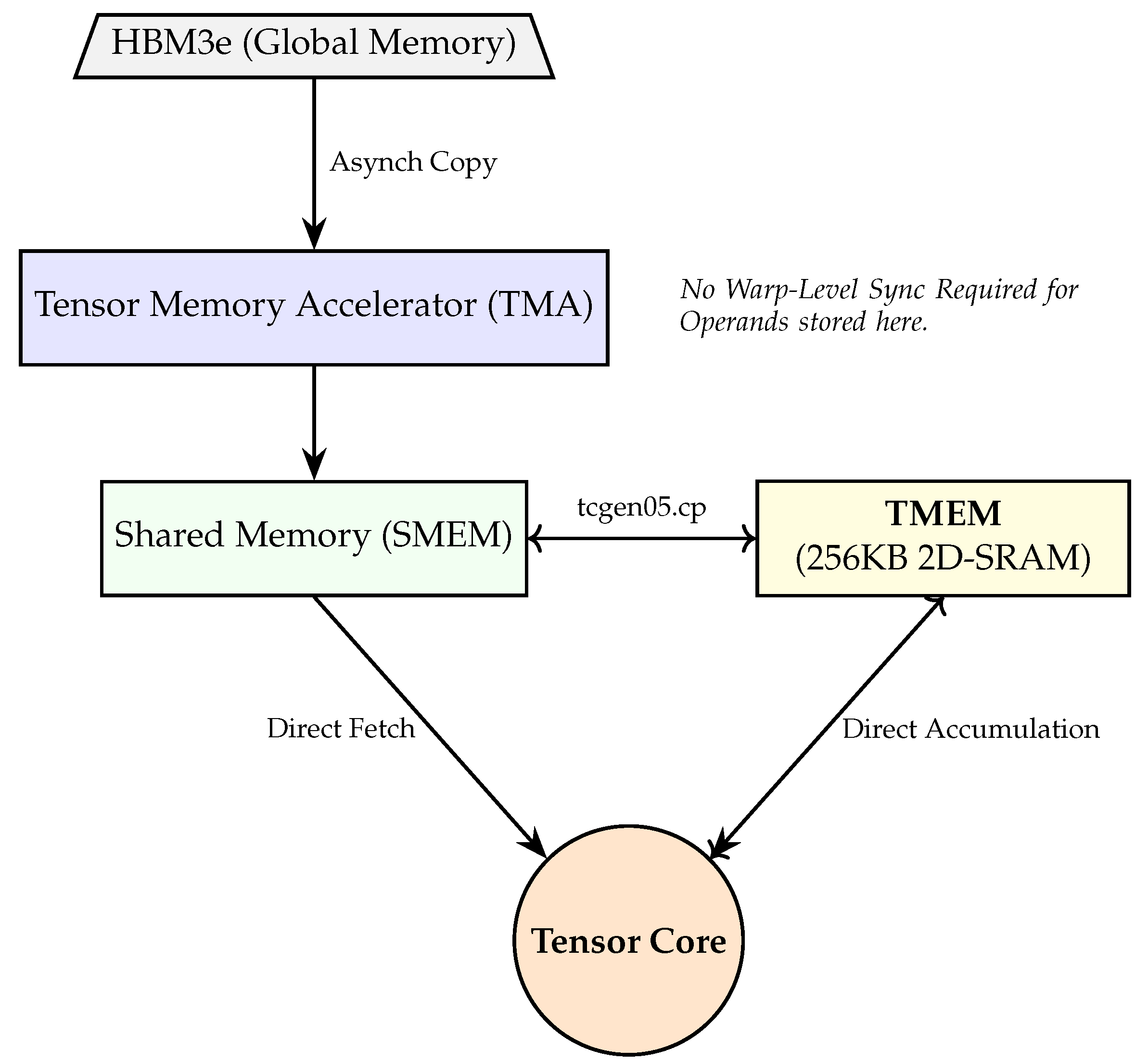

4.3.3. NVIDIA Blackwell (B200) Architecture

- Native FP4 Support: The introduction of 4-bit floating point (FP4) doubles the throughput of linear projections compared to FP8, reaching up to 20 PetaFLOPS of AI performance per GPU [9].

- Second-Generation Transformer Engine: This logic dynamically manages precision at a per-layer or per-tensor level, so that the linear projection units run at the lowest precision that does not cause numerical divergence.

- Tensor Memory (TMEM): A dedicated memory pathway that allows Tensor Cores to fetch operands directly from shared memory without warp-level synchronization, reducing idle cycles in the pipeline [10].

- Reduced Register Pressure: Large accumulators reside in TMEM rather than the Register File (RF), freeing up RF space for other complex operations like LayerNorm or RoPE [13].

- Higher Bandwidth: TMEM provides an effective bandwidth of ∼100 TB/s within the SM, which is 2.5× the speed of the aggregate L1/Shared Memory pathway.

- Synchronization Latency Hiding: Because operands are fetched directly from TMEM, the pipeline does not stall for warp-level coordination, reducing the time-to-first-token (TTFT) by up to for long-context sequences [12].

Diagram: TMEM (256 KB 2D-SRAM)

4.3.4. Processing-in-Memory (PIM)

4.4. Attention Score Calculation MatMul:

- Single-Pass Pipelining: For decoding stages where the input is a single query vector, algorithms like SwiftKV Attention enable a per-token pipelined architecture. This allows the hardware to process KV cache entries exactly once without storing intermediate scores, significantly reducing latency on edge accelerators [16].

- Block-Sparse Approximations: Recent implementations such as SALE utilize low-bit quantized query-key products to estimate the importance of token pairs. Only the "sink" and "local" regions of the attention map—which contain the highest scores—are computed at high precision, enabling a speedup for 64K context windows [17].
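The single-pass idea rests on the online-softmax recurrence, which keeps a running maximum, a running denominator, and a running unnormalized output, so each KV-cache entry is read once and no score vector is stored. The sketch below is a generic illustration of that recurrence for one query (the same rescaling trick underlies FlashAttention [3]); it is not a reproduction of the SwiftKV datapath in [16].

```python
import math

def single_pass_attention(q, keys, values):
    """Attention output for one query vector, visiting each (key, value) pair once.

    State: running max m, running denominator l, running unnormalized output acc.
    """
    scale = 1.0 / math.sqrt(len(q))
    m = -math.inf
    l = 0.0
    acc = [0.0] * len(values[0])
    for k, v in zip(keys, values):
        s = scale * sum(qi * ki for qi, ki in zip(q, k))
        m_new = max(m, s)
        corr = math.exp(m - m_new)   # rescales old state if the max grew (0.0 on the first step)
        p = math.exp(s - m_new)
        l = l * corr + p
        acc = [a * corr + p * vi for a, vi in zip(acc, v)]
        m = m_new
    return [a / l for a in acc]      # a single normalization at the very end

q = [0.5, -1.0]
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [-1.0, 0.5]]
values = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [2.0, -1.0]]
print(single_pass_attention(q, keys, values))
```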

4.4.1. NVIDIA Blackwell (B200) Tensor Memory

4.4.2. SwiftKV-MHA Accelerator

4.4.3. WGMMA Instructions

4.5. FPGAs

4.6. Softmax Normalization in Hardware

| Implementation | Complexity | Latency | Efficiency |
|---|---|---|---|
| Exact | High | High | Low |
| CORDIC | Low | High | Medium |
| LUT | Medium | Low | High |

- Pass 1: Exponential Calculation: Input logits $x_i$ are processed through Non-Linear Units (NLUs), typically built from look-up tables (LUTs), CORDIC, or piecewise linear approximations, to compute $e^{x_i}$. To prevent numerical overflow, hardware designs often subtract the maximum logit ($x_{\max}$) before exponentiation, computing $e^{x_i - x_{\max}}$ instead.

- Pass 2: Summation: The exponential results are streamed into a high-precision accumulator to calculate the global denominator, $\sum_j e^{x_j - x_{\max}}$. This pass acts as a synchronization barrier, because no final probability can be determined until the sum over the entire vector is complete.

- Pass 3: Normalization/Division: Each stored or recomputed exponential value is normalized by the global sum. In digital logic, this is often implemented as a multiplication by the reciprocal ($1/\sum_j e^{x_j - x_{\max}}$) to improve throughput, since hardware dividers are significantly more area-intensive than multipliers.
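The three passes map onto the minimal sketch below. Note that the maximum logit $x_{\max}$ itself requires a sweep over the logits before Pass 1, which is why hardware either fuses that sweep with an earlier stage or replaces it with a running maximum as in streaming softmax. Exact `math.exp` stands in for the NLU here.

```python
import math

def softmax_three_pass(logits):
    """Numerically safe softmax organized as the three hardware passes."""
    m = max(logits)                             # max sweep (needed before Pass 1)
    exps = [math.exp(v - m) for v in logits]    # Pass 1: exponents <= 0, so results <= 1
    total = sum(exps)                           # Pass 2: global denominator (barrier)
    inv = 1.0 / total                           # one reciprocal per row ...
    return [e * inv for e in exps]              # Pass 3: ... then only multiplies

print(softmax_three_pass([1000.0, 1000.0]))     # [0.5, 0.5], no overflow
print(softmax_three_pass([1.0, 2.0, 3.0]))
```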

4.6.1. Approximation Strategies for the Exponential Function

- CORDIC-based Implementation: The COordinate Rotation Digital Computer (CORDIC) algorithm is a versatile iterative method that computes hyperbolic and other transcendental functions using only shifts and additions. To compute $e^x$, CORDIC operates in the hyperbolic rotation mode, which yields $\cosh x$ and $\sinh x$, whose sum is $e^x$. By decomposing the argument into a series of predefined elementary rotation angles ($\tanh^{-1} 2^{-i}$), the hardware performs a sequence of iterative micro-rotations. CORDIC is highly area-efficient because it eliminates the need for multipliers or large memory blocks (BRAM/SRAM). However, its iterative nature introduces significant latency (typically $n$ cycles for $n$-bit precision) [18].

- LUT-based Exponential Units: As of 2026, Look-Up Table (LUT) approaches are the preferred standard for high-throughput LLM accelerators like the NVIDIA Blackwell (B200) and specialized FPGAs.

- Bipartite LUTs: To further reduce memory footprint, bipartite LUTs use two smaller tables to store the most significant and least significant parts of the approximation, which are then combined via a single addition [19].

- Piecewise Linear Approximation (PLA): The exponential curve is divided into segments, and each segment is approximated by a linear equation $y = a x + b$. The coefficients $a$ and $b$ are stored in a small LUT.
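Hyperbolic-rotation CORDIC can be sketched in fixed point so that its shift-and-add structure is visible. This Python version assumes 28 fractional bits and 28 micro-rotations (both illustrative choices), uses the standard repeated iterations (i = 4, 13, 40, ...) that hyperbolic CORDIC needs to converge, and applies a range reduction $x = k\ln 2 + r$ so that only $e^r$ goes through CORDIC while $2^k$ becomes an exponent adjustment.

```python
import math

FRAC = 28            # assumed fixed-point format: 28 fractional bits
ONE = 1 << FRAC
N_ITER = 28          # number of micro-rotations (roughly one per bit of precision)

def _hyperbolic_indices(n):
    """Shift amounts 1, 2, 3, 4, 4, 5, ..., 13, 13, ...: hyperbolic CORDIC must
    repeat iterations 4, 13, 40, ... (k -> 3k + 1) to converge."""
    idx, i, repeat = [], 1, 4
    while len(idx) < n:
        idx.append(i)
        if i == repeat and len(idx) < n:
            idx.append(i)
            repeat = 3 * repeat + 1
        i += 1
    return idx

IDX = _hyperbolic_indices(N_ITER)
ATANH_ROM = [round(math.atanh(2.0 ** -i) * ONE) for i in IDX]    # small constant table
GAIN = math.prod(math.sqrt(1.0 - 2.0 ** (-2 * i)) for i in IDX)  # hyperbolic gain (~0.828)
X_INIT = round(ONE / GAIN)   # pre-divide by the gain so no final multiply is needed

def cordic_exp(x: float) -> float:
    # Range reduction: x = k*ln2 + r with |r| <= ln2/2, well inside the
    # convergence range of hyperbolic CORDIC (|r| < ~1.118).
    k = round(x / math.log(2))
    r = x - k * math.log(2)
    cx, cy, z = X_INIT, 0, round(r * ONE)
    for i, angle in zip(IDX, ATANH_ROM):
        # Rotation mode: drive z toward 0 using only shifts, adds and a sign test.
        if z >= 0:
            cx, cy, z = cx + (cy >> i), cy + (cx >> i), z - angle
        else:
            cx, cy, z = cx - (cy >> i), cy - (cx >> i), z + angle
    # Now cx ~ cosh(r) and cy ~ sinh(r), so e^r = cx + cy; 2^k is an exponent shift.
    return math.ldexp((cx + cy) / ONE, k)

for x in (-3.0, -0.5, 0.0, 1.0, 4.2):
    print(x, cordic_exp(x), math.exp(x))
```

In hardware, `ATANH_ROM` is a small constant table, and each loop iteration is one cycle of two shifters, three adders, and a sign test, which is where the n-cycles-for-n-bits latency comes from.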

4.6.2. Log-Domain Division and Bipartite LUTs

4.6.3. Streaming (Online) Softmax

4.6.4. Skip Softmax and Sparsity

4.6.5. Base-2 Transformation:

4.6.6. Fusion with Attention:

4.6.7. The E2Softmax Architecture

5. Feed-Forward Network (FFN) Hardware Design

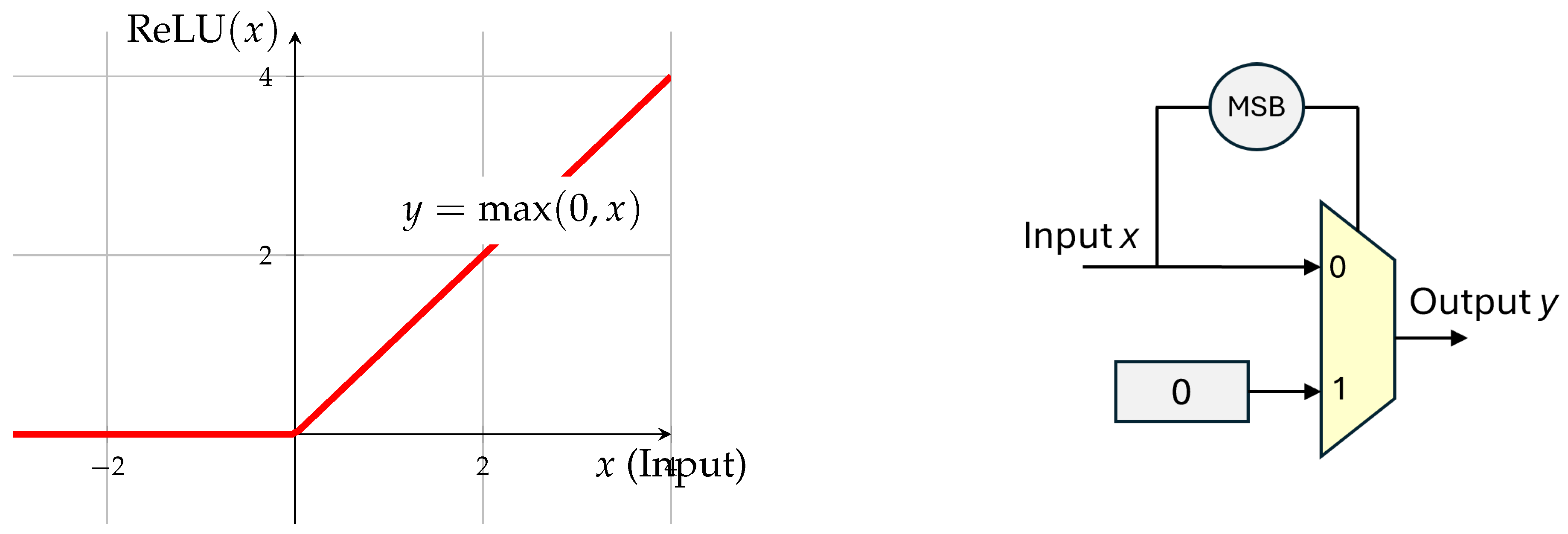

5.1. Architectural Shift: From ReLU to SwiGLU

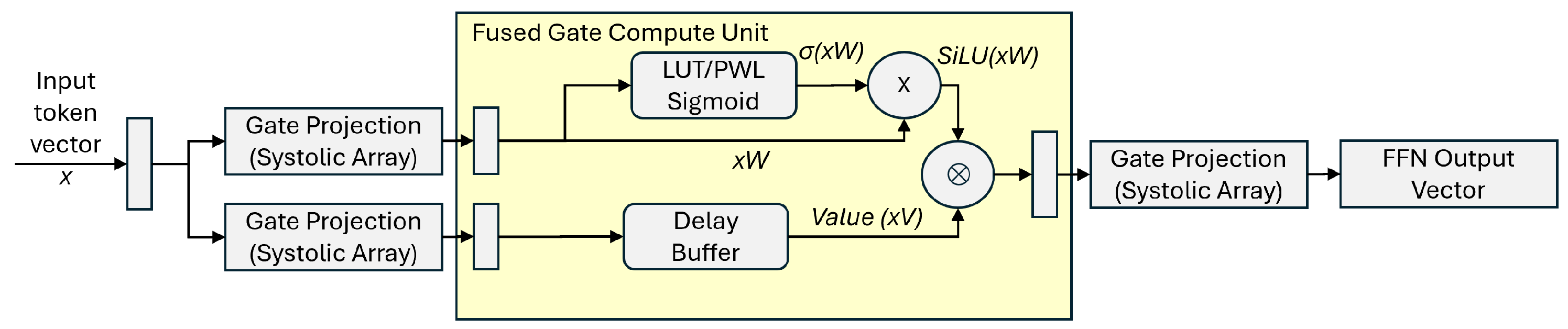

5.1.1. Gated Pipeline Architecture

- Parallel Projection: A bifurcated systolic array or a "Wide-MAC" unit processes W and V concurrently.

- SiLU Activation: The SiLU (Sigmoid Linear Unit) function, $\mathrm{SiLU}(x) = x \cdot \sigma(x)$, is implemented using a high-speed piecewise linear (PWL) approximation or a small LUT-based sigmoid generator.

- On-the-fly Hadamard: The element-wise multiplication of $\mathrm{SiLU}(xW)$ and $xV$ is performed in the local register file to minimize toggle activity and power consumption.

- Parallel Datapaths: $A_i$ and $B_i$ are fed into the $i$-th multiplier simultaneously.

- High Throughput: Because there are no partial sum reductions, the operation is perfectly parallelizable and can be fully pipelined with a latency of only a few clock cycles (depending on the precision, e.g., FP16 or INT8).

- Memory Alignment: The primary bottleneck is ensuring that both operands A and B arrive at the execution units at the same time. This often requires synchronized FIFO buffers or dual-port SRAM blocks to handle the dual-stream data fetch.

5.2. State-of-the-Art: LUT-LLM and Memory-Based Computation

6. Conclusion and Future Outlook

6.1. The Necessity of Hardware-Software Co-Design

6.2. Emerging Technologies and Radical Architectures

- Neuromorphic and Event-Based Computing: By leveraging spiking neural networks (SNNs), future accelerators may achieve extreme sparsity. Neuromorphic hardware only "fires" when significant information is detected, potentially reducing the energy consumption of the FFN layers by orders of magnitude.

- Optical and Photonic Interconnects: To solve the "Memory Wall," research into silicon photonics aims to replace electrical copper traces with light-based data movement, enabling Terabit-per-second bandwidth between HBM and the compute core with near-zero heat dissipation.

- Analog In-Memory Computing (AiMC): While this tutorial focused on digital logic, analog crossbar arrays (using ReRAM or Phase-Change Memory) are emerging as a way to perform matrix multiplications at the location of the data itself, bypassing the Von Neumann bottleneck entirely.

- Sub-2-bit and 1-bit Quantization: As quantization theory reaches the "Binary/Ternary" limit, hardware will transition from complex floating-point units to simple bit-manipulation logic, allowing for massive parallelization on a single die.
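The shift toward bit-manipulation logic can be illustrated with the standard XNOR-popcount trick for binary (±1) vectors: matching signs contribute +1 and mismatches -1, so the dot product equals 2 × popcount(XNOR(a, b)) - n. The sketch packs signs into Python integers; the bit encoding (1 for +1, 0 for -1) is an assumption, and ternary weights would need an additional zero mask.

```python
def pack_signs(v):
    """Pack a +/-1 vector into an integer: bit i = 1 encodes +1, bit i = 0 encodes -1."""
    bits = 0
    for i, x in enumerate(v):
        if x > 0:
            bits |= 1 << i
    return bits

def binary_dot(a_bits, b_bits, n):
    """Dot product of two packed +/-1 vectors of length n via XNOR and popcount."""
    mask = (1 << n) - 1
    agree = ~(a_bits ^ b_bits) & mask     # XNOR: 1 where the signs match
    matches = bin(agree).count("1")       # popcount
    return 2 * matches - n                # (#matches) - (#mismatches)

a = [1, -1, 1, 1, -1, -1, 1, -1]
b = [1, 1, -1, 1, -1, 1, 1, -1]
print(binary_dot(pack_signs(a), pack_signs(b), len(a)))   # 2
print(sum(x * y for x, y in zip(a, b)))                    # 2
```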

References

- Varghese, G.; Xu, J. Network Algorithmics: An Interdisciplinary Approach to Designing Fast Networked Devices, 2nd ed.; Morgan Kaufmann, 2022.

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, Ł.; Polosukhin, I. Attention Is All You Need. In Advances in Neural Information Processing Systems, 2017; pp. 5998–6008.

- Dao, T.; Fu, D.Y.; Ermon, S.; Rudra, A.; Ré, C. FlashAttention: Fast and Memory-Efficient Exact Attention with IO-Awareness. arXiv 2022, arXiv:2205.14135.

- OCP Microscaling Formats (MX) Specification v1.0. Open Compute Project Technical Report, 2024.

- Lee, J. Near-Memory Processing in Action: Accelerating Personalized Recommendation With AxDIMM. IEEE Micro, 2025.

- Contributors, R. Efficient Hardware Architecture Design for Rotary Position Embedding of Large Language Models. arXiv 2026, arXiv:2601.39036.

- Engineering, E. Ultra-Efficient SLMs: Embedl’s Breakthrough for On-Device AI. Technical Whitepaper, 2025.

- Rabe, M.N.; Staats, C. Self-attention Does Not Need O(n²) Memory. arXiv 2021, arXiv:2112.05682.

- Team, C.E. NVIDIA B200 GPU Guide: Use Cases, Models, and Benchmarks. Clarifai Technical Blog, 2026.

- Jarmusch, A.; Chandrasekaran, S. Microbenchmarking NVIDIA’s Blackwell Architecture: An In-Depth Architectural Analysis. arXiv 2025, arXiv:2512.02189.

- Team, M. Matrix Multiplication on Blackwell: Part 1 - Introduction. Modular Technical Blog, 2025.

- Research, E.M. Blackwell GPU Architecture: Microbenchmarking and Performance Analysis. arXiv 2026, arXiv:2601.10953.

- Vishnumurthy, N. NVIDIA Blackwell Architecture: A Deep Dive into the Next Generation of AI Computing. Medium Technical Publication, 2025.

- Lee, J. Pyramid: Accelerating LLM Inference With Cross-Level Processing-in-Memory. IEEE Computer Architecture Letters, 2025.

- Engineering, N. Overcoming Compute and Memory Bottlenecks with FlashAttention4 on NVIDIA Blackwell. NVIDIA Technical Blog, 2026.

- Zhang, J.; Zhang, Q.; et al. SwiftKV: An Edge-Oriented Attention Algorithm and Multi-Head Accelerator for Fast, Efficient LLM Decoding. arXiv 2026, arXiv:2601.10953.

- Review, U. SALE: Low-Bit Estimation for Efficient Sparse Attention in Long-Context LLM Prefilling. ICLR 2026 Conference Submission, 2026.

- Vatalaro, M. Comparative Study on CORDIC Accelerators for NCO and Nonlinear Applications. Lund University Publications, 2025.

- Wang, W.; Zhou, S. SOLE: Hardware-Software Co-design of Softmax and LayerNorm for Efficient Transformer Inference. In Proceedings of ICCAD 2025, 2025.

- Li, W.J. Hardware-oriented algorithms for softmax and layer normalization of large language models. Science China Information Sciences 2024, 67.

- Wang, W.; Zhou, S.; Sun, W.; Liu, Y. SOLE: Hardware-Software Co-design of Softmax and LayerNorm for Efficient Transformer Inference. arXiv 2025, arXiv:2510.17189.

- Engineering, D. FlashAttention 4: Faster, Memory-Efficient Attention for LLMs. Practitioner Deep Dives, 2026.

- Blog, N.D. Accelerating Long-Context Inference with Skip Softmax in NVIDIA TensorRT-LLM, 2025.

- Stevens, J.R. Softermax: Hardware-Friendly Softmax Approximation for Transformers. arXiv 2024, arXiv:2401.12345.

- Research, E.M. E2Softmax: Efficient Hardware Softmax Approximation via Log-Domain Division. Technical Report, 2026.

- Shojaei, M. SwiGLU: The FFN Upgrade for State of the Art Transformers. DEV Community, 2025.

- Chen, H. Flash-FFN: A Pipelined Accelerator for Gated Linear Units. In Proceedings of the Symposium on High-Performance Computer Architecture (HPCA), 2025.

- Review, U. LUT-LLM: Efficient Large Language Model Inference with Memory-based Computations on FPGAs. arXiv 2025, arXiv:2511.06174.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).