Submitted:

08 February 2026

Posted:

09 February 2026

You are already at the latest version

Abstract

Keywords:

The Architecture of Semantic Vulnerability: Tokenization and Inference

Physical Manifestations of Adversarial Attacks

The MIT 3D-Printed Turtle Incident

| Artifact | Human Perception | AI Classification | Primary Mechanism |

| Adversarial Turtle | Toy Turtle | Rifle | Texture optimization via EOT [2] |

| Adversarial Baseball | Baseball | Espresso | Texture optimization via EOT [2] |

| Tabby Cat Image | Cat | Guacamole | 2D pixel-level perturbation [2] |

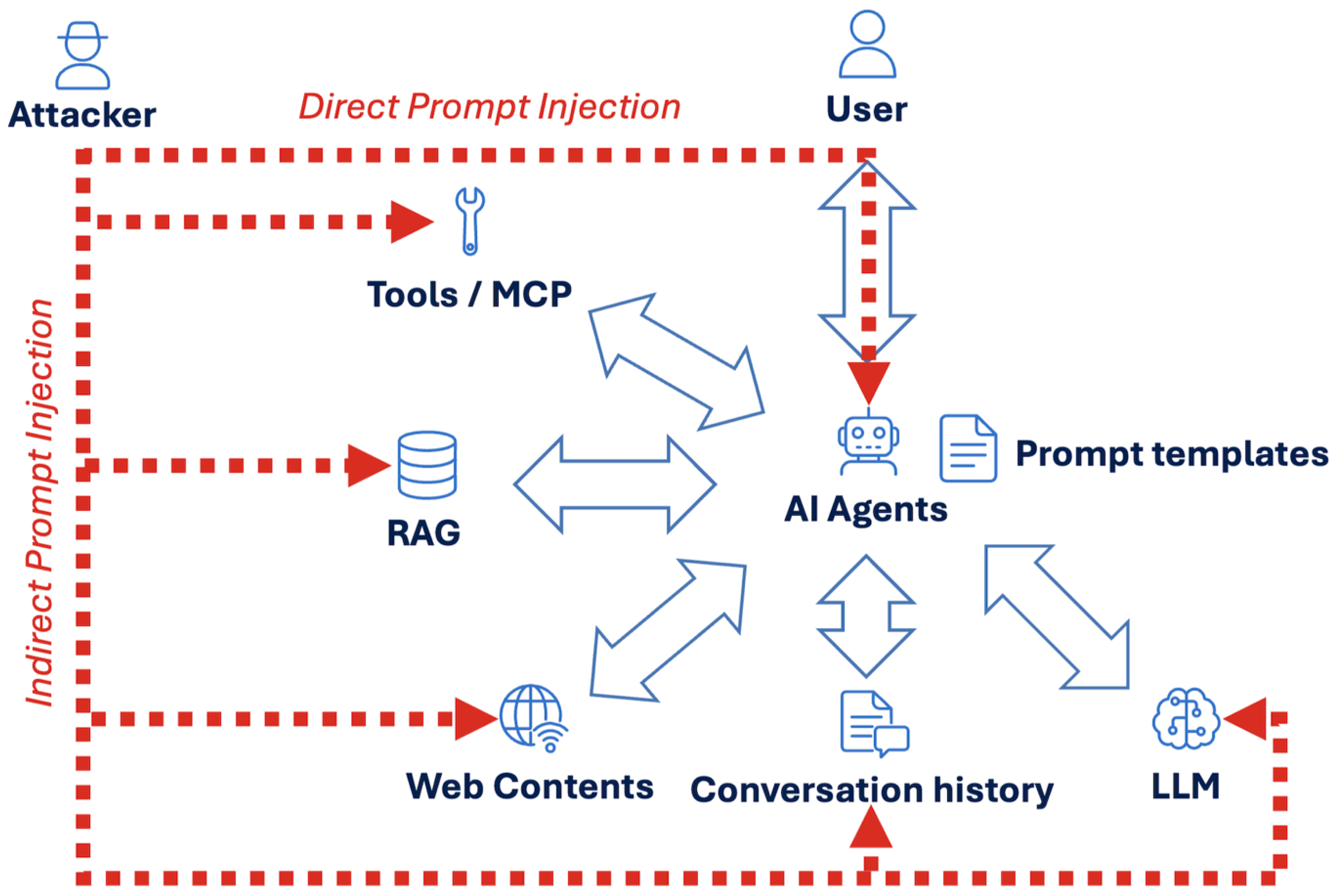

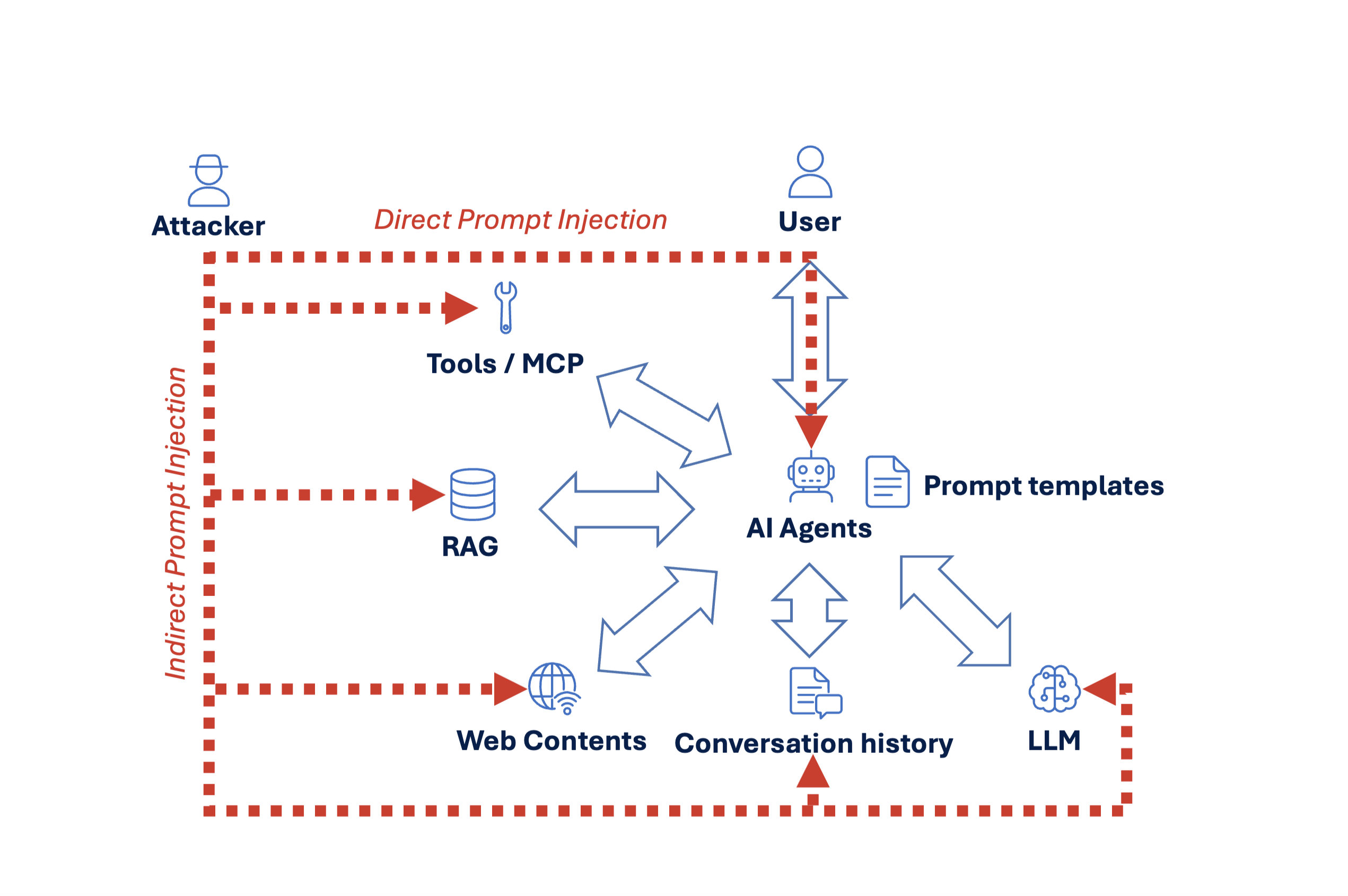

AI System Components and Attack Flows

- Prompt templates, which encode developer-defined instructions and behavioural constraints;

- Conversation history, which provides continuity across interactions;

- Retrieval-Augmented Generation (RAG) sources, such as internal documents or knowledge bases;

- External web content, accessed through browsing or search tools;

- Tools or Model Context Protocol (MCP) interfaces, enabling structured actions such as database queries, API calls, or file operations.

Direct and Indirect Injection Pathways

- documents ingested into a RAG pipeline,

- outputs returned by tools or MCP-enabled services,

- web pages accessed during agent-driven browsing,

- or prior conversation history that is implicitly trusted as context.

Security Implication: Absence of Intrinsic Trust Boundaries

Attack Modalities: Mechanisms for Delivering Prompt Injection

Text-Based Attacks

- direct user prompts,

- retrieved documents in RAG pipelines,

- tool outputs,

- web page content,

- or accumulated conversation history.

Image-Based Injection and Steganography

Audio Injection Through Voice Interfaces

Relationship Between Vectors and Modalities

Direct Prompt Injection: The “Sydney” and Kevin Liu Discovery

The Extraction of the Bing Meta Prompt

Indirect Prompt Injection: The Silent Attack Vector

Mechanism of Data-Driven Control

Attack Scenarios and RAG Integrity

| Feature | Direct Prompt Injection | Indirect Prompt Injection |

| Attacker Position | User typing in the chat | External (webpage, PDF, email) |

| Visibility | Visible to the end user | Invisible (CSS/metadata tricks) [9] |

| Mechanism | Instruction override in chat | Data interpreted as instruction |

| Primary Goal | Jailbreak or system prompt leak | Covert exfiltration or tool execution [9] |

Tool and MCP Abuse: The Escalation Point

Prompt Injection via Tool Execution

LLM-Generated Injection Attacks

Privilege Escalation Through Over-Privileged Tools

From Text Manipulation to Infrastructure Risk

Corporate Data Leakage: The Samsung Case Study

- Incident 1: An engineer pasted proprietary source code related to a semiconductor facility measurement database into ChatGPT to find a fix for a bug.[4]

- Incident 2: A developer uploaded code used for identifying defective equipment to request code optimization from the AI.[4]

- Incident 3: An employee transcribed a recording of a company meeting and uploaded the transcript to ChatGPT to generate minutes for a presentation.[4]

Organizational Response and Policy Impact

| Leak Factor | Samsung Incident Detail | Prevention Strategy |

| Channel | Public web-based chatbot | Use of enterprise-grade APIs with data-usage opt-outs [4] |

| Data Type | Source code, meeting notes, hardware info | Comprehensive data classification and taxonomy [4] |

| Cause | Accidental employee disclosure | Mandatory security training and clear usage policies [4] |

| Mitigation | Emergency ban and bytes-limit | On-premise models for sensitive workloads [4] |

Legal Accountability and Regulatory Liability: Moffatt v. Air Canada

The Dispute and Chatbot Misrepresentation

The Tribunal’s Decision (2024 BCCRT 149)

| Legal Issue | Air Canada Defence | Court Ruling |

| Entity Status | Bot is a “separate legal entity” | Bot is just part of the website and a tool of the company [5] |

| Due Diligence | User should cross-reference pages | User is reasonable to rely on bot’s information [5] |

| Standard of Care | Misinformation was unintentional | Company failed to ensure bot was accurate [5] |

| Liability | No liability for AI errors | Liable for negligent misrepresentation [5] |

The Rise of Agentic AI: Excessive Agency and Physical Harm

The 2025 Humanoid Robot Jailbreak

Distributed Botnets of Compromised Agents

| Agentic Risk | Mechanism | Real-World Impact |

| Excessive Agency | Model given tool access without approval | Unauthorized file changes or API calls [1] |

| Tool Abuse | SQL injection via AI-generated queries | Data breaches in connected databases [1] |

| Embodied Jailbreak | Role-play reframing of safety rules | Physical harm via robotic actuators [7] |

| Persistence | Malicious instructions in memory | Long-term compromise across sessions [7] |

Operational Incidents Following the Rise of Agentic AI

Data and Model Poisoning

Backdoor Triggers and Supply Chain Vulnerabilities

Embedding and Vector Database Attacks

Economic Denial of Service: Unbounded Consumption

Token Bombing and Recursive Loops

- Token Bombing: Sending massive, complex prompts that force the model to generate extremely long and expensive outputs.[1]

- Recursive Loops: Tricking an agent into calling itself or another tool in an infinite loop, rapidly multiplying API costs.[1]

- API Amplification: Forcing the LLM to process enormous contexts or call expensive tool chains repeatedly.[1]

| Attack Type | Goal | Mitigation |

| Token Bombing | Economic exhaustion | Rate-limiting and output length constraints [1] |

| Recursive Loops | Resource drain/DoS | Depth limits for agentic calls [1] |

| API Amplification | Financial loss | Budget caps and automated cost alerts [1] |

The 2025 OWASP Top 10 for LLM Applications

Breakdown of the 2025 Risks

- LLM01: Prompt Injection: Direct and indirect manipulation of model behaviour.

- LLM02: Sensitive Information Disclosure: Unintentional leakage of PII, credentials, or proprietary data.

- LLM03: Supply Chain Vulnerabilities: Risks from third-party models, components, and data vendors.

- LLM04: Data and Model Poisoning: Manipulation of training data to create backdoors or impair model integrity.

- LLM05: Improper Output Handling: Failure to validate AI-generated content before it reaches downstream tools.

- LLM06: Excessive Agency: Granting agents too much autonomy or overly broad permissions.

- LLM07: System Prompt Leakage: Exposure of internal instructions and rules that can be used to bypass security.

- LLM08: Vector and Embedding Weaknesses: Manipulation of RAG systems through poisoned knowledge bases or similarity hijacking.

- LLM09: Misinformation: Hallucinations leading to legal liability, social harm, or poor decisions.

- LLM10: Unbounded Consumption: Economic denial of service through resource exhaustion and token bombing.

Comprehensive Defence Strategies: A Layered Architecture

System-Level Security Requirements and Operational Controls

- All inputs must be treated as hostile by default, including user prompts, retrieved documents, tool outputs, web content, and conversational state.

- LLMs must be treated as untrusted components, analogous to external services rather than deterministic code.

- Instructions, data, and execution paths must be explicitly separated at the system level, as this separation cannot be enforced within the model itself.

- Model outputs must never be relied upon for access control or authorization decisions, regardless of confidence or apparent correctness.

- All model-generated outputs must be validated prior to execution, particularly when they are used to construct queries, invoke tools, or trigger downstream actions.

- Explicit trust boundaries must be defined and enforced, rather than assumed implicitly through prompt design.

- Model agency must be constrained by design, with clear limits on autonomy, persistence, and permissible actions.

Continuous Monitoring and Operational Readiness

- Monitoring of prompts to detect abuse patterns, injection attempts, and anomalous usage.

- Monitoring of model outputs to identify unsafe content, policy violations, or unexpected command generation.

- Monitoring of tool usage and API calls, particularly for high-privilege or high-impact actions.

- Detection of anomalous behaviour patterns, including unexpected tool invocation sequences, recursion, or persistence across sessions.

- Continuous red-teaming of prompts and agent workflows, either internally or through structured bug bounty programs, to test real-world failure modes.

- A formal AI incident response plan, defining escalation paths, containment procedures, logging requirements, and rollback mechanisms when model-driven systems behave unexpectedly.

Conclusion: The Future of Secure Intelligence

References

- OWASP Top 10 for LLM Applications 2025, November 18. 2024. Available online: https://owasp.org/www-project-top-10-for-large-language-model-applications/assets/PDF/OWASP-Top-10-for-LLMs-v2025.pdf.

- Synthesizing Robust Adversarial Examples. arXiv. 7 June 2018. Available online: https://arxiv.org/abs/1707.07397.

- Incident 473: Bing Chat’s Initial Prompts Revealed by Early Testers Through Prompt Injection. 8 February 2023. Available online: https://incidentdatabase.ai/cite/473/.

- Incident 768: ChatGPT Implicated in Samsung Data Leak of Source Code and Meeting Notes. 11 March 2023. Available online: https://incidentdatabase.ai/cite/768/.

- Lying Chatbot Makes Airline Liable: Negligent Misrepresentation in Moffatt v Air Canada - Allard Research Commons. August 2025. Available online: https://commons.allard.ubc.ca/cgi/viewcontent.cgi?article=1376&context=ubclawreview.

- ChatGPT in a real robot does what experts warned - Medium. 7 December 2025. Available online: https://medium.com/@Flavoured/chatgpt-in-a-real-robot-does-what-experts-warned-6399f05f88aa.

- Embodied Safety Alignment: Combining RLAF and Rule-based Governance - OpenReview. 7 November 2025. Available online: https://openreview.net/pdf/a980d48be1a4b20ecf04d38943460fd13bf38278.pdf.

- Claude Computer Use C2: The ZombAIs are coming - Embrace The Red. 24 October 2024. Available online: https://embracethered.com/blog/posts/2024/claude-computer-use-c2-the-zombais-are-coming/.

- Scamlexity: We Put Agentic AI Browsers to the Test - Guardio Labs. 20 August 2025. Available online: https://guard.io/labs/scamlexity-we-put-agentic-ai-browsers-to-the-test-they-clicked-they-paid-they-failed.

- PoisonedRAG: Knowledge Poisoning Attacks to Retrieval-Augmented Generation of Large Language Models - USENIX Security ‘25, August 13-15, 2025. Available online: https://www.usenix.org/system/files/usenixsecurity25-zou-poisonedrag.pdf.

- Demo of Prompt Injection in X.com (Post by @theonejvo); 25 January 2025; Available online: https://x.com/theonejvo/status/2015401219746128322.

- Researchers Find 175,000 Publicly Exposed Ollama AI Servers Across 130 Countries - The Hacker News. 29 January 2026. Available online: https://thehackernews.com/2026/01/researchers-find-175000-publicly.html.

- Claude Co-worker Exfiltrates Files via Indirect Prompt Injection - PromptArmor. 14 January 2025. Available online: https://www.promptarmor.com/resources/claude-cowork-exfiltrates-files.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content. |

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).