Submitted: 03 February 2026

Posted: 05 February 2026


Abstract

Keywords:

1. Introduction

- A collection of the latest works in markerless visual 3D Pose Recovery and comparisons between them

- A technological framework to easily describe the capabilities of 3DPR methodologies

- Identification of remaining problems in the field and recent techniques that may assist in addressing them

2. Background

2.1. Related Works

2.2. Metrics for Pose Estimation in Robotics

2.2.1. Object Pose Estimation Metrics

2.2.2. Actor Pose Estimation Metrics

2.3. Datasets and Benchmarks

2.3.1. YCB-Video (Objects)

2.3.2. YCB-M (Objects)

2.3.3. LineMOD (Objects)

2.3.4. LineMOD-OCCLUDED (Objects)

2.3.5. PhoCal (Objects)

2.3.6. SURREAL (Actors)

2.3.7. Human3.6M (Actors)

2.3.8. T-LESS (Objects)

2.3.9. CAMERA (Objects)

2.3.10. REAL275 (Objects)

3. Deep Learning for 3DPR

3.1. Object Pose Estimation

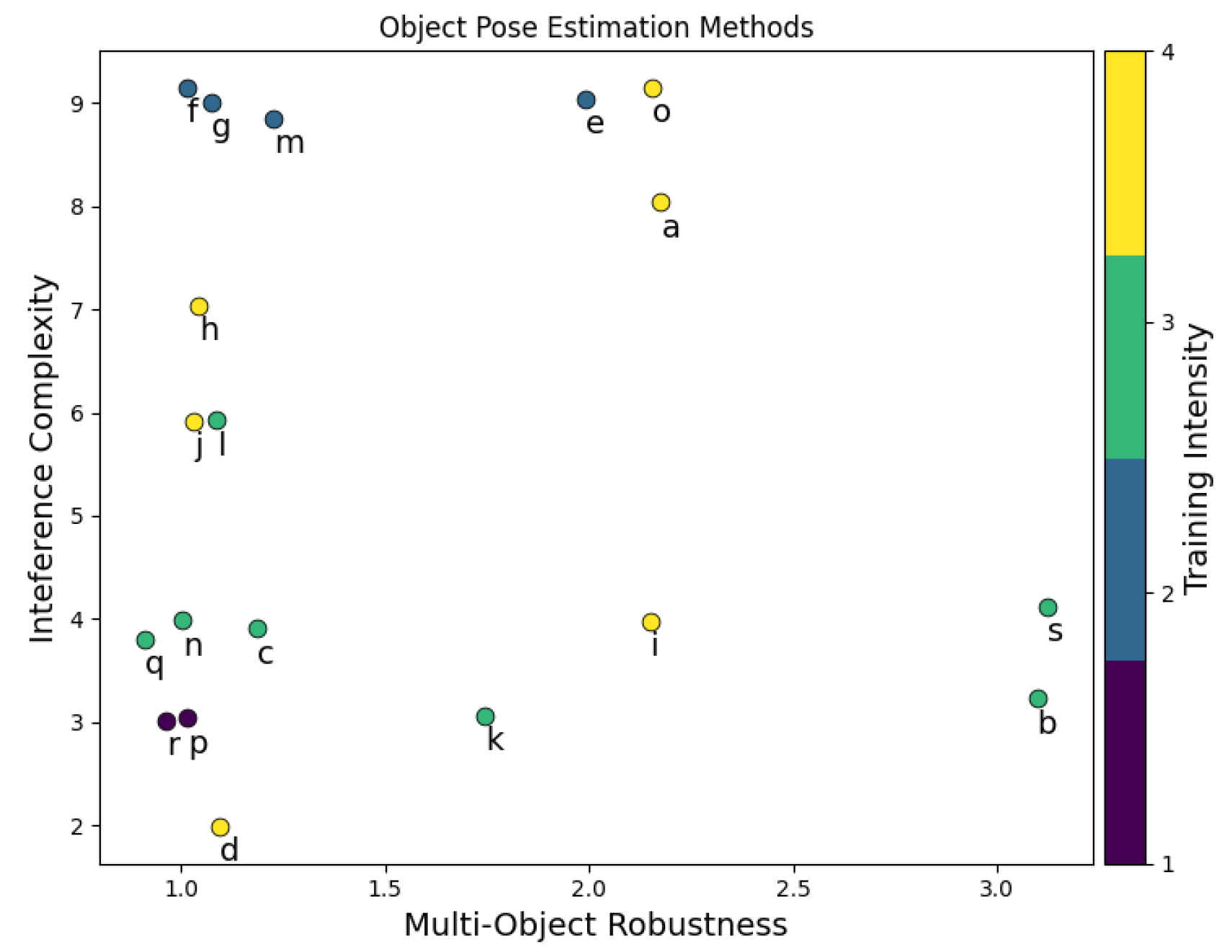

3.1.1. Axes

Multi-Object Robustness

Inference Complexity

Training Intensity

3.1.2. Works

PoseCNN

DOPE

Pix2Pose

EfficientPose

SS6D

Self6D++

RePOSE

SO-Pose

FFB6D

ROPE

Template-Pose

OVE6D

OSSID

OSOP

Gen6D

OnePose

TexPose

ICG+

CASAPose

3.2. Actor Pose Estimation

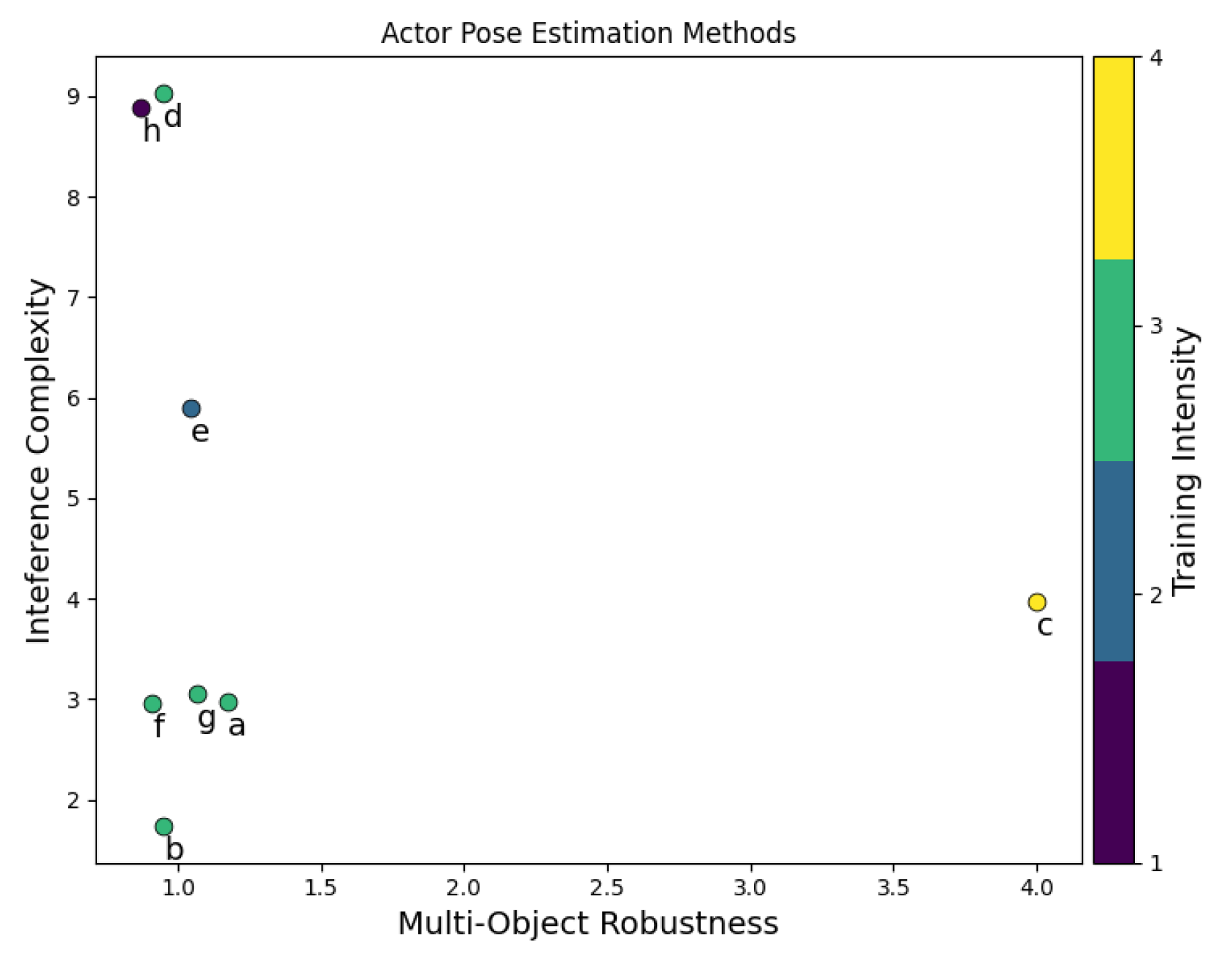

3.2.1. Axes

Multi-Agent Robustness

3.2.2. Works

CRAVES

DREAM

Anipose

RoboPose

Watch It Move

SPDH

SGTAPose

CASA

3.3. Selection Criteria

3.3.1. Data Availability

3.3.2. Subject Diversity

3.3.3. Timing Requirements

4. Future Work

4.1. Multi-Actor, Multi-Object Scenes

4.2. Occlusion

4.3. Comprehensive Datasets with Multiple Robots as Actors

4.4. Pose Estimation Without 3D Object Models

5. Conclusion

Funding

| 1 | This is intended as a general guideline and not specific advice guaranteeing stability in any given system |

Acknowledgments

References

- Alirezazadeh, S.; Alexandre, L.A. Dynamic Task Scheduling for Human-Robot Collaboration. IEEE Robotics and Automation Letters 2022, 7, 8699–8704.

- Fusaro, F.; Lamon, E.; Momi, E.D.; Ajoudani, A. An Integrated Dynamic Method for Allocating Roles and Planning Tasks for Mixed Human-Robot Teams. In Proceedings of the 2021 30th IEEE International Conference on Robot & Human Interactive Communication (RO-MAN), 2021; pp. 534–539.

- Pupa, A.; Van Dijk, W.; Secchi, C. A Human-Centered Dynamic Scheduling Architecture for Collaborative Application. IEEE Robotics and Automation Letters 2021, 6, 4736–4743.

- Bochkovskiy, A.; Wang, C.Y.; Liao, H.Y.M. YOLOv4: Optimal speed and accuracy of object detection. arXiv 2020. arXiv:2004.10934.

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep residual learning for image recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2016; pp. 770–778.

- Simonyan, K.; Zisserman, A. Very deep convolutional networks for large-scale image recognition. arXiv 2014. arXiv:1409.1556.

- Cao, Z.; Simon, T.; Wei, S.E.; Sheikh, Y. Realtime multi-person 2D pose estimation using part affinity fields. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2017; pp. 7291–7299.

- Pishchulin, L.; Insafutdinov, E.; Tang, S.; Andres, B.; Andriluka, M.; Gehler, P.V.; Schiele, B. DeepCut: Joint subset partition and labeling for multi person pose estimation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2016; pp. 4929–4937.

- Lee, T.E.; Tremblay, J.; To, T.; Cheng, J.; Mosier, T.; Kroemer, O.; Fox, D.; Birchfield, S. Camera-to-Robot Pose Estimation from a Single Image. In Proceedings of the 2020 IEEE International Conference on Robotics and Automation (ICRA), 2020; pp. 9426–9432.

- Xiang, Y.; Schmidt, T.; Narayanan, V.; Fox, D. PoseCNN: A Convolutional Neural Network for 6D Object Pose Estimation in Cluttered Scenes. In Robotics: Science and Systems (RSS); 2018.

- Huang, Z.; Shen, Y.; Li, J.; Fey, M.; Brecher, C. A Survey on AI-Driven Digital Twins in Industry 4.0: Smart Manufacturing and Advanced Robotics. Sensors 2021, 21.

- Mihai, S.; et al. Digital Twins: A Survey on Enabling Technologies, Challenges, Trends and Future Prospects. IEEE Communications Surveys & Tutorials 2022, 24, 2255–2291.

- Proia, S.; Carli, R.; Cavone, G.; Dotoli, M. Control Techniques for Safe, Ergonomic, and Efficient Human-Robot Collaboration in the Digital Industry: A Survey. IEEE Transactions on Automation Science and Engineering 2022, 19, 1798–1819.

- Thelen, A.; Zhang, X.; Fink, O.; Lu, Y.; Ghosh, S.; Youn, B.D.; Todd, M.D.; Mahadevan, S.; Hu, C.; Hu, Z. A comprehensive review of digital twin—part 1: modeling and twinning enabling technologies. Structural and Multidisciplinary Optimization 2022, 65, 354.

- Toshev, A.; Szegedy, C. DeepPose: Human Pose Estimation via Deep Neural Networks. In Proceedings of the 2014 IEEE Conference on Computer Vision and Pattern Recognition, 2014; pp. 1653–1660.

- Andriluka, M.; Pishchulin, L.; Gehler, P.; Schiele, B. 2D Human Pose Estimation: New Benchmark and State of the Art Analysis. In Proceedings of the 2014 IEEE Conference on Computer Vision and Pattern Recognition, 2014; pp. 3686–3693.

- Eichner, M.; Marin-Jimenez, M.; Zisserman, A.; Ferrari, V. 2D articulated human pose estimation and retrieval in (almost) unconstrained still images. International Journal of Computer Vision 2012, 99, 190–214.

- Hinterstoisser, S.; Lepetit, V.; Ilic, S.; Holzer, S.; Bradski, G.; Konolige, K.; Navab, N. Model based training, detection and pose estimation of texture-less 3D objects in heavily cluttered scenes. In Proceedings of the Asian Conference on Computer Vision, 2012; Springer; pp. 548–562.

- Hodaň, T.; Matas, J.; Obdržálek, Š. On evaluation of 6D object pose estimation. In Proceedings of the European Conference on Computer Vision, 2016; Springer; pp. 606–619.

- Ionescu, C.; Papava, D.; Olaru, V.; Sminchisescu, C. Human3.6M: Large scale datasets and predictive methods for 3D human sensing in natural environments. IEEE Transactions on Pattern Analysis and Machine Intelligence 2013, 36, 1325–1339.

- Calli, B.; Walsman, A.; Singh, A.; Srinivasa, S.; Abbeel, P.; Dollar, A.M. Benchmarking in Manipulation Research: Using the Yale-CMU-Berkeley Object and Model Set. IEEE Robotics & Automation Magazine 2015, 22, 36–52.

- Hinterstoisser, S.; Lepetit, V.; Ilic, S.; Holzer, S.; Bradski, G.; Konolige, K.; Navab, N. Model Based Training, Detection and Pose Estimation of Texture-Less 3D Objects in Heavily Cluttered Scenes. In Proceedings of the ACCV’12, Berlin, Heidelberg, 2012; pp. 548–562.

- Garrido-Jurado, S.; Muñoz Salinas, R.; Madrid-Cuevas, F.; Marín-Jiménez, M. Automatic Generation and Detection of Highly Reliable Fiducial Markers under Occlusion. Pattern Recogn. 2014, 47, 2280–2292.

- Wang, P.; Jung, H.; Li, Y.; Shen, S.; Srikanth, R.P.; Garattoni, L.; Meier, S.; Navab, N.; Busam, B. PhoCaL: A Multi-Modal Dataset for Category-Level Object Pose Estimation With Photometrically Challenging Objects. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), June 2022; pp. 21222–21231.

- Varol, G.; Romero, J.; Martin, X.; Mahmood, N.; Black, M.J.; Laptev, I.; Schmid, C. Learning from Synthetic Humans. In Proceedings of the CVPR, 2017.

- Hodaň, T.; Haluza, P.; Obdržálek, Š.; Matas, J.; Lourakis, M.; Zabulis, X. T-LESS: An RGB-D Dataset for 6D Pose Estimation of Texture-less Objects. In Proceedings of the IEEE Winter Conference on Applications of Computer Vision (WACV), 2017.

- Wang, H.; Sridhar, S.; Huang, J.; Valentin, J.; Song, S.; Guibas, L.J. Normalized Object Coordinate Space for Category-Level 6D Object Pose and Size Estimation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), June 2019.

- Brachmann, E. 6D Object Pose Estimation using 3D Object Coordinates [Data]; 2020.

- Chen, B.; Chin, T.J.; Klimavicius, M. Occlusion-Robust Object Pose Estimation with Holistic Representation. In Proceedings of the WACV, 2022.

- Bukschat, Y.; Vetter, M. EfficientPose: An efficient, accurate and scalable end-to-end 6D multi object pose estimation approach. arXiv 2020. arXiv:cs.

- Wang, G.; Manhardt, F.; Liu, X.; Ji, X.; Tombari, F. Occlusion-Aware Self-Supervised Monocular 6D Object Pose Estimation. IEEE Transactions on Pattern Analysis and Machine Intelligence 2021, 1–1.

- Tremblay, J.; To, T.; Sundaralingam, B.; Xiang, Y.; Fox, D.; Birchfield, S. Deep Object Pose Estimation for Semantic Robotic Grasping of Household Objects. CoRR 2018, abs/1809.10790.

- Li, S.; Xu, C.; Xie, M. A Robust O(n) Solution to the Perspective-n-Point Problem. IEEE Transactions on Pattern Analysis and Machine Intelligence 2012, 34, 1444–1450.

- Park, K.; Patten, T.; Vincze, M. Pix2Pose: Pixel-Wise Coordinate Regression of Objects for 6D Pose Estimation. In Proceedings of the IEEE International Conference on Computer Vision (ICCV), Oct 2019.

- Deng, X.; Xiang, Y.; Mousavian, A.; Eppner, C.; Bretl, T.; Fox, D. Self-supervised 6D Object Pose Estimation for Robot Manipulation. In Proceedings of the 2020 IEEE International Conference on Robotics and Automation (ICRA), 2020; pp. 3665–3671.

- Iwase, S.; Liu, X.; Khirodkar, R.; Yokota, R.; Kitani, K.M. RePOSE: Fast 6D Object Pose Refinement via Deep Texture Rendering. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), October 2021; pp. 3303–3312.

- Di, Y.; Manhardt, F.; Wang, G.; Ji, X.; Navab, N.; Tombari, F. SO-Pose: Exploiting Self-Occlusion for Direct 6D Pose Estimation. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), October 2021; pp. 12396–12405.

- He, Y.; et al. FFB6D: A Full Flow Bidirectional Fusion Network for 6D Pose Estimation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), June 2021.

- Nguyen, V.N.; Hu, Y.; Xiao, Y.; Salzmann, M.; Lepetit, V. Templates for 3D Object Pose Estimation Revisited: Generalization to New Objects and Robustness to Occlusions. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2022; pp. 6771–6780.

- Cai, D.; Heikkilä, J.; Rahtu, E. OVE6D: Object Viewpoint Encoding for Depth-Based 6D Object Pose Estimation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), June 2022; pp. 6803–6813.

- Gu, Q.; Okorn, B.; Held, D. OSSID: Online Self-Supervised Instance Detection by (And For) Pose Estimation. IEEE Robotics and Automation Letters 2022, 7, 3022–3029.

- Shugurov, I.; Li, F.; Busam, B.; Ilic, S. OSOP: A Multi-Stage One Shot Object Pose Estimation Framework. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), June 2022; pp. 6835–6844.

- Liu, Y.; Wen, Y.; Peng, S.; Lin, C.; Long, X.; Komura, T.; Wang, W. Gen6D: Generalizable Model-Free 6-DoF Object Pose Estimation from RGB Images. In Proceedings of the ECCV, 2022.

- Sun, J.; Wang, Z.; Zhang, S.; He, X.; Zhao, H.; Zhang, G.; Zhou, X. OnePose: One-Shot Object Pose Estimation without CAD Models. In Proceedings of the CVPR, 2022.

- Chen, H.; Manhardt, F.; Navab, N.; Busam, B. TexPose: Neural Texture Learning for Self-Supervised 6D Object Pose Estimation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), June 2023; pp. 4841–4852.

- Stoiber, M.; Elsayed, M.; Reichert, A.E.; Steidle, F.; Lee, D.; Triebel, R. Fusing Visual Appearance and Geometry for Multi-modality 6DoF Object Tracking. arXiv 2023. arXiv:2302.11458.

- Stoiber, M.; Sundermeyer, M.; Triebel, R. Iterative corresponding geometry: Fusing region and depth for highly efficient 3D tracking of textureless objects. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2022; pp. 6855–6865.

- Gard, N.; Hilsmann, A.; Eisert, P. CASAPose: Class-Adaptive and Semantic-Aware Multi-Object Pose Estimation. In Proceedings of the 33rd British Machine Vision Conference 2022, BMVC 2022, London, UK, November 21-24, 2022; BMVA Press, 2022.

- Zuo, Y.; Qiu, W.; Xie, L.; Zhong, F.; Wang, Y.; Yuille, A.L. CRAVES: Controlling robotic arm with a vision-based economic system. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2019; pp. 4214–4223.

- Karashchuk, P.; Rupp, K.L.; Dickinson, E.S.; Walling-Bell, S.; Sanders, E.; Azim, E.; Brunton, B.W.; Tuthill, J.C. Anipose: a toolkit for robust markerless 3D pose estimation. Cell Reports 2021, 36, 109730.

- Labbé, Y.; Carpentier, J.; Aubry, M.; Sivic, J. Single-view robot pose and joint angle estimation via render & compare. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2021; pp. 1654–1663.

- Noguchi, A.; Iqbal, U.; Tremblay, J.; Harada, T.; Gallo, O. Watch It Move: Unsupervised Discovery of 3D Joints for Re-Posing of Articulated Objects. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), June 2022; pp. 3677–3687.

- Simoni, A.; Pini, S.; Borghi, G.; Vezzani, R. Semi-Perspective Decoupled Heatmaps for 3D Robot Pose Estimation From Depth Maps. IEEE Robotics and Automation Letters 2022, 7, 11569–11576.

- Tian, Y.; Zhang, J.; Yin, Z.; Dong, H. Robot Structure Prior Guided Temporal Attention for Camera-to-Robot Pose Estimation From Image Sequence. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), June 2023; pp. 8917–8926.

- Wu, Y.; Chen, Z.; Liu, S.; Ren, Z.; Wang, S. CASA: Category-agnostic Skeletal Animal Reconstruction. In Proceedings of the Advances in Neural Information Processing Systems; Koyejo, S., Mohamed, S., Agarwal, A., Belgrave, D., Cho, K., Oh, A., Eds.; Curran Associates, Inc., 2022; Vol. 35, pp. 28559–28574.

- ISO. Road vehicles – Functional safety. 2011.

- Dallel, M.; Havard, V.; Baudry, D.; Savatier, X. InHARD - Industrial Human Action Recognition Dataset in the Context of Industrial Collaborative Robotics. In Proceedings of the 2020 IEEE International Conference on Human-Machine Systems (ICHMS), 2020; pp. 1–6.

- Park, K.; Mousavian, A.; Xiang, Y.; Fox, D. LatentFusion: End-to-end differentiable reconstruction and rendering for unseen object pose estimation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2020; pp. 10710–10719.

Short Biography of Authors

Maximillian ‘Max’ Panoff (Student Member, IEEE) is a fourth-year Ph.D. student in the Department of Electrical and Computer Engineering. He earned his B.E. with a Concentration in Robotics and a Minor in Social Sciences from Stevens Institute of Technology in Hoboken, NJ. During his time at the University of Florida, where he studies human collaborative robotics, he was awarded the Graduate School Preeminence Award.

Antonio Hendricks (Student Member, IEEE) joined the Smart Systems Lab as a Ph.D. student and Graduate Research Assistant at the University of Florida in the ECE Department in the Fall 2022 semester. He completed his bachelor's degree in Computer Engineering with a focus on Machine Intelligence and Autonomous Robotics Systems at Florida Polytechnic University in Lakeland, Florida. He plans to focus on safe autonomy and advanced robotic intelligence for surgery, utilizing modern methods of deep learning and computer vision.

Peter Forcha is currently pursuing a Ph.D. in the Department of Electrical and Computer Engineering at the University of Florida. He obtained his bachelor’s degree in Electrical and Electronics Engineering at the University of Buea (Cameroon) and completed his Master of Engineering in Power Systems at Makerere University (Uganda). Peter’s research is focused on building hardware accelerators for machine learning, along with other machine learning applications such as computer vision and natural language processing.

Minchul Jung is a first-year graduate student at the University of Florida. He works in the Electrical and Computer Engineering department and conducts research with the Smart Systems Lab, led by Dr. Christophe Bobda. His research interests include human-robot interaction and computer vision. He holds a bachelor's degree in Aerospace Engineering from Korea Air Force Academy.

Shuo Wang (Fellow, IEEE) received the Ph.D. degree in electrical engineering from Virginia Tech, Blacksburg, VA, USA, in 2005. He is currently a Full Professor with the Department of Electrical and Computer Engineering, University of Florida, Gainesville, FL, USA. He has authored or coauthored more than 200 IEEE journal and conference papers and holds around 30 pending/issued U.S./international patents. Dr. Wang was the recipient of the Best Transaction Paper Award from the IEEE Power Electronics Society in 2006 and two William M. Portnoy Awards for the papers published in the IEEE Industry Applications Society in 2004 and 2012, respectively. He received the Distinguished Paper Award from the IEEE Symposium on Security and Privacy 2022. In 2012, he was also the recipient of the prestigious National Science Foundation CAREER Award. He is an Associate Editor of the IEEE Transactions on Industry Applications and IEEE Transactions on Electromagnetic Compatibility. He was a Technical Program Co-Chair for the IEEE 2014 International Electric Vehicle Conference.

Christophe Bobda (Member, IEEE) received a Licence in mathematics from the University of Yaounde, Cameroon, in 1992, and a diploma in computer science and a Ph.D. degree (with honors) in computer science from the University of Paderborn, Germany, in 1999 and 2003, respectively (in the chair of Prof. Franz J. Rammig). In June 2003 he joined the department of computer science at the University of Erlangen-Nuremberg in Germany as a postdoc, under the direction of Prof. Jürgen Teich. Dr. Bobda received the best dissertation award 2003 from the University of Paderborn for his work on synthesis of reconfigurable systems using temporal partitioning and temporal placement. In 2005 Dr. Bobda was appointed assistant professor at the University of Kaiserslautern. There he set up the chair for Self-Organizing Embedded Systems, which he led until October 2007. From 2007 to 2010 Dr. Bobda was a Professor at the University of Potsdam and leader of the working group Computer Engineering.

| Survey | Focus and Contribution |
|---|---|
| Huang et al. 2021 [11] | A review of AI-enhanced DT rather than how DTs are implemented |
| Mihai et al. 2022 [12] | Holistic coverage of DT, including market impact and computational surfaces, but without discussion of 3DPR |
| Proia et al. 2022 [13] | Control techniques using information from DT to improve human safety |
| Thelen et al. 2022 [14] | A comprehensive overview of DT creation, from model creation to state estimation under noisy conditions, but limited discussion of the sensors and sensing techniques used in “Physical-to-virtual (P2V) twinning” |
| This survey | A dedicated review of visual 3DPR methods to solve the problem of maintaining the physical-virtual link in DT |

| Metric Name | Performance Gradient (Positive: higher is better; Negative: lower is better) | Best For | Notes |
|---|---|---|---|
| ADD(-S) | Negative | Objects (PEG) | Compares the average distance between each point in the predicted location of a 3D model and the model’s true position. |
| VSD | Negative | Objects (PEG) | Compares the distance between the closest points in 3D surfaces. If the distance is below some threshold, it is ignored; otherwise it adds a flat value irrespective of actual distance. |
| PDJ | Positive | Actors (RPE) | If the distance from the predicted point to the true point is within a percentage of the torso diameter, record 1, otherwise 0. Average across all joints. |
| PCP | Positive | Actors (RPE) | If the distance from the predicted point to the true point is within a percentage of the limb length, record 1, otherwise 0. Replaced by PDJ. |
| PCK | Positive | Actors (RPE) | If the distance from the predicted point to the true point is within a percentage of the total area of the subject, record 1, otherwise 0. |
| MPJPE | Negative | Actors (RPE) | Sum all the distances between predicted and true joints for each actor in the scene. It can be scaled by actor size in multiple ways. |
| MPJAE | Negative | Actors (RPE) | Sum all the distances between predicted and true joints for each actor in the scene divided by the true length of the corresponding limb. |
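
The notes in the metrics table can be made concrete with a small sketch. The Python below is a minimal illustration, not the reference implementation used by any benchmark: it computes ADD and ADD-S for a rigid object and PDJ for a set of joints. The function names, the 0.2 default fraction for PDJ, and the use of the torso diameter as the PDJ reference length are assumptions made for this example.

```python
import math

def transform(points, R, t):
    """Apply the rigid transform x -> R x + t to each 3D point."""
    return [
        tuple(sum(R[i][k] * p[k] for k in range(3)) + t[i] for i in range(3))
        for p in points
    ]

def add_metric(points, R_pred, t_pred, R_true, t_true):
    """ADD: mean distance between corresponding model points under both poses."""
    pred = transform(points, R_pred, t_pred)
    true = transform(points, R_true, t_true)
    return sum(math.dist(a, b) for a, b in zip(pred, true)) / len(points)

def adds_metric(points, R_pred, t_pred, R_true, t_true):
    """ADD-S: mean distance from each true point to its closest predicted point.

    Used for symmetric objects, where point correspondences are ambiguous.
    """
    pred = transform(points, R_pred, t_pred)
    true = transform(points, R_true, t_true)
    return sum(min(math.dist(a, b) for b in pred) for a in true) / len(points)

def pdj(pred_joints, true_joints, torso_diameter, fraction=0.2):
    """PDJ: share of joints predicted within fraction * torso_diameter."""
    threshold = fraction * torso_diameter
    hits = [math.dist(p, q) <= threshold for p, q in zip(pred_joints, true_joints)]
    return sum(hits) / len(hits)

# Toy data (hypothetical): a two-point symmetric "object" rotated 180 degrees about z.
IDENTITY = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]
FLIP_Z = [[-1, 0, 0], [0, -1, 0], [0, 0, 1]]
MODEL = [(1, 0, 0), (-1, 0, 0)]
ZERO = (0, 0, 0)

add_value = add_metric(MODEL, FLIP_Z, ZERO, IDENTITY, ZERO)
adds_value = adds_metric(MODEL, FLIP_Z, ZERO, IDENTITY, ZERO)
pdj_value = pdj(
    [(0, 0, 0), (1, 0, 0), (2, 0, 0)],
    [(0, 0, 0.05), (1, 0, 0.5), (2, 0, 0)],
    torso_diameter=1.0,
)
```

In the toy example the flipped pose is penalised by ADD, because every point lands on its mirror partner, while ADD-S treats the pose as correct because the point set is symmetric. This is the reason the two variants are usually reported together as ADD(-S).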

| Technique | RGB(D) | YCB [21] | LineMOD [22] | LM-Occluded [28] | MOR | TI | IC | FPS | GPU |
|---|---|---|---|---|---|---|---|---|---|
| PoseCNN (Section 3.1.2.1) | RGB | 75.9% | – | 24.9% | 2 | 4 | 8 | – | – |
| PoseCNN (Section 3.1.2.1) | RGB-D | 93.0% | – | 78.0% | 2 | 4 | 8 | – | – |
| DOPE (Section 3.1.2.2) | RGB | 80.5% | – | – | 3 | 3 | 3 | 4.31 | NVIDIA Titan X |
| Pix2Pose (Section 3.1.2.3) | RGB | – | 72.4% | 32.0% | 1 | 3 | 4 | 6.5 | NVIDIA 1080 |
| EfficientPose (Section 3.1.2.4) | RGB | – | 97.25% | 83.89% | 3 | 4 | 2 | 27.45 | NVIDIA 2080Ti |
| SS6D (Section 3.1.2.5) | RGB-D | 60.8% | – | – | 2 | 2 | Iter | 0.1† | 2×NVIDIA Titan X |
| Self6D++ (Section 3.1.2.6) | RGB | 90.5% | 85.6% | 59.8% | 1 | 2 | Iter | 100 | NVIDIA Titan X |
| Self6D++ (Section 3.1.2.6) | RGB-D | 91.1% | 88.5% | 64.7% | 1 | 2 | Iter | 25 | NVIDIA Titan X |
| RePOSE (Section 3.1.2.7) | RGB | 70.5% | 96.1% | 51.6% | 1 | 2 | Iter | 91 | NVIDIA 2080 Super |
| SO-Pose (Section 3.1.2.8) | RGB | 90.9% | 96% | 62.3% | 1 | 4 | 7 | 20 | NVIDIA Titan X |
| FFB6D (Section 3.1.2.9) | RGB-D | 92.7% | 99.7% | 66.2% | 2 | 4 | 4 | 13.3 | – |
| ROPE (Section 3.1.2.10) | RGB | 79.88% | 95.61% | 45.95% | 1 | 4 | 6 | – | – |
| Template-Pose (Section 3.1.2.11) | RGB | – | 99.1% | 79.4% | 2 | 3 | 3 | 125 | NVIDIA V100 |
| OVE6D (Section 3.1.2.12) | RGB-D | – | 92.4% | 72.8% | 1 | 3 | 6 | 20 | NVIDIA RTX3090 |
| OSSID (Section 3.1.2.13) | RGB-D | 63.2% | – | 64.0% | 1 | 2 | Iter | 4.34 | – |
| OSOP (Section 3.1.2.14) | RGB | 80% | 86% | 61% | 1 | 3 | 4 | – | – |
| Gen6D (Section 3.1.2.15) | RGB | – | 20.74% | – | 2 | 4 | Iter | 128 | NVIDIA 2080Ti |
| OnePose (Section 3.1.2.16) | RGB-D | – | 76.9% | – | 1 | 1 | 3 | 12.5 | NVIDIA V100 |
| TexPose (Section 3.1.2.17) | RGB | – | 91.7% | 66.7% | 1 | 3 | 4 | – | – |
| ICG+ (Section 3.1.2.18) | RGB | 94% | 97.7% | – | 1 | 1 | 3 | 312.4 | NVIDIA RTX A5000 |
| CASAPose (Section 3.1.2.19) | RGB | – | 68.1% | 35.9% | 3 | 3 | 4 | 27 | NVIDIA RTX A100 |
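
One way to use the comparison table against the selection criteria of Section 3.3 is to filter it programmatically. The sketch below is only an illustration under stated assumptions: it assumes that Timing Requirements map to the FPS column, Subject Diversity to MOR, and Data Availability to TI, which is a reading of the column names rather than a rule stated in the survey. The `Technique` class and `shortlist` function are hypothetical names, and only a few RGB rows are copied from the table.

```python
from dataclasses import dataclass
from typing import List, Optional

@dataclass
class Technique:
    name: str
    mor: int               # Multi-Object Robustness level from the table
    ti: int                # Training Intensity level from the table
    fps: Optional[float]   # None where the table reports no frame rate

# A subset of RGB rows copied from the comparison table.
TECHNIQUES = [
    Technique("PoseCNN", mor=2, ti=4, fps=None),
    Technique("DOPE", mor=3, ti=3, fps=4.31),
    Technique("EfficientPose", mor=3, ti=4, fps=27.45),
    Technique("Template-Pose", mor=2, ti=3, fps=125.0),
    Technique("ICG+", mor=1, ti=1, fps=312.4),
    Technique("CASAPose", mor=3, ti=3, fps=27.0),
]

def shortlist(techniques: List[Technique], min_fps: float = 0.0,
              min_mor: int = 1, max_ti: int = 4) -> List[str]:
    """Return names of techniques meeting all three (assumed) criteria.

    A technique with no reported FPS is kept only when no frame-rate
    requirement is set (min_fps <= 0).
    """
    selected = []
    for t in techniques:
        fps_ok = min_fps <= 0 or (t.fps is not None and t.fps >= min_fps)
        if fps_ok and t.mor >= min_mor and t.ti <= max_ti:
            selected.append(t.name)
    return selected

# Example: at least 25 FPS and multi-object scenes (MOR level 3 or higher).
realtime_multi_object = shortlist(TECHNIQUES, min_fps=25, min_mor=3)
# Example: only the lowest training-intensity level.
low_training = shortlist(TECHNIQUES, max_ti=1)
```

The frame rates in the table were measured on different GPUs, so a threshold on FPS is a coarse first filter rather than a like-for-like comparison.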

| Axis | Level 1 | Level 2 | Level 3 | Level 4 |
|---|---|---|---|---|
| Multi-Object Robustness (MOR) | 1 Object, 1 Class | 1 Object, 2+ Classes | 2+ Objects, Unique Classes | 2+ Objects, 2+ Classes |
| Inference Complexity (IC) | 1 Stage | 2 Stages | 3 Stages | 4 Stages* |
| Training Intensity (TI) | Unsupervised | Semi-Supervised | Supervised Transferable / Synthetic | Supervised |
| Multi-Agent Robustness (MAR) | 1 Actor, 1 Class | 1 Actor, 2+ Classes | 2+ Actors, Unique Classes | 2+ Actors, 2+ Classes |

| Technique | Mean Error (cm) | MAR | TI | IC |
|---|---|---|---|---|
| CRAVES (Section 3.2.2.1) | 2.67 | 1 | 3 | 3 |
| DREAM (Section 3.2.2.2) | 2.74 | 1 | 3 | 2 |
| Anipose (Human, 90th percentile) (Section 3.2.2.3) | 8.6 | 4 | 4 | 4 |
| RoboPose (Section 3.2.2.4) | 1.34 | 1 | 3 | Iter |
| Watch It Move (Section 3.2.2.5) | 7.59 | 1 | 2 | 6 |
| SPDH (Section 3.2.2.6) | 4.41 | 1 | 3 | 3 |
| SGTAPose (Section 3.2.2.7) | 1.812 | 1 | 3 | 3 |
| CASA (Section 3.2.2.8) | 0.053 (mCham) | 1 | 1 | Iter |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).