Submitted: 04 February 2026

Posted: 05 February 2026


Abstract

Keywords:

1. Introduction

2. The State of the Debate

3. AI Evaluation Metrics

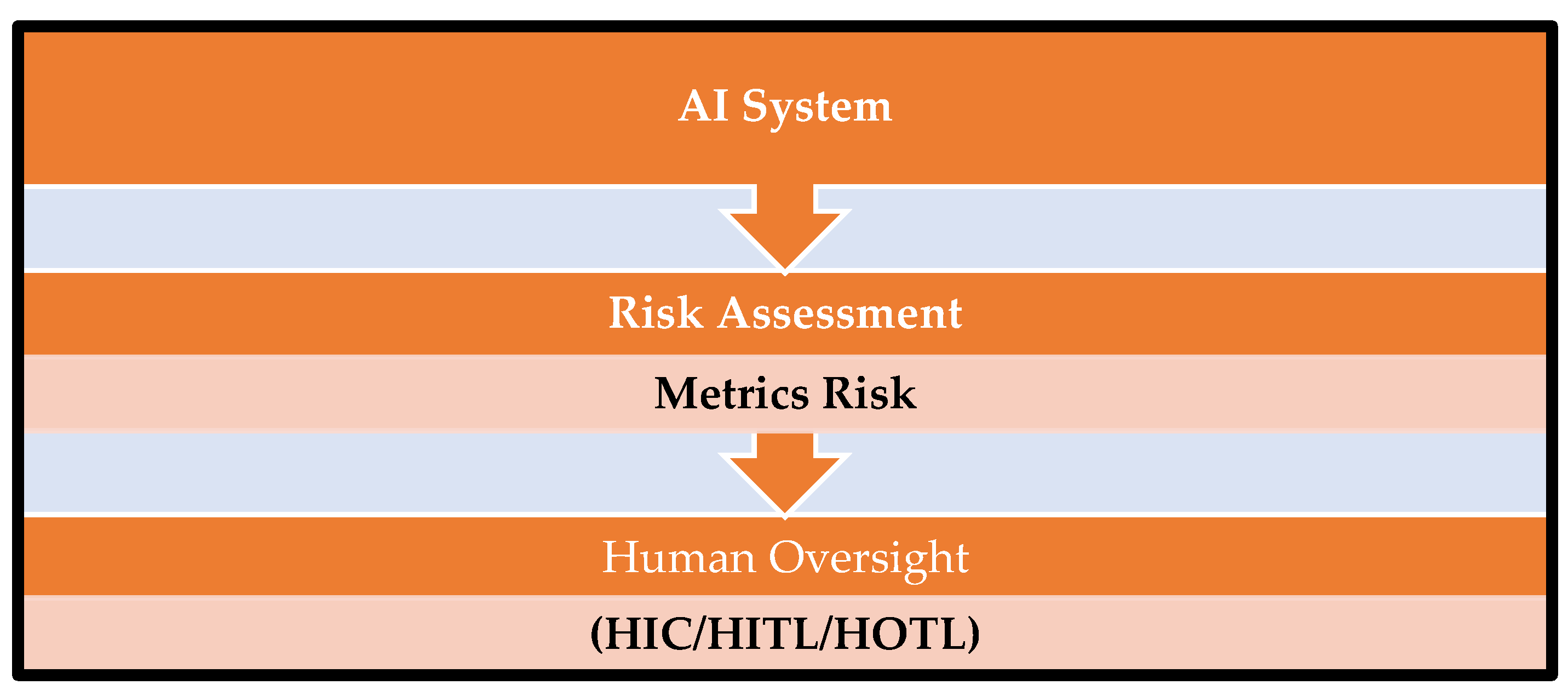

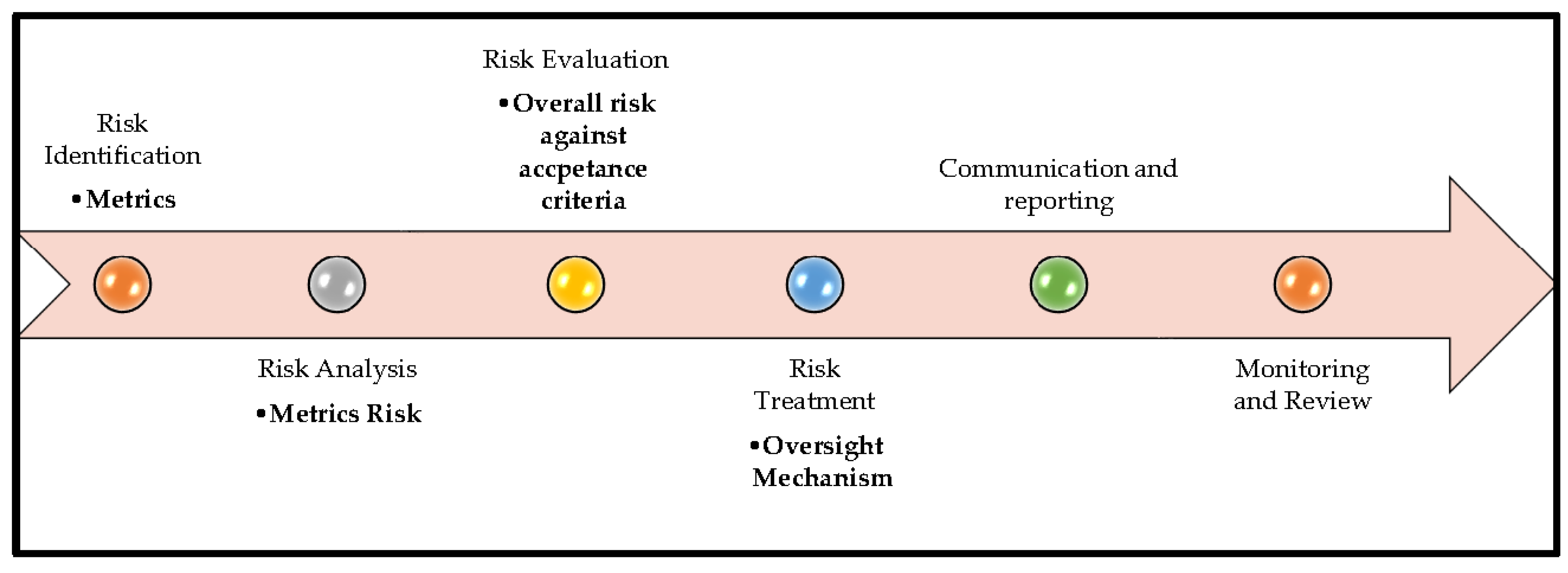

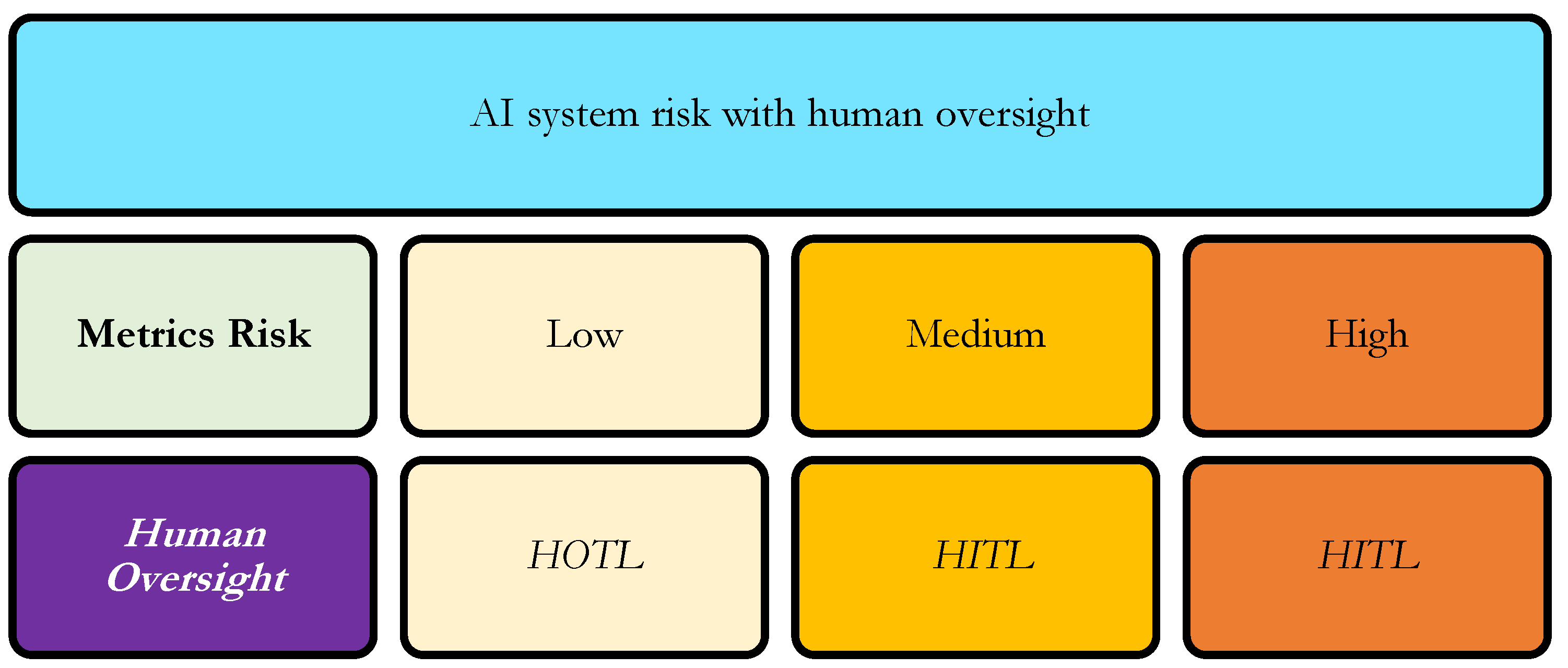

4. Risk Assessment and Oversight

4.1. Risk Identification

4.2. Risk Analysis

4.3. Risk Evaluation

- Worst-case approach: use the metric result that indicates the highest risk.

- Key-metric approach: focus on the metrics identified in advance as the most important or critical.

- Average approach: calculate the overall risk as the average of all metric results.

- Other documented approaches: use any alternative method, provided it is clearly described and justified.
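The aggregation approaches listed above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' method: it assumes risk levels are encoded on an ordinal Low/Medium/High scale scored 1 to 3, that the key-metric approach takes the worst level among the pre-declared key metrics, and that the average approach rounds the mean score half up. All function names and the example data are hypothetical.

```python
import math
from statistics import mean

# Hypothetical ordinal encoding of the risk levels (assumption: Low=1, Medium=2, High=3).
RISK_SCORE = {"Low": 1, "Medium": 2, "High": 3}
SCORE_TO_RISK = {score: level for level, score in RISK_SCORE.items()}


def worst_case(risks: dict[str, str]) -> str:
    """Worst-case approach: the overall risk is the highest metric-level risk."""
    return max(risks.values(), key=lambda level: RISK_SCORE[level])


def key_metric(risks: dict[str, str], key_metrics: list[str]) -> str:
    """Key-metric approach (assumed rule): worst risk among the pre-declared key metrics."""
    return worst_case({name: risks[name] for name in key_metrics})


def average(risks: dict[str, str]) -> str:
    """Average approach (assumed rule): mean ordinal score, rounded half up to a level."""
    score = mean(RISK_SCORE[level] for level in risks.values())
    return SCORE_TO_RISK[math.floor(score + 0.5)]


# Hypothetical metric-level results, not taken from the paper.
example = {"Accuracy": "Low", "Precision": "Medium", "Recall": "High"}
print(worst_case(example))                # High
print(key_metric(example, ["Accuracy"]))  # Low
print(average(example))                   # Medium
```

On the same inputs the three rules can return different overall levels, which is why the chosen approach needs to be fixed and documented before the metrics are evaluated.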

4.4. Risk Treatment

4.5. Communication & Reporting

4.6. Monitoring & Review

5. Illustrative Risk Assessments Informing Human Oversight

5.1. Risk Identification

5.2. Risk Analysis

| Type | Result |
|---|---|
| Metrics Risk | Medium |
| Human Oversight | HITL |

5.3. Risk Evaluation

5.4. Risk Treatment

5.5. Monitoring and Review

5.6. Communication and Reporting

6. Conclusion

Abbreviations

| Abbreviation | Definition |
|---|---|
| AI | Artificial Intelligence |
| AUC–ROC | Area Under the Receiver Operating Characteristic Curve |
| DR | Diabetic Retinopathy |
| FN | False Negative |
| FP | False Positive |
| HIC | Human-in-Command |
| HITL | Human-in-the-Loop |
| HOTL | Human-on-the-Loop |
| ISO | International Organization for Standardization |
| MSE | Mean Squared Error |
| TN | True Negative |
| TP | True Positive |
| TSR | Task Success Rate |

References

- US FDA. Considerations for the use of artificial intelligence to support regulatory decision-making for drug and biological products. 2025. Available online: https://www.fda.gov/media/168399/download.

- ISO. ISO 31000. 2018. Available online: https://www.iso.org/standard/65694.html#lifecycle.

- ISO. 2023. Available online: https://www.iso.org/standard/77304.html.

- Raji, I. D.; Smart, A.; White, R. N.; Mitchell, M.; Gebru, T.; Hutchinson, B.; Barnes, P. Closing the AI accountability gap: Defining an end-to-end framework for internal algorithmic auditing. In Proceedings of the 2020 Conference on Fairness, Accountability, and Transparency, January 2020; pp. 33–44.

- Mitchell, M.; Wu, S.; Zaldivar, A.; Barnes, P.; Vasserman, L.; Hutchinson, B.; Gebru, T. Model cards for model reporting. In Proceedings of the Conference on Fairness, Accountability, and Transparency, January 2019; pp. 220–229.

- European Commission, S. Ethics guidelines for trustworthy AI. Publications Office. 2019. Available online: https://digital-strategy.ec.europa.eu/en/library/ethics-guidelines-trustworthy-ai.

- Green, B. The flaws of policies requiring human oversight of government algorithms. Computer Law & Security Review 2022, 45, 105681.

- International Council for Harmonisation of Technical Requirements for Pharmaceuticals for Human Use. ICH Q9(R1): Quality risk management. 2023. Available online: https://www.ich.org.

- Kandikatla, L.; Radeljic, B. AI and Human Oversight: A Risk-Based Framework for Alignment. arXiv 2025, arXiv:2510.09090.

- European Union. Regulation (EU) 2024/1689 laying down harmonised rules on artificial intelligence (Artificial Intelligence Act). Official Journal of the European Union 2024.

- Mikalef, P.; Conboy, K.; Lundström, J. E.; Popovič, A. Thinking responsibly about responsible AI and ‘the dark side’ of AI. European Journal of Information Systems 2022, 31(3), 257–268.

- Kocak, B.; Klontzas, M. E.; Stanzione, A.; Meddeb, A.; Demircioğlu, A.; Bluethgen, C.; Cuocolo, R. Evaluation metrics in medical imaging AI: fundamentals, pitfalls, misapplications, and recommendations. European Journal of Radiology Artificial Intelligence 2025, 100030.

- McDermott, M.; Zhang, H.; Hansen, L.; Angelotti, G.; Gallifant, J. A closer look at AUROC and AUPRC under class imbalance. Advances in Neural Information Processing Systems 2024, 37, 44102–44163.

- Uy, H.; Fielding, C.; Hohlfeld, A.; Ochodo, E.; Opare, A.; Mukonda, E.; Engel, M. E. Diagnostic test accuracy of artificial intelligence in screening for referable diabetic retinopathy in real-world settings: A systematic review and meta-analysis. PLOS Global Public Health 2023, 3(9), e0002160.

- Google Developers. Classification: Accuracy, recall, precision, and related metrics. 2025. Available online: https://developers.google.com/machine-learning/crash-course/classification/accuracy-precision-recall.

- Ripatti, A. R. Screening Diabetic Retinopathy and Age-Related Macular Degeneration with Artificial Intelligence: New Innovations for Eye Care. 2025.

| Metric | Definition | Interpretation | Formula |
|---|---|---|---|
| Task Success Rate (TSR) | Proportion of end-to-end clinical tasks successfully executed without system failure or human intervention | Assesses operational reliability and workflow readiness | Tasks completed / Tasks initiated |
| Accuracy | Proportion of correct predictions among all predictions | Overall correctness; may be misleading in imbalanced datasets | (TP + TN) / (TP + TN + FP + FN) |
| Precision | Proportion of predicted positives that are truly positive | Controls false positives; relevant for minimizing unnecessary referrals | TP / (TP + FP) |
| Recall (Sensitivity) | Proportion of actual positive cases correctly identified | Controls false negatives; critical in screening and safety-critical use cases | TP / (TP + FN) |
| F1 Score | Harmonic mean of precision and recall | Balances false positives and false negatives in imbalanced data | 2 × (Precision × Recall) / (Precision + Recall) |
| AUC–ROC | Ability of the model to discriminate between positive and negative classes across all thresholds | Measures robustness and comparative model performance | Area under the ROC curve (true-positive rate vs. false-positive rate over all thresholds) |
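The formulas in the table above translate directly into code. The sketch below is a minimal illustration under stated assumptions: inputs are confusion-matrix counts for a binary decision (for example, referable vs. non-referable), AUC–ROC is computed from hypothetical per-case model scores using the pairwise (Mann–Whitney) formulation, which equals the area under the empirical ROC curve, and zero denominators are mapped to 0.0 by assumption. The helper names are hypothetical.

```python
def safe_ratio(numerator: float, denominator: float) -> float:
    """Return numerator / denominator, or 0.0 when the denominator is zero (assumption)."""
    return numerator / denominator if denominator else 0.0


def task_success_rate(completed: int, initiated: int) -> float:
    """TSR = tasks completed / tasks initiated."""
    return safe_ratio(completed, initiated)


def classification_metrics(tp: int, tn: int, fp: int, fn: int) -> dict[str, float]:
    """Accuracy, precision, recall and F1 from confusion-matrix counts."""
    precision = safe_ratio(tp, tp + fp)
    recall = safe_ratio(tp, tp + fn)
    return {
        "accuracy": safe_ratio(tp + tn, tp + tn + fp + fn),
        "precision": precision,
        "recall": recall,
        "f1": safe_ratio(2 * precision * recall, precision + recall),
    }


def auc_roc(pos_scores: list[float], neg_scores: list[float]) -> float:
    """Pairwise AUC: share of (positive, negative) pairs ranked correctly; ties count 1/2."""
    correct = 0.0
    for p in pos_scores:
        for n in neg_scores:
            if p > n:
                correct += 1.0
            elif p == n:
                correct += 0.5
    return safe_ratio(correct, len(pos_scores) * len(neg_scores))


# Hypothetical counts and scores, for illustration only.
print(classification_metrics(tp=3, tn=4, fp=1, fn=2))
print(auc_roc([0.9, 0.8, 0.4], [0.7, 0.4, 0.1]))
```

The nested loop is O(n·m) and is written for clarity; for real datasets a library routine such as scikit-learn's `roc_auc_score` would normally be used instead.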

| Metric | High Performance = Low Risk | Medium Performance = Medium Risk | Low Performance = High Risk |
|---|---|---|---|
| Task Success Rate (TSR) | Greater than 95% | 85% to 94% | 80% to 85% |
| Accuracy | Greater than 90% | 80% to 89% | 75% to 80% |
| Precision | Greater than 90% | 80% to 89% | 75% to 80% |
| Recall (Sensitivity) | Greater than 90% | 80% to 89% | 75% to 80% |
| F1 Score | Greater than 0.85 | 0.75 to 0.84 | 0.70 to 0.75 |
| AUC–ROC | Greater than 0.90 | 0.80 to 0.89 | 0.70 to 0.75 |
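The bands in the table above can be applied programmatically. The sketch below is one possible reading, not the published rule: percentages are converted to fractions, and each band is treated as a lower bound because the printed ranges leave small gaps and overlaps (for example, 94% to 95% for TSR, 80% appearing in two precision bands, or 0.75 to 0.80 for AUC–ROC). Under this assumption, a value in a gap is assigned to the higher-risk of the two adjacent bands, 80% counts as Medium, and values below the lowest listed range are reported as outside the table. The `BANDS` dictionary and `risk_level` function are hypothetical names.

```python
# Hypothetical encoding of the performance bands, as fractions:
# (Low-risk threshold, Medium-risk floor, High-risk floor).
BANDS = {
    "TSR": (0.95, 0.85, 0.80),
    "Accuracy": (0.90, 0.80, 0.75),
    "Precision": (0.90, 0.80, 0.75),
    "Recall": (0.90, 0.80, 0.75),
    "F1": (0.85, 0.75, 0.70),
    "AUC-ROC": (0.90, 0.80, 0.70),
}


def risk_level(metric: str, value: float) -> str:
    """Map an observed metric value to Low / Medium / High risk (assumed boundary rule)."""
    low_risk_above, medium_floor, high_floor = BANDS[metric]
    if value > low_risk_above:   # "Greater than ..." column
        return "Low"
    if value >= medium_floor:    # Medium band; also absorbs the gap below the Low-risk threshold
        return "Medium"
    if value >= high_floor:      # High band; also absorbs any gap below the Medium floor
        return "High"
    return "Outside table ranges"


print(risk_level("TSR", 0.90))        # Medium
print(risk_level("Precision", 0.80))  # Medium
print(risk_level("AUC-ROC", 0.92))    # Low
```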

| Metric | Clinical Relevance | Calculation / Formula | Observed Value | Risk Interpretation (Based on Table 2) |
|---|---|---|---|---|
| Task Success Rate (TSR) | Measures the AI system’s ability to process all retinal images reliably; ensures no cases are skipped, supporting workflow continuity | Tasks successfully completed / Total tasks initiated | 900 / 1,000 = 90% | Medium risk |
| Accuracy | Provides an overall measure of correct classifications; useful as a general performance summary, but must be interpreted in light of disease prevalence to avoid masking false negatives | (TP + TN) / (TP + TN + FP + FN) | (160 + 780) / 1,000 = 94% | Low risk |
| Precision | Indicates the proportion of AI-flagged referable DR cases that are truly referable; reduces unnecessary specialist referrals and patient burden | TP / (TP + FP) | 160 / 200 = 80% | Medium risk |
| Recall (Sensitivity) | Measures the AI system’s ability to correctly identify all patients with referable DR; critical for preventing missed diagnoses and ensuring patient safety | TP / (TP + FN) | 160 / 180 ≈ 89% | Medium risk |
| F1 Score | Combines precision and recall into a single balanced measure, which is particularly important in imbalanced datasets where both missed cases and false referrals matter | 2 × (Precision × Recall) / (Precision + Recall) | 2 × (0.80 × 0.89) / (0.80 + 0.89) ≈ 0.84 | Medium risk |
| AUC–ROC | Evaluates the AI’s ability to discriminate between referable and non-referable DR across all classification thresholds; threshold-independent indicator of overall model quality | Area under the ROC curve (threshold-independent) | 0.92 | Low risk |
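The arithmetic behind the observed values in the table above can be checked from the stated counts. FP and FN are not listed explicitly; this sketch assumes they are the remainders of the precision and recall denominators (200 − 160 = 40 and 180 − 160 = 20), which is consistent with the 1,000 cases in the accuracy denominator. The AUC–ROC of 0.92 cannot be reproduced this way, because it requires per-image model scores rather than counts at a single threshold.

```python
# Counts stated in the table; FP and FN are derived (assumption, see lead-in).
TP, TN = 160, 780
FP = 200 - TP  # precision denominator TP + FP = 200, so FP = 40
FN = 180 - TP  # recall denominator TP + FN = 180, so FN = 20
assert TP + TN + FP + FN == 1_000  # matches the accuracy denominator

tsr = 900 / 1_000
accuracy = (TP + TN) / (TP + TN + FP + FN)
precision = TP / (TP + FP)
recall = TP / (TP + FN)
f1 = 2 * precision * recall / (precision + recall)

for name, value in [("TSR", tsr), ("Accuracy", accuracy), ("Precision", precision),
                    ("Recall", recall), ("F1", f1)]:
    print(f"{name}: {value:.2f}")
# TSR: 0.90, Accuracy: 0.94, Precision: 0.80, Recall: 0.89, F1: 0.84
```

Rounding to two decimals reproduces the values reported in the table.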

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).