Submitted: 30 January 2026

Posted: 02 February 2026


Abstract

Keywords:

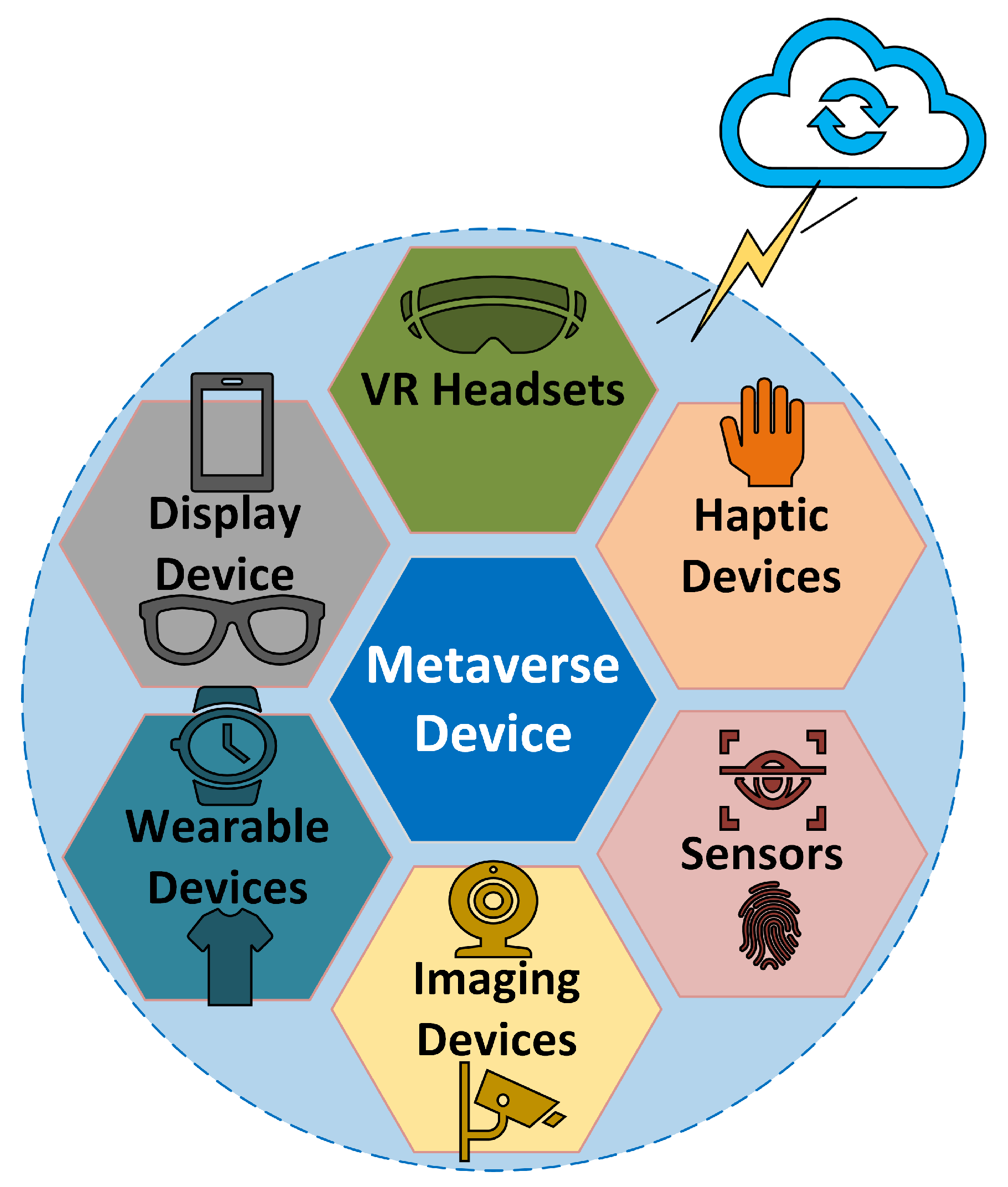

1. Introduction

2. Wireless Network Evolution and New Directions

3. Directions

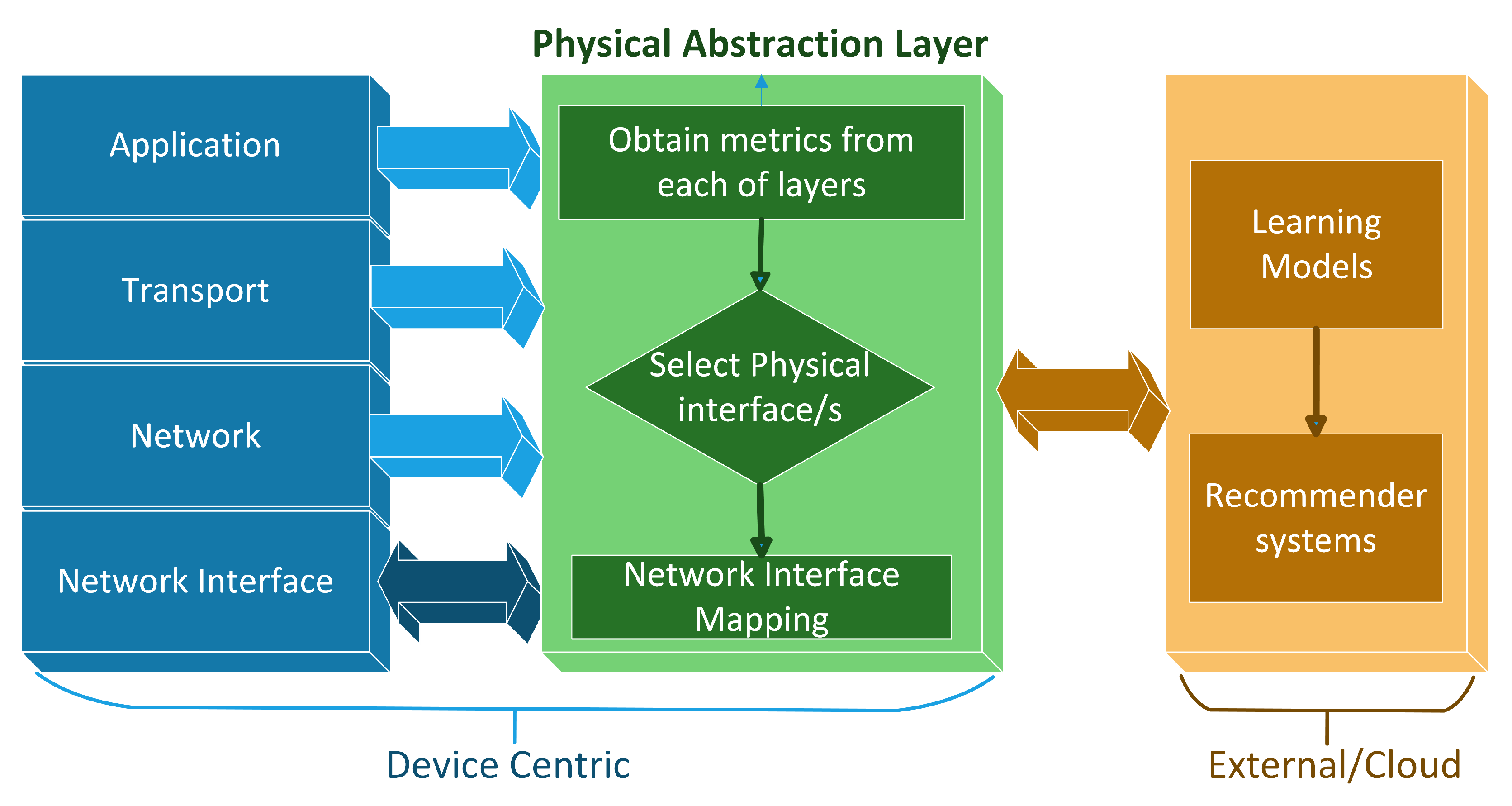

4. Proposed Network Model

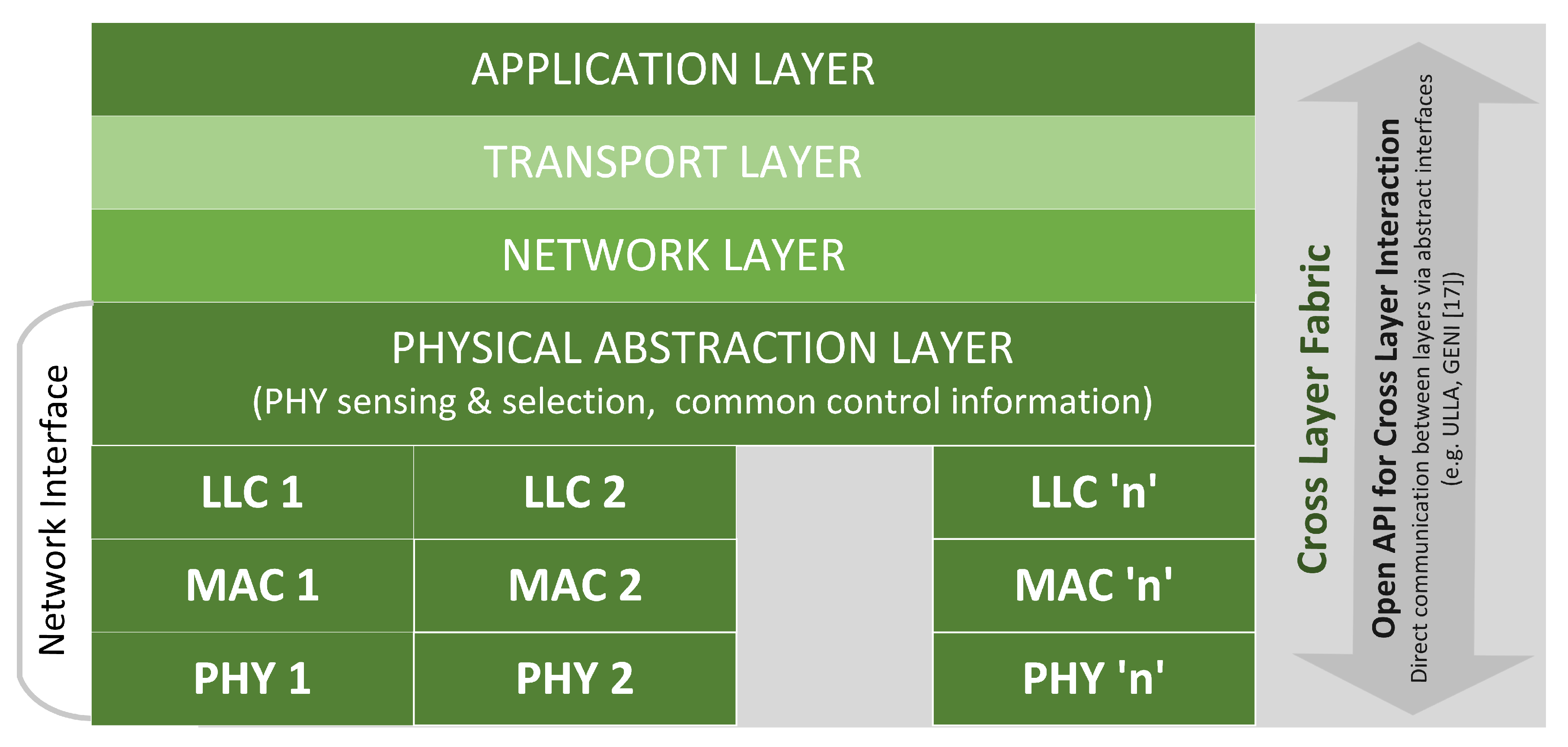

- 1.

- The application layer has access to the bottom layer information such as network congestion metrics, resource availability, link error rates, and physical layer metrics which enables higher layers to evaluate application needs and set parameters such as encryption length and compression rate accordingly.

- 2.

- Middle layers as Network and Transport layer will have access to upper layer information such as user application type, encryption length, and lower layer information such as physical resource utilization, and channel estimates which will enable them to provision, create or merge networks dynamically.

- 3.

- Lower layers such as the physical layer and link layer have access to upper layer information such as application requirements, network congestion rates and other layer’s information. This will enable lower layers to schedule resources efficiently.

- 4.

- In the conventional layered model, TCP session starts out with a three-way handshake between the end points. A different approach is to set up multiple sessions across multiple interfaces for an application and merge the sessions at the receiver. This will require intelligence at the transmitter to split the sessions and combine them at the receiver end.

- 5.

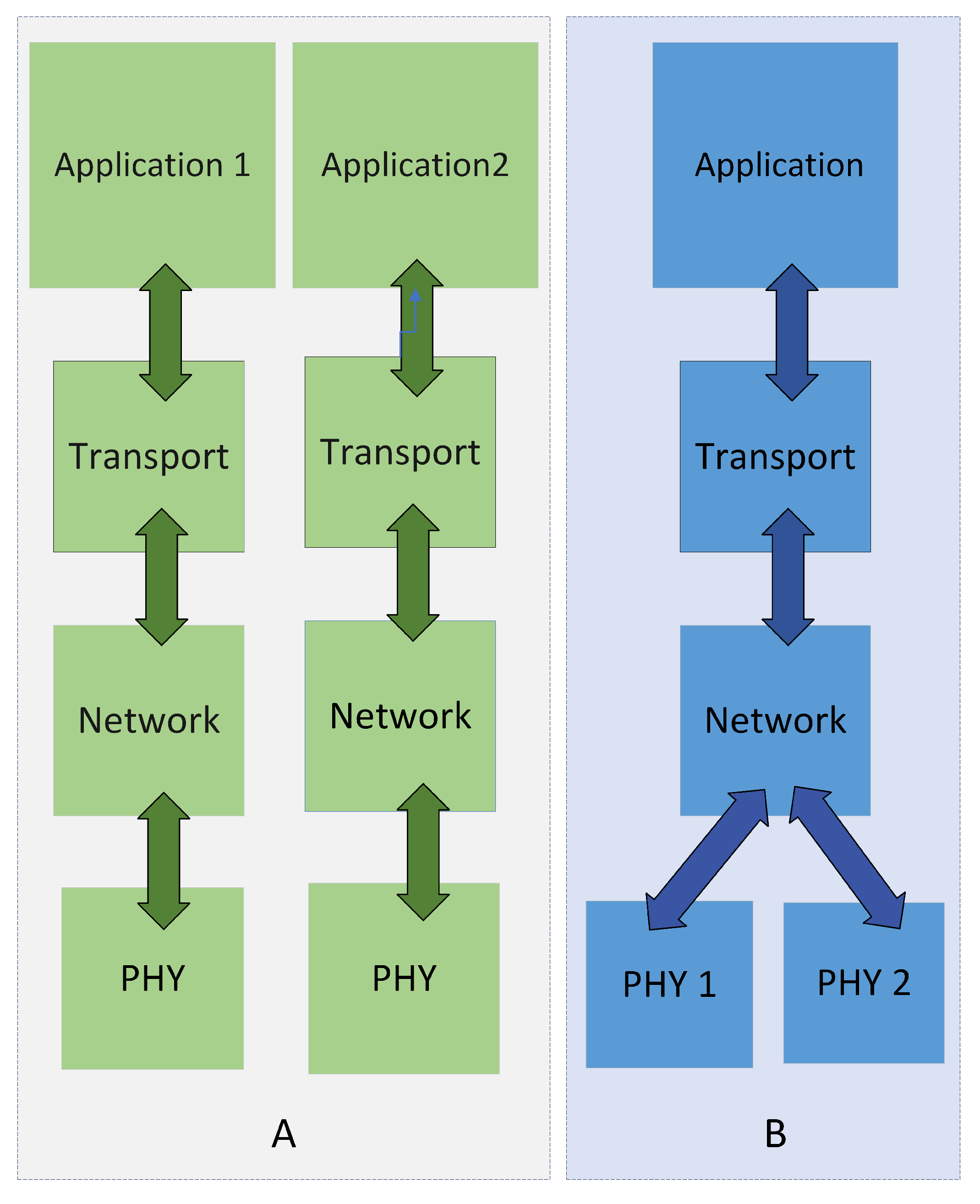

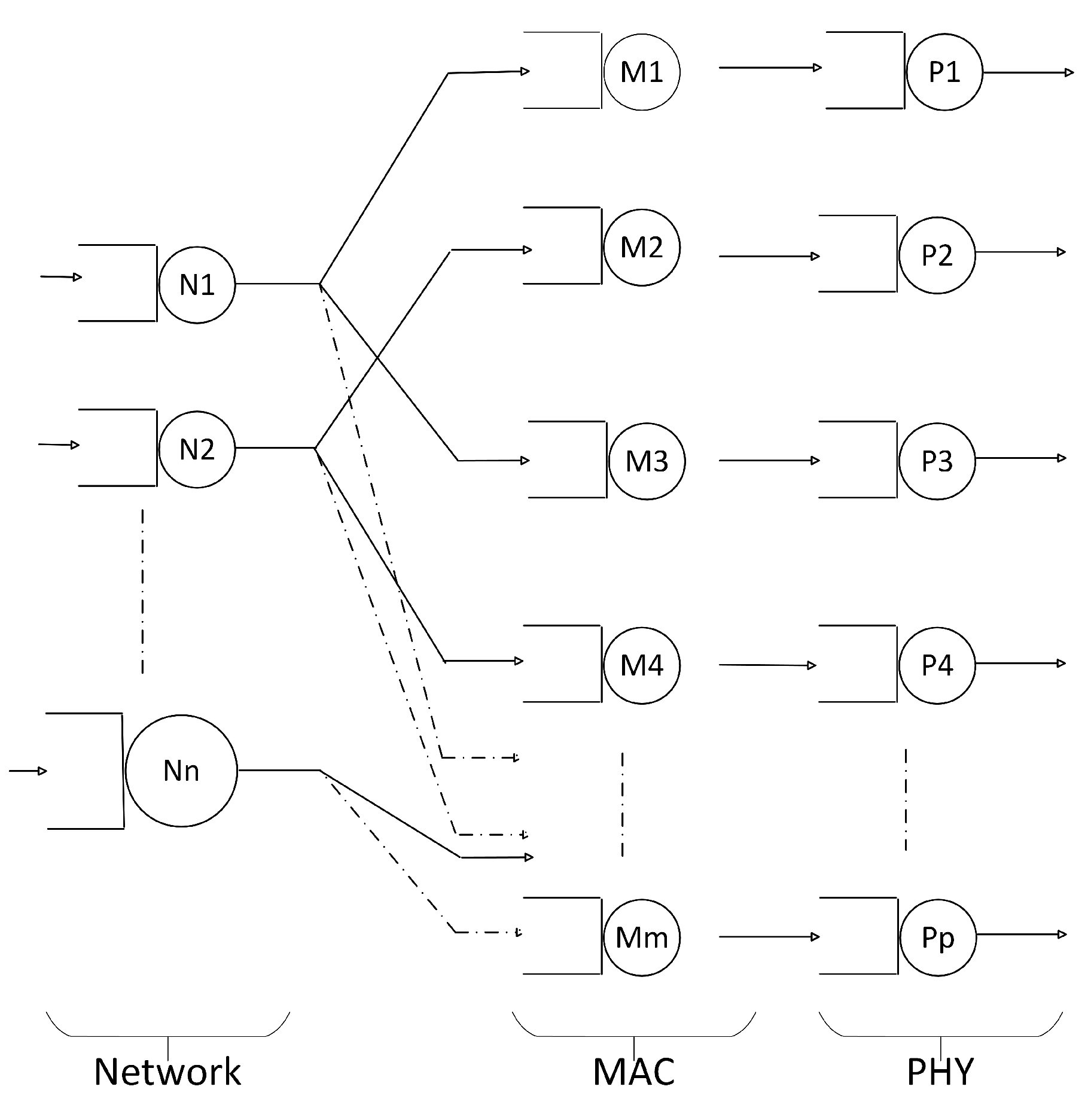

- Link layers transmit frames across multiple physical layer interfaces as illustrated in Figure 2. Conceptually the idea is to split a network flow across available physical media and reassemble frames at the receiving link layer. A physical abstraction layer is proposed to abstract network layer from the network interface layer. This splitting of information at the network interface layer is abstracted from the network layer as the distribution is dynamic.
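The splitting and reassembly described in the last item can be illustrated with a minimal sketch. It is not the authors' protocol: the `Frame` type, the round-robin dealing policy, the interface names, and the tiny `mtu` value are all assumptions chosen for illustration. The only idea taken from the text is that frames of one flow travel over several interfaces and are put back in order at the receiving link layer.

```python
from dataclasses import dataclass
from itertools import cycle


@dataclass(frozen=True)
class Frame:
    seq: int        # sequence number assigned by the sending link layer (assumed field)
    payload: bytes


def split_flow(data: bytes, interfaces: list[str], mtu: int = 4) -> dict[str, list[Frame]]:
    """Cut `data` into frames and deal them round-robin onto the interfaces."""
    if not interfaces:
        raise ValueError("at least one interface is required")
    queues: dict[str, list[Frame]] = {name: [] for name in interfaces}
    chunks = [data[i:i + mtu] for i in range(0, len(data), mtu)]
    # zip stops when the chunks run out, so cycle() never loops forever.
    for seq, (chunk, name) in enumerate(zip(chunks, cycle(interfaces))):
        queues[name].append(Frame(seq, chunk))
    return queues


def reassemble(received: list[Frame]) -> bytes:
    """Restore the original byte order from frames that arrived in any order."""
    return b"".join(f.payload for f in sorted(received, key=lambda f: f.seq))


if __name__ == "__main__":
    flow = b"hello cross-layer world"
    per_interface = split_flow(flow, ["wifi", "5g", "bluetooth"])
    # Simulate interfaces delivering at different speeds: arrival order differs
    # from sending order.
    arrived = per_interface["bluetooth"] + per_interface["5g"] + per_interface["wifi"]
    print(reassemble(arrived) == flow)
```

A dynamic scheme would replace the round-robin policy with one driven by per-interface state such as load or error rate. The sequence-number reassembly at the receiver would stay the same, which is what lets the network layer remain unaware of the split.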

5. Protocol Design

6. Comparative Analysis

7. Analytical Model

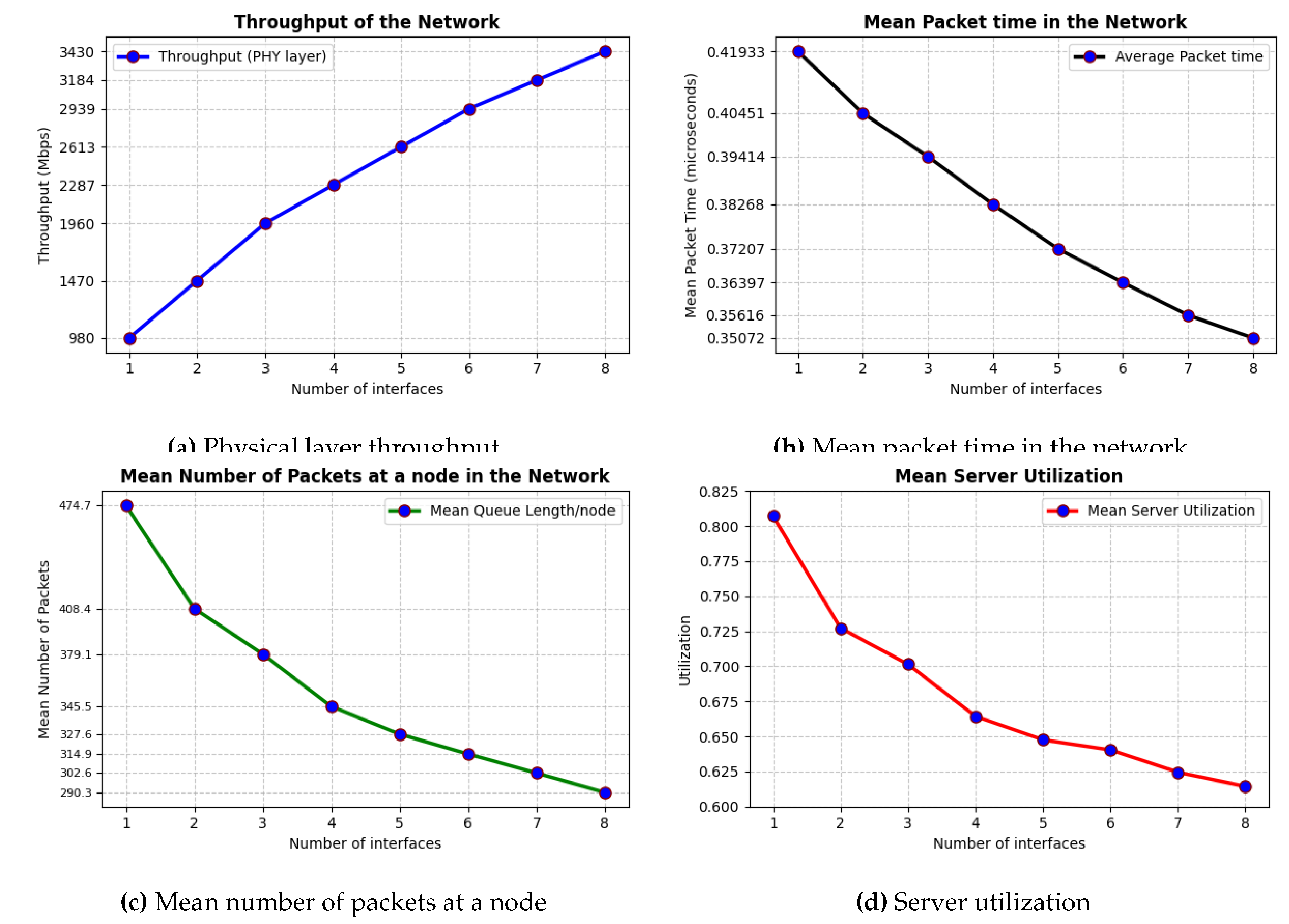

- Quantify Performance Gains: The model quantifies the performance gains that result from distributing network packets across multiple interfaces. The proposed approach is validated by quantifying the throughput increase between the network and interface layers. The analysis incorporates the overhead of queue processing and potential data losses at each interface to provide a realistic assessment of the achievable gains.

- Optimize Interface Allocation: The model also supports optimizing the number of interfaces for a given network flow characteristic. By analyzing how performance changes with the number of interfaces, a configuration can be identified that maximizes efficiency and minimizes latency for the specific demands of the network traffic.

- Each node is an FCFS queue with one or more exponential servers, each with a specific service rate.

- External arrivals to each node follow a Poisson process.

- After completing service at a node at the network layer, a packet may proceed to a node at the MAC layer and subsequently exit from the PHY layer.

| Notation | Description |
|---|---|
| n | Number of network interfaces |
| M | Number of nodes in the system |
| | Service rate which is equal to |
| | Arrival rate which is |
| | Effective service rate accounting for delays |
| | External arrival rate |
| | Sum of all external arrival rates |
| W | Mean waiting time of a packet in the network |
| N | Number of packets at a node |
| | Utilization of the server |
| | Probability of transition from node i to node j |
| | Service penalty |
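The stated assumptions (FCFS nodes with exponential servers, Poisson external arrivals, and probabilistic routing between nodes) match those of an open Jackson network. Assuming that is the intended model, the standard textbook relations are sketched below. The symbols $\lambda_i$, $\mu_i$, $\gamma_i$, $\gamma$, $\rho_i$, and $r_{ij}$ are assumed here for the arrival rate, service rate, external arrival rate, total external arrival rate, utilization, and transition probability. They may differ from the paper's own symbols. The expression for $N_i$ further assumes single-server nodes, and $W$ follows from Little's law.

```latex
\begin{align}
\lambda_j &= \gamma_j + \sum_{i=1}^{M} \lambda_i \, r_{ij}, \qquad j = 1, \dots, M \\
\rho_i &= \frac{\lambda_i}{\mu_i} < 1 \\
N_i &= \frac{\rho_i}{1 - \rho_i} \\
W &= \frac{1}{\gamma} \sum_{i=1}^{M} N_i, \qquad \gamma = \sum_{i=1}^{M} \gamma_i
\end{align}
```

The first line gives the traffic equations, which are solved for the per-node arrival rates. The remaining lines treat each node as an independent M/M/1 queue, which is what the product-form solution of a Jackson network permits.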

8. Analytical Modeling Results

1. Packet processing time

2. Net system throughput

3. Queue length

4. Server utilization
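To make the four metrics concrete, the following sketch evaluates them for a flow split over n identical interfaces, each treated as an M/M/1 queue. It is an illustration under stated assumptions and not the paper's model: the function name `interface_metrics` is hypothetical, packets are assumed to be assigned to interfaces at random with equal probability (so each sub-stream remains Poisson), and the service penalty is assumed to scale the service rate as `service_rate * (1 - penalty)`.

```python
def interface_metrics(total_arrival_rate: float, service_rate: float,
                      n: int, penalty: float = 0.0) -> dict[str, float]:
    """Steady-state M/M/1 metrics per interface for a flow split over n interfaces.

    Assumptions (not taken from the paper):
      * packets are assigned to interfaces uniformly at random,
      * the effective service rate is service_rate * (1 - penalty).
    """
    if n < 1:
        raise ValueError("n must be at least 1")
    if not 0.0 <= penalty < 1.0:
        raise ValueError("penalty must lie in [0, 1)")

    mu_eff = service_rate * (1.0 - penalty)   # assumed form of the effective service rate
    lam = total_arrival_rate / n              # arrival rate seen by one interface
    rho = lam / mu_eff                        # server utilization
    if rho >= 1.0:
        raise ValueError("unstable queue: utilization must be below 1")

    return {
        "utilization": rho,
        "queue_length": rho / (1.0 - rho),        # mean number of packets at the interface
        "processing_time": 1.0 / (mu_eff - lam),  # mean time a packet spends at the interface
        "throughput": total_arrival_rate,         # stable queues carry the full offered load
    }


if __name__ == "__main__":
    for n in (1, 2, 4):
        print(n, interface_metrics(total_arrival_rate=8.0, service_rate=10.0, n=n))
```

Sweeping `n` and `penalty` in this way is one simple route to the interface-count trade-off: adding interfaces lowers the per-interface load, while a larger penalty erodes the effective service rate.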

| Parameter | Description |
|---|---|
| | Exponential distribution |
| | Poisson distribution |
| | Uniform distribution for service penalty |
| n | Number of MAC-PHY interfaces |
| | Aggregation across 3 states |

9. Conclusion

Biographies

A. George (IEEE Senior Member) is a faculty member at the Higher Colleges of Technology, United Arab Emirates. He earned his doctorate in computer science from the University of Louisville, USA, and his master’s degree in computer science from Ball State University, USA. Dr. George has over a decade and a half of industry and academic experience at organizations such as Kyocera, National Instruments, and Alliance University. His primary areas of interest include wireless networks, distributed computing, and machine learning. He has published in reputed journals, including Elsevier journals and IJPCC. His most recent publication is the book Towards Wireless Heterogeneity in 6G Networks, published by CRC Press (April 2024).

M. W. Hussain is an Associate Professor in the School of Computing and Information Technology at Reva University, Bangalore. He received his PhD in computer science from the National Institute of Technology Meghalaya, Shillong, and his master’s degree in computer science from the National Institute of Technology Arunachal Pradesh. His research interests include software-defined networking and big data. His work has appeared in reputed venues, including the IEEE Internet of Things Journal and other journals published by IEEE, Elsevier, and Wiley.


| Design Element | Objective | Description |
|---|---|---|
| Cross-Layer Optimization – Layer Interfaces and Message Format | – Exchange information across layers. – Design and deploy middleware to exchange messages across layers. | – Define message format across layers. – Specify data model for an open API across layers. |
| Cross-Layer Optimization – Best Outcome | – Apply constrained optimization at each layer. – Avoid optimization loops where layers counteract each other. | – Allow layers to enable/disable cross-layer optimizations. – Ensure consistent actions at each layer across devices. |
| Flexible Physical Layer – Transmission Management | – Distribute network flow over multiple interfaces. – Manage retransmission policy across radio interfaces. | – Decide retransmission on same or other interfaces. – Detect and retransmit with minimal delay and jitter. |
| Flexible Physical Layer – Transmission Flexibility | – Discover and utilize appropriate radio interfaces. – Transmit efficiently across selected interfaces. | – Dynamically segregate traffic across interfaces. – Select interfaces ensuring consistent performance. |
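The first design element, a message format and middleware for exchanging information across layers, can be pictured with a small in-process sketch. Everything named here is hypothetical and chosen only for illustration: the `CrossLayerMessage` fields, the `CrossLayerFabric` publish/subscribe bus, the `network_congestion` metric name, and the 0.7 threshold in the example policy. The sketch only shows how an upper layer might react to a lower-layer metric, for example by changing its compression rate.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class CrossLayerMessage:
    source_layer: str   # e.g. "physical", "link", "network" (assumed vocabulary)
    metric: str         # e.g. "network_congestion" (assumed metric name)
    value: float


class CrossLayerFabric:
    """In-process publish/subscribe bus standing in for cross-layer middleware."""

    def __init__(self) -> None:
        self._subscribers: dict[str, list[Callable[[CrossLayerMessage], None]]] = defaultdict(list)

    def subscribe(self, metric: str, handler: Callable[[CrossLayerMessage], None]) -> None:
        """Register a layer's handler for one metric."""
        self._subscribers[metric].append(handler)

    def publish(self, message: CrossLayerMessage) -> int:
        """Deliver the message to every subscriber; return how many received it."""
        handlers = self._subscribers.get(message.metric, [])
        for handler in handlers:
            handler(message)
        return len(handlers)


class ApplicationLayer:
    """Toy upper layer that adapts its compression level to reported congestion."""

    def __init__(self) -> None:
        self.compression_level = 1

    def on_congestion(self, message: CrossLayerMessage) -> None:
        # Hypothetical policy: compress harder when congestion exceeds 0.7.
        self.compression_level = 9 if message.value > 0.7 else 1


if __name__ == "__main__":
    fabric = CrossLayerFabric()
    app = ApplicationLayer()
    fabric.subscribe("network_congestion", app.on_congestion)
    fabric.publish(CrossLayerMessage("network", "network_congestion", 0.9))
    print(app.compression_level)
```

Routing all exchanges through one typed message keeps layers decoupled: a layer that does not subscribe to a metric is unaffected by it, which is one way to let layers enable or disable cross-layer optimizations.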

| Feature | TCP/IP | Proposed Model |
|---|---|---|
| Layer-centric | Layering in TCP/IP emerged as a by-product of merging existing protocols. Hence, it fails to represent protocol stacks other than the TCP/IP suite (e.g., a Bluetooth connection). | The proposed model is based on TCP/IP but is fine-grained: layers are functionally equipped regardless of the technology at each layer. |
| Function separation | The TCP/IP model lacks a clear separation between the services and functions at each layer, which might cause inconsistency. | The model revolves around separating functions into layers, with a focus on layer independence. |
| Protocol adaptation | Protocol and layer boundaries are rigid, which restricts the optimization of protocols for future application scenarios. | Intelligent protocol adaptations or optimizations can be made based on the application scenario, as layers are not fully agnostic. |
| Cross-layer visibility | Layer interfaces are closed and offer no visibility for constrained optimizations that span layer boundaries. | Individual layers can make optimal decisions, with the cross-layer fabric providing enhanced visibility. |
| Layer dependence | Linear mapping from the application layer to the network interface layer, where a single application is attached to a single radio interface. | The physical abstraction layer removes the linear mapping from the application layer to the network interface layer, so a single application can be attached to multiple radio interfaces. |
| Openness | A closed set of interfaces offers limited functionality to improve network performance. | The cross-layer fabric makes information accessible across layers via an open API through which layers can communicate directly with each other. |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).