Submitted: 24 January 2026

Posted: 26 January 2026

Abstract

Keywords:

1. Introduction

2. Materials and Methods

2.1. Sample and Study Scope Description

2.2. Experimental Design and Control Setup

2.3. Measurement Procedures and Quality Control

2.4. Data Processing and Model Formulation

2.5. Statistical Analysis and Reproducibility

3. Results and Discussion

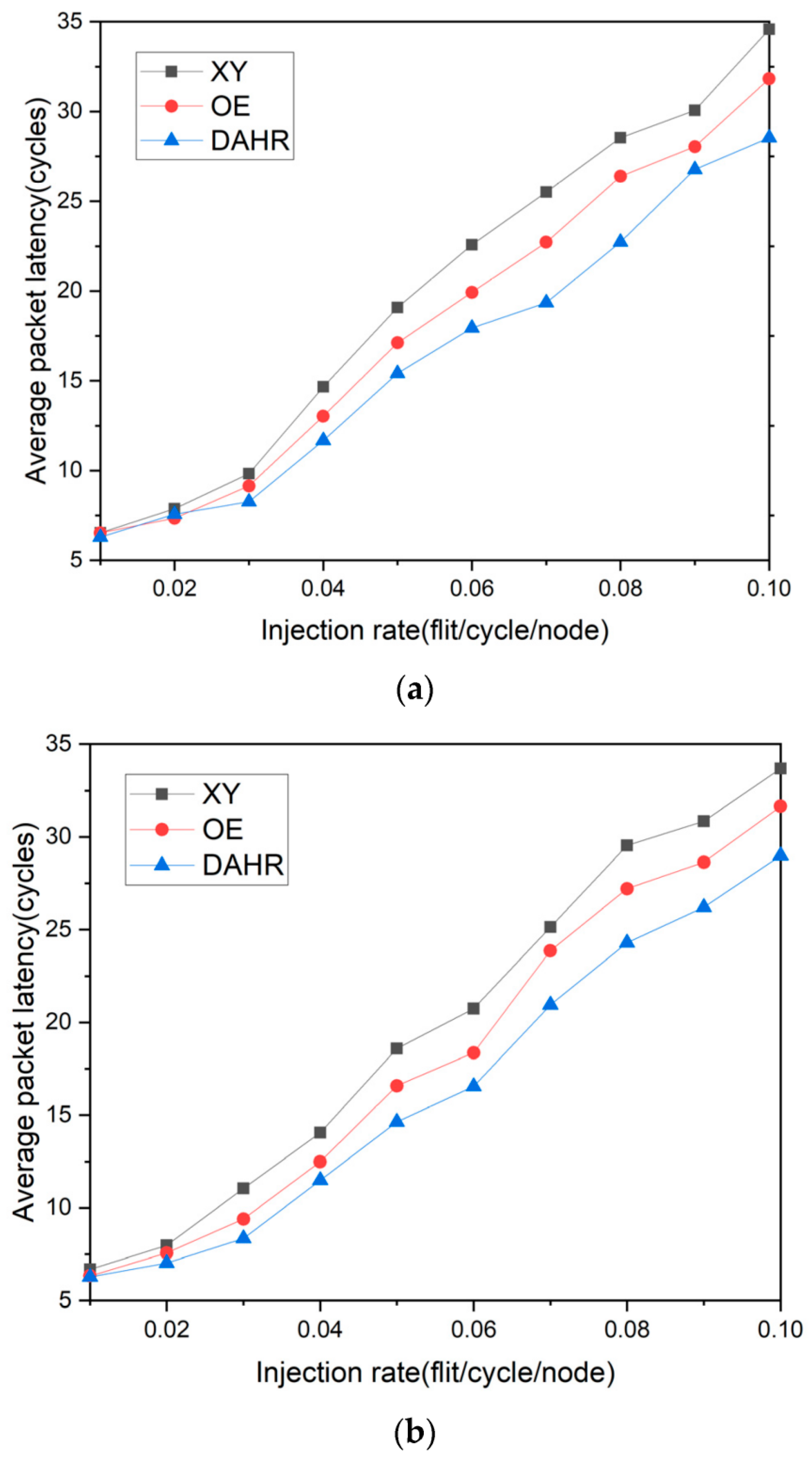

3.1. Latency–Reliability Behavior Under Transient Faults

3.2. Reliability Under Permanent and Mixed Fault Modes

3.3. Effect of Fault Clustering and Array Scaling

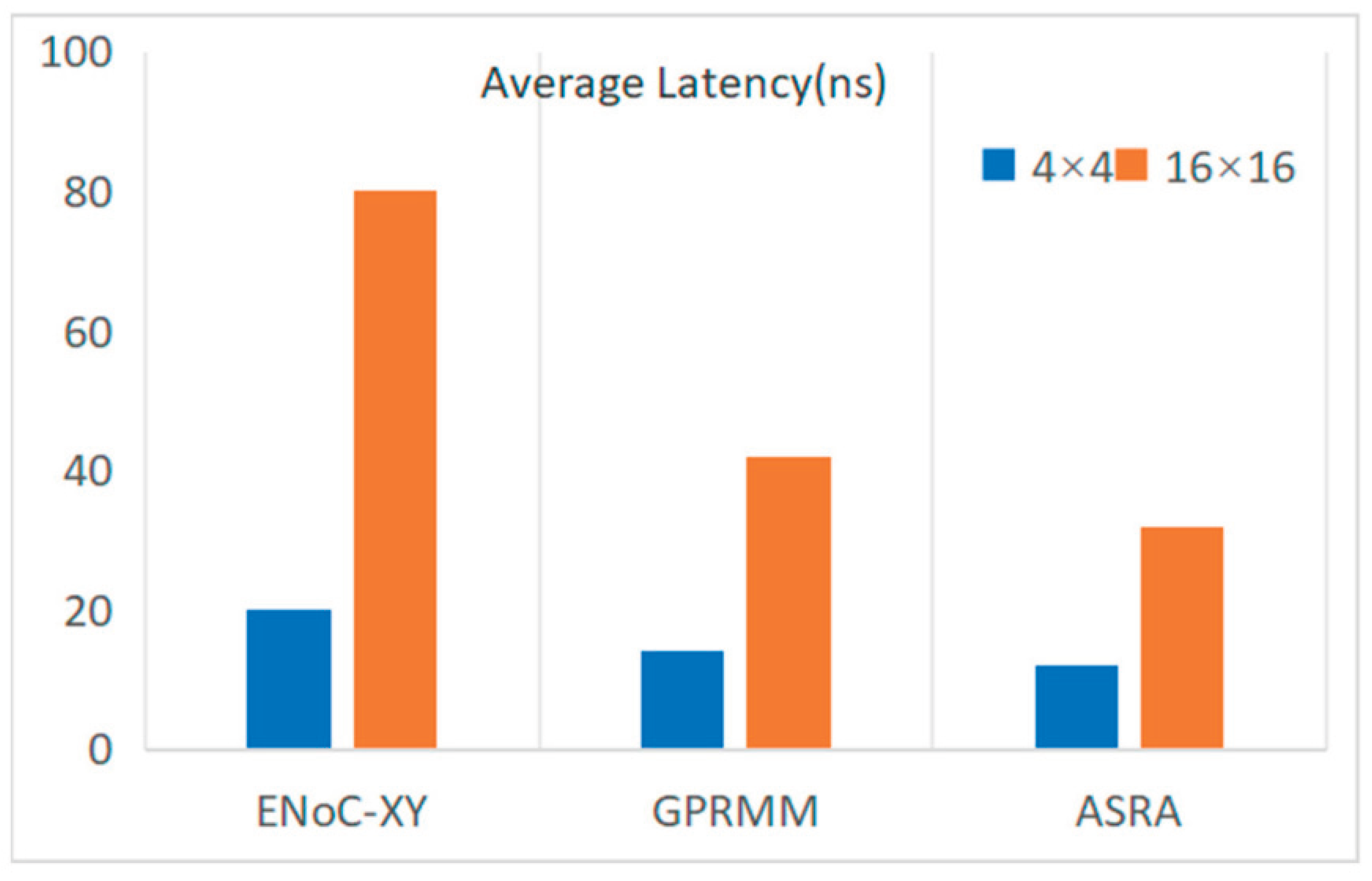

3.4. Overhead, Trade-Offs, and Comparison with Fixed Configurations

4. Conclusion

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).