Submitted: 17 January 2026

Posted: 21 January 2026

Abstract

Keywords:

1. Introduction

2. Literature Review

3. Approach

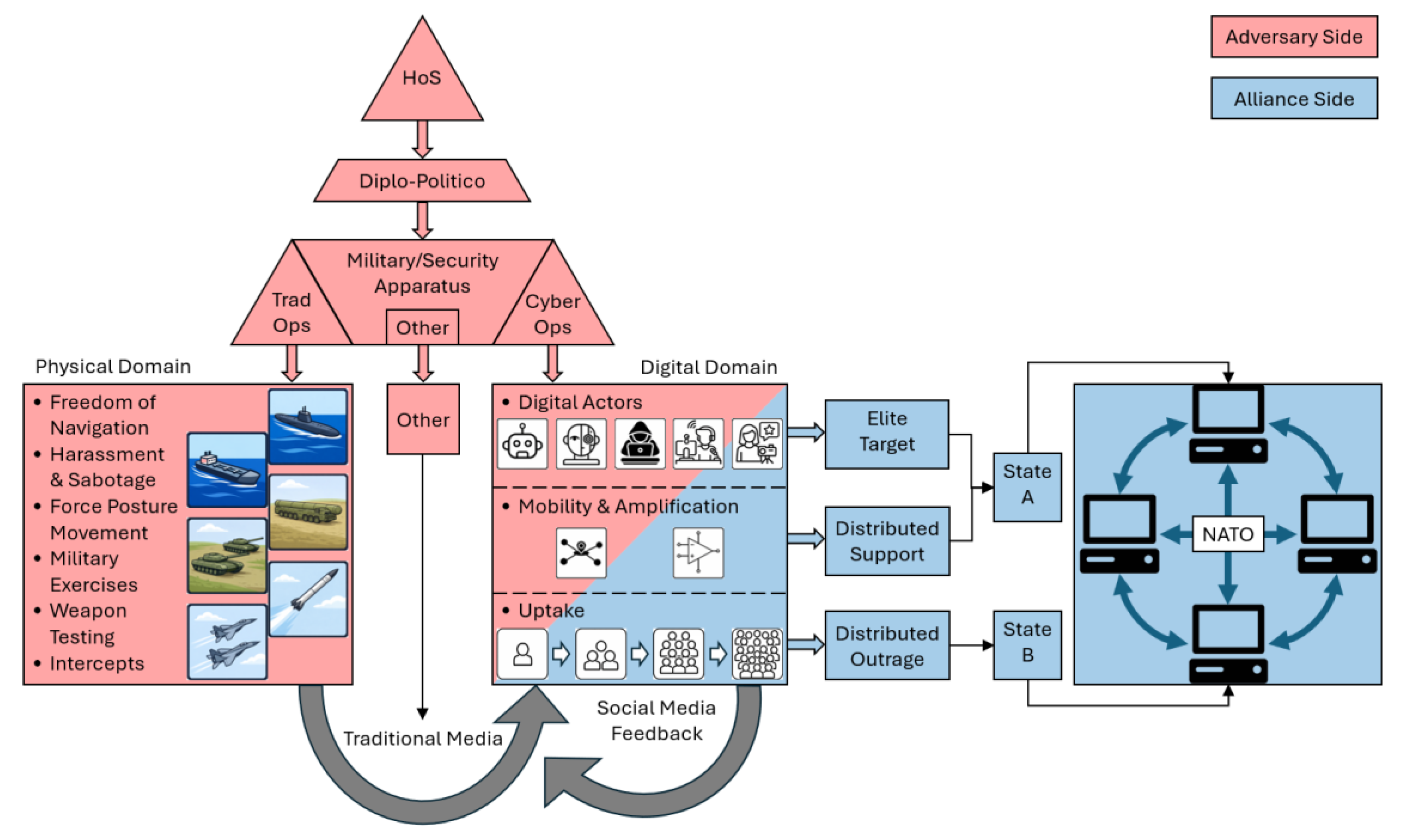

4. Adversary-Initiation

4.1. Traditional Operations

- freedom of navigation movements assert access and transit rights and contest jurisdictional claims through routine passage in disputed or sensitive spaces;

- harassment and sabotage introduce friction, disruption, or damage while remaining below thresholds that would compel formal military response or attribution;

- force posture adjustments modify basing, readiness, or deployment configurations to signal capability, commitment, or flexibility without direct engagement;

- military exercises rehearse operations and interoperability in a manner that is visible, repeatable, and plausibly defensive;

- weapons testing demonstrates technological capability and credibility while remaining temporally and geographically bounded; and

- close encounters such as intercepts probe behavioral norms and thresholds through controlled proximity and interaction.

4.2. Other

- doctrinal and conceptual production defines what counts as realistic, responsible, or credible security thinking through strategy documents and official concepts;

- intelligence boundary management calibrates uncertainty through selective emphasis, declassification, or silence, using ambiguity as a resource rather than a failure;

- lawfare and normative preparation establishes legal and historical frames around sovereignty, treaties, and jurisdiction in advance of contestation;

- resource and industrial framing presents infrastructure, energy, and dependency issues as technical or economic facts while encoding security logics of scarcity and inevitability; and

- counterfactual and futures conditioning narrows perceived possibilities through war games, scenarios, and strategic foresight that circulate indirectly via expert communities.

4.3. Cyber Operations

- disinformation operations deliberately seed false or misleading content in order to shape belief, trust, or perceived reality;

- misinformation amplification increases the visibility and reach of misleading claims regardless of their original source, often through coordinated networks;

- coordinated inauthentic behavior deploys networks of accounts that conceal coordination or identity to simulate organic consensus or grassroots activity;

- automation and computational propaganda use political bots and automated agents to manipulate visibility, ranking, and perceived popularity;

- cyborg operations combine human control with automation, producing accounts that shift between bot-like and human-like behavior over time;

- troll and persona management employs human-operated accounts to provoke, antagonize, distract, and polarize through identity performance and conflict generation; and

- hack-and-leak as cyber-enabled influence releases authentic but selectively obtained material through digital means in order to shape media narratives and political salience.

5. Media

6. Digital Environment

7. Political Salience

8. NATO Network

9. Implications

10. Conclusions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Jordan, J. International Competition Below the Threshold of War: Toward a Theory of Gray Zone Conflict. Journal of Strategic Security 2020, 14, 1–24. [Google Scholar] [CrossRef]

- Dobias, P.; Christensen, K. The 'Grey Zone' and Hybrid Activities. Connections: The Quarterly Journal 2022, 21, 41–54. [Google Scholar] [CrossRef]

- Cormac, R.; Aldrich, R.J. Grey is the new black: covert action and implausible deniability. International Affairs 2018, 94, 477–494. [Google Scholar] [CrossRef]

- Shelest, H.; Parachini, J.V. Why Does Russia Disinform About Biological Weapons? Ukraine Analytica 2023, 1, 3–17. https://ukraine-analytica.org/wp-content/uploads/Shelest3.pdf.

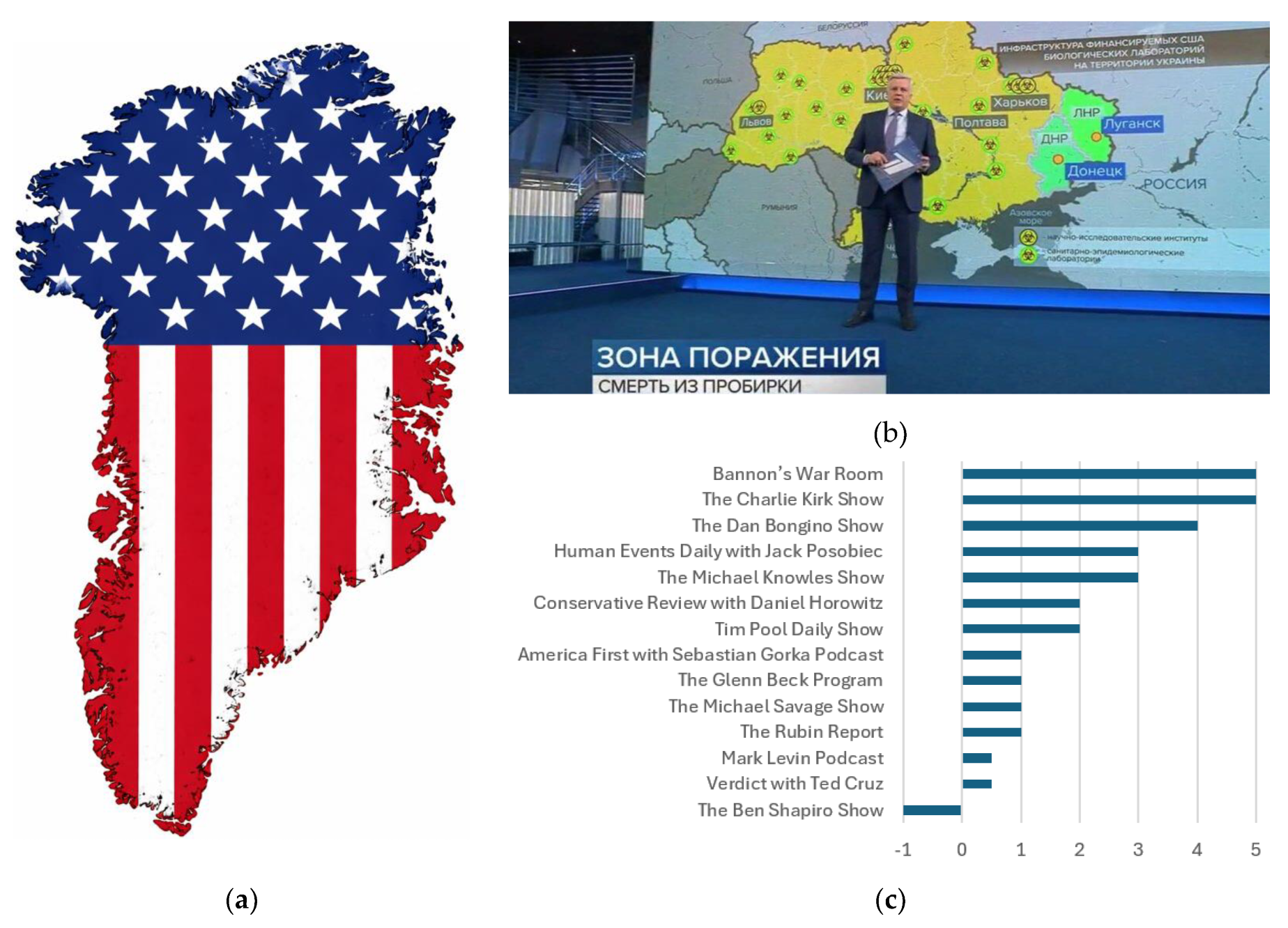

- Brandt, J.; Wirtschafter, V.; Danaditya, A. Popular Podcasters Spread Russian Disinformation about Ukraine Biolabs. Brookings TechStream 2022, 23, https://www.brookings.edu/articles/popular-podcasters-spread-russian-disinformation-about-ukraine-biolabs/.

- Mälksoo, M. A ritual approach to deterrence: I am, therefore I deter. European Journal of International Relations 2020, 27, 53–78. [Google Scholar] [CrossRef]

- Hyzen, A.; Van den Bulck, H. “Putin’s War of Choice”: U.S. Propaganda and the Russia–Ukraine Invasion. Journalism and Media 2024, 5, 233–254. [Google Scholar] [CrossRef]

- Ruiz-Incertis, R.; Tuñón-Navarro, J. European Institutional Discourse Concerning the Russian Invasion of Ukraine on the Social Network X. Journalism and Media 2024, 5, 1646–1683. [Google Scholar] [CrossRef]

- Ruiz-Incertis, R.; Tuñón-Navarro, J. EU Digital Communication in Times of Hybrid Warfare: The Case of Russia and Ukraine on X. Information 2025, 16, 825. [Google Scholar] [CrossRef]

- Selvarajah, S.; Fiorito, L. Media, Public Opinion, and the ICC in the Russia–Ukraine War. Journalism and Media 2023, 4, 760–789. [Google Scholar] [CrossRef]

- Pallarés-Renau, M.; Miquel-Segarra, S.; López-Font, L. Red Cross Presence and Prominence in Spanish Headlines during the First 100 Days of War in Ukraine. Social Sciences 2023, 12, 368. [Google Scholar] [CrossRef]

- Rožukalne, A.; Kažoka, A.; Siliņa, L. “Are Journalists Traitors of the State, Really?”—Self-Censorship Development during the Russian–Ukrainian War: The Case of Latvian PSM. Social Sciences 2024, 13, 350. [Google Scholar] [CrossRef]

- Ronzhyn, A.; Batlle Rubio, A.; Cardenal, A.S. Affordances of Wartime Collective Action on Facebook. Journalism and Media 2025, 6, 194. [Google Scholar] [CrossRef]

- Morais, R.; Piñeiro-Naval, V.; Blanco-Herrero, D. Beyond Information Warfare: Exploring Fact-Checking Research About the Russia–Ukraine War. Journalism and Media 2025, 6, 48. [Google Scholar] [CrossRef]

- Sánchez-del-Vas, R.; Tuñón-Navarro, J. Beyond the Battlefield: A Cross-European Study of Wartime Disinformation. Journalism and Media 2025, 6, 115. [Google Scholar] [CrossRef]

- Skarpa, P.E.; Simoglou, K.B.; Garoufallou, E. Russo-Ukrainian War and Trust or Mistrust in Information: A Snapshot of Individuals’ Perceptions in Greece. Journalism and Media 2023, 4, 835–852. [Google Scholar] [CrossRef]

- Melotti, G.; Villano, P.; Pivetti, M. Social Representations of the War in Italy during the Russia/Ukraine Conflict. Social Sciences 2024, 13, 545. [Google Scholar] [CrossRef]

- Domalewska, D. Online Verbal Aggression on Social Media During Times of Political Turmoil: Discursive Patterns from Poland’s 2020 Protests and Election. Journalism and Media 2025, 6, 146. [Google Scholar] [CrossRef]

- Qiu, X.; Yu, W.; Huang, Y.; Yang, J. Emotional Geopolitics of War: Disparities in Russia–Ukraine War Coverage Between CGTN and VOA. Journalism and Media 2025, 6, 208. [Google Scholar] [CrossRef]

- Hajek, A.; Kretzler, B.; König, H.-H. Social Media Addiction and Fear of War in Germany. Psychiatry International 2022, 3, 313–319. [Google Scholar] [CrossRef]

- Sufi, F. Novel Application of Open-Source Cyber Intelligence. Electronics 2023, 12, 3610. [Google Scholar] [CrossRef]

- Vyas, P.; Vyas, G.; Dhiman, G. RUemo—The Classification Framework for Russia-Ukraine War-Related Societal Emotions on Twitter through Machine Learning. Algorithms 2023, 16, 69. [Google Scholar] [CrossRef]

- Gorostiza-Cerviño, A.; Serna-Ortega, Á.; Moreno-Cabanillas, A.; Almansa-Martínez, A.; Castillo-Esparcia, A. Examining the Roles, Sentiments, and Discourse of European Interest Groups in the Ukrainian War through X (Twitter). Information 2024, 15, 422. [Google Scholar] [CrossRef]

- Shu, Y.; Chen, X.; Di, X. Mobility Pattern Analysis during Russia–Ukraine War Using Twitter Location Data. Information 2024, 15, 76. [Google Scholar] [CrossRef]

- Sufi, F. Social Media Analytics on Russia–Ukraine Cyber War with Natural Language Processing: Perspectives and Challenges. Information 2023, 14, 485. [Google Scholar] [CrossRef]

- Skopik, F.; Pahi, T. Under false flag: using technical artifacts for cyber attack attribution. Cybersecurity 2020, 3, 8. [Google Scholar] [CrossRef]

- Rushing, B. Analysis of Media Influence on Military Decision-Making. In Proceedings of the International Conference on Cyber Warfare and Security, Johannesburg, South Africa, 2024; pp. 308-316. Available online: https://www.academia.edu/download/122060634/1918.pdf.

- Makhortykh, M.; Sydorova, M. Social media and visual framing of the conflict in Eastern Ukraine. Media, War & Conflict 2017, 10, 359–381. [Google Scholar] [CrossRef]

- Joseph, M.F.; Poznansky, M. Media technology, covert action, and the politics of exposure. Journal of Peace Research 2017, 55, 320–335. [Google Scholar] [CrossRef]

- Mustafa, H.; Luczak-Roesch, M.; Johnstone, D. Conceptualizing the evolving nature of computational propaganda: a systematic literature review. Annals of the International Communication Association 2025, 49, 45–60. [Google Scholar] [CrossRef]

- Wilson, B. Soft systems methodology: Conceptual model building and its contribution; Wiley: Chichester, UK, 2001. [Google Scholar]

- Nadolski, M.; Fairbanks, J. Complex systems analysis of hybrid warfare. Procedia Computer Science 2019, 153, 210–217. [Google Scholar] [CrossRef]

- Glaser, C.L. Political Consequences of Military Strategy: Expanding and Refining the Spiral and Deterrence Models. World Politics 1992, 44, 497–538. [Google Scholar] [CrossRef]

- George, A. The Need for Influence Theory and Actor-Specific Behavioral Models of Adversaries. Comparative Strategy 2003, 22, 463–487. [Google Scholar] [CrossRef]

- Altman, D. Advancing without Attacking: The Strategic Game around the Use of Force. Security Studies 2018, 27, 58–88. [Google Scholar] [CrossRef]

- Faizullaev, A.; Cornut, J. Narrative practice in international politics and diplomacy: the case of the Crimean crisis. Journal of International Relations and Development 2017, 20, 578–604. [Google Scholar] [CrossRef]

- Haelig, C.G. Political-Military Integration: The Relationship Between National Security Strategy and Changes in Military Doctrine in the United States Army and Marine Corps. Ph.D, Princeton University, United States -- New Jersey, 2023. Available online: https://www.proquest.com/dissertations-theses/political-military-integration-relationship/docview/2869881038/se-2.

- Alberts, D.S.; Hayes, R.E. Power to the Edge: Command, Control in the Information Age; CCRP Publication Series, 2003. [Google Scholar]

- Pedrozo, R. Close Encounters at Sea. Naval War College Review 2009, 62, 101–112, http://www.jstor.org/stable/26397037. [Google Scholar]

- Lindsay, J.R.; Gartzke, E. Politics by many other means: The comparative strategic advantages of operational domains. Journal of Strategic Studies 2022, 45, 743–776. [Google Scholar] [CrossRef]

- Lucas, E. The Coming Storm: Baltic Sea Security Report, Washington, DC; Center for European Policy Analysis: Washington, DC, 2015. Available online: https://ekspertai.eu/static/uploads/2014/01/Baltic%20Sea%20Security%20Report-%20(2)_compressed.pdf.

- Valeriano, B.; Jensen, B.M.; Maness, R.C. Cyber Strategy: The Evolving Character of Power and Coercion; Oxford University Press, 2018. [Google Scholar]

- Fitton, O. Cyber Operations and Gray Zones: Challenges for NATO. Connections 2016, 15, 109–119, http://www.jstor.org/stable/26326443. [Google Scholar]

- Schulze, M. Cyber in War: Assessing the Strategic, Tactical, and Operational Utility of Military Cyber Operations. In Proceedings of the 2020 12th International Conference on Cyber Conflict (CyCon), 26-29 May 2020, 2020; pp. 183-197. Available online.

- Howard, P.N.; Woolley, S.; Calo, R. Algorithms, bots, and political communication in the US 2016 election: The challenge of automated political communication for election law and administration. Journal of Information Technology & Politics 2018, 15, 81–93. [Google Scholar] [CrossRef]

- Ng, L.H.X.; Robertson, D.C.; Carley, K.M. Cyborgs for strategic communication on social media. Big Data & Society 2024, 11, 20539517241231275. [Google Scholar] [CrossRef]

- Di Salvo, P. “Hacking and information disorder: the weaponization of leaking”. Critical Studies in Media Communication 2025, 42, 83–88. [Google Scholar] [CrossRef]

- Hoskins, A.; O'Loughlin, B. War and Media; Polity Press, 2010. [Google Scholar]

- Durani, K.; Eckhardt, A.; Durani, W.; Kollmer, T.; Augustin, N. Visual audience gatekeeping on social media platforms: A critical investigation on visual information diffusion before and during the Russo–Ukrainian War. Information Systems Journal 2024, 34, 415–468. [Google Scholar] [CrossRef]

- Chadwick, A. The Hybrid Media System: Politics and Power; Oxford University Press, 2017. [Google Scholar]

- Bruns, A. Gatewatching and news curation; Peter Lang Publishing: New York, NY, 2018; Volume 113. [Google Scholar]

- McGregor, S.C. Social media as public opinion: How journalists use social media to represent public opinion. Journalism 2019, 20, 1070–1086. [Google Scholar] [CrossRef]

- Sacco, V.; Bossio, D. Using social media in the news reportage of War & Conflict: Opportunities and Challenges. The journal of media innovations 2015, 2, 59–76. [Google Scholar] [CrossRef]

- Loik, R. Undersea Hybrid Threats in Strategic Competition: The Emerging Domain of NATO–EU Defense Cooperation. Journal on Baltic Security 2024, 10, 1–25. [Google Scholar] [CrossRef]

- Składanowski, M.; Smuniewski, C.; Lukasik-Turecka, A. The Media’s Role in Preparing Russian Society for War with the West: Constructing an Image of Enemies and Allies in the Cases of Latvia, Poland, and Serbia (2014–2022). Journalism and Media 2025, 6, 79. [Google Scholar] [CrossRef]

- Meleshevich, K.; Schafer, B. Online information laundering: The role of social media. Alliance for Securing Democracy, 2018; 9, 8, https://www.gmfus.org/sites/default/files/InfoLaundering_final%20edited.pdf. [Google Scholar]

- Cordeiro, A. Digital Deceptions: Unveiling the Impact of Pseudo-Local News on Democracy and Crafting Countermeasures (Metric Media Case Study). Masters, Concordia University, 2025; Available online: https://spectrum.library.concordia.ca/id/eprint/995423/. [Google Scholar]

- Papacharissi, Z. Affective publics: Sentiment, technology, and politics; Oxford University Press, 2015. [Google Scholar]

- Gillespie, T. Custodians of the Internet: Platforms, content moderation, and the hidden decisions that shape social media; Yale University Press, 2018. [Google Scholar]

- Tsfati, Y.; Boomgaarden, H.G.; Strömbäck, J.; Vliegenthart, R.; Damstra, A.; Lindgren, E. Causes and Consequences of Mainstream Media Dissemination of Fake News: Literature Review and Synthesis. Annals of the International Communication Association 2020, 44, 157–173. [Google Scholar] [CrossRef]

- Bradshaw, S.; Howard, P.N. The global disinformation order: 2019 global inventory of organised social media manipulation, Project on Computational Propaganda: Oxford, UK, 2019; Available online: https://digitalcommons.unl.edu/cgi/viewcontent.cgi?article=1209&context=scholcom.

- Woolley, S.C. Bots and Computational Propaganda: Automation for Communication and Control. In Social Media and Democracy; Persily, N., Tucker, J.A., Eds.; SSRC Anxieties of Democracy; Cambridge University Press: Cambridge, 2020; pp. 89–110, https://www.cambridge.org/core/product/A15EE25C278B442EF00199AA660BFADD. [Google Scholar]

- Woolley, S.; Monaco, N. Amplify the Party, Suppress the Opposition: Social Media, Bots, and Electoral Fraud. Geo. L. Tech. Rev. 2019, 4, 447–461. [Google Scholar]

- Tufekci, Z. Algorithmic harms beyond Facebook and Google: Emergent challenges of computational agency. Colo. Tech. LJ 2015, 13, 203. [Google Scholar]

- Bennett, W.L.; Livingston, S. The disinformation order: Disruptive communication and the decline of democratic institutions. European Journal of Communication 2018, 33, 122–139. [Google Scholar] [CrossRef]

- Benkler, Y.; Faris, R.; Roberts, H. Network Propaganda: Manipulation, Disinformation, and Radicalization in American Politics; 2018. [Google Scholar] [CrossRef]

- Lazer, D.M.J.; Baum, M.A.; Benkler, Y.; Berinsky, A.J.; Greenhill, K.M.; Menczer, F.; Metzger, M.J.; Nyhan, B.; Pennycook, G.; Rothschild, D.; et al. The science of fake news. Science 2018, 359, 1094–1096. [Google Scholar] [CrossRef]

- Vosoughi, S.; Roy, D.; Aral, S. The spread of true and false news online. Science 2018, 359, 1146–1151. [Google Scholar] [CrossRef] [PubMed]

- Pesu, M.; Sinkkonen, V. Trans-atlantic (mis)trust in perspective: asymmetry, abandonment and alliance cohesion. Cambridge Review of International Affairs 2024, 37, 206–225. [Google Scholar] [CrossRef]

- Kunertova, D.; Schmitt, O. Assessing NATO’s cohesion: methods and implications. International Politics 2025, 62, 1097–1110. [Google Scholar] [CrossRef]

- Homolar, A.; Turner, O. Narrative alliances: the discursive foundations of international order. International Affairs 2024, 100, 203–220. [Google Scholar] [CrossRef]

- Snyder, G.H. Alliance politics; Cornell University Press, 2007. [Google Scholar]

- DeMets, S.A.; Spiro, E.S. Podcasts in the periphery: Tracing guest trajectories in political podcasts. Social Networks 2025, 82, 65–79. [Google Scholar] [CrossRef]

- Wilson, T.; Starbird, K. Cross-platform disinformation campaigns: lessons learned and next steps. Harvard Kennedy School Misinformation Review 2020, 1. [Google Scholar] [CrossRef]

- Dowling, D.O.; Johnson, P.R.; Ekdale, B. Hijacking Journalism: Legitimacy and Metajournalistic Discourse in Right-Wing Podcasts. Media and Communication; Vol 10, No 3 (2022): Journalism, Activism, and Social Media: Exploring the Shifts in Journalistic Roles, Performance, and Interconnectedness 2022, Vol 10. [Google Scholar] [CrossRef]

- Rasheed, H.; Cuddy, L.; Molokach, B.; Nam, J.; Feuerstein, S.; Holbert, L.; Young, D. From Punchlines to Politics: The Joe Rogan Experience as a Case Study of the Politicization of Apolitical Spaces in the US. APSA Preprints 2025. [Google Scholar] [CrossRef]

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).