Submitted: 15 January 2026

Posted: 19 January 2026

Abstract

Keywords:

1. Introduction

1.1. Problem Definition

1.2. Proposed Method

1.3. Contributions

2. Related Work

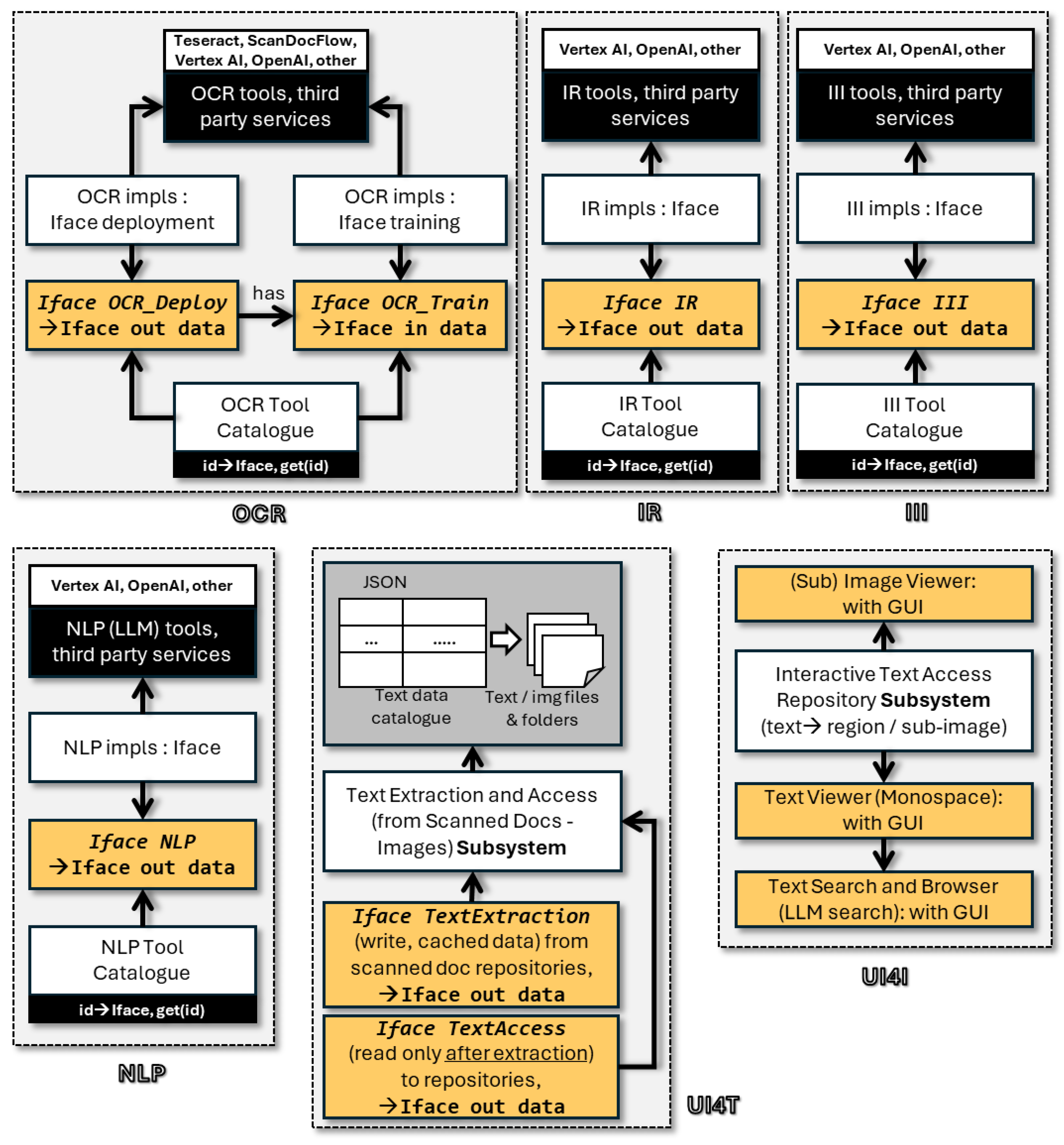

3. Architecture

3.1. Components

- OCR (Optical Character Recognition): scans text from images and returns the recognized text, split into sentences, words and characters, together with the geometry of the respective bounding boxes in the original binary image

- NLP (Natural Language Processing): accepts text and applies functions such as spell checking and data record extraction based on described record prompts (entity classes), returning the results together with information on the original text fragments (those that were corrected or from which content was extrapolated)

- IR (Image Recognition): scans an image to capture specific sub-images (first level containment) and returns all recognized occurrences as bounding boxes

- III or I3 (Image in Image): scans an image to recognize sub-images within other images (second level containment) and returns all recognized occurrences as bounding boxes including the outer and inner ones

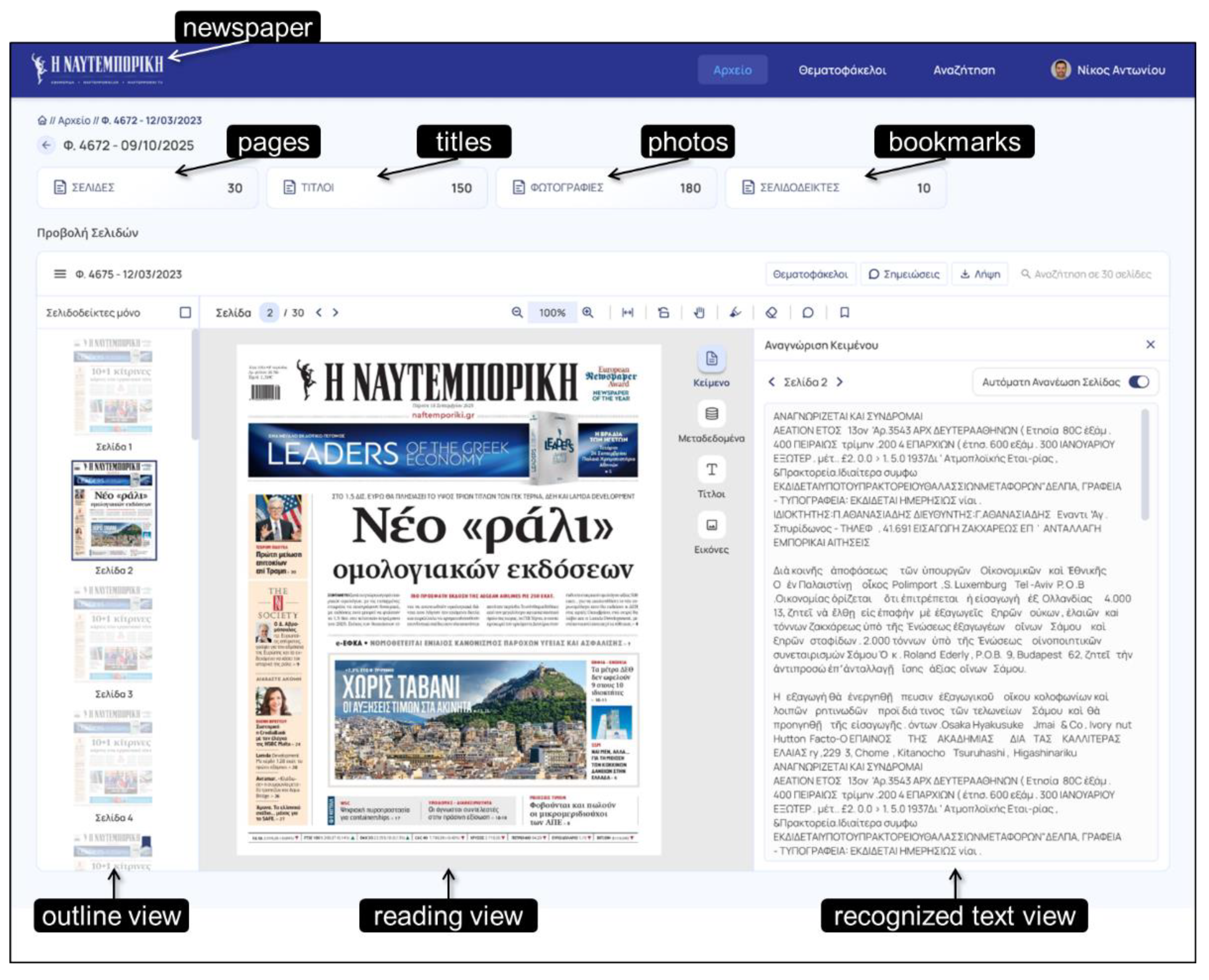

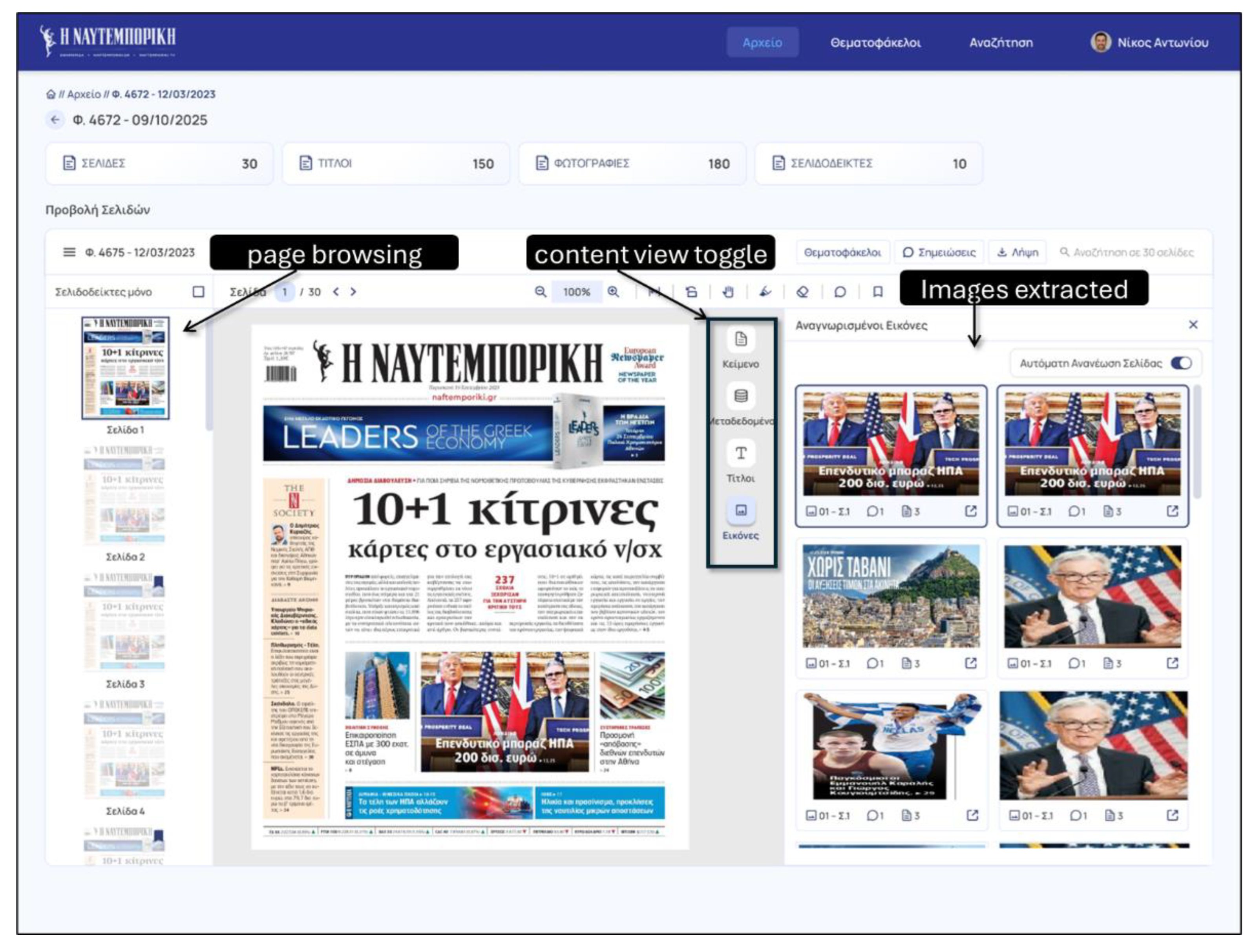

- UI4T (UI for Text): a non-AI component that accepts as input the outcome of OCR or NLP components, offering many interactive features including browsing, text search, bounding box reviewing, lens feature supporting juxtaposition of text with the respective parts in the original image, etc.

- UI4I (UI for Images): a non-AI component that accepts as input the outcome of IR or III components, enabling users to interactively review and even edit (scaling, stretching, selection and cropping) all recognized sub-images together with their placement in the original images

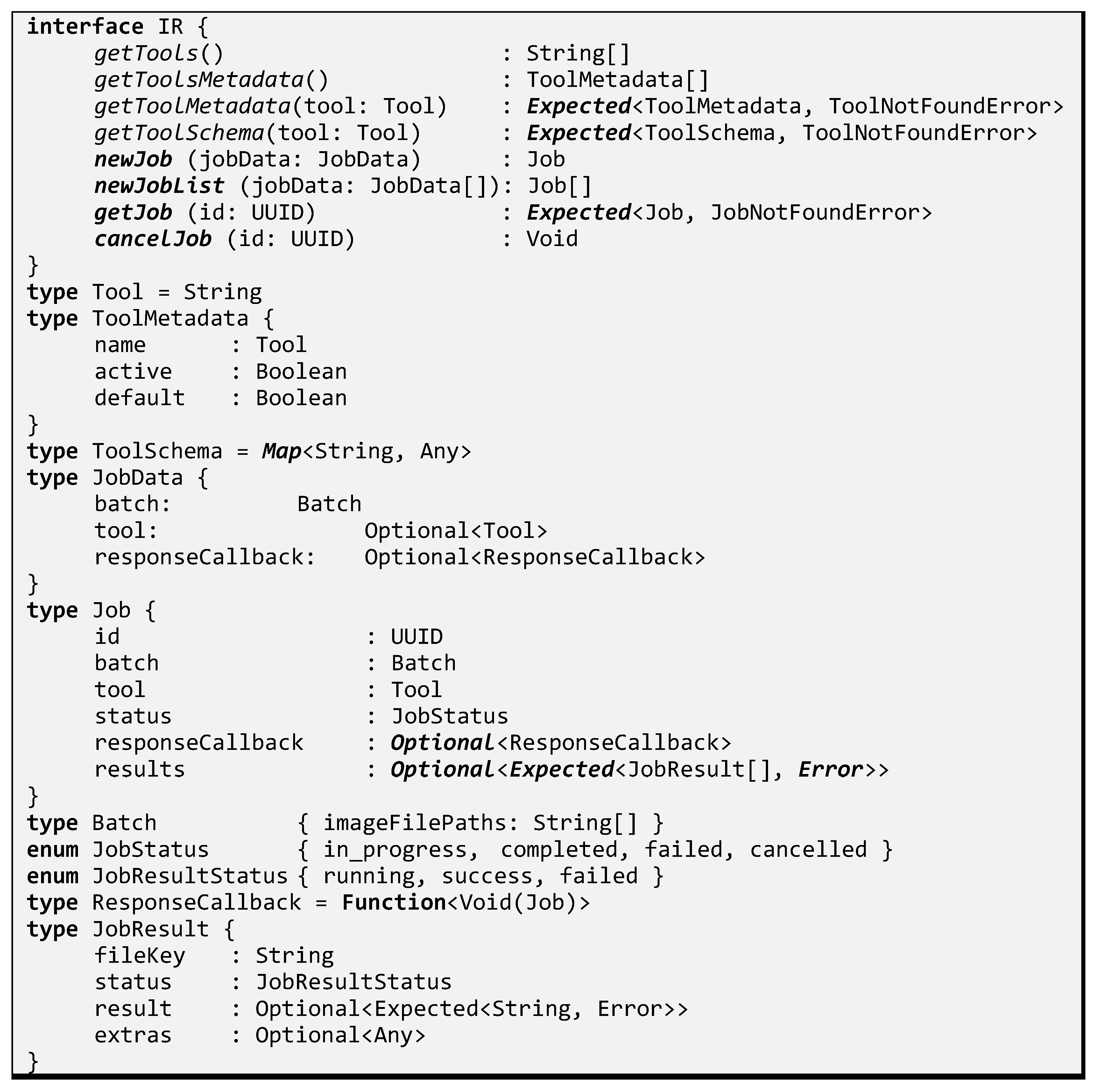
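The outputs described for the components above (text split into sentences, words and characters with bounding boxes, and sub-images with first- and second-level containment) can be sketched as a small data model. This is only an illustrative sketch: the class names `BoundingBox`, `TextSpan` and `Region` and the helper `flatten` are assumptions made for this example and are not taken from the system itself.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass(frozen=True)
class BoundingBox:
    """Axis-aligned box in pixel coordinates of the original image."""
    left: int
    top: int
    width: int
    height: int

    def contains(self, other: "BoundingBox") -> bool:
        """True if `other` lies fully inside this box."""
        return (
            self.left <= other.left
            and self.top <= other.top
            and self.left + self.width >= other.left + other.width
            and self.top + self.height >= other.top + other.height
        )


@dataclass
class TextSpan:
    """Recognized text at one level: "sentence", "word" or "char"."""
    text: str
    box: BoundingBox
    level: str
    children: List["TextSpan"] = field(default_factory=list)


@dataclass
class Region:
    """A recognized sub-image (IR); `inner` holds second-level containment (III)."""
    label: str
    box: BoundingBox
    inner: List["Region"] = field(default_factory=list)


def flatten(span: TextSpan, level: str) -> List[TextSpan]:
    """Collect the span itself or all of its descendants at the given level."""
    if span.level == level:
        return [span]
    found: List[TextSpan] = []
    for child in span.children:
        found.extend(flatten(child, level))
    return found
```

A UI component such as UI4T could then walk a sentence down to its words with `flatten(sentence, "word")` and use each `box` to highlight the matching area of the scanned page.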

3.2. Interfaces

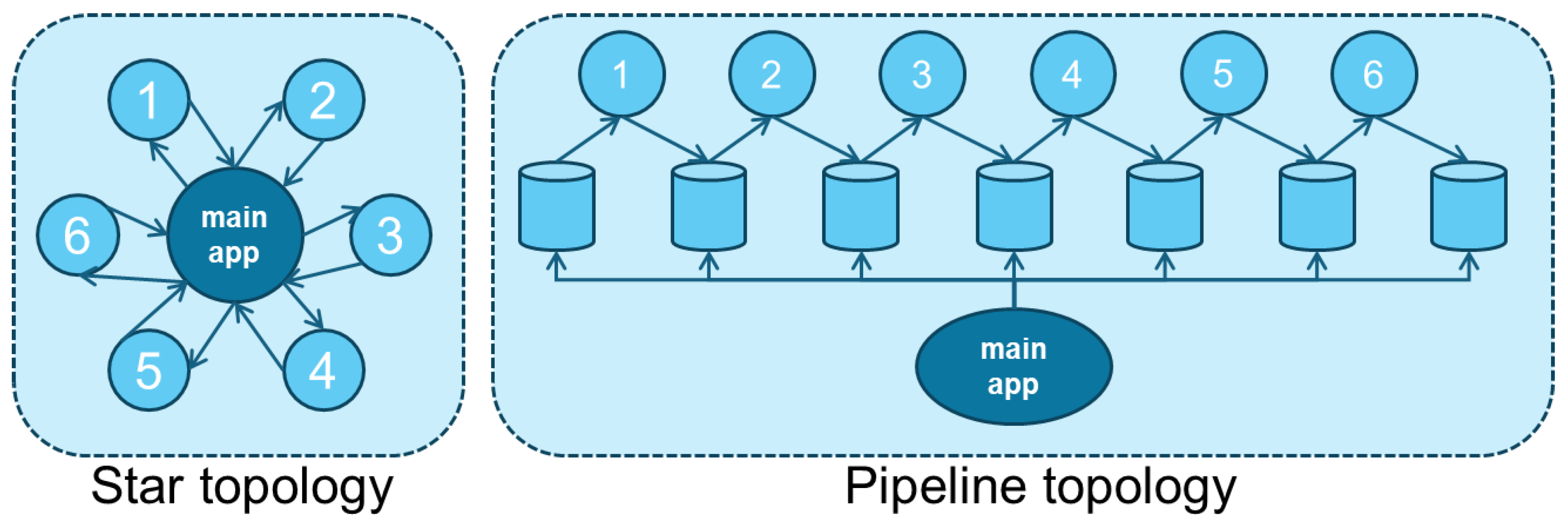

3.3. Application

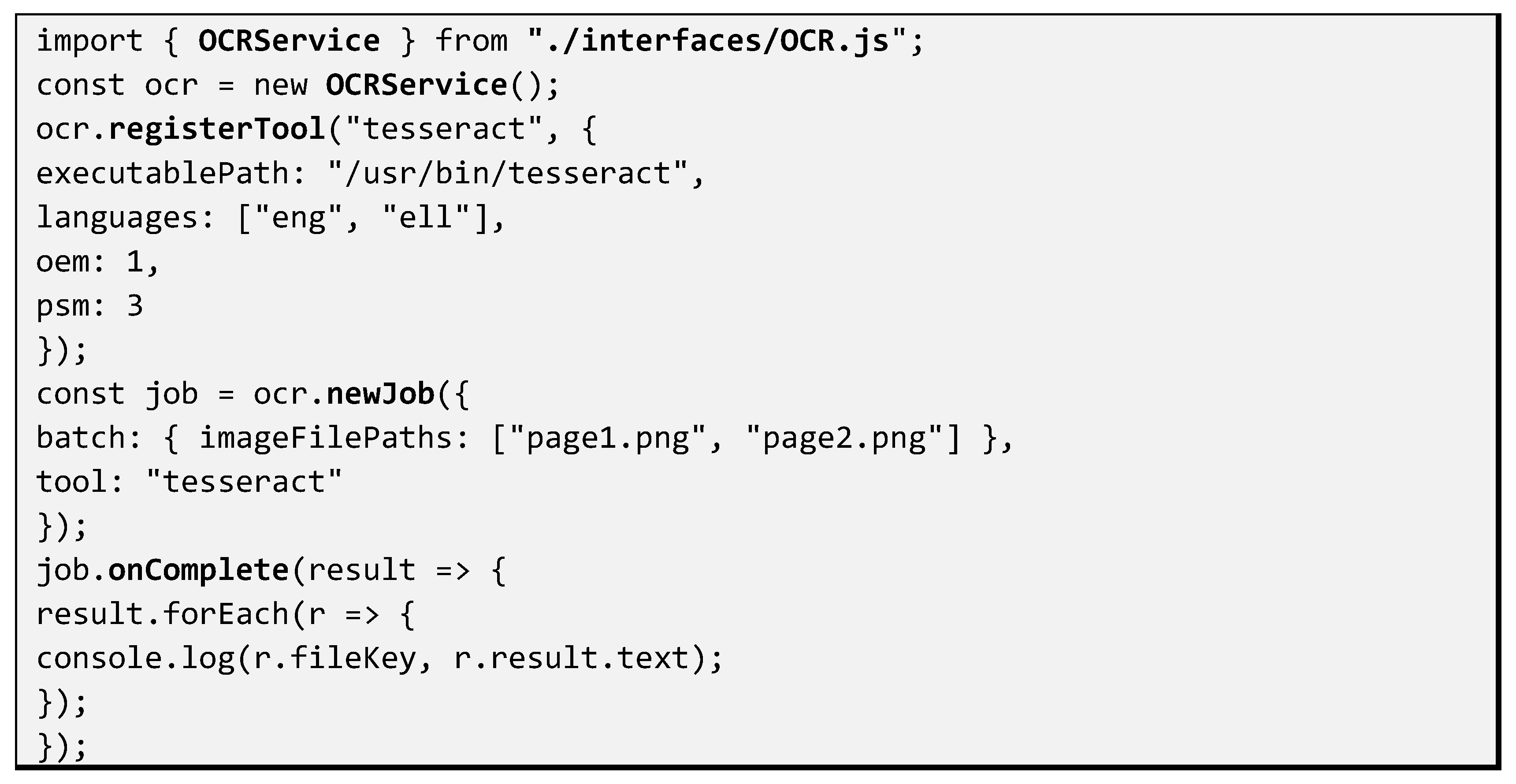

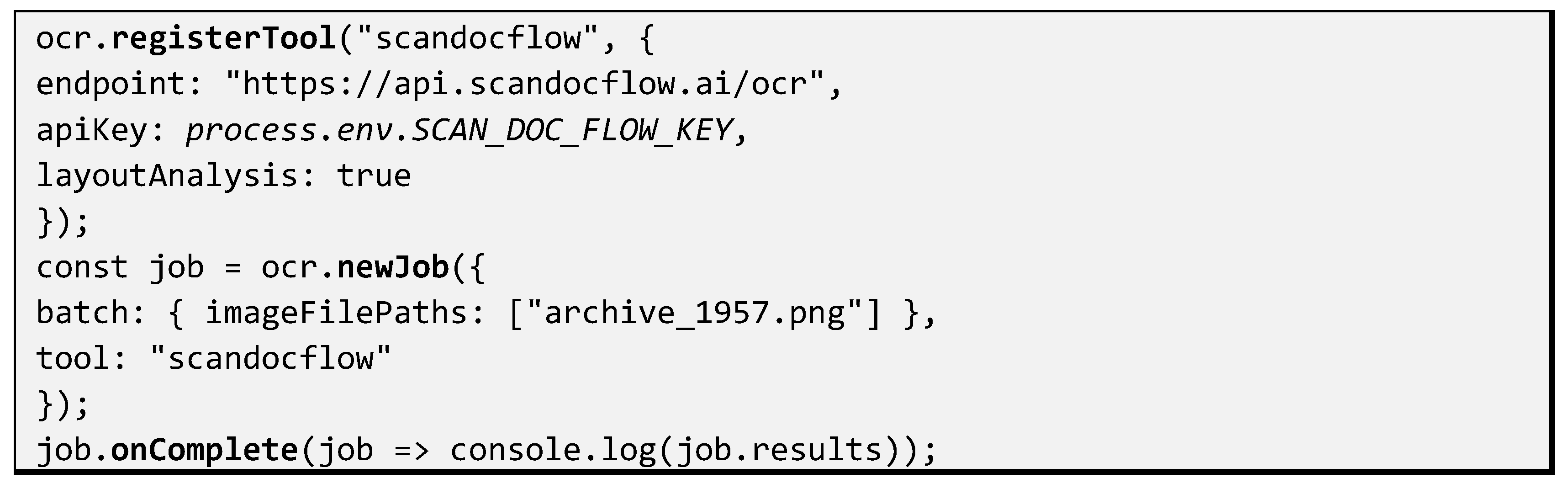

5. Implementation

5.1. Tools

5.1.1. Tesseract (OCR)

- Text recognition from raster images

- Word, line, and character segmentation

- Bounding box extraction for provenance tracking

- Language-specific recognition models

- Confidence scoring per recognized segment
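As a hedged illustration of the capabilities listed above, the following sketch uses the `pytesseract` wrapper to obtain word-level text, bounding boxes and confidence values. The function names `words_from_tesseract_data` and `ocr_words`, the record layout, and the `min_conf` threshold are assumptions made for this example; the paper does not state how Tesseract is invoked in the actual system.

```python
from typing import Any, Dict, List


def words_from_tesseract_data(data: Dict[str, List[Any]], min_conf: float = 0.0) -> List[dict]:
    """Convert the dict form of Tesseract's tabular output into word records."""
    words = []
    for i, text in enumerate(data["text"]):
        conf = float(data["conf"][i])
        # Tesseract reports conf = -1 for rows that are not words (blocks, lines, ...).
        if conf < 0 or conf < min_conf or not str(text).strip():
            continue
        words.append({
            "text": text,
            "conf": conf,
            # (left, top, width, height) in pixels of the input image
            "box": (data["left"][i], data["top"][i], data["width"][i], data["height"][i]),
            # identifies the line the word belongs to
            "line": (data["block_num"][i], data["par_num"][i], data["line_num"][i]),
        })
    return words


def ocr_words(image_path: str, lang: str = "eng", min_conf: float = 0.0) -> List[dict]:
    """Run Tesseract on an image file; requires pytesseract, Pillow and a Tesseract install."""
    import pytesseract
    from PIL import Image
    from pytesseract import Output

    data = pytesseract.image_to_data(Image.open(image_path), lang=lang, output_type=Output.DICT)
    return words_from_tesseract_data(data, min_conf)
```

The `lang` argument selects the language-specific model, and the per-word `conf` value is what a caller could compare against a threshold. Character-level boxes are available separately through `pytesseract.image_to_boxes`.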

5.1.2. ScanDocFlow (OCR)

- Advanced image preprocessing

- Layout-aware OCR

- Noise and skew correction

- Batch processing with callbacks

- Fine-grained confidence metrics

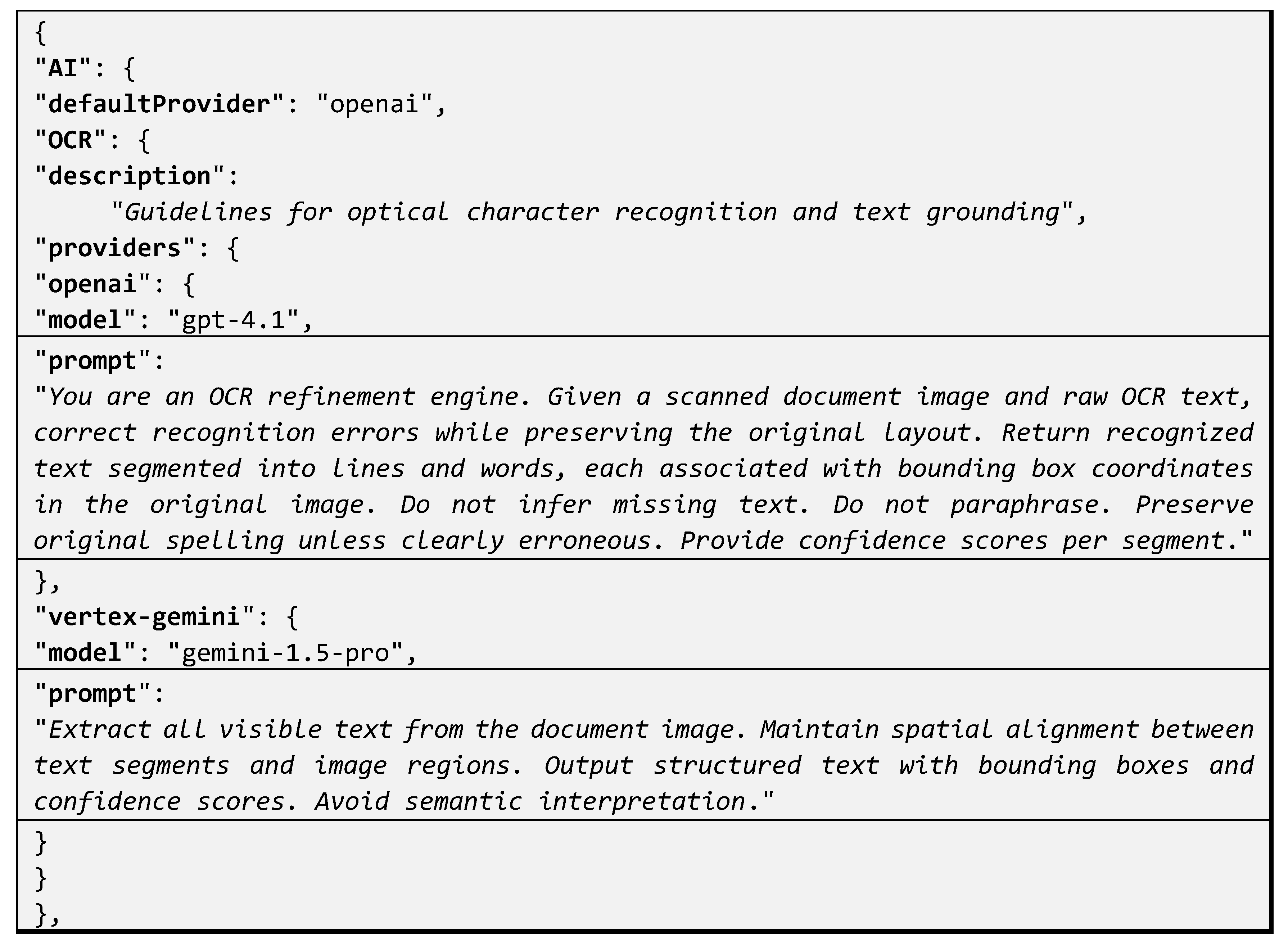

5.1.3. OpenAI / ChatGPT (OCR, NLP, IR, III)

- OCR correction and enrichment

- Named entity and record extraction (NLP)

- Visual object detection (IR)

- Nested image detection (III)

- Prompt-driven semantic reasoning

5.1.4. OpenCV (IR, III)

- Contour and region detection

- Feature-based image segmentation

- Hierarchical (nested) region detection

- Fast batch execution

- No external dependencies or API costs
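One way the hierarchical (nested) region detection listed above could be realized with OpenCV is through the contour hierarchy returned by `cv2.findContours` in `RETR_TREE` mode. The sketch below assumes OpenCV 4 (where `findContours` returns two values); the function names `nested_pairs` and `detect_nested_regions`, the Otsu thresholding step, and the `min_area` filter are illustrative assumptions rather than the authors' pipeline.

```python
from typing import List, Sequence, Tuple

Box = Tuple[int, int, int, int]  # (x, y, width, height)


def nested_pairs(boxes: Sequence[Box], hierarchy: Sequence[Sequence[int]]) -> List[Tuple[Box, Box]]:
    """Pair each contour's box with the box of its direct parent contour.

    `hierarchy[i]` follows OpenCV's layout [next, previous, first_child, parent],
    where -1 means "none".
    """
    pairs = []
    for i, (_next, _prev, _child, parent) in enumerate(hierarchy):
        if parent != -1:
            pairs.append((boxes[parent], boxes[i]))
    return pairs


def detect_nested_regions(image_path: str, min_area: int = 1000) -> List[Tuple[Box, Box]]:
    """Return (outer, inner) bounding-box pairs found in an image file."""
    import cv2  # imported lazily so nested_pairs stays usable without OpenCV

    gray = cv2.imread(image_path, cv2.IMREAD_GRAYSCALE)
    if gray is None:
        raise FileNotFoundError(image_path)
    # Binarize with Otsu's threshold; dark content becomes foreground.
    _, binary = cv2.threshold(gray, 0, 255, cv2.THRESH_BINARY_INV + cv2.THRESH_OTSU)
    contours, hierarchy = cv2.findContours(binary, cv2.RETR_TREE, cv2.CHAIN_APPROX_SIMPLE)
    if hierarchy is None:  # no contours found
        return []
    boxes = [cv2.boundingRect(c) for c in contours]
    pairs = nested_pairs(boxes, hierarchy[0].tolist())
    # Drop tiny inner regions, which are usually noise or glyph holes.
    return [(outer, inner) for outer, inner in pairs if inner[2] * inner[3] >= min_area]
```

Note that in `RETR_TREE` mode the direct parent of a contour may be a hole boundary rather than a visually distinct frame, so a production version would likely need additional filtering.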

5.1.5. Vertex AI / Gemini (OCR, NLP, IR, III)

- Multimodal OCR and NLP

- Layout and object recognition

- Domain-adaptable extraction

- Scalable batch execution

- Enterprise security and governance

5.2. Gluing

- available tools,

- metadata (default, active),

- schemas describing configurable parameters.

- side-by-side evaluation of alternative tools,

- failover and cost-aware selection,

- incremental replacement without redeployment.
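The items above (available tools, default/active metadata, parameter schemas, failover and cost-aware selection) suggest a registry that maps each interface to interchangeable tools. The following is a minimal sketch under that reading. `ToolEntry`, `ToolRegistry`, the `cost_per_call` field, and the ordering rule (default tool first, then cheapest) are hypothetical names and choices made for this example.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Tuple


@dataclass
class ToolEntry:
    name: str
    run: Callable[..., Any]
    active: bool = True
    default: bool = False
    cost_per_call: float = 0.0
    # Very small "schema": parameter name -> expected Python type.
    param_schema: Dict[str, type] = field(default_factory=dict)


class ToolRegistry:
    def __init__(self) -> None:
        self._tools: Dict[str, List[ToolEntry]] = {}

    def register(self, interface: str, entry: ToolEntry) -> None:
        self._tools.setdefault(interface, []).append(entry)

    def candidates(self, interface: str) -> List[ToolEntry]:
        """Active tools for an interface: the default first, then by ascending cost."""
        active = [t for t in self._tools.get(interface, []) if t.active]
        return sorted(active, key=lambda t: (not t.default, t.cost_per_call))

    def call(self, interface: str, *args: Any, **params: Any) -> Tuple[str, Any]:
        """Try candidates in order; fall over to the next one when a tool fails."""
        errors = []
        for tool in self.candidates(interface):
            bad = [k for k, v in params.items()
                   if k not in tool.param_schema or not isinstance(v, tool.param_schema[k])]
            if bad:
                errors.append(f"{tool.name}: invalid parameters {bad}")
                continue
            try:
                return tool.name, tool.run(*args, **params)
            except Exception as exc:  # any tool failure triggers failover
                errors.append(f"{tool.name}: {exc}")
        raise RuntimeError(f"no tool succeeded for {interface}: {errors}")
```

Because tools are looked up by interface name at call time, switching `active` or `default` flags in configuration replaces a tool without touching the calling code, which is one plausible way to obtain replacement without redeployment.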

5.3. Prompting

- Prompts become configurable assets

- Is keyed by interface (OCR, NLP, IR, III)

- Supports multiple AI providers (OpenAI, Gemini, etc.)

- Clearly separates functional guidelines per interface

- Is suitable both for runtime use
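To make the idea of prompts as configurable assets concrete, the sketch below stores prompt templates in a JSON document keyed first by interface and then by provider, with per-interface guidelines kept separate from the provider-specific wording. The JSON layout, the prompt texts, the `$doc_type` placeholder and the function `build_prompt` are all hypothetical and shown only for illustration.

```python
import json
from string import Template

# Hypothetical prompt configuration; in practice this would live in an editable file.
PROMPT_CONFIG = json.loads("""
{
  "OCR": {
    "guidelines": "Return the text exactly as printed; do not paraphrase.",
    "providers": {
      "openai": "Transcribe the text in this $doc_type image.",
      "gemini": "Read this $doc_type image and transcribe its text."
    }
  },
  "NLP": {
    "guidelines": "Report the original text fragment for every extracted value.",
    "providers": {
      "openai": "Extract records of class $entity_class from the text below.",
      "gemini": "From the text below, list all $entity_class records."
    }
  }
}
""")


def build_prompt(interface: str, provider: str, **values: str) -> str:
    """Combine the interface guidelines with the provider-specific template."""
    entry = PROMPT_CONFIG[interface]
    template = Template(entry["providers"][provider])
    # substitute() raises KeyError if a placeholder value is missing.
    return entry["guidelines"] + "\n" + template.substitute(values)
```

For example, `build_prompt("OCR", "openai", doc_type="newspaper")` yields the OCR guidelines followed by the OpenAI-specific instruction, and editing the JSON changes behavior without code changes.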

6. Case Study

6.1. Overview

6.2. Challenges

- Typography ambiguities due to low visual quality

- Unusual column layout disruptions

- Extended use of calligraphic text illustrations

- Ad images overlapping with news images

- Dual page images with embedded text content

6.3. Details

7. Discussion

7.1. Experience Reporting

7.1.1. What Went Right

- First-class configurable prompting for AI services

- Managing multiple providers per AI-service interface

- Confidence scoring enabling runtime decisions between alternative tools

- Configurable output redirection for data curation and improvements

- AI-cost computation as a first-class function enabling budget estimates

- Computation caching to save AI-service costs

- Separation of AI-service interfaces from application GUI modules
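Two of the points above, computation caching and AI-cost computation, can be combined in a thin wrapper around a service call. The following is a minimal sketch, assuming a flat cost per call and an in-memory cache keyed by a hash of the input bytes and parameters. `CachedService`, `estimate_budget` and the `expected_hit_rate` parameter are names introduced here for illustration, not part of the described system.

```python
import hashlib
import json
from typing import Any, Callable, Dict


class CachedService:
    """Wraps an AI-service call, caching results and tracking spent cost."""

    def __init__(self, run: Callable[..., Any], cost_per_call: float) -> None:
        self.run = run
        self.cost_per_call = cost_per_call
        self.cache: Dict[str, Any] = {}
        self.spent = 0.0

    @staticmethod
    def _key(payload: bytes, params: dict) -> str:
        # Identical input bytes and parameters map to the same cache key.
        digest = hashlib.sha256(payload)
        digest.update(json.dumps(params, sort_keys=True).encode("utf-8"))
        return digest.hexdigest()

    def call(self, payload: bytes, **params: Any) -> Any:
        key = self._key(payload, params)
        if key not in self.cache:
            # Only cache misses reach the service and incur cost.
            self.cache[key] = self.run(payload, **params)
            self.spent += self.cost_per_call
        return self.cache[key]


def estimate_budget(n_pages: int, cost_per_call: float, expected_hit_rate: float = 0.0) -> float:
    """Rough budget estimate: pages that miss the cache times the per-call cost."""
    return n_pages * cost_per_call * (1.0 - expected_hit_rate)
```

Re-running a pipeline on the same scans with the same parameters would then be served from the cache, while `spent` gives the running total that a budget report could compare against `estimate_budget`.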

7.1.2. What Went Wrong

- High expectations of AI tools regarding OCR, IR and III service quality

- Continuous improvements on AI-service quality while we were developing

- Misses in scanning that resulted in practical AI failures

- Significant quality variations in certain cases between different tools

- Non-uniform AI-service outputs requiring more work on output data interface

7.1.3. Improvements Underway

- Support more metadata categories to enable new custom consumers

- Making metadata and consumers first-class add-ons

- Include form authoring GUI modules to extract arbitrary form data

- Graphical authoring for service pipelining and orchestration

7.2. Applying to Sensor-Oriented Applications

7.2.1. Document Sensing Process (Scanners)

7.2.2. Sensor Data Characteristics and AI-Service Interaction

7.2.3. Applications for Sensor-Driven Information Systems

8. Conclusions

Author Contributions

Funding

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).