Submitted: 08 January 2026

Posted: 09 January 2026

Abstract

Keywords:

1. Introduction

- a unified formal model for simulation-based multi-objective optimization experiments in WSNs, including reactive state transitions for workflow coordination;

- a distributed and modular architecture that instantiates this model through event-driven orchestration and containerized simulations, enabling scalable parallel evaluation;

- a synthetic evaluation backend to support controlled experiments and benchmarking independent of a specific simulator;

- an empirical proof of feasibility under increasing degrees of distributed execution, reporting execution time and resource usage to characterize orchestration overhead and scalability;

- a reproducible experimentation environment that preserves structured metadata and execution artifacts to support replay, sharing, and extension of multi-objective optimization studies.

2. Related Work

3. Formal Foundations

3.1. Experiment Model

- X denotes the configuration space of candidate wireless sensor network (WSN) solutions. Each element represents a complete network configuration and may encode, depending on the problem definition, geometric parameters (e.g., node or relay positions), structural decisions (e.g., connectivity or routing), protocol-level choices (e.g., MAC or duty-cycling schemes), and other controllable design variables;

- The simulation parameter space encodes topology, node deployment, communication models, MAC protocols, traffic profiles, and environmental assumptions;

- The vector-valued objective function maps each network configuration to m performance metrics under a given simulation context. The evaluation implicitly embeds the execution of the simulation model and the computation of objective values;

- The optimization strategy (e.g., an evolutionary algorithm, an exact solver, or a heuristic) governs population evolution. All algorithm-specific design choices and parameterizations, including population size, selection mechanisms, variation operators, and strategy-specific control parameters, are considered intrinsic to the strategy and are therefore encapsulated in its definition.
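As an illustration only, the four components listed above can be bundled into a single immutable structure. The sketch below is a minimal one under stated assumptions: the type names, the `Experiment` class, the box-bounded configuration space, and the `toy_objectives` function are all hypothetical and are not the notation or implementation used in this work.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Sequence, Tuple

# All names here are illustrative assumptions, not the paper's notation.
Configuration = Tuple[float, ...]       # one element of the configuration space X
SimulationContext = Dict[str, object]   # one point of the simulation parameter space
ObjectiveVector = Tuple[float, ...]     # m performance metrics


@dataclass(frozen=True)
class Experiment:
    """Bundles the four components of the experiment model."""
    bounds: Sequence[Tuple[float, float]]  # box description of X (assumed continuous)
    context: SimulationContext
    evaluate: Callable[[Configuration, SimulationContext], ObjectiveVector]
    strategy: str                          # name of the optimization strategy


def toy_objectives(x: Configuration, ctx: SimulationContext) -> ObjectiveVector:
    """Stand-in for a simulator run: returns (energy, latency) proxies."""
    n = int(ctx["num_nodes"])
    energy = sum(v * v for v in x) * n
    latency = sum(abs(v - 1.0) for v in x)
    return (energy, latency)


exp = Experiment(
    bounds=[(0.0, 1.0), (0.0, 1.0)],
    context={"num_nodes": 10, "mac": "csma"},
    evaluate=toy_objectives,
    strategy="random",
)
result = exp.evaluate((0.5, 1.0), exp.context)
print(result)  # (12.5, 0.5)
```

In a real deployment the `evaluate` callable would wrap a simulator execution rather than a closed-form function.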

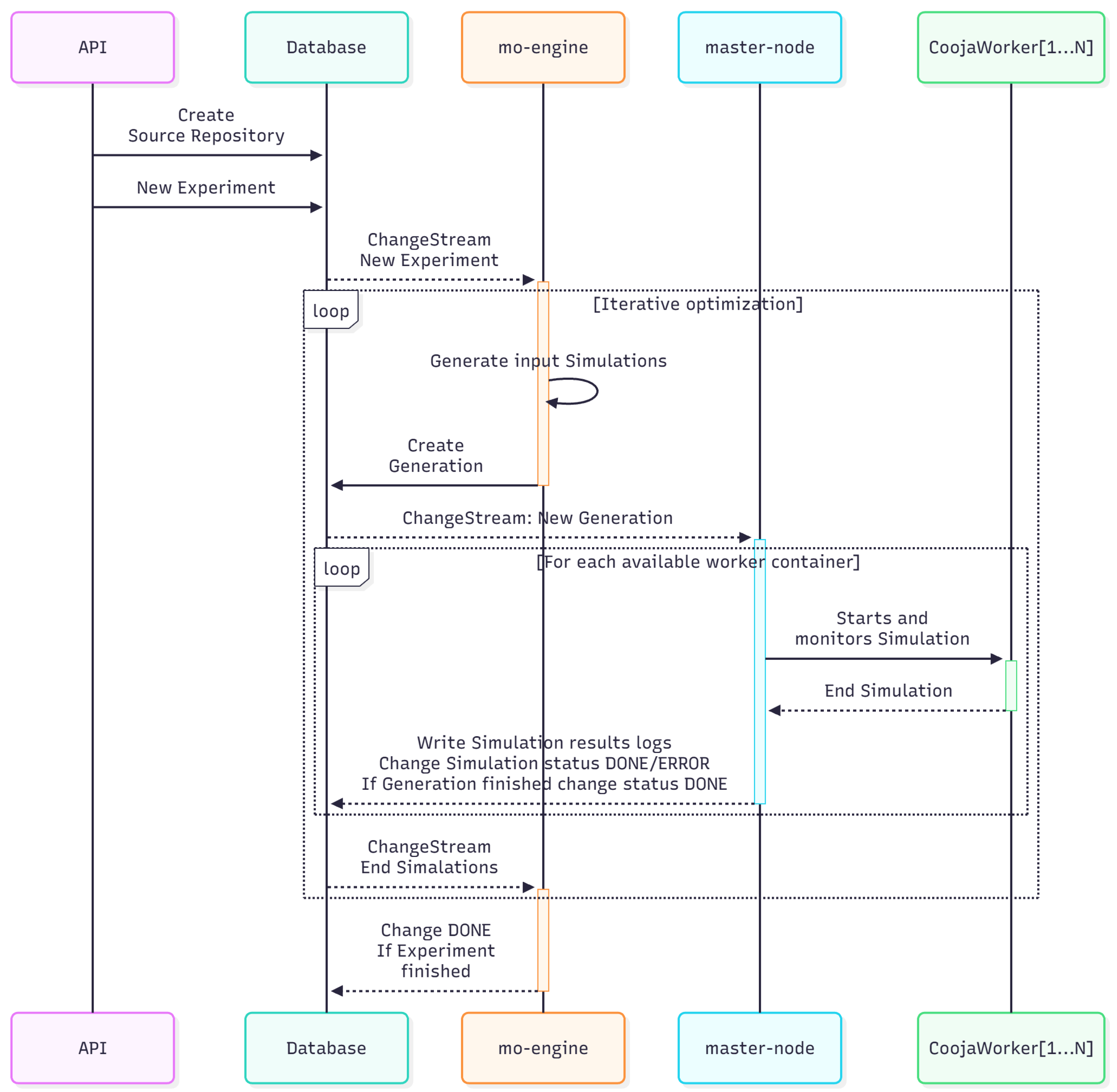

3.2. Distributed Orchestration Model

- ExperimentCreated initializes the optimization workflow;

- GenerationReady triggers the dispatch of distributed simulations;

- SimulationCompleted signals the availability of evaluation results.
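The interplay of the three events can be sketched with a small in-process event bus. This is a hedged illustration, not the framework's orchestration code: the `EventBus` class, the handler names, the payload fields, and the constants `MAX_GENERATIONS` and `SIMS_PER_GENERATION` are assumptions introduced for the example, and simulations are treated as completing instantly.

```python
from collections import defaultdict, deque
from typing import Callable, Deque, Dict, List, Tuple

Event = Tuple[str, dict]  # (event name, payload)


class EventBus:
    """Minimal single-threaded publish/subscribe queue (illustrative only)."""

    def __init__(self) -> None:
        self._handlers: Dict[str, List[Callable[[dict], None]]] = defaultdict(list)
        self._queue: Deque[Event] = deque()
        self.log: List[str] = []

    def subscribe(self, name: str, handler: Callable[[dict], None]) -> None:
        self._handlers[name].append(handler)

    def publish(self, name: str, payload: dict) -> None:
        self._queue.append((name, payload))

    def run(self) -> None:
        # Process events in arrival order until the queue is empty.
        while self._queue:
            name, payload = self._queue.popleft()
            self.log.append(name)
            for handler in list(self._handlers[name]):
                handler(payload)


MAX_GENERATIONS = 2        # assumed stopping rule
SIMS_PER_GENERATION = 2    # assumed population size
bus = EventBus()
pending = {"count": 0}


def on_experiment_created(payload: dict) -> None:
    bus.publish("GenerationReady", {"generation": 0})


def on_generation_ready(payload: dict) -> None:
    pending["count"] = SIMS_PER_GENERATION
    for i in range(SIMS_PER_GENERATION):
        # A real system would start a containerized simulation here.
        bus.publish("SimulationCompleted",
                    {"generation": payload["generation"], "sim": i})


def on_simulation_completed(payload: dict) -> None:
    pending["count"] -= 1
    nxt = payload["generation"] + 1
    if pending["count"] == 0 and nxt < MAX_GENERATIONS:
        bus.publish("GenerationReady", {"generation": nxt})


bus.subscribe("ExperimentCreated", on_experiment_created)
bus.subscribe("GenerationReady", on_generation_ready)
bus.subscribe("SimulationCompleted", on_simulation_completed)
bus.publish("ExperimentCreated", {"experiment": "demo"})
bus.run()
```

After `bus.run()`, `bus.log` holds the chronological event sequence, which is the kind of trace an event stream would preserve.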

3.3. Simulation and Evaluation Model

3.3.0.1. Event-Driven Optimization

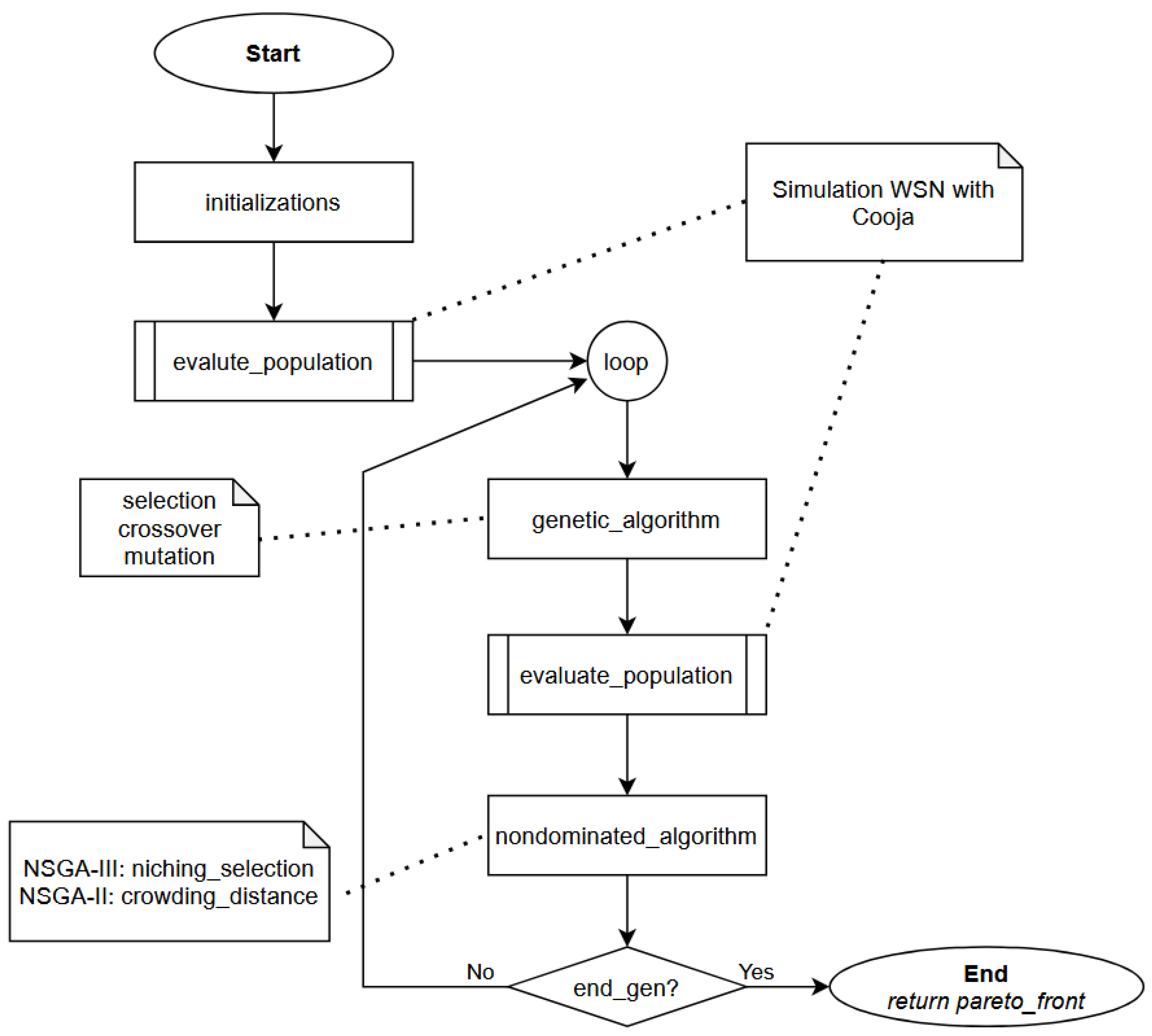

3.4. Optimization Model

- the population at generation k;

- the evaluation operator induced by the simulation model;

- a generic evolutionary strategy.
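One way to read these three ingredients is as a loop in which the strategy produces the next population from the current population and its evaluation. The sketch below is a deliberately simplified assumption: the objective functions are toy closed forms, and the selection step ranks individuals by the sum of their objectives, which is a scalarization used only to keep the example short and is not a Pareto-based method such as NSGA-III.

```python
import random
from typing import List, Tuple

Individual = Tuple[float, float]
Scores = List[Tuple[float, float]]


def evaluate(population: List[Individual]) -> Scores:
    """Evaluation operator: maps each individual to a two-objective vector."""
    return [(x * x + y * y, (x - 1.0) ** 2 + y * y) for x, y in population]


def strategy(population: List[Individual], scores: Scores,
             rng: random.Random) -> List[Individual]:
    """Generic elitist step: mutate, merge parents and children, keep the best."""
    children = [(x + rng.gauss(0, 0.1), y + rng.gauss(0, 0.1))
                for x, y in population]
    merged = population + children
    merged_scores = scores + evaluate(children)
    # Simplification: rank by the sum of objectives (not Pareto ranking).
    ranked = sorted(zip(merged, merged_scores), key=lambda pair: sum(pair[1]))
    return [ind for ind, _ in ranked[: len(population)]]


rng = random.Random(0)
population = [(rng.uniform(-2, 2), rng.uniform(-2, 2)) for _ in range(8)]
initial_best = min(sum(s) for s in evaluate(population))

for k in range(20):  # population at generation k -> generation k + 1
    population = strategy(population, evaluate(population), rng)

final_best = min(sum(s) for s in evaluate(population))
```

Because parents compete with their children, the best scalarized score can never get worse from one generation to the next in this sketch.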

3.5. Minimal Convergence Conditions

- (Finite Population) Each generation has fixed and finite cardinality;

- (Elitism) Non-dominated solutions are preserved with non-zero probability;

- (Ergodic Variation) The variation operators induced by the optimization strategy define an ergodic Markov chain over X;

- (Consistent Evaluation) The evaluation operator is stationary with respect to , up to bounded stochastic noise.
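The elitism condition refers to non-dominated solutions. As a reminder of what that means operationally, the following minimal sketch filters a set of objective vectors down to its non-dominated subset. It assumes that all objectives are minimized; the function names are illustrative.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, ...]


def dominates(a: Sequence[float], b: Sequence[float]) -> bool:
    """True if a is no worse than b everywhere and strictly better somewhere."""
    return (all(x <= y for x, y in zip(a, b))
            and any(x < y for x, y in zip(a, b)))


def non_dominated(points: List[Point]) -> List[Point]:
    """Keep only the points that no other point dominates (O(n^2) scan)."""
    return [p for p in points if not any(dominates(q, p) for q in points)]


front = non_dominated([(1.0, 4.0), (2.0, 2.0), (3.0, 3.0), (4.0, 1.0)])
print(front)  # [(1.0, 4.0), (2.0, 2.0), (4.0, 1.0)]
```

An elitist strategy would carry the members of `front` into the next generation; here `(3.0, 3.0)` is dropped because `(2.0, 2.0)` dominates it.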

3.6. Schedule Execution Model

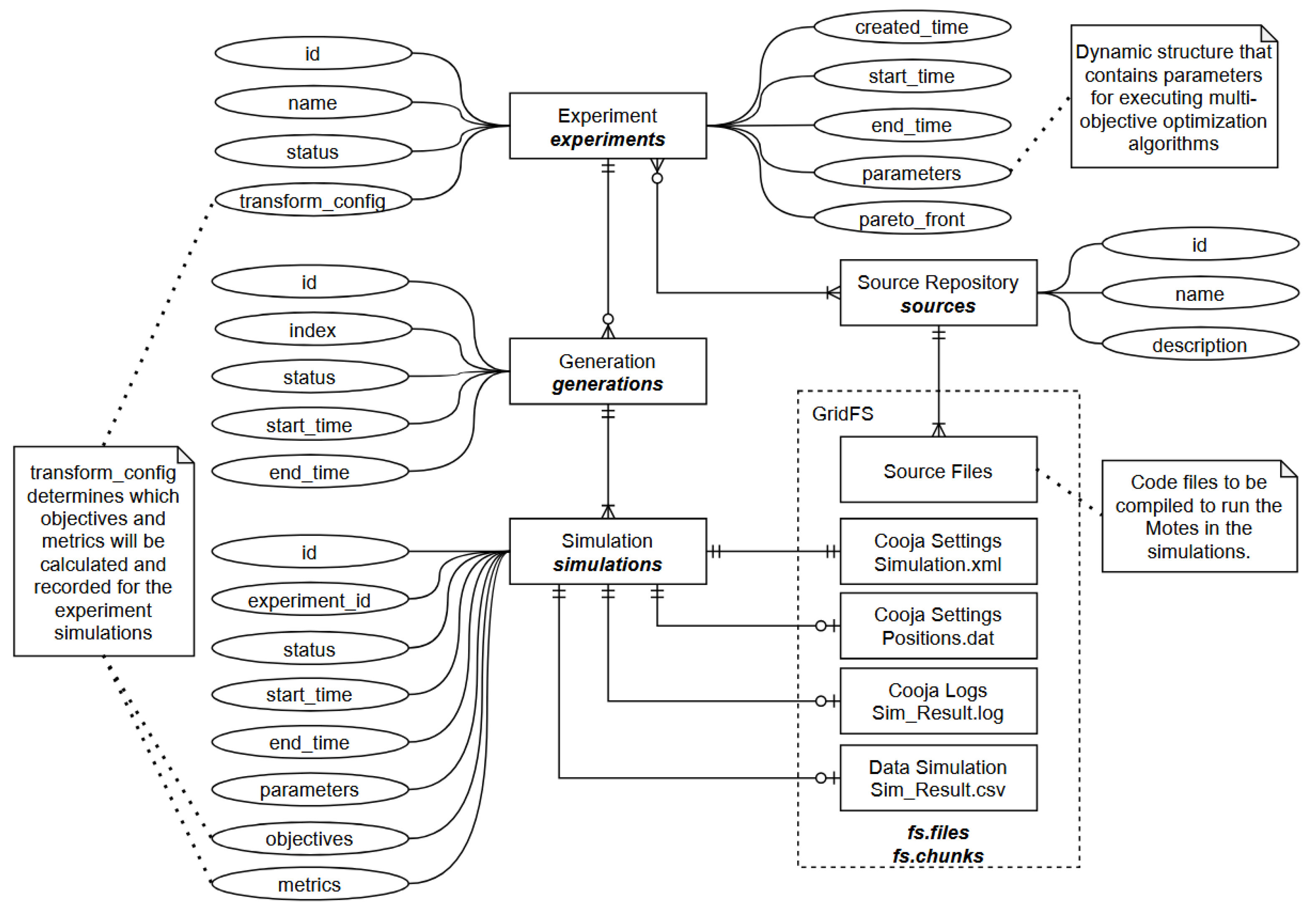

3.7. Reproducibility Model

- experiments, generations, and simulations are immutable entities;

- container images encapsulate simulator binaries and dependencies;

- event streams preserve chronological execution semantics;

- logs, configurations, and binary artifacts are persistently stored.
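A hedged sketch of how immutability and replayability might be expressed in code: a frozen record whose fingerprint is derived from its full content, so that two runs with identical metadata can be recognized as the same simulation. The `SimulationRecord` class, its fields, and the image reference `example/simulator:1.0` are hypothetical and do not describe the framework's actual schema.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from typing import Tuple


@dataclass(frozen=True)
class SimulationRecord:
    """Immutable description of one simulation (illustrative fields)."""
    experiment_id: str
    generation: int
    image: str                                  # container image reference
    parameters: Tuple[Tuple[str, int], ...]     # (key, value) pairs

    def fingerprint(self) -> str:
        # Serialize deterministically, then hash the content.
        payload = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


a = SimulationRecord("exp-1", 0, "example/simulator:1.0", (("seed", 42),))
b = SimulationRecord("exp-1", 0, "example/simulator:1.0", (("seed", 42),))
c = SimulationRecord("exp-1", 0, "example/simulator:1.0", (("seed", 43),))
```

Records `a` and `b` share a fingerprint, while `c` differs because its seed differs; attempting to assign to a field of any record raises an error.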

4. Materials and Methods

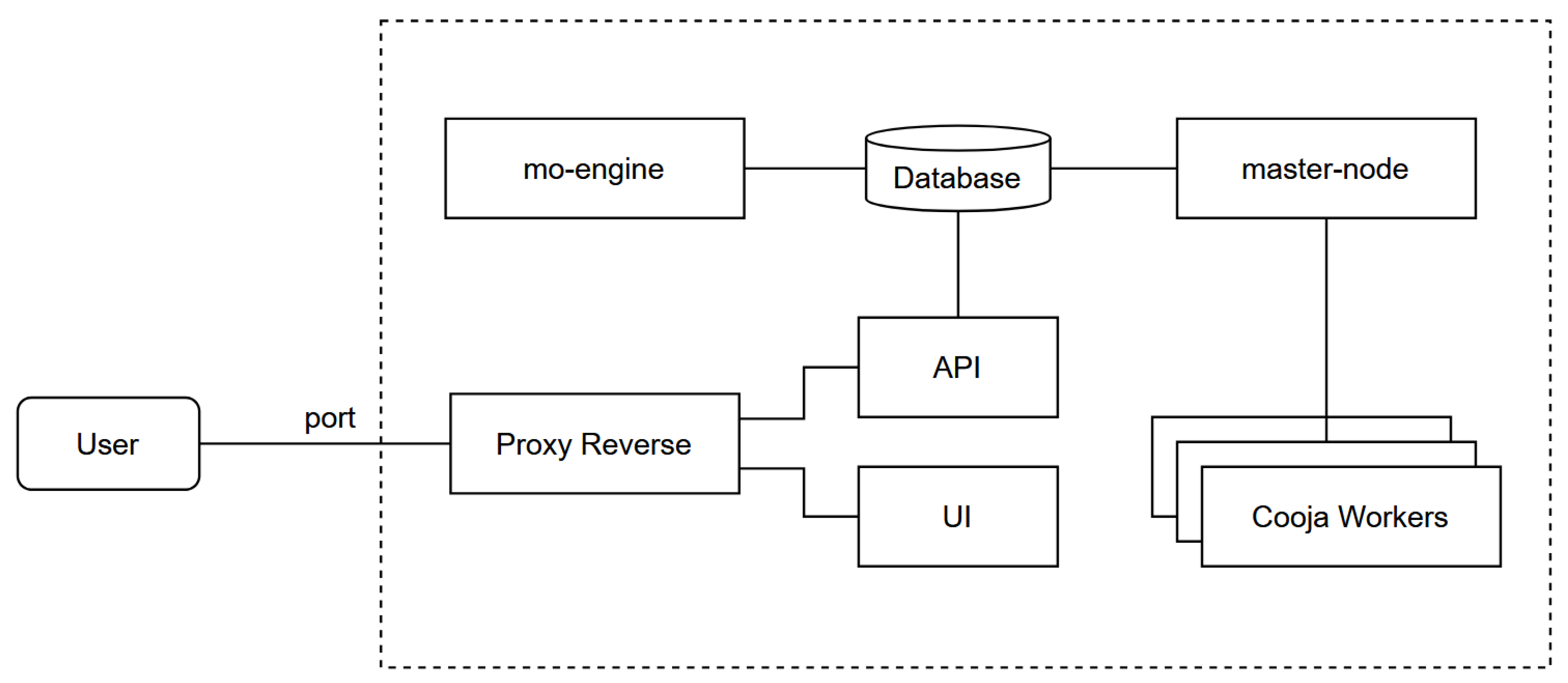

4.1. System Architecture

4.2. Workflow

- An experiment is created via the REST API and stored in the database.

- The mo-engine observes the database for pending experiments and generates simulation queues (generations) according to an optimization strategy (e.g., NSGA-III or Random).

- The master-node executes containerized simulations in parallel and collects results.

- Results are saved back into the database, triggering the next generation of optimization.
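The four workflow steps can be walked through with a small in-memory stand-in. This is a sketch under loud assumptions: a Python dictionary replaces the database, a thread pool replaces containerized execution, only a random strategy is shown, and every function and field name is invented for the example.

```python
import random
from concurrent.futures import ThreadPoolExecutor

# In-memory stand-in for the database (assumption for this sketch).
db = {"experiments": [], "generations": [], "results": []}


def create_experiment(strategy: str, generations: int, population_size: int) -> dict:
    """Step 1: what a REST handler would persist."""
    exp = {"id": len(db["experiments"]), "strategy": strategy,
           "generations": generations, "population_size": population_size,
           "status": "pending"}
    db["experiments"].append(exp)
    return exp


def generate_queue(exp: dict, index: int, rng: random.Random) -> list:
    """Step 2: the engine builds one generation (random strategy only)."""
    queue = [{"experiment": exp["id"], "generation": index, "x": rng.random()}
             for _ in range(exp["population_size"])]
    db["generations"].append(queue)
    return queue


def run_simulation(job: dict) -> dict:
    """Stand-in for one containerized simulation."""
    x = job["x"]
    return {**job, "objectives": (x ** 2, (1.0 - x) ** 2)}


def run_experiment(exp: dict, workers: int = 4, seed: int = 0) -> None:
    rng = random.Random(seed)
    for index in range(exp["generations"]):
        queue = generate_queue(exp, index, rng)
        # Step 3: evaluate the whole queue in parallel and collect results.
        with ThreadPoolExecutor(max_workers=workers) as pool:
            results = list(pool.map(run_simulation, queue))
        # Step 4: store results; their presence allows the next generation.
        db["results"].extend(results)
    exp["status"] = "done"


demo = create_experiment("random", generations=3, population_size=5)
run_experiment(demo)
print(len(db["results"]))  # 15
```

In the architecture described here the loop would be driven by database notifications rather than by a synchronous `for` loop; the sketch only fixes the order of the steps.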

4.3. Core Components

Master-Node.

MO-Engine.

Database.

API.

Graphical User Interface (GUI).

4.4. Experimental Organization

5. Prototype Implementation and Proof of Feasibility

5.1. Dummy Experiment Example

5.2. Real Executions and Performance Evaluation

Experimental Setup.

- Scenario 1: 10 concurrent Cooja containers;

- Scenario 2: 30 concurrent Cooja containers.

Execution Time.

- 5 hours and 41 minutes for 10 containers;

- 6 hours and 33 minutes for 30 containers.
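For a quick comparison, the two reported durations can be converted to minutes and related to each other. The arithmetic below uses only the figures listed above; it makes no assumption about whether both scenarios executed the same total number of simulations, so the ratio describes wall-clock time only and not per-simulation throughput.

```python
def to_minutes(hours: int, minutes: int) -> int:
    """Convert an hours/minutes duration to total minutes."""
    return hours * 60 + minutes


t10 = to_minutes(5, 41)   # 10 concurrent containers
t30 = to_minutes(6, 33)   # 30 concurrent containers
increase = (t30 - t10) / t10

print(t10, t30)            # 341 393
print(f"{increase:.1%}")   # 15.2%
```

Tripling the number of concurrent containers therefore coincided with a wall-clock increase of roughly 15%.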

Resource Usage.

| Component | CPU (%) | Mem (MiB) | Mem (%) | Net RX (B/s) | Net TX (B/s) |
|---|---|---|---|---|---|
| Cooja (avg) | 36.97 | 2285.37 | 2.36 | 0.38 | 0.43 |
| Master-node | 9.61 | 51.57 | 0.05 | 603.33 | 321.92 |
| MO-engine | 1.56 | 289.38 | 0.30 | 1173.67 | 639.16 |
| Database (MongoDB) | 23.62 | 413.77 | 0.43 | 954.46 | 1765.49 |
| REST API | 9.02 | 87.99 | 0.09 | 0.02 | 0.00 |

Observations:

5.3. NSGA Integration

6. Discussion

7. Conclusions

- developing a robust software platform with a graphical user interface (GUI) to support research and experimentation with wireless sensor networks;

- integrating additional simulators beyond Cooja to extend the applicability of the framework to different network environments;

- employing the architecture as a foundation for designing new optimization techniques tailored to WSNs;

- creating advanced visualization and analytical tools for Pareto front exploration and decision support;

- incorporating graphical resources to aid researchers in the visual interpretation and comparative analysis of simulation results;

- integrating mathematical and analytical models to enhance the performance of both simulations and optimization algorithms;

- producing a well-documented experimentation platform that promotes collaborative use, reproducibility, and reuse throughout the scientific community.

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

References

1. Hart, J.K.; Martinez, K. Environmental Sensor Networks: A Revolution in the Earth System Science? 78, 177–191.

2. Ali, A.; Ming, Y.; Chakraborty, S.; Iram, S. A Comprehensive Survey on Real-Time Applications of WSN. 9, 77.

3. Asif, M.J.; Saqib, S.; Ahmad, R.F.; Khan, H. Leveraging Wireless Sensor Networks for Real-Time Monitoring and Control of Industrial Environments.

4. Musa, P.; Sugeru, H.; Wibowo, E.P. Wireless Sensor Networks for Precision Agriculture: A Review of NPK Sensor Implementations. 24, 51.

5. Khalifeh, A.; Darabkh, K.A.; Khasawneh, A.M.; Alqaisieh, I.; Salameh, M.; AlAbdala, A.; Alrubaye, S.; Alassaf, A.; Al-HajAli, S.; Al-Wardat, R.; et al. Wireless Sensor Networks for Smart Cities: Network Design, Implementation and Performance Evaluation. 10, 218.

6. Fei, Z.; Li, B.; Yang, S.; Xing, C.; Chen, H.; Hanzo, L. A Survey of Multi-Objective Optimization in Wireless Sensor Networks: Metrics, Algorithms, and Open Problems. 19, 550–586.

7. Kandris, D.; Alexandridis, A.; Dagiuklas, T.; Panaousis, E.; Vergados, D.D. Multiobjective Optimization Algorithms for Wireless Sensor Networks; 2020; pp. 1–5.

8. Thekkil, T.M.; Prabakaran, N. A Multi-Objective Optimization for Remote Monitoring Cost Minimization in Wireless Sensor Networks. 121, 1049–1065.

9. Deb, K.; Pratap, A.; Agarwal, S.; Meyarivan, T. A Fast and Elitist Multiobjective Genetic Algorithm. 6, 182–197.

10. Deb, K.; Jain, H. An Evolutionary Many-Objective Optimization Algorithm Using Reference-Point-Based Nondominated Sorting Approach, Part I: Solving Problems With Box Constraints. 18, 577–601.

11. Li, H.; Deb, K.; Zhang, Q.; Suganthan, P.; Chen, L. Comparison between MOEA/D and NSGA-III on a Set of Novel Many and Multi-Objective Benchmark Problems with Challenging Difficulties. 46, 104–117.

12. Gunjan. A Review on Multi-objective Optimization in Wireless Sensor Networks Using Nature Inspired Meta-heuristic Algorithms. 55, 2587–2611.

13. Moshref, M.; Al-Sayyed, R.; Al-Sharaeh, S. Multi-Objective Optimization Algorithms for Wireless Sensor Networks: A Comprehensive Survey.

14. Egea-Lopez, E.; Vales-Alonso, J.; Martinez-Sala, A.; Pavon-Mario, P.; Garcia-Haro, J. Simulation Scalability Issues in Wireless Sensor Networks. 44, 64–73.

15. Hong, W.; Tang, K. Large-Scale Multi-Objective Evolutionary Optimization.

16. Oikonomou, G.; Duquennoy, S.; Elsts, A.; Eriksson, J.; Tanaka, Y.; Tsiftes, N. The Contiki-NG Open Source Operating System for next Generation IoT Devices. 18, 101089.

17. Kumar, M.; Hussain, S. Simulation Model For Wireless Body Area Network Using Castalia. In Proceedings of the 2022 1st International Conference on Informatics (ICI); IEEE; pp. 204–207.

18. Carneiro, G.; Fontes, H.; Ricardo, M. Fast Prototyping of Network Protocols through Ns-3 Simulation Model Reuse. 19, 2063–2075.

19. Riliskis, L.; Osipov, E. Maestro: An Orchestration Framework for Large-Scale WSN Simulations. 14, 5392–5414.

20. Harwell, J.; Gini, M. SIERRA: A Modular Framework for Accelerating Research and Improving Reproducibility. In Proceedings of the 2023 IEEE International Conference on Robotics and Automation (ICRA); IEEE; pp. 9111–9117.

21. Sarikhani, M.; Wendelborn, A. Mechanisms for Provenance Collection in Scientific Workflow Systems. 100, 439–472.

22. Santana-Perez, I.; Pérez-Hernández, M.S. Towards Reproducibility in Scientific Workflows: An Infrastructure-Based Approach. 2015, 1–11.

23. Nam, S.M.; Kim, H.J. WSN-SES/MB: System Entity Structure and Model Base Framework for Large-Scale Wireless Sensor Networks. 21, 430.

24. Ferreira, A.M.A.; Azevedo, L.J.D.M.D.; Estrella, J.C.; Delbem, A.C.B. Case Studies with the Contiki-NG Simulator to Design Strategies for Sensors’ Communication Optimization in an IoT-Fog Ecosystem. 23, 2300.

25. Ferreira, A.M.A. Projeto e Análise de Rede de Sensores em Névoa utilizando uma Abordagem com Otimização Multiobjetivo.

26. Rudolph, G. Convergence Analysis of Canonical Genetic Algorithms. 5, 96–101.

27. Zitzler, E.; Thiele, L. Multiobjective Evolutionary Algorithms: A Comparative Case Study and the Strength Pareto Approach. 3, 257–271.

28. Deb, K. Multiobjective Optimization: Interactive and Evolutionary Approaches. Lecture Notes in Computer Science, Vol. 5252; Springer: Berlin/Heidelberg.

29. Liu, J.; Sarker, R.; Elsayed, S.; Essam, D.; Siswanto, N. Large-Scale Evolutionary Optimization: A Review and Comparative Study. 85, 101466.

30. MongoDB, Inc. Change Streams: Database Manual. In MongoDB official documentation; MongoDB, Inc., 2025.

31. MongoDB, Inc. An Introduction to Change Streams, 2025. MongoDB documentation on Change Streams capabilities.

¹ The Cooja container. Docker image available at https://hub.docker.com/repository/docker/juniocesarferreira/simulation-cooja/general

| Framework | Event-driven | Workflow mgmt. | Multi-sim. support | Provenance | MOO support | Formal model |
|---|---|---|---|---|---|---|
| Maestro [19] | ✗ | ✓ | ✓ | Partial | ✗ | ✗ |
| SIERRA [20] | ✗ | ✓ | ✓ | ✓ | ✗ | ✗ |
| WSN-SES/MB [23] | ✗ | Partial | ✓ | ✗ | ✗ | Partial |
| Ferreira et al. [24,25] | ✗ | Partial | ✗ | ✗ | Partial | ✗ |
| This work | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ |

| Component | CPU (%) | Mem (MiB) | Mem (%) | Net RX (B/s) | Net TX (B/s) |
|---|---|---|---|---|---|
| Cooja (avg) | 100.39 | 2542.45 | 2.63 | 8.9 | 150.5 |
| Master-node | 5.90 | 78.96 | 0.08 | 2231.38 | 1957.30 |
| MO-engine | 1.29 | 315.78 | 0.33 | 1196.64 | 632.98 |
| Database (MongoDB) | 54.03 | 404.09 | 0.42 | 2507.54 | 1927.37 |
| REST API | 12.86 | 85.80 | 0.09 | 0.07 | 0.03 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).