Submitted: 06 January 2026

Posted: 07 January 2026

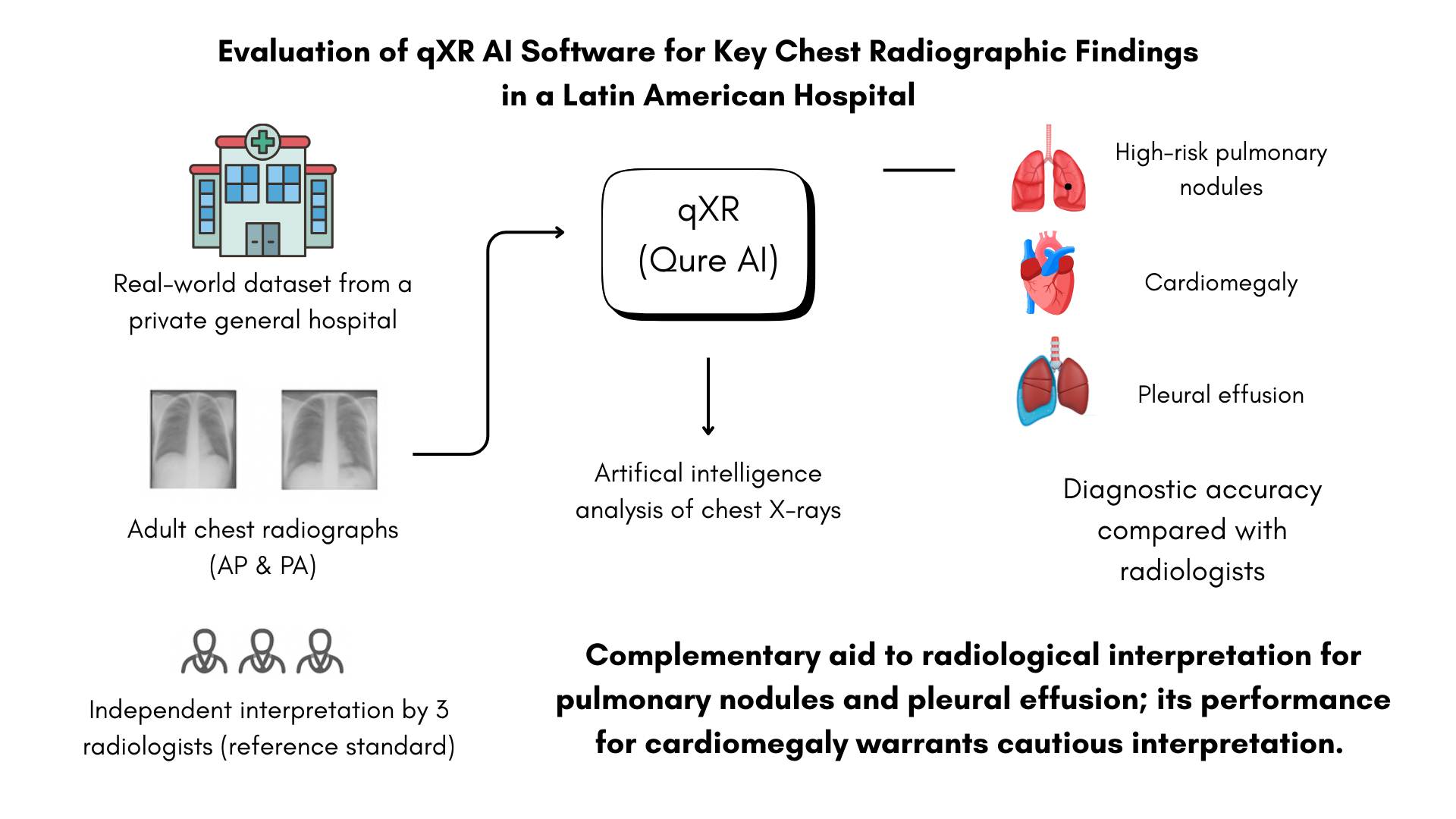

Abstract

Keywords:

1. Introduction

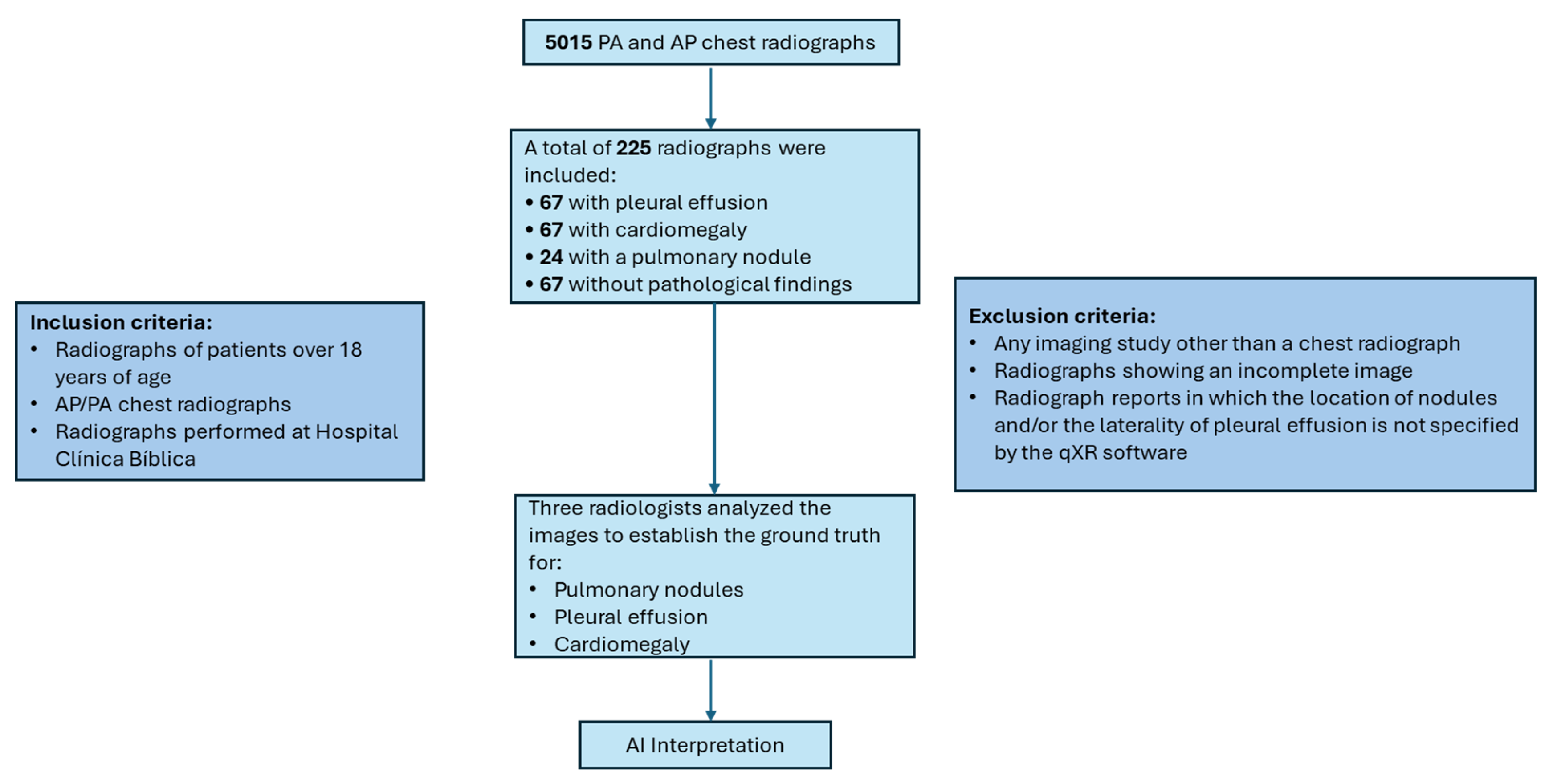

2. Materials and Methods

AI Algorithm

Establishment of the Reference Standard in the Study

- Presence or absence of pulmonary nodules, specifying quantity and anatomical location (left or right lung; upper, middle, or lower fields) when applicable.

- Presence or absence of pleural effusion, indicating laterality.

- Presence or absence of cardiomegaly.

Anonymization and Data Recording

Statistical Analysis

3. Results

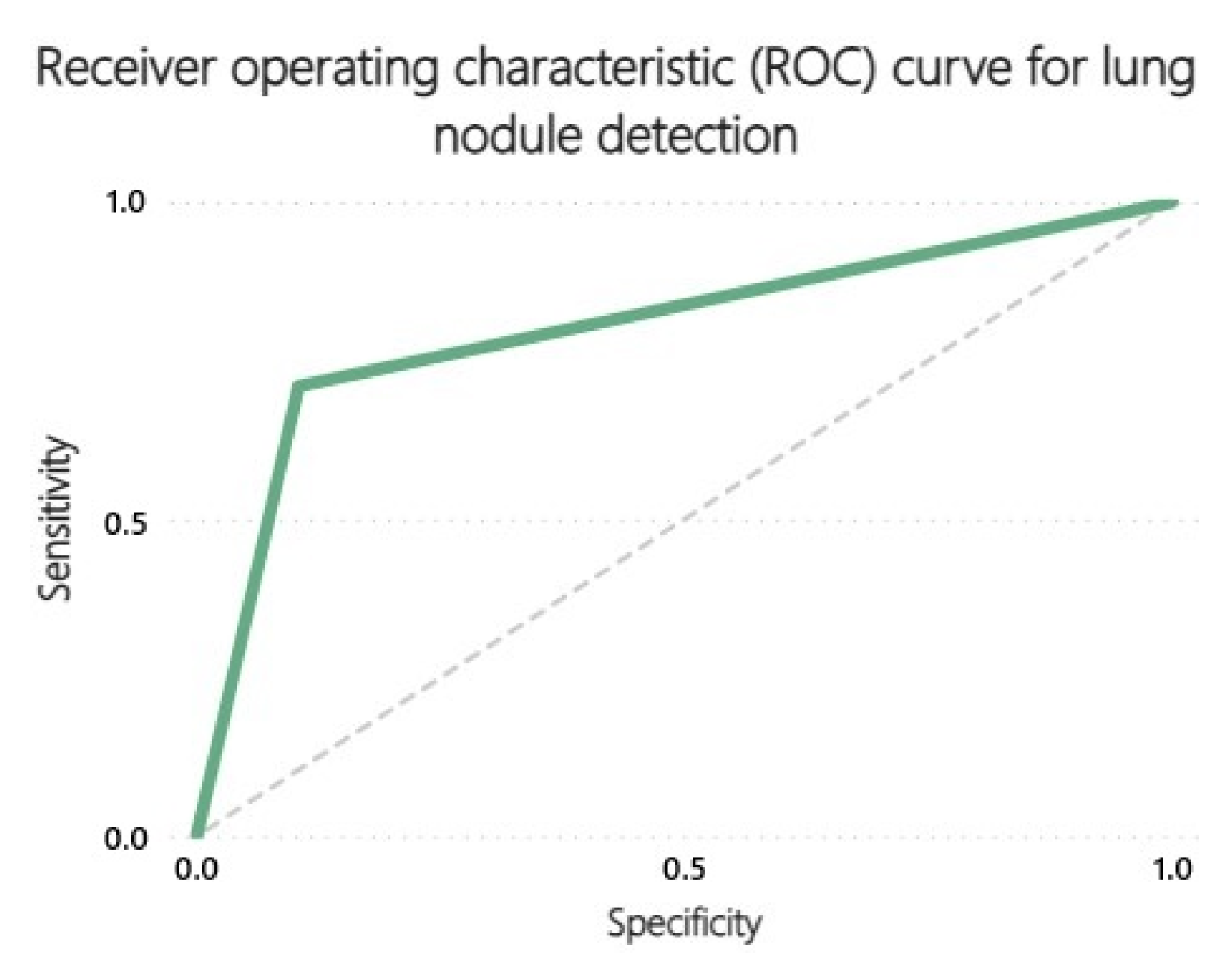

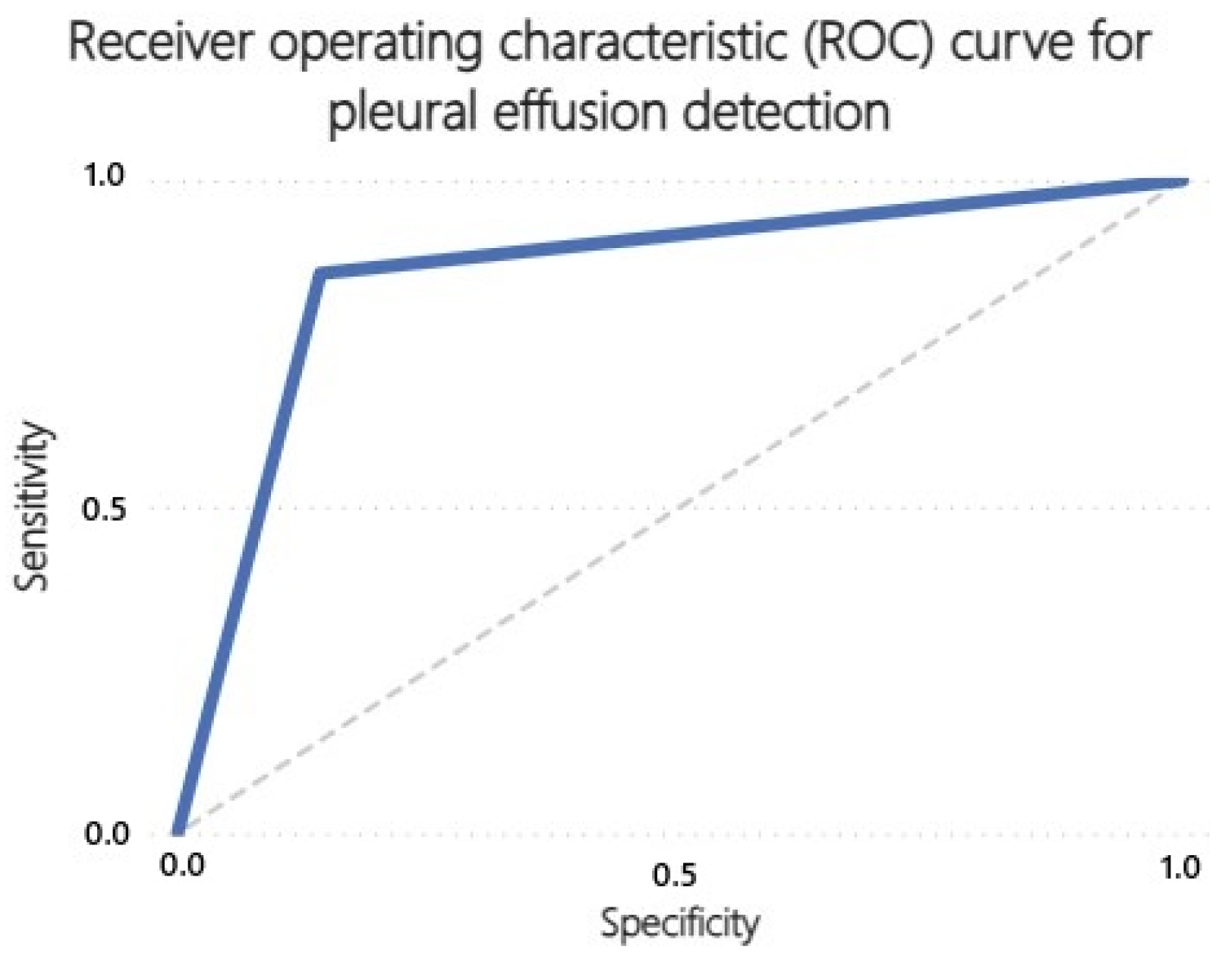

3.1. Specificity and Sensitivity

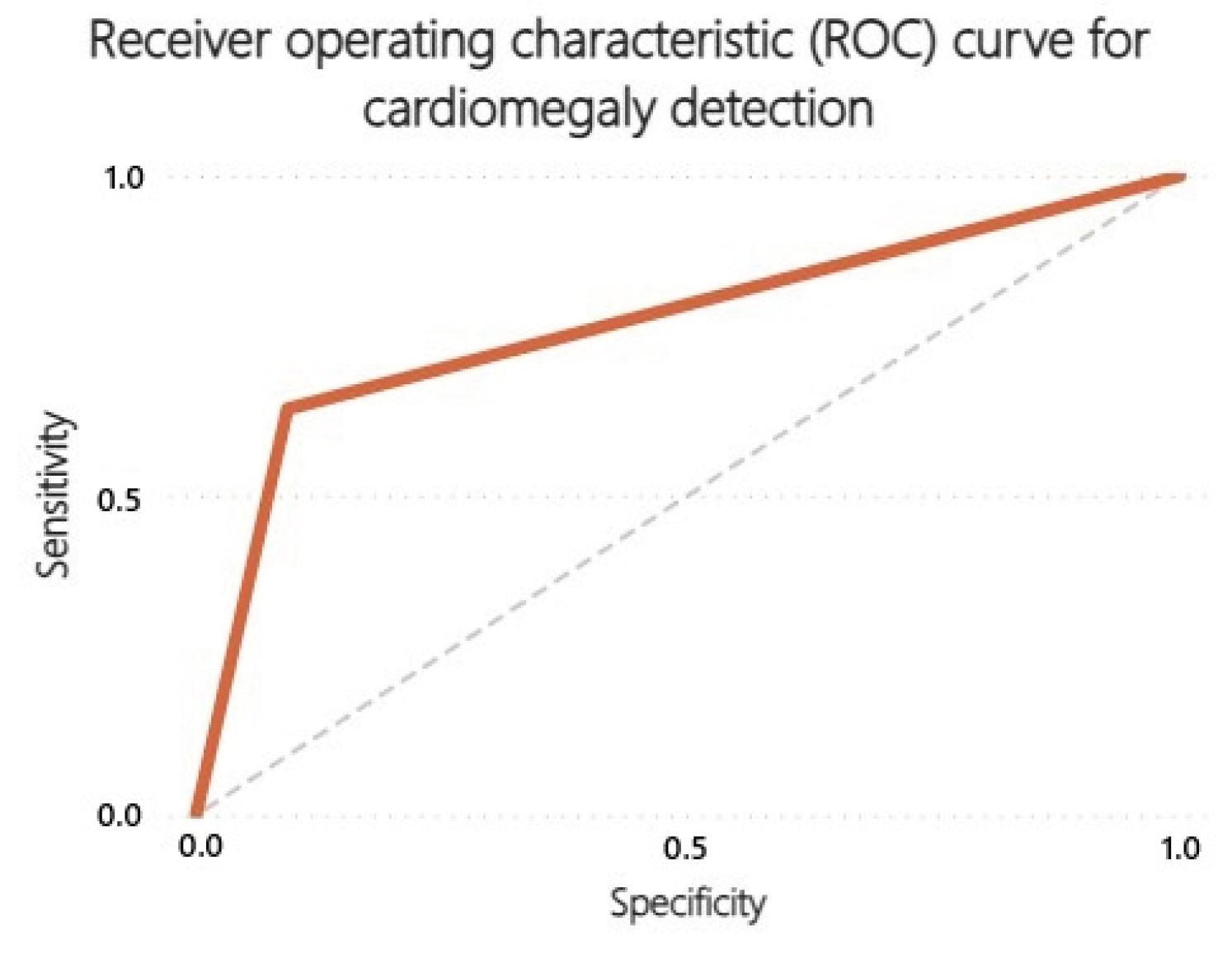

3.2. Receiver Operating Characteristics (ROC) Curves

4. Discussion

Limitations

Future Research

5. Conclusions

Supplementary Materials

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| AI | Artificial Intelligence |
| ROC | Receiver Operating Characteristic |
| AUC | Area Under the Curve |
| PA | Posteroanterior |
| AP | Anteroposterior |
| PPV | Positive Predictive Value |
| NPV | Negative Predictive Value |
| FDA | U.S. Food and Drug Administration |
| CNNs | Deep Convolutional Neural Networks |
| CI | Confidence Interval |
| CTR | Cardiothoracic Ratio |

References

- Celik, A.; Surmeli, A.O.; Demir, M.; Esen, K.; Camsari, A. The diagnostic value of chest X-ray scanning by the help of Artificial Intelligence in Heart Failure (ART-IN-HF). Clin Cardiol. 2023, 46, 1562–1568.

- Mahboub, B.; Tadepalli, M.; Raj, T.; Santhanakrishnan, R.; Hachim, M.; Bastaki, U.; Hamoudi, R.; Haider, E.; Alabousi, A. Identifying malignant nodules on chest X-rays. A validation study of radiologist versus artificial intelligence diagnostic accuracy. Adv Biomed Health Sci. 2022, 1, 137–143.

- Rohan, K.; Anupama, R.; Shivaraj, K. Artificial Intelligence in Radiology: Augmentation, Not Replacement. Cureus 2025, 6, e86247.

- Homayounieh, F.; Digumarthy, S.; Ebrahimian, S.; Rueckel, J.; Hoppe, B.F.; Sabel, B.O. An Artificial Intelligence–Based Chest X-ray Model on Human Nodule Detection Accuracy From a Multicenter Study. JAMA Netw Open. 2021, 4, e2141096.

- Niehoff, J.H.; Kalaitzidis, J.; Kroeger, J.R.; Schoenbeck, D.; Borggrefe, J.; Michael, A.E. Evaluation of the clinical performance of an AI-based application for the automated analysis of chest X-rays. Sci Rep. 2023, 13, 3680.

- Achour, N.; Zapata, T.; Saleh, Y.; Pierscionek, B.; Azzopardi-Muscat, N. The role of AI in mitigating the impact of radiologist shortages: A systematized review. Health Technol. 2025, 15, 489–501.

- Queralt-Miró, C.; Vidal-Alaball, J.; Fuster-Casanovas, A.; Escalé-Besa, A. Real-world testing of an artificial intelligence algorithm for the analysis of chest X-rays in primary care settings. Sci Rep. 2024, 14, 5199.

- García-García, J.A.; Reding-Bernal, A.; López-Alvarenga, J.C. Cálculo del tamaño de la muestra en investigación en educación médica. Inv Ed Med. 2013, 2, 217–224.

- Zaki, H.A.; Albaroudi, B.; Shaban, E.E.; Shaban, A.; Elgassim, M.; Almarri, N.D. Advancement in pleura effusion diagnosis: A systematic review and meta-analysis of point-of-care ultrasound versus radiographic thoracic imaging. Ultrasound J. 2024, 16.

- Blake, S.R.; Das, N.; Tadepalli, M.; Reddy, B.; Singh, A.; Agrawal, R. Using Artificial Intelligence to Stratify Normal versus Abnormal Chest X-rays: External Validation of a Deep Learning Algorithm at East Kent Hospitals University NHS Foundation Trust. Diagnostics 2023, 13, 3408.

- Cerda, J.; Cifuentes, L. Uso de curvas ROC en investigación clínica: Aspectos teórico-prácticos. Revista chilena de infectología 2012, 29, 138–141.

- Cerda, L.J.; Villarroel Del, P.L. Evaluación de la concordancia inter-observador en investigación pediátrica: Coeficiente de Kappa. Revista chilena de pediatría 2008, 79, 54–58.

- Qure AI | AI assistance for Accelerated Healthcare. Available online: https://www.qure.ai/ (accessed on 24 November 2025).

- Nam, J.G.; Park, S.; Hwang, E.J.; Lee, J.H.; Jin, K.N.; Lim, K.Y. Development and Validation of Deep Learning-based Automatic Detection Algorithm for Malignant Pulmonary Nodules on Chest Radiographs. Radiology 2019, 290, 218–228.

- Schalekamp, S.; van Ginneken, B.; Koedam, E.; Snoeren, M.M.; Tiehuis, A.M.; Wittenberg, R. Computer-aided detection improves detection of pulmonary nodules in chest radiographs beyond the support by bone-suppressed images. Radiology 2014, 272, 252–261.

- Litjens, G.; Kooi, T.; Bejnordi, B.E.; Setio, A.A.A.; Ciompi, F.; Ghafoorian, M. A survey on deep learning in medical image analysis. Med Image Anal. 2017, 42, 60–88.

- Kufel, J.; Czogalik, Ł.; Bielówka, M.; Magiera, M.; Mitręga, A.; Dudek, P. Measurement of Cardiothoracic Ratio on Chest X-rays Using Artificial Intelligence-A Systematic Review and Meta-Analysis. J Clin Med. 2024, 13, 4659.

- American College of Radiology. Available online: https://www.acr.org/Data-Science-and-Informatics/AI-in-Your-Practice/AI-Use-Cases/Use-Cases/Cardiomegaly-Detection (accessed on 17 November 2025).

- Govindarajan, A.; Govindarajan, A.; Tanamala, S.; Chattoraj, S.; Reddy, B.; Agrawal, R. Role of an Automated Deep Learning Algorithm for Reliable Screening of Abnormality in Chest Radiographs: A Prospective Multicenter Quality Improvement Study. Diagnostics (Basel) 2022, 12, 2724.

- Broder, J. Imaging the Chest: The Chest Radiograph. Diagnostic Imaging for the Emergency Physician 2011, 185–296.

| Radiological Sign | True Positives | True Negatives | False Positives | False Negatives |
|---|---|---|---|---|
| Pulmonary nodule | 22 | 197 | 23 | 9 |
| Cardiomegaly | 54 | 127 | 13 | 31 |
| Pleural effusion | 42 | 151 | 25 | 7 |
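The counts in this table are sufficient to derive the usual diagnostic accuracy measures. The following Python sketch is illustrative only and is not the authors' analysis code; the names `Counts`, `COUNTS`, and `diagnostic_metrics` are hypothetical. It assumes the standard definitions: sensitivity = TP/(TP+FN), specificity = TN/(TN+FP), PPV = TP/(TP+FP), and NPV = TN/(TN+FN).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Counts:
    """Cells of a 2x2 table: AI output versus the reference standard."""
    tp: int  # true positives
    tn: int  # true negatives
    fp: int  # false positives
    fn: int  # false negatives


# Counts copied from the table above (hypothetical variable name).
COUNTS = {
    "Pulmonary nodule": Counts(tp=22, tn=197, fp=23, fn=9),
    "Cardiomegaly": Counts(tp=54, tn=127, fp=13, fn=31),
    "Pleural effusion": Counts(tp=42, tn=151, fp=25, fn=7),
}


def diagnostic_metrics(c: Counts) -> dict[str, float]:
    """Return sensitivity, specificity, PPV and NPV for one 2x2 table."""
    return {
        "sensitivity": c.tp / (c.tp + c.fn),
        "specificity": c.tn / (c.tn + c.fp),
        "ppv": c.tp / (c.tp + c.fp),
        "npv": c.tn / (c.tn + c.fn),
    }


if __name__ == "__main__":
    for sign, counts in COUNTS.items():
        rounded = {k: round(v, 2) for k, v in diagnostic_metrics(counts).items()}
        print(sign, rounded)
```

Note that PPV and NPV depend on the prevalence of each finding in the sample, so values computed this way may not carry over to populations with a different case mix.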

| Radiological Sign | Sensitivity | Specificity | PPV | NPV | Cohen’s Kappa |
|---|---|---|---|---|---|
| Pulmonary nodule | 0.71 | 0.90 | 0.49 | 0.96 | 0.51 |
| Cardiomegaly | 0.64 | 0.91 | 0.81 | 0.80 | 0.57 |
| Pleural effusion | 0.86 | 0.86 | 0.63 | 0.96 | 0.63 |
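As a cross-check on the agreement column, Cohen's kappa for a binary finding can be computed directly from the four cells of a 2x2 table as κ = (p_o − p_e)/(1 − p_e), where p_o is the observed agreement and p_e the agreement expected by chance from the marginal totals. The sketch below assumes this standard two-rater formulation; the function name `cohens_kappa` and the dictionary `TABLES` are hypothetical, and the counts are those reported for each radiological sign in this article.

```python
def cohens_kappa(tp: int, tn: int, fp: int, fn: int) -> float:
    """Cohen's kappa for two binary raters, given the 2x2 cell counts."""
    n = tp + tn + fp + fn
    # Observed agreement: both raters positive or both negative.
    observed = (tp + tn) / n
    # Chance agreement from the marginal totals of each rater.
    expected = ((tp + fp) * (tp + fn) + (tn + fn) * (tn + fp)) / n**2
    return (observed - expected) / (1 - expected)


# Reported true/false positive and negative counts (hypothetical variable name).
TABLES = {
    "Pulmonary nodule": dict(tp=22, tn=197, fp=23, fn=9),
    "Cardiomegaly": dict(tp=54, tn=127, fp=13, fn=31),
    "Pleural effusion": dict(tp=42, tn=151, fp=25, fn=7),
}

if __name__ == "__main__":
    for sign, cells in TABLES.items():
        print(f"{sign}: kappa = {cohens_kappa(**cells):.2f}")
```

Under these assumptions the computed values round to the kappa figures listed in the table, which suggests the reported metrics and counts are mutually consistent.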

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.