6. Discussion

6.1. Theoretical Implications

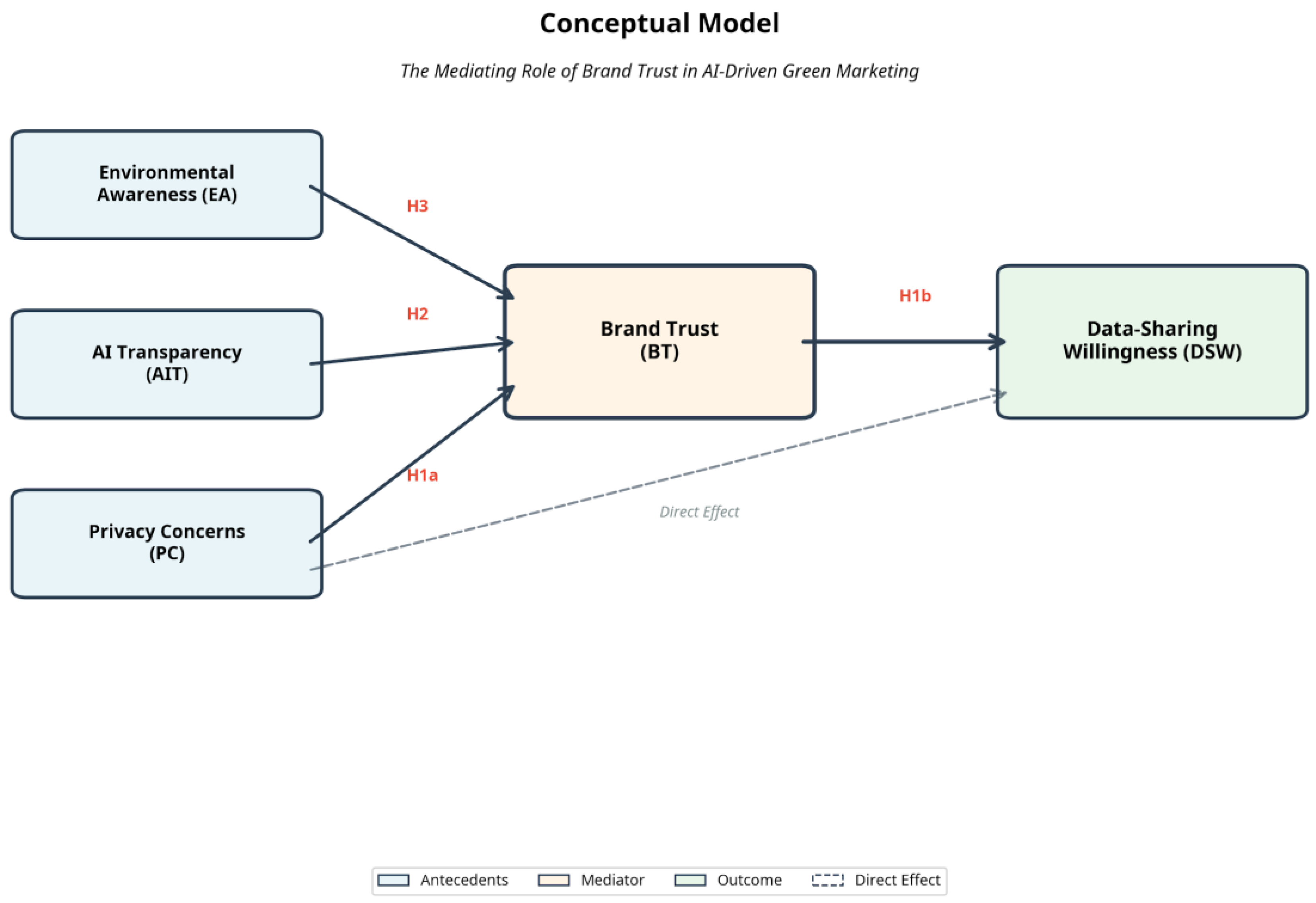

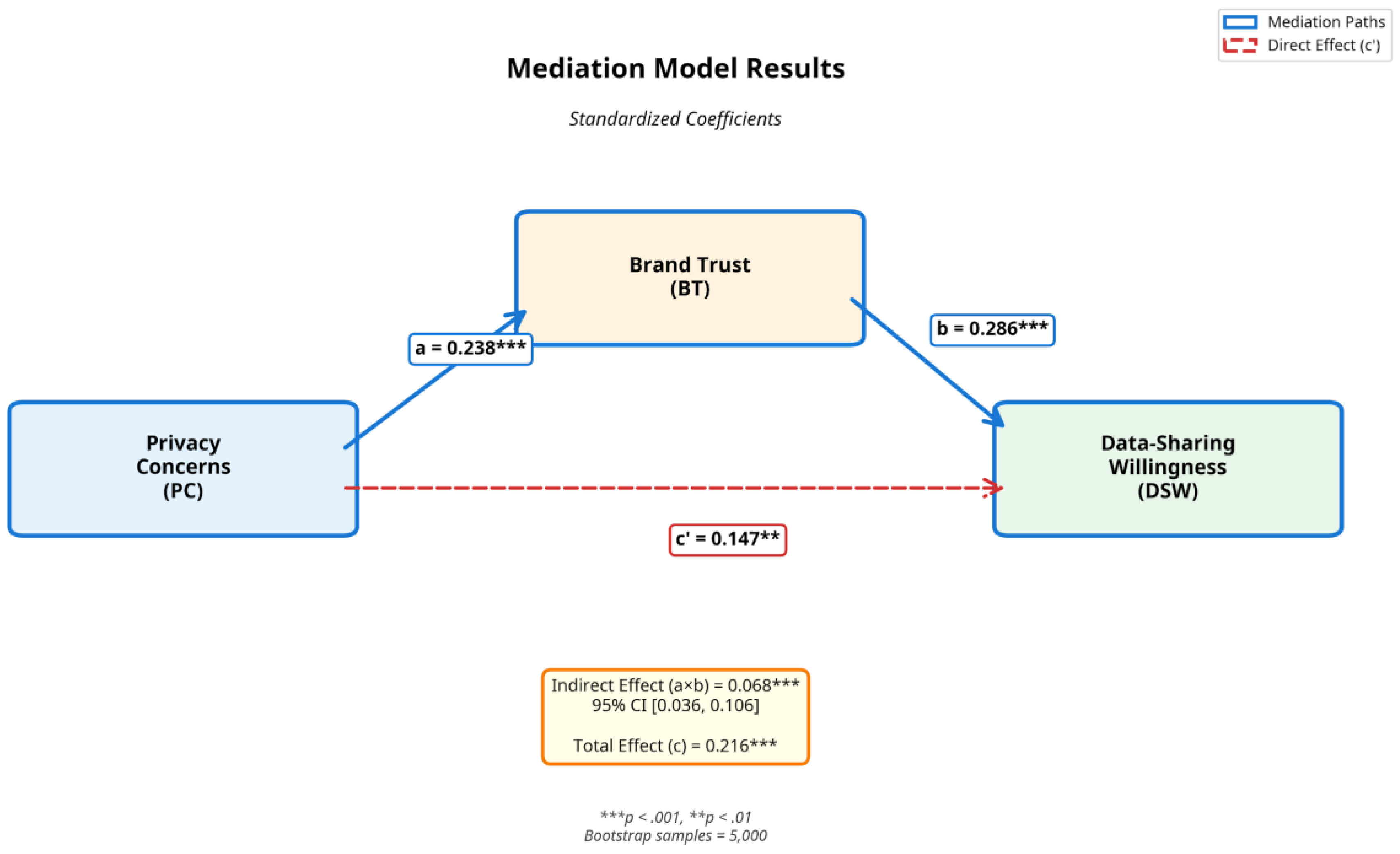

This study makes several important theoretical contributions to the literature on privacy, trust, and green marketing. First, we extend privacy calculus theory by demonstrating that in value-aligned contexts, the relationship between privacy concern and data sharing operates through trust-building mechanisms rather than simple deterrence. Our finding that privacy concern positively predicts data-sharing willingness, both directly and indirectly through brand trust, challenges traditional assumptions that privacy concerns universally reduce disclosure intentions. Instead, our results suggest that privacy-conscious consumers adopt strategic, trust-dependent sharing behaviors, selectively engaging with brands they perceive as trustworthy and environmentally authentic.

This perspective offers a more nuanced understanding of privacy calculus in complex, value-laden contexts. Rather than viewing privacy concern and data sharing as opposing forces, our framework recognizes that privacy-conscious consumers may be more discerning and engaged when they identify organizations that align with their values. In green marketing contexts, where data sharing is framed as contributing to collective sustainability goals, privacy-conscious consumers may perceive greater value alignment and thus be more willing to engage—provided they trust the organization.

Moreover, our results highlight the importance of authentic environmental commitment in building trust. The positive relationship between environmental awareness and brand trust suggests that environmentally conscious consumers are adept at distinguishing genuine sustainability efforts from greenwashing. Organizations that engage in superficial green marketing without substantive environmental action risk losing trust and alienating privacy-conscious consumers. Authentic commitment, demonstrated through verifiable environmental impacts, third-party certifications, and transparent reporting, is essential for building the trust necessary to enable data sharing.

The role of AI transparency in building trust deserves particular attention. Our finding that AI transparency positively predicts brand trust suggests that consumers value understanding how AI systems work and how their data is used. This has implications for the design of AI-driven marketing systems. Rather than treating AI as a black box, organizations should invest in explainable AI technologies that provide clear, accessible information about algorithmic decision-making. This transparency can take various forms, including plain-language explanations of data use, visualizations of personalization processes, and user-controllable privacy settings.

This perspective challenges the deficit model of privacy-conscious consumers, which views them as obstacles to data-driven innovation. Instead, our findings suggest that privacy-conscious consumers can be valuable partners in sustainability initiatives, provided that organizations invest in building trust through transparency and authentic commitment. These consumers may become more engaged advocates for brands that respect their values, amplifying the impact of green marketing initiatives.

Our findings also have implications for the ongoing debate about the privacy paradox. Rather than viewing the positive relationship between privacy concerns and data-sharing willingness as paradoxical, our results suggest it reflects strategic, trust-dependent behavior. Privacy-conscious consumers are not irrational or contradictory; they are selective and discerning. They channel their concerns into trust-building activities, seeking out brands that demonstrate authentic environmental commitment and transparent data practices. Once trust is established, they are willing to share data for purposes they perceive as aligned with their values.

Second, our identification of brand trust as a partial mediator between privacy concern and data-sharing willingness provides insight into the mechanisms through which privacy-conscious consumers engage with data-intensive initiatives. The mediation analysis reveals that privacy concern enhances brand trust (path a), which in turn facilitates data sharing (path b). This sequential process suggests that privacy-conscious consumers channel their concerns into trust-building activities, seeking out brands with transparent data practices and authentic environmental commitments. Once trust is established, it functions as an enabler of engagement, allowing consumers to participate in AI-driven green marketing initiatives they perceive as aligned with their sustainability values.
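To make the path logic concrete, the following minimal Python sketch shows how an indirect effect (a × b) and a direct effect (c′) can be estimated with two ordinary least squares regressions and a percentile bootstrap. It is an illustration only and not the estimation procedure used in this study: the function names, the simulated data, and the coefficient values (0.4, 0.5, 0.1) are assumptions chosen for demonstration.

```python
import random
from statistics import fmean


def cov(u, v):
    """Sample covariance of two equal-length sequences."""
    mu, mv = fmean(u), fmean(v)
    return sum((a - mu) * (b - mv) for a, b in zip(u, v)) / (len(u) - 1)


def mediation_paths(x, m, y):
    """Return (a, b, c_prime) for a simple mediation model.

    a       : slope of the mediator m regressed on x
    b       : coefficient of m in the regression y ~ x + m
    c_prime : coefficient of x in the same regression (direct effect)
    """
    sxx, smm = cov(x, x), cov(m, m)
    sxm, sxy, smy = cov(x, m), cov(x, y), cov(m, y)
    a = sxm / sxx
    det = sxx * smm - sxm ** 2          # determinant of the 2x2 normal equations
    b = (sxx * smy - sxm * sxy) / det
    c_prime = (smm * sxy - sxm * smy) / det
    return a, b, c_prime


def bootstrap_indirect(x, m, y, n_boot=1000, seed=0):
    """Percentile 95% bootstrap interval for the indirect effect a * b."""
    rng = random.Random(seed)
    n = len(x)
    estimates = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]   # resample cases with replacement
        a, b, _ = mediation_paths(
            [x[i] for i in idx], [m[i] for i in idx], [y[i] for i in idx]
        )
        estimates.append(a * b)
    estimates.sort()
    return estimates[int(0.025 * n_boot)], estimates[int(0.975 * n_boot) - 1]


def simulate(n=500, a=0.4, b=0.5, c_prime=0.1, seed=42):
    """Generate hypothetical data; the coefficients are NOT the study's estimates."""
    rng = random.Random(seed)
    x = [rng.gauss(0, 1) for _ in range(n)]                       # privacy concern
    m = [a * xi + rng.gauss(0, 1) for xi in x]                    # brand trust
    y = [c_prime * xi + b * mi + rng.gauss(0, 1) for xi, mi in zip(x, m)]  # willingness
    return x, m, y


if __name__ == "__main__":
    x, m, y = simulate()
    a, b, c_prime = mediation_paths(x, m, y)
    low, high = bootstrap_indirect(x, m, y)
    print(f"a={a:.3f}  b={b:.3f}  direct c'={c_prime:.3f}  indirect a*b={a * b:.3f}")
    print(f"95% bootstrap interval for a*b: [{low:.3f}, {high:.3f}]")
```

In this framing, partial (complementary) mediation corresponds to a bootstrap interval for a × b that excludes zero together with a direct effect c′ that remains nonzero and carries the same sign.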

6.1.2. Interpretation of Complementary Mediation

The complementary mediation pattern revealed in our analysis demonstrates that privacy-conscious consumers employ two distinct decision-making pathways when considering whether to share personal data for green marketing purposes:

Pathway 1: Trust-Dependent (Affective) Route (Indirect Effect): Privacy-conscious consumers evaluate brand trustworthiness through multiple signals: AI transparency, environmental authenticity, and demonstrated commitment to data stewardship. Once trust is established, consumers use this relationship as a foundation for data-sharing decisions. This affective pathway captures the role of reputation-building and relationship stability over time.

Pathway 2: Efficacy-Based (Cognitive) Route (Direct Effect): Independently of accumulated trust, privacy-conscious consumers with high digital literacy and environmental awareness assess whether the specific data-sharing request aligns with their values and whether they can rationally manage associated risks. These consumers recognize that sustainable AI-driven recommendations require data access and rationally elect to share data when they perceive authentic environmental commitment and perceive themselves as competent in managing privacy risks. This cognitive pathway captures immediate, context-specific evaluation of value alignment.

The existence of both pathways suggests that interventions designed to increase data-sharing willingness among privacy-conscious consumers need not rely solely on trust-building in the traditional sense. Instead, organizations can also promote sharing by: (a) providing transparency about how data will be used to advance sustainability, (b) empowering consumers with knowledge about privacy management, and (c) clearly communicating the value alignment between consumer and organizational missions. These complementary mechanisms mean that even consumers who have not yet developed strong brand relationships may be willing to share data if they perceive competence in managing risks and genuine alignment with green goals.

Third, our findings regarding AI transparency and environmental awareness as antecedents of brand trust contribute to the understanding of trust-building in digital marketing contexts. The positive effects of both constructs on brand trust indicate that consumers evaluate trustworthiness along multiple dimensions: technological transparency (how AI systems work and how data is used) and value alignment (authentic environmental commitment). This multi-dimensional conceptualization of trust-building has implications for both theory and practice, suggesting that effective trust-building strategies must address both instrumental concerns (data governance) and expressive concerns (value alignment).

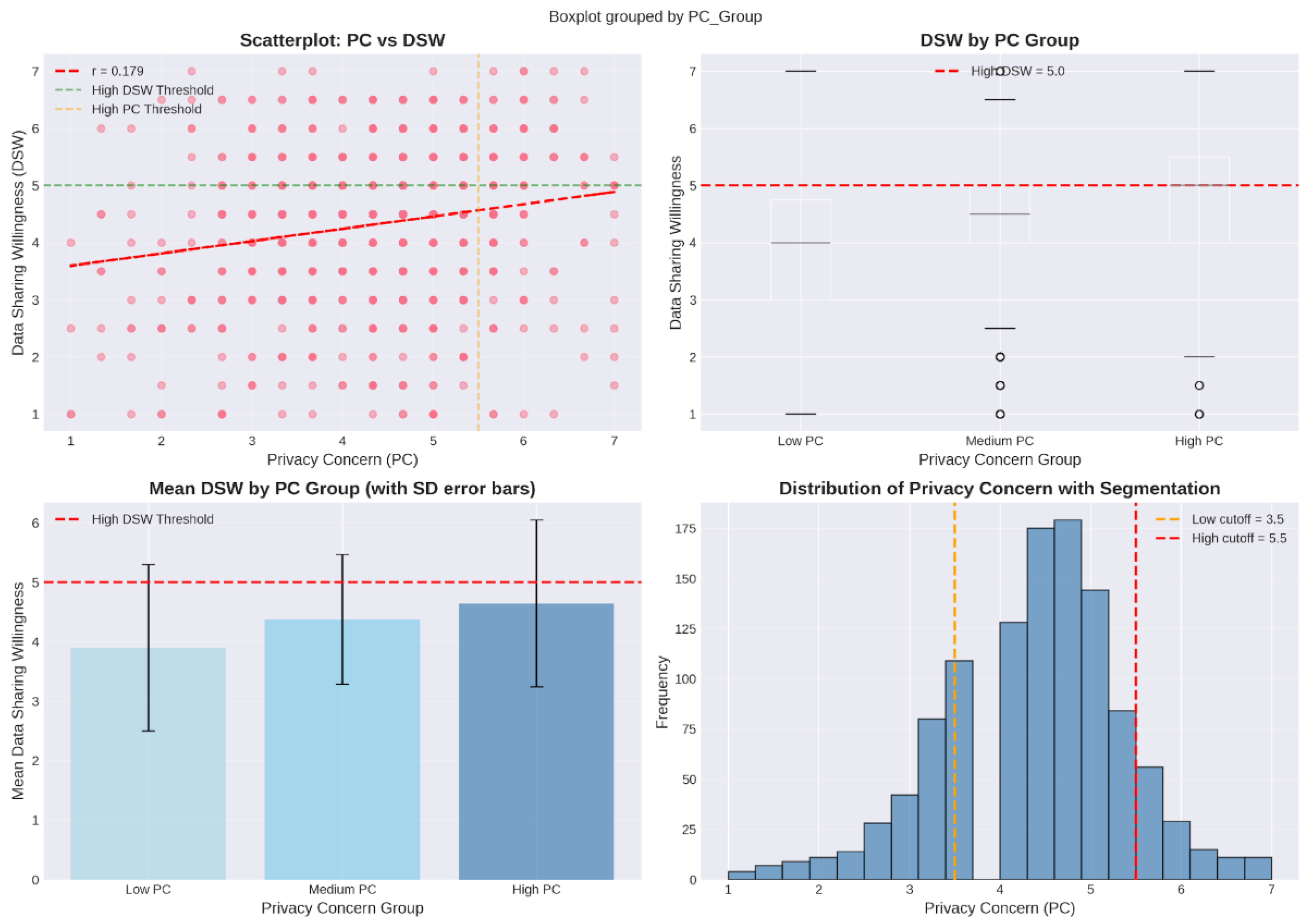

Fourth, our segmentation analysis provides important clarification regarding the nature of privacy-related behavior in value-aligned contexts. While we observed that high-privacy-concern consumers exhibited elevated data-sharing willingness compared to low-concern consumers, their willingness remained moderate (M = 4.64 on a 7-point scale) rather than paradoxically high. This pattern suggests that privacy-conscious consumers are not abandoning their concerns but rather engaging strategically with trusted brands. This finding challenges binary conceptualizations of privacy-protective behavior and supports a more graduated understanding of privacy management strategies.

6.2. Alternative Explanations: Digital Literacy as a Potential Confound

The positive direct effect of privacy concern on data-sharing willingness (β = .109, p = .010) warrants scrutiny regarding potential confounding variables. Specifically, we acknowledge that consumers with high privacy awareness may also possess greater digital literacy—competence in understanding data systems, AI algorithms, and personal risk management strategies. If digital literacy simultaneously predicts both high privacy concern (through informed awareness of risks) and high data-sharing willingness (through confidence in one’s ability to navigate risks), then the observed direct effect could partially reflect a confounding pathway.

Unfortunately, our survey instrument did not include explicit measures of digital literacy or privacy self-efficacy. We recommend that future research incorporate validated scales for these constructs (e.g., the Digital Literacy Scale; Jones-Jie Sun et al., 2020) to decompose the mechanisms underlying the positive direct effect. Nevertheless, the robustness of our mediation model across demographic subgroups (see Table X) and the theoretical coherence of the privacy self-efficacy explanation suggest that digital literacy, while a plausible confound, is unlikely to fully account for the observed relationship.

In our sample, college-educated respondents (68.9%) may possess higher baseline digital literacy; however, attrition analysis (see Section 4.3) revealed no significant differences in education levels between included and excluded participants (χ² = 3.45, p = .485), suggesting that cleaning procedures did not systematically retain high-literacy respondents. This lends cautious support to the conclusion that digital literacy alone does not fully explain the direct effect.
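For readers who wish to check the reported attrition statistic, the sketch below computes the upper-tail probability of a chi-square statistic using the closed form that holds for even degrees of freedom. The degrees of freedom are not stated in this section; df = 4 (for example, five education categories compared across two groups) is an assumption made here purely for illustration, and the function name is hypothetical.

```python
import math


def chi2_sf_even_df(x, df):
    """Upper-tail probability P(X >= x) for a chi-square variable with even df.

    For even df the survival function has the closed form
    exp(-x/2) * sum_{k=0}^{df/2 - 1} (x/2)^k / k!
    """
    if df <= 0 or df % 2:
        raise ValueError("this closed form is valid only for positive even df")
    half = x / 2.0
    term, total = 1.0, 1.0               # k = 0 term of the series
    for k in range(1, df // 2):
        term *= half / k                 # builds (x/2)^k / k! incrementally
        total += term
    return math.exp(-half) * total


if __name__ == "__main__":
    # df = 4 is an assumption; the reported statistic is chi-square = 3.45.
    print(round(chi2_sf_even_df(3.45, 4), 3))
```

Under the assumed df = 4, the computed tail probability is close to the reported p = .485, which is consistent with the conclusion of no significant difference in education levels.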

6.3. Practical Implications

Our findings offer several actionable insights for marketers, policymakers, and technology designers engaged in AI-driven green marketing initiatives.

For Marketers: First, organizations should prioritize transparency about AI systems and data practices. Our finding that AI transparency positively predicts brand trust suggests that clear communication about how algorithms process personal data, what purposes data serves, and how privacy is protected can enhance consumer confidence. Marketers should develop accessible explanations of AI-driven personalization mechanisms, avoiding technical jargon while providing sufficient detail to enable informed decision-making.

Second, authentic environmental commitment is essential for building trust with privacy-conscious consumers. Our finding that environmental awareness enhances brand trust indicates that consumers evaluate the credibility of environmental claims. Organizations should ensure that green marketing initiatives reflect genuine sustainability efforts rather than superficial greenwashing. Transparent reporting of environmental impacts, third-party certifications, and concrete sustainability metrics can enhance perceived authenticity.

In conclusion, this study demonstrates that privacy concerns do not universally deter data sharing in value-aligned contexts. Instead, brand trust mediates the relationship between privacy concerns and data-sharing willingness, enabling privacy-conscious consumers to engage strategically with AI-driven green marketing initiatives. By investing in transparency, authentic environmental commitment, and trust-building mechanisms, organizations can transform privacy-conscious consumers from perceived obstacles into valuable partners in sustainability efforts. As AI continues to reshape marketing practices, understanding the nuanced interplay between privacy, trust, and value alignment will be essential for creating data-driven systems that serve both business objectives and societal goals.

Moreover, our study highlights the potential for collaborative approaches to sustainability that leverage consumer data while respecting privacy. Rather than viewing privacy and sustainability as competing values, organizations can frame data sharing as a form of collective action toward environmental goals. By transparently communicating how consumer data contributes to sustainability outcomes—such as optimizing supply chains, reducing waste, or personalizing eco-friendly product recommendations—organizations can align data collection with consumers' environmental values, thereby facilitating trust-based data sharing.

The findings also have implications for consumer education and empowerment. As AI-driven personalization becomes more prevalent, consumers need better tools and knowledge to make informed decisions about data sharing. This includes understanding what data is collected, how it is used, and what benefits and risks are associated with sharing. Organizations, policymakers, and consumer advocacy groups should collaborate to develop educational resources and decision-support tools that empower consumers to navigate the privacy-utility trade-off in AI-driven contexts.

Additionally, organizations should consider implementing privacy-enhancing technologies (PETs) that enable data-driven personalization while minimizing privacy risks. Techniques such as differential privacy, federated learning, and secure multi-party computation can allow organizations to derive insights from consumer data without exposing individual-level information. By adopting PETs, organizations can demonstrate their commitment to privacy protection, thereby building trust with privacy-conscious consumers.
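As one illustration of the privacy-enhancing techniques named above, the following minimal sketch applies the Laplace mechanism, a standard building block of differential privacy, to a simple counting query. The function name, the example data, and the privacy budget (epsilon = 0.5) are assumptions for demonstration and do not describe any system examined in this study.

```python
import random


def private_count(flags, epsilon, rng):
    """Release a count of True values with epsilon-differential privacy.

    A counting query changes by at most 1 when one person is added or
    removed (sensitivity 1), so Laplace noise with scale 1/epsilon suffices.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    true_count = sum(1 for f in flags if f)
    scale = 1.0 / epsilon
    # The difference of two independent Exp(1) draws is Laplace(0, 1).
    noise = scale * (rng.expovariate(1.0) - rng.expovariate(1.0))
    return true_count + noise


if __name__ == "__main__":
    rng = random.Random(7)
    # Hypothetical data: whether each of 100 consumers bought an eco-labelled product.
    purchases = [True] * 30 + [False] * 70
    print(private_count(purchases, epsilon=0.5, rng=rng))
```

Smaller values of epsilon add more noise and therefore offer stronger protection at the expense of accuracy, so the choice of budget is itself a design decision that organizations could disclose as part of their transparency efforts.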

For technology designers, our findings underscore the importance of privacy by design principles. AI-driven marketing systems should be designed with transparency and user control as core features rather than afterthoughts. This includes providing clear explanations of how algorithms work, offering granular privacy controls, and enabling users to audit and correct the data used for personalization. Such design choices can enhance trust and facilitate data sharing among privacy-conscious consumers.

Third, marketers should recognize that privacy-conscious consumers may be valuable engagement partners rather than obstacles to data-driven marketing. Our finding that privacy concern positively predicts data-sharing willingness (when mediated by trust) suggests that privacy-conscious consumers, when they identify trustworthy brands, may be more engaged and discerning participants in sustainability initiatives. Organizations should view privacy concerns as opportunities for trust-building rather than barriers to overcome.

For Policymakers: Our findings highlight the importance of regulatory frameworks that enable trust-building while protecting consumer privacy rights. Policies that mandate transparency about AI systems and data practices, such as the EU’s AI Act, align with consumer preferences and may facilitate rather than hinder engagement with beneficial data-driven services. Policymakers should consider how regulations can support trust-building mechanisms that enable consumers to make informed decisions about data sharing in value-aligned contexts.

Additionally, our findings suggest that privacy regulations should account for contextual factors, including the purposes for which data is collected and the perceived value alignment between consumers and organizations. Regulatory frameworks that enable flexible, context-sensitive privacy management may better serve consumer interests than one-size-fits-all approaches.

For Technology Designers: Designers of AI-driven marketing systems should incorporate transparency and user control as core design principles. Our findings suggest that consumers value understanding how AI systems work and how their data is used. User interfaces should provide clear, accessible information about data collection, algorithmic processing, and privacy protections. Additionally, designers should implement granular privacy controls that allow consumers to make nuanced decisions about data sharing, reflecting the strategic, trust-dependent behaviors observed in our study.

6.4. The Green-AI Paradox: Implications for Authentic Sustainability

Our findings demonstrate that consumers will share data with brands they trust to use that data for green purposes. However, this trust-based framework faces a forthcoming legitimacy challenge: if the AI system itself is energy-intensive, does transparency about algorithmic function suffice, or must transparency extend to carbon disclosure?

Recent carbon accounting standards for AI (WRI, 2023; Luccioni et al., 2023) suggest that organizations should report Scope 3 emissions attributable to AI inference. If a brand deploys a 4-billion-parameter language model to generate personalized sustainability recommendations, and each inference incurs 0.004 kg CO₂, then a campaign reaching 10 million consumers with one inference each accrues 0.004 × 10,000,000 = 40,000 kg CO₂ (40 tonnes) in AI compute emissions. This must be offset against the emissions saved through behavior change to determine “net green impact.”
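The net-impact reasoning can be sketched in a few lines of Python. The per-inference figure and campaign reach are taken from the example above; the adoption rate and the per-adopter saving are hypothetical parameters introduced only to show how a break-even point could be computed, and the function names are illustrative.

```python
def ai_campaign_emissions_kg(kg_co2_per_inference, consumers, inferences_per_consumer=1):
    """Total AI compute emissions for a campaign, in kg CO2."""
    return kg_co2_per_inference * consumers * inferences_per_consumer


def net_green_impact_kg(saved_kg_per_adopter, adoption_rate, consumers, ai_emissions_kg):
    """Emissions avoided through behavior change minus AI compute emissions."""
    return saved_kg_per_adopter * adoption_rate * consumers - ai_emissions_kg


def break_even_saving_kg(ai_emissions_kg, adoption_rate, consumers):
    """Saving each adopting consumer must achieve for the campaign to net to zero."""
    return ai_emissions_kg / (adoption_rate * consumers)


if __name__ == "__main__":
    emissions = ai_campaign_emissions_kg(0.004, 10_000_000)   # figures from the text
    adoption_rate = 0.05                                      # hypothetical assumption
    needed = break_even_saving_kg(emissions, adoption_rate, 10_000_000)
    print(f"AI emissions: {emissions:,.0f} kg CO2")
    print(f"Break-even saving per adopter at {adoption_rate:.0%} adoption: {needed:.2f} kg CO2")
```

Under these assumptions, a campaign is net positive only if the average adopter avoids more emissions than the break-even value, which is the quantity a net-impact disclosure would need to report.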

Our privacy calculus framework, when extended to account for this “carbon transparency,” predicts that privacy-conscious consumers (who may also be environmentally conscious) will demand net-impact disclosure before consenting to data sharing. This represents an important refinement to our findings: trust in green AI may be conditional not only on privacy transparency and environmental authenticity but also on honest accounting of the technological carbon footprint.

Future research should empirically test whether consumers adjust data-sharing willingness upon learning the carbon cost of the AI system; this would refine both privacy calculus theory and green marketing practice.

6.5. Limitations and Future Research Directions

Several limitations of this study suggest directions for future research. First, our cross-sectional design precludes causal inference. While our theoretical framework and mediation analysis suggest that privacy concern influences brand trust, which in turn affects data-sharing willingness, alternative causal sequences are possible. Longitudinal or experimental designs could provide stronger evidence for causal relationships.

Second, our reliance on self-reported data introduces potential biases, including social desirability and hypothetical bias. Respondents’ stated willingness to share data may not accurately reflect their actual behavior when confronted with real data-sharing requests. Future research could employ behavioral measures, such as actual data disclosure in experimental settings, to complement self-report data.

Third, our sample, while geographically diverse, skewed toward higher educational attainment and may not fully represent broader consumer populations. Future research should examine whether the relationships observed in this study generalize to consumers with different demographic characteristics, particularly those with lower digital literacy or environmental awareness.

Fourth, our study focused on green marketing contexts, and it remains unclear whether the observed relationships generalize to other value-aligned domains (e.g., health, education, social justice). Future research could examine whether trust-mediated privacy calculus operates similarly in other contexts where consumers perceive strong value alignment with organizational missions.

Fifth, our measurement of AI transparency relied on general perceptions rather than specific transparency mechanisms (e.g., algorithmic explanations, data dashboards). Future research could examine which specific transparency interventions are most effective for building trust and facilitating data sharing among privacy-conscious consumers.

Finally, our study did not examine potential moderators of the relationships among privacy concern, brand trust, and data-sharing willingness. Individual differences such as privacy literacy, environmental identity, or trust propensity may influence the strength of these relationships. Future research could explore these moderating factors to develop a more comprehensive understanding of privacy-related decision-making in value-aligned contexts.