Submitted:

01 January 2026

Posted:

05 January 2026

Abstract

Keywords:

1. Introduction

2. Glossary and Notation

| Term | Definition |

| CPA | Cost per Acquisition: Spend/Purchases (reporting currency INR). Primary decision metric. |

| CVR | Purchase conversion rate: Purchases/Link Clicks. Inference metric for two-proportion tests. |

| ROAS | Return on Ad Spend: Revenue/Spend (platform-reported). Guardrail, not a decision metric. |

| SRM | Sample Ratio Mismatch: statistically significant deviation from planned traffic split.[4] |

| CUPED | Controlled-experiment Using Pre-Experiment Data; variance reduction using pre-period covariates.[6] |

| Attribution | 7-day click / 1-day view window, applied uniformly across arms for optimization and reporting.[7] |

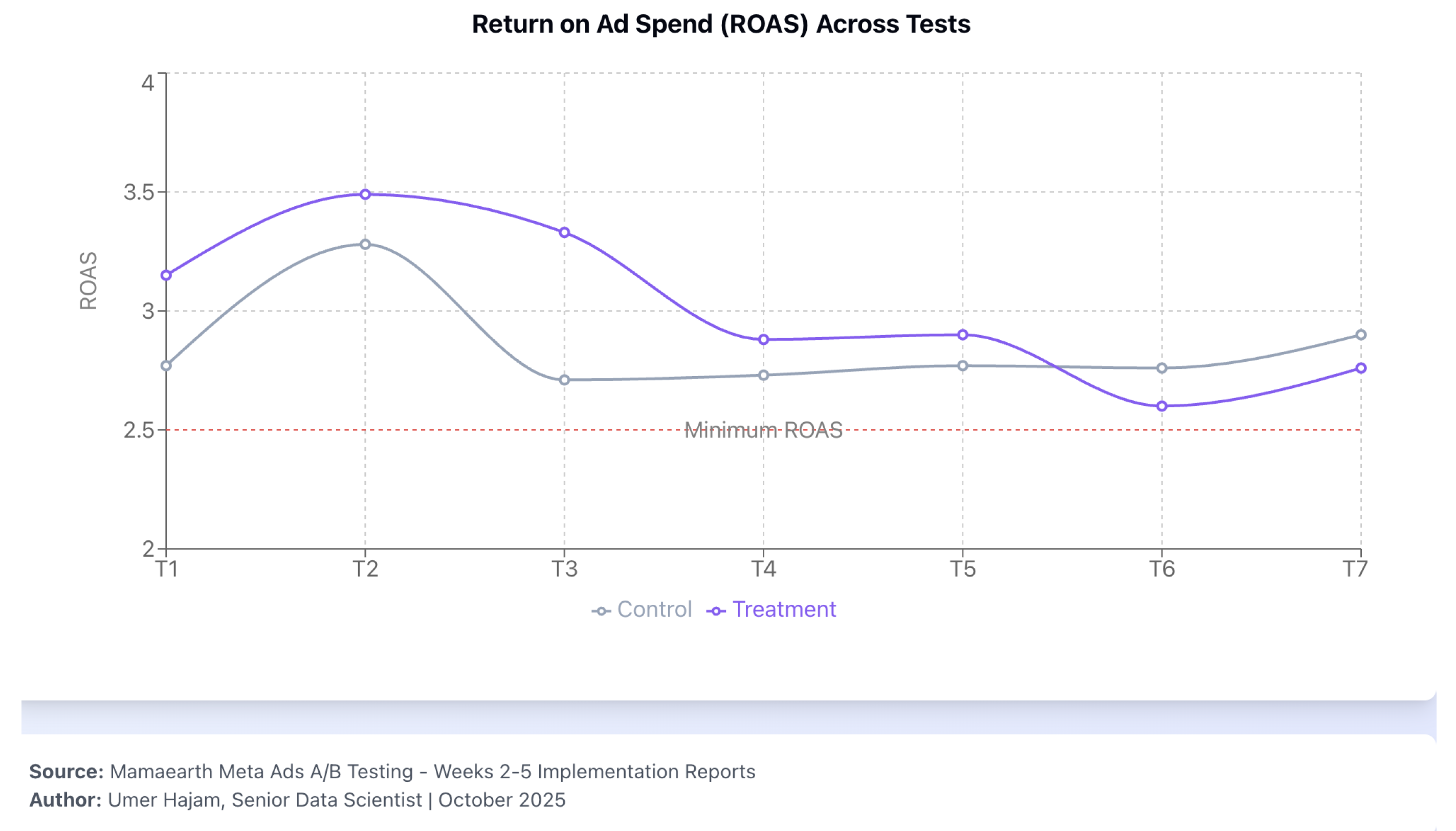

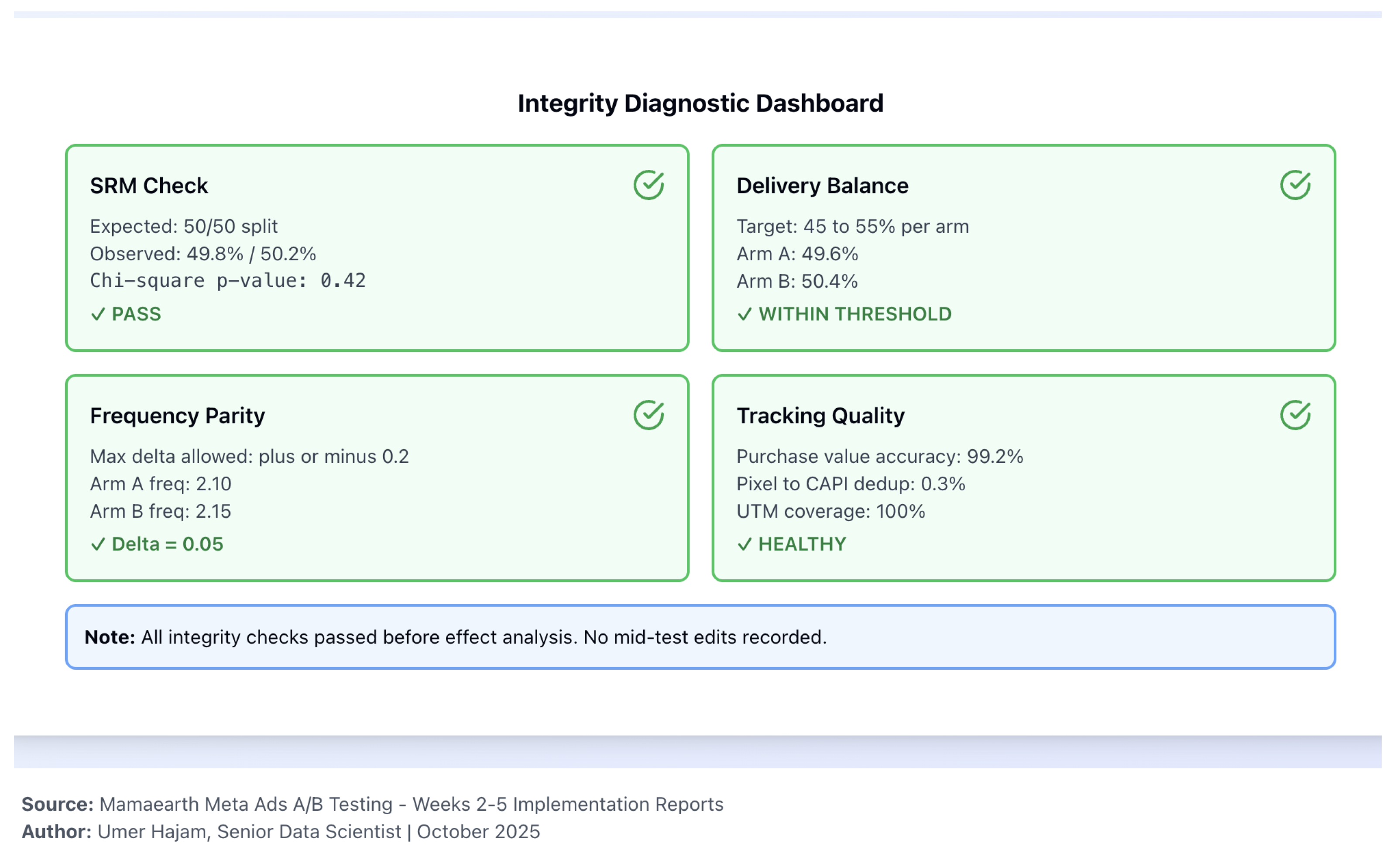

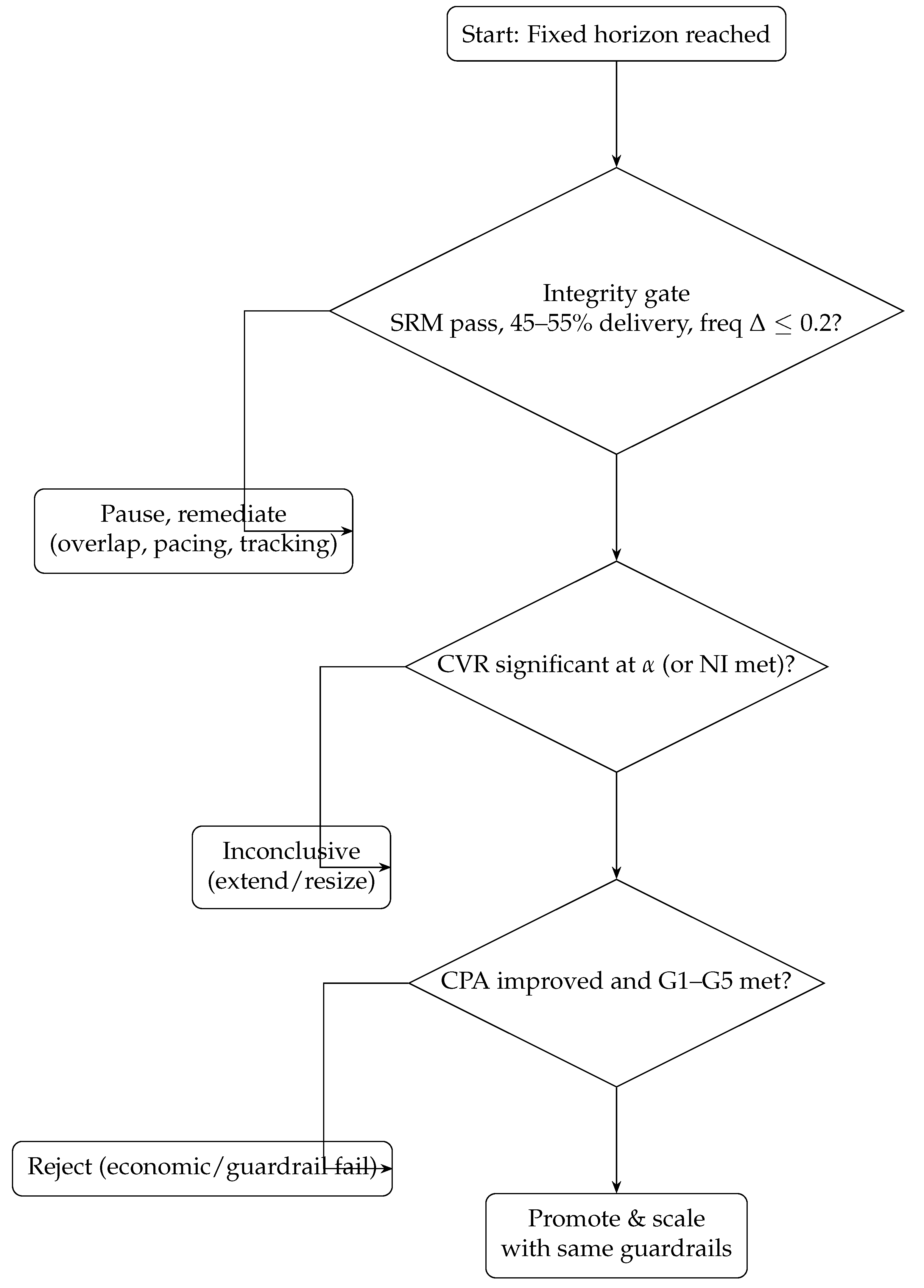

| Guardrails G1–G5 | G1: ROAS threshold; G2: delivery 45–55%; G3: frequency parity ; G4: web vitals (LCP < 2.5 s, CLS < 0.1, INP target);[8] G5: SRM pass (). |
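The glossary metrics and two of the guardrails can be illustrated with a minimal Python sketch. The function names and the example figures are hypothetical. The SRM check is written here as a one-degree-of-freedom chi-square goodness-of-fit test against the planned split, which is a common choice but an assumption about how the check is run; no pass/fail threshold is hard-coded because the glossary does not state one.

```python
import math


def cpa(spend_inr, purchases):
    """Cost per Acquisition: Spend / Purchases."""
    return spend_inr / purchases


def cvr(purchases, link_clicks):
    """Purchase conversion rate: Purchases / Link Clicks."""
    return purchases / link_clicks


def roas(revenue_inr, spend_inr):
    """Return on Ad Spend: Revenue / Spend."""
    return revenue_inr / spend_inr


def delivery_in_band(clicks_a, clicks_b, low=0.45, high=0.55):
    """G2: arm A's share of delivery must fall within 45-55%."""
    share_a = clicks_a / (clicks_a + clicks_b)
    return low <= share_a <= high


def srm_p_value(clicks_a, clicks_b, planned_share_a=0.5):
    """Chi-square goodness-of-fit p-value (1 df) against the planned split."""
    total = clicks_a + clicks_b
    expected_a = total * planned_share_a
    expected_b = total - expected_a
    chi2 = ((clicks_a - expected_a) ** 2 / expected_a
            + (clicks_b - expected_b) ** 2 / expected_b)
    # For 1 degree of freedom, P(X > chi2) = erfc(sqrt(chi2 / 2)).
    return math.erfc(math.sqrt(chi2 / 2.0))


# Hypothetical arm: INR 25,000 spend, 100 purchases, 2,500 link clicks.
example_cpa = cpa(25_000, 100)   # INR per purchase
example_cvr = cvr(100, 2_500)    # purchases per click
```

A small p-value from `srm_p_value` indicates that the observed split deviates from the plan by more than chance would explain, which is the condition G5 is meant to catch.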

3. Literature Review

- Foundations in online experimentation.

- Paid social experimentation: platform-specific challenges.

- Gap and this framework.

4. Framework Development

4.1. Design Principles

4.2. Measurement Plan

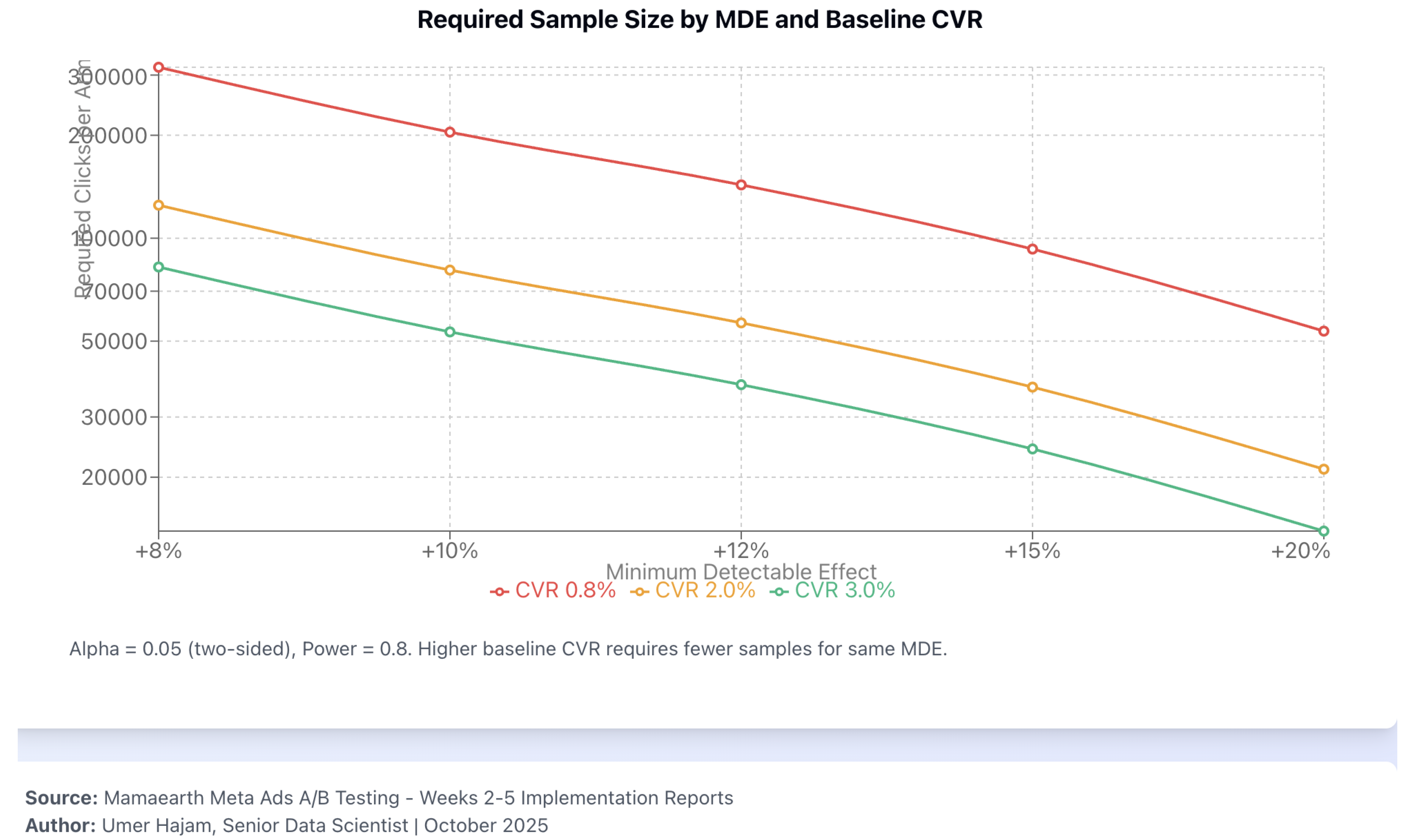

4.3. Power, Sample Size, and MDE

4.4. Integrity Diagnostics

4.5. Variance Reduction (CUPED)

4.6. Statistical Analysis and Decision Rule

5. Simulation Study (Illustrative, Not Empirical)

5.1. Overview and Uncertainty

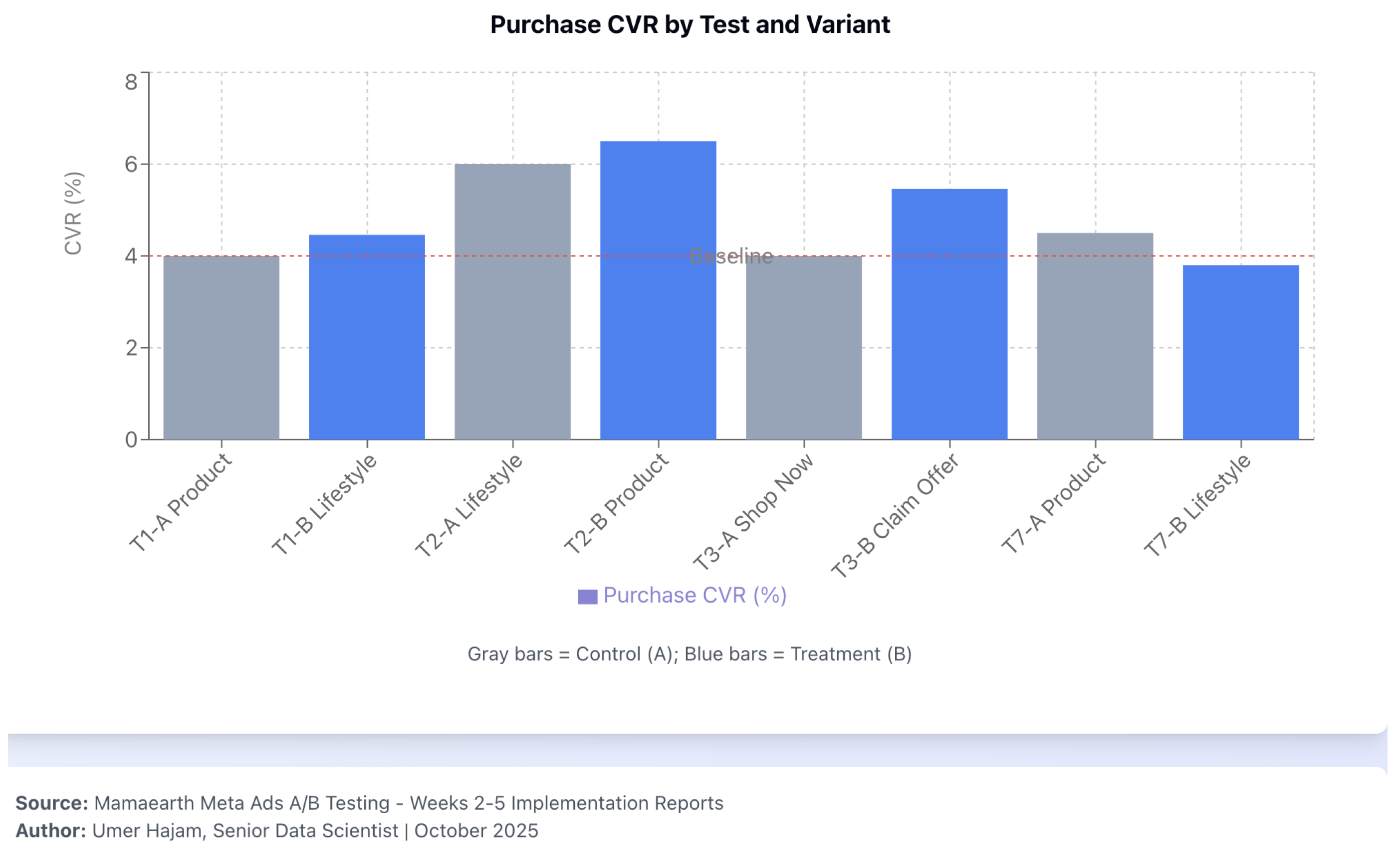

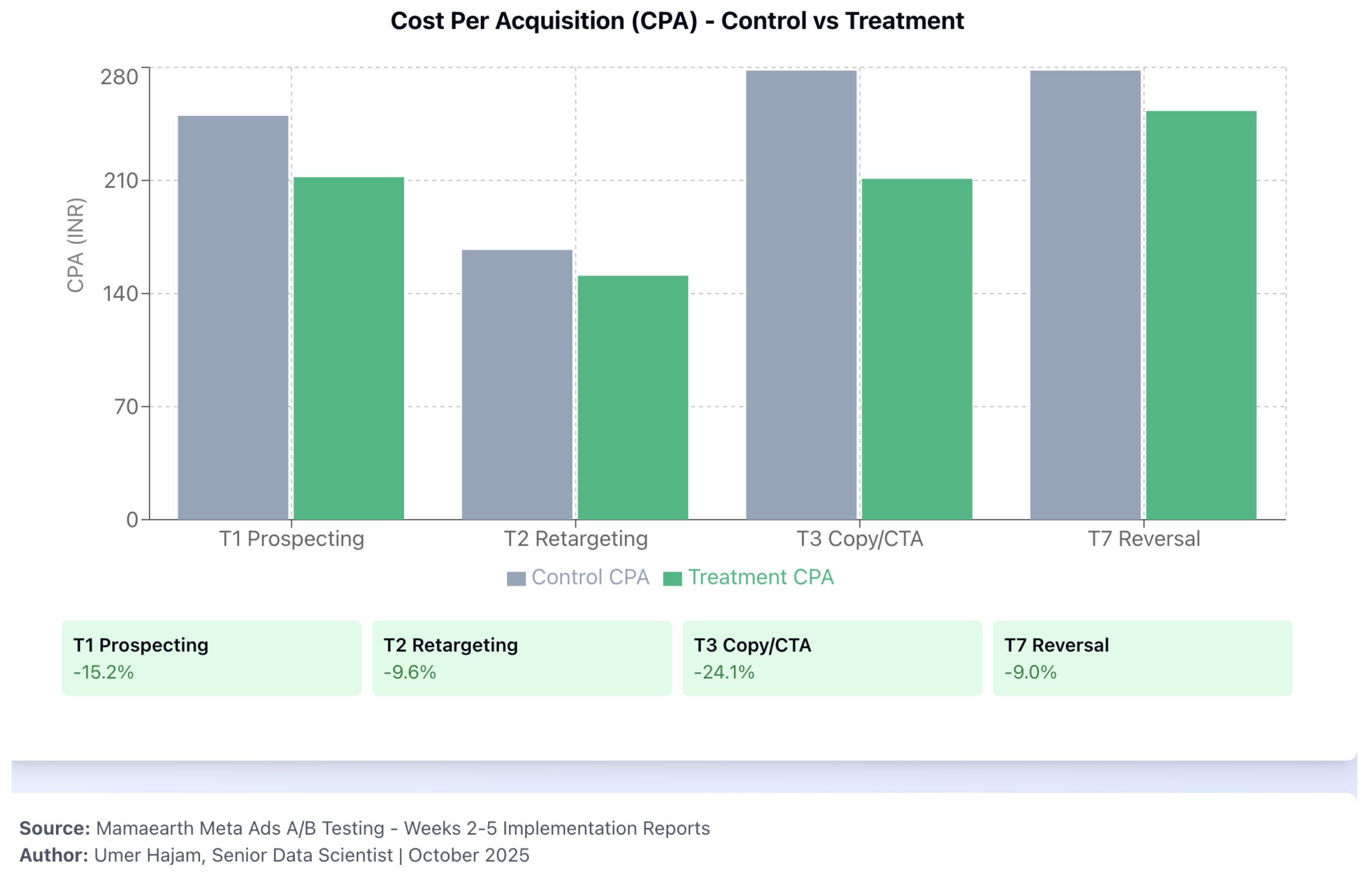

- T1 (Prospecting creative). Simulated CVR difference of +0.46 pp (4.46% vs. 4.00%; z = 2.62, p = 0.009) illustrates a promote decision on lower CPA (INR 212 vs. INR 250), assuming G1–G5 hold.

- T2 (Retargeting creative). Borderline CVR lift (+0.50 pp; z = 1.97, p = 0.049) but higher CPA for lifestyle (INR 167 vs. INR 151 for product); retain product on the CPA rule.

- T3 (Copy/CTA). Claim Offer shows a simulated CVR lift of +1.46 pp (z = 6.92) with a CPA improvement (INR 211 vs. INR 278) → promote.

- T4–T6. Inconclusive (p = 0.19, 1.00, and 0.29, respectively); resize or extend duration.

- T7 (Retargeting reverse). Harmful CVR (−0.70 pp; 3.80% vs. 4.50%) → reject, regardless of the nominal CPA decrease.
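The CVR comparisons above rest on a two-proportion test. A minimal sketch of a pooled two-proportion z-test with a two-sided p-value follows. The click and purchase counts are hypothetical (the simulated sample sizes are not listed in this section), so the output is not expected to reproduce the z values quoted above.

```python
import math


def two_proportion_z_test(purchases_a, clicks_a, purchases_b, clicks_b):
    """Pooled two-proportion z-test for CVR B vs. CVR A (two-sided)."""
    cvr_a = purchases_a / clicks_a
    cvr_b = purchases_b / clicks_b
    pooled = (purchases_a + purchases_b) / (clicks_a + clicks_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / clicks_a + 1 / clicks_b))
    z = (cvr_b - cvr_a) / se
    # Two-sided p-value under the standard normal: 2 * (1 - Phi(|z|)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return cvr_b - cvr_a, z, p_value


# Hypothetical counts chosen to give 4.00% vs. 4.46% CVR.
diff, z, p = two_proportion_z_test(1_000, 25_000, 1_115, 25_000)
print(f"diff = {diff * 100:+.2f} pp, z = {z:.2f}, p = {p:.4f}")
```

The pooled standard error is used because the null hypothesis assumes equal conversion rates in both arms; an interval estimate for the difference would normally use the unpooled form instead.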

5.2. Diagnostics and Integrity

5.3. Promotion Decisions (Hypothetical)

| Test | Lever | CVR A | CVR B | ΔCVR (B − A) | z | p | CPA A | CPA B | Hypothetical decision & rationale |
|---|---|---|---|---|---|---|---|---|---|
| T1 | Prospecting creative | 4.00% | 4.46% | +0.46 pp | 2.62 | 0.009 | INR 250 | INR 212 | Promote B (lifestyle); CVR↑, CPA↓, ROAS guardrail met |
| T2 | Retargeting creative | 6.00% | 6.50% | +0.50 pp | 1.97 | 0.049 | INR 151 | INR 167 | Retain A (product); decision on CPA; A cheaper despite CVR favoring B |
| T3 | Copy/CTA | 4.00% | 5.46% | +1.46 pp | 6.92 | < 0.001 | INR 278 | INR 211 | Promote B (Claim Offer); large CVR↑, CPA↓ |
| T4 | Placement/format | 4.00% | 4.27% | +0.27 pp | 1.31 | 0.19 | INR 278 | INR 252 | Inconclusive; continue or resize |
| T5 | Message match | 4.00% | 4.00% | +0.00 pp | 0.00 | 1.00 | INR 227 | INR 250 | Inconclusive; no CVR signal |
| T6 | Audience breadth | 4.00% | 4.20% | +0.20 pp | 1.05 | 0.29 | INR 250 | INR 264 | Inconclusive; widen sample or refine segments |
| T7 | Retargeting (reverse) | 4.50% | 3.80% | −0.70 pp | | | INR 278 | INR 253 | Reject B (harmful CVR); do not promote despite nominal CPA drop |
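Because several tests are read side by side, the Holm–Bonferroni step-down procedure [16] can be applied to the tabulated results. The sketch below recomputes two-sided p-values from the z statistics listed for T1–T6 and applies Holm at an assumed family-wise level of 0.05. T7 is left out because no z statistic is listed for it, and the helper names are hypothetical.

```python
import math

# z statistics as listed in the table for T1..T6 (T7 has no z listed).
Z_SCORES = {"T1": 2.62, "T2": 1.97, "T3": 6.92,
            "T4": 1.31, "T5": 0.00, "T6": 1.05}
ALPHA = 0.05  # assumed family-wise error rate


def two_sided_p(z):
    """Two-sided p-value for a standard normal test statistic."""
    return math.erfc(abs(z) / math.sqrt(2))


def holm_reject(p_values, alpha):
    """Return the set of hypotheses rejected by Holm's step-down rule."""
    ordered = sorted(p_values.items(), key=lambda item: item[1])
    m = len(ordered)
    rejected = set()
    for rank, (name, p) in enumerate(ordered):
        # Compare the (rank+1)-th smallest p-value with alpha / (m - rank).
        if p > alpha / (m - rank):
            break  # stop at the first failure; all larger p-values fail too
        rejected.add(name)
    return rejected


p_values = {test: two_sided_p(z) for test, z in Z_SCORES.items()}
rejected = holm_reject(p_values, ALPHA)
print(sorted(rejected))  # ['T1', 'T3']
```

Under these assumptions the borderline T2 lift does not survive the adjustment, which is consistent with deciding T2 on CPA rather than on its CVR signal.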

6. Implementation Guide (Practitioner-Oriented)

6.1. Pre-Launch Checklist

- Hypothesis preregistered (factor, direction, MDE, guardrails, decision rule).

- Sample-size calculation completed; duration ≥ 7 days (covers weekly cyclicality).

- Landing-page parity checked (headline, hero, CTA alignment); web vitals targets met.[8]

- Audience exclusions applied; no overlap between arms; learning-phase expectations documented.[10]

- Attribution window locked at 7C/1V across arms for both optimization and reporting.[7]

- Incident-log template prepared (settings snapshot, timestamps, remediation path).

6.2. Daily Monitoring Dashboard

6.3. Decision Flowchart

6.4. Example Pre-Registration Template

| Field | Entry (example) |
|---|---|
| Test ID | T1_Prospecting_Creative_Nov2025 |
| Hypothesis | Lifestyle video increases CVR by ≥12% (relative) vs. product creative |
| Primary metric | CPA (INR); decision variable |
| Inference metric | Purchase CVR (Purchases/Link Clicks) |
| Attribution | 7-day click / 1-day view (fixed across arms) |
| Guardrails | G1: ROAS; G2: delivery 45–55%; G3: frequency parity; G4: LCP < 2.5 s, CLS < 0.1; G5: SRM pass |
| MDE (relative) | 12% (prospecting), 8% (retargeting) |
| Sample/arm | 114,327 clicks (from power curve at baseline CVR 1.0%, MDE 12%) |
| Duration | 10 days (covers weekdays/weekend) |
| Stop rule | Fixed horizon; no interim peeking (Pocock spending if forced) |
| Multiplicity | Holm–Bonferroni across concurrent tests; report BH-FDR as sensitivity |
| Incident protocol | Snapshot settings; pause on SRM/delivery imbalance; relaunch with fresh ID |
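The Sample/arm entry can be sanity-checked with the standard unpooled sample-size formula for comparing two proportions. The sketch below assumes a two-sided significance level of 0.05 and 80% power; neither value is stated in the template, and the function name is hypothetical.

```python
import math
from statistics import NormalDist


def clicks_per_arm(baseline_cvr, relative_mde, alpha=0.05, power=0.80):
    """Clicks per arm needed to detect a relative lift in CVR.

    Uses n = (z_{1-alpha/2} + z_{power})^2 * (p1*q1 + p2*q2) / (p2 - p1)^2.
    alpha and power are assumed defaults, not values taken from the template.
    """
    p1 = baseline_cvr
    p2 = baseline_cvr * (1 + relative_mde)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    n = (z_alpha + z_power) ** 2 * variance / (p2 - p1) ** 2
    return math.ceil(n)


# Template example: baseline CVR 1.0%, relative MDE 12%.
required = clicks_per_arm(0.010, 0.12)
print(required)
```

With these assumed inputs the result lands within a few clicks of the 114,327 quoted in the template, which suggests (but does not confirm) that the power curve used similar settings. Rerunning with the 8% retargeting MDE shows how quickly the requirement grows as the detectable effect shrinks.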

7. Discussion

- What this paper contributes.

- What this paper does not claim.

- Strengths.

- Threats not addressed.

- Future work.

8. Conclusion

Funding

Data & Code Availability

Conflicts of Interest

Appendix A. Evidence-Pack Checklist (Simulated)

Appendix B. Simulation Data Generation (Pseudocode)

    seed = 42
    for test in T1..T7:
        set baseline_cvr by context (prospecting = 0.040, retargeting = 0.060)
        set rel_effect per scenario (e.g., T1 +11.5%, T3 +36.5%, T7 -15.6%)
        draw clicks per arm from planned sample size with small Poisson jitter
        purchases_A ~ Binomial(clicks_A, baseline_cvr)
        purchases_B ~ Binomial(clicks_B, baseline_cvr * (1 + rel_effect))
        spend_A, spend_B calibrated to produce target CPA and ROAS distributions
        compute metrics, SRM chi-square, integrity flags
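One way the pseudocode might be turned into runnable Python, using only the standard library, is sketched below. Several values are assumptions introduced for illustration: the planned sample size (25,000 clicks per arm), the Poisson jitter scale, and the mapping of T3 and T7 to baseline contexts. Only the three relative effects quoted in the pseudocode are included, and spend calibration is omitted because the target CPA and ROAS distributions are not specified.

```python
import math
import random

SEED = 42
PLANNED_CLICKS = 25_000  # assumed planned clicks per arm (hypothetical)
JITTER_LAMBDA = 50       # assumed Poisson jitter scale (hypothetical)
BASELINE_CVR = {"prospecting": 0.040, "retargeting": 0.060}
# Relative effects quoted in the pseudocode; the context mapping is assumed.
SCENARIOS = {
    "T1": ("prospecting", 0.115),
    "T3": ("prospecting", 0.365),
    "T7": ("retargeting", -0.156),
}


def poisson(rng, lam):
    """Poisson draw via Knuth's multiplication method (fine for small lam)."""
    limit = math.exp(-lam)
    count, product = 0, rng.random()
    while product > limit:
        count += 1
        product *= rng.random()
    return count


def binomial(rng, n, p):
    """Binomial draw as a sum of n Bernoulli(p) trials."""
    return sum(rng.random() < p for _ in range(n))


def srm_p_value(clicks_a, clicks_b):
    """Chi-square (1 df) p-value against a planned 50/50 split."""
    expected = (clicks_a + clicks_b) / 2
    chi2 = ((clicks_a - expected) ** 2 + (clicks_b - expected) ** 2) / expected
    return math.erfc(math.sqrt(chi2 / 2))


def simulate(seed=SEED):
    """Simulate clicks and purchases per arm for each scenario."""
    rng = random.Random(seed)
    results = {}
    for test, (context, rel_effect) in SCENARIOS.items():
        cvr_a = BASELINE_CVR[context]
        cvr_b = cvr_a * (1 + rel_effect)
        # Planned size plus zero-centred Poisson jitter.
        clicks_a = PLANNED_CLICKS + poisson(rng, JITTER_LAMBDA) - JITTER_LAMBDA
        clicks_b = PLANNED_CLICKS + poisson(rng, JITTER_LAMBDA) - JITTER_LAMBDA
        purchases_a = binomial(rng, clicks_a, cvr_a)
        purchases_b = binomial(rng, clicks_b, cvr_b)
        results[test] = {
            "clicks": (clicks_a, clicks_b),
            "cvr": (purchases_a / clicks_a, purchases_b / clicks_b),
            "srm_p": srm_p_value(clicks_a, clicks_b),
        }
    return results


results = simulate()
for test, row in results.items():
    print(test, row)
```

Passing the seed into a dedicated `random.Random` instance keeps runs reproducible without touching the global random state.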

Appendix C. Roles and Responsibilities (RACI Snapshot)

| Activity | Data Science | Media Buyer | Engineering/Analytics | Product/GM |
|---|---|---|---|---|
| Hypothesis & preregistration | R | C | C | A |
| Sample sizing & MDE | R | C | C | I |
| Split-test setup (Meta) | C | R | C | I |
| Tracking QA (Pixel+CAPI, GA4) | C | C | R | I |
| Monitoring (SRM, delivery) | R | R | C | I |
| Decision & rollout | C | R | C | A |
| Incident handling | R | R | R | I |

References

1. Kohavi, R.; Tang, D.; Xu, Y. Trustworthy Online Controlled Experiments: A Practical Guide to A/B Testing; Cambridge University Press, 2020.

2. Johari, R.; Pekelis, L.; Walsh, D. Always Valid Inference: Bringing Sequential Analysis to A/B Testing, 2017, [arXiv:stat.ME/1512.04922].

3. Bakshy, E.; Eckles, D.; Bernstein, M.S. Designing and Deploying Online Field Experiments. In Proceedings of the 23rd International Conference on World Wide Web, 2014.

4. Miller, E. Seven Pitfalls to Avoid in A/B Testing, 2015. Includes Sample Ratio Mismatch (SRM) diagnostics.

5. Vermeer, L. Sample Ratio Mismatch: Why and How to Detect It. https://lukasz.cepowski.com/srm, 2019. Blog post.

6. Deng, A.; Xu, Y.; Kohavi, R.; Walker, T. Improving the Sensitivity of Online Controlled Experiments by Utilizing Pre-Experiment Data. In Proceedings of the 19th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, 2013, pp. 1239–1247.

7. Meta. About Attribution in Meta Ads Reporting, 2025. Accessed 1 Nov 2025.

8. Google. Web Vitals, 2025. Accessed 1 Nov 2025.

9. Pocock, S.J. Group Sequential Methods in the Design and Analysis of Clinical Trials. Biometrika 1977, 64, 191–199.

10. Meta. A/B Tests and Experiments on Meta Ads, 2025. Accessed 1 Nov 2025.

11. Gordon, B.R.; Zettelmeyer, F.; Bhargava, N.; Chapsky, D. A Comparison of Approaches to Advertising Measurement: Evidence from Big Field Experiments at Facebook. Marketing Science 2019, 38, 193–225.

12. Lewis, R.A.; Rao, J.M. The Unfavorable Economics of Measuring the Returns to Advertising. Quarterly Journal of Economics 2015, 130, 1941–1973.

13. Meta. Creative Best Practices for Mobile and Feed Environments, 2025. Accessed 1 Nov 2025.

14. Meta. About Lookalike Audiences, 2025. Accessed 1 Nov 2025.

15. Benjamini, Y.; Hochberg, Y. Controlling the False Discovery Rate: A Practical and Powerful Approach to Multiple Testing. Journal of the Royal Statistical Society: Series B 1995, 57, 289–300.

16. Holm, S. A Simple Sequentially Rejective Multiple Test Procedure. Scandinavian Journal of Statistics 1979, 6, 65–70.

17. NIST/SEMATECH e-Handbook of Statistical Methods: Two-Proportion Tests. https://www.itl.nist.gov/div898/handbook/prc/section3/prc33.htm. Accessed 15 Oct 2025.

18. Brown, L.D.; Cai, T.T.; DasGupta, A. Interval Estimation for a Binomial Proportion. Statistical Science 2001, 16, 101–133.

19. Analytics-Toolkit.com. Sample Ratio Mismatch (SRM) in A/B Testing. https://blog.analytics-toolkit.com/, 2019.

20. Meta Developers. Deduplicate Pixel and Conversions API Events. https://developers.facebook.com/docs/marketing-api/conversions-api/deduplicate-pixel-and-server-events/, 2025. Accessed 15 Oct 2025.

21. Meta Business Help Center. About Deduplication for Pixel and Conversions API. https://www.facebook.com/business/help/823677331451951, 2025. Accessed 15 Oct 2025.

22. Google Support. GA4 URL Builders (UTM Parameters). https://support.google.com/analytics/answer/10917952, 2025. Accessed 15 Oct 2025.

23. Fedorovicius, J. Guide to UTM Parameters in Google Analytics 4. https://www.analyticsmania.com/post/utm-parameters-in-google-analytics-4/, 2025. Accessed 15 Oct 2025.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).