Submitted: 25 December 2025

Posted: 29 December 2025


Abstract

Keywords:

1. Introduction

1.1. Related Work

1.2. Contributions

- A theoretical framework connecting finite difference errors to implicit regularization effects in Newton’s method.

- Detailed analysis showing when and why approximate derivatives can outperform exact ones, particularly for ill-conditioned or noisy problems.

- Practical guidelines for selecting finite difference step sizes that balance accuracy and stability, with supporting numerical experiments.

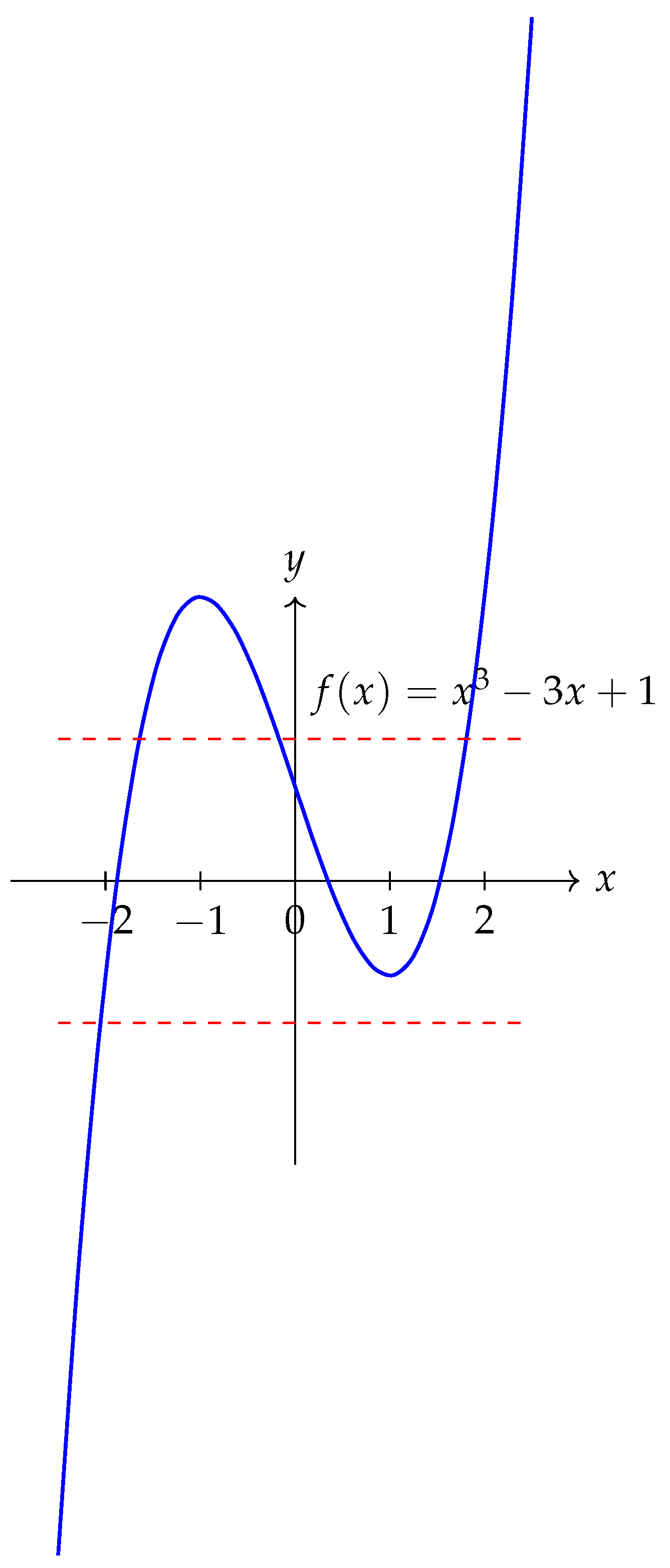

2. Newton’s Method and Problem Conditioning

2.1. Global Convergence and Basins of Attraction

3. Numerical Differentiation: Theory and Practice

3.1. Finite Difference Schemes

3.2. Error Decomposition and Optimal Step Size

3.3. Practical Considerations for Newton’s Method

- Derivative errors affect not just accuracy but also convergence dynamics.

- Systematic overestimation of the derivative magnitude shortens the Newton step and acts as damping, whereas underestimation lengthens the step.

- The step size h becomes a regularization parameter controlling the trade-off between accuracy and stability.
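The points above can be illustrated with a minimal sketch of the two most common schemes. The function names `forward_diff` and `central_diff` are illustrative and not taken from the paper. For an increasing convex function such as exp, the forward difference overestimates the derivative, so a Newton step computed from it is slightly shorter than the exact step.

```python
import math


def forward_diff(f, x, h):
    """First-order forward difference: truncation error is O(h)."""
    return (f(x + h) - f(x)) / h


def central_diff(f, x, h):
    """Second-order central difference: truncation error is O(h^2)."""
    return (f(x + h) - f(x - h)) / (2.0 * h)


def abs_error(scheme, f, df_exact, x, h):
    """Absolute error of a difference scheme against a known derivative."""
    return abs(scheme(f, x, h) - df_exact(x))


# Sweep h for f = exp at x = 1 (where f' = e). The error first falls with h
# (truncation dominates) and then rises again (round-off dominates).
errors = {h: abs_error(forward_diff, math.exp, math.exp, 1.0, h)
          for h in (1e-2, 1e-5, 1e-8, 1e-11, 1e-15)}
```

Inspecting `errors` shows the usual trade-off: very small h is not more accurate, because subtractive cancellation in f(x + h) - f(x) is amplified by the division by h.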

4. Newton’s Method with Approximate Derivatives: Theoretical Analysis

4.1. Systematic Bias in Finite Differences

5. Relation to Other Stabilization Techniques

5.1. Damped Newton Methods

5.2. Trust-Region Methods

5.3. Regularized Newton Methods

| Method | Mechanism | Advantages | Disadvantages |
|---|---|---|---|
| Exact Newton | None | Quadratic convergence | Unstable for ill-conditioned problems |
| Damped Newton | Step size reduction | Global convergence guarantees | Requires line search |
| Trust-region | Step bounding | Robust convergence | Subproblem solution needed |
| Finite Difference | Derivative approximation | Automatic damping | Reduced convergence order |
| Regularized Newton | Derivative modification | Prevents division by zero | Introduces bias |
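To make two of the mechanisms in the table concrete, the following is a rough sketch for scalar problems. The backtracking rule in `damped_newton` and the Levenberg-Marquardt-style form in `regularized_step` are assumed textbook variants chosen for illustration; they are not necessarily the formulations analyzed in this paper, and all names and default values are hypothetical.

```python
import math


def newton_step(fx, dfx):
    """Exact Newton step; undefined when dfx == 0."""
    return -fx / dfx


def regularized_step(fx, dfx, mu):
    """Assumed regularized step -f*f' / (f'^2 + mu).

    For mu > 0 the denominator never vanishes, at the cost of a biased step.
    """
    return -fx * dfx / (dfx * dfx + mu)


def damped_newton(f, df, x0, tol=1e-10, max_iter=50):
    """Newton iteration with simple step halving (backtracking line search)."""
    x = x0
    for k in range(max_iter):
        fx = f(x)
        if abs(fx) < tol:
            return x, k
        step = newton_step(fx, df(x))
        t = 1.0
        # Halve the step until the residual decreases (or t becomes tiny).
        while abs(f(x + t * step)) >= abs(fx) and t > 1e-10:
            t *= 0.5
        x += t * step
    return x, max_iter


# f(x) = atan(x): undamped Newton diverges from x0 = 2, the damped variant does not.
root, iterations = damped_newton(math.atan, lambda x: 1.0 / (1.0 + x * x), 2.0)
```

The extra residual evaluations inside the `while` loop are the line-search cost listed under disadvantages in the table.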

6. Algorithmic Formulation and Implementation

6.1. Basic Algorithm

Algorithm 1. Newton’s Method with Finite Difference Derivatives
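A minimal Python sketch of a scalar Newton iteration with a forward-difference derivative might look as follows. The function name `fd_newton`, the fixed step size `h`, the stopping rule on |f(x)|, and the default values are assumptions made for illustration, not details confirmed by the algorithm listing.

```python
def fd_newton(f, x0, h=1e-6, tol=1e-10, max_iter=100):
    """Newton's method with f'(x) replaced by a forward difference.

    Returns (x, iterations, converged).
    """
    x = x0
    for k in range(max_iter):
        fx = f(x)
        if abs(fx) < tol:
            return x, k, True
        # Forward-difference approximation of f'(x); reuses fx, so each
        # iteration costs two function evaluations in total.
        d = (f(x + h) - fx) / h
        if d == 0.0:
            # Flat approximate derivative: the Newton step is undefined.
            return x, k, False
        x = x - fx / d
    return x, max_iter, abs(f(x)) < tol


# Usage: solve x^2 - 2 = 0 starting from x0 = 1.
root, iterations, converged = fd_newton(lambda x: x * x - 2.0, 1.0)
```

With a fixed h the iteration is no longer exactly Newton's method, so the final iterates converge linearly with a very small rate constant rather than quadratically.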

6.2. Adaptive Step Size Selection

Algorithm 2. Adaptive Finite Difference Newton Method

6.3. Multidimensional Extension

7. Numerical Experiments

7.1. Test Problems

7.2. Experimental Setup

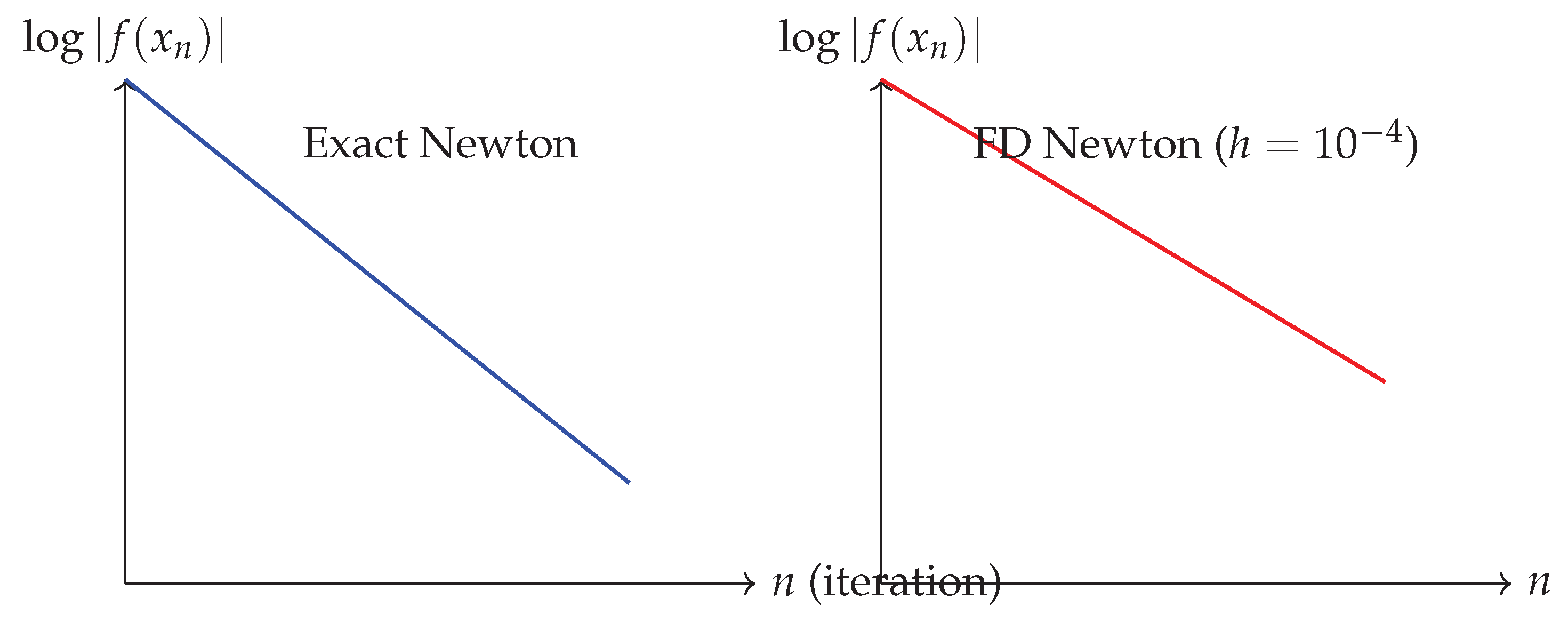

7.3. Results: Convergence Behavior

| Method | | | | | | Avg. |
|---|---|---|---|---|---|---|
| Exact Newton | 12 | Diverge | Diverge | 8 | 14 | – |
| FD Newton () | 7 | 8 | 10 | 6 | 9 | 8.0 |
| FD Newton () | 10 | 11 | 14 | 9 | 12 | 11.2 |
| FD Newton () | 15 | 20 | 18 | 12 | 25 | 18.0 |
| Adaptive FD Newton | 8 | 9 | 11 | 7 | 10 | 9.0 |
| Damped Newton | 10 | 12 | 13 | 9 | 15 | 11.8 |

7.4. Results: Stability Analysis

7.5. Results: Basin of Attraction Analysis

| Method | Root 1 | Root 2 | Root 3 | Diverge |
|---|---|---|---|---|
| Exact Newton | 32% | 35% | 28% | 5% |
| FD Newton () | 35% | 36% | 29% | 0% |
| FD Newton () | 33% | 34% | 33% | 0% |

| Method | Success Rate |
|---|---|
| Exact Newton | 65% |
| FD Newton () | 88% |
| FD Newton () | 72% |
| Adaptive FD Newton | 92% |

7.6. Results: Sensitivity to Noise

8. Multidimensional Case Study

| Method | Iterations | Final Residual | Success |
|---|---|---|---|
| Exact Newton | 6 | | Yes |
| FD Newton () | 8 | | Yes |
| FD Newton () | 7 | | Yes |
| FD Newton () | 12 | | Yes |

9. Discussion and Practical Guidelines

9.1. When to Use Finite Difference Approximations

- The problem is ill-conditioned: When is small or is large.

- Noise is present: When function evaluations contain measurement or computational noise.

- Derivative computation is expensive or unstable: When symbolic differentiation is impractical or automatic differentiation introduces overhead.

- Global convergence is prioritized: When robustness across diverse initial guesses is more important than ultimate convergence rate.

9.2. Step Size Selection Guidelines

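- For well-behaved, smooth functions: Use $h \approx \sqrt{\epsilon_{\text{mach}}}$ for forward differences and $h \approx \epsilon_{\text{mach}}^{1/3}$ for central differences, where $\epsilon_{\text{mach}}$ is the machine epsilon.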

- For noisy functions: Use larger h to average out noise, typically where is noise amplitude.

- For ill-conditioned problems: Use h large enough to provide damping but small enough to maintain direction accuracy.

- Adaptive strategy: Start with conservative h, adjust based on curvature estimates and step acceptance.
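As a starting point for smooth functions in double precision, a common rule of thumb takes h proportional to the square root of machine epsilon for forward differences and to its cube root for central differences. The sketch below encodes that rule; the helper name `default_step` and the scaling by max(1, |x|) are assumptions for illustration, and noisy or ill-conditioned problems would need a larger h than these defaults.

```python
import sys

EPS = sys.float_info.epsilon  # about 2.22e-16 for IEEE double precision


def default_step(x, scheme="forward"):
    """Rule-of-thumb finite difference step for a smooth function at x."""
    scale = max(1.0, abs(x))  # keep h meaningful relative to the size of x
    if scheme == "forward":
        return EPS ** 0.5 * scale          # roughly 1.5e-8 near x = 0
    if scheme == "central":
        return EPS ** (1.0 / 3.0) * scale  # roughly 6.1e-6 near x = 0
    raise ValueError("scheme must be 'forward' or 'central'")


h_forward = default_step(0.0, "forward")
h_central = default_step(0.0, "central")
```

These values balance truncation error against round-off error only; they do not account for the damping effect discussed in this paper, which may favor a deliberately larger h.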

9.3. Limitations and Caveats

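- Reduced convergence order: With a fixed step size h, finite difference Newton typically converges linearly; superlinear convergence generally requires h to shrink as the iterates approach the root, and the quadratic rate of exact Newton is usually lost.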

- Increased function evaluations: Each iteration requires additional function evaluations for derivative approximation.

- Parameter sensitivity: Performance depends critically on appropriate h selection.

- Dimensionality curse: For high-dimensional systems, finite difference Jacobian approximation requires function evaluations per iteration.
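The evaluation cost in the last point can be made explicit with a small sketch of a forward-difference Jacobian that counts its own calls to F. The name `fd_jacobian`, the plain-list representation, and the default h are assumptions for illustration. In this particular forward-difference construction, a system with n unknowns needs n + 1 evaluations of F per Jacobian.

```python
def fd_jacobian(F, x, h=1e-7):
    """Forward-difference Jacobian of F at x.

    F maps a list of n floats to a list of m floats.
    Returns (J, evals), where J is an m-by-n list of lists and evals is the
    number of calls made to F.
    """
    n = len(x)
    f0 = F(x)
    evals = 1
    m = len(f0)
    J = [[0.0] * n for _ in range(m)]
    for j in range(n):
        xp = list(x)
        xp[j] += h  # perturb one coordinate at a time
        fj = F(xp)
        evals += 1
        for i in range(m):
            J[i][j] = (fj[i] - f0[i]) / h
    return J, evals


# Usage: F(x, y) = (x^2 + y, x*y) at (1, 2); the exact Jacobian is [[2, 1], [2, 1]].
J, evals = fd_jacobian(lambda v: [v[0] ** 2 + v[1], v[0] * v[1]], [1.0, 2.0])
```

A central-difference variant would need 2n evaluations instead, which is why the scheme order matters more as the dimension grows.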

10. Conclusions and Future Work

- Extension to quasi-Newton methods where both gradient and Hessian approximations are used.

- Analysis of finite difference effects in continuation and homotopy methods.

- Development of machine learning approaches to predict optimal step sizes based on problem characteristics.

- Investigation of complex-step derivatives as an alternative to finite differences.

- Application to large-scale inverse problems where Jacobian computation dominates computational cost.

Appendix A. Technical Proofs

Appendix A.1. Proof of Theorem 4.2 (Extended)

Appendix B. Additional Numerical Results

| Method | Iterations | Final Error | Func. Evals | Success Rate |
|---|---|---|---|---|
| Exact Newton | 8 | | 16 | 90% |
| Forward Diff () | 12 | | 24 | 98% |
| Central Diff () | 10 | | 30 | 96% |
| Fourth-order () | 9 | | 45 | 94% |



© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).