Submitted: 19 December 2025

Posted: 22 December 2025


Abstract

Keywords:

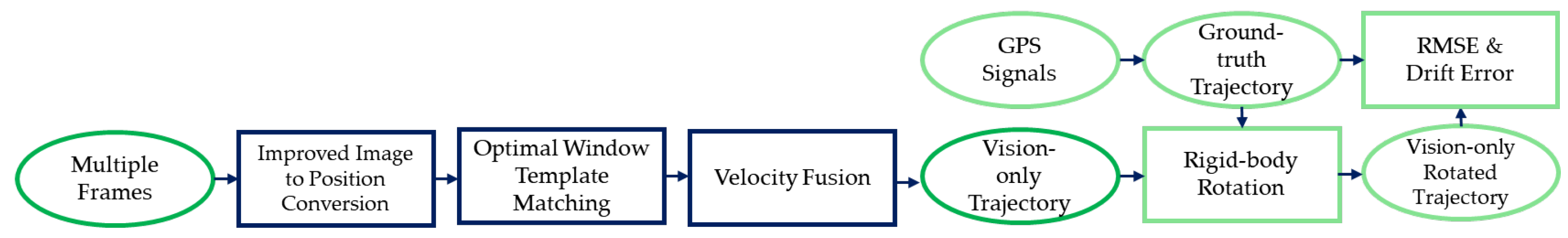

1. Introduction

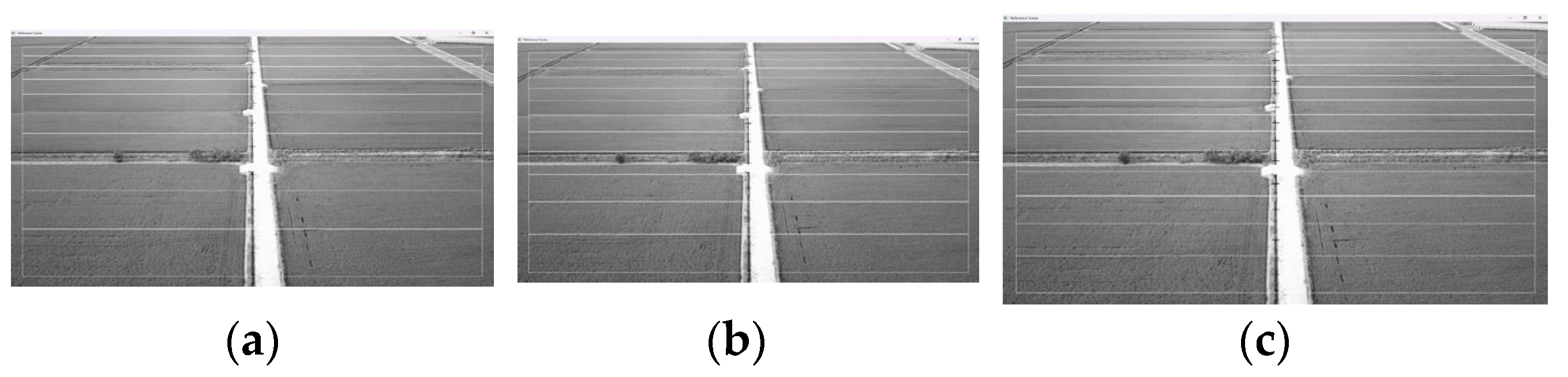

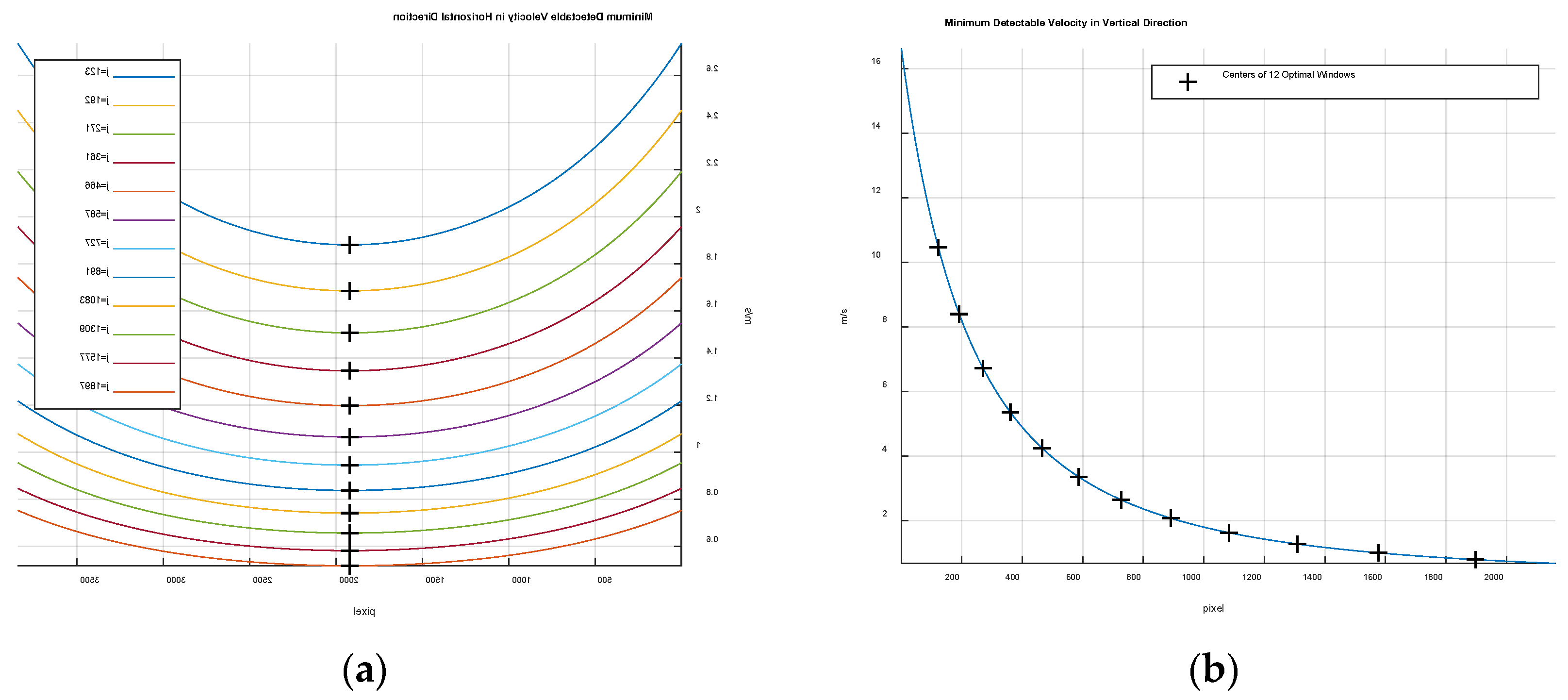

2. Optimal Windows for Template Matching

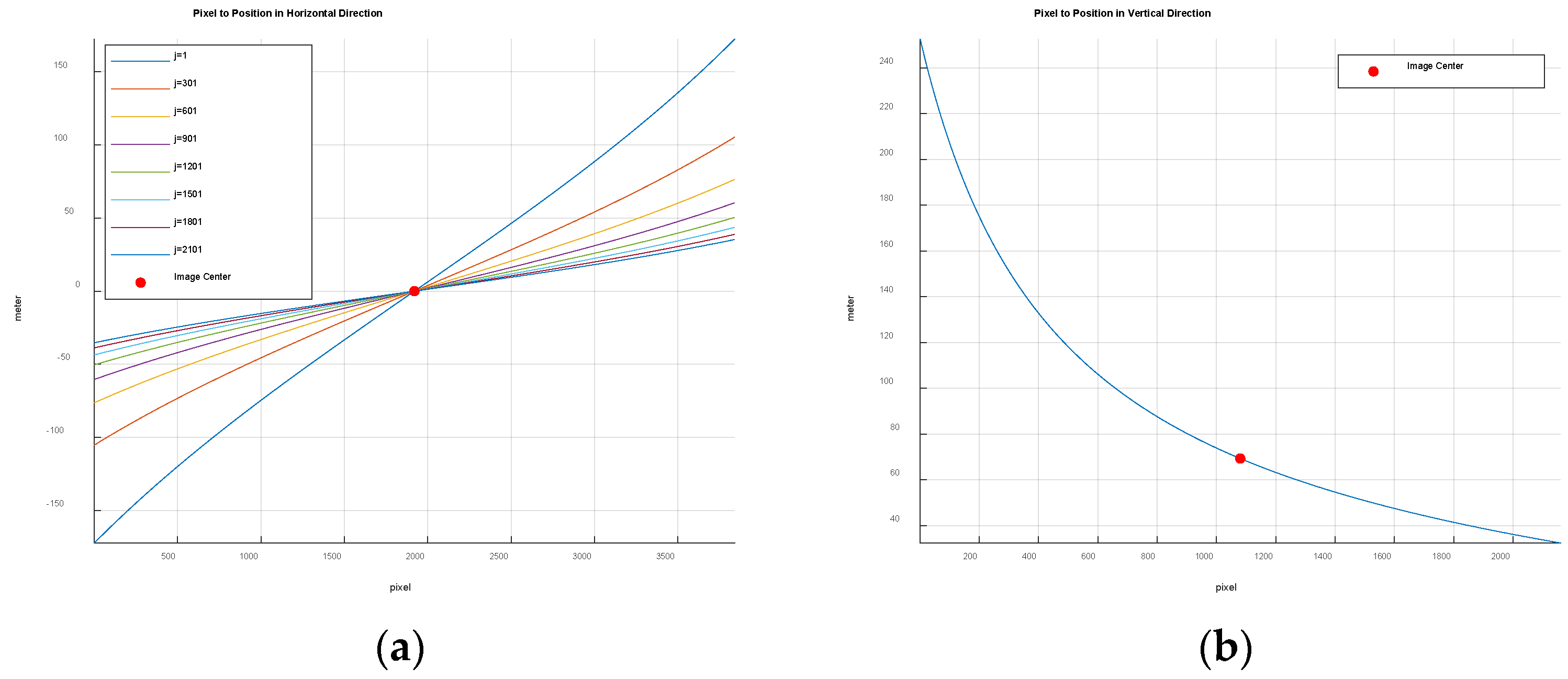

2.1. Improved Image-to-Position Conversion

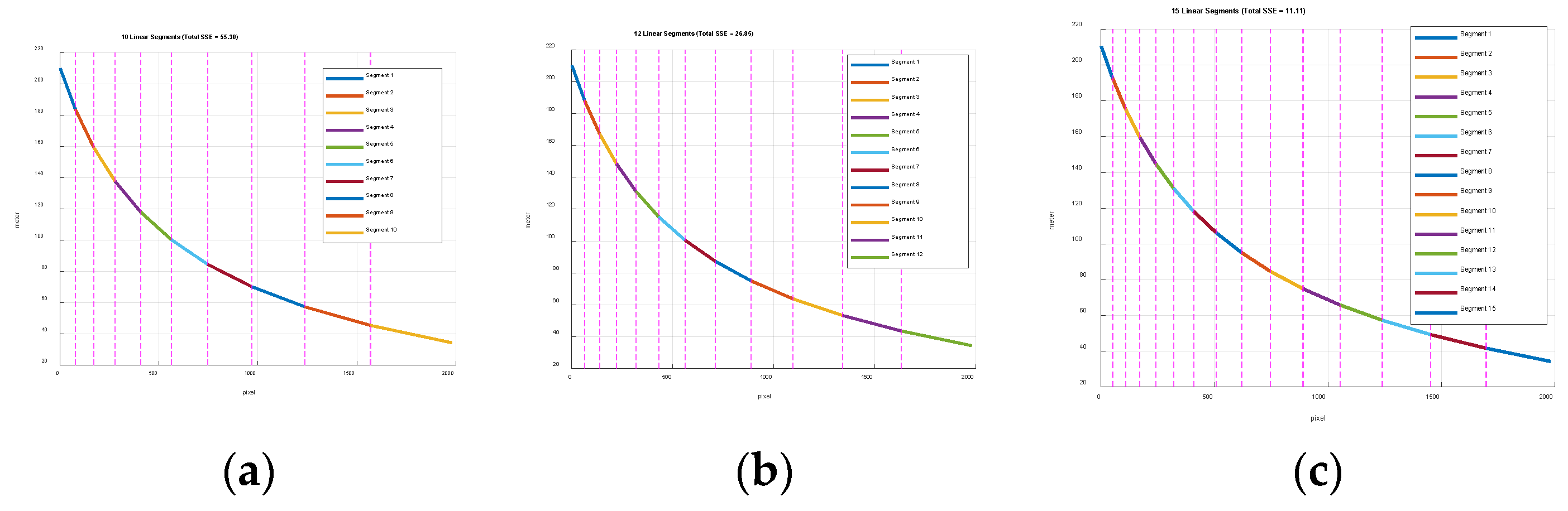

2.2. Optimal Windows

3. Velocity Fusion and Performance Evaluation

3.1. Velocity Fusion

3.2. Trajectory Generation

3.3. Performance Evaluation

4. Results

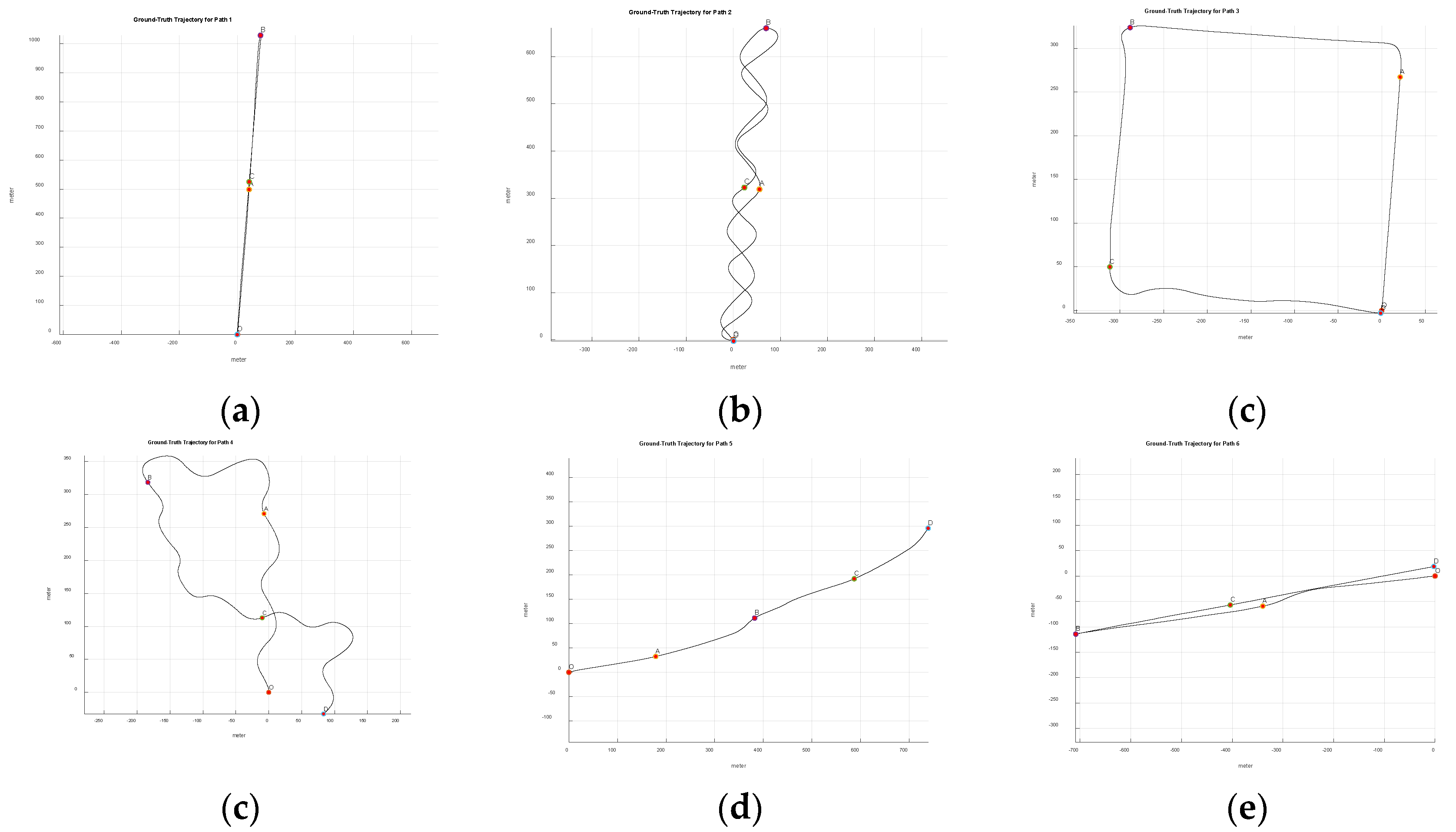

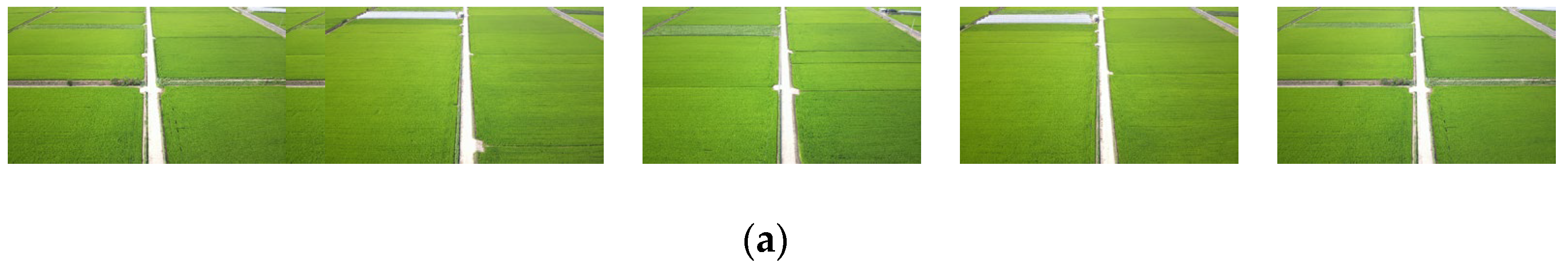

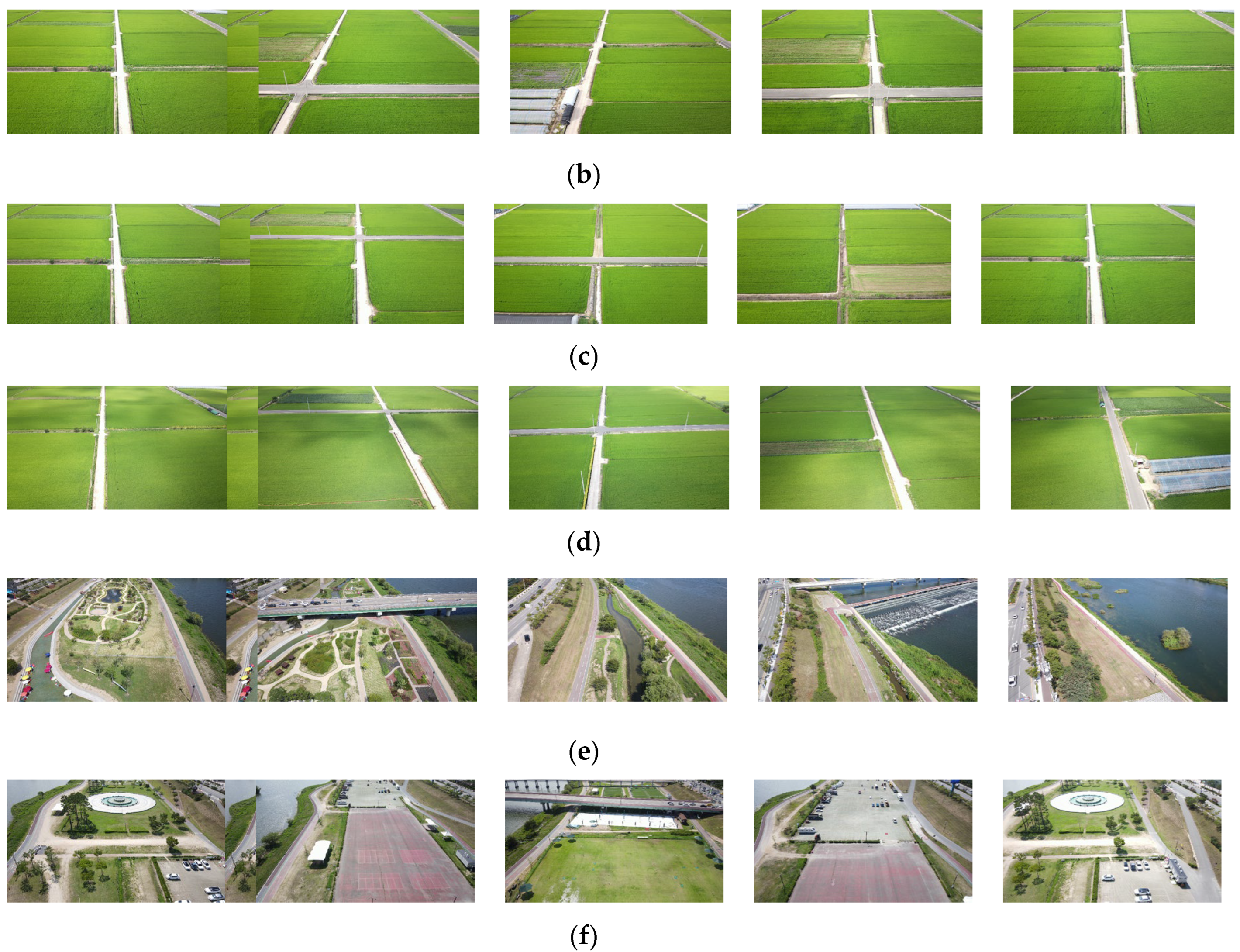

4.1. Flight Paths

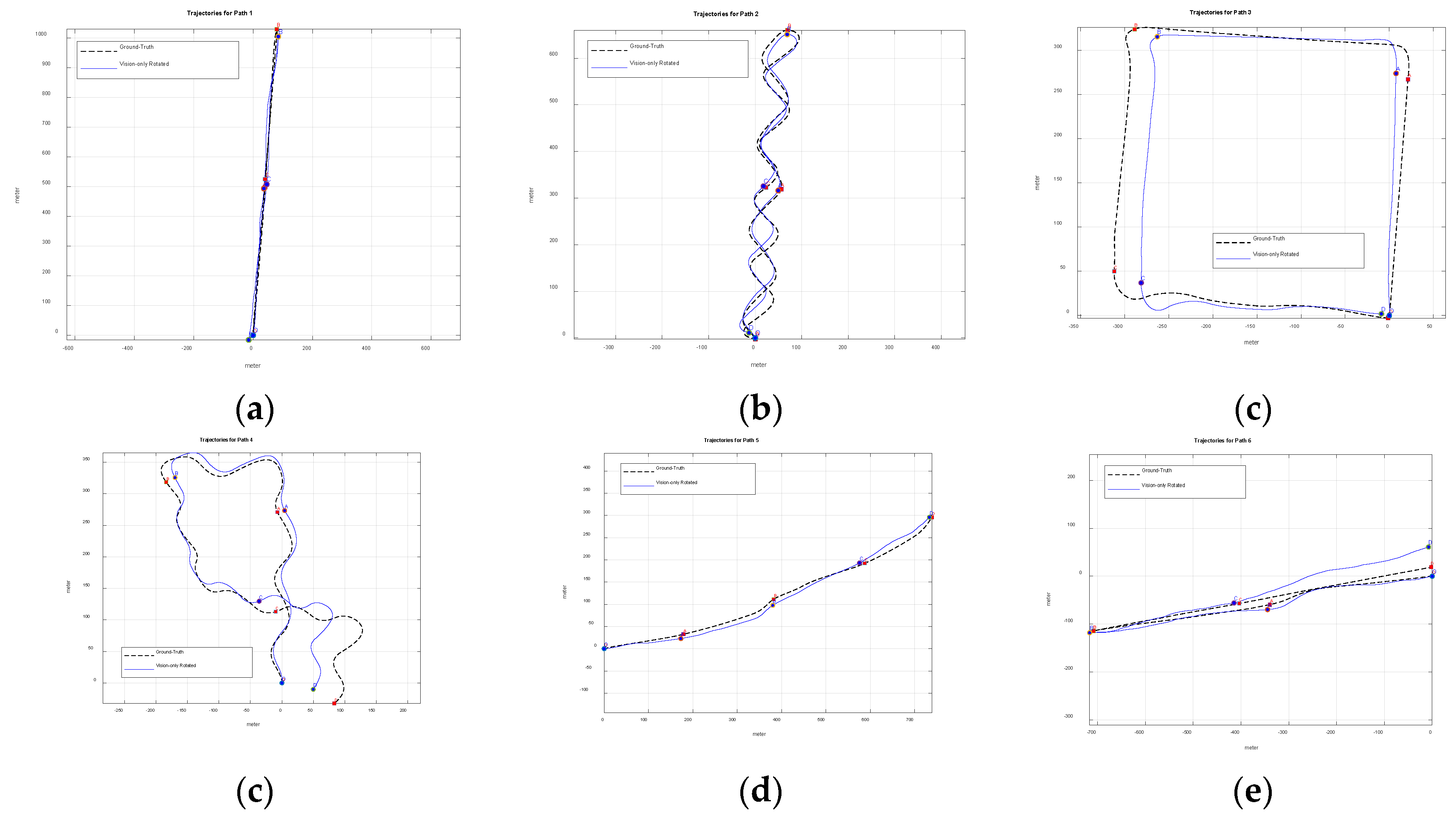

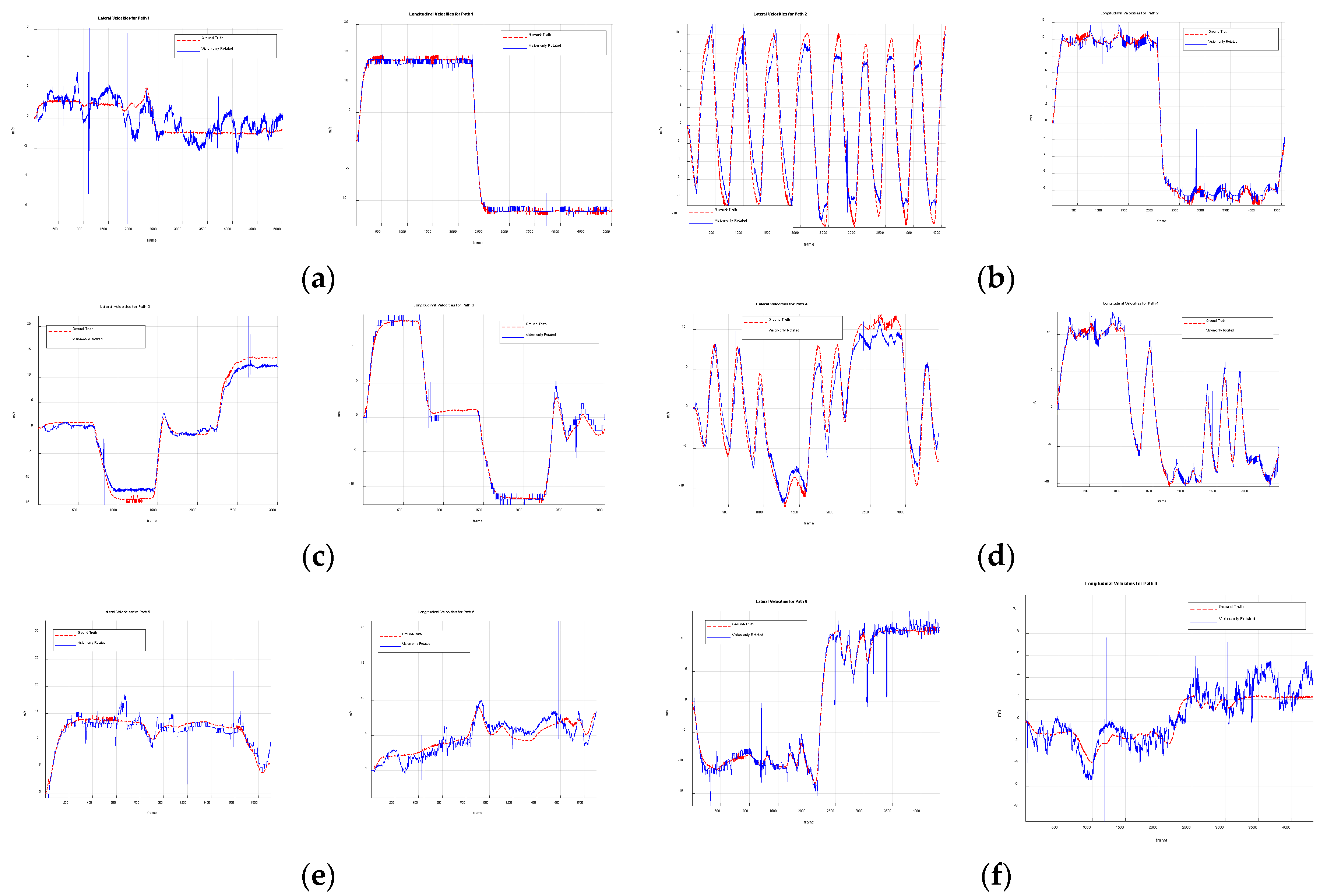

4.2. Vision-Only Rotated Trajectories

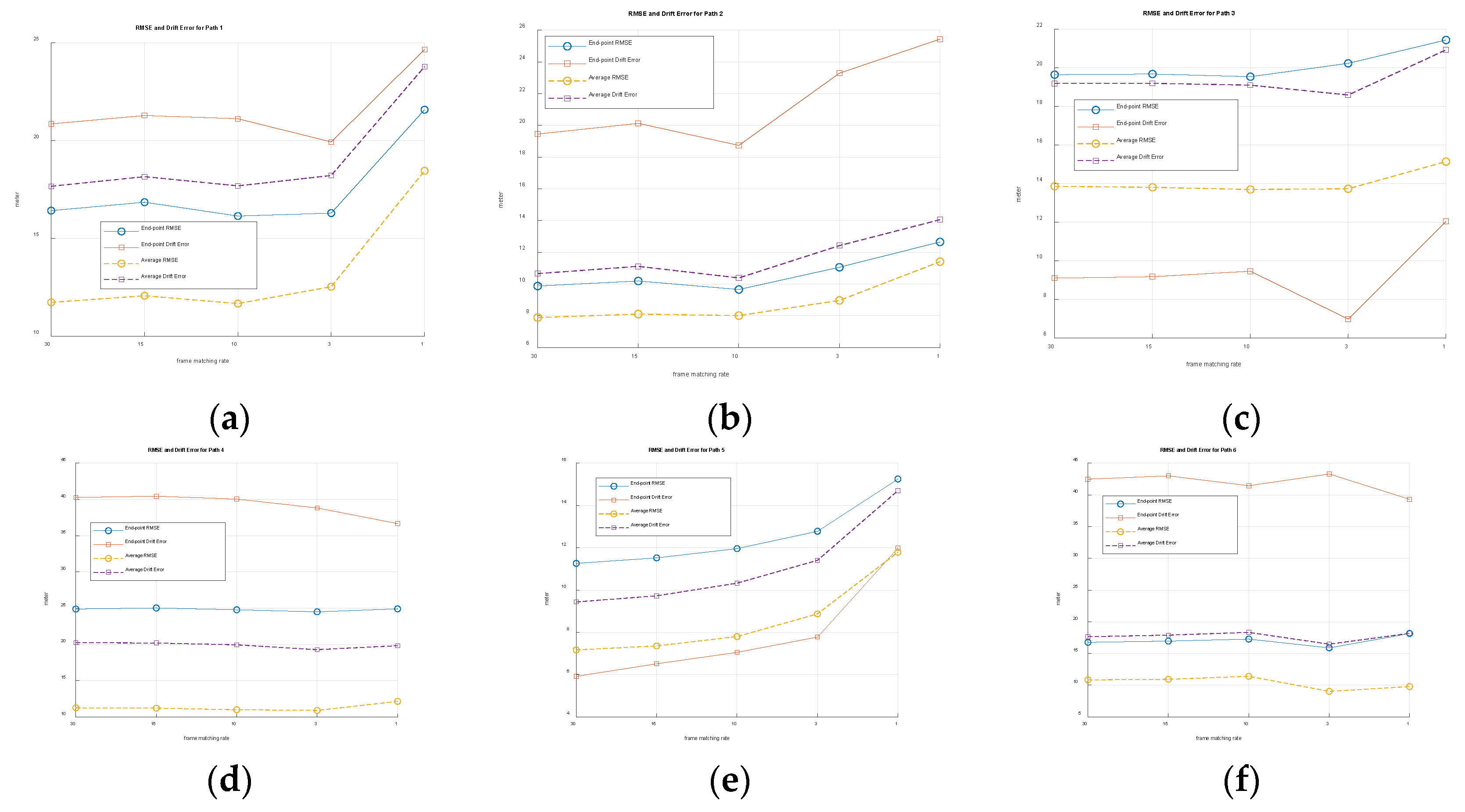

4.3. Effects of Zero-Order Hold Scheme

5. Discussion

6. Conclusions

Supplementary Materials

Funding

Data Availability Statement

Acknowledgments


| Points | Path 1 Frame Number | Path 1 Path Length (m) | Path 2 Frame Number | Path 2 Path Length (m) | Path 3 Frame Number | Path 3 Path Length (m) | Path 4 Frame Number | Path 4 Path Length (m) | Path 5 Frame Number | Path 5 Path Length (m) | Path 6 Frame Number | Path 6 Path Length (m) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| A | 1175 | 500.5 | 1063 | 398.7 | 678 | 268.35 | 866 | 302.19 | 476 | 182.05 | 1131 | 346.97 |
| B | 2350 | 1033.1 | 2126 | 824.6 | 1482 | 611.45 | 1731 | 613.65 | 951 | 403.22 | 2261 | 720.68 |
| C | 3686 | 1539.4 | 3367 | 1284.0 | 2242 | 891.84 | 2596 | 928.49 | 1426 | 624.12 | 3291 | 1031.44 |
| D | 5021 | 2066.7 | 4560 | 1725.6 | 3013 | 1225.90 | 3462 | 1231.27 | 1901 | 810.99 | 4320 | 1440.1 |

| Points | Path 1 RMSE (m) | Path 1 Drift (m) | Path 2 RMSE (m) | Path 2 Drift (m) | Path 3 RMSE (m) | Path 3 Drift (m) | Path 4 RMSE (m) | Path 4 Drift (m) | Path 5 RMSE (m) | Path 5 Drift (m) | Path 6 RMSE (m) | Path 6 Drift (m) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| A | 2.85 | 5.45 | 6.23 | 5.23 | 4.07 | 5.91 | 3.18 | 2.45 | 3.06 | 7.32 | 7.96 | 6.99 |
| B | 11.93 | 26.45 | 7.68 | 10.95 | 11.82 | 28.19 | 5.59 | 5.27 | 5.67 | 6.29 | 8.35 | 9.42 |
| C | 15.7 | 17.89 | 7.78 | 7 | 19.98 | 33.62 | 11.41 | 32.99 | 8.67 | 18.22 | 10.27 | 11.69 |
| D | 16.44 | 21.72 | 9.88 | 19.45 | 19.65 | 9.11 | 24.89 | 40.28 | 11.26 | 5.92 | 16.75 | 42.47 |
| Avg. | 11.73 | 17.88 | 7.89 | 10.66 | 13.88 | 19.21 | 11.27 | 20.25 | 7.17 | 9.44 | 10.83 | 17.64 |
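
As a quick consistency check on the table above, the "Avg." row can be recomputed as the arithmetic mean of rows A to D in each column. The following is only a minimal sketch: the variable and function names (`rows`, `reported_avg`, `column_means`) are illustrative and not taken from the article, and rounding half up to two decimals is an assumption about how the reported averages were rounded.

```python
from decimal import Decimal, ROUND_HALF_UP

# RMSE/Drift values (m) for Paths 1-6, copied from rows A-D of the table.
# Column order: P1 RMSE, P1 Drift, P2 RMSE, P2 Drift, ..., P6 RMSE, P6 Drift.
rows = {
    "A": ["2.85", "5.45", "6.23", "5.23", "4.07", "5.91",
          "3.18", "2.45", "3.06", "7.32", "7.96", "6.99"],
    "B": ["11.93", "26.45", "7.68", "10.95", "11.82", "28.19",
          "5.59", "5.27", "5.67", "6.29", "8.35", "9.42"],
    "C": ["15.7", "17.89", "7.78", "7", "19.98", "33.62",
          "11.41", "32.99", "8.67", "18.22", "10.27", "11.69"],
    "D": ["16.44", "21.72", "9.88", "19.45", "19.65", "9.11",
          "24.89", "40.28", "11.26", "5.92", "16.75", "42.47"],
}

# The "Avg." row as printed in the table.
reported_avg = ["11.73", "17.88", "7.89", "10.66", "13.88", "19.21",
                "11.27", "20.25", "7.17", "9.44", "10.83", "17.64"]


def column_means(table):
    """Mean of each column, rounded half up to two decimals (assumed rounding)."""
    columns = zip(*[[Decimal(v) for v in values] for values in table.values()])
    count = Decimal(len(table))
    return [
        (sum(col) / count).quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)
        for col in columns
    ]


computed_avg = column_means(rows)
mismatches = [
    (i, str(c), r)
    for i, (c, r) in enumerate(zip(computed_avg, reported_avg))
    if c != Decimal(r)
]
print("mismatching columns:", mismatches)
```

Decimal arithmetic is used here instead of floats so that values falling exactly on a rounding boundary (for example 7.165 for the Path 5 RMSE column) are rounded deterministically.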

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).