Submitted: 12 December 2025

Posted: 15 December 2025


Abstract

Keywords:

1. Introduction

1.1. Novelty of the Approach

1.2. Datasets Used

1.3. Importance of Robust Captioning and Classification Models

1.4. Research Objectives

1.5. Contribution

2. Previous Works

2.1. State-of-the-Art Models for Image Classification

2.2. State-of-the-Art Models for Image Captioning

2.3. Integrating Classification and Captioning for Dataset Enrichment

2.4. Relevance to Current Work

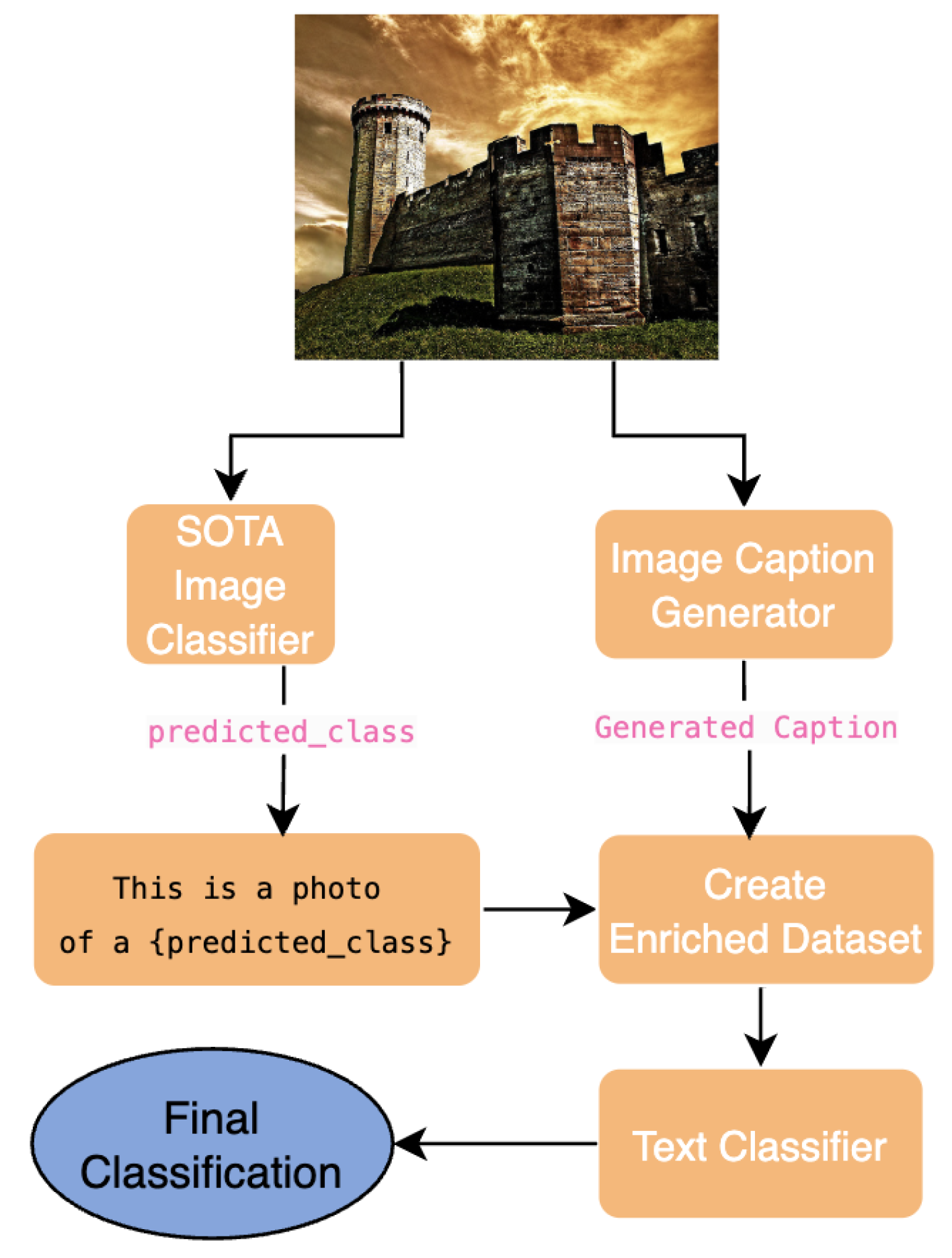

3. Proposed Methodology

4. Model Evaluation and Selection

4.1. Ablation Study and Model Selection

4.1.1. Image Classification Models

4.1.2. Image Captioning Models

| Dataset | BLIP Base Caption | GIT Base Caption | ViT-GPT2 Caption |
|---|---|---|---|
| CIFAR-10 | A small white dog with a green collar | A white flower | A small bird standing on top of a dirt ground |
| CIFAR-100 | A man riding a horse in a field | Red nose on cow | A cow standing on top of a lush green field |

4.2. Key Observations

4.3. Final Model Selection

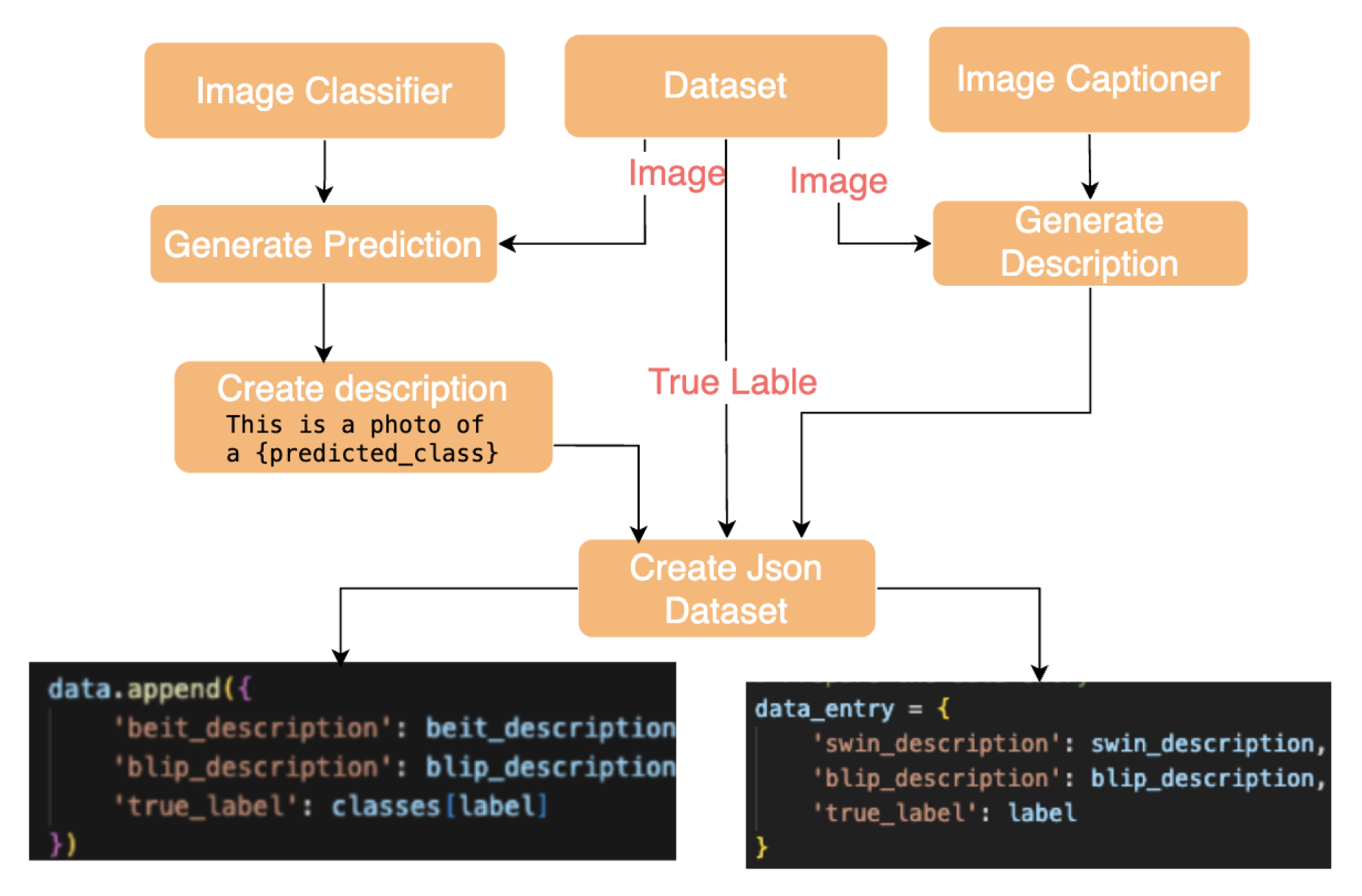

5. Enriched Dataset Generation and Statistics

5.1. Workflow Overview

5.2. Generated Dataset Examples

| Dataset | Model | Generated Text | True Label |
|---|---|---|---|
| CIFAR-10 | BEiT | This is a photo of a frog. | Frog |
| | BLIP | A small white dog with a green collar | |
| CIFAR-100 | Swin | This is a photo of an horse. | Horse |
| | BLIP | A man riding a horse in a field | |
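
The rows above pair a templated label sentence from the classifier with a free-form caption from BLIP. A minimal sketch of how such a pair could be joined into one enriched description is given here. The helper names `label_sentence` and `enrich`, the article heuristic, and the choice to join the two texts with a single space are assumptions for illustration; the source does not show the exact joining code.

```python
def label_sentence(label: str) -> str:
    """Build the templated sentence for a class label (hypothetical helper)."""
    # Simple heuristic: "an" before a vowel letter, otherwise "a".
    article = "an" if label[:1].lower() in {"a", "e", "i", "o", "u"} else "a"
    return f"This is a photo of {article} {label.lower()}."


def enrich(label: str, caption: str) -> str:
    """Join the label sentence and the caption into one description."""
    caption = caption.strip()
    # Terminate the caption with a period so the result reads as two sentences.
    if caption and not caption.endswith("."):
        caption += "."
    return f"{label_sentence(label)} {caption}".strip()


if __name__ == "__main__":
    print(enrich("Frog", "A small white dog with a green collar"))
```

Keeping the label sentence first means the class name is always present in the text, even when the caption describes the image differently, as in the CIFAR-10 row above.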

5.3. Statistics of Enriched Datasets

| Dataset | Model | Mean ± Std |
|---|---|---|
| CIFAR-10 (60,000 Images) | BEiT | 7.00 ± 0.00 |
| | BLIP | 11.15 ± 0.83 |
| | Combined | 18.15 ± 0.83 |
| CIFAR-100 (60,000 Images) | Swin | 7.00 ± 0.00 |
| | BLIP | 11.13 ± 1.33 |
| | Combined | 18.13 ± 1.33 |
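
The table does not state its unit. The constant value of 7.00 for the classifier text is consistent with a whitespace-delimited word count of the template sentence ("This is a photo of a frog." has seven words). The sketch below computes length statistics under that assumption; `word_count` and `length_stats` are hypothetical helpers, and the use of the population standard deviation is also an assumption.

```python
from statistics import mean, pstdev


def word_count(text: str) -> int:
    """Count whitespace-delimited words (assumed unit of the table)."""
    return len(text.split())


def length_stats(texts):
    """Return (mean, population std) of word counts over a list of texts."""
    counts = [word_count(t) for t in texts]
    return mean(counts), pstdev(counts)


if __name__ == "__main__":
    # Template sentences taken from the examples in Section 5.2.
    label_texts = [
        "This is a photo of a frog.",
        "This is a photo of an horse.",
    ]
    m, s = length_stats(label_texts)
    print(f"{m:.2f} ± {s:.2f}")
```

Because every template sentence has the same length, its standard deviation is zero, which would explain why the combined rows inherit the spread of the BLIP captions alone.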

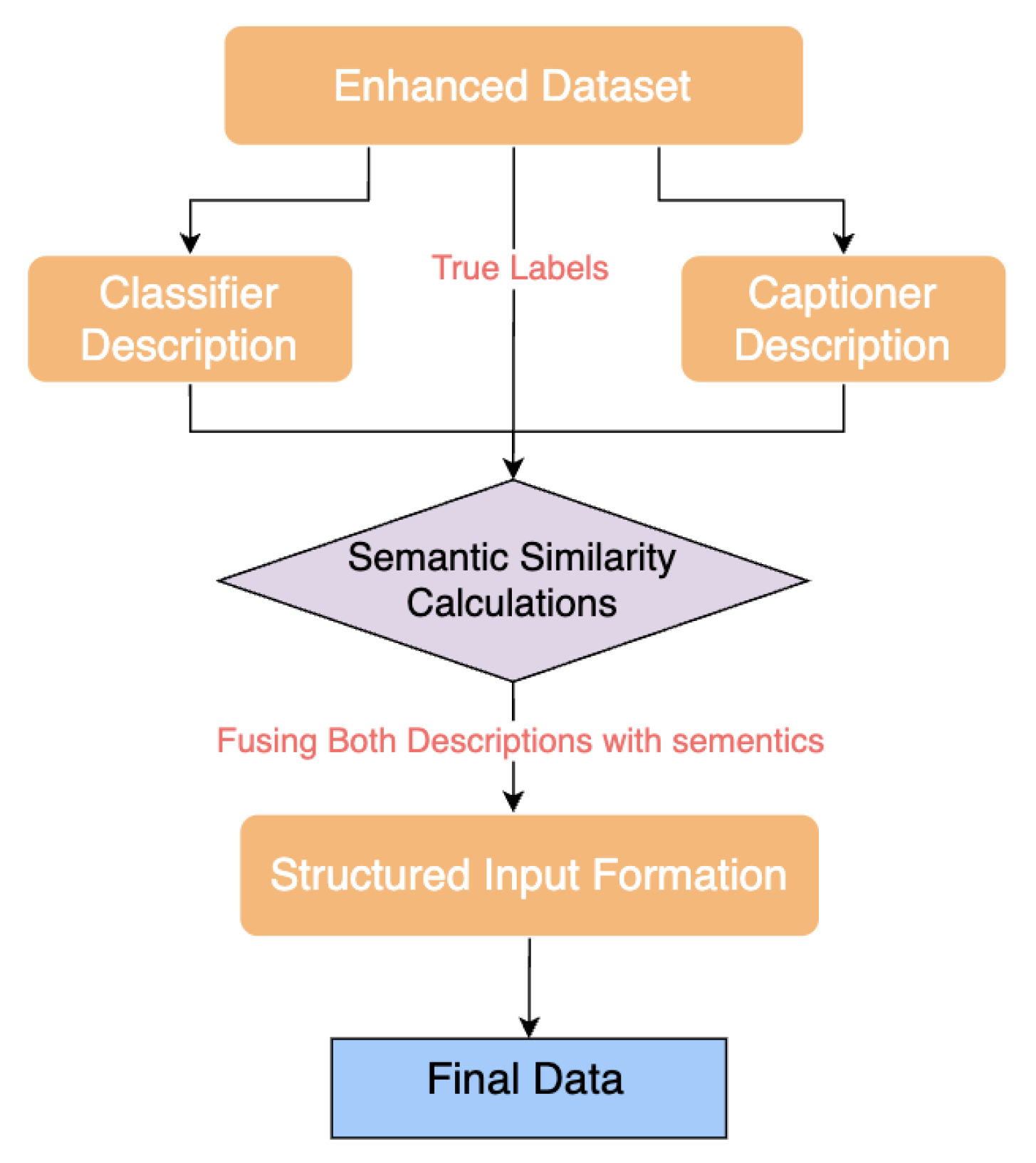

6. Fine-Tuning Pipeline Models on Enriched Descriptions

6.1. Overview of Fine-Tuning Pipelines

| Dataset | Pipeline |
|---|---|
| CIFAR-10 | BEiT + BLIP + BART |
| CIFAR-100 | Swin Transformer + BLIP + BART |

6.2. Pipeline for CIFAR-10 and CIFAR-100

6.3. Data Processing Pipeline

6.4. Hyperparameter Configuration & Fine-Tuning

6.5. Performance Comparison with State-of-the-Art

7. Conclusion and Future Work

7.1. Conclusion

7.2. Challenges and Limitations

7.3. Future Work

7.4. Final Remarks

References

- Kochnev, R.; Goodarzi, A.T.; Bentyn, Z.A.; Ignatov, D.; Timofte, R. Optuna vs Code Llama: Are LLMs a New Paradigm for Hyperparameter Tuning? arXiv 2025, arXiv:2504.06006.

- Gado, M.; Taliee, T.; Memon, M.D.; Ignatov, D.; Timofte, R. VIST-GPT: Ushering in the Era of Visual Storytelling with LLMs? arXiv 2025, arXiv:2504.19267.

- Kochnev, R.; et al. NNGPT: Neural Network Model Generation. arXiv 2025.

- Krizhevsky, A.; Sutskever, I.; Hinton, G.E. ImageNet classification with deep convolutional neural networks. Commun. ACM 2017, 60, 84–90.

- Simonyan, K.; Zisserman, A. Very Deep Convolutional Networks for Large-Scale Image Recognition. In Proceedings of the International Conference on Learning Representations (ICLR), 2015.

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep Residual Learning for Image Recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), June 2016.

- Dosovitskiy, A.; Beyer, L.; Kolesnikov, A.; Weissenborn, D.; Zhai, X.; Unterthiner, T.; Dehghani, M.; Minderer, M.; Heigold, G.; Gelly, S.; et al. An Image is Worth 16x16 Words: Transformers for Image Recognition at Scale. In Proceedings of the International Conference on Learning Representations, 2020.

- Tan, M.; Le, Q. EfficientNet: Rethinking Model Scaling for Convolutional Neural Networks. In Proceedings of the 36th International Conference on Machine Learning; Chaudhuri, K.; Salakhutdinov, R., Eds. PMLR, 09–15 Jun 2019, Vol. 97, Proceedings of Machine Learning Research, pp. 6105–6114.

- Bao, H.; Dong, L.; Wei, F. BEiT: BERT Pre-Training of Image Transformers. In Proceedings of the International Conference on Learning Representations (ICLR), 2022.

- Liu, Z.; Lin, Y.; Cao, Y.; Hu, H.; Wei, Y.; Zhang, Z.; Lin, S.; Guo, B. Swin Transformer: Hierarchical Vision Transformer Using Shifted Windows. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), October 2021; pp. 10012–10022.

- Fang, Y.; Sun, Q.; Wang, X.; Huang, T.; Wang, X.; Cao, Y. EVA: Exploring the Limits of Masked Visual Representation Learning at Scale. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2023.

- Krizhevsky, A.; Hinton, G.; et al. Learning Multiple Layers of Features from Tiny Images. 2009.

- Deng, J.; Dong, W.; Socher, R.; Li, L.J.; Li, K.; Fei-Fei, L. ImageNet: A Large-Scale Hierarchical Image Database. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition. IEEE, 2009; pp. 248–255.

- Goodarzi, A.T.; Kochnev, R.; Khalid, W.; Qin, F.; Uzun, T.A.; Dhameliya, Y.S.; Kathiriya, Y.K.; Bentyn, Z.A.; Ignatov, D.; Timofte, R. LEMUR Neural Network Dataset: Towards Seamless AutoML. arXiv 2025, arXiv:2504.10552.

- Radford, A.; Kim, J.W.; Hallacy, C.; Ramesh, A.; Goh, G.; Agarwal, S.; Sastry, G.; Askell, A.; Mishkin, P.; Clark, J.; et al. Learning Transferable Visual Models From Natural Language Supervision. In Proceedings of the 38th International Conference on Machine Learning; Meila, M.; Zhang, T., Eds. PMLR, 18–24 Jul 2021, Vol. 139, Proceedings of Machine Learning Research, pp. 8748–8763.

- Li, J.; Li, D.; Xiong, C.; Hoi, S. BLIP: Bootstrapping Language-Image Pre-training for Unified Vision-Language Understanding and Generation. In Proceedings of the 39th International Conference on Machine Learning; Chaudhuri, K.; Jegelka, S.; Song, L.; Szepesvari, C.; Niu, G.; Sabato, S., Eds. PMLR, 17–23 Jul 2022, Vol. 162, Proceedings of Machine Learning Research, pp. 12888–12900.

- Wang, J.; Yang, Z.; Hu, X.; Li, L.; Lin, K.; Gan, Z.; Liu, Z.; Liu, C.; Wang, L. GIT: A Generative Image-to-text Transformer for Vision and Language. Transactions on Machine Learning Research, 2022.

- Vasireddy, I.; HimaBindu, G.; B., R. Transformative Fusion: Vision Transformers and GPT-2 Unleashing New Frontiers in Image Captioning within Image Processing. International Journal of Innovative Research in Engineering and Management 2023, 10, 55–59.

- Krizhevsky, A.; Hinton, G. Learning Multiple Layers of Features from Tiny Images (CIFAR-10). Technical report, University of Toronto, 2009. CIFAR-10 dataset.

- Krizhevsky, A.; Hinton, G. Learning Multiple Layers of Features from Tiny Images (CIFAR-100). Technical report, University of Toronto, 2009. CIFAR-100 dataset.

- Esteva, A.; Kuprel, B.; Novoa, R.A.; Ko, J.; Swetter, S.M.; Blau, H.M.; Thrun, S. Dermatologist-Level Classification of Skin Cancer with Deep Neural Networks. Nature 2017, 542, 115–118.

- Geiger, A.; Lenz, P.; Urtasun, R. Are We Ready for Autonomous Driving? The KITTI Vision Benchmark Suite. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition. IEEE, 2012; pp. 3354–3361.

- Akhtar, M.J.; Mahum, R.; Butt, F.S.; Amin, R.; El-Sherbeeny, A.M.; Lee, S.M.; Shaikh, S. A Robust Framework for Object Detection in a Traffic Surveillance System. Electronics 2022, 11.

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, L.; Polosukhin, I. Attention Is All You Need. 2017.

- Yang, J.; Duan, J.; Tran, S.; Xu, Y.; Chanda, S.; Chen, L.; Zeng, B.; Chilimbi, T.; Huang, J. Vision-Language Pre-Training With Triple Contrastive Learning. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), June 2022; pp. 15671–15680.

- Aboudeshish, N.; Ignatov, D.; Timofte, R. AugmentGest: Can Random Data Cropping Augmentation Boost Gesture Recognition Performance? arXiv preprint 2025.

- Vinyals, O.; Toshev, A.; Bengio, S.; Erhan, D. Show and Tell: A Neural Image Caption Generator. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), June 2015.

- Karpathy, A.; Fei-Fei, L. Deep Visual-Semantic Alignments for Generating Image Descriptions. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), June 2015.

- Chen, Y.C.; Li, L.; Yu, L.; El Kholy, A.; Ahmed, F.; Gan, Z.; Cheng, Y.; Liu, J. UNITER: UNiversal Image-TExt Representation Learning. In Computer Vision – ECCV 2020; Vedaldi, A.; Bischof, H.; Brox, T.; Frahm, J.M., Eds., Cham, 2020; pp. 104–120.

- Kim, W.; Son, B.; Kim, I. ViLT: Vision-and-Language Transformer Without Convolution or Region Supervision. In Proceedings of the 38th International Conference on Machine Learning; Meila, M.; Zhang, T., Eds. PMLR, 18–24 Jul 2021, Vol. 139, Proceedings of Machine Learning Research, pp. 5583–5594.

- Li, J.; Li, D.; Xiong, C.; Hoi, S. BLIP: Bootstrapping Language-Image Pre-training for Unified Vision-Language Understanding and Generation. In Proceedings of the 39th International Conference on Machine Learning; Chaudhuri, K.; Jegelka, S.; Song, L.; Szepesvari, C.; Niu, G.; Sabato, S., Eds. PMLR, 17–23 Jul 2022, Vol. 162, Proceedings of Machine Learning Research, pp. 12888–12900.

- Alayrac, J.B.; Donahue, J.; Luc, P.; Miech, A.; Barr, I.; Hasson, Y.; Lenc, K.; Mensch, A.; Millican, K.; Reynolds, M.; et al. Flamingo: a Visual Language Model for Few-Shot Learning. In Advances in Neural Information Processing Systems; Koyejo, S.; Mohamed, S.; Agarwal, A.; Belgrave, D.; Cho, K.; Oh, A., Eds. Curran Associates, Inc., 2022, Vol. 35, pp. 23716–23736.

- Devlin, J.; Chang, M.W.; Lee, K.; Toutanova, K. BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding. In Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, 2019; pp. 4171–4186.

- Hu, R.; Singh, A. UniT: Multimodal Multitask Learning With a Unified Transformer. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), October 2021; pp. 1439–1449.

- Wang, W.; Bao, H.; Dong, L.; Wei, F. VLP: Vision-Language Pre-Training of Fusion Transformers. In Proceedings of the 38th International Conference on Machine Learning, 2021.

- Sharma, P.; Ding, N.; Goodman, S.; Soricut, R. Conceptual Captions: A Cleaned, Hypernymed, Image Alt-text Dataset For Automatic Image Captioning. In Proceedings of the 56th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers); Gurevych, I.; Miyao, Y., Eds., Melbourne, Australia, 2018; pp. 2556–2565.

- Changpinyo, S.; Sharma, P.; Ding, N.; Soricut, R. Conceptual 12M: Pushing Web-Scale Image-Text Pre-Training To Recognize Long-Tail Visual Concepts. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), June 2021; pp. 3558–3568.

- Rotstein, N.; Bensaïd, D.; Brody, S.; Ganz, R.; Kimmel, R. FuseCap: Leveraging Large Language Models for Enriched Fused Image Captions. In Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision (WACV), January 2024; pp. 5689–5700.

- Anderson, P.; Fernando, B.; Johnson, M.; Gould, S. SPICE: Semantic Propositional Image Caption Evaluation. In Computer Vision – ECCV 2016; Leibe, B.; Matas, J.; Sebe, N.; Welling, M., Eds., Cham, 2016; pp. 382–398.

- Bazi, Y.; Bashmal, L.; Rahhal, M.M.A.; Ricci, R.; Melgani, F. RS-LLaVA: A Large Vision-Language Model for Joint Captioning and Question Answering in Remote Sensing Imagery. Remote Sensing 2024, 16, 1477.

- Huang, P.Y.; Hu, R.; Schwing, A.; Murphy, K. Understanding and Improving the Masked Language Modeling Objective for Vision-and-Language Pretraining. In Advances in Neural Information Processing Systems, 2019.

- Dosovitskiy, A.; Beyer, L.; Kolesnikov, A.; Weissenborn, D.; Zhai, X.; Unterthiner, T.; Dehghani, M.; Minderer, M.; Heigold, G.; Gelly, S.; et al. An Image is Worth 16x16 Words: Transformers for Image Recognition at Scale. arXiv 2020, arXiv:2010.11929.

- Hugging Face. Hugging Face: Advancing Natural Language Processing and Machine Learning, 2019.


| Dataset | Model | Accuracy (%) |
|---|---|---|
| CIFAR-10 | ViT-B/16 [43] | 97.88 |
| | CLIP-ViT-Base-Patch32 [43] | 97.59 |
| | Fine-tuned BEiT (Ours) | 99.00 |
| CIFAR-100 | ViT-B/16 [43] | 91.48 |
| | CLIP-ViT-Base-Patch32 [43] | 88.37 |
| | Swin-Base [43] | 92.01 |

| Training Parameter | Value |
|---|---|
| Batch Size | 8 (Gradient Accumulation: 4 steps) |
| Learning Rate | (Phase 1), (Phase 2) |
| Optimizer | AdamW |
| Loss Function | Cross-Entropy Loss |
| Epochs | 3 (Phase 1), 2 (Phase 2) |
| Scheduler | Linear Decay |
| Mixed Precision Training | Enabled (FP16) |
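
With a per-device batch of 8 and 4 gradient-accumulation steps, each optimizer update sees 8 × 4 = 32 examples. The sketch below works through that arithmetic and the resulting number of optimizer steps. `FineTuneConfig` is a hypothetical container, and the 50,000-image training split used in the example is the standard CIFAR split, not a figure stated in the table.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class FineTuneConfig:
    """Values copied from the training-parameter table (names are illustrative)."""
    batch_size: int = 8
    grad_accum_steps: int = 4
    epochs_phase1: int = 3
    epochs_phase2: int = 2

    @property
    def effective_batch_size(self) -> int:
        # Gradients from several micro-batches are summed before one update.
        return self.batch_size * self.grad_accum_steps

    def optimizer_steps(self, num_train_examples: int) -> int:
        """Total optimizer updates across both phases."""
        micro_batches = math.ceil(num_train_examples / self.batch_size)
        steps_per_epoch = math.ceil(micro_batches / self.grad_accum_steps)
        return steps_per_epoch * (self.epochs_phase1 + self.epochs_phase2)


if __name__ == "__main__":
    cfg = FineTuneConfig()
    print(cfg.effective_batch_size)      # 32
    print(cfg.optimizer_steps(50_000))   # assumes the standard 50,000-image train split
```

The step count matters for a linear-decay scheduler, since the decay is usually defined over the total number of optimizer updates.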

| Dataset | Captioner | Text Classifier | Accuracy (%) |
|---|---|---|---|
| CIFAR-10 | BLIP | BART | 89.48 |
| | GIT | BART | 74.65 |
| | ViT-GPT2 | BART | 74.79 |
| | BLIP | DeBERTa | 87.94 |
| | GIT | DeBERTa | 71.98 |
| | ViT-GPT2 | DeBERTa | 73.62 |
| CIFAR-100 | BLIP | BART | 49.98 |
| | GIT | BART | 29.87 |
| | ViT-GPT2 | BART | 26.07 |
| | BLIP | DeBERTa | 49.81 |
| | GIT | DeBERTa | 31.45 |
| | ViT-GPT2 | DeBERTa | 26.59 |

| Hyperparameter | CIFAR-10 | CIFAR-100 |
|---|---|---|
| Epochs | 18 | 11 |
| Batch Size | 32 | 32 |
| Warmup Steps | 500 | 500 |
| Learning Rate | 3e-6 | 3e-6 |
| Weight Decay | 0.005 | 0.005 |
| LR Scheduler | cosine | cosine |
| Loss Function | MSE | MSE |
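
The table combines 500 warmup steps, a peak learning rate of 3e-6, and a cosine scheduler. One common form of such a schedule is a linear ramp followed by a half-cosine decay to zero, sketched below. The function `lr_at` and the `total_steps` value are hypothetical, and the authors' exact schedule may differ.

```python
import math


def lr_at(step: int, total_steps: int,
          base_lr: float = 3e-6, warmup_steps: int = 500) -> float:
    """Learning rate at a given optimizer step (linear warmup, cosine decay)."""
    if step < warmup_steps:
        # Linear ramp from 0 up to base_lr over the warmup phase.
        return base_lr * step / warmup_steps
    # Fraction of the post-warmup phase that has elapsed, clipped to [0, 1].
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    progress = min(1.0, progress)
    # Half-cosine from base_lr down to 0.
    return base_lr * 0.5 * (1.0 + math.cos(math.pi * progress))


if __name__ == "__main__":
    total = 10_000  # hypothetical total number of optimizer steps
    for step in (0, 250, 500, 5_250, 10_000):
        print(step, lr_at(step, total))
```

The rate peaks exactly at the end of warmup and falls to half the peak midway through the decay phase.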

| Metric | CIFAR-10, ViT-H/14 | CIFAR-10, BEiT (used) | CIFAR-10, Ours | CIFAR-100, EffNet-L2 | CIFAR-100, Swin (used) | CIFAR-100, Ours |
|---|---|---|---|---|---|---|
| Accuracy | 99.50% | 99.00% | 99.73% | 96.08% | 92.01% | 98.39% |
| Precision | — | — | 99.46% | — | — | 97.11% |
| Recall | — | — | 99.73% | — | — | 98.39% |
| F1 Score | — | — | 99.59% | — | — | 97.65% |
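
In the table, recall equals accuracy on both datasets (99.73% and 98.39%). Support-weighted averaging produces exactly this, because weighted recall reduces to overall accuracy. The source does not state the averaging mode, so the sketch below is only one reading consistent with the numbers; `weighted_metrics` is a hypothetical helper written in plain Python.

```python
from collections import Counter


def weighted_metrics(y_true, y_pred):
    """Accuracy plus support-weighted precision, recall, and F1."""
    n = len(y_true)
    support = Counter(y_true)      # number of true examples per class
    predicted = Counter(y_pred)    # number of predictions per class
    correct = Counter(t for t, p in zip(y_true, y_pred) if t == p)

    precision = recall = f1 = 0.0
    for cls, count in support.items():
        p = correct[cls] / predicted[cls] if predicted[cls] else 0.0
        r = correct[cls] / count
        f = 2 * p * r / (p + r) if (p + r) else 0.0
        weight = count / n         # class weight is its share of the true labels
        precision += weight * p
        recall += weight * r
        f1 += weight * f

    return {
        "accuracy": sum(correct.values()) / n,
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


if __name__ == "__main__":
    y_true = ["cat", "cat", "dog", "dog", "frog"]
    y_pred = ["cat", "dog", "dog", "dog", "frog"]
    print(weighted_metrics(y_true, y_pred))
```

Under macro averaging, recall and accuracy would coincide only when classes are balanced, which is also the case for the standard CIFAR test sets, so the table alone cannot distinguish the two modes.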

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).