Figure 1.

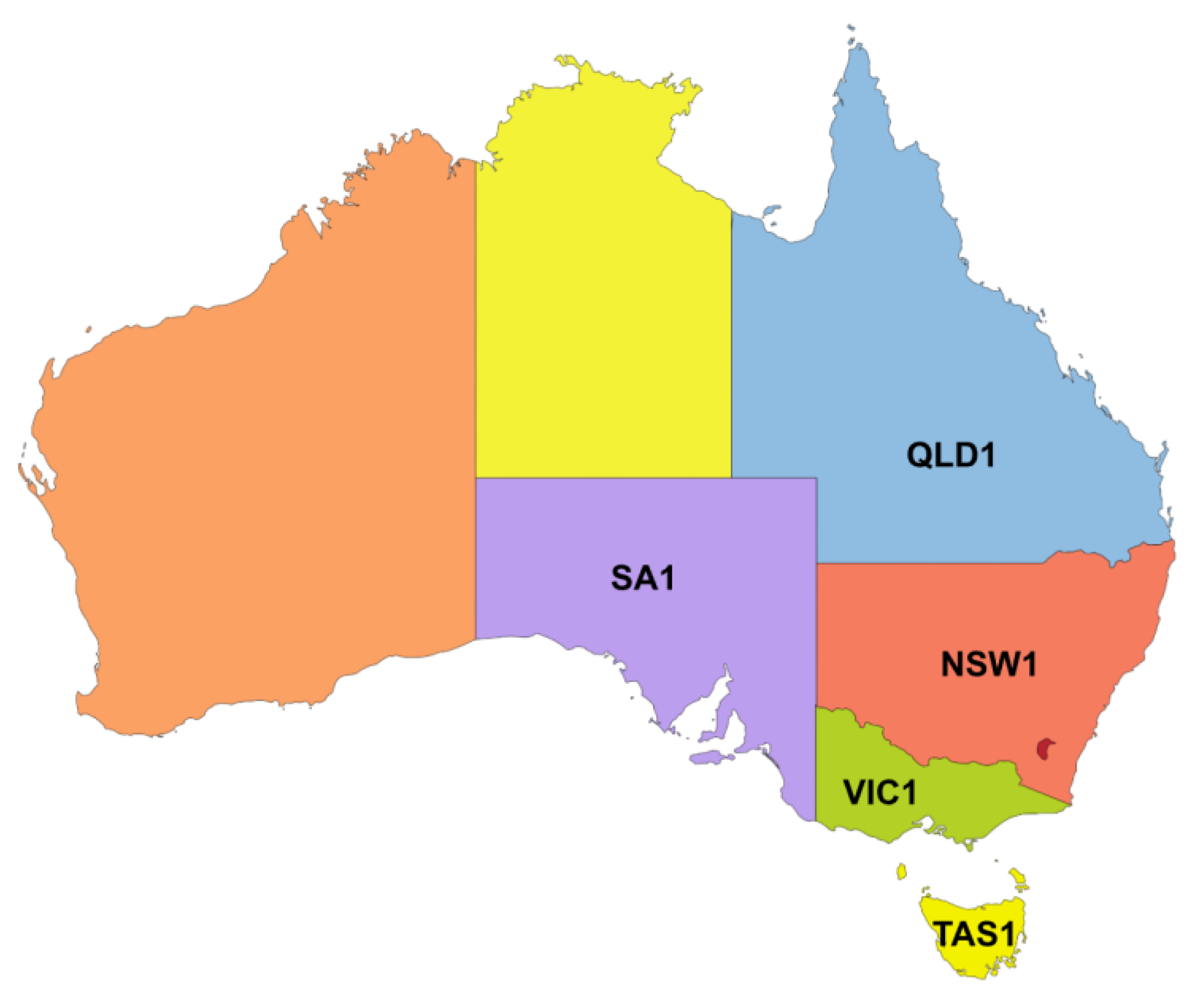

Map of Australia showing the interconnected NEM regions. The main focus of this study is NSW1, which represents the most populous state in Australia [27].

Figure 2.

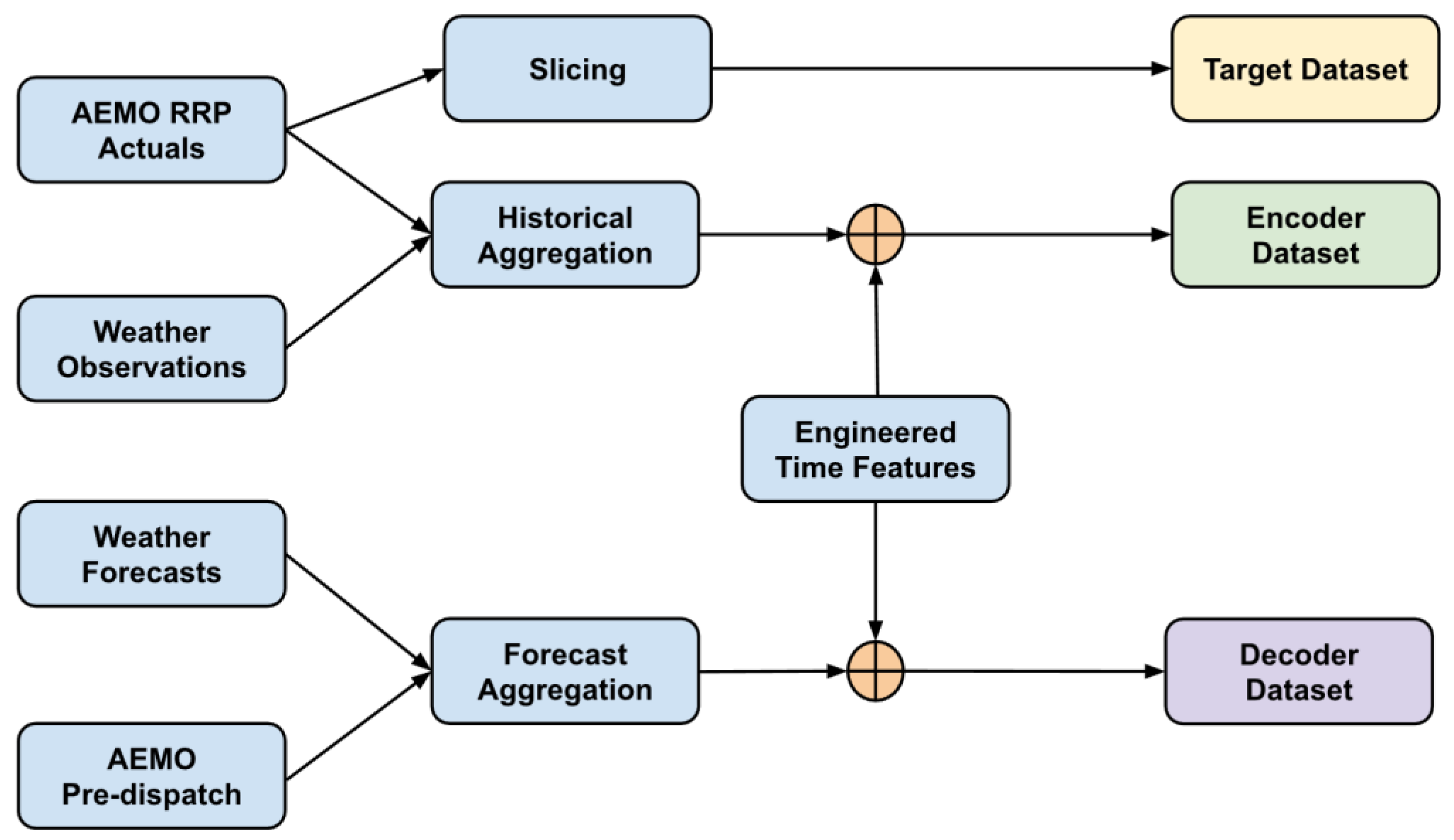

Data pipeline for model training. The complete data preparation workflow integrates AEMO RRP actuals, weather observations, weather forecasts, and AEMO pre-dispatch forecasts. Historical inputs are aggregated and aligned with engineered time-based features to form the encoder dataset, while forecast inputs undergo a separate aggregation process to construct the decoder dataset. Target values are produced through direct slicing of RRP actuals. The combined pipeline ensures temporally consistent, feature-rich inputs for transformer-based electricity price forecasting.
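
The windowing step in this pipeline can be sketched as below. This is a minimal illustration, not the authors' code. It assumes every source has already been resampled onto one shared 30-minute index and that each forecast covariate is stored against the interval it forecasts (real pre-dispatch data also carry an issue time, which this ignores). The function `make_windows` and its array layout are hypothetical; the 96-step encoder and 32-step decoder lengths follow Table 3.

```python
import numpy as np

def make_windows(hist_feats, fut_feats, rrp, enc_len=96, dec_len=32, stride=1):
    """Slice aligned half-hourly arrays into encoder, decoder and target windows.

    hist_feats: (T, F_hist) historical observations (RRP, demand, weather, ...)
    fut_feats:  (T, F_fut) forecast covariates indexed by the interval they forecast
    rrp:        (T,) RRP actuals used as targets
    """
    T = len(rrp)
    if not (len(hist_feats) == len(fut_feats) == T):
        raise ValueError("inputs must share the same time index")
    if T < enc_len + dec_len:
        raise ValueError("series too short for one window")
    enc, dec, tgt = [], [], []
    # t is the first forecast interval; the encoder only sees intervals before t
    for t in range(enc_len, T - dec_len + 1, stride):
        enc.append(hist_feats[t - enc_len:t])
        dec.append(fut_feats[t:t + dec_len])
        tgt.append(rrp[t:t + dec_len])  # targets: a direct slice of RRP actuals
    return np.stack(enc), np.stack(dec), np.stack(tgt)

rng = np.random.default_rng(0)
T = 200
hist, fut = rng.normal(size=(T, 5)), rng.normal(size=(T, 3))
rrp = np.arange(T, dtype=float)
X_enc, X_dec, y = make_windows(hist, fut, rrp)
print(X_enc.shape, X_dec.shape, y.shape)  # (73, 96, 5) (73, 32, 3) (73, 32)
```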

Figure 3.

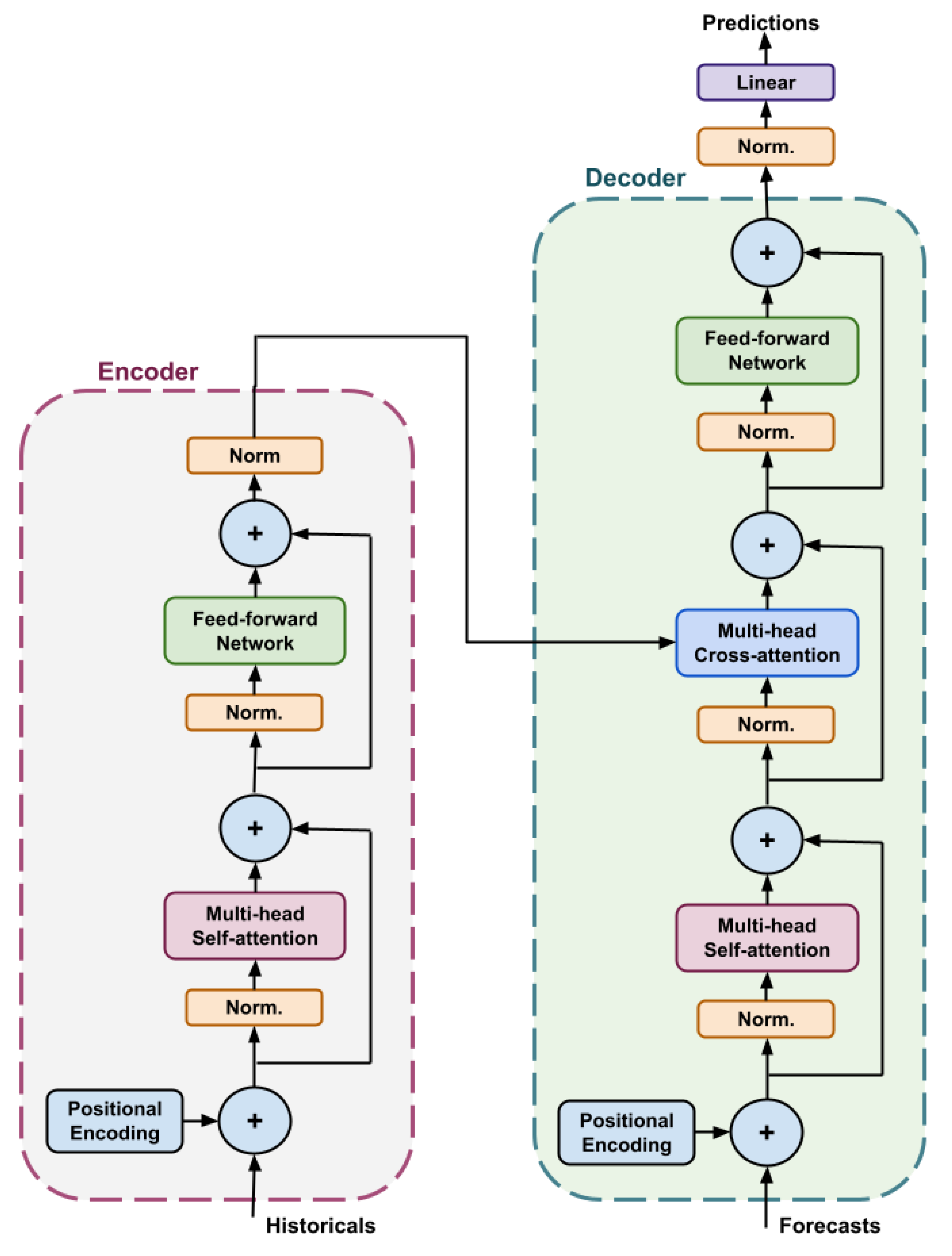

Pre-layer normalisation encoder–decoder transformer architecture with parallel decoding. The encoder (left, purple box) processes historical inputs using multi-head self-attention and a position-wise feed-forward network, each wrapped in a residual connection with layer normalisation (“Norm.”) applied before the block. The decoder (right, green box) processes known future inputs using self-attention and cross-attention over the encoder output, followed by a feed-forward layer. Predictions are produced by a final normalisation and a linear projection layer. Positional encodings are added to both the historical and the future inputs. Parallel decoding is non-autoregressive: the decoder generates all forecast horizons in a single forward pass, so no timestep needs to be conditioned on earlier model outputs.
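
A compact NumPy sketch of the pre-layer-normalisation data flow described above, written only to make the block structure concrete. Assumptions beyond the caption: sinusoidal positional encodings, ReLU feed-forward layers, no biases, no learned LayerNorm scale or shift, no dropout, an extra normalisation on the encoder output, and an unmasked decoder self-attention (the decoder inputs are known future covariates rather than model outputs). All function names are hypothetical, and the default sizes mirror the "Small" row of Table 2.

```python
import numpy as np

rng = np.random.default_rng(0)

def layer_norm(x, eps=1e-6):
    # Normalise each position over its features (learned scale/shift omitted)
    return (x - x.mean(-1, keepdims=True)) / np.sqrt(x.var(-1, keepdims=True) + eps)

def softmax(z):
    e = np.exp(z - z.max(-1, keepdims=True))
    return e / e.sum(-1, keepdims=True)

def positional_encoding(T, d):
    # Sinusoidal encodings (assumed); d must be even
    angles = np.arange(T)[:, None] / 10000 ** (2 * np.arange(d // 2)[None, :] / d)
    pe = np.zeros((T, d))
    pe[:, 0::2], pe[:, 1::2] = np.sin(angles), np.cos(angles)
    return pe

def dense(n_in, n_out):
    return rng.normal(0.0, n_in ** -0.5, (n_in, n_out))

def init_mha(d):
    return {k: dense(d, d) for k in "qkvo"}  # query, key, value, output projections

def mha(p, x_q, x_kv, n_heads):
    """Unmasked multi-head scaled dot-product attention of x_q over x_kv."""
    (T_q, d), T_k = x_q.shape, x_kv.shape[0]
    dh = d // n_heads

    def split(x, T):  # (T, d) -> (heads, T, dh)
        return x.reshape(T, n_heads, dh).transpose(1, 0, 2)

    q, k, v = split(x_q @ p["q"], T_q), split(x_kv @ p["k"], T_k), split(x_kv @ p["v"], T_k)
    att = softmax(q @ k.transpose(0, 2, 1) / np.sqrt(dh))   # (heads, T_q, T_k)
    return (att @ v).transpose(1, 0, 2).reshape(T_q, d) @ p["o"]

def ffn(p, x):
    return np.maximum(x @ p["w1"], 0.0) @ p["w2"]            # position-wise ReLU FFN

def encoder_layer(p, x, n_heads):
    h = layer_norm(x)                                        # Norm. before each block
    x = x + mha(p["self"], h, h, n_heads)
    return x + ffn(p["ffn"], layer_norm(x))

def decoder_layer(p, y, memory, n_heads):
    h = layer_norm(y)
    y = y + mha(p["self"], h, h, n_heads)                   # no causal mask (assumed)
    y = y + mha(p["cross"], layer_norm(y), memory, n_heads) # attend over encoder output
    return y + ffn(p["ffn"], layer_norm(y))

def init_model(f_hist, f_fut, d=128, ff_dim=512, n_layers=3):
    def block():
        return {"self": init_mha(d), "ffn": {"w1": dense(d, ff_dim), "w2": dense(ff_dim, d)}}
    return {"enc_in": dense(f_hist, d), "dec_in": dense(f_fut, d),
            "enc": [block() for _ in range(n_layers)],
            "dec": [{**block(), "cross": init_mha(d)} for _ in range(n_layers)],
            "head": dense(d, 1)}

def forward(params, x_hist, x_fut, n_heads=4):
    """x_hist: (96, F_hist) history; x_fut: (32, F_fut) known future inputs."""
    x = x_hist @ params["enc_in"]
    x = x + positional_encoding(*x.shape)
    for p in params["enc"]:
        x = encoder_layer(p, x, n_heads)
    memory = layer_norm(x)                                   # final encoder norm (assumed)
    y = x_fut @ params["dec_in"]
    y = y + positional_encoding(*y.shape)
    for p in params["dec"]:
        y = decoder_layer(p, y, memory, n_heads)
    return layer_norm(y) @ params["head"]                    # all 32 horizons in one pass

params = init_model(f_hist=10, f_fut=8)
y_hat = forward(params, rng.normal(size=(96, 10)), rng.normal(size=(32, 8)))
print(y_hat.shape)  # (32, 1)
```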

Figure 4.

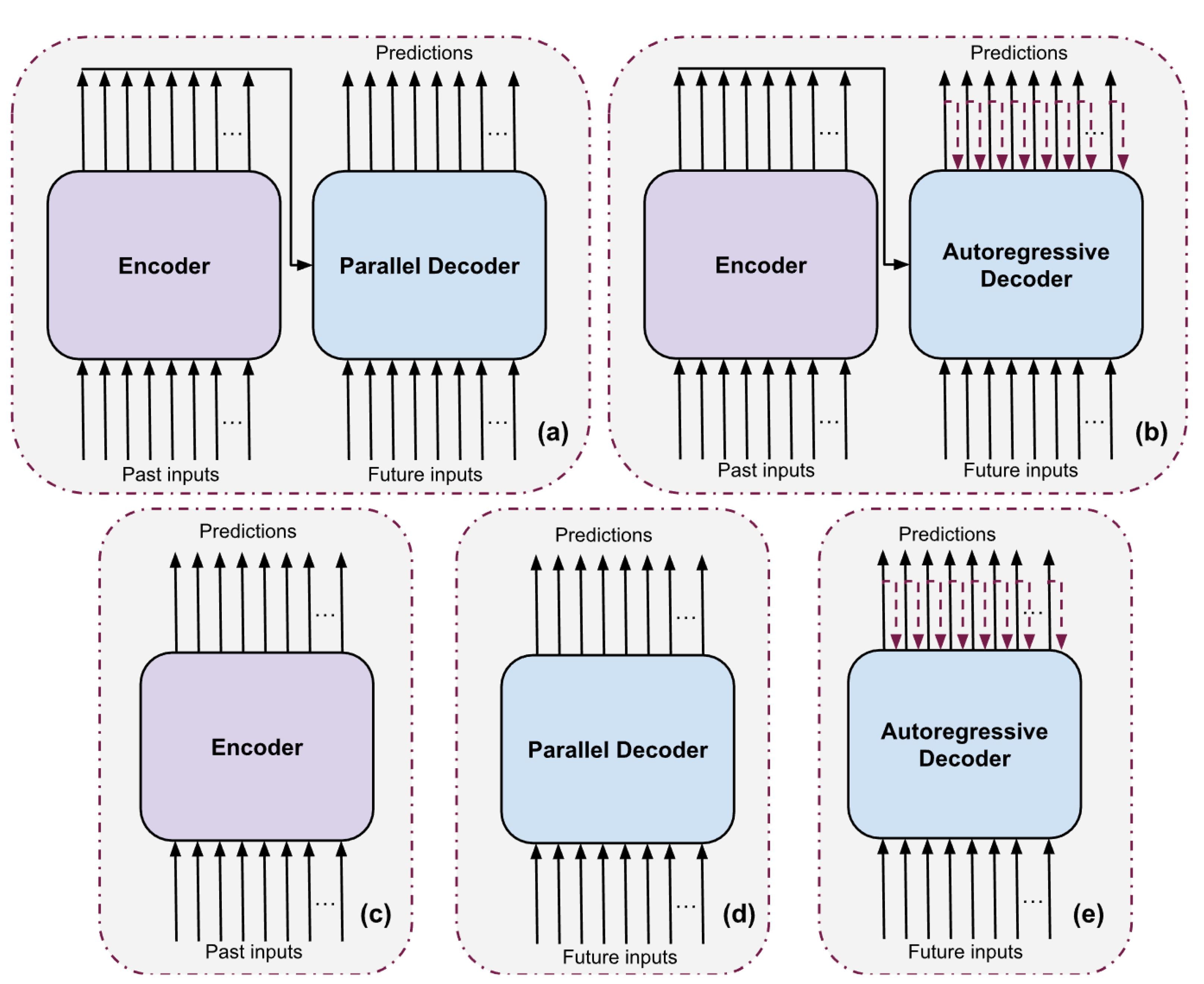

Transformer architectures evaluated. (a) Enc-dec transformer with parallel decoding, (b) Enc-dec transformer with autoregressive decoding, (c) Encoder-only transformer, (d) Decoder-only transformer with parallel decoding, and (e) Decoder-only transformer with autoregressive decoding.
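
The practical difference between the parallel variants (a, d) and the autoregressive variants (b, e) is how many forward passes are needed and whether outputs are fed back. The sketch below uses a hypothetical stand-in, `toy_model`, instead of a trained network, and assumes the autoregressive variant receives its previous prediction as an extra input column alongside the known covariates; exactly how the evaluated models condition on earlier outputs is not specified here.

```python
import numpy as np

def parallel_decode(model, memory, future_inputs):
    """Non-autoregressive: one forward call returns every horizon at once."""
    return model(memory, future_inputs)

def autoregressive_decode(model, memory, future_inputs, start_value):
    """One forward call per horizon; step h sees the prediction made at step h-1."""
    H = future_inputs.shape[0]
    fed_back = np.empty(H)          # previous output fed in at each step
    preds = np.empty(H)
    last = start_value              # e.g. the last observed price
    for h in range(H):
        fed_back[h] = last
        step_in = np.column_stack([future_inputs[: h + 1], fed_back[: h + 1]])
        last = model(memory, step_in)[-1]   # keep only the newest step's output
        preds[h] = last
    return preds

# Hypothetical stand-in for a trained network that counts its forward calls
calls = {"n": 0}

def toy_model(memory, x):
    calls["n"] += 1
    return 0.5 * x.sum(axis=1) + memory.mean()

memory, fut = np.ones((96, 4)), np.zeros((32, 3))
p = parallel_decode(toy_model, memory, fut)
print(calls["n"])   # 1
calls["n"] = 0
a = autoregressive_decode(toy_model, memory, fut, start_value=0.0)
print(calls["n"])   # 32
```

Besides the 32-fold increase in forward passes, the autoregressive loop lets an early error propagate into every later step, which the parallel form avoids by construction.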

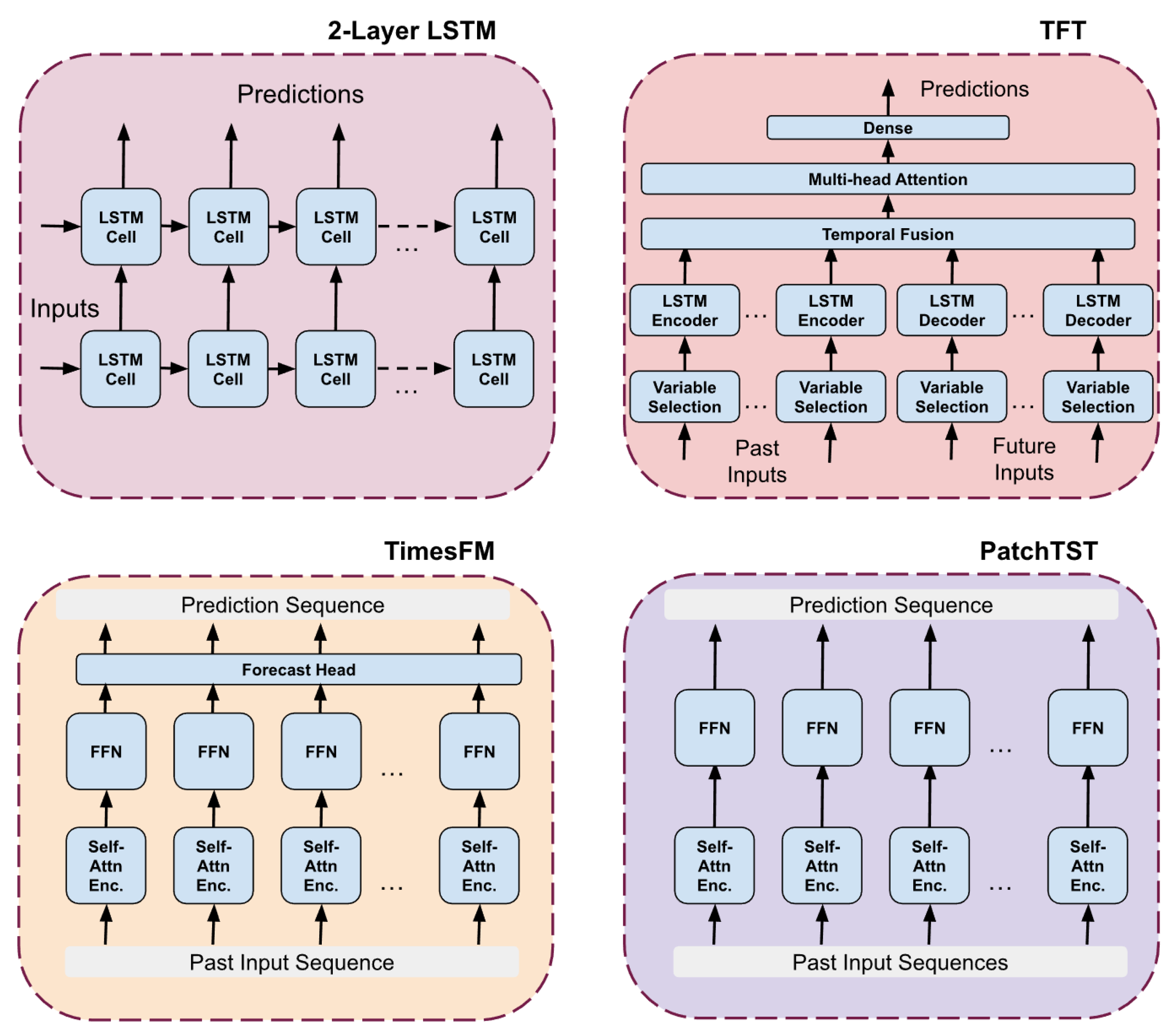

Figure 5.

Architectural overview of the comparison models used in this study: a two-layer Long Short-Term Memory (LSTM) network, the Temporal Fusion Transformer (TFT), TimesFM, and PatchTST. Each model represents a distinct class of sequence modelling approach, ranging from recurrent neural networks to attention-based architectures. The diagrams illustrate the structural differences in how each model processes historical inputs and generates multi-step forecasts, providing context for the comparative evaluation presented in this work.

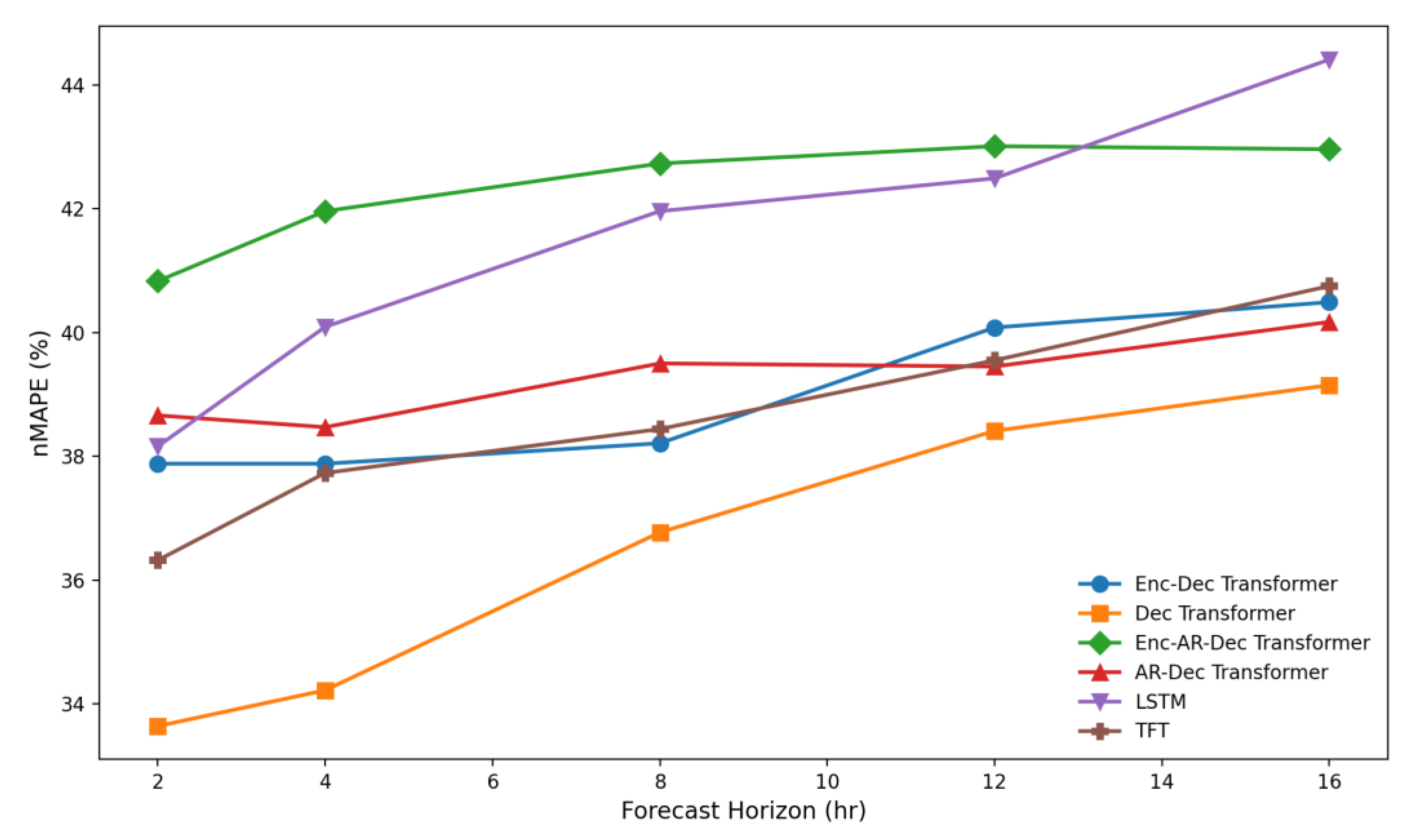

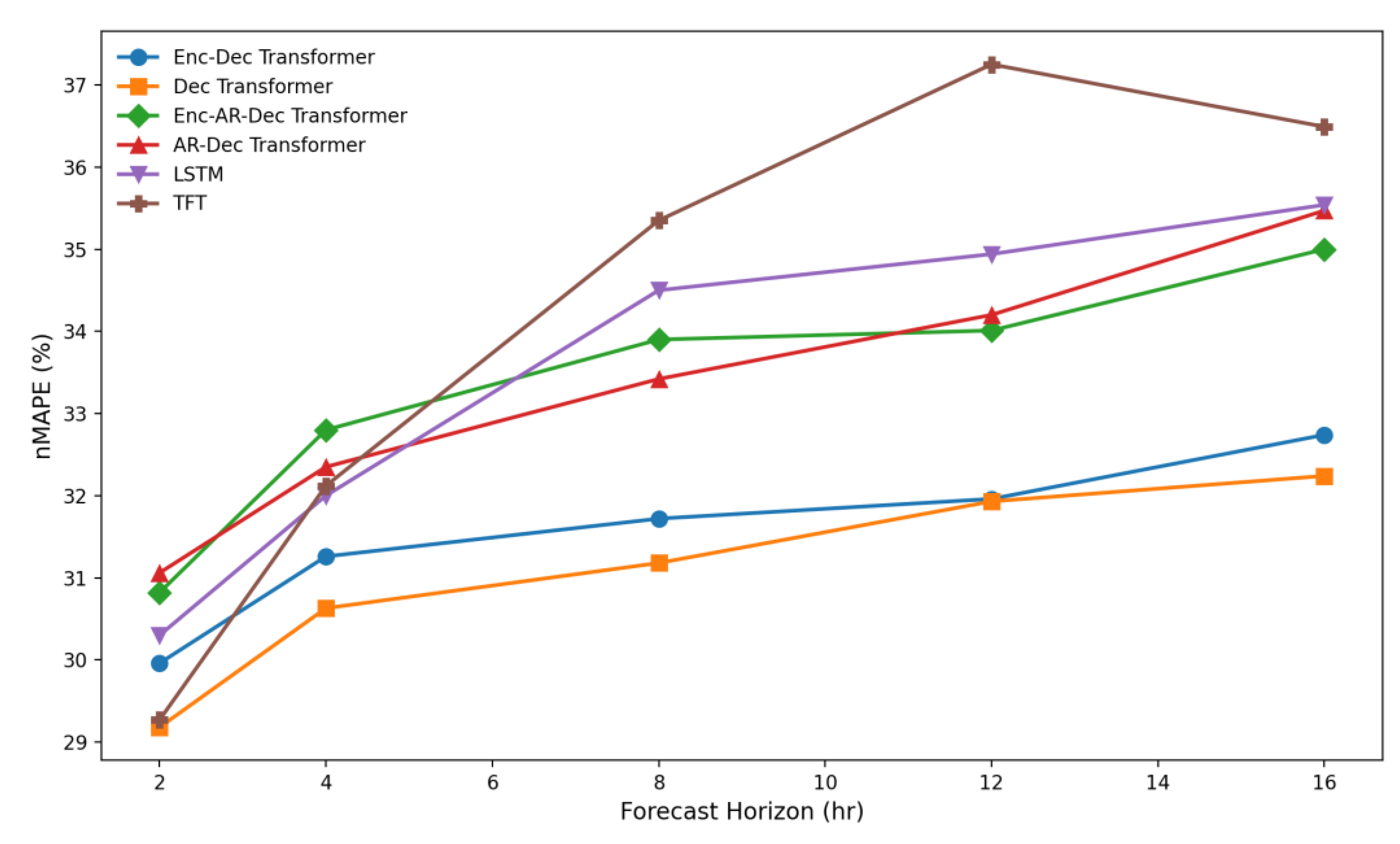

Figure 6.

NSW1 normalised MAPE of the top six models. The parallel decoder-only transformer, the enc-dec transformer, and the Temporal Fusion Transformer (TFT) achieve the best performance across all prediction horizons.

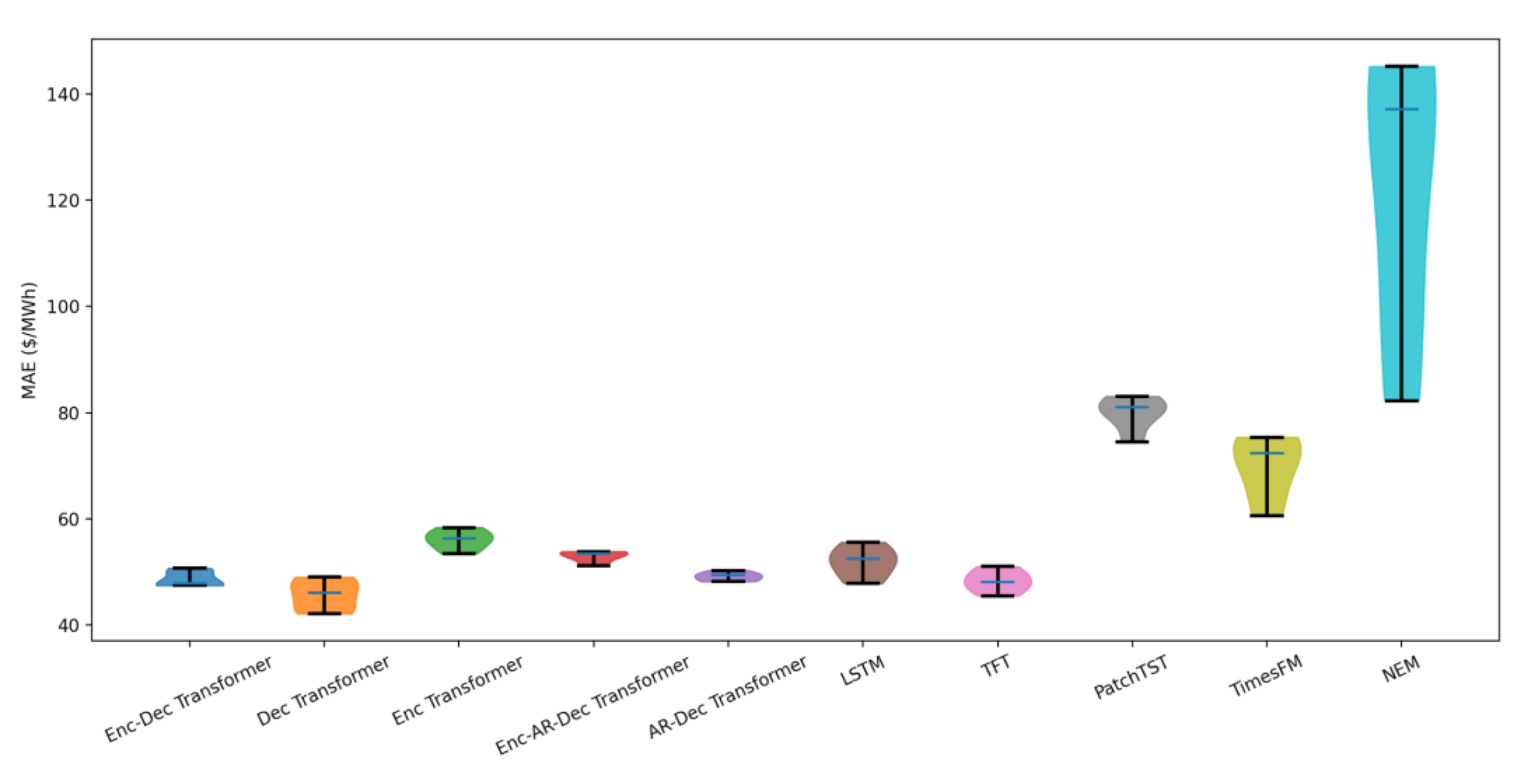

Figure 7.

Mean Absolute Error (MAE) distribution of all models for NSW1 across the full evaluation period. Violin plots show both the spread and the central tendency of the errors for each architecture. The encoder–decoder and decoder-only transformer variants consistently achieved the lowest MAE values, with lower variability than the LSTM, TFT, PatchTST, TimesFM, and the official NEM forecasts, while TimesFM exhibited substantially higher error dispersion.

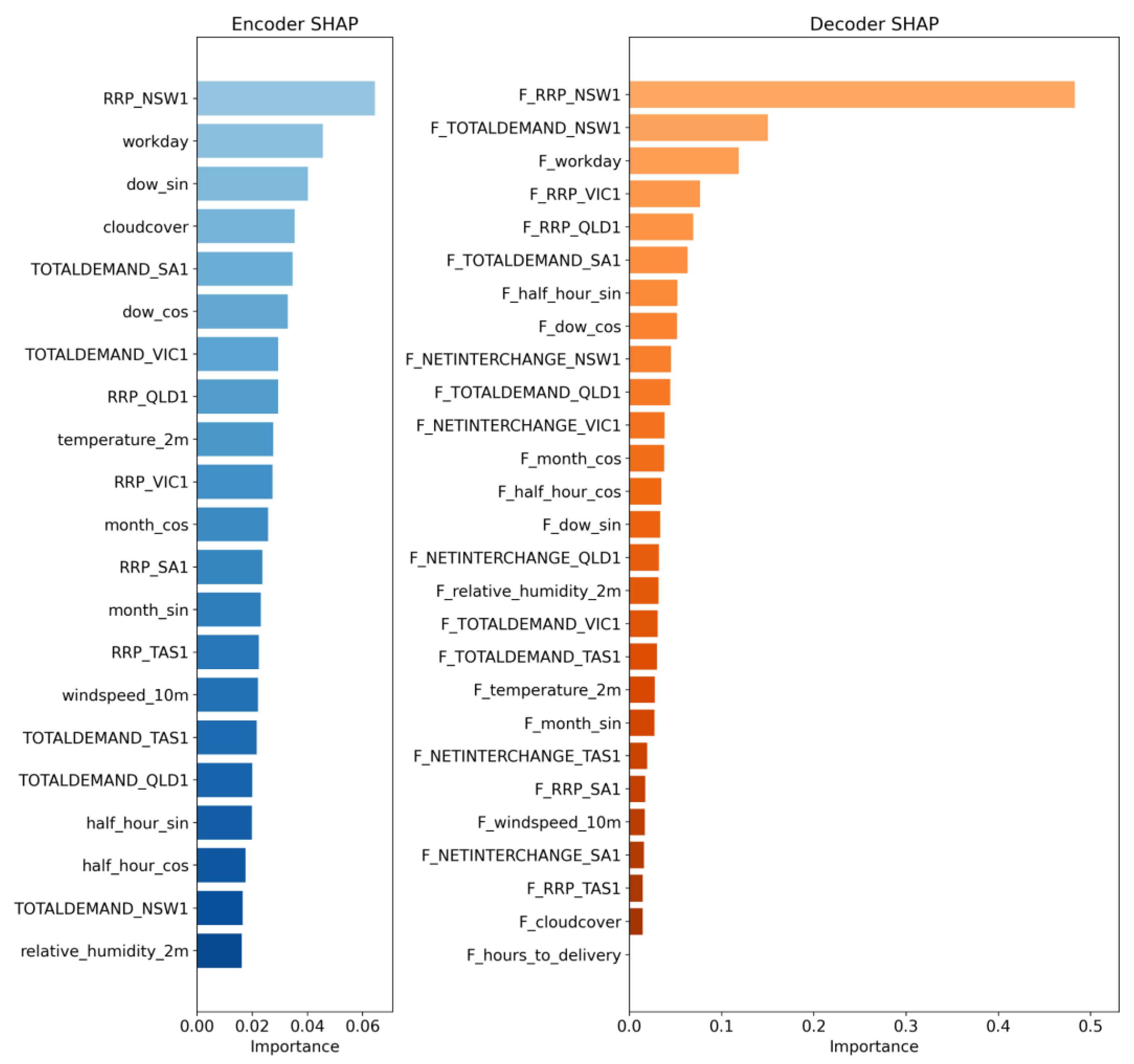

Figure 8.

SHAP feature importance for the encoder and decoder features of the NSW1 transformer model. Future-facing covariates, particularly the AEMO forecast price (F_RRP_NSW1) and the regional pre-dispatch demand and RRP forecasts, dominate the attributions and account for the majority of the total importance. Historical signals and cyclical encodings contribute comparatively little.
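
A ranking like the one in Figure 8 requires aggregating per-sample, per-timestep attributions into one number per feature. A minimal sketch of one such aggregation is shown below, assuming SHAP values have already been computed for each input block with shape (samples, timesteps, features); the attribution method itself is not shown. Summing |SHAP| over time steps and averaging over samples is one reasonable choice among several, the function name is hypothetical, and the arrays in the usage example are synthetic.

```python
import numpy as np

def feature_importance(blocks):
    """Rank features by their share of total mean |SHAP|.

    blocks: dict mapping a block name (e.g. "enc", "dec") to a tuple
    (shap_values of shape (n_samples, n_timesteps, n_features), feature_names).
    """
    names, totals = [], []
    for block, (sv, feats) in blocks.items():
        # |attribution| summed over a feature's time steps, then averaged over samples
        totals.append(np.abs(sv).sum(axis=1).mean(axis=0))
        names += [f"{block}:{f}" for f in feats]
    totals = np.concatenate(totals)
    share = totals / totals.sum()
    order = np.argsort(share)[::-1]
    return [(names[i], float(share[i])) for i in order]

# Synthetic attributions with one dominant future-facing covariate
rng = np.random.default_rng(0)
enc = rng.normal(0.0, 0.01, (50, 96, 2))
dec = rng.normal(0.0, 0.01, (50, 32, 2))
dec[..., 0] *= 100
ranked = feature_importance({"enc": (enc, ["RRP", "Demand"]),
                             "dec": (dec, ["F_RRP_NSW1", "Hours to delivery"])})
print(ranked[0][0])  # dec:F_RRP_NSW1
```

Summing over time means a 96-step encoder feature and a 32-step decoder feature are compared on their total contribution; averaging over time instead would favour features with few, strong time steps.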

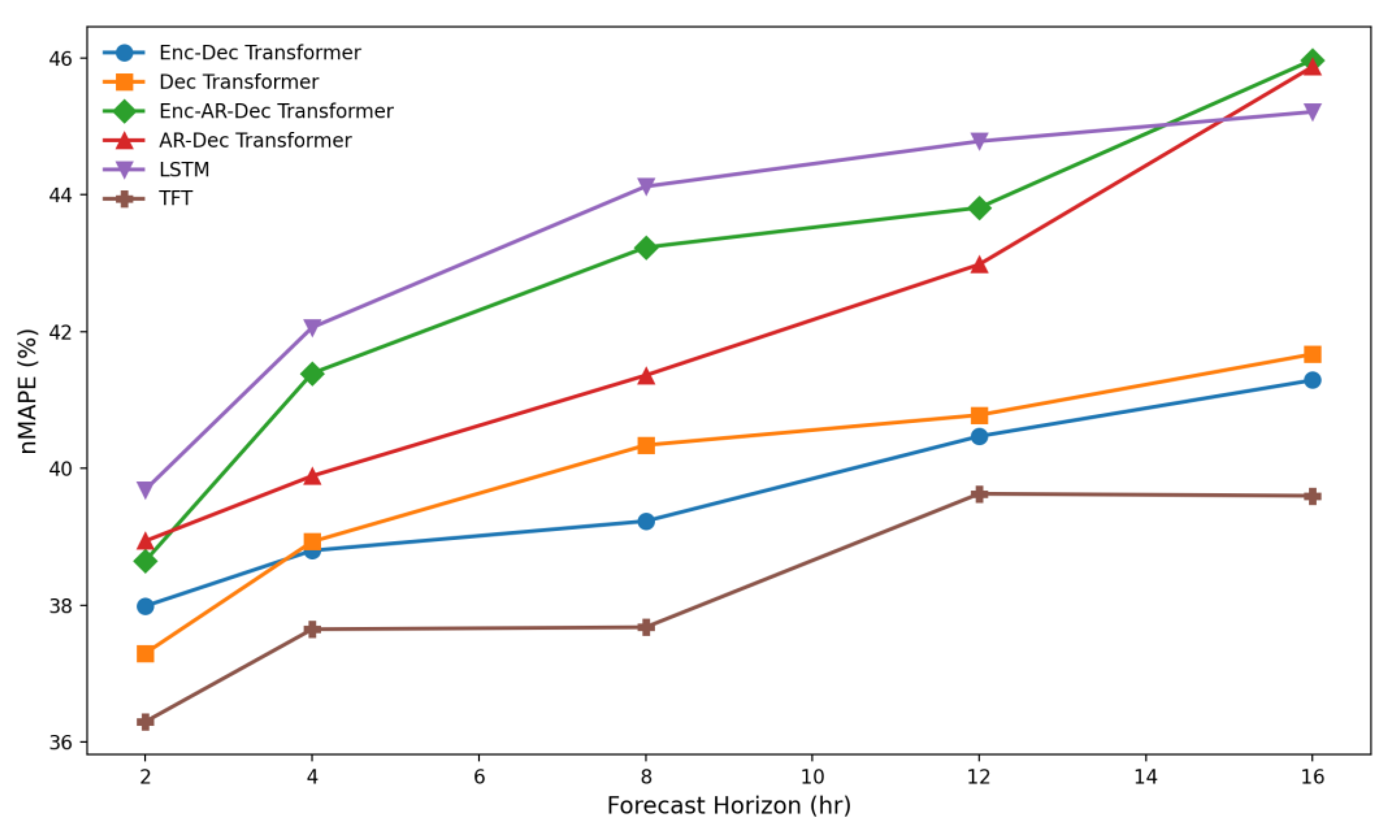

Figure 9.

QLD1 normalised MAPE of the top six models. The parallel decoder-only transformer, the enc-dec transformer, and the autoregressive transformers achieve the best performance across all prediction horizons.

Figure 10.

VIC1 normalised MAPE of the top six models. The Temporal Fusion Transformer (TFT), the enc-dec transformer, and the parallel decoder-only transformer achieve the best performance across all prediction horizons.

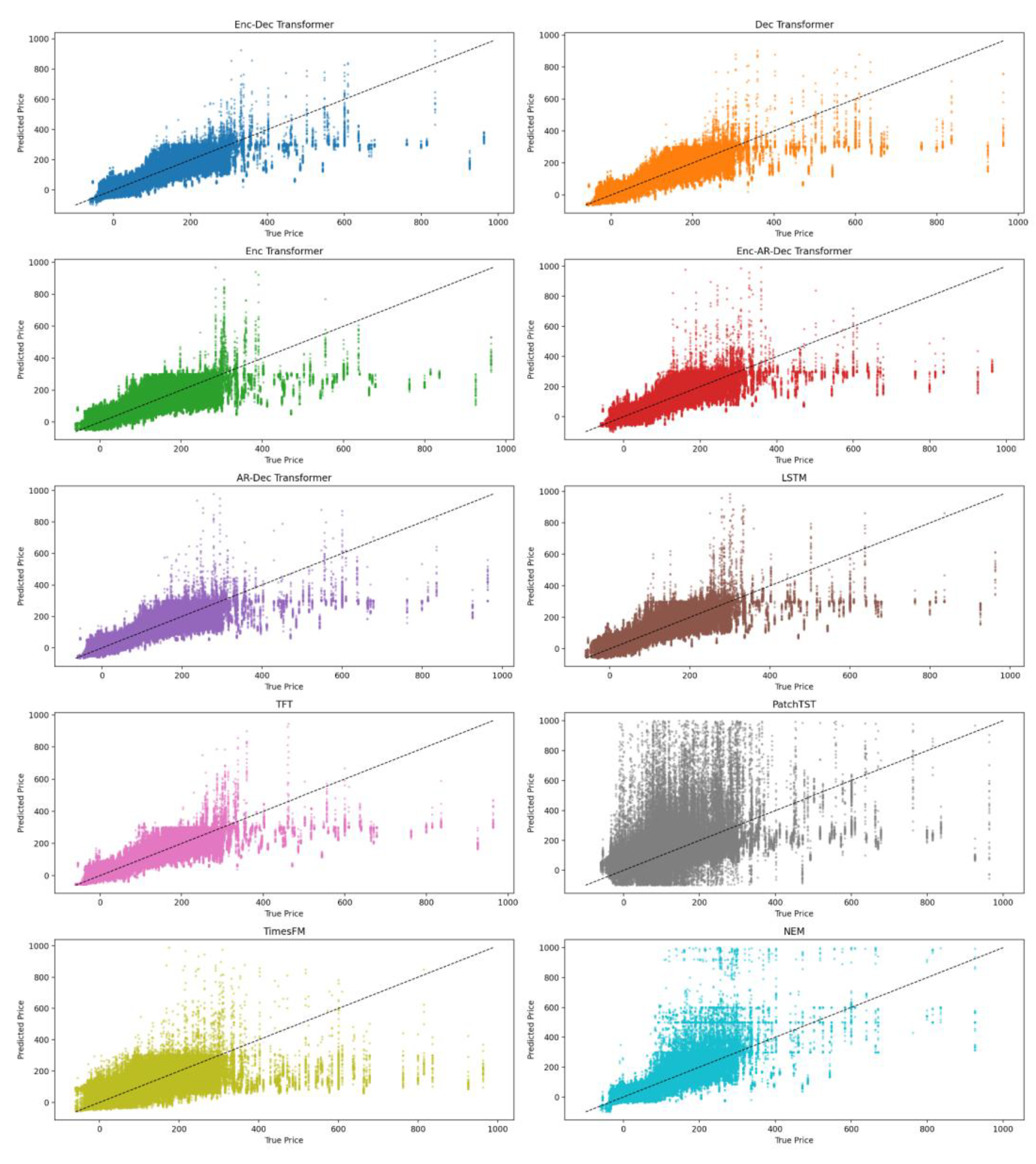

Figure 11.

Zoomed scatter plots of predicted versus true prices for all models across all horizons in the NSW1 region, with extreme price spikes truncated. The plots show how errors are distributed across price ranges: variance is wide, particularly for prices above $400/MWh, where most models revert towards the mean for these rare events.
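
The mean-reversion pattern described above can be quantified by binning forecasts on the true price and measuring the mean signed error in each band. In the sketch below only the $400/MWh threshold comes from the caption; the other band edges, the function name `bias_by_band`, and the example numbers are illustrative assumptions.

```python
import numpy as np

def bias_by_band(y_true, y_pred, edges=(-np.inf, 0, 100, 200, 400, np.inf)):
    """Mean signed error (prediction - truth) within bands of the true price ($/MWh).

    A large negative value in the top band indicates predictions pulled towards
    the mean during rare price spikes.
    """
    y_true = np.asarray(y_true, dtype=float).ravel()
    y_pred = np.asarray(y_pred, dtype=float).ravel()
    out = {}
    for lo, hi in zip(edges[:-1], edges[1:]):
        in_band = (y_true >= lo) & (y_true < hi)
        if in_band.any():                       # skip empty bands
            out[(lo, hi)] = float((y_pred[in_band] - y_true[in_band]).mean())
    return out

bands = bias_by_band([50, 80, 150, 500, 1200], [60, 85, 140, 250, 300])
print(bands[(400, np.inf)])  # -575.0
```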

Table 1.

Summary of all input features used in this study, indicating whether each variable was incorporated as a historical encoder feature, a forecast decoder feature, or both. Data sources and brief descriptions are provided for clarity.

| Feature | Historical | Forecast | Data Source | Description |
|---|---|---|---|---|
| RRP | Yes | Yes | AEMO | Regional reference price for each NEM region |
| Demand | Yes | Yes | AEMO | Total demand for each NEM region |
| Net Interchange | Yes | Yes | AEMO | Amount of energy flowing into or out of each NEM region |
| Temperature, cloud cover, humidity, wind speed | Yes | Yes | Open-Meteo | Most relevant weather conditions for the capital city of the given NEM region |
| Workday | Yes | Yes | Engineered | Binary indicator of whether the day is a workday |
| Half-hour Cos/Sin | Yes | Yes | Engineered | Cyclically encoded half-hour time slot indicating diurnal position |
| Day of Week Cos/Sin | Yes | Yes | Engineered | Cyclically encoded day of the week indicating weekly position |
| Month Cos/Sin | Yes | Yes | Engineered | Cyclically encoded month of the year indicating the annual seasonal cycle |
| Hours to delivery | No | Yes | Engineered | Hours until the forecast point |
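
The engineered features in Table 1 can be sketched with pandas as follows. Assumptions not stated in the table: timestamps are already in NEM market time, "workday" means Monday to Friday (public holidays are ignored), and "hours to delivery" counts 0.5 h per decoder step starting at the first forecast interval. The helper names are hypothetical.

```python
import numpy as np
import pandas as pd

def time_features(index):
    """Engineered calendar features (Table 1) for a half-hourly DatetimeIndex."""
    slot = np.asarray(index.hour * 2 + index.minute // 30)   # half-hour slot, 0..47
    dow = np.asarray(index.dayofweek)                        # Monday=0 .. Sunday=6
    month = np.asarray(index.month - 1)                      # 0..11
    feats = pd.DataFrame(index=index)
    feats["workday"] = (dow < 5).astype(int)                 # Mon-Fri; holidays ignored (assumption)
    for name, value, period in [("halfhour", slot, 48), ("dow", dow, 7), ("month", month, 12)]:
        angle = 2 * np.pi * value / period                   # map each cycle onto the unit circle
        feats[f"{name}_sin"] = np.sin(angle)
        feats[f"{name}_cos"] = np.cos(angle)
    return feats

def hours_to_delivery(dec_len=32, step_hours=0.5):
    """Decoder-only feature: lead time of each forecast step in hours (assumed convention)."""
    return step_hours * np.arange(1, dec_len + 1)

idx = pd.date_range("2024-01-01", periods=96, freq="30min")
feats = time_features(idx)
print(feats.shape)             # (96, 7)
lead = hours_to_delivery()     # 0.5, 1.0, ..., 16.0 h
```

Encoding each cycle as a sine/cosine pair keeps neighbouring values close across the wrap-around (23:30 next to 00:00, December next to January), which a single integer index would not.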

Table 2.

Summary of the transformer model variants evaluated in this study, including the tiny, small, medium, large, encoder-only, and decoder-only configurations. ‘layers’ is the number of repeated encoder and decoder layers; ‘heads’ is the number of attention heads in each attention block; ‘in+out len’ gives the input and output sequence lengths; ‘d_model’ is the model’s embedding dimension; ‘ff_dim’ is the hidden-layer size of the feed-forward network; and ‘dropout’ is the rate used in every dropout layer.

| Model | layers | heads | in+out len | d_model | ff_dim | dropout |
|---|---|---|---|---|---|---|
| Tiny, enc-dec | 2 | 2 | 96+32 | 64 | 256 | 0.05 |
| Small, enc-dec | 3 | 4 | 96+32 | 128 | 512 | 0.05 |
| Med, enc-dec | 3 | 4 | 96+32 | 256 | 1024 | 0.05 |
| Large, enc-dec | 4 | 8 | 96+32 | 512 | 2048 | 0.05 |
| Small, decoder only | 3 | 4 | 0+32 | 128 | 512 | 0.05 |
| Small, encoder only | 3 | 4 | 96+0 | 128 | 512 | 0.05 |
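
Table 2 can also be expressed as configuration objects, which makes it easy to check that each `d_model` divides evenly across its heads; the per-head dimension follows directly from the table (for example, 128 / 4 = 32 for the small model). The parameter estimate is a rough illustration under stated assumptions (biased Q/K/V/output projections, a two-layer FFN with biases, and two LayerNorms per encoder layer), not a figure reported by the study, and the class and field names are hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class TransformerConfig:
    """One row of Table 2 (field names are hypothetical)."""
    name: str
    layers: int
    heads: int
    in_len: int       # first term of 'in+out len'
    out_len: int      # second term of 'in+out len'
    d_model: int
    ff_dim: int
    dropout: float = 0.05

    @property
    def head_dim(self) -> int:
        if self.d_model % self.heads:
            raise ValueError("d_model must divide evenly across heads")
        return self.d_model // self.heads

    def encoder_layer_params(self) -> int:
        """Rough size of one encoder layer: biased Q/K/V/O projections,
        a two-layer FFN with biases, and two LayerNorms (scale + shift)."""
        d, f = self.d_model, self.ff_dim
        attention = 4 * (d * d + d)
        ffn = (d * f + f) + (f * d + d)
        norms = 2 * 2 * d
        return attention + ffn + norms

CONFIGS = [
    TransformerConfig("Tiny, enc-dec", 2, 2, 96, 32, 64, 256),
    TransformerConfig("Small, enc-dec", 3, 4, 96, 32, 128, 512),
    TransformerConfig("Med, enc-dec", 3, 4, 96, 32, 256, 1024),
    TransformerConfig("Large, enc-dec", 4, 8, 96, 32, 512, 2048),
    TransformerConfig("Small, decoder only", 3, 4, 0, 32, 128, 512),
    TransformerConfig("Small, encoder only", 3, 4, 96, 0, 128, 512),
]

for c in CONFIGS:
    print(f"{c.name:<20} head_dim={c.head_dim:>2}  ~{c.encoder_layer_params():,} params/encoder layer")
```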

Table 3.

Hyperparameters used for models in this study.

| Hyperparameter | Transformers & LSTM | TFT | PatchTST |
|---|---|---|---|
| Batch size | 128 | 128 | 128 |
| Input sequence length | 96 × 30-minute periods | 96 × 30-minute periods | 96 × 30-minute periods |
| Scaler | QuantileTransformer | QuantileTransformer | - |
| Output sequence length | 32 × 30-minute periods | 32 × 30-minute periods | 32 × 30-minute periods |
| Dropout | 0.05 | 0.10 | 0.10 |
| Initial learning rate | 2e-4 | 2e-4 | 1e-5 |
| Global clipnorm | 2.0 | - | - |
| Optimiser | Adam (β1=0.9, β2=0.999, ε=1e-9) | Adam | Adam (clipnorm=0.01) |
| Loss | Huber (δ=0.8) | Quantile [0.1, 0.5, 0.9] | MSE |
| Max. epochs | 20 | 20 | 20 |
| Reduce LR on plateau | Factor=0.5, Patience=2 | Factor=0.5, Patience=2 | - |
| Early stop | Patience=5, Monitor=val_loss | Patience=5, Monitor=val_loss | - |
| Patch length & stride | - | - | 24 & 6 |
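
For reference, the two training losses above and the PatchTST patch count can be written out as below. These are the standard textbook forms of the Huber and pinball (quantile) losses, not code from this study, and the function names are hypothetical. The patch count assumes no end padding, giving (96 - 24) / 6 + 1 = 13 patches; the reference PatchTST implementation can optionally pad the end of the series, which adds one more patch.

```python
import numpy as np

def huber(y_true, y_pred, delta=0.8):
    """Huber loss: quadratic for |error| <= delta, linear beyond it."""
    err = np.abs(np.asarray(y_true, float) - np.asarray(y_pred, float))
    quad = 0.5 * err ** 2
    lin = delta * (err - 0.5 * delta)
    return float(np.mean(np.where(err <= delta, quad, lin)))

def quantile_loss(y_true, y_pred_q, quantiles=(0.1, 0.5, 0.9)):
    """Pinball loss averaged over quantiles; y_pred_q has shape (..., n_quantiles)."""
    q = np.asarray(quantiles)
    diff = np.asarray(y_true, float)[..., None] - np.asarray(y_pred_q, float)
    return float(np.mean(np.maximum(q * diff, (q - 1) * diff)))

def n_patches(seq_len=96, patch_len=24, stride=6):
    """Patches produced by sliding a patch_len window with the given stride (no padding)."""
    return (seq_len - patch_len) // stride + 1

print(n_patches())  # 13
```

With δ = 0.8 on quantile-transformed targets, errors beyond 0.8 contribute only linearly, which limits the gradient pull of rare price spikes compared with a squared-error loss.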

Table 4.

NSW1 region comparative model normalised MAPE (%) results. The table reports percentage errors at fixed forecast horizons (2, 4, 8, 12, and 16 hours ahead), enabling direct comparison of short-, medium-, and long-range predictive performance. The listed transformer models are all of the “small” size.

| Model | 2h | 4h | 8h | 12h | 16h |
|---|---|---|---|---|---|
| Enc-Dec Transformer | 37.9 | 37.9 | 38.2 | 40.1 | 40.5 |
| Dec Transformer | 33.6 | 34.2 | 36.8 | 38.4 | 39.2 |
| Enc Transformer | 42.7 | 44.0 | 45.0 | 45.6 | 46.6 |
| Enc-AR-Dec Transformer | 40.8 | 42.0 | 42.7 | 43.0 | 43.0 |
| AR-Dec Transformer | 38.7 | 38.5 | 39.5 | 39.5 | 40.2 |
| LSTM | 38.2 | 40.1 | 42.0 | 42.5 | 44.4 |
| TFT | 36.3 | 37.7 | 38.4 | 39.5 | 40.8 |
| PatchTST | 59.4 | 64.7 | 66.4 | 65.0 | 63.6 |
| TimesFM | 48.3 | 53.3 | 59.4 | 60.2 | 57.8 |
| NEM | 65.6 | 82.7 | 116.0 | 109.6 | 114.6 |
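
The tables in this section do not define "normalised MAPE". One common normalisation, assumed in this sketch, divides the total absolute error by the total absolute actual price (sometimes called WAPE); it stays finite when prices approach zero and can exceed 100% when errors are larger than the prices themselves. With 30-minute intervals, an h-hour horizon corresponds to step 2h of the 32-step output, so 16 h is the final step. The function names are hypothetical.

```python
import numpy as np

HORIZONS_H = (2, 4, 8, 12, 16)   # horizons reported in Tables 4-6
STEPS_PER_HOUR = 2               # 30-minute intervals

def normalised_ape(y_true, y_pred):
    """Assumed definition: 100 * sum|y_pred - y_true| / sum|y_true|."""
    return 100.0 * np.abs(y_pred - y_true).sum() / np.abs(y_true).sum()

def horizon_scores(y_true, y_pred, horizons=HORIZONS_H):
    """y_true, y_pred: (n_windows, 32) arrays of 30-minute forecasts."""
    scores = {}
    for h in horizons:
        col = h * STEPS_PER_HOUR - 1       # 2 h -> column 3, 16 h -> column 31
        scores[h] = float(normalised_ape(y_true[:, col], y_pred[:, col]))
    return scores

# Synthetic check: error grows by 1% of the price per 30-minute step
y_true = np.full((10, 32), 100.0)
y_pred = y_true * (1 + 0.01 * np.arange(1, 33))
s = horizon_scores(y_true, y_pred)
print(s)   # approximately {2: 4.0, 4: 8.0, 8: 16.0, 12: 24.0, 16: 32.0}
```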

Table 5.

QLD1 NEM region results for all small-model architectures, showing normalised MAPE across 2-, 4-, 8-, 12-, and 16-hour forecast horizons. All models were fully retrained on QLD1 targets using the same input features and training configuration as the NSW1 experiments.

| Model | 2h | 4h | 8h | 12h | 16h |
|---|---|---|---|---|---|
| Enc-Dec Transformer | 30.0 | 31.3 | 31.7 | 32.0 | 32.7 |
| Dec Transformer | 29.2 | 30.6 | 31.2 | 31.9 | 32.2 |
| Enc Transformer | 42.2 | 43.6 | 45.3 | 45.0 | 45.1 |
| Enc-AR-Dec Transformer | 30.8 | 32.8 | 33.9 | 34.0 | 35.0 |
| AR-Dec Transformer | 31.1 | 32.3 | 33.4 | 34.2 | 35.5 |
| LSTM | 30.3 | 32.0 | 34.5 | 34.9 | 35.5 |
| TFT | 29.3 | 32.1 | 35.3 | 37.2 | 36.5 |
| PatchTST | 60.3 | 77.5 | 97.6 | 94.3 | 99.0 |
| TimesFM | 62.1 | 82.5 | 104.3 | 99.0 | 105.6 |
| NEM | 57.0 | 79.1 | 120.4 | 124.7 | 121.9 |

Table 6.

VIC1 NEM region results for all small-model architectures, showing normalised MAPE across 2-, 4-, 8-, 12-, and 16-hour forecast horizons. Each model was retrained on VIC1 targets to evaluate performance consistency and model robustness across different NEM regions.

| Model | 2h | 4h | 8h | 12h | 16h |
|---|---|---|---|---|---|
| Enc-Dec Transformer | 38.0 | 38.8 | 39.2 | 40.5 | 41.3 |
| Dec Transformer | 37.3 | 38.9 | 40.3 | 40.8 | 41.7 |
| Enc Transformer | 61.3 | 63.0 | 64.7 | 64.7 | 65.4 |
| Enc-AR-Dec Transformer | 38.7 | 41.4 | 43.2 | 43.8 | 46.0 |
| AR-Dec Transformer | 38.9 | 39.9 | 41.4 | 43.0 | 45.9 |
| LSTM | 39.7 | 42.1 | 44.1 | 44.8 | 45.2 |
| TFT | 36.3 | 37.7 | 37.7 | 39.6 | 39.6 |
| PatchTST | 82.9 | 95.1 | 105.1 | 101.6 | 107.7 |
| TimesFM | 85.2 | 100.3 | 112.3 | 107.2 | 114.5 |
| NEM | 35.7 | 41.0 | 60.3 | 59.3 | 61.4 |

Table 7.

NSW1 model-size comparison for the encoder–decoder transformer, showing normalised MAPE across 2-, 4-, 8-, 12-, and 16-hour horizons. Results indicate that the small model provides a good balance between accuracy and capacity for this dataset.

Table 8.

Diebold–Mariano test results comparing each model’s forecasts with the official NEM forecasts for the NSW1 region. A negative statistic indicates that the model’s forecast loss is lower than that of the NEM forecasts. All models show statistically significant improvements over the NEM benchmark at the 95% confidence level.

| Model | Diebold–Mariano statistic | p-value |
|---|---|---|
| Enc-Dec Transformer | -6.85 | 7.6e-12 |
| Dec Transformer | -7.13 | 1.1e-12 |
| Enc Transformer | -6.27 | 7.1e-10 |
| Enc-AR-Dec Transformer | -6.52 | 7.5e-11 |
| AR-Dec Transformer | -6.84 | 8.6e-12 |
| LSTM | -6.57 | 5.4e-11 |
| TFT | -6.90 | 5.6e-12 |
| PatchTST | -4.03 | 5.6e-5 |
| TimesFM | -4.92 | 9.0e-7 |
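
A minimal sketch of the Diebold–Mariano statistic, assuming a squared-error loss differential and a standard-normal reference distribution. The loss function, forecast horizon `h`, and any small-sample correction used for Table 8 are not stated here, so treat these choices as assumptions; the function name is hypothetical. Under this sign convention a negative statistic means the first set of forecasts has the lower loss.

```python
import math
import numpy as np

def diebold_mariano(e_model, e_bench, h=1, power=2):
    """Diebold-Mariano test of equal predictive accuracy.

    e_model, e_bench: forecast errors for the same targets.
    Loss differential d_t = |e_model|^power - |e_bench|^power (power=2: squared error).
    The long-run variance includes autocovariances up to lag h-1.
    Returns (statistic, two-sided p-value from the standard normal).
    """
    d = (np.abs(np.asarray(e_model, dtype=float)) ** power
         - np.abs(np.asarray(e_bench, dtype=float)) ** power)
    n = d.size
    dc = d - d.mean()
    lrv = dc @ dc / n
    for k in range(1, h):
        lrv += 2.0 * (dc[k:] @ dc[:-k]) / n
    if lrv <= 0:
        raise ValueError("non-positive long-run variance; try a smaller h")
    stat = d.mean() / math.sqrt(lrv / n)
    p_value = math.erfc(abs(stat) / math.sqrt(2.0))   # equals 2 * (1 - Phi(|stat|))
    return float(stat), p_value

# Synthetic example: the model's errors have half the spread of the benchmark's
rng = np.random.default_rng(0)
stat, p = diebold_mariano(rng.normal(0, 1, 500), rng.normal(0, 2, 500))
print(f"DM={stat:.2f}, p={p:.1e}")   # negative statistic, very small p-value
```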