Submitted:

03 December 2025

Posted:

05 December 2025


Abstract

Keywords:

1. Introduction

- Development of a spatiotemporal integrated meteorological feature set, incorporating lag processing, rolling window statistics, and physically coupled features, refined via Random Forest for enhanced interpretability.

- Systematic evaluation of architecture-augmentation synergy, comprehensively assessing multiple strategies under extreme imbalance conditions.

- Design of physics-constrained data augmentation, enforcing meteorological evolution laws to ensure physical plausibility.

- Establishment of a multi-dimensional evaluation system, including Recall and F1-score, to thoroughly assess minority class identification and stability.

2. Materials and Methods

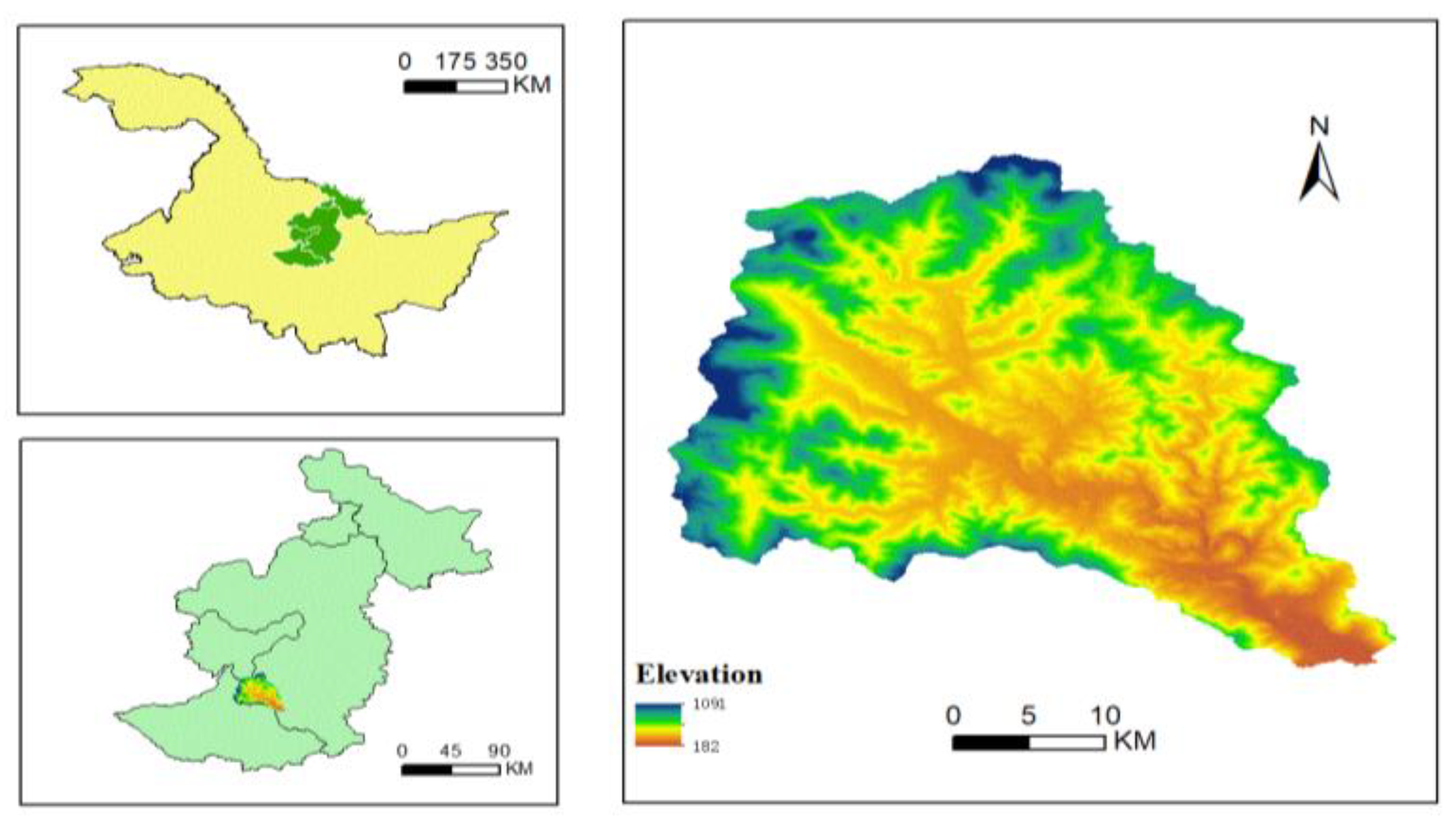

2.1. Study Area

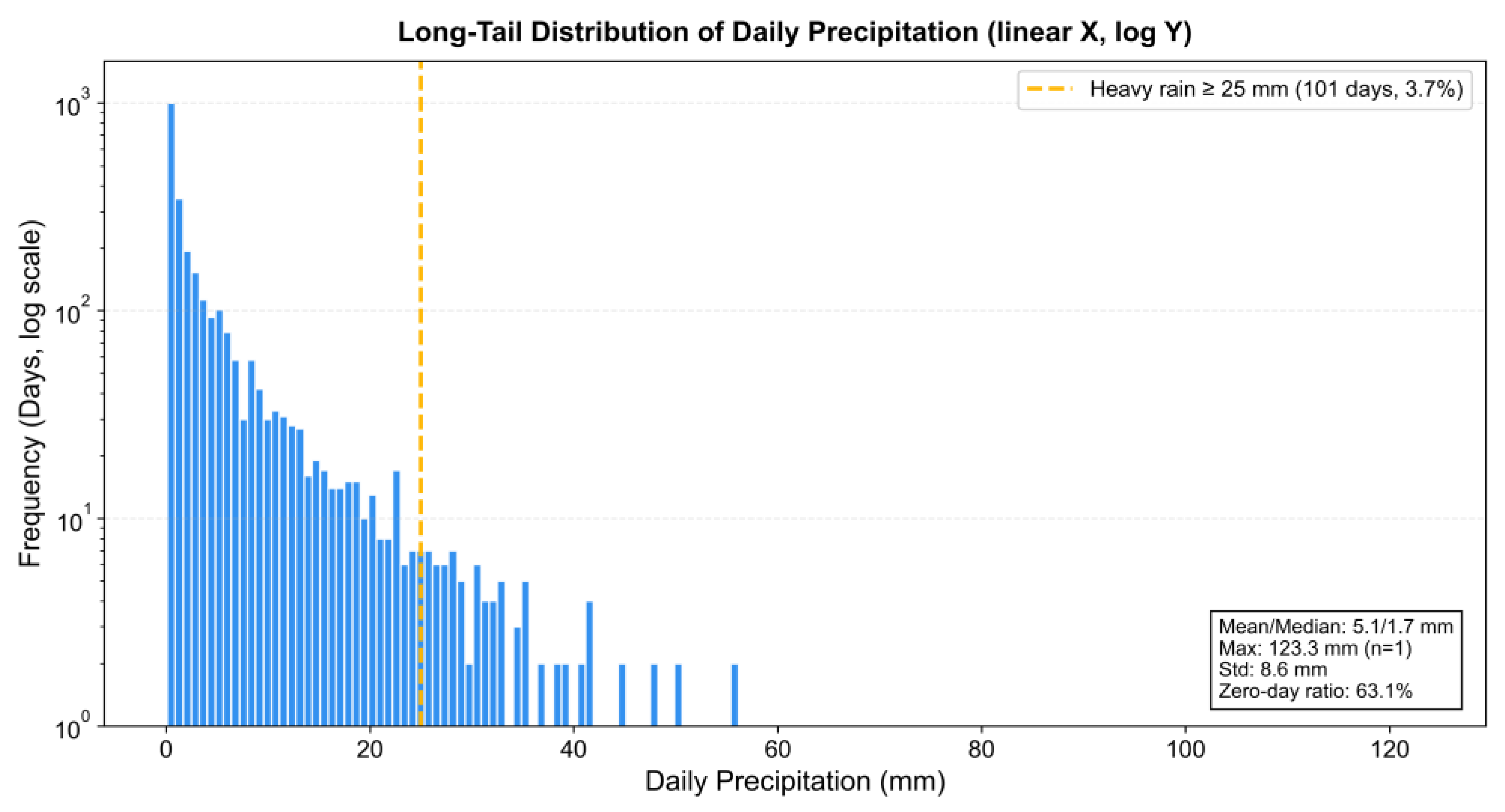

2.2. Materials

3. Methods

3.1. Feature Selection

- Standardization: The feature matrix X ∈ R^(n×d) was standardized using Z-score normalization, calculated as follows:

  $$z_{ij} = \frac{x_{ij} - \mu_j}{\sigma_j}$$

  In this equation, $x_{ij}$ is the value of the j-th feature for the i-th sample, and $\mu_j$ and $\sigma_j$ represent the mean and standard deviation of the j-th feature.

- Redundant Feature Elimination: Features exhibiting high linear correlation (|r| > 0.8) were removed, and low-variance features (σ² < 0.01) were filtered out. This step aimed to reduce inter-feature redundancy and enhance feature independence.

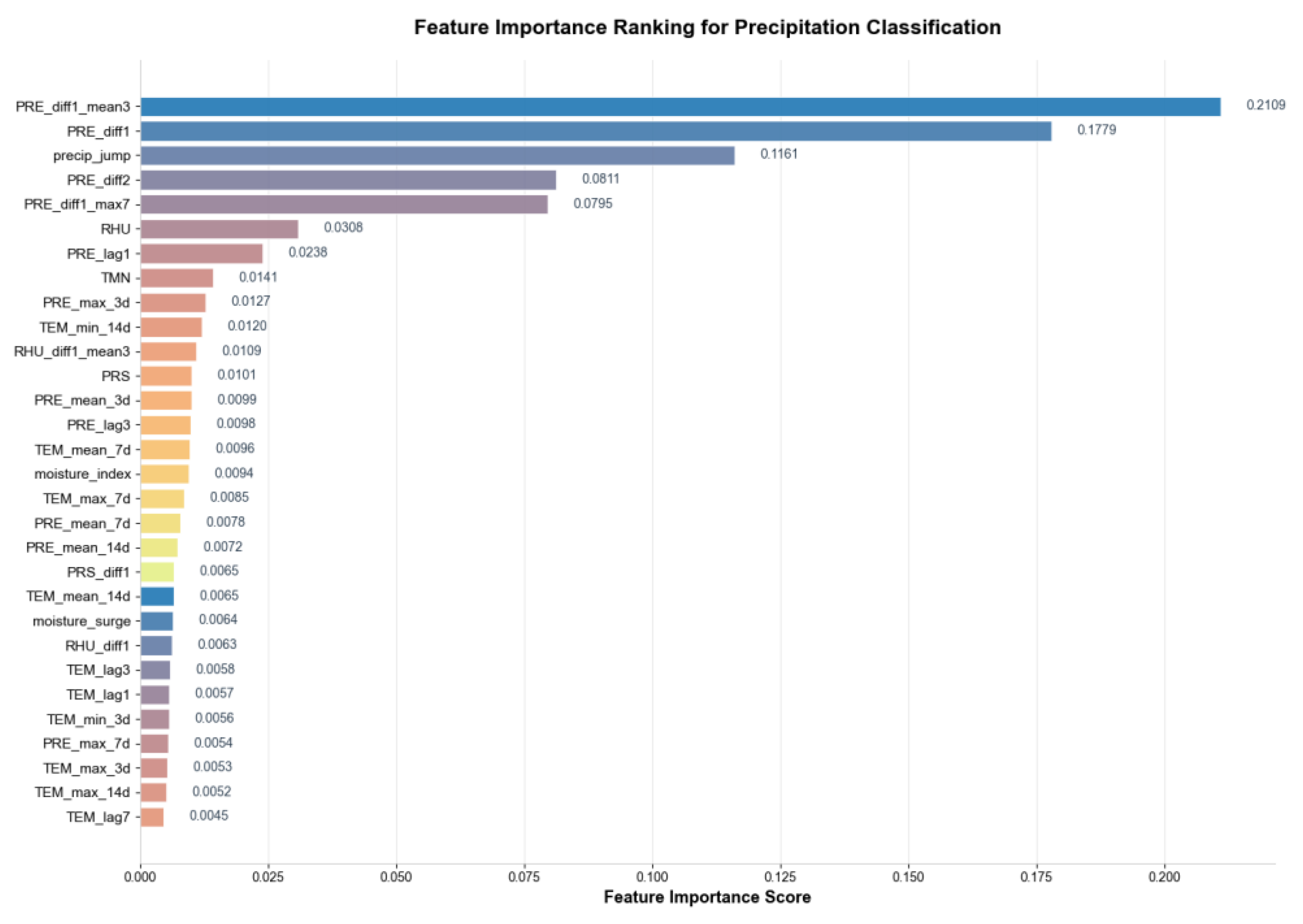

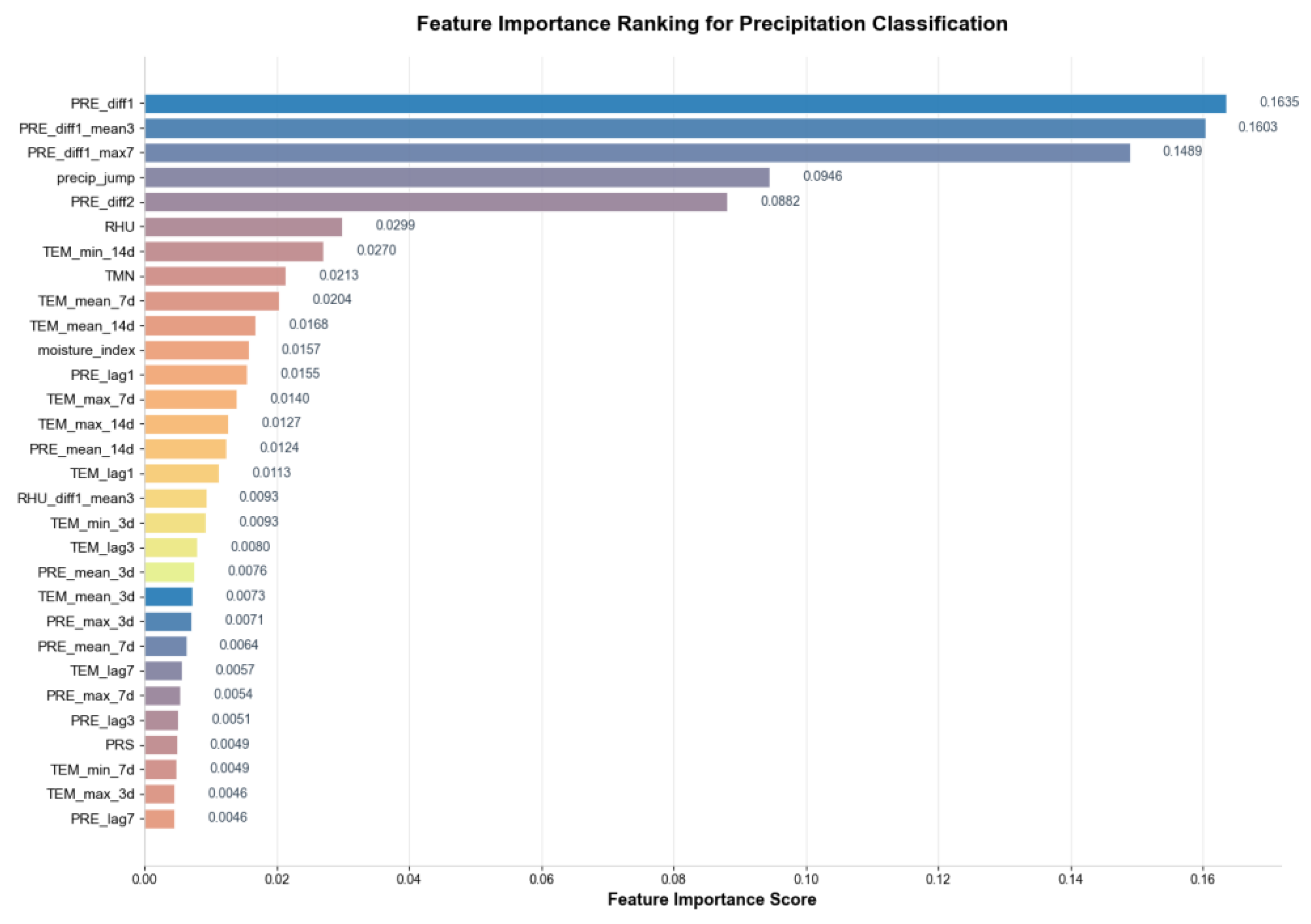

- Feature Selection: Based on the feature importance ranking derived from the Random Forest algorithm (see Figure 2), the top 30 most discriminative features were selected. This method evaluates the average contribution of a feature during node splits in the tree models, thereby measuring its impact on the classification outcome [41,42].
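The standardization and redundancy-elimination steps listed above can be sketched in a few lines of NumPy. This is a minimal illustration, not the authors' code: the function name `filter_and_standardize`, the greedy order of the correlation filter, and applying the variance threshold to the raw (pre-standardization) features are all assumptions, the last one made because every column has unit variance after Z-scoring. The Random Forest ranking step is not shown; it could follow on the returned matrix, for example by sorting the `feature_importances_` of a fitted scikit-learn `RandomForestClassifier` and keeping the top 30.

```python
import numpy as np

def filter_and_standardize(X, var_min=0.01, corr_max=0.8):
    """Return (Z, kept): the Z-scored surviving features and their column indices."""
    X = np.asarray(X, dtype=float)
    # Low-variance filter on raw features (assumption: applied before Z-scoring).
    kept = [j for j in range(X.shape[1]) if X[:, j].var() >= var_min]
    if not kept:
        return np.empty((X.shape[0], 0)), kept
    # Greedy correlation filter: keep a column only if |r| <= corr_max
    # against every column already selected.
    corr = np.atleast_2d(np.abs(np.corrcoef(X[:, kept], rowvar=False)))
    selected = []
    for a in range(len(kept)):
        if all(corr[a, b] <= corr_max for b in selected):
            selected.append(a)
    kept = [kept[a] for a in selected]
    # Z-score normalization: z_ij = (x_ij - mu_j) / sigma_j.
    Xk = X[:, kept]
    Z = (Xk - Xk.mean(axis=0)) / Xk.std(axis=0)
    return Z, kept

# Synthetic demo data (hypothetical): column 1 nearly duplicates column 0,
# and column 3 is constant.
rng = np.random.default_rng(0)
base = rng.normal(size=(200, 3))
X_demo = np.column_stack([
    base[:, 0],
    2.0 * base[:, 0] + 0.01 * rng.normal(size=200),
    base[:, 1],
    np.full(200, 5.0),
    base[:, 2],
])
Z_demo, kept_demo = filter_and_standardize(X_demo)
print(kept_demo)
```

In this demo the near-duplicate and the constant column are dropped, while the three independent columns survive and are rescaled to zero mean and unit standard deviation.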

3.2. Sample Augmentation Strategy

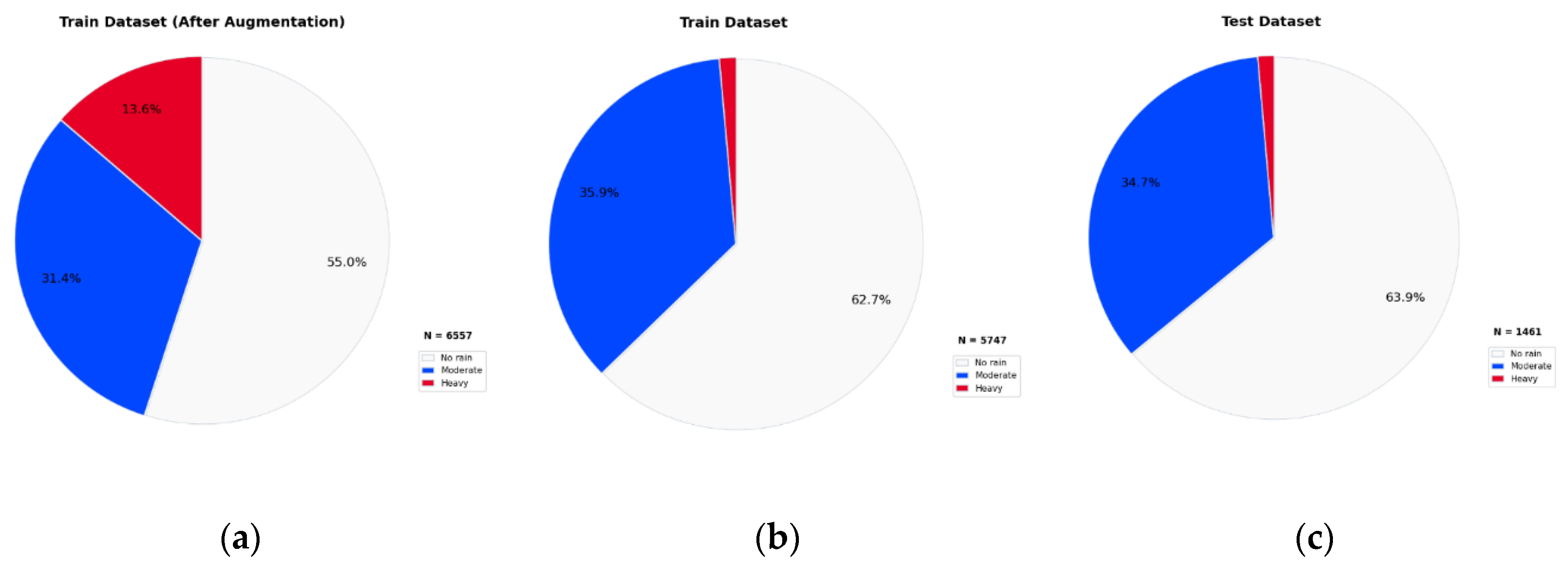

3.3. Data Partitioning Strategy

3.4. Evaluation Metrics

3.5. Threshold Decision Mechanism

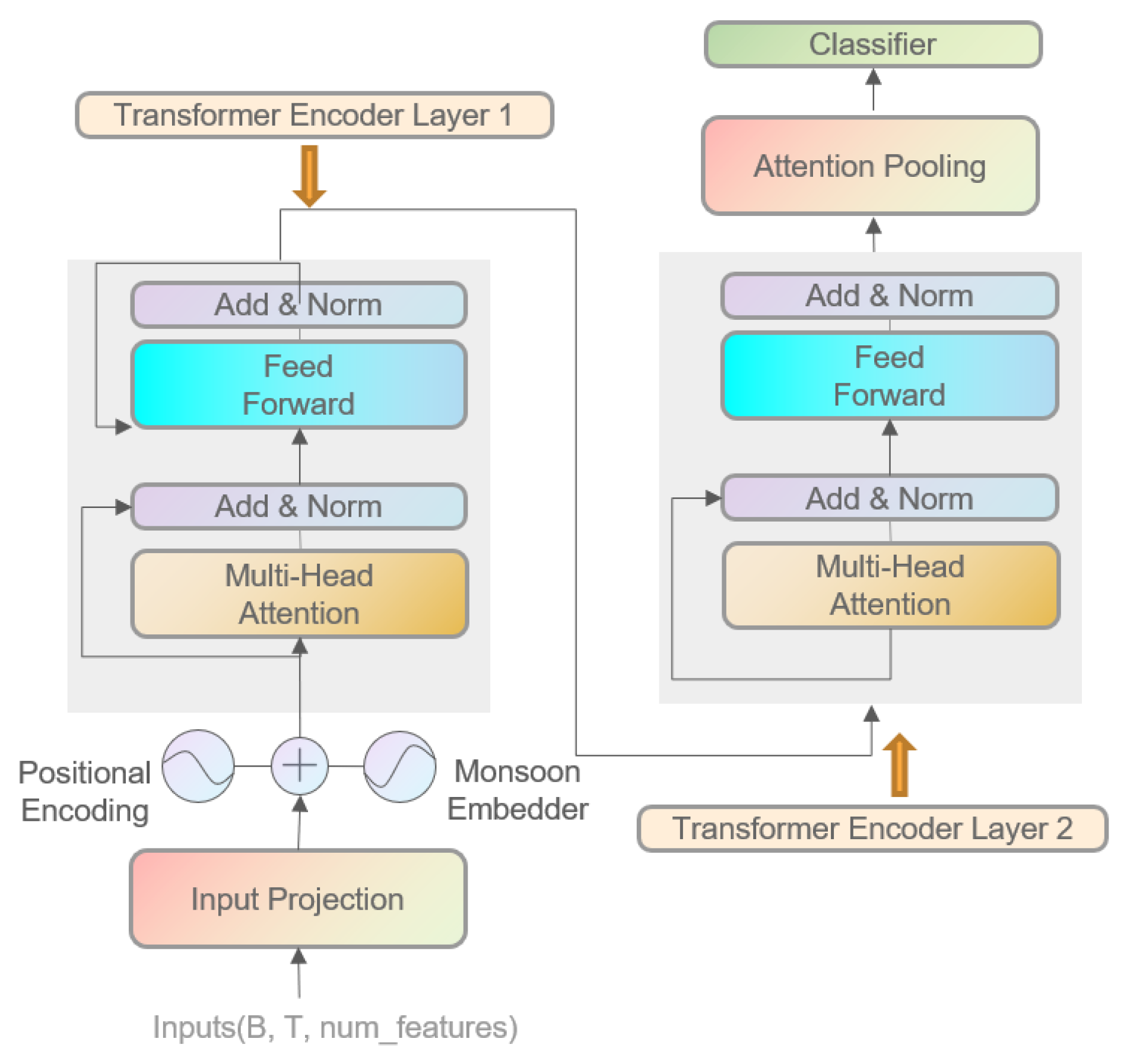

3.6. Monsoon Feature Enhancer

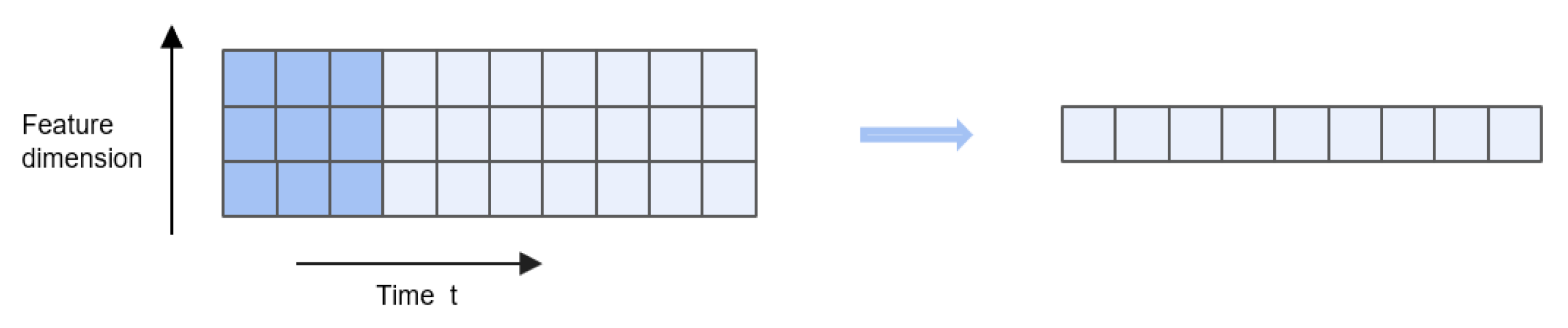

3.7. Input Windowing Strategy

4. Model Architecture

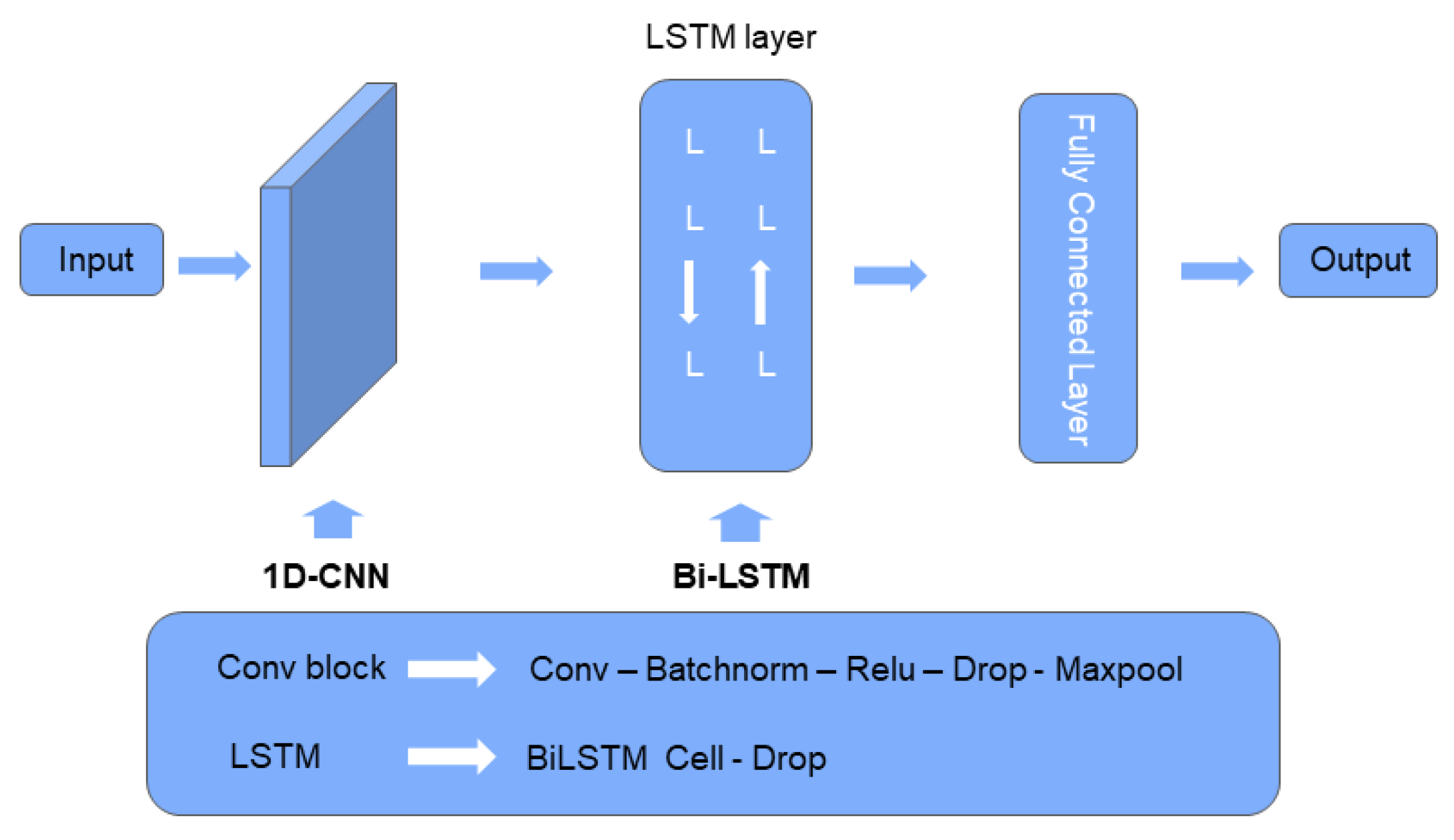

4.1. 1D-CNN

4.2. BiLSTM

4.3. 1D-CNN-BiLSTM

4.4. Improved Transformer

4.5. Training Configuration

5. Results and Discussion

5.1. The Impact of Data Augmentation Methods on Model Performance

5.1.1. Comparison of Data Augmentation under Three-Class Classification Task

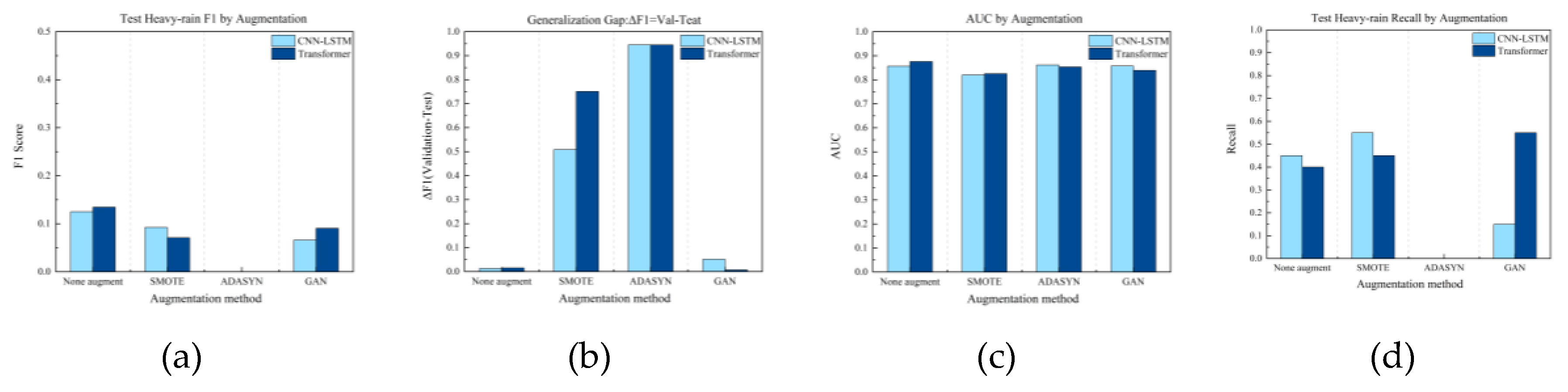

5.1.2. Comparison of Data Augmentation under Binary Classification Task

5.2. Performance Comparison between Model Architectures and Task Types

5.2.1. Comparison of Model Architectures under Three-Class Classification Task

5.2.2. Comparison of Model Architectures under Binary Classification Task

5.3. Comparison of Models with and without Feature Enhancement

5.3.1. Contribution Evaluation of Feature Engineering under Three-Class Classification Task

5.3.2. Contribution Evaluation of Feature Engineering under Binary Classification Task

5.4. Comprehensive Evaluation: Synergistic Effects and Optimal Configuration Strategy of Data Augmentation, Feature Engineering, and Model Architecture

| Experimental Combination | test_f1_heavy | ΔF1 |
|---|---|---|
| CNN-LSTM + Feature Engineering + SMOTE | 0.1869 | 0.4713 |
| CNN-LSTM + Feature Engineering + ADASYN | 0.177 | 0.3498 |
| Transformer + No Feature Engineering + No Augmentation | 0.1345 | -0.0154 |
| CNN-LSTM + No Feature Engineering + No Augmentation | 0.125 | 0.0127 |

| Experimental Combination | ΔF1 | Validation F1 | Test F1 |
|---|---|---|---|
| CNN-LSTM + Feature Engineering + Physics-Informed Augmentation | -0.0255 | 0.0767 | 0.1022 |
| Transformer + No Feature Engineering + Physics-Informed Augmentation | -0.0180 | 0.0563 | 0.0743 |
| Transformer + No Feature Engineering + No Augmentation | -0.0154 | 0.1191 | 0.1345 |
| Transformer + No Feature Engineering + GAN | -0.0067 | 0.0838 | 0.0905 |
| Transformer + Feature Engineering + Physics-Informed Augmentation | 0.0017 | 0.0517 | 0.05 |
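The ΔF1 column in the table above is numerically consistent with validation F1 minus test F1. That reading is inferred from the tabulated values, not from a stated definition, so the short check below should be taken as a sketch under that assumption. The dictionary keys abbreviate "Feature Engineering" as FE, and the helper name `delta_f1` is hypothetical.

```python
# (validation F1, test F1, reported ΔF1), copied from the table above.
ROWS = {
    "CNN-LSTM + FE + Physics-Informed": (0.0767, 0.1022, -0.0255),
    "Transformer + No FE + Physics-Informed": (0.0563, 0.0743, -0.0180),
    "Transformer + No FE + No Augmentation": (0.1191, 0.1345, -0.0154),
    "Transformer + No FE + GAN": (0.0838, 0.0905, -0.0067),
    "Transformer + FE + Physics-Informed": (0.0517, 0.05, 0.0017),
}

def delta_f1(val_f1, test_f1):
    """Validation-to-test gap, assumed to be validation F1 minus test F1."""
    return round(val_f1 - test_f1, 4)

for name, (val_f1, test_f1, reported) in ROWS.items():
    computed = delta_f1(val_f1, test_f1)
    print(f"{name}: computed {computed:+.4f}, reported {reported:+.4f}")
```

Under this reading, a negative ΔF1 means the test F1 is higher than the validation F1, and a value near zero means the two scores agree closely.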

6. Conclusions

- For Reliable Early Warnings: CNN-LSTM + Feature Engineering + Physics-informed Augmentation.

- For Peak Performance (Tolerating Risk): CNN-LSTM + Feature Engineering + SMOTE (with rigorous monitoring).

- For a Stable, Interpretable Baseline: Transformer + No Feature Engineering + No Augmentation.

Supplementary Materials

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Kundzewicz, Z. W. Climate change impacts on the hydrological cycle. Ecohydrology & Hydrobiology 2008, 8(2-4), 195–203. [Google Scholar] [CrossRef]

- Schmitt, R.W. The Ocean Component of the Global Water Cycle. Rev. Geophys. 1995, 33, 1395–1409. [Google Scholar] [CrossRef]

- Haile, A.T.; Yan, F.; Habib, E. Accuracy of the CMORPH Satellite-Rainfall Product over Lake Tana Basin in Eastern Africa. Atmos. Res. 2015, 163, 177–187. [Google Scholar] [CrossRef]

- Kim, J.; Han, H. Evaluation of the CMORPH High-Resolution Precipitation Product for Hydrological Applications over South Korea. Atmos. Res. 2021, 258, 105650. [Google Scholar] [CrossRef]

- Hirabayashi, Y.; Mahendran, R.; Koirala, S.; Konoshima, L.; Yamazaki, D.; Watanabe, S.; Kim, H.; Kanae, S. Global flood risk under climate change. Nat. Clim. Change 2013, 3, 816–821. [Google Scholar] [CrossRef]

- Arnell, N.W.; Gosling, S.N. The impacts of climate change on river flood risk at the global scale. Clim. Change 2016, 134, 387–401. [Google Scholar] [CrossRef]

- Mondal, A.; Lakshmi, V.; Hashemi, H. Intercomparison of trend analysis of multi satellite monthly precipitation products and gage measurements for river basins of India. J. Hydrol. 2018, 565, 779–790. [Google Scholar] [CrossRef]

- Dandridge, C.; Lakshmi, V.; Bolten, J.; Srinivasan, R. Evaluation of satellite-based rainfall estimates in the Lower Mekong River Basin. Remote Sens. 2019, 11, 2709. [Google Scholar] [CrossRef]

- Maviza, A.; Ahmed, F. Climate change/variability and hydrological modelling studies in Zimbabwe: A review of progress and knowledge gaps. SN Appl. Sci. 2021, 3, 549. [Google Scholar] [CrossRef]

- Herman, J.D.; Quinn, J.D.; Steinschneider, S.; Giuliani, M.; Fletcher, S. Climate adaptation as a control problem: Review and perspectives on dynamic water resources planning under uncertainty. Water Resour. Res. 2020, 56, e24389. [Google Scholar] [CrossRef]

- Siddharam Aiswarya, L.; Rajesh, G.M.; Gaddikeri, V.; Jatav, M.S.; Dimple; Rajput, J. Assessment and Development of Water Resources with Modern Technologies. In Recent Advancements in Sustainable Agricultural Practices: Harnessing Technology for Water Resources, Irrigation and Environmental Management; Springer Nature: Singapore, 2024; pp. 225–245. [Google Scholar]

- Westra, S.; Fowler, H.J.; Evans, J.P.; Alexander, L.V.; Berg, P.; Johnson, F.; Kendon, E.J.; Lenderink, G.; Roberts, N.M. Future changes to the intensity and frequency of short-duration extreme rainfall. Rev. Geophys. 2014, 52, 522–555. [Google Scholar] [CrossRef]

- Hou, A.Y.; Kakar, R.K.; Neeck, S.; Azarbarzin, A.A.; Kummerow, C.D.; Kojima, M.; Oki, R.; Nakamura, K.; Iguchi, T. The Global Precipitation Measurement Mission. Bull. Am. Meteorol. Soc. 2014, 95, 701–722. [Google Scholar] [CrossRef]

- Stagl, J.; Mayr, E.; Koch, H.; Hattermann, F.F.; Huang, S. Effects of Climate Change on the Hydrological Cycle in Central and Eastern Europe. In Managing Protected Areas in Central and Eastern Europe Under Climate Change; Rannow, S., Neubert, M., Eds.; Advances in Global Change Research; Springer: Dordrecht, The Netherlands, 2014; pp. 31–43. ISBN 978-94-007-7960-0.

- Hochreiter, S.; Schmidhuber, J. Long short-term memory. Neural Computation 1997, 9(8), 1735–1780. [Google Scholar] [CrossRef]

- Sun, A. Y.; Scanlon, B. R.; Save, H.; et al. Reconstruction of GRACE Total Water Storage Through Automated Machine Learning. Water Resources Research 2021, 57(2), e2020WR028669. [Google Scholar] [CrossRef]

- Li, W.; Gao, X.; Hao, Z.; et al. Using deep learning for precipitation forecasting based on spatio-temporal information: a case study. Clim Dyn 2022, 58, 443–457. [Google Scholar] [CrossRef]

- Young, P.; Shellswell, S. Time series analysis, forecasting and control. IEEE Transactions on Automatic Control 1972, 17(2), 281–283. [Google Scholar] [CrossRef]

- Wang, H.R.; Wang, C.; Lin, X.; et al. An improved ARIMA model for precipitation simulations. Nonlinear Processes in Geophysics 2014, 21(6), 1159–1168. [Google Scholar] [CrossRef]

- Lai, Y.; Dzombak, D. A. Use of Integrated Global Climate Model Simulations and Statistical Time Series Forecasting to Project Regional Temperature and Precipitation. Journal of Applied Meteorology and Climatology 2021, 60(5), 695–710. [Google Scholar] [CrossRef]

- Vapnik, V. N. A note on one class of perceptrons. Automat. Rem. Control 1964, 25, 821–837. [Google Scholar]

- Boser, B. E.; Guyon, I. M.; Vapnik, V. N. A training algorithm for optimal margin classifiers. In Proceedings of the fifth annual workshop on Computational learning theory, 1992, July; pp. 144–152. [Google Scholar]

- Breiman, L. Random forests. Machine learning 2001, 45(1), 5–32. [Google Scholar] [CrossRef]

- Tripathi, S.; Srinivas, V. V.; Nanjundiah, R. S. Downscaling of precipitation for climate change scenarios: a support vector machine approach. Journal of hydrology 2006, 330(3-4), 621–640. [Google Scholar] [CrossRef]

- Das, S.; Chakraborty, R.; Maitra, A. A random forest algorithm for nowcasting of intense precipitation events. Advances in Space Research 2017, 60(6), 1271–1282. [Google Scholar] [CrossRef]

- Hartigan, J.; MacNamara, S.; Leslie, L. M. Application of machine learning to attribution and prediction of seasonal precipitation and temperature trends in Canberra, Australia. Climate 2020, 8(6), 76. [Google Scholar] [CrossRef]

- Chen, T.; Guestrin, C. XGBoost: A scalable tree boosting system. In Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, August 2016; pp. 785–794.

- Tang, T.; Jiao, D.; Chen, T.; Gui, G. Medium-and long-term precipitation forecasting method based on data augmentation and machine learning algorithms. IEEE Journal of Selected Topics in Applied Earth Observations and Remote Sensing 2022, 15, 1000–1011. [Google Scholar] [CrossRef]

- Rasp, S.; Pritchard, M. S.; Gentine, P. Deep learning to represent subgrid processes in climate models. Proceedings of the national academy of sciences 2018, 115(39), 9684–9689. [Google Scholar] [CrossRef]

- Shi, X.; Chen, Z.; Wang, H.; Yeung, D. Y.; Wong, W. K.; Woo, W. C. Convolutional LSTM network: A machine learning approach for precipitation nowcasting. Advances in neural information processing systems 2015, 28. [Google Scholar]

- Adibfar, A.; Davani, H. PrecipNet: A transformer-based downscaling framework for improved precipitation prediction in San Diego County. Journal of Hydrology: Regional Studies 2025, 62, 102738. [Google Scholar] [CrossRef]

- Liu, Z.; Lin, Y.; Cao, Y.; Hu, H.; Wei, Y.; Zhang, Z.; ...; Guo, B. Swin transformer: Hierarchical vision transformer using shifted windows. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2021; pp. 10012–10022.

- Xu, L.; Qin, J.; Sun, D.; Liao, Y.; Zheng, J. Pfformer: A time-series forecasting model for short-term precipitation forecasting. IEEE Access 2024.

- Zhang, K.; Zhang, G.; Wang, X. TransMambaCNN: A Spatiotemporal Transformer Network Fusing State-Space Models and CNNs for Short-Term Precipitation Forecasting. Remote Sensing 2025, 17(18), 3200. [Google Scholar] [CrossRef]

- Bhattacharya, S.; et al. Antlion re-sampling based deep neural network model for classification of imbalanced multimodal stroke dataset. Multimedia Tools and Applications 2022, 81, 41429–41453. [Google Scholar]

- Sit, M.; Demiray, B. Z.; Demir, I. A systematic review of deep learning applications in streamflow data augmentation and forecasting. 2022. [Google Scholar] [CrossRef]

- Sui, Y.; Jiang, D.; Tian, Z. Latest update of the climatology and changes in the seasonal distribution of precipitation over China. Theoretical and applied climatology 2013, 113(3), 599–610. [Google Scholar] [CrossRef]

- Lee, C.-E.; Kim, S. U. Applicability of Zero-Inflated Models to Fit the Torrential Rainfall Count Data with Extra Zeros in South Korea. Water 2017, 9(2), 123. [Google Scholar] [CrossRef]

- Lee, C. E.; et al. Applicability of Zero-Inflated Models to Fit the Torrential Rainfall Data. Water 2017, 9(2), 123. [Google Scholar] [CrossRef]

- Guyon, I.; Elisseeff, A. An introduction to variable and feature selection. Journal of Machine Learning Research 2003, 3, 1157–1182. [Google Scholar]

- Breiman, L. Random forests. Machine Learning 2001, 45, 5–32. [Google Scholar] [CrossRef]

- Rodriguez-Galiano, V. F.; et al. An assessment of the effectiveness of a random forest classifier for land-cover classification. Remote Sensing of Environment 2012, 123, 37–50. [Google Scholar] [CrossRef]

- Chawla, N. V.; Bowyer, K. W.; Hall, L. O.; Kegelmeyer, W. P. SMOTE: Synthetic Minority Over-sampling Technique. Journal of Artificial Intelligence Research 2002, 16, 321–357. [Google Scholar] [CrossRef]

- Lv, Y.; et al. Precipitation Retrieval from FY-3G/MWRI-RM Based on SMOTE-LGBM. Atmosphere 2024, 15(11), 1268. [Google Scholar] [CrossRef]

- Ye, Y.; Li, Y.; Ouyang, R.; Zhang, Z.; Tang, Y.; Bai, S. Improving machine learning based phase and hardness prediction of high-entropy alloys by using Gaussian noise augmented data. Computational Materials Science 2023, 223, 112140. [Google Scholar] [CrossRef]

- He, H.; Bai, Y.; Garcia, E.A.; Li, S. ADASYN: Adaptive Synthetic Sampling Approach for Imbalanced Learning. In Proceedings of the IEEE International Joint Conference on Neural Networks (IJCNN), 2008; pp. 1322–1328.

- Han, H.; Liu, W. Improving Rainfall Prediction Using Adaptive Synthetic Sampling and LSTM Network. Atmosphere 2023, 14(6), 932. [Google Scholar]

- Lv, Y.; et al. Precipitation Retrieval from FY-3G/MWRI-RM Based on SMOTE-LGBM. Atmosphere 2024, 15(11), 1268. [Google Scholar] [CrossRef]

- Karpatne, A.; Watkins, W.; Read, J.; Kumar, V. Physics-guided neural networks (PGNN): Incorporating scientific knowledge into deep learning models. IEEE Transactions on Knowledge and Data Engineering 2017, 29(9), 2351–2365. [Google Scholar]

- Shen, W.; Chen, S.; Xu, J.; et al. Enhancing Extreme Precipitation Forecasts through Machine Learning Quality Control of Precipitable Water Data from FengYun-2E. Remote Sensing 2024, 16, 3104. [Google Scholar] [CrossRef]

- Yoon, J.; Jarrett, D.; van der Schaar, M. Time-series Generative Adversarial Networks. Advances in Neural Information Processing Systems (NeurIPS) 2019, 32.

- Wang, Y.; Zhai, H.; Cao, X.; Geng, X. A Novel Accident Duration Prediction Method Based on a Conditional Table Generative Adversarial Network and Transformer. Sustainability 2024, 16(16), 6821. [Google Scholar] [CrossRef]

- Goodfellow, I.; et al. Generative Adversarial Nets. Advances in Neural Information Processing Systems (NeurIPS) 2014, 27.

- Chen, S.; Xu, X.; Zhang, Y.; Shao, D.; Zhang, S.; Zeng, M. Two-stream convolutional LSTM for precipitation nowcasting. Neural Computing & Applications 2022, 34, 13281–13290. [Google Scholar] [CrossRef]

- Shen, W.; Chen, S.; Xu, J.; Zhang, Y.; Liang, X.; Zhang, Y. Enhancing Extreme Precipitation Forecasts through Machine Learning Quality Control of Precipitable Water Data. Remote Sensing 2024, 16, 3104. [Google Scholar] [CrossRef]

- Wang, G.; Feng, Y.; Dai, Y.; Chen, Z.; Wu, Y. Optimization Design of a Windshield for a Container Ship Based on Support Vector Regression Surrogate Model. Ocean Engineering 2024, 313, 119405. [Google Scholar] [CrossRef]

- Hyndman, R. J.; Athanasopoulos, G. Forecasting: Principles and Practice, 2nd ed.; OTexts: Melbourne, Australia, 2018. [Google Scholar]

- Kaufman, S.; Rosset, S.; Perlich, C.; Stitelman, O. Leakage in data mining: Formulation, detection, and avoidance. ACM Transactions on Knowledge Discovery from Data (TKDD) 2012, 6(4), 15. [Google Scholar] [CrossRef]

- Cerqueira, V.; Torgo, L.; Mozetič, I. Evaluating time series forecasting models: An empirical study on performance estimation methods. Machine Learning 2020, 109(11), 1997–2028. [Google Scholar] [CrossRef]

- Provost, F.; Fawcett, T. Robust classification for imprecise environments. Machine Learning 2001, 42(3), 203–231. [Google Scholar] [CrossRef]

- Shen, W.; Chen, S.; Xu, J.; Zhang, Y.; Liang, X.; Zhang, Y. Enhancing Extreme Precipitation Forecasts through Machine Learning Quality Control of Precipitable Water Data. Remote Sensing 2024, 16, 3104. [Google Scholar] [CrossRef]

- Li, W.; Gao, X.; Hao, Z.; Sun, R. Using deep learning for precipitation forecasting based on spatio-temporal information: a case study. Climate Dynamics 2022, 58(1), 443–457. [Google Scholar] [CrossRef]

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A. N.; Polosukhin, I. Attention is all you need. Advances in neural information processing systems 2017, 30. [Google Scholar]

| Fold | Training Year Range | Validation Year Range | Data Coverage |
|---|---|---|---|
| Fold 1 | 1980–1989 | 1990–1992 | Approx. 65% |
| Fold 2 | 1980–1991 | 1992–1994 | Approx. 70% |
| Fold 3 | 1980–1993 | 1994–1995 | Approx. 75% |
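The fold table above describes an expanding-window split in which the training period grows while the validation period always follows it in time. A minimal sketch of how such folds could be turned into index sets is shown below. The function name `expanding_year_folds`, the treatment of the year ranges as inclusive, and the one-sample-per-year demo data are assumptions made for illustration only.

```python
# (training year range, validation year range) for each fold, from the table above.
FOLDS = [
    ((1980, 1989), (1990, 1992)),
    ((1980, 1991), (1992, 1994)),
    ((1980, 1993), (1994, 1995)),
]

def expanding_year_folds(years, folds):
    """Return [(train_idx, val_idx), ...] by matching each sample's year
    against the inclusive year ranges of every fold."""
    splits = []
    for (t0, t1), (v0, v1) in folds:
        train_idx = [i for i, y in enumerate(years) if t0 <= y <= t1]
        val_idx = [i for i, y in enumerate(years) if v0 <= y <= v1]
        splits.append((train_idx, val_idx))
    return splits

# Hypothetical demo: one sample per calendar year, 1980 through 1995.
demo_years = list(range(1980, 1996))
demo_splits = expanding_year_folds(demo_years, FOLDS)
for k, (train_idx, val_idx) in enumerate(demo_splits, start=1):
    print(f"Fold {k}: {len(train_idx)} training years, {len(val_idx)} validation years")
```

Splitting by year rather than by random sampling keeps every validation sample later than all training samples within a fold, which avoids leaking future information into training.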

| model | augment | test_f1_heavy | delta_f1 | test_recall_heavy | test_auc_score |
|---|---|---|---|---|---|
| CNN-LSTM | None | 0.1053 | 0.0241 | 0.15 | 0.6412 |
| CNN-LSTM | SMOTE | 0.1186 | 0.0187 | 0.1825 | 0.6578 |
| CNN-LSTM | ADASYN | 0.0954 | 0.0304 | 0.1427 | 0.6364 |
| CNN-LSTM | GAN | 0.1053 | 0.0241 | 0.2488 | 0.6451 |
| Transformer | None | 0.087 | 0.0373 | 0.75 | 0.6289 |
| Transformer | ADASYN | 0 | 0.3035 | 0 | 0.6263 |
| Transformer | SMOTE | 0.125 | 0.0476 | 0.35 | 0.5978 |
| Transformer | GAN | 0.0345 | 0.0181 | 0.1 | 0.636 |

| model | augment | test_f1_heavy | delta_f1 | test_recall_heavy | test_auc_score |
|---|---|---|---|---|---|
| CNN-LSTM | None | 0.125 | 0.0127 | 0.45 | 0.8563 |
| CNN-LSTM | SMOTE | 0.0924 | 0.5082 | 0.55 | 0.8198 |
| CNN-LSTM | ADASYN | 0 | 0.9448 | 0 | 0.8614 |
| CNN-LSTM | GAN | 0.0659 | 0.0513 | 0.15 | 0.8573 |
| Transformer | None | 0.1345 | 0.0154 | 0.4 | 0.8755 |
| Transformer | ADASYN | 0 | 0.9448 | 0 | 0.8532 |
| Transformer | SMOTE | 0.0709 | 0.7505 | 0.45 | 0.8253 |
| Transformer | GAN | 0.0905 | 0.0067 | 0.55 | 0.8391 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).