Submitted: 28 November 2025

Posted: 01 December 2025

Abstract

Keywords:

1. Introduction

1.1. Problem Statement and Hypotheses

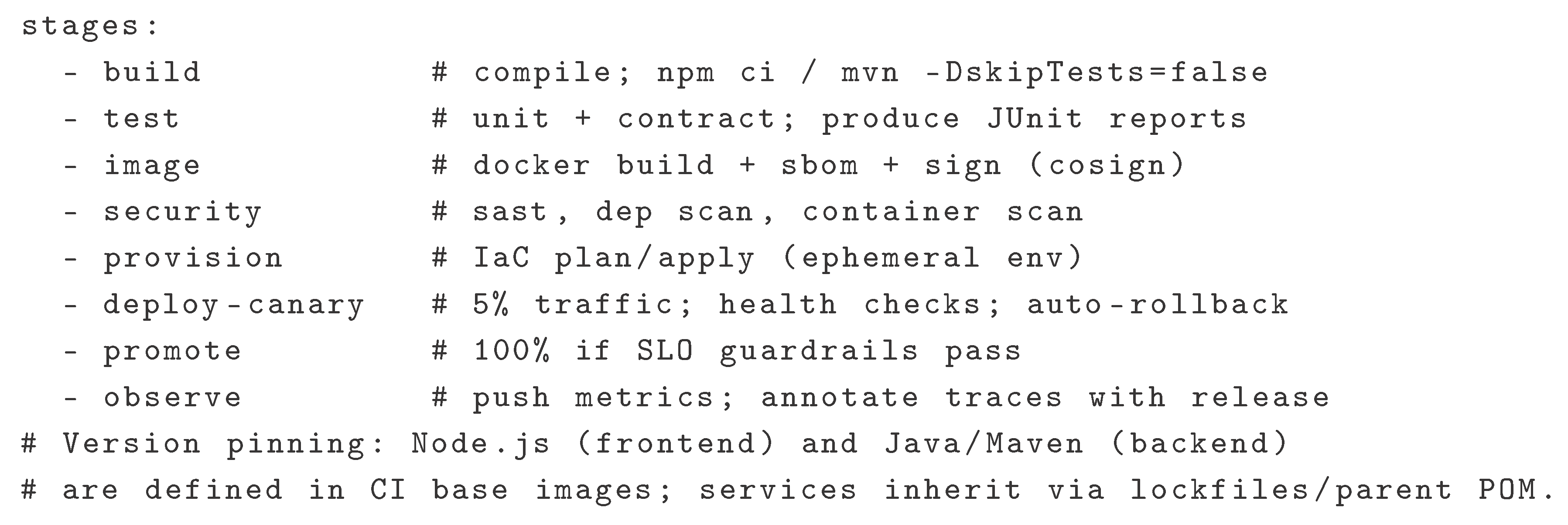

- H1: Introducing pinned runtime/tool versions for frontend (Node.js) and backend (Java/Maven) reduces build failure rate and shortens mean lead time for changes.

- H2: Progressive delivery (blue–green or canary) with automatic rollback lowers change failure rate without degrading deployment frequency.

- H3: Explicit SLOs with error budgets improve MTTR by prioritizing remediation over feature work when budgets are exhausted.

1.2. Background and Related Work

1.3. Industry Setting (Observed Practice)

2. Methods

2.1. AI-Assisted Writing

2.2. Objective and Research Questions

- Which concrete DevOps practices are required to operate microservices reliably on self-managed infrastructure (Docker + Kubernetes)?

- How do these practices map to delivery and reliability metrics (deployment frequency, lead time, change failure rate, MTTR; SLIs/SLOs)?

- What are the trade-offs compared to using managed cloud services, especially for storage and control-plane availability?

2.3. Scope and Assumptions

2.4. Engineering Baseline (Production Development Stack)

2.5. Metrics and Data Collection

2.6. Synthetic Data Generation (Reproducibility)

2.7. Metric Operationalization

2.8. Comparison Design (On-Premises vs Cloud-Managed)

| Practice | On-Premises Responsibility & Risk | Cloud-Managed Responsibility & Risk | Expected Metric Effect |
|---|---|---|---|
| Runtime Version Pinning (Node, Java/Maven) | Team maintains CI images; risk of drift across services | Provider images reduce drift; still need explicit pinning in CI | Lead time ↓; change failure rate ↓ |
| Container Orchestration (Kubernetes) | HA control plane, etcd quorum, upgrades on team | Control plane partly managed; team focuses on workloads | MTTR ↓; deployment frequency ↑ if upgrades are streamlined |
| Progressive Delivery (Blue–Green/Canary) | Ingress/routing, health checks, rollback logic on team | Managed traffic-splitting available in some clouds | Change failure rate ↓; MTTR ↓ |
| Storage Durability/Backups | Replication & DR runbooks on team; risk of backup gaps | Provider durability targets; team defines RPO/RTO | Incident impact ↓; SLO attainment ↑ |
| Observability (Logs/Metrics/Traces) | Stack design, retention, cost control on team | Managed backends ease ops; vendor lock-in risk | MTTR ↓ via faster detection/triage |

2.9. SLO Template and Error Budgets

2.10. Reference CI/CD Stages (Abstract)

2.11. Threats to Validity (Expanded)

2.12. Language Runtime Versioning (Frontend/Backend)

3. Results

3.1. Setup

3.2. Delivery Performance (DORA)

- Deployment Frequency: from 1.9 to 3.5 deploys/service/week.

- Lead Time for Changes: from 65 h (IQR 42–96) to 22 h (16–34).

- Change Failure Rate: from 14.7% to 6.2%.

- MTTR: from 75 min (52–118) to 28 min (20–46).
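As an illustration of how figures of this kind can be derived from raw delivery records, the following minimal Python sketch computes the four measures from a list of deployment events. The record layout (`Deployment`), the field names, and the choice to measure restore time from the deployment timestamp are assumptions made for this example; they are not taken from the study's tooling.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median, quantiles
from typing import Optional


@dataclass
class Deployment:
    """One production deployment (hypothetical record layout)."""
    service: str
    committed_at: datetime                  # first commit of the change
    deployed_at: datetime                   # change reached production
    failed: bool = False                    # caused a degradation or rollback
    restored_at: Optional[datetime] = None  # set only for failed deployments


def dora_summary(deployments, weeks):
    """Summarize the four key metrics over an observation window."""
    if len(deployments) < 2:
        raise ValueError("need at least two deployments to compute quartiles")

    services = {d.service for d in deployments}
    lead_h = [(d.deployed_at - d.committed_at).total_seconds() / 3600
              for d in deployments]
    # Assumption: restore time is measured from the failing deployment.
    restore_min = [(d.restored_at - d.deployed_at).total_seconds() / 60
                   for d in deployments if d.failed and d.restored_at]
    q1, _, q3 = quantiles(lead_h, n=4)

    return {
        "deploys_per_service_per_week": len(deployments) / len(services) / weeks,
        "lead_time_median_h": median(lead_h),
        "lead_time_iqr_h": (q1, q3),
        "change_failure_rate": sum(d.failed for d in deployments) / len(deployments),
        "mttr_median_min": median(restore_min) if restore_min else None,
    }


if __name__ == "__main__":
    t0 = datetime(2025, 1, 6, 9, 0)
    sample = [
        Deployment("billing", t0, t0 + timedelta(hours=12)),
        Deployment("billing", t0, t0 + timedelta(hours=30), failed=True,
                   restored_at=t0 + timedelta(hours=30, minutes=25)),
        Deployment("portal", t0, t0 + timedelta(hours=18)),
    ]
    print(dora_summary(sample, weeks=1))
```

Medians and quartiles are used here because the reported values are accompanied by interquartile ranges; a mean-based variant would be equally easy to substitute.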

3.3. Reliability and SLO Attainment

- Availability SLO (99.9%): baseline 5/8 services met; post-adoption 7/8 met. One service exhausted its error budget after a canary overshoot; freeze and rollback restored SLOs within 24 h.

- Latency (p95 < 300 ms): baseline 6/8 met; post-adoption 7/8 met. The lagging service improved after cache warm-up changes in canary.
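To make the error-budget arithmetic behind the availability target concrete, the sketch below converts an SLO into allowed downtime and reports how much of the budget remains. The 30-day window and the function names are assumptions for illustration; the source does not state the evaluation window.

```python
def error_budget_minutes(slo: float, window_days: int = 30) -> float:
    """Allowed downtime in minutes for an availability SLO over the window."""
    return (1.0 - slo) * window_days * 24 * 60


def budget_remaining(slo: float, downtime_minutes: float,
                     window_days: int = 30) -> float:
    """Fraction of the error budget still unspent (negative if overspent)."""
    budget = error_budget_minutes(slo, window_days)
    return (budget - downtime_minutes) / budget


def should_freeze(slo: float, downtime_minutes: float,
                  window_days: int = 30) -> bool:
    """Freeze feature releases once the budget is exhausted."""
    return budget_remaining(slo, downtime_minutes, window_days) <= 0.0


if __name__ == "__main__":
    # A 99.9% target over an assumed 30-day window allows about 43.2 minutes.
    print(round(error_budget_minutes(0.999), 1))
    print(should_freeze(0.999, downtime_minutes=50.0))
```

Under these assumptions, a single incident of roughly three quarters of an hour is enough to exhaust a 99.9% monthly budget, which is consistent with one service triggering a freeze after a canary overshoot.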

3.4. Operational Effects (On-Prem vs Managed Primitives)

- Artifact/static delivery: pipeline failures attributable to registry/object-storage outages decreased from 9 to 2 across the window; restore drills were approximately 32% shorter after static assets were migrated to managed object storage, which relies on provider durability targets and left the CI/CD pipeline unchanged.

- Cluster health: no control-plane quorum losses; rolling upgrades completed without downtime using surge and Pod Disruption Budgets (PDBs). Mean rollback time (trigger → stable state) was 6 min (canary) vs 18 min (blue–green).
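The rollback trigger for a canary can be thought of as a small decision function over canary and baseline traffic statistics. The following sketch is one possible formulation, not the gate used in the study: the `WindowStats` type, the minimum sample size, and the error-ratio threshold are hypothetical, while the 300 ms p95 limit and the 0.1% error floor mirror the latency and availability targets reported above.

```python
from dataclasses import dataclass


@dataclass
class WindowStats:
    """Traffic statistics for one observation window (hypothetical type)."""
    requests: int
    errors: int
    p95_latency_ms: float

    @property
    def error_rate(self) -> float:
        return self.errors / self.requests if self.requests else 0.0


def canary_decision(baseline: WindowStats, canary: WindowStats,
                    max_error_ratio: float = 2.0,
                    latency_limit_ms: float = 300.0,
                    min_requests: int = 500) -> str:
    """Return 'hold', 'rollback', or 'promote' for the current canary step."""
    # Too little traffic to judge: keep the current traffic split.
    if canary.requests < min_requests:
        return "hold"
    # Latency objective violated by the canary.
    if canary.p95_latency_ms > latency_limit_ms:
        return "rollback"
    # Allow the canary up to max_error_ratio times the baseline error rate,
    # but never less than the 0.1% implied by a 99.9% availability target.
    allowed = max(baseline.error_rate * max_error_ratio, 0.001)
    if canary.error_rate > allowed:
        return "rollback"
    return "promote"


if __name__ == "__main__":
    stable = WindowStats(requests=10_000, errors=5, p95_latency_ms=210.0)
    candidate = WindowStats(requests=1_000, errors=20, p95_latency_ms=240.0)
    print(canary_decision(stable, candidate))
```

Because only a fraction of traffic is exposed before the gate fires, a decision of this kind can be taken early, which is one plausible reason canary rollbacks complete faster than a full blue–green switch.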

3.5. Ablation Analysis (Which Practice Moved Which Metric?)

- Version pinning only: build breaks caused by toolchain drift fell by ≈70%; median lead time improved by 18–24 h due to reproducible builds and fewer flaky tests.

- Progressive delivery only: change failure rate (CFR) dropped by 30–45% via earlier detection and a smaller blast radius; canary achieved a faster mean rollback than blue–green.

- SLO/error-budget gating only: MTTR decreased by 25–40% through clearer paging/runbooks and freeze rules; deployment frequency (DF) dipped slightly during freezes but recovered in the next iteration.

- Managed object storage only: no direct DF effect; fewer restore drills and lower incident impact on static assets.
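Toolchain drift of the kind addressed by version pinning can be caught with a simple pre-build check that rejects version ranges. The sketch below is illustrative only: the `unpinned` helper, the strict `MAJOR.MINOR.PATCH` rule, and the example version strings are assumptions, and a real pipeline would read these values from its own CI image definitions, lockfiles, or parent POM.

```python
import re

# Assumption: a tool counts as pinned only when given as exact MAJOR.MINOR.PATCH.
EXACT_VERSION = re.compile(r"\d+\.\d+\.\d+")


def unpinned(tool_versions: dict) -> list:
    """Return the names of tools whose version spec is a range or is missing."""
    return sorted(
        name for name, spec in tool_versions.items()
        if not EXACT_VERSION.fullmatch((spec or "").strip())
    )


if __name__ == "__main__":
    # Illustrative values only; not the versions used in the study.
    declared = {"node": "20.11.1", "java": "21.0.2", "maven": "^3.9"}
    drifting = unpinned(declared)
    if drifting:
        print("refusing to build, unpinned tools:", ", ".join(drifting))
```

Failing the build early on a range specifier such as `^3.9` or `latest` keeps the drift out of the artifact rather than discovering it later as a flaky test.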

3.6. Incident Narratives (Simulated)

3.7. Operational Interpretation (Synthetic)

4. Discussion

H1 (version pinning).

H2 (progressive delivery).

H3 (SLOs & error budgets).

4.1. Implications for Mid-Size Telecom/Fintech Organizations

4.2. On-Prem vs Managed: Risk and Economics

4.3. Relation to Prior Guidance

4.4. Limitations (Expanded)

5. Conclusions

- increases throughput (more deployments per week, shorter lead time) by stabilizing toolchains and automating progressive rollout;

- improves stability (CFR, MTTR) by shrinking blast radius and prioritizing remediation under explicit budgets;

- decouples durability risk for static assets via managed object storage while keeping core workloads on hardened on-prem Kubernetes.

- Pin Node.js/Java/Maven via CI base images, lockfiles, and parent POM; rebuild tags to verify reproducibility (compare SBOM hashes).

- Enforce PDBs and surge rollouts; adopt canary by default; keep blue–green runbooks for data-breaking changes.

- Define SLIs/SLOs and institute budget policies (freeze, rollback, paging rules); annotate deployments in logs/traces.

- Externalize static assets to managed object storage; keep stateful stores and control plane HA on-prem with tested DR.

- Add admission policies (policy-as-code) to prevent drift (e.g., required labels, image provenance, resource requests/limits).
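The admission-policy recommendation can be illustrated with a small validator over a Deployment manifest. This is a plain-Python stand-in written for this example, not the rule language of any particular policy engine; the required label names and the use of a `@sha256:` digest as a proxy for image provenance are assumptions.

```python
# Hypothetical policy: label names are placeholders chosen for this sketch.
REQUIRED_LABELS = ("app", "team")


def violations(manifest: dict) -> list:
    """List policy violations for a Kubernetes Deployment given as a dict."""
    problems = []

    labels = manifest.get("metadata", {}).get("labels", {})
    for label in REQUIRED_LABELS:
        if label not in labels:
            problems.append(f"missing label: {label}")

    pod_spec = manifest.get("spec", {}).get("template", {}).get("spec", {})
    for container in pod_spec.get("containers", []):
        name = container.get("name", "<unnamed>")
        # Assumption: a digest-pinned image stands in for a provenance check.
        if "@sha256:" not in container.get("image", ""):
            problems.append(f"{name}: image not pinned by digest")
        resources = container.get("resources", {})
        for kind in ("requests", "limits"):
            if not resources.get(kind):
                problems.append(f"{name}: missing resources.{kind}")

    return problems


if __name__ == "__main__":
    deployment = {
        "metadata": {"labels": {"app": "portal"}},
        "spec": {"template": {"spec": {"containers": [
            {"name": "web", "image": "registry.example/portal:1.4.2",
             "resources": {"requests": {"cpu": "100m"}}},
        ]}}},
    }
    for problem in violations(deployment):
        print(problem)
```

In a cluster, equivalent rules would normally be enforced at admission time so that non-compliant workloads are rejected before they are scheduled; the same checks can also run in CI to give earlier feedback.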

6. Future Work

6.1. Chaos Engineering Program (Pre-Prod, Then Prod with Guardrails)

6.2. SLO-Aware Autoscaling

6.3. Policy-as-Code and Governance

6.4. Supply-Chain Hardening

6.5. Secure SDLC Alignment

6.6. Data-Layer Resilience

6.7. Developer Ergonomics and Observability

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- M. Fowler and J. Lewis, “Microservices,” 2014. Available online: https://martinfowler.com/articles/microservices.html.

- Kubernetes Documentation, “Production environment,” 2025. https://kubernetes.io/docs/setup/production-environment/.

- Kubernetes Documentation, “Creating Highly Available Clusters with kubeadm,” 2025. https://kubernetes.io/docs/setup/production-environment/tools/kubeadm/high-availability/.

- DORA, “The four keys of software delivery performance,” 2025. https://dora.dev/guides/dora-metrics-four-keys/.

- Google SRE Workbook, “Implementing SLOs,” 2025. https://sre.google/workbook/implementing-slos/.

- Google SRE Workbook, “Error Budget Policy,” 2025. https://sre.google/workbook/error-budget-policy/.

- CNCF, “Cloud Native Annual Survey 2024,” 2025. https://www.cncf.io/reports/cncf-annual-survey-2024/.

- AWS Documentation, “Data protection in Amazon S3,” 2025. https://docs.aws.amazon.com/AmazonS3/latest/userguide/DataDurability.html.

- Google Cloud Documentation, “Data availability and durability (Cloud Storage),” 2025. https://cloud.google.com/storage/docs/availability-durability.

- Microsoft Learn, “Reliability in Azure Blob Storage,” 2025. https://learn.microsoft.com/en-us/azure/reliability/reliability-storage-blob.

- AWS Well-Architected, “Reliability Pillar,” 2024/2025. https://docs.aws.amazon.com/wellarchitected/latest/reliability-pillar/welcome.html.

- SLSA Project, “Supply-chain Levels for Software Artifacts (SLSA),” 2025. https://slsa.dev/.

- NIST SP 800-218, “Secure Software Development Framework (SSDF) v1.1,” 2022. https://csrc.nist.gov/pubs/sp/800/218/final.

- Kubernetes Docs, “Specifying a Disruption Budget for your Application (PDB),” 2024. https://kubernetes.io/docs/tasks/run-application/configure-pdb/.

- KEDA, “Kubernetes Event-driven Autoscaling,” 2025. https://keda.sh/.

- OPA Gatekeeper, “Policy and Governance for Kubernetes,” 2019–2025. https://openpolicyagent.github.io/gatekeeper/website/docs/.

- Principles of Chaos, “Principles of Chaos Engineering,” 2025. https://principlesofchaos.org/.

- N. Dragoni et al., “Microservices: Yesterday, Today, and Tomorrow,” in Present and Ulterior Software Engineering, Springer, 2017.

- P. Jamshidi et al., “Microservices: The Journey So Far and Challenges Ahead,” IEEE Software, 2018.

- J. Soldani, D. A. Tamburri, and W.-J. van den Heuvel, “The Pain and Gain of Microservices: A Systematic Grey Literature Review,” Journal of Systems and Software, 2018.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).