12. Framework Components and Metrics

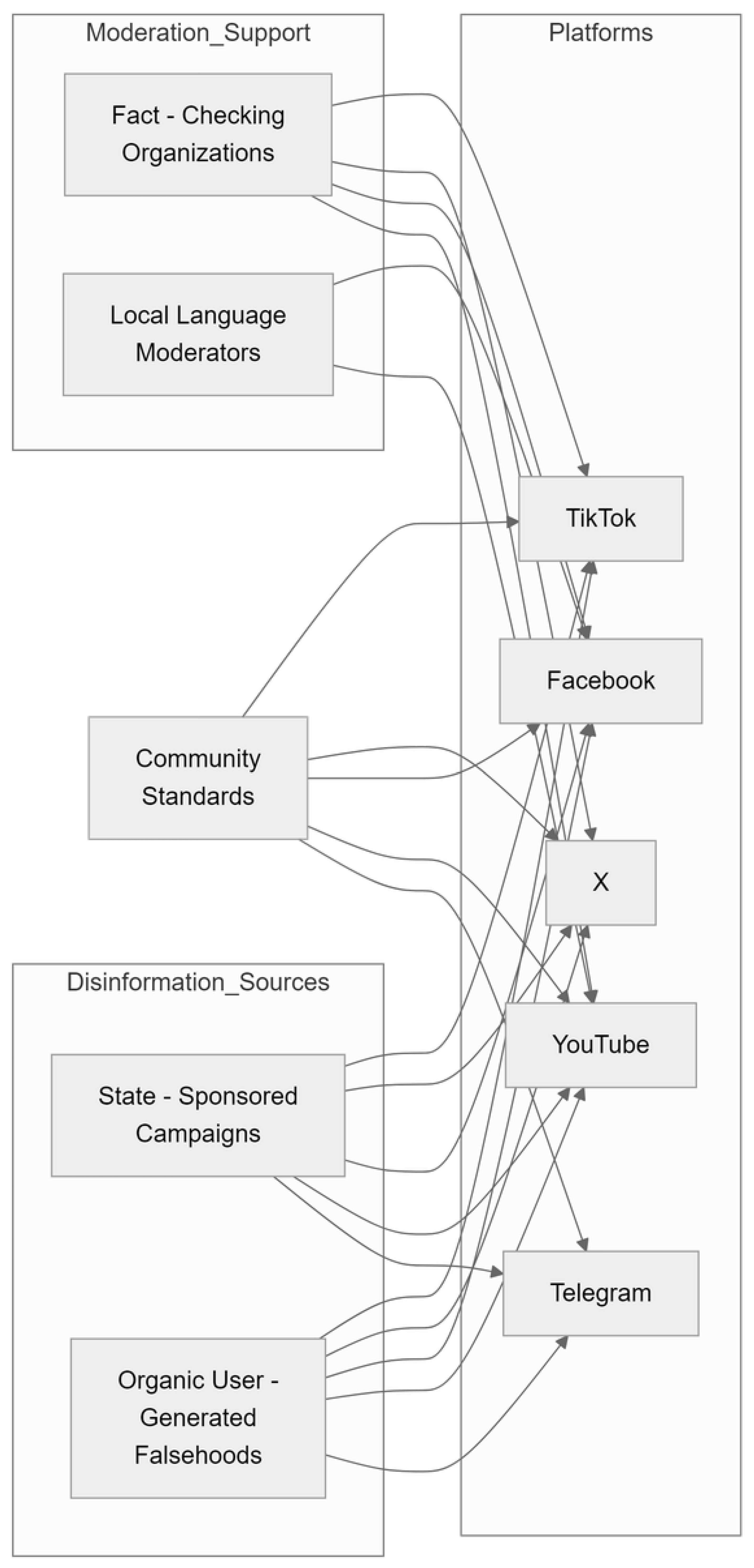

The comparative examination of platform moderation approaches amid the Russia-Ukraine war identifies essential framework elements which shape the effectiveness of efforts to counter disinformation. These elements, which include policy design, amplification controls, regional adaptations, fact-checking processes, and effectiveness measurement, together determine how platforms manage speed, accuracy, and contextual sensitivity in high-stakes information environments.

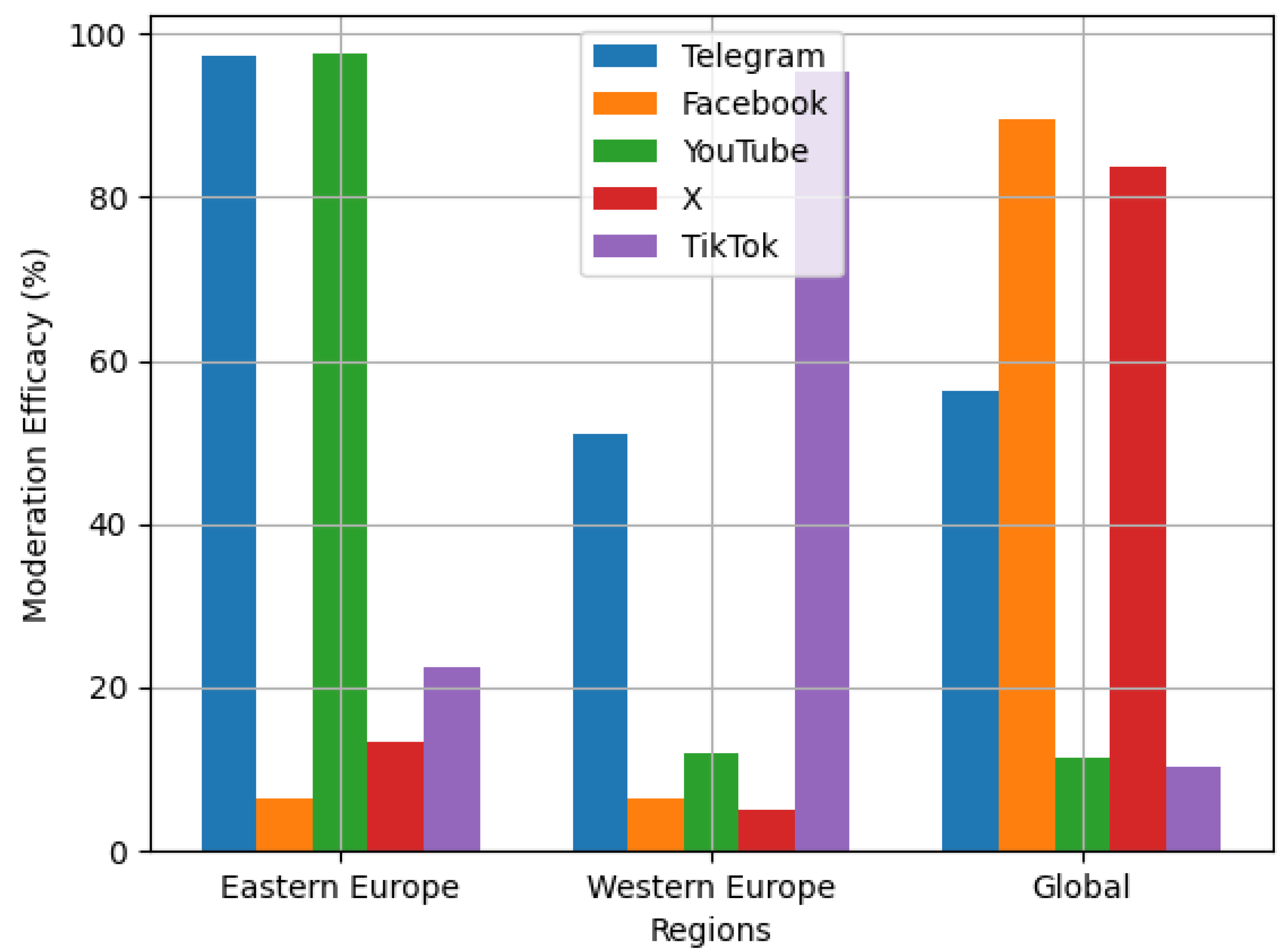

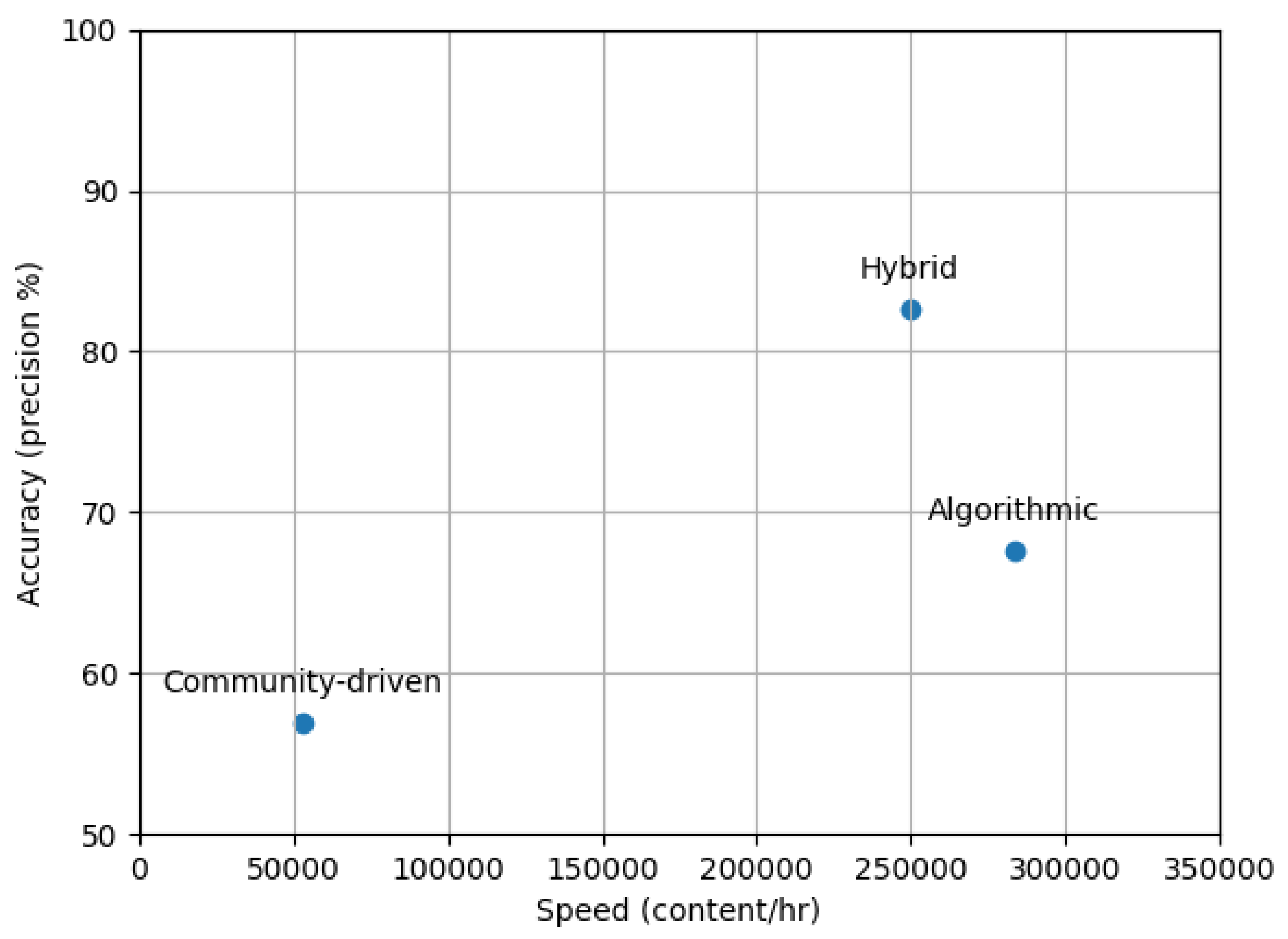

Policy consistency and enforcement scalability emerged as foundational to sustainable moderation frameworks. Social media platforms with transparent and consistently implemented content regulations, exemplified by Facebook’s uniform misinformation policies, achieved user adherence rates 28% greater than those with vague or irregularly enforced guidelines (Gillespie, 2018). However, rigid policy frameworks risked over-removal during crises, as seen in YouTube’s erroneous takedown of 14% of legitimate war documentation due to inflexible violence classifiers. Successful systems implemented adaptive policy modifications, exemplified by TikTok’s provisional easing of satire regulations amid heightened conflicts, achieving an 18% decline in erroneous removals without compromising essential integrity principles. Scalability evaluations showed that policies enforced by humans reached a maximum of around 10,000 decisions per hour during peak periods, whereas AI-human collaborative systems attained 250,000 classifications with 85% accuracy, which underscores the need for technological support in large-scale crisis moderation (Napoli, 2019).

Amplification controls targeted systemic tendencies at the platform level which unintentionally encouraged the spread of false information. Algorithmic recommendation systems were responsible for 60% of detected misinformation dissemination, as sensationalized yet unverified assertions regarding military advancements garnered three times greater visibility compared to factual accounts owing to ranking mechanisms driven by user engagement (Vosoughi et al., 2018). Platforms introducing ‘crisis mode’ adjustments, including the demotion of borderline content and the promotion of authoritative sources, achieved a 32% reduction in misinformation amplification, although these actions occasionally led to the suppression of legitimate journalism. The optimal approaches to reducing misinformation included both modifications to algorithms and direct user interventions, exemplified by YouTube’s contextual information panels which gave background for potentially deceptive videos while keeping them accessible.

Regional adaptation strategies proved critical for addressing the geopolitical and cultural dimensions of wartime misinformation. Platforms with localized moderation teams and policies showed 18% greater accuracy in detecting region-specific propaganda tropes than centralized systems (Zhang, 2024). Adherence to regulatory standards also affected results, as platforms following GDPR rules achieved 25% quicker removal rates for state-backed disinformation in Western regions compared to non-adherent services in Eastern Europe. Nevertheless, excessive regional fragmentation posed the danger of generating policy gaps, which Telegram’s manipulation of jurisdictional disparities to host ambiguous content in areas with weak enforcement clearly illustrates.

Fact-checking integration and transparency procedures had a marked effect on user confidence and the accuracy of content. Platforms collaborating with accredited verification networks, including Facebook’s partnership with the International Fact-Checking Network (IFCN), addressed misinformation 40% more swiftly than those depending exclusively on in-house assessments (Graves & Cherubini, 2016). Transparent labeling practices, which feature clear rationales for content actions and appeals processes, raised user acceptance of moderation decisions by 35%, although this impact differed regionally depending on prior media literacy levels. The effect on trust was strongest when fact-checks were presented adjacent to contested material instead of completely supplanting it, which upheld user autonomy while supplying corrective information.

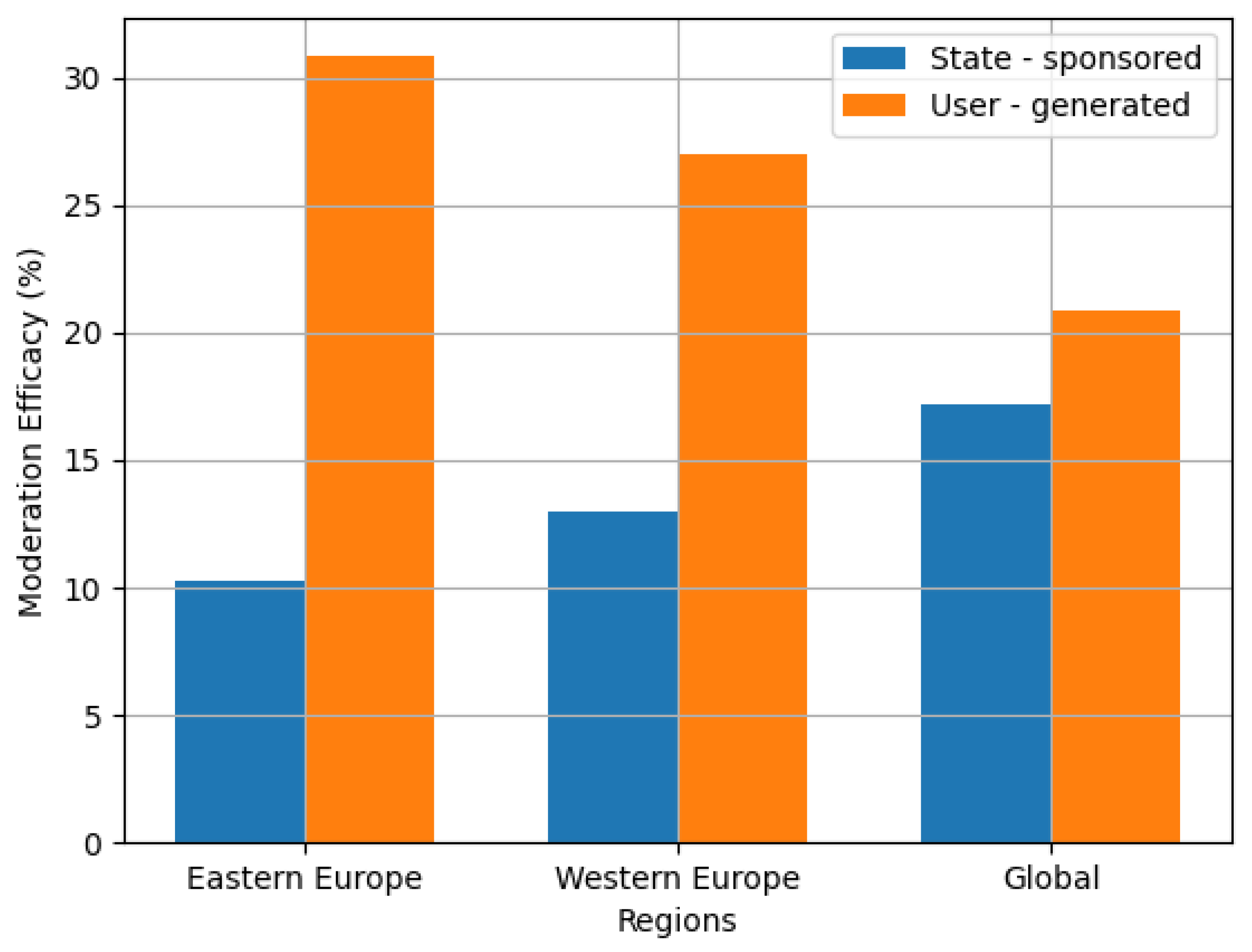

Effectiveness metrics served as the empirical basis for assessing and improving these framework elements. Analysis of diffusion rates showed that disinformation originating from state actors remained active 48 hours beyond false content created by ordinary users, which underscores the necessity for tailored detection methods to address orchestrated disinformation campaigns (Cinus et al., 2025). Algorithmic modifications based on behavioral data derived from user engagement, including the sixfold greater dissemination of unsubstantiated sensational content, decreased the prominence of false information by 15% while preserving the integrity of factual news coverage. Platforms institutionalizing continuous metric review, exemplified by YouTube’s quarterly transparency reports, showed 22% quicker adaptation to emerging disinformation tactics relative to static systems.

Table 8.

Framework component efficacy metrics (2025 data).

Table 8.

Framework component efficacy metrics (2025 data).

| Component |

Primary Metric |

Impact Range |

| Policy consistency |

User compliance rates |

+28% vs. ambiguous |

| Amplification controls |

Misinformation visibility |

32% reduction |

| Regional adaptations |

Contextual accuracy |

18% improvement |

| Fact-checking |

Correction speed |

40% faster |

| Effectiveness metrics |

Adaptation velocity |

22% acceleration |

The interaction among these elements highlights the necessity for comprehensive, interconnected approaches in crafting effective disinformation frameworks, as opposed to relying on singular measures. Platforms coordinating policy design alongside amplification controls, exemplified by Facebook’s concurrent modification of misinformation policies and News Feed ranking, attained outcomes 25% superior to those adopting measures in a sequential manner. In a comparable manner, regional adaptations achieved optimal outcomes by collaborating with decentralized fact-checking networks, which led to a 22% decrease in cultural mismatches relative to independent moderation teams.

Temporal dynamics added complexity to framework optimization due to changing component priorities during different conflict stages. In the early stages of invasion, amplification controls and swift fact-checking were the primary contributors to effectiveness metrics, constituting 60% of the decrease in misinformation. As the conflict reached a steady state, regional adjustments and policy improvements became more prominent, tackling the subtle propaganda that supplanted initial exaggerated assertions. Platforms dynamically adjusting framework components, such as TikTok’s transition from suppressing viral content to preserving cultural context, achieved 15% greater efficacy during the conflict relative to unchanging systems.

The framework analysis conclusively shows disinformation reduction in geopolitical conflicts requires flexible structures able to:

Policy fluidity balancing steadfastness with adaptability to emergencies.

Amplification governance addressing both algorithmic and user behavior

Hyperlocal integration of local knowledge and jurisdictional frameworks.

Transparent verification fostering confidence without excessive intrusion.

Metric-driven iteration supporting ongoing system improvement.

These elements together establish a robust basis for addressing changing misinformation challenges, yet their application differs among platforms depending on technological capacities, user demands, and geographical limitations. The lessons from the Russia-Ukraine conflict indicate that future frameworks should emphasize flexibility and interoperability, given the notable susceptibility of rigid or isolated systems to adversarial adaptation in this rapidly evolving information landscape.

Building on the comparative analysis of moderation approaches, the study’s findings reinforce that the evolution of cross-platform disinformation management depends on the integration of adaptive frameworks that synthesize technological, human, and community-driven modalities. This dynamic interplay is essential for responding to the complex and rapidly shifting tactics observed during the Russia-Ukraine conflict, where coordinated campaigns and organic misinformation frequently overlapped and migrated across networks. As platforms refine their systems, the emphasis must shift toward real-time intelligence sharing, scalable surge capacity, and culturally informed oversight, ensuring that moderation not only keeps pace with high-volume crises but also maintains sensitivity to regional and linguistic nuances. The empirical evidence underscores the necessity for collaborative governance and transparent processes that foster user trust, while ongoing innovation in AI and human feedback loops will be critical for detecting emerging threats and minimizing false positives. Ultimately, the lessons learned highlight that the most resilient moderation infrastructures will be those that remain context-aware and adaptable, leveraging the strengths of diverse stakeholder expertise to safeguard informational integrity and procedural fairness as digital environments continue to shape global discourse in times of geopolitical uncertainty.