Submitted: 08 April 2026

Posted: 09 April 2026

Abstract

Keywords:

1. Introduction

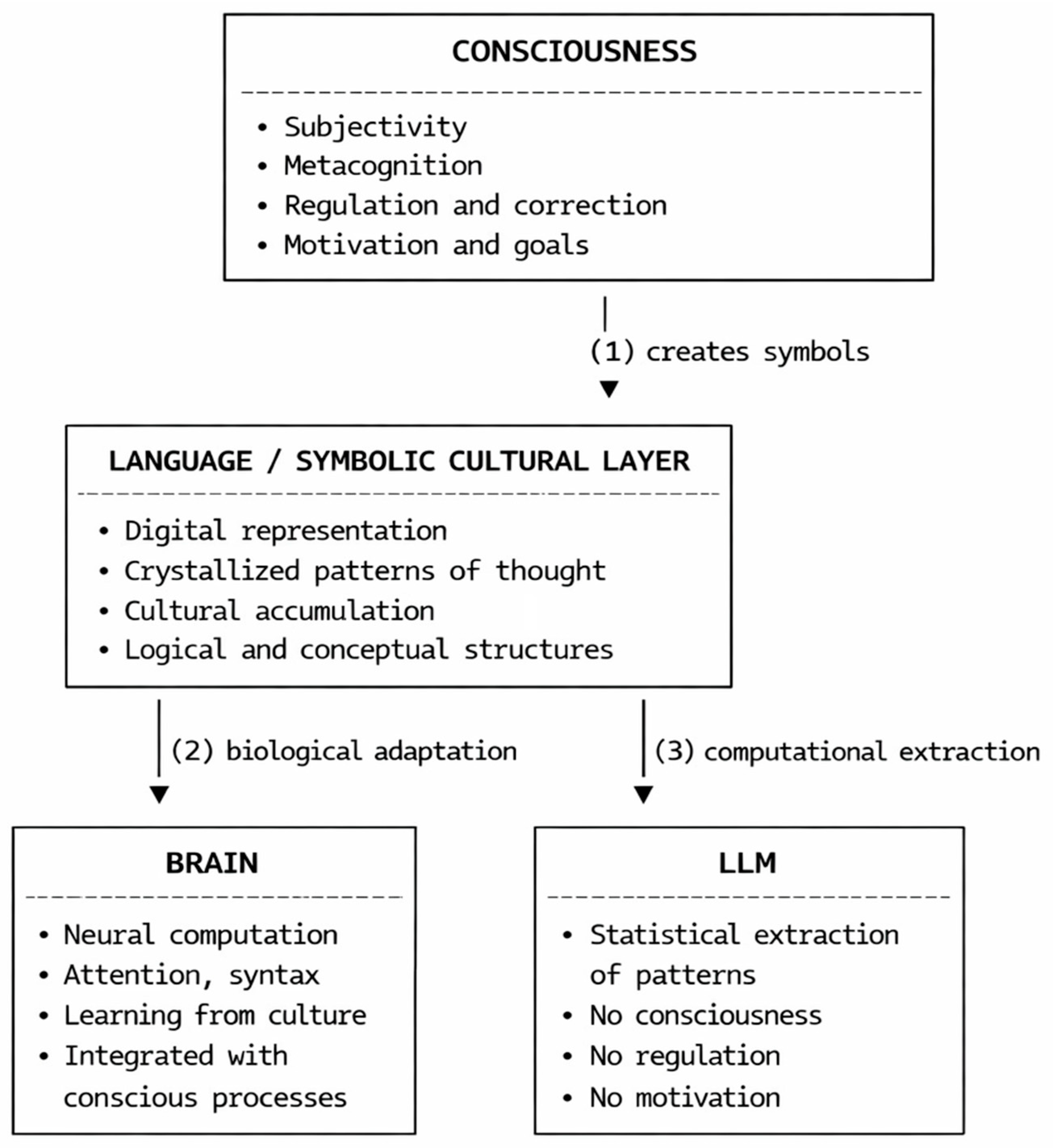

2. Methodology: Language as Crystallized Cognition

3. Consciousness as an Ontological Regulatory Layer

4. Discussion: Limits of Computational Mitigation in LLMs

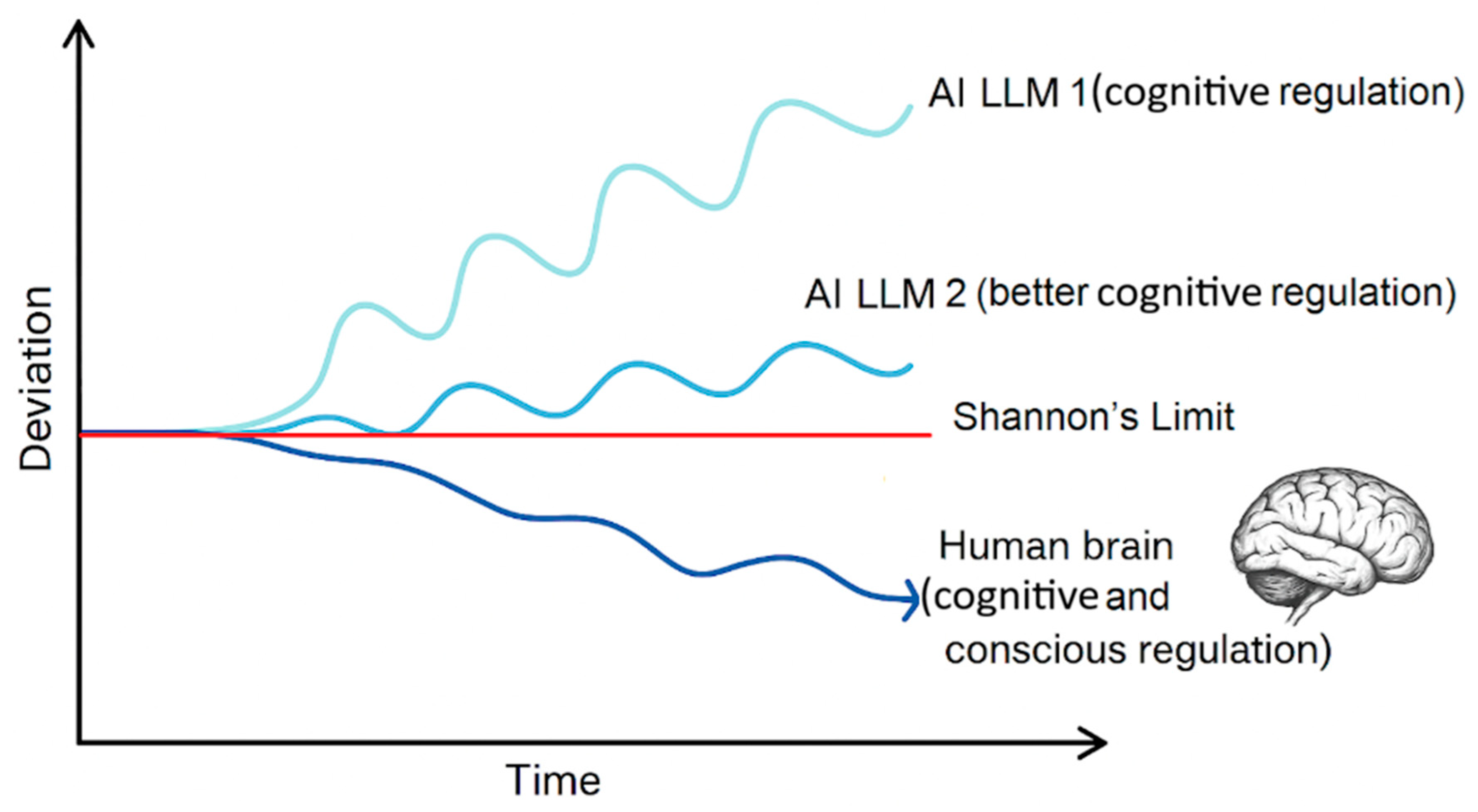

- Entropy-based mitigations will reduce hallucinations in short reasoning chains but fail over many iterations as entropy accumulates.

- Biologically inspired hybrids (e.g., biosynthetic computation) may approach stability, but purely digital systems will plateau.

This reinforces the possibility that consciousness is not merely emergent from computation but may be a prerequisite for stable, autonomous cognition.
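The text does not specify what an entropy-based mitigation looks like in practice. As a minimal sketch, assuming it means thresholding the Shannon entropy of the model's next-token distribution at each step, the idea could be illustrated as follows. The function names, the threshold, and the example distributions are hypothetical and chosen only for illustration.

```python
import math
from typing import List, Sequence


def shannon_entropy(probs: Sequence[float]) -> float:
    """Shannon entropy, in bits, of a discrete probability distribution."""
    return -sum(p * math.log2(p) for p in probs if p > 0.0)


def flag_uncertain_steps(
    step_distributions: Sequence[Sequence[float]],
    threshold_bits: float,
) -> List[int]:
    """Return the indices of steps whose predictive entropy exceeds the threshold.

    Assumption: each entry is the next-token distribution at one generation
    step; a real system would obtain these from the model's output logits.
    """
    return [
        i
        for i, probs in enumerate(step_distributions)
        if shannon_entropy(probs) > threshold_bits
    ]


if __name__ == "__main__":
    # Hypothetical distributions over a four-token vocabulary.
    steps = [
        [0.97, 0.01, 0.01, 0.01],  # confident step
        [0.25, 0.25, 0.25, 0.25],  # maximally uncertain step
        [0.70, 0.10, 0.10, 0.10],  # moderately uncertain step
    ]
    print(flag_uncertain_steps(steps, threshold_bits=1.0))
```

A filter of this kind only detects uncertain steps; it does not inject new information into the chain, which is consistent with the prediction that such mitigations help in short chains only.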

5. Gene–Culture Coevolution and the Rise of Human Intelligence

- Consciousness enabled the creation of symbolic representations.

- Language accumulated cultural knowledge.

- Brains evolved to process increasingly complex symbolic systems.

- Cultural evolution accelerated cognitive development beyond genetic timescales.

6. Implications for Artificial Cognition and the Philosophy of Mind

- Consciousness without symbolic reasoning is possible, as in non-human animals [3].

- Current LLMs cannot achieve conscious regulation through scaling alone, due to information-theoretic limits [14].

- Language is the bridge between biological and artificial cognition, as argued in recent conceptual analyses [15].

- Current LLMs appear unable to overcome information-theoretic limits (e.g., Shannon’s data processing inequality, DPI) through computational mitigations alone, which makes entropy growth and hallucinations appear unavoidable over long reasoning chains. This parallels the second law of thermodynamics, with consciousness in humans acting as an active reducer of cognitive entropy.
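For reference, the data processing inequality invoked in the last point can be stated as follows. This is the standard textbook form, under the assumption that each processing step depends only on the output of the previous one (a Markov chain); the symbols $X$ and $Y_k$ are notation introduced here for illustration and do not appear in the text.

```latex
% Assumption: X -> Y_1 -> Y_2 -> ... -> Y_n forms a Markov chain,
% i.e. each Y_k is computed from Y_{k-1} alone, with no fresh access to X.
\[
  X \to Y_1 \to Y_2 \to \dots \to Y_n
  \quad\Longrightarrow\quad
  I(X; Y_n) \le I(X; Y_{n-1}) \le \dots \le I(X; Y_1) \le H(X).
\]
```

In words, no sequence of processing steps can increase the information that the final output carries about the original source; it can only preserve or reduce it.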

7. Information-Theoretic Foundations of Irreducible Limits

Any purely computational system whose reasoning trajectory is updated iteratively without external low-entropy input must eventually lose stable information and drift toward noise. In current LLMs this decay is confined to the transient inference process, as their parameters remain frozen and structurally unaffected.
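The decay described in this statement can be illustrated with a toy model. The sketch below assumes, purely for illustration, that each iteration acts on a single uniformly distributed bit like an independent binary symmetric channel with flip probability `p`; this channel model and all function names are assumptions introduced here, not part of the original argument. Under that assumption, the mutual information between the initial bit and its state after `n` iterations has a closed form and shrinks toward zero.

```python
import math
from typing import List


def binary_entropy(p: float) -> float:
    """Shannon entropy, in bits, of a Bernoulli(p) variable."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -p * math.log2(p) - (1.0 - p) * math.log2(1.0 - p)


def cascaded_flip_probability(p: float, n: int) -> float:
    """Effective flip probability after n independent BSC(p) stages."""
    return 0.5 * (1.0 - (1.0 - 2.0 * p) ** n)


def mutual_information_after(p: float, n: int) -> float:
    """I(X0; Xn) in bits for a uniform input bit after n BSC(p) stages."""
    return 1.0 - binary_entropy(cascaded_flip_probability(p, n))


def decay_curve(p: float, steps: int) -> List[float]:
    """Mutual information with the initial bit after 0, 1, ..., steps iterations."""
    return [mutual_information_after(p, n) for n in range(steps + 1)]


if __name__ == "__main__":
    # Hypothetical per-iteration flip probability of 5 percent.
    for n, bits in enumerate(decay_curve(p=0.05, steps=50)):
        if n % 10 == 0:
            print(f"iteration {n:2d}: I(X0; Xn) = {bits:.5f} bits")
```

The curve is non-increasing in `n`, as the data processing inequality requires. The toy model says nothing about how fast real inference chains degrade; it only shows the qualitative behavior of a closed loop with no external input.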

8. Conclusions

9. Limitations

Funding

Institutional Review Board Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Harnad, S. The symbol grounding problem. Physica D 1990, 42, 335–346.

- Tomasello, M. The Cultural Origins of Human Cognition; Harvard University Press: Cambridge, MA, USA, 1999.

- Chalmers, D.J. Facing up to the problem of consciousness. Journal of Consciousness Studies 1995, 2, 200–219.

- Block, N. Consciousness, accessibility, and the mesh between psychology and neuroscience. Behavioral and Brain Sciences 2007, 30, 481–548.

- Boyd, R.; Richerson, P.J. Culture and the Evolutionary Process; University of Chicago Press: Chicago, IL, USA, 1985.

- Henrich, J. The Secret of Our Success; Princeton University Press: Princeton, NJ, USA, 2016.

- Dennett, D.C. The Baldwin effect: A crane, not a skyhook. In Evolution and Learning; Weber, B., Depew, D., Eds.; MIT Press: Cambridge, MA, USA, 2003; pp. 69–79.

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, Ł.; Polosukhin, I. Attention Is All You Need. Advances in Neural Information Processing Systems 2017, 30, 5998–6008.

- Bubeck, S.; Chandrasekaran, V.; Eldan, R.; Gehrke, J.; Horvitz, E.; Kamar, E.; Lee, P.; Li, Y.; Lundberg, S.; Nori, H.; et al. Sparks of Artificial General Intelligence: Early Experiments with GPT-4. arXiv 2023, arXiv:2303.12712.

- Vygotsky, L.S. Mind in Society; Harvard University Press: Cambridge, MA, USA, 1978.

- Clark, A.; Chalmers, D. The extended mind. Analysis 1998, 58, 7–19.

- Jackendoff, R. Foundations of Language; Oxford University Press: Oxford, UK, 2002.

- Schrimpf, M.; et al. The neural architecture of language. PNAS 2021, 118.

- Shannon, C.E. A mathematical theory of communication. Bell System Technical Journal 1948, 27, 379–423.

- Straňák, P. What Artificial Intelligence May Be Missing-And Why It Is Unlikely to Attain It Under Current Paradigms. Philosophies 2026, 11, 20.

- Fisher, S.E.; et al. Localization of a gene implicated in a severe speech and language disorder. Nature Genetics 1998, 18, 168–170.

- Pollard, K.S.; et al. An RNA gene expressed during cortical development evolved rapidly in humans. Nature 2006, 443, 167–172.

- Straňák, P. Lossy Loops: Shannon’s DPI and Information Decay in Generative Model Training. Preprints.org. 2025. Available online: https://www.preprints.org/manuscript/202507.2260.

- Deacon, T.W. The Symbolic Species: The Co-evolution of Language and the Brain; W.W. Norton: New York, NY, USA, 1997.

- Clark, A. Supersizing the Mind: Embodiment, Action, and Cognitive Extension; Oxford University Press: Oxford, UK, 2008.

- Searle, J.R. Minds, brains, and programs. Behavioral and Brain Sciences 1980, 3, 417–457.

- Bender, E.M.; Koller, A. Climbing towards NLU: On meaning, form, and understanding in the age of data. ACL 2020, 5185–5198.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).