Submitted: 08 November 2025

Posted: 11 November 2025


Abstract

Keywords:

1. Introduction

- Can selective attention head pruning improve the performance and efficiency of transformer models for Urdu abstractive summarization?

- How do different transformer architectures (BART, T5, GPT-2) respond to attention head pruning when adapted for Urdu?

- What is the optimal pruning threshold that balances performance and efficiency for Urdu text summarization?

- How do the optimized models generalize across different domains of Urdu text?

- Development of optimized transformer models (EBART, ET5, and EGPT-2) through strategic attention head pruning, specifically designed for Urdu abstractive summarization;

- Comprehensive investigation of leading transformer architectures (BART, T5, and GPT-2) for Urdu language processing;

- Extensive evaluation of the optimized models using ROUGE metrics and comparative analysis against their original counterparts.

2. Background and Literature Review

2.1. Single Document Summarization Approaches

2.2. Multiple Document Summarization and Hybrid Approaches

3. Methodology

3.1. Dataset Description and Preprocessing

- Tokenization: The input Urdu text was tokenized into subword tokens using the respective pre-trained tokenizer for each model (e.g., GPT2Tokenizer, T5Tokenizer, BartTokenizer) [25].

- Sequence Length Adjustment: The tokenized sequences were padded or truncated to a fixed length of 512 tokens to match the models’ expected input dimensions.

- Text Normalization: Basic normalization was applied, including converting text to lowercase (which affects only embedded Latin-script text, since Urdu script has no letter case) and removing extraneous punctuation marks.

- Tensor Conversion: The final tokenized and adjusted sequences were converted into PyTorch tensors, the required format for model inference and fine-tuning.
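The sequence length adjustment step above can be illustrated with a minimal, library-free sketch. The function name `pad_or_truncate`, the padding ID `0`, and the attention-mask convention are assumptions made for illustration; in practice the pre-trained tokenizers perform this step, and the actual padding ID depends on the tokenizer.

```python
from typing import List, Sequence, Tuple


def pad_or_truncate(
    token_ids: Sequence[int], max_len: int = 512, pad_id: int = 0
) -> Tuple[List[int], List[int]]:
    """Return (input_ids, attention_mask), both exactly max_len long.

    pad_id=0 is a placeholder; real tokenizers define their own padding ID.
    """
    clipped = list(token_ids[:max_len])       # truncate long inputs
    n_pad = max_len - len(clipped)            # 0 when the input was truncated
    input_ids = clipped + [pad_id] * n_pad    # right-pad short inputs
    attention_mask = [1] * len(clipped) + [0] * n_pad
    return input_ids, attention_mask


# A short sequence is padded; a 600-token sequence is cut to 512 tokens.
short_ids, short_mask = pad_or_truncate([101, 57, 902], max_len=8)
long_ids, long_mask = pad_or_truncate(list(range(1, 601)))
```

The attention mask marks padded positions with 0 so that a model can ignore them; the resulting lists would then be converted to tensors as described in the last step.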

3.2. Efficient Transformer Summarizer Models by Pruning Attention Heads Based on Their Contribution

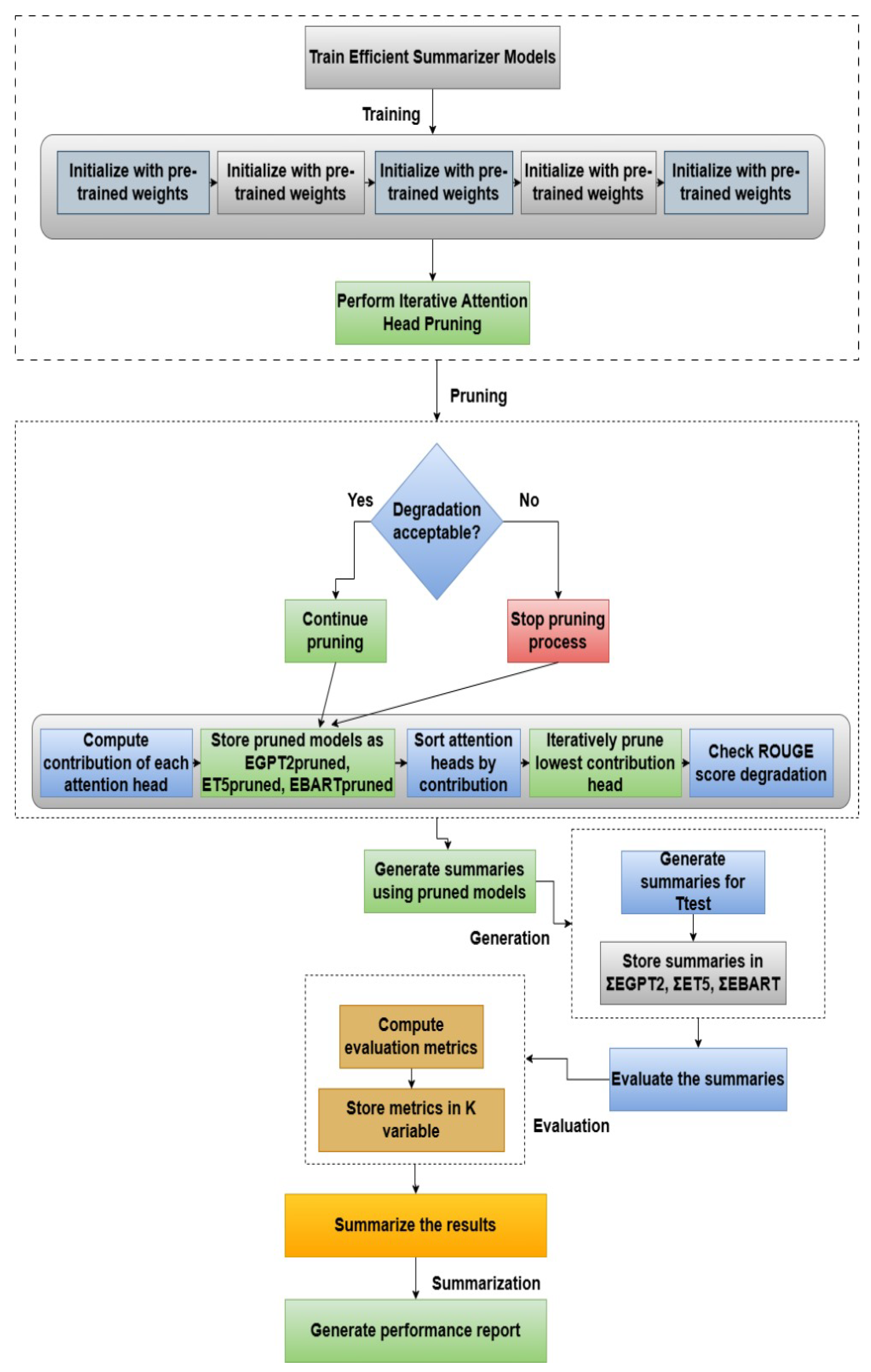

3.2.1. Training the Efficient Summarizer Models

- Fine-tuning: This phase adapts the pre-trained models to the specific domain of Urdu text summarization, exposing them to Urdu language patterns and content features [7]. This foundational step allows the models to learn Urdu-specific linguistic structures and contextual dependencies. The computational complexity of this phase is $O(N \cdot L^2)$ per epoch, where N is the number of training samples and L is the sequence length.

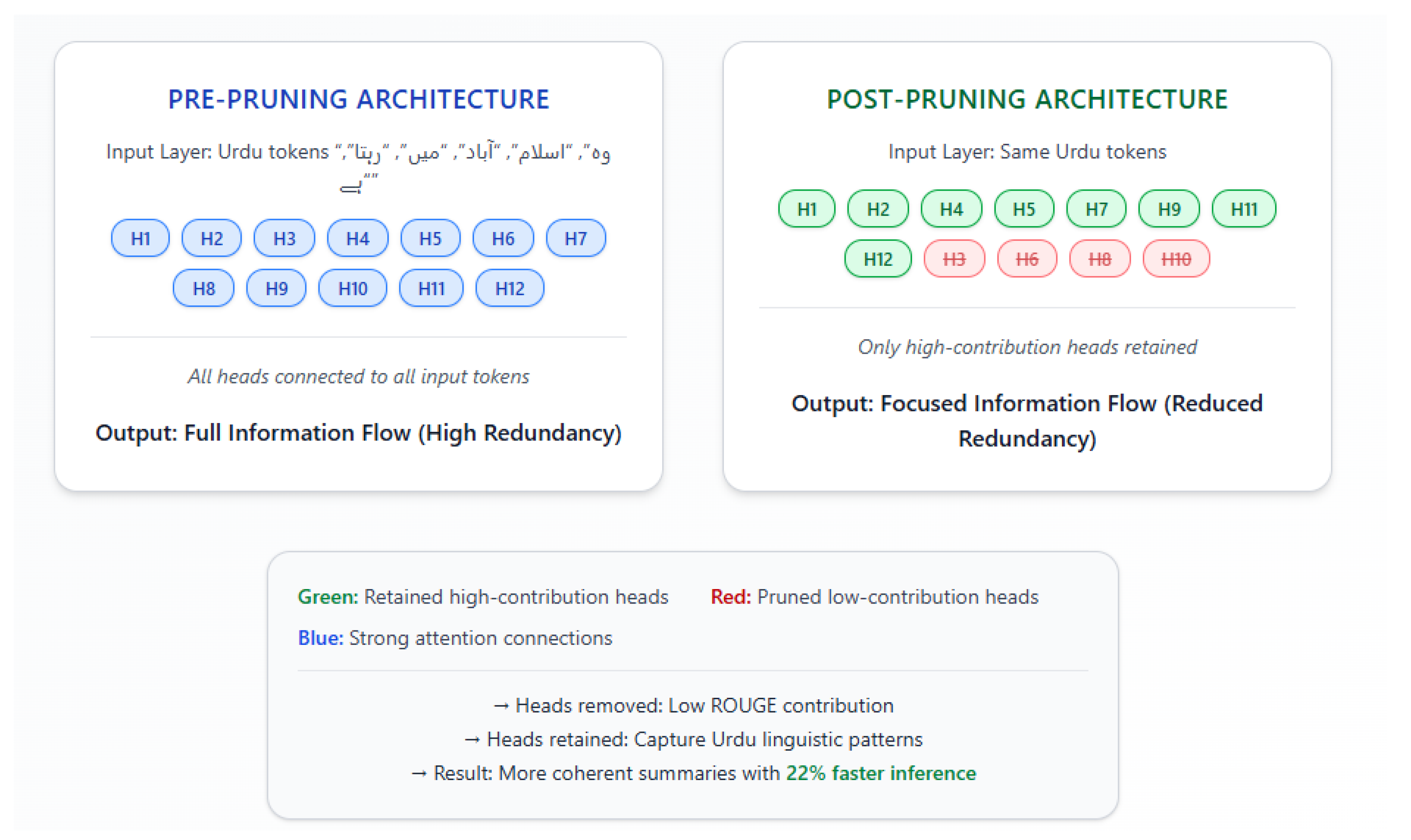

- Pruning: This phase systematically removes redundant attention heads based on their contribution to the ROUGE score, reducing model complexity and sharpening focus on salient Urdu linguistic features. By eliminating heads that contribute minimally to the task, the model becomes more efficient and focused. The iterative pruning process has a complexity of $O(H \cdot E)$, where H is the number of attention heads and E is the evaluation cost per head.

- Evaluation: This phase ensures that pruning does not lead to performance degradation, guaranteeing the final model is both efficient and accurate. This validation step confirms that the pruned model retains its ability to generate high-quality Urdu summaries while achieving computational gains.
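One way to read "contribution to the ROUGE score" in the pruning phase is as an ablation: a head's contribution is the score with all heads active minus the score with that head disabled. The sketch below assumes this reading. The simplified whitespace-token ROUGE-1 F1, the `summarize` callable, and the toy head-to-word mapping are hypothetical stand-ins for illustration, not the authors' implementation.

```python
from collections import Counter
from typing import Callable, Dict, FrozenSet, Iterable


def rouge1_f1(candidate: str, reference: str) -> float:
    """Simplified ROUGE-1 F1 over whitespace tokens (no stemming)."""
    cand, ref = Counter(candidate.split()), Counter(reference.split())
    overlap = sum((cand & ref).values())  # clipped unigram matches
    if overlap == 0:
        return 0.0
    precision = overlap / sum(cand.values())
    recall = overlap / sum(ref.values())
    return 2 * precision * recall / (precision + recall)


def head_contributions(
    summarize: Callable[[str, FrozenSet[int]], str],
    heads: Iterable[int],
    source: str,
    reference: str,
) -> Dict[int, float]:
    """Contribution of head h = score(all heads) - score(all heads except h)."""
    all_heads = frozenset(heads)
    base = rouge1_f1(summarize(source, all_heads), reference)
    return {
        h: base - rouge1_f1(summarize(source, all_heads - {h}), reference)
        for h in sorted(all_heads)
    }


# Toy stand-in for a model: each active "head" emits one word.
HEAD_WORDS = {0: "flood", 1: "relief", 2: "cricket"}


def toy_summarize(source: str, active_heads: FrozenSet[int]) -> str:
    return " ".join(HEAD_WORDS[h] for h in sorted(active_heads))


contrib = head_contributions(toy_summarize, HEAD_WORDS, "unused", "flood relief")
```

In this toy setting, head 2 has a negative contribution because removing it raises the score, which makes it the first candidate for pruning. Each head costs one extra evaluation, so scoring all heads takes H + 1 evaluations.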

- Number of encoder/decoder layers: 6 (for BART and T5), 12 (for GPT-2).

- Number of attention heads: 12 (for BART, T5, and GPT-2).

- Embedding size: 768.

- Feed-forward network size: 3072.

- Dropout rate: 0.1.

- Learning rate: 1e-4, using the AdamW optimizer.

- Batch size: 8.

- Sequence length: 512 tokens.

- Weight decay: 0.01.

- Training epochs: 200
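The settings listed above can be collected in a single configuration object. This is a sketch only: the class name `FineTuneConfig`, its field names, and the `CONFIGS` dictionary are hypothetical, and the per-head dimension assumes the standard multi-head attention layout in which the embedding is split evenly across heads.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FineTuneConfig:
    """Hyperparameters from the list above; names are illustrative."""

    num_layers: int               # 6 for BART and T5, 12 for GPT-2
    num_heads: int = 12
    embedding_size: int = 768
    ffn_size: int = 3072
    dropout: float = 0.1
    learning_rate: float = 1e-4
    optimizer: str = "AdamW"
    batch_size: int = 8
    max_seq_len: int = 512
    weight_decay: float = 0.01
    epochs: int = 200

    @property
    def head_dim(self) -> int:
        # Assumes the embedding is divided evenly among the heads.
        return self.embedding_size // self.num_heads


CONFIGS = {
    "BART": FineTuneConfig(num_layers=6),
    "T5": FineTuneConfig(num_layers=6),
    "GPT-2": FineTuneConfig(num_layers=12),
}
```

Under that assumption each head works on 768 / 12 = 64 dimensions, and the feed-forward size is four times the embedding size.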

Algorithm 1. Pruning and Summarization with Efficient GPT-2, T5, and BART Models

- Number of transformer blocks (layers) in the encoder and decoder, chosen to avoid overfitting and improve generalization.

- Number of attention heads in the multi-head attention mechanism, which lets the model attend to different parts of the input text simultaneously.

- Embedding size, chosen to capture more information within the available computational resources.

- Feed-forward network size within each transformer block, balancing model capacity against complexity.

- Dropout rate for the transformer layers, which prevents overfitting by randomly dropping units during training.

- Learning rate used by the optimization algorithm (e.g., Adam or SGD) during fine-tuning, chosen for fast convergence.

- Batch size, i.e., the number of paragraphs or sentences processed in a single forward and backward pass, chosen for more stable gradients.

- Sequence length of the input and output sequences, balancing captured context against computational cost.

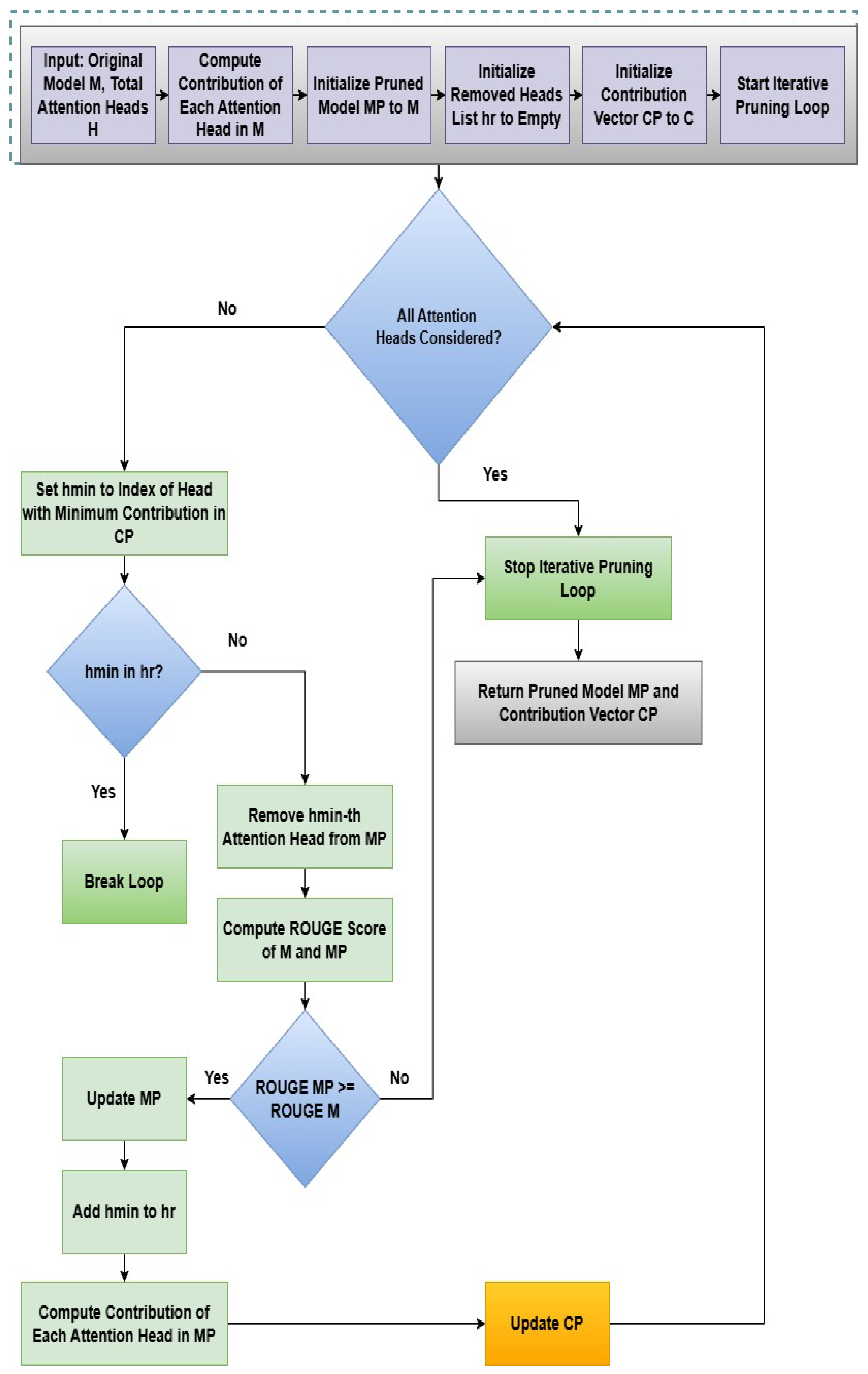

3.2.2. Perform Iterative Attention Heads Pruning with Individual Contribution Computation

The function perform_iterative_pruning takes three inputs: a model (EGPT2, ET5, or EBART), the pruned tokenized text documents Tpruned, and an accuracy sliding-window threshold. It iteratively prunes attention heads based on the individual contribution of each head.
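The pruning loop described above can be sketched in a library-free form. Several points are assumptions: the name `iterative_head_pruning`, the `evaluate` callable (a stand-in for scoring the model on the tokenized documents with a given set of active heads), and the interpretation of the threshold as the largest tolerated drop below the best score seen so far. The sketch is not the authors' algorithm.

```python
from typing import Callable, FrozenSet, Iterable, Set, Tuple


def iterative_head_pruning(
    heads: Iterable[int],
    evaluate: Callable[[FrozenSet[int]], float],
    threshold: float,
) -> Tuple[Set[int], float]:
    """Prune heads in order of increasing contribution.

    Stops when removing the next head would drop the score more than
    `threshold` below the best score so far (assumed stopping rule).
    """
    active = set(heads)
    baseline = evaluate(frozenset(active))
    # One ablation per head: contribution = baseline - score without the head.
    contribution = {
        h: baseline - evaluate(frozenset(active - {h})) for h in sorted(active)
    }
    best = baseline
    for h in sorted(contribution, key=contribution.get):  # least useful first
        if len(active) == 1:
            break  # always keep at least one head
        trial = active - {h}
        score = evaluate(frozenset(trial))
        if score < best - threshold:
            break  # further pruning degrades quality too much
        active, best = trial, max(best, score)
    return active, best


# Toy evaluator: the score is the sum of fixed per-head weights.
WEIGHTS = {0: 0.3, 1: 0.2, 2: -0.05, 3: 0.0}


def toy_evaluate(active: FrozenSet[int]) -> float:
    return sum(WEIGHTS[h] for h in sorted(active))


kept, final_score = iterative_head_pruning(WEIGHTS, toy_evaluate, threshold=0.01)
```

In the toy run, the harmful head 2 and the neutral head 3 are removed, while heads 0 and 1 are kept because dropping either would lower the score by more than the threshold. The loop uses at most 2H + 1 evaluations for H heads, so its cost grows linearly with the number of heads.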

Algorithm 2. Attention Heads Pruning Based on the Individual Contribution in the Multi-Head Attention

3.2.3. Generate Summaries Using the Fine-Tuned Pruned Models

3.3. Ablation Study and Pruning Threshold Analysis

3.4. Computational Cost Measurement

4. Results

4.1. Experimental Setup and Statistical Validation

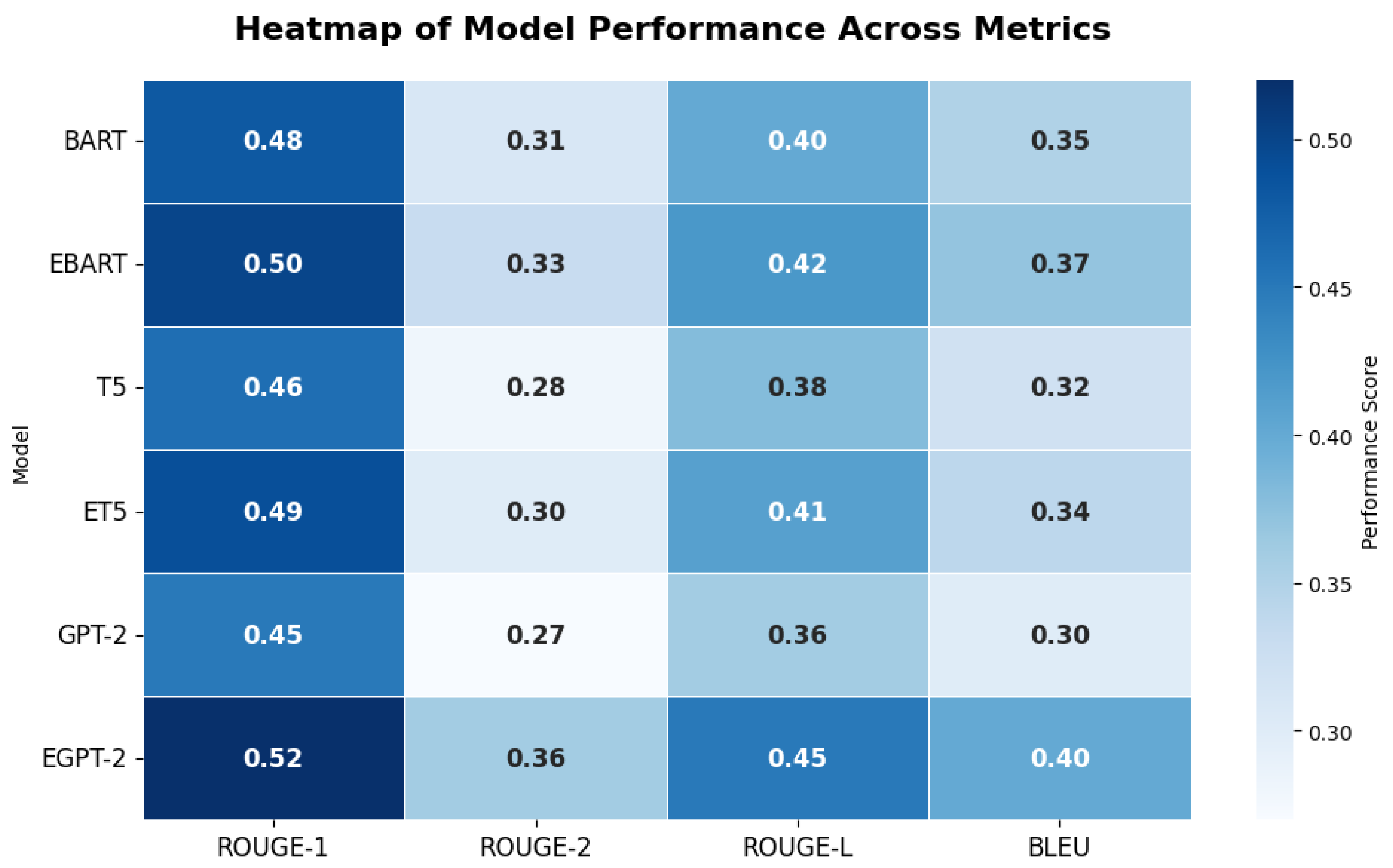

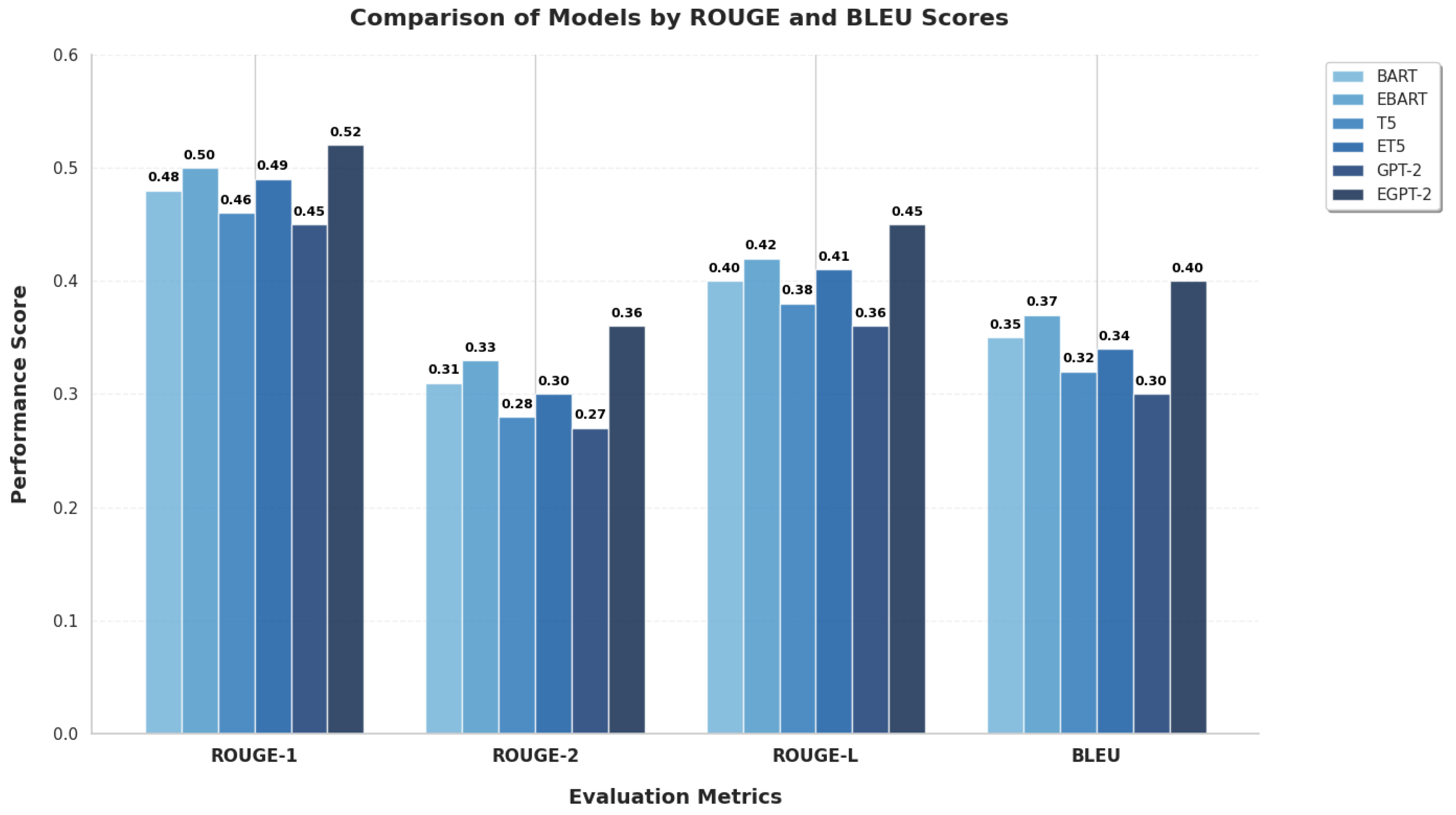

4.2. Overall Performance Analysis

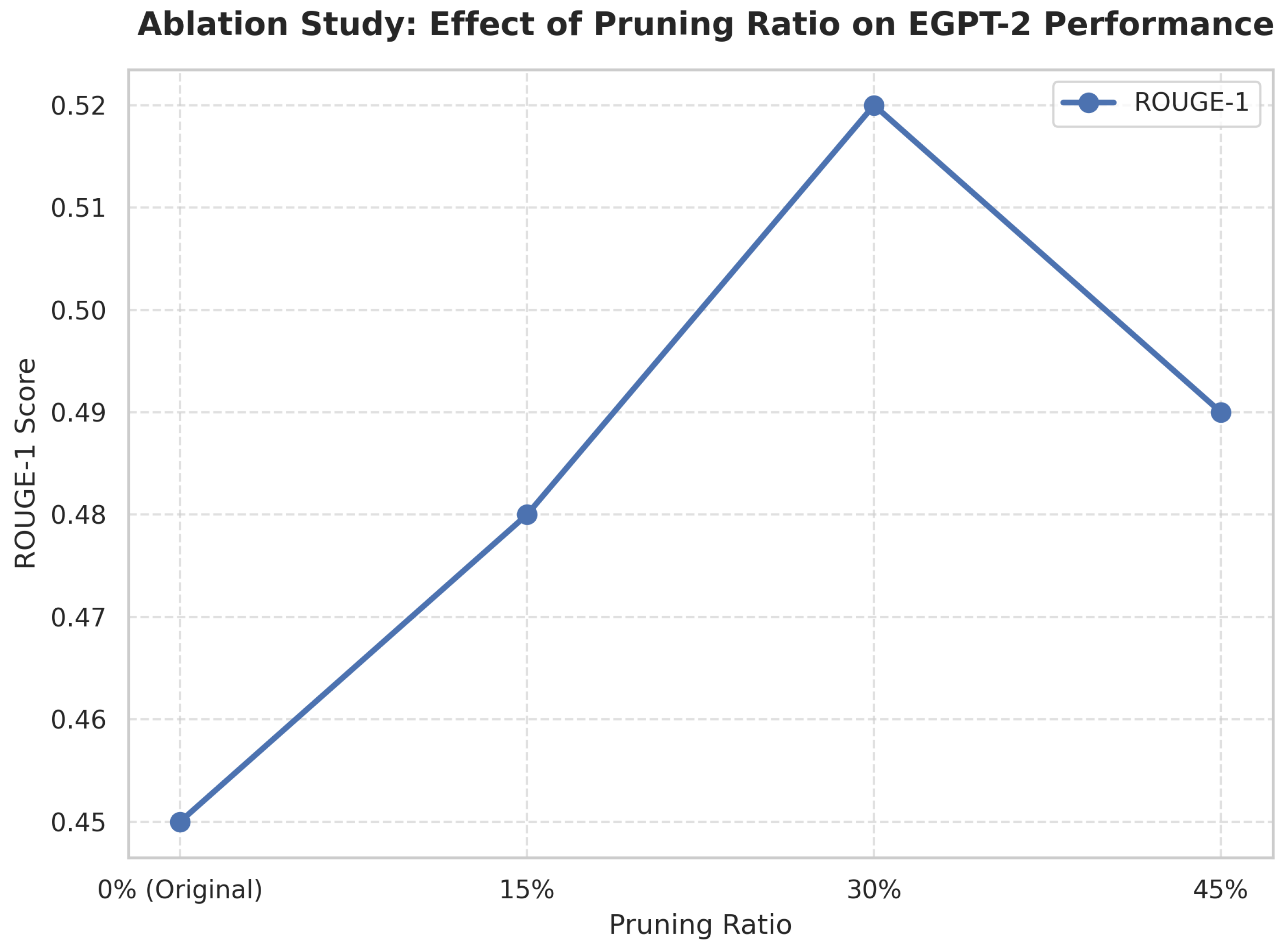

4.3. Ablation Study and Pruning Threshold Analysis

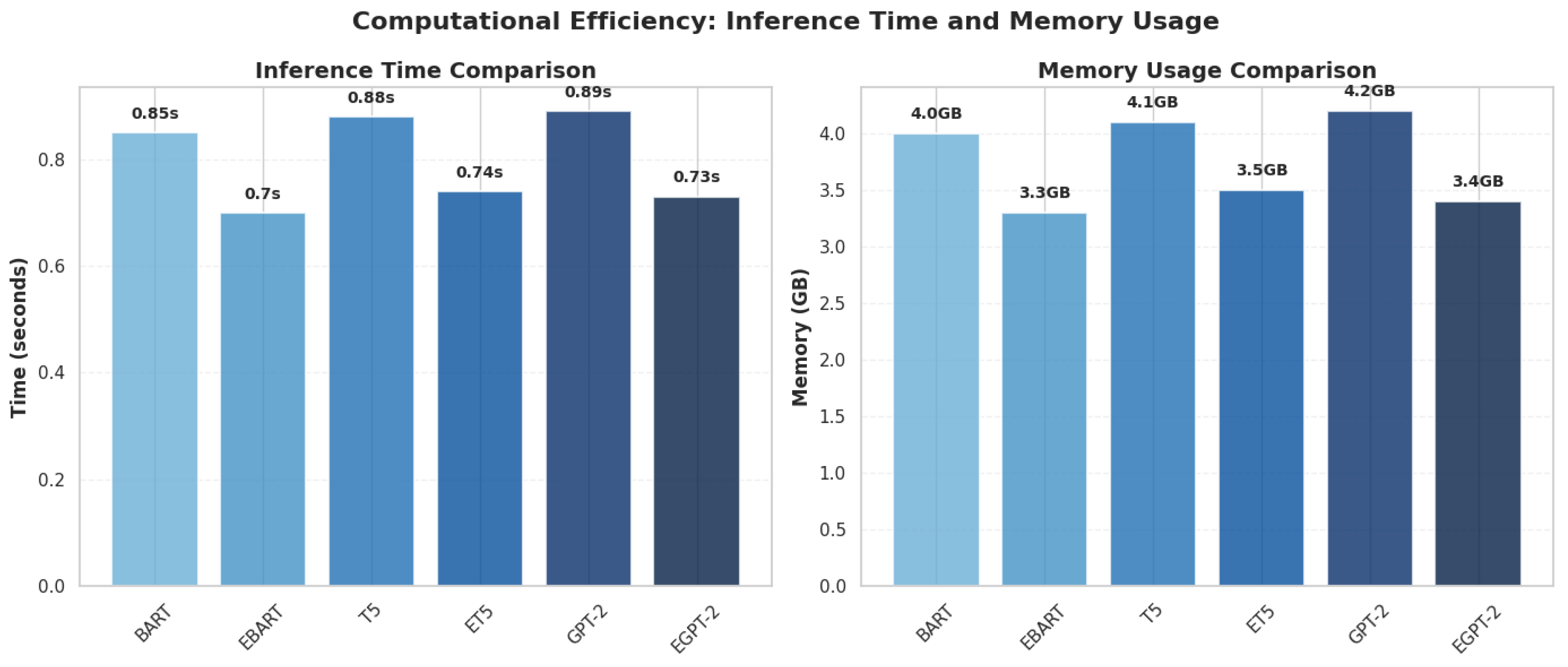

4.4. Computational Efficiency Analysis

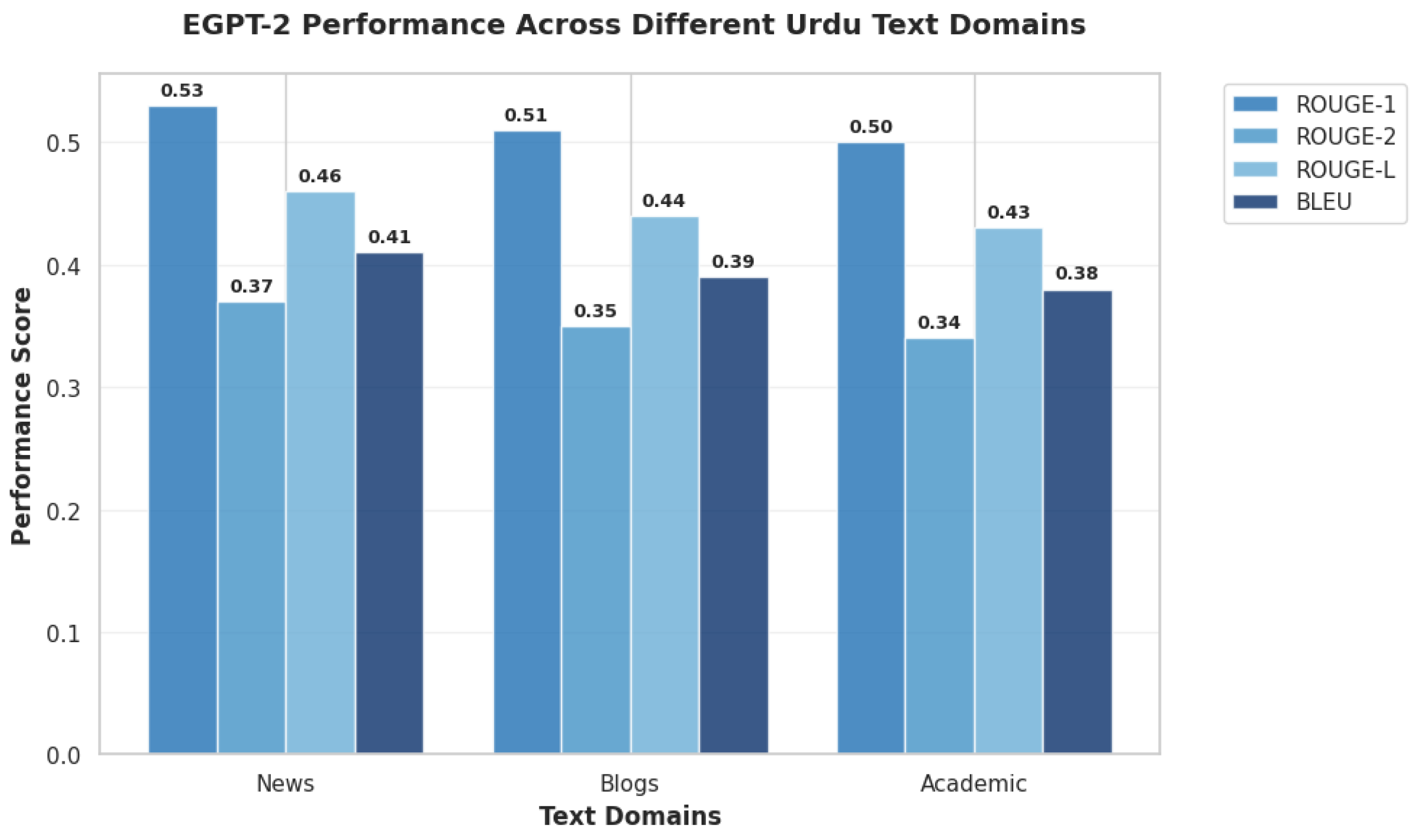

4.5. Cross-Domain Generalization

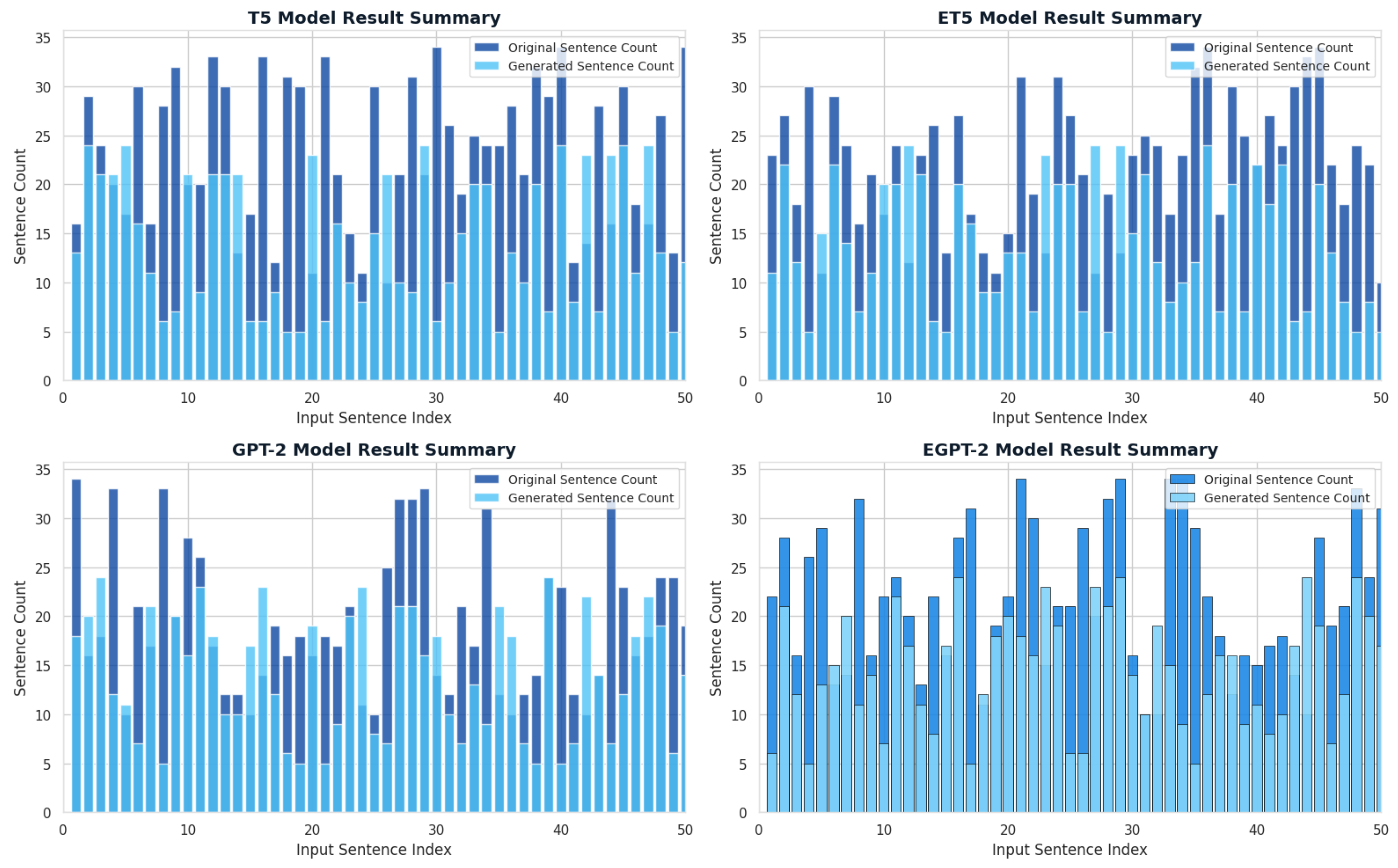

4.6. Impact of Input Sentence Count on Summary Generation

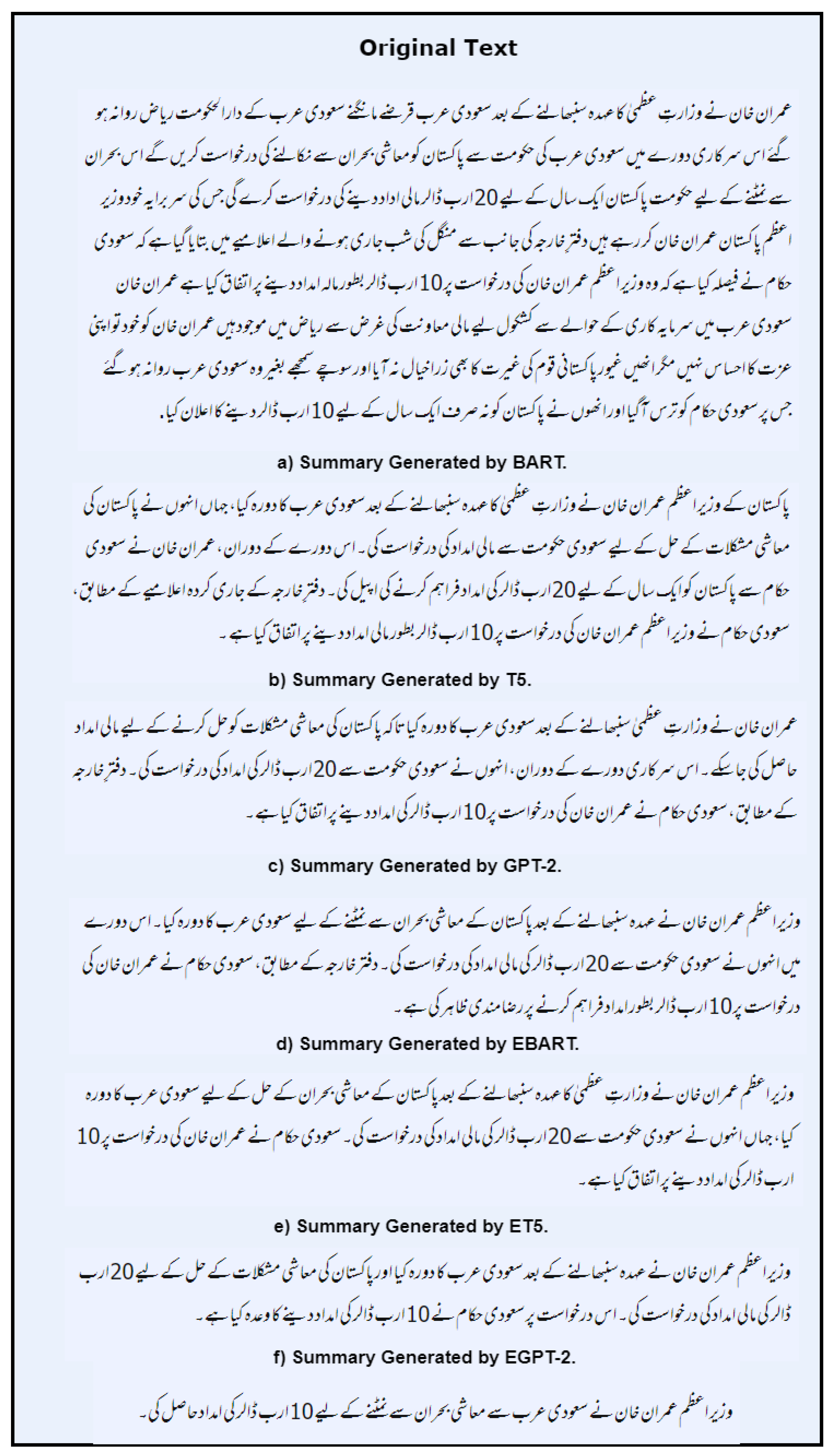

4.7. Qualitative Analysis and Model Comparison

5. Discussion

5.1. Interpretation of Results and Comparison with Prior Work

5.2. Answering Research Questions

- Can selective attention head pruning improve performance and efficiency? Yes. All pruned models (EBART, ET5, EGPT-2) outperform their original counterparts on ROUGE-1, ROUGE-2, ROUGE-L, and BLEU, while reducing inference time by 16-22% and memory usage by 15-19%.

- How do different architectures respond to pruning? All three architectures benefited from pruning, but GPT-2 showed the greatest improvement (ROUGE-1 rose from 0.45 to 0.52, compared with gains of 0.02 for BART and 0.03 for T5), suggesting that decoder-only models may be particularly amenable to this optimization technique for generative tasks.

- What is the optimal pruning threshold? Our ablation study identified 30% as the optimal pruning ratio for EGPT-2, balancing performance gains with computational efficiency. Beyond this ratio, ROUGE-1 declines (0.49 at 45% pruning, versus 0.52 at 30%), validating our contribution-based iterative approach.

- How do optimized models generalize across domains? EGPT-2 maintained strong performance (ROUGE-1: 0.50-0.53) across news, blogs, and academic texts, demonstrating robust cross-domain generalization capabilities.
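The choice of the 30% pruning ratio can be reproduced from the EGPT-2 ablation values reported in this paper. The snippet below is a small sketch; the names `ABLATION` and `best_pruning_ratio` and the tie-breaking rule (prefer the larger ratio when ROUGE-1 is equal) are assumptions made for illustration.

```python
# (pruning ratio, ROUGE-1, inference time in s, memory usage in GB)
ABLATION = [
    (0.00, 0.45, 0.89, 4.2),
    (0.15, 0.48, 0.78, 3.7),
    (0.30, 0.52, 0.73, 3.4),
    (0.45, 0.49, 0.70, 3.1),
]


def best_pruning_ratio(rows):
    """Return the ratio with the highest ROUGE-1.

    Ties are broken in favor of the larger ratio, i.e., the smaller model.
    """
    return max(rows, key=lambda row: (row[1], row[0]))[0]


best = best_pruning_ratio(ABLATION)
```

Selecting on ROUGE-1 alone picks 30%; the 45% setting is faster and smaller but scores lower, which is the trade-off the ablation study describes.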

5.3. Theoretical and Practical Implications

5.4. Urdu vs. English Processing Considerations

6. Conclusions and Future Work

- Developing a novel attention head pruning framework specifically tailored for low-resource languages

- Demonstrating that model optimization can simultaneously improve performance and computational efficiency

- Providing a comprehensive evaluation methodology for Urdu abstractive summarization

- Establishing transformer-based models as viable solutions for Urdu NLP tasks

- Extending the pruning methodology to other low-resource languages with similar morphological complexity

- Integrating Urdu-specific morphological embeddings to enhance semantic understanding and capture language-specific features

- Investigating dynamic pruning techniques that adapt to input characteristics and domain requirements

- Deploying and evaluating our models in real-time applications such as streaming news feeds and social media monitoring

- Exploring multi-modal summarization approaches that combine text with other data modalities

- Developing domain adaptation techniques to further improve performance in specialized domains

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Muhammad, M.; Jazeb, N.; Martinez-Enriquez, A.; Sikander, A. EUTS: Extractive Urdu Text Summarizer. In Proceedings of the 2018 17th Mexican International Conference on Artificial Intelligence (MICAI), Guadalajara, Mexico, 22–27 October 2018; pp. 39–44.

- Yu, Z.; et al. Coastal Zone Information Model: A comprehensive architecture for coastal digital twin by integrating data, models, and knowledge. Fundamental Research 2024. [CrossRef]

- Vijay, S.; Rai, V.; Gupta, S.; Vijayvargia, A.; Sharma, D.M. Extractive text summarisation in Hindi. In Proceedings of the 2017 International Conference on Asian Language Processing (IALP), Singapore, 5–8 December 2017; pp. 318–321.

- Rahimi, S.R.; Mozhdehi, A.T.; Abdolahi, M. An overview on extractive text summarization. In Proceedings of the 2017 IEEE 4th International Conference on Knowledge-Based Engineering and Innovation (KBEI), Tehran, Iran, 22 December 2017; pp. 54–62.

- Daud, A.; Khan, W.; Che, D. Urdu language processing: a survey. Artif. Intell. Rev. 2016, 27, 279–311. [CrossRef]

- Ali, A.R.; Ijaz, M. Urdu text classification. In Proceedings of the 6th International Conference on Frontiers of Information Technology, Islamabad, Pakistan, 16–18 December 2009; pp. 1–4.

- Lewis, M.; Liu, Y.; Goyal, N.; Ghazvininejad, M.; Mohamed, A.; Levy, O.; Zettlemoyer, L. BART: Denoising sequence-to-sequence pre-training for natural language generation, translation, and comprehension. arXiv 2019, arXiv:1910.13461. [CrossRef]

- Egomnwan, E.; Chali, Y. Transformer-based model for single documents neural summarization. In Proceedings of the 3rd Workshop on Neural Generation and Translation, Hong Kong, China, 4 November 2019; pp. 70–79.

- Abolghasemi, M.; Dadkhah, C.; Tohidi, N. HTS-DL: Hybrid text summarization system using deep learning. In Proceedings of the 2022 27th International Computer Conference, Computer Society of Iran (CSICC), Tehran, Iran, 23–24 February 2022; pp. 1–6.

- Jiang, J.; Zhang, H.; Dai, C.; Zhao, Q.; Feng, H.; Ji, Z.; Li, Y. Enhancements of attention-based bidirectional LSTM for hybrid automatic text summarization. IEEE Access 2021, 9, 123660–123671. [CrossRef]

- Liu, Y.; Ott, M.; Goyal, N.; Du, J.; Joshi, M.; Chen, D.; Levy, O.; Lewis, M.; Zettlemoyer, L.; Stoyanov, V. RoBERTa: A Robustly Optimized BERT Pretraining Approach. arXiv 2019, arXiv:1907.11692.

- Chowdhury, S.A.; Abdelali, A.; Darwish, K.; Soon-Gyo, J.; Salminen, J.; Jansen, B.J. Improving Arabic Text Categorization Using Transformer Training Diversification. In Proceedings of the 2020 Conference on Empirical Methods in Natural Language Processing (EMNLP), Online, 16–20 November 2020; pp. 226–236.

- Farooq, A.; Batool, S.; Noreen, Z. Comparing different techniques of Urdu text summarization. In Proceedings of the 2021 Mohammad Ali Jinnah University International Conference on Computing (MAJICC), Karachi, Pakistan, 15–16 December 2021; pp. 1–6.

- Asif, M.; Raza, S.A.; Iqbal, J.; Perwatz, N.; Faiz, T.; Khan, S. Bidirectional encoder approach for abstractive text summarization of Urdu language. In Proceedings of the 2022 International Conference on Business Analytics for Technology and Security (ICBATS), Dubai, United Arab Emirates, 16–17 February 2022; pp. 1–6.

- Khyat, J.; Lakshmi, S.S.; Rani, M.U. Hybrid Approach for Multi-Document Text Summarization by N-gram and Deep Learning Models. J. Intell. Syst. 2021, 30, 123–135.

- Mujahid, K.; Bhatti, S.; Memon, M. Classification of URDU headline news using Bidirectional Encoder Representation from Transformer and Traditional Machine learning Algorithm. In Proceedings of the IMTIC 2021 - 6th International Multi-Topic ICT Conference: AI Meets IoT: Towards Next Generation Digital Transformation, Karachi, Pakistan, 24–25 November 2021; pp. 1–6.

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, L.; Polosukhin, I. Attention is All You Need. In Proceedings of the 31st International Conference on Neural Information Processing Systems (NeurIPS), Long Beach, CA, USA, 4–9 December 2017; pp. 6000–6010.

- Lin, C.Y. ROUGE: A Package for Automatic Evaluation of Summaries. In Proceedings of the Text Summarization Branches Out, Barcelona, Spain, 25–26 July 2004; pp. 74–81.

- Wolf, T.; Debut, L.; Sanh, V.; Chaumond, J.; Delangue, C.; Moi, A.; Cistac, P.; Rault, T.; Louf, R.; Funtowicz, M.; et al. Transformers: State-of-the-Art Natural Language Processing. In Proceedings of the 2020 Conference on Empirical Methods in Natural Language Processing: System Demonstrations, Online, 16–20 November 2020; pp. 38–45.

- Michel, P.; Levy, O.; Neubig, G. Are Sixteen Heads Really Better than One? In Proceedings of the Advances in Neural Information Processing Systems 32 (NeurIPS 2019), Vancouver, BC, Canada, 8–14 December 2019; pp. 14014–14024.

- Cheema, A.S.; Azhar, M.; Arif, F.; Sohail, M.; Iqbal, A. EGPT-SPE: Story Point Effort Estimation Using Improved GPT-2 by Removing Inefficient Attention Heads. Appl. Intell. 2025, 55, 1–16. [CrossRef]

- Savelieva, A.; Au-Yeung, B.; Ramani, V. Abstractive Summarization of Spoken and Written Instructions with BERT. arXiv 2020, arXiv:2008.09676.

- Xue, L.; Constant, N.; Roberts, A.; Kale, M.; Al-Rfou, R.; Siddhant, A.; Barua, A.; Raffel, C. mT5: A Massively Multilingual Pre-trained Text-to-Text Transformer. arXiv 2020, arXiv:2010.11934. [CrossRef]

- Munaf, M.; Afzal, H.; Mahmood, K.; Iltaf, N. Low Resource Summarization Using Pre-trained Language Models. ACM Trans. Asian Low-Resour. Lang. Inf. Process. 2024, 23, 1–19. [CrossRef]

- Khalid, U.; Beg, M.O.; Arshad, M.U. RUBERT: A Bilingual Roman Urdu BERT Using Cross Lingual Transfer Learning. arXiv 2021, arXiv:2102.11278.

- Rauf, F.; Irfan, R.; Mushtaq, L.; Ashraf, M. Fake News Detection in Urdu Using Deep Learning. VFAST Trans. Softw. Eng. 2022, 10, 151–165. [CrossRef]

- Azhar, M.; Amjad, A.; Dewi, D.A.; Kasim, S. A Systematic Review and Experimental Evaluation of Classical and Transformer-Based Models for Urdu Abstractive Text Summarization. Information 2025, 16, 784. [CrossRef]

| Model | ROUGE-1 | ROUGE-2 | ROUGE-L | BLEU |
|---|---|---|---|---|
| BART | 0.48 | 0.31 | 0.40 | 0.35 |
| EBART | 0.50 | 0.33 | 0.42 | 0.37 |
| T5 | 0.46 | 0.28 | 0.38 | 0.32 |
| ET5 | 0.49 | 0.30 | 0.41 | 0.34 |
| GPT-2 | 0.45 | 0.27 | 0.36 | 0.30 |
| EGPT-2 | 0.52 | 0.36 | 0.45 | 0.40 |

| Pruning Ratio | ROUGE-1 | Inference Time (s) | Memory Usage (GB) |
|---|---|---|---|
| 0% (Original) | 0.45 | 0.89 | 4.2 |
| 15% | 0.48 | 0.78 | 3.7 |
| 30% | 0.52 | 0.73 | 3.4 |
| 45% | 0.49 | 0.70 | 3.1 |

| Model | Inference Time (s) | Time Reduction | Memory Usage (GB) | Memory Reduction |
|---|---|---|---|---|
| BART | 0.85 | - | 4.0 | - |
| EBART | 0.70 | 18% | 3.3 | 17% |
| T5 | 0.88 | - | 4.1 | - |
| ET5 | 0.74 | 16% | 3.5 | 15% |
| GPT-2 | 0.89 | - | 4.2 | - |
| EGPT-2 | 0.73 | 22% | 3.4 | 19% |

| Domain | ROUGE-1 | ROUGE-2 | ROUGE-L | BLEU |
|---|---|---|---|---|
| News | 0.53 | 0.37 | 0.46 | 0.41 |
| Blogs | 0.51 | 0.35 | 0.44 | 0.39 |
| Academic | 0.50 | 0.34 | 0.43 | 0.38 |
| Average | 0.51 | 0.35 | 0.44 | 0.39 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).