Submitted: 28 October 2025

Posted: 30 October 2025


Abstract

Keywords:

1. Introduction

- A topology-aware multi-agent reinforcement learning framework is proposed, realizing a paradigm shift from "geometric avoidance" to "topological anti-entanglement". Its hierarchical control architecture, built on a topology-isolated experience replay mechanism, overcomes the tendency of traditional methods to ignore movement history and effectively prevents entanglement.

- Real-time quantitative evaluation based on topological invariants is realized, so that entanglement risk can be perceived and measured at the millisecond level. Rigorous convergence and sample complexity analyses provide theoretical guarantees for the stability and efficiency of the proposed algorithm.

- A safety intervention layer is developed that integrates dynamic concurrent control and real-time action suppression to ensure the robustness and generalization of the system in complex scenarios. This design gives the system active risk management capabilities, including early warning and autonomous mitigation of potential threats.

2. Related Work

3. Problem Statement

3.1. Assumptions

- The task precedence relationships are represented by a directed acyclic graph (DAG), and every task can be performed by any available robotic arm;

- The duration of each maintenance process is determined by the minimum operating speed; it is a fixed value that does not depend on the kinematic configuration of the robotic arm;

- A robust control strategy is assumed to exist that mitigates the impact of suddenly appearing dynamic obstacles and prevents sudden failure of the actuators during operation;

- The sensors used for shape reconstruction and positioning are highly reliable, and the communication infrastructure supports real-time computation of the global topological state.
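To make the first two assumptions concrete, the following is a minimal sketch of how DAG precedence and fixed task durations constrain a schedule when any arm may take any task. The durations are the execution times of Tasks 1 to 8 reported in the paper's task table; the precedence edges, the function name `list_schedule`, and the greedy assignment rule are hypothetical and serve only as illustration. They are not the learned policy described in the paper.

```python
import heapq
from graphlib import TopologicalSorter

# Execution times of Tasks 1-8 as reported in the paper's task table.
DURATIONS = {1: 13, 2: 28, 3: 8, 4: 18, 5: 22, 6: 35, 7: 11, 8: 14}

# HYPOTHETICAL precedence DAG (task -> set of prerequisite tasks).
# The paper's actual edges are not available; these are illustrative only.
PREDECESSORS = {3: {1}, 4: {1, 2}, 6: {4}, 7: {3}, 8: {5}}


def list_schedule(durations, predecessors, num_arms):
    """Greedy list scheduling: walk the tasks in a topological order and
    give each one to the arm that becomes free earliest.

    Returns {task: (arm, start, end)}. The result is feasible but not
    necessarily optimal.
    """
    graph = {task: set(predecessors.get(task, ())) for task in durations}
    # static_order() raises graphlib.CycleError if the graph is not a DAG.
    order = list(TopologicalSorter(graph).static_order())

    arms = [(0, arm) for arm in range(num_arms)]  # (time arm is free, arm id)
    heapq.heapify(arms)
    schedule = {}
    for task in order:
        free_at, arm = heapq.heappop(arms)
        # A task may start only after all of its prerequisites have finished.
        ready_at = max((schedule[p][2] for p in graph[task]), default=0)
        start = max(free_at, ready_at)
        end = start + durations[task]  # fixed duration, independent of the arm
        schedule[task] = (arm, start, end)
        heapq.heappush(arms, (end, arm))
    return schedule


if __name__ == "__main__":
    for task, (arm, start, end) in sorted(list_schedule(DURATIONS, PREDECESSORS, 4).items()):
        print(f"Task {task}: arm {arm}, start {start}, end {end}")
```

Because every arm is interchangeable under the first assumption, the sketch only tracks when each arm becomes free. An entanglement-aware scheduler would additionally have to account for the arms' motion history, which this example deliberately ignores.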

3.2. Fundamental Definitions

3.3. Objective Function and Constraints

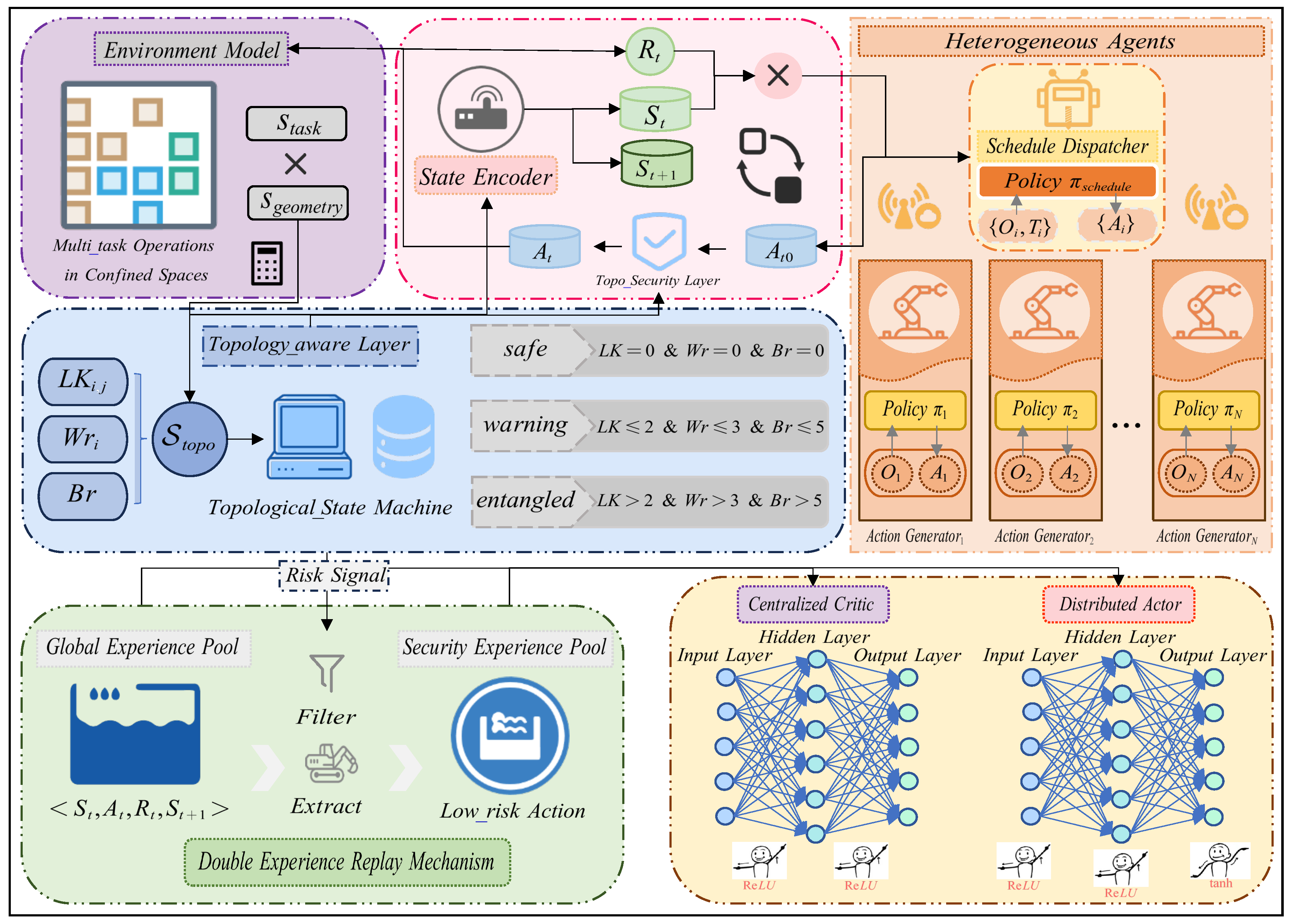

4. Methodology

4.1. Algorithm Framework

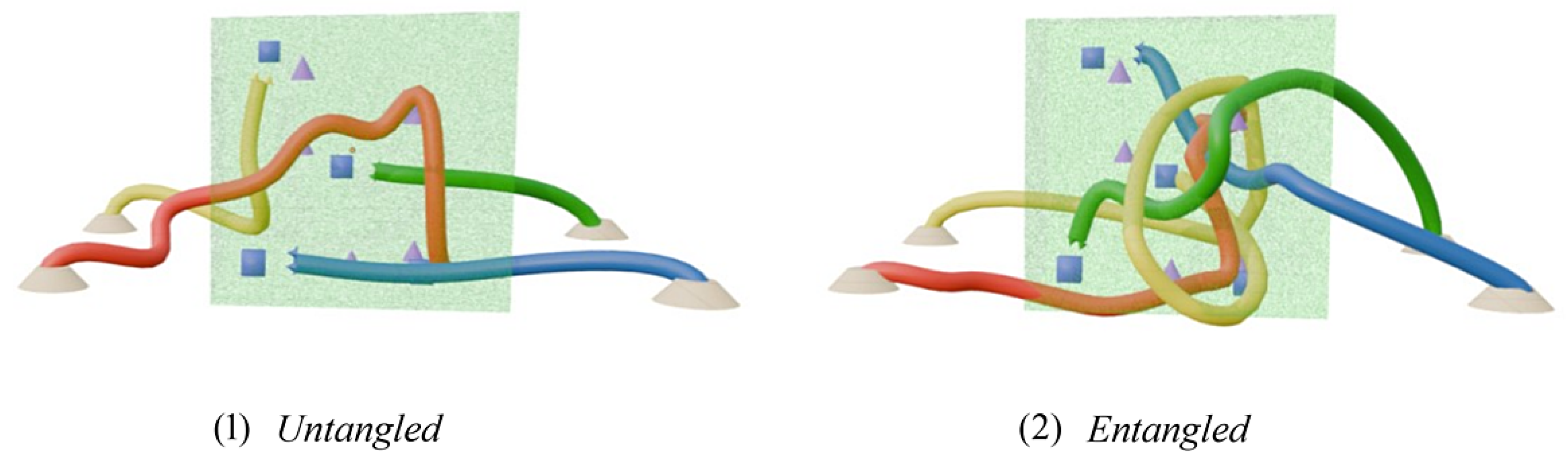

4.2. Topology Awareness and Safety Intervention Mechanisms

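- Simplified Braid Word Length: By projecting the motion of the multi-robot system into a braid group and reducing the resulting word to its minimal standard form, the length of that word directly measures the topological complexity and the degree of intertwining of the system's motion. See Algorithm 1 for the specific calculation process.

- Linking Number (Lk): Quantifies the degree of interlocking between two robotic arms using the Gauss linking integral. Mathematically, it is expressed as:

  $$\mathrm{Lk}(\gamma_1, \gamma_2) = \frac{1}{4\pi} \int_{\gamma_1} \int_{\gamma_2} \frac{(\mathbf{r}_1 - \mathbf{r}_2) \cdot (d\mathbf{r}_1 \times d\mathbf{r}_2)}{|\mathbf{r}_1 - \mathbf{r}_2|^3}$$

  where $\mathbf{r}_1$ and $\mathbf{r}_2$ are points on the centerlines $\gamma_1$ and $\gamma_2$ of the two arms. This invariant remains strictly unchanged under ambient isotopies, providing a reliable theoretical basis for entanglement detection.

- Self-Entanglement Degree (Wr): Evaluates the propensity of a single robotic arm to self-entangle by computing the writhe (self-winding) integral along its centerline. Mathematically, it is expressed as:

  $$\mathrm{Wr}(\gamma) = \frac{1}{4\pi} \int_{\gamma} \int_{\gamma} \frac{(\mathbf{r}(s) - \mathbf{r}(t)) \cdot (d\mathbf{r}(s) \times d\mathbf{r}(t))}{|\mathbf{r}(s) - \mathbf{r}(t)|^3}$$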
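The linking number and the self-entanglement degree described above are both Gauss-type double integrals over arm centerlines, so they can be approximated numerically once each centerline is sampled as a polyline. The sketch below uses a simple midpoint rule and assumes closed curves, for which the linking number is an integer. Robotic arms are open curves, so in practice some closure convention would be needed, and the paper's choice is not known here. All function names are hypothetical.

```python
import math


def _segments(points):
    """Return (midpoint, tangent) pairs for the closed polygon through `points`."""
    segs = []
    n = len(points)
    for k in range(n):
        a, b = points[k], points[(k + 1) % n]
        mid = tuple((a[i] + b[i]) / 2 for i in range(3))
        tan = tuple(b[i] - a[i] for i in range(3))
        segs.append((mid, tan))
    return segs


def _gauss_term(m1, t1, m2, t2):
    """Midpoint-rule value of (r1 - r2) . (dr1 x dr2) / |r1 - r2|^3."""
    r = (m1[0] - m2[0], m1[1] - m2[1], m1[2] - m2[2])
    cross = (t1[1] * t2[2] - t1[2] * t2[1],
             t1[2] * t2[0] - t1[0] * t2[2],
             t1[0] * t2[1] - t1[1] * t2[0])
    dist = math.sqrt(r[0] ** 2 + r[1] ** 2 + r[2] ** 2)
    return (r[0] * cross[0] + r[1] * cross[1] + r[2] * cross[2]) / dist ** 3


def linking_number(curve_a, curve_b):
    """Approximate Gauss linking integral of two disjoint closed polylines."""
    segs_a, segs_b = _segments(curve_a), _segments(curve_b)
    total = sum(_gauss_term(ma, ta, mb, tb)
                for ma, ta in segs_a for mb, tb in segs_b)
    return total / (4 * math.pi)


def writhe(curve):
    """Approximate writhe of one closed polyline (self-pairs are skipped)."""
    segs = _segments(curve)
    total = 0.0
    for i, (mi, ti) in enumerate(segs):
        for j, (mj, tj) in enumerate(segs):
            if i != j:
                total += _gauss_term(mi, ti, mj, tj)
    return total / (4 * math.pi)


def circle_xy(n, cx=0.0):
    """Unit circle in the xy-plane centered at (cx, 0, 0), sampled at n points."""
    return [(cx + math.cos(2 * math.pi * k / n), math.sin(2 * math.pi * k / n), 0.0)
            for k in range(n)]


def circle_xz(n, cx=0.0):
    """Unit circle in the xz-plane centered at (cx, 0, 0), sampled at n points."""
    return [(cx + math.cos(2 * math.pi * k / n), 0.0, math.sin(2 * math.pi * k / n))
            for k in range(n)]


if __name__ == "__main__":
    # Two interlocked circles (a Hopf link) versus two well-separated circles.
    print(linking_number(circle_xy(120), circle_xz(120, cx=1.0)))
    print(linking_number(circle_xy(120), circle_xz(120, cx=5.0)))
    print(writhe(circle_xy(60)))
```

For the interlocked pair the result should be close to ±1, with the sign set by the orientation of the curves. For the separated pair it should be close to 0, and a planar circle has zero writhe. The double loop costs O(N²) per pair of curves, so a millisecond-level evaluation would need coarse sampling or a vectorized implementation.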

4.3. Theoretical Framework of Dual Experience Replay and Topological Value Learning

4.4. Hierarchical Coordination Architecture of Heterogeneous Intelligent Agents

4.4.1. Hierarchical Design and Cooperative Optimization Mechanism

4.4.2. Dynamic Concurrent Control Mechanism

5. Experimentation

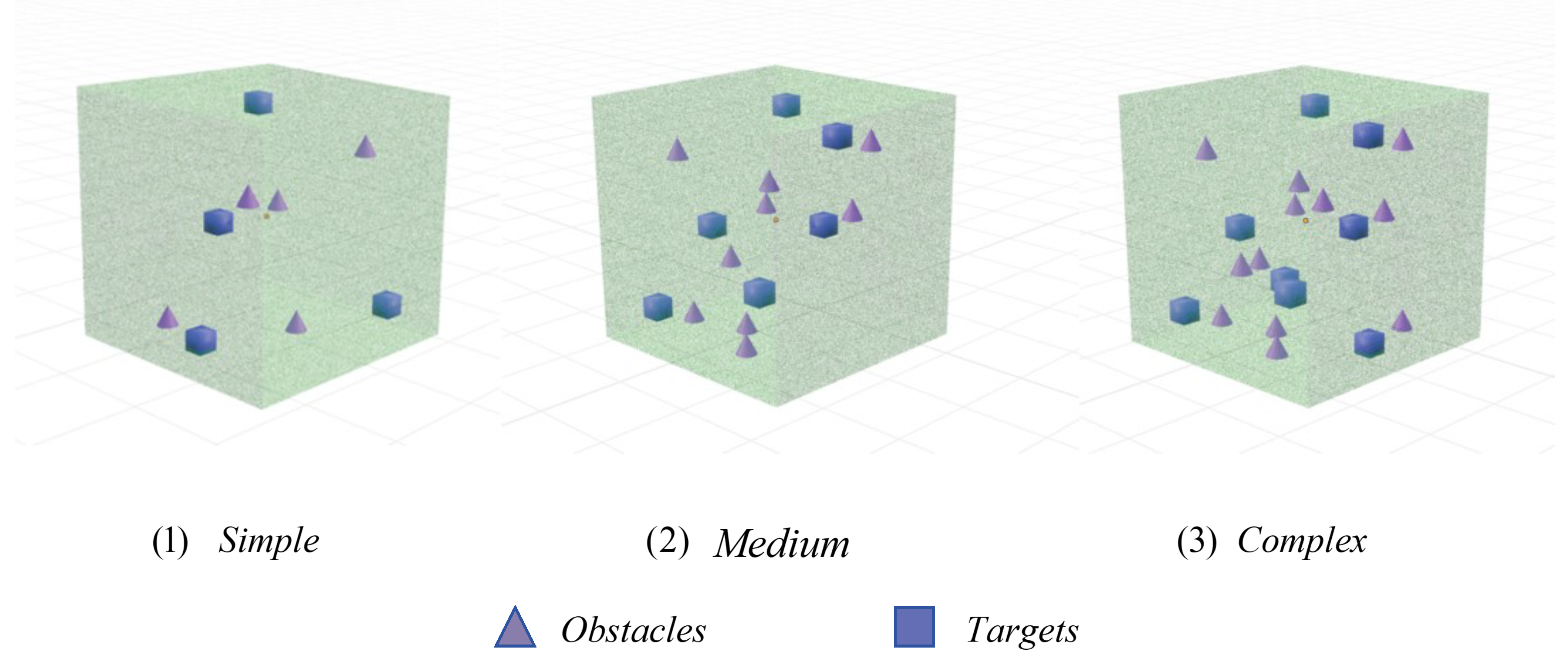

5.1. Simulation Environment Setup

5.2. Experimental Setup

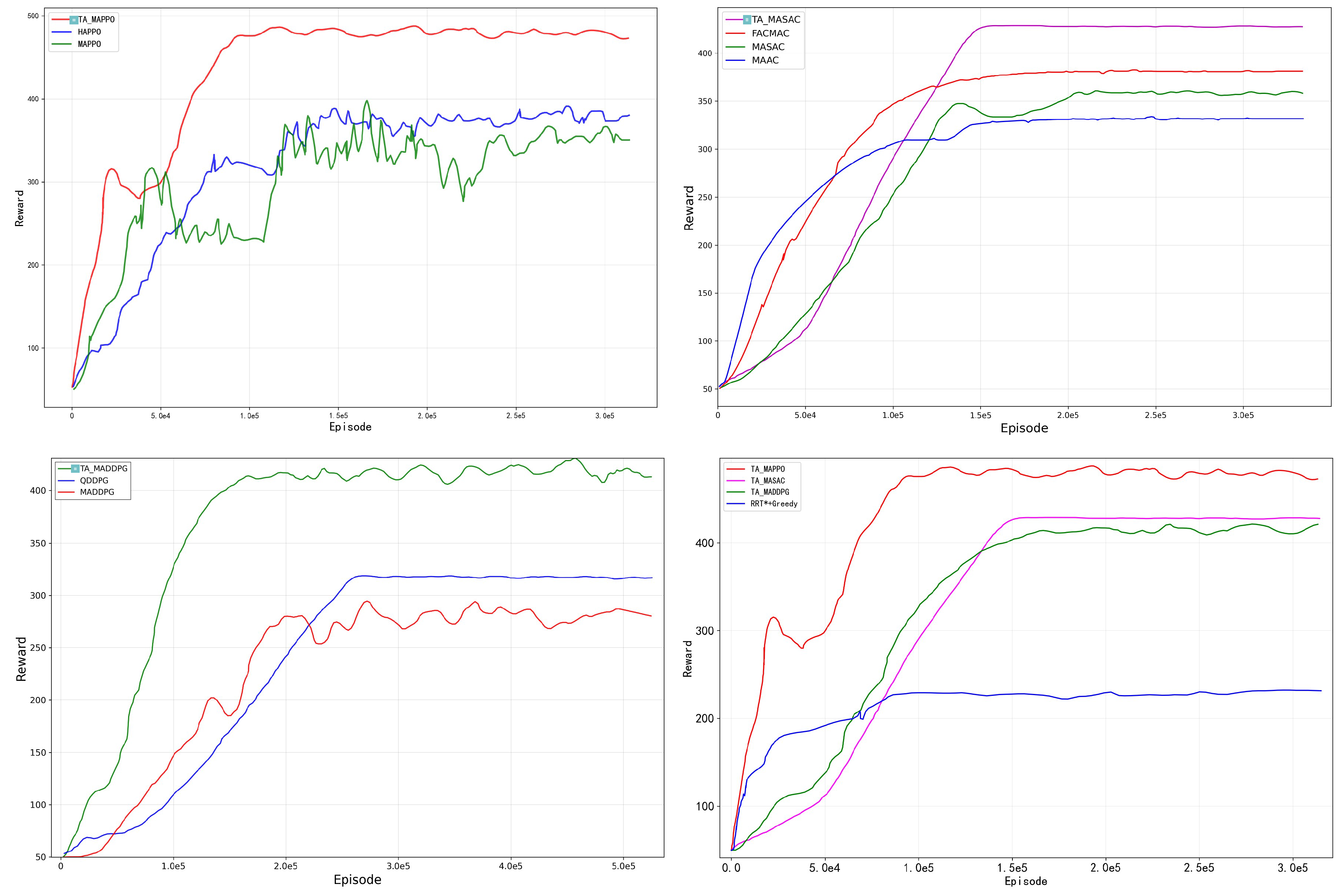

5.3. Performance Comparative Analysis

5.4. Ablation Experiment Analysis

5.5. Analysis of Algorithm Efficiency and Stability

6. Conclusion

Appendix A. Topological Invariance of the Linking Number Under Ambient Isotopy

Appendix B. Convergence of the Braid Word Simplification Algorithm

Appendix B.1. Preliminaries on Braid Groups

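- $\sigma_i \sigma_j = \sigma_j \sigma_i$ for $|i - j| \geq 2$ (far commutativity)

- $\sigma_i \sigma_{i+1} \sigma_i = \sigma_{i+1} \sigma_i \sigma_{i+1}$ for $1 \leq i \leq n - 2$ (braid relation)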

Appendix B.2. Simplification Rules

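- Cancellation: $\sigma_i \sigma_i^{-1} \to \varepsilon$ and $\sigma_i^{-1} \sigma_i \to \varepsilon$

- Distant Commutation: $\sigma_i \sigma_j = \sigma_j \sigma_i$ for $|i - j| \geq 2$

- Braid Relation: $\sigma_i \sigma_{i+1} \sigma_i = \sigma_{i+1} \sigma_i \sigma_{i+1}$

- No cancelable pairs ($\sigma_i \sigma_i^{-1}$ or $\sigma_i^{-1} \sigma_i$)

- Canonical generator ordering

- Consistent application of braid relations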
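The cancellation and distant-commutation rules can be illustrated with a short rewriting routine. This is a minimal sketch under stated assumptions, not the paper's Algorithm 1. A braid word is encoded as a list of nonzero integers (`k` for the generator with index k, `-k` for its inverse), the canonical ordering is taken to be ascending generator index, and the braid relation is not applied, so the output is not guaranteed to be a shortest representative. The function name `simplify_braid_word` is hypothetical.

```python
def simplify_braid_word(word):
    """Reduce a braid word using cancellation and distant commutation.

    Encoding (an assumption of this sketch): integer k > 0 is sigma_k and
    -k is its inverse. The braid relation is NOT applied.
    """
    word = list(word)
    if any(g == 0 for g in word):
        raise ValueError("generators must be nonzero integers")

    changed = True
    while changed:
        changed = False

        # Cancellation: remove adjacent pairs g, g^{-1} using a stack.
        stack = []
        for g in word:
            if stack and stack[-1] == -g:
                stack.pop()
                changed = True
            else:
                stack.append(g)
        word = stack

        # Distant commutation: generators whose indices differ by at least 2
        # commute, so move the smaller index to the left (canonical ordering).
        for k in range(len(word) - 1):
            a, b = word[k], word[k + 1]
            if abs(a) - abs(b) >= 2:
                word[k], word[k + 1] = b, a
                changed = True
    return word


if __name__ == "__main__":
    # sigma_1 sigma_3 sigma_1^{-1}: sigma_3 commutes past sigma_1^{-1},
    # after which sigma_1 sigma_1^{-1} cancels.
    print(simplify_braid_word([1, 3, -1]))
    # sigma_1 sigma_2 sigma_1 contains no cancelable or commuting pair.
    print(simplify_braid_word([1, 2, 1]))
```

The loop terminates because every cancellation shortens the word and every swap removes one out-of-order pair, so the pair (length, number of inversions) decreases strictly at each change.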

Appendix C. Topological Invariance of the Writhe Under Ambient Isotopy

Appendix D. Verification of Closed-Loop System Stability

Appendix D.1. Introduction

Appendix D.2. Assumptions

Appendix D.3. Stability Analysis

- and for

- as

- for

Appendix D.4. Lyapunov Function Construction for MARL

Appendix E. Sample Complexity Analysis of TA-MARL

Appendix E.1. Theoretical Framework


| Task ID | Execution Time (time steps) | Complexity | Process Durations (time steps) |
|---|---|---|---|
| Task 1 | 13 | Simple | [6, 6, 1] |
| Task 2 | 28 | Complex | [3, 2, 4, 13, 6] |
| Task 3 | 8 | Simple | [5, 3] |
| Task 4 | 18 | Medium | [2, 7, 5, 4] |
| Task 5 | 22 | Medium | [1, 10, 8, 3] |
| Task 6 | 35 | Complex | [6, 5, 6, 6, 7, 5] |
| Task 7 | 11 | Simple | [2, 5, 4] |
| Task 8 | 14 | Simple | [7, 3, 4] |

| Task ID | Time Steps | ||||||||||||||||||||||||||||||||||

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 | 11 | 12 | 13 | 14 | 15 | 16 | 17 | 18 | 19 | 20 | 21 | 22 | 23 | 24 | 25 | 26 | 27 | 28 | 29 | 30 | 31 | 32 | 33 | 34 | 35 | |

| Task 1 | P1 | P2 | P2 | P2 | P2 | P2 | P2 | P3 | |||||||||||||||||||||||||||

| Task 2 | P1 | P1 | P1 | P2 | P2 | P3 | P3 | P3 | P3 | P4 | P4 | P4 | P4 | P4 | P4 | P4 | P4 | P4 | P5 | ||||||||||||||||

| Task 3 | P1 | P1 | P1 | P1 | P1 | P2 | P2 | P2 | |||||||||||||||||||||||||||

| Task 4 | P1 | P1 | P2 | P2 | P2 | P2 | P2 | P2 | P2 | P3 | P3 | P3 | P3 | P3 | P4 | P4 | P4 | P4 | |||||||||||||||||

| Task 5 | P1 | P2 | P2 | P2 | P2 | P2 | P2 | P2 | P2 | P2 | P2 | P3 | P3 | P3 | P3 | P3 | P3 | P3 | P3 | P4 | P4 | P4 | |||||||||||||

| Task 6 | P1 | P1 | P1 | P1 | P1 | P1 | P2 | P2 | P2 | P2 | P2 | P3 | P3 | P3 | P3 | P3 | P3 | P4 | P4 | P4 | P4 | P4 | P4 | P5 | P5 | P5 | P5 | P5 | |||||||

| Task 7 | P1 | P1 | P2 | P2 | P2 | P2 | P2 | P3 | P3 | P3 | P3 | ||||||||||||||||||||||||

| Task 8 | P1 | P1 | P1 | P1 | P1 | P1 | P1 | P2 | P2 | P2 | P3 | P3 | P3 | P3 | |||||||||||||||||||||

Note: A vacant interval denotes the mandated waiting period. The workflow of each task is sequential: a process must be completed before the next one starts. Operations in the same column can execute concurrently.

| Symbol | Parameter | Value |
|---|---|---|
| | Policy network learning rate | 1e-4 |
| | Value network learning rate | 1e-4 |
| | Reward discount factor | |
| | Advantage estimation parameter | |
| | Clipping parameter | |
| B | Training batch size | 128 |
| | Topology loss weight | |
| | Mixing coefficient | |
| | Penalty coefficient 1 | |
| | Penalty coefficient 2 | |
| | Entanglement coefficient | |

| Algorithm | Ent. (%) | Saf. Int. (%) | Succ. (%) | Idle (%) |
|---|---|---|---|---|
| Simple Scenario (4 arms, 4 targets) | | | | |
| TA-MAPPO | 0.1±0.02 | 0.8±0.3 | 99.95±0.1 | 1.8±0.3 |
| HAPPO | 1.4±0.25 | 11.9±1.8 | 96.8±0.9 | 6.2±0.8 |
| MAPPO | 1.8±0.28 | 13.2±2.0 | 95.4±1.1 | 7.8±1.0 |
| TA-MASAC | 0.2±0.05 | 1.9±0.6 | 99.7±0.2 | 2.8±0.4 |
| FACMAC | 1.2±0.22 | 10.8±1.6 | 97.1±0.8 | 5.8±0.7 |
| MASAC | 1.6±0.25 | 12.4±1.8 | 95.8±1.0 | 7.2±0.9 |
| MAAC | 1.7±0.28 | 13.1±1.9 | 95.5±1.0 | 7.5±1.0 |
| TA-MADDPG | 0.3±0.08 | 3.2±0.8 | 99.35±0.3 | 3.2±0.5 |
| MADDPG | 2.0±0.30 | 14.8±2.1 | 95.4±1.1 | 8.5±1.1 |
| QDDPG | 2.8±0.42 | 18.5±2.8 | 92.8±1.5 | 11.2±1.6 |
| RRT*+Greedy | 3.0±0.78 | 27.0±3.5 | 90.0±2.1 | 3.1±0.4 |
| Medium Scenario (6 arms, 6 targets) | | | | |
| TA-MAPPO | 0.2±0.05 | 3.2±0.8 | 98.9±0.3 | 2.8±0.4 |
| HAPPO | 2.2±0.35 | 16.4±2.1 | 92.3±1.2 | 10.1±1.3 |
| MAPPO | 3.2±0.42 | 20.7±2.8 | 88.8±1.5 | 13.1±1.8 |
| TA-MASAC | 0.5±0.12 | 6.3±1.2 | 97.4±0.5 | 5.1±0.7 |
| FACMAC | 1.8±0.28 | 14.6±2.3 | 93.2±1.8 | 9.2±1.1 |
| MASAC | 2.3±0.38 | 17.9±2.6 | 91.3±1.6 | 10.8±1.4 |
| MAAC | 2.4±0.41 | 19.3±2.9 | 90.3±1.7 | 11.5±1.5 |
| TA-MADDPG | 1.0±0.18 | 8.8±1.5 | 96.4±0.7 | 6.3±0.9 |
| MADDPG | 3.3±0.45 | 21.5±3.1 | 89.0±1.4 | 12.4±1.6 |
| QDDPG | 3.8±0.62 | 23.2±3.8 | 87.3±2.0 | 14.7±2.1 |
| RRT*+Greedy | 5.6±0.78 | 27.9±3.5 | 82.2±2.1 | 4.9±0.8 |
| Complex Scenario (10 arms, 8 targets) | | | | |
| TA-MAPPO | 0.7±0.15 | 7.5±1.5 | 96.8±0.8 | 5.2±0.8 |
| HAPPO | 4.2±0.65 | 19.8±3.4 | 88.1±2.0 | 13.8±2.1 |
| MAPPO | 5.1±0.75 | 24.8±4.2 | 84.6±2.5 | 16.8±2.6 |
| TA-MASAC | 2.0±0.35 | 11.8±2.2 | 94.5±1.1 | 8.2±1.2 |
| FACMAC | 3.8±0.58 | 18.2±3.1 | 89.5±1.8 | 12.5±1.8 |
| MASAC | 4.5±0.68 | 21.2±3.6 | 87.2±2.1 | 14.5±2.2 |
| MAAC | 4.8±0.72 | 22.8±3.8 | 86.1±2.3 | 15.2±2.4 |
| TA-MADDPG | 2.3±0.42 | 14.4±2.8 | 92.8±1.4 | 9.8±1.5 |
| MADDPG | 5.7±0.82 | 31.1±4.2 | 82.3±2.1 | 18.7±2.4 |
| QDDPG | 6.2±0.89 | 33.5±4.6 | 81.1±2.3 | 19.8±2.6 |
| RRT*+Greedy | 10.4±1.03 | 37.8±5.4 | 80.3±2.8 | 5.3±0.6 |

Note: The table metrics are entanglement rate (Ent.), safety intervention rate (Saf. Int.), task success rate (Succ.), and robotic arm idle rate (Idle). The Ent. values for the complex scenario coincide with the Entanglement Rate column of the ablation table.

| Model | Dual Experience Pool | Action Safety Avoidance | Heterogeneous Control | Entanglement Rate (%) | Completion Rate (%) | Reward |
|---|---|---|---|---|---|---|
| Complete TA-Models (Full Components) | | | | | | |
| TA-MAPPO | ✓ | ✓ | ✓ | 0.7 | 96.8 | 478.3 |
| TA-MASAC | ✓ | ✓ | ✓ | 2.0 | 94.5 | 427.6 |
| TA-MADDPG | ✓ | ✓ | ✓ | 2.3 | 92.8 | 417.8 |
| TA-MAPPO Component Ablation | | | | | | |
| Topology-Aware | ✓ | ✓ | ✓ | 2.8 | 89.5 | 412.3 |
| Dual Experience Pool | × | ✓ | ✓ | 1.5 | 93.1 | 445.6 |
| Safety Replacement | ✓ | × | ✓ | 3.2 | 86.4 | 398.7 |
| Hierarchical Control | ✓ | ✓ | × | 1.9 | 91.2 | 431.2 |
| Base MAPPO | × | × | × | 5.1 | 84.6 | 372.1 |
| TA-MASAC Component Ablation | | | | | | |
| Topology-Aware | ✓ | ✓ | ✓ | 3.8 | 87.2 | 385.9 |
| Dual Experience Pool | × | ✓ | ✓ | 2.8 | 90.8 | 418.3 |
| Safety Replacement | ✓ | × | ✓ | 4.2 | 83.7 | 368.5 |
| Hierarchical Control | ✓ | ✓ | × | 3.1 | 88.9 | 402.7 |
| Base MASAC | × | × | × | 4.5 | 87.2 | 385.9 |
| TA-MADDPG Component Ablation | | | | | | |
| Topology-Aware | ✓ | ✓ | ✓ | 4.1 | 85.6 | 362.4 |
| Dual Experience Pool | × | ✓ | ✓ | 3.2 | 89.1 | 395.2 |
| Safety Replacement | ✓ | × | ✓ | 4.8 | 81.9 | 345.8 |
| Hierarchical Control | ✓ | ✓ | × | 3.5 | 87.4 | 378.6 |
| Base MADDPG | × | × | × | 5.7 | 82.3 | 348.2 |

| Algorithm | Convergence Episodes | Convergence Stability | Sample Efficiency |
|---|---|---|---|
| TA-MAPPO | 1.08e5 ± 1.8e4 | 0.94 ± 0.03 | 2.28 ± 0.18 |
| HAPPO | 1.62e5 ± 1.7e4 | 0.81 ± 0.07 | 1.24 ± 0.15 |
| MAPPO | 2.70e5 ± 2.4e4 | 0.77 ± 0.08 | 1.08 ± 0.12 |
| TA-MASAC | 1.57e5 ± 1.3e4 | 0.91 ± 0.04 | 1.95 ± 0.21 |
| MAAC | 1.76e5 ± 1.9e4 | 0.84 ± 0.06 | 1.52 ± 0.18 |
| MASAC | 2.17e5 ± 2.2e4 | 0.79 ± 0.08 | 1.28 ± 0.14 |
| FACMAC | 1.81e5 ± 1.8e4 | 0.76 ± 0.09 | 1.21 ± 0.13 |
| TA-MADDPG | 2.00e5 ± 2.3e4 | 0.88 ± 0.05 | 1.73 ± 0.19 |
| MADDPG | 2.62e5 ± 1.9e4 | 0.72 ± 0.09 | 0.95 ± 0.11 |
| QDDPG | 3.18e5 ± 3.4e4 | 0.68 ± 0.11 | 0.84 ± 0.09 |
| RRT*+Greedy | 8.50e4 ± 1.4e4 | 0.71 ± 0.18 | - |

| Algorithm | Parameter Sensitivity | Robustness Score |
|---|---|---|
| TA-MAPPO | Low (0.12) | 0.92 ± 0.04 |
| HAPPO | Medium (0.34) | 0.76 ± 0.08 |
| MAPPO | High (0.52) | 0.71 ± 0.09 |
| TA-MASAC | Low (0.18) | 0.89 ± 0.05 |
| FACMAC | Medium (0.41) | 0.79 ± 0.07 |
| MASAC | High (0.48) | 0.74 ± 0.09 |
| MAAC | High (0.51) | 0.72 ± 0.10 |
| TA-MADDPG | Low (0.22) | 0.86 ± 0.06 |
| MADDPG | High (0.58) | 0.67 ± 0.11 |
| QDDPG | Very High (0.67) | 0.63 ± 0.12 |
| RRT*+Greedy | Medium (0.38) | 0.71 ± 0.08 |

Note: Parameter sensitivity is classified as Low, Medium, High, or Very High based on specified numerical thresholds.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).