7. Monte Carlo Experiment and Numerical Examples

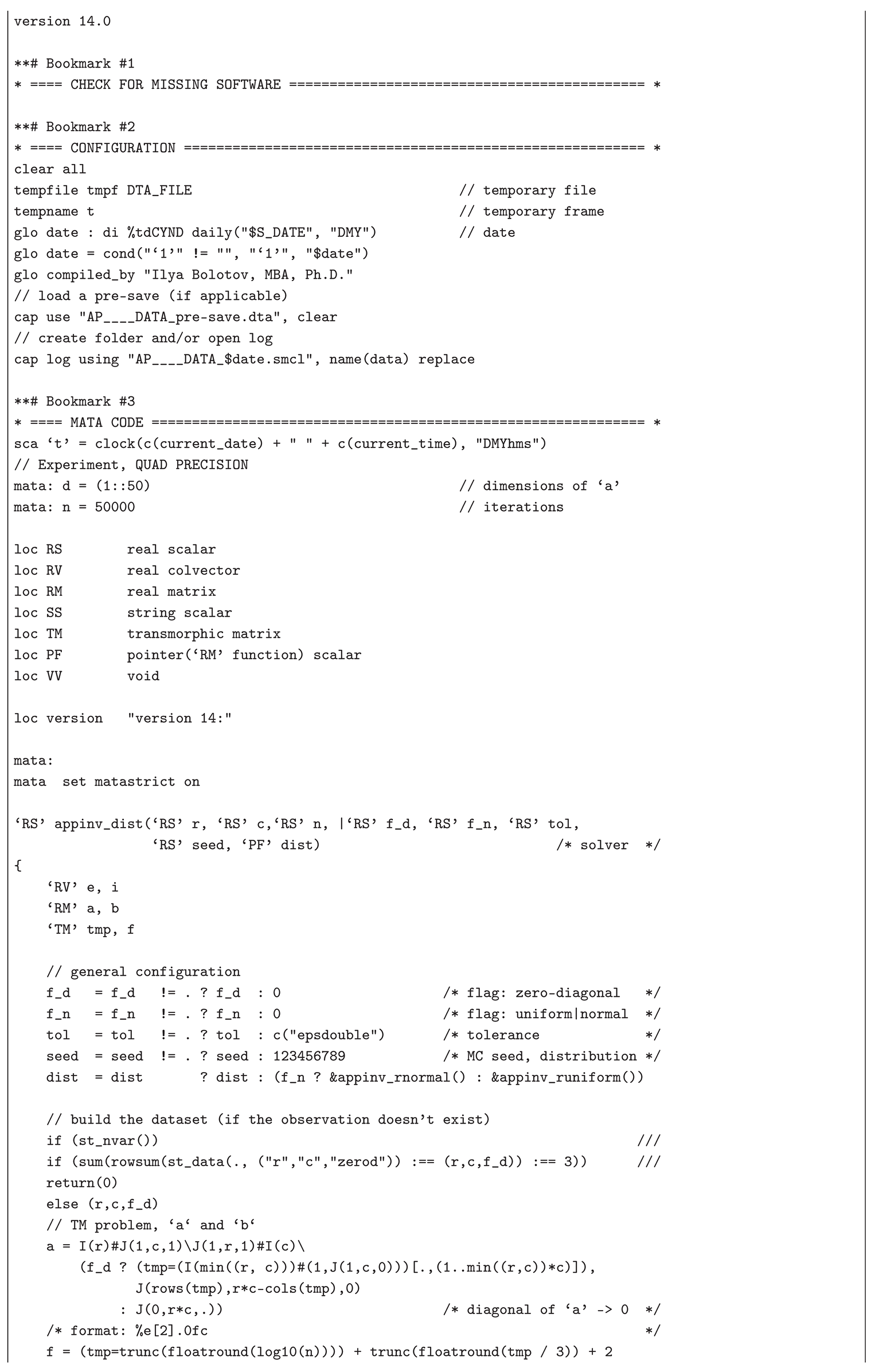

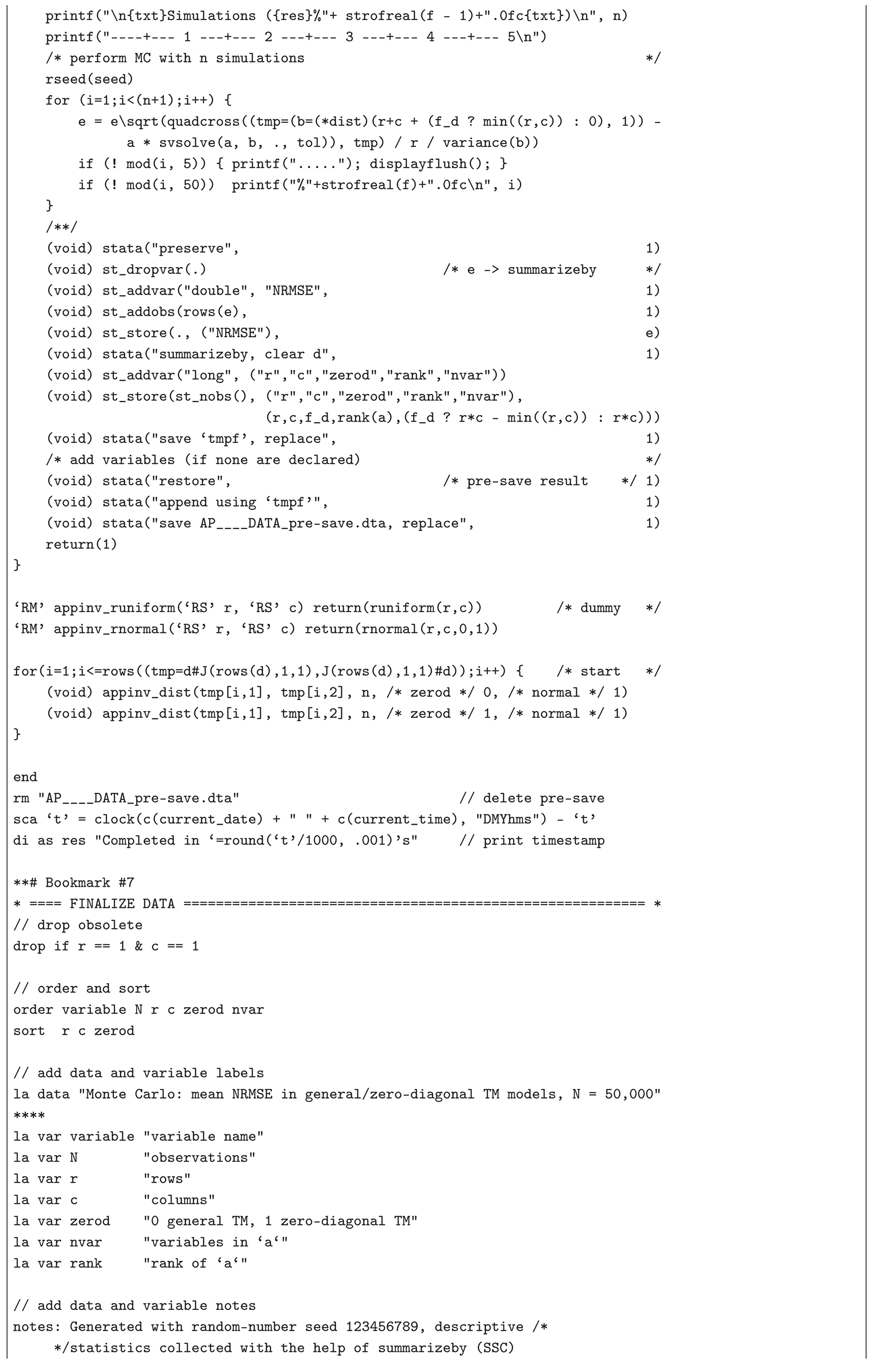

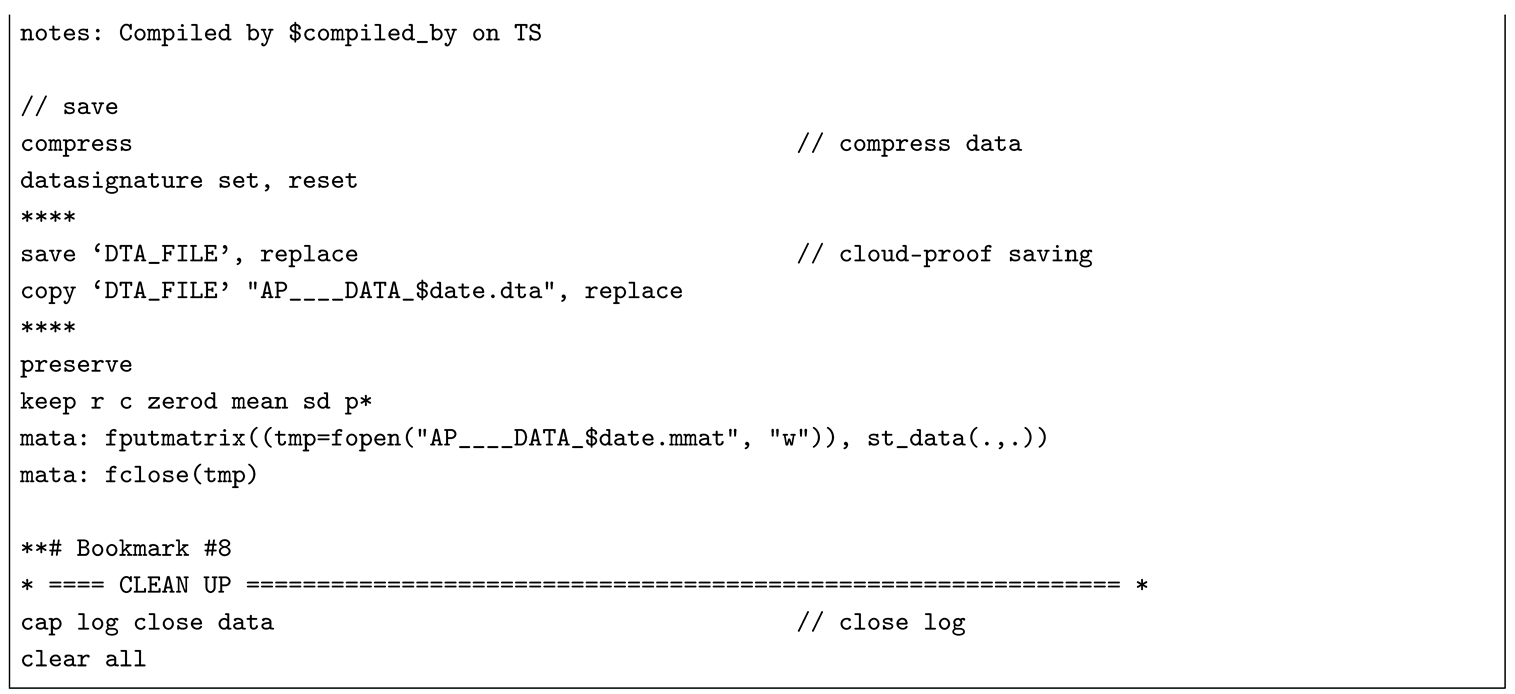

The Monte Carlo experiment and the simulated examples presented below illustrate the applicability of the CLSP to the AP (TM), CMLS (RP), and LPRLS/QPRLS problems. The standardized form of

in the APs (TMs), i.e.,

, with given

and

r, enables

large-scale simulation of the distribution of NRMSE — a robust goodness-of-fit metric for the CLSP — through

repeated random trials under varying matrix dimensions

, row and column group sizes

i and

j, and composition of

, inferring asymptotic percentile means and standard deviations for practical implementations (e.g., the

t-test for the mean). This text presents the results of a simulation study on the distribution of the NRMSE from the first-step estimate

(implementable in standard econometric software without CLSP modules) for

,

,

,

, and

, conducted in Stata/MP (set to version 14.0) with the help of Mata’s 128-bit floating-point cross-product-based

quadcross() for greater precision and SVD-based

svsolve() with a strict tolerance of

c("epsdouble") for increased numerical stability, and a random-variate seed

123456789 (see

Appendix B.1 containing a list of dependencies [

97] and the Stata .do-file). In this 50,000-iterations Monte Carlo simulation study, spanning matrix dimensions from 1×2 and 2×1 up to 50×50, random normal input vectors

were generated for each run, applied with and without zero diagonals (i.e., if, under

,

is reshaped into a (

)-matrix

, then

). Thus, a total of 249.9 million observations (

) was obtained via the formula

, resulting in 4,998 aggregated statistics of the asymptotic distribution, assuming convergence:

mean,

sd,

skewness,

kurtosis,

min,

max, as well as

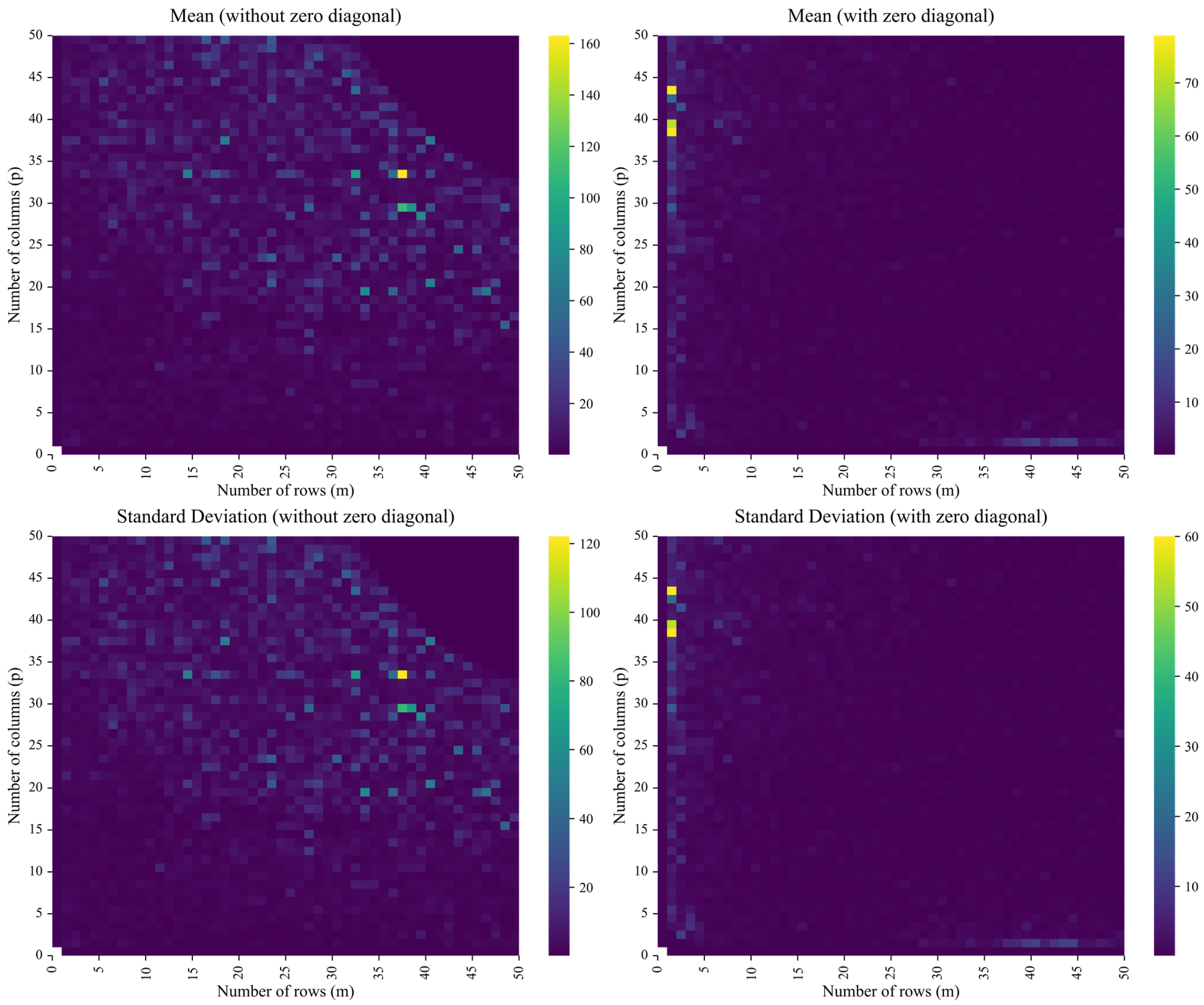

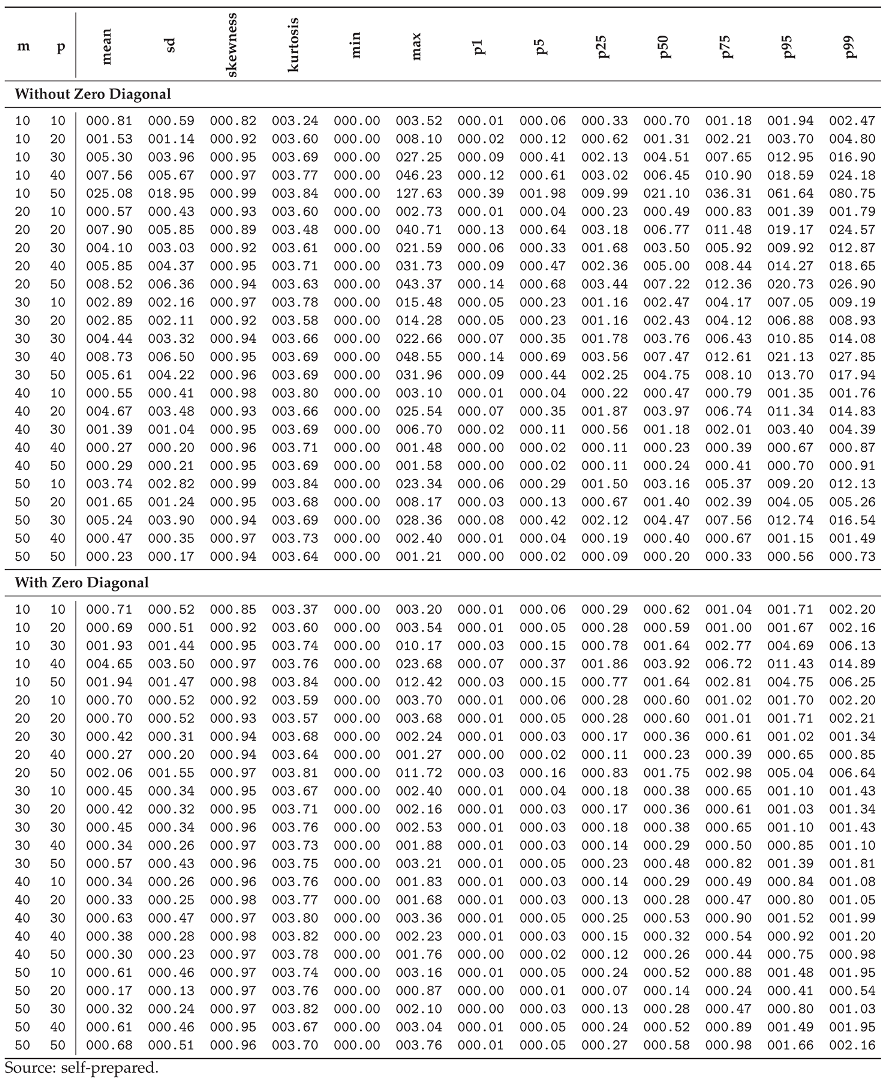

p1–p99, as presented in

Figure 6 (depicting the intensity of its first two moments,

mean and

sd) and

Table 2 (reporting a subset of all thirteen statistics for

).

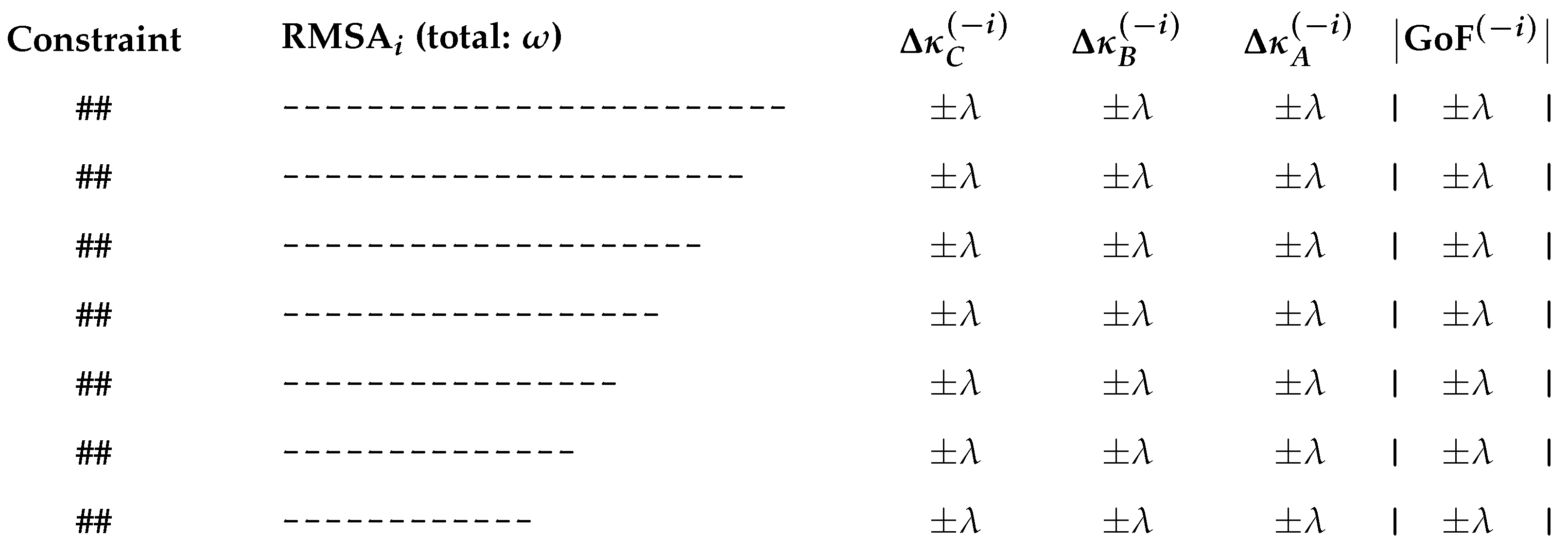

From the results of the Monte Carlo experiment, it is observable that: (1) the NRMSE from the first-step estimate and its distributional statistics exhibit increasing stability and boundedness as matrix dimensions increase — specifically, for , both the mean and sd of NRMSE tend to converge, indicating improved estimator performance (e.g., under no diagonal restrictions, at , and , while at , and ); (2) the zero-diagonal constraints () reduce the degrees of freedom and lead to a much more uniform and dimensionally stable distribution of NRMSE across different matrix sizes (e.g., at , mean drops to and sd to , while at , mean rises slightly to and sd to ); and (3) the distribution of NRMSE exhibits mild right skew and leptokurtosis — less so for the zero-diagonal case: under no diagonal restriction, the average skewness is with a coefficient of variation of (i.e., ), and the average kurtosis is with a variation of ; whereas under the zero-diagonal constraint, skewness averages with only variation, and kurtosis averages with variation (i.e., ). To sum up, the larger and less underdetermined (i.e., in an AP (TM) problem, the larger the block of ) the canonical system , the lower the estimation error (i.e., the mean NRMSE) and its variance, and, provided a stable limiting law for NRMSE exists, the skewness and kurtosis from the simulations drift toward 0 and 3, respectively, consistent with — but not proving — a convergence toward a standard-normal distribution.

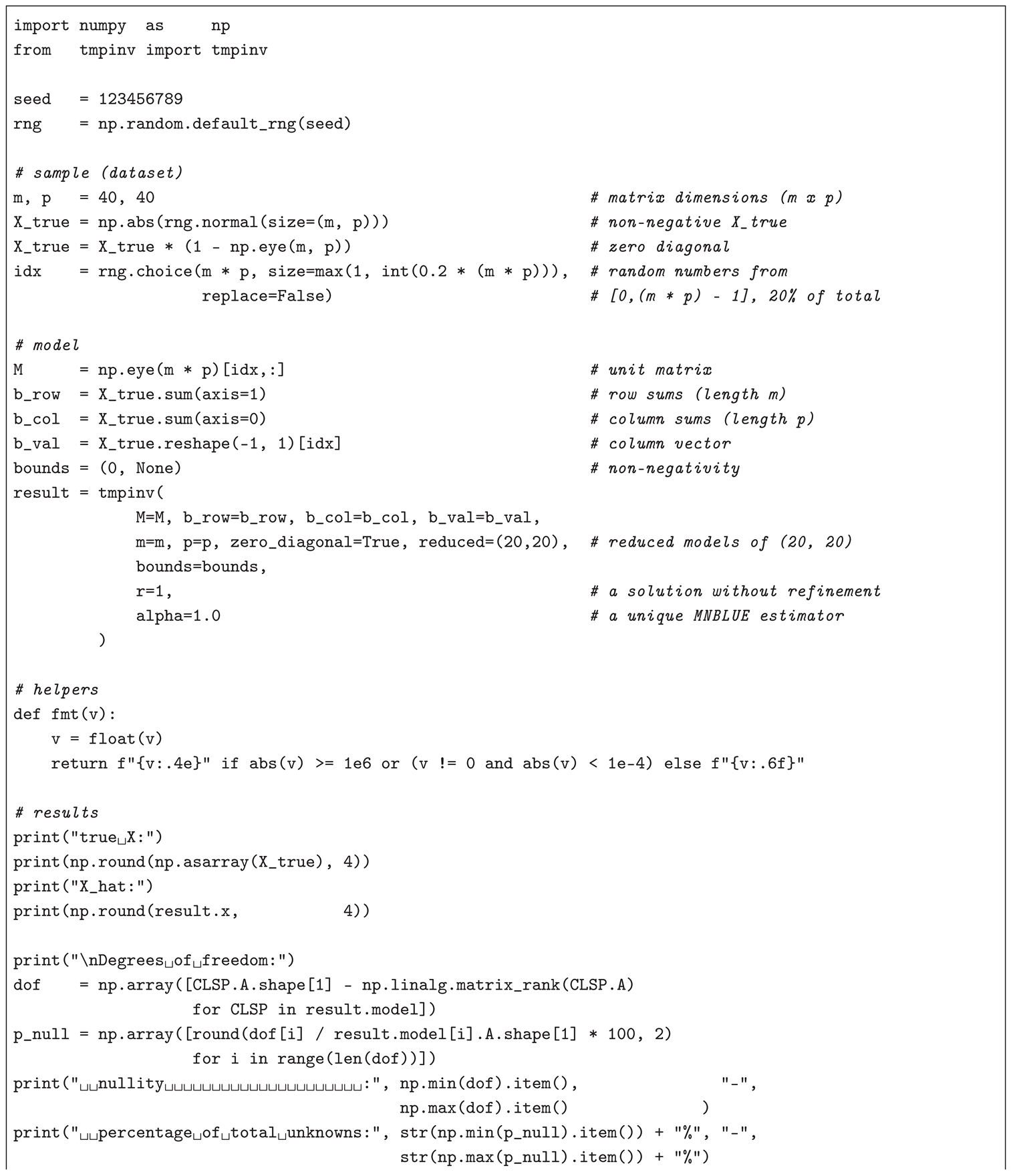

For an illustration of the applicability of the CLSP to the APs (TMs), CMLS (RPs), and LPRLS/QPRLS, the author-developed cross-platform Python 3 module,

pyclsp (version ≥ 1.3.0, available on PyPI) [

98], — together with its interface co-modules for the APs (TMs),

pytmpinv (version ≥ 1.2.0, available on PyPI) [

99], and the LPRLS/QPRLS,

pylppinv (version ≥ 1.3.0, available on PyPI) [

100] (a co-module for the CMLS (RPs) is not provided, as the variability of

within the

,

, block of

prevents efficient generalization), — is employed for a sample (dataset) of

i random elements

(scalars, vectors, or matrices depending on context) and their transformations, drawn independently from the standard normal distribution, i.e.,

, under the mentioned random-variate seed

123456789 (configured for consistency with the above-described Monte Carlo experiment). Thus, using the cases of (a) a (non-negative symmetric) input-output table and (b) a (non-negative) zero-diagonal trade matrix as two

AP (TM) numerical examples, this text simulates problems similar to the ones addressed in Pereira-López et al. [

95] and Bolotov [

16]: an underdetermined

is estimated — from row sums, column sums, and

k known values of two randomly generated matrices (a)

and (b)

,

, subject to (a)

and (b)

— via CLSP assuming

,

,

, and

. The Python 3 code, based on

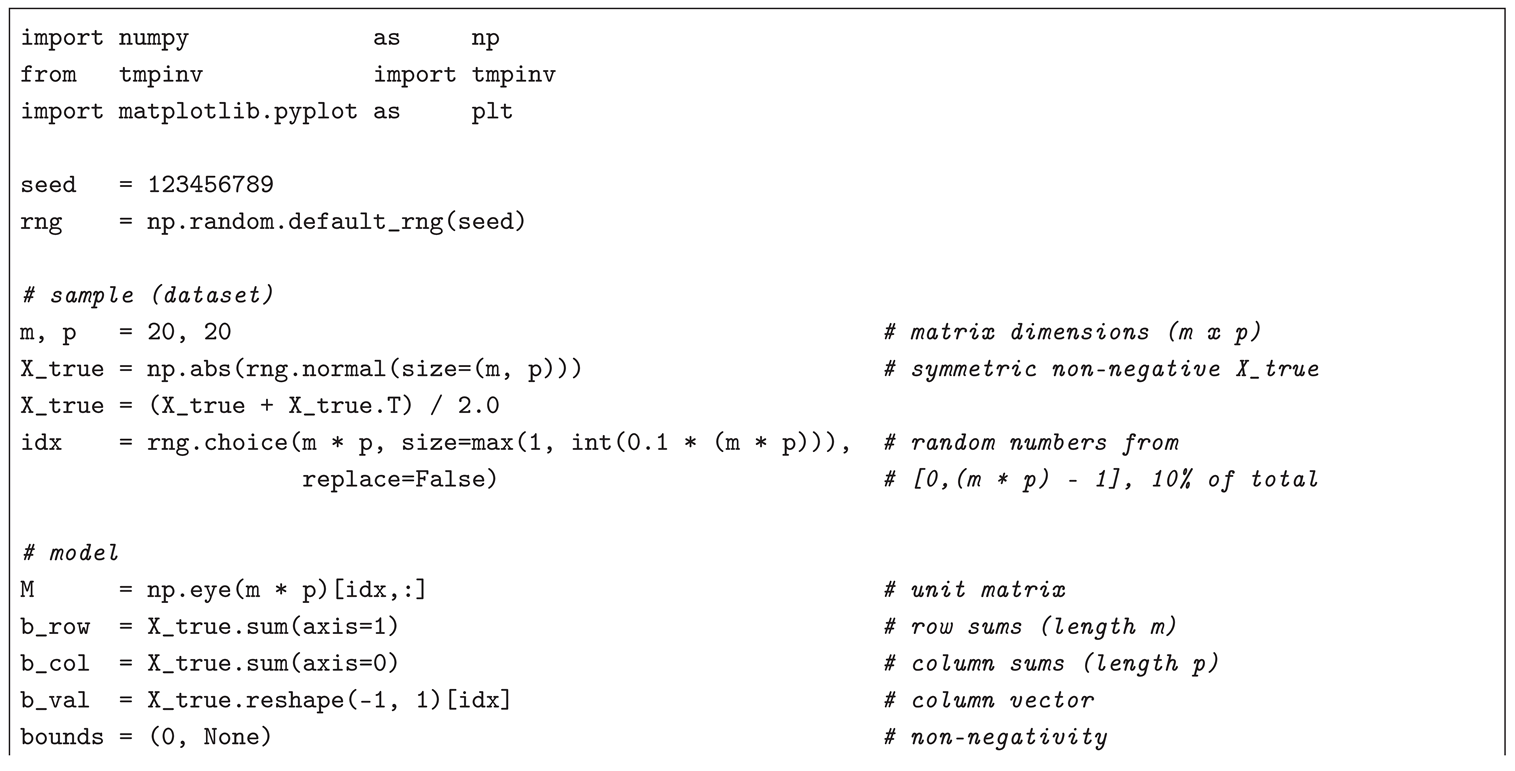

pytmpinv and two other modules, installable by executing

pip install matplotlib numpy pytmpinv==1.2.0, for (a)

and

and (b)

— estimated with the help of

-MNBLUE across

reduced models

assuming an estimability constraint of

— and

, with a non-iterated (

), unique (

), and MNBLUE (

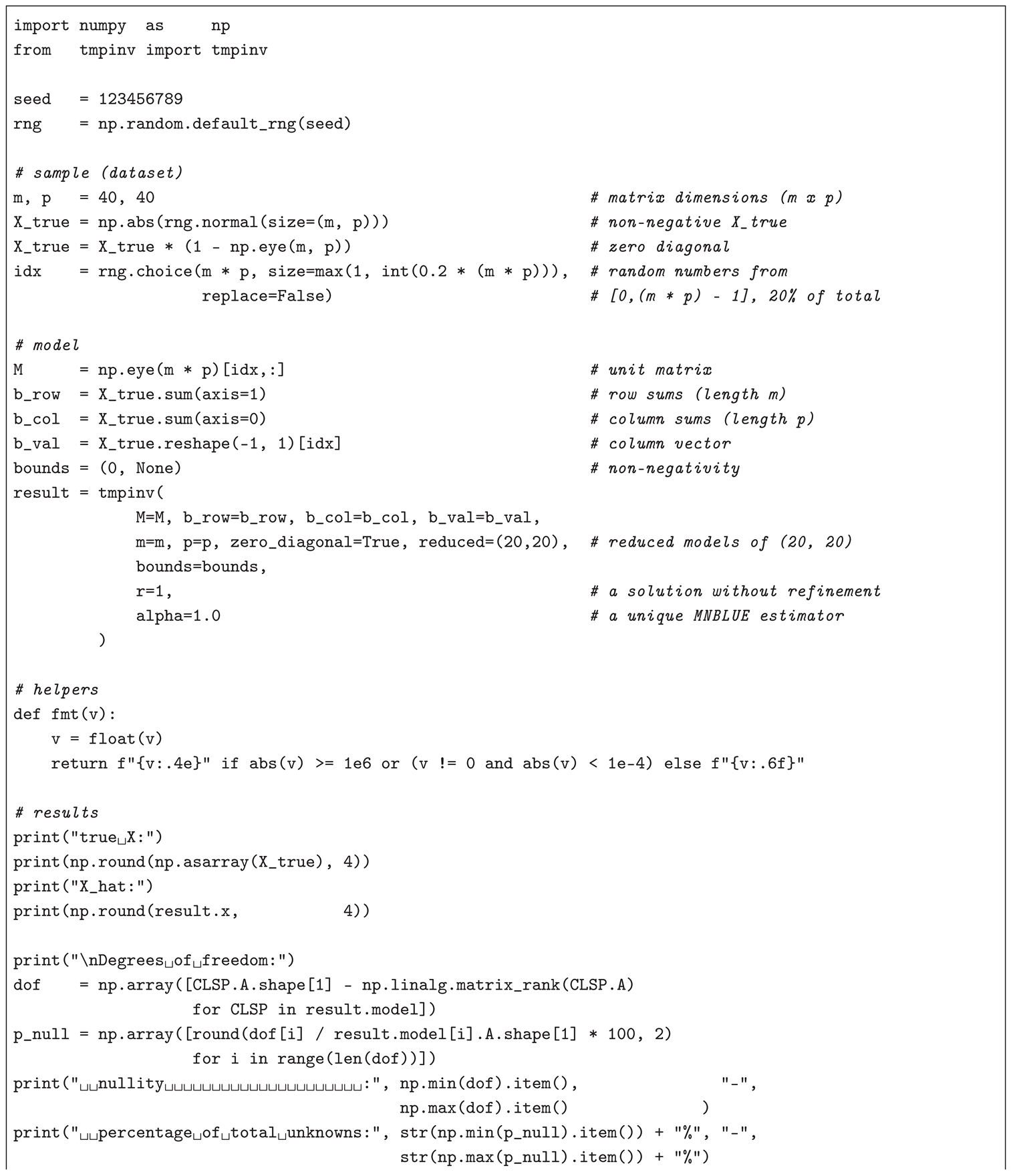

) (two-step) CLSP solution, is implemented in (a) Listing and (b) Listing (e.g., to be executed in a Jupyter Notebook).

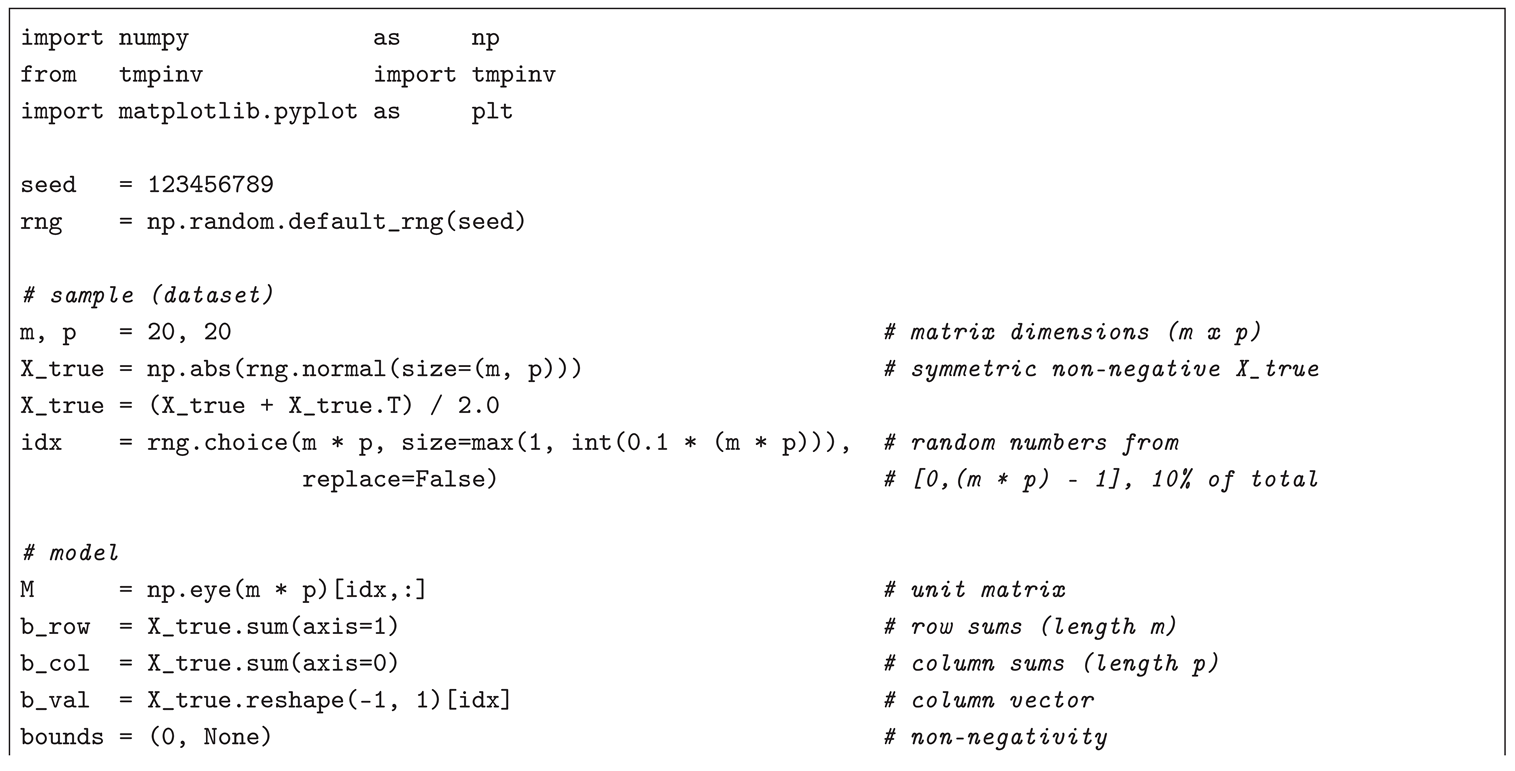

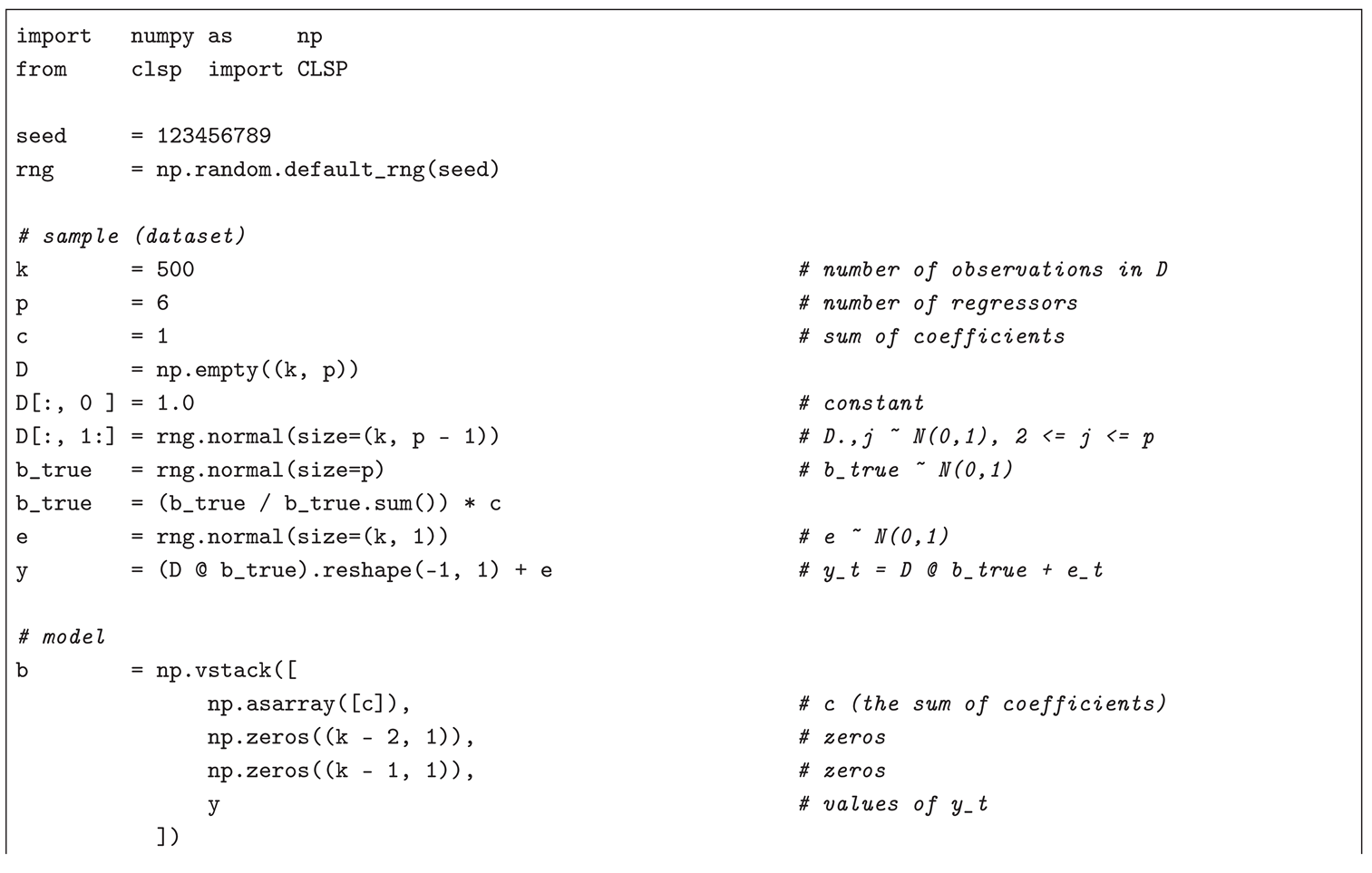

|

Listing 1. Simulated numerical example for a symmetric input-output-table-based AP (TM) problem. |

|

|

In case (a) (see Listing for code), the number of model (target) variables is with a nullity of , corresponding to 80.25% of the total unknowns. Given the simulation of , a matrix unknown in real-world applications, — i.e., CLSP is used to estimate the elements of an existing matrix from its row sums, , column sums, , and a randomly selected 10% of its entries, , — the model’s goodness of fit can be measured by a user-defined (i.e., CLSP achieves an improvement of over a hypothetical naïve predictor reproducing the known 10% entries of and yielding a , but still a modest value of , i.e. a relatively large error, ) with lying within wide condition-weighted diagnostic intervals reflecting the ill-conditioning of the strongly rectangular , , and only the left-sided Monte Carlo-based t-test for the mean of the NRMSE (on a sample of 30 NRMSE values obtained by substituting with ) suggesting consistency with expectations (i.e., with the ). In terms of numerical stability, with a low , as confirmed by the correlogram produced in matplotlib, indicating a well-chosen constraints matrix, even given the underdeterminedness of the model (which also prevents a better fit).

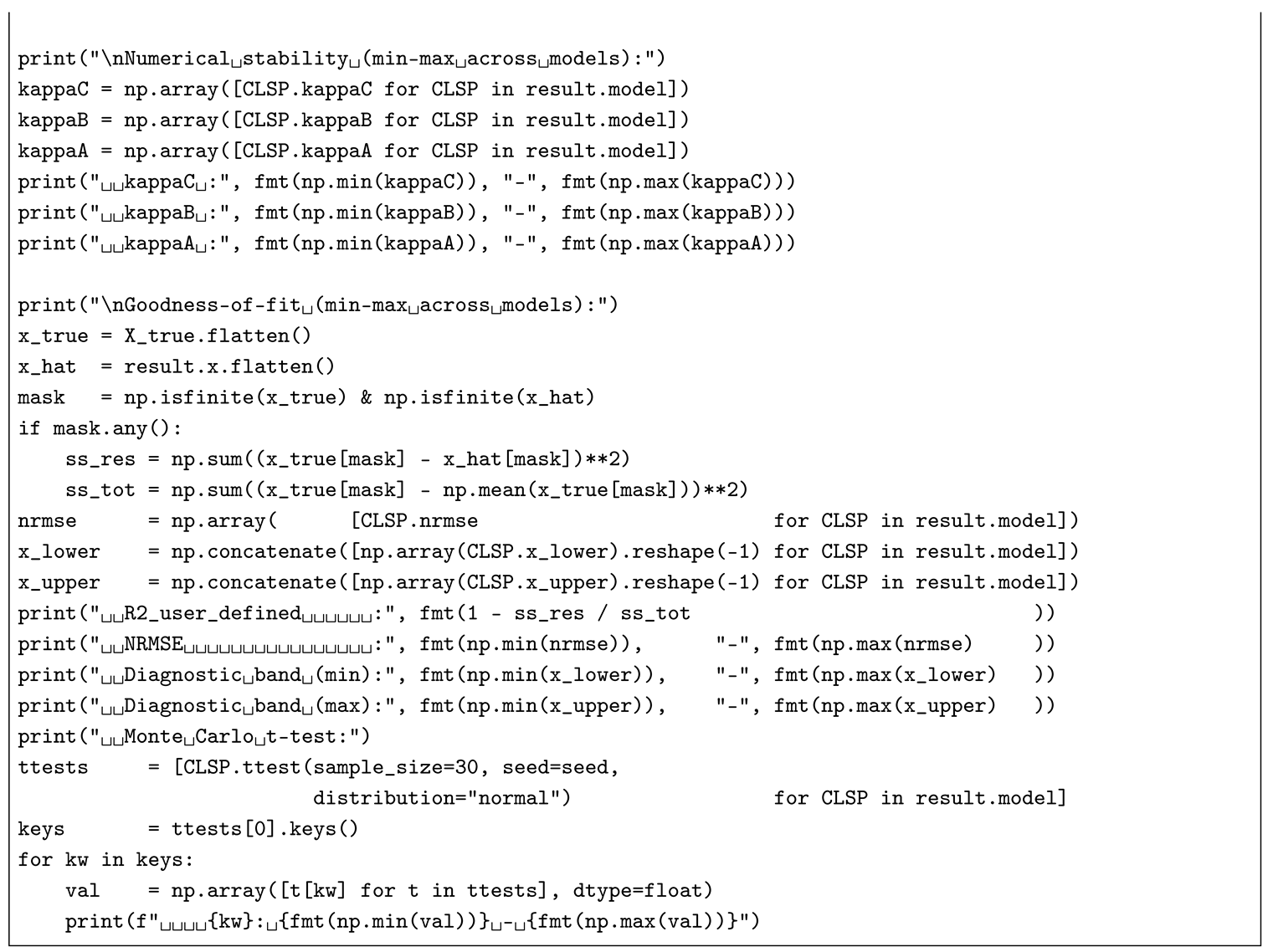

|

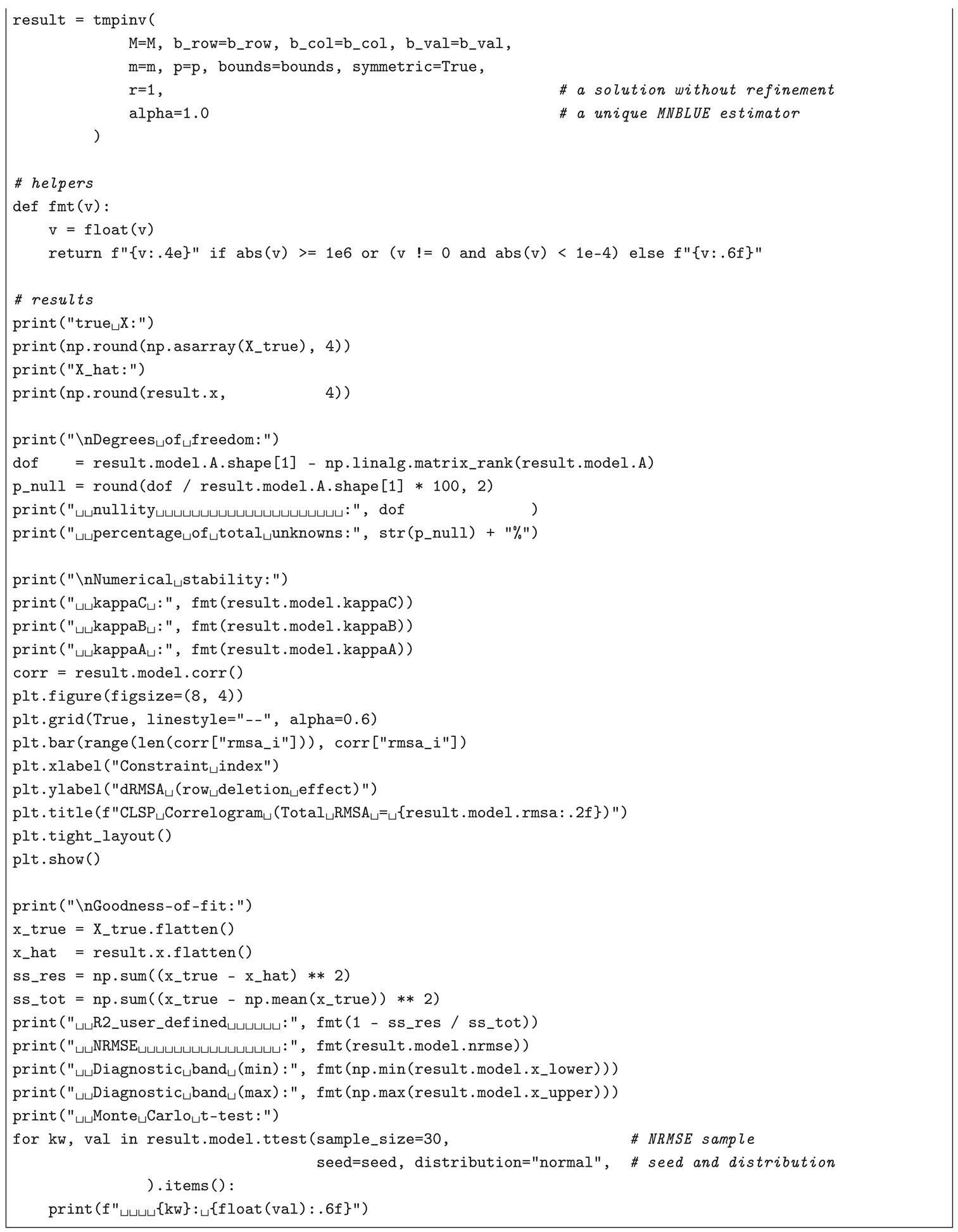

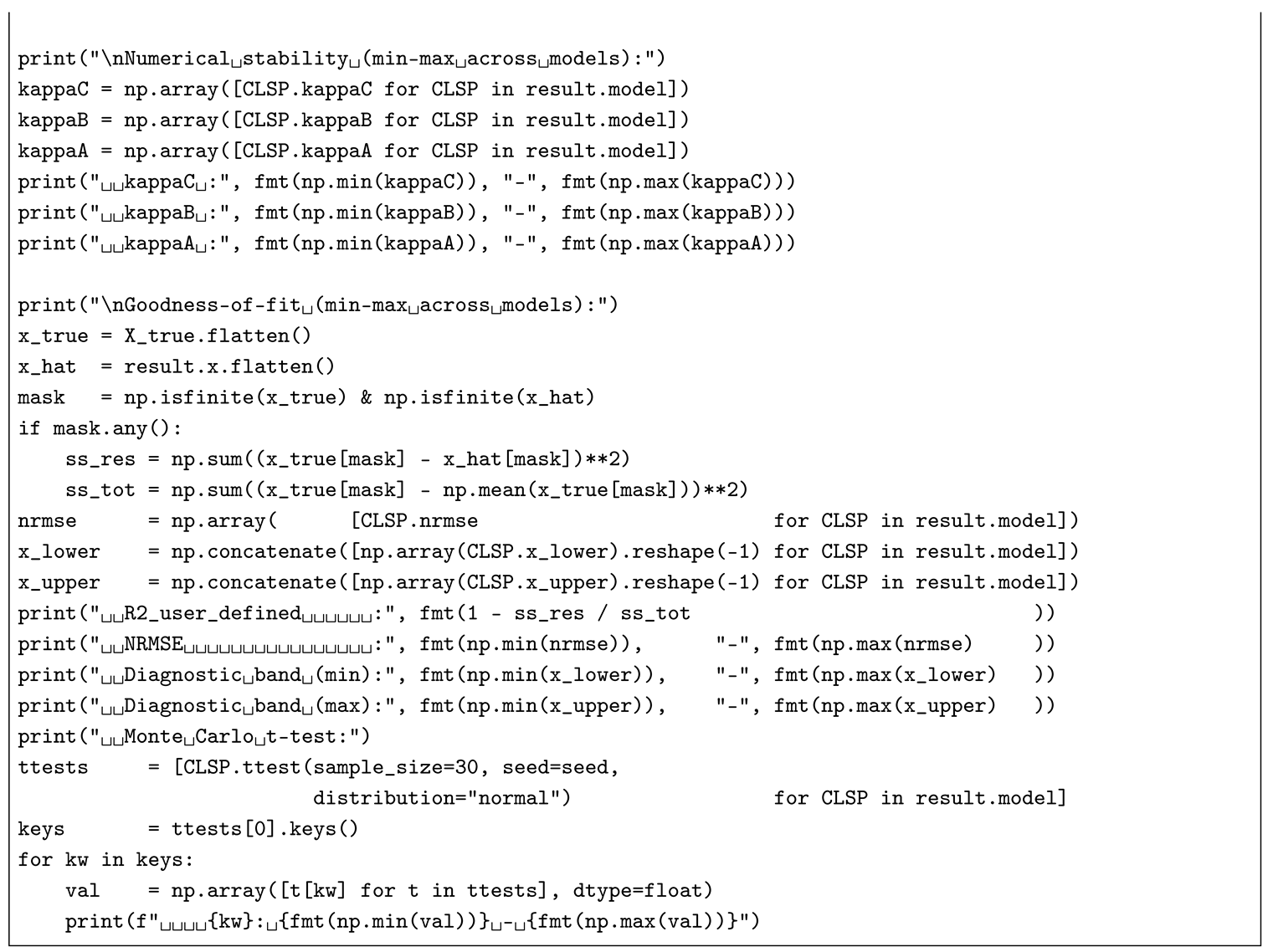

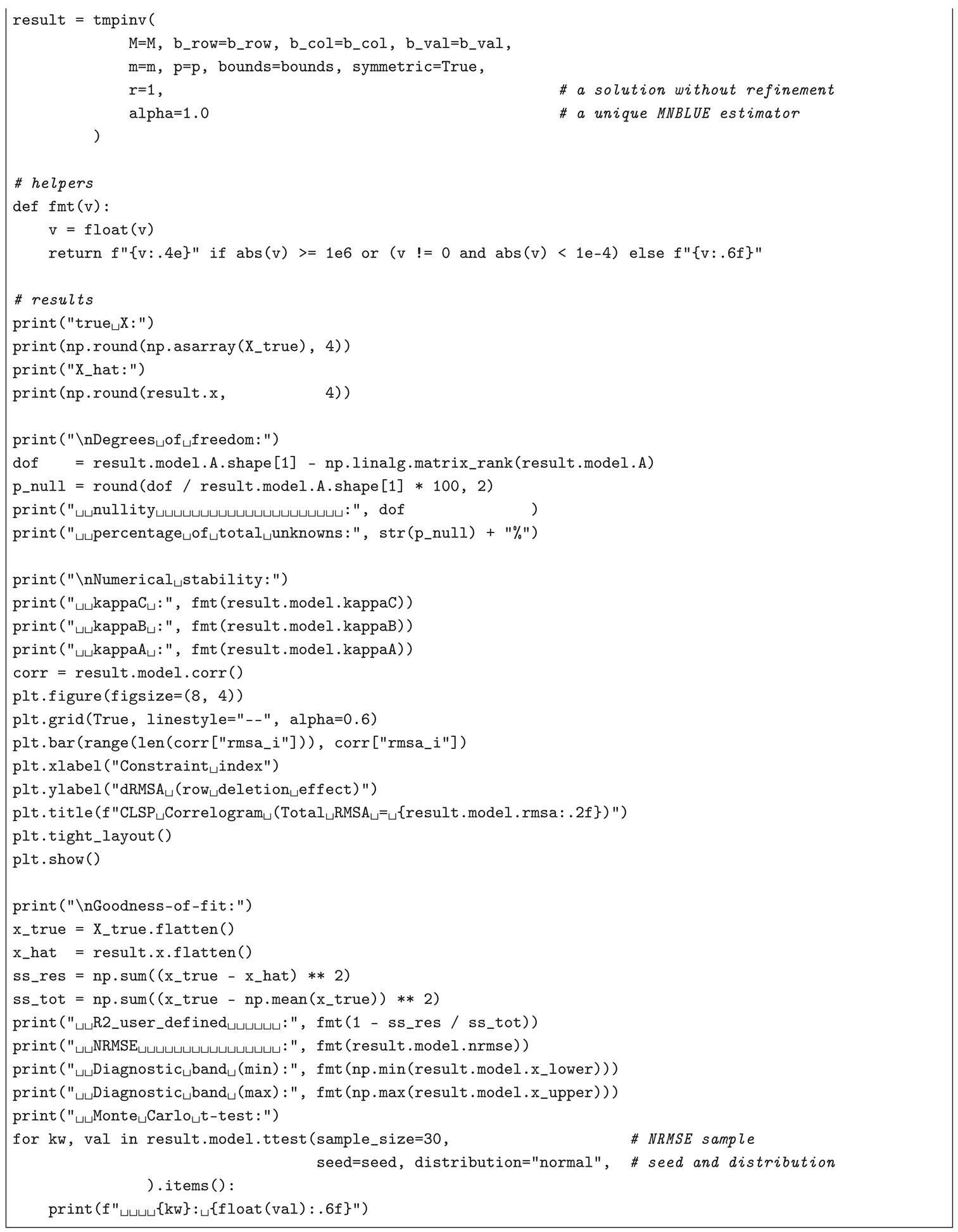

Listing 2. Simulated numerical example for a (non-negative) trade-matrix-based AP (TM) problem. |

|

|

In case (b) (see Listing for code), the corresponding number of model (target) variables in each of the reduced design submatrices , , is — where and , one row and one column being reserved for and , which enter as and the vector of slack variables , so that (i.e., the slack matrix compensates for the unaccounted row and column sums in the reduced models as opposed to the full one, such as case (a)) — with a nullity (per reduced model), corresponding to 25.00%–72.68% of the total unknowns (per reduced model) — to compare, a full model, under the same inputs and no computational constraints (i.e., ), would have a nullity , corresponding to 73.00% of the total unknowns. In the examined example, — based on , , and a randomly selected 20% of entries of the true matrix , — the reduced-model block solution’s goodness of fit could not be efficiently measured by a user-defined (i.e., the block matrix constructed from reduced-model estimates led to , but to an error proportionate to the one in case (a), (per reduced model)) — in contrast, a full model would achieve , but at a cost of a greater error, — with lying within strongly varying condition-weighted diagnostic intervals , where (per reduced model) and (per reduced model), and varying results of Monte Carlo-based t-tests for the mean of the (on a sample of 30 values obtained by substituting with ), where p-values range –1.000000 (left-sided), 0.000000–1.000000 (two-sided), and –1.000000 (right-sided) (per reduced model) — alternatively, a full model would lead to wider condition-weighted diagnostic intervals (i.e., reflecting the ill-conditioning of the strongly rectangular , ) and only the left-sided Monte Carlo-based t-test for the mean of the NRMSE (on a sample of 30 NRMSE values obtained by substituting with ) suggesting consistency with expectations (i.e., with the ). In terms of numerical stability, (per reduced model), which indicates well-conditioning of all the reduced models — conversely, in a full model, (therefore, a full model ensures an overall better fit but a lower fit quality, i.e., a trade-off).

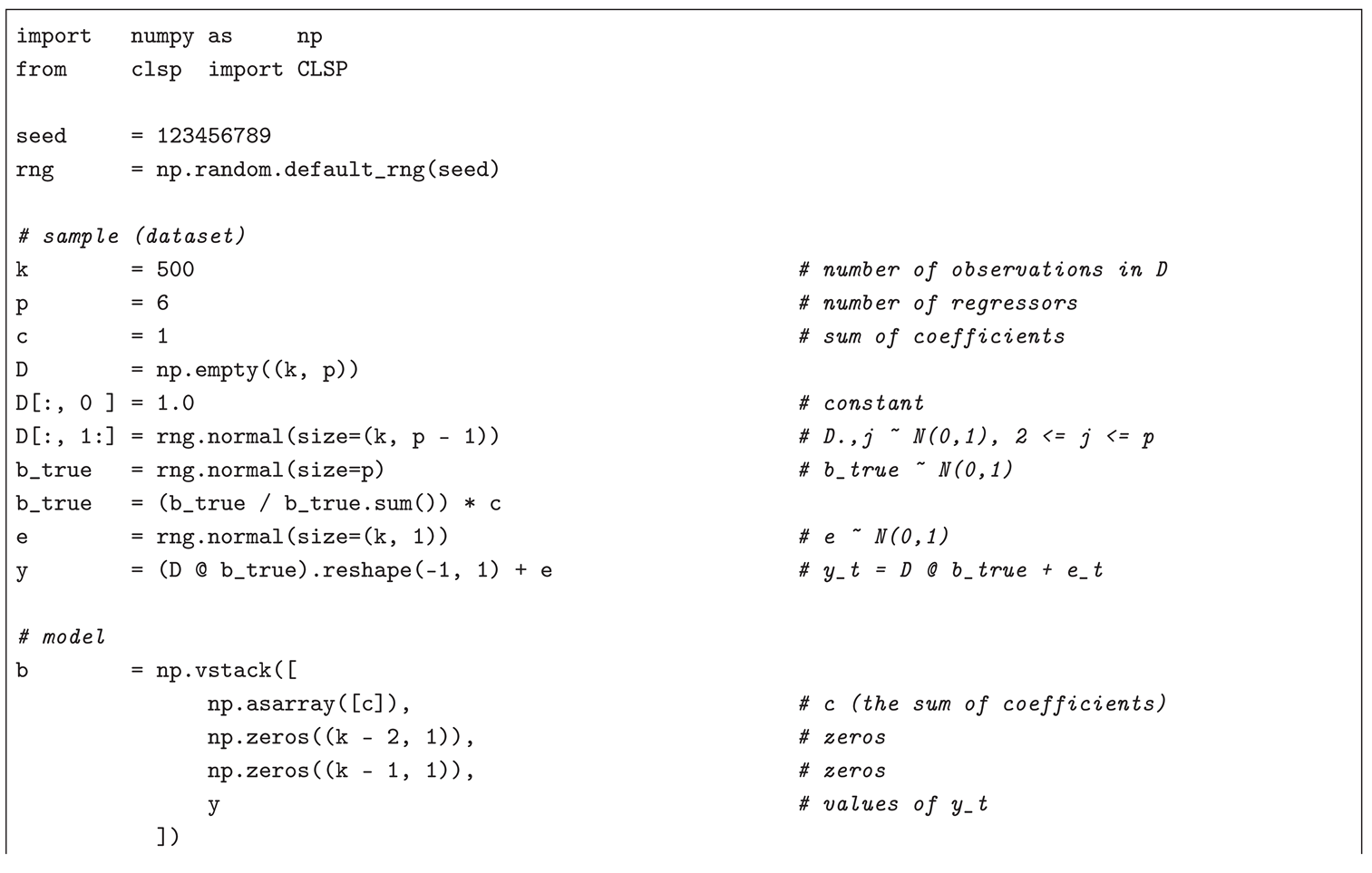

As the

CMLS (RP) numerical example, this text addresses a problem similar to the one solved in Bolotov [

15], Bolotov [

96]: a coefficient vector

from a (time-series) linear regression model in its classical (statistical) notation

with

denoting

n-th order differences (i.e., the discrete analogue of

) — where

is the dependent variable,

is the vector of regressors with a constant,

is the model’s error (residual),

c is a constant, and

is the set of stationary points — is estimated on a simulated sample (dataset) with the help of CLSP assuming

and

,

. The Python 3 code, based on

numpy and

pyclsp modules, installable by executing

pip install numpy pyclsp==1.3.0, for

,

,

,

, where

,

,

,

,

,

,

,

,

, and

, with a non-iterated (

), unique (

), and MNBLUE (

) two-step CLSP solution (consistent with the ones from cases (a) and (b) for APs (TMs)), is implemented in Listing (e.g., for a Jupyter Notebook).

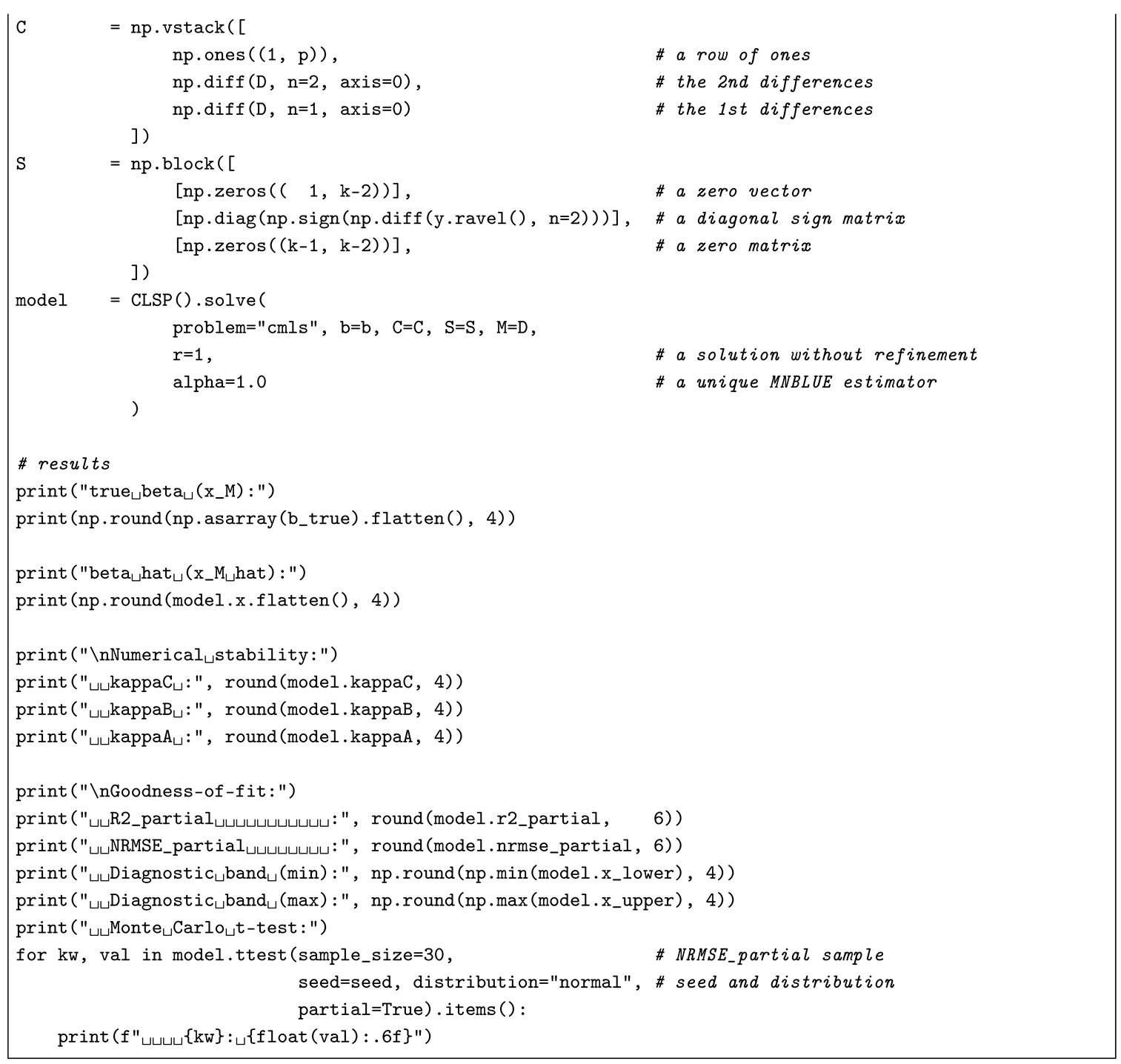

|

Listing 3. Simulated numerical example for a (time-series) stationary-points-based CMLS (RP) problem. |

|

|

Compared to the true values , the CMLS (RP) estimate is with a modest (i.e., a greater error, ), moderate condition-weighted diagnostic intervals , and only the right-sided Monte Carlo-based t-test for the mean of the (on a sample of 30 values obtained by substituting with ) — in the example under scrutiny, is preferable to NRMSE due to — suggesting consistency with expectations (i.e., with the ). Similarly, in terms of numerical stability, , indicating that the constraint block is ill-conditioned, most likely, because of the imposed data- (rather than theory-) based definition of stationary points, which rendered Step 2 infeasible () (limiting the fit quality).

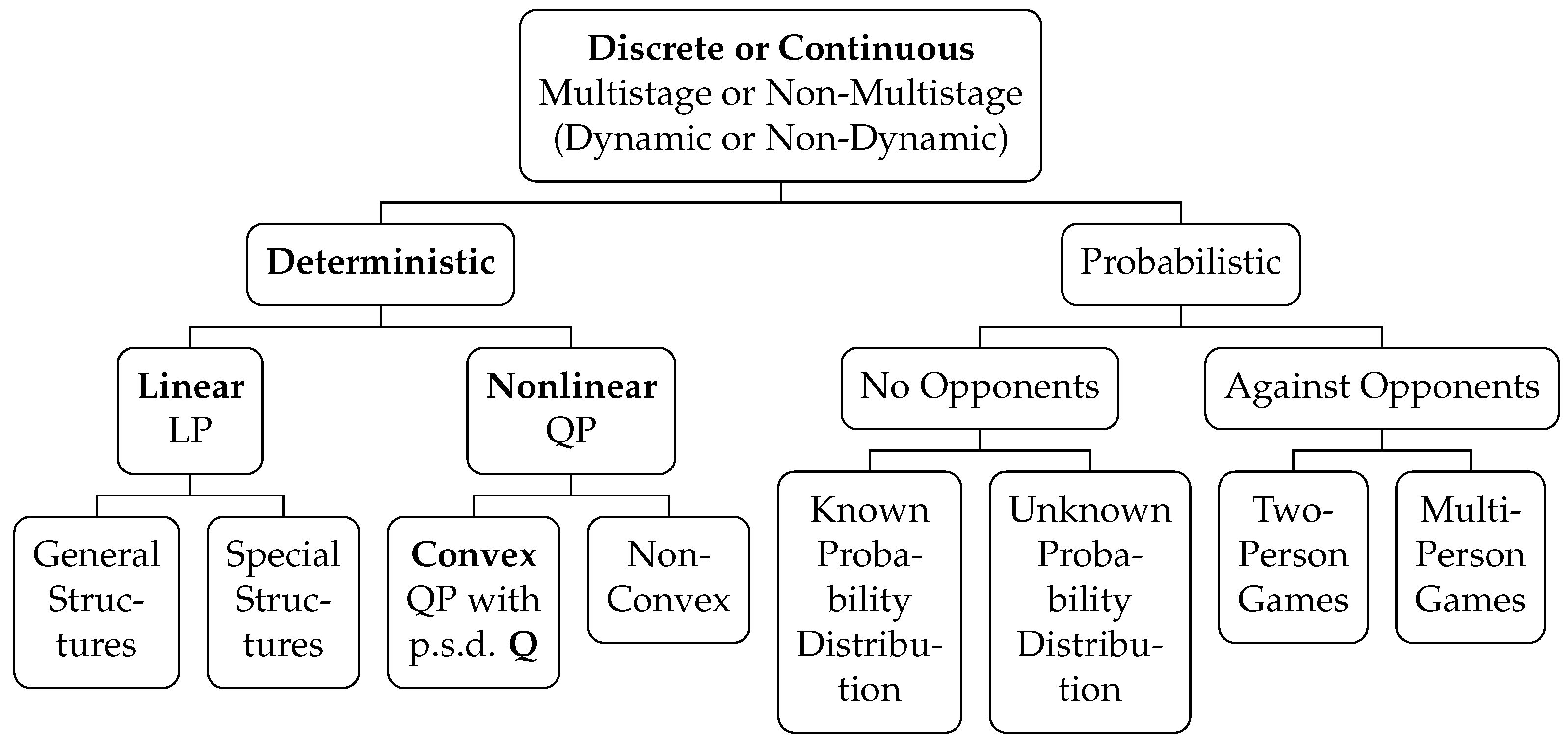

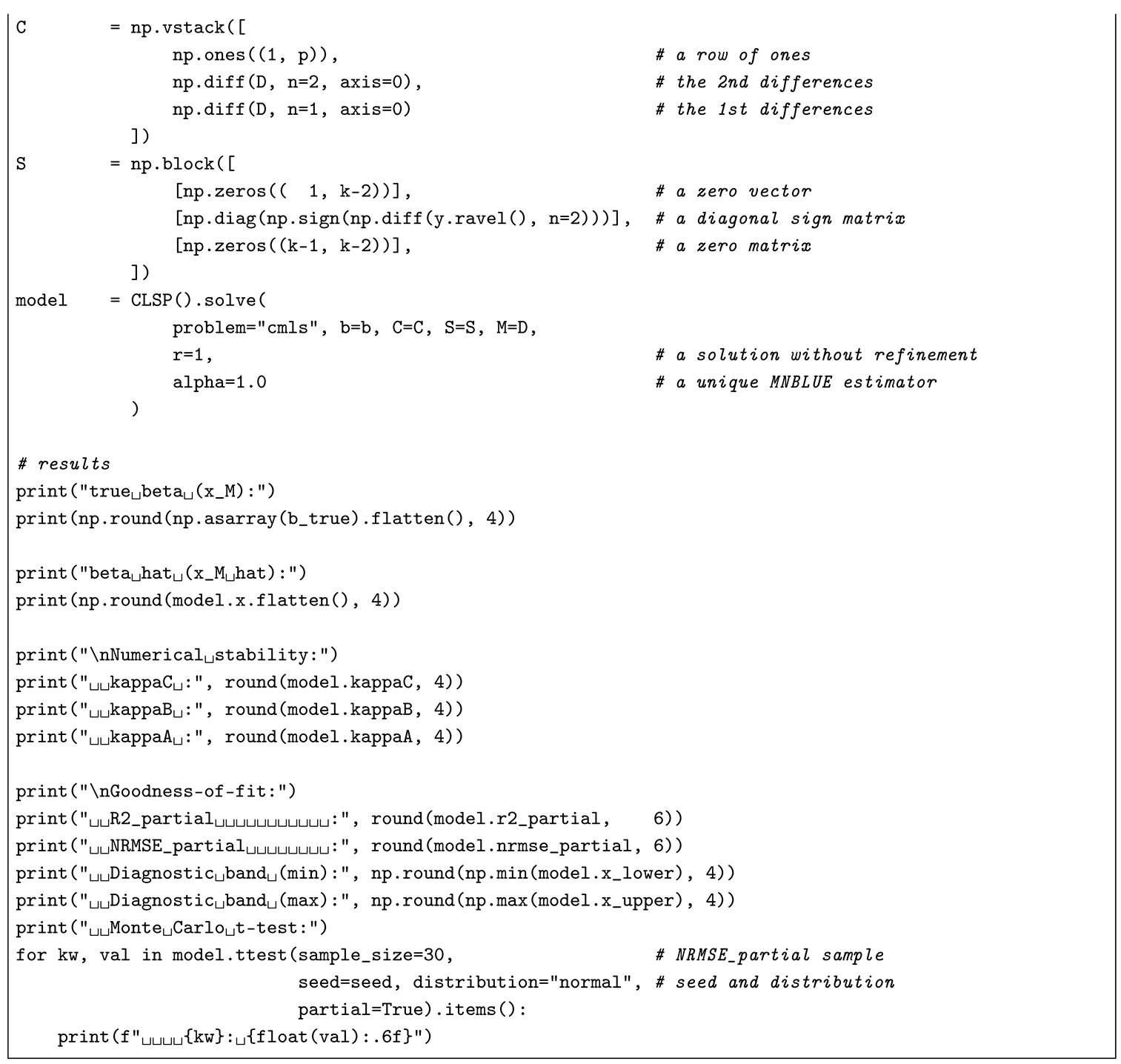

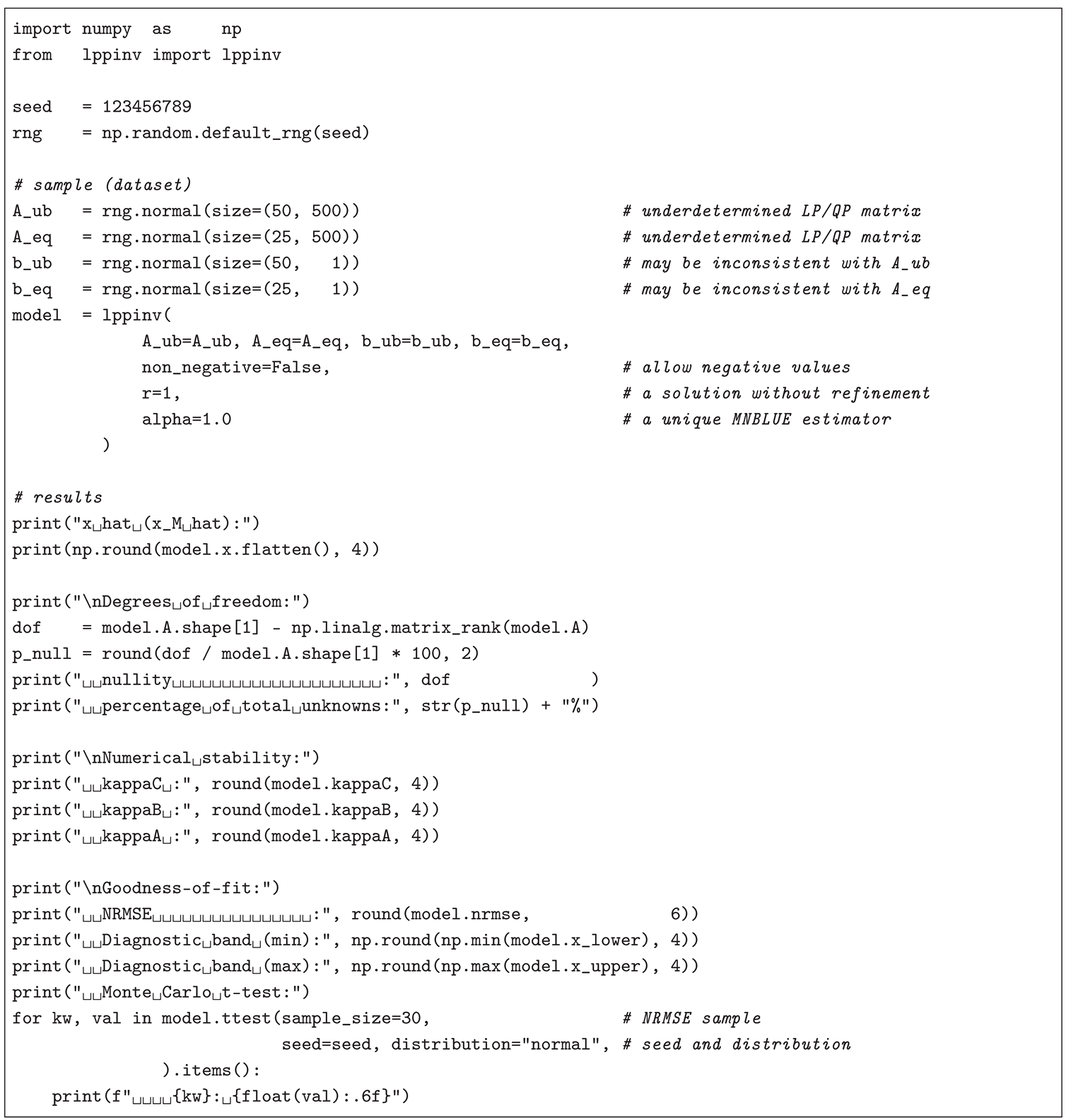

Finally, in the case of a

LPRLS/QPRLS numerical example, this text simulates potentially ill-posed LP (QP) problems, similar to the ones addressed in Whiteside et al. [

93] and Blair [

76], Blair [

94]: a solution

in its classical LP (QP) notation

(

),

— where

is a symmetric positive definite matrix,

,

, and

— is estimated from two randomly generated (coefficient) matrices

,

, and

,

, and two (right-hand-side) vectors

,

, and

,

, via CLSP assuming

,

, and

, where

and

are permutation matrices, while omitting

and

. The Python 3 code, based on

numpy and

pylppinv modules, installable by executing

pip install numpy pylppinv==1.3.0, for

,

, and

, with a non-iterated (

), unique (

), and MNBLUE (

) (two-step) CLSP solution (analogously consistent with the ones from cases (a) and (b) for APs (TMs) and the one from CMLS (RPs)), is implemented in Listing (e.g., for a Jupyter Notebook).

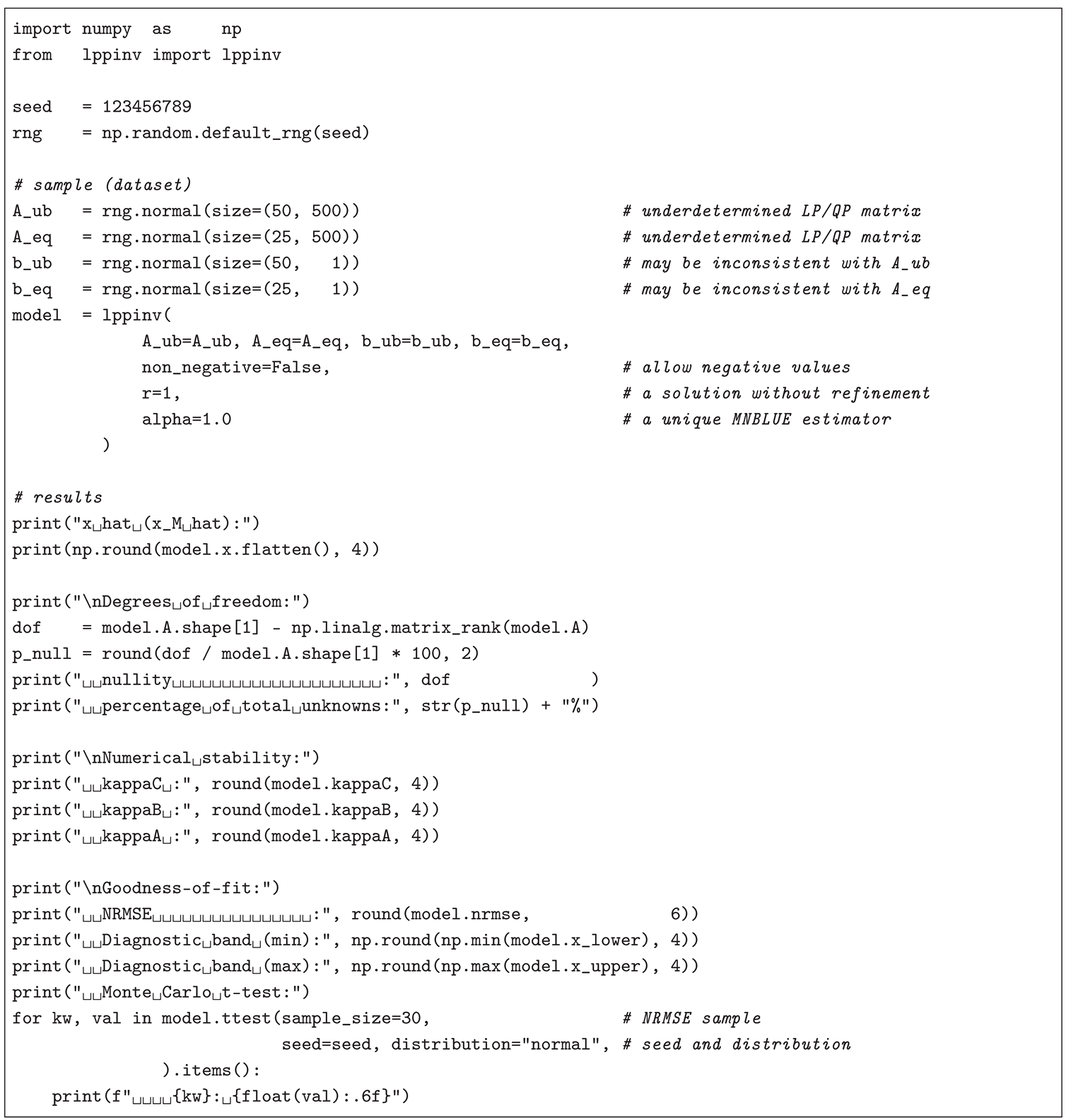

|

Listing 4. Simulated numerical example for a underdetermined and potentially infeasible LP (QP) problem. |

|

The nullity (i.e., underdeterminedness) of the CLSP design matrix, , is , corresponding to 86.36% of the total unknowns, accompanied by a greater (relatively high) error, , moderate condition-weighted diagnostic intervals , and only the right-sided Monte Carlo-based t-test for the mean of the NRMSE (on a sample of 30 NRMSE values obtained by substituting with ) suggesting consistency with expectations (i.e., with the ). Similarly, in terms of numerical stability, , indicating that the constraint block is well-conditioned despite a strongly rectangular . Hence, CLSP can be efficiently applied to AP (TM), CMLS (RP), and LPRLS/QPRLS special cases using Python 3, and the reader may wish to experiment with the code in Listings – by relaxing its uniqueness (setting ) or the MNBLUE characteristic (setting ).