Submitted: 11 September 2025

Posted: 15 September 2025


Abstract

Keywords:

1. Introduction

2. Related Work

2.1. Traditional Dust Filtering Methods

2.2. Machine Learning Based Dust Filtering Methods

3. Proposed Dust Filtering Method

3.1. Neural Architecture: Reduced-PointNet++

3.2. Point Cloud Features

- SI: Spatial + Intensity features.

- STdm: Spatial + Temporal-magnitude-difference features.

- STdv: Spatial + Temporal-vector-difference features.

- STi: Spatial + Temporal-interpolated features.

- SITdm: Spatial + Intensity + Temporal-magnitude-difference features.

- SITdv: Spatial + Intensity + Temporal-vector-difference features.

- SITi: Spatial + Intensity + Temporal-interpolated features.

4. Experimental Results

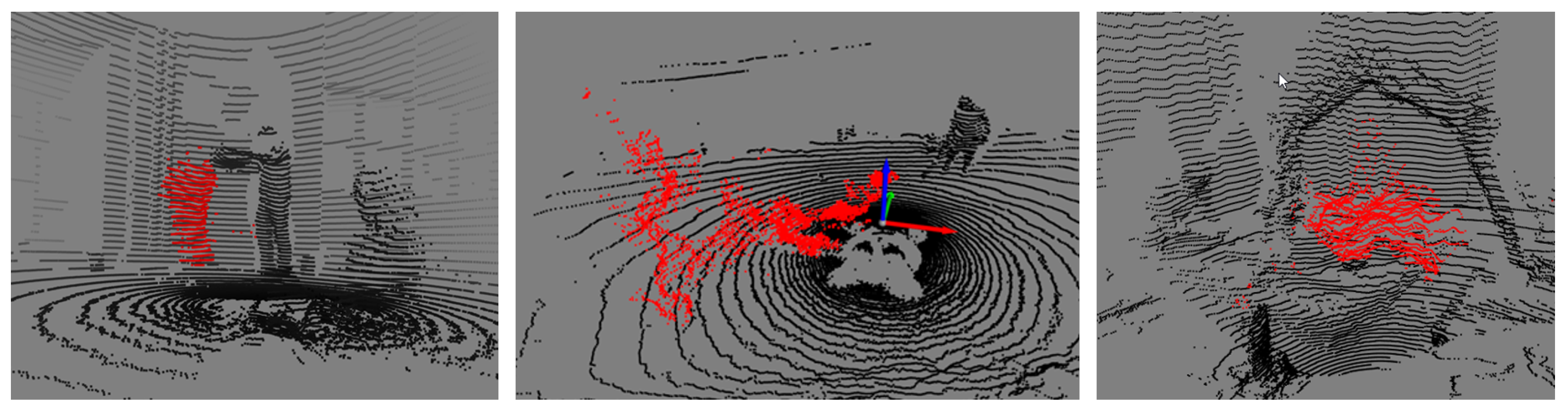

4.1. UCHILE-Dust Database

Interior 1 & 2 Subsets

Exterior 1 & 2 Subsets

Carén Subset

4.2. Experimental Setup

- A class-weighted cross-entropy loss (CrossEntropyLoss) was used to compensate for class imbalance. The weight of each class is the inverse of that class's proportion in the corresponding training dataset.

- Training ran for at most 100 epochs, with early stopping after 10 epochs of patience, monitored on the average accuracy on the validation set. This metric was chosen because it is invariant to class imbalance.

- Data augmentation was applied: rotations, scaling, occlusion, and noise.

- A dropout rate of 0.7 was applied to the last convolution layer before the classification layers to reduce overfitting.
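The loss weighting and the stopping rule listed above can be illustrated with a minimal, dependency-free sketch. It is not the authors' training code: the label encoding (0 = non-dust, 1 = dust), the helper names, and the strict "greater than" improvement test are assumptions made for illustration. The weighted mean divides by the sum of the applied weights, which is how PyTorch's CrossEntropyLoss reduces when class weights are supplied.

```python
import math
from collections import Counter


def inverse_proportion_weights(labels, num_classes):
    """Weight of class c = 1 / (fraction of training points labelled c)."""
    counts = Counter(labels)
    total = len(labels)
    # Assumes every class occurs at least once in the training labels.
    return [total / counts[c] for c in range(num_classes)]


def weighted_cross_entropy(logits, labels, weights):
    """Weighted mean of the per-point cross-entropy terms."""
    num = 0.0
    den = 0.0
    for row, y in zip(logits, labels):
        m = max(row)
        # Numerically stable log-sum-exp of the logits.
        log_z = m + math.log(sum(math.exp(v - m) for v in row))
        num += weights[y] * (log_z - row[y])
        den += weights[y]
    return num / den


class EarlyStopping:
    """Stop when the score has not improved for `patience` epochs in a row."""

    def __init__(self, patience=10):
        self.patience = patience
        self.best = -math.inf
        self.bad_epochs = 0

    def step(self, score):
        """Record one epoch's validation score; return True to stop."""
        if score > self.best:  # assumption: any strict improvement counts
            self.best = score
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


# Toy labels with 10% dust (hypothetical encoding: 0 = non-dust, 1 = dust).
labels = [0] * 9 + [1]
weights = inverse_proportion_weights(labels, num_classes=2)
uniform_loss = weighted_cross_entropy([[0.0, 0.0]] * 10, labels, weights)
```

In a training loop, `EarlyStopping(patience=10).step(val_score)` would be called once per epoch with the validation average accuracy, and training would end as soon as it returns `True` or the 100-epoch limit is reached.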

4.3. Results in Real Environments with Static Sensors

4.4. Results in Real Environments with Moving Sensors

4.5. Measuring the Generalization Capabilities of the Method

| Method | Learning Rate | Accuracy (avg) | Precision (dust) | Recall (dust) | F1-Score (dust) |
| --- | --- | --- | --- | --- | --- |
| SI | 0.005 | 0.98 | 0.58 | 0.98 | 0.73 |
| STdm | 0.008 | 0.96 | 0.46 | 0.95 | 0.62 |
| STdv | 0.005 | 0.95 | 0.41 | 0.95 | 0.57 |
| STi | 0.005 | 0.96 | 0.64 | 0.94 | 0.76 |
| SITdm | 0.005 | 0.98 | 0.60 | 0.98 | 0.74 |
| SITdv | 0.008 | 0.97 | 0.54 | 0.98 | 0.70 |
| SITi | 0.01 | 0.98 | 0.57 | 0.98 | 0.72 |

| Method | Learning Rate | Accuracy (avg) | Precision (dust) | Recall (dust) | F1-Score (dust) |
| --- | --- | --- | --- | --- | --- |
| SI | 0.008 | 0.98 | 0.56 | 0.98 | 0.71 |
| STdm | 0.008 | 0.96 | 0.50 | 0.96 | 0.66 |
| STdv | 0.005 | 0.96 | 0.49 | 0.95 | 0.65 |
| STi | 0.01 | 0.96 | 0.52 | 0.95 | 0.67 |
| SITdm | 0.005 | 0.98 | 0.60 | 0.98 | 0.75 |
| SITdv | 0.008 | 0.98 | 0.58 | 0.98 | 0.73 |
| SITi | 0.008 | 0.98 | 0.57 | 0.98 | 0.72 |

4.6. Performance Comparison between PointNet++ and reduced-PointNet++

| Method | PointNet++ (s) | reduced-PointNet++ (s) |
| --- | --- | --- |
| SI | 0.1383 | 0.0676 |
| STdm | 0.1398 | 0.0672 |
| STdv | 0.1419 | 0.0679 |
| STi | 0.1420 | 0.0694 |
| SITdm | 0.1372 | 0.0710 |
| SITdv | 0.1435 | 0.0664 |
| SITi | 0.1435 | 0.0630 |
| Average | 0.1409 | 0.0675 |
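The averages in the table above, and the speedup they imply, can be recomputed with a short sketch. The times are copied from the table. Reading each value as the processing time for one point cloud is an assumption, and the variable names are illustrative.

```python
# Times in seconds, copied from the table above.
pointnet2 = {
    "SI": 0.1383, "STdm": 0.1398, "STdv": 0.1419, "STi": 0.1420,
    "SITdm": 0.1372, "SITdv": 0.1435, "SITi": 0.1435,
}
reduced = {
    "SI": 0.0676, "STdm": 0.0672, "STdv": 0.0679, "STi": 0.0694,
    "SITdm": 0.0710, "SITdv": 0.0664, "SITi": 0.0630,
}


def mean(values):
    """Arithmetic mean of an iterable of numbers."""
    values = list(values)
    return sum(values) / len(values)


avg_full = mean(pointnet2.values())
avg_reduced = mean(reduced.values())

# Ratio of average times: how many times faster the reduced network is.
speedup = avg_full / avg_reduced

# Assumption: one value = one point cloud, so the inverse is a throughput.
clouds_per_second = 1.0 / avg_reduced

print(f"average PointNet++:         {avg_full:.4f} s")
print(f"average reduced-PointNet++: {avg_reduced:.4f} s")
print(f"speedup:                    {speedup:.2f}x")
print(f"throughput (reduced):       {clouds_per_second:.1f} clouds/s")
```

The recomputed averages agree with the "Average" row, and the reduced network comes out roughly twice as fast as PointNet++ across all feature variants.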

| Method | Learning Rate | Accuracy (avg) | Precision (dust) | Recall (dust) | F1-Score (dust) |
| --- | --- | --- | --- | --- | --- |
| SI | 0.005 | 0.98 | 0.55 | 0.98 | 0.71 |
| STdm | 0.005 | 0.96 | 0.46 | 0.96 | 0.62 |
| STdv | 0.005 | 0.95 | 0.51 | 0.94 | 0.66 |
| STi | 0.01 | 0.96 | 0.51 | 0.96 | 0.67 |
| SITdm | 0.005 | 0.98 | 0.58 | 0.98 | 0.73 |
| SITdv | 0.005 | 0.98 | 0.62 | 0.97 | 0.76 |
| SITi | 0.008 | 0.98 | 0.61 | 0.97 | 0.75 |

5. Discussion

Author Contributions

Funding

Conflicts of Interest

References

- Heinzler, R., Piewak, F., Schindler, P., and Stork, W., “CNN-based lidar point cloud de-noising in adverse weather”, IEEE Robotics and Automation Letters, vol. 5, pp. 2514–2521, Apr. 2020.

- Stanislas, L., Suenderhauf, N., and Peynot, T., “Lidar-based detection of airborne particles for robust robot perception”, in Proceedings of the Australasian Conference on Robotics and Automation (ACRA) 2018 (Woodhead, I., ed.), Australasian Conference on Robotics and Automation, ACRA, pp. 1–8, Australia: Australian Robotics and Automation Association (ARAA), 2018, https://eprints.qut.edu.au/125130/.

- Afzalaghaeinaeini, A., Design of Dust-Filtering Algorithms for LiDAR Sensors in Off-Road Vehicles Using the AI and Non-AI Methods. PhD thesis, University of Ontario Institute of Technology, 2022.

- Parsons, T., Seo, J., Kim, B., Lee, H., Kim, J.-C., and Cha, M., “Dust de-filtering in lidar applications with conventional and CNN filtering methods”, IEEE Access, vol. PP, pp. 1–1, Jan. 2024.

- Phillips, T., Guenther, N., and Mcaree, P., “When the dust settles: The four behaviors of lidar in the presence of fine airborne particulates”, Journal of Field Robotics, vol. 34, Feb. 2017.

- Charron, N., Phillips, S., and Waslander, S. L., “De-noising of lidar point clouds corrupted by snowfall”, in 2018 15th Conference on Computer and Robot Vision (CRV), pp. 254–261, 2018.

- Park, J.-I., Park, J., and Kim, K.-S., “Fast and accurate desnowing algorithm for lidar point clouds”, IEEE Access, vol. 8, pp. 160202–160212, 2020.

- Afzalaghaeinaeini, A., Seo, J., Lee, D., and Lee, H., “Design of dust-filtering algorithms for lidar sensors using intensity and range information in off-road vehicles”, Sensors, vol. 22, no. 11, 2022.

- Stanislas, L., Nubert, J., Dugas, D., Nitsch, J., Sünderhauf, N., Siegwart, R., Cadena, C., and Peynot, T., Airborne Particle Classification in LiDAR Point Clouds Using Deep Learning, pp. 395–410. Springer, Singapore, Feb. 2021.

- Qi, C. R., Su, H., Mo, K., and Guibas, L. J., “PointNet: Deep learning on point sets for 3D classification and segmentation”, in Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 652–660, 2017.

- Qi, C. R., Yi, L., Su, H., and Guibas, L. J., “PointNet++: Deep hierarchical feature learning on point sets in a metric space”, Advances in Neural Information Processing Systems, vol. 30, 2017.

- Shi, H., Wei, J., Wang, H., Liu, F., and Lin, G., “Learning temporal variations for 4D point cloud segmentation”, International Journal of Computer Vision, vol. 132, no. 12, pp. 5603–5617, 2024.

| Block | PointNet++ | reduced-PointNet++ |
| --- | --- | --- |
| SA1 | 1024 pts, r=0.1, 32 nbr, MLP: [32, 32, 64] | 512 pts, r=0.1, 32 nbr, MLP: [16, 16, 32] |
| SA2 | 256 pts, r=0.2, 32 nbr, MLP: [64, 64, 128] | 128 pts, r=0.2, 32 nbr, MLP: [32, 32, 64] |
| SA3 | 64 pts, r=0.4, 32 nbr, MLP: [128, 128, 256] | - |
| SA4 | 16 pts, r=0.8, 32 nbr, MLP: [256, 256, 512] | - |
| FP4 | in_ch: 768, MLP: [256, 256] | - |
| FP3 | in_ch: 384, MLP: [256, 256] | - |
| FP2 | in_ch: 320, MLP: [256, 128] | in_ch: 96, MLP: [64, 32] |
| FP1 | in_ch: 128, MLP: [128, 128, 128] | in_ch: (32 + num_feats), MLP: [32, 32] |
| Conv1d | 128 → 128 | 32 → 32 |
| BatchNorm1d | 128 | 32 |
| Dropout | p=0.7 | p=0.7 |
| Conv1d (final) | 128 → num_classes | 32 → num_classes |
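The `in_ch` values in the table above are consistent with the usual PointNet++ skip-connection rule. Each feature-propagation (FP) block receives the features interpolated from the coarser level concatenated with the output of the set-abstraction (SA) block one level up, or with the raw per-point features at FP1. The sketch below recomputes the widths from the MLP sizes under that assumption. The function name, the choice of `raw_feats=0` for the PointNet++ column, and `num_feats = 4` are illustrative and are not taken from the paper.

```python
def fp_input_channels(sa_out, fp_out, raw_feats):
    """Input width of each feature-propagation (FP) block.

    sa_out:    output widths of SA1..SAn (last entry of each SA MLP)
    fp_out:    {level: output width of FP<level>} (last entry of its MLP)
    raw_feats: per-point channels concatenated at FP1
    """
    widths = {}
    deeper = sa_out[-1]  # features arriving from the coarsest level
    for level in range(len(sa_out), 0, -1):
        # Skip connection: output of the SA block one level up,
        # or the raw per-point features when we reach FP1.
        skip = sa_out[level - 2] if level >= 2 else raw_feats
        widths[f"FP{level}"] = deeper + skip
        deeper = fp_out[level]
    return widths


# PointNet++ column: four SA levels; raw_feats=0 reproduces the listed 128.
full = fp_input_channels(
    sa_out=[64, 128, 256, 512],
    fp_out={4: 256, 3: 256, 2: 128, 1: 128},
    raw_feats=0,
)

# reduced-PointNet++ column: two SA levels.
num_feats = 4  # hypothetical feature count, for illustration only
reduced = fp_input_channels(
    sa_out=[32, 64],
    fp_out={2: 32, 1: 32},
    raw_feats=num_feats,
)

print(full)
print(reduced)
```

With only two SA/FP levels and halved MLP widths, the classification head of the reduced network operates on 32 channels instead of 128, which matches the Conv1d and BatchNorm1d rows.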

| Variant | Features Vector |
| --- | --- |
| SI | |
| STdm | |
| STdv | |
| STi | |
| SITdm | |
| SITdv | |
| SITi | |

| Subset | Recordings | Point Clouds | Points | % Dust | Format | Train / Val / Test (%) |
| --- | --- | --- | --- | --- | --- | --- |
| Interior 1 | 10 | 1,874 | 72,741,326 | 4.1% | PCAP | 82 / 09 / 09 |
| Interior 2 | 12 | 1,740 | 71,225,483 | 11.2% | PCAP | 70 / 15 / 15 |
| Exterior 1 | 10 | 1,529 | 45,311,889 | 3.2% | PCAP | 84 / 08 / 08 |
| Exterior 2 | 13 | 1,885 | 75,820,483 | 3.6% | PCAP | 66 / 17 / 17 |
| Carén | 13 | 7,089 | 234,929,532 | 8.1% | Rosbag | 46 / 26 / 28 |
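As a quick illustration of the dataset's overall size and class imbalance, the per-subset figures in the table above can be aggregated as follows. The numbers are copied from the table. The overall dust share is an estimate, since it is derived from the rounded per-subset percentages, and the variable names are illustrative.

```python
# (recordings, point clouds, points, dust fraction) per subset, from the table.
subsets = {
    "Interior 1": (10, 1_874, 72_741_326, 0.041),
    "Interior 2": (12, 1_740, 71_225_483, 0.112),
    "Exterior 1": (10, 1_529, 45_311_889, 0.032),
    "Exterior 2": (13, 1_885, 75_820_483, 0.036),
    "Carén": (13, 7_089, 234_929_532, 0.081),
}

total_recordings = sum(s[0] for s in subsets.values())
total_clouds = sum(s[1] for s in subsets.values())
total_points = sum(s[2] for s in subsets.values())

# Points-weighted dust share; approximate because the inputs are rounded.
dust_points = sum(points * frac for _, _, points, frac in subsets.values())
dust_share = dust_points / total_points

print(f"recordings:   {total_recordings}")
print(f"point clouds: {total_clouds:,}")
print(f"points:       {total_points:,}")
print(f"dust share:   {dust_share:.1%}")
```

Dust points make up well under a tenth of all points, which is the imbalance the class-weighted loss in Section 4.2 is meant to address.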

| Subset | N | | | |
| --- | --- | --- | --- | --- |
| Interior 1 | 90 | 0.001 | 0.01 | 4 |
| Interior 2 | 90 | 0.001 | 0.01 | 4 |
| Exterior 1 | 90 | 0.01 | 0.01 | 4 |
| Exterior 2 | 90 | 0.01 | 0.01 | 4 |
| Carén | 90 | 0.001 | 0.01 | 4 |

| Method | Learning Rate | Accuracy (avg) | Precision (dust) | Recall (dust) | F1-Score (dust) |
| --- | --- | --- | --- | --- | --- |
| SI | 0.008 | 0.79 | 0.12 | 0.93 | 0.22 |
| STdm | 0.008 | 0.87 | 0.17 | 1.00 | 0.29 |
| STdv | 0.008 | 0.86 | 0.17 | 0.99 | 0.29 |
| STi | 0.01 | 0.89 | 0.26 | 0.91 | 0.41 |
| SITdm | 0.01 | 0.86 | 0.17 | 0.97 | 0.29 |
| SITdv | 0.005 | 0.77 | 0.13 | 0.85 | 0.23 |
| SITi | 0.005 | 0.80 | 0.15 | 0.85 | 0.26 |
| LIDROR | - | 0.76 | 0.14 | 0.77 | 0.24 |

| Method | Learning Rate | Accuracy (avg) | Precision (dust) | Recall (dust) | F1-Score (dust) |
| --- | --- | --- | --- | --- | --- |
| SI | 0.008 | 0.94 | 0.53 | 0.94 | 0.68 |
| STdm | 0.008 | 0.92 | 0.6 | 0.88 | 0.71 |
| STdv | 0.01 | 0.84 | 0.5 | 0.74 | 0.59 |
| STi | 0.008 | 0.87 | 0.55 | 0.8 | 0.65 |
| SITdm | 0.008 | 0.94 | 0.68 | 0.91 | 0.78 |
| SITdv | 0.008 | 0.95 | 0.66 | 0.93 | 0.77 |
| SITi | 0.008 | 0.94 | 0.6 | 0.92 | 0.73 |
| LIDROR | - | 0.86 | 0.21 | 0.96 | 0.35 |

| Method | Learning Rate | Accuracy (avg) | Precision (dust) | Recall (dust) | F1-Score (dust) |
| --- | --- | --- | --- | --- | --- |
| SI | 0.01 | 0.99 | 0.48 | 0.99 | 0.65 |
| STdm | 0.005 | 0.99 | 0.35 | 0.98 | 0.51 |
| STdv | 0.005 | 0.99 | 0.27 | 0.99 | 0.42 |
| STi | 0.005 | 0.97 | 0.46 | 0.95 | 0.62 |
| SITdm | 0.01 | 0.99 | 0.68 | 0.99 | 0.81 |
| SITdv | 0.005 | 0.99 | 0.54 | 0.98 | 0.70 |
| SITi | 0.01 | 0.99 | 0.51 | 0.98 | 0.67 |
| LIDROR | - | 0.91 | 0.03 | 0.98 | 0.06 |

| Method | Learning Rate | Accuracy (avg) | Precision (dust) | Recall (dust) | F1-Score (dust) |
| --- | --- | --- | --- | --- | --- |
| SI | 0.005 | 0.98 | 0.38 | 0.99 | 0.55 |
| STdm | 0.01 | 0.98 | 0.35 | 0.99 | 0.52 |
| STdv | 0.01 | 0.97 | 0.36 | 0.97 | 0.53 |
| STi | 0.01 | 0.94 | 0.2 | 0.97 | 0.34 |
| SITdm | 0.005 | 0.98 | 0.38 | 0.99 | 0.55 |
| SITdv | 0.005 | 0.98 | 0.39 | 0.99 | 0.57 |
| SITi | 0.008 | 0.98 | 0.44 | 0.99 | 0.61 |
| LIDROR | - | 0.88 | 0.09 | 0.98 | 0.16 |

| Method | Learning Rate | Accuracy (avg) | Precision (dust) | Recall (dust) | F1-Score (dust) |
| --- | --- | --- | --- | --- | --- |
| SI | 0.005 | 0.98 | 0.61 | 0.98 | 0.75 |
| STdm | 0.01 | 0.95 | 0.43 | 0.96 | 0.60 |
| STdv | 0.01 | 0.95 | 0.47 | 0.94 | 0.62 |
| STi | 0.008 | 0.96 | 0.72 | 0.94 | 0.81 |
| SITdm | 0.01 | 0.98 | 0.6 | 0.98 | 0.74 |
| SITdv | 0.008 | 0.98 | 0.62 | 0.98 | 0.76 |
| SITi | 0.005 | 0.98 | 0.63 | 0.97 | 0.76 |
| LIDROR | - | 0.89 | 0.18 | 0.98 | 0.30 |

| Method | Learning Rate | Accuracy (avg) | Precision (dust) | Recall (dust) | F1-Score (dust) |
| --- | --- | --- | --- | --- | --- |
| SI | 0.005 | 0.98 | 0.61 | 0.98 | 0.75 |
| STdm | 0.008 | 0.96 | 0.56 | 0.95 | 0.70 |
| STdv | 0.008 | 0.95 | 0.41 | 0.96 | 0.58 |
| STi | 0.005 | 0.96 | 0.56 | 0.95 | 0.71 |
| SITdm | 0.005 | 0.98 | 0.64 | 0.98 | 0.77 |
| SITdv | 0.005 | 0.98 | 0.7 | 0.97 | 0.82 |
| SITi | 0.008 | 0.98 | 0.62 | 0.98 | 0.76 |
| LIDROR | - | 0.89 | 0.18 | 0.98 | 0.3 |
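The F1-Score column in the results tables is the harmonic mean of the dust-class precision and recall, so any row can be sanity-checked from its other two columns. The sketch below is a minimal illustration with a hypothetical helper name. Because the tabulated precision and recall are already rounded to two decimals, a recomputed F1 may differ from the tabulated one by about 0.01.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall (0.0 if both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# LIDROR row of the table above: precision 0.18, recall 0.98.
lidror_f1 = f1_score(0.18, 0.98)

# SITdm row of the table above: precision 0.64, recall 0.98.
sitdm_f1 = f1_score(0.64, 0.98)

print(f"LIDROR F1: {lidror_f1:.2f}")
print(f"SITdm  F1: {sitdm_f1:.2f}")
```

The check also shows why high recall alone is not enough: with recall near 0.98 in both rows, the F1 difference between LIDROR and the learned variants comes almost entirely from precision.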

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).