Submitted: 11 September 2025

Posted: 12 September 2025


Abstract

Keywords:

1. Introduction

2. Dataset and Emotion Categorization Framework

2.1. Dataset

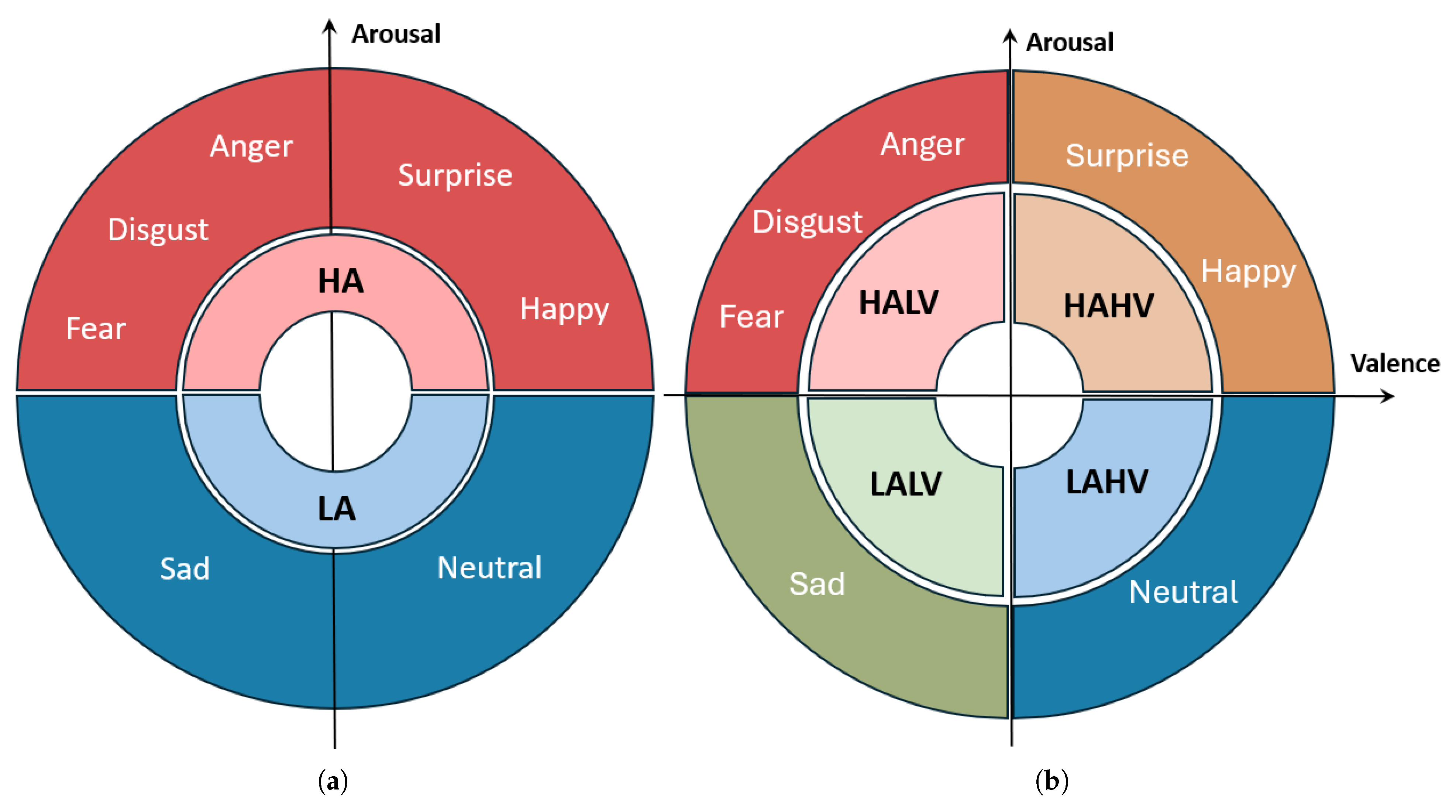

2.2. Emotion Categorization

- High Arousal High Valence (HAHV): Happy, surprise.

- High Arousal Low Valence (HALV): Disgust, anger, fear.

- Low Arousal Low Valence (LALV): Sad.

- Low Arousal High Valence (LAHV): Neutral.

Across these quadrants, 45% of the data falls within HALV, 30% in HAHV, 15% in LALV, and 10% in LAHV.
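The quadrant assignment above is a fixed lookup from the seven emotion labels to four classes. The Python sketch below maps labels to quadrants and computes each quadrant's share of the samples. It assumes the labels are stored as lowercase strings, which is a hypothetical encoding; the dataset files may encode labels differently. The toy label list is invented, and its counts were chosen only so that its shares reproduce the percentages quoted above. It is not the dataset.

```python
from collections import Counter

# Quadrant of each emotion, following the categorization listed above.
# Lowercase label strings are an assumed encoding, not the dataset's own.
QUADRANT_OF = {
    "happy": "HAHV",
    "surprise": "HAHV",
    "disgust": "HALV",
    "anger": "HALV",
    "fear": "HALV",
    "sad": "LALV",
    "neutral": "LAHV",
}


def to_quadrants(labels):
    """Map a sequence of emotion labels to arousal-valence quadrants."""
    return [QUADRANT_OF[label] for label in labels]


def quadrant_shares(labels):
    """Percentage of samples per quadrant, keyed in first-seen order."""
    counts = Counter(to_quadrants(labels))
    total = sum(counts.values())
    return {q: 100.0 * n / total for q, n in counts.items()}


# Toy list of 20 hypothetical samples (not real data).
toy = (["anger"] * 4 + ["fear"] * 3 + ["disgust"] * 2
       + ["happy"] * 6 + ["sad"] * 3 + ["neutral"] * 2)
print(quadrant_shares(toy))
# {'HALV': 45.0, 'HAHV': 30.0, 'LALV': 15.0, 'LAHV': 10.0}
```

In the same way, the seven-emotion labels can be relabeled for the four-quadrant task before training, with no change to the input features.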

3. Deep Learning Model

- Convolutional Layer (Conv1D): The first layer applies 64 filters with a kernel size of 3 and the ReLU activation function to extract temporal patterns from the input features.

- Pooling Layer: A MaxPooling1D layer with a pool size of 2 reduces dimensionality and emphasizes the most salient features.

- Dropout: To mitigate overfitting, dropout layers with rates between 0.25 and 0.5 were used at different stages of the network, depending on the architecture variant.

- Flatten and Dense Layers: After feature extraction, the output is flattened and passed to fully connected dense layers. The final output layer uses a sigmoid activation for binary classification (two halves) and softmax for multi-class settings (four quadrants and seven emotions).
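To make the tensor shapes of this layer stack explicit, the following NumPy forward pass runs Conv1D (64 filters, kernel size 3, ReLU), MaxPooling1D (pool size 2), Dropout, Flatten, and a single Dense output layer. That ordering corresponds to the simplest variant in the architecture table. The sketch is not the authors' implementation, and several details are assumptions: the input is treated as a hypothetical 310-step, single-channel sequence, the weights are random and untrained, and the convolution uses "valid" padding. The output head is a sigmoid for the two-halves task and a softmax for the multi-class tasks, as described above.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical input size (not stated in the text); other sizes from above.
LENGTH, IN_CH, FILTERS, KERNEL, POOL = 310, 1, 64, 3, 2


def conv1d_relu(x, w, b):
    """'Valid' 1D convolution followed by ReLU.

    x: (length, in_ch); w: (kernel, in_ch, filters); b: (filters,)
    returns: (length - kernel + 1, filters)
    """
    k = w.shape[0]
    out_len = x.shape[0] - k + 1
    out = np.empty((out_len, w.shape[2]))
    for t in range(out_len):
        # Each output step combines a window of k consecutive input steps.
        out[t] = np.tensordot(x[t:t + k], w, axes=([0, 1], [0, 1])) + b
    return np.maximum(out, 0.0)


def max_pool1d(x, pool=POOL):
    """Non-overlapping max pooling over time; a trailing remainder is dropped."""
    n = x.shape[0] // pool
    return x[: n * pool].reshape(n, pool, x.shape[1]).max(axis=1)


def dropout(x, rate, training):
    """Inverted dropout: random masking in training, identity at inference."""
    if not training or rate == 0.0:
        return x
    keep = rng.random(x.shape) >= rate
    return x * keep / (1.0 - rate)


def softmax(z):
    e = np.exp(z - z.max())  # shift for numerical stability
    return e / e.sum()


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def init_params(n_out):
    """Random (untrained) weights; flattened size follows conv + pooling."""
    flat = ((LENGTH - KERNEL + 1) // POOL) * FILTERS
    return {
        "conv_w": rng.normal(0.0, 0.1, (KERNEL, IN_CH, FILTERS)),
        "conv_b": np.zeros(FILTERS),
        "dense_w": rng.normal(0.0, 0.01, (flat, n_out)),
        "dense_b": np.zeros(n_out),
    }


def forward(x, params, rate=0.5, training=False, binary=False):
    h = conv1d_relu(x, params["conv_w"], params["conv_b"])  # Conv1D + ReLU
    h = max_pool1d(h)                                        # MaxPooling1D
    h = dropout(h, rate, training)                           # Dropout
    h = h.reshape(-1)                                        # Flatten
    z = h @ params["dense_w"] + params["dense_b"]            # Dense
    # Sigmoid head for two halves, softmax head for quadrants/emotions.
    return sigmoid(z) if binary else softmax(z)


x = rng.normal(size=(LENGTH, IN_CH))
probs7 = forward(x, init_params(7))                # seven emotions
p_half = forward(x, init_params(1), binary=True)   # two halves
print(probs7.round(3), p_half)
```

With these sizes, the convolution yields 308 × 64 activations. Pooling halves this to 154 × 64, so the Dense layer receives 9,856 inputs. The deeper variants in the architecture table would add a Dense(128, relu) layer and a second Dropout before the output layer.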

3.1. Performance Metrics

- True Positive (TP): The model correctly predicts an emotion.

- False Negative (FN): The model fails to predict an emotion when it is the correct output.

- False Positive (FP): The model predicts an emotion when it is not the correct output.
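Accuracy and the F1 score reported in the tables can be derived from these per-class counts. Precision is TP / (TP + FP), recall is TP / (TP + FN), and F1 = 2 · precision · recall / (precision + recall). The text does not state how per-class F1 values are combined in the multi-class settings. The sketch below assumes an unweighted (macro) average; a support-weighted average would give different numbers on imbalanced data such as the quadrant split.

```python
def per_class_counts(y_true, y_pred, label):
    """TP, FP and FN for one class in a one-vs-rest view."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == label and p == label for t, p in pairs)
    fp = sum(t != label and p == label for t, p in pairs)
    fn = sum(t == label and p != label for t, p in pairs)
    return tp, fp, fn


def f1_from_counts(tp, fp, fn):
    """Harmonic mean of precision and recall; 0 when both are undefined/zero."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


def accuracy(y_true, y_pred):
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 (macro averaging is an assumption)."""
    labels = sorted(set(y_true) | set(y_pred))
    scores = [f1_from_counts(*per_class_counts(y_true, y_pred, c)) for c in labels]
    return sum(scores) / len(labels)


y_true = ["HALV", "HALV", "HAHV", "LALV"]
y_pred = ["HALV", "HAHV", "HAHV", "HALV"]
print(accuracy(y_true, y_pred), round(macro_f1(y_true, y_pred), 4))  # 0.5 0.3889
```

In this toy case, HALV has one TP, one FP, and one FN (F1 = 0.5). HAHV has one TP and one FP (F1 = 2/3). LALV is never predicted (F1 = 0). The macro average is therefore 7/18.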

3.2. Ablation Studies

4. System Performance

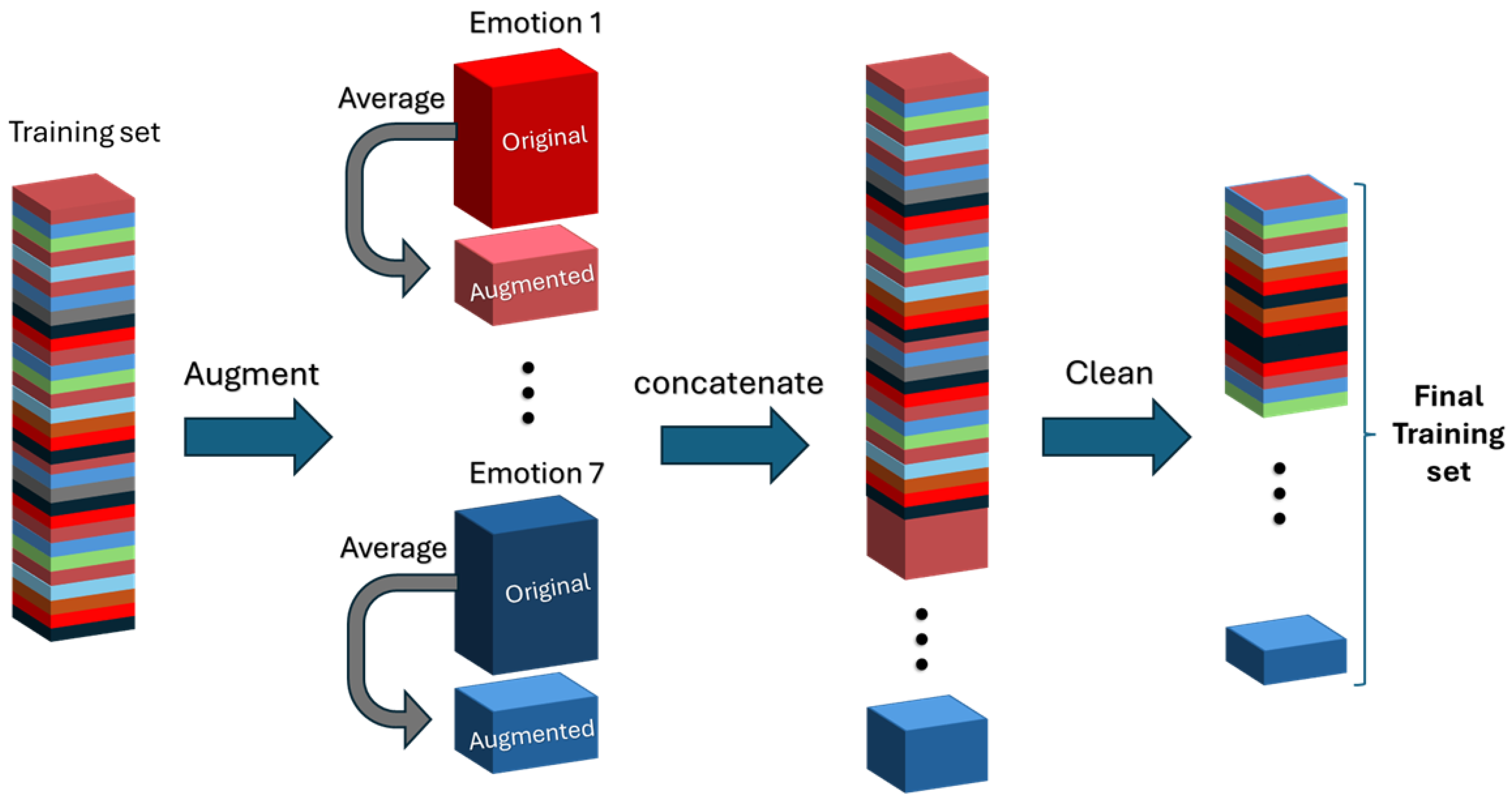

4.1. Data Augmentation and Noise Reduction

- Augmentation 1: This technique averages two consecutive data entries of the same emotion to create new synthetic entries.

- Augmentation 2: This approach generates even more synthetic data by averaging three, four, and five consecutive entries corresponding to the same emotion.

- Gaussian: Synthetic data is generated by adding Gaussian noise to the original dataset [35].
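The three augmentation schemes can be sketched in NumPy as follows. Several details are not given in the text and are assumptions here:
- The averaging window slides with stride 1 over each emotion's samples in their original order.
- The synthetic samples are appended to the original ones.
- Windows are not restricted to a single trial or subject.
- For the Gaussian scheme, the noise level `sigma` and the number of noisy copies are hypothetical parameters.

```python
import numpy as np


def average_consecutive(X, y, window):
    """Synthesize samples by averaging `window` consecutive samples of one emotion.

    Assumption: a stride-1 sliding window over each emotion's samples in their
    original order; the text does not state the stride.
    """
    new_X, new_y = [], []
    for label in np.unique(y):
        Xc = X[y == label]  # samples of this emotion, original order kept
        for start in range(len(Xc) - window + 1):
            new_X.append(Xc[start:start + window].mean(axis=0))
            new_y.append(label)
    if not new_X:  # every class is shorter than the window
        return np.empty((0,) + X.shape[1:]), np.empty((0,), dtype=y.dtype)
    return np.stack(new_X), np.array(new_y)


def augmentation_1(X, y):
    """Original samples plus averages of 2 consecutive same-emotion samples."""
    Xa, ya = average_consecutive(X, y, 2)
    return np.concatenate([X, Xa]), np.concatenate([y, ya])


def augmentation_2(X, y):
    """Original samples plus averages over windows of 3, 4 and 5 samples."""
    parts_X, parts_y = [X], [y]
    for window in (3, 4, 5):
        Xa, ya = average_consecutive(X, y, window)
        parts_X.append(Xa)
        parts_y.append(ya)
    return np.concatenate(parts_X), np.concatenate(parts_y)


def gaussian_augment(X, y, sigma=0.01, copies=1, seed=0):
    """Original samples plus `copies` noisy versions with N(0, sigma^2) noise."""
    rng = np.random.default_rng(seed)
    noisy = [X + rng.normal(0.0, sigma, X.shape) for _ in range(copies)]
    return np.concatenate([X] + noisy), np.concatenate([y] * (copies + 1))


# Toy data: six 2-feature samples, three per emotion label.
X = np.arange(12, dtype=float).reshape(6, 2)
y = np.array([0, 0, 0, 1, 1, 1])
X1, y1 = augmentation_1(X, y)
X2, y2 = augmentation_2(X, y)
Xg, yg = gaussian_augment(X, y)
print(X1.shape, X2.shape, Xg.shape)  # (10, 2) (8, 2) (12, 2)
```

The toy classes hold only three samples each, so the 4- and 5-sample windows contribute nothing in Augmentation 2. On real recordings, each additional window size adds roughly one more copy of the data per emotion.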

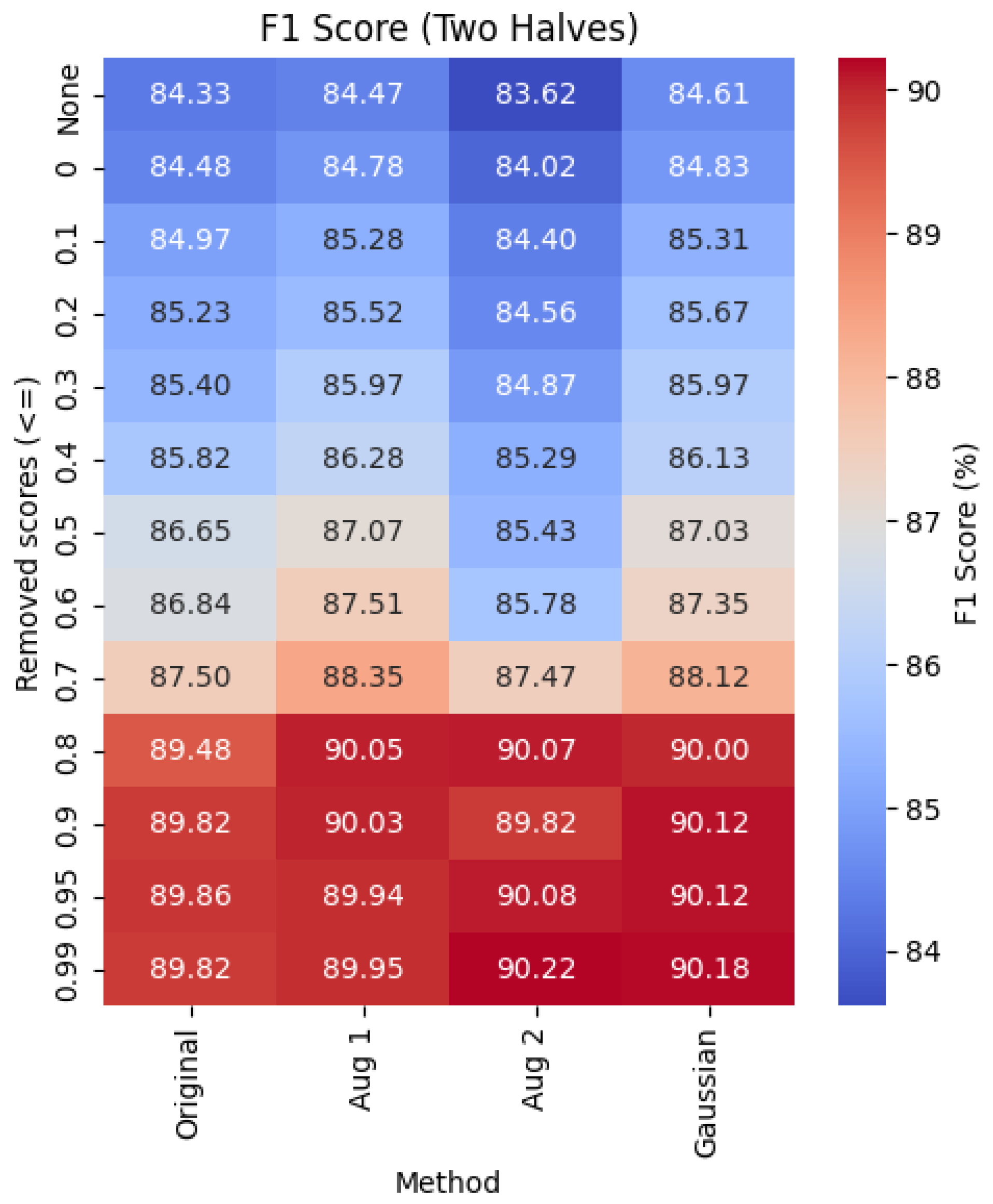

4.2. Impact of Predicting Two-Halves Emotions

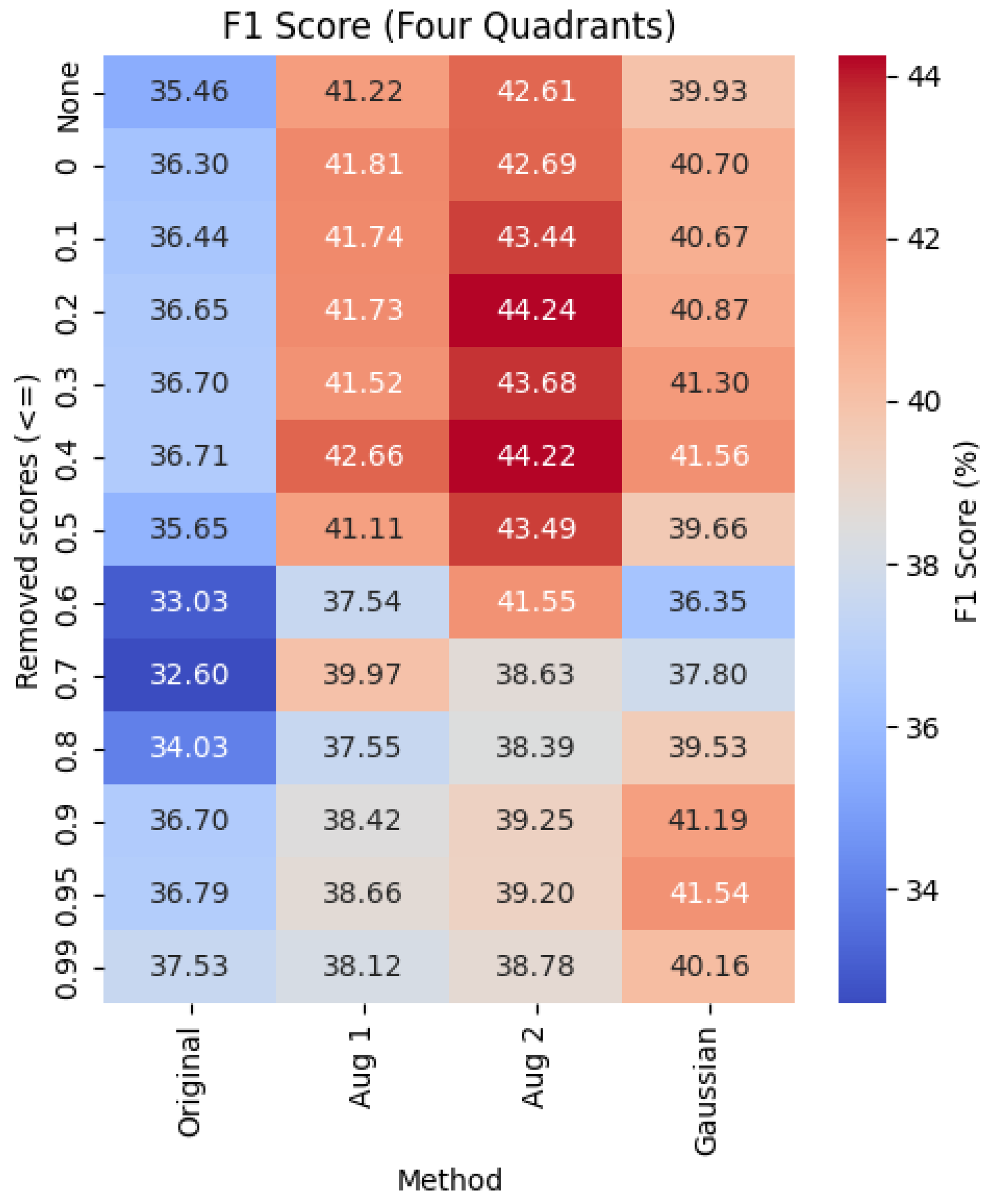

4.3. Impact of Predicting Four-Quadrant Emotions

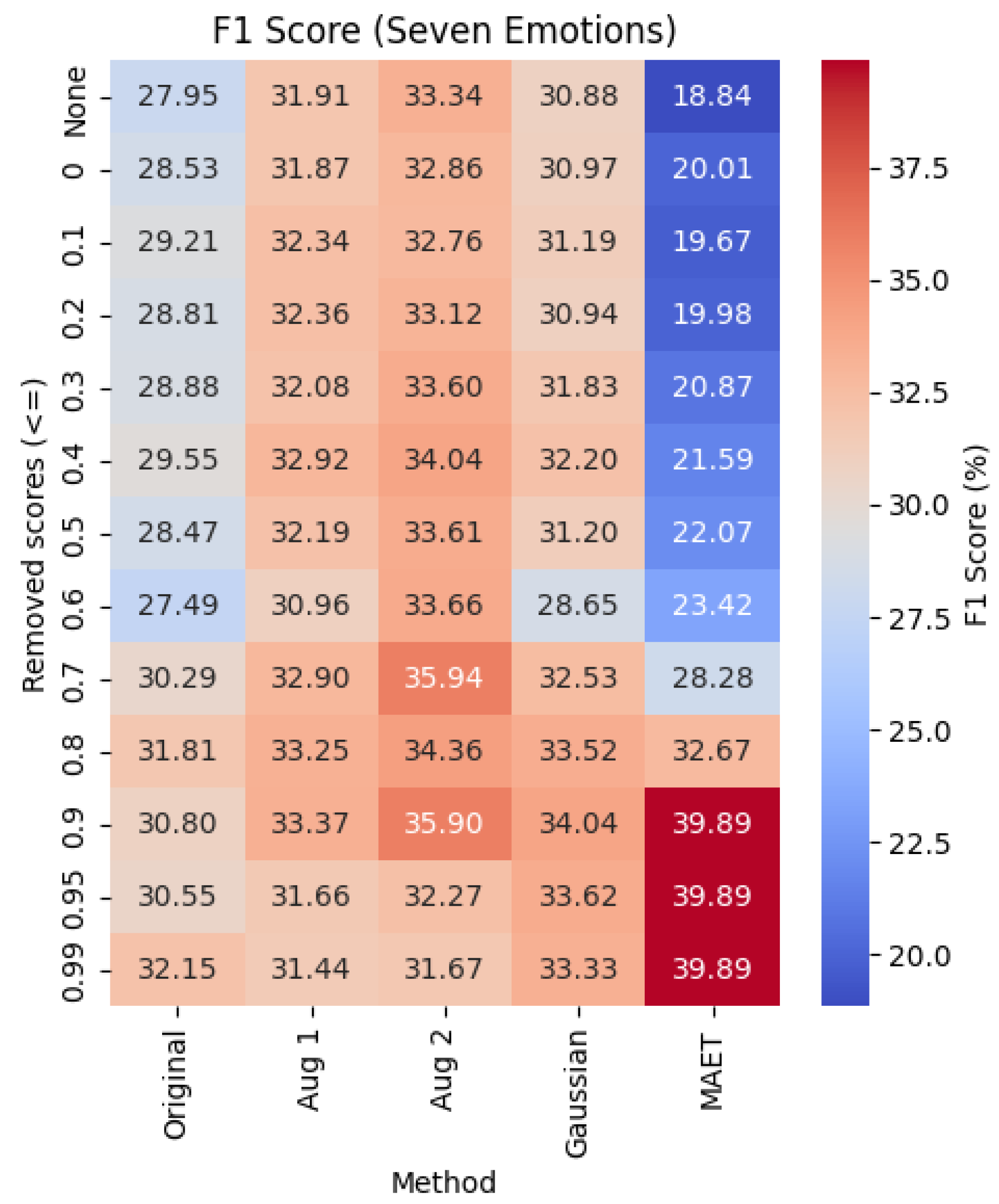

4.4. Impact of Predicting Seven Emotions

5. Conclusion

References

1. A. Ng, “The age of data-centric AI,” 2021. [Online]. Available: https://www.deeplearning.ai/the-batch/what-is-data-centric-ai/.

2. B. Settles, “Active learning literature survey,” University of Wisconsin-Madison, Tech. Rep. 1648, 2009. [Online]. Available: http://burrsettles.com/pub/settles.activelearning.pdf.

3. G. Widmer and M. Kubat, “Learning in the presence of concept drift and hidden contexts,” Machine Learning, vol. 23, no. 1, pp. 69–101, 1996.

4. Y. Zhang, J. Gao, Z. Tan, L. Zhou, K. Ding, M. Zhou, S. Zhang, and D. Wang, “Data-centric foundation models in computational healthcare: A survey,” arXiv preprint arXiv:2401.02458, 2024.

5. A. Zahid, J. K. Poulsen, R. Sharma, and S. C. Wingreen, “A systematic review of emerging information technologies for sustainable data-centric health-care,” International Journal of Medical Informatics, vol. 149, p. 104420, 2021.

6. J. M. Johnson and T. M. Khoshgoftaar, “Data-centric AI for healthcare fraud detection,” SN Computer Science, vol. 4, no. 4, p. 389, 2023.

7. J. Adeoye, L. Hui, and Y.-X. Su, “Data-centric artificial intelligence in oncology: A systematic review assessing data quality in machine learning models for head and neck cancer,” Journal of Big Data, vol. 10, no. 1, p. 28, 2023.

8. F. Emmert-Streib and O. Yli-Harja, “What is a digital twin? Experimental design for a data-centric machine learning perspective in health,” International Journal of Molecular Sciences, vol. 23, no. 21, p. 13149, 2022.

9. J. A. L. Marques, F. N. B. Gois, J. A. N. da Silveira, T. Li, and S. J. Fong, “AI and deep learning for processing the huge amount of patient-centric data that assist in clinical decisions,” in Cognitive and Soft Computing Techniques for the Analysis of Healthcare Data, Elsevier, 2022, pp. 101–121.

10. A. Bedenkov, C. Moreno, L. Agustin, N. Jain, A. Newman, L. Feng, and G. Kostello, “Customer centricity in medical affairs needs human-centric artificial intelligence,” Pharmaceutical Medicine, vol. 35, no. 1, pp. 21–29, 2021.

11. M. Y. Jabarulla and H.-N. Lee, “A blockchain and artificial intelligence-based, patient-centric healthcare system for combating the COVID-19 pandemic: Opportunities and applications,” Healthcare, vol. 9, no. 8, p. 1019, 2021.

12. E. Moon, A. S. M. S. Sagar, and H. S. Kim, “Multimodal daily-life emotional recognition using heart rate and speech data from wearables,” IEEE Access, 2024.

13. M. Kamruzzaman, J. Salinas, H. Kolla, K. Sale, U. Balakrishnan, and K. Poorey, “GenAI-based digital twins-aided data augmentation increases accuracy in real-time cokurtosis-based anomaly detection of wearable data,” 2024.

14. P. Chang, H. Li, S. F. Quan, S. Lu, S.-F. Wung, J. Roveda, and A. Li, “A transformer-based diffusion probabilistic model for heart rate and blood pressure forecasting in Intensive Care Unit,” Computer Methods and Programs in Biomedicine, vol. 246, p. 108060, 2024.

15. L. Santamaria-Granados, J. F. Mendoza-Moreno, A. Chantre-Astaiza, M. Munoz-Organero, and G. Ramirez-Gonzalez, “Tourist experiences recommender system based on emotion recognition with wearable data,” Sensors, vol. 21, no. 23, p. 7854, 2021.

16. W.-L. Zheng and B.-L. Lu, “Investigating critical frequency bands and channels for EEG-based emotion recognition with deep neural networks,” IEEE Transactions on Autonomous Mental Development, vol. 7, no. 3, pp. 162–175, 2015.

17. W.-L. Zheng, W. Liu, Y. Lu, B.-L. Lu, and A. Cichocki, “EmotionMeter: A multimodal framework for recognizing human emotions,” IEEE Transactions on Cybernetics, vol. 49, no. 3, pp. 1110–1122, 2018.

18. W. Liu, J.-L. Qiu, W.-L. Zheng, and B.-L. Lu, “Comparing recognition performance and robustness of multimodal deep learning models for multimodal emotion recognition,” IEEE Transactions on Cognitive and Developmental Systems, vol. 14, no. 2, pp. 715–729, 2021.

19. X. Du, C. Ma, G. Zhang, J. Li, Y.-K. Lai, G. Zhao, X. Deng, Y.-J. Liu, and H. Wang, “An efficient LSTM network for emotion recognition from multichannel EEG signals,” IEEE Transactions on Affective Computing, vol. 13, no. 3, pp. 1528–1540, 2020.

20. Y. Li, J. Chen, F. Li, B. Fu, H. Wu, Y. Ji, Y. Zhou, Y. Niu, G. Shi, and W. Zheng, “GMSS: Graph-based multi-task self-supervised learning for EEG emotion recognition,” IEEE Transactions on Affective Computing, vol. 14, no. 3, pp. 2512–2525, 2022.

21. P. Zhong, D. Wang, and C. Miao, “EEG-based emotion recognition using regularized graph neural networks,” IEEE Transactions on Affective Computing, vol. 13, no. 3, pp. 1290–1301, 2020.

22. C. Tian, Y. Ma, J. Cammon, F. Fang, Y. Zhang, and M. Meng, “Dual-encoder VAE-GAN with spatiotemporal features for emotional EEG data augmentation,” IEEE Transactions on Neural Systems and Rehabilitation Engineering, vol. 31, pp. 2018–2027, 2023.

23. Y. Luo, L.-Z. Zhu, Z.-Y. Wan, and B.-L. Lu, “Data augmentation for enhancing EEG-based emotion recognition with deep generative models,” Journal of Neural Engineering, vol. 17, no. 5, p. 056021, 2020.

24. M. M. Krell and S. K. Kim, “Rotational data augmentation for electroencephalographic data,” in Proc. 39th Annu. Int. Conf. IEEE Eng. Med. Biol. Soc. (EMBC), 2017, pp. 471–474.

25. F. Lotte, “Signal processing approaches to minimize or suppress calibration time in oscillatory activity-based brain–computer interfaces,” Proceedings of the IEEE, vol. 103, no. 6, pp. 871–890, 2015.

26. W.-B. Jiang, X.-H. Liu, W.-L. Zheng, and B.-L. Lu, “SEED-VII: A multimodal dataset of six basic emotions with continuous labels for emotion recognition,” IEEE Transactions on Affective Computing, 2024.

27. J. Wang, Y. Huang, S. Song, B. Wang, J. Su, and J. Ding, “A novel Fourier Adjacency Transformer for advanced EEG emotion recognition,” arXiv preprint arXiv:2503.13465, 2025.

28. Sun, X. Wang, and L. Chen, “ChannelMix-based transformer and convolutional multi-view feature fusion network for unsupervised domain adaptation in EEG emotion recognition,” Expert Systems with Applications, vol. 280, p. 127456, 2025.

29. N. Moghadam, V. Honary, and S. Khoubrouy, “Enhancing neural network performance for medical data analysis through feature engineering,” in Proc. 17th Int. Conf. Signal Process. Commun. Syst. (ICSPCS), 2024, pp. 1–5.

30. W.-B. Jiang, X.-H. Liu, W.-L. Zheng, and B.-L. Lu, “Multimodal adaptive emotion transformer with flexible modality inputs on a novel dataset with continuous labels,” in Proceedings of the 31st ACM International Conference on Multimedia, Ottawa, Canada, 2023, pp. 5975–5984.

31. J. A. Russell, “A circumplex model of affect,” Journal of Personality and Social Psychology, vol. 39, no. 6, p. 1161, 1980.

32. S. Kiranyaz, T. T. Ince, O. Abdeljaber, S. Hammoud, N. E. Ince, and M. Gabbouj, “1D convolutional neural networks and applications: A survey,” Mechanical Systems and Signal Processing, vol. 151, p. 107398, 2021.

33. N. Srivastava, G. Hinton, A. Krizhevsky, I. Sutskever, and R. Salakhutdinov, “Dropout: A simple way to prevent neural networks from overfitting,” Journal of Machine Learning Research, vol. 15, pp. 1929–1958, 2014.

34. R. Kohavi, “A study of cross-validation and bootstrap for accuracy estimation and model selection,” in Proc. Int. Joint Conf. Artif. Intell. (IJCAI), 1995.

35. F. Wang, S.-h. Zhong, J. Peng, J. Jiang, and Y. Liu, “Data augmentation for EEG-based emotion recognition with deep convolutional neural networks,” in Proc. 24th Int. Conf. MultiMedia Modeling (MMM), Bangkok, Thailand, Feb. 5–7, 2018, Part II, pp. 82–93. Springer, 2018.

| No. | Model Architecture | 2 Halves Accuracy % | 2 Halves F1 Score % | 4 Quadrants Accuracy % | 4 Quadrants F1 Score % | 7 Emotions Accuracy % | 7 Emotions F1 Score % |
|---|---|---|---|---|---|---|---|
| 1 | Conv1D → MaxPooling1D → Dropout(0.5) → Flatten → Dense | | | | | | |
| 2 | Conv1D → Dropout(0.25) → MaxPooling1D → Flatten → Dense(128, relu) → Dropout(0.25) → Dense | 84 | | | | | |
| 3 | Conv1D → Dropout(0.5) → MaxPooling1D → Flatten → Dense(128, relu) → Dropout(0.5) → Dense | | | | | | |

| Removed scores () | Original Accuracy % | Original F1 Score % | Augmentation 1 Accuracy % | Augmentation 1 F1 Score % | Augmentation 2 Accuracy % | Augmentation 2 F1 Score % | Gaussian Accuracy % | Gaussian F1 Score % |
|---|---|---|---|---|---|---|---|---|
| None | | | | | | | | |
| 0 | | | | | | | | |
| 0.1 | | | | | | | | |
| 0.2 | | | | | | | | |
| 0.3 | | | | | | | | |
| 0.4 | | | | | | | | |
| 0.5 | | | | | | | | |
| 0.6 | 78 | | | | | | | |
| 0.7 | | | | | | | | |
| 0.8 | 90 | | | | | | | |
| 0.9 | | | | | | | | |
| 0.95 | | | | | | | | |
| 0.99 | | | | | | | | |

| Removed scores () | Original Accuracy % | Original F1 Score % | Augmentation 1 Accuracy % | Augmentation 1 F1 Score % | Augmentation 2 Accuracy % | Augmentation 2 F1 Score % | Gaussian Accuracy % | Gaussian F1 Score % |
|---|---|---|---|---|---|---|---|---|
| None | | | | | | | | |
| 0 | | | | | | | | |
| 0.1 | | | | | | | | |
| 0.2 | | | | | | | | |
| 0.3 | | | | | | | | |
| 0.4 | | | | | | | | |
| 0.5 | | | | | | | | |
| 0.6 | | | | | | | | |
| 0.7 | | | | | | | | |
| 0.8 | | | | | | | | |
| 0.9 | | | | | | | | |
| 0.95 | 63 | | | | | | | |
| 0.99 | | | | | | | | |

| Removed scores () | Original Accuracy % | Original F1 Score % | Augmentation 1 Accuracy % | Augmentation 1 F1 Score % | Augmentation 2 Accuracy % | Augmentation 2 F1 Score % | Gaussian Accuracy % | Gaussian F1 Score % | MAET Accuracy % | MAET F1 Score % |
|---|---|---|---|---|---|---|---|---|---|---|
| None | | | | | | | | | | |
| 0 | | | | | | | | | | |
| 0.1 | | | | | | | | | | |
| 0.2 | | | | | | | | | | |
| 0.3 | | | | | | | | | | |
| 0.4 | | | | | | | | | | |
| 0.5 | | | | | | | | | | |
| 0.6 | | | | | | | | | | |
| 0.7 | | | | | | | | | | |
| 0.8 | | | | | | | | | | |
| 0.9 | | | | | | | | | | |
| 0.95 | | | | | | | | | | |
| 0.99 | | | | | | | | | | |

| Setup | Method | Threshold | Best F1 % | Original F1 % | Relative Improvement % |
|---|---|---|---|---|---|
| Two Halves | Augmentation 2 | 0.99 | 90.22 | 84.33 | +7.0 |
| Four Quadrants | Augmentation 2 | 0.2 | 44.24 | 35.46 | +24.7 |
| Seven Emotions | MAET | 0.9 | 39.89 | 27.95 | +42.7 |
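The improvement column is a relative gain, not a difference in percentage points. For the two-halves setup, for example, the gain is 90.22 − 84.33 = 5.89 points, which is about 7.0% of the original score. The short sketch below reproduces the column from the two F1 columns. The `rows` dictionary simply copies the values from the table.

```python
def relative_improvement(best_f1, original_f1):
    """Relative gain of the best F1 over the original F1, in percent."""
    return 100.0 * (best_f1 - original_f1) / original_f1


# (best F1 %, original F1 %) copied from the summary table above.
rows = {
    "Two Halves": (90.22, 84.33),
    "Four Quadrants": (44.24, 35.46),
    "Seven Emotions": (39.89, 27.95),
}
for setup, (best, orig) in rows.items():
    print(f"{setup}: +{relative_improvement(best, orig):.2f}%")
```

With the two-decimal F1 values as printed, the four-quadrant row evaluates to about 24.76%, slightly above the listed +24.7. This small gap is within what rounding both F1 scores to two decimals can produce.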

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).