Submitted: 12 August 2025

Posted: 15 August 2025


Abstract

Keywords:

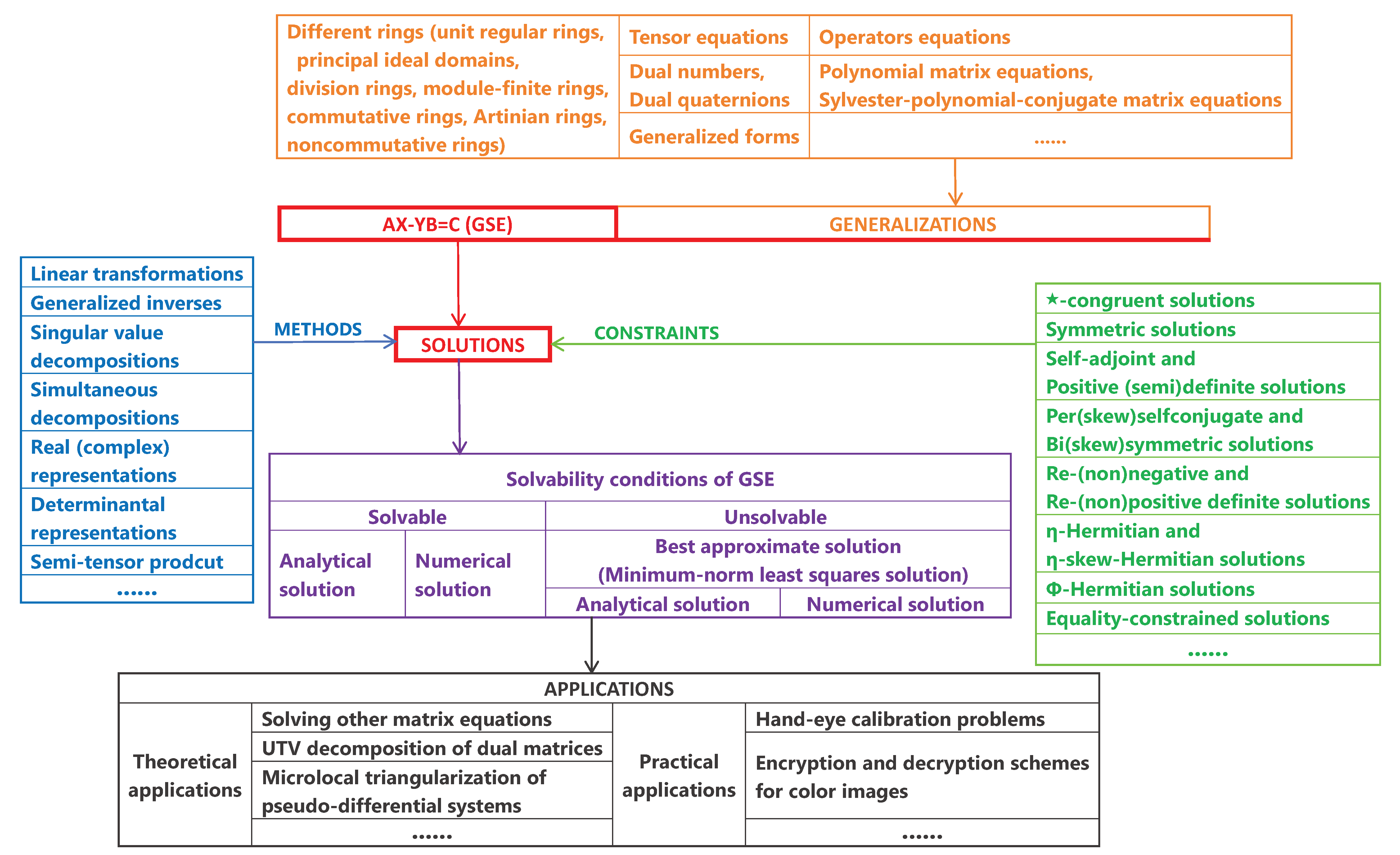

1. Introduction

2. Preliminary

- (1)

- Although some studies do not focus directly on GSE, traces of it are easily detectable in them. We therefore regard such content as an integral part of GSE-related research.

- (2)

- We selected the core literature relevant to this review. Results closely related to the theme are stated rigorously as theorems, while less relevant conclusions are summarized briefly in narrative form. The proofs of these theorems are omitted.

- (3)

- The remarks in this paper contain comments and suggestions on the relevant results, covering both previous researchers' views and our own reflections, questions, and prospects.

3. Roth’s Equivalence Theorem

4. Different Methods on GSE

4.1. Method by Linear Transformations and Subspace Dimensions

- Step 1:

-

Define byThen, the condition (4) yields

- Step 2:

-

LetThen,LetFor , defineThen So, .

- Step 3:

- Since with , there also exists such in . Therefore, , i.e., (3) holds. □

- (1)

- In [80], Flanders and Wimmer remarked that, with small modifications, the above proof also yields RET for rectangular matrices A, B, and C.

- (2)
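Over a field, two matrices of the same size are equivalent exactly when they have the same rank, so RET can be checked numerically in the rectangular setting mentioned in (1). The following NumPy sketch assumes Eq. (1) is AX − YB = C with A ∈ ℝ^{m×p} and B ∈ ℝ^{q×n}. It compares the rank test with a least-squares solve of the vectorized equation and, when a solution exists, builds the unit block-triangular matrices that carry diag(A, B) to the block matrix with C in its upper-right corner. The helper names are ours, and the rank decisions rely on NumPy's default floating-point tolerance.

```python
import numpy as np

def gse_operator(A, B):
    """Matrix of (X, Y) -> vec(AX - YB) with column-major vec:
    vec(AX) = (I_n kron A) vec(X) and vec(YB) = (B^T kron I_m) vec(Y)."""
    m, n = A.shape[0], B.shape[1]
    return np.hstack([np.kron(np.eye(n), A), -np.kron(B.T, np.eye(m))])

def solve_gse(A, B, C):
    """Minimum-norm least-squares pair (X, Y) for AX - YB = C."""
    (m, p), (q, n) = A.shape, B.shape
    z, *_ = np.linalg.lstsq(gse_operator(A, B), C.reshape(-1, order="F"), rcond=None)
    X = z[: p * n].reshape((p, n), order="F")
    Y = z[p * n:].reshape((m, q), order="F")
    return X, Y

def roth_blocks(A, B, C):
    """The two block matrices compared in RET."""
    (m, p), (q, n) = A.shape, B.shape
    M_C = np.block([[A, C], [np.zeros((q, p)), B]])
    M_0 = np.block([[A, np.zeros((m, n))], [np.zeros((q, p)), B]])
    return M_C, M_0

def roth_rank_test(A, B, C):
    """Over a field, same-size matrices are equivalent iff their ranks agree."""
    M_C, M_0 = roth_blocks(A, B, C)
    return np.linalg.matrix_rank(M_C) == np.linalg.matrix_rank(M_0)

def equivalence_from_solution(A, B, X, Y):
    """If AX - YB = C, then P @ M_0 @ Q == M_C for these P and Q."""
    (m, p), (q, n) = A.shape, B.shape
    P = np.block([[np.eye(m), -Y], [np.zeros((q, m)), np.eye(q)]])
    Q = np.block([[np.eye(p), X], [np.zeros((n, p)), np.eye(n)]])
    return P, Q

rng = np.random.default_rng(0)
A, B = rng.standard_normal((3, 2)), rng.standard_normal((2, 4))
C = A @ rng.standard_normal((2, 4)) - rng.standard_normal((3, 2)) @ B  # consistent
X, Y = solve_gse(A, B, C)
P, Q = equivalence_from_solution(A, B, X, Y)
M_C, M_0 = roth_blocks(A, B, C)
C_bad = rng.standard_normal((3, 4))  # generically inconsistent
```

For a generic right-hand side such as `C_bad`, both the rank test and the least-squares residual report that no solution exists.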

4.2. Method by Generalized Inverses
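A classical generalized-inverse criterion, due to Baksalary and Kala [6], states that AX − YB = C is consistent if and only if (I − AA⁻)C(I − B⁻B) = 0, where A⁻ and B⁻ are inner inverses. The NumPy sketch below uses Moore-Penrose inverses and the particular solution X = A⁺C, Y = −(I − AA⁺)CB⁺; substituting gives AX − YB − C = −(I − AA⁺)C(I − B⁺B), so this pair solves the equation whenever the criterion holds. The function name and the relative tolerance are our own choices.

```python
import numpy as np

def baksalary_kala(A, B, C, rtol=1e-10):
    """Consistency test and one particular solution of AX - YB = C."""
    Ap, Bp = np.linalg.pinv(A), np.linalg.pinv(B)
    P_A = np.eye(A.shape[0]) - A @ Ap   # orthogonal projector onto range(A)^perp
    Q_B = np.eye(B.shape[1]) - Bp @ B   # orthogonal projector onto null(B)
    consistent = np.linalg.norm(P_A @ C @ Q_B) <= rtol * max(1.0, np.linalg.norm(C))
    X = Ap @ C                          # X = A^+ C
    Y = -P_A @ C @ Bp                   # Y = -(I - A A^+) C B^+
    return bool(consistent), X, Y

rng = np.random.default_rng(0)
A, B = rng.standard_normal((3, 2)), rng.standard_normal((2, 4))
C = A @ rng.standard_normal((2, 4)) - rng.standard_normal((3, 2)) @ B
ok, X, Y = baksalary_kala(A, B, C)
```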

4.3. Method by Singular Value Decompositions

4.4. Method by Simultaneous Decompositions

4.5. Method by Real (Complex) Representations

4.6. Method by Determinantal Representations

- (1)

-

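For $A=(a_{ij})\in\mathbb{H}^{n\times n}$, the $i$-th row determinant of $A$ is defined by
$$\operatorname{rdet}_i A=\sum_{\sigma\in S_n}(-1)^{n-r}\left(a_{i\,i_{k_1}}a_{i_{k_1}i_{k_1+1}}\cdots a_{i_{k_1+l_1}\,i}\right)\cdots\left(a_{i_{k_r}i_{k_r+1}}\cdots a_{i_{k_r+l_r}\,i_{k_r}}\right),$$
where $\sigma=(i\,i_{k_1}\,i_{k_1+1}\cdots i_{k_1+l_1})(i_{k_2}\,i_{k_2+1}\cdots i_{k_2+l_2})\cdots(i_{k_r}\,i_{k_r+1}\cdots i_{k_r+l_r})$, $i_{k_2}<i_{k_3}<\cdots<i_{k_r}$, and $i_{k_t}<i_{k_t+s}$ for $t=2,\ldots,r$ and $s=1,\ldots,l_t$.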

- (2)

-

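For $A=(a_{ij})\in\mathbb{H}^{n\times n}$, the $j$-th column determinant of $A$ is defined by
$$\operatorname{cdet}_j A=\sum_{\tau\in S_n}(-1)^{n-r}\left(a_{j_{k_r}j_{k_r+l_r}}\cdots a_{j_{k_r+1}j_{k_r}}\right)\cdots\left(a_{j\,j_{k_1+l_1}}\cdots a_{j_{k_1+1}j_{k_1}}a_{j_{k_1}j}\right),$$
where $\tau=(j_{k_r+l_r}\cdots j_{k_r+1}\,j_{k_r})\cdots(j_{k_2+l_2}\cdots j_{k_2+1}\,j_{k_2})(j_{k_1+l_1}\cdots j_{k_1+1}\,j_{k_1}\,j)$ with $j_{k_2}<j_{k_3}<\cdots<j_{k_r}$, and $j_{k_t}<j_{k_t+s}$ for $t=2,\ldots,r$ and $s=1,\ldots,l_t$.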

- (1)

- Eq. (22) is solvable;

- (2)

- ;

- (3)

- ;

- (1)

-

The restricted Eq. (25) is solvable if and only ifin which case,whereand , , , , , and are arbitrary matrices over with appropriate dimensions.

- (2)

-

Let , and be full column rank matrices such thatDenote and . If Eq. (25) is solvable, thenwith is the j-th column ofand is the i-th row ofwhere , , , , and and are arbitrary matrices over with appropriate orders.

4.7. Method by Semi-Tensor Products

- (1)

- It applies to any two matrices;

- (2)

- It has certain commutative properties;

- (3)

- It inherits all properties of the conventional matrix product;

- (4)

- It enables easy expression of multilinear functions (mappings);
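To make properties (1), (3), and (4) concrete, the following minimal NumPy sketch implements Cheng's left semi-tensor product in its commonly used lcm-based form: for A ∈ ℝ^{m×n} and B ∈ ℝ^{p×q}, A ⋉ B = (A ⊗ I_{t/n})(B ⊗ I_{t/p}) with t = lcm(n, p). The function name `stp` is ours. When n = p it reduces to the ordinary product, and for two column vectors it coincides with the Kronecker product, which is what makes multilinear maps easy to write down.

```python
import numpy as np
from math import lcm

def stp(A, B):
    """Left semi-tensor product A |x B for 2-D arrays of any compatible-or-not sizes."""
    n, p = A.shape[1], B.shape[0]
    t = lcm(n, p)
    return np.kron(A, np.eye(t // n)) @ np.kron(B, np.eye(t // p))

rng = np.random.default_rng(5)
A, B = rng.standard_normal((2, 3)), rng.standard_normal((3, 4))
x, y = np.array([[1.0], [2.0]]), np.array([[3.0], [4.0], [5.0]])
P, Q, R = rng.standard_normal((2, 3)), rng.standard_normal((2, 5)), rng.standard_normal((4, 2))
print(stp(P, Q).shape)  # column count 3 and row count 2 do not match, yet the product exists
```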

- (1)

- Then, Eq. (26) is consistent if and only if

- (2)

-

LetThen,

- (3)

-

If satisfiesthen

5. Constrained Solutions of GSE

5.1. Chebyshev Solutions and -Solutions

5.2. ★-Congruent Solutions

5.3. (Minimum-norm least-squares) symmetric solutions

- (1)

-

LetThen if and only ifin which case,

- (2)

-

If satisfiesthen is unique and

- (3)

-

LetThen,

- (4)

-

If satisfiesthen is unique and

5.4. Self-Adjoint and Positive (Semi)Definite Solutions

- (1)

- (2)

- (3)

- (4)

- (5)

- (6)

- (7)

5.5. Per(Skew)Symmetric and Bi(Skew)Symmetric Solutions

- (1)

- (2)

- (3)

- (4)

- (1)

- (2)

- (3)

- (4)

5.6. Maximal and minimal ranks of the general solution

- (1)

- Then,

- (2)

-

LetThen,

- (3)

-

LetThen,

5.7. Re-(non)negative and Re-(non)positive definite solutions

- (1)

- X is Re-positive definite if and only if

- (2)

- X is Re-negative definite if and only if

- (3)

- X is Re-nonnegative definite if and only if

- (4)

- X is Re-nonpositive definite if and only if

- (5)

- Y is Re-positive definite if and only if

- (6)

- Y is Re-negative definite if and only if

- (7)

- Y is Re-nonnegative definite if and only if

- (8)

- Y is Re-nonpositive definite if and only if
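For reference, a square complex matrix X is Re-positive definite if Re(x*Xx) > 0 for all nonzero x, i.e., its Hermitian part H(X) = (X + X*)/2 is positive definite; the other three notions replace > 0 by < 0, ≥ 0, and ≤ 0. The helper below (a hypothetical name, with an assumed tolerance) checks a candidate solution X or Y against these definitions through the eigenvalues of H(X).

```python
import numpy as np

def re_definiteness(X, tol=1e-12):
    """Classify a square matrix by the spectrum of H = (X + X^*)/2,
    using Re(x^* X x) = x^* H x."""
    H = (X + X.conj().T) / 2
    w = np.linalg.eigvalsh(H)  # real eigenvalues in ascending order
    return {
        "Re-positive definite": bool(w[0] > tol),
        "Re-negative definite": bool(w[-1] < -tol),
        "Re-nonnegative definite": bool(w[0] >= -tol),
        "Re-nonpositive definite": bool(w[-1] <= tol),
    }

# Not symmetric, but its Hermitian part is the identity.
print(re_definiteness(np.array([[1.0, 5.0], [-5.0, 1.0]])))
```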

5.8. η-Hermitian and η-skew-Hermitian solutions

- (1)

- Eq. (46) has an η-Hermitian solution pair ;

- (2)

- and ;

- (3)

- and .

- (1)

- The statement (47) holds.

- (2)

- There exist the matrices and over such that

- (3)

- There exist the matrices and over such that

- (1)

-

There exists a matrix pair such thatif and only if there exist the matrices , , and such that

- (2)

-

There exists a matrix pair such thatif and only if there exist the matrices , , and such that

- (1)

- Eq. (46) has an η-skew-Hermitian solution pair ;

- (2)

- and ;

- (3)

- and ;

5.9. ϕ-Hermitian solutions

- (1)

-

We call ϕ an anti-endomorphism if for any $a,b\in\mathbb{H}$, ϕ satisfies
$$\phi(ab)=\phi(b)\phi(a),\qquad \phi(a+b)=\phi(a)+\phi(b).$$
An anti-endomorphism ϕ is called an involution if $\phi^2$ is the identity map.

- (2)

-

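Let ϕ be a nonzero involution. Then ϕ can be represented as a matrix in $\mathbb{R}^{4\times 4}$ with respect to the basis $\{1,\mathbf{i},\mathbf{j},\mathbf{k}\}$, i.e.,
$$\phi=\begin{pmatrix}1&0\\0&T\end{pmatrix},$$
where either $T=-I_3$ (in which case ϕ is called a standard involution), or $T\in\mathbb{R}^{3\times 3}$ is an orthogonal symmetric matrix with the eigenvalues $\{1,1,-1\}$ (in which case ϕ is called a nonstandard involution).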

- (3)

-

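Let ϕ be a nonstandard involution and $A=(a_{ij})\in\mathbb{H}^{m\times n}$. Define
$$A_\phi=\big(\phi(a_{ji})\big)\in\mathbb{H}^{n\times m}.$$
If $A=A_\phi$ with $m=n$, then A is called a ϕ-Hermitian matrix.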
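Definitions (1) to (3) can be checked numerically by storing quaternions as real 4-vectors [a, b, c, d] = a + bi + cj + dk. The choice T = diag(−1, 1, 1), i.e., ϕ(q) = −i q̄ i, is our illustrative example of a nonstandard involution (T is orthogonal and symmetric with eigenvalues 1, 1, −1); the helper names are hypothetical. The sketch verifies that ϕ reverses products and squares to the identity, and exhibits a matrix that is Hermitian in the usual sense but not ϕ-Hermitian.

```python
import numpy as np

def qmul(p, q):
    """Hamilton product of quaternions stored as [a, b, c, d]."""
    a1, b1, c1, d1 = p
    a2, b2, c2, d2 = q
    return np.array([
        a1 * a2 - b1 * b2 - c1 * c2 - d1 * d2,
        a1 * b2 + b1 * a2 + c1 * d2 - d1 * c2,
        a1 * c2 - b1 * d2 + c1 * a2 + d1 * b2,
        a1 * d2 + b1 * c2 - c1 * b2 + d1 * a2,
    ])

# Illustrative nonstandard involution: phi(a + bi + cj + dk) = a - bi + cj + dk.
PHI = np.diag([1.0, -1.0, 1.0, 1.0])

def phi(q):
    return PHI @ q

def phi_transpose(A):
    """A_phi = (phi(a_ji)) for a quaternion matrix stored with shape (m, n, 4)."""
    return np.transpose(A, (1, 0, 2)) @ PHI.T

def is_phi_hermitian(A, tol=1e-12):
    return A.shape[0] == A.shape[1] and np.allclose(A, phi_transpose(A), atol=tol)

rng = np.random.default_rng(6)
p, q = rng.standard_normal(4), rng.standard_normal(4)
Bq = rng.standard_normal((3, 3, 4))
j = np.array([0.0, 0.0, 1.0, 0.0])
H = np.zeros((2, 2, 4))
H[0, 1], H[1, 0] = j, -j  # Hermitian in the usual (conjugate-transpose) sense
```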

- (1)

- The system (50) has a solution such that .

- (2)

- The following rank equalities hold:

- (3)

- The following equations hold:

- (1)

-

When , Theorem 26 yields the result for the equation which can be regarded as Eq. (1) under the constraint that X is ϕ-Hermitian, i.e.,

- (2)

-

Note that ϕ-Hermitian matrices are a generalization of Hermitian matrices. In [119], Theorems 5.1, 5.2, He and Wang have investigated the following problem over :which is clearly similar to the problem (52).

- (3)

- By the same method as in Remark 30, we can also discuss the following problem:

5.10. Equality-constrained solutions

- (1)

- (2)

- The following rank equations hold:

- (3)

- The following equations hold:

6. Various Generalizations of GSE

6.1. Generalizing RET Over Different Rings

6.1.1. Generalizing RET over unit regular rings

- (1)

- M has an inner inverse with the form of ;

- (2)

- has a solution pair ;

- (3)

- for all and ;

- (4)

- , where and ;

- (5)

- , where are invertible;

- (6)

- for all and ;

- (7)

- is a reflexive inverse of M.

- (5a)

- ;

- (5b)

- .

6.1.2. Generalizing RET over Principal Ideal Domains

- (1)

-

Let , , and . Then, the matrix equationis consistent if and only ifare equivalent.

- (2)

-

Let for . Then,are equivalent if and only if there exist such thatfor .
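For 1 × 1 matrices over the principal ideal domain ℤ, item (1), read (as we assume) for AX − YB = C, can be checked by hand. Both block matrices [[a, c], [0, b]] and [[a, 0], [0, b]] have determinant ab, and one checks that their Smith normal forms coincide exactly when their first invariant factors, the gcds of all entries, agree; that is, gcd(a, b, c) = gcd(a, b). On the other side, ax − yb = c is solvable exactly when gcd(a, b) divides c. The sketch below (hypothetical helper names) solves the scalar equation with the extended Euclidean algorithm and confirms by brute force that the two tests agree.

```python
from math import gcd

def ext_gcd(a, b):
    """Return (g, s, t) with s*a + t*b == g == gcd(a, b) >= 0."""
    old_r, r = a, b
    old_s, s = 1, 0
    old_t, t = 0, 1
    while r != 0:
        q = old_r // r
        old_r, r = r, old_r - q * r
        old_s, s = s, old_s - q * s
        old_t, t = t, old_t - q * t
    if old_r < 0:
        old_r, old_s, old_t = -old_r, -old_s, -old_t
    return old_r, old_s, old_t

def solve_scalar_gse(a, b, c):
    """Integers (x, y) with a*x - y*b == c, or None if no solution exists."""
    g, s, t = ext_gcd(a, b)
    if g == 0:  # a == b == 0
        return (0, 0) if c == 0 else None
    if c % g != 0:
        return None
    k = c // g
    return s * k, -t * k

def roth_equivalent(a, b, c):
    """Compare first invariant factors of [[a, c], [0, b]] and [[a, 0], [0, b]]."""
    return gcd(gcd(a, b), c) == gcd(a, b)

for a in range(-6, 7):
    for b in range(-6, 7):
        for c in range(-6, 7):
            assert (solve_scalar_gse(a, b, c) is not None) == roth_equivalent(a, b, c)
```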

6.1.3. Generalizing RET over Division and Module-Finite Rings

6.1.4. Generalizing RET over Commutative Rings

- (i)

- , , and are unknown;

- (ii)

- for , the symbol denotes the matrix transpose and, for the complex number field, also the matrix conjugate transpose ,

- (i)

- of complex matrix equations, in which and is the complex conjugate of X,

- (ii)

- of quaternion matrix equations, in which and is the quaternion conjugate transpose of X,

6.1.5. Generalizing RET over Artinian and Noncommutative Rings

- (1)

- A semisimple Artinian ring has the equivalence property.

- (2)

- An Artinian principal ideal ring has the equivalence property.

6.2. Generalizing RET to a rank minimization problem

6.3. GSE over Dual Numbers and Dual Quaternions

- (1)

- Eq. (1) has a solution pair and ;

- (2)

- and ;

- (3)

- The following rank equations hold:
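Writing A = A₀ + A₁ε and similarly for B, C, X, Y, with ε² = 0, the dual equation AX − YB = C splits into a standard part A₀X₀ − Y₀B₀ = C₀ and an infinitesimal part A₀X₁ + A₁X₀ − Y₀B₁ − Y₁B₀ = C₁. The sketch below, for real dual matrices and with hypothetical helper names, stacks both parts into one block lower-triangular linear system and solves it by least squares. It only illustrates this splitting; it is not the rank-based criterion in (3), and it assumes Eq. (1) is AX − YB = C.

```python
import numpy as np

def gse_matrix(A, B):
    """Matrix of (X, Y) -> vec(AX - YB), column-major vec."""
    m, n = A.shape[0], B.shape[1]
    return np.hstack([np.kron(np.eye(n), A), -np.kron(B.T, np.eye(m))])

def solve_dual_gse(A0, A1, B0, B1, C0, C1):
    """Least-squares (X0, X1, Y0, Y1) for the standard and infinitesimal parts."""
    (m, p), (q, n) = A0.shape, B0.shape
    K0 = gse_matrix(A0, B0)  # acts on (X0, Y0) in the standard part and on (X1, Y1) below
    K1 = gse_matrix(A1, B1)  # couples (X0, Y0) into the infinitesimal part
    big = np.block([[K0, np.zeros_like(K0)], [K1, K0]])
    rhs = np.concatenate([C0.reshape(-1, order="F"), C1.reshape(-1, order="F")])
    z, *_ = np.linalg.lstsq(big, rhs, rcond=None)
    nx, ny = p * n, m * q

    def unpack(v):
        return v[:nx].reshape((p, n), order="F"), v[nx:].reshape((m, q), order="F")

    X0, Y0 = unpack(z[: nx + ny])
    X1, Y1 = unpack(z[nx + ny:])
    return X0, X1, Y0, Y1

rng = np.random.default_rng(4)
A0, A1 = rng.standard_normal((3, 2)), rng.standard_normal((3, 2))
B0, B1 = rng.standard_normal((2, 4)), rng.standard_normal((2, 4))
X0t, X1t = rng.standard_normal((2, 4)), rng.standard_normal((2, 4))
Y0t, Y1t = rng.standard_normal((3, 2)), rng.standard_normal((3, 2))
C0 = A0 @ X0t - Y0t @ B0
C1 = A0 @ X1t + A1 @ X0t - Y0t @ B1 - Y1t @ B0
X0, X1, Y0, Y1 = solve_dual_gse(A0, A1, B0, B1, C0, C1)
```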

6.4. Linear Operator Equations on Hilbert spaces

- (1)

- If the spectra of A and B are contained in the open right half-plane and the open left half-plane, respectively, then the operator Eq. (1) has the solution pair

- (2)

-

Suppose that A and B are Hermitian operators such thatwhere α and β are eigenvalues of A and B, respectively. Assume that for an absolutely integrable function f defined on , its Fourier transform satisfieswhere . Then, the operator Eq. (1) has the solution pair
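A finite-dimensional illustration of item (1), assuming Eq. (1) is AX − YB = C: any solution X₀ of the Sylvester equation AX − XB = C gives the pair (X, Y) = (X₀, X₀), and when the spectra of A and B lie in the open right and left half-planes, respectively, X₀ = ∫₀^∞ e^{−tA} C e^{tB} dt (see Bhatia and Rosenthal [14]). Whether the omitted formula in (1) has exactly this form is our assumption. The NumPy-only sketch below compares a composite Simpson approximation of the truncated integral with a direct Kronecker solve; the Taylor-series exponential is adequate only because the step size is small, and all names are hypothetical.

```python
import numpy as np

def expm_small(M, terms=20):
    """Truncated Taylor series of exp(M); accurate only for small ||M||."""
    E = np.eye(M.shape[0])
    term = np.eye(M.shape[0])
    for k in range(1, terms):
        term = term @ M / k
        E = E + term
    return E

def sylvester_kron(A, B, C):
    """Unique X with AX - XB = C, via (I kron A - B^T kron I) vec(X) = vec(C)."""
    m, n = C.shape
    K = np.kron(np.eye(n), A) - np.kron(B.T, np.eye(m))
    return np.linalg.solve(K, C.reshape(-1, order="F")).reshape((m, n), order="F")

def integral_solution(A, B, C, T=20.0, N=4000):
    """Composite Simpson rule for int_0^T exp(-tA) C exp(tB) dt (N must be even)."""
    h = T / N
    EA, EB = expm_small(-h * A), expm_small(h * B)
    L, R = np.eye(A.shape[0]), np.eye(B.shape[0])  # exp(-t_k A), exp(t_k B)
    total = np.zeros(C.shape)
    for k in range(N + 1):
        w = 1.0 if k in (0, N) else (4.0 if k % 2 else 2.0)
        total += w * (L @ C @ R)
        L, R = L @ EA, R @ EB
    return total * h / 3.0

A = np.array([[2.0, 1.0, 0.0], [0.0, 1.5, 0.5], [0.0, 0.0, 1.0]])  # spectrum {2, 1.5, 1}
B = np.array([[-1.0, 0.0], [0.3, -2.0]])                          # spectrum {-1, -2}
C = np.array([[1.0, 2.0], [0.0, 1.0], [-1.0, 0.5]])
X0 = sylvester_kron(A, B, C)
X_int = integral_solution(A, B, C)
```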

6.5. Tensor Equations

- (1)

-

Theorem 48 is a direct corollary of [120], Theorem 5.1, which establishes the solvability conditions and the general solution for the following quaternion tensor equation:where and are unknown and other tensors are given over .

- (2)

-

Inspired by the transformation between tensors and matrices over (see [16], Definition 2.8), He et al. [117,120] defined an analogous transformation over , i.e., the transformation f is a map defined aswhere the components of A are given by[120], Lemma 2.2 shows that the transformation f is a bijection satisfyingfor and . The transformation f ingeniously bridges quaternion tensors under the Einstein product and quaternion matrices under the ordinary product. By virtue of its isomorphism property, f serves as a powerful tool for studying problems related to quaternion tensors under the Einstein product.

- (2)
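A real-valued sketch of the unfolding idea in (2): with a row-major ordering of multi-indices (our assumption; [16] and [120] fix their own ordering, and any consistent choice works), reshaping a tensor into a matrix turns the Einstein product into the ordinary matrix product, and the reshape is invertible. Quaternion entries are not handled here, and the helper names are hypothetical.

```python
import numpy as np

def einstein_product(A, B, N):
    """A *_N B: contract the last N modes of A with the first N modes of B."""
    return np.tensordot(A, B, axes=N)

def unfold(T, N):
    """Matrix whose rows index the first N modes of T (row-major order)."""
    rows = int(np.prod(T.shape[:N]))
    return T.reshape(rows, -1)

def fold(M, row_shape, col_shape):
    """Inverse of unfold for a known tensor shape."""
    return M.reshape(tuple(row_shape) + tuple(col_shape))

rng = np.random.default_rng(7)
A = rng.standard_normal((2, 3, 4, 5))
B = rng.standard_normal((4, 5, 3, 2))
AB = einstein_product(A, B, 2)  # shape (2, 3, 3, 2)
```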

- (1)

-

Then,where is arbitrary with appropriate dimensions.

- (2)

-

If satisfiesthen is unique and

6.6. Polynomial matrix equations

6.6.1. By the divisibility of polynomials

6.6.2. By skew-prime polynomial matrices

6.6.3. By the realization of matrix fraction descriptions

- (1)

-

Under the hypotheses of Theorem 52, letIn terms of [66], Lemma 2.2, Emre and Silverman have shown thatwhere . This implies that to characterize , it is sufficient to characterize .

- (2)

-

In [66], Section 3, Eq. (71) is further generalized to the case where Q is a general polynomial matrix. In fact, for , there exist unimodular polynomial matrices and such thatwhere is the nonsingular polynomial matrix. LetThen,

6.6.4. By the Unilateral Polynomial Matrix Equation

- (1)

- and are relatively left prime;

- (2)

- is nonsingular and satisfies that is strictly proper;

- (3)

- is the right coprime factorization of , where is row reduced.

6.6.5. By the equivalence of block polynomial matrices

6.6.6. By Jordan Systems of Polynomial Matrices

- (1)

- Eq. (80) is consistent;

- (2)

- There exists a pair of Jordan systems of with property for each ;

- (3)

- All pairs of Jordan systems of have property for each .

6.6.7. By linear matrix equations

- (1)

- Let . If Eq. (83) is solvable, then .

- (2)

-

Let . There exists satisfying if and only ifwhere

- (3)

-

Let . There exists satisfying if and only ifwhere , , and .

- (1)

-

For , letand . Then,

- (2)

- The explicit solutions to Eqs. (85) and (86) have been studied in [131,298], which also serve as a starting point for Subsection 6.7 of this paper.

- (3)

- Moreover, Chen and Tian [22] mentioned that Theorem 58 still holds when the field is extended to a commutative ring with identity.

6.6.8. By Root Functions of Polynomial Matrices

- (1)

- For each satisfying , if is a right root function of at of order s and is a left root function of at of order t, then has a zero at of order at least ;

- (2)

- If is a right root function of at zero of order and is a left root function of at zero of order , then has a zero of order at least .

6.7. Sylvester-Polynomial-Conjugate Matrix Equations

- (1)

- [312], Theorem 9 guarantees the existence of the polynomial matrix in Theorem 61.

- (2)

-

TakingEq. (91) over reduces towhere , , and . Clearly, Theorem 61 is also a generalization of RET over .

- (3)

-

In [310], Theorem 1, Wu et al. characterized the homogeneous case of Eq. (91) more specifically via a pair of right coprime polynomial matrices. Moreover, in [310], Remark 4, they utilized the same method to discuss a more general form of Eq. (91), i.e.,where are unknown and others are given.

- (4)

- It can be observed that [310], Lemmas 11 and 12 are crucial for proving Theorem 61 and [312], Theorem 1. However, [310], Lemmas 11 and 12 provide only necessary conditions for left and right coprimeness, respectively. We therefore think that the converses of these two lemmas deserve investigation.

- (5)

- (i)

- for , , and ;

- (ii)

- for , , , and ;

- (iii)

- for , , , and .

- (iv)

- for any ,

- (v)

- for any ,

- (vi)

- for any .

6.8. Generalized forms of GSE

7. Iterative Algorithms

- (1)

- In 1984, Ziętak [345], Section 3 proposed an algorithm to compute the -solutions of Eq. (5) over using [345], Theorem 2.3. In the same period, by analogy with Algorithm R [343] for a nonlinear matrix equation, Ziętak [344] devised Algorithm T. Using this algorithm, [344], Theorems 5.2 and 5.3 yield a Chebyshev solution of Eq. (5) under conditions (29) and (28), respectively.

- (2)

- (3)

- (4)

- (I)

- (II)

-

The condition number is an important topic in numerical analysis: it characterizes the worst-case sensitivity of a problem to perturbations in the input data. A large condition number indicates an ill-conditioned problem. Consider the following matrix equation:where X and Y are unknown.

- (i)

- (ii)

- (iii)

- In 2013, Diao et al. [52] developed the small sample statistical condition estimation algorithm to evaluate the normwise, mixed, and componentwise condition numbers of Eq. (108) over . In [52], they also investigated the effective condition number for Eq. (108) and derived sharp perturbation bounds using this condition number.

- (III)

- 1.

- In 2010, Dehghan and Hajarian [49] presented an iterative algorithm for computing the generalized bisymmetric solutions of the generalized coupled Sylvester matrix equation over :where X and Y are unknown generalized bisymmetric matrices.

- (IV)

- (V)

- In 2018, inspired by [128,338], Lv and Ma [194], Section 3 proposed a parametric iterative algorithm for Eq. (108) over . Moreover, in [194], Section 4, they developed an accelerated iterative algorithm based on this parametric approach. Note that Ref. [338] is a monograph on iterative algorithms for constrained solutions of matrix equations.

- (VI)

- Interestingly, in 2024, Ma et al. [195] proposed a Newton-type splitting iterative method for the coupled Sylvester-like absolute value equation :where X and Y are unknown. Here, means that each component of a matrix A is absolute-valued.

- (VII)

- (A)

-

In 2005–2006, using the hierarchical identification principle, Ding and Chen [53,54] presented a large family of iterative methods for the more general form of Eq. (5) over , i.e.,where are unknown. These iterative methods subsume the well-known Jacobi and Gauss-Seidel iterations. Subsequent scholars have conducted more extensive research on numerical algorithms for Eq. (110).

- (a)

- (b)

- (c)

- (d)

- (e)

- In 2017, based on the Hestenes-Stiefel version of the biconjugate residual (BCR) algorithm, Hajarian [105] solved the generalized Sylvester matrix equationover with the generalized reflexive solutions . In 2018, Lv and Ma [193] introduced another Hestenes-Stiefel version of BCR method for computing the centrosymmetric or anti-centrosymmetric solutions of Eq. (110) over .

- (f)

- In 2018, inspired by [208], Sheng [234] proposed a relaxed gradient-based iterative (RGI) algorithm to solve Eq. (108), and further generalized this algorithm to Eq. (110). Moreover, numerical examples in [234] demonstrate that the RGI algorithm outperforms the iterative algorithm in [54] in terms of convergence speed, elapsed time, and number of iterations.

- (h)

-

In 2018, Hajarian [106] extended the Lanczos version of the BCR algorithm to find the symmetric solutions of the matrix equation over :

- (B)

- In 2009, from an optimization perspective, Zhou et al. [340] developed a novel iterative method for solving Eq. (110) over and its more general form, i.e.,with unknown , which contains iterative methods in [53,54] as special cases. In 2015, by extending the generalized product biconjugate gradient algorithms, Hajarian [102] gave four effective matrix algorithms for the coupled matrix equation over :where are unknown.

- (C)

- In 2011, Wu et al. [311] constructed an iterative algorithm to solve the coupled Sylvester-conjugate matrix equation over :where are unknown. In 2021, inspired by [311], Yan and Ma [328] proposed an iterative algorithm for the generalized Hamiltonian solutions of the generalized coupled Sylvester-conjugate matrix equations over :where , and and () are unknown generalized Hamiltonian matrices.

- (D)

- In 2015, inspired by [19,147], Hajarian [101] obtained an iterative method for the coupled Sylvester-transpose matrix equations over :with unknown X and Y, by developing the biconjugate A-orthogonal residual and the conjugate A-orthogonal residual squared methods. Based on this developed method, Hajarian [101] also considered the coupled periodic Sylvester matrix equations over :where and are unknown periodic matrices with a period.

- (E)

-

Discrete-time periodic matrix equations are an important tool for analyzing and designing periodic systems [15]. More related studies are as follows:

- (a)

- In 2017, Hajarian [104] introduced a generalized conjugate direction method for solving the general coupled Sylvester discrete-time periodic matrix equations over :where and are unknown periodic matrices with a period.

- (b)

- In 2022, Ma and Yan [196] proposed a modified conjugate gradient algorithm for solving the general discrete-time periodic Sylvester matrix equations over :where and are unknown periodic matrices of period T.

- (F)

- Interestingly, in 2014, Dehghani-Madiseh and Dehghan [51] presented the generalized interval Gauss-Seidel iteration method for the outer estimation of the AE-solution set of the interval generalized Sylvester matrix equation over :where () and () are unknown interval matrices.

- (H)

- In 2018, Hajarian [107] established the biconjugate residual algorithm for solving the matrix equation over :where X and Y are the unknown generalized reflexive and anti-reflexive matrices, respectively.

- (I)
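As a minimal point of reference for the gradient-type methods surveyed above, the sketch below runs plain gradient descent on f(X, Y) = ½‖AX − YB − C‖_F², assuming Eq. (1) is AX − YB = C. It is in the spirit of the gradient-based iterations of Ding and Chen [53,54] but is not their exact algorithm or convergence condition: the step μ = 1/(‖A‖₂² + ‖B‖₂²) is our choice, justified by ‖AX − YB‖_F ≤ (‖A‖₂² + ‖B‖₂²)^{1/2}‖(X, Y)‖_F. The test matrices have orthonormal columns and rows so that the iteration converges in a few dozen steps; for ill-conditioned A and B plain gradient descent can be very slow, which is one motivation for the accelerated and relaxed variants above.

```python
import numpy as np

def gradient_gse(A, B, C, mu=None, tol=1e-10, max_iter=10_000):
    """Gradient descent on 0.5 * ||AX - YB - C||_F^2, started from zero."""
    (m, p), (q, n) = A.shape, B.shape
    if mu is None:
        # At most 1/L, where L is the Lipschitz constant of the gradient.
        mu = 1.0 / (np.linalg.norm(A, 2) ** 2 + np.linalg.norm(B, 2) ** 2)
    X, Y = np.zeros((p, n)), np.zeros((m, q))
    for k in range(max_iter):
        R = C - A @ X + Y @ B  # residual of AX - YB = C
        if np.linalg.norm(R) <= tol * np.linalg.norm(C):
            return X, Y, k
        X = X + mu * A.T @ R   # step along -grad_X f = A^T R
        Y = Y - mu * R @ B.T   # step along -grad_Y f = -R B^T
    return X, Y, max_iter

rng = np.random.default_rng(2)
A = np.linalg.qr(rng.standard_normal((3, 2)))[0]    # orthonormal columns
B = np.linalg.qr(rng.standard_normal((4, 2)))[0].T  # orthonormal rows
C = A @ rng.standard_normal((2, 4)) - rng.standard_normal((3, 2)) @ B
X, Y, iters = gradient_gse(A, B, C)
```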

8. Applications to GSE

8.1. Theoretical Applications

8.1.1. Solvability of Matrix Equations

8.1.2. UTV Decomposition of Dual Matrices

8.1.3. Microlocal Triangularization of Pseudo-Differential Systems

- (1)

- In [159], Sections 3.3 and 3.4, Kiran showed that the triangularization scheme in Theorem 69 can also be applied to symbolic hierarchies.

- (2)

- [159], Lemma 2.5 shows that Eq. (1) over has a unique solution X if and only if A or B is nonsingular. However, there is a simple counterexample to its sufficiency. Indeed, if both A and B are identity matrices (and thus nonsingular), the solution X of Eq. (1) is obviously not unique for a given C. For instance, take and , or and . This minor error, however, does not affect the existence of solutions to Eq. (1).
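The counterexample in (2) can be quantified, assuming Eq. (1) is AX − YB = C. For nonsingular n × n matrices A and B, every X gives the pair (X, AXB⁻¹) solving the homogeneous equation AX − YB = 0, so the solution set of Eq. (1), when nonempty, is an affine space of dimension n² rather than a single pair. The hypothetical helper below computes this dimension from the rank of the vectorized operator.

```python
import numpy as np

def gse_kernel_dim(A, B):
    """dim{(X, Y) : AX - YB = 0} = number of unknowns - rank of vec operator."""
    (m, p), (q, n) = A.shape, B.shape
    K = np.hstack([np.kron(np.eye(n), A), -np.kron(B.T, np.eye(m))])
    return K.shape[1] - np.linalg.matrix_rank(K)

rng = np.random.default_rng(3)
print(gse_kernel_dim(np.eye(3), np.eye(3)))  # -> 9
print(gse_kernel_dim(rng.standard_normal((3, 3)), rng.standard_normal((3, 3))))  # -> 9
```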

8.2. Practical Applications

8.2.1. Calibration Problems

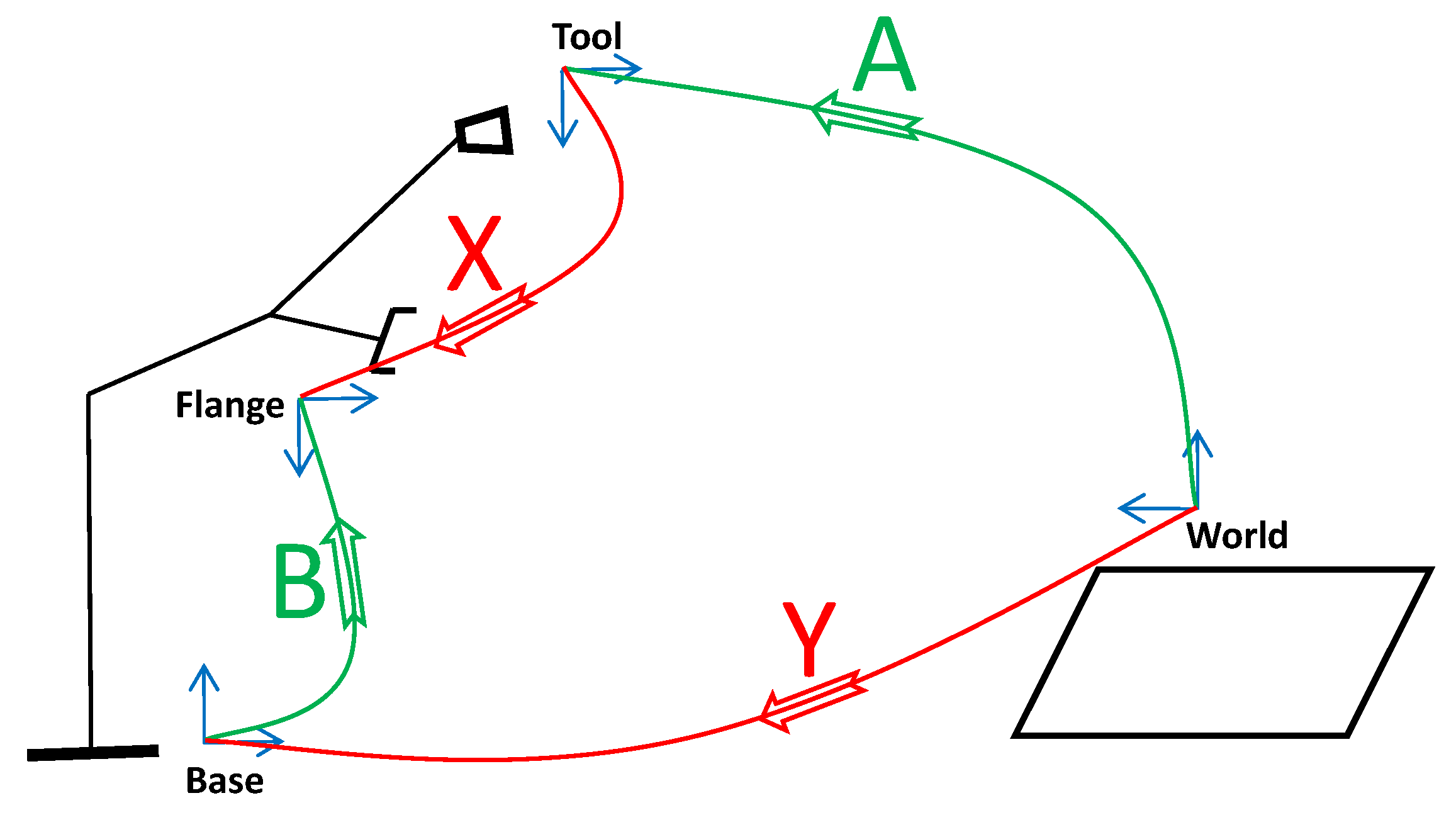

- (i)

- is the known homogeneous transformation from end effector pose measurements,

- (ii)

- is derived from the calibrated manipulator internal-link forward kinematics,

- (iii)

- is the unknown transformation from the tool frame to the flange frame,

- (iv)

- is the unknown transformation from the world frame to the base frame.

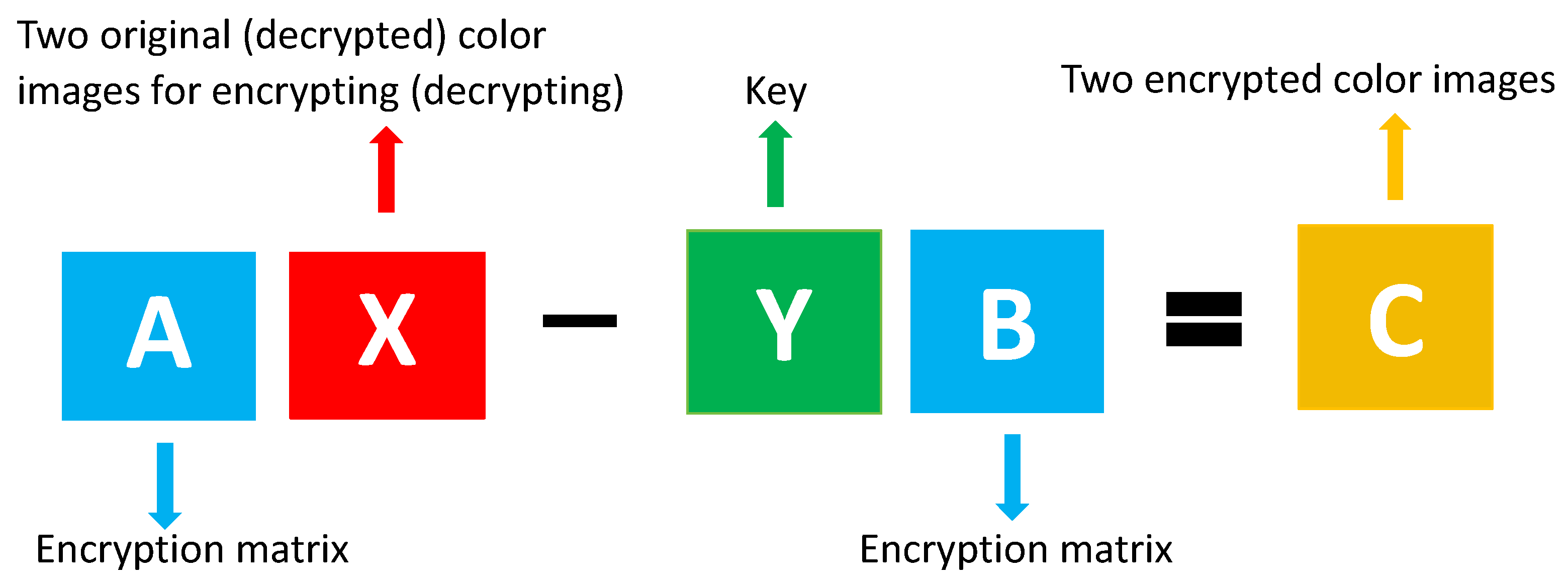
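The calibration model AX = YB is a homogeneous instance of GSE in the unknown pair (X, Y). The sketch below shows one standard closed-form technique, a Kronecker-product linearization of the rotation part followed by linear least squares for the translation part; it is not claimed to be the method of the works cited in this section. It assumes noise-free synthetic 4 × 4 homogeneous transforms and at least three generic measurement pairs (needed for the rotation null space to be one-dimensional); all helper names are ours. With noisy data the same code takes the right singular vector of the smallest singular value, and the projection onto SO(3) then becomes essential.

```python
import numpy as np

def random_rotation(rng):
    """Random 3x3 rotation from the QR factorization of a Gaussian matrix."""
    Q, R = np.linalg.qr(rng.standard_normal((3, 3)))
    Q = Q @ np.diag(np.sign(np.diag(R)))
    if np.linalg.det(Q) < 0:
        Q[:, 0] = -Q[:, 0]
    return Q

def homogeneous(R, t):
    T = np.eye(4)
    T[:3, :3], T[:3, 3] = R, t
    return T

def project_to_so3(M):
    """Nearest rotation matrix in the Frobenius norm."""
    U, _, Vt = np.linalg.svd(M)
    D = np.diag([1.0, 1.0, np.sign(np.linalg.det(U @ Vt))])
    return U @ D @ Vt

def solve_ax_yb(As, Bs):
    """Estimate (X, Y) with A_i X = Y B_i from lists of homogeneous transforms."""
    I3 = np.eye(3)
    # Rotation part: R_A R_X - R_Y R_B = 0, a homogeneous GSE in (R_X, R_Y).
    K = np.vstack([np.hstack([np.kron(I3, A[:3, :3]), -np.kron(B[:3, :3].T, I3)])
                   for A, B in zip(As, Bs)])
    v = np.linalg.svd(K)[2][-1]  # null vector of K
    Rx, Ry = v[:9].reshape(3, 3, order="F"), v[9:].reshape(3, 3, order="F")
    s = np.cbrt(np.linalg.det(Rx))  # removes the arbitrary scale and sign of v
    Rx, Ry = project_to_so3(Rx / s), project_to_so3(Ry / s)
    # Translation part: R_A t_X - t_Y = R_Y t_B - t_A.
    M = np.vstack([np.hstack([A[:3, :3], -I3]) for A in As])
    rhs = np.concatenate([Ry @ B[:3, 3] - A[:3, 3] for A, B in zip(As, Bs)])
    t = np.linalg.lstsq(M, rhs, rcond=None)[0]
    return homogeneous(Rx, t[:3]), homogeneous(Ry, t[3:])

rng = np.random.default_rng(1)
X_true = homogeneous(random_rotation(rng), rng.standard_normal(3))
Y_true = homogeneous(random_rotation(rng), rng.standard_normal(3))
As = [homogeneous(random_rotation(rng), rng.standard_normal(3)) for _ in range(5)]
Bs = [np.linalg.inv(Y_true) @ A @ X_true for A in As]  # so that A X = Y B exactly
X_est, Y_est = solve_ax_yb(As, Bs)
```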

8.2.2. Encryption and Decryption Schemes for Color Images

Algorithm 1: Color image encryption scheme

Algorithm 2: Color image decryption scheme

9. Conclusions

Author Contributions

Funding

Conflicts of Interest

References

- S.L. Adler. Quaternionic quantum mechanics and quantum fields, Oxford University Press, New York. 1995.

- I. J. An, E. Ko, and J.E. Lee. On the generalized Sylvester operator equation AX-YB=C. Linear Multilinear Algebra 2022, 72, 585–596.

- A. L. Andrew. Centrosymmetric matrices. SIAM Rev. 1998, 40, 697–698.

- H. Aslaksen. Quaternionic determinants. Math. Intell. 1996, 18, 57–65.

- B. W. Bader and T.G. Kolda. Algorithm 862: Matlab tensor classes for fast algorithm prototyping. ACM Trans. Math. Softw. 2006, 32, 635–653.

- J. K. Baksalary and R. Kala. The matrix equation AX-YB=C. Linear Algebra Appl. 1979, 25, 41–43.

- J. K. Baksalary and R. Kala. The matrix equation AXB+CYD=E. Linear Algebra Appl. 1980, 30, 141–147.

- S. Barnett. Regular polynomial matrices having relatively prime determinants. Proc. Camb. Philos. Soc. 1969, 65, 585–590.

- A. Ben-Israel and T.N.E. Greville. Generalized Inverses: Theory and Applications, Springer, New York, 2nd edition. 2003.

- G. Bengtsson. Output regulation and internal models–a frequency domain approach. Automatica 1977, 13, 333–345.

- J.H. Bevis, F.J. Hall, and R.E. Hartwig. Consimilarity and the matrix equation AX-XB=C. In F. Uhlig and R. Grone, editors, Current Trends in Matrix Theory, pages 51–64. North-Holland, New York, 1987.

- J. H. Bevis, F.J. Hall, and R.E. Hartwig. The matrix equation AX̄-XB=C and its special cases. SIAM J. Matrix Anal. Appl. 1988, 9, 348–359.

- R. Bhatia. Matrix Analysis, Springer, New York. 1997.

- R. Bhatia and P. Rosenthal. How and why to solve the operator equation AX-XB=Y. Bull. Lond. Math. Soc. 1997, 29, 1–21.

- S. Bittanti and P. Colaneri. Periodic Systems: Filtering and Control, Springer, London. 2009.

- M. Brazell, N. Li, C. Navasca, and C. Tamon. Solving multilinear systems via tensor inversion. SIAM J. Matrix Anal. Appl. 2013, 34, 542–570.

- R. Byers and D. Kressner. Structured condition numbers for invariant subspaces. SIAM J. Matrix Anal. Appl. 2006, 28, 326–347.

- A. Cantoni and P. Butler. Eigenvalues and eigenvectors of symmetric centrosymmetric matrices. Linear Algebra Appl. 1976, 13, 275–288.

- B. Carpentieri, Y.F. Jing, and T.Z. Huang. The BiCOR and CORS iterative algorithms for solving nonsymmetric linear systems. SIAM J. Sci. Comput. 2011, 33, 3020–3036.

- X. W. Chang and J.S. Wang. The symmetric solution of the matrix equations AX+YA=C, AXA^T+BYB^T=C, and (A^TXA, B^TXB)=(C, D). Linear Algebra Appl. 1993, 179, 171–189.

- M. Che and Y. Wei. Theory and Computation of Complex Tensors and its Applications, Springer, Singapore. 2020.

- S. Chen and Y. Tian. On solutions of generalized Sylvester equation in polynomial matrices. J. Frankl. Inst. 2014, 351, 5376–5385.

- W. Chen and C. Song. STP method for solving the least squares special solutions of quaternion matrix equations. Adv. Appl. Clifford Algebr. 2025, 35, 6.

- Z. Chen, C. Ling, L. Qi, and H. Yan. A regularization-patching dual quaternion optimization method for solving the hand-eye calibration problem. J. Optim. Theory Appl. 2024, 200, 1193–1215.

- D. Cheng. Matrix and Polynomial Approach to Dynamic Control Systems, Science Press, Beijing. 2002.

- D. Cheng. From Dimension-Free Matrix Theory to Cross-Dimensional Dynamic Systems, Elsevier, London. 2019.

- D. Cheng and H. Qi. Semi-tensor Product of Matrices–Theory and Applications, Science Press, Beijing, 2nd edition, 2011. In Chinese.

- D. Cheng, Z. Xu, and T. Shen. Equivalence-based model of dimension-varying linear systems. IEEE Trans. Autom. Control 2020, 65, 5444–5449.

- D. Cheng and Z. Liu. A new semi-tensor product of matrices. Control Theory Technol. 2019, 17, 4–12.

- D. Cheng. Semi-tensor product of matrices and its application to Morgan’s problem. Sci. China (Ser. F) 2001, 44, 195–212.

- D. Cheng, H. Qi, and Z. Li. Analysis and Control of Boolean Networks: A Semi-tensor Product Approach, 2011.

- D. Cheng, H. Qi, and A. Xue. A survey on semi-tensor product of matrices. J. Syst. Sci. Complex. 2007, 20, 304–322.

- L. Cheng and J. Pearson. Frequency domain synthesis of multivariable linear regulators. IEEE Trans. Autom. Control 1978, 23, 3–15.

- K. E. Chu. Singular value and generalized singular value decompositions and the solution of linear matrix equations. Linear Algebra Appl. 1987, 88–89, 83–98.

- M. A. Clifford. Preliminary sketch of biquaternions. Proc. Lond. Math. Soc. 1873, 4, 381–395.

- J. Cockle. On systems of algebra involving more than one imaginary and on equations of the fifth degree. London, Edinburgh, Dublin Philos. Mag. J. Sci. 1849, 35, 434–437.

- N. Cohen and S.D. Leo. The quaternionic determinant. Electron. J. Linear Algebra 2000, 7, 100–111.

- D. Condurache and A. Burlacu. Dual tensors based solutions for rigid body motion parameterization. Mech. Mach. Theory 2014, 74, 390–412.

- D. Condurache and I.A. Ciureanu. A novel solution for AX=YB sensor calibration problem using dual Lie algebra. In 2019 6th International Conference on Control, Decision and Information Technologies (CoDIT’19), pages 302–307, Paris, France, 2019.

- D.S. Cvetković-Ilić and Y. Wei. Algebraic Properties of Generalized Inverses, Springer, Singapore. 2017.

- D. S. Cvetković-Ilić. The solutions of some operator equations. J. Korean Math. Soc. 2008, 45, 1417–1425.

- A. Dajić. Common solutions of linear equations in a ring, with applications. Electron. J. Linear Algebra 2015, 30, 66–79.

- A. Dajić and J. J. Koliha. Positive solutions to the equations AX=C and XB=D for Hilbert space operators. J. Math. Anal. Appl. 2007, 333, 567–576.

- K. Daniilidis. Hand-eye calibration using dual quaternions. Int. J. Robot. Res. 1999, 18, 286–298.

- B. De Moor and H. Zha. A tree of generalization of the ordinary singular value decomposition. Linear Algebra Appl. 1991, 147, 469–500.

- F. De Terán and F. M. Dopico. Consistency and efficient solution of the Sylvester equation for ★-congruence. Electron. J. Linear Algebra 2011, 22, 849–863.

- M. Dehghan and M. Hajarian. An iterative algorithm for the reflexive solutions of the generalized coupled Sylvester matrix equations and its optimal approximation. Appl. Math. Comput. 2008, 202, 571–588.

- M. Dehghan and M. Hajarian. The general coupled matrix equations over generalized bisymmetric matrices. Linear Algebra Appl. 2010, 432, 1531–1552.

- M. Dehghan and M. Hajarian. An iterative method for solving the generalized coupled Sylvester matrix equations over generalized bisymmetric matrices. Appl. Math. Modell. 2010, 34, 639–654.

- M. Dehghan and M. Hajarian. Iterative algorithms for the generalized centro-symmetric and central anti-symmetric solutions of general coupled matrix equations. Eng. Comput. 2012, 29, 528–560.

- M. Dehghani-Madiseh and M. Dehghan. Generalized solution sets of the interval generalized Sylvester matrix equation ∑_{i=1}^{p} A_iX_i + ∑_{j=1}^{q} Y_jB_j = C and some approaches for inner and outer estimations. Comput. Math. Appl. 2014, 68, 1758–1774.

- H. Diao, X. Shi, and Y. Wei. Effective condition numbers and small sample statistical condition estimation for the generalized Sylvester equation. Sci. China-Math. 2013, 56, 967–982.

- F. Ding and T. Chen. Iterative least-squares solutions of coupled Sylvester matrix equations. Syst. Control Lett. 2005, 54, 95–107.

- F. Ding and T. Chen. On iterative solutions of general coupled matrix equations. SIAM J. Control Optim. 2006, 44, 2269–2284.

- W. Ding, Y. Li, D. Wang, and A. Wei. Constrained least squares solution of Sylvester equation. Math. Model. Control 2021, 1, 112–120.

- W. Ding and Y. Wei. Theory and Computation of Tensors: Multi-dimensional Arrays, Elsevier/Academic Press, London. 2016.

- W. Ding, Y. Li, and D. Wang. A real method for solving quaternion matrix equation X-AX̂B=C based on semi-tensor product of matrices. Adv. Appl. Clifford Algebr. 2021, 31, 78.

- A. Dmytryshyn, V. Futorny, T. Klymchuk, and V.V. Sergeichuk. Generalization of Roth’s solvability criteria to systems of matrix equations. Linear Algebra Appl. 2017, 527, 294–302.

- A. Dmytryshyn and B. Kågström. Coupled Sylvester-type matrix equations and block diagonalization. SIAM J. Matrix Anal. Appl. 2015, 36, 580–593.

- M. Dobovišek. On minimal solutions of the matrix equation AX-YB=0. Linear Algebra Appl. 2001, 325, 81–99.

- R. G. Douglas. On majorization, factorization and range inclusion of operators on Hilbert space. Proc. Amer. Math. Soc. 1966, 17, 413–416.

- M. P. Drazin. Pseudo-inverses in associative rings and semigroups. Am. Math. Mon. 1958, 65, 506–514.

- P.K. Draxl. Skew Fields. Cambridge University Press, London, 1983.

- G.R. Duan. Generalized Sylvester Equations: Unified Parametric Solutions, Taylor and Francis Group/CRC Press, Boca Raton. 2015.

- A. Einstein. The foundation of the general theory of relativity. In A.J. Kox, M.J. Klein, and R. Schulmann, editors, The Collected Papers of Albert Einstein (Vol. 6), pages 146–200. Princeton University Press, Princeton, 1997.

- E. Emre and L.M. Silverman. The equation XR+QY=Φ: A characterization of solutions. SIAM J. Control Optim. 1981, 19, 33–38.

- E. Emre. The polynomial equation QQc+RPc=Φ with application to dynamic feedback. SIAM J. Control Optim. 1980, 18, 611–620.

- F. Ernst, L. Richter, L. Matthäus, V. Martens, et al. Non-orthogonal tool/flange and robot/world calibration. Int. J. Med Robot. Comput. Assist. Surg. 2012, 8, 407–420.

- R. Fan, M. Zeng, and Y. Yuan. The solutions to some dual matrix equations. Miskolc Math. Notes 2024, 25, 679–691.

- X. Fan, Y. Li, Z. Liu, and J. Zhao. Solving quaternion linear system based on semi-tensor product of quaternion matrices. Symmetry 2022, 14, 1359.

- X. Fan, Y. Li, Z. Liu, and J. Zhao. The (anti)-η-Hermitian solution of quaternion linear system. Filomat 2024, 38, 4679–4695.

- X. Fan, Y. Li, J. Sun, and J. Zhao. Solving quaternion linear system AXB=E based on semi-tensor product of quaternion matrices. Banach J. Math. Anal. 2023, 17, 25.

- X. Fan, Y. Li, M. Zhang, and J. Zhao. Solving the least squares (anti)-Hermitian solution for quaternion linear systems. Comput. Appl. Math. 2022, 41, 371.

- X. Fang, J. Yu, and H. Yao. Solutions to operator equations on Hilbert C*-modules. Linear Algebra Appl. 2009, 431, 2142–2153.

- J. G. Farias, E.D. Pieri, and D. Martins. A review on the applications of dual quaternions. Machines 2024, 12, 402.

- R.B. Feinberg. Equivalence of partitioned matrices. J. Res. Nat. Bur. Stand.–B. Math. Sci. 1976; 97.

- J. Feinstein and Y. Bar-Ness. On the uniqueness minimal solution of the matrix polynomial equation A(λ)X(λ)+Y(λ)B(λ)=C(λ). J. Frankl. Inst. 1980, 310, 131–134.

- J. Feinstein and Y. Bar-Ness. The solution of the matrix polynomial A(s)X(s)+B(s)Y(s)=C(s). IEEE Trans. Autom. Control.

- I. Fischer. Dual-Number Methods in Kinematics, Statics and Dynamics, CRC Press, Boca Raton. 1999.

- H. Flanders and H.K. Wimmer. On the matrix equations AX-XB=C and AX-YB=C. SIAM J. Appl. Math. 1977, 32, 707–710.

- C. Flaut and V. Shpakivskyi. Real matrix representations for the complex quaternions. Adv. Appl. Clifford Algebr. 2013, 23, 657–671.

- P. A. Fuhrmann. Algebraic system theory: An analyst’s point of view. J. Frankl. Inst. 1976, 301, 521–540.

- V. Futorny, T. Klymchuk, and V.V. Sergeichuk. Roth’s solvability criteria for the matrix equations AX-X̂B=C and X-AX̂B=C over the skew field of quaternions with an involutive automorphism q↦q̂. Linear Algebra Appl. 2016, 510, 246–258.

- P. Gabriel. Unzerlegbare Darstellungen I. Manuscripta Math. 1972, 6, 71–103.

- P.R. Girard. Quaternions, Clifford Algebras and Relativistic Physics, Birkhäuser, Basel, Switzerland. 2007.

- I. Gohberg, M.A. Kaashoek, and L. Lerer. On a class of entire matrix function equations. Linear Algebra Appl. 2007, 425, 434–442.

- I. Gohberg, M.A. I. Gohberg, M.A. Kaashoek, and F. Schagen. Partially Specified Matrices and Operators: Classification, Completion, Applications, Birkhäuser Verlag, Basel. 1995. [Google Scholar]

- I. Gohberg, M.A. Kaashoek, and L. Lerer. The resultant for regular matrix polynomials and quasi commutativity. Indiana Univ. Math. J. 2008, 57, 2793–2813. [Google Scholar] [CrossRef]

- G.H. Golub and C.F.V. Loan. Matrix Computations. The Johns Hopkins University Press, Baltimore, 1983.

- G.H. Golub and H. Zha. Perturbation analysis of the canonical correlations of matrix pairs. Linear Algebra Appl. 1994, 210, 3–28.

- D. Gu, D. Zhang, and Q. Liu. Parametric control to permanent magnet synchronous motor via proportional plus integral feedback. Trans. Inst. Meas. Control 2021, 43, 925–932.

- Y.L. Gu and J.Y.S. Luh. Dual-number transformation and its applications to robotics. IEEE Journal of Robotics and Automation, -3.

- R.M. Guralnick. Roth’s theorems and decomposition of modules. Linear Algebra Appl. 1980, 39, 155–165.

- R.M. Guralnick. Matrix equivalence and isomorphism of modules. Linear Algebra Appl. 1982, 43, 125–136.

- R.M. Guralnick. Roth’s theorems for sets of matrices. Linear Algebra Appl. 1985, 71, 113–117.

- W.H. Gustafson. Roth’s theorems over commutative rings. Linear Algebra Appl. 1979, 23, 245–251.

- W.H. Gustafson. Quivers and matrix equations. Linear Algebra Appl. 1995, 231, 159–174.

- W.H. Gustafson and J.M. Zelmanowitz. On matrix equivalence and matrix equations. Linear Algebra Appl. 1979, 27, 219–224.

- J. Ha. Probabilistic framework for hand-eye and robot-world calibration AX=YB. IEEE Trans. Robot. 2023, 39, 1196–1211.

- M. Hajarian. Matrix form of the CGS method for solving general coupled matrix equations. Appl. Math. Lett. 2014, 34, 37–42.

- M. Hajarian. Developing BiCOR and CORS methods for coupled Sylvester-transpose and periodic Sylvester matrix equations. Appl. Math. Modell. 2015, 39, 6073–6084.

- M. Hajarian. Matrix GPBiCG algorithms for solving the general coupled matrix equations. IET Control Theory Appl. 2015, 9, 74–81.

- M. Hajarian. Generalized conjugate direction algorithm for solving the general coupled matrix equations over symmetric matrices. Numer. Algor. 2016, 73, 591–609.

- M. Hajarian. Convergence analysis of generalized conjugate direction method to solve general coupled Sylvester discrete-time periodic matrix equations. Int. J. Adapt. Control Signal Process. 2017, 31, 985–1002.

- M. Hajarian. Convergence of HS version of BCR algorithm to solve the generalized Sylvester matrix equation over generalized reflexive matrices. J. Frankl. Inst. 2017, 354, 2340–2357.

- M. Hajarian. Computing symmetric solutions of general Sylvester matrix equations via Lanczos version of biconjugate residual algorithm. Comput. Math. Appl. 2018, 76, 686–700.

- M. Hajarian. Convergence properties of BCR method for generalized Sylvester matrix equation over generalized reflexive and anti-reflexive matrices. Linear Multilinear Algebra 2018, 66, 1975–1990.

- W.R. Hamilton. II. On quaternions; or on a new system of imaginaries in algebra. London, Edinburgh, Dublin Philos. Mag. J. Sci. 1844, 25, 10–13.

- W.R. Hamilton. Lectures on Quaternions, Hodges and Smith, Dublin. 1853.

- R.E. Hartwig. Roth’s equivalence problem in unit regular rings. Proc. Amer. Math. Soc. 1976, 59, 39–44.

- R.E. Hartwig. A note on light matrices. Linear Algebra Appl. 1987, 97, 153–169.

- Z.H. He. The general solution to a system of coupled Sylvester-type quaternion tensor equations involving η-Hermicity. Bull. Iran. Math. Soc. 2019, 45, 1407–1430.

- Z.H. He. Pure PSVD approach to Sylvester-type quaternion matrix equations. Electron. J. Linear Algebra 2019, 35, 266–284.

- Z.H. He, O.M. Agudelo, Q.W. Wang, and B. De Moor. Two-sided coupled generalized Sylvester matrix equations solving using a simultaneous decomposition for fifteen matrices. Linear Algebra Appl. 2016, 496, 549–593.

- Z.H. He, A. Dmytryshyn, and Q.W. Wang. A new system of Sylvester-like matrix equations with arbitrary number of equations and unknowns over the quaternion algebra. Linear Multilinear Algebra 2024, 73, 1269–1309.

- Z.H. He, Q.W. Wang, and Y. Zhang. The complete equivalence canonical form of four matrices over an arbitrary division ring. Linear Multilinear Algebra 2018, 66, 74–95.

- Z.H. He, C. Navasca, and X.X. Wang. Decomposition for a quaternion tensor triplet with applications. Adv. Appl. Clifford Algebr. 2022, 32, 9.

- Z.H. He and Q.W. Wang. A real quaternion matrix equation with applications. Linear Multilinear Algebra 2013, 61, 725–740.

- Z.H. He and Q.W. Wang. A pair of mixed generalized Sylvester matrix equations. J. Shanghai Univ. (Natural Sci.) 2014, 20, 138–156.

- Z.H. He, C. Navasca, and Q.W. Wang. Tensor decompositions and tensor equations over quaternion algebra. arXiv, 1710.

- Z.H. He. Sylvester-type quaternion matrix equations with arbitrary equations and arbitrary unknowns. arXiv 2020, arXiv:2006.00189v1.

- Z.H. He, J. Liu, and T.Y. Tam. The general ϕ-Hermitian solution to mixed pairs of quaternion matrix Sylvester equations. Electron. J. Linear Algebra 2017, 32, 475–499.

- Z.H. He and Q.W. Wang. A system of periodic discrete-time coupled Sylvester quaternion matrix equations. Algebra Colloq. 2017, 24, 169–180.

- Z.H. He, Q.W. Wang, and Y. Zhang. A system of quaternary coupled Sylvester-type real quaternion matrix equations. Automatica 2018, 87, 25–31.

- Z.H. He, Q.W. Wang, and Y. Zhang. A simultaneous decomposition for seven matrices with applications. J. Comput. Appl. Math. 2019, 349, 93–113.

- Z.H. He, Y.Z. Xu, Q.W. Wang, and C.Q. Zhang. The equivalence canonical forms of two sets of five quaternion matrices with applications. Math. Meth. Appl. Sci. 2025, 48, 5483–5505.

- R.A. Horn and F. Zhang. A generalization of the complex Autonne-Takagi factorization to quaternion matrices. Linear Multilinear Algebra 2012, 60, 1239–1244.

- J. Huang. On parameter iteration method for solving the mixed-type Lyapunov matrix equation. Math. Numer. Sin. 2007, 29, 285–292.

- L. Huang. The solvability of linear matrix equation over a central simple algebra. Linear Multilinear Algebra 1996, 40, 353–363.

- L. Huang. The quaternion matrix equation ∑AᵢXBᵢ=E. Acta Math. Sin. New Ser. 1998, 14, 91–98.

- L. Huang. The explicit solutions and solvability of linear matrix equations. Linear Algebra Appl. 2000, 311, 195–199.

- L. Huang and Q. Zeng. The matrix equation AXB+CYD=E over a simple Artinian ring. Linear Multilinear Algebra 1995, 38, 225–232.

- L. Huang and J. Liu. The extension of Roth’s theorem for matrix equations over a ring. Linear Algebra Appl. 1997, 259, 229–235.

- S. Huang, G. Zhao, and M. Chen. Tensor extreme learning design via generalized Moore-Penrose inverse and triangular type-2 fuzzy sets. Neural Comput. Appl. 2019, 31, 5641–5651.

- J.W. Huo, Y.Z. Xu, and Z.H. He. A simultaneous decomposition for a quaternion tensor quaternity with applications. Mathematics 2025, 13, 1679.

- N. Ito and H.K. Wimmer. Rank minimization of generalized Sylvester equations over Bezout domains. Linear Algebra Appl. 2013, 439, 592–599.

- J. Jaiprasert and P. Chansangiam. Solving the Sylvester-transpose matrix equation under the semi-tensor product. Symmetry 2022, 14, 1094.

- A. Jameson and E. Kreindler. Inverse problem of linear optimal control. SIAM J. Control 1973, 11, 1–19.

- A. Jameson, E. Kreindler, and P. Lancaster. Symmetric, positive semidefinite, and positive definite real solutions of AX=XAᵀ and AX=YB. Linear Algebra Appl. 1992, 160, 189–215.

- Z.R. Jia and Q.W. Wang. The general solution to a system of tensor equations over the split quaternion algebra with applications. Mathematics 2025, 13, 644.

- T. Jiang and M. Wei. On solutions of the matrix equations X-AXB=C and X-AX̄B=C. Linear Algebra Appl. 2003, 367, 225–233.

- T. Jiang and S. Ling. On a solution of the quaternion matrix equation AX̃-XB=C and its applications. Adv. Appl. Clifford Algebr. 2013, 23, 689–699.

- T.S. Jiang and M.S. Wei. On a solution of the quaternion matrix equation X-AX̃B=C and its application. Acta Math. Sin. Engl. Ser. 2005, 21, 483–490.

- Z. Ji, J. Li, X. Zhou, F. Duan, and T. Li. On solutions of matrix equation AXB=C under semi-tensor product. Linear Multilinear Algebra 2021, 69, 1935–1963.

- H. Jin, S. Xu, Y. Wang, and X. Liu. The Moore-Penrose inverse of tensors via the M-product. Comput. Appl. Math. 2023, 42, 294.

- L. Jin, J. Yan, X. Du, X. Xiao, et al. RNN for solving time-variant generalized Sylvester equation with applications to robots and acoustic source localization. IEEE Trans. Ind. Inform. 2020, 16, 6359–6369.

- Y.F. Jing, T.Z. Huang, Y. Zhang, L. Li, et al. Lanczos-type variants of the COCR method for complex nonsymmetric linear systems. J. Comput. Phys. 2009, 228, 6376–6394.

- S. Jo, Y. Kim, and E. Ko. On Fuglede-Putnam properties. Positivity 2015, 19, 911–925.

- M.A. Kaashoek and L. Lerer. On a class of matrix polynomial equations. Linear Algebra Appl. 2013, 439, 613–620.

- B. Kågström. A perturbation analysis of the generalized Sylvester equation (AR-LB,DR-LE)=(C,F). SIAM J. Matrix Anal. Appl. 1994, 15, 1045–1060.

- B. Kågström and P. Poromaa. LAPACK-style algorithms and software for solving the generalized Sylvester equation and estimating the separation between regular matrix pairs. ACM Trans. Math. Softw. 1996, 22, 78–103.

- W.B.V. Kandasamy and F. Smarandache. Dual Numbers, Zip Publishing, Ohio, USA. 2012.

- M.M. Karizaki, M. Hassani, M. Amyari, and M. Khosravi. Operator matrix of Moore-Penrose inverse operators on Hilbert C*-modules. Colloq. Math. 2015, 140, 171–182.

- Y. Ke and C. Ma. An alternating direction method for nonnegative solutions of the matrix equation AX+YB=C. Comput. Appl. Math. 2017, 36, 359–365.

- B. Kenwright. A beginner’s guide to dual-quaternions: What they are, how they work, and how to use them for 3D character hierarchies. In 20th International Conference in Central Europe on Computer Graphics, Visualization and Computer Vision, 2012.

- E. Kernfeld, M. Kilmer, and S. Aeron. Tensor-tensor products with invertible linear transforms. Linear Algebra Appl. 2015, 485, 545–570.

- M. Kilmer, L. Horesh, H. Avron, and E. Newman. Tensor-tensor products for optimal representation and compression. 2019. arXiv:2001.00046v1.

- M. Kilmer and C.D. Martin. Factorization strategies for third-order tensors. Linear Algebra Appl. 2011, 435, 641–658.

- N.U. Kiran. Simultaneous triangularization of pseudo-differential systems. J. Pseudo-Differ. Oper. Appl. 2013, 4, 45–61.

- T.G. Kolda and B.W. Bader. Tensor decompositions and applications. SIAM Rev. 2009, 51, 455–500.

- D. Kressner, C. Schröder, and D.S. Watkins. Implicit QR algorithms for palindromic and even eigenvalue problems. Numer. Algor. 2009, 51, 209–238.

- V. Kučera. Algebraic approach to discrete stochastic control. Kybernetika 1975, 11, 114–147.

- J.B. Kuipers. Quaternions and Rotation Sequences: A Primer with Applications to Orbits, Aerospace and Virtual Reality, Princeton University Press, Princeton. 1999.

- I. Kyrchei. Explicit representation formulas for the minimum norm least squares solutions of some quaternion matrix equations. Linear Algebra Appl. 2013, 438, 136–152.

- I. Kyrchei. Cramer’s rules for Sylvester quaternion matrix equation and its special cases. Adv. Appl. Clifford Algebr. 2018, 28, 90.

- I. Kyrchei. Determinantal representations of solutions to systems of quaternion matrix equations. Adv. Appl. Clifford Algebr. 2018, 28, 23.

- I. Kyrchei. Cramer’s rules of η-(skew-)Hermitian solutions to the quaternion Sylvester-type matrix equations. Adv. Appl. Clifford Algebr. 2019, 29, 56.

- I.I. Kyrchei. Cramer’s rule for quaternionic systems of linear equations. J. Math. Sci. 2008, 155, 839–858.

- I.I. Kyrchei. Analogs of the adjoint matrix for generalized inverses and corresponding Cramer rules. Linear Multilinear Algebra 2008, 56, 453–469.

- I.I. Kyrchei. Determinantal representations of the Moore-Penrose inverse matrix over the quaternion skew field. J. Math. Sci. 2012, 180, 23–33.

- I.I. Kyrchei. The theory of the column and row determinants in a quaternion linear algebra. In A.R. Baswell, editor, Advances in Mathematics Research, volume 15, pages 301–358. Nova Science Publisher, New York, 2012.

- I.I. Kyrchei. Explicit determinantal representation formulas for the solution of the two-sided restricted quaternionic matrix equation. J. Appl. Math. Comput. 2018, 58, 335–365.

- I.I. Kyrchei. Cramer’s rule for some quaternion matrix equations. Appl. Math. Comput. 2010, 217, 2024–2030.

- T.Y. Lam. A First Course in Noncommutative Rings, Springer, New York. 1991.

- T.Y. Lam. Introduction to Quadratic Forms over Fields, American Mathematical Society, Providence. 2005.

- E.C. Lance. Hilbert C*-Modules: A Toolkit for Operator Algebraists, Cambridge University Press, Cambridge. 1995.

- S.G. Lee and Q.P. Vu. Simultaneous solutions of matrix equations and simultaneous equivalence of matrices. Linear Algebra Appl. 2012, 437, 2325–2339.

- A. Li, L. Wang, and D. Wu. Simultaneous robot-world and hand-eye calibration using dual-quaternions and Kronecker product. Int. J. Phys. Sci. 2010, 5, 1530–1536.

- J.F. Li, X.Y. Hu, and X.F. Duan. A symmetric preserving iterative method for generalized Sylvester equation. Asian J. Control 2011, 13, 408–417.

- J. Li, L. Tao, W. Li, Y. Chen, and R. Huang. Solvability of matrix equations AX=B, XC=D under semi-tensor product. Linear Multilinear Algebra 2017, 65, 1705–1733.

- S.K. Li and T.Z. Huang. LSQR iterative method for generalized coupled Sylvester matrix equations. Appl. Math. Modell. 2012, 36, 3545–3554.

- T. Li, Q.W. Wang, and X.F. Zhang. A modified conjugate residual method and nearest Kronecker product preconditioner for the generalized coupled Sylvester tensor equations. Mathematics 2022, 10, 1730.

- W. Li. Quaternion Matrices, National University of Defense Technology Press, Changsha, China. 2002. (In Chinese)

- A.P. Liao, Z.Z. Bai, and Y. Lei. Best approximate solution of matrix equation AXB+CYD=E. SIAM J. Matrix Anal. Appl. 2005, 27, 675–688.

- L.H. Lim. Tensors in computations. Acta Numer. 2021, 30, 555–764.

- M. Lin and H.K. Wimmer. The generalized Sylvester matrix equation, rank minimization and Roth’s equivalence theorem. Bull. Aust. Math. Soc. 2011, 84, 441–443.

- Y. Lin and Y. Wei. Condition numbers of the generalized Sylvester equation. IEEE Trans. Autom. Control 2007, 52, 2380–2385.

- X. Liu, Q.W. Wang, and Y. Zhang. Consistency of quaternion matrix equations AX★-XB=C and X-AX★B=C★. Electron. J. Linear Algebra 2019, 35, 394–407.

- X. Liu, Y. Li, W. Ding, and R. Tao. A real method for solving octonion matrix equation AXB=C based on semi-tensor product of matrices. Adv. Appl. Clifford Algebr. 2024, 34, 12.

- X. Liu and Y. Zhang. Matrices over quaternion algebras. In M.S. Moslehian, editor, Matrix and Operator Equations and Applications, pages 139–183. Springer, Switzerland, 2023.

- Y.H. Liu. Ranks of solutions of the linear matrix equation AX+YB=C. Comput. Math. Appl. 2006, 52, 861–872.

- Z. Liu, Y. Li, X. Fan, and W. Ding. A new method of solving special solutions of quaternion generalized Lyapunov matrix equation. Symmetry 2022, 14, 1120.

- C.Q. Lv and C.F. Ma. BCR method for solving generalized coupled Sylvester equations over centrosymmetric or anti-centrosymmetric matrix. Comput. Math. Appl. 2018, 75, 70–88.

- C. Lv and C. Ma. Two parameter iteration methods for coupled Sylvester matrix equations. East Asian J. Appl. Math. 2018, 8, 336–351.

- C. Ma, Y. Wu, and Y. Xie. The Newton-type splitting iterative method for a class of coupled Sylvester-like absolute value equation. J. Appl. Anal. Comput. 2024, 14, 3306–3331.

- C. Ma and T. Yan. A finite iterative algorithm for the general discrete-time periodic Sylvester matrix equations. J. Frankl. Inst. 2022, 359, 4410–4432.

- G. Marsaglia and G.P.H. Styan. Equalities and inequalities for ranks of matrices. Linear Multilinear Algebra 1974, 2, 269–292.

- R. Mazurek. A general approach to Sylvester-polynomial-conjugate matrix equations. Symmetry 2024, 16, 246.

- M.S. Mehany, Q. Wang, and L. Liu. A system of Sylvester-like quaternion tensor equations with an application. Front. Math. 2024, 19, 749–768.

- M.S. Mehany and Q.W. Wang. Three symmetrical systems of coupled Sylvester-like quaternion matrix equations. Symmetry 2022, 14, 550.

- C.D. Meyer, Jr. Generalized inverses of block triangular matrices. SIAM J. Appl. Math. 1970, 19, 741–750.

- T. Miyata. Note on direct summands of modules. J. Math. Kyoto Univ. 1967, 7, 65–69.

- Z.N. Moghani, M.M. Karizaki, and M. Khanehgir. Solutions of the Sylvester equation in C*-modular operators. Ukr. Math. J. 2021, 73, 354–369.

- B.W. Mooring, Z.S. Roth, and M.R. Driels. Fundamentals of Manipulator Calibration, Wiley, New York. 1991.

- Z. Mousavi, R. Eskandari, M.S. Moslehian, and F. Mirzapour. Operator equations AX+YB=C and AXA*+BYB*=C in Hilbert C*-modules. Linear Algebra Appl. 2017, 517, 85–98.

- R. Mukundan. Quaternions: From classical mechanics to computer graphics, and beyond. In Proceedings of the 7th Asian Technology Conference in Mathematics, pages 97–106. 2002.

- M. Newman. The Smith normal form of a partitioned matrix. J. Res. Nat. Bur. Stand.–B. Math. Sci.

- Q. Niu, X. Wang, and L.Z. Lu. A relaxed gradient based algorithm for solving Sylvester equations. Asian J. Control 2011, 13, 461–464.

- V. Olshevsky. Similarity of block diagonal and block triangular matrices. Integr. Equat. Oper. Th. 1992, 15, 853–863.

- A.B. Özgüler. The matrix equation AXB+CYD=E over a principal ideal domain. SIAM J. Matrix Anal. Appl. 1991, 12, 581–591.

- C.C. Paige and M.A. Saunders. LSQR: An algorithm for sparse linear equations and sparse least squares. ACM Trans. Math. Softw. 1982, 8, 43–71.

- C.C. Paige and M.A. Saunders. Towards a generalized singular value decomposition. SIAM J. Numer. Anal. 1981, 18, 398–405.

- J. Pan, Z. Fu, H. Yue, X. Lei, et al. Toward simultaneous coordinate calibrations of AX=YB problem by the LMI-SDP optimization. IEEE Trans. Autom. Sci. Eng. 2023, 20, 2445–2453.

- Z. Peng and Y. Peng. An efficient iterative method for solving the matrix equation AXB+CYD=E. Numer. Linear Algebra Appl. 2006, 13, 473–485.

- R. Penrose. A generalized inverse for matrices. Math. Proc. Camb. Philos. Soc. 1955, 51, 406–413.

- H. Pottmann and J. Wallner. Computational Line Geometry, Springer, Berlin. 2001.

- L. Qi and Z. Luo. Tensor Analysis: Spectral Theory and Special Tensors, SIAM, Philadelphia. 2017.

- L. Qi. Standard dual quaternion optimization and its applications in hand-eye calibration and SLAM. Commun. Appl. Math. Comput. 2023, 5, 1469–1483.

- L. Qi, H. Chen, and Y. Chen. Tensor Eigenvalues and Their Applications, Springer, Singapore. 2018.

- J. Qin and Q.W. Wang. Solving a system of two-sided Sylvester-like quaternion tensor equations. Comput. Appl. Math. 2023, 42, 232.

- Z. Qin, Z. Ming, and L. Zhang. Singular value decomposition of third order quaternion tensors. Appl. Math. Lett. 2022, 123, 107597.

- H. Radjavi and P. Rosenthal. Simultaneous Triangularization, Springer, New York. 2000.

- C.R. Rao and S.K. Mitra. Generalized Inverse of Matrices and its Applications, Wiley, New York. 1971.

- A. Rehman, Q.W. Wang, I. Ali, M. Akram, et al. A constraint system of generalized Sylvester quaternion matrix equations. Adv. Appl. Clifford Algebr. 2017, 27, 3183–3196.

- R.M. Reid. Some eigenvalue properties of persymmetric matrices. SIAM Rev. 1997, 39, 313–316.

- B.Y. Ren, Q.W. Wang, and X.Y. Chen. The η-anti-Hermitian solution to a constrained matrix equation over the generalized Segre quaternion algebra. Symmetry 2023, 15, 592.

- L. Rodman. Topics in Quaternion Linear Algebra, Princeton University Press, Princeton. 2014.

- W.E. Roth. The equations AX-YB=C and AX-XB=C in matrices. Proc. Amer. Math. Soc. 1952, 3, 392–396.

- B. Savas and L. Eldén. Handwritten digit classification using higher order singular value decomposition. Pattern Recognit. 2007, 40, 993–1003.

- C. Segre. The real representations of complex elements and extension to bicomplex systems. Math. Ann. 1892, 40, 413–467.

- M. Shah, R. Bostelman, S. Legowik, and T. Hong. Calibration of mobile manipulators using 2D positional features. Measurement 2018, 124, 322–328.

- M. Shah. Solving the robot-world/hand-eye calibration problem using the Kronecker product. J. Mech. Robot. 2013, 5, 031007.

- J.Y. Shao. A general product of tensors with applications. Linear Algebra Appl. 2013, 439, 2350–2366.

- X. Sheng. A relaxed gradient based algorithm for solving generalized coupled Sylvester matrix equations. J. Frankl. Inst. 2018, 355, 4282–4297.

- A. Shirilord and M. Dehghan. Gradient descent-based parameter-free methods for solving coupled matrix equations and studying an application in dynamical systems. Appl. Numer. Math. 2025, 212, 29–59.

- Y.C. Shiu and S. Ahmad. Calibration of wrist-mounted robotic sensors by solving homogeneous transform equations of the form AX=XB. IEEE Trans. Robot. Automat. 1989, 5, 16–29.

- N.D. Sidiropoulos, L. De Lathauwer, X. Fu, K. Huang, et al. Tensor decomposition for signal processing and machine learning. IEEE Trans. Signal Process. 2017, 65, 3551–3582.

- C. Song and G. Chen. On solutions of matrix equation XF-AX=C and XF-AX̃=C over quaternion field. J. Appl. Math. Comput. 2011, 37, 57–68.

- C. Song and G. Chen. Solutions to matrix equations X-AXB=CY+R and X-AX̂B=CY+R. J. Comput. Appl. Math. 2018, 343, 488–500.

- C. Song and J. Feng. On solutions to the matrix equations XB-AX=CY and XB-AX̂=CY. J. Frankl. Inst. 2016, 353, 1075–1088.

- C. Song, J. Feng, X. Wang, and J. Zhao. A real representation method for solving Yakubovich-j-conjugate quaternion matrix equation. Abstr. Appl. Anal. 2014, 2014, 285086.

- C. Song, G. Chen, and Q. Liu. Explicit solutions to the quaternion matrix equations X-AXF=C and X-AX̃F=C. Int. J. Comput. Math. 2012, 89, 890–900.

- G.J. Song and C.Z. Dong. New results on condensed Cramer’s rule for the general solution to some restricted quaternion matrix equations. J. Appl. Math. Comput. 2017, 53, 321–341.

- G.J. Song and Q.W. Wang. Condensed Cramer rule for some restricted quaternion linear equations. Appl. Math. Comput. 2011, 218, 3110–3121.

- G.J. Song, Q.W. Wang, and H.X. Chang. Cramer rule for the unique solution of restricted matrix equations over the quaternion skew field. Comput. Math. Appl. 2011, 61, 1576–1589.

- G.J. Song, Q.W. Wang, and S.W. Yu. Cramer’s rule for a system of quaternion matrix equations with applications. Appl. Math. Comput. 2018, 336, 490–499.

- G.J. Song. Determinantal expression of the general solution to a restricted system of quaternion matrix equations with applications. Bull. Korean Math. Soc. 2018, 55, 1285–1301.

- W. Song and A. Jin. Observer-based model reference tracking control of the Markov jump system with partly unknown transition rates. Appl. Sci. 2023, 13, 914.

- P.S. Stanimirovic. General determinantal representation of pseudoinverses of matrices. Mat. Vesn. 1996, 48, 1–9.

- L. Sun, B. Zheng, C. Bu, and Y. Wei. Moore-Penrose inverse of tensors via Einstein product. Linear Multilinear Algebra 2016, 64, 686–698.

- J.J. Sylvester. Sur l’équation en matrices px=xq. C. R. Acad. Sci. Paris.

- N. Tan, X. Gu, and H. Ren. Simultaneous robot-world, sensor-tip, and kinematics calibration of an underactuated robotic hand with soft fingers. IEEE Access 2018, 6, 22705–22715.

- M. Taylor. Pseudo Differential Operators, Springer, Heidelberg. 1974.

- Y. Tian, X. Liu, and Y. Zhang. Least-squares solutions of the generalized reduced biquaternion matrix equations. Filomat 2023, 37, 863–870.

- C.C. Took and D.P. Mandic. Augmented second-order statistics of quaternion random signals. Signal Process. 2011, 91, 214–224.

- C.C. Took, D.P. Mandic, and F. Zhang. On the unitary diagonalisation of a special class of quaternion matrices. Appl. Math. Lett. 2011, 24, 1806–1809.

- H. Trinh, T.D. Tran, and S. Nahavandi. Design of scalar functional observers of order less than (ν-1). Int. J. Control 2006, 79, 1654–1659.

- F.E. Udwadia. Dual generalized inverses and their use in solving systems of linear dual equations. Mech. Mach. Theory 2021, 156, 104158.

- F.E. Udwadia, E. Pennestri, and D. de Falco. Do all dual matrices have dual Moore-Penrose inverses? Mech. Mach. Theory 2020, 151, 103878.

- J.W. van der Woude. Almost non-interacting control by measurement feedback. Syst. Control Lett. 1987, 9, 7–16.

- A. Varga. A numerically reliable approach to robust pole assignment for descriptor systems. Futur. Gener. Comp. Syst. 2012, 19, 1221–1230.

- J. Voight. Quaternion Algebras, Springer, Switzerland. 2021.

- D. Wang, Y. Li, and W.X. Ding. Several kinds of special least squares solutions to quaternion matrix equation AXB=C. J. Appl. Math. Comput. 2022, 68, 1881–1899.

- G. Wang, Z. Guo, D. Zhang, and T. Jiang. Algebraic techniques for least-squares problem over generalized quaternion algebras: A unified approach in quaternionic and split quaternionic theory. Math. Meth. Appl. Sci. 2020, 43, 1124–1137.

- G. Wang, Y. Wei, and S. Qiao. Generalized Inverses: Theory and Computations, Springer, Singapore. 2018.

- J. Wang, J. Feng, and H. Huang. Solvability of the matrix equation AX²=B with semi-tensor product. Electron. Res. Arch. 2020, 29, 2249–2267.

- J. Wang. On solutions of the matrix equation A∘lX=B with respect to MM-2 semitensor product. J. Math. 2021, 2021, 6651434.

- J. Wang. Least squares solutions of matrix equation AXB=C under semi-tensor product. Electron. Res. Arch. 2024, 32, 2976–2993.

- J. Wang, D. Qu, and F. Xu. A new hybrid calibration method for extrinsic camera parameters and hand-eye transformation. In IEEE International Conference on Mechatronics and Automation, volume 4, pages 1981–1985, Niagara Falls, ON, Canada, 2005.

- L. Wang, Q. Wang, and Z. He. The common solution of some matrix equations. Algebra Colloq. 2016, 23, 71–81. [Google Scholar] [CrossRef]

- N. Wang. Solvability of the Sylvester equation AX-XB=C under left semi-tensor product. Math. Model. Control 2022, 2, 81–89. [Google Scholar] [CrossRef]

- Q. W. Wang and Z.H. He. Systems of coupled generalized Sylvester matrix equations. Automatica 2014, 50, 2840–2844. [Google Scholar] [CrossRef]

- Q. W. Wang, J.W. van der Woude, and S.W. Yu. An equivalence canonical form of a matrix triplet over an arbitrary division ring with applications. Sci. China-Math. 2011, 54, 907–924. [Google Scholar] [CrossRef]

- Q. W. Wang and X. Wang. A system of coupled two-sided Sylvester-type tensor equations over the quaternion algebra. Taiwan. J. Math. 2020, 24, 1399–1416. [Google Scholar]

- Q. W. Wang, X. Wang, and Y. Zhang. A constraint system of coupled two-sided Sylvester-like quaternion tensor equations. Comput. Appl. Math. 2020, 39, 317. [Google Scholar] [CrossRef]

- Q. W. Wang, X. Zhang, and J.W. van der Woude. A new simultaneous decomposition of a matrix quaternity over an arbitrary division ring with applications. Commun. Algebra 2012, 40, 2309–2342. [Google Scholar] [CrossRef]

- Q. W. Wang. A system of matrix equations and a linear matrix equation over arbitrary regular rings with identity. Linear Algebra Appl. 2004, 384, 43–54. [Google Scholar] [CrossRef]

- Q. W. Wang, Z.H. Gao, and J. Gao. A comprehensive review on solving the system of equations AX=C and XB=D. Symmetry 2025, 17, 625. [Google Scholar] [CrossRef]

- Q.W. Wang, Z.H. Gao, and Y.F. Li. An overview of methods for solving the system of matrix equations A1XB1=C1 and A2XB2=C2. Preprints, 2025. C. [CrossRef]

- Q.W. Wang and Z.H. He. Some matrix equations with applications. Linear Multilinear Algebra 2012, 60, 1327–1353.

- Q.W. Wang and Z.H. He. Solvability conditions and general solution for mixed Sylvester equations. Automatica 2013, 49, 2713–2719.

- Q.W. Wang, R.Y. Lv, and Y. Zhang. The least-squares solution with the least norm to a system of tensor equations over the quaternion algebra. Linear Multilinear Algebra 2022, 70, 1942–1962.

- Q.W. Wang, A. Rehman, Z.H. He, and Y. Zhang. Constraint generalized Sylvester matrix equations. Automatica 2016, 69, 60–64.

- Q.W. Wang, L.M. Xie, and Z.H. Gao. A survey on solving the matrix equation AXB=C with applications. Mathematics 2025, 13, 450.

- Q.W. Wang and M. Xie. A system of k Sylvester-type quaternion matrix equations with 3k+1 variables. arXiv preprint, arXiv:2007.14536v2.

- Q.W. Wang, H.S. Zhang, and G.J. Song. A new solvable condition for a pair of generalized Sylvester equations. Electron. J. Linear Algebra 2009, 18, 289–301.

- Q.W. Wang and S.Z. Li. Persymmetric and perskewsymmetric solutions to sets of matrix equations over a finite central algebra. Acta Math. Sin. 2004, 47, 27–34.

- Q.W. Wang, J.H. Sun, and S.Z. Li. Consistency for bi(skew)symmetric solutions to systems of generalized Sylvester equations over a finite central algebra. Linear Algebra Appl. 2002, 353, 169–182.

- X. Wang, J. Huang, and H. Song. Simultaneous robot-world and hand-eye calibration based on a pair of dual equations. Measurement 2021, 181, 109623.

- X. Wang and H. Song. One-step solving the robot-world and hand-eye calibration based on the principle of transference. J. Mech. Robot. 2024, 17, 031014.

- J.R. Weaver. Centrosymmetric (cross-symmetric) matrices, their basic properties, eigenvalues, eigenvectors. Am. Math. Mon. 1985, 92, 711–717.

- M.S. Wei, Y. Li, F. Zhang, and J. Zhao. Quaternion Matrix Computations. Nova Science Publishers, New York, 2018.

- T. Wei, W. Ding, and Y. Wei. Singular value decomposition of dual matrices and its application to traveling wave identification in the brain. SIAM J. Matrix Anal. Appl. 2024, 45, 634–660.

- H.K. Wimmer. The structure of nonsingular polynomial matrices. Math. Syst. Theory 1981, 14, 367–379.

- H.K. Wimmer. The matrix equation X-AXB=C and an analogue of Roth’s theorem. Linear Algebra Appl. 1988, 109, 145–147.

- H.K. Wimmer. Consistency of a pair of generalized Sylvester equations. IEEE Trans. Autom. Control 1994, 39, 1014–1016.

- H.K. Wimmer. The generalized Sylvester equation in polynomial matrices. IEEE Trans. Autom. Control 1996, 41, 1372–1376.

- H.K. Wimmer. Explicit solutions of the matrix equation ∑AiXDi=C. SIAM J. Matrix Anal. Appl. 1992, 13, 1123–1130.

- H.K. Wimmer. Roth’s theorems for matrix equations with symmetry constraints. Linear Algebra Appl. 1994, 199, 357–362.

- W.A. Wolovich. Linear Multivariable Systems. Springer, New York, 1974.

- W.A. Wolovich. Skew prime polynomial matrices. IEEE Trans. Autom. Control, -23.

- A.G. Wu, G.R. Duan, and Y. Xue. Kronecker maps and Sylvester-polynomial matrix equations. IEEE Trans. Autom. Control 2007, 52, 905–910.

- A.G. Wu, W. Liu, C. Li, and G.R. Duan. On j-conjugate product of quaternion polynomial matrices. Appl. Math. Comput. 2013, 219, 11223–11232.

- A.G. Wu and Y. Zhang. Complex Conjugate Matrix Equations for Systems and Control. Springer, Singapore, 2017.

- A.G. Wu, G.R. Duan, and H.H. Yu. On solutions of the matrix equations $XF-AX=C$ and $XF-A\bar{X}=C$. Appl. Math. Comput. 2006, 183, 932–941.

- A.G. Wu, G. Feng, J. Hu, and G.R. Duan. Closed-form solutions to the nonhomogeneous Yakubovich-conjugate matrix equation. Appl. Math. Comput. 2009, 214, 442–450.

- A.G. Wu, Y.M. Fu, and G.R. Duan. On solutions of matrix equations $V-AVF=BW$ and $V-A\bar{V}F=BW$. Math. Comput. Model. 2008, 47, 1181–1197.

- A.G. Wu, H.Q. Wang, and G.R. Duan. On matrix equations $X-AXF=C$ and $X-A\bar{X}F=C$. J. Comput. Appl. Math. 2009, 230, 690–698.

- A.G. Wu, G.R. Duan, G. Feng, and W. Liu. On conjugate product of complex polynomials. Appl. Math. Lett. 2011, 24, 735–741.

- A.G. Wu, G. Feng, W. Liu, and G.R. Duan. The complete solution to the Sylvester-polynomial-conjugate matrix equations. Math. Comput. Model. 2011, 53, 2044–2056.

- A.G. Wu, B. Li, Y. Zhang, and G.R. Duan. Finite iterative solutions to coupled Sylvester-conjugate matrix equations. Appl. Math. Modell. 2011, 35, 1065–1080.

- A.G. Wu, W. Liu, and G.R. Duan. On the conjugate product of complex polynomial matrices. Math. Comput. Model. 2011, 53, 2031–2043.

- F. Wu, C. Li, and Y. Li. Manifold regularization nonnegative triple decomposition of tensor sets for image compression and representation. J. Optim. Theory Appl. 2022, 192, 979–1000.

- J. Wu, M. Liu, Y. Zhu, Z. Zou, et al. Globally optimal symbolic hand-eye calibration. IEEE-ASME Trans. Mechatron. 2021, 26, 1369–1379.

- L. Wu and H. Ren. Finding the kinematic base frame of a robot by hand-eye calibration using 3D position data. IEEE Trans. Autom. Sci. Eng. 2017, 14, 314–324.

- Y. Xi, Z. Liu, Y. Li, R. Tao, et al. On the mixed solution of reduced biquaternion matrix equation $\sum_{i=1}^{n}A_iX_iB_i=E$ with sub-matrix constraints and its application. AIMS Math. 2023, 8, 27901–27923.

- L.M. Xie, Q.W. Wang, and Z.H. He. The generalized hand-eye calibration matrix equation AX-YB=C over dual quaternions. Comput. Appl. Math. 2025, 44, 137.

- L.M. Xie and Q.W. Wang. A generalized Sylvester dual quaternion matrix equation with applications. Preprints, 2025.

- L.M. Xie and Q.W. Wang. Some novel results on a classical system of matrix equations over the dual quaternion algebra. Filomat 2025, 39, 1477–1490.

- M. Xie and Q.W. Wang. Reducible solution to a quaternion tensor equation. Front. Math. China 2020, 15, 1047–1070.

- M. Xie, Q.W. Wang, and Y. Zhang. The minimum-norm least squares solutions to quaternion tensor systems. Symmetry 2022, 14, 1460.

- M.Y. Xie, Q.W. Wang, Z.H. He, and M.M. Saad. A system of Sylvester-type quaternion matrix equations with ten variables. Acta Math. Sin.-Engl. Ser. 2022, 38, 1399–1420.

- G. Xu, M. Wei, and D. Zheng. On solutions of matrix equation AXB+CYD=F. Linear Algebra Appl. 1998, 279, 93–109.

- Q. Xu. Common Hermitian and positive solutions to the adjointable operator equations AX=C, XB=D. Linear Algebra Appl. 2008, 429, 1–11.

- R. Xu, T. Wei, Y. Wei, and H. Yan. UTV decomposition of dual matrices and its applications. Comput. Appl. Math. 2024, 43, 41.

- I.M. Yaglom. Complex Numbers in Geometry. Academic Press, New York, 1968.

- T. Yan and C. Ma. The BCR algorithms for solving the reflexive or anti-reflexive solutions of generalized coupled Sylvester matrix equations. J. Frankl. Inst. 2020, 357, 12787–12807.

- T. Yan and C. Ma. An iterative algorithm for generalized Hamiltonian solution of a class of generalized coupled Sylvester-conjugate matrix equations. Appl. Math. Comput. 2021, 411, 126491.

- L. Yang, Q.W. Wang, and Z. Kou. A system of tensor equations over the dual split quaternion algebra with an application. Mathematics 2024, 12, 3571.

- X. Yang and W. Huang. Backward error analysis of the matrix equations for Sylvester and Lyapunov. J. Sys. Sci. Math. Scis. 2008, 28, 524–534.

- J. Yao, J. Feng, and M. Meng. On solutions of the matrix equation AX=B with respect to semi-tensor product. J. Frankl. Inst. 2016, 353, 1109–1131.

- C. Yu, X. Liu, and Y. Zhang. The generalized quaternion matrix equation $AXB+CX^{\star}D=E$. Math. Meth. Appl. Sci. 2020, 43, 8506–8517.

- S. Yuan. Least squares pure imaginary solution and real solution of the quaternion matrix equation AXB+CXD=E with the least norm. J. Appl. Math. 2014, 2014, 857081.

- S. Yuan and A. Liao. Least squares solution of the quaternion matrix equation $X-A\hat{X}B=C$ with the least norm. Linear Multilinear Algebra 2011, 59, 985–998.

- S.F. Yuan and Q.W. Wang. Two special kinds of least squares solutions for the quaternion matrix equation AXB+CXD=E. Electron. J. Linear Algebra 2012, 23, 257–274.

- S.H. Żak. On the polynomial matrix equation AX+YB=C. IEEE Trans. Autom. Control.

- F. Zhang, W. Mu, Y. Li, and J. Zhao. Special least squares solutions of the quaternion matrix equation AXB+CXD=E. Comput. Math. Appl. 2016, 72, 1426–1435.

- K. Zhang. Iterative Algorithms for Constrained Solutions of Matrix Equations. National Defense Industry Press, Beijing, 2015. In Chinese.

- M. Zhang, Y. Li, J. Sun, X. Fan, et al. A new method based on the semi-tensor product of matrices for solving communicative quaternion matrix equation $\sum_{i=1}^{k}A_iXB_i=C$ and its application. Bull. Sci. Math. 2025, 199, 103576.

- B. Zhou, G.R. Duan, and Z.Y. Li. Gradient based iterative algorithm for solving coupled matrix equations. Syst. Control Lett. 2009, 58, 327–333.

- H. Zhuang and Z.S. Roth. Comments on “Calibration of wrist-mounted robotic sensors by solving homogeneous transformation equations of the form AX=XB”. IEEE Trans. Robot. Autom. 1991, 7, 877–878.

- H. Zhuang, Z.S. Roth, and R. Sudhakar. Simultaneous robot/world and tool/flange calibration by solving homogeneous transformation equations of the form AX=YB. IEEE Trans. Robot. Autom. 1994, 10, 549–554.

- K. Ziętak. The properties of the minimax solution of a non-linear matrix equation XY=A. IMA J. Numer. Anal. 1983, 3, 229–244.

- K. Ziętak. The Chebyshev solution of the linear matrix equation AX+YB=C. Numer. Math. 1985, 46, 455–478.

- K. Ziętak. The $l_p$-solution of the linear matrix equation AX+YB=C. Computing 1984, 32, 153–162.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).