Submitted: 09 August 2025

Posted: 11 August 2025


Abstract

Keywords:

1. Introduction

2. Conceptual Background

2.1. Introducing De Zubiría's Dialogic Pedagogical Model and Its Theoretical Foundations

2.2. De Zubiría's Dialogic Pedagogical Model in Detail

2.3. The Artificial Intelligence Assessment Scale (AIAS)

2.4. The Need for Synthesis

- First, it makes educators' pedagogical knowledge the primary driver of AI integration decisions. Rather than requiring extensive AI expertise, it allows educators to make informed decisions based on their professional strengths: understanding student needs, cognitive development stages, and learning processes. In this way, technology serves learning objectives rather than dictating them.

- Second, it provides guidance for AI integration that aligns with recognized cognitive development stages, making implementation more intuitive for educators. Because the synthesis maps AIAS levels to De Zubiría's cognitive stages, educators can more easily choose AI integration strategies that match their students' developmental needs.

- Finally, it seeks to augment, rather than replace, proven pedagogical practices. The synthesis turns the technology-centric approach of AIAS into one grounded in pedagogical principles, so that AI integration enhances rather than disrupts effective teaching and learning processes.

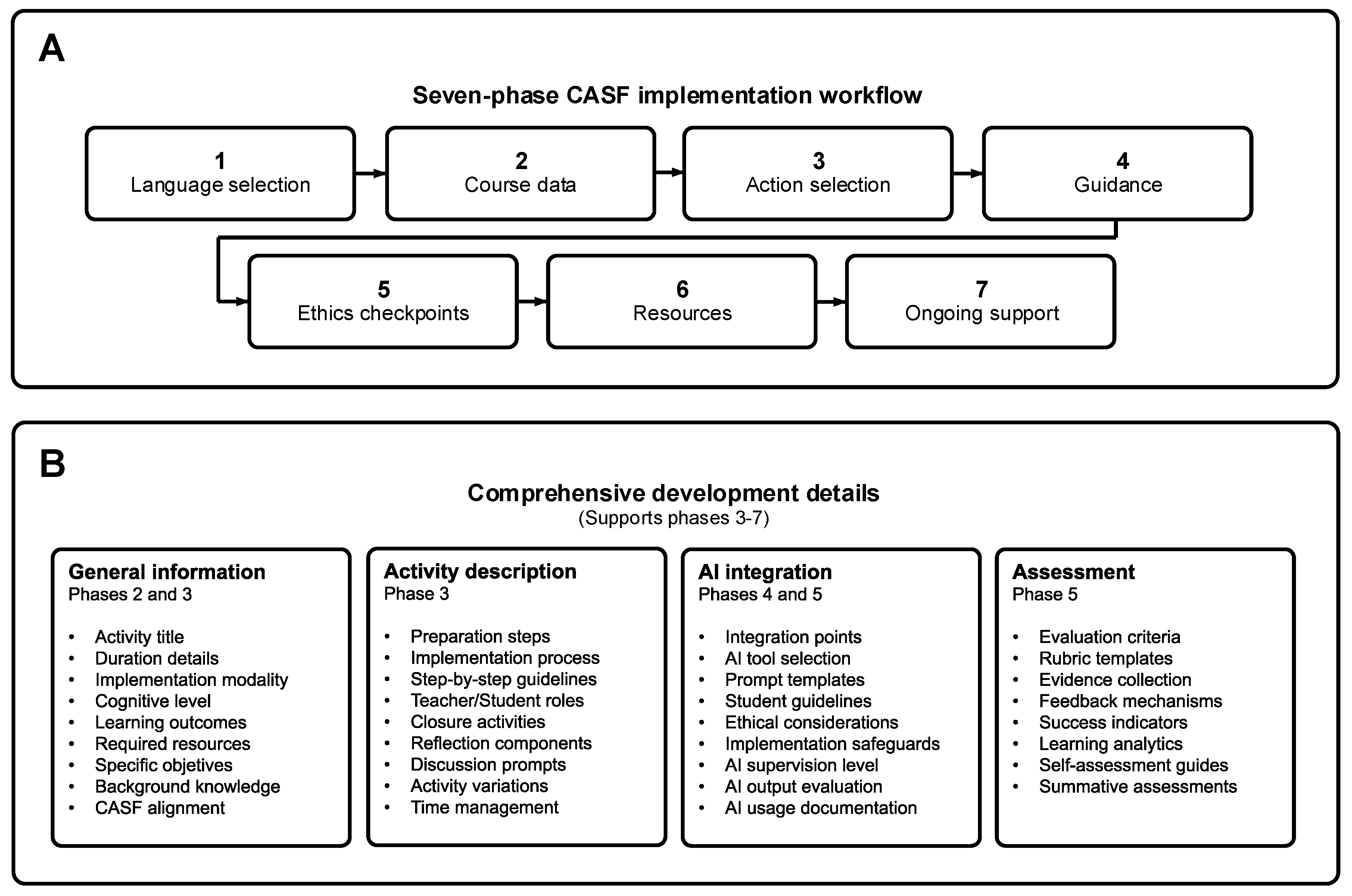

3. The Cognitive-AI Synergy Framework (CASF)

3.1. Framework Structure and Implementation Stages

3.1.1. Foundational AI Integration Stage

3.1.2. Conceptual and Formal Cognitive Levels

3.1.3. Categorial and Metacognitive Cognitive Levels

3.2. Quality Assurance and Ethical Considerations

- Do all students have equitable access to AI tools?

- Might algorithmic biases affect learning outcomes?

- How can assessment maintain integrity while incorporating technological assistance?

3.3. Framework Adaptability and Ongoing Development

4. CASF Implementation Assistant

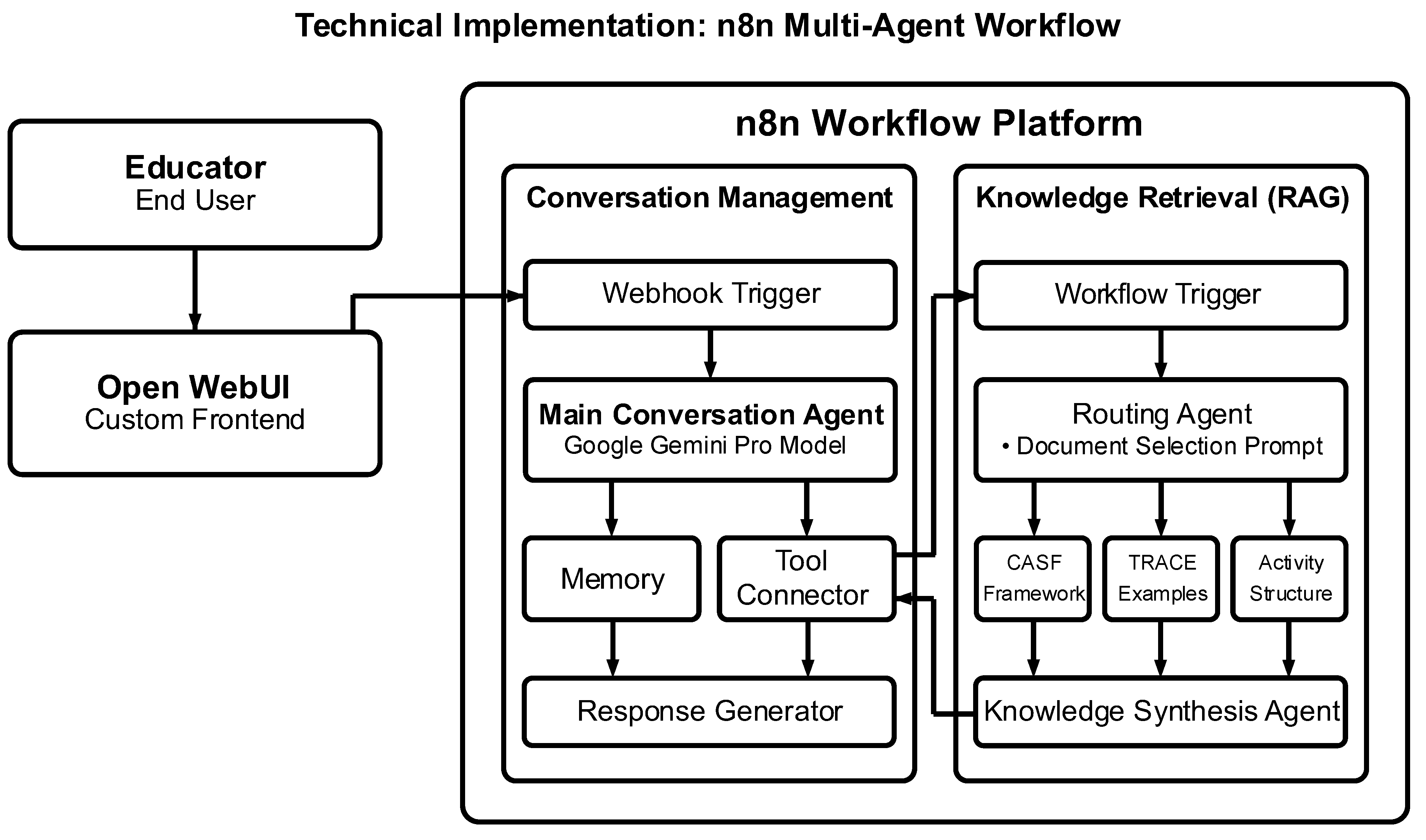

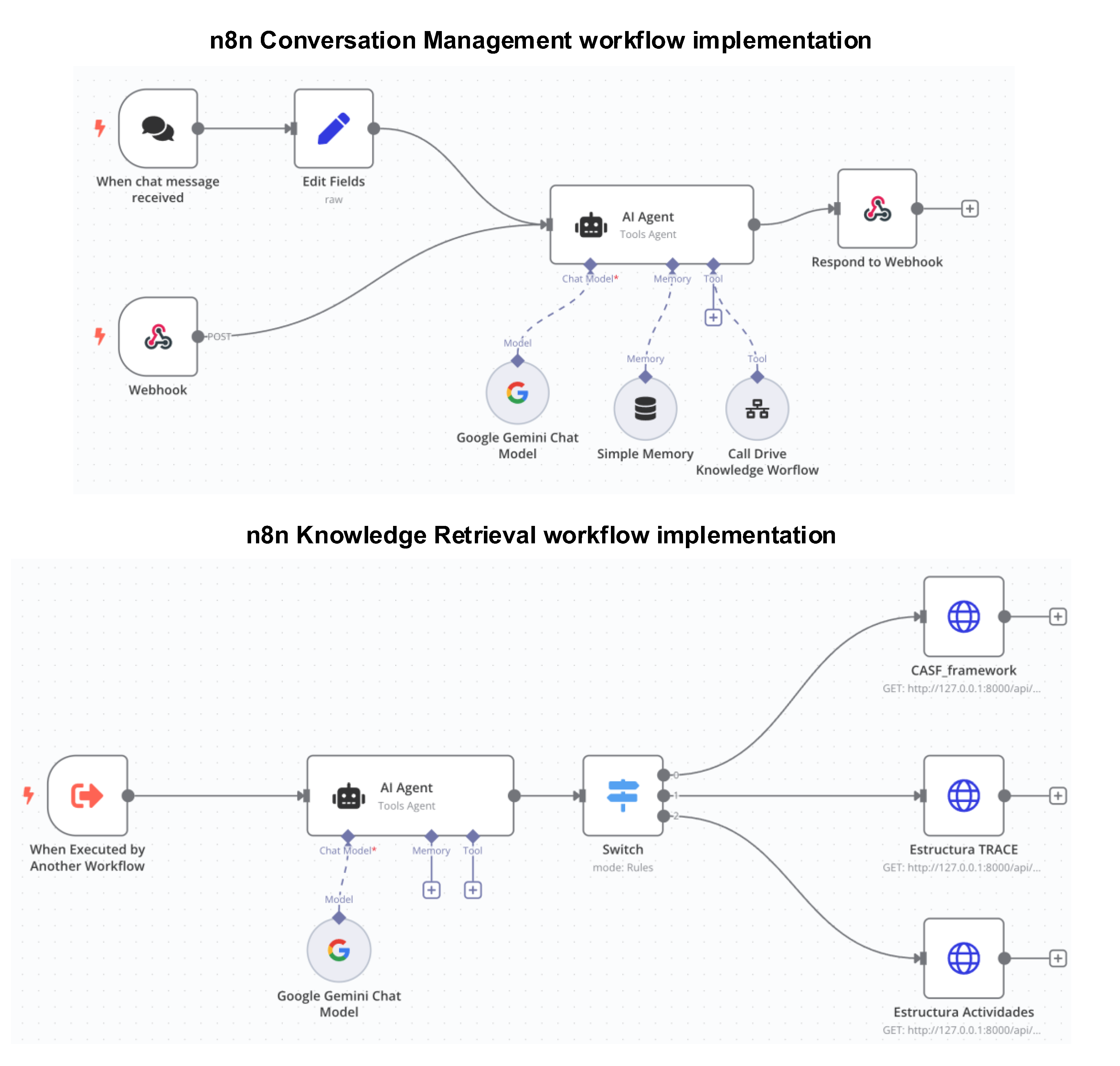

4.1. Technical Design and Architecture

4.2. Development in n8n

- Multi-Agent Coordination: The system distributes tasks across specialized agents rather than relying on a single conversational agent. Each agent can consult a different resource when helping to develop teaching strategies, which allows each request to be processed in a more targeted way that is aligned with educational needs.

- Dynamic Knowledge Retrieval: Unlike systems built on a single static prompt, the implementation retrieves specific framework documents according to the conversation context, routing queries to the most relevant of its knowledge sources: CASF framework specifications, TRACE prompt libraries, and activity templates.

- Vector-Based Semantic Search: Text embedding and vector storage enable the assistant to identify conceptually relevant information even when exact terminology varies. This capability is particularly valuable for supporting educators who may describe similar pedagogical concepts using different disciplinary language.
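The routing and semantic-search ideas above can be illustrated with a minimal sketch in plain Python. It does not use n8n or any of its nodes; the source names (`casf_framework`, `trace_prompts`, `activity_templates`) and their keyword descriptions are hypothetical placeholders for the assistant's real documents. As a further simplifying assumption, a bag-of-words count vector stands in for a learned text embedding, so this toy version only matches queries that share words with a source; it does not reproduce the terminology-robust matching that real embeddings provide.

```python
import math
import re
from collections import Counter

# Hypothetical stand-ins for the assistant's three knowledge sources; the
# descriptions are invented keyword summaries, not the real documents.
KNOWLEDGE_SOURCES = {
    "casf_framework": "cognitive level notional propositional conceptual formal "
                      "categorial metacognitive ai integration modality stage",
    "trace_prompts": "prompt task requirement action context example structure "
                     "element template wording",
    "activity_templates": "activity classroom exercise assessment rubric "
                          "workshop lesson design",
}


def embed(text: str) -> Counter:
    """Toy 'embedding': lowercase word counts (a real system would call an
    embedding model here)."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(count * b[term] for term, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


# The "vector store": each source is embedded once, ahead of any query.
INDEX = {name: embed(text) for name, text in KNOWLEDGE_SOURCES.items()}


def route(query: str, top_k: int = 1) -> list[tuple[str, float]]:
    """Return the top_k knowledge sources ranked by similarity to the query."""
    q = embed(query)
    ranked = sorted(
        ((name, cosine(q, vec)) for name, vec in INDEX.items()),
        key=lambda pair: pair[1],
        reverse=True,
    )
    return ranked[:top_k]


print(route("Which AI integration modality fits the formal cognitive level?"))
```

In a multi-agent setup, the name returned by `route` could decide which specialized agent handles the request; swapping `embed` for a real embedding model would leave the rest of the sketch unchanged.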

4.3. Key Features and Functionality

4.4. Implementation Process

4.5. Workshop Implementation and Evaluation

5. Application Examples in Education

5.1. General Examples of Application

5.2. Results from the Implementation Workshop

Instructor: Hello

5.2.1. Participant Demographics and Implementation Context

5.2.2. Quantitative Assessment of Framework Effectiveness

5.2.3. Qualitative Insights on Implementation Experience

6. Discussion

6.1. Limitations and Challenges

6.1.1. Cognitive Level Alignment Challenges

6.1.2. Risk of Over-Reliance on GenAI

6.1.3. Technical and Institutional Barriers

6.2. Recommendations for Implementation

6.2.1. Curriculum Redesign Strategies

- Progressive AI Integration: Design curricula that gradually increase AI integration as students advance through cognitive levels, beginning with minimal AI support at notional levels and expanding to more collaborative integration at formal and categorial levels.

- Dual-Path Activities: Develop learning activities with parallel paths (one with AI assistance and one without), allowing students to compare approaches and develop metacognitive awareness of when AI enhances or hinders their learning.

- Skill Preservation Focus: Identify core skills that should remain AI-independent and design dedicated activities that develop these abilities without technological assistance, particularly at foundational cognitive levels.

- Cross-Cognitive Level Mapping: Create curriculum maps that explicitly connect learning objectives with both De Zubiría's cognitive levels and appropriate AI integration modalities, ensuring pedagogical alignment throughout the course.
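The progressive-integration and curriculum-mapping recommendations above can be made checkable. The following is a minimal sketch, not part of CASF itself, assuming that De Zubiría's levels are ordered as in the cognitive-level table and that AI modalities can be ranked from least to most AI involvement following AIAS Levels 1 to 4. The course units are hypothetical. The check flags any unit that sits at a higher cognitive level than the unit before it yet uses less AI.

```python
# Cognitive levels in De Zubiría's order and AI modalities ordered from least
# to most AI involvement (an assumption based on AIAS levels 1 to 4).
LEVEL_ORDER = ["Notional", "Propositional", "Conceptual",
               "Formal", "Categorial", "Metacognitive"]
MODALITY_ORDER = ["No AI", "Idea Generation", "Editing", "Task Completion"]


def ai_ceiling(modalities: set[str]) -> int:
    """Index of the most AI-intensive modality a unit uses."""
    return max(MODALITY_ORDER.index(m) for m in modalities)


def check_progression(units):
    """Flag consecutive units where the cognitive level rises but the AI
    ceiling falls.

    units: course-ordered list of (unit_name, cognitive_level, modalities).
    """
    problems = []
    for (prev_name, prev_level, prev_mods), (name, level, mods) in zip(units, units[1:]):
        level_rises = LEVEL_ORDER.index(level) > LEVEL_ORDER.index(prev_level)
        if level_rises and ai_ceiling(mods) < ai_ceiling(prev_mods):
            problems.append(f"{name}: less AI than {prev_name} despite a higher cognitive level")
    return problems


# Hypothetical course map used only for illustration.
course = [
    ("Unit 1", "Notional", {"No AI"}),
    ("Unit 2", "Propositional", {"No AI", "Idea Generation"}),
    ("Unit 3", "Conceptual", {"No AI"}),
    ("Unit 4", "Formal", {"Idea Generation", "Editing"}),
]
print(check_progression(course))
```

Here Unit 3 is flagged because it drops back to "No AI" after a unit that allowed idea generation. Comparing only consecutive units keeps the rule simple; a stricter variant could compare each unit against every earlier unit at a lower level.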

6.2.2. Innovative Pedagogical Approaches

- AI-Enhanced Dialogic Teaching: Implement structured dialogues where students critically engage with AI outputs rather than passively consuming them, promoting the active construction of knowledge through technology-mediated discussion.

- Cognitive Apprenticeship with AI: Design activities where AI serves as a model for expert thinking that students progressively internalize, following the cognitive apprenticeship model described by Collins et al. (1991).

- Metacognitive Scaffolding: Include explicit reflection activities where students analyze how AI affects their thinking processes, becoming more conscious of both its benefits and limitations.

- Calibrated AI Prompting: Teach students to create increasingly sophisticated AI prompts that align with their cognitive development stage, moving from simple verification prompts at propositional levels to complex analytical prompts at formal and categorial levels.
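Calibrated prompting can be sketched with the TRACE structure listed in the abbreviations (Task, Requirement, Action, Context, Example). The sketch below is a hypothetical illustration, not the authors' prompt library: the `TracePrompt` class, the `ACTION_BY_LEVEL` wording, and the engineering example are all invented, and the optional Structure Element is omitted. The only idea taken from the text is that the Action moves from simple verification at the propositional level to analytical requests at the formal and categorial levels.

```python
from dataclasses import dataclass

# Hypothetical level-calibrated "Action" wording: simple verification at the
# propositional level, analytical requests at formal and categorial levels.
ACTION_BY_LEVEL = {
    "Propositional": "Check my solution step by step and point out any step that is wrong, without solving it for me.",
    "Formal": "Compare the approaches I describe, state the assumptions each relies on, and ask me to justify my choice.",
    "Categorial": "Suggest a concept from another discipline that relates to my analysis, then question how well my synthesis holds up.",
}


@dataclass
class TracePrompt:
    """Fields follow the TRACE acronym; the Action is chosen from the level."""
    task: str
    requirement: str
    context: str
    example: str
    level: str

    def render(self) -> str:
        action = ACTION_BY_LEVEL.get(self.level)
        if action is None:
            raise ValueError(f"no calibrated action defined for level {self.level!r}")
        sections = [
            ("Task", self.task),
            ("Requirement", self.requirement),
            ("Action", action),
            ("Context", self.context),
            ("Example", self.example),
        ]
        return "\n".join(f"{label}: {text}" for label, text in sections)


prompt = TracePrompt(
    task="Compute the maximum deflection of a simply supported beam.",
    requirement="Use SI units and show every intermediate result.",
    context="Second-year mechanics of materials course.",
    example="Step 1: write the bending-moment equation.",
    level="Propositional",
)
print(prompt.render())
```

Raising an error for levels without a calibrated action (for example, Notional) is a deliberate choice in this sketch: it forces the instructor to decide explicitly whether AI prompting belongs at that level at all.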

6.2.3. Adaptive Assessment Strategies

- Process Documentation: Implement assessment approaches that emphasize documenting the thinking process rather than just final outputs, requiring students to explain their reasoning and AI interaction decisions.

- Comparative Evaluation: Assess students' ability to critically evaluate AI outputs against established knowledge, encouraging them to identify limitations and potential biases in AI-generated content.

- Staged Assessment Design: Create multi-phase assessments where initial phases are completed without AI to establish baseline understanding, followed by AI-enhanced phases that build upon this foundation.

- AI-Verification Balance: Design assessments that require both AI-assisted components and human verification steps, ensuring students develop the ability to validate AI outputs rather than accepting them uncritically.
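Two of the strategies above, staged assessment design and AI-verification balance, translate into simple structural rules. The sketch below encodes them under stated assumptions: an assessment is an ordered list of phases, and the `Phase` class, its fields, and the example design task are hypothetical. Because every phase before the first AI-enhanced phase is AI-free by definition, the staging rule reduces to checking that the first phase does not use AI.

```python
from dataclasses import dataclass


@dataclass
class Phase:
    name: str
    uses_ai: bool
    human_verification: bool = False


def validate_staged_assessment(phases: list[Phase]) -> list[str]:
    """Return rule violations for an ordered list of assessment phases.

    Rule 1 (staged design): an AI-free phase must precede the first
    AI-enhanced phase, establishing a baseline.
    Rule 2 (AI-verification balance): every AI-enhanced phase must include
    a human verification step.
    """
    errors = []
    # Phases before the first AI-enhanced phase are AI-free by definition,
    # so Rule 1 fails exactly when the very first phase already uses AI.
    if phases and phases[0].uses_ai:
        errors.append("no AI-free baseline phase precedes the first AI-enhanced phase")
    for phase in phases:
        if phase.uses_ai and not phase.human_verification:
            errors.append(f"{phase.name}: AI-enhanced phase lacks a human verification step")
    return errors


# Hypothetical two-phase design problem.
design_task = [
    Phase("Hand-derived baseline solution", uses_ai=False),
    Phase("AI-assisted optimization", uses_ai=True, human_verification=True),
]
print(validate_staged_assessment(design_task))  # -> []
```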

6.2.4. Ethical Implementation Considerations

- Equity of Access: Ensure all students have equitable access to required AI tools, implementing alternative approaches when technological disparities exist.

- Transparent AI Boundaries: Clearly communicate to students when and how AI should be used in their learning, establishing explicit expectations for appropriate use at different cognitive levels.

- Data Privacy Protection: Implement protocols for protecting student data when using AI tools, particularly when these tools may store interactions or require account creation.

- Bias Awareness Education: Include discussions of algorithmic bias and limitations in AI implementation, helping students develop critical awareness of how these technologies may reflect and amplify existing societal biases.
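One concrete form the data-privacy recommendation above could take is to pseudonymize student identifiers and strip e-mail addresses before any text reaches an external AI tool. The following is a minimal sketch using only the Python standard library; it is not a protocol described by the authors. The key, the student ID format, and the function names are hypothetical, and the e-mail pattern is deliberately rough.

```python
import hashlib
import hmac
import re

# Rough e-mail pattern; good enough for a sketch, not a complete RFC 5322 parser.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


def pseudonymize(student_id: str, secret_key: bytes) -> str:
    """Keyed hash (HMAC-SHA256): the same ID always yields the same token, and
    without the secret key a third party cannot recompute the mapping."""
    digest = hmac.new(secret_key, student_id.encode("utf-8"), hashlib.sha256).hexdigest()
    return "student-" + digest[:12]


def redact_for_ai(text: str, student_id: str, secret_key: bytes) -> str:
    """Replace the student's ID with its pseudonym and strip e-mail addresses
    before the text is sent to an external AI tool."""
    text = text.replace(student_id, pseudonymize(student_id, secret_key))
    return EMAIL_RE.sub("[email removed]", text)


KEY = b"replace-with-a-secret-kept-by-the-institution"  # hypothetical key
print(redact_for_ai("Draft from 2023-0457 (ana@example.edu): my beam analysis", "2023-0457", KEY))
```

A keyed hash is used instead of a plain hash because student ID formats are usually predictable: without a secret key, anyone could hash every possible ID and reverse the pseudonyms.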

6.3. Implementation Workshop Analysis

6.4. Future Development and Research Directions

6.4.1. Empirical Validation Studies

- Longitudinal Implementation Studies: Track the impact of CASF implementation across multiple semesters to assess changes in student cognitive development, technical skill acquisition, and engagement.

- Comparative Framework Evaluation: Compare learning outcomes between CASF and other AI integration approaches to identify relative strengths and potential synergies.

- Cognitive Development Measurement: Develop and validate assessment instruments specifically designed to measure the impact of AI-enhanced activities on cognitive development across De Zubiría's stages.
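Validating a new measurement instrument typically includes checking its internal consistency. One widely used statistic for this is Cronbach's alpha, $\alpha = \frac{k}{k-1}\left(1 - \frac{\sum_i \sigma_i^2}{\sigma_X^2}\right)$, where $k$ is the number of items, $\sigma_i^2$ the variance of item $i$, and $\sigma_X^2$ the variance of the total scores. The sketch below is offered only as one possible starting point, not as the validation procedure the authors propose. The pilot scores are invented, and real reliability analysis would need far more respondents and items.

```python
from statistics import variance


def cronbach_alpha(scores: list[list[float]]) -> float:
    """Cronbach's alpha for a respondents x items score matrix.

    alpha = k / (k - 1) * (1 - sum of item variances / variance of total scores)
    """
    k = len(scores[0])
    if k < 2 or len(scores) < 2:
        raise ValueError("need at least two items and two respondents")
    item_variances = [variance(column) for column in zip(*scores)]
    total_variance = variance([sum(row) for row in scores])
    if total_variance == 0:
        raise ValueError("total scores have zero variance; alpha is undefined")
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)


# Hypothetical pilot data: 3 students answering a 2-item rubric (scores 1-5).
pilot = [[2, 3], [4, 4], [3, 5]]
print(round(cronbach_alpha(pilot), 3))
```

Sample variances are used throughout. Because the same estimator appears in both the numerator and the denominator, the ratio, and therefore alpha, does not depend on that choice.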

6.4.2. Framework Extensions and Adaptations

- Discipline-Specific Adaptations: Develop specialized versions of CASF tailored to specific disciplinary contexts, accounting for the unique cognitive demands and GenAI applications within different fields.

- Cross-Cultural Framework Validation: Evaluate CASF's effectiveness across diverse cultural and educational contexts, identifying necessary adaptations for global implementation.

- Integration with Other Pedagogical Models: Explore how CASF might be synthesized with additional pedagogical approaches such as problem-based learning, flipped classroom methodologies, or universal design for learning principles.

6.4.3. Technical Enhancements to the Implementation Assistant

- Enhanced User Interface: Develop more intuitive interfaces that reduce technical barriers while maintaining the assistant's pedagogical sophistication.

- Advanced Activity Analytics: Incorporate features that analyze proposed activities for their cognitive engagement level, providing feedback on how effectively they promote higher-order thinking.

- Expanded Resource Integration: Enable direct integration with institutional learning management systems and resource repositories to facilitate seamless implementation.
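The proposed activity analytics could start from something as simple as a verb-cue heuristic. The sketch below is hypothetical throughout: the cue verbs loosely paraphrase the "Characteristics" column of the cognitive-level table and are not a validated instrument, and `estimate_level` is an invented name. It returns the highest level whose cue verbs appear in an activity description.

```python
import re
from typing import Optional

# Hypothetical cue verbs, loosely paraphrasing the "Characteristics" column of
# the cognitive-level table; this is NOT a validated classification instrument.
LEVEL_CUES = {
    "Notional": {"identify", "define", "list", "recognize", "memorize"},
    "Propositional": {"apply", "solve", "calculate", "implement"},
    "Conceptual": {"compare", "analyze", "contrast", "differentiate"},
    "Formal": {"justify", "evaluate", "argue", "defend"},
    "Categorial": {"integrate", "synthesize", "combine", "create"},
    "Metacognitive": {"reflect", "monitor", "self-assess"},
}
LEVELS = list(LEVEL_CUES)  # dicts keep insertion order: lowest to highest level


def estimate_level(activity: str) -> Optional[str]:
    """Return the highest cognitive level whose cue verbs appear in the text,
    or None when no cue matches."""
    words = set(re.findall(r"[a-z-]+", activity.lower()))
    matched = [level for level in LEVELS if LEVEL_CUES[level] & words]
    return matched[-1] if matched else None


print(estimate_level("Compare two beam theories and justify your choice"))
```

This heuristic matches exact word forms only ("compared" does not match "compare") and ignores negation and context, so its output should prompt an instructor's judgment rather than replace it. A production version would more plausibly use a language model or a trained classifier.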

7. Conclusions

7.1. Implications for Engineering Education

7.2. Final Thoughts

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| CASF | Cognitive-AI Synergy Framework |
| GenAI | Generative Artificial Intelligence |
| AIAS | Artificial Intelligence Assessment Scale |
| AI | Artificial Intelligence |
| ZPD | Zone of Proximal Development |
| TRACE(SE) | Task, Requirement, Action, Context, Example, (Structure Element) |
| n8n | Workflow automation platform (pronounced "n-eight-n") |
| CIFI | School of Engineering Research Center |

References

- Anderson, L. W., & Krathwohl, D. R. (2001). A taxonomy for learning, teaching, and assessing: A revision of Bloom’s taxonomy of educational objectives: complete edition. Addison Wesley Longman, Inc.

- Collins, A., Brown, J. S., & Holum, A. (1991). Cognitive apprenticeship: Making thinking visible. American Educator, 15(3), 6–11.

- Crawford, J., Cowling, M., & Allen, K.-A. (2023). Leadership is needed for ethical ChatGPT: Character, assessment, and learning using artificial intelligence (AI). Journal of University Teaching and Learning Practice, 20(3), 1–19. https://search.informit.org/doi/10.3316/informit.T2024112000002891427961342.

- Cukurova, M., Luckin, R., & Kent, C. (2020). Impact of an Artificial Intelligence Research Frame on the Perceived Credibility of Educational Research Evidence. International Journal of Artificial Intelligence in Education, 30(2), 205–235. [CrossRef]

- de Zubiría Samper, J. (2006). Los modelos pedagógicos: hacia una pedagogía dialogante. Cooperativa Editorial Magisterio.

- de Zubiría Samper, J. (2021). De la escuela nueva al constructivismo. Magisterio. https://books.google.com.co/books?id=h3xbEAAAQBAJ.

- Eager, B., & Brunton, R. (2023). Prompting higher education towards AI-augmented teaching and learning practice. Journal of University Teaching and Learning Practice, 20(5), 1–19. [CrossRef]

- Furze, L., Perkins, M., Roe, J., & MacVaugh, J. (2024). The AI Assessment Scale (AIAS) in action: A pilot implementation of GenAI-supported assessment. Australasian Journal of Educational Technology. [CrossRef]

- Ghimire, A., Pather, J., & Edwards, J. (2024). Generative AI in Education: A Study of Educators’ Awareness, Sentiments, and Influencing Factors. 2024 IEEE Frontiers in Education Conference (FIE), 1–9. [CrossRef]

- Hazzan-Bishara, A., Kol, O., & Levy, S. (2025). The factors affecting teachers’ adoption of AI technologies: A unified model of external and internal determinants. Education and Information Technologies. [CrossRef]

- Jose, B., Cherian, J., Verghis, A. M., Varghise, S. M., S, M., & Joseph, S. (2025). The cognitive paradox of AI in education: between enhancement and erosion. Frontiers in Psychology, 16. [CrossRef]

- Lee, D., Arnold, M., Srivastava, A., Plastow, K., Strelan, P., Ploeckl, F., Lekkas, D., & Palmer, E. (2024). The impact of generative AI on higher education learning and teaching: A study of educators’ perspectives. Computers and Education: Artificial Intelligence, 6, 100221. [CrossRef]

- Lim, W. M., Gunasekara, A., Pallant, J. L., Pallant, J. I., & Pechenkina, E. (2023). Generative AI and the future of education: Ragnarök or reformation? A paradoxical perspective from management educators. The International Journal of Management Education, 21(2), 100790. [CrossRef]

- Lodge, J., Howard, S., Bearman, M., & Dawson, P. (2023). Assessment reform for the age of artificial intelligence. Tertiary Education Quality and Standards Agency.

- Michel-Villarreal, R., Vilalta-Perdomo, E., Salinas-Navarro, D. E., Thierry-Aguilera, R., & Gerardou, F. S. (2023). Challenges and Opportunities of Generative AI for Higher Education as Explained by ChatGPT. Education Sciences, 13(9), 856. [CrossRef]

- Ng, D. T. K., Chan, E. K. C., & Lo, C. K. (2025). Opportunities, challenges and school strategies for integrating generative AI in education. Computers and Education: Artificial Intelligence, 8, 100373. [CrossRef]

- Perkins, M., Furze, L., Roe, J., & MacVaugh, J. (2024). The Artificial Intelligence Assessment Scale (AIAS): A Framework for Ethical Integration of Generative AI in Educational Assessment. Journal of University Teaching and Learning Practice, 21(6). [CrossRef]

- Roe, J., Perkins, M., & Tregubova, Y. (2024). The EAP-AIAS: Adapting the AI Assessment Scale for English for Academic Purposes. arXiv preprint arXiv:2408.01075.

- Sharma, B. (2024). The Transformative Power of AI and Machine Learning in Education. International Journal of Computer Aided Manufacturing, 10(2), 1–5.

- Tajik, E. (2024). A Comprehensive Examination of the Potential Application of Chat GPT in Higher Education Institutions. SSRN Electronic Journal. [CrossRef]

- Vygotsky, L. S., & Cole, M. (1978). Mind in Society: Development of Higher Psychological Processes. Harvard University Press. https://books.google.com.co/books?id=RxjjUefze_oC.

- Wang, S., Wang, F., Zhu, Z., Wang, J., Tran, T., & Du, Z. (2024). Artificial intelligence in education: A systematic literature review. Expert Systems with Applications, 252, 124167. [CrossRef]

- Wood, D., Bruner, J. S., & Ross, G. (1976). The role of tutoring in problem solving. Journal of Child Psychology and Psychiatry, 17(2), 89–100. [CrossRef]

- Zimmerman, B. J. (2002). Becoming a self-regulated learner: An overview. Theory into Practice, 41(2), 64–70.

- Zoller, U., & Pushkin, D. (2007). Matching Higher-Order Cognitive Skills (HOCS) promotion goals with problem-based laboratory practice in a freshman organic chemistry course. Chemistry Education Research and Practice, 8(2), 153–171. [CrossRef]

| Level | Description | Characteristics | Example in Higher Education |
|---|---|---|---|
| Notional (Pre-categorical) | Students form basic notions about disciplinary concepts | Focus on memorization and recognition of basic terms and ideas | Students can identify key theories in their field and describe them in simple terms |
| Propositional | Students establish relationships between concepts | Application of knowledge to solve straightforward problems | Students can apply formulas to solve standard problems or implement basic procedures |
| Conceptual | Students handle abstract and complex concepts | Analysis and comparison of different theoretical frameworks | Students can compare competing theories and identify the strengths and weaknesses of each approach |
| Formal | Students apply logical and abstract reasoning to complex problems | Evaluation and justification of decisions based on theoretical frameworks | Students can justify their approach to solving complex problems using theoretical principles |
| Categorial | Students synthesize knowledge from different areas | Creation of new knowledge by combining existing theories | Students can integrate theories from different disciplines to address complex problems |
| Metacognitive | Students reflect on their thought processes and learning | Self-regulation of learning strategies and awareness of cognitive processes | Students can plan, monitor, and evaluate their learning process and adapt strategies accordingly |

| Level | Description | Student Role | Faculty Role |
|---|---|---|---|
| Level 1: No AI | Assessment completed entirely without AI assistance | Students rely solely on their knowledge and skills | Faculty design traditional assessments that verify original student work |
| Level 2: AI-Assisted Idea Generation | AI is used for brainstorming and structuring ideas, but no AI content in the final submission | Students use AI to explore ideas but create all content independently | Faculty guide students in effective AI use for idea generation while maintaining originality |
| Level 3: AI-Assisted Editing | AI is used to improve the clarity or quality of student-created work | Students create original work and use AI to refine it, providing original work in an appendix | Faculty evaluate both original work and AI-refined versions to assess improvement |
| Level 4: AI Task Completion with Human Evaluation | AI completes specific tasks, with students providing commentary on AI-generated content | Students critically engage with AI outputs, evaluating their validity and appropriateness | Faculty assess students' critical evaluation skills rather than content creation abilities |
| Level 5: Full AI | AI is used as a 'co-pilot' throughout the assessment process | Students collaborate with AI throughout the assessment, focusing on creative applications | Faculty design assessments that evaluate effective AI collaboration rather than traditional content mastery |

| Implementation Stage | Cognitive Level | AI Integration Modality | Faculty Role | Assessment Approach |
|---|---|---|---|---|
| Foundational AI Integration | Notional | No AI: 🟢, Idea Generation: 🟡, Editing: 🔴, Task Completion: 🔴 | Direct guidance and close supervision; explicit instruction in AI-tool basics; frequent validation of outputs | Traditional methods focused on fundamental-concept mastery |
| Foundational AI Integration | Propositional | No AI: 🟢, Idea Generation: 🟢, Editing: 🟡, Task Completion: 🔴 | Direct guidance plus procedural coaching in AI use | Traditional assessment with limited AI leverage |
| Intermediate AI Collaboration | Conceptual | No AI: 🟢, Idea Generation: 🟢, Editing: 🟡, Task Completion: 🟡 | Facilitative guidance; monitoring of AI contributions; support for analytical-skill development | Hybrid assessment (process documentation + traditional artefacts) |
| Intermediate AI Collaboration | Formal | No AI: 🟢, Idea Generation: 🟢, Editing: 🟢, Task Completion: 🟡 | Facilitative guidance; oversight of AI-enhanced evaluation tasks | Hybrid assessment combining AI-enhanced and classical methods |
| Advanced AI Synergy | Categorial | No AI: 🟢, Idea Generation: 🟢, Editing: 🟢, Task Completion: 🟢 | Strategic mentorship on sophisticated AI integration; emphasis on creativity | Complex, authentic assessment incorporating advanced AI use |
| Advanced AI Synergy | Metacognitive | No AI: 🟢, Idea Generation: 🟢, Editing: 🟢, Task Completion: 🟢 | Strategic mentorship; focus on reflective, self-regulated learning with AI | Complex, authentic assessment with metacognitive reflection |
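The CASF mapping table can also be encoded as a lookup structure, which is roughly the kind of data an implementation assistant would need to consult. The sketch below transcribes the table's stages and traffic-light symbols. The legend is an assumption, because the table does not define it here: green is read as "recommended", yellow as "limited", and red as "not recommended". The names `CASF`, `row`, and `allowed_modalities` are hypothetical.

```python
# Assumed legend for the traffic-light symbols (the table does not define it):
# green = recommended, yellow = limited / use with caution, red = not recommended.
GREEN, YELLOW, RED = "recommended", "limited", "not recommended"
MODALITIES = ("No AI", "Idea Generation", "Editing", "Task Completion")


def row(*statuses: str) -> dict[str, str]:
    """Pair each modality with its status, in table column order."""
    return dict(zip(MODALITIES, statuses))


# (implementation stage, modality statuses) per cognitive level, transcribed from the table.
CASF = {
    "Notional": ("Foundational AI Integration", row(GREEN, YELLOW, RED, RED)),
    "Propositional": ("Foundational AI Integration", row(GREEN, GREEN, YELLOW, RED)),
    "Conceptual": ("Intermediate AI Collaboration", row(GREEN, GREEN, YELLOW, YELLOW)),
    "Formal": ("Intermediate AI Collaboration", row(GREEN, GREEN, GREEN, YELLOW)),
    "Categorial": ("Advanced AI Synergy", row(GREEN, GREEN, GREEN, GREEN)),
    "Metacognitive": ("Advanced AI Synergy", row(GREEN, GREEN, GREEN, GREEN)),
}


def allowed_modalities(level: str, include_limited: bool = True) -> list[str]:
    """Modalities the table marks green (and, optionally, yellow) for a level."""
    accepted = {GREEN, YELLOW} if include_limited else {GREEN}
    _, statuses = CASF[level]
    return [m for m, status in statuses.items() if status in accepted]


print(allowed_modalities("Notional"))
```

Keeping the statuses as data rather than hard-coded conditionals makes it straightforward to adjust the mapping for a particular discipline, in line with the framework's stated adaptability.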

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).