3. Experimental Results

3.1. Demographic and Clinical Characteristics

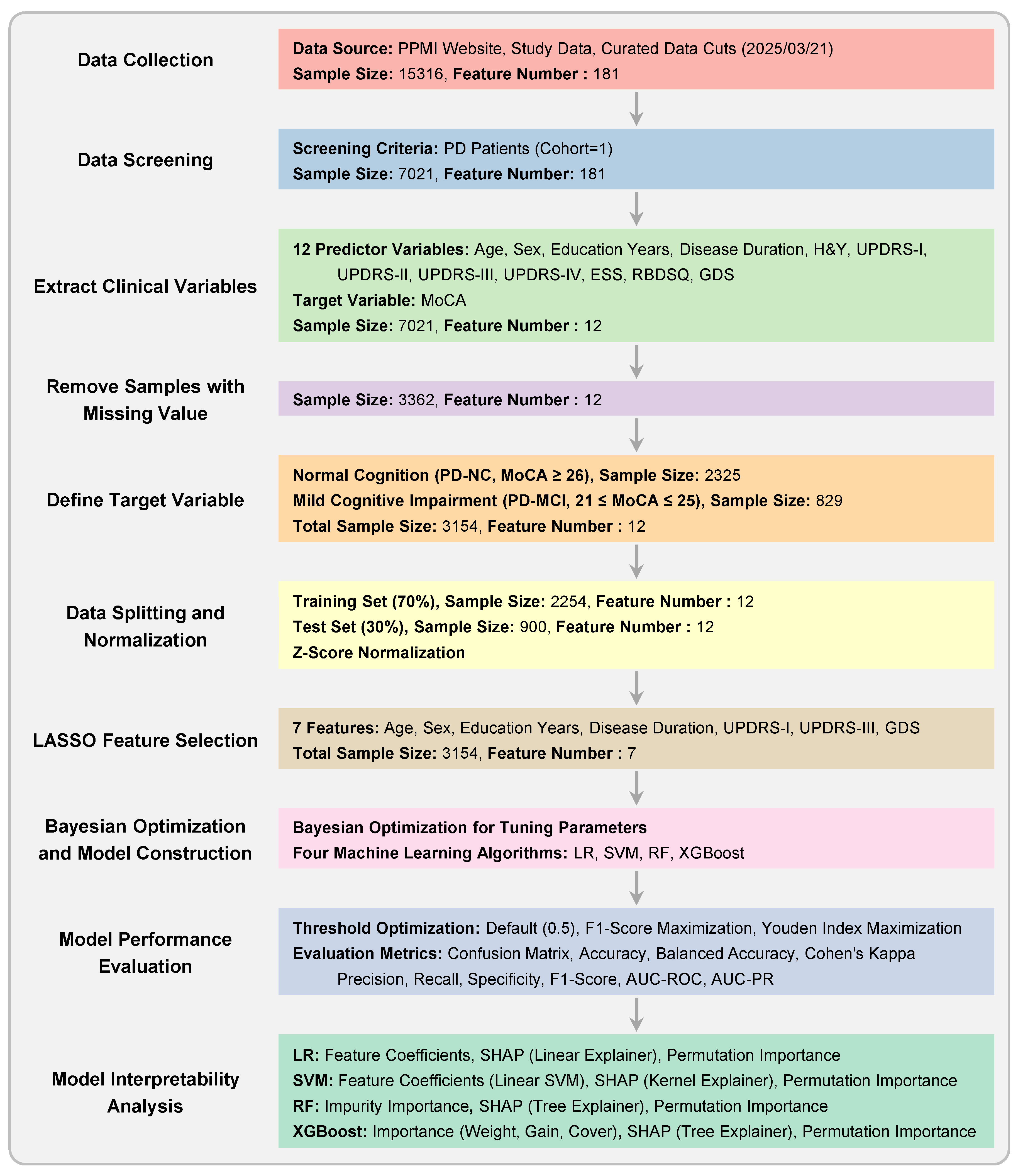

After preprocessing and filtering based on MoCA scores, the final dataset comprised 3,154 valid records from 896 unique patients. Of these, 2,325 records were classified as PD-NC and 829 as PD-MCI. The demographic and clinical characteristics of the study population are presented in Table 3.

After applying False Discovery Rate (FDR) correction, significant between-group differences were observed for all variables except ESS (). The PD-MCI group was significantly older, had a higher proportion of males, fewer years of education, and showed a shorter disease duration compared to the PD-NC group (all ). Clinically, the PD-MCI group exhibited significantly higher Hoehn and Yahr stage (H&Y), more severe non-motor symptoms of daily living (UPDRS-I), motor symptoms of daily living (UPDRS-II), motor signs (UPDRS-III), motor complications (UPDRS-IV), and depressive symptoms (GDS), as well as higher rates of REM sleep behavior disorder symptoms (RBDSQ) (all ). In contrast, daytime sleepiness scores (ESS) showed no significant difference between groups. These findings highlight a distinct clinical and demographic profile for patients with PD-MCI, providing a strong basis for machine learning-based classification.
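The text does not state which FDR procedure was applied. As a minimal sketch, assuming the common Benjamini-Hochberg step-up procedure, the adjustment can be written as below. The function name `benjamini_hochberg` and the raw p-values are hypothetical and are not taken from Table 3.

```python
def benjamini_hochberg(p_values):
    """Return Benjamini-Hochberg adjusted p-values in the original order."""
    m = len(p_values)
    # Indices of the p-values sorted from smallest to largest.
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down so adjusted values stay monotone.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, p_values[i] * m / rank)
        adjusted[i] = running_min
    return adjusted


# Hypothetical raw p-values for five group comparisons (illustration only).
raw = [0.001, 0.008, 0.039, 0.041, 0.60]
adj = benjamini_hochberg(raw)
significant = [q < 0.05 for q in adj]
```

In this toy input, two comparisons with raw p-values just below 0.05 no longer pass after adjustment, which is the behaviour the correction is meant to provide when many variables are tested at once.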

3.2. Feature Correlation and Multicollinearity Assessment

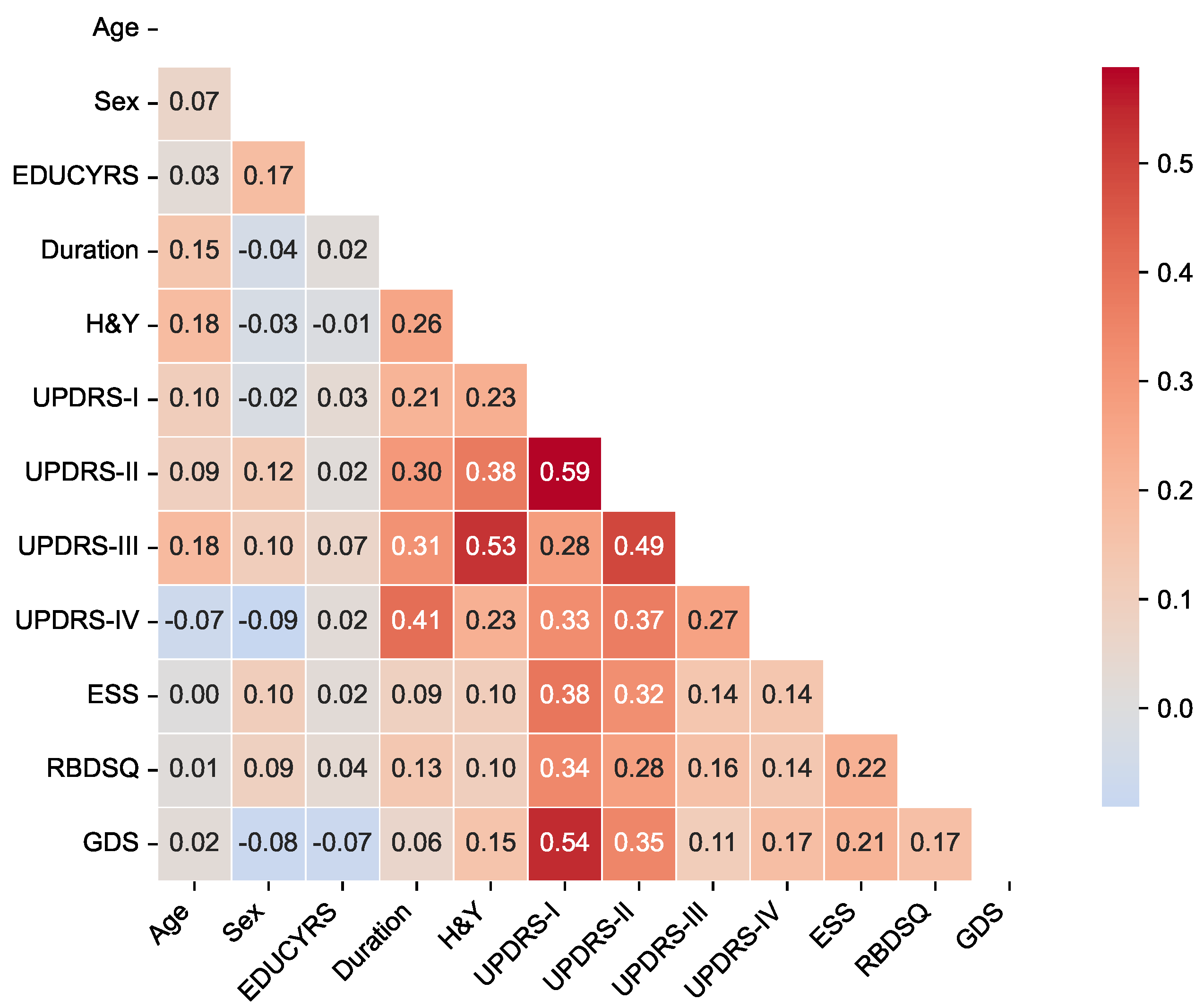

To assess potential multicollinearity among predictor variables and understand the relationships between clinical features, we examined pairwise correlations using Pearson correlation coefficients for the complete dataset. The correlation matrix is presented in Figure 2, revealing the strength and direction of associations between all predictor variables used in the classification models.

The correlation analysis demonstrated generally low to moderate correlations among most clinical features, with the highest positive correlation being between UPDRS-I and UPDRS-II scores, and the lowest negative correlation of between sex and UPDRS-IV. Importantly, no feature pairs exceeded the high correlation threshold of , indicating minimal multicollinearity concerns for our machine learning models.

The strongest correlations were observed among UPDRS subscales, particularly between UPDRS-I (non-motor experiences of daily living) and UPDRS-II (motor experiences of daily living) (), and between UPDRS-II and UPDRS-III (motor examination) (). These moderate correlations reflect the expected clinical relationships within the unified rating scale framework while maintaining sufficient independence for predictive modeling. Disease duration showed meaningful positive correlations with motor severity measures, including H&Y stage (), UPDRS-II (), UPDRS-III (), and notably UPDRS-IV (motor complications) (), consistent with the progressive nature of Parkinson’s disease. Among non-motor features, UPDRS-I demonstrated moderate associations with sleep-related measures (ESS: ; RBDSQ: ) and mood assessment (GDS: ), reflecting the interconnected nature of non-motor symptoms in PD-MCI development.

The overall pattern of correlations supports the inclusion of all selected variables in subsequent machine learning analyses without substantial redundancy, while providing clinically interpretable relationships that align with our understanding of Parkinson’s disease pathophysiology.
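The screening described in this subsection can be sketched as a pairwise Pearson computation followed by a threshold check. This is an illustration only: the helper names, the toy columns, and the cutoff `HYPOTHETICAL_THRESHOLD = 0.8` are assumptions, since the threshold used in the study is not recoverable from the text. The toy data deliberately contain one strongly correlated pair so that the flag fires, unlike the study data, where no pair exceeded the threshold.

```python
import math
from itertools import combinations


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (sd_x * sd_y)


def flag_collinear(columns, threshold):
    """List feature pairs whose absolute correlation exceeds the threshold."""
    flagged = []
    for (name_a, a), (name_b, b) in combinations(columns.items(), 2):
        r = pearson(a, b)
        if abs(r) > threshold:
            flagged.append((name_a, name_b, r))
    return flagged


# Toy values, not study data.
toy = {
    "UPDRS-I": [5, 9, 12, 7, 15, 10],
    "UPDRS-II": [4, 10, 11, 9, 13, 8],
    "ESS": [6, 3, 8, 5, 4, 9],
}
HYPOTHETICAL_THRESHOLD = 0.8  # assumed cutoff for illustration
flagged = flag_collinear(toy, HYPOTHETICAL_THRESHOLD)
```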

3.3. Feature Selection Results

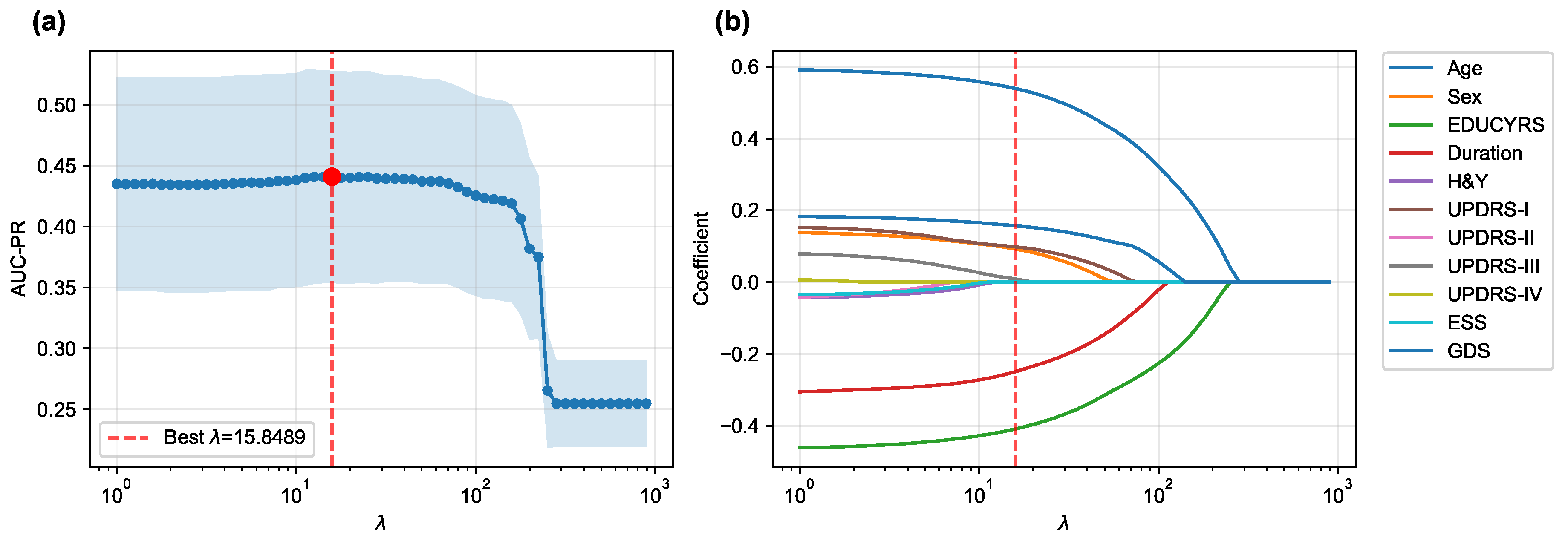

The LASSO logistic regression process, optimized via subject-level stratified 10-fold cross-validation on the training set to maximize the area under the precision-recall curve (AUC-PR), was used to identify the most salient predictors from the initial 12 features.

Figure 3 illustrates both the performance curve derived from cross-validation and the coefficient paths obtained by retraining the model on the complete training set across a range of regularization parameters.
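Because the 3,154 records come from 896 patients, subject-level cross-validation must keep all records of one patient in the same fold. The sketch below shows one simple way to do this. It is not the authors' implementation: the function `subject_level_folds`, the rule that a patient counts as positive if any of their records is PD-MCI, and the toy identifiers are all assumptions made for illustration.

```python
from collections import defaultdict


def subject_level_folds(patient_ids, labels, n_folds):
    """Assign each record to a fold so that no patient spans two folds."""
    # Assumption: a patient is 'positive' if any of their records is positive.
    patient_label = defaultdict(int)
    for pid, y in zip(patient_ids, labels):
        patient_label[pid] = max(patient_label[pid], y)

    # Deal patients round-robin within each stratum to balance the classes.
    fold_of_patient = {}
    counters = {0: 0, 1: 0}
    for pid in sorted(patient_label):
        stratum = patient_label[pid]
        fold_of_patient[pid] = counters[stratum] % n_folds
        counters[stratum] += 1

    return [fold_of_patient[pid] for pid in patient_ids]


# Toy records: patient p1 has one PD-NC and one PD-MCI visit.
patient_ids = ["p1", "p1", "p2", "p3", "p3", "p4", "p5", "p6"]
labels = [0, 1, 0, 0, 0, 1, 0, 1]
folds = subject_level_folds(patient_ids, labels, n_folds=2)
```

In practice, scikit-learn's `StratifiedGroupKFold` offers a tested splitter with the same goal; the hand-written version here only makes the grouping constraint explicit.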

The cross-validation procedure identified an optimal regularization parameter of , which maximized the mean AUC-PR across all folds. At this optimal regularization strength, the LASSO algorithm selected a parsimonious subset of seven key features while shrinking the coefficients of the remaining five features (H&Y, UPDRS-II, UPDRS-IV, ESS, and RBDSQ) to zero, effectively excluding them from the final model.

When the final LASSO model was trained on the complete training set using

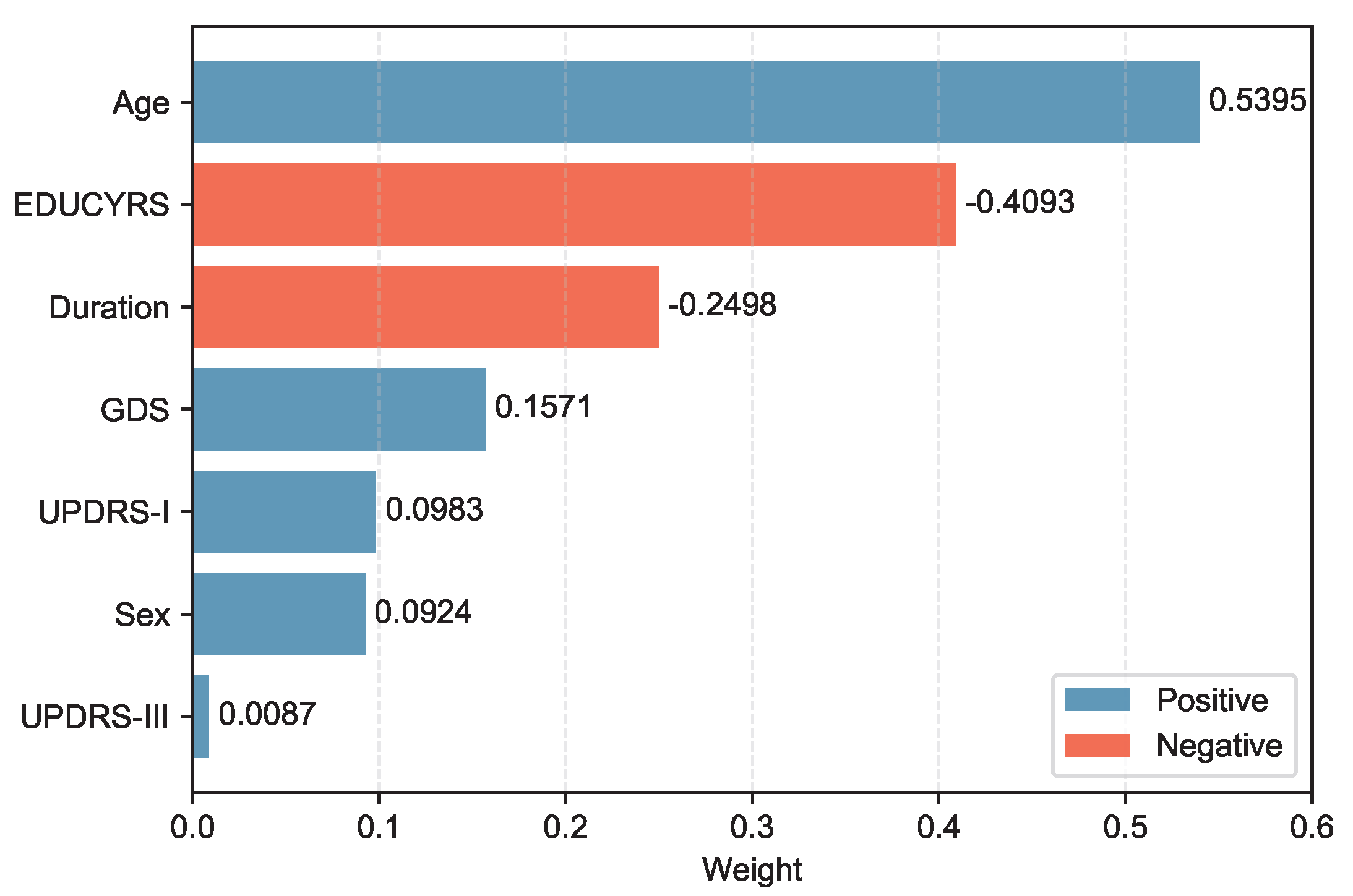

, the selected features demonstrated varying contributions to PD-MCI classification. The selected features, ranked by the absolute magnitude of their coefficients, are visualized in

Figure 4.

Age emerged as the most influential predictor with a positive coefficient of 0.5395, indicating that older patients have substantially higher odds of developing PD-MCI. Education years (EDUCYRS) showed the second-largest magnitude but with a negative coefficient of -0.4093, confirming its protective role against cognitive decline. Disease duration exhibited a negative coefficient of -0.2498, suggesting that longer disease duration may be associated with better cognitive preservation in this cohort. Among the clinical severity measures, depressive symptoms (GDS) demonstrated a positive coefficient of 0.1571, while non-motor experiences of daily living (UPDRS-I) and sex showed smaller positive contributions of 0.0983 and 0.0924, respectively. Motor examination scores (UPDRS-III) had the smallest coefficient of 0.0087, indicating minimal direct contribution to the classification decision. These seven features were used for the construction and comparison of all subsequent machine learning models.
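To make the coefficient magnitudes easier to read, they can be converted to odds ratios by exponentiation, as in the short sketch below. The coefficients are those reported above. What "one unit" of a feature means depends on how the inputs were scaled, which this section does not state; if the features were standardized (an assumption), each odds ratio refers to a change of one standard deviation. LASSO shrinks coefficients toward zero, so these ratios are likely conservative.

```python
import math

# Coefficients of the final LASSO model as reported in the text.
coefficients = {
    "Age": 0.5395,
    "EDUCYRS": -0.4093,
    "Disease duration": -0.2498,
    "GDS": 0.1571,
    "UPDRS-I": 0.0983,
    "Sex": 0.0924,
    "UPDRS-III": 0.0087,
}

# exp(beta) is the multiplicative change in the odds of PD-MCI per unit increase.
odds_ratios = {name: math.exp(beta) for name, beta in coefficients.items()}

# Rank features by absolute coefficient, matching the ordering in the text.
ranked = sorted(coefficients, key=lambda name: abs(coefficients[name]), reverse=True)

for name in ranked:
    print(f"{name:17s} beta={coefficients[name]:+.4f}  OR={odds_ratios[name]:.3f}")
```

An odds ratio above 1 (Age, GDS, UPDRS-I, Sex, UPDRS-III) raises the odds of a PD-MCI classification, while a ratio below 1 (EDUCYRS, disease duration) lowers them.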

3.4. Hyperparameter Optimization

To ensure optimal model performance prior to final testing, all machine learning algorithms underwent systematic hyperparameter optimization on the training set using subject-level stratified 10-fold cross-validation. Hyperparameters for each algorithm were optimized using Bayesian optimization with Optuna, where AUC-PR was used as the objective function to identify the optimal parameter configurations that maximize predictive performance while maintaining generalizability. The resulting optimal hyperparameters for each model are summarized in Table 4.

The hyperparameter optimization revealed distinct algorithmic preferences that reflect the underlying data characteristics and modeling challenges. For logistic regression, the selection of the L2 penalty indicates that ridge regularization was more effective than L1 (LASSO) regularization for this classification task, likely because the prior LASSO step had already reduced the feature set to seven predictors with minimal multicollinearity. The very low value of C (C = ), which is the inverse of the regularization strength, suggests that substantial regularization was necessary to prevent overfitting, which is consistent with the limited sample size relative to the complexity of the PD-MCI classification problem.

The SVM model’s preference for a linear kernel over non-linear alternatives (RBF, polynomial, or sigmoid) indicates that the optimal decision boundary in the 7-dimensional feature space is approximately linear. This finding suggests that the relationship between clinical features and PD-MCI status can be effectively captured through linear combinations of the selected predictors, without requiring complex non-linear transformations. The extremely low C value () demonstrates a strong preference for a large margin classifier, prioritizing generalization over perfect training set classification.

For Random Forest, the optimization resulted in a moderate maximum depth of 6 and conservative splitting criteria, with high values for the minimum samples per split (18) and the minimum samples per leaf (28). These conservative parameters reflect the algorithm’s adaptation to the limited sample size and suggest that simple decision rules are sufficient for effective PD-MCI classification. The max_features value () indicates that considering approximately 60% of the available features at each split was optimal, maintaining adequate feature diversity while preserving discriminative power.

The XGBoost optimization yielded particularly revealing insights with its selection of max_depth = 2, indicating that simple two-level decision trees were optimal for this dataset. This shallow tree depth suggests that the PD-MCI classification can be effectively achieved through relatively simple decision rules with minimal hierarchical feature interactions. This finding aligns with the linear separability suggested by the SVM results and implies that the selected clinical features provide straightforward, interpretable decision pathways for PD-MCI identification. The moderate learning rate () and high subsample ratio () further support a conservative boosting approach that emphasizes stability over aggressive fitting, while the substantial regularization parameters (reg_lambda = 8.896) indicate strong preference for generalization over training set performance.

3.5. Cross-Validated Performance on Training Data

Following hyperparameter optimization, each model was evaluated on the training set using subject-level stratified 10-fold cross-validation with the obtained optimal parameters to assess their intrinsic discriminative capacity. The evaluation initially computed threshold-independent metrics (AUC-ROC and AUC-PR) that assess the model’s fundamental ability to distinguish between PD-MCI and non-MCI cases across all possible decision thresholds. Subsequently, three different threshold optimization strategies were systematically applied to determine optimal decision boundaries: the default threshold (0.5), F1-score maximization, and Youden index maximization. These threshold optimization strategies specifically influence threshold-dependent metrics such as accuracy, precision, recall, and F1-score. The comprehensive cross-validation performance results using the optimized hyperparameters across all threshold strategies are presented in Table 5.

The comprehensive cross-validation analysis revealed distinct performance patterns across the four machine learning algorithms under three threshold optimization strategies. Regarding threshold-independent metrics, XGBoost demonstrated superior discriminative capability, achieving the highest AUC-ROC of 0.7076±0.0442 and AUC-PR of 0.4529±0.0807. Nevertheless, the performance differences among all algorithms were relatively modest, with AUC-ROC values ranging from 0.6946 to 0.7076 and AUC-PR values spanning 0.4408 to 0.4529, suggesting comparable inherent discriminative capacity across models for PD-MCI classification tasks.

The default threshold (0.5) strategy revealed substantial performance variations across algorithms. SVM achieved the highest accuracy (0.7507±0.0355) and specificity (0.9562±0.0212), but demonstrated markedly poor recall (0.1534±0.0840), resulting in the lowest F1-score (0.2300±0.1174) and Cohen’s kappa (0.1393±0.1042). This pattern indicates that the default threshold is excessively conservative for SVM in PD-MCI detection, leading to substantial underdiagnosis. In contrast, logistic regression with the default threshold achieved more balanced performance with the highest recall (0.6633±0.0835), F1-score (0.4820±0.0702), and Cohen’s kappa (0.2393±0.0806).

Both optimized threshold strategies demonstrated superior balance between sensitivity and specificity compared to the default threshold. The F1-score optimization strategy consistently improved recall across all models while maintaining reasonable precision. XGBoost achieved the best overall performance under F1-score optimization with the highest accuracy (0.6474±0.1051), balanced accuracy (0.6836±0.0475), precision (0.4201±0.1038), specificity (0.6058±0.1880), F1-score (0.5278±0.0630), and Cohen’s kappa (0.2937±0.1140). Logistic regression exhibited the highest recall (0.8145±0.0812) under this strategy.

The Youden index optimization provided a similar balanced performance profile to F1-score optimization. XGBoost again demonstrated the strongest performance across most metrics, achieving the highest accuracy (0.6528±0.0830), balanced accuracy (0.6859±0.0452), precision (0.4166±0.0966), specificity (0.6179±0.1368), F1-score (0.5276±0.0629), and Cohen’s kappa (0.2950±0.1051). Notably, logistic regression maintained the highest recall (0.7765±0.0881) under Youden index optimization.

The optimal thresholds derived from cross-validation varied substantially across algorithms and optimization criteria. SVM consistently required the lowest thresholds (F1-score: 0.2217±0.0467; Youden: 0.2441±0.0496), reflecting its tendency to produce conservative probability estimates. XGBoost required intermediate thresholds (F1-score: 0.3570±0.1099; Youden: 0.3643±0.0770), while logistic regression and Random Forest showed higher and more variable threshold requirements.

Based on these comprehensive cross-validation results, XGBoost emerged as the most promising algorithm across both optimized threshold strategies, consistently achieving the highest F1-scores and Cohen’s kappa values. The optimized threshold strategies (F1-score and Youden index) demonstrated clear superiority over the default threshold for PD-MCI classification, providing more clinically relevant sensitivity-specificity trade-offs. For subsequent test set evaluation, the median optimized thresholds from cross-validation were adopted to ensure robust and generalizable performance estimates.
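The two threshold optimization criteria and the median aggregation across folds can be sketched as follows. This is an illustration under stated assumptions rather than the study's code: the helper names, the toy labels and scores, the choice of candidate thresholds (each observed score), and the per-fold thresholds passed to `median` are all hypothetical.

```python
from statistics import median


def confusion(y_true, scores, threshold):
    """Return (tp, fp, fn, tn), predicting positive when score >= threshold."""
    tp = sum(1 for y, s in zip(y_true, scores) if y == 1 and s >= threshold)
    fp = sum(1 for y, s in zip(y_true, scores) if y == 0 and s >= threshold)
    fn = sum(1 for y, s in zip(y_true, scores) if y == 1 and s < threshold)
    tn = sum(1 for y, s in zip(y_true, scores) if y == 0 and s < threshold)
    return tp, fp, fn, tn


def f1_at(y_true, scores, threshold):
    tp, fp, fn, _ = confusion(y_true, scores, threshold)
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


def youden_at(y_true, scores, threshold):
    tp, fp, fn, tn = confusion(y_true, scores, threshold)
    sensitivity = tp / (tp + fn) if tp + fn else 0.0
    specificity = tn / (tn + fp) if tn + fp else 0.0
    return sensitivity + specificity - 1.0


def best_threshold(y_true, scores, criterion):
    """Pick the observed score that maximizes the criterion (lowest on ties)."""
    candidates = sorted(set(scores))
    return max(candidates, key=lambda t: criterion(y_true, scores, t))


# Toy validation fold with 3 positives among 10 records (illustration only).
y_true = [0, 0, 0, 0, 0, 0, 1, 0, 1, 1]
scores = [0.05, 0.10, 0.15, 0.20, 0.25, 0.30, 0.35, 0.40, 0.45, 0.60]

t_f1 = best_threshold(y_true, scores, f1_at)
t_youden = best_threshold(y_true, scores, youden_at)
recall_default = confusion(y_true, scores, 0.5)[0] / 3

# Hypothetical per-fold thresholds, aggregated by their median for test-time use.
test_threshold = median([0.33, 0.36, 0.41])
```

On this toy fold both criteria select a threshold below 0.5, and the default threshold recovers only one of the three positives, which mirrors the pattern reported for the imbalanced PD-MCI data.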

3.6. Model Evaluation

The performance of the four machine learning models was evaluated on the independent test set using the median optimized thresholds derived from cross-validation.

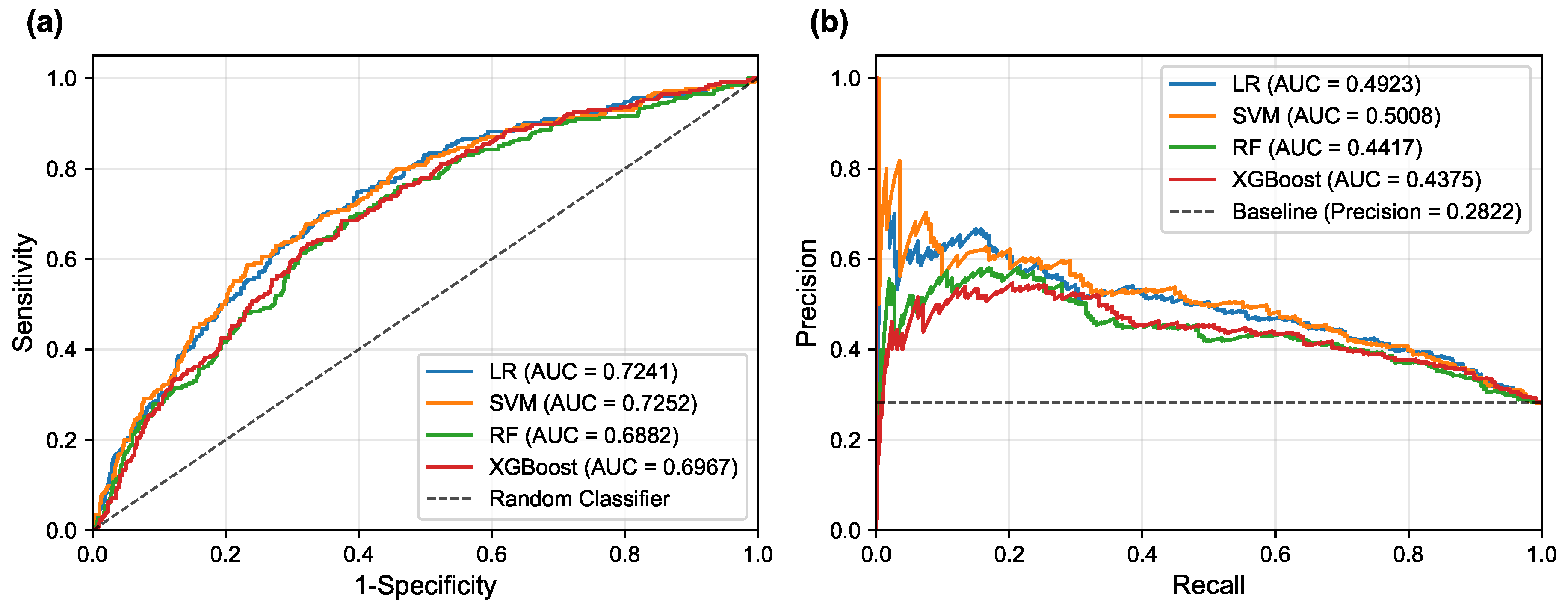

Figure 5 illustrates the corresponding ROC and PR curves for all models, providing visual representation of their discriminative performance.

Table 6 presents a detailed comparison of model performance across different threshold strategies, providing both threshold-independent metrics (AUC-ROC and AUC-PR) and threshold-dependent metrics under various optimization criteria. The comprehensive evaluation of the four models revealed important insights into their discriminative abilities and the critical role of threshold optimization in imbalanced classification scenarios.

In terms of overall discriminative ability, the Support Vector Machine (SVM) performed best, achieving the highest AUC-ROC of 0.7252 and AUC-PR of 0.5008. Logistic Regression (LR) followed closely with an AUC-ROC of 0.7241 and AUC-PR of 0.4923, indicating comparable classification potential. These results suggest that linear models have the strongest discriminative power for PD-MCI classification in this dataset, likely because they capture the approximately linear relationships between the selected clinical features and cognitive impairment status. The Random Forest (RF) and XGBoost models achieved lower AUC values (RF: AUC-ROC = 0.6882, AUC-PR = 0.4417; XGBoost: AUC-ROC = 0.6967, AUC-PR = 0.4375), suggesting that the additional complexity of ensemble methods may not provide substantial benefits for this particular feature set and dataset.

The default threshold of 0.5 again proved suboptimal, as exemplified by the SVM’s performance: while achieving high specificity (0.9613) and precision (0.6212), its recall was only 0.1614, resulting in an extremely low F1-score of 0.2563. Such performance characteristics would be unacceptable in clinical scenarios where high sensitivity is crucial for detecting cognitive impairment, as missing PD-MCI cases could delay appropriate interventions and patient care planning. This underscores the fundamental necessity of threshold optimization when dealing with imbalanced datasets to achieve an effective trade-off between sensitivity and specificity that aligns with clinical priorities.
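The low F1-score follows directly from the harmonic-mean definition, which is dominated by the smaller of precision and recall. As a quick arithmetic check using the SVM values reported above (the function name is illustrative):

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# SVM at the default threshold, values as reported in the text.
svm_f1 = f1_score(precision=0.6212, recall=0.1614)
print(f"{svm_f1:.4f}")
```

The result agrees with the reported 0.2563 up to rounding of the inputs, and it shows that a precision above 0.6 cannot compensate for a recall near 0.16.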

The two threshold optimization strategies, i.e., maximizing the F1-score and maximizing the Youden Index, yielded substantially more balanced performance across all models. Under F1-score optimization, the LR model demonstrated superior performance across the majority of evaluation metrics, achieving the highest balanced accuracy (0.6667), F1-score (0.5344), and Cohen’s Kappa (0.2645). Similarly, under Youden Index optimization, the LR model again secured the best performance in most metrics, including the highest accuracy (0.6433), balanced accuracy (0.6751), precision (0.4251), specificity (0.6022), F1-score (0.5421), and Cohen’s Kappa (0.2846). The SVM model consistently achieved competitive performance under both optimization strategies, particularly showing strong results in F1-score optimization with an accuracy of 0.6300 and the highest precision of 0.4132. This consistent performance highlights the strength of both linear models, particularly the LR model, in achieving well-rounded and balanced overall performance for PD-MCI classification. The LR model’s interpretability, combined with its robust performance, makes it particularly suitable for clinical applications where understanding the contribution of individual features is important for clinical decision-making.

However, two notable exceptions emerged from the threshold optimization results. The Random Forest model achieved the highest recall under both strategies: 0.8150 when the threshold was optimized for the F1-score and 0.7795 under Youden Index optimization. These findings indicate that if the primary clinical objective is to identify the maximum number of PD-MCI cases (i.e., maximizing recall to minimize missed diagnoses), an appropriately optimized Random Forest model might be a more suitable choice than the linear models. For clinical applications where the cost of false negatives is particularly high, such as screening scenarios where missing cognitive impairment could lead to delayed treatment, ensemble models with optimized thresholds could therefore be preferred despite their lower overall discriminative ability.

These findings highlight the fundamental importance of aligning model selection and threshold optimization with specific clinical objectives. For applications prioritizing the minimization of false positives (high specificity) or seeking the best overall diagnostic accuracy, LR and SVM demonstrate superior performance. Conversely, for scenarios where maximizing the detection of PD-MCI patients is paramount, Random Forest models with appropriately optimized thresholds may provide better clinical utility despite potentially higher false positive rates.

3.7. Feature Importance Analysis

To gain deeper insights into the decision-making processes of our models and identify the most influential clinical factors for PD-MCI classification, we conducted a comprehensive feature importance analysis after training each of the four models (LR, SVM, RF, XGBoost) on the complete training dataset using the seven selected features and optimized hyperparameters. We employed multiple complementary analytical approaches to ensure robust and comprehensive assessment: model-specific importance measures (coefficients for linear models, impurity-based scores for RF, and Gain for XGBoost), SHAP values for understanding individual feature contributions, and the model-agnostic permutation importance method to corroborate our findings from multiple perspectives.
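Of the approaches listed above, permutation importance is the simplest to illustrate: shuffle one feature column, re-score the model, and record the drop in performance. The sketch below is a toy version under stated assumptions. It scores with accuracy for brevity (the scoring metric used in the study is not stated here), and `toy_model`, the data, and the repeat count are hypothetical.

```python
import random


def accuracy(model, X, y):
    """Fraction of rows for which the model's prediction matches the label."""
    return sum(model(row) == target for row, target in zip(X, y)) / len(y)


def permutation_importance(model, X, y, n_repeats=20, seed=0):
    """Mean drop in accuracy after shuffling each feature column in turn."""
    rng = random.Random(seed)
    baseline = accuracy(model, X, y)
    importances = []
    for j in range(len(X[0])):
        drops = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)
            # Rebuild the rows with only column j replaced by shuffled values.
            X_perm = [row[:j] + [v] + row[j + 1:] for row, v in zip(X, column)]
            drops.append(baseline - accuracy(model, X_perm, y))
        importances.append(sum(drops) / n_repeats)
    return importances


def toy_model(row):
    """Hypothetical classifier: uses only feature 0 (age), ignores feature 1."""
    return int(row[0] > 65)


X = [[50, 1], [55, 0], [60, 1], [70, 0], [75, 1], [80, 0]]
y = [0, 0, 0, 1, 1, 1]
importance = permutation_importance(toy_model, X, y)
```

A feature the model ignores receives an importance of exactly zero, while shuffling a feature the model relies on lowers the score. Because the method needs only predictions, it applies unchanged to all four model types.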

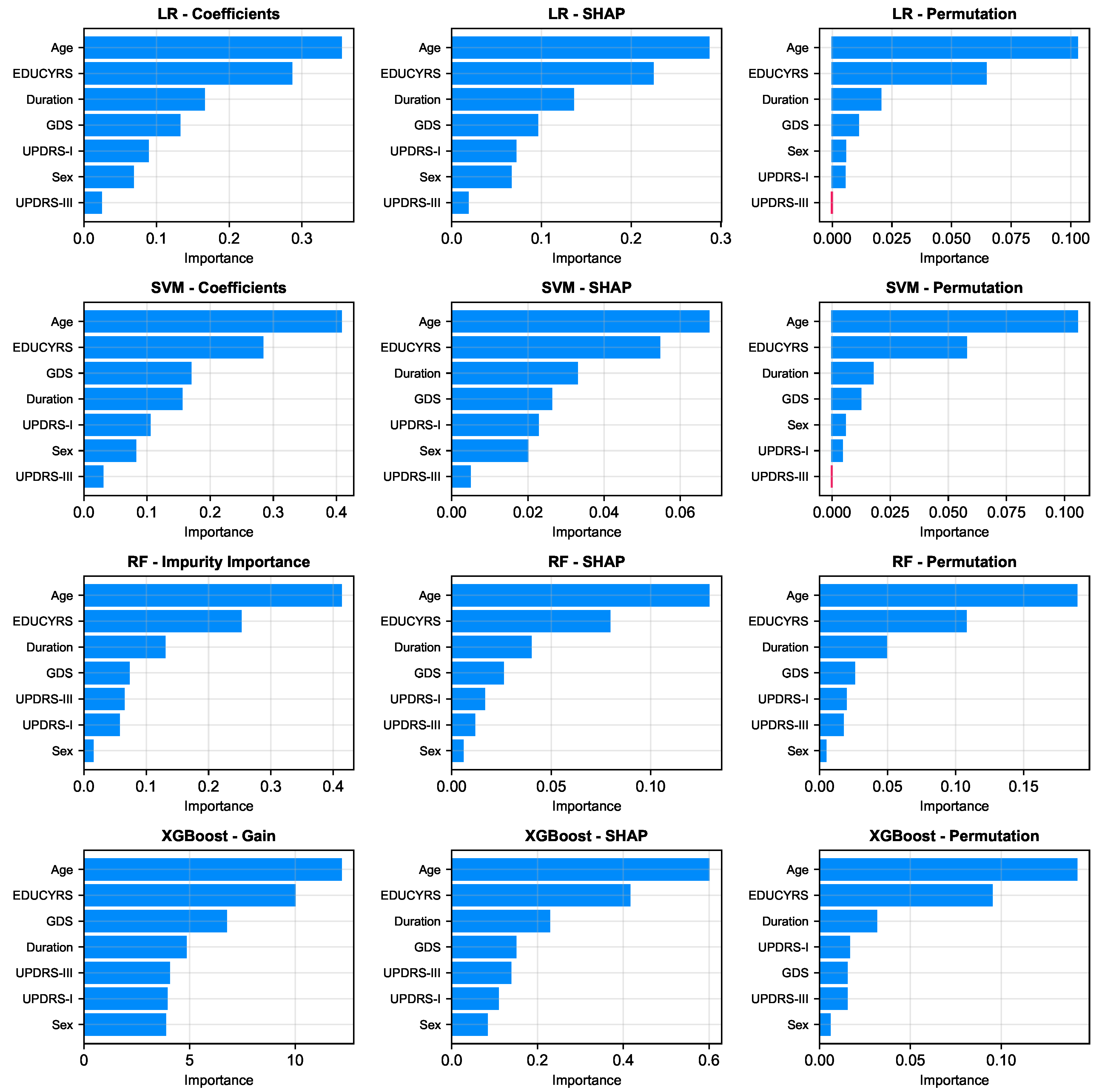

As illustrated in Figure 6, a remarkably uniform pattern emerges across all models and analytical methodologies. Three clinical variables consistently rank as the most salient predictors of cognitive status: Age, Education Years, and Disease Duration, which respectively reflect the natural progression of cognitive decline, cognitive reserve capacity, and cumulative pathological burden. Additionally, GDS frequently appears among the top four important features, underscoring the significant relationship between depressive symptoms and cognitive impairment in Parkinson’s disease.

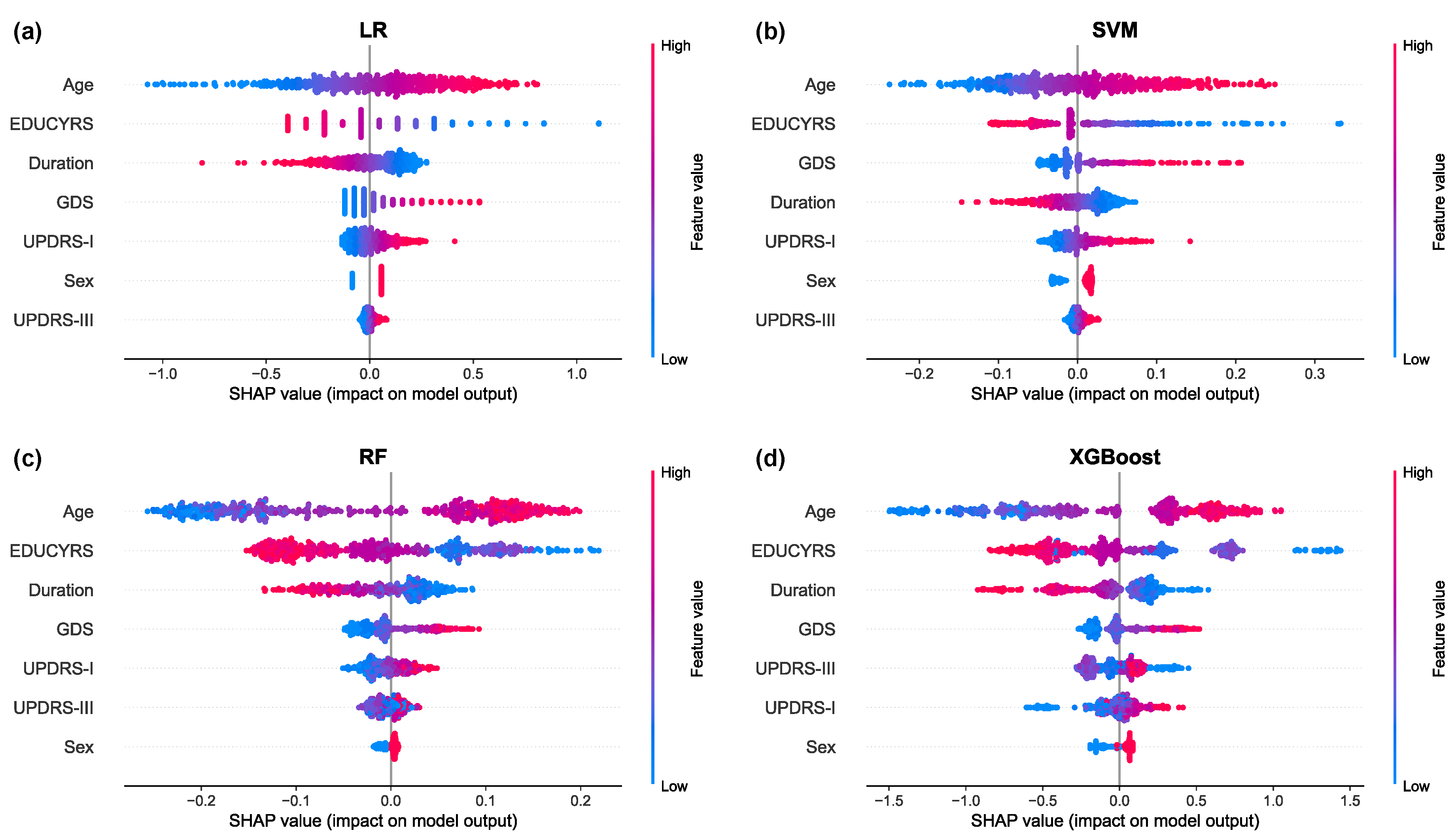

The SHAP summary plots, presented in Figure 7, provide granular insights into both the magnitude and directionality of each feature’s contribution to model predictions. These visualizations reveal that higher values for Age and GDS (represented by red points) are consistently associated with positive SHAP values, indicating an increased probability of PD-MCI classification [31]. Conversely, higher EDUCYRS values are associated with negative SHAP values, demonstrating the protective effect of education against cognitive decline. This pattern aligns with established neurological literature suggesting that educational attainment may contribute to cognitive reserve, potentially delaying the onset or manifestation of cognitive impairment in neurodegenerative diseases [32, 33].