Submitted: 15 July 2025

Posted: 16 July 2025


Abstract

Keywords:

Introduction

Background

Literature Review

Solution

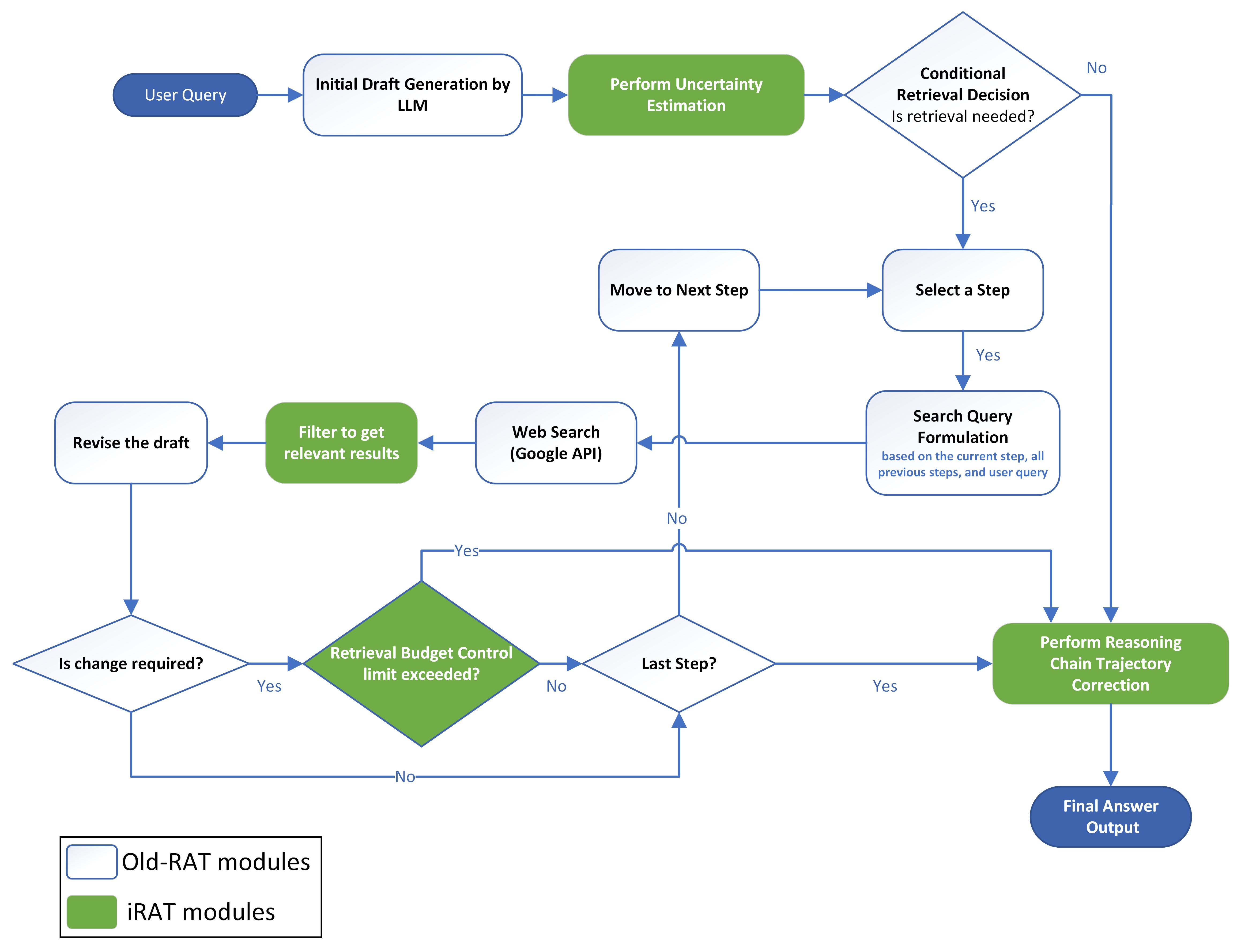

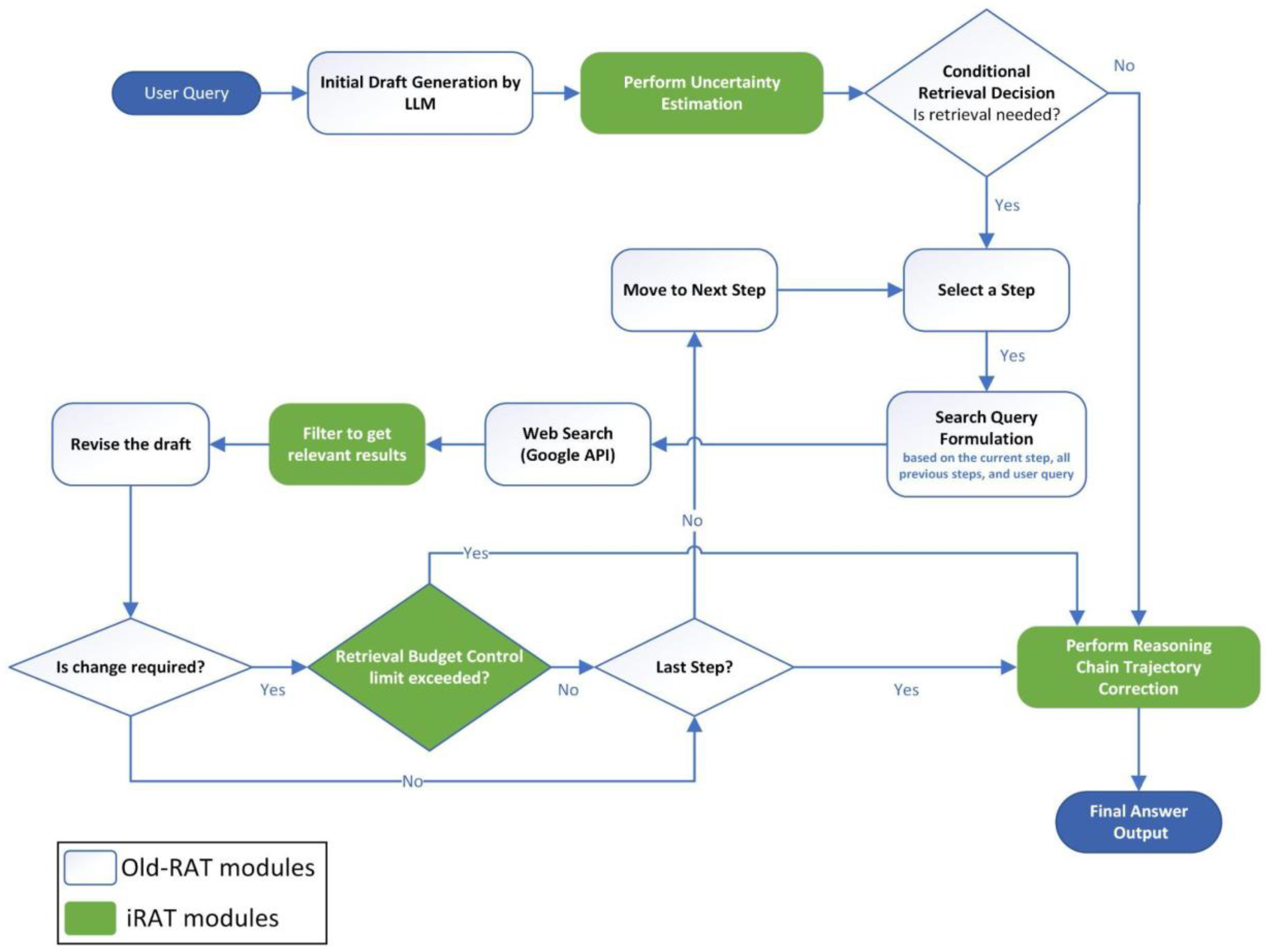

Methods

Initial Draft Generation

Uncertainty Estimation

Retrieval

Retrieval Decision

Retrieval-Based Revision with Budget Control

Result Filtering

Replanning

Final Evaluation

Results and Discussion

Performance

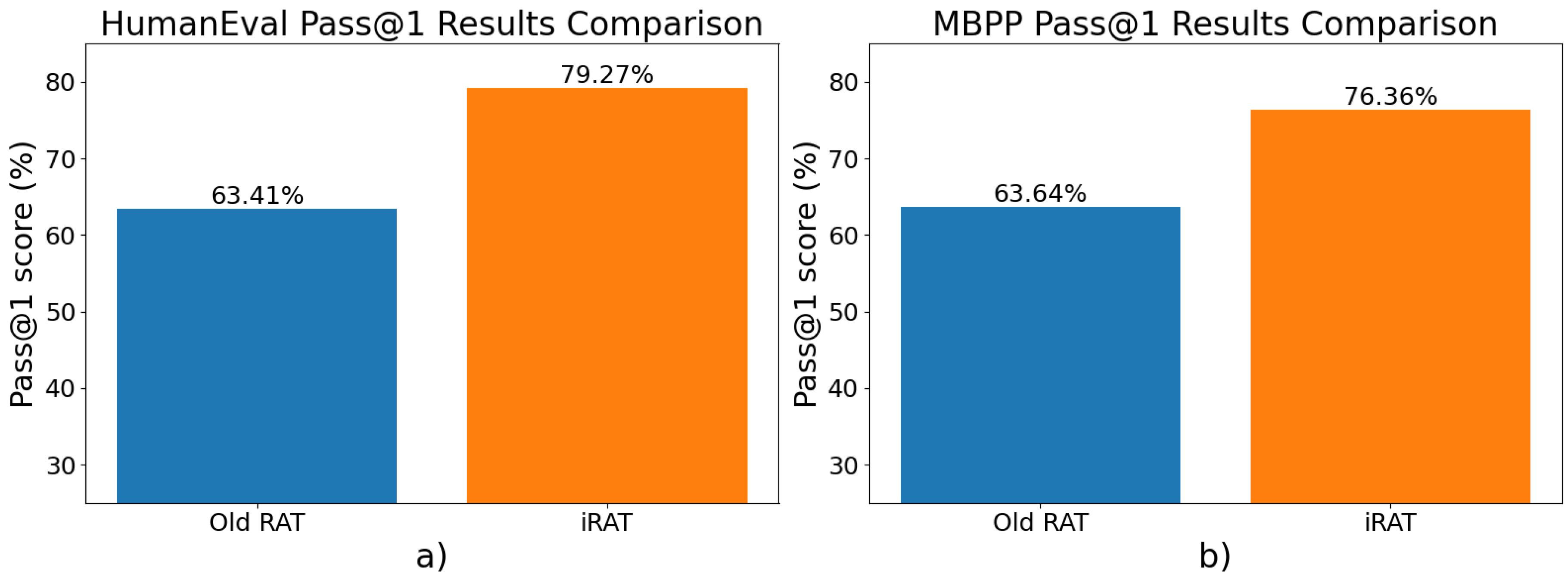

Coding Task Results

| Method | HumanEval pass@1 | MBPP pass@1 |
| --- | --- | --- |
| Old-RAT | 63.41% | 63.64% |
| iRAT | 79.27% | 76.36% |
| Improvement (percentage points) | 15.86 | 12.72 |
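For readers unfamiliar with the metric, pass@1 is the fraction of problems for which a single generated solution passes all unit tests. The sketch below shows the commonly used unbiased pass@k estimator, which reduces to that fraction when one sample is drawn per problem. It is a minimal illustration under stated assumptions: the function names `pass_at_k` and `mean_pass_at_k` and the toy outcomes are hypothetical, and the source does not state how many samples per problem were drawn.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate for one problem.

    n: number of samples generated, c: number that passed all tests,
    k: number of samples the metric is allowed to draw.
    """
    if not (0 <= c <= n) or not (1 <= k <= n):
        raise ValueError("require 0 <= c <= n and 1 <= k <= n")
    if n - c < k:
        # Fewer than k failing samples: any draw of k contains a pass.
        return 1.0
    # 1 minus the probability that all k drawn samples are failures.
    return 1.0 - comb(n - c, k) / comb(n, k)


def mean_pass_at_k(results, k: int = 1) -> float:
    """Average pass@k over problems; results is a list of (n, c) pairs."""
    return sum(pass_at_k(n, c, k) for n, c in results) / len(results)


# Hypothetical toy outcomes (not data from the paper): one sample per
# problem, three of four problems solved.
toy_results = [(1, 1), (1, 0), (1, 1), (1, 1)]
toy_score = mean_pass_at_k(toy_results, k=1)
print(f"pass@1 on toy data: {toy_score:.2%}")
```

With one sample per problem the estimator is simply 1 for a solved problem and 0 otherwise, so the mean is the share of problems solved.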

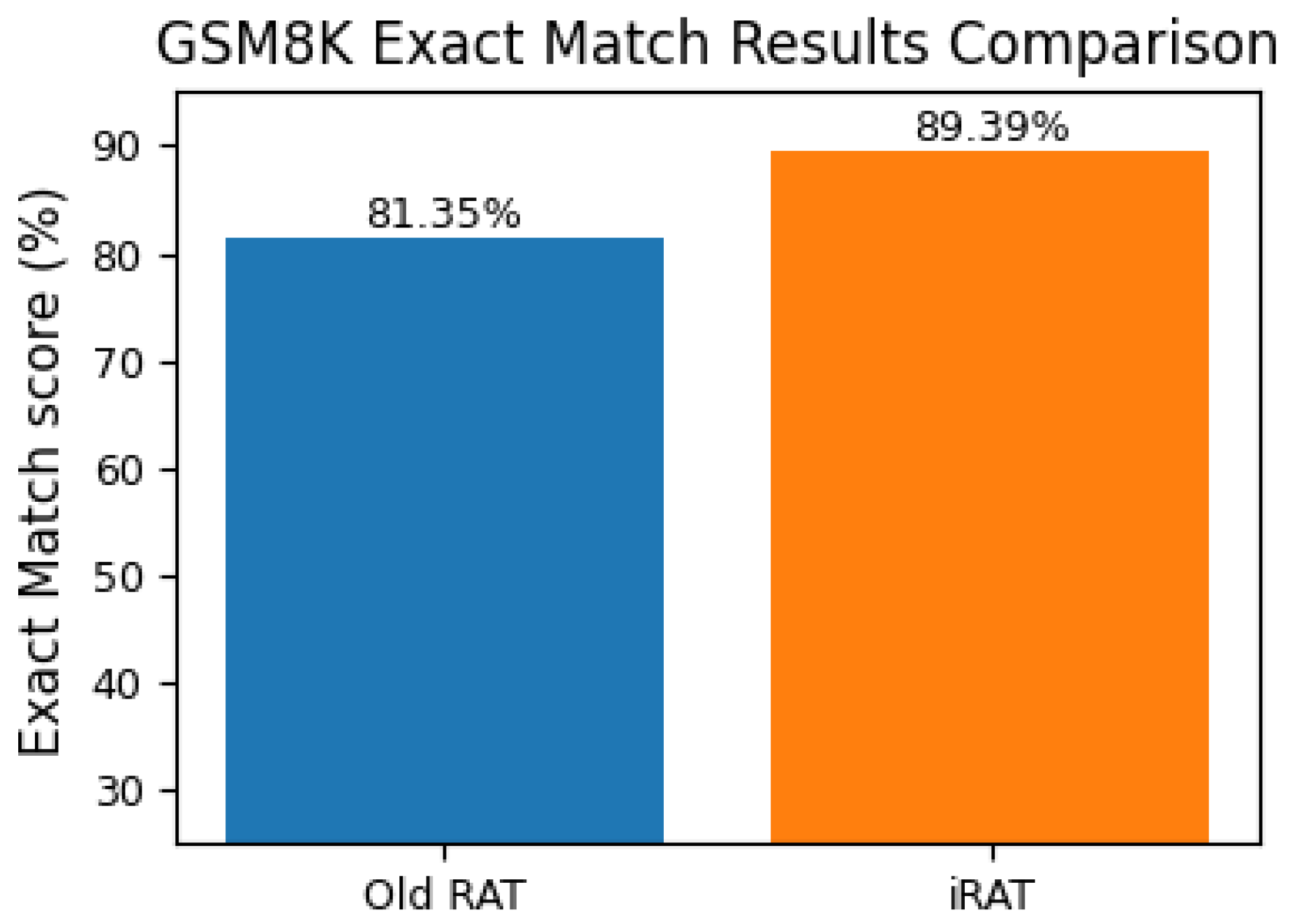

Mathematical Reasoning Task Results

| Method | GSM8K Exact Match |
| --- | --- |
| Old-RAT | 81.35% |
| iRAT | 89.39% |
| Improvement (percentage points) | 8.04 |
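The Improvement rows in the result tables above are absolute differences between the two scores, that is, percentage points rather than relative gains. A minimal sketch that recomputes them from the reported scores follows. The helper names are illustrative assumptions, and the relative gain is derived here for comparison only; it is not a figure reported in the source.

```python
# Scores in percent, copied from the result tables above: (Old-RAT, iRAT).
scores = {
    "HumanEval pass@1": (63.41, 79.27),
    "MBPP pass@1": (63.64, 76.36),
    "GSM8K exact match": (81.35, 89.39),
}


def absolute_gain(old: float, new: float) -> float:
    """Difference in percentage points (what the tables report)."""
    return new - old


def relative_gain(old: float, new: float) -> float:
    """Gain relative to the baseline score, in percent (derived, not reported)."""
    return (new - old) / old * 100.0


gains = {
    name: (round(absolute_gain(old, new), 2), round(relative_gain(old, new), 2))
    for name, (old, new) in scores.items()
}

for name, (abs_pp, rel_pct) in gains.items():
    print(f"{name}: +{abs_pp:.2f} percentage points ({rel_pct:.2f}% relative)")
```

Keeping the two quantities separate avoids reading a 15.86-point gain as a 15.86% relative gain.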

Usage of Retrievals

| Dataset | Average Retrievals (Old-RAT) | Average Retrievals (iRAT) | Reduction in Retrievals |
| --- | --- | --- | --- |
| HumanEval | 4.46 | 3.16 | 29.15% |
| MBPP | 5.24 | 3.36 | 35.88% |
| GSM8K | 3.43 | 1.76 | 48.69% |
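The reduction column is consistent with a relative decrease in average retrievals, computed as (old − new) / old. A minimal sketch that recomputes it from the averages in the table; the function name `relative_reduction` is an illustrative assumption, not part of the authors' code.

```python
def relative_reduction(old: float, new: float) -> float:
    """Relative decrease from old to new, expressed as a percentage."""
    if old <= 0:
        raise ValueError("old must be positive")
    return (old - new) / old * 100.0


# Average retrievals, copied from the table above: (Old-RAT, iRAT).
average_retrievals = {
    "HumanEval": (4.46, 3.16),
    "MBPP": (5.24, 3.36),
    "GSM8K": (3.43, 1.76),
}

reductions = {
    name: round(relative_reduction(old, new), 2)
    for name, (old, new) in average_retrievals.items()
}

for name, pct in reductions.items():
    print(f"{name}: {pct:.2f}% fewer retrievals")
```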

Discussion

Limitations and Future Work

Conclusions

Author Contributions

Acknowledgments

Appendix A

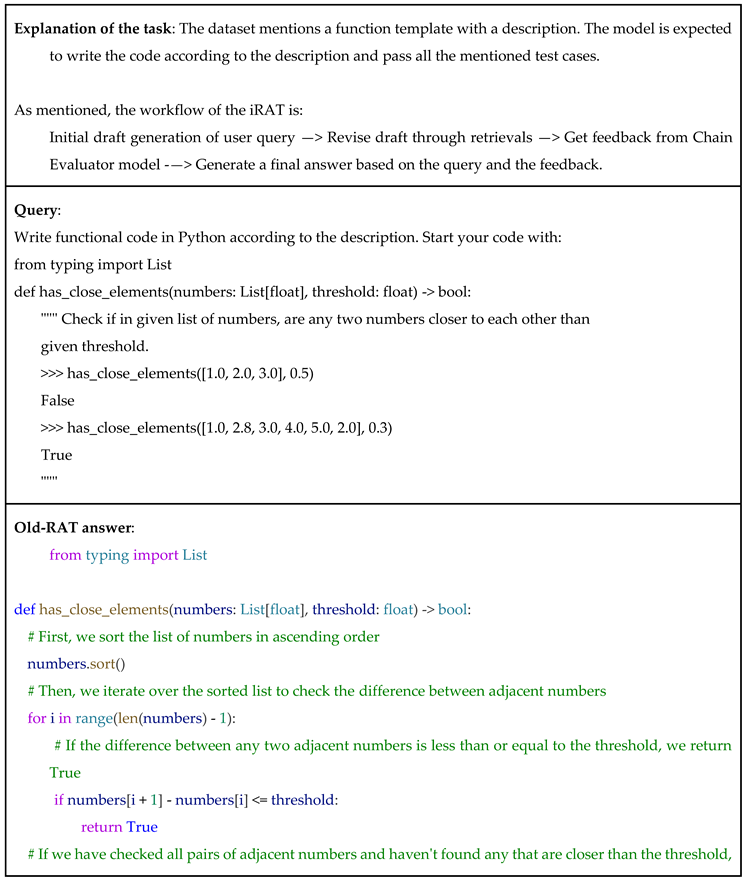

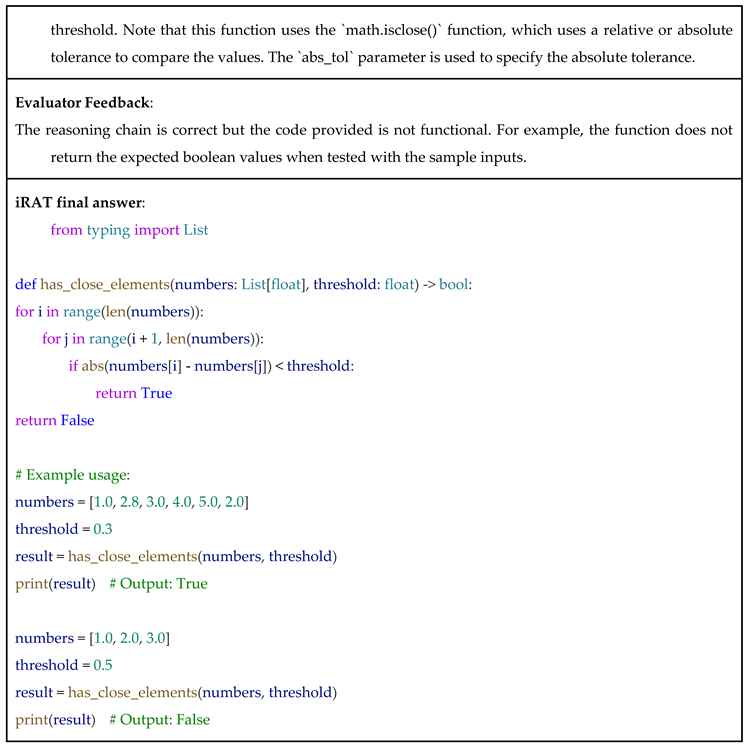

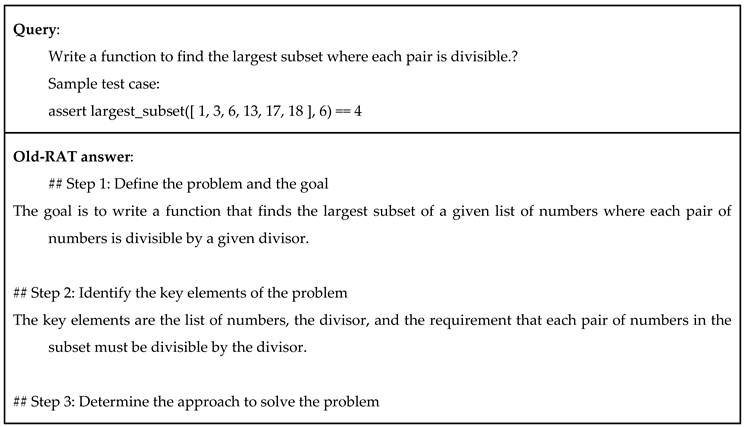

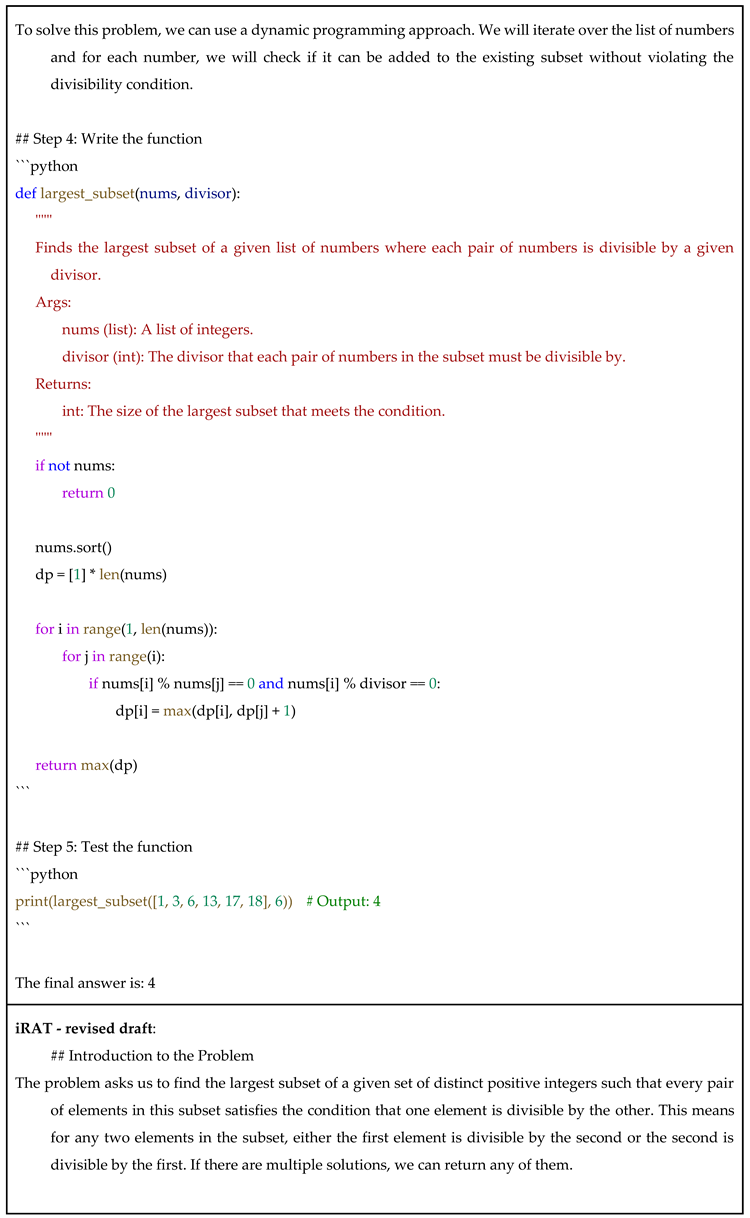

Examples of Thoughts Generated

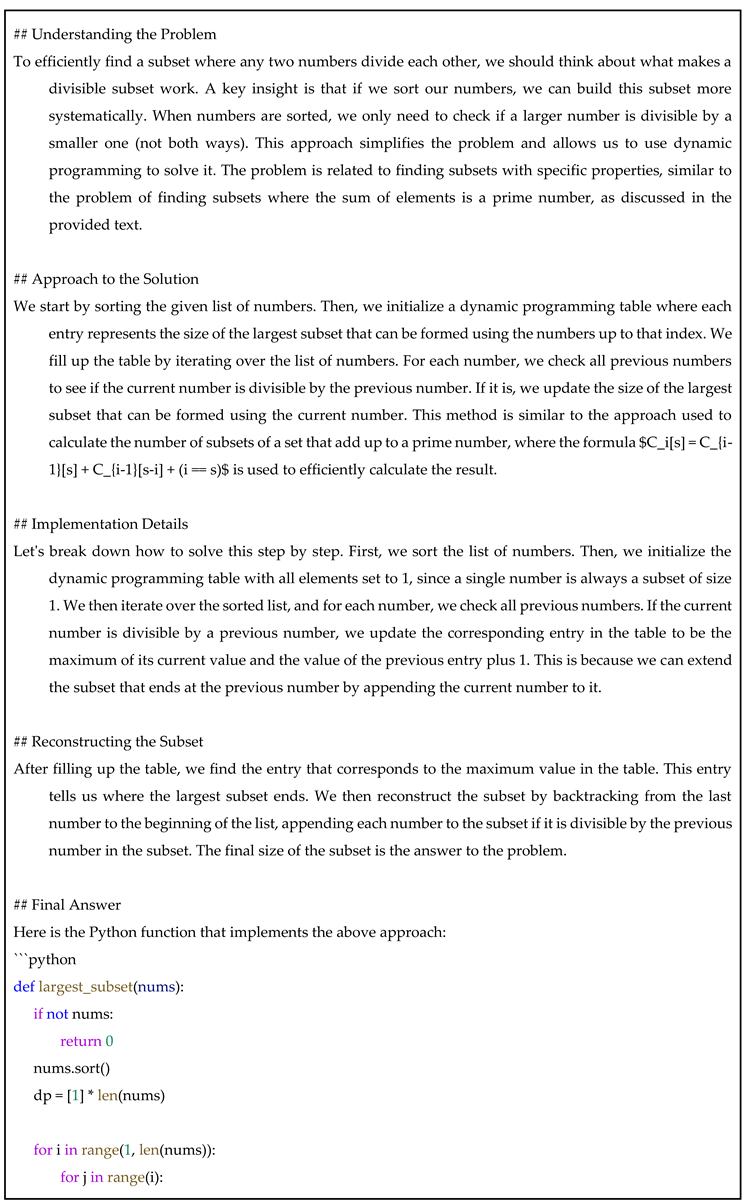

Example 1: (From HumanEval Dataset)

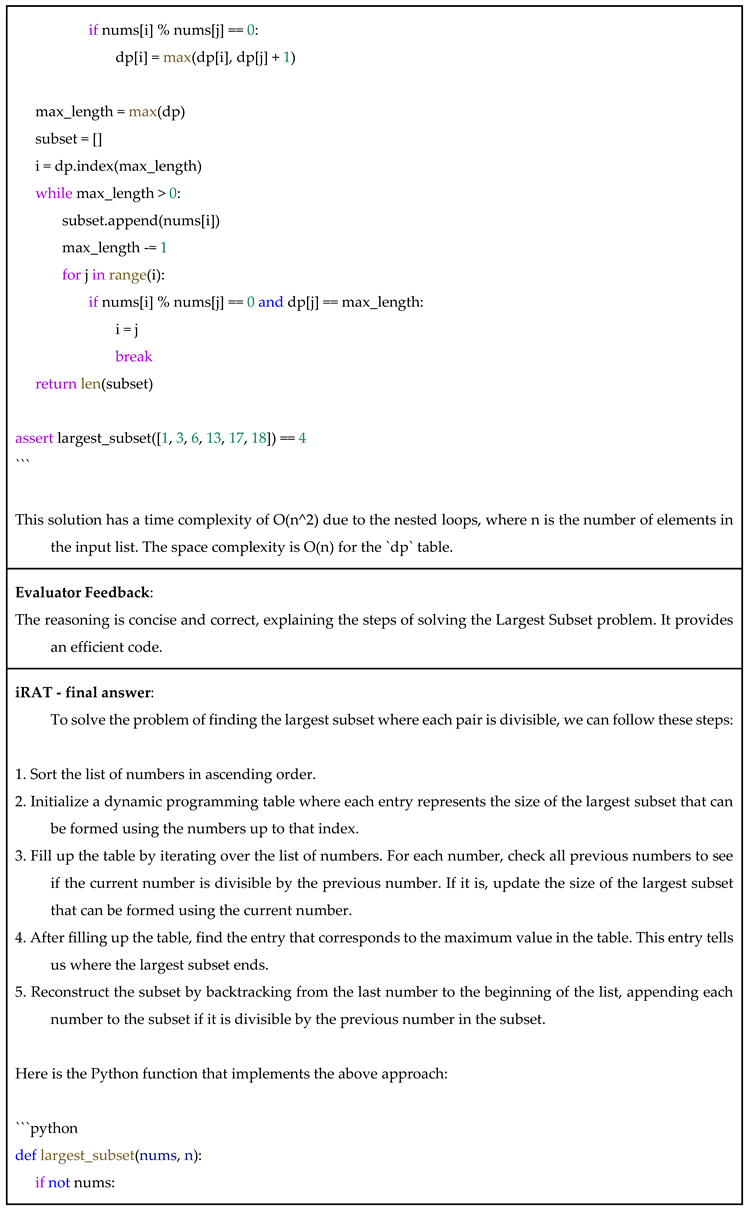

Example 2: (From MBPP Dataset)

Example 3: (From GSM8K Dataset)

Exploratory Data Analysis (EDA)

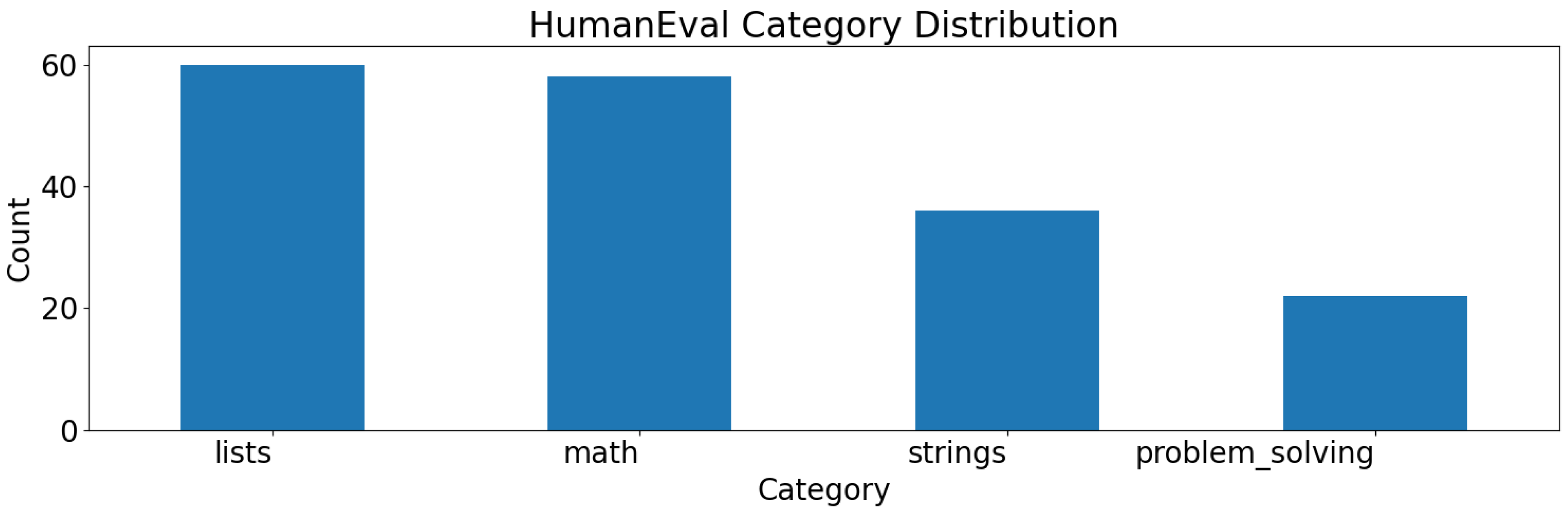

HumanEval

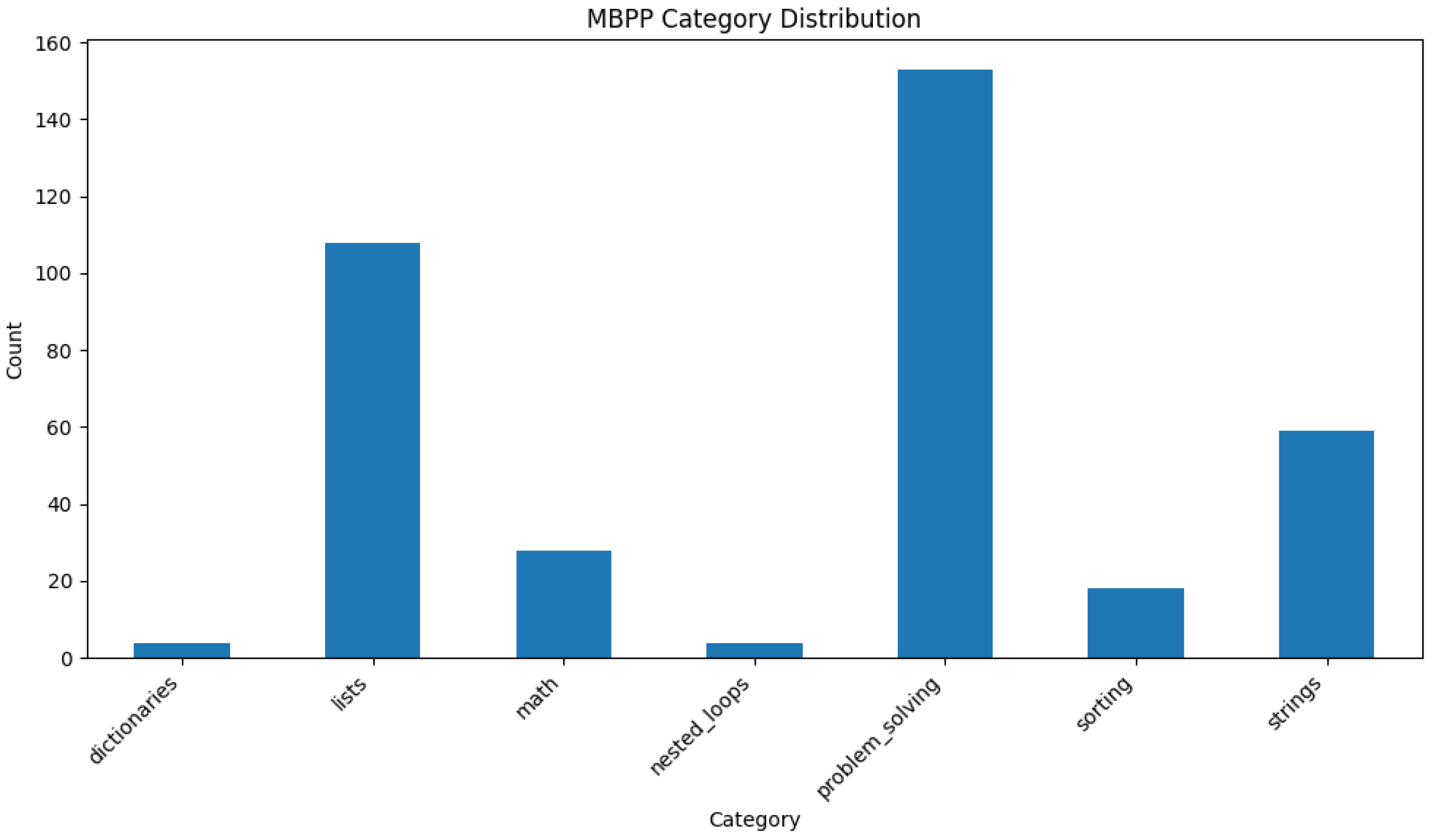

MBPP

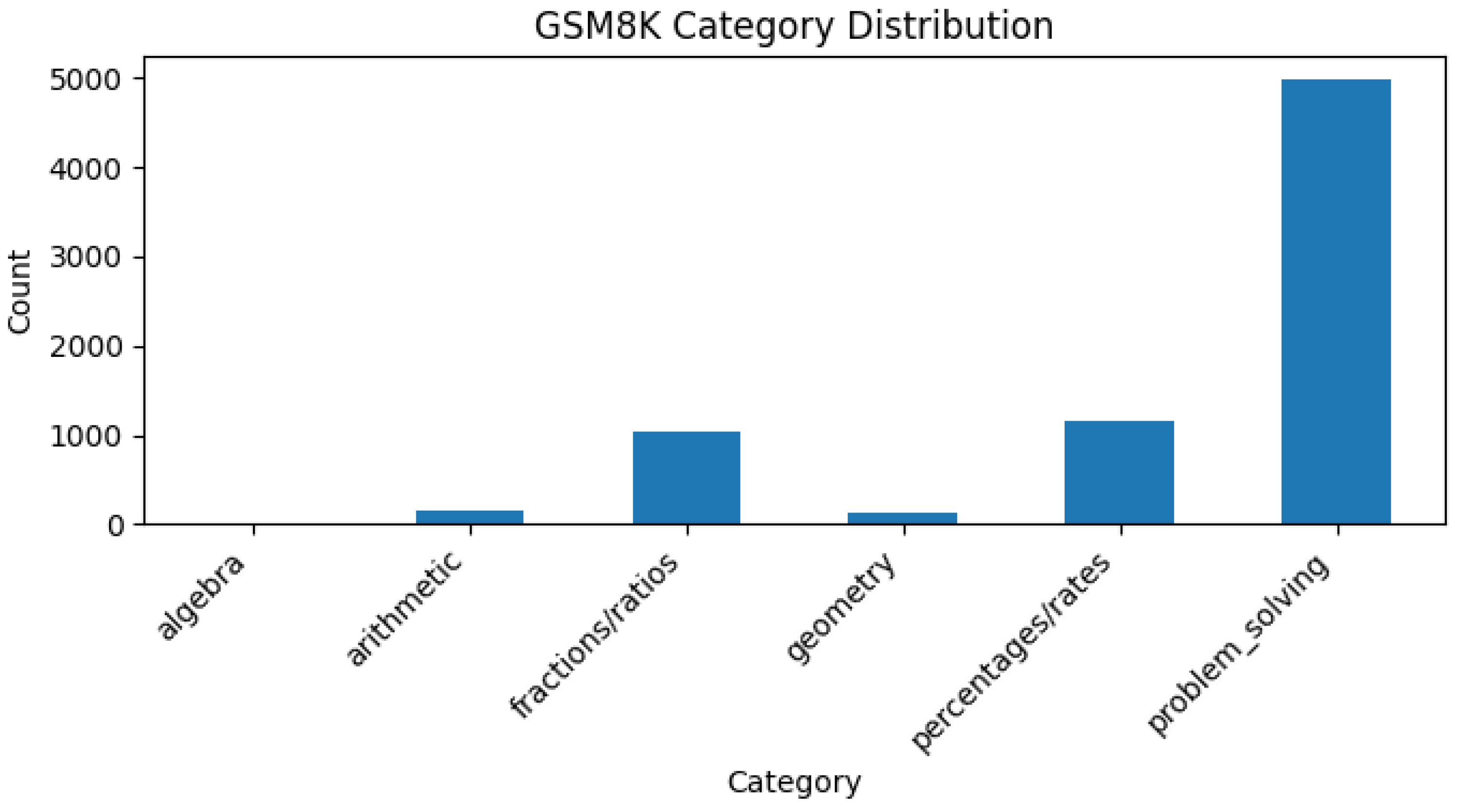

GSM8K

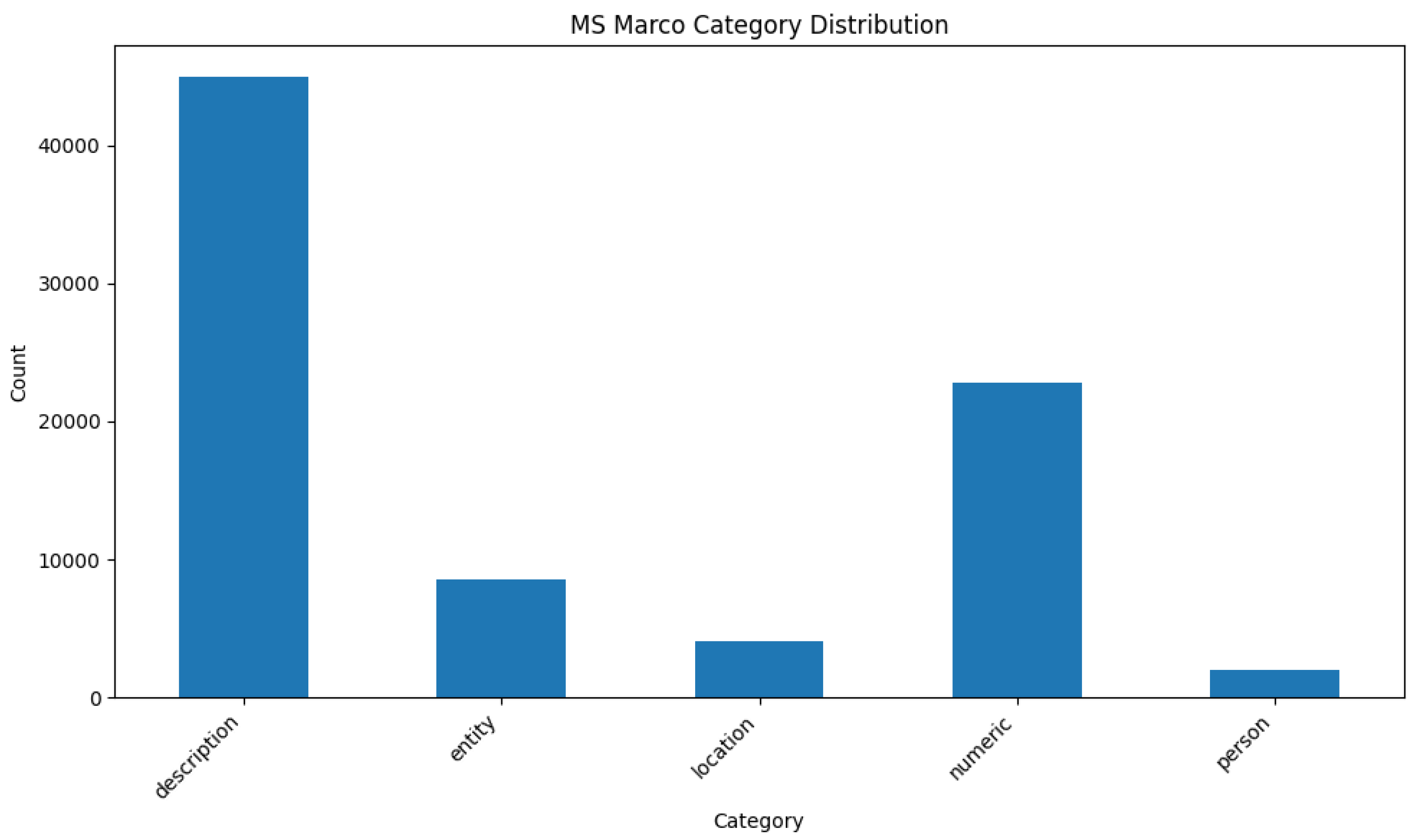

MS MARCO

Hardware and Software

| Name | Value |
| --- | --- |
| Platform | Azure |
| VM name | B4as_v2 |
| vCPUs | 4 |
| Memory | 16 GB |
| GPU | N/A |

| Package | Version |
| --- | --- |
| beautifulsoup4 | 4.13.4 |
| cohere | 5.15.0 |
| datasets | 3.6.0 |
| google-api-python-client | 2.174.0 |
| gradio | 5.35.0 |
| html2text | 2025.4.15 |
| html5lib | 1.1 |
| human-eval | 1.0.3 |
| IPython | 9.4.0 |
| jupyter | 1.1.1 |
| langchain | 0.3.26 |
| langchain-community | 0.3.27 |
| last_layer | 0.1.33 |
| loguru | 0.7.3 |
| lxml | 6.0.0 |
| matplotlib | 3.10.3 |
| numpy | 2.3.1 |
| openai | 1.93.0 |
| pysafebrowsing | 0.1.4 |
| python-dotenv | 1.1.1 |
| readability-lxml | 0.8.4.1 |
| requests | 2.32.4 |
| sentence-transformers | 5.0.0 |
| simple-cache | 0.35 |
| tiktoken | 0.9.0 |
| transformers | 4.53.0 |

Evaluation of Re-Ranking Models for Coding Tasks

| Model | Parameters | Estimated R-Precision Score |
| --- | --- | --- |
| ms-marco-MiniLM-L6-v2 [13] | 22.7M | 78.71% |
| ms-marco-MiniLM-L4-v2 [23] | 19.2M | 76.45% |
| mxbai-rerank-xsmall-v1 [24] | 70.8M | 73.87% |
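For reference, R-Precision for a query with R relevant passages is the fraction of the top R ranked passages that are relevant. The sketch below shows the standard per-query definition and its mean over queries. It is an illustration only: the function names and the toy rankings are hypothetical, and the source does not describe how the reported scores were estimated.

```python
def r_precision(ranked_ids, relevant_ids) -> float:
    """Fraction of the top-R ranked items that are relevant, where R = number of relevant items."""
    relevant = set(relevant_ids)
    r = len(relevant)
    if r == 0:
        raise ValueError("query has no relevant items")
    top_r = list(ranked_ids)[:r]
    return sum(1 for doc_id in top_r if doc_id in relevant) / r


def mean_r_precision(queries) -> float:
    """Average R-Precision over (ranked_ids, relevant_ids) pairs."""
    return sum(r_precision(ranked, rel) for ranked, rel in queries) / len(queries)


# Hypothetical toy rankings (not data from the paper).
toy_queries = [
    (["a", "b", "c", "d"], {"a", "c"}),  # top-2 is [a, b]: one of two relevant
    (["x", "y", "z"], {"x"}),            # top-1 is [x]: relevant
]
toy_mean = mean_r_precision(toy_queries)
print(f"mean R-Precision on toy data: {toy_mean:.2%}")
```

Because the cutoff adapts to the number of relevant passages per query, the metric needs no fixed k, which suits re-ranking data where that number varies.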

Keywords Used to Extract Coding-Related Rows from MS MARCO:

Chain Evaluator Model - Initial Experiment with Reinforcement Learning (RL)

References

- J. Wei et al., “Chain-of-Thought Prompting Elicits Reasoning in Large Language Models,” 2023, arXiv. [Online]. Available: http://arxiv.org/abs/2201.11903.

- Y. Gao et al., “Retrieval-Augmented Generation for Large Language Models: A Survey,” 2024, arXiv. [Online]. Available: http://arxiv.org/abs/2312.10997.

- P. Shojaee, I. Mirzadeh, K. Alizadeh, M. Horton, S. Bengio, and M. Farajtabar, “The Illusion of Thinking: Understanding the Strengths and Limitations of Reasoning Models via the Lens of Problem Complexity,” 2025, arXiv.

- Z. Wang, A. Liu, H. Lin, J. Li, X. Ma, and Y. Liang, “RAT: Retrieval Augmented Thoughts Elicit Context-Aware Reasoning and Verification in Long-Horizon Generation,” in NeurIPS 2024 Workshop on Open-World Agents, 2024. [Online]. Available: https://openreview.net/forum?id=5QtKMjNkjL.

- “RAT on GitHub.” [Online]. Available: https://github.com/CraftJarvis/RAT.

- A. Asai, Z. Wu, Y. Wang, A. Sil, and H. Hajishirzi, “Self-RAG: Learning to Retrieve, Generate, and Critique through Self-Reflection,” 2023, arXiv. [Online]. Available: https://paperswithcode.com/paper/self-rag-learning-to-retrieve-generate-and.

- J. Sohn et al., “Rationale-Guided Retrieval Augmented Generation for Medical Question Answering,” 2024, arXiv. [Online]. Available: https://paperswithcode.com/paper/rationale-guided-retrieval-augmented.

- Meta AI, “meta-llama/Llama-3.3-70B-Instruct,” HuggingFace. [Online]. Available: https://huggingface.co/meta-llama/Llama-3.3-70B-Instruct.

- Sentence Transformers, “all-MiniLM-L6-v2,” Hugging Face. [Online]. Available: https://huggingface.co/sentence-transformers/all-MiniLM-L6-v2.

- J. Bevendorff, M. Wiegmann, M. Potthast, and B. Stein, “Is Google Getting Worse? A Longitudinal Investigation of SEO Spam in Search Engines,” in Advances in Information Retrieval, N. Goharian, N. Tonellotto, Y. He, A. Lipani, G. McDonald, C. Macdonald, and I. Ounis, Eds., Cham: Springer Nature Switzerland, 2024, pp. 56–71.

- Google, “Safe Browsing Lookup API (v4),” Google Developers. [Online]. Available: https://developers.google.com/safe-browsing/v4/lookup-api.

- M. Siddhartha, “Malicious URLs dataset,” Kaggle. [Online]. Available: https://paperswithcode.com/dataset/malicious-urls-dataset.

- Cross Encoder, “ms-marco-MiniLM-L6-v2,” Hugging Face. [Online]. Available: https://huggingface.co/cross-encoder/ms-marco-MiniLM-L6-v2.

- “MS MARCO Scores - Pretrained Models,” Sentence Transformers. [Online]. Available: https://sbert.net/docs/cross_encoder/pretrained_models.html#ms-marco.

- Deepseek AI, “DeepSeek-R1-Distill-Qwen-1.5B,” Hugging Face. [Online]. Available: https://huggingface.co/deepseek-ai/DeepSeek-R1-Distill-Qwen-1.5B.

- E. J. Hu et al., “LoRA: Low-Rank Adaptation of Large Language Models,” 2021, arXiv. [Online]. Available: http://arxiv.org/abs/2106.09685.

- OpenAI, “HumanEval.” Hugging Face, 2023. [Online]. Available: https://huggingface.co/datasets/openai/openai_humaneval.

- Google Research, “MBPP.” Hugging Face, 2023. [Online]. Available: https://huggingface.co/datasets/google-research-datasets/mbpp.

- OpenAI, “GSM8K.” Hugging Face, 2021. [Online]. Available: https://huggingface.co/datasets/openai/gsm8k.

- S. Kulal et al., “SPoC: Search-based Pseudocode to Code,” in Advances in Neural Information Processing Systems, H. Wallach, H. Larochelle, A. Beygelzimer, F. d’Alché-Buc, E. Fox, and R. Garnett, Eds., Curran Associates, Inc., 2019. [Online]. Available: https://proceedings.neurips.cc/paper_files/paper/2019/file/7298332f04ac004a0ca44cc69ecf6f6b-Paper.pdf.

- P. Rajpurkar, J. Zhang, K. Lopyrev, and P. Liang, “SQuAD: 100,000+ Questions for Machine Comprehension of Text,” in Proceedings of the 2016 Conference on Empirical Methods in Natural Language Processing, J. Su, K. Duh, and X. Carreras, Eds., Austin, Texas: Association for Computational Linguistics, Nov. 2016, pp. 2383–2392.

- Microsoft, “MS MARCO (v2.1).” Hugging Face, 2018. [Online]. Available: https://huggingface.co/datasets/microsoft/ms_marco.

- Cross Encoder, “ms-marco-MiniLM-L4-v2,” Hugging Face. [Online]. Available: https://huggingface.co/cross-encoder/ms-marco-MiniLM-L4-v2.

- Mixedbread AI, “mxbai-rerank-xsmall-v1.” Hugging Face, 2024. [Online]. Available: https://huggingface.co/mixedbread-ai/mxbai-rerank-xsmall-v1.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).