Submitted: 12 June 2025

Posted: 13 June 2025


Abstract

Keywords:

1. Introduction

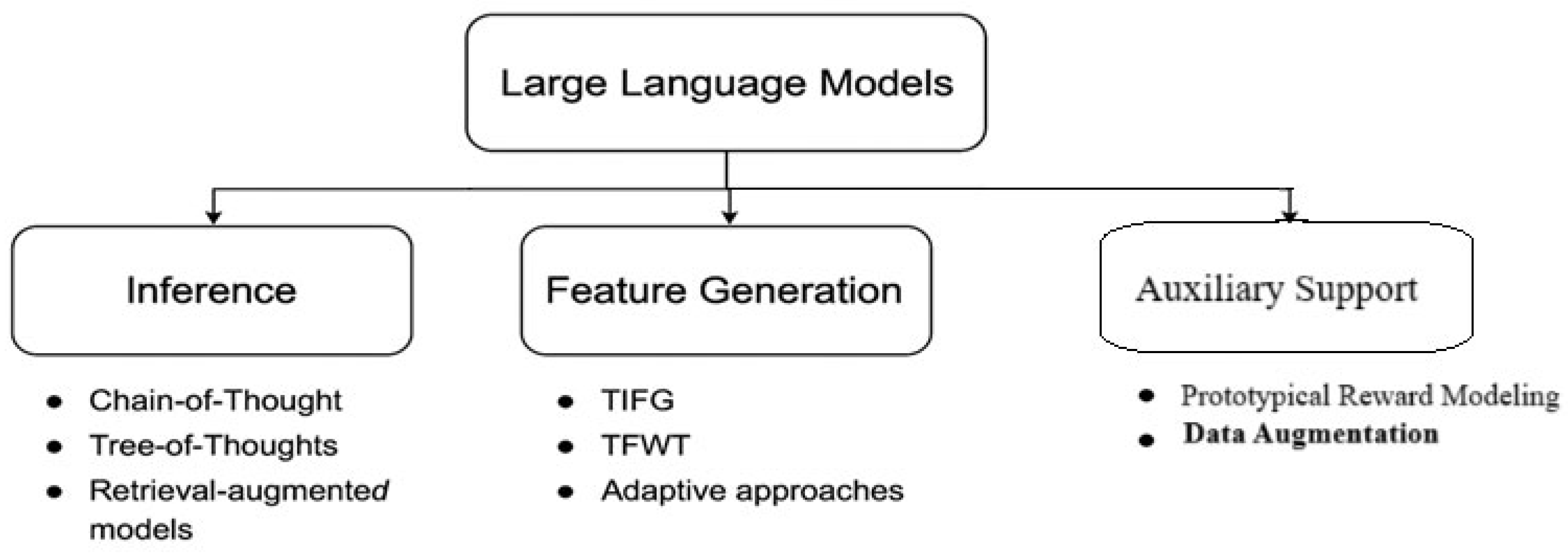

2. Taxonomy and Methodological Foundations

2.1. Inference Techniques

2.2. Feature Generation Techniques

2.3. Auxiliary Support Strategies

3. Method Analysis and Technical Deep Dive

3.1. Chain-of-Thought (CoT)

3.2. Tree of Thoughts (ToT)

3.3. Retrieval-Augmented Generation (RAG)

3.4. Retrieval-Augmented Thought Trees (RATT)

3.5. Thought Space Explorer (TSE)

3.6. Text-Informed Feature Generation (TIFG)

3.7. Transformer-Based Feature Weighting (TFWT)

3.8. Dynamic and Adaptive Feature Generation (DAFG)

3.9. Prototypical Reward Modeling (Proto-RM)

3.10. Data Augmentation

| Method | Dataset/Task | Interpretability | Scalability | Strength | Limitation |
|---|---|---|---|---|---|
| CoT | GSM8K, SVAMP | Medium | High | Enables stepwise logical reasoning | May propagate early reasoning errors |
| ToT | Game of 24 | High | Low | Explores multiple reasoning paths | Computationally intensive |
| RAG | Natural Questions, TriviaQA | High | Medium | Improves factual grounding | Dependent on retrieval quality |
| RATT | StrategyQA | High | Low | Combines CoT, ToT, and retrieval for robust reasoning | High architectural complexity |
| TSE | Commonsense QA | High | Medium | Expands reasoning space dynamically | May generate redundant steps |
| TIFG | Clinical QA, Tabular Text | High | Medium | Produces domain-specific, explainable features | Requires curated context |
| DAFG | MLBench tasks | High | Low | Iteratively refines features via feedback loops | Needs multi-agent orchestration |
| TFWT | UCI tabular datasets | Medium | High | Learns contextual feature importance | Limited transparency in attention weights |
| SMOTE/Augment | Imbalanced tabular/image data | Low | High | Increases diversity in underrepresented classes | May introduce noise or artifacts |
| Proto-RM | HH-RLHF | Medium | High | Enhances data efficiency in RLHF | Sensitive to prototype definition |
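The SMOTE row refers to synthetic minority over-sampling (Chawla et al.), which creates new minority-class points by interpolating between a minority sample and one of its nearest minority-class neighbours. The NumPy sketch below illustrates that interpolation step. It is a simplified variant (base samples drawn at random, brute-force neighbour search), not a reference implementation, and the function name `smote` and its parameters are illustrative.

```python
import numpy as np


def smote(minority: np.ndarray, n_synthetic: int, k: int = 5, seed: int = 0) -> np.ndarray:
    """Create synthetic minority samples by interpolating toward random nearest neighbours."""
    n, n_features = minority.shape
    if n < 2:
        raise ValueError("SMOTE needs at least two minority samples")
    k = min(k, n - 1)
    rng = np.random.default_rng(seed)

    # Brute-force pairwise Euclidean distances within the minority class.
    diffs = minority[:, None, :] - minority[None, :, :]
    dists = np.sqrt((diffs ** 2).sum(axis=-1))
    np.fill_diagonal(dists, np.inf)  # a sample is not its own neighbour
    neighbours = np.argsort(dists, axis=1)[:, :k]

    synthetic = np.empty((n_synthetic, n_features))
    for i in range(n_synthetic):
        base = rng.integers(n)                  # random minority sample
        nn = neighbours[base, rng.integers(k)]  # one of its k nearest neighbours
        gap = rng.random()                      # interpolation factor in [0, 1)
        synthetic[i] = minority[base] + gap * (minority[nn] - minority[base])
    return synthetic


minority = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
new_points = smote(minority, n_synthetic=10, k=2)
```

Because every synthetic point lies on a segment between two real minority points, it stays inside their convex hull. This is also why SMOTE can introduce noise when minority points sit close to other classes, as the table's limitation column notes.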

4. Key Insights and Open Research Challenges

- 4.1. Co-evolution of Reasoning and Retrieval: Structured prompting techniques increasingly incorporate retrieval to improve factual accuracy; RAG and RATT are prime examples. However, this integration also increases latency and model complexity.

- 4.2. Rise of Feedback Loops: Feature generation frameworks increasingly use feedback from downstream task metrics. Adaptive feature generation agents (e.g., DAFG) iterate over feature proposals and use the resulting scores to refine feature quality.

- 4.3. Importance of Interpretability: CoT, ToT, and TIFG are popular in part because they make model decisions understandable, which is essential in high-stakes domains such as healthcare and finance.

- 4.4. Cost and Scalability: Tree-based reasoning and dynamic feature generation are compute-intensive, so future work must address the trade-off between complexity and accuracy.
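Item 4.2 describes feedback loops in which a downstream metric decides which generated features survive. The following is a minimal sketch of such a loop under stated assumptions: the downstream metric is a held-out R² from a least-squares linear model, and a hand-written dictionary of feature transforms is a hypothetical stand-in for LLM-generated proposals. It is a single greedy pass, not the DAFG algorithm itself.

```python
import numpy as np
from typing import Callable, Dict, List, Tuple


def holdout_r2(X: np.ndarray, y: np.ndarray, train_frac: float = 0.7) -> float:
    """Downstream metric: R^2 of a least-squares linear model on a held-out split."""
    n_train = int(len(y) * train_frac)
    A = np.column_stack([X, np.ones(len(y))])  # add an intercept column
    coef, *_ = np.linalg.lstsq(A[:n_train], y[:n_train], rcond=None)
    y_test = y[n_train:]
    resid = y_test - A[n_train:] @ coef
    return 1.0 - np.sum(resid ** 2) / np.sum((y_test - y_test.mean()) ** 2)


def feedback_loop(
    X: np.ndarray,
    y: np.ndarray,
    proposals: Dict[str, Callable[[np.ndarray], np.ndarray]],
    min_gain: float = 0.01,
) -> Tuple[List[str], float]:
    """Keep each proposed feature only if it raises the held-out score by at least min_gain."""
    current, best = X, holdout_r2(X, y)
    accepted: List[str] = []
    for name, make_feature in proposals.items():
        candidate = np.column_stack([current, make_feature(X)])
        score = holdout_r2(candidate, y)
        if score - best >= min_gain:  # feedback: the metric decides acceptance
            current, best = candidate, score
            accepted.append(name)
    return accepted, best


rng = np.random.default_rng(0)
X = rng.uniform(-1, 1, size=(200, 2))
y = X[:, 0] * X[:, 1] + 0.01 * rng.normal(size=200)  # target depends on an interaction

# Hypothetical stand-ins for LLM-proposed features.
proposals = {
    "x0_times_x1": lambda F: F[:, 0] * F[:, 1],
    "x0_plus_x1": lambda F: F[:, 0] + F[:, 1],  # redundant: already linear in X
}
accepted, score = feedback_loop(X, y, proposals)
```

Here the interaction feature is accepted and the redundant linear combination is rejected because it adds no held-out gain. In an iterative system, the accepted and rejected proposals and their scores would be returned to the generator to guide the next round.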

Open research questions include:

- How should reasoning-guided feature generation be benchmarked?

- Can LLMs generate features for non-textual modalities (e.g., images, graphs)?

- What are the theoretical limits of inference-augmented data engineering?

- How can transparency and performance be jointly optimized in domains that require both accuracy and explainability?

5. Limitations and Future Directions

- 5.1. Lack of Unified Frameworks: Most reviewed methods address either reasoning or feature engineering in isolation. Although approaches like TIFG and RATT begin to merge these paradigms, there is no end-to-end pipeline that modularly integrates reasoning, feature construction, and feedback-driven refinement in a scalable architecture.

- 5.2. Benchmarking Challenges: Unlike traditional NLP tasks, which rely on standard benchmarks such as GLUE or SQuAD, LLM-based feature generation lacks standardized evaluation protocols for assessing feature novelty, interpretability, and downstream effectiveness. This hampers reproducibility and model comparison across studies.

- 5.3. Interpretability Trade-offs: While tree-based reasoning methods like ToT and TSE enhance transparency, they often incur high computational costs and longer inference times. Conversely, transformer-based approaches like TFWT provide performance gains but may obscure the model’s decision logic, especially for non-expert users.

- 5.4. Generalization and Domain Transfer: Many proposed techniques are demonstrated on narrowly scoped or synthetic datasets, limiting confidence in their performance on real-world, noisy, or multimodal data. Broader validation and domain adaptation strategies are required to ensure robustness.

- 5.5. Underutilization of Human Feedback: Human-in-the-loop collaboration is largely restricted to reward modeling stages (e.g., RLHF). Broader incorporation of user input during reasoning or feature generation, such as approving, modifying, or vetoing model-generated elements, could make systems more interactive and trustworthy.

- 5.6. Ethical and Fairness Considerations: As LLMs influence high-stakes domains, issues of fairness, bias, and transparency become critical. There is an urgent need for research on ethical safeguards, bias mitigation, and explainable feature attribution in both reasoning and data generation processes.
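Item 5.5 suggests letting users approve, modify, or veto model-generated elements. One possible shape for such a review gate is sketched below, assuming each generated feature arrives as a small proposal record with a rationale. The class names, the reviewer callback, and the rule-based `example_reviewer` (a stand-in for a human decision) are all hypothetical; the audit log reflects the transparency concern raised in 5.6.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, List, Optional, Tuple


class Decision(Enum):
    APPROVE = "approve"
    MODIFY = "modify"
    VETO = "veto"


@dataclass(frozen=True)
class FeatureProposal:
    name: str
    rationale: str  # model-generated explanation shown to the reviewer


# A reviewer returns a decision and, for MODIFY, the edited proposal.
Reviewer = Callable[[FeatureProposal], Tuple[Decision, Optional[FeatureProposal]]]


def review_gate(
    proposals: List[FeatureProposal], reviewer: Reviewer
) -> Tuple[List[FeatureProposal], List[Tuple[str, Decision]]]:
    accepted: List[FeatureProposal] = []
    audit_log: List[Tuple[str, Decision]] = []
    for proposal in proposals:
        decision, revised = reviewer(proposal)
        if decision is Decision.APPROVE:
            accepted.append(proposal)
        elif decision is Decision.MODIFY:
            if revised is None:
                raise ValueError(f"MODIFY on {proposal.name!r} needs a revised proposal")
            accepted.append(revised)
        # VETO: the proposal is dropped, but every decision is logged for auditing.
        audit_log.append((proposal.name, decision))
    return accepted, audit_log


def example_reviewer(p: FeatureProposal) -> Tuple[Decision, Optional[FeatureProposal]]:
    """Hypothetical rule-based stand-in for a human reviewer."""
    if "zip_code" in p.name:
        return Decision.VETO, None  # possible proxy for protected attributes
    if p.name == "txn_count_7d":
        return Decision.MODIFY, FeatureProposal("txn_count_30d", p.rationale + " (window widened)")
    return Decision.APPROVE, None


proposals = [
    FeatureProposal("debt_to_income_ratio", "Higher ratios indicate repayment strain."),
    FeatureProposal("zip_code_cluster", "Regional default rates differ."),
    FeatureProposal("txn_count_7d", "Bursts of activity may signal risk."),
]
accepted, audit_log = review_gate(proposals, example_reviewer)
```

Keeping the reviewer as a plain callback means the same gate can be driven by an interactive UI, a policy file, or a human annotator without changing the generation pipeline.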

6. Real-World Applications: Integrating LLM Methods to Overcome Systemic Limitations

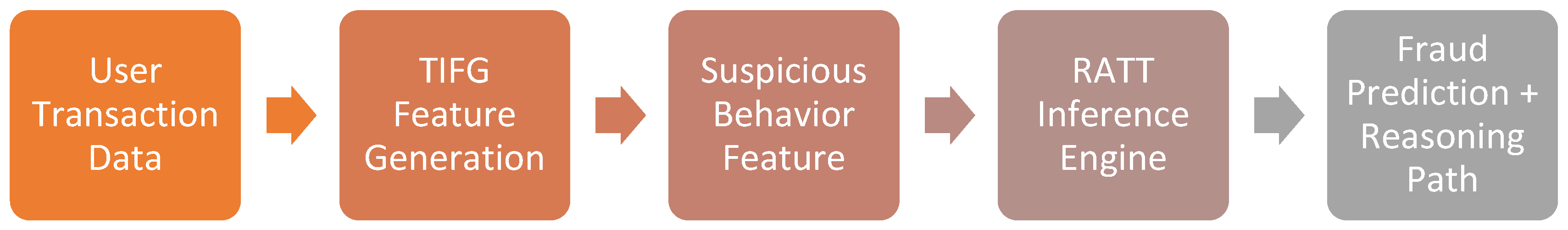

6.1. Use Case 1: Fraud Detection Using RATT and TIFG

- High explainability due to reasoning trees.

- Strong contextual relevance via LLM-generated features.

- Potentially fewer false positives through targeted inference paths.

- Easier auditing, which helps satisfy legal requirements for model transparency.

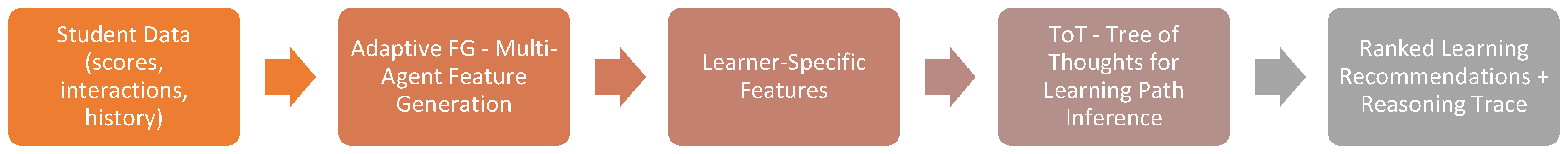

6.2. Use Case 2: Personalized Learning Pathways Using ToT and Adaptive Feature Generation

- Rich, interpretable learning analytics for educators.

- Personalized content sequencing based on observed learner behavior patterns.

- Adaptive, feedback-driven refinement of recommendations.

- Supports both autonomous learners and guided instruction.
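The ToT component of this use case can be pictured as a breadth-first search over partial learning paths: at each step, candidate next modules are proposed, every partial path is scored, and only the most promising few are kept. The sketch below follows that breadth-first variant of ToT, but the module names, prerequisite graph, and per-learner gain estimates are hypothetical placeholders, and the hand-written `propose` and `evaluate` functions stand in for the LLM calls that would generate and rate thoughts in an actual ToT system.

```python
from typing import Callable, Dict, List, Set, Tuple

Path = Tuple[str, ...]


def tot_bfs(
    propose: Callable[[Path], List[str]],
    evaluate: Callable[[Path], float],
    depth: int,
    breadth: int,
) -> List[Path]:
    """Breadth-first Tree-of-Thoughts search: expand, score, keep the top `breadth` paths."""
    frontier: List[Path] = [()]
    for _ in range(depth):
        # Expand every kept path by each proposed next step ("thought").
        candidates = [path + (step,) for path in frontier for step in propose(path)]
        if not candidates:
            break
        # Score all partial paths and prune to the most promising ones.
        candidates.sort(key=evaluate, reverse=True)
        frontier = candidates[:breadth]
    return frontier


# Hypothetical curriculum: module -> prerequisite modules.
PREREQS: Dict[str, Set[str]] = {
    "fractions": set(),
    "ratios": {"fractions"},
    "percentages": {"ratios"},
    "algebra_basics": set(),
    "linear_equations": {"algebra_basics"},
}
# Hypothetical learner profile: mastered modules and estimated benefit of the rest.
MASTERED = {"algebra_basics"}
GAIN = {"fractions": 0.3, "ratios": 0.5, "percentages": 0.9, "linear_equations": 0.4}


def propose(path: Path) -> List[str]:
    """Offer modules not yet covered whose prerequisites are satisfied."""
    done = MASTERED | set(path)
    return [m for m, pre in PREREQS.items() if m not in done and pre <= done]


def evaluate(path: Path) -> float:
    """Score a partial path by its total estimated benefit."""
    return sum(GAIN[m] for m in path)


best_paths = tot_bfs(propose, evaluate, depth=3, breadth=2)
best = best_paths[0]  # ('fractions', 'ratios', 'percentages')
```

The `breadth` parameter is where the cost noted in the comparison table shows up: each extra kept path multiplies the number of propose and evaluate calls, which in a real system are LLM invocations.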

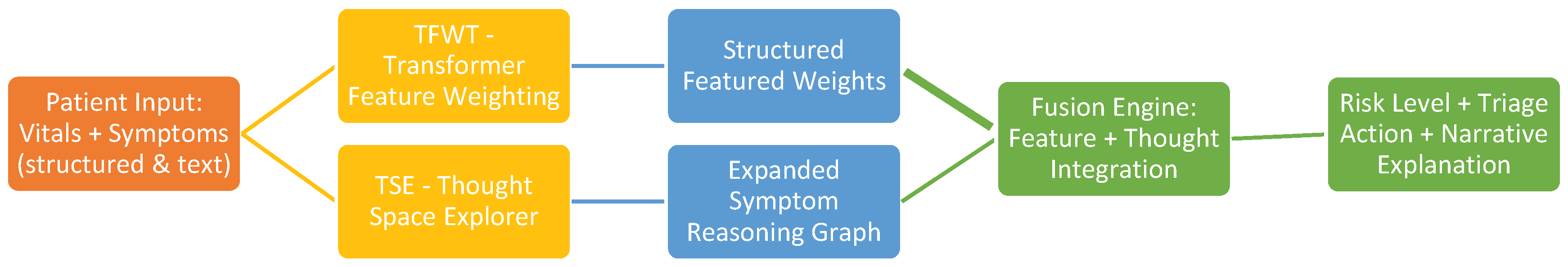

6.3. Use Case 3: Medical Triage and Symptom Analysis Using TSE and TFWT

- Combines structured data precision with unstructured reasoning.

- Personalized triage recommendations tailored to risk profiles.

- Highly interpretable: shows what mattered and why.

- Scalable: could be deployed in clinics, telemedicine apps, or pre-screening portals.
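The TFWT part of this use case rests on attention producing per-sample feature weights that can be shown to clinicians ("what mattered"). The NumPy sketch below illustrates that idea with a single scaled dot-product attention head scoring one token per feature. It is a simplified, attention-pooling-style illustration, not the TFWT architecture. The feature names are hypothetical, and the parameters are random, untrained placeholders, so the printed rankings carry no clinical meaning.

```python
import numpy as np

FEATURES = ["age", "heart_rate", "temperature", "symptom_score"]  # hypothetical triage inputs


def attention_feature_weights(
    X: np.ndarray, feature_emb: np.ndarray, W_k: np.ndarray, query: np.ndarray
) -> np.ndarray:
    """Per-sample softmax weights over features from one scaled dot-product attention head.

    X:           (n_samples, n_features) standardized feature values
    feature_emb: (n_features, d) one embedding per feature
    W_k:         (d, d) key projection
    query:       (d,) query vector shared across samples
    """
    tokens = X[:, :, None] * feature_emb[None, :, :]  # (n, f, d) value-scaled feature tokens
    keys = tokens @ W_k                                # (n, f, d)
    scores = keys @ query / np.sqrt(query.shape[0])    # (n, f)
    scores -= scores.max(axis=1, keepdims=True)        # numerical stability for softmax
    weights = np.exp(scores)
    return weights / weights.sum(axis=1, keepdims=True)


rng = np.random.default_rng(0)
d = 8
# Untrained placeholder parameters; a real model would learn these from data.
feature_emb = rng.normal(size=(len(FEATURES), d))
W_k = rng.normal(size=(d, d)) / np.sqrt(d)
query = rng.normal(size=d)

X = rng.normal(size=(3, len(FEATURES)))  # three synthetic patients
weights = attention_feature_weights(X, feature_emb, W_k, query)
weighted_X = X * weights  # reweighted inputs for a downstream risk model

for i, w in enumerate(weights):
    print(f"patient {i}: highest-weighted feature = {FEATURES[int(np.argmax(w))]}")
```

Because the weights are computed per patient, the same model can emphasize different inputs for different cases, and the weight vector itself is the explanation surfaced to the user.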

7. Conclusions

References

- T. B. Brown, B. Mann, N. Ryder et al., “Language Models are Few-Shot Learners,” in Advances in Neural Information Processing Systems, vol. 33, 2020, pp. 1877–1901.

- A. Chowdhery, S. Narang, J. Devlin et al., “PaLM: Scaling Language Modeling with Pathways,” arXiv preprint arXiv:2204.02311, 2022.

- H. Touvron, T. Lavril, G. Izacard et al., “LLaMA: Open and Efficient Foundation Language Models,” arXiv preprint arXiv:2302.13971, 2023.

- J. Wei, X. Wang, D. Schuurmans et al., “Chain-of-Thought Prompting Elicits Reasoning in Large Language Models,” Advances in Neural Information Processing Systems, vol. 35, 2022.

- S. Yao, D. Yu, J. Zhao et al., “Tree of Thoughts: Deliberate Problem Solving with Large Language Models,” arXiv preprint arXiv:2305.10601, 2023.

- P. Lewis, E. Perez, A. Piktus et al., “Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks,” Advances in Neural Information Processing Systems, vol. 33, 2020.

- M. Lewis, Y. Liu, N. Goyal et al., “BART: Denoising Sequence-to-Sequence Pre-training for Natural Language Generation, Translation, and Comprehension,” in Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics, 2020, pp. 7871–7880.

- J. Zhang, X. Wang, W. Ren et al., “RATT: A Thought Structure for Coherent and Correct LLM Reasoning,” arXiv preprint arXiv:2406.02746, 2024.

- J. Zhang, F. Mo, X. Wang, and K. Liu, “Thought Space Explorer: Navigating and Expanding Thought Space for Large Language Model Reasoning,” arXiv preprint arXiv:2410.24155, 2024.

- J. Zhang, F. Mo, and K. Liu, “Text-Informed Feature Generation with LLMs,” arXiv preprint arXiv:2406.11177, 2024.

- J. Zhang, F. Mo, and K. Liu, “Dynamic and Adaptive Feature Generation with LLMs,” arXiv preprint arXiv:2406.03505, 2024.

- J. Zhang, X. Wang, and K. Liu, “Transformer-Based Feature Weighting for Tabular Data Using LLMs,” arXiv preprint arXiv:2405.08403, 2024.

- Z. Wang, Q. Yang, and Y. Li, “A Comprehensive Survey on Data Augmentation,” arXiv preprint arXiv:2405.09591, 2024.

- N. V. Chawla, K. W. Bowyer, L. O. Hall, and W. P. Kegelmeyer, “SMOTE: Synthetic Minority Over-sampling Technique,” Journal of Artificial Intelligence Research, vol. 16, pp. 321–357, 2002.

- H. Zhang, M. Cisse, Y. N. Dauphin, and D. Lopez-Paz, “mixup: Beyond Empirical Risk Minimization,” in International Conference on Learning Representations, 2018. [Online]. Available: https://openreview.net/forum?id=r1Ddp1-Rb.

- J. Zhang, X. Wang, and K. Liu, “Proto-RM: A Prototypical Reward Model for Data-Efficient RLHF,” arXiv preprint arXiv:2406.06606, 2024.

- L. Ouyang, J. Wu, X. Jiang et al., “Training Language Models to Follow Instructions with Human Feedback,” Advances in Neural Information Processing Systems, vol. 35, 2022.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).