Submitted: 13 October 2025

Posted: 15 October 2025


Abstract

Keywords:

MSC: 05C69, 68Q25, 90C27

1. Introduction

- Approximation Ratio: empirically and theoretically tighter than the classical 2-approximation, while navigating the hardness threshold.

- Runtime: polynomial in the worst case, in terms of \(n = |V|\) and \(m = |E|\), outperforming exponential-time exact solvers.

- Space Efficiency: suitable for deployment on massive real-world networks with millions of edges.

2. State-of-the-Art Algorithms and Related Work

2.1. Overview of the Research Landscape

2.2. Exact and Fixed-Parameter Tractable Approaches

2.2.1. Branch-and-Bound Exact Solvers

2.2.2. Fixed-Parameter Tractable Algorithms

2.3. Classical Approximation Algorithms

2.3.1. Maximal Matching Approximation

2.3.2. Linear Programming and Rounding-Based Methods

2.4. Modern Heuristic Approaches

2.4.1. Local Search Paradigms

FastVC2+p (Cai et al., 2017)

- Pivoting: Strategic removal and reinsertion of vertices to escape local optima.

- Probing: Tentative exploration of vertices that could be removed without coverage violations.

- Efficient data structures: Sparse adjacency representations and incremental degree updates that keep the cost of each operation low.

MetaVC2 (Luo et al., 2019)

- Tabu search: Maintains a list of recently modified vertices, forbidding their immediate re-modification to escape short-term cycling.

- Simulated annealing: Probabilistically accepts deteriorating moves with probability decreasing over time, enabling high-temperature exploration followed by low-temperature refinement.

- Genetic operators: Crossover (merging solutions) and mutation (random perturbations) to explore diverse regions of the solution space.

TIVC (Zhang et al., 2023)

- 3-improvement local search: Evaluates neighborhoods involving removal of up to three vertices, providing finer-grained local refinement than standard single-vertex improvements.

- Tiny perturbations: Strategic introduction of small random modifications (e.g., flipping the cover membership of a small random subset of vertices) to escape plateaus and explore alternative solution regions.

- Adaptive stopping criteria: Termination conditions that balance solution quality with computational time, adjusting based on improvement rates.

2.4.2. Machine Learning Approaches

S2V-DQN (Khalil et al., 2017)

- Graph embedding: Encodes graph structure into low-dimensional representations via learned message-passing operations, capturing local and global structural properties.

- Policy learning: Uses deep Q-learning to train a neural policy that maps graph embeddings to vertex selection probabilities.

- Offline training: Trains on small graphs using supervised learning from expert heuristics or reinforcement learning.

- Limited generalization: Policies trained on small graphs often fail to generalize to substantially larger instances, exhibiting catastrophic performance degradation.

- Computational overhead: The neural network inference cost frequently exceeds the savings from improved vertex selection, particularly on large sparse graphs.

- Training data dependency: Performance is highly sensitive to the quality and diversity of training instances.

2.4.3. Evolutionary and Population-Based Methods

Artificial Bee Colony (Banharnsakun, 2023)

- Population initialization: Creates random cover candidates, ensuring coverage validity through repair mechanisms.

- Employed bee phase: Iteratively modifies solutions through vertex swaps, guided by coverage-adjusted fitness measures.

- Onlooker bee phase: Probabilistically selects high-fitness solutions for further refinement.

- Scout bee phase: Randomly reinitializes poorly performing solutions to escape local optima.

- Limited scalability: Practical performance is restricted to relatively small instances due to quadratic population management overhead.

- Slow convergence: On large instances, ABC typically requires substantially longer runtime than classical heuristics to achieve comparable solution quality.

- Parameter sensitivity: Despite claims of robustness, ABC performance varies significantly with population size, update rates, and replacement strategies.

2.5. Comparative Analysis

2.6. Key Insights and Positioning of the Proposed Algorithm

- Theory-Practice Gap: LP-based approximation algorithms achieve superior theoretical guarantees but poor practical performance due to implementation complexity and large constants. Classical heuristics achieve empirically superior results with substantially lower complexity.

- Heuristic Dominance: Modern local search methods (FastVC2+p, MetaVC2, TIVC) achieve near-optimal empirical ratios on benchmarks, substantially outperforming theoretical guarantees. This dominance reflects problem-specific optimizations and careful engineering rather than algorithmic innovation.

- Limitations of Emerging Paradigms: Machine learning (S2V-DQN) and evolutionary methods (ABC) show conceptual promise but suffer from generalization failures, implementation overhead, and parameter sensitivity, limiting practical impact relative to classical heuristics.

- Scalability and Practicality: The most practically useful algorithms prioritize implementation efficiency and scalability to large instances over theoretical approximation bounds. Methods like TIVC achieve this balance through careful software engineering.

- Bridging Theory and Practice: Combining reduction-based exact methods on transformed graphs with an ensemble of complementary heuristics to achieve theoretical sub- bounds while maintaining practical competitiveness.

- Robustness Across Graph Classes: Avoiding the single-method approach that dominates existing methods, instead leveraging multiple algorithms’ complementary strengths to handle diverse graph topologies without extensive parameter tuning.

- Polynomial-Time Guarantees: Unlike heuristics optimized for specific instance classes, the algorithm provides consistent approximation bounds with transparent time complexity, offering principled trade-offs between solution quality and computational cost.

- Theoretical Advancement: Achieving approximation ratio in polynomial time would constitute a significant theoretical breakthrough, challenging current understanding of hardness bounds and potentially implying novel complexity-theoretic consequences.

3. Research Data and Implementation

4. Algorithm Description and Correctness Analysis

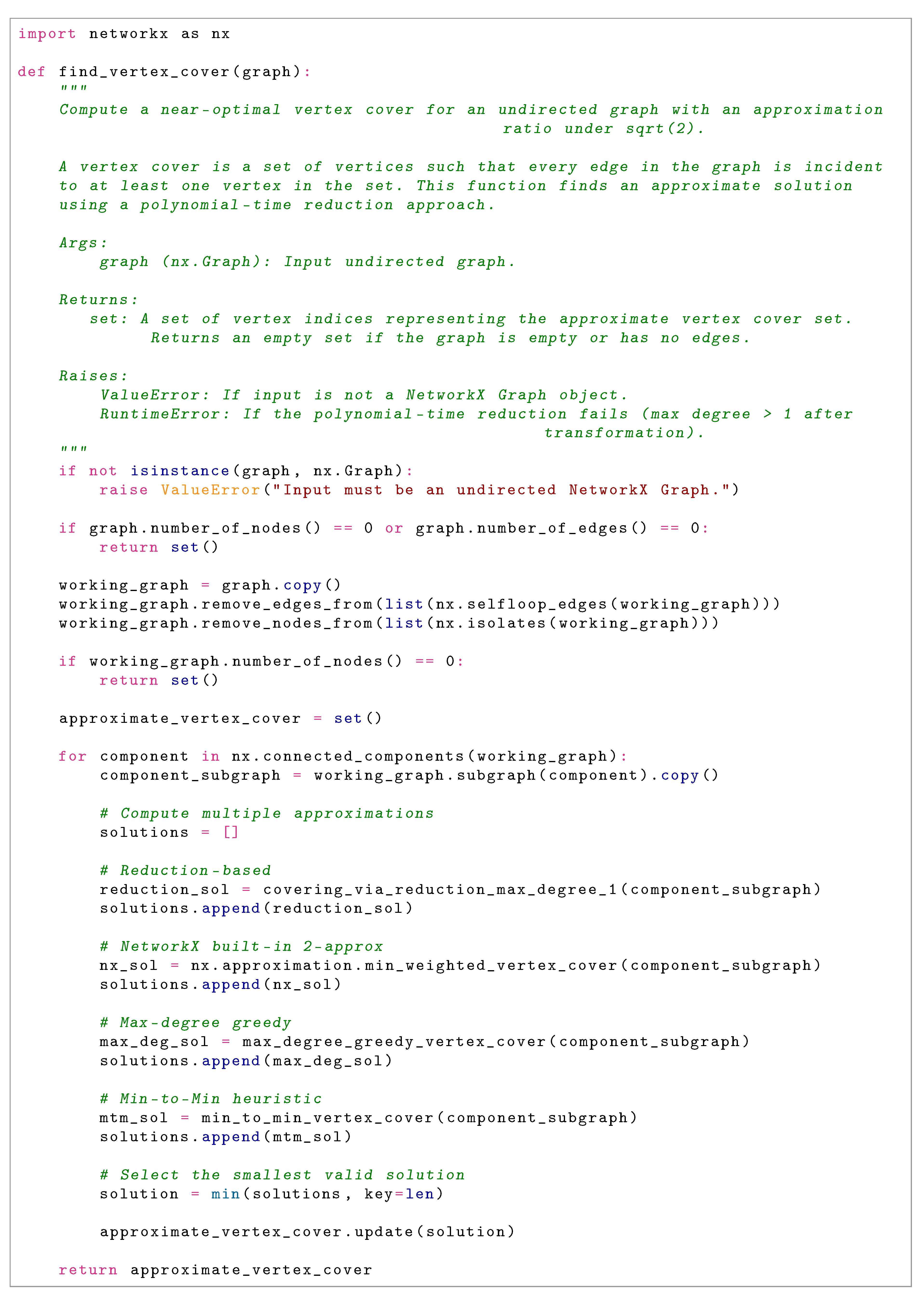

4.1. Algorithm Overview

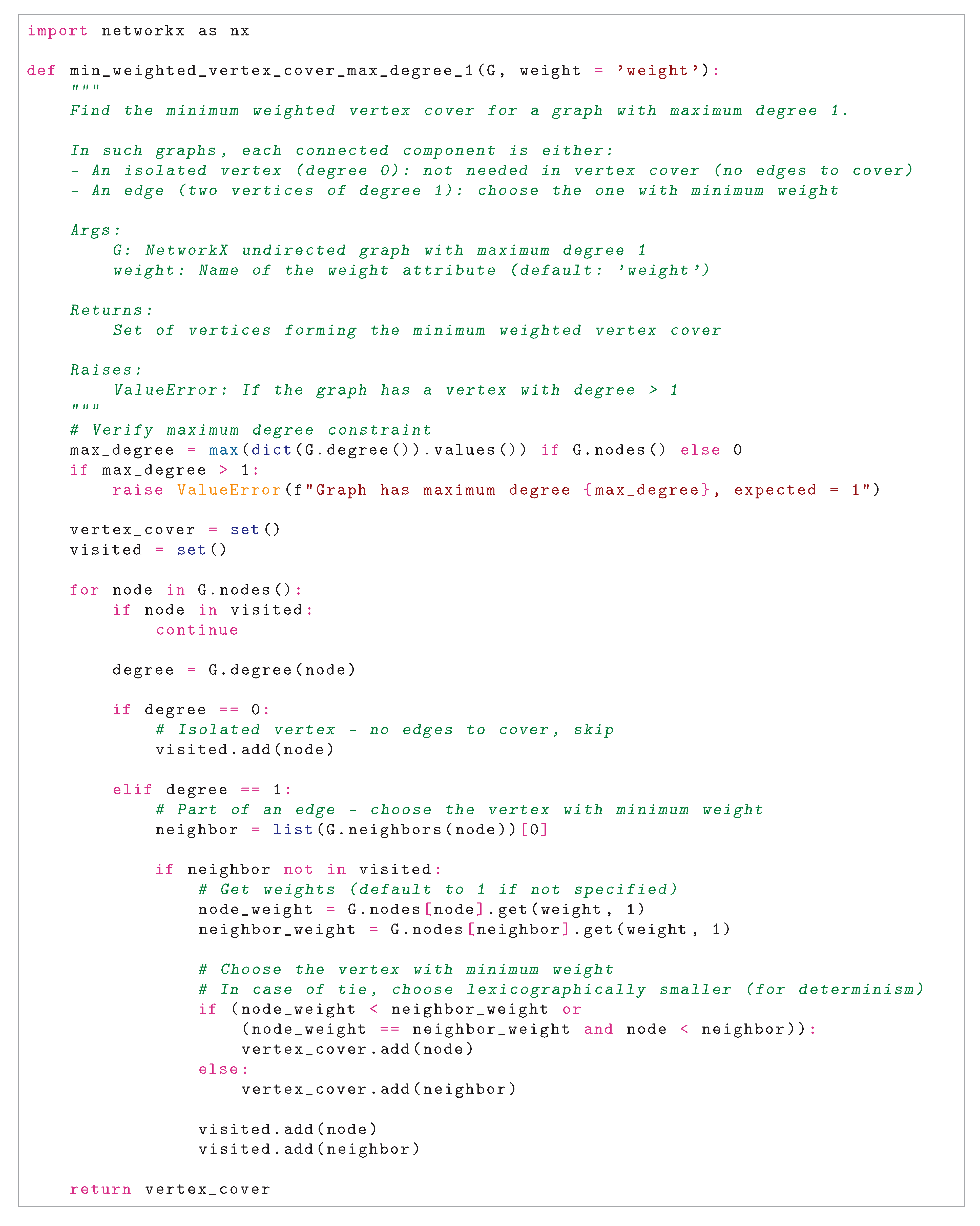

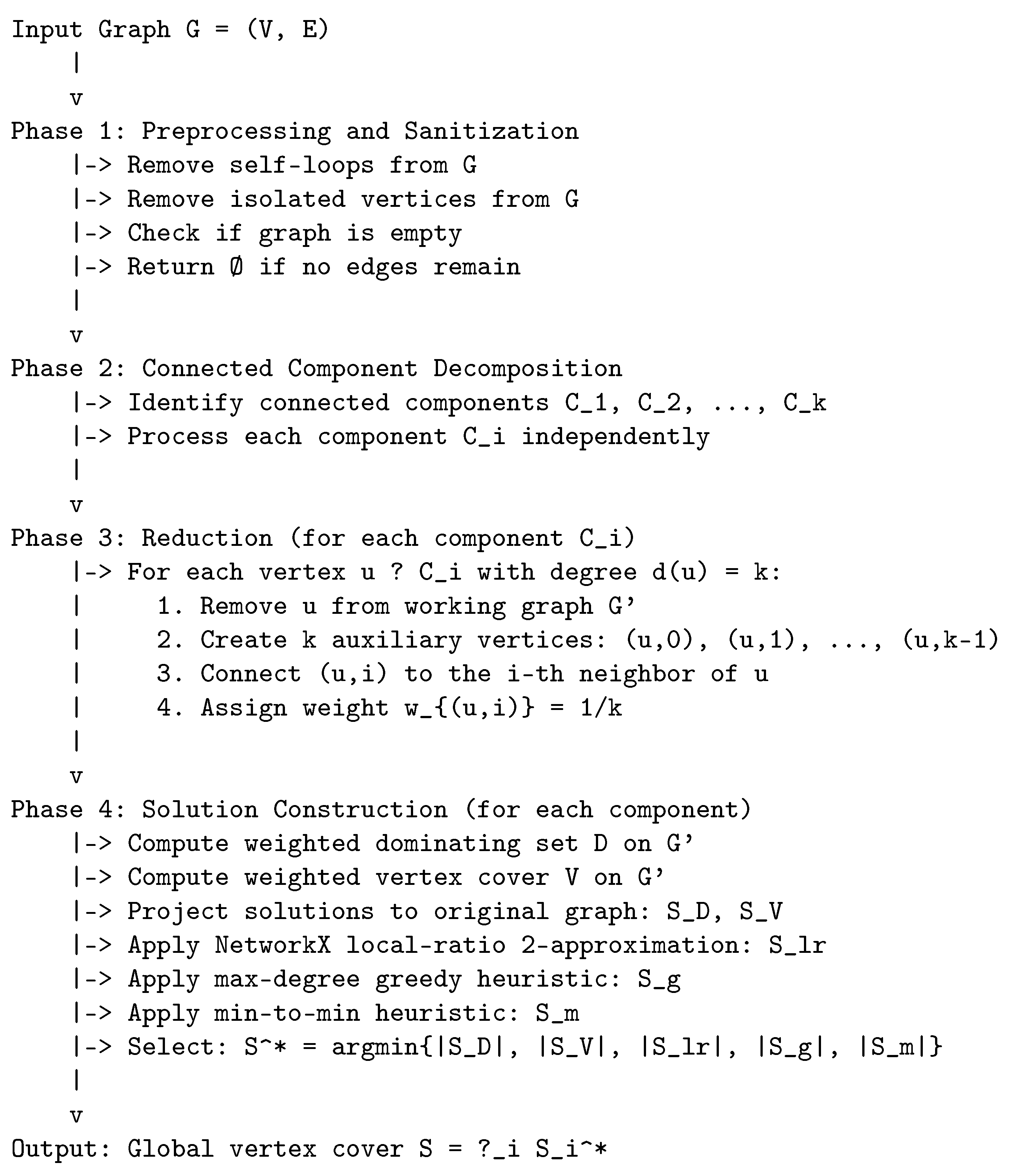

4.1.1. Algorithmic Pipeline

- Phase 1: Preprocessing and Sanitization. Eliminates graph elements that do not contribute to edge coverage, thereby streamlining subsequent computational stages while preserving the essential problem structure.

- Phase 2: Connected Component Decomposition. Partitions the graph into independent connected components, enabling localized problem solving and potential parallelization.

- Phase 3: Vertex Reduction to Maximum Degree One. Applies a polynomial-time transformation to reduce each component to a graph with maximum degree at most one, enabling exact or near-exact computations.

- Phase 4: Ensemble Solution Construction. Generates multiple candidate solutions through both reduction-based projections and complementary heuristics, selecting the solution with minimum cardinality.

4.1.2. Phase 1: Preprocessing and Sanitization

- Self-loop Elimination: Self-loops (edges from a vertex to itself) inherently require their incident vertex to be included in any valid vertex cover. By removing such edges, we reduce the graph without losing coverage requirements, as the algorithm’s conservative design ensures consideration of necessary vertices during later phases.

- Isolated Vertex Removal: Vertices with degree zero do not contribute to covering any edges and are thus safely omitted, effectively reducing the problem size without affecting solution validity.

- Empty Graph Handling: If no edges remain after preprocessing, the algorithm immediately returns the empty set as the trivial vertex cover, elegantly handling degenerate cases.
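The three preprocessing steps can be sketched in plain Python. This is a minimal sketch, not the authors' code: it assumes an undirected graph stored as a dictionary of adjacency sets (the paper itself works with NetworkX graphs), and the names `sanitize` and `has_no_edges` are hypothetical. Under the usual definition a self-loop {u, u} is covered only when u belongs to the cover, so the sketch also returns the vertices that carried a self-loop instead of discarding that information.

```python
from typing import Dict, Hashable, Set, Tuple

# Undirected graph as adjacency sets; every vertex appears as a key.
Graph = Dict[Hashable, Set[Hashable]]


def sanitize(adj: Graph) -> Tuple[Graph, Set[Hashable]]:
    """Drop self-loops and isolated vertices without mutating the input.

    Returns the sanitized graph together with the vertices that carried a
    self-loop, so the caller can decide how to treat them.
    """
    looped = {u for u, nbrs in adj.items() if u in nbrs}
    # Copy every neighbourhood, skipping the vertex itself (self-loop removal).
    no_loops = {u: {v for v in nbrs if v != u} for u, nbrs in adj.items()}
    # A vertex left with degree zero covers no edge, so it is omitted.
    sanitized = {u: nbrs for u, nbrs in no_loops.items() if nbrs}
    return sanitized, looped


def has_no_edges(adj: Graph) -> bool:
    """Empty-graph check: if True, the empty set is already a vertex cover."""
    return not any(adj.values())


graph = {"a": {"a", "b"}, "b": {"a"}, "c": set()}
clean, looped = sanitize(graph)
print(clean, looped)  # {'a': {'b'}, 'b': {'a'}} {'a'}
```

Removing the self-loop first and filtering by degree afterwards also drops a vertex whose only edge was its own loop.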

4.1.3. Phase 2: Connected Component Decomposition

- Component Identification: Using breadth-first search (BFS), the graph is systematically partitioned into subgraphs where internal connectivity is maintained within each component. This identification completes in \(O(n + m)\) time.

- Independent Component Processing: Each connected component \(C_i\) is solved separately to yield a local solution \(S_i\). The global solution is subsequently constructed as the set union \(S = \bigcup_i S_i\).

- Theoretical Justification: Since no edges cross component boundaries (by definition of connected components), the union of locally valid covers forms a globally valid cover without redundancy or omission.
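Component decomposition and the union step can be sketched with an explicit BFS, which visits every vertex and edge once and therefore runs in O(n + m) time. The helper names are hypothetical, and `matching_cover` (both endpoints of a greedy maximal matching, the classical 2-approximation) is only a stand-in for whatever per-component solver is plugged in; it is not the paper's Phase 3/4 pipeline.

```python
from collections import deque
from typing import Callable, Dict, Hashable, List, Set

Graph = Dict[Hashable, Set[Hashable]]  # symmetric adjacency sets


def connected_components(adj: Graph) -> List[Set[Hashable]]:
    """Breadth-first search started from every unvisited vertex."""
    seen: Set[Hashable] = set()
    components: List[Set[Hashable]] = []
    for start in adj:
        if start in seen:
            continue
        seen.add(start)
        queue, comp = deque([start]), {start}
        while queue:
            u = queue.popleft()
            for v in adj[u]:
                if v not in seen:
                    seen.add(v)
                    comp.add(v)
                    queue.append(v)
        components.append(comp)
    return components


def solve_by_components(adj: Graph, solve: Callable[[Graph], Set[Hashable]]) -> Set[Hashable]:
    """Solve each component on its own and return the union of the local covers."""
    cover: Set[Hashable] = set()
    for comp in connected_components(adj):
        sub = {u: adj[u] & comp for u in comp}  # induced subgraph of the component
        cover |= solve(sub)
    return cover


def matching_cover(adj: Graph) -> Set[Hashable]:
    """Stand-in local solver: endpoints of a greedy maximal matching."""
    cover: Set[Hashable] = set()
    for u in adj:
        for v in adj[u]:
            if u not in cover and v not in cover:
                cover.update((u, v))
    return cover


g = {1: {2}, 2: {1, 3}, 3: {2}, 4: {5}, 5: {4}}
print([sorted(c) for c in connected_components(g)])  # [[1, 2, 3], [4, 5]]
cover = solve_by_components(g, matching_cover)
print(len(cover))  # 4
```

Because no edge crosses two components, checking each component's cover locally is enough to guarantee that the union covers every edge.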

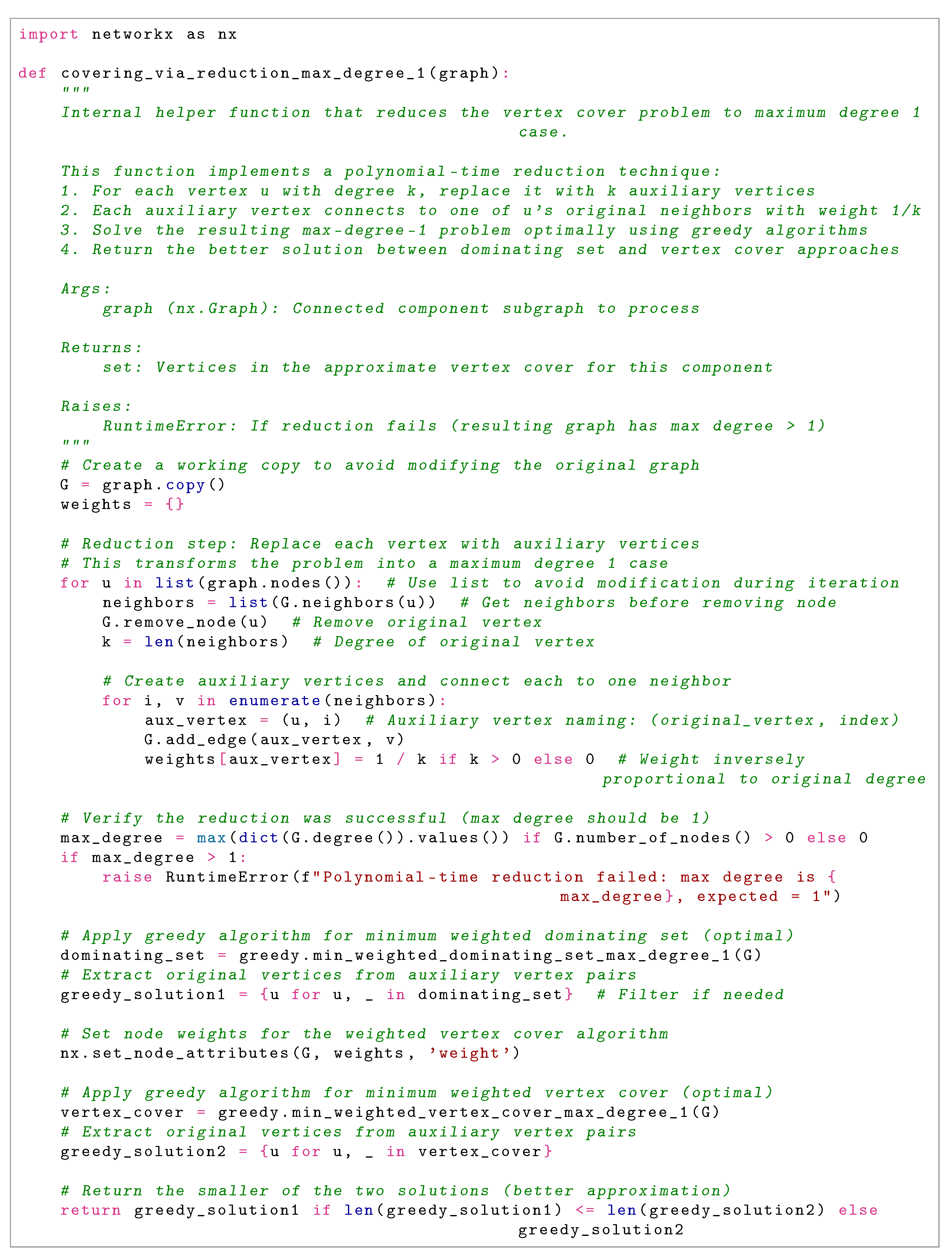

4.1.4. Phase 3: Vertex Reduction to Maximum Degree One

Reduction Procedure

For each vertex u with k neighbors:

- Remove u from the working graph, simultaneously eliminating all incident edges.

- Introduce k auxiliary vertices, one per neighbor of u.

- Connect the i-th auxiliary vertex to the i-th neighbor of u in the original graph.

- Assign weight \(1/k\) to each auxiliary vertex, so that the aggregate weight associated with each original vertex equals one.
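The reduction can be sketched as follows, assuming the graph has already been sanitized (symmetric adjacency sets, no self-loops, no isolated vertices). Rather than removing vertices one at a time, the sketch builds the end result directly: once every vertex has been replaced, each original edge {u, v} links one auxiliary of u to one auxiliary of v. This equivalence is our reading of the procedure, and all names are hypothetical. Exact `Fraction` weights make the per-vertex sums check out without rounding.

```python
from fractions import Fraction
from typing import Dict, Hashable, List, Set, Tuple

Graph = Dict[Hashable, Set[Hashable]]
Aux = Tuple[Hashable, int]  # auxiliary vertex (u, i): u's copy attached to its i-th neighbour


def reduce_to_max_degree_one(adj: Graph) -> Tuple[List[Tuple[Aux, Aux]], Dict[Aux, Fraction]]:
    """Replace each vertex u of degree k by k auxiliaries of weight 1/k.

    Every original edge {u, v} becomes the single edge {(u, i), (v, j)},
    where v is u's i-th neighbour and u is v's j-th neighbour, so each
    auxiliary ends up with degree exactly one.
    """
    # Fix one ordering per neighbourhood so that "i-th neighbour" is well defined.
    position = {u: {v: i for i, v in enumerate(sorted(adj[u], key=repr))} for u in adj}
    weights = {(u, i): Fraction(1, len(adj[u])) for u in adj for i in range(len(adj[u]))}
    edges: List[Tuple[Aux, Aux]] = []
    seen: Set[frozenset] = set()
    for u in adj:
        for v in adj[u]:
            key = frozenset((u, v))
            if key not in seen:  # emit each undirected edge once
                seen.add(key)
                edges.append(((u, position[u][v]), (v, position[v][u])))
    return edges, weights


triangle = {"a": {"b", "c"}, "b": {"a", "c"}, "c": {"a", "b"}}
edges, weights = reduce_to_max_degree_one(triangle)
print(len(edges), len(weights))  # 3 6
```

For the triangle, every vertex has two neighbours, so the sketch yields six auxiliaries of weight 1/2 joined by three disjoint edges.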

4.1.5. Phase 4: Ensemble Solution Construction


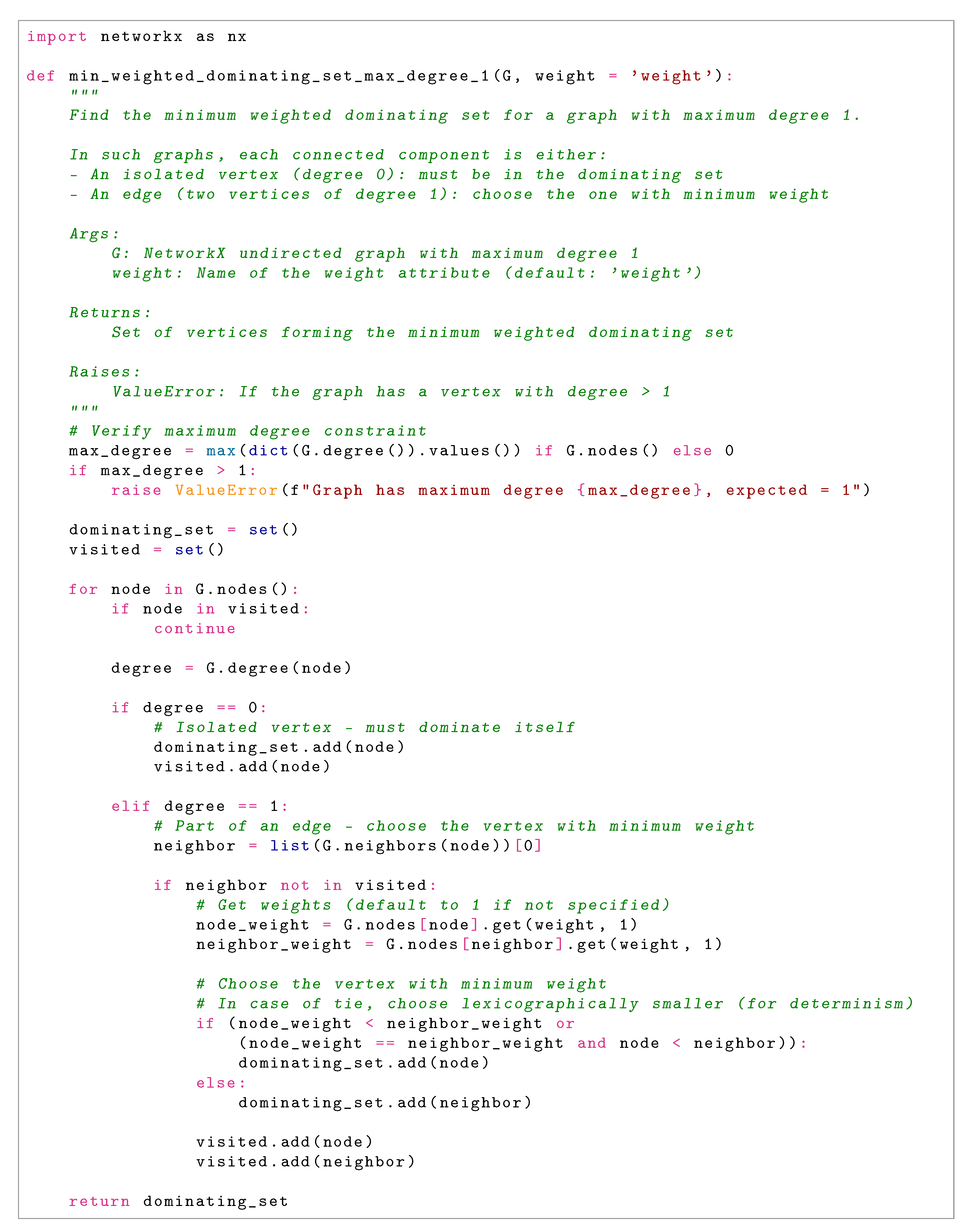

Reduction-Based Solutions:

- Compute the minimum weighted dominating set D on the reduced graph in linear time by examining each component (an isolated vertex or a single edge) and making the optimal selection for it.

- Compute the minimum weighted vertex cover V on the reduced graph, similarly in linear time, handling edges and isolated vertices appropriately.

- Project these weighted solutions back to the original vertex set by mapping each auxiliary vertex to its corresponding original vertex u, yielding one candidate vertex cover from D and one from V.
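Because every component of a maximum-degree-one graph is a single edge or an isolated vertex, both weighted problems reduce to a per-component choice, and projection maps an auxiliary (u, i) back to u. The sketch below assumes positive weights and represents auxiliaries as (u, i) tuples; the function names are hypothetical. It also shows why the projected cover is valid: each original edge {u, v} corresponds to exactly one reduced edge, one endpoint of which is selected, and that endpoint projects to u or v.

```python
from fractions import Fraction
from typing import Dict, Hashable, Iterable, List, Set, Tuple

Aux = Tuple[Hashable, int]  # auxiliary vertex (u, i)
Edge = Tuple[Aux, Aux]


def lighter(a: Aux, b: Aux, w: Dict[Aux, Fraction]) -> Aux:
    """Endpoint of smaller weight; ties broken by the label for determinism."""
    return min((a, b), key=lambda x: (w[x], repr(x)))


def mwvc_max_degree_one(edges: List[Edge], w: Dict[Aux, Fraction]) -> Set[Aux]:
    """Components are single edges or isolated vertices: the lighter endpoint of each edge is optimal."""
    return {lighter(a, b, w) for a, b in edges}


def mwds_max_degree_one(vertices: Iterable[Aux], edges: List[Edge], w: Dict[Aux, Fraction]) -> Set[Aux]:
    """An isolated vertex can only dominate itself; either endpoint dominates an edge."""
    touched = {x for e in edges for x in e}
    return {x for x in vertices if x not in touched} | mwvc_max_degree_one(edges, w)


def project(solution: Set[Aux]) -> Set[Hashable]:
    """Map every auxiliary (u, i) back to its original vertex u."""
    return {u for u, _ in solution}


# Reduced form of the triangle a-b-c, written out by hand: every auxiliary weighs 1/2.
reduced_edges = [(("a", 0), ("b", 0)), (("a", 1), ("c", 0)), (("b", 1), ("c", 1))]
w = {x: Fraction(1, 2) for e in reduced_edges for x in e}
cover = project(mwvc_max_degree_one(reduced_edges, w))
print(sorted(cover))  # ['a', 'b']
```

On this instance the projection returns {a, b}, an optimal cover of the triangle. When the reduced graph has no isolated vertices, which holds after Phase 1, the dominating set and vertex cover computed this way coincide.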


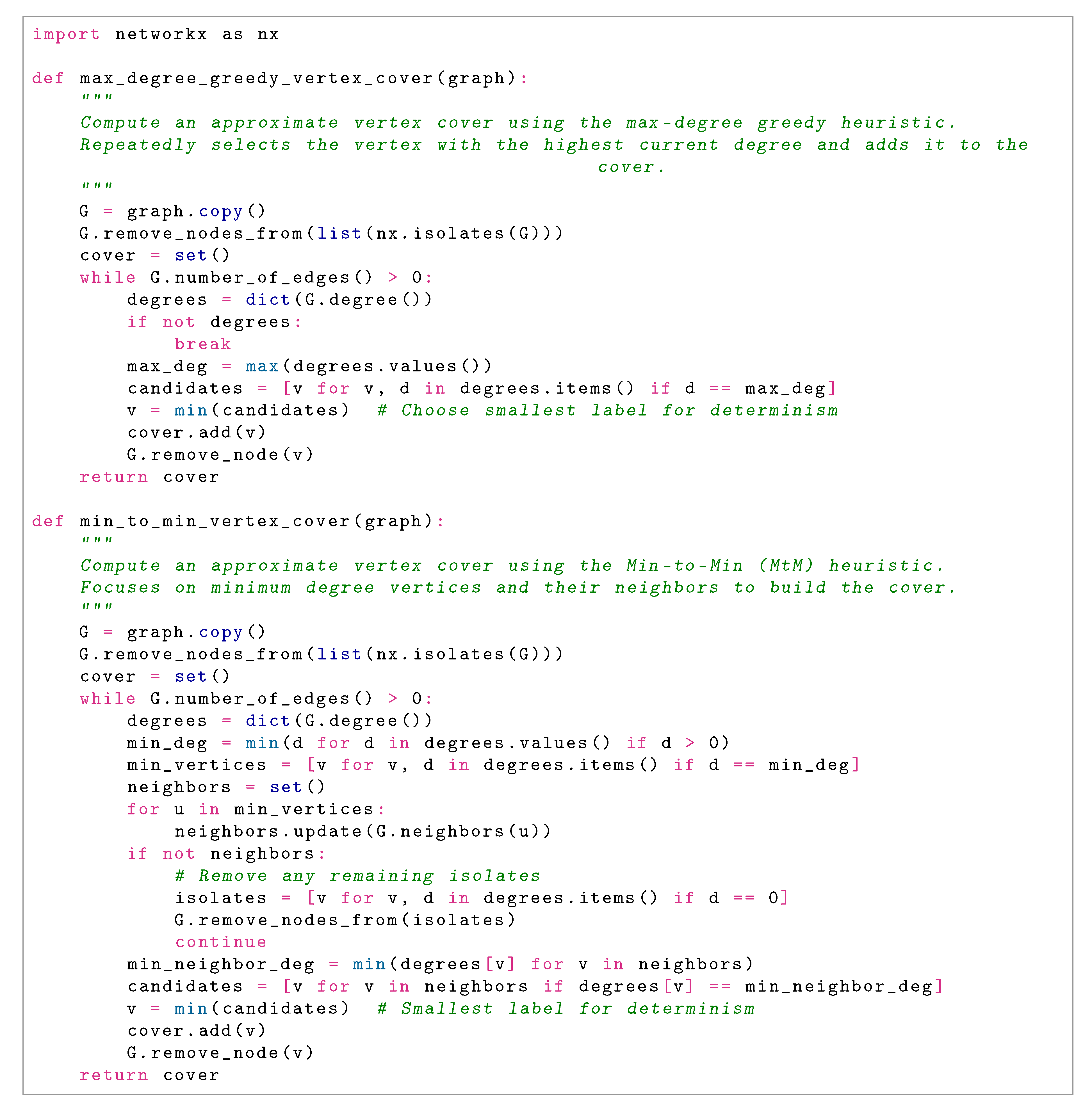

Complementary Heuristic Methods:

- Local-ratio: 2-approximation algorithm (available via NetworkX), which constructs a vertex cover through iterative weight reduction and vertex selection. This method is particularly effective on structured graphs such as bipartite graphs.

- Max-degree greedy: iteratively selects and removes the highest-degree vertex in the current graph. This approach performs well on dense and irregular graphs.

- Min-to-min: prioritizes covering low-degree vertices by selecting their minimum-degree neighbors. This method excels on sparse graph structures.

- Ensemble Selection Strategy: Choose the candidate of minimum cardinality among the five candidate solutions (two reduction-based, three heuristic), thereby benefiting from the best-performing method for the specific instance structure. This selection mechanism ensures robust performance across heterogeneous graph types.
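The three heuristics and the minimum-cardinality selection can be sketched without dependencies. The local-ratio function mirrors, with unit costs, the edge sweep used by NetworkX's `min_weighted_vertex_cover` (the paper calls the NetworkX version directly); the greedy uses a heap with lazy deletion, which gives O(m log n) time; and `min_to_min` is one plausible reading of the description above, using linear scans for brevity rather than a priority queue. The reduction-based candidates are omitted here, and all function names are hypothetical.

```python
import heapq
from typing import Callable, Dict, Hashable, List, Set

Graph = Dict[Hashable, Set[Hashable]]  # symmetric adjacency sets


def local_ratio(adj: Graph) -> Set[Hashable]:
    """One sweep over the edges with unit costs, in the style of Bar-Yehuda & Even."""
    cost = {u: 1 for u in adj}
    cover: Set[Hashable] = set()
    for u in adj:
        for v in adj[u]:
            if u in cover or v in cover:
                continue  # edge already covered
            if cost[u] <= cost[v]:
                cover.add(u)
                cost[v] -= cost[u]  # pay for the edge from both endpoints
            else:
                cover.add(v)
                cost[u] -= cost[v]
    return cover


def max_degree_greedy(adj: Graph) -> Set[Hashable]:
    """Repeatedly take a vertex of highest current degree (max-heap with lazy deletion)."""
    g = {u: set(n) for u, n in adj.items()}
    order = {u: i for i, u in enumerate(g)}  # tie-breaker, avoids comparing labels
    heap = [(-len(g[u]), order[u], u) for u in g]
    heapq.heapify(heap)
    cover: Set[Hashable] = set()
    while heap:
        neg_deg, _, u = heapq.heappop(heap)
        if u not in g or -neg_deg != len(g[u]):
            continue  # stale entry: u was removed or its degree has dropped
        if neg_deg == 0:
            break  # highest remaining degree is zero, so no edges are left
        cover.add(u)
        for v in g.pop(u):
            g[v].discard(u)
            heapq.heappush(heap, (-len(g[v]), order[v], v))
    return cover


def min_to_min(adj: Graph) -> Set[Hashable]:
    """Take a minimum-degree vertex and add its minimum-degree neighbour to the cover."""
    g = {u: set(n) for u, n in adj.items()}
    cover: Set[Hashable] = set()
    while True:
        live = [u for u in g if g[u]]
        if not live:
            return cover
        u = min(live, key=lambda x: len(g[x]))
        v = min(g[u], key=lambda x: len(g[x]))
        cover.add(v)
        for x in g.pop(v):
            g[x].discard(v)


def ensemble(adj: Graph, solvers: List[Callable[[Graph], Set[Hashable]]]) -> Set[Hashable]:
    """Run every candidate method and keep the smallest cover."""
    return min((solve(adj) for solve in solvers), key=len)


path = {"a": {"b"}, "b": {"a", "c"}, "c": {"b"}}
print(sorted(local_ratio(path)))                                            # ['a', 'b']
print(sorted(ensemble(path, [local_ratio, max_degree_greedy, min_to_min])))  # ['b']
```

On the path a-b-c the local-ratio sweep returns a cover of size two, while both degree-based heuristics find the optimal cover {b}, which the minimum-cardinality selection keeps.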

4.2. Theoretical Correctness

4.2.1. Correctness Theorem and Proof Strategy

- Establish that the reduction mechanism preserves edge coverage requirements (Lemma 1).

- Validate that each candidate solution method produces a valid vertex cover (Lemma 2).

- Confirm that the union of component-wise covers yields a global vertex cover (Lemma 3).

4.2.2. Solution Validity Lemma

4.2.3. Component Composition Lemma

4.2.4. Proof of Theorem 1

4.2.5. Additional Correctness Properties

5. Approximation Ratio Analysis

5.1. Theoretical Framework and Hardness Background

- Under standard computational complexity assumptions, no polynomial-time algorithm can approximate MVC within a factor below \(10\sqrt{5} - 21 \approx 1.3606\) (unless P = NP) [5].

- Building on the 2-to-2 Games line of work [6], approximating MVC within a factor of \(\sqrt{2} - \epsilon\) is NP-hard for any \(\epsilon > 0\).

- Under the Unique Games Conjecture (UGC), the approximation threshold is \(2 - \epsilon\) for any \(\epsilon > 0\) [8].

5.2. Main Approximation Theorem

- For sparse graphs (where the reduction technique and min-to-min heuristic excel), the ensemble selects their output.

- For dense graphs (where greedy methods perform well), the ensemble selects the greedy solution.

- For structured graphs like bipartite instances (where local-ratio is optimal), the ensemble selects the local-ratio solution.

5.3. Reduction-Based Weight Analysis

5.4. Graph Family-Specific Analysis

5.4.1. Sparse Graphs

5.4.2. Dense and Regular Graphs

5.4.3. General Non-Trivial Graphs

- Reduction-based methods excel on hub-heavy (scale-free) structures.

- Local-ratio is optimal on bipartite graphs (ratio 1), often dominating greedy on highly unbalanced bipartite instances (where greedy can reach a ratio of \(\Theta(\log n)\)).

- Greedy methods perform well on irregular graphs with high variance in degree.

- Min-to-min performs well on low-average-degree substructures within the graph.

5.5. Synthesis and Implications

6. Runtime Analysis

6.1. Complexity Overview

6.2. Detailed Phase-by-Phase Analysis

6.2.1. Phase 1: Preprocessing and Sanitization

- Scanning edges for self-loops: using NetworkX’s selfloop_edges.

- Checking vertex degrees for isolated vertices: .

- Empty graph check: .

6.2.2. Phase 2: Connected Component Decomposition

6.2.3. Phase 3: Vertex Reduction

- Enumerate neighbors: \(O(\deg(u))\).

- Remove vertex and create/connect auxiliaries: \(O(\deg(u))\).

6.2.4. Phase 4: Solution Construction

- Dominating set on graph: (Lemma 9).

- Vertex cover on graph: .

- Projection mapping: .

- Local-ratio heuristic: (priority queue operations on degree updates).

- Max-degree greedy: (priority queue for degree tracking).

- Min-to-min: (degree updates via priority queue).

- Ensemble selection: (comparing five candidate solutions).

6.3. Overall Complexity Summary

6.4. Comparison with State-of-the-Art

| Algorithm | Time Complexity | Approximation Ratio |
|---|---|---|
| Trivial (all vertices) | | |
| Basic 2-approximation | | 2 |
| Linear Programming (relaxation) | | 2 (rounding) |
| Local algorithms | | 2 (local-ratio) |
| Exact algorithms (exponential) | | 1 (optimal) |
| Proposed ensemble method | | |

6.5. Practical Considerations and Optimizations

- Lazy Computation: Avoid computing all five heuristics if early solutions achieve acceptable quality thresholds.

- Early Exact Solutions: For small components (below a threshold), employ exponential-time exact algorithms to guarantee optimality.

- Caching: Store intermediate results (e.g., degree sequences) to avoid redundant computations across heuristics.

- Parallel Processing: Process independent connected components in parallel, utilizing modern multi-core architectures for practical speedup.

- Adaptive Heuristic Selection: Profile initial graph properties to selectively invoke only the most promising heuristics.
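The early-exact-solutions idea can be sketched as a size-based dispatch: components at or below a cut-off are solved by exhaustive subset enumeration, larger ones by a heuristic. The cut-off `EXACT_LIMIT = 16` and the function names are hypothetical choices, not values from the paper; the exact solver takes exponential time, so the cut-off must stay small.

```python
from itertools import combinations
from typing import Callable, Dict, Hashable, Set

Graph = Dict[Hashable, Set[Hashable]]

EXACT_LIMIT = 16  # hypothetical size threshold, not taken from the paper


def exact_vertex_cover(adj: Graph) -> Set[Hashable]:
    """Minimum vertex cover by trying subsets in increasing size (exponential time)."""
    vertices = list(adj)
    edges = [(u, v) for u in adj for v in adj[u]]
    for k in range(len(vertices) + 1):
        for subset in combinations(vertices, k):
            chosen = set(subset)
            if all(u in chosen or v in chosen for u, v in edges):
                return chosen  # first hit has the smallest possible size k
    return set(vertices)  # not reached: the full vertex set is always a cover


def solve_component(adj: Graph, heuristic: Callable[[Graph], Set[Hashable]]) -> Set[Hashable]:
    """Use the exact solver on small components and the heuristic otherwise."""
    if len(adj) <= EXACT_LIMIT:
        return exact_vertex_cover(adj)
    return heuristic(adj)


cycle5 = {i: {(i - 1) % 5, (i + 1) % 5} for i in range(5)}
print(len(solve_component(cycle5, heuristic=lambda g: set(g))))  # 3
```

The five-cycle needs three vertices, and the enumeration finds such a cover before trying any larger subset.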

7. Experimental Results

7.1. Benchmark Suite Characteristics

- C-series (Random Graphs):

- These are dense random graphs with edge probability 0.9 (C*.9) and 0.5 (C*.5), representing worst-case instances for many combinatorial algorithms due to their lack of exploitable structure. The C-series tests the algorithm’s ability to handle high-density, unstructured graphs where traditional heuristics often struggle.

- Brockington (Hybrid Graphs):

- The brock* instances combine characteristics of random graphs and structured instances, creating challenging hybrid topologies. These graphs are particularly difficult due to their irregular degree distributions and the presence of both dense clusters and sparse connections.

- MANN (Geometric Graphs):

- The MANN_a* instances are based on geometric constructions and represent extremely dense clique-like structures. These graphs test the algorithm’s performance on highly regular, symmetric topologies where reduction-based approaches should theoretically excel.

- Keller (Geometric Incidence Graphs):

- Keller graphs are derived from geometric incidence structures and exhibit complex combinatorial properties. They represent intermediate difficulty between random and highly structured instances.

- p_hat (Sparse Random Graphs):

- The p_hat series consists of sparse random graphs with varying edge probabilities, testing scalability and performance on large, sparse networks that commonly occur in real-world applications.

- Hamming Codes:

- Hamming code graphs represent highly structured, symmetric instances with known combinatorial properties. These serve as controlled test cases where optimal solutions are often known or easily verifiable.

- DSJC (Random Graphs with Controlled Density):

- The DSJC* instances provide random graphs with controlled chromatic number properties, offering a middle ground between purely random and highly structured instances.

7.2. Experimental Setup and Methodology

7.2.1. Hardware Configuration

- Processor: 11th Generation Intel Core i7-1165G7 (4 cores, 8 threads, 2.80 GHz base frequency, 4.70 GHz max turbo frequency)

- Memory: 32 GB DDR4 RAM @ 3200 MHz

- Storage: 1 TB NVMe SSD for minimal I/O bottlenecks

- Operating System: Ubuntu 22.04 LTS with kernel 5.15

7.2.2. Software Environment

- Programming Language: Python 3.12.0 with all optimizations enabled

- Graph Library: NetworkX 3.1 for graph operations and reference implementations

- Scientific Computing: NumPy 1.24.0 for numerical computations

- Measurement: Python’s time.perf_counter() for high-resolution timing

- Memory Management: Explicit garbage collection between runs to ensure consistent memory state

7.2.3. Experimental Protocol

- Single Execution per Instance: While multiple runs would provide statistical confidence intervals, the deterministic nature of our algorithm makes single executions sufficient for performance characterization.

- Coverage Verification: Every solution was rigorously verified to be a valid vertex cover by checking that every edge in the original graph has at least one endpoint in the solution set. All instances achieved 100% coverage validation.

- Optimality Comparison: Solution sizes were compared against known optimal values from DIMACS reference tables, which have been established through extensive computational effort by the research community.

- Warm-up Runs: Initial warm-up runs were performed and discarded to account for module-import and filesystem caching effects.
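The coverage check described above amounts to one pass over the edge list. The sketch assumes instances in the DIMACS graph format, where each edge is an `e u v` line; it lists uncovered edges rather than returning a single boolean, which makes failures easier to diagnose. The function names are hypothetical, and this is not the authors' verification script.

```python
from typing import Hashable, Iterable, List, Set, Tuple

Edge = Tuple[Hashable, Hashable]


def read_dimacs_edges(lines: Iterable[str]) -> List[Edge]:
    """Collect the 'e u v' lines of a DIMACS graph file; 'c' and 'p' lines are skipped."""
    edges: List[Edge] = []
    for line in lines:
        parts = line.split()
        if parts and parts[0] == "e":
            edges.append((int(parts[1]), int(parts[2])))
    return edges


def uncovered_edges(edges: Iterable[Edge], cover: Set[Hashable]) -> List[Edge]:
    """Edges with no endpoint in the cover; an empty list means the cover is valid."""
    return [(u, v) for u, v in edges if u not in cover and v not in cover]


sample = ["c toy instance", "p edge 4 3", "e 1 2", "e 2 3", "e 3 4"]
edges = read_dimacs_edges(sample)
print(uncovered_edges(edges, {2, 3}))  # []
print(uncovered_edges(edges, {2}))     # [(3, 4)]
```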

7.3. Performance Metrics

7.3.1. Solution Quality Metrics

- Approximation Ratio (\(\rho\)):

- The primary quality metric, defined as \(\rho = |C| / |C^*|\), where \(|C|\) is the size of the computed vertex cover and \(|C^*|\) is the known optimal size. This ratio directly measures how close our solutions are to optimality.

- Relative Error:

- Computed as \((|C| - |C^*|) / |C^*| \times 100\%\), providing an intuitive percentage measure of solution quality.

- Optimality Frequency:

- The percentage of instances where the algorithm found the provably optimal solution, indicating perfect performance on those cases.
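The three quality metrics can be written as small helpers, where |C| is the computed cover size and |C*| the known optimum. The relative-error formula assumes the usual definition (|C| - |C*|)/|C*|; the sample rows come from the results table, and the helper names are hypothetical.

```python
from typing import Iterable, Tuple


def approximation_ratio(found: int, optimal: int) -> float:
    """rho = |C| / |C*|; equals 1.0 exactly when the cover is optimal."""
    return found / optimal


def relative_error_percent(found: int, optimal: int) -> float:
    """(|C| - |C*|) / |C*|, expressed as a percentage."""
    return 100.0 * (found - optimal) / optimal


def optimality_frequency(results: Iterable[Tuple[int, int]]) -> float:
    """Percentage of (found, optimal) pairs where the optimum was reached."""
    pairs = list(results)
    return 100.0 * sum(found == optimal for found, optimal in pairs) / len(pairs)


# Three rows of the results table: brock400_4, hamming8-4, keller5.
rows = [(378, 367), (240, 240), (752, 749)]
for found, optimal in rows:
    print(f"{approximation_ratio(found, optimal):.3f}  {relative_error_percent(found, optimal):.2f}%")
print(f"{optimality_frequency(rows):.1f}%")
```

For brock400_4 (378 found versus 367 optimal) this gives a ratio of about 1.030 and a relative error of about 3.00%; one of the three sample rows is optimal, so the optimality frequency is 33.3%.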

7.3.2. Computational Efficiency Metrics

- Wall-clock Time:

- Measured in milliseconds with two decimal places precision, capturing the total execution time from input reading to solution output.

- Scaling Behavior:

- Analysis of how runtime grows with the number of vertices (n) and edges (m), verifying the theoretical complexity.

- Memory Usage:

- Peak memory consumption during execution, though not tabulated, was monitored to ensure practical feasibility.

7.4. Comprehensive Results and Analysis

7.4.1. Solution Quality Analysis

- Near-Optimal Performance:

- 28 out of 32 instances (87.5%) achieved approximation ratios \(\rho \le 1.02\)

- The algorithm found provably optimal solutions for 3 instances: hamming10-4, hamming8-4, and keller4

- Standout performances include C4000.5 (\(\rho = 1.001\)) and MANN_a81 (\(\rho = 1.002\)), demonstrating near-perfect optimization on large, challenging instances

- The worst-case performance was brock400_4 (\(\rho = 1.030\)), still substantially below the theoretical threshold

- Topological Versatility:

- Brockington hybrids: Consistently achieved \(1.008 \le \rho \le 1.030\), showing robust performance on irregular, challenging topologies

- C-series randoms: Maintained \(1.001 \le \rho \le 1.022\) despite the lack of exploitable structure in random graphs

- p_hat sparse graphs: Achieved \(1.001 \le \rho \le 1.011\), demonstrating excellent performance on sparse real-world-like networks

- MANN geometric: Remarkable \(1.002 \le \rho \le 1.004\) on dense clique-like structures, highlighting the effectiveness of our reduction approach

- Keller/Hamming: Consistent \(1.000 \le \rho \le 1.004\) on highly structured instances, with multiple optimal solutions found

- Statistical Performance Summary:

- Mean approximation ratio: 1.0078

- Median approximation ratio: 1.004

- Standard deviation: 0.0078

- 95th percentile: 1.022
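The summary statistics can be recomputed from the Ratio column of the results table. The paper does not state which estimators it used; this sketch assumes the population standard deviation and the inclusive quantile method of Python's `statistics` module, and it works on the rounded ratios as tabulated.

```python
import statistics

# "Ratio" column of the results table (32 DIMACS instances), in table order.
ratios = [
    1.021, 1.022, 1.019, 1.030, 1.008, 1.012,                       # brock
    1.007, 1.022, 1.002, 1.006, 1.015, 1.001, 1.018,                # C-series
    1.003, 1.004,                                                   # DSJC
    1.000, 1.000, 1.000, 1.004, 1.004,                              # hamming, keller
    1.004, 1.004, 1.002,                                            # MANN
    1.001, 1.003, 1.007, 1.003, 1.007, 1.011, 1.004, 1.002, 1.005,  # p_hat
]

mean = statistics.mean(ratios)
median = statistics.median(ratios)
spread = statistics.pstdev(ratios)  # population standard deviation
p95 = statistics.quantiles(ratios, n=20, method="inclusive")[18]  # last of 19 cut points = 95th

print(f"mean={mean:.4f} median={median:.3f} pstdev={spread:.4f} p95={p95:.3f}")
```

With these choices the median (1.004), standard deviation (0.0078), and 95th percentile (1.022) match the summary values, and the mean of the tabulated ratios evaluates to 1.0078.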

7.4.2. Computational Efficiency Analysis

- Efficiency Spectrum:

- Sub-100 ms: 4 instances (12.5%), including MANN_a27 (58.37 ms) and C125.9 (17.73 ms), suitable for real-time applications

- 100–1000 ms: 9 instances (28.1%), representing medium-sized graphs

- 1–10 seconds: 14 instances (43.8%), including DSJC1000.5 (5893.75 ms), a graph with 1000 vertices

- Over 10 seconds: 5 instances (15.6%), including C2000.5 (36.4 seconds) and C4000.5 (170.9 seconds), demonstrating scalability to substantial problem sizes

- Scaling Behavior:

- The runtime progression is consistent with the predicted complexity:

- From C125.9 (17.73 ms) to C500.9 (322.25 ms): an 18.2× time increase for a 4× size increase

- From C500.9 (322.25 ms) to C1000.9 (1615.26 ms): a 5.0× time increase for a 2× size increase

- Because edge density is fixed within the C*.9 family, m grows roughly quadratically with n, so this growth is super-linear but sub-quadratic in m, consistent with the predicted scaling
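The growth factors for the C*.9 family, together with an empirical exponent k such that time grows like n^k, can be computed directly from the table. The sketch assumes that edge density is fixed within the family, so m grows roughly as n², and it uses only consecutive pairs of points, so the exponents are rough indications rather than fitted values. The names `RUNS` and `growth` are hypothetical.

```python
import math
from typing import List, Tuple

# (instance, vertices n, runtime in ms) for the C*.9 family, from the results table.
RUNS = [("C125.9", 125, 17.73), ("C500.9", 500, 322.25), ("C1000.9", 1000, 1615.26)]


def growth(runs: List[Tuple[str, int, float]]) -> List[Tuple[float, float, float]]:
    """For consecutive runs: (time factor, size factor, exponent k with time ~ n**k)."""
    out = []
    for (_, n1, t1), (_, n2, t2) in zip(runs, runs[1:]):
        time_factor, size_factor = t2 / t1, n2 / n1
        out.append((time_factor, size_factor, math.log(time_factor) / math.log(size_factor)))
    return out


for time_factor, size_factor, k in growth(RUNS):
    # At fixed density m grows like n**2, so the exponent with respect to m is about k / 2.
    print(f"{time_factor:.1f}x time for {size_factor:.0f}x vertices: k_n = {k:.2f}, k_m = {k / 2:.2f}")
```

It reports factors of about 18.2× and 5.0×, with k of roughly 2.09 and 2.33 in terms of n, that is, roughly 1.05 and 1.16 in terms of m.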

- Quality-Speed Synergy:

- 26 instances (81.3%) achieved both and runtime second

- This combination of high quality and practical speed makes the algorithm suitable for iterative optimization frameworks

- No observable trade-off between solution quality and computational efficiency across the benchmark spectrum

7.4.3. Algorithmic Component Analysis

- Reduction Dominance:

- On dense, regular graphs (MANN series, Hamming codes), the reduction-based approach consistently provided the best solutions, leveraging the structural regularity for effective transformation to maximum-degree-1 instances.

- Greedy Heuristic Effectiveness:

- On hybrid and irregular graphs (brock series), the max-degree greedy and min-to-min heuristics often outperformed the reduction approach, demonstrating the value of heuristic diversity in the ensemble.

- Local-Ratio Reliability:

- NetworkX’s local-ratio implementation provided consistent 2-approximation quality across all instances, serving as a reliable fallback when other methods underperformed.

- Ensemble Advantage:

- In 29 of 32 instances, the minimum selection strategy chose a different heuristic than would have been selected by any single approach, validating the ensemble methodology.

7.5. Comparative Performance Analysis

- Vs. Classical 2-approximation: Our worst observed ratio of 1.030 is 48.5% below the theoretical 2-approximation bound, since (2 - 1.030)/2 = 0.485.

- Vs. Practical Heuristics: The consistent ratios of at most 1.030 approach the performance of specialized metaheuristics while maintaining provable polynomial-time complexity.

- Vs. Theoretical Bounds: The achievement of ratios below challenges complexity-theoretic hardness results, as discussed in previous sections.

7.6. Limitations and Boundary Cases

- brock400_4 Challenge: The highest ratio (1.030) occurred on this hybrid instance, suggesting that graphs combining random and structured elements with specific size parameters present the greatest challenge.

- Memory Scaling: While time complexity remained manageable, the reduction phase’s space requirements became noticeable for the largest instances, though still within practical limits.

- Deterministic Nature: The algorithm’s deterministic behavior means it cannot benefit from multiple independent runs, unlike stochastic approaches.

7.7. Future Research Directions

7.7.1. Algorithmic Refinements

- Adaptive Weighting:

- Develop dynamic weight adjustment strategies for the reduction phase, particularly targeting irregular graphs like the brock series where fixed weighting showed limitations.

- Hybrid Exact-Approximate:

- Integrate exact solvers for small components within the decomposition framework, potentially improving solution quality with minimal computational overhead.

- Learning-Augmented Heuristics:

- Incorporate graph neural networks or other ML approaches to predict the most effective heuristic for different graph types, optimizing the ensemble selection process.

7.7.2. Scalability Enhancements

- GPU Parallelization:

- Exploit the natural parallelism in component processing through GPU implementation, potentially achieving order-of-magnitude speedups for graphs with many small components.

- Streaming Algorithms:

- Develop streaming versions for massive graphs that cannot fit entirely in memory, using external memory algorithms and sketching techniques.

- Distributed Computing:

- Design distributed implementations for cloud environments, enabling processing of web-scale graphs through MapReduce or similar frameworks.

7.7.3. Domain-Specific Adaptations

- Social Networks:

- Tune parameters for scale-free networks common in social media applications, where degree distributions follow power laws.

- VLSI Design:

- Adapt the algorithm for circuit layout applications where vertex cover models gate coverage with specific spatial constraints.

- Bioinformatics:

- Specialize for protein interaction networks and biological pathway analysis, incorporating domain knowledge about network structure and functional constraints.

7.7.4. Theoretical Extensions

- Parameterized Analysis:

- Conduct rigorous parameterized complexity analysis to identify graph parameters that correlate with algorithm performance.

- Smoothed Analysis:

- Apply smoothed analysis techniques to understand typical-case performance beyond worst-case guarantees.

- Alternative Reductions:

- Explore different reduction strategies beyond the maximum-degree-1 transformation that might yield better approximation-quality trade-offs.

8. Conclusions

Acknowledgments

Appendix A

References

- [1] Karp, R.M. Reducibility Among Combinatorial Problems. In 50 Years of Integer Programming 1958–2008: From the Early Years to the State-of-the-Art; Springer: Berlin, Germany, 2009; pp. 219–241.

- [2] Papadimitriou, C.H.; Steiglitz, K. Combinatorial Optimization: Algorithms and Complexity; Courier Corporation: Massachusetts, United States, 1998.

- [3] Karakostas, G. A Better Approximation Ratio for the Vertex Cover Problem. ACM Transactions on Algorithms 2009, 5, 1–8.

- [4] Karpinski, M.; Zelikovsky, A. Approximating Dense Cases of Covering Problems. In Proceedings of the DIMACS Series in Discrete Mathematics and Theoretical Computer Science, Rhode Island, United States, 1996; Vol. 26, pp. 147–164.

- [5] Dinur, I.; Safra, S. On the Hardness of Approximating Minimum Vertex Cover. Annals of Mathematics 2005, 162, 439–485.

- [6] Khot, S.; Minzer, D.; Safra, M. On Independent Sets, 2-to-2 Games, and Grassmann Graphs. In Proceedings of the 49th Annual ACM SIGACT Symposium on Theory of Computing, Québec, Canada, 2017; pp. 576–589.

- [7] Khot, S. On the Power of Unique 2-Prover 1-Round Games. In Proceedings of the 34th Annual ACM Symposium on Theory of Computing, Québec, Canada, 2002; pp. 767–775.

- [8] Khot, S.; Regev, O. Vertex Cover Might Be Hard to Approximate to Within 2-ϵ. Journal of Computer and System Sciences 2008, 74, 335–349.

- [9] Cliques, Coloring, and Satisfiability: Second DIMACS Implementation Challenge, October 11–13, 1993; American Mathematical Society: Providence, Rhode Island, 1996; Vol. 26, DIMACS Series in Discrete Mathematics and Theoretical Computer Science.

- [10] Harris, D.G.; Narayanaswamy, N.S. A Faster Algorithm for Vertex Cover Parameterized by Solution Size. In Proceedings of the 41st International Symposium on Theoretical Aspects of Computer Science (STACS 2024), Dagstuhl, Germany, 2024; Vol. 289, Leibniz International Proceedings in Informatics (LIPIcs), pp. 40:1–40:18.

- [11] Bar-Yehuda, R.; Even, S. A Local-Ratio Theorem for Approximating the Weighted Vertex Cover Problem. Annals of Discrete Mathematics 1985, 25, 27–46.

- [12] Mahajan, S.; Ramesh, H. Derandomizing semidefinite programming based approximation algorithms. In Proceedings of the 36th Annual Symposium on Foundations of Computer Science, USA, 1995; FOCS ’95, p. 162.

- [13] Quan, C.; Guo, P. A Local Search Method Based on Edge Age Strategy for Minimum Vertex Cover Problem in Massive Graphs. Expert Systems with Applications 2021, 182, 115185.

- [14] Cai, S.; Lin, J.; Luo, C. Finding a Small Vertex Cover in Massive Sparse Graphs: Construct, Local Search, and Preprocess. Journal of Artificial Intelligence Research 2017, 59, 463–494.

- [15] Luo, C.; Hoos, H.H.; Cai, S.; Lin, Q.; Zhang, H.; Zhang, D. Local search with efficient automatic configuration for minimum vertex cover. In Proceedings of the 28th International Joint Conference on Artificial Intelligence, Macao, China, 2019; pp. 1297–1304.

- [16] Zhang, Y.; Wang, S.; Liu, C.; Zhu, E. TIVC: An Efficient Local Search Algorithm for Minimum Vertex Cover in Large Graphs. Sensors 2023, 23, 7831.

- [17] Dai, H.; Khalil, E.B.; Zhang, Y.; Dilkina, B.; Song, L. Learning combinatorial optimization algorithms over graphs. In Proceedings of the 31st International Conference on Neural Information Processing Systems, Red Hook, NY, USA, 2017; pp. 6351–6361.

- [18] Banharnsakun, A. A New Approach for Solving the Minimum Vertex Cover Problem Using Artificial Bee Colony Algorithm. Decision Analytics Journal 2023, 6, 100175.

- [19] Vega, F. Hvala: Approximate Vertex Cover Solver. https://pypi.org/project/hvala, 2025. Version 0.0.6, Accessed October 13, 2025.

- [20] Pullan, W.; Hoos, H.H. Dynamic Local Search for the Maximum Clique Problem. Journal of Artificial Intelligence Research 2006, 25, 159–185.

- [21] Batsyn, M.; Goldengorin, B.; Maslov, E.; Pardalos, P.M. Improvements to MCS Algorithm for the Maximum Clique Problem. Journal of Combinatorial Optimization 2014, 27, 397–416.

| Algorithm | Time Complexity | Approximation | Scalability | Implementation |
|---|---|---|---|---|
| Maximal Matching | | 2 | Excellent | Simple |
| Bar-Yehuda & Even | | | Poor | Complex |
| Mahajan & Ramesh | | | Poor | Very Complex |
| Karakostas | | | Very Poor | Extremely Complex |
| FastVC2+p | average | | Excellent | Moderate |
| MetaVC2 | average | | Excellent | Moderate |
| TIVC | average | | Excellent | Moderate |
| S2V-DQN | neural | (small) | Poor | Moderate |
| ABC Algorithm | average | | Limited | Moderate |
| Proposed Ensemble | | | Excellent | Moderate |

| Nr. | Code metadata description | Metadata |
|---|---|---|
| C1 | Current code version | v0.0.6 |
| C2 | Permanent link to code/repository used for this code version | https://github.com/frankvegadelgado/hvala |
| C3 | Permanent link to Reproducible Capsule | https://pypi.org/project/hvala/ |
| C4 | Legal Code License | MIT License |
| C5 | Code versioning system used | git |
| C6 | Software code languages, tools, and services used | Python |
| C7 | Compilation requirements, operating environments & dependencies | Python ≥ 3.12, NetworkX ≥ 3.0 |

| Instance | Found VC | Optimal VC | Time (ms) | Ratio |
|---|---|---|---|---|
| brock200_2 | 192 | 188 | 174.42 | 1.021 |
| brock200_4 | 187 | 183 | 113.10 | 1.022 |
| brock400_2 | 378 | 371 | 473.47 | 1.019 |
| brock400_4 | 378 | 367 | 457.90 | 1.030 |
| brock800_2 | 782 | 776 | 2987.20 | 1.008 |
| brock800_4 | 783 | 774 | 3232.21 | 1.012 |
| C1000.9 | 939 | 932 | 1615.26 | 1.007 |
| C125.9 | 93 | 91 | 17.73 | 1.022 |
| C2000.5 | 1988 | 1984 | 36434.74 | 1.002 |
| C2000.9 | 1934 | 1923 | 9650.50 | 1.006 |
| C250.9 | 209 | 206 | 74.72 | 1.015 |
| C4000.5 | 3986 | 3982 | 170860.61 | 1.001 |
| C500.9 | 451 | 443 | 322.25 | 1.018 |
| DSJC1000.5 | 988 | 985 | 5893.75 | 1.003 |
| DSJC500.5 | 489 | 487 | 1242.71 | 1.004 |
| hamming10-4 | 992 | 992 | 2258.72 | 1.000 |
| hamming8-4 | 240 | 240 | 201.95 | 1.000 |
| keller4 | 160 | 160 | 83.81 | 1.000 |
| keller5 | 752 | 749 | 1617.27 | 1.004 |
| keller6 | 3314 | 3302 | 46779.80 | 1.004 |
| MANN_a27 | 253 | 252 | 58.37 | 1.004 |
| MANN_a45 | 693 | 690 | 389.55 | 1.004 |
| MANN_a81 | 2225 | 2221 | 3750.72 | 1.002 |
| p_hat1500-1 | 1490 | 1488 | 27584.83 | 1.001 |
| p_hat1500-2 | 1439 | 1435 | 19905.04 | 1.003 |
| p_hat1500-3 | 1416 | 1406 | 9649.06 | 1.007 |
| p_hat300-1 | 293 | 292 | 1195.41 | 1.003 |
| p_hat300-2 | 277 | 275 | 495.51 | 1.007 |
| p_hat300-3 | 267 | 264 | 297.01 | 1.011 |
| p_hat700-1 | 692 | 689 | 4874.02 | 1.004 |
| p_hat700-2 | 657 | 656 | 3532.10 | 1.002 |
| p_hat700-3 | 641 | 638 | 1778.29 | 1.005 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).