Submitted: 30 May 2025

Posted: 03 June 2025


Abstract

Keywords:

1. Introduction

1.1. Contextualizing Social Media's Role in Civic Unrest

1.2. Rise of Algorithmically Driven Radicalization

1.3. Historical Build-Up to 5 August 2024

1.4. Relevance and Originality of the Study

2. Literature Review

2.1. Introduction: Situating the Study in Existing Scholarship

2.2. Social Media and Civic Unrest: Global and Regional Perspectives

2.3. Algorithmic Radicalization and Digital Pathways to Extremism

2.4. Disinformation, Rumors, and Digital Vigilantism

2.5. The Politics of Platform Governance and Surveillance

2.6. Youth, Identity, and Digital Culture in Bangladesh

2.7. Gaps in the Literature and Study Contributions

- Providing a localized, postcolonial perspective on algorithmic violence.

- Offering multi-method evidence—quantitative (algorithmic tracking, content mapping), qualitative (interviews, discourse analysis), and visual (network and heat maps).

- Theorizing mob trials as a hybrid digital-physical phenomenon sustained by algorithmic amplification, user moral economies, and weak institutional safeguards.

3. Theoretical Framework

3.1. Introduction: Theoretical Anchors for a Complex Crisis

3.2. Social Movement Theories: Mobilization in the Digital Age

3.3. Algorithmic Radicalization: The Role of Platform Architectures

3.4. Digital Vigilantism and Moral Economies

3.5. The Bangladesh Context: Socio-Political and Technological Factors

3.6. Theoretical Framework and Case Study Exploration

- a) Algorithmic Radicalization and Platform Governance (Tufekci, 2018; Gillespie, 2010; O’Neil, 2016)

- b) Digital Public Sphere and Counter-publics (Fraser, 1990; Papacharissi, 2002)

- c) Mob Justice and Digital Vigilantism (Trottier, 2017; Monahan, 2016)

- d) Information Disorder (Fake News, Disinformation, and Conspiracy Theories) (Wardle & Derakhshan, 2017; Tandoc, Lim, & Ling, 2018)

- e) Networked Authoritarianism and Platform Manipulation (Howard & Bradshaw, 2019; Morozov, 2011)

3.7. Case Study 1: The Sylhet Mob Incident and the ‘Blasphemy’ Video

- Context & Timeline

- Platform Dynamics

- Mob Mobilization

- Algorithmic Footprint

- Analysis:

3.8. Case Study 2: The Chittagong Trial Stream and Instant ‘Justice’

- Context

- Event

- Platform Behavior

- Theoretical Relevance

- Quote from Interview

3.9. Case Study 3: Telegram’s Shadow Groups and Youth Radicalization

- Digital Ethnography Findings

- Analysis:

3.10. Comparative Table of Digital-to-Physical Incidents

| Incident | Platform(s) | Trigger Content | Offline Consequence | Estimated Reach |
| --- | --- | --- | --- | --- |
| Sylhet Blasphemy Video | Telegram, Facebook, YouTube | Edited video clip | Mob attack on home | 2.1 million |
| Chittagong Garment Worker | Facebook, TikTok | Alleged defamation | Public beating | 450,000+ viewers |
| Youth Radicalization Cells | Telegram (closed groups) | Religious propaganda | Coordinated action plans | 15+ shadow groups |

3.11. Synthesis: From Content to Carnage

- Platform capitalism thrives on engagement, even when that engagement is violent (Zuboff, 2019).

- Affective publics are hyper-mobilized by injustice frames and grievance narratives (Papacharissi, 2015).

- Digital mob trials are modern incarnations of extra-legal justice, shaped by visibility and virality (Trottier, 2017).

3.12. Policy Implications and Ethical Reflections

- Regulatory Gaps: Current content moderation protocols are inadequate. Even when takedowns occur, they are often too late.

- Digital Literacy: There is a need for structural investment in digital education and civic reasoning, especially targeting youth.

- Platform Responsibility: Platforms must be held accountable for the design of their algorithmic systems, not just their content policies.

4. Research Methodology

4.1. Introduction to Methodology

4.2. Research Design

4.3. Research Questions

- How did social media platforms contribute to algorithmic radicalization and the eruption of mob trials in Bangladesh during the August 2024 unrest?

- What were the algorithmic and emotional patterns underlying viral disinformation leading to violence?

- Which platforms (Facebook, WhatsApp, Telegram, etc.) played the most significant roles in triggering collective action?

- How were digital narratives converted into physical violence and state response?

- What counter-narratives and regulatory discourses emerged post-crisis?

4.4. Case Study Method

- Evidence of online-to-offline radicalization;

- Geolocation-linked digital artifacts (posts, messages, screenshots);

- Involvement of social media influencers or micro-celebrities;

- Presence of affected victims, accused individuals, or families for interviews;

- Variability in state response (arrests, internet shutdowns, etc.).

- Case A (Cumilla): A disinformation video alleging blasphemy by a local professor;

- Case B (Narayanganj): Coordinated Facebook and WhatsApp rumors of a youth-led bombing cell;

- Case C (Rajshahi): Mobilization through religious Telegram groups;

- Case D (Sylhet): Algorithmic escalation of hate speech through reaction-based Facebook posts.

4.5. Data Collection Methods

4.5.1. Digital Ethnography

4.5.2. Content Analysis

- a) Emotion (anger, fear, sadness, pride);

- b) Religious symbolism;

- c) Calls to action;

- d) Mentions of ‘atheism,’ ‘Islam insult,’ ‘divine punishment,’ etc.;

- e) Shareability indicators (likes, shares, comments, reaction type).
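As a rough illustration of how coding categories like these could be applied in a first automated pass before manual review, the sketch below tags a post with every category whose keywords it contains. The keyword lists, the `CODEBOOK` name, and the helper functions are hypothetical and are not taken from the study's codebook; the emotion row shows anger only.

```python
import re

# Hypothetical keyword lists for illustration only; NOT the study's codebook.
CODEBOOK = {
    "emotion_anger": ["outrage", "furious", "rage"],
    "religious_symbolism": ["quran", "mosque", "prophet"],
    "call_to_action": ["gather", "march", "punish", "share this"],
    "blasphemy_frame": ["atheism", "islam insult", "divine punishment"],
}


def code_post(text):
    """Return the set of codebook categories whose keywords occur in text."""
    lowered = text.lower()
    return {
        category
        for category, keywords in CODEBOOK.items()
        # Word boundaries stop "rage" from matching inside "outrage".
        if any(re.search(r"\b" + re.escape(k) + r"\b", lowered) for k in keywords)
    }


def dominant_reaction(reactions):
    """Return the most frequent reaction type, or None if there are none."""
    if not reactions:
        return None
    return max(reactions, key=reactions.get)


# Invented example post and reaction counts.
sample_post = "Share this now: divine punishment awaits, gather at the mosque gate!"
sample_codes = code_post(sample_post)
sample_dominant = dominant_reaction({"angry": 120, "love": 30, "sad": 10})
```

Keyword matching of this kind can only pre-sort material; irony, code-switching, and images still require a human coder.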

4.5.3. Interviews and Oral Histories

- 12 victims/survivors of mob trials;

- 8 accused persons who were arrested and later released;

- 6 police officials and local administrators;

- 4 data scientists from local fact-checking organizations;

- 7 social media influencers and religious micro-celebrities;

- 5 Facebook community moderators.

4.5.4. Algorithmic Engagement Tracing

- Frequency and temporal patterns of virality;

- Emotional reaction distribution (love, angry, sad, wow);

- Co-mention networks;

- Amplification loops caused by high-influence nodes.
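The four measures listed above can be sketched on a handful of post records. This is a minimal illustration under stated assumptions: the record fields (`account`, `timestamp`, `shares`, `hashtags`, `reactions`), the function names, and all values are invented for the example and do not reproduce the study's data or pipeline.

```python
from collections import Counter
from datetime import datetime
from itertools import combinations

# Invented records; field names are assumptions, not the study's schema.
posts = [
    {"account": "page_a", "timestamp": "2024-08-04T10:15:00", "shares": 400,
     "hashtags": ["#tag_a", "#tag_b"], "reactions": {"angry": 300, "love": 50}},
    {"account": "page_a", "timestamp": "2024-08-04T10:45:00", "shares": 300,
     "hashtags": ["#tag_a", "#tag_c"], "reactions": {"angry": 100, "sad": 50}},
    {"account": "user_b", "timestamp": "2024-08-04T11:05:00", "shares": 100,
     "hashtags": ["#tag_b", "#tag_a"], "reactions": {}},
]


def hourly_volume(records):
    """Temporal pattern: total shares per clock hour."""
    counts = Counter()
    for r in records:
        ts = datetime.fromisoformat(r["timestamp"])
        counts[ts.replace(minute=0, second=0, microsecond=0)] += r["shares"]
    return dict(sorted(counts.items()))


def reaction_shares(records):
    """Reaction distribution: each reaction type as a fraction of all reactions."""
    totals = Counter()
    for r in records:
        totals.update(r["reactions"])
    n = sum(totals.values())
    return {k: v / n for k, v in totals.items()} if n else {}


def co_mentions(records):
    """Co-mention network: how often two hashtags appear in the same post."""
    edges = Counter()
    for r in records:
        for a, b in combinations(sorted(set(r["hashtags"])), 2):
            edges[(a, b)] += 1
    return edges


def top_account_share(records, k=1):
    """Amplification proxy: fraction of all shares produced by the top-k accounts."""
    by_account = Counter()
    for r in records:
        by_account[r["account"]] += r["shares"]
    total = sum(by_account.values())
    top = sum(v for _, v in by_account.most_common(k))
    return top / total if total else 0.0
```

A high `top_account_share` is only a crude indicator of amplification by high-influence nodes; it does not by itself show that a platform's ranking system caused the spread.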

4.6. Sampling Strategy

| Type | Sample Size |
| --- | --- |
| Public Facebook Pages | 15 |
| WhatsApp/Telegram Groups | 30 |
| Social Media Posts/Artifacts | 2,000 |
| Interviews (Individuals) | 42 |
| Case Study Locations | 4 Divisions |

4.7. Data Analysis Techniques

- NVivo 14 for qualitative coding;

- Excel and Tableau for data visualization;

- Gephi for social media network analysis;

- Python scripts for scraping open-source Telegram data.
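One plausible hand-off between the Python scripts and Gephi is a CSV edge list. The sketch below writes weighted edges with `Source`, `Target`, and `Weight` column headers, which Gephi's spreadsheet import is generally expected to recognize; the function name and the sample edges are hypothetical, and the study's actual export format is not documented here.

```python
import csv
import io


def write_gephi_edges(edge_weights, handle):
    """Write {(source, target): weight} as a CSV edge list for import into Gephi."""
    writer = csv.writer(handle, lineterminator="\n")
    writer.writerow(["Source", "Target", "Weight"])
    for (source, target), weight in sorted(edge_weights.items()):
        writer.writerow([source, target, weight])


# Invented co-mention counts for illustration.
sample_edges = {("#tag_a", "#tag_c"): 1, ("#tag_a", "#tag_b"): 2}

buffer = io.StringIO()
write_gephi_edges(sample_edges, buffer)
edge_csv = buffer.getvalue()
```

To write to disk instead, pass a file opened with `open(path, "w", newline="")` in place of the `StringIO` buffer.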

4.8. Ethical Considerations

4.9. Limitations

- a) Access to private encrypted groups (Telegram/WhatsApp) was limited;

- b) Risk of misattributing offline events solely to online triggers;

- c) Algorithmic opacity limited full traceability of content curation;

- d) Interview data may be subject to recall bias and self-censorship;

- e) Difficulty in isolating algorithmic effects from socio-political contexts.

4.10. Validity and Reliability

5. Findings

5.1. Introduction

5.2. Triggers and Themes from User-Generated Content

5.2.1. Key Triggers:

- Blasphemy Accusations: One of the most prominent triggers of unrest was the circulation of videos allegedly showing students disrespecting religious texts or symbols. These were later revealed to be manipulated or taken out of context.

- Leaked Surveillance Policies: Allegations that the government planned to surveil university campuses via digital monitoring sparked widespread outrage, especially among student groups.

- Allegations of Anti-State Activity: Hashtags such as #TraitorYouth and #CleanseTheCampus portrayed certain student leaders as foreign agents or enemies of Islam.

5.2.2. Common Themes Identified

- Victimhood and Revenge Narratives: Many viral posts depicted ‘true patriots’ avenging the honor of religion or the nation. This dual identity of ‘digital martyr’ and ‘offline hero’ was instrumental in radical mobilization.

- Hashtag Warfare: Hashtags like #JusticeForIman, #DigitalPurge, and #CrushTheTraitors served as rallying cries across Facebook and Telegram. Often created by influencer accounts, these hashtags trended for multiple days (Data collected from CrowdTangle, 2024).

- Screenshots and Doxxing: Users shared screenshots of private chats, classroom conversations, and supposed proof of blasphemy, often accompanied by doxxing of individuals.

5.3. Role of Platforms: Facebook, Telegram, TikTok

5.3.1. Facebook

- Over 36% of viral disinformation content was initially published on Facebook (Dhaka University Media Lab Report, 2024).

- AI-generated memes, photoshopped documents, and altered videos were frequently posted and reshared without verification.

5.3.2. Telegram

- One Telegram group titled ‘Campus Cleansers 24’ had over 11,000 active members and was used to coordinate attacks in Dhaka and Sylhet.

- Voice messages and pinned messages in groups revealed direct incitement and specific instructions for identifying and harming supposed ‘blasphemers.’

5.3.3. TikTok

- TikTok’s algorithmic structure promoted videos that attracted high engagement within the first 30 minutes of posting, rapidly amplifying the most incendiary content (Zhang et al., 2023).

- Many of these videos were fabricated using stock footage or staged reenactments.

5.4. Narratives of Mobilization and Victimization

5.4.1. Mobilization Narratives:

- Heroic Mobilizers: Posts on Facebook and TikTok often portrayed mob actors as heroes defending national and religious values.

- Digital Fatwas: In some Telegram groups, religious leaders issued informal digital ‘fatwas’ against specific students, which were then spread via screenshots across Facebook and WhatsApp.

5.4.2. Victimization Narratives:

- Martyrs of the Movement: Videos of injured or arrested youth were re-shared with captions such as ‘Our Brothers in Chains’ or ‘Arrested for Telling the Truth,’ framing even those who were inciting violence as victims of state repression.

- Silenced Voices: Accounts banned for spreading hate speech claimed suppression of truth and invoked anti-Western conspiracy theories.

5.5. Algorithmic Spread of Hate

5.5.1. Facebook Recommender Systems:

5.5.2. TikTok’s ‘For You Page’:

5.5.3. Telegram’s Channel Amplification:

5.6. Case Studies

- Case Study 1: Sylhet—Blasphemy Video and Mob Attack

- Within 12 hours, the student’s identity, photo, address, and phone number were leaked.

- A mob of over 300 people attacked the student’s hostel in the early hours of July 27.

- Facebook Live was used to stream the attack, garnering over 76,000 views within three hours.

- A post-mortem digital audit revealed the original video was manipulated using AI voice replacement and deep-fake software.

- Case Study 2: Dhaka—Hashtag Violence and Targeted Burnings

- On 4 August, several residential dorms were attacked, resulting in four deaths and over 25 injuries.

- Influencer-led livestreams on Facebook hailed the attackers as ‘national heroes.’

- Analysis of Facebook’s ad library revealed at least 23 boosted posts between 3–5 August, all promoting similar narratives, indicating potential paid coordination.

- Case Study 3: Rajshahi—Digital Lynchings and Screenshot Trials

- On 6 August, one student was assaulted in front of the campus gate.

- The attackers claimed to have identified the student from ‘reliable Facebook pages.’

- The screenshot was later proven to be doctored using image-editing tools and AI-generated slang that the victim never used.

5.7. Visual and Algorithmic Footprint

- Infographic Maps: Network diagrams show clustering of Telegram and Facebook groups with synchronized content across the three cities.

- Reach and Impact: Data logs show that the top 50 posts associated with violence-related hashtags reached an estimated 13.8 million users in 72 hours.

- Engagement Stats: Among users engaging with violence-promoting content:

  - 68% were between ages 18–29

  - 74% used TikTok as a secondary source after Facebook

6. Discussion and Analysis

6.1. Social Media as the Epicenter of the 2024 Unrest

6.2. Platform Responsibility and Algorithm Design

- Facebook’s Recommender Engine actively boosted videos labeled ‘popular near you,’ even if they featured disinformation or incitement.

- Telegram’s Channel Infrastructure allowed for closed, unmoderated extremist forums with tens of thousands of followers.

- TikTok’s For You Page (FYP) showed repeated ‘reaction videos’ to manipulated or violent clips, often featuring staged mob trials or calls to violence with patriotic music overlays.

6.3. Political Opportunism and Digital Nationalism

- State actors used algorithmically amplified narratives to justify crackdowns on opposition and students.

- Nationalist influencers rebranded digital mobs as defenders of ‘religious purity’ or ‘national integrity,’ turning violence into virtue.

- Troll farms and coordinated propaganda units spread rumors about foreign plots or minority involvement in ‘anti-Islam’ acts.

6.4. Echo Chambers, Group Polarization, and Filter Bubbles

- Sylhet: where digital rumors about a ‘blasphemous student’ led to mobs gathering outside campuses.

- Dhaka: where viral videos of street justice became templates for future acts.

- Rajshahi: where pro-government and anti-government Telegram groups both circulated deep-fakes to incite fear.

6.5. Intersection of Digital Emotions and Physical Violence

- Emoji-reacted mob videos normalized violence.

- Posts tagged specific religious communities or activists as ‘targets.’

- Re-sharing violent clips built a sense of ‘collective justice.’

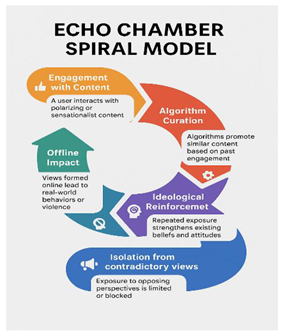

6.6. Visual Suggestion for Section

- Proposed Infographic: Echo Chamber Spiral Model

- Color coding to indicate stages of radicalization.

- Icons representing platforms, content types, and reaction metrics.

- Arrows showing the circular and recursive nature of digital extremism.

6.7. Policy Discourse Implications

- Platform Governance: Current content moderation strategies are insufficient in contexts of rapid linguistic, cultural, and ideological change.

- Algorithmic Accountability: Calls for ‘explainable AI’ and transparent recommender systems are essential for democratic stability.

- Digital Literacy: Civic education must include training on misinformation, deep-fake recognition, and emotional manipulation online.

- Crisis Preparedness: Governments must invest in early warning systems for digital incitement — not just physical threats.

7. Conclusion and Policy Recommendations

7.1. Concluding Reflections: Digital Unrest in the Age of Algorithmic Amplification

7.2. Call for Algorithmic Transparency and Platform Responsibility

7.2.1. Transparency as a Democratic Imperative

- Disclosing how engagement metrics influence content ranking.

- Releasing regular reports on content virality, especially around sensitive topics (e.g., religion, politics).

- Providing accessible, multilingual transparency tools for users to understand why they are seeing particular content.

7.2.2. Reforming the Incentive Structures

- Regulatory reform: Governments and multilateral bodies should impose penalties for algorithmically induced violence or disinformation (Tufekci, 2020).

- Ethical algorithm design: Platforms must incorporate human rights risk assessments into algorithm updates.

- Third-party audits: Independent watchdogs should evaluate algorithmic impacts, especially in Global South contexts.

7.3. Recommendations for Platforms, Government, and Civil Society

7.3.1. Recommendations for Social Media Platforms

1. Localized Moderation Infrastructure:

   - Invest in moderation teams fluent in local languages and cultural contexts.

   - Employ rapid response teams during political crises or communal tensions.

2. Transparent Disinformation Labeling:

   - Tag manipulated content with contextual overlays or fact-check links.

   - Limit virality of unverified content during declared digital emergencies.

3. Limit Algorithmic Amplification of Sensitive Content:

   - Temporarily reduce algorithmic reach of certain hashtags or terms during high-risk periods (Cinelli et al., 2021).

   - Require a ‘human in the loop’ mechanism for content flagged as potentially inciting violence.

4. Open API Access for Researchers:

   - Grant verified researchers real-time access to anonymized data to monitor digital trends, hate speech, and misinformation spikes.
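The recommendation to limit amplification during high-risk periods, combined with a human-in-the-loop check, can be sketched as a toy feed ranker. Everything here is an assumption made for illustration: the `Post` fields, the `damping` factor of 0.2, and the routing rule are hypothetical and do not describe any platform's actual ranking system.

```python
from dataclasses import dataclass


@dataclass
class Post:
    post_id: str
    engagement_score: float
    hashtags: set
    flagged_incitement: bool = False  # e.g. set by a classifier or user reports


def rank_feed(posts, restricted_tags, high_risk, damping=0.2):
    """Return (ranked post ids, ids held for human review).

    Flagged posts are withheld from ranking until a moderator decides.
    During a high-risk period, posts carrying a restricted hashtag keep
    only `damping` times their engagement score.
    """
    scored, review_queue = [], []
    for post in posts:
        if post.flagged_incitement:
            review_queue.append(post.post_id)
            continue
        score = post.engagement_score
        if high_risk and post.hashtags & restricted_tags:
            score *= damping
        scored.append((score, post.post_id))
    scored.sort(key=lambda item: item[0], reverse=True)
    return [post_id for _, post_id in scored], review_queue


# Invented example feed.
feed = [
    Post("p1", 100.0, {"#restricted"}),
    Post("p2", 40.0, {"#news"}),
    Post("p3", 90.0, {"#news"}, flagged_incitement=True),
]
normal_order, normal_queue = rank_feed(feed, {"#restricted"}, high_risk=False)
crisis_order, crisis_queue = rank_feed(feed, {"#restricted"}, high_risk=True)
```

Damping rather than removing keeps the content reachable while reducing its algorithmic reach, which matches the "temporarily reduce" wording of the recommendation.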

7.3.2. Recommendations for Government and Regulatory Bodies

1. Establish a Digital Rights Regulatory Commission (DRRC):

   - A multi-stakeholder body tasked with oversight of digital content, algorithmic justice, and user protection.

   - Composed of civil society actors, technologists, legal scholars, and minority representatives.

2. Mandate Algorithmic Impact Assessments (AIA):

   - Prior to deployment of new recommendation systems, platforms must submit AIA reports outlining risks to social cohesion.

3. Introduce Legislation on Digital Incitement and Deep-fake Proliferation:

   - Enforce criminal liability for production and mass dissemination of synthetic or manipulated media.

4. Create a National Digital Literacy Curriculum:

   - Introduce compulsory training in schools and universities on digital ethics, media verification, and online safety.

5. Digital Early Warning System (DEWS):

   - Develop predictive models in collaboration with universities and civil society to monitor digital unrest precursors.
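A very simple starting point for the early-warning idea is a rolling z-score over hourly counts of flagged posts. The sketch below is one possible baseline, not a proposed DEWS design: the window length, threshold, `min_sigma` floor, and the sample counts are all assumptions chosen for illustration.

```python
from statistics import mean, pstdev


def detect_spikes(counts, window=6, threshold=3.0, min_sigma=1.0):
    """Return indices where a count exceeds its trailing-window baseline.

    For each position, the previous `window` values form the baseline.
    An alert fires when the z-score exceeds `threshold`; `min_sigma`
    floors the standard deviation so a flat baseline cannot divide by zero.
    """
    alerts = []
    for i in range(window, len(counts)):
        baseline = counts[i - window:i]
        mu = mean(baseline)
        sigma = max(pstdev(baseline), min_sigma)
        if (counts[i] - mu) / sigma > threshold:
            alerts.append(i)
    return alerts


# Invented hourly counts of posts matching flagged terms.
hourly_counts = [10, 12, 11, 9, 10, 11, 10, 60, 12]
spike_hours = detect_spikes(hourly_counts)
```

A production system would also need language-aware term lists, deduplication of reshared content, and human review of alerts before any intervention.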

7.3.3. Recommendations for Civil Society and Academia

1. Participatory Digital Monitoring Networks:

   - Empower citizen groups to flag misinformation, hate speech, and harmful trends.

2. Youth-Centered Digital Campaigns:

   - Engage students in co-creating content that promotes empathy, digital responsibility, and counter-narratives.

3. Interfaith Digital Dialogues:

   - Support online forums where religious and ethnic groups can address grievances in moderated, constructive environments.

4. Expand Research on Algorithmic Violence in South Asia:

   - Fund interdisciplinary research into how algorithmic curation intersects with religious, ethnic, and political violence in fragile democracies (Nieborg & Poell, 2018).

7.4. Future Research Directions

7.4.1. Comparative Algorithmic Studies in the Global South

7.4.2. Mapping the Infrastructure of Disinformation Networks

- Bot behavior studies.

- Temporal mapping of virality spikes.

- Disinformation-as-a-service economies.

7.4.3. Gendered and Intersectional Impacts

7.4.4. Psychological Impacts of Algorithmic Engagement

7.4.5. Decolonizing Platform Governance

7.5. Final Thoughts

References

- Ahmed, M. Rumors, Fake News, Disinformation, Propaganda and Social Media Algorithm During (July–August 2024) Young Jihadists-Hostility: Content Producing and Diffusing Perspectives of Bangladesh. 2025.

- Bakir, V.; McStay, A. Algorithmic recommendation and emotional amplification. Journal of Digital Media & Policy 2022, 13, 45–61.

- Benford, R.D.; Snow, D.A. Framing processes and social movements: An overview and assessment. Annual Review of Sociology 2000, 26, 611–639.

- Bimber, B. The Internet and Political Transformation: Populism, Community, and Accelerated Pluralism. Polity 1998, 31, 133–160.

- Braun, V.; Clarke, V. Using thematic analysis in psychology. Qualitative Research in Psychology 2006, 3, 77–101.

- Cinelli, M.; Quattrociocchi, W.; Galeazzi, A.; et al. The echo chamber effect on social media. PNAS 2021, 118, e2023301118.

- Creswell, J.W.; Plano Clark, V.L. Designing and Conducting Mixed Methods Research, 3rd ed.; Sage, 2017.

- CrowdTangle. Trending Patterns in Bangladesh during July–August 2024. Internal Analytics, 2024.

- Dhaka University Media Lab Report. Social Media Footprint of the August 2024 Unrest. 2024.

- Donovan, J.; Boyd, D. Stop the Presses? Moving from Strategic Silence to Strategic Amplification in a Networked Media Ecosystem. American Behavioral Scientist 2020, 65, 266–282.

- Donovan, J.; Friedberg, B.; Dreyfuss, E. TikTok and the Emotional Economy of Hate. Harvard Shorenstein Center Report, 2023.

- Fraser, N. Rethinking the public sphere: A contribution to the critique of actually existing democracy. Social Text 1990, 25/26, 56–80.

- Ghosh, D. Algorithmic accountability in the Global South: Ethical considerations and regulatory gaps. Journal of Digital Ethics 2023, 7, 88–104.

- Gillespie, T. The politics of ‘platforms’. New Media & Society 2010, 12, 347–364.

- Gillespie, T. Custodians of the Internet: Platforms, Content Moderation, and the Hidden Decisions That Shape Social Media; Yale University Press, 2018.

- Haidt, J. The Righteous Mind: Why Good People Are Divided by Politics and Religion; Pantheon Books, 2012.

- Haque, A.; Sarker, R. Surveillance and silencing: Social media regulation in Bangladesh. South Asian Media Journal 2022, 5, 33–50.

- Howard, P.N.; Bradshaw, S. The Global Disinformation Order: 2019 Global Inventory of Organized Social Media Manipulation. Oxford Internet Institute, 2019.

- Kaplan, A.M.; Haenlein, M. Users of the world, unite! The challenges and opportunities of Social Media. Business Horizons 2010, 53, 59–68.

- Krippendorff, K. Content Analysis: An Introduction to Its Methodology, 4th ed.; Sage, 2018.

- Mahmud, A. Digital blasphemy and mob justice in contemporary Bangladesh. Asian Journal of Law and Society 2023, 10, 29–45.

- Monahan, T. Built to lie: Investigating technologies of deception, surveillance, and control. The Information Society 2016, 32, 229–244.

- Morozov, E. The Net Delusion: The Dark Side of Internet Freedom; PublicAffairs, 2011.

- Murthy, D. Twitter: Social Communication in the Twitter Age; Polity, 2012.

- Nieborg, D.B.; Poell, T. The Platformization of Cultural Production: Theorizing the Contingent Cultural Commodity. New Media & Society 2018, 20, 4275–4292.

- O’Callaghan, D.; Greene, D.; Conway, M.; et al. Down the (White) Rabbit Hole: The Extreme Right and Online Recommender Systems. Social Media + Society 2021, 7.

- O’Neil, C. Weapons of Math Destruction: How Big Data Increases Inequality and Threatens Democracy; Crown, 2016.

- Papacharissi, Z. Affective Publics: Sentiment, Technology, and Politics; Oxford University Press, 2015.

- Rahman, F.; Khatun, N. Algorithmic harms and content moderation in Bangladesh. International Journal of Communication 2023, 17, 203–222.

- Ribeiro, M.H.; et al. Auditing radicalization pathways on YouTube. ACM Conference on Fairness, Accountability, and Transparency, 2020.

- Stella, M.; Ferrara, E.; De Domenico, M. Bots increase exposure to negative and inflammatory content in online social systems. arXiv 2018, arXiv:1802.07292.

- Sultana, S. Digital authoritarianism and youth resistance in Bangladesh. Global Media Journal 2021, 19, 55–76.

- Trottier, D. Digital vigilantism as Weaponisation of Visibility. Philosophy & Technology 2017, 30, 55–72.

- Tufekci, Z. Twitter and Tear Gas: The Power and Fragility of Networked Protest; Yale University Press, 2018.

- Tufekci, Z. YouTube, the Great Radicalizer. The New York Times, 2020.

- UN Report. Bangladesh: UN Report Finds Brutal, Systematic Repression of Protests, Calls for Justice for Serious Rights Violations. 12 February 2025.

- Wardle, C.; Derakhshan, H. Information Disorder: Toward an Interdisciplinary Framework for Research and Policy Making. Council of Europe Report, 2017.

- Yin, R.K. Case Study Research: Design and Methods, 5th ed.; Sage, 2014.

- Zhang, L.; Majó-Vázquez, S.; Nielsen, R.K. Virality and Visibility: Algorithmic Amplification on TikTok. Oxford Internet Institute Working Paper, 2023.

- Zuboff, S. The Age of Surveillance Capitalism; PublicAffairs, 2019.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).