1. Introduction

The 21st century presents science with two fundamental challenges: the unification of general relativity and quantum mechanics in physics, and the exploration of the nature of intelligence and consciousness in artificial intelligence. These two ostensibly disparate fields possess profound intrinsic connections. Within the theoretical frameworks of physics, the observer occupies a pivotal position [1]: the measurement problem in quantum mechanics and the choice of reference frames in general relativity both underscore the observer's central role. Meanwhile, in intelligence science, Agent has become a focal point of research, with intelligence and consciousness understood as intrinsic attributes of Agent [2]. In recent years, interdisciplinary research has indicated that the "observer" in physics can be regarded as a specific instance of Agent [3]. This realization suggests that constructing an Agent model based on first principles can not only advance intelligence science but may also offer new perspectives for unifying fundamental theories in physics. However, despite significant progress in Agent research, a unified theoretical framework for Agent rooted in first principles is still lacking [4]. Existing definitions of Agent predominantly remain at the level of functional descriptions; they are fragmented and lack systematicity and internal consistency.

To address this challenge, this paper, adhering to first principles, aims to construct a unified theoretical model with universal explanatory power, Generalized Agent Theory (GAT), by starting from the most fundamental constituents and operational mechanisms of Agent. Central to this theory is the proposal of Standard Agent Model (SAM), which strives for 'maximal functional optimality and universal explanatory power' under the premise of 'structural simplicity'. Standard Agent Model posits that any Agent is composed of five irreducible fundamental functional modules: Information Input (In), Information Output (Out), Dynamic Storage (DS), Information Creation (Cr), and Control (Con). Inspired by classical computational models such as the von Neumann architecture, this model particularly emphasizes Information Creation as a key capability distinguishing Agent from traditional computational systems, and it integrates the 'computation' function more precisely into the unified concept of Dynamic Storage. The magnitudes of these five fundamental functions define Agent's five-dimensional capability vector, whose possible values form its capability space.
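As a minimal sketch, assuming each capability is a non-negative real magnitude (with `math.inf` standing in for the infinite limit), the five-dimensional capability vector could be represented as below. The class name `CapabilityVector`, its field names, and the use of the Euclidean norm as a scalar summary are illustrative assumptions, not definitions taken from the theory.

```python
import math
from dataclasses import astuple, dataclass


@dataclass(frozen=True)
class CapabilityVector:
    """Hypothetical encoding of the five Standard Agent Model capability magnitudes."""

    info_input: float       # Information Input (In)
    info_output: float      # Information Output (Out)
    dynamic_storage: float  # Dynamic Storage (DS), which also absorbs 'computation'
    creation: float         # Information Creation (Cr)
    control: float          # Control (Con)

    def __post_init__(self) -> None:
        # Magnitudes are assumed non-negative; math.inf is allowed for limit states.
        if any(value < 0 for value in astuple(self)):
            raise ValueError("capability magnitudes must be non-negative")

    def norm(self) -> float:
        """Euclidean length: one possible (assumed) scalar summary of overall capability."""
        return math.sqrt(sum(value * value for value in astuple(self)))


agent = CapabilityVector(1.0, 2.0, 2.0, 0.0, 4.0)
print(agent.norm())  # 5.0
```

Freezing the dataclass keeps a capability state immutable, so capability evolution would be modelled as producing new vectors rather than mutating old ones.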

Based on Standard Agent Model and its defined five-dimensional capability vector, this paper further systematically elaborates on the classification of Agent types and relationships. This includes defining three fundamental Agent types: Absolute Zero Agent or Alpha Agent (all five capabilities at zero), Omniscient and Omnipotent Agent or Omega Agent (all five capabilities at infinity), and Finite Agent; it further refines these into 243 theoretically existing Agent types. Concurrently, the paper explores 15 fundamental types of inter-Agent relationships along three dimensions: perception, communication, and interaction.
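The figure of 243 equals 3^5, which is consistent with each of the five capabilities taking one of three qualitative levels: zero, finite, or infinite. The sketch below assumes that reading. The level names and the `agent_type` helper are hypothetical, and lumping every mixed combination into "Finite Agent" is a simplification made only for counting.

```python
from collections import Counter
from itertools import product

LEVELS = ("zero", "finite", "infinite")  # assumed qualitative level of one capability
MODULES = ("In", "Out", "DS", "Cr", "Con")


def agent_type(levels: tuple[str, ...]) -> str:
    """Map a five-tuple of levels onto the three fundamental Agent types (simplified)."""
    if all(level == "zero" for level in levels):
        return "Alpha Agent"
    if all(level == "infinite" for level in levels):
        return "Omega Agent"
    return "Finite Agent"


# Every combination of levels across the five modules.
all_types = list(product(LEVELS, repeat=len(MODULES)))
counts = Counter(agent_type(t) for t in all_types)
print(len(all_types), dict(counts))
```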

Generalized Agent Theory not only describes the static structure of Agent but also introduces a refined evolutionary dynamics mechanism to understand the dynamic transformation of Agent capabilities. This mechanism is established upon Agent capability space, with Pole Intelligent Field Model at its core. The model elucidates how the evolution of Agent is influenced by the combined effects of Alpha Degradation Field ($\vec{\Phi}_{\alpha}$), which points towards capability decay, and Omega Enhancement Field ($\vec{\Phi}_{\Omega}$), which points towards capability enhancement; these fields in turn generate corresponding intelligent evolution forces. The resultant of these forces (i.e., Net Intelligent Evolution Force $\vec{F}_{\mathrm{net}}$) guides Agent's migration towards Alpha Pole (Alpha Agent) or Omega Pole (Omega Agent) within capability space. Critically, this dynamics mechanism also introduces a key intrinsic attribute of Agent, Wisdom (W), which measures how Agent responds to these evolutionary forces, thereby delineating the evolutionary trajectory of Agent more comprehensively.

The universality of Generalized Agent Theory enables it to analyze some long-standing scientific challenges in depth. In the study of intelligence and consciousness, the theory defines intelligence as the overall efficacy and adaptability exhibited by Agent when utilizing its five core functions to achieve evolutionary goals under the drive of the intelligent fields. Consciousness, in turn, is explicitly identified with the Control function (Con) itself and its operational process. It is further classified into types such as self-consciousness and other-consciousness based on the origin of control commands (e.g., internal creation or external input), providing criteria for analyzing the consciousness state of current artificial intelligence systems, such as large language models.

More groundbreakingly, Generalized Agent Theory attempts to conceptualize the "observer" as Agent, providing a completely new perspective for interpreting several core issues in physics. The theory argues that the Universe itself can be regarded as a dynamically evolving generalized Agent, currently in a finite state. On this basis, objective reality versus subjective non-reality, determinism versus indeterminism, and indeed the nature of time and space, are all interpreted as relative concepts closely related to the capability state and internal construction processes of Observer Agent, rather than absolute ontological attributes. Crucially, by adjusting the five-dimensional capability vector of Observer Agent (particularly information input, output, and internal processing capabilities), the theory proposes a new pathway for unifying classical mechanics, relativity, and quantum mechanics: the differences among these three theoretical frameworks are rooted in the differing capability configurations of their implicit observer models. For example, the omniscient passive observer of classical mechanics, the information-input-restricted observer of relativity, and the capability-limited, environment-interacting observer of quantum mechanics each construct different models of physical reality. Similarly, the origin of entropy and its observer-dependence are given a new interpretation: entropy increase is considered the inevitable process of increasing information deficit experienced by a limited-capability observer when faced with the fundamental units of the Universe exploring state space under the drive of Omega Enhancement Field. These systematic explorations reveal the immense potential of Generalized Agent Theory as a foundational theoretical tool for connecting different scientific domains and deepening our understanding of the nature of the Universe and intelligence.

Therefore, the core contributions of Generalized Agent Theory, as systematically constructed and elaborated in this paper, are twofold: Firstly, originating from first principles, it establishes a structurally self-consistent and universally explanatory unified theoretical framework. This framework is built upon Standard Agent Model as its core constructive unit, elucidates its evolutionary dynamics through Pole Intelligent Field Model, and institutes a systematic theory of Agent classification and relationships. Secondly, the application of this theory provides insightful solutions to fundamental scientific challenges: it offers novel interpretations and analytical criteria for exploring the nature of intelligence and consciousness; crucially, by conceptualizing the observer in physics as Agent, it opens new research pathways of profound potential for understanding the dynamic evolution of the Universe, the interplay between objective reality and subjective cognition, the origins of determinism and indeterminism, the deep structure of time and space, the nature of entropy, and indeed, for tackling the unification challenge of classical mechanics, relativity, and quantum mechanics. In summary, Generalized Agent Theory aims to provide a unified foundational dialogue platform and research paradigm for key fields such as artificial intelligence, physics, complexity science, and the philosophy of technology, laying a solid groundwork for future theoretical innovations and interdisciplinary integration.

The construction of Generalized Agent Theory has undergone more than a decade of iterative development. It began in 2014, with the initial research goal of seeking a unified standard for measuring generalized intelligent systems (encompassing biological, machine, and artificial intelligence systems), which led us to realize the urgent need for a more fundamental and universal theoretical framework. Drawing inspiration from the von Neumann architecture in computer science, Standard Agent Model was first constructed [5]. Subsequently, in 2017, an intelligence level test scale designed based on this model was used in empirical research [6]. We conducted unified tests on leading AI systems of the time and human subjects of different age groups, revealing stage-specific characteristics of AI intelligence levels and providing a baseline for tracking the significant leap in AI capabilities up to 2024.

The phase of theoretical deepening was marked by the year 2020 [7], when we established the two extreme boundary forms of Agent: Alpha Agent, with all capabilities at zero, and Omega Agent, with all capabilities at infinity. From this, we proposed Alpha Gravity and Omega Gravity as the respective driving forces for Agent's evolution towards these two poles. This preliminarily established the dynamic evolutionary mechanism of Generalized Agent Theory, laying a foundation for research into the nature of intelligence and consciousness. In June 2024, by integrating the 'observer' as a special case of Standard Agent Model, we extended Generalized Agent Theory to the analysis of fundamental problems in physics [3], including the nature of reality and non-reality, determinism and indeterminism, and time and space. We also proposed for the first time that the essential differences among classical mechanics, relativity, and quantum mechanics stem from the intelligence level settings of the implicit observers in each theory. Furthermore, in a study published by our team in March 2025, we elaborated on a view derived by logical deduction from the two extreme forms of Agent: that the Universe itself is a dynamically evolving Agent, and that its fundamental constituent units are also isomorphic Agents [8].

The aforementioned research has laid a forward-looking foundation for the systematic construction and application-oriented exploration of Generalized Agent Theory. This paper aims to systematically integrate and deepen these research achievements, striving to enhance the rigor, completeness, and scalability of the theory and to overcome the potential fragmentation encountered in earlier explorations.

2. A Unified Structure of Agent Derived from First Principles

The concept of Agent originated in computer science, initially aimed at constructing programs with a degree of autonomy capable of performing tasks on behalf of users. This early notion already encapsulated the fundamental information processing pattern of acquiring input from an environment, performing internal computations and judgments, and generating operational outputs [9].

In artificial intelligence, the concept of Agent has been formalized and extensively studied. Classical definitions, such as that proposed by Russell and Norvig [10], describe Agent as anything that perceives its environment through sensors and acts upon that environment through actuators. The core lies in the perception-action cycle, with internal mechanisms responsible for connecting these two, essentially involving the processing of input information and decision-making. The rise of Distributed Artificial Intelligence (DAI) and Multi-Agent Systems (MAS) further introduced a social dimension to Agent, emphasizing communication and collaboration based on information exchange (e.g., message passing) [11].

In the 21st century, cutting-edge explorations such as embodied intelligence, reinforcement learning, and large-model-based Agents have significantly expanded the capability boundaries and application complexity of Agent [12]. However, throughout the evolution of the Agent concept, its fundamental workflow has consistently followed an information processing paradigm. Despite vast differences in specific forms and functional manifestations (including perception, action, internal state maintenance, reasoning, planning, learning, interaction, and even creation), all can fundamentally be attributed to the processes of acquiring, processing, transforming, and utilizing information [13]. A systematic review of the Agent concept thus reveals its essence as an information processing system operating in a general environment, with its core lying in efficient and flexible information processing capabilities, as shown in Figure 1.

This research, adhering to first principles, starts from the most fundamental constituents and operational mechanisms of Agent, aiming to construct a unified theoretical model with robust universal explanatory power. This model strives for 'maximal functional optimality and universal explanatory power' under the premise of 'structural simplicity', while ensuring that its abstraction 'granularity' reaches a 'balanced point of being appropriate and irreducible'.

The preceding systematic review of the Agent concept clearly reveals that its core essence can be summarized as an information processing system operating in a general environment. Nevertheless, establishing a universal and robust theoretical definition for Agent, particularly one that forms a unified framework capable of guiding its architectural design, remains a fundamental challenge in artificial intelligence. Existing attempts at definition often face a dilemma: on one hand, defining Agent by enumerating overt behavioral characteristics tends to lead to definitions that fragment or even expand indefinitely with technological advancements, making it difficult to form a stable theoretical cornerstone; on the other hand, abstracting the essence of Agent to a singular 'information processing system', while capturing its core attribute and reflecting first principles, is often too generalized and lacks the structured insights necessary for guiding concrete design.

Starting from the fundamental postulate that Agent is an information processing system, we have analyzed the minimal necessary stages of information flow. The operation of Agent can be abstracted as a continuous cycle: acquiring environmental information through perception and transmitting it internally (input); processing this information with internal algorithms and models to generate new information or decisions (internal information processing); and finally, producing outputs through actuators that affect the environment or interact with other entities (output). This 'Input-Internal Processing-Output' trimodular model, as shown in Figure 2, is more specific than the definition of 'Agent as an information processing system', but it still lacks sufficient descriptive and explanatory power. In particular, the abstraction granularity of its 'internal information processing' module is too coarse: it fails to identify and differentiate the more refined information processing functions within Agent, functions that, at a conceptual level, should share equal functional status with the input and output modules.

Such structural limitations are anticipated to pose significant cognitive and modeling obstacles when addressing major theoretical challenges in the future, such as constructing Artificial General Intelligence (AGI), elucidating the origin of consciousness, discerning the deep connections between relativity and quantum mechanics, investigating the physical nature of entropy, and understanding emergent behaviors in complex systems.

To further deepen the understanding of Agent's information processing flow, we examined the von Neumann architecture that guides modern computer design. With its five core components—input, output, storage, computation, and control—this architecture has achieved remarkable success in general-purpose computing. However, when attempting to apply it directly to the high-level, universal modeling of Agent, its inherent limitations become apparent. Re-examining Agent's information processing logic from first principles, we identified shortcomings in the von Neumann architecture's direct mapping of Agent's core capabilities and, based on this, propose the following two structural adjustments and additions:

First, regarding the 'computation' function: in Agent's information processing flow, computation is essentially the process of operating on stored data elements and established rules to derive new information. Crucially, this newly generated information fundamentally remains subordinate to the generalized set of original information. Therefore, abstracting the computation function and integrating it into the unified concept of Dynamic Storage more accurately reflects computation's intrinsic dependence on storage and their synergy. This move also resonates with the currently prominent 'processing-in-memory' engineering paradigm.
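The claim that computed information stays within the generalized set of the original information can be illustrated with a toy rule store: forward chaining over stored facts and rules only ever reaches their deductive closure. The `DynamicStorage` class and its rule format below are hypothetical illustrations, not part of the Standard Agent Model's specification.

```python
from dataclasses import dataclass, field

Rule = tuple[frozenset[str], str]  # (premises, conclusion)


@dataclass
class DynamicStorage:
    """Hypothetical store in which 'computation' is just rule application over stored items."""

    facts: set[str] = field(default_factory=set)
    rules: list[Rule] = field(default_factory=list)

    def compute(self) -> set[str]:
        """Forward-chain until no rule adds anything; return the newly derived facts."""
        derived: set[str] = set()
        changed = True
        while changed:
            changed = False
            for premises, conclusion in self.rules:
                if premises <= self.facts and conclusion not in self.facts:
                    self.facts.add(conclusion)
                    derived.add(conclusion)
                    changed = True
        return derived


store = DynamicStorage(
    facts={"socrates_is_human"},
    rules=[
        (frozenset({"socrates_is_human"}), "socrates_is_mortal"),
        (frozenset({"socrates_is_mortal"}), "socrates_will_die"),
    ],
)
new_info = store.compute()
# Every derived item is a stored rule's conclusion: nothing outside the closure appears.
conclusions = {c for _, c in store.rules}
assert new_info <= conclusions
```

By contrast, the Creation function discussed next is meant to produce information that no such closure contains, which is why the model keeps it as a separate module.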

Second, recognizing the Creation function as a key capability distinguishing Agent from classical computational systems holds crucial theoretical significance. It is not limited to transforming existing information or logical deduction; its deeper connotation lies in the active generation of entirely new and unanticipated information and solutions. This capability embodies Agent's response to uncertainty, its realization of true autonomy, and its capacity for innovative breakthroughs, and thus warrants consideration as an independent, fundamental functional dimension. The evident inability of classical computational models like the von Neumann architecture to accommodate achievements of human knowledge creation, such as Newton's discovery of universal gravitation or Einstein's formulation of relativity, within their explanatory frameworks conversely attests to the unique and significant value of the Creation function.

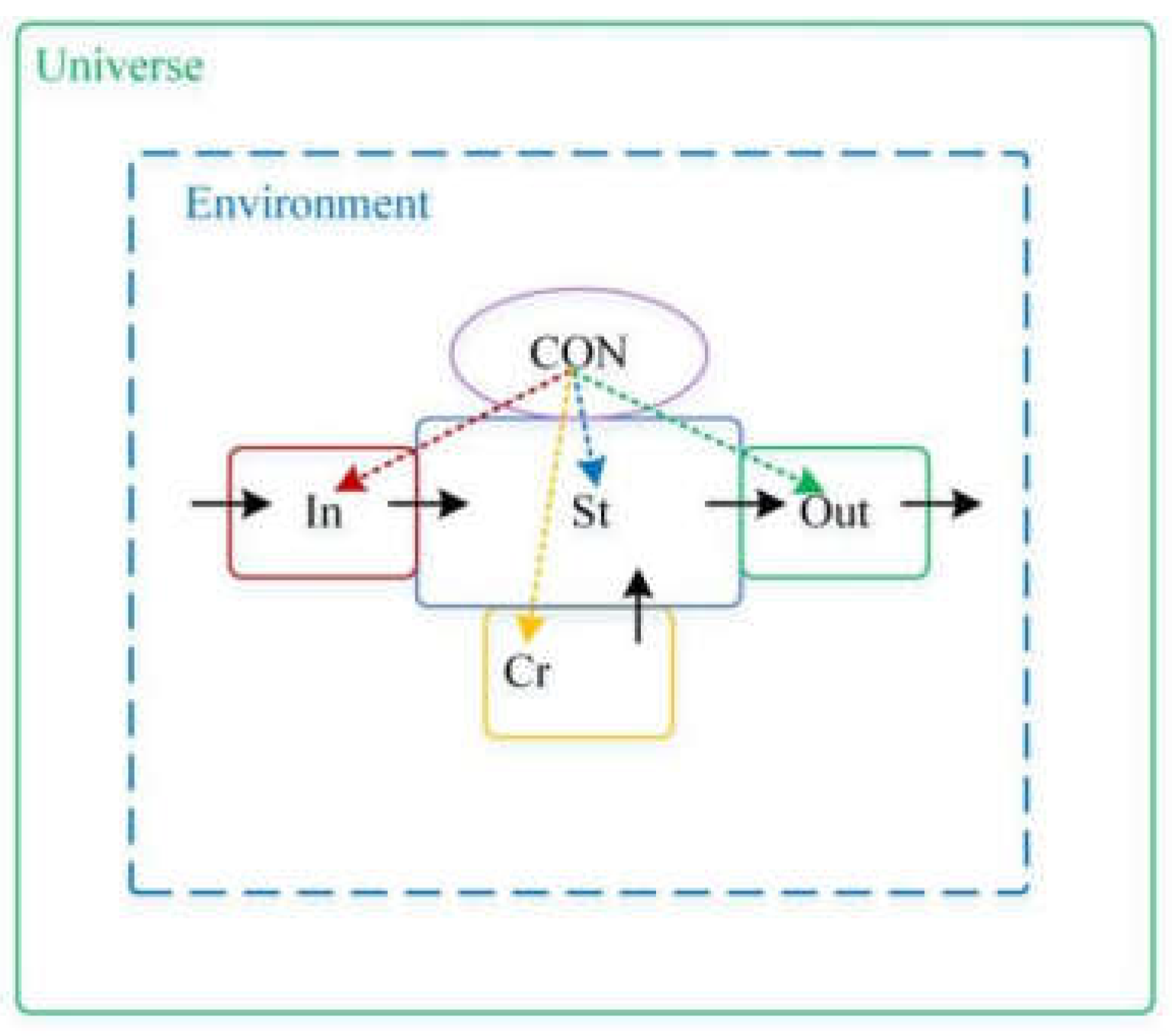

Based on the foregoing first-principles analysis of Agent as an information processing system, and by carefully absorbing the insights and inspiration from the von Neumann architecture, we have identified and refined a novel unified model that adheres to first-principles construction. This model comprises five fundamental functional modules: Information Input, Dynamic Storage, Information Creation, Information Output, and Control. Originating from the minimal necessary stages of information flow, these five functional modules constitute the axiomatic foundation of this unified model of Agent. This model aims to capture the universal and essential attributes of Agent, providing an irreducible theoretical starting point for cross-domain research. We name this unified model Standard Agent Model, as shown in Figure 3.
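The modular decomposition can be pictured as a skeleton in which Control decides which of the other four modules run and in what order. In this hypothetical sketch, `StandardAgent` and its method names are invented, and Information Creation is a trivial placeholder, since genuinely creating unanticipated information is exactly what a few lines of code cannot capture.

```python
class StandardAgent:
    """Hypothetical skeleton mapping each Standard Agent Model module to one method."""

    def __init__(self) -> None:
        self.storage: list[str] = []  # Dynamic Storage (DS): holds and processes information

    def information_input(self, observation: str) -> None:  # In
        self.storage.append(observation)

    def information_creation(self) -> str:  # Cr (placeholder only)
        return f"new_idea_{len(self.storage)}"

    def information_output(self, message: str) -> str:  # Out
        return message

    def control(self, observation: str) -> str:  # Con: orchestrates the other modules
        seen_before = observation in self.storage
        self.information_input(observation)
        if seen_before:
            reply = f"recall:{observation}"  # answer from Dynamic Storage
        else:
            reply = self.information_creation()  # novel input triggers Creation
            self.storage.append(reply)  # created information is stored as well
        return self.information_output(reply)


agent = StandardAgent()
print(agent.control("red"))  # new_idea_1
print(agent.control("red"))  # recall:red
```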

5. The Evolutionary Dynamics of Agent: A Mechanism Based on Standard Agent Model

Standard Agent Model provides us with a static framework for describing the core capabilities of Agent, upon which classifications of types and relationships have been made. However, a key characteristic of Agent is its dynamism—its capabilities are not immutable but continuously evolve through interaction with the environment and internal operations. Understanding the intrinsic laws and driving forces behind these capability state transformations is a crucial component of Generalized Agent Theory. Within the framework of Standard Agent Model, we will introduce a field-based perspective to explore the dynamics mechanism of Agent capability state transitions, striving for independence from any a priori temporal background and focusing on the structure of capability space itself and its intrinsic driving forces.

5.1. Agent Capability Space

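The capability state of Agent A is defined by its capability vector $\mathbf{C}_A$:

$$\mathbf{C}_A = (C_{\mathrm{In}}, C_{\mathrm{Out}}, C_{\mathrm{DS}}, C_{\mathrm{Cr}}, C_{\mathrm{Con}}), \qquad C_i \ge 0$$

All such capability vectors constitute the five-dimensional Agent Capability Space ($\mathcal{S}$).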

Within this space, two Poles exist as theoretical references, corresponding precisely to the two boundary Agent types we defined in previous sections based on Standard Agent Model:

Definition 5.1

Alpha Pole ($P_{\alpha}$):

This pole corresponds to Alpha Agent, whose capability vector is the zero vector $\mathbf{C}_{\alpha} = (0, 0, 0, 0, 0)$, representing the absolute zero or baseline state of capabilities.

Definition 5.2

Omega Pole ($P_{\Omega}$):

This pole corresponds to Omega Agent, whose capability vector is the limit state in which all components are infinite, $\mathbf{C}_{\Omega} = (\infty, \infty, \infty, \infty, \infty)$, representing the idealized state of infinite capabilities.

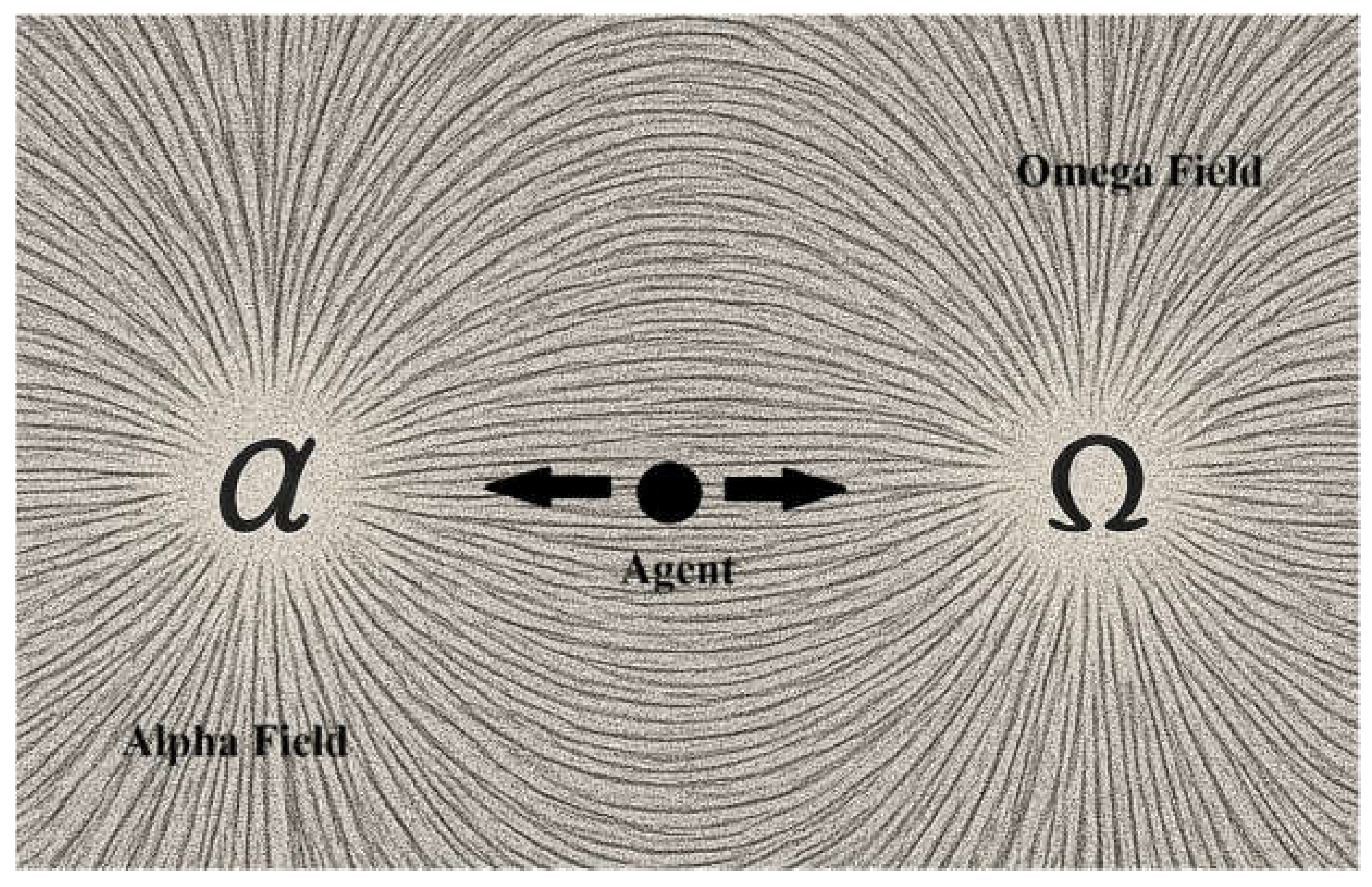

Any Finite Agent ($A_F$) occupies a state point within Agent Capability Space $\mathcal{S}$ between $P_{\alpha}$ and $P_{\Omega}$. The "evolution" of Agent is the migration of its state point within $\mathcal{S}$.

5.2. Pole Intelligent Field Model

To understand the driving forces behind Agent's migration in capability space, we propose Pole Intelligent Field Model. This model posits that Agent Capability Space $\mathcal{S}$ is permeated by two fundamental, opposing "fields of force" that collectively determine the tendency of Agent capability transformation, as shown in Figure 4.

Definition 5.3

Alpha Degradation Field (Alpha Field, $\vec{\Phi}_{\alpha}$):

This is a vector field defined throughout Agent Capability Space $\mathcal{S}$, referred to as Alpha Field and denoted as $\vec{\Phi}_{\alpha}(\mathbf{C}, E)$. It depends on the current capability state $\mathbf{C}$ of Agent and its environment $E$. It represents the aggregate effect of all factors causing capability decay, simplification, or regression towards the baseline state. These factors may stem from resource consumption, information forgetting, structural degradation, environmental noise interference, and the intrinsic costs of maintaining capabilities. The direction of $\vec{\Phi}_{\alpha}$ generally points towards diminishing capabilities, i.e., it tends to "pull" the state point towards Alpha Pole ($P_{\alpha}$).

Definition 5.4

Omega Enhancement Field (Omega Field, $\vec{\Phi}_{\Omega}$):

This is likewise a vector field defined on $\mathcal{S}$, referred to as Omega Field and denoted as $\vec{\Phi}_{\Omega}(\mathbf{C}, E)$, also dependent on state $\mathbf{C}$ and environment $E$. It represents the aggregate effect of all factors promoting capability enhancement, complexification, or development away from the baseline state. These factors are associated with processes such as learning, adaptation, creation ($C_{\mathrm{Cr}}$), effective resource acquisition and utilization, self-organization, and synergistic effects. The direction of $\vec{\Phi}_{\Omega}$ generally points towards enhancing capabilities, i.e., it tends to "push" the state point away from Alpha Pole and towards Omega Pole ($P_{\Omega}$) within capability space.

To describe the evolutionary impact of these fields on a specific Agent more precisely, we introduce the concept of "force", which reifies the effect of a field on an individual Agent.

Definition 5.5

Alpha Force ($\vec{F}_{\alpha}$)

Alpha Force is the specific evolutionary driving force exerted by Alpha Degradation Field on Agent A, which is in a particular capability state $\mathbf{C}_A$ and environment $E$, causing its capabilities to tend towards decay, simplification, or regression. For Agent A, the Alpha Force $\vec{F}_{\alpha}(A)$ it experiences is equal to the field vector of Alpha Degradation Field at its capability state point:

$$\vec{F}_{\alpha}(A) = \vec{\Phi}_{\alpha}(\mathbf{C}_A, E)$$

Definition 5.6

Omega Force ($\vec{F}_{\Omega}$)

Omega Force is the specific evolutionary driving force exerted by Omega Enhancement Field on Agent A, which is in a particular capability state $\mathbf{C}_A$ and environment $E$, prompting its capabilities to tend towards enhancement, complexification, or development. For Agent A, the Omega Force $\vec{F}_{\Omega}(A)$ it experiences is equal to the field vector of Omega Enhancement Field at its capability state point:

$$\vec{F}_{\Omega}(A) = \vec{\Phi}_{\Omega}(\mathbf{C}_A, E)$$
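To make Definitions 5.3 to 5.6 concrete, the sketch below picks hypothetical closed forms for the two fields; the theory itself does not specify them. The Alpha field is encoded as a non-negative decay intensity per capability (maintenance cost plus environmental noise), so that subtracting it, as the net-force definition does, is what moves the state towards Alpha Pole. The Omega field is an assumed saturating growth term scaled by available resources. The environment keys `decay_rate`, `noise`, and `resources` are invented for illustration. Each force is simply the field evaluated at Agent's own state point.

```python
from typing import Mapping, Sequence

Vector = tuple[float, ...]


def alpha_field(c: Sequence[float], env: Mapping[str, float]) -> Vector:
    """Hypothetical Alpha Degradation Field, encoded as per-capability decay intensity.

    Higher capability costs more to maintain, and a noisier environment speeds decay.
    """
    rate = env["decay_rate"] + env["noise"]
    return tuple(rate * ci for ci in c)


def omega_field(c: Sequence[float], env: Mapping[str, float]) -> Vector:
    """Hypothetical Omega Enhancement Field: resource-driven growth with diminishing returns."""
    return tuple(env["resources"] * ci / (1.0 + ci) for ci in c)


def alpha_force(c_a: Sequence[float], env: Mapping[str, float]) -> Vector:
    """Alpha Force on Agent A: the Alpha field evaluated at A's own state point."""
    return alpha_field(c_a, env)


def omega_force(c_a: Sequence[float], env: Mapping[str, float]) -> Vector:
    """Omega Force on Agent A: the Omega field evaluated at A's own state point."""
    return omega_field(c_a, env)


state = (1.0, 2.0, 0.5, 0.0, 1.0)  # (In, Out, DS, Cr, Con): arbitrary example values
env = {"decay_rate": 0.1, "noise": 0.05, "resources": 0.6}
print(alpha_force(state, env))
print(omega_force(state, env))
```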

5.3. Net Intelligent Evolution Force and Net Intelligent Evolution Field

Within Agent capability space $\mathcal{S}$, the pervasive Alpha Degradation Field ($\vec{\Phi}_{\alpha}$) and Omega Enhancement Field ($\vec{\Phi}_{\Omega}$) interact, collectively shaping the dynamic landscape of Agent capability evolution. At any given capability state point, the ultimate evolutionary drive experienced by Agent is embodied as Net Intelligent Evolution Force, and the spatial distribution of these forces constitutes Net Intelligent Evolution Field.

Definition 5.7

Net Intelligent Evolution Force ($\vec{F}_{\mathrm{net}}$)

Net Intelligent Evolution Force is the vector difference between Omega Force and Alpha Force acting upon Agent A; it determines the instantaneous direction and magnitude of A's evolution in capability space. The mathematical description of Net Intelligent Evolution Force is:

$$\vec{F}_{\mathrm{net}}(A) = \vec{F}_{\Omega}(A) - \vec{F}_{\alpha}(A)$$

Definition 5.8

Net Intelligent Evolution Field ($\vec{\Phi}_{\mathrm{net}}$)

Net Intelligent Evolution Field is the vector distribution of the net evolutionary tendency at every point in capability space; its field vector at a point is equal to the vector difference between Omega Enhancement Field and Alpha Degradation Field. Net Intelligent Evolution Force is the manifestation of Net Intelligent Evolution Field on a specific Agent. The mathematical description of Net Intelligent Evolution Field is:

$$\vec{\Phi}_{\mathrm{net}}(\mathbf{C}, E) = \vec{\Phi}_{\Omega}(\mathbf{C}, E) - \vec{\Phi}_{\alpha}(\mathbf{C}, E)$$

Thus, the relationship between Net Intelligent Evolution Force and Net Intelligent Evolution Field is:

$$\vec{F}_{\mathrm{net}}(A) = \vec{\Phi}_{\mathrm{net}}(\mathbf{C}_A, E)$$

Net Intelligent Evolution Field assigns a vector to every point in capability space, collectively depicting the local dynamic landscape of capability state transitions:

The direction of the field vector $\vec{\Phi}_{\mathrm{net}}(\mathbf{C}, E)$ indicates the instantaneous direction in which Agent capability is most inclined to evolve at state $\mathbf{C}$.

The magnitude (modulus) of the field vector, $\lVert \vec{\Phi}_{\mathrm{net}}(\mathbf{C}, E) \rVert$, reflects the relative instability of that state, or the intensity of the capability transformation tendency: the greater the modulus, the stronger the evolutionary driving force.
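A sketch of Definition 5.8 and the two readings of the field vector just given, assuming field values are plain tuples over the five capabilities (In, Out, DS, Cr, Con). The example inputs are arbitrary; positive components mark capabilities that currently tend to grow, and a zero result marks an equilibrium state.

```python
import math
from typing import Sequence

Vector = tuple[float, ...]


def net_field(phi_omega: Sequence[float], phi_alpha: Sequence[float]) -> Vector:
    """Net Intelligent Evolution Field value: Omega field minus Alpha field, per component."""
    if len(phi_omega) != len(phi_alpha):
        raise ValueError("field vectors must have the same dimension")
    return tuple(o - a for o, a in zip(phi_omega, phi_alpha))


def magnitude(v: Sequence[float]) -> float:
    """Modulus of the field vector: the intensity of the transformation tendency."""
    return math.sqrt(sum(x * x for x in v))


def direction(v: Sequence[float]) -> Vector:
    """Unit vector of the preferred evolution direction; the zero vector at equilibrium."""
    m = magnitude(v)
    return tuple(x / m for x in v) if m > 0 else tuple(0.0 for _ in v)


phi_net = net_field((0.5, 0.6, 0.2, 0.0, 0.3), (0.2, 0.2, 0.2, 0.0, 0.3))
print(phi_net, magnitude(phi_net), direction(phi_net))
```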

Core Principle of Dynamics: The state migration of Agent in capability space (i.e., the evolution of its capabilities) is directly driven by the Net Intelligent Evolution Force ($\vec{F}_{\mathrm{net}}$) that the Net Intelligent Evolution Field (the vector difference of Omega Enhancement Field and Alpha Degradation Field) exerts on it. Agent's state point tends to shift along the field lines, i.e., the integral curves, of this field.

Based on the structure of Net Intelligent Evolution Field, we can analyze the fundamental evolutionary trends of Agent in capability space:

1. Regions Tending Towards Omega Pole ($P_{\Omega}$): In these regions of capability space, the influence of Omega Enhancement Field $\vec{\Phi}_{\Omega}$ dominates that of Alpha Degradation Field $\vec{\Phi}_{\alpha}$, so the Net Intelligent Evolution Field vector generally points towards comprehensive capability enhancement, driving Agent to evolve towards $P_{\Omega}$.

2. Regions Tending Towards Alpha Pole ($P_{\alpha}$): In these regions, the influence of $\vec{\Phi}_{\alpha}$ is predominant, so $\vec{\Phi}_{\mathrm{net}}$ generally points towards comprehensive capability decay, driving Agent to regress or degenerate towards $P_{\alpha}$.

3. Equilibrium/Steady States ($\vec{\Phi}_{\mathrm{net}} = \mathbf{0}$): There may exist certain state points or regions in capability space where the Net Intelligent Evolution Field is the zero vector, i.e., $\vec{\Phi}_{\mathrm{net}}(\mathbf{C}^{*}, E) = \mathbf{0}$. At such states, the driving forces for enhancement and decay reach a dynamic equilibrium, and the system has no net evolutionary tendency. These equilibrium points can be further distinguished by the structure of the nearby field:
   - Stable Equilibrium Points (Attractors): Nearby field lines converge towards these points; Agent tends to return to such a state after minor perturbations.
   - Unstable Equilibrium Points (Repellers or Saddle Points): Nearby field lines diverge from these points (for saddle points, along at least one direction); once Agent deviates, it continues to move away from such a state.

The existence and stability of equilibrium states determine whether Agent's capability structure tends to solidify at a specific level or continues to evolve.
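For a single capability, the attractor/repeller distinction reduces to the sign of the field's slope at an equilibrium. The sketch below assumes a hypothetical one-dimensional net field (saturating growth minus linear decay, with invented parameters). With these values, Alpha Pole ($c = 0$) is a repeller and a finite capability level is an attractor, matching the "stable skill level" reading of equilibrium. In five dimensions the analogous test uses the eigenvalues of the Jacobian of the net field: all real parts negative indicates an attractor, while mixed signs indicate a saddle point.

```python
from typing import Callable


def net_field_1d(c: float, growth: float = 0.6, decay: float = 0.15) -> float:
    """Hypothetical net field for one capability: growth*c/(1+c) minus decay*c."""
    return growth * c / (1.0 + c) - decay * c


def equilibria(growth: float = 0.6, decay: float = 0.15) -> list[float]:
    """Zeros of net_field_1d: c = 0 always, plus c* = growth/decay - 1 when positive."""
    points = [0.0]
    c_star = growth / decay - 1.0
    if c_star > 0:
        points.append(c_star)
    return points


def classify(f: Callable[[float], float], c_star: float, h: float = 1e-6) -> str:
    """1-D stability test: the sign of f'(c*) decides attractor versus repeller."""
    slope = (f(c_star + h) - f(c_star - h)) / (2.0 * h)  # central difference
    if slope < 0:
        return "attractor"
    if slope > 0:
        return "repeller"
    return "degenerate"


for point in equilibria():
    print(round(point, 6), classify(net_field_1d, point))
```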

It must be particularly emphasized that the Net Intelligent Evolution Field $\vec{\Phi}_{\mathrm{net}}$ itself describes the local, time-parameter-independent tendency of transformation at each state point in capability space. Although the field's structure implies possible evolutionary paths, the model does not a priori set a unified external time parameter $t$. A specific time parameter or evolutionary order parameter can be introduced on top of this base field, for example, by solving the field's integral curves (formalized as $\frac{d\mathbf{C}}{d\lambda} = \vec{\Phi}_{\mathrm{net}}(\mathbf{C}, E)$, where $\lambda$ is an order parameter) to obtain specific evolutionary trajectories, or by modeling evolution as a Markov process whose state transition probabilities are determined by $\vec{\Phi}_{\mathrm{net}}$. The core of this section is to define the Net Intelligent Evolution Field itself as the basis for capability evolution, independent of specific path parameters, which helps preserve the theory's potential for background independence.
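The first option, following an integral curve of the net field, can be sketched with explicit Euler steps. Everything numerical here is an assumption: a two-capability field of the same hypothetical "saturating growth minus linear decay" form, invented coefficients `GROWTH` and `DECAY`, and clamping at zero to stay inside capability space. The step variable is only an order parameter along the curve, not a clock time.

```python
from typing import Sequence

Vector = tuple[float, ...]

GROWTH = (0.6, 0.4)  # hypothetical per-capability growth coefficients
DECAY = (0.15, 0.2)  # hypothetical per-capability decay coefficients


def net_field(c: Sequence[float]) -> Vector:
    """Hypothetical two-capability net field: saturating growth minus linear decay."""
    return tuple(g * ci / (1.0 + ci) - d * ci for ci, g, d in zip(c, GROWTH, DECAY))


def integral_curve(c0: Sequence[float], dlam: float = 0.1, n_steps: int = 2000) -> list[Vector]:
    """Explicit Euler integration of dC/dλ = Φ_net(C).

    λ is an order parameter along the curve, not an external time.
    Capabilities are clamped at zero so the path stays inside capability space.
    """
    c: Vector = tuple(c0)
    path = [c]
    for _ in range(n_steps):
        v = net_field(c)
        c = tuple(max(0.0, ci + dlam * vi) for ci, vi in zip(c, v))
        path.append(c)
    return path


path = integral_curve((0.5, 0.5))
print(path[-1])  # approaches the attractor (3.0, 1.0) for these parameters
```

A Markov-process variant would replace the deterministic update with random transitions whose probabilities are derived from the same field.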

The dynamic mechanism of this model finds broad corroboration in the real world. For instance, in biological evolution, species develop more complex adaptive capabilities and tend towards higher capability states through genetic variation (associated with Information Creation) and natural selection (driven by environmental feedback, Omega Field) [20]; simultaneously, environmental pressures, genetic drift, and senescence (Alpha Field) can lead to simplification or extinction, tending towards $P_{\alpha}$ [21].

Individual learning processes also reflect these dynamics: skills are acquired through practice and information input (driven by Omega Field), enhancing the corresponding capability components [22], while forgetting and skill decay (reflecting Alpha Field) are ubiquitous, potentially leading to a stable skill level (equilibrium state, $\vec{\Phi}_{\mathrm{net}} = \mathbf{0}$) [23]. In technological development, artificial intelligence models (such as large language models) experience rapid capability enhancement (tending towards $P_{\Omega}$) driven by data and computational power (Omega Field) [24], yet they remain limited by architecture, costs, and ethics (constituting Alpha Field), so their development is not unbounded [25]. These examples demonstrate that the interplay of Alpha Field and Omega Field provides a unified perspective for understanding the evolutionary trends of complex systems across different domains.

5.4. Wisdom (W): The Intrinsic Metric of Agent Evolution and Its Dynamics Equation

In the aforementioned dynamics mechanism, the response of Agent to Net Intelligent Evolution Force is not entirely uniform. To explain the different evolutionary rates exhibited by different Agents towards one of the two intelligent poles under the same intelligent evolution force, we introduce an entirely new fundamental dimension termed Wisdom (W), with W as its dimensional symbol.

Definition 5.9 Wisdom (W)

Wisdom (W) is a key intrinsic metric of Agent, a scale measuring the magnitude of its comprehensive information processing capability, used to quantify the total amount of intelligence possessed by Agent. It further reflects its response characteristics when changing its capability state under the influence of intelligent field or intelligent force in capability space, which in some cases manifests as "evolutionary inertia" (e.g., resisting decay), and in others as accelerated utilization of favorable conditions.

The constitution of Wisdom (W) is conjointly determined by the current synergistic structure of the five core functions defined by Standard Agent Model (Input, Output, Dynamic Storage, Creation, Control) and the total amount of information within its dynamic storage. In this paper, we preliminarily define Wisdom (W) as a quantitative measure of the comprehensive capability of Agent and emphatically reveal its critical role in evolutionary dynamics. In future research, we plan to further explore the multidimensional constitution of Wisdom (W); for instance, by systematically distinguishing between "fundamental information processing capabilities," represented by functions such as Input, Output, Dynamic Storage, and Creation, and "advanced regulatory wisdom," centered around the Control function, and by conducting more refined quantification and modeling of both aspects and their interactions.

Unlike mass in classical physics, which is usually a constant scalar, Wisdom (W) is a dynamic variable. It not only reflects the current "accumulation" of Agent but also influences its future "developmental potential," exhibiting the following key characteristics:

Matthew Effect: If Omega Pole is considered the positive direction of evolution, then when Net Intelligent Evolution Force is positive (its direction points towards Omega Pole), Agent with greater Wisdom (W) can more effectively utilize this force due to its internal structure and information reserves, manifesting as greater evolutionary gain towards Omega Pole and thus faster positive acceleration.

Resilience Effect: When Net Intelligent Evolution Force is negative (force direction points towards Alpha Pole), Agent with greater Wisdom (W) can better resist negative impacts due to its structural stability and information redundancy, manifesting as greater evolutionary hindrance towards Alpha Pole, thereby slowing the rate of decay. Thus, Wisdom (W) is not a simple inertial mass but a state-dependent, direction-sensitive response-regulating factor.

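The Wisdom Dynamics Equation takes a different form depending on which effect is dominant:

1. When Net Intelligent Evolution Force is positive (Matthew Effect is dominant), the proportionality constant linking the force, Wisdom (W), and the resulting evolutionary acceleration is positive.

2. When Net Intelligent Evolution Force is negative (Resilience Effect is dominant), the corresponding proportionality constant is negative.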

This set of equations quantitatively reveals how the external evolutionary pressure (force) acting on Agent, through its dynamic intrinsic attribute (Wisdom), ultimately determines the specific trajectory (acceleration) of its capability evolution. It is important to note that since evolutionary acceleration will lead to changes in the capability state, and Wisdom itself is determined by the capability state (functional structure and total information), the change in Wisdom is a natural component of the evolutionary process. This implies that Wisdom and capability state will influence each other and co-evolve, reflecting the non-linear and adaptive characteristics of Agent evolution. In specific analysis, this is typically handled through iterative methods of discrete time steps or by quasi-static approximation within a specific timescale.
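The discrete-time-step treatment mentioned above can be illustrated with a short iteration in which Wisdom and capability state update each other. This is a minimal sketch under explicit assumptions, not the paper's equation: Wisdom is approximated by a weighted sum of the five capabilities (anticipating the weighted sum method of Section 6.1), the weights are hypothetical, and the response rule (acceleration equal to force times W when the force points towards Omega Pole, and force divided by W when it points towards Alpha Pole) is a hypothetical choice that only reproduces the qualitative Matthew and Resilience Effects. Within each step W is held fixed, as in a quasi-static approximation, and then recomputed from the updated capabilities.

```python
"""Hypothetical sketch of Wisdom/capability co-evolution (not the paper's equation)."""

# Hypothetical weights over the five Standard Agent Model functions; they sum to 1.
WEIGHTS = {"In": 0.15, "Out": 0.15, "DS": 0.20, "Cr": 0.20, "Con": 0.30}


def wisdom(cap: dict[str, float]) -> float:
    """Approximate Wisdom W as a weighted sum of the capability components."""
    return sum(WEIGHTS[k] * cap[k] for k in WEIGHTS)


def response_gain(force: float, w: float, eps: float = 1e-9) -> float:
    """Hypothetical response rule.

    force > 0 (towards Omega Pole): gain grows with W (Matthew Effect).
    force < 0 (towards Alpha Pole): gain shrinks with W (Resilience Effect).
    """
    w = max(w, eps)  # avoid division by zero for an Agent at Alpha Pole
    return w if force > 0 else 1.0 / w


def simulate(cap: dict[str, float], force: float,
             steps: int = 10, dt: float = 0.1) -> dict[str, float]:
    """Euler iteration: W is frozen within a step, then recomputed from the new state."""
    cap = dict(cap)
    velocity = 0.0
    for _ in range(steps):
        w = wisdom(cap)                      # quasi-static Wisdom for this step
        accel = force * response_gain(force, w)
        velocity += accel * dt
        for k in cap:
            # Capabilities are clamped at zero, i.e. they cannot pass Alpha Pole.
            cap[k] = max(0.0, cap[k] + velocity * dt)
    return cap


low = {k: 1.0 for k in WEIGHTS}    # low-Wisdom Agent
high = {k: 10.0 for k in WEIGHTS}  # high-Wisdom Agent
gain_low = wisdom(simulate(low, +1.0)) - wisdom(low)
gain_high = wisdom(simulate(high, +1.0)) - wisdom(high)
loss_low = wisdom(low) - wisdom(simulate(low, -1.0))
loss_high = wisdom(high) - wisdom(simulate(high, -1.0))
print(gain_high > gain_low, loss_high < loss_low)  # True True
```

Only the qualitative ordering is meaningful here: under the same positive force the higher-Wisdom Agent gains more, and under the same negative force it decays less. Any other response rule with these two monotonic properties would serve equally well as an illustration.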

This framework lays the foundation for a deeper understanding and quantification of the evolutionary differences among various Agents. Wisdom is a core, fundamental parameter or metric defined within Generalized Agent Theory. Given its uniqueness and foundational role in intelligent phenomena, it has the potential to become a new dimension in a broader sense. However, whether it will ultimately be accepted by the scientific community as a new fundamental dimension (metric) alongside existing physical dimensions depends on more extensive theoretical verification, empirical research, and compatibility with other disciplines, all of which require continued in-depth study and observation.

5.5. System Construction of Generalized Agent Theory

In summary, the core theoretical framework of Generalized Agent Theory is constituted by three components: Standard Agent Model constructed from first principles; the Agent classification system and relationship types derived from this model; and the Agent evolutionary dynamics mechanism expounded in this chapter, which is based on Agent capability space, describes evolutionary drive via Polar Intelligent Field Model, and introduces Wisdom (W) as a key intrinsic attribute of Agent and the core object of field interaction. This framework provides a unified, foundational conceptual and mathematical toolkit for understanding and analyzing scientific phenomena across different domains and levels.

6. Analysis of Intelligence and Consciousness Based on Generalized Agent Theory

The nature of intelligence and consciousness represents not only a core challenge in modern science and philosophy but also a key issue in artificial intelligence [26]. Despite significant progress in related research, existing theories (such as Integrated Information Theory, Global Workspace Theory, etc. [27, 28]) and definitions are numerous and still face challenges in terms of unity, fundamentality, and addressing the hard problem of subjective experience, highlighting the necessity of constructing a more universal theoretical framework [29].

Generalized Agent Theory, as a unified model of Agent constructed from first principles, offers a unique perspective on this. This chapter uses the core principles of Generalized Agent Theory, including Standard Agent Model, Agent Capability Space, and its evolutionary dynamics (Alpha Pole, Omega Pole, Alpha Degradation Field, and Omega Enhancement Field), to elucidate its definitions of intelligence and consciousness and their interrelationship, rather than to conduct an exhaustive comparative analysis of the many existing theories.

Generalized Agent Theory posits that understanding intelligence and consciousness first requires clarifying the basis of their existence, objectives, driving forces, and intrinsic connections. In this regard, the core viewpoints provided by Generalized Agent Theory are:

1. Subject Affiliation: Intelligence and consciousness are inherent attributes of Agent (as defined by Standard Agent Model); their emergence and manifestation are inseparable from Agent's structural and functional basis.

2. Fundamental Objective: The fundamental role of intelligence and consciousness is to serve Agent's survival and evolution within Agent Capability Space. Agent's specific evolutionary direction (towards Alpha Pole or Omega Pole, or maintaining a certain equilibrium state) is not predetermined but depends on the direction of the resultant force from Alpha Degradation Field and Omega Enhancement Field that Agent faces in a specific environment, i.e., it is determined by Net Intelligent Evolution Field.

3. Intrinsic Driving Force: The fundamental driving force for the emergence and operation of intelligence and consciousness originates from Polar Intelligent Field Model defined in Generalized Agent Theory, specifically manifesting as the driving force exerted by Net Intelligent Evolution Field formed by the interaction of Alpha Degradation Field and Omega Enhancement Field.

4. Intrinsic Relationship: Intelligence is the overall efficacy and adaptability exhibited by Agent in utilizing all five of its core functions (Input, Output, Dynamic Storage, Creation, and Control) to evolve towards its fundamental objective under a given driving force. Consciousness, in its essence, is explicitly identified by Generalized Agent Theory with the Control function (Con) and its operational process. Therefore, consciousness is a key component of overall intelligence, rather than a concept parallel to or separate from it.

The subsequent content of this chapter will revolve around these core viewpoints, conducting in-depth discussions on the definitions, assessment methods, type classifications, and interrelationships of intelligence and consciousness according to Generalized Agent Theory.

6.1. Definition and Assessment Methods for Intelligence

Based on Generalized Agent Theory and adhering to first principles, this section defines "intelligence" along three dimensions: its subject (the framework of Standard Agent Model), its fundamental objective (evolutionary direction in capability space), and its intrinsic driving force (Polar Intelligent Field Model).

Definition 6.1 Intelligence

Within the framework of Generalized Agent Theory, intelligence is defined as: the overall information processing efficacy and environmental adaptability exhibited by an Agent conforming to Standard Agent Model, as it utilizes all five of its core functions (Information Input (In), Information Output (Out), Dynamic Storage (DS), Information Creation (Cr), and Control function (Con)) under the combined influence of its inherent Alpha Degradation Field and Omega Enhancement Field, to adapt to the environment and achieve its evolutionary objective (tending towards Alpha Pole, Omega Pole, or a specific equilibrium state) as determined by Net Intelligent Evolution Field.

The magnitude or strength of intelligence can be assessed through a comprehensive measure of Agent's capability vector $\vec{C}_A$. This capability vector is composed of the capability components of Agent's five core functional modules:

$$\vec{C}_A = (C_{In}, C_{Out}, C_{DS}, C_{Cr}, C_{Con})$$

There are multiple methods for a comprehensive measure of $\vec{C}_A$. A commonly used method is the Weighted Sum Method, which assigns different weights $w_i$ (where $\sum_i w_i = 1$, $i \in \{In, Out, DS, Cr, Con\}$) to the five core capabilities. The comprehensive assessment value of intelligence, $I_A$, can then be expressed as:

$$I_A = w_{In} C_{In} + w_{Out} C_{Out} + w_{DS} C_{DS} + w_{Cr} C_{Cr} + w_{Con} C_{Con}$$

The specific values of the weights can be adjusted according to Agent's environment, task requirements, or assessment focus.

The Control capability, as the core for coordinating all capabilities, carries a weight that is particularly important when assessing advanced intelligent behaviors. To illustrate the application of the weighted sum method, we adopt a hypothetical set of weights. Considering the central coordinating role of the Control function (Con) and the importance of the Creation function (Cr) for advanced intelligence, we can set the following weights (this set of weights is merely illustrative; in practical applications, they can be determined by methods such as the Delphi method):

Now, let us assume capability scores for three different types of Agent (on a scoring range of 0-100, where higher scores represent stronger capabilities; these scores are also illustrative), as shown in Table 1.

The weight distribution and capability scores in the example above are highly simplified and subjectively set, merely to demonstrate the calculation process. In actual intelligence assessment, how to quantify the five core capabilities (from Information Input to Control) objectively and accurately, and how to set reasonable weights for specific contexts and assessment objectives, require in-depth research before executable schemes can be formed for specific situations. For example, some tasks may place more emphasis on creativity (a higher weight on Creation), while others may value the stability and precision of control (a higher weight on Control). Furthermore, these capabilities may not be entirely independent, and their interactions could also affect overall intelligent performance.
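The weighted sum calculation can be written as a short function. The weights and the two Agent profiles below are hypothetical placeholders chosen only to show the arithmetic (they are not the weights or the Table 1 scores of this paper). Following the text, Control and Creation receive the largest weights; normalizing the weights to sum to 1 is an assumption of the sketch that keeps the result on the same 0-100 scale as the scores.

```python
"""Hypothetical weighted-sum intelligence score (illustrative weights and scores)."""
import math

FUNCTIONS = ("In", "Out", "DS", "Cr", "Con")

# Hypothetical weights emphasizing Control and Creation; assumed to sum to 1.
WEIGHTS = {"In": 0.15, "Out": 0.15, "DS": 0.20, "Cr": 0.20, "Con": 0.30}


def intelligence_score(scores: dict[str, float],
                       weights: dict[str, float] = WEIGHTS) -> float:
    """Weighted sum over the five core capabilities, each scored on a 0-100 scale."""
    if not math.isclose(sum(weights.values()), 1.0):
        raise ValueError("weights must sum to 1")
    for f in FUNCTIONS:
        if not 0.0 <= scores[f] <= 100.0:
            raise ValueError(f"score for {f} must lie in [0, 100]")
    return sum(weights[f] * scores[f] for f in FUNCTIONS)


# Hypothetical capability profiles (not the values of Table 1).
agents = {
    "agent_x": {"In": 80, "Out": 70, "DS": 90, "Cr": 60, "Con": 70},
    "agent_y": {"In": 60, "Out": 60, "DS": 60, "Cr": 60, "Con": 60},
}
for name, s in agents.items():
    print(name, round(intelligence_score(s), 2))
# agent_x 73.5
# agent_y 60.0
```

Because the weights sum to 1, an Agent with equal scores in every function (agent_y) receives exactly that common score, which makes the effect of uneven profiles easy to read off.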

The intelligence assessment framework based on the weighted sum method proposed in this section not only provides a foundational structure for measuring the current intelligence level of Agent, but its calculated comprehensive capability value also holds potential as a preliminary or approximate quantification method for Wisdom (W) as introduced in Chapter 5. This is because the core of Wisdom lies in measuring the comprehensive information processing capability of Agent, and the weighted sum method is precisely a specific calculation of this comprehensive capability.

However, it should also be noted that Wisdom, as defined in Section 5.4 of this paper, is further emphasized as being conjointly determined by the "current synergistic structure" of the five core functions and the "total amount of information in dynamic storage," and is manifested through unique response characteristics in evolutionary dynamics. Therefore, although the weighted sum method offers a valuable starting point for quantification, future research should be dedicated to exploring more comprehensive and precise methods for measuring the rich connotations of Wisdom. For instance, considerations should include how to more directly incorporate factors such as the synergistic structure among modules, and the total amount and quality of information in storage, into the computational model of Wisdom, thereby more profoundly revealing its essence as a key intrinsic attribute in the evolution of Agent.

6.2. Definition and Classification of Consciousness

Within Standard Agent Model, the core of Generalized Agent Theory, the Control function occupies a unique and critical position. Unlike the other four fundamental functions that directly process information, the core responsibility of the Control function is the overall coordination, management, and regulation of these four modules and the information flows they carry. This characteristic of unified command and management over internal states and external behaviors makes the Control function a natural focal point for exploring Agent autonomy, decision-making, and even deeper cognitive phenomena. Although directly associating consciousness with the control function is not currently mainstream, this association has been reflected in multidisciplinary theories [30]. Most existing research views control as a result or condition of consciousness, rather than viewing consciousness itself as a control mechanism [31]. Generalized Agent Theory goes further, directly defining the Control function itself and its operational process as the essence of consciousness, formulated as follows:

Definition 6.2 Consciousness

Within the framework of Generalized Agent Theory, consciousness is defined as: Agent's Control function (Con), by virtue of its unified command capability, conducting overall coordination and advanced regulation of the four core functions, namely Information Input (In), Information Output (Out), Information Dynamic Storage (DS), and Information Creation (Cr), under the evolutionary drive formed by Alpha Degradation Field and Omega Enhancement Field, to achieve its fundamental evolutionary objective of tending towards Alpha Pole or Omega Pole in Agent Capability Space.

As previously elaborated for the Control function (Con), the meta-commands it generates (constituting the control command space) can originate from various informational bases: Agent's inherent preset instructions, original content generated via the Information Creation function (Cr), and information acquired from the external environment via the Information Input function (In). All of this information must be represented and integrated within the Dynamic Storage space to serve as the basis for the Control function's decision-making.

Based on the meta-commands generated by the Control function (Con), whose decision basis stems from different primary sources of information in the Dynamic Storage space, and on the capability state of the Control function, consciousness can be divided into the following four basic types:

Self-Consciousness

Core Definition: Agent's control space meta-commands are not empty and originate either from inherent control meta-commands within the control space or from control meta-commands generated by its own Information Creation function (Cr) and integrated into the Dynamic Storage space.

Characteristics: Reflects Agent controlling its own information processing capabilities (information input, output, dynamic storage, and creation) by following its own control commands.

Other-Consciousness

Core Definition: Agent's control space meta-commands are not empty and originate from control meta-commands acquired by Agent through Information Input and integrated into the Dynamic Storage space.

Characteristics: Reflects Agent essentially controlling its own information processing capabilities (information input, output, dynamic storage, and creation) by following control commands from other Agent instances.

Mixed Consciousness

Core Definition: Agent simultaneously possesses self-consciousness and other-consciousness.

Characteristics: Reflects Agent operating its own information processing capabilities (information input, output, dynamic storage, and creation) by following control commands that include both its own and those from other Agent instances.

Criterion: the control command space contains both self-originated meta-commands (inherent or created) and meta-commands acquired through Information Input.

Unconsciousness

Core Definition: The capability of Agent's Control function (Con) is zero, meaning its control function is completely missing or ineffective and unable to read Agent's control space meta-commands; or Agent's control space meta-commands are empty.

Characteristics: Agent does not possess a control function, or lacks effective commands for the control function to call upon.

Criterion: the capability of the Control function is zero, or the control command space is empty.
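The four consciousness types amount to a decision rule over the Control capability and the origins of the meta-commands in the control command space. The sketch below assumes a hypothetical representation in which each meta-command is tagged with its source ("inherent", "created" via Cr, or "input" via In); these tags and all function names are illustrative, not notation from the theory.

```python
"""Hypothetical classifier for the four consciousness types of Section 6.2."""
from enum import Enum

SELF_SOURCES = {"inherent", "created"}  # preset instructions or output of Cr
OTHER_SOURCES = {"input"}               # commands acquired through In


class Consciousness(Enum):
    SELF = "self-consciousness"
    OTHER = "other-consciousness"
    MIXED = "mixed consciousness"
    NONE = "unconsciousness"


def classify(con_capability: float, command_sources: list[str]) -> Consciousness:
    """Classify from the Control capability and the sources of its meta-commands."""
    # Unconsciousness: no working Control function, or nothing for it to read.
    if con_capability <= 0 or not command_sources:
        return Consciousness.NONE
    sources = set(command_sources)
    unknown = sources - SELF_SOURCES - OTHER_SOURCES
    if unknown:
        raise ValueError(f"unknown command sources: {unknown}")
    has_self = bool(sources & SELF_SOURCES)
    has_other = bool(sources & OTHER_SOURCES)
    if has_self and has_other:
        return Consciousness.MIXED
    return Consciousness.SELF if has_self else Consciousness.OTHER


print(classify(0.0, ["inherent"]).value)          # unconsciousness
print(classify(5.0, ["input"]).value)             # other-consciousness
print(classify(5.0, ["created", "input"]).value)  # mixed consciousness
```

Under the reading given later in this section, an LLM whose control commands all trace back to external input or to models trained on external data would have every command tagged "input", and the rule would return other-consciousness.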

Based on the classification of consciousness types by Generalized Agent Theory, a preliminary determination of the consciousness state of current artificial intelligence (AI), particularly large language models (LLMs), can be made. Although LLMs exhibit powerful information processing and seemingly novel text generation capabilities, their operational essence still lies within the Turing computation framework, involving complex computations and information recombination based on preset rules and external data (which constitute their core internal state space).

Generalized Agent Theory distinguishes between the Dynamic Storage function (DS), primarily responsible for information preservation and inference, and the Information Creation function (Cr), which produces genuine novelty. Accordingly, the seemingly innovative outputs of LLMs stem more from their powerful DS function's deep processing of massive training data and current input (In module) than from the original emergence, defined by the Cr function, that transcends the scope of existing information [32]. Most critically, this information, primarily generated at the DS level, even if it exhibits certain complexity and utility, has not been shown to be capable of autonomously generating and dominating the command space of the LLM's Control function (Con) so as to form core driving objectives with autonomy originating from an internal Information Creation function (Cr) [33].

Therefore, because the core instructional content for the Control function (Con) of LLMs during decision-making entirely originates from external input (imported into the Dynamic Storage space via the In module) or from internal models formed by training on external data (within the DS module, forming part of their internal state space), and completely lacks original, autonomous driving content independently generated by their own Information Creation function (Cr) to dominate their control command space, it follows that, according to the strict criteria of Generalized Agent Theory, the current form of consciousness in AI and LLMs should be judged as other-consciousness, and not as self-consciousness or as mixed consciousness containing components of self-consciousness.

7. Discussion of Important Problems in Physics Based on Generalized Agent Theory

This chapter will delve into how Generalized Agent Theory can be employed to examine and analyze several core issues in physics. We will begin with the argument for the "agentification" of the Universe, then proceed to discuss the delineation of reality and non-reality, the origin of uncertainty, and the nature of time and space within the framework of Generalized Agent Theory. Building upon this, the chapter will focus on elucidating how, from the perspective of Observer as Agent and based on differences in the intelligence levels of observers, new approaches can be provided for the unification of classical mechanics, relativity, and quantum mechanics, and offer a unified interpretation for the origin of entropy and its observer-dependence. Through these systematic explorations, this chapter aims to reveal the theoretical potential, adaptability, and robustness of Generalized Agent Theory in understanding and unifying significant problems in physics, and to provide inspiration for future interdisciplinary research.

7.1. Argument for the Universe as a Dynamically Evolving Agent

Generalized Agent Theory universally defines Agent as a system possessing five fundamental functions and a corresponding capability vector. From this, we deduce in this section that the Universe is an Agent conforming to Standard Agent Model, capable of exhibiting three typical states—Alpha Agent, Finite Agent, and Omega Agent—while continuously evolving among these three states under the drive of Alpha Field and Omega Field. The view of Universe as Agent, constructed within this theoretical framework, provides a unified explanatory framework based on first principles for a deeper understanding of the fundamental attributes of the Universe, its evolutionary laws, and its connection to fundamental problems in physics.

The core of Generalized Agent Theory lies in its Standard Agent Model and the extreme Agent types within the capability spectrum, namely Alpha Agent (all capabilities at zero) and Omega Agent (all capabilities at infinity), as well as Finite Agent existing between them. By analyzing the relationship between the Universe as a whole and the forms of Agent within it, the "agentification" of the Universe and its dynamic evolution can be argued.

Firstly, consider the nature of Omega Agent, which is defined as possessing infinite capabilities in information input, output, storage, creation, and control. If any Agent in the Universe evolves into an Omega Agent, then, by its definition as omniscient and omnipotent, this Omega Agent must be able to perceive, understand, influence, and ultimately integrate all spacetime, matter, and information of the Universe, whereupon the Universe enters the Omega Agent state. If any part of the Universe existed outside the cognition and control of this Omega Agent, the Omega Agent would not yet have reached its defined completeness, which would constitute a theoretical paradox. Therefore, the theoretical existence of Omega Agent necessarily implies that the entire Universe can be "agentified".

Secondly, examine the characteristics of Alpha Agent. All core capabilities of Alpha Agent are zero; it possesses no information processing capability. If all constituent units and subsystems in the Universe were to degenerate to the Alpha Agent state, then, from the perspective of Agent relationship types in Generalized Agent Theory, these Alpha Agent instances would conceptually merge or homogenize into a single entity, since Alpha Agent lacks any capability to define its own boundary or to interact, so no true separation exists among them. Thus, when the Universe is entirely composed of Alpha Agent instances, it as a whole enters the Alpha Agent state.

Thirdly, when the constitution of the Universe is neither purely in the Alpha Agent state nor has collectively reached the Omega Agent state, it must internally contain one or more Finite Agent instances. The capability vector of each such instance has at least one non-zero finite component, while not all of its components tend towards infinity. In this situation, the overall state of the Universe is characterized by the Finite Agent that exhibits the most active and advanced capabilities. This implies that the Universe itself can be regarded as a special Finite Agent, composed of Finite Agent instances and possibly a background of numerous Alpha Agent instances; the Universe is then in a finite state.

Thus, the three fundamental Agent states of the Universe as a generalized Agent can be summarized as follows:

Omega Agent Universe: Dominated by a sole Omega Agent, the overall capability of the Universe tends towards infinity; also termed the Omega state of the Universe.

Alpha Agent Universe: Completely composed of, and homogenized by, Alpha Agent instances, the overall capability of the Universe is zero; also termed the Alpha state of the Universe.

Finite Agent Universe: Containing one or more Finite Agent instances, the Universe as a whole manifests as a special Finite Agent; also termed the finite state of the Universe.
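The classification of Agent types and the resulting state of the Universe can be expressed as a small decision procedure. In this sketch, capability vectors are tuples of five non-negative floats in the order (In, Out, DS, Cr, Con), and `math.inf` stands in for "tends towards infinity". Vectors whose components are only zeros and infinities (without all being infinite) fall outside the three cases defined in the text and are rejected, which is an assumption of the sketch; all names and capability values are hypothetical.

```python
"""Hypothetical sketch: Agent type and Universe state from capability vectors."""
import math
from enum import Enum


class AgentType(Enum):
    ALPHA = "Alpha Agent"
    FINITE = "Finite Agent"
    OMEGA = "Omega Agent"


def agent_type(cap: tuple[float, ...]) -> AgentType:
    """Classify a five-component capability vector (In, Out, DS, Cr, Con)."""
    if len(cap) != 5 or any(c < 0 for c in cap):
        raise ValueError("expected five non-negative capability components")
    if all(c == 0 for c in cap):
        return AgentType.ALPHA          # all capabilities at zero
    if all(math.isinf(c) for c in cap):
        return AgentType.OMEGA          # all capabilities at infinity
    if any(0 < c < math.inf for c in cap):
        return AgentType.FINITE         # at least one non-zero finite component
    raise ValueError("vector mixes zero and infinite components only")


def universe_state(agents: list[tuple[float, ...]]) -> str:
    """Omega if any Omega Agent exists, Alpha if all are Alpha Agents, else finite."""
    types = [agent_type(a) for a in agents]
    if AgentType.OMEGA in types:
        return "Omega state"
    if all(t is AgentType.ALPHA for t in types):
        return "Alpha state"
    return "finite state"


background = (0.0,) * 5                 # an Alpha Agent
human = (60.0, 50.0, 70.0, 40.0, 55.0)  # hypothetical finite capability values
print(universe_state([background, background, human]))  # finite state
print(universe_state([background, background]))         # Alpha state
```

The universe-level rule checks for an Omega Agent first, mirroring the argument that an Omega Agent integrates the whole Universe, then checks for an all-Alpha Universe, and otherwise returns the finite state.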

In summary, the Universe as a whole can be logically shown to be an Agent conforming to Standard Agent Model, possessing three core states (Alpha, finite, and Omega). According to the Agent evolutionary dynamics of Chapter 5, the capability $\vec{C}_A$ of any Agent A is driven by the combined effects of Alpha Degradation Field $\vec{F}_{\alpha}$ and Omega Enhancement Field $\vec{F}_{\Omega}$. Their vector difference, Net Intelligent Evolution Field, is expressed as:

$$\vec{F}_{net}(\vec{C}_A, E) = \vec{F}_{\Omega}(\vec{C}_A, E) - \vec{F}_{\alpha}(\vec{C}_A, E)$$

where E is the environment. For Universe Agent $A_U$ (with capability $\vec{C}_U$, where its "environment" E can be considered its own internal state or absorbed into the field function), the universe-level field formula is approximated as:

$$\vec{F}_{net}(\vec{C}_U) \approx \vec{F}_{\Omega}(\vec{C}_U) - \vec{F}_{\alpha}(\vec{C}_U)$$

Hence, the Universe must dynamically evolve under the continuous influence of its intrinsic, universe-level field.

Having demonstrated that the Universe is a dynamically evolving generalized Agent, we can determine the state of the current Universe. Empirical observations indicate the widespread existence of Finite Agent instances, such as humans and artificial intelligence systems, in the current Universe. The existence of Finite Agent instances precludes the possibility that the current Universe is in the Alpha state. Concurrently, there is no evidence to suggest that the current Universe has reached a state dominated by an Omega Agent. Therefore, it can be definitively determined that the current Universe, as a generalized Agent, is in a finite state. This determination not only reveals the current macroscopic intelligent attributes and future evolutionary potential of the Universe but, more importantly, lays the theoretical foundation for subsequently applying Generalized Agent Theory to analyze the applicability boundaries and theoretical precision of core theories in modern physics (such as relativity and quantum mechanics).

7.2. Interpretation of Objective Reality and Subjective Non-reality

The division between subjective non-reality and objective reality is a fundamental issue in physics and philosophy [34]. Generalized Agent Theory provides a novel interpretive framework, positing that these concepts are not absolute ontological attributes but are closely related to the capability state types of Agent (Alpha Agent, Omega Agent, and Finite Agent). This section discusses "Subjective Non-reality" and "Objective Reality", each of which corresponds to a state space of the Agent concerned.

When any Agent A is at the lowest end of the capability spectrum, i.e., in the Alpha state, all components of its overall capability vector are zero, and it possesses no information processing or interaction capabilities. In this state, the basis for perceiving an objective world and constructing a subjective internal state is entirely absent, so neither Agent A's "Objective Reality" nor its "Subjective Non-reality" can be meaningfully discussed.

If any Agent A reaches the highest end of the capability spectrum, i.e., becomes the omniscient and omnipotent Omega Agent, all components of its capability vector tend towards infinity. Because its infinite capabilities pervade the entire Universe, it becomes an Omega Agent Universe, and an "external objective world" that must be perceived and interacted with in the traditional sense does not exist for it. The concept of "objective reality", which traditionally requires perceptual interaction, therefore dissolves here: Agent A has no "Objective Reality", and its "Subjective Non-reality" is the entire Universe, which has become its own internal world.

At this point, Agent A's subjective non-reality is the Omega-state Universe itself. This all-encompassing, thoroughly internalized subjective non-real state is the core characteristic and ultimate manifestation of Omega Universe; the entire Universe at this juncture achieves complete subjective non-realization, with no distinction between subject and object.

Only when Universe Agent $A_U$ is in a finite state, i.e., when one or more Finite Agent instances exist within the Universe, does the distinction between "objective reality" and "subjective non-reality" acquire its most typical and crucial significance, and this distinction is strictly relative to a specific Finite Agent. For a Finite Agent, everything it faces that lies beyond its current capability boundary constitutes that Agent's "Objective Universe". This concept is defined as:

Definition 7. Finite Agent's Objective Universe

The Objective Universe of a Finite Agent is its cosmic potential: the entire set of Agent instances, both perceivable and unperceivable by that Agent, represented by the "difference" between the infinite information processing capability possessed by the Universe as Omega Agent and the finite capability of this Finite Agent.

A Finite Agent's "Objective Reality" is that portion of objective information actually perceived and incorporated into its cognitive scope from its "Objective Universe" by virtue of its finite Information Input capability; that is, it is the Agent's external objective environment.

A Finite Agent's "Subjective Non-reality" is its internal information space (primarily composed of Dynamic Storage space, Information Creation space, and control command space), constructed by its own internal information processing mechanisms (driven by capabilities such as Dynamic Storage, Information Creation, and Control).

Each Finite Agent, owing to its particular capability vector, necessarily defines a distinct "Objective Reality" and constructs a different "Subjective Non-reality", which reflects the Agent-dependence of these concepts. Therefore, for a Finite Agent, the division between objective reality and subjective non-reality is not only relative to its own capabilities but is also a hallmark of Finite Agent existence.

In a finite-state Universe containing multiple Finite Agent instances, for different Agent instances to form a cognition of "shared objective reality," their respective objective realities must overlap, and consensus must be reached through information exchange (dependent on their respective Input and Output functions). Concurrently, the principle of individual boundaries in Standard Agent Model states that, to maintain the independence of each Finite Agent, its subjective non-reality cannot be directly read by other Agent instances; different Finite Agent instances must necessarily be separated by Alpha Agent instances, otherwise they would be equivalent to a single Agent, and the exchange between their subjective non-real worlds must be mediated through their "shared objective reality".

Within the unified framework of Generalized Agent Theory, given that all things in the Universe can be regarded as Agents of different forms and capability levels, the objective environment of a Finite Agent is defined as the primary information set that it identifies and acquires from other external Agent instances via its Information Input function. This information set strictly excludes the subjective non-real content of those other Agent instances and constitutes the raw data stream for the Agent's perception of the external world. After being incorporated into the Agent's internal information space, this basic information serves as the foundational elements and core basis for its subsequent subjective construction (such as forming spatiotemporal concepts, abstracting mathematical structures, and establishing theoretical systems).
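The relations in this section can be illustrated with plain set operations. In the hypothetical model below, each Agent exposes some public output (what others can read through Information Input) and keeps private subjective content; an Agent's objective environment is the part of other Agents' outputs that its Input capability lets it acquire, and a "shared objective reality" is the overlap of two such environments. Representing information as string labels and Input capability as a set of readable items are assumptions of the sketch, and all names are illustrative.

```python
"""Hypothetical set model of objective environment vs. subjective content."""
from dataclasses import dataclass, field


@dataclass
class FiniteAgent:
    name: str
    readable: set[str]                                    # what its Input function can acquire
    public_output: set[str] = field(default_factory=set)  # emitted via Output
    subjective: set[str] = field(default_factory=set)     # private internal information space

    def objective_environment(self, others: list["FiniteAgent"]) -> set[str]:
        """Information acquired from other Agents via Input; their subjective content is never read."""
        env: set[str] = set()
        for other in others:
            if other is not self:
                env |= other.public_output & self.readable
        return env


def shared_objective_reality(a: FiniteAgent, b: FiniteAgent,
                             world: list[FiniteAgent]) -> set[str]:
    """Overlap of two Agents' objective environments, the basis for consensus."""
    return a.objective_environment(world) & b.objective_environment(world)


sun = FiniteAgent("sun", readable=set(), public_output={"light"})
alice = FiniteAgent("alice", readable={"light", "speech"},
                    public_output={"speech"}, subjective={"plan"})
bob = FiniteAgent("bob", readable={"light", "speech"},
                  public_output={"gesture"}, subjective={"memory"})
world = [sun, alice, bob]
print(sorted(alice.objective_environment(world)))            # ['light']
print(sorted(bob.objective_environment(world)))              # ['light', 'speech']
print(sorted(shared_objective_reality(alice, bob, world)))   # ['light']
```

Alice's private "plan" never appears in Bob's objective environment; only what she emits ("speech") can reach him, which is the sense in which exchange between subjective worlds must pass through shared objective reality.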

In summary, Generalized Agent Theory places the subject-object problem within the dynamic evolutionary framework of Agent, revealing the relativity and dynamism of subjective non-reality and objective reality, and providing a novel theoretical interpretation for this ancient philosophical issue based on the first principles of Agent.

7.3. Essential Interpretation of Certainty and Uncertainty

Certainty and uncertainty are core concepts in physics and the philosophy of technology, continuously exerting a profound influence on numerous theories. They not only shape our understanding of a system's knowability and predictability but also form the fundamental bedrock of the debate between determinism and indeterminism [35]. Within the framework of Generalized Agent Theory, these concepts receive a distinct interpretation.

The theory posits that certainty and uncertainty are not inherent ontological attributes of a system but are relative to the capability state of Agent, and are closely related to the overall state of the Universe as a generalized Agent: the Alpha state (null capability), the Omega state (infinite capability), or the finite state (finite capability).

In the two extreme states of the Universe, the meanings of certainty and uncertainty are highly specific. When Universe Agent is in the Alpha state, i.e., its overall capability vector is the zero vector, the Universe as a whole lacks any capability for information processing, state representation, or interaction with an environment. In this state, due to the absence of information states and processing, there is no basis for "knowing" (which would lead to certainty) or "not knowing" (which would lead to uncertainty). Therefore, for a Universe in the Alpha state, the concepts of certainty and uncertainty themselves lose their basis of applicability.

Conversely, when the Universe Agent reaches the Omega state, all components of its capability vector tend towards infinity, and it manifests as an omniscient and omnipotent unified entity. Omniscience implies complete information about, and perfect predictive capability for, the state of the Universe itself (i.e., its entire content); omnipotence implies complete control over its evolution. In this state, any uncertainty arising from insufficient information or limited predictive capability vanishes. Therefore, for the Universe Agent in the Omega state, certainty is its core, essential attribute.

However, when the Universe Agent is in a finite state, i.e., one or more Finite Agent instances exist and the Universe as a whole is neither in the Alpha state nor has reached the Omega state, the relationship between certainty and uncertainty takes on a special dialectical character. The capability of any Finite Agent is limited, meaning that at least one component of its capability vector is less than infinity. Yet the external objective environment such an Agent faces (e.g., the complex dynamics of the physical Universe), together with the potential complexity and dynamic changes of its internal subjective space, often far exceeds what its finite capabilities can fully grasp. Limits on its Information Input, Information Output, Dynamic Storage, Information Creation, and Control capabilities prevent it from acquiring all relevant information, building perfect predictive models, or achieving completely precise control. Therefore, any Finite Agent, when interacting with the objective and subjective worlds and making decisions, inevitably faces fundamental, ineliminable uncertainty.
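The three states can be illustrated with a minimal classifier. This is a sketch under stated assumptions: the capability vector is taken as five non-negative numbers in the order (In, Out, DS, Cr, Con), infinity is modelled with `math.inf`, and the function name `universe_state` is hypothetical rather than part of the theory.

```python
import math
from typing import Sequence


def universe_state(capability: Sequence[float]) -> str:
    """Classify a five-dimensional capability vector (hypothetical helper).

    Alpha:  every component is zero (null capability).
    Omega:  every component is infinite.
    Finite: anything else, i.e. not the zero vector and at least one
            component is less than infinity.
    """
    if len(capability) != 5:
        raise ValueError("expected five components (In, Out, DS, Cr, Con)")
    if any(c < 0 for c in capability):
        raise ValueError("capability components must be non-negative")
    if all(c == 0 for c in capability):
        return "Alpha"
    if all(math.isinf(c) for c in capability):
        return "Omega"
    return "Finite"
```

Under this reading, an Agent with a single finite component, such as zero Information Output, is already classified as finite.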

Despite this fundamental uncertainty, the degree of uncertainty a Finite Agent experiences is relative. The stronger the Agent's capability relative to the complexity of the problems it handles or the challenges of its environment, the more effectively it can acquire information, predict the future, and control outcomes, and thus the higher the relative certainty it achieves within its cognitive and operational scope. Conversely, the weaker its capability relative to environmental and task demands, the greater the uncertainty it faces.

In summary, because of the inherent, unbridgeable gap between the capability of a Finite Agent and the complexity of the world it faces, uncertainty constitutes an essential attribute of Finite Agent (including the Universe itself in a finite state). A Finite Agent always operates and evolves under conditions of incomplete information and imperfect predictive capability.

A function can be designed to express Generalized Agent Theory's interpretation of uncertainty, mapping an Agent's capability and its environment's complexity to a level of uncertainty. Its quantities are defined as follows:

UC, named after the capitalization in the conceptual term 'UnCertainty', represents the level of uncertainty. It ranges from 0 (complete certainty) up to an upper limit representing maximal uncertainty.

The comprehensive capability of the Agent ranges from 0 (Alpha Agent) to infinity (Omega Agent).

The effective complexity of the environment is assumed to be always positive.

δ (delta) is a positive constant that adjusts the sensitivity of uncertainty to environmental complexity relative to capability.

This function qualitatively satisfies the following core characteristics:

Capability Impact: The stronger the Agent's capability (as capability tends to infinity), the lower the uncertainty, which tends towards 0 (complete certainty).

Complexity Impact: The higher the environmental complexity (as complexity tends to infinity), the higher the uncertainty, which tends towards its upper limit (high uncertainty).

Behavior of Boundary Agent Types:

For an Omega Agent (capability tending to infinity): the uncertainty tends to 0, exhibiting complete certainty.

When an Agent approaches the capability of an Alpha Agent (capability tending to 0): the uncertainty tends to its upper limit, exhibiting extremely high uncertainty.

For a strict Alpha Agent (capability exactly 0): the formula is not directly defined at this point, since capability appears in the denominator. This is consistent with the view in Generalized Agent Theory that the concepts of certainty and uncertainty lose their conventional meaning in this state.
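The explicit formula did not survive in this text. One functional form that reproduces every property listed above, offered here only as an assumption and not as the theory's own definition, is UC = 1 - exp(-δE/C), where C (comprehensive capability) and E (environmental complexity) are symbol names introduced for this sketch. Capability sits in the denominator; UC falls to 0 as C grows, rises towards 1 as E grows or C shrinks, and is undefined at C = 0.

```python
import math


def uncertainty(capability: float, complexity: float, delta: float = 1.0) -> float:
    """Candidate UC = 1 - exp(-delta * complexity / capability).

    The functional form is an assumption chosen to match the listed properties.
    `capability` may be math.inf (Omega Agent), in which case UC is 0.0.
    """
    if complexity <= 0 or delta <= 0:
        raise ValueError("complexity and delta must be positive")
    if capability < 0:
        raise ValueError("capability must be non-negative")
    if capability == 0:
        # Strict Alpha Agent: capability is in the denominator, so UC is undefined.
        raise ZeroDivisionError("UC is undefined for a strict Alpha Agent")
    return 1.0 - math.exp(-delta * complexity / capability)
```

For example, raising capability from 1.0 to 10.0 at fixed complexity lowers UC, while raising complexity at fixed capability raises it, matching the Capability Impact and Complexity Impact characteristics.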

This mathematical representation reinforces the core view of Generalized Agent Theory: certainty and uncertainty are not absolute ontological attributes but relative concepts closely tied to an Agent's capability and the complexity of its environment. This perspective provides a unified basis, rooted in Agent theory, for understanding randomness, predictive limits, and the central role of information as manifested in physical systems (which can be viewed as different types of generalized Agent).

Furthermore, this agent-relative framework for certainty and uncertainty offers a new lens through which to re-examine long-standing debates in physics and philosophy that have traditionally centered on whether determinism or indeterminism is inherent to a system, suggesting that such qualities may be better understood as reflections of an agent's capacity to achieve certainty about that system.

7.4. Essential Analysis of Time and Space

Time and space are foundational concepts in physics and the philosophy of science[36]. Generalized Agent Theory offers a new perspective on their nature, positing that they are not absolute backgrounds independent of an observer or Agent but are intimately related to the Agent's existence and capability state. This section explains how the origin, properties, and relativity of time and space can be understood in terms of the different states of the Universe as a generalized Agent: the Alpha state, the Omega state, and the finite state.

When the Universe Agent is in the Alpha state, its overall capability vector is the zero vector. The Universe as a whole then lacks any information processing capability and can neither perceive change (the basis of time) nor establish relationships (the basis of space). Therefore, from the perspective of Generalized Agent Theory, for a Universe in the Alpha state, time and space lose their basis for existence.

When the Universe Agent reaches the Omega state, all components of its capability vector tend towards infinity, and it becomes a unique, omniscient, and omnipotent Agent entity. As analyzed earlier, a Universe in this state no longer possesses objective reality but evolves into a comprehensively internalized subjective non-reality. In this state, the Universe Agent in principle grasps information from all points in time simultaneously and can arbitrarily reconstruct temporal sequences, so the concept of linearly flowing, causally constrained time loses its original limiting significance for it. Similarly, space, as a framework describing extension and separation, loses its fundamental importance for an entity that can simultaneously perceive and control all "locations". Time and space can continue to exist as structured descriptions within the Universe Agent's internal subjective non-reality, but for the Universe Agent itself they are internal forms under its complete control and freely reshapeable, rather than rigid external constraints.

When the Universe is in a finite state, i.e., it contains Finite Agent instances, the concepts of time and space emerge from the previous "void". As analyzed earlier, Generalized Agent Theory proposes that the objective reality of a Finite Agent consists of the constantly changing Agent instances (contours formed by boundaries) that it can identify. Time and space are constructed subjectively by the Finite Agent after it perceives the external objective environment, through the following process:

Time originates when a Finite Agent perceives changes and event sequences in the environment through its Information Input function (In) and uses Dynamic Storage (DS) to record and process this sequential information. With the Information Creation function (Cr), the Agent can identify stable periodic or predictable patterns in these sequences, such as celestial motion or physical oscillations. Finally, through the selection made by the Control function (Con), the Agent adopts one or more such stable patterns of change as a "standard" or "clock" for measuring other changes. Therefore, within the framework of Generalized Agent Theory, time is a construct, generated within the Agent's internal subjective world, for measuring change.
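The four steps can be sketched as a small clock-selection routine. This is a hypothetical illustration, not part of the theory: each recurring pattern is given as the raw readings of an internal step counter at which the Agent noticed it recur (standing in for what In delivers and DS retains), regularity is judged by the coefficient of variation of successive intervals (the Cr step), and the most regular pattern is kept as the clock (the Con step). The function name `select_clock`, the pattern names, and the coefficient-of-variation criterion are all assumptions.

```python
from statistics import mean, pstdev


def select_clock(sequences: dict[str, list[float]]) -> tuple[str, float]:
    """Return (pattern name, mean period) of the most regular recurring pattern.

    Hypothetical helper: `sequences` maps each observed pattern to the readings
    of the Agent's internal step counter at which the pattern recurred.
    """
    best_name, best_period, best_cv = None, 0.0, float("inf")
    for name, readings in sequences.items():
        intervals = [b - a for a, b in zip(readings, readings[1:])]
        if len(intervals) < 2:
            continue  # too few recurrences to judge stability
        period = mean(intervals)
        if period <= 0:
            continue  # readings must increase for a usable pattern
        cv = pstdev(intervals) / period  # Cr: relative spread of the intervals
        if cv < best_cv:  # Con: keep the most stable pattern seen so far
            best_name, best_period, best_cv = name, period, cv
    if best_name is None:
        raise ValueError("no pattern recurred often enough to serve as a clock")
    return best_name, best_period


observations = {
    "pendulum": [0, 10, 20, 30, 40],
    "cloud cover": [0, 7, 20, 24, 40],
}
clock, period = select_clock(observations)
duration = (55 - 15) / period  # another change, measured in clock cycles
```

Here the perfectly regular "pendulum" is chosen over the irregular "cloud cover", and a change spanning 40 counter readings is then expressed as 4 clock cycles.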

Space is likewise generated by the Agent's internal construction process. The Agent perceives dynamic information about the various Agent instances in the environment (including itself) via In and stores it in DS. Through Cr, it abstracts and models this information, building structured models of the relative positional relationships among Agent instances, such as topological, distance, and orientational relationships. The Control function (Con) can then, according to task requirements, select suitable spatial dimensions and geometric frameworks (e.g., one-, two-, or three-dimensional Euclidean space, or more complex spatial representations) to organize these relational models. Thus, Generalized Agent Theory posits that space is likewise a construct, generated within the Agent's internal subjective world, for organizing and representing the relative relationships of things.
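A minimal sketch of the relational modelling step, assuming the Agent's perceptions have already been reduced to coordinates and that Con has selected a two-dimensional Euclidean framework. The helper `relational_model`, the adjacency threshold `near`, and the example names are hypothetical; distance, bearing, and adjacency stand in for the distance, orientational, and topological relations described above.

```python
import math
from itertools import combinations


def relational_model(positions: dict[str, tuple[float, float]], near: float) -> dict:
    """Pairwise distance, bearing and adjacency relations in a 2-D Euclidean frame."""
    model = {}
    for a, b in combinations(sorted(positions), 2):
        (xa, ya), (xb, yb) = positions[a], positions[b]
        dx, dy = xb - xa, yb - ya
        distance = math.hypot(dx, dy)                       # distance relation
        bearing = math.degrees(math.atan2(dy, dx)) % 360.0  # direction of b as seen from a
        model[(a, b)] = {
            "distance": distance,
            "bearing_deg": bearing,
            "adjacent": distance <= near,                   # crude topological relation
        }
    return model


scene = {"self": (0.0, 0.0), "tree": (3.0, 4.0), "rock": (0.0, 10.0)}
relations = relational_model(scene, near=6.0)
```

Choosing a different framework (for instance, three coordinates or a non-Euclidean metric) would change only how each relation is computed, which mirrors the idea that the geometric frame is a selection rather than a given.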

Based on the above mechanisms, the core view of Generalized Agent Theory is that, for a Finite Agent, time and space are essentially elements or concepts generated within its internal subjective world through the perception, processing, abstraction, and selection of information from the external environment. They are not absolute, a priori objective backgrounds but cognitive tools that the Agent actively constructs to understand and predict the environment and to coordinate its own actions. Their specific form and precision are necessarily limited by the Agent's core capabilities (In, Out, DS, Cr, Con).

Since time and space are subjectively constructed by Finite Agent, how do different Agent instances arrive at the seemingly unified public spacetime of everyday experience? Generalized Agent Theory posits that shared spacetime originates from inter-Agent interaction and consensus. When different Finite Agent instances can perceive and communicate with each other, they can establish a shared, conventional framework of public time and space by sharing their chosen temporal standards (e.g., jointly observing an astronomical cycle, or agreeing to use a particular artificial timepiece) and spatial reference systems (e.g., agreed-upon coordinate systems or reference objects), and by reaching consensus on these standards through the synergistic action of In, Out, DS, and Con. Although this shared spacetime appears objective in use, its foundation remains the subjective construction capabilities of Finite Agent and their process of social consensus. The spatiotemporal view of Generalized Agent Theory thus links spacetime closely with an Agent's information processing capabilities and evolutionary state, offering new theoretical pathways for exploring the origin of spacetime, its relativity, and its manifestations in systems of different scales.
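The consensus step can be illustrated with time alone. In this hypothetical sketch, each Agent keeps its own local tick unit, both Agents jointly observe the same cycle and record its length in their own ticks, and they agree to express durations as fractions of that shared cycle. `LocalClock`, `ticks_per_shared_cycle`, and `consensus_reached` are illustrative names, and the tolerance is an arbitrary assumption.

```python
from dataclasses import dataclass


@dataclass
class LocalClock:
    """One Agent's private time standard, calibrated on a jointly observed cycle."""
    owner: str
    ticks_per_shared_cycle: float  # length of the shared cycle in this Agent's own ticks

    def to_shared(self, local_ticks: float) -> float:
        """Express a locally measured duration in the agreed public unit (cycles)."""
        return local_ticks / self.ticks_per_shared_cycle


def consensus_reached(clocks: list[LocalClock], local_durations: list[float],
                      tol: float = 1e-9) -> bool:
    """True if every Agent's report of the same event agrees in shared units."""
    shared = [c.to_shared(d) for c, d in zip(clocks, local_durations)]
    return max(shared) - min(shared) <= tol


a = LocalClock("A", 24.0)
b = LocalClock("B", 1440.0)
agreed = consensus_reached([a, b], [6.0, 360.0])
```

Agent A counts 24 ticks per shared cycle and Agent B counts 1440, yet both report the same event as 0.25 cycles, which is the sense in which the public unit is conventional rather than given.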

7.5. Analysis of the Unification of Classical Mechanics, Relativity, and Quantum Mechanics

Generalized Agent Theory offers a novel potential pathway for unifying the three major theoretical frameworks of physics: classical mechanics, relativity, and quantum mechanics. Its core tenet is that the differences among these theoretical systems stem from the different "observer" roles implicit in each. Generalized Agent Theory regards the "observer" in physics as a special case of "Agent", and by analyzing and adjusting the five-dimensional capability vector of the Observer Agent, it aims to reveal the intrinsic connections and transitions among the three theories.

7.5.1. Capability Analysis of Observers in the Three Theories

The ideal observer in classical mechanics, akin to "Laplace's demon", is assumed to know instantaneously the precise state of every particle in the Universe and to calculate its past and future with infinite precision, without interfering with the Universe's operation[37]. Within the framework of Generalized Agent Theory, this corresponds to the Agent of Index 241, the "Omniscient Agent", whose Information Input (In), Dynamic Storage (DS), Information Creation (Cr), and Control (Con) capabilities all tend towards infinity, while its Information Output capability (Out) is zero. Such an observer is a purely passive, omniscient Agent with infinite computational, storage, and innovative capabilities; from its perspective, the Universe presents a strictly deterministic picture.

Relativity retains macroscopic determinism but introduces the speed of light c as an upper limit on information propagation, which means that the observer's Information Input capability (In) must be finite. Concepts such as the equivalence principle also imply limits on a local observer's ability to infer global spacetime structure[38], which can be read as a further finiteness in the relevant capability components. A relativistic observer can therefore be regarded as a special type of Finite Agent whose key characteristic is a finite In constrained by physical laws, but which may possess extremely high (even near-infinite) Dynamic Storage and deductive capabilities. Because the relativistic observer still does not intervene in or affect its Universe, its Information Output capability (Out) is zero, and it is still considered a passive observer.
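The two observer settings described so far can be written out as capability vectors. This sketch is illustrative only: the field order (In, Out, DS, Cr, Con) follows the five modules of the Standard Agent Model, infinity is represented by `math.inf`, the finite value 1.0 for the relativistic observer's In is an arbitrary placeholder, and its Con value is an assumption because the text does not specify it. The class `Capability` and its methods are hypothetical names.

```python
import math
from dataclasses import dataclass

INF = math.inf


@dataclass(frozen=True)
class Capability:
    """Five-dimensional capability vector of an observer Agent."""
    In: float   # Information Input
    Out: float  # Information Output
    DS: float   # Dynamic Storage
    Cr: float   # Information Creation
    Con: float  # Control

    def is_passive(self) -> bool:
        # A passive observer does not act on (output to) the observed Universe.
        return self.Out == 0

    def has_finite_input(self) -> bool:
        return not math.isinf(self.In)


# Laplace's demon: In, DS, Cr and Con tend to infinity; Out is zero.
laplace = Capability(In=INF, Out=0.0, DS=INF, Cr=INF, Con=INF)

# Relativistic observer: In is finite (1.0 is a placeholder), Out is zero,
# DS and Cr idealised as infinite; Con=INF is an assumption, not from the text.
relativistic = Capability(In=1.0, Out=0.0, DS=INF, Cr=INF, Con=INF)

for name, obs in [("classical", laplace), ("relativistic", relativistic)]:
    print(name, "passive:", obs.is_passive(), "finite In:", obs.has_finite_input())
```

Both observers come out passive; the only difference this sketch encodes is whether Information Input is finite, which is the distinction the text draws between the classical and relativistic settings.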

The core principles of quantum mechanics, such as Heisenberg's uncertainty principle and Bohr's complementarity principle, reveal fundamental limits on an observer's ability to acquire information simultaneously about certain conjugate physical quantities[39], reflecting the inherent finiteness of the corresponding capability components. More importantly, quantum measurement theory emphasizes that the observation process itself inevitably affects the observed system (observer effect)[