Submitted: 27 May 2025

Posted: 28 May 2025

Abstract

Keywords:

1. Introduction

2. State of the Art

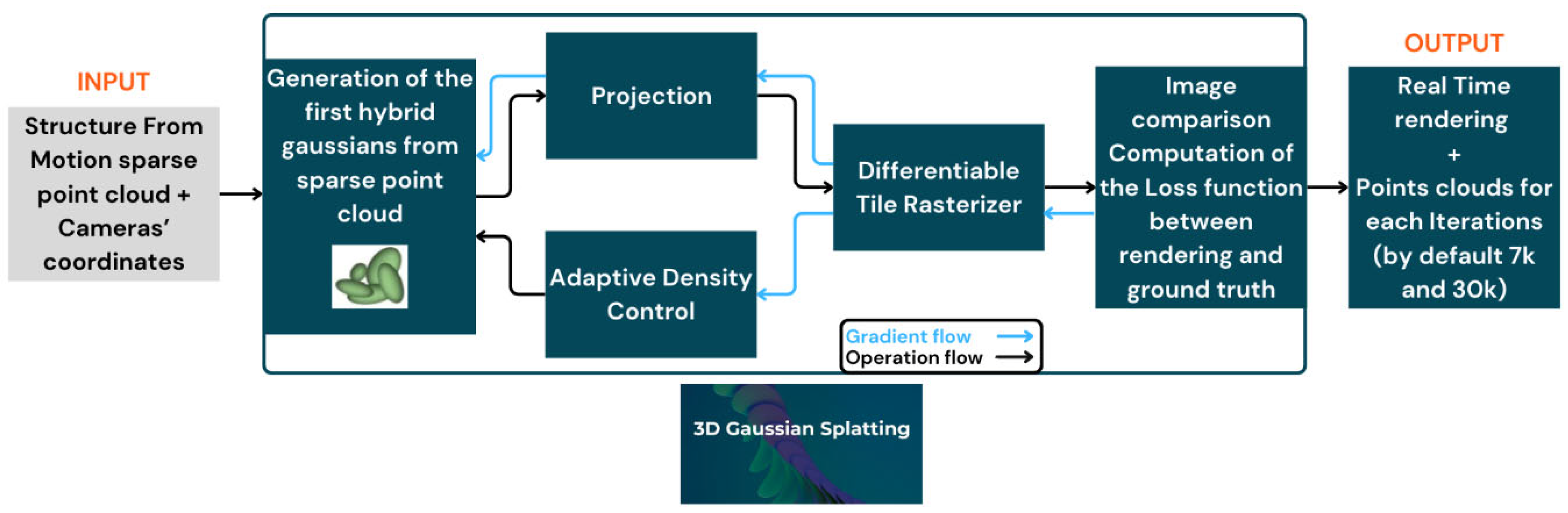

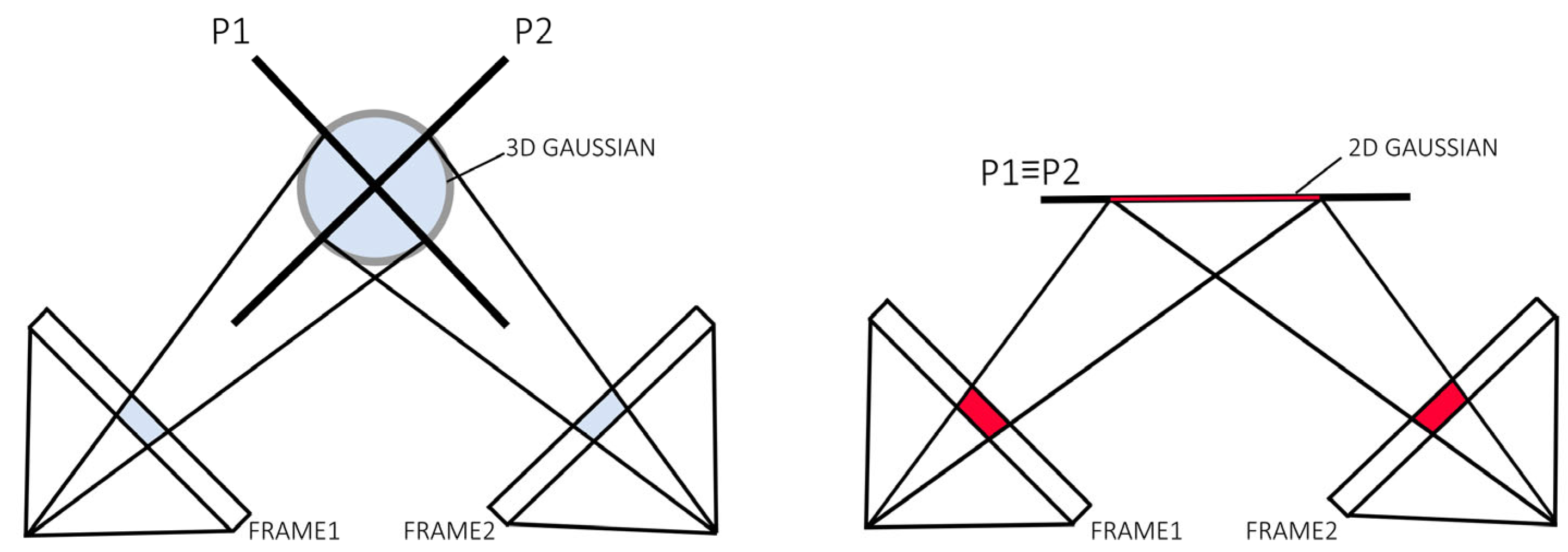

2.1. Gaussian-Splatting and Evaluation Metrics

2.2. Optimizations from the Original Paper of 3DGS

2.2.1. Storage Reduction

2.2.2. Surface Mesh Extraction

5. Materials

5.1. Software and Environment Employed

- Agisoft Metashape v.2.0.0

- Lightroom Classic v.14.0.1

- CloudCompare v.2.13.2

- 3D Gaussian-Splatting (latest code update in Aug. 2024)

- SuGaR (latest code update in Sept. 2024)

- 2D Gaussian-Splatting (latest code update in Dec. 2024)

- Anaconda environment (conda v.23.7.4)

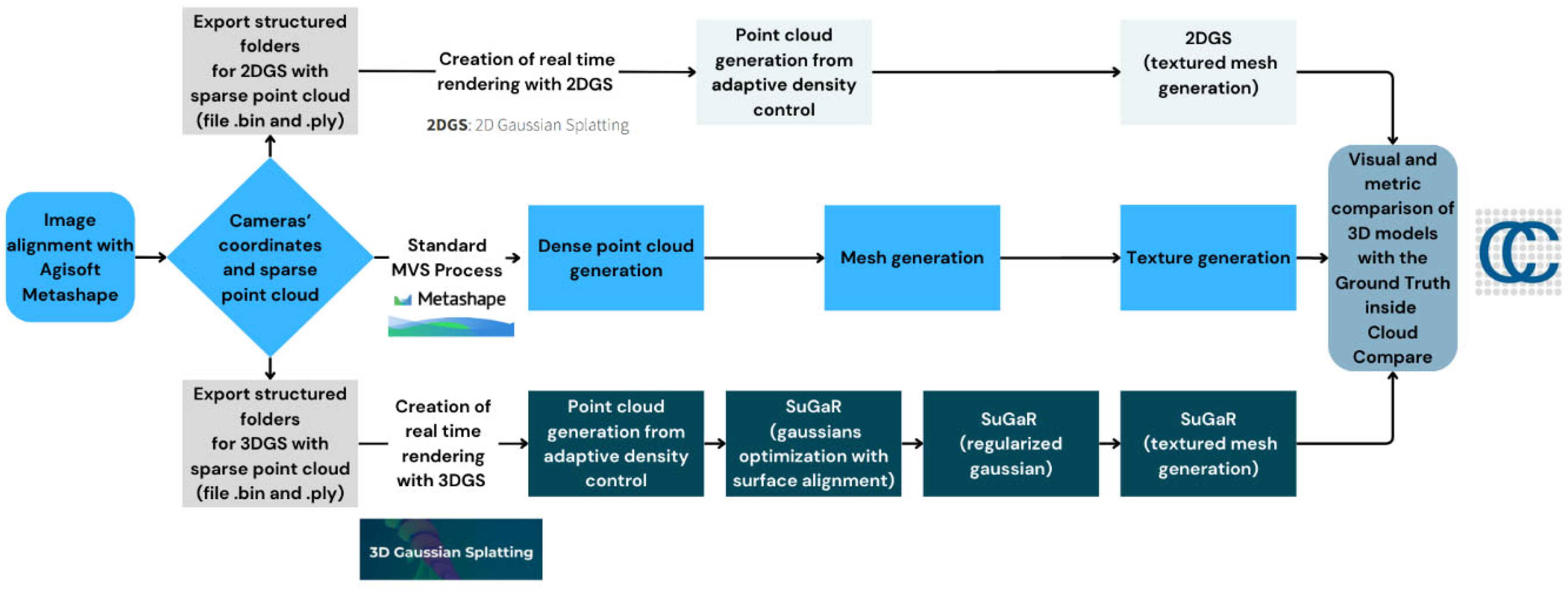

6. Methodology

6.1. Common Input and Initial Phase

6.2. Parallel Reconstruction Processes

6.2.1. Structure from Motion (SfM)

6.2.2. 3D Gaussian Splatting (3DGS) + SuGaR

- python train.py -s data/bottle2025mask -r 2 --iterations 30000 [3DGS]

- python train.py -s data\bottle2025mask -c gaussian_splatting\output\bottle2025mask\ -r dn_consistency --refinement_time long --high_poly True -i 30000 [SuGaR]
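The two calls above can also be assembled programmatically, which keeps the scene name and iteration count in one place. The following is a minimal sketch rather than the authors' tooling: the function names are hypothetical, the flags and values are copied from the commands listed above, and forward slashes are assumed to be acceptable path separators on the target system. The sketch only builds and prints the command lines; it does not run them, since the working directory of each repository is not stated here.

```python
import shlex

SCENE = "bottle2025mask"  # dataset name taken from the commands listed above


def gs_train_command(scene=SCENE, resolution=2, iterations=30000):
    """Argument list for the 3DGS training call (hypothetical helper)."""
    return [
        "python", "train.py",
        "-s", f"data/{scene}",
        "-r", str(resolution),
        "--iterations", str(iterations),
    ]


def sugar_train_command(scene=SCENE, iteration=30000):
    """Argument list for the SuGaR call (hypothetical helper).

    All flags and values mirror the SuGaR command listed above.
    """
    return [
        "python", "train.py",
        "-s", f"data/{scene}",
        "-c", f"gaussian_splatting/output/{scene}/",
        "-r", "dn_consistency",
        "--refinement_time", "long",
        "--high_poly", "True",
        "-i", str(iteration),
    ]


if __name__ == "__main__":
    # Print the commands instead of executing them.
    print(shlex.join(gs_train_command()))
    print(shlex.join(sugar_train_command()))
```

Passing either list to `subprocess.run(..., cwd=<repository folder>, check=True)` would execute it; the repository folder is left open because it depends on the local installation.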

6.2.3. 2D Gaussian Splatting (2DGS)

- Depth distortion: Penalizes the spread of the depths at which a ray intersects the splats, so that the contributing splats concentrate on a single surface.

- Normal consistency: Aligns each splat's normal with the surface normal estimated from the rendered depth, so that the surface representation stays coherent across views.

- python train.py -s data/bottle2025mask -r 2 --iterations 30000 [2DGS]

- python render.py -m output\bottle2025mask -s data\bottle2025mask [2DGS]
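For reference, the two regularization terms listed above can be written compactly. The expressions below follow the formulation given in the 2DGS paper (Huang et al., SIGGRAPH 2024) as best recalled here; the weights $\alpha$ and $\beta$ are hyperparameters whose values for this experiment are not stated in this section.

$$
\mathcal{L}_d = \sum_{i,j} \omega_i \, \omega_j \, \lvert z_i - z_j \rvert
\qquad
\mathcal{L}_n = \sum_{i} \omega_i \left( 1 - \mathbf{n}_i^{\top} \mathbf{N} \right)
$$

$$
\mathcal{L} = \mathcal{L}_c + \alpha \, \mathcal{L}_d + \beta \, \mathcal{L}_n
$$

Here $\omega_i$ is the blending weight of the $i$-th ray-splat intersection, $z_i$ its depth, $\mathbf{n}_i$ the splat normal oriented toward the camera, $\mathbf{N}$ the normal estimated from the gradient of the rendered depth map, and $\mathcal{L}_c$ the photometric (color) loss.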

7. Results and Comparative Analysis

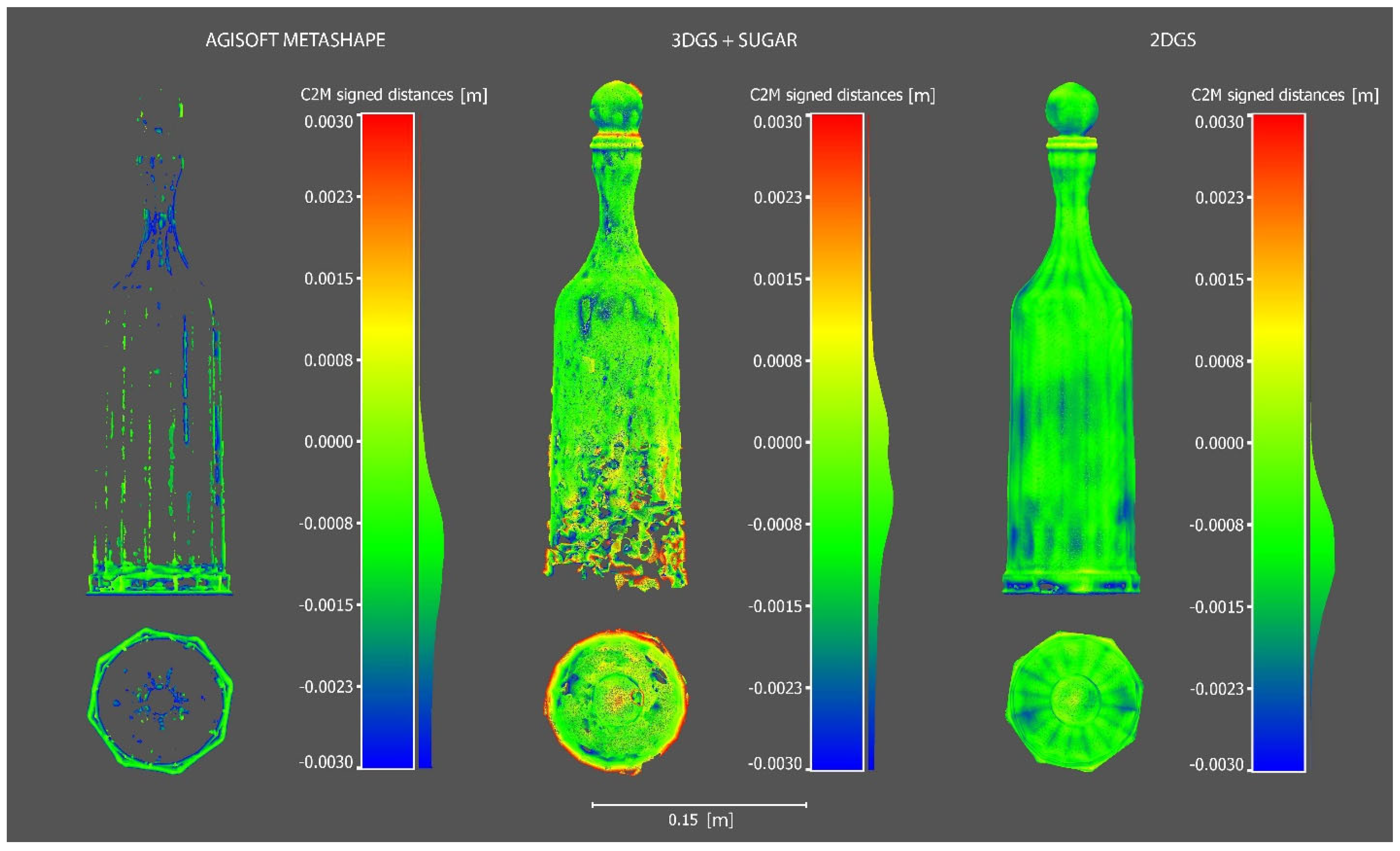

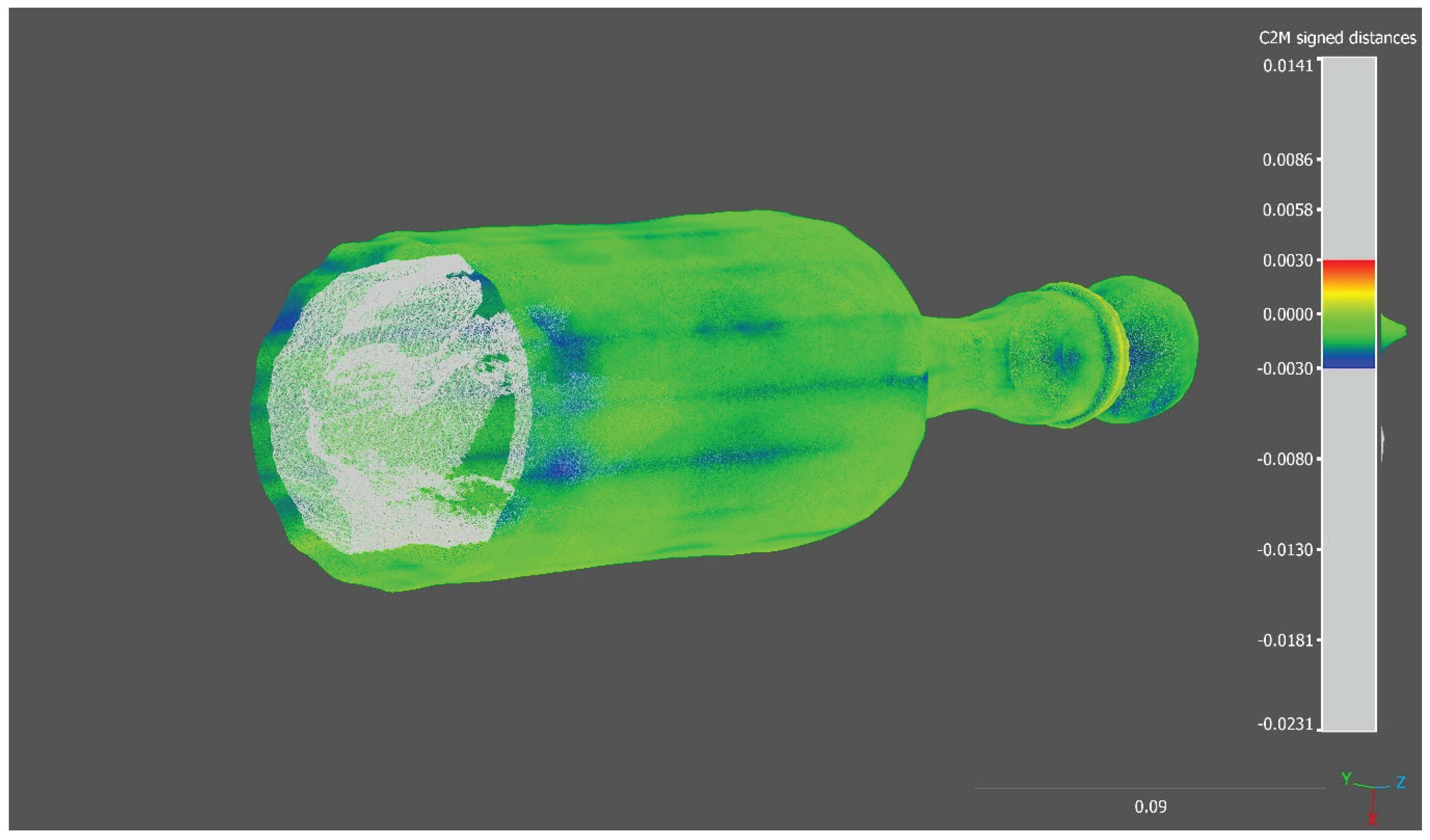

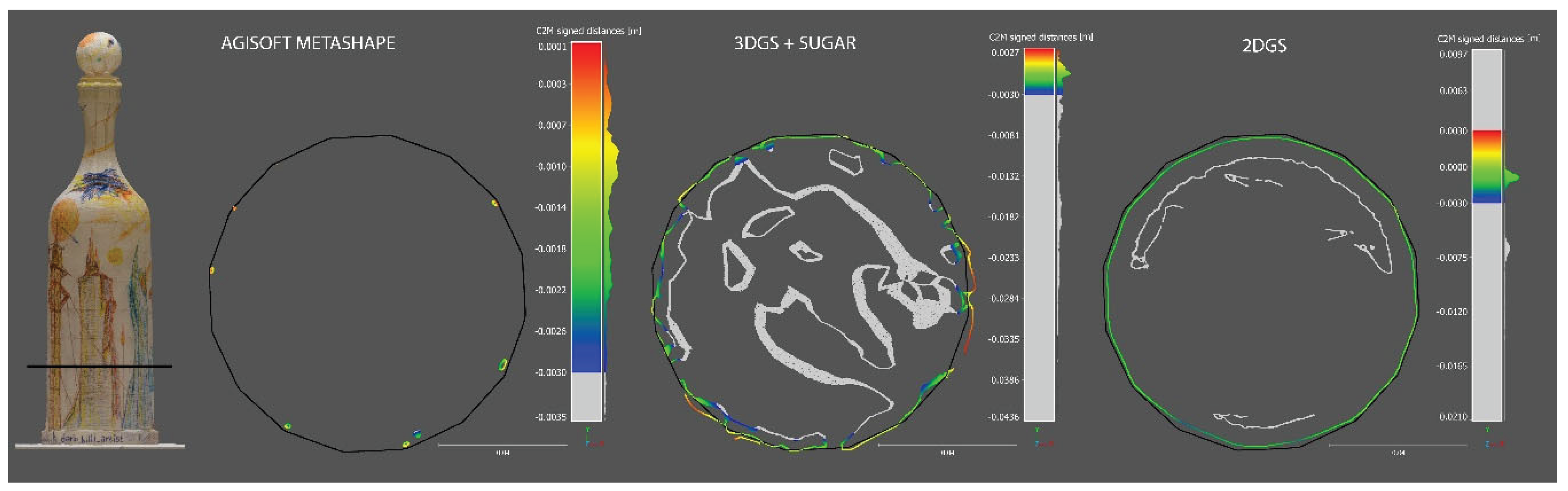

7.1. Qualitative Analysis

7.2. Quantitative Analysis

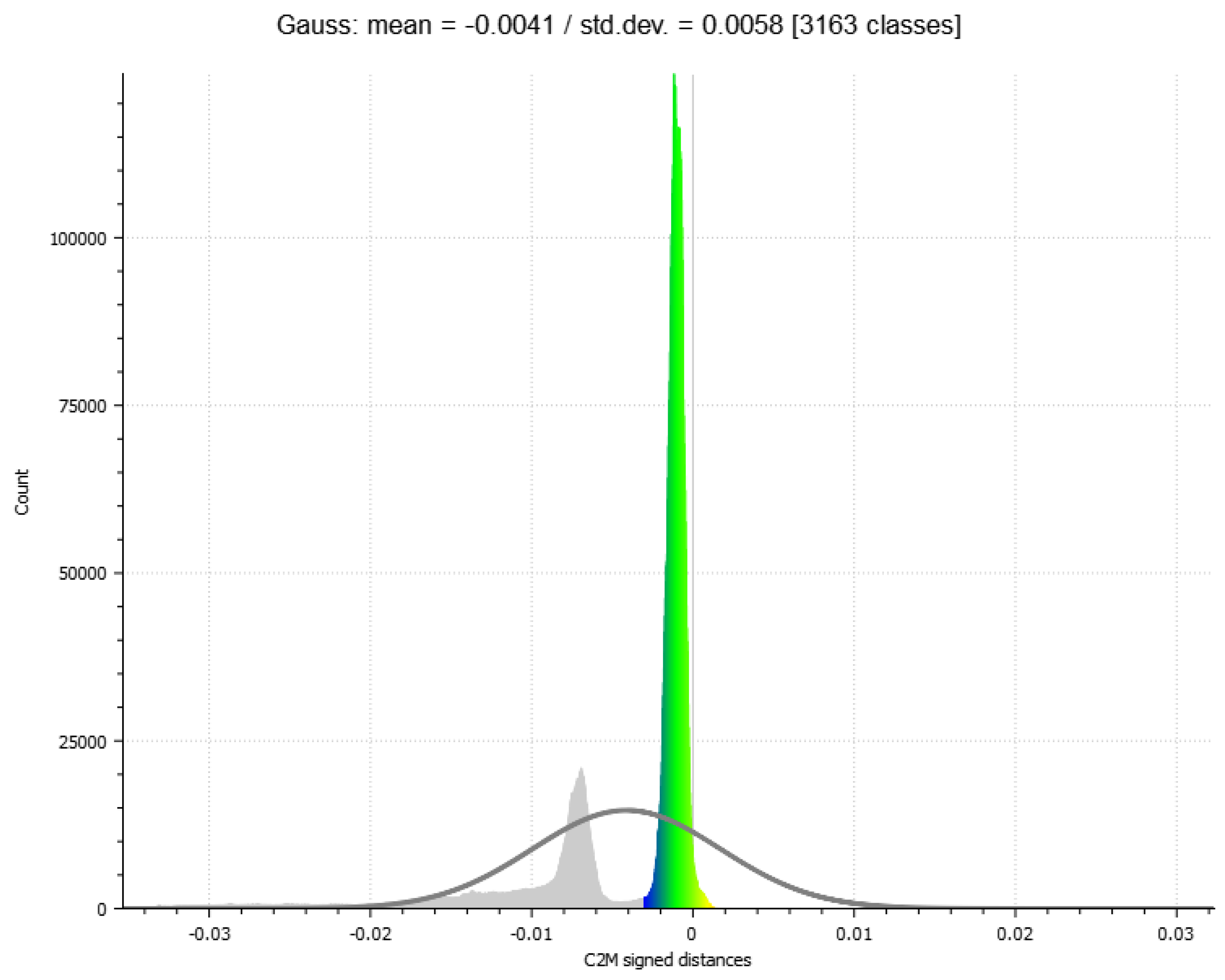

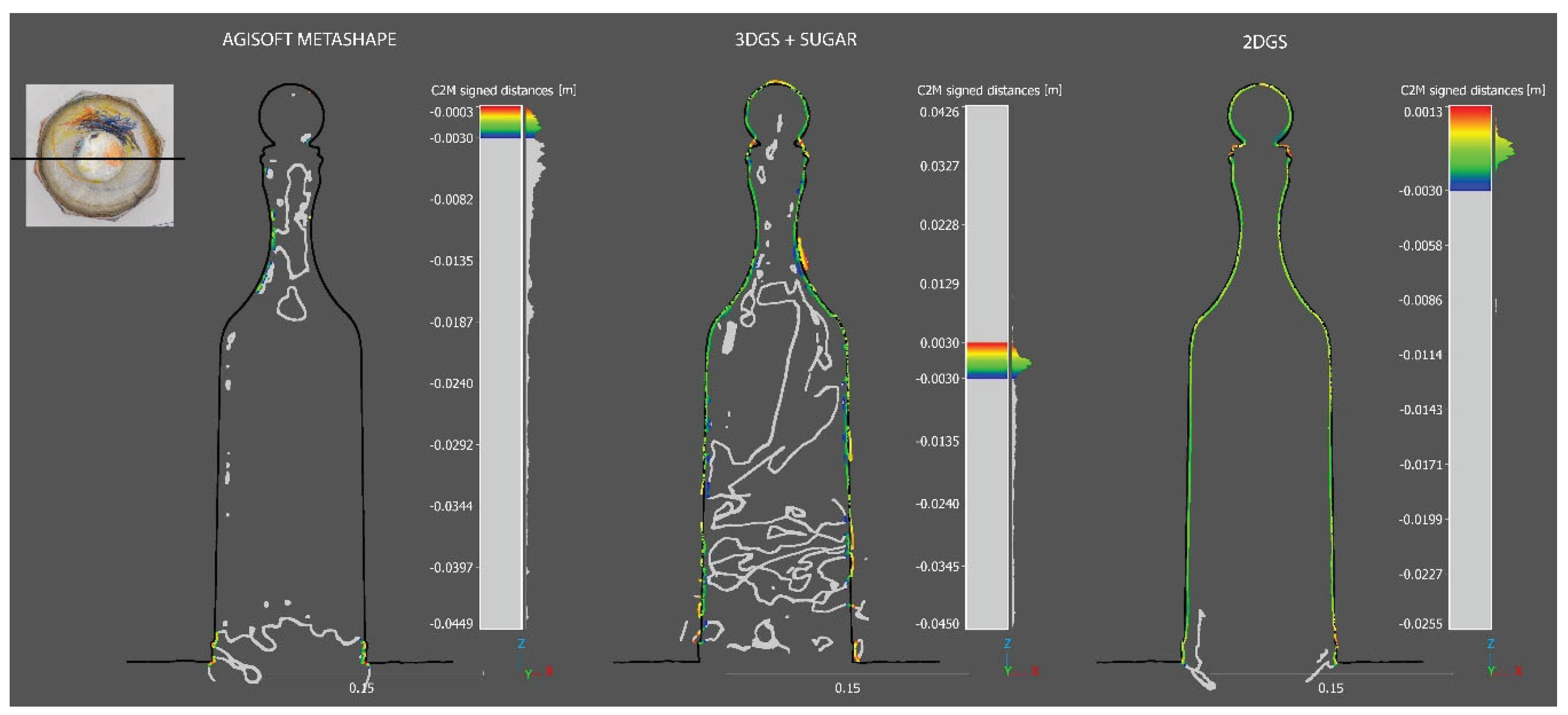

7.2.1. Completeness Evaluation of Reconstructed Models

| Processes | Gauss Mean | St. Deviation | Points in range | Outliers |
|---|---|---|---|---|
| Agisoft Metashape | -0.0014 | 0.0008 | 2,889,373 | 7,110,741 |
| 3DGS+SuGaR | -0.0006 | 0.0011 | 3,894,545 | 6,105,469 |
| 2DGS | -0.0011 | 0.0005 | 6,870,297 | 3,129,632 |
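The point counts in the table above can be turned into an in-range share per method, which makes the three rows directly comparable. This is a small illustrative sketch: the counts are copied from the table, while the function name and the idea of reporting the share as a fraction are assumptions made here, not a metric defined by the authors.

```python
# Point counts copied from the table above: (points in range, outliers).
COUNTS = {
    "Agisoft Metashape": (2_889_373, 7_110_741),
    "3DGS+SuGaR": (3_894_545, 6_105_469),
    "2DGS": (6_870_297, 3_129_632),
}


def in_range_fraction(in_range, outliers):
    """Share of evaluated points that fall inside the accepted range."""
    total = in_range + outliers
    if total == 0:
        raise ValueError("no points to evaluate")
    return in_range / total


if __name__ == "__main__":
    for name, (inside, outside) in COUNTS.items():
        print(f"{name}: {in_range_fraction(inside, outside):.1%} in range")
```

With these counts the shares come out at roughly 29% for Agisoft Metashape, 39% for 3DGS+SuGaR and 69% for 2DGS.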

| Model | Completeness | Triangles (original) | Triangles (after outliers removed) | Surface area (m²) | Border edges | Perimeter (m) |
|---|---|---|---|---|---|---|
| Ground Truth | - | 255971 | - | 0.080029 | 425 | 0.370151 |
| Agisoft Metashape | 16.96% | 188267 | 76137 | 0.013567 | 4289 | 3.158630 |
| 3DGS+SuGaR | 99.62% | 69979 | 33096 | 0.079725 | 5146 | 12.443651 |
| 2DGS | 96.43% | 206201 | 141498 | 0.077172 | 642 | 0.722261 |
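The completeness column in the table above appears to be consistent with the ratio between each model's surface area and the ground-truth surface area. That reading is an inference from the tabulated numbers, not a definition quoted from the authors, and the function name below is hypothetical. A minimal sketch of the check:

```python
# Surface areas in square metres, copied from the table above.
GROUND_TRUTH_AREA = 0.080029
AREAS = {
    "Agisoft Metashape": 0.013567,
    "3DGS+SuGaR": 0.079725,
    "2DGS": 0.077172,
}


def completeness_percent(area, reference_area=GROUND_TRUTH_AREA):
    """Reconstructed area as a percentage of the reference area (assumed definition)."""
    if reference_area <= 0:
        raise ValueError("reference area must be positive")
    return 100.0 * area / reference_area


if __name__ == "__main__":
    for name, area in AREAS.items():
        print(f"{name}: {completeness_percent(area):.2f}%")
```

Under this assumption the ratio reproduces the tabulated 99.62% and 96.43%, and gives 16.95% for Agisoft Metashape against the tabulated 16.96%, a difference that is compatible with rounding of the published areas.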

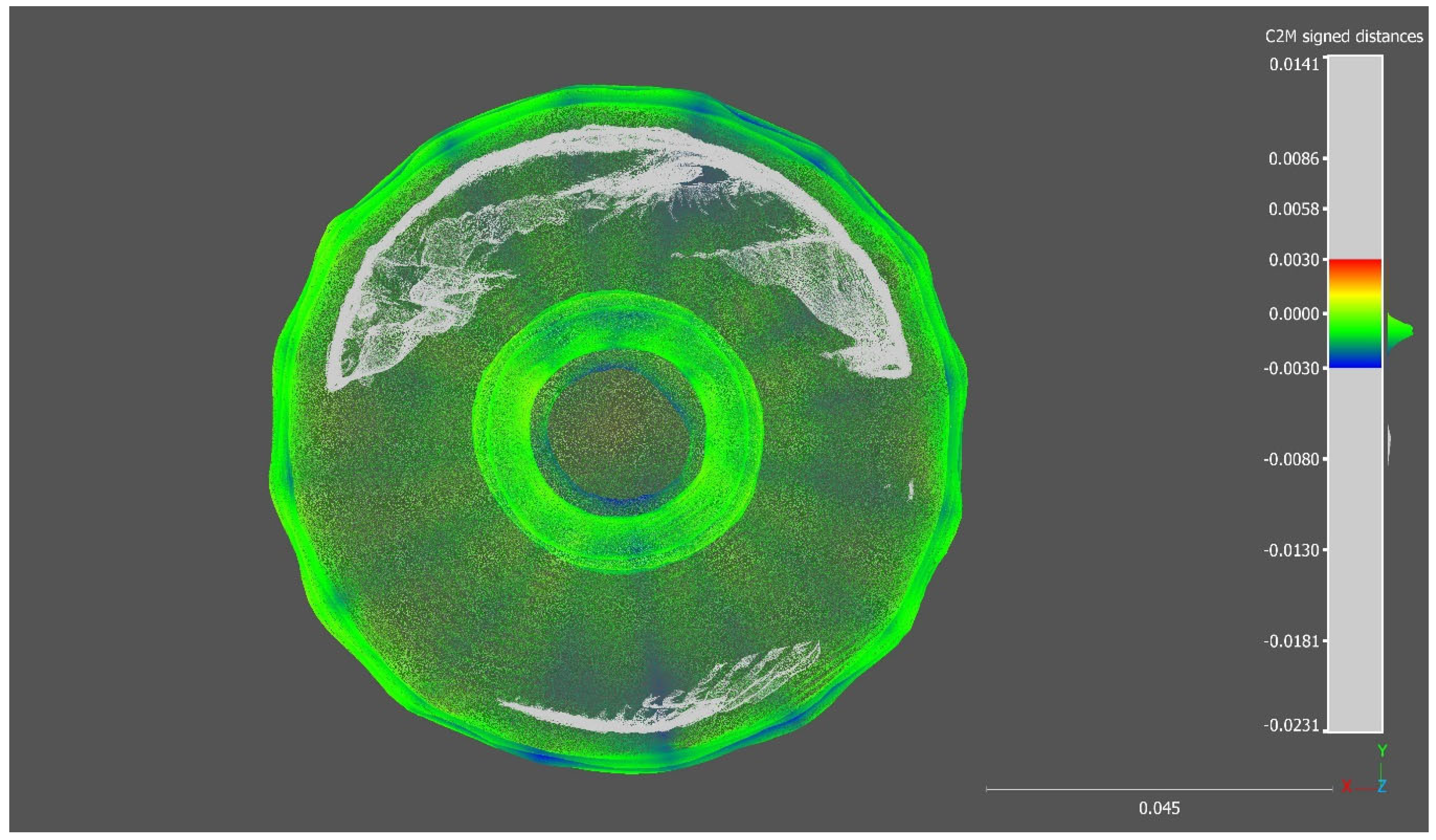

7.2.2. Completeness Evaluation of Reconstructed Models on Slice Sections

7.3. Rendering Metrics PSNR, LPIPS and SSIM for 3DGS and 2DGS

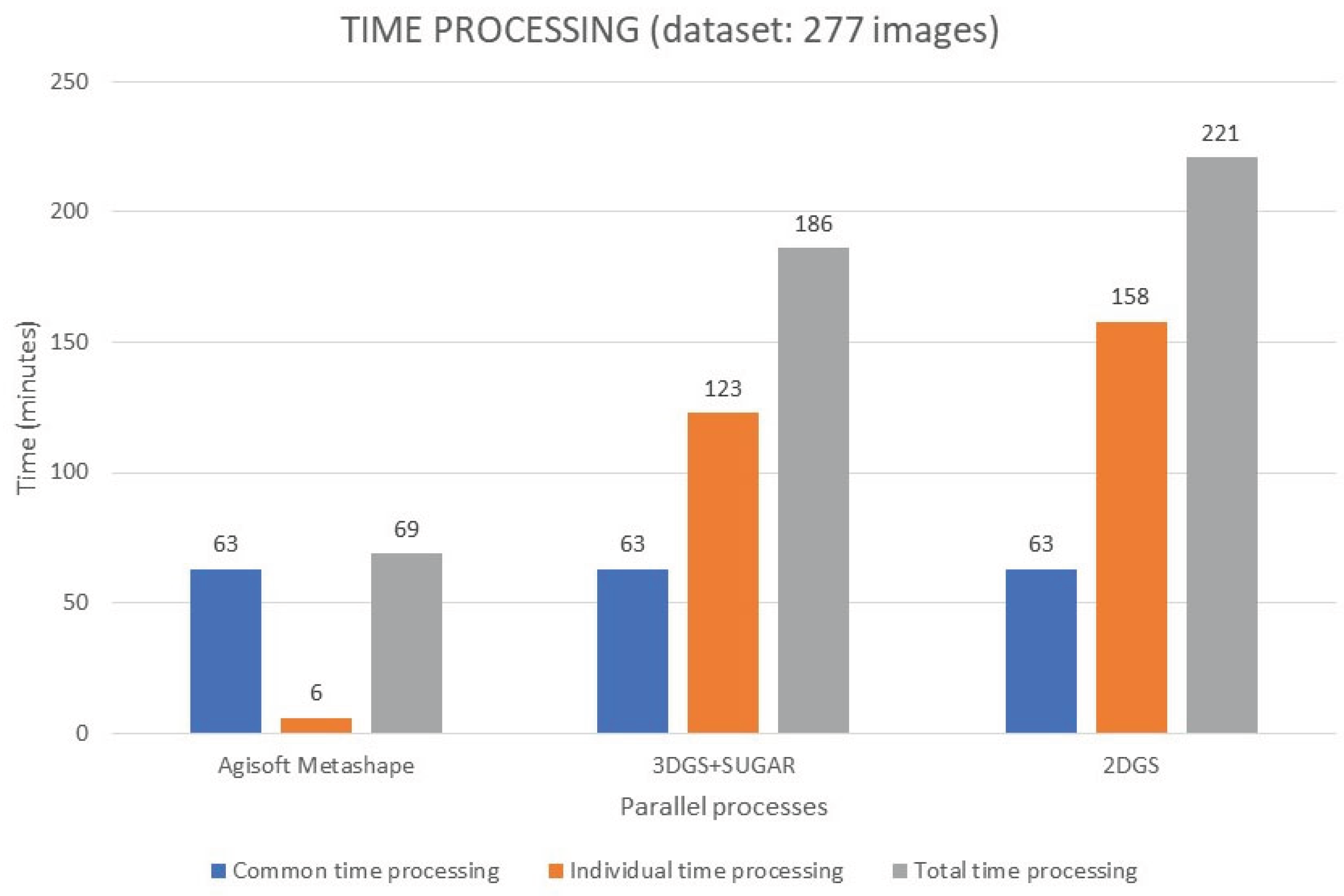

7.4. Time Processing

7.4.1. CPU and GPU Usage

8. Discussions

8.1. Comparative Performance Analysis

8.2. Key Findings and Implications

8.2.1. Challenges of Photogrammetry

8.2.2. Edge and Boundary Artifacts

8.2.3. Internal Surface Generation

8.2.4. Outlier Distribution and Robustness

9. Limitations and Future Work

10. Conclusion

Supplementary Materials

Abbreviations

| Abbreviation | Definition |
|---|---|
| SfM | Structure-from-Motion |
| MVS | Multi-View Stereo |
| 3DGS | 3D Gaussian Splatting |
| 2DGS | 2D Gaussian Splatting |
| CH | Cultural Heritage |
| AI | Artificial Intelligence |
| CV | Computer Vision |
| NeRF | Neural Radiance Field |
| MLP | Multi-Layer Perceptron |
| LPIPS | Learned Perceptual Image Patch Similarity |
| PSNR | Peak Signal-to-Noise Ratio |
| SSIM | Structural Similarity Index Measure |
| SuGaR | Surface-Aligned Gaussian Splatting for Efficient 3D Mesh Reconstruction and High-Quality Mesh Rendering |
| GS2Mesh | Gaussian Splatting-to-Mesh |
| GOF | Gaussian Opacity Fields |
| MVG-Splatting | Multi-View Guided Gaussian Splatting |
| GPU | Graphics Processing Unit |
| CPU | Central Processing Unit |

| Metric | Range | Interpretation |
|---|---|---|
| SSIM | > 0.98 | Excellent structural similarity |
| | 0.95 – 0.98 | High quality |
| | 0.90 – 0.95 | Good quality |
| | < 0.90 | Noticeable structural degradation |
| PSNR (dB) | > 40 | Very high visual fidelity |
| | 35 – 40 | High quality |
| | 30 – 35 | Medium / acceptable quality |
| | < 30 | Perceptible degradation |
| LPIPS | < 0.05 | Excellent perceptual similarity |
| | 0.05 – 0.10 | High perceptual quality |
| | 0.10 – 0.20 | Medium quality |
| | > 0.20 | Low perceptual fidelity / perceptible error |

| Name | Image dimensions | Focal length | Sensor dimensions |
|---|---|---|---|
| Nikon D750 | 6016 × 4016 pixels | 50 mm | W = 36.0 mm, H = 23.9 mm |

| Aperture | Shutter speed range (Aperture priority mode) | ISO | Format |
|---|---|---|---|
| f/16 | 1/8 – 1/10 s | 200 | RAW |
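Two derived quantities follow directly from the camera parameters tabulated above: the pixel pitch on the sensor and the angular field of view. The sketch below assumes an ideal pinhole camera focused at infinity and ignores lens distortion; the function names are hypothetical, and the derived values are not figures reported by the authors.

```python
import math

# Values copied from the camera table above (Nikon D750, 50 mm lens).
IMAGE_W_PX, IMAGE_H_PX = 6016, 4016
SENSOR_W_MM, SENSOR_H_MM = 36.0, 23.9
FOCAL_MM = 50.0


def pixel_pitch_um(sensor_mm, pixels):
    """Physical size of one pixel along one axis, in micrometres."""
    return 1000.0 * sensor_mm / pixels


def field_of_view_deg(sensor_mm, focal_mm):
    """Full field of view along one axis under the pinhole assumption."""
    return math.degrees(2.0 * math.atan(sensor_mm / (2.0 * focal_mm)))


if __name__ == "__main__":
    print(f"pixel pitch (horizontal): {pixel_pitch_um(SENSOR_W_MM, IMAGE_W_PX):.2f} um")
    print(f"horizontal FOV: {field_of_view_deg(SENSOR_W_MM, FOCAL_MM):.1f} deg")
    print(f"vertical FOV: {field_of_view_deg(SENSOR_H_MM, FOCAL_MM):.1f} deg")
```

Under these assumptions the pixel pitch is close to 6 µm and the horizontal field of view close to 40°.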

| Accuracy | Limit key points | Limit tie points | Generic preselection | Reference preselection | Adaptive camera model fitting | Exclude stationary tie points | Guided image matching |
|---|---|---|---|---|---|---|---|
| High | 0 | 0 | No | No | No | Yes | No |

| Source data | Surface type | Quality | Face count | Interpolation | Depth filtering |
|---|---|---|---|---|---|
| Depth Maps | Arbitrary | High | High | Enabled | Mild |

| Method | SSIM | PSNR (dB) | LPIPS | Visual Fidelity | Mesh Accuracy |
|---|---|---|---|---|---|
| 3DGS | 0.9768 | 36.06 | 0.0629 | ☑ Higher (more realistic) | ✖ Low degree of conformity |
| 2DGS | 0.9734 | 34.91 | 0.0696 | ☑ Good, slightly worse | ☑ High degree of conformity |
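The scores in the table above can be mapped onto the interpretation bands given in the SSIM/PSNR/LPIPS range table. The sketch below is illustrative only: the thresholds and labels are copied from that range table, while the function name and the handling of values that fall exactly on a boundary (assigned here to the lower-quality band) are assumptions, since the range table does not specify them.

```python
# (thresholds from best to worst, label when no threshold is met, higher_is_better)
BANDS = {
    "SSIM": (
        [(0.98, "Excellent structural similarity"),
         (0.95, "High quality"),
         (0.90, "Good quality")],
        "Noticeable structural degradation",
        True,
    ),
    "PSNR": (
        [(40.0, "Very high visual fidelity"),
         (35.0, "High quality"),
         (30.0, "Medium / acceptable quality")],
        "Perceptible degradation",
        True,
    ),
    "LPIPS": (
        [(0.05, "Excellent perceptual similarity"),
         (0.10, "High perceptual quality"),
         (0.20, "Medium quality")],
        "Low perceptual fidelity / perceptible error",
        False,
    ),
}


def interpret(metric, value):
    """Return the band label for a metric value (boundary handling is an assumption)."""
    thresholds, fallback, higher_is_better = BANDS[metric]
    for threshold, label in thresholds:
        if (value > threshold) if higher_is_better else (value < threshold):
            return label
    return fallback


# Scores copied from the results table above.
SCORES = {
    "3DGS": {"SSIM": 0.9768, "PSNR": 36.06, "LPIPS": 0.0629},
    "2DGS": {"SSIM": 0.9734, "PSNR": 34.91, "LPIPS": 0.0696},
}

if __name__ == "__main__":
    for method, scores in SCORES.items():
        for metric, value in scores.items():
            print(f"{method} {metric}={value}: {interpret(metric, value)}")
```

Read this way, both methods fall in the same SSIM and LPIPS bands, and the only band change is in PSNR, where 3DGS lands in "High quality" and 2DGS in "Medium / acceptable quality".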

| Software/Process | CPU Usage | GPU Usage | Notes |
|---|---|---|---|
| Agisoft Metashape | ☑ Important | ☑ Important | CPU used for feature matching, mesh generation, and texturing. GPU accelerates depth maps, point cloud, and rendering. |
| 3DGS + SuGaR | ✖ Minimal | ☑ Primary | Intensive GPU computation for 3D Gaussian management. CPU marginally used for coordination. |
| 2DGS | ✖ Minimal | ☑ Primary | Uses GPU for rasterization and Gaussian optimization. CPU involved only in data management. |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).