Submitted: 16 May 2025

Posted: 16 May 2025


Abstract

Keywords:

1. Introduction

2. Harmony Tokenization Methods

2.1. Melody and Harmony Tokenization Foundations

- <bar> to indicate a new bar,

- <rest> to indicate a rest,

- position_BxSD for note onset time,

- P:X for MIDI pitch.
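The melody vocabulary above can be illustrated with a short Python sketch. It assumes that notes arrive as (bar, onset in beats, MIDI pitch) triples, that the SD part of position_BxSD is the beat subdivision written in hundredths (so the footnoted subdivisions become 00, 16, 25, 33, 50, 66, 75, 83, which is consistent with the position_0x00 and position_2x00 tokens in the example table), and that each pitch or rest token follows its position token. The helper names position_token and tokenize_melody are hypothetical.

```python
from typing import List, Optional, Tuple

# Beat subdivisions from the paper's footnote. Encoding each one in hundredths
# (00, 16, 25, ...) as the "SD" part of position_BxSD is an ASSUMPTION.
SUBDIVISIONS = [0.0, 0.16, 0.25, 0.33, 0.5, 0.66, 0.75, 0.83]

# (bar index, onset in beats from the start of the bar, MIDI pitch or None for a rest)
Note = Tuple[int, float, Optional[int]]


def position_token(onset_in_bar: float) -> str:
    """Quantize an onset (in beats) to a hypothetical position_BxSD token."""
    beat = int(onset_in_bar)
    frac = onset_in_bar - beat
    # Snap to the nearest allowed subdivision; the extra 1.0 candidate lets a
    # late onset roll over to the next beat (not carried into the next bar here).
    sub = min(SUBDIVISIONS + [1.0], key=lambda s: abs(s - frac))
    if sub == 1.0:
        beat, sub = beat + 1, 0.0
    return f"position_{beat}x{round(sub * 100):02d}"


def tokenize_melody(notes: List[Note]) -> List[str]:
    """Emit <bar>, position, and P:X / <rest> tokens in time order."""
    tokens: List[str] = []
    current_bar = -1
    for bar, onset, pitch in sorted(notes, key=lambda n: (n[0], n[1])):
        # One <bar> token per bar, including empty bars that are skipped over.
        while current_bar < bar:
            tokens.append("<bar>")
            current_bar += 1
        tokens.append(position_token(onset))
        tokens.append("<rest>" if pitch is None else f"P:{pitch}")
    return tokens


melody = [(0, 0.0, 60), (0, 1.0, 62), (0, 2.0, None), (1, 0.5, 64)]
print(" ".join(tokenize_melody(melody)))
# <bar> position_0x00 P:60 position_1x00 P:62 position_2x00 <rest> <bar> position_0x50 P:64
```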

2.2. Harmony Tokenization

- ChordSymbol: Each token directly encodes the chord symbol as it appears in the lead sheet, e.g., C:maj7. To ensure homogeneity between different notations of the same chord (e.g., Cmaj and C▵), all chords are mapped to their equivalent MIR_eval [30] symbol according to their pitch-class content.

- RootType: Separate tokens are used for the root and the quality of the chord, e.g., C and maj7.

- PitchClass: Chord symbols are not used; instead, the chord is represented as a set of pitch classes. For example, a Cmaj7 chord is tokenized as chord_pc_0, chord_pc_4, chord_pc_7, and chord_pc_11.

- RootPC: Similar to the previous method, but includes a dedicated token for the root pitch class, e.g., chord_root_0, chord_pc_4, chord_pc_7, chord_pc_11. This helps disambiguate chords whose pitch-class sets are identical (e.g., Cmaj6 and Am7, which both contain pitch classes {0, 4, 7, 9}).
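The four harmony tokenizations can be contrasted with a minimal sketch. The chord lookup tables, the helper names, and the restriction to six chord qualities are illustrative assumptions; the root-upward ordering of pitch classes and the chord_root_ prefix follow the example table of tokenized sequences, not any published code.

```python
from typing import Callable, Dict, List, Sequence, Tuple

# Hypothetical lookup tables for illustration; a full system would cover the
# whole MIR_eval chord vocabulary rather than these few qualities.
NOTE_TO_PC: Dict[str, int] = {
    "C": 0, "C#": 1, "Db": 1, "D": 2, "D#": 3, "Eb": 3, "E": 4, "F": 5,
    "F#": 6, "Gb": 6, "G": 7, "G#": 8, "Ab": 8, "A": 9, "A#": 10, "Bb": 10, "B": 11,
}
QUALITY_INTERVALS: Dict[str, List[int]] = {
    "maj": [0, 4, 7], "min": [0, 3, 7], "7": [0, 4, 7, 10],
    "maj7": [0, 4, 7, 11], "min7": [0, 3, 7, 10], "maj6": [0, 4, 7, 9],
}


def chord_pcs(root: str, quality: str) -> List[int]:
    """Pitch classes listed upward from the root, as in the example table."""
    r = NOTE_TO_PC[root]
    return [(r + i) % 12 for i in QUALITY_INTERVALS[quality]]


def chord_symbol_tokens(root: str, quality: str) -> List[str]:
    return [f"{root}:{quality}"]


def root_type_tokens(root: str, quality: str) -> List[str]:
    return [root, quality]


def pitch_class_tokens(root: str, quality: str) -> List[str]:
    return [f"chord_pc_{pc}" for pc in chord_pcs(root, quality)]


def root_pc_tokens(root: str, quality: str) -> List[str]:
    pcs = chord_pcs(root, quality)
    # The root gets its own token type; the remaining pitch classes follow it.
    return [f"chord_root_{pcs[0]}"] + [f"chord_pc_{pc}" for pc in pcs[1:]]


Bar = Sequence[Tuple[str, str, str]]  # (position token, root, quality) per chord


def tokenize_harmony(bars: Sequence[Bar], chord_fn: Callable[[str, str], List[str]]) -> List[str]:
    """Prefix with <h>, then emit <bar>, position and chord tokens per bar."""
    tokens = ["<h>"]
    for bar in bars:
        tokens.append("<bar>")
        for position, root, quality in bar:
            tokens.append(position)
            tokens.extend(chord_fn(root, quality))
    return tokens


progression = [[("position_0x00", "C", "maj")],
               [("position_0x00", "E", "min"), ("position_2x00", "G", "maj")]]
for fn in (chord_symbol_tokens, root_type_tokens, pitch_class_tokens, root_pc_tokens):
    print(" ".join(tokenize_harmony(progression, fn)))
```

With these tables, pitch_class_tokens("C", "maj6") and pitch_class_tokens("A", "min7") contain the same tokens when viewed as sets, whereas root_pc_tokens starts the two chords with different chord_root_ tokens.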

3. Experimental Setup, Models, Data and Evaluation Approach

- Masked Language Modeling (MLM) is a self-supervised learning objective used to train transformer-based encoders such as BERT [31] and RoBERTa [32]. In MLM, a portion of the input tokens (typically 15% in BERT) is randomly masked and replaced with a special <mask> token, and the model is trained to predict the original tokens from the surrounding context. Unlike traditional left-to-right language models, MLM learns from bidirectional context, so each prediction can draw on tokens both before and after the masked position. In the current study we employ the RoBERTa strategy, which removes the Next Sentence Prediction (NSP) task and focuses only on predicting the <mask> tokens.

- Encoder-decoder melody harmonization performed by BART [33]. In this task the melody is given as input to the encoder, and the decoder generates the harmony autoregressively until a stopping criterion is met (an end-of-sequence token is generated or the maximum number of tokens is reached).

- Decoder-only melody harmonization performed by GPT-2 [34]. This follows an autoregressive approach in which harmony tokens are generated sequentially from left to right: given an initial prompt of melody tokens, the model predicts each next harmony token from all preceding tokens, iterating until a stopping criterion is met (an end-of-sequence token is generated or the maximum number of tokens is reached). Transformer architectures for melodic harmonization in the literature have so far typically been encoder-decoder models; we extend the study to the possibilities offered by decoder-only architectures.

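The masking step of the MLM objective can be sketched as follows, assuming a harmony-only token sequence for brevity. The sketch always substitutes <mask> (the 80/10/10 replacement scheme of BERT is deliberately omitted), and keeping <h> unmasked is a hypothetical choice; mask_tokens is an illustrative name, not the authors' code.

```python
import random
from typing import List, Optional, Sequence, Tuple

MASK = "<mask>"


def mask_tokens(
    tokens: Sequence[str],
    mask_prob: float = 0.15,
    rng: Optional[random.Random] = None,
    protected: Tuple[str, ...] = ("<h>",),
) -> Tuple[List[str], List[Optional[str]]]:
    """Return (model inputs, labels); labels are None where no loss is computed."""
    rng = rng or random.Random()
    inputs: List[str] = []
    labels: List[Optional[str]] = []
    for tok in tokens:
        if tok not in protected and rng.random() < mask_prob:
            inputs.append(MASK)
            labels.append(tok)   # the model must recover this token
        else:
            inputs.append(tok)
            labels.append(None)  # position ignored by the loss
    return inputs, labels


# Calling mask_tokens again for every epoch yields a fresh mask pattern,
# in the spirit of RoBERTa's dynamic masking.
harmony = "<h> <bar> position_0x00 C:maj <bar> position_0x00 E:min position_2x00 G:maj".split()
inputs, labels = mask_tokens(harmony, rng=random.Random(0))
print(inputs)
```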

- Token-Based Metrics: Measure internal consistency and structural validity of generated token sequences.

- Symbolic Music Metrics: Capture harmonic, melodic, and rhythmic attributes of the generated sequences, independent of exact token alignment with ground truth.

- How closely do generated token sequences align with the ground truth?

- Are the generated chord progressions musically coherent, harmonically accurate, and rhythmically appropriate?

- How effectively do BART and GPT-2 leverage different tokenizations to generate high-quality harmonizations?

3.1. Token Metrics

Duplicate Ratio (DR)

Token Consistency Ratio (TCR)

3.2. Symbolic Music Metrics

3.2.1. Chord Progression Coherence and Diversity

Chord Histogram Entropy (CHE)

Chord Coverage (CC)

Chord Tonal Distance (CTD)
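The formal definitions of these metrics are not reproduced in this excerpt. The sketch below follows formulations commonly used in melody-harmonization evaluation (e.g., Yeh et al.) and should be read as an assumption rather than the authors' exact code: CHE as the Shannon entropy of a piece's chord-label histogram (natural logarithm assumed), CC as the number of distinct chord labels, and CTD as the mean Euclidean distance between the 6-D tonal centroids of Harte et al. for consecutive chords, with every chord pitch class weighted equally.

```python
import math
from collections import Counter
from typing import List, Sequence


def chord_histogram_entropy(chords: Sequence[str]) -> float:
    """Shannon entropy (natural log, assumed) of the chord-label histogram."""
    counts = Counter(chords)
    total = sum(counts.values())
    return -sum((c / total) * math.log(c / total) for c in counts.values())


def chord_coverage(chords: Sequence[str]) -> int:
    """Number of distinct chord labels used in a piece."""
    return len(set(chords))


# Tonal centroid of Harte et al.: circle of fifths, minor thirds, major thirds.
RADII = (1.0, 1.0, 0.5)
ANGLES = (7 * math.pi / 6, 3 * math.pi / 2, 2 * math.pi / 3)


def tonal_centroid(pitch_classes: Sequence[int]) -> List[float]:
    """6-D centroid of a chord, each pitch class weighted equally."""
    n = len(pitch_classes)
    vec: List[float] = []
    for r, a in zip(RADII, ANGLES):
        vec.append(sum(r * math.sin(pc * a) for pc in pitch_classes) / n)
        vec.append(sum(r * math.cos(pc * a) for pc in pitch_classes) / n)
    return vec


def tonal_distance(a: Sequence[int], b: Sequence[int]) -> float:
    """Euclidean distance between the tonal centroids of two chords."""
    return math.dist(tonal_centroid(a), tonal_centroid(b))


def chord_tonal_distance(progression: Sequence[Sequence[int]]) -> float:
    """Mean tonal distance between consecutive chords (0.0 if fewer than two)."""
    pairs = list(zip(progression, progression[1:]))
    if not pairs:
        return 0.0
    return sum(tonal_distance(a, b) for a, b in pairs) / len(pairs)


print(chord_histogram_entropy(["C:maj", "G:maj", "C:maj", "A:min"]))
print(chord_tonal_distance([[0, 4, 7], [7, 11, 2], [9, 0, 4]]))
```

Under these definitions, a C major triad lies farther from F# major than from G major in tonal-centroid space, matching the intuition that the measure tracks harmonic distance.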

3.2.2. Chord/Melody Harmonicity

Chord Tone to non-Chord Tone Ratio (CTnCTR)

Pitch Consonance Score (PCS)

Melody-Chord Tonal Distance (MCTD)
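Two of the chord/melody metrics can likewise be sketched under commonly used definitions (again an assumption about the exact formulation). CTnCTR = (n_c + n_p) / (n_c + n_n), where n_c and n_n count chord and non-chord melody tones and n_p counts non-chord tones followed by a note within two semitones. PCS scores each melody-note/chord-tone interval +1 for unison, thirds, perfect fifth and sixths, 0 for a perfect fourth, and -1 otherwise. For brevity, each melody note is paired with the chord sounding at its onset, and PCS is averaged per note rather than per sixteenth-note frame.

```python
from typing import AbstractSet, List, Sequence

CONSONANT = {0, 3, 4, 7, 8, 9}  # unison/octave, minor/major thirds, perfect fifth, sixths
NEUTRAL = {5}                   # perfect fourth


def ctnctr(melody: Sequence[int], chords: Sequence[AbstractSet[int]]) -> float:
    """(n_c + n_p) / (n_c + n_n) over melody notes, each paired with its chord."""
    n_c = n_n = n_p = 0
    for i, (pitch, pcs) in enumerate(zip(melody, chords)):
        if pitch % 12 in pcs:
            n_c += 1
        else:
            n_n += 1
            # "Proper" non-chord tone: the next melody note lies within two semitones.
            if i + 1 < len(melody) and abs(melody[i + 1] - pitch) <= 2:
                n_p += 1
    total = n_c + n_n
    return (n_c + n_p) / total if total else 0.0


def pitch_consonance_score(melody: Sequence[int], chords: Sequence[AbstractSet[int]]) -> float:
    """Mean interval score over all (melody note, chord pitch class) pairs."""
    scores: List[int] = []
    for pitch, pcs in zip(melody, chords):
        for pc in pcs:
            interval = (pitch - pc) % 12
            scores.append(1 if interval in CONSONANT else 0 if interval in NEUTRAL else -1)
    return sum(scores) / len(scores) if scores else 0.0


c_major = {0, 4, 7}
print(ctnctr([60, 62, 64, 65], [c_major] * 4))
print(pitch_consonance_score([60, 62, 64, 65], [c_major] * 4))
```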

3.2.3. Harmonic Rhythm Coherence and Diversity

Harmonic Rhythm Histogram Entropy (HRHE)

Harmonic Rhythm Coverage (HRC)

Chord Beat Strength (CBS)

- Score 0 if a chord occurs exactly at the start of the measure (onset 0.0).

- Score 1 if it falls on any other beat (e.g., onsets 1.0, 2.0, 3.0 in a 4/4 measure).

- Score 2 if placed on a half-beat (e.g., 0.5, 1.5, etc.).

- Score 3 if on a quarter-beat subdivision (e.g., 0.25, 0.75).

- Score 4 otherwise.
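The CBS scoring rules translate directly into code. In this sketch, onsets are measured in beats from the start of the measure, a small tolerance absorbs floating-point error, and the piece-level CBS is assumed to be the mean score over all chord onsets (the averaging step is an assumption). Lower values indicate chords placed on metrically stronger positions.

```python
from typing import Sequence


def beat_strength_score(onset: float, tol: float = 1e-6) -> int:
    """Score one chord onset, given in beats from the start of its measure."""
    def on_grid(step: float) -> bool:
        ratio = onset / step
        return abs(ratio - round(ratio)) < tol

    if abs(onset) < tol:
        return 0  # start of the measure
    if on_grid(1.0):
        return 1  # any other beat
    if on_grid(0.5):
        return 2  # half-beat
    if on_grid(0.25):
        return 3  # quarter-beat
    return 4      # anything else, e.g. triplet positions


def chord_beat_strength(onsets: Sequence[float]) -> float:
    """Mean score over all chord onsets of a piece (0.0 for no chords)."""
    return sum(beat_strength_score(o) for o in onsets) / len(onsets) if onsets else 0.0


print(chord_beat_strength([0.0, 2.0, 0.0, 1.5]))
```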

3.2.4. Fréchet Audio Distance Evaluation

4. Results

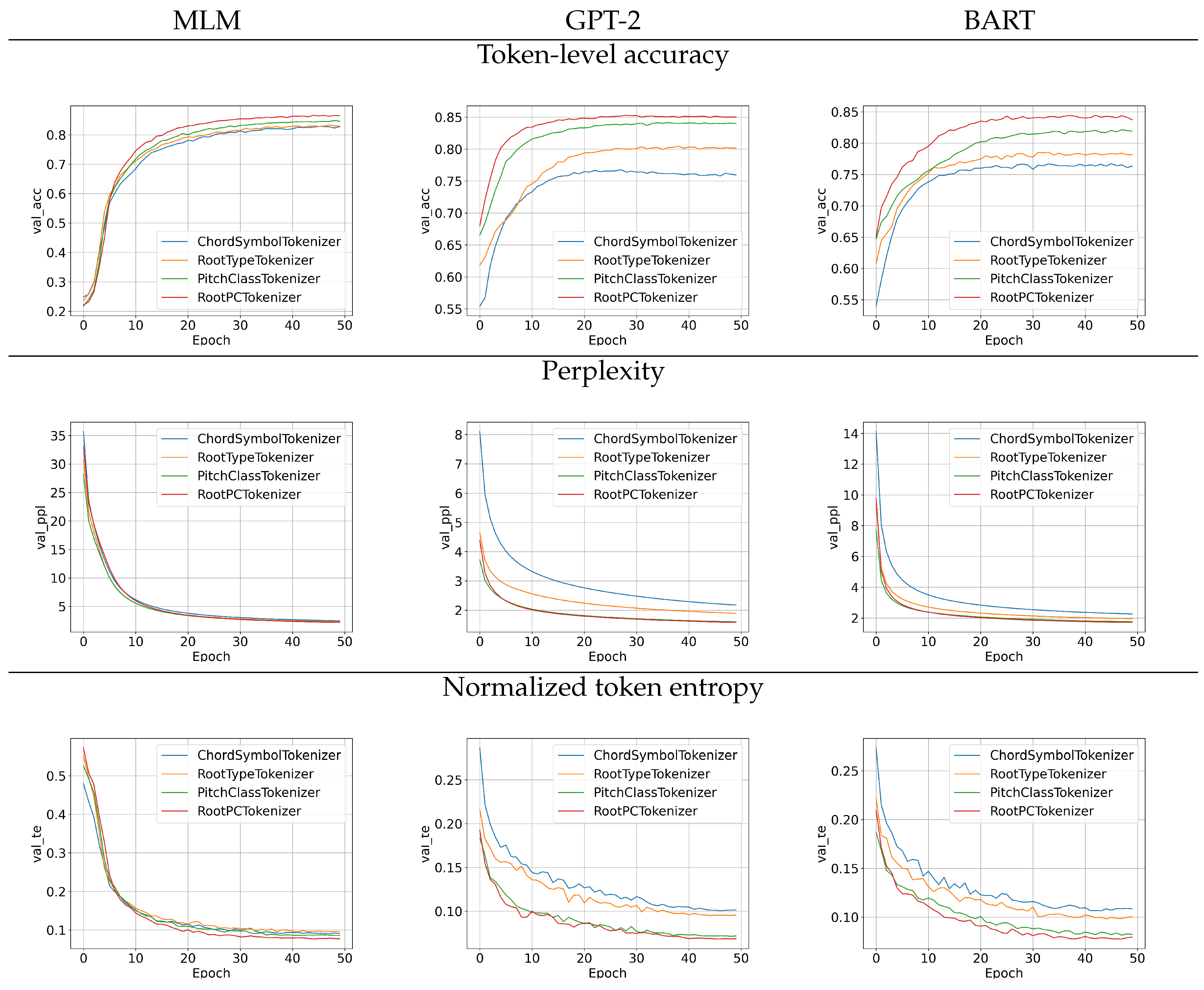

4.1. Training Analysis

4.1.1. Information-Theoretic Considerations

4.1.2. Training Convergence Analysis

- correct bar: The bar tokens are predicted correctly.

- correct new chord: A position_X token is correctly placed, regardless of the specific position value. This indicates that the model correctly identified the onset of a new chord (rather than a new bar or additional pitch), irrespective of the precise timing.

- correct position: The model correctly identifies both the presence and the timing of a new chord.

- correct chord: The complete chord structure is accurately predicted. For the PitchClass tokenizer, which lacks root information, this refers to correctly predicting the full set of pitch classes that constitute the chord.

- correct root: The root note of the chord is correctly predicted. This criterion is not applicable to the PitchClass tokenizer.
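The first three criteria can be computed from aligned target/prediction pairs at the masked positions. The hypothetical helper below assumes that alignment is already available and reports NaN when no target of a given type was masked; correct chord and correct root are left out because grouping tokens into chords differs per tokenizer.

```python
import math
from typing import Dict, List, Sequence


def _rate(hits: List[bool]) -> float:
    return sum(hits) / len(hits) if hits else math.nan


def convergence_scores(targets: Sequence[str], predictions: Sequence[str]) -> Dict[str, float]:
    """Accuracy at masked positions, grouped by the type of the target token."""
    pairs = list(zip(targets, predictions))
    # correct bar: masked <bar> tokens predicted as <bar>.
    bar = [p == "<bar>" for t, p in pairs if t == "<bar>"]
    # correct new chord: any position token where a position token was expected.
    new_chord = [p.startswith("position_") for t, p in pairs if t.startswith("position_")]
    # correct position: the exact position token.
    position = [p == t for t, p in pairs if t.startswith("position_")]
    return {
        "correct bar": _rate(bar),
        "correct new chord": _rate(new_chord),
        "correct position": _rate(position),
    }


print(convergence_scores(
    ["<bar>", "position_0x00", "C:maj", "position_2x00"],
    ["<bar>", "position_0x00", "C:min", "position_1x00"],
))
```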

4.2. Melodic Harmonization Generation Results

4.2.1. Token and Symbolic Music Metrics

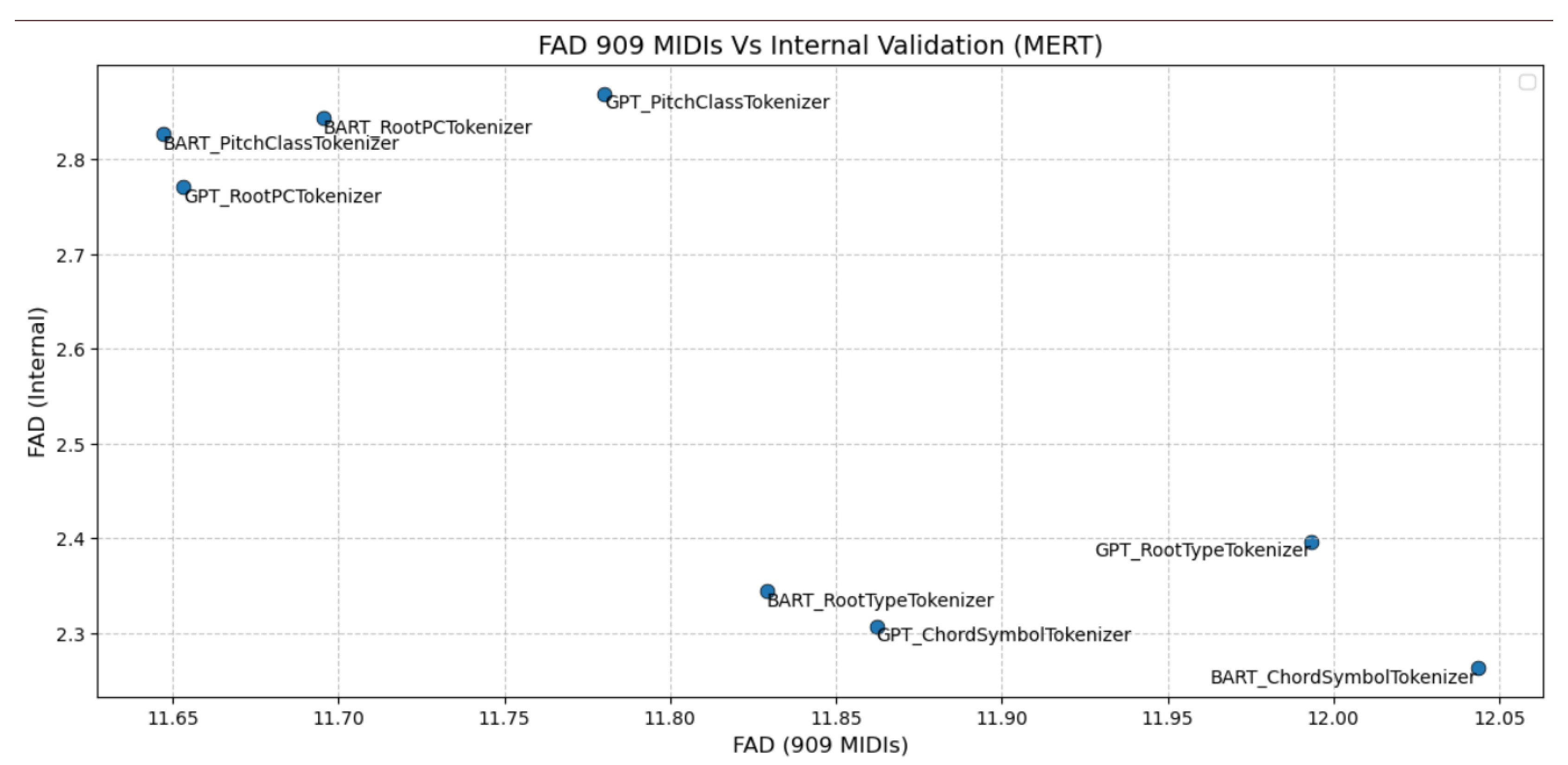

4.2.2. FAD Results

| Model + Tokenizer | FAD (POP909, MERT) | FAD (internal, MERT) |
|---|---|---|
| BART_PitchClassTokenizer | 11.6472 | 2.8262 |
| BART_RootPCTokenizer | 11.6954 | 2.8432 |
| BART_RootTypeTokenizer | 11.8291 | 2.3438 |
| BART_ChordSymbolTokenizer | 12.0435 | 2.2630 |
| GPT_PitchClassTokenizer | 11.7803 | 2.8694 |
| GPT_RootPCTokenizer | 11.6531 | 2.7705 |
| GPT_RootTypeTokenizer | 11.9934 | 2.3962 |
| GPT_ChordSymbolTokenizer | 11.8622 | 2.3069 |
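FAD fits a Gaussian to each set of audio embeddings and reports the Fréchet distance ||mu_r - mu_g||^2 + Tr(Sigma_r + Sigma_g - 2 (Sigma_r Sigma_g)^(1/2)). The NumPy-only sketch below computes that quantity for two embedding matrices; extracting MERT embeddings from rendered audio and choosing the reference sets are outside its scope, and the function name is hypothetical (the tooling the authors used, such as that of Gui et al., may differ in details).

```python
import numpy as np


def frechet_distance(emb_a: np.ndarray, emb_b: np.ndarray) -> float:
    """Frechet distance between Gaussians fitted to two (n_samples, dim) embedding sets."""
    mu_a, mu_b = emb_a.mean(axis=0), emb_b.mean(axis=0)
    cov_a = np.cov(emb_a, rowvar=False)
    cov_b = np.cov(emb_b, rowvar=False)
    # Symmetric square root of cov_a via its eigendecomposition (cov_a is PSD).
    w, v = np.linalg.eigh(cov_a)
    sqrt_a = (v * np.sqrt(np.clip(w, 0.0, None))) @ v.T
    # Tr((cov_a cov_b)^(1/2)) equals the sum of the square roots of the
    # eigenvalues of sqrt_a @ cov_b @ sqrt_a (same nonzero spectrum).
    middle = sqrt_a @ cov_b @ sqrt_a
    middle = (middle + middle.T) / 2.0  # remove round-off asymmetry
    tr_sqrt = np.sum(np.sqrt(np.clip(np.linalg.eigvalsh(middle), 0.0, None)))
    diff = mu_a - mu_b
    return float(diff @ diff + np.trace(cov_a) + np.trace(cov_b) - 2.0 * tr_sqrt)


rng = np.random.default_rng(0)
reference = rng.normal(size=(500, 8))
generated = rng.normal(loc=0.5, size=(500, 8))
print(frechet_distance(reference, generated))
```

Working with eigendecompositions of symmetric matrices avoids the complex round-off that a general matrix square root can introduce.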

5. Conclusions

Author Contributions

Funding

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Sennrich, R.; Haddow, B.; Birch, A. Neural machine translation of rare words with subword units. arXiv preprint arXiv:1508.07909 2015.

- Kudo, T.; Richardson, J. SentencePiece: A simple and language independent subword tokenizer and detokenizer for neural text processing. arXiv preprint arXiv:1808.06226 2018.

- Raffel, C.; Shazeer, N.; Roberts, A.; Lee, K.; Narang, S.; Matena, M.; Zhou, Y.; Li, W.; Liu, P.J. Exploring the limits of transfer learning with a unified text-to-text transformer. Journal of Machine Learning Research 2020, 21, 1–67.

- Brown, T.; Mann, B.; Ryder, N.; Subbiah, M.; Kaplan, J.D.; Dhariwal, P.; Neelakantan, A.; Shyam, P.; Sastry, G.; Askell, A.; et al. Language models are few-shot learners. Advances in Neural Information Processing Systems 2020, 33, 1877–1901.

- Kaplan, J.; McCandlish, S.; Henighan, T.; Brown, T.B.; Chess, B.; Child, R.; Gray, S.; Radford, A.; Wu, J.; Amodei, D. Scaling laws for neural language models. arXiv preprint arXiv:2001.08361 2020.

- Agosti, G. Transformer networks for the modelling of jazz harmony. Master’s thesis, Politecnico di Milano, Milano, Italy, 2021.

- Hahn, S.; Yin, J.; Zhu, R.; Xu, W.; Jiang, Y.; Mak, S.; Rudin, C. SentHYMNent: An Interpretable and Sentiment-Driven Model for Algorithmic Melody Harmonization. In Proceedings of the 30th ACM SIGKDD Conference on Knowledge Discovery and Data Mining, 2024, pp. 5050–5060.

- Cambouropoulos, E.; Kaliakatsos-Papakostas, M.A.; Tsougras, C. An idiom-independent representation of chords for computational music analysis and generation. In Proceedings of the Joint 40th International Computer Music Conference (ICMC) and 11th Sound and Music Computing (SMC) Conference (ICMC-SMC2014), 2014.

- Kaliakatsos-Papakostas, M.; Katsiavalos, A.; Tsougras, C.; Cambouropoulos, E. Harmony in the polyphonic songs of Epirus: Representation, statistical analysis and generation. In Proceedings of the 4th International Workshop on Folk Music Analysis, 2014, pp. 21–28.

- Kaliakatsos-Papakostas, M.A.; Zacharakis, A.I.; Tsougras, C.; Cambouropoulos, E. Evaluating the General Chord Type Representation in Tonal Music and Organising GCT Chord Labels in Functional Chord Categories. In Proceedings of the International Society for Music Information Retrieval (ISMIR) Conference, 2015, pp. 427–433.

- Costa, L.F.; Barchi, T.M.; de Morais, E.F.; Coca, A.E.; Schemberger, E.E.; Martins, M.S.; Siqueira, H.V. Neural networks and ensemble based architectures to automatic musical harmonization: a performance comparison. Applied Artificial Intelligence 2023, 37, 2185849.

- Yeh, Y.C.; Hsiao, W.Y.; Fukayama, S.; Kitahara, T.; Genchel, B.; Liu, H.M.; Dong, H.W.; Chen, Y.; Leong, T.; Yang, Y.H. Automatic melody harmonization with triad chords: A comparative study. Journal of New Music Research 2021, 50, 37–51.

- Chen, Y.W.; Lee, H.S.; Chen, Y.H.; Wang, H.M. SurpriseNet: Melody harmonization conditioning on user-controlled surprise contours. arXiv preprint arXiv:2108.00378 2021.

- Sun, C.E.; Chen, Y.W.; Lee, H.S.; Chen, Y.H.; Wang, H.M. Melody harmonization using orderless NADE, chord balancing, and blocked Gibbs sampling. In Proceedings of the ICASSP 2021-2021 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP). IEEE, 2021, pp. 4145–4149.

- Zeng, T.; Lau, F.C. Automatic melody harmonization via reinforcement learning by exploring structured representations for melody sequences. Electronics 2021, 10, 2469.

- Wu, S.; Yang, Y.; Wang, Z.; Li, X.; Sun, M. Generating chord progression from melody with flexible harmonic rhythm and controllable harmonic density. EURASIP Journal on Audio, Speech, and Music Processing 2024, 2024, 4.

- Wu, S.; Li, X.; Sun, M. Chord-conditioned melody harmonization with controllable harmonicity. In Proceedings of the ICASSP 2023-2023 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP). IEEE, 2023, pp. 1–5.

- Yi, L.; Hu, H.; Zhao, J.; Xia, G. AccoMontage2: A complete harmonization and accompaniment arrangement system. arXiv preprint arXiv:2209.00353 2022.

- Rhyu, S.; Choi, H.; Kim, S.; Lee, K. Translating melody to chord: Structured and flexible harmonization of melody with transformer. IEEE Access 2022, 10, 28261–28273.

- Zhou, J.; Zhu, H.; Wang, X. Choir Transformer: Generating Polyphonic Music with Relative Attention on Transformer (2023).

- Huang, J.; Yang, Y.H. Emotion-driven melody harmonization via melodic variation and functional representation. arXiv preprint arXiv:2407.20176 2024.

- Wu, S.; Wang, Y.; Li, X.; Yu, F.; Sun, M. MelodyT5: A unified score-to-score transformer for symbolic music processing. arXiv preprint arXiv:2407.02277 2024.

- Cholakov, V. AI Enhancer—Harmonizing Melodies of Popular Songs with Sequence-to-Sequence. Master’s thesis, The University of Edinburgh, Edinburgh, Scotland, 2018.

- Huang, C.Z.A.; Vaswani, A.; Uszkoreit, J.; Shazeer, N.; Simon, I.; Hawthorne, C.; Dai, A.M.; Hoffman, M.D.; Dinculescu, M.; Eck, D. Music Transformer, 2018, [arXiv:cs.LG/1809.04281].

- Wang, Z.; Wang, D.; Zhang, Y.; Xia, G. Learning interpretable representation for controllable polyphonic music generation. arXiv preprint arXiv:2008.07122 2020.

- Min, L.; Jiang, J.; Xia, G.; Zhao, J. Polyffusion: A diffusion model for polyphonic score generation with internal and external controls. arXiv preprint arXiv:2307.10304 2023.

- Ji, S.; Yang, X.; Luo, J.; Li, J. RL-Chord: CLSTM-based melody harmonization using deep reinforcement learning. IEEE Transactions on Neural Networks and Learning Systems 2023.

- Lim, H.; Rhyu, S.; Lee, K. Chord generation from symbolic melody using BLSTM networks. arXiv preprint arXiv:1712.01011 2017.

- Ji, S.; Yang, X. Emotion-conditioned melody harmonization with hierarchical variational autoencoder. In Proceedings of the 2023 IEEE International Conference on Systems, Man, and Cybernetics (SMC). IEEE, 2023, pp. 228–233.

- Raffel, C.; McFee, B.; Humphrey, E.J.; Salamon, J.; Nieto, O.; Liang, D.; Ellis, D.P.; Raffel, C.C. MIR_EVAL: A Transparent Implementation of Common MIR Metrics. In Proceedings of the ISMIR, 2014, Vol. 10, p. 2014.

- Liu, Y.; Ott, M.; Goyal, N.; Du, J.; Joshi, M.; Chen, D.; Levy, O.; Lewis, M.; Zettlemoyer, L.; Stoyanov, V. RoBERTa: A robustly optimized BERT pretraining approach. arXiv preprint arXiv:1907.11692 2019.

- Devlin, J.; Chang, M.W.; Lee, K.; Toutanova, K. BERT: Pre-training of deep bidirectional transformers for language understanding. In Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, Volume 1 (Long and Short Papers), 2019, pp. 4171–4186.

- Lewis, M.; Liu, Y.; Goyal, N.; Ghazvininejad, M.; Mohamed, A.; Levy, O.; Stoyanov, V.; Zettlemoyer, L. BART: Denoising sequence-to-sequence pre-training for natural language generation, translation, and comprehension. arXiv preprint arXiv:1910.13461 2019.

- Radford, A.; Wu, J.; Child, R.; Luan, D.; Amodei, D.; Sutskever, I.; et al. Language models are unsupervised multitask learners. OpenAI blog 2019, 1, 9.

- Krumhansl, C.L. Cognitive foundations of musical pitch; Oxford University Press, 2001.

- Fradet, N.; Gutowski, N.; Chhel, F.; Briot, J.P. Byte pair encoding for symbolic music. arXiv preprint arXiv:2301.11975 2023.

- Snover, M.; Dorr, B.; Schwartz, R.; Micciulla, L.; Makhoul, J. A study of translation edit rate with targeted human annotation. In Proceedings of the 7th Conference of the Association for Machine Translation in the Americas: Technical Papers, 2006, pp. 223–231.

- Harte, C.; Sandler, M.; Gasser, M. Detecting harmonic change in musical audio. In Proceedings of the 1st ACM Workshop on Audio and Music Computing Multimedia, 2006, pp. 21–26.

- Kilgour, K.; Zuluaga, M.B.; Roblek, D.; Sharifi, M. Fréchet Audio Distance: A Reference-Free Metric for Evaluating Music Enhancement Algorithms. In Proceedings of the Interspeech 2019, 2019, pp. 2350–2354.

- Wang, Z.; Chen, K.; Jiang, J.; Zhang, Y.; Xu, M.; Dai, S.; Bin, G.; Xia, G. POP909: A Pop-song Dataset for Music Arrangement Generation. In Proceedings of the 21st International Conference on Music Information Retrieval, ISMIR, 2020.

- Li, Y.; Yuan, R.; Zhang, G.; Ma, Y.; Chen, X.; Yin, H.; Lin, C.; Ragni, A.; Benetos, E.; Gyenge, N.; et al. MERT: Acoustic Music Understanding Model with Large-Scale Self-supervised Training, 2023, [arXiv:cs.SD/2306.00107].

- Gui, A.; Liu, S.; Yang, Y.; Yang, L.; Li, Y. Adapting Fréchet Audio Distance for Generative Music Evaluation. In Proceedings of the ICASSP 2024 - 2024 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2024, pp. 1331–1335.

- Deutsch, P. RFC 1951: DEFLATE compressed data format specification version 1.3, 1996.

- Ziv, J.; Lempel, A. A universal algorithm for sequential data compression. IEEE Transactions on Information Theory 1977, 23, 337–343.

- Huffman, D.A. A method for the construction of minimum-redundancy codes. Proceedings of the IRE 1952, 40, 1098–1101.

Footnote 1: This is equivalent to transposing all pieces to C major or C minor.

Footnote 3: The beat subdivisions used are: {0, 0.16, 0.25, 0.33, 0.5, 0.66, 0.75, 0.83}.

| Tokenizer | Example |
|---|---|
| ChordSymbol | <h> <bar> position_0x00 C:maj <bar> position_0x00 E:min position_2x00 G:maj |
| RootType | <h> <bar> position_0x00 C maj <bar> position_0x00 E min position_2x00 G maj |
| PitchClass | <h> <bar> position_0x00 chord_pc_0 chord_pc_4 chord_pc_7 <bar> position_0x00 chord_pc_4 chord_pc_7 chord_pc_11 position_2x00 chord_pc_7 chord_pc_11 chord_pc_2 |
| RootPC | <h> <bar> position_0x00 chord_root_0 chord_pc_4 chord_pc_7 <bar> position_0x00 chord_root_4 chord_pc_7 chord_pc_11 position_2x00 chord_root_7 chord_pc_11 chord_pc_2 |

| Tokenizer | Vocabulary size | Mean length (tokens) | Max length (tokens) | Total compression ratio | Compressed length (ratio × mean length) |
|---|---|---|---|---|---|
| ChordSymbol | 436 | 48.14 | 484 | 0.05926 | 2.8525 |
| RootType | 129 | 66.07 | 659 | 0.04832 | 3.1922 |
| RootPC | 112 | 87.11 | 866 | 0.04140 | 3.6061 |
| PitchClass | 100 | 87.11 | 866 | 0.04138 | 3.6045 |
| MelodyPitch | 195 | 132.46 | 1350 | 0.07501 | 9.9359 |
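In the table above, the last column equals the product of the total compression ratio and the mean sequence length (for ChordSymbol, 0.05926 × 48.14 ≈ 2.853). The sketch below reproduces that kind of computation with Python's zlib (a DEFLATE implementation, in line with the DEFLATE, Lempel-Ziv and Huffman references in the bibliography). Joining tokens with spaces and sequences with newlines, and using compression level 9, are assumptions; the authors' exact procedure may differ, and the function names are hypothetical.

```python
import zlib
from typing import Sequence


def total_compression(sequences: Sequence[Sequence[str]]) -> float:
    """DEFLATE-compressed size over raw size of the whole whitespace-joined corpus."""
    raw = "\n".join(" ".join(seq) for seq in sequences).encode("utf-8")
    return len(zlib.compress(raw, 9)) / len(raw)


def compressed_length(sequences: Sequence[Sequence[str]]) -> float:
    """Total compression ratio scaled by the mean sequence length in tokens."""
    mean_len = sum(len(seq) for seq in sequences) / len(sequences)
    return total_compression(sequences) * mean_len


corpus = [
    "<h> <bar> position_0x00 C:maj <bar> position_0x00 E:min position_2x00 G:maj".split(),
    "<h> <bar> position_0x00 A:min <bar> position_0x00 F:maj position_2x00 G:maj".split(),
]
print(total_compression(corpus), compressed_length(corpus))
```

A lower product suggests that, on average, a piece carries less incompressible information per sequence under that tokenization, which is one way to compare vocabularies of very different sizes.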

| Metric | ChordSymbol | RootType | PitchClass | RootPC |
|---|---|---|---|---|
| BART | | | | |
| correct bar | 0.9363 | 0.9183 | 0.8929 | 0.8585 |
| correct new chord | 0.9092 | 0.9106 | 0.9000 | 0.9111 |
| correct position | 0.8511 | 0.8457 | 0.8364 | 0.8454 |
| correct chord | 0.5848 | 0.5312 | 0.5759 | 0.5795 |
| correct root | 0.6237 | 0.5833 | — | 0.6318 |
| GPT-2 | | | | |
| correct bar | 0.9349 | 0.9152 | 0.9159 | 0.9064 |
| correct new chord | 0.9111 | 0.9072 | 0.9030 | 0.8988 |
| correct position | 0.8542 | 0.8525 | 0.8527 | 0.8502 |
| correct chord | 0.5703 | 0.5527 | 0.5814 | 0.5885 |
| correct root | 0.6168 | 0.5959 | — | 0.6434 |

| Model | Tokenizer | Duplicate Ratio | Token Consistency Ratio |
|---|---|---|---|
| BART | ChordSymbol | 0.02 | 96.23 |
| BART | RootType | 0.03 | 98.89 |
| BART | PitchClass | 0.00 | 99.98 |
| BART | RootPC | 0.01 | 99.20 |
| GPT-2 | ChordSymbol | 0.01 | 98.05 |
| GPT-2 | RootType | 0.01 | 99.09 |
| GPT-2 | PitchClass | 0.00 | 99.92 |
| GPT-2 | RootPC | 0.00 | 99.50 |

| Model | Tokenizer | CHE | CC | CTD | CTnCTR | PCS | MCTD | HRHE | HRC | CBS |
|---|---|---|---|---|---|---|---|---|---|---|
| Ground Truth | | 1.359 | 4.663 | 0.897 | 0.837 | 0.480 | 1.345 | 0.685 | 2.789 | 0.441 |
| BART | ChordSymbol | 0.943 | 2.922 | 0.886 | 0.803 | 0.436 | 1.384 | 0.352 | 1.778 | 0.234 |
| BART | RootType | 1.002 | 3.055 | 0.938 | 0.789 | 0.435 | 1.411 | 0.332 | 1.663 | 0.226 |
| BART | PitchClass | 0.965 | 2.996 | 0.913 | 0.789 | 0.425 | 1.413 | 0.391 | 1.841 | 0.281 |
| BART | RootPC | 0.948 | 2.923 | 0.917 | 0.779 | 0.419 | 1.421 | 0.384 | 1.781 | 0.259 |
| GPT-2 | ChordSymbol | 0.839 | 2.650 | 0.881 | 0.766 | 0.376 | 1.444 | 0.355 | 1.810 | 0.236 |
| GPT-2 | RootType | 0.803 | 2.623 | 0.822 | 0.779 | 0.407 | 1.423 | 0.300 | 1.610 | 0.192 |
| GPT-2 | PitchClass | 0.898 | 2.692 | 0.851 | 0.807 | 0.447 | 1.392 | 0.345 | 1.734 | 0.235 |
| GPT-2 | RootPC | 0.803 | 2.623 | 0.822 | 0.779 | 0.407 | 1.423 | 0.299 | 1.610 | 0.192 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).