4. ML Results

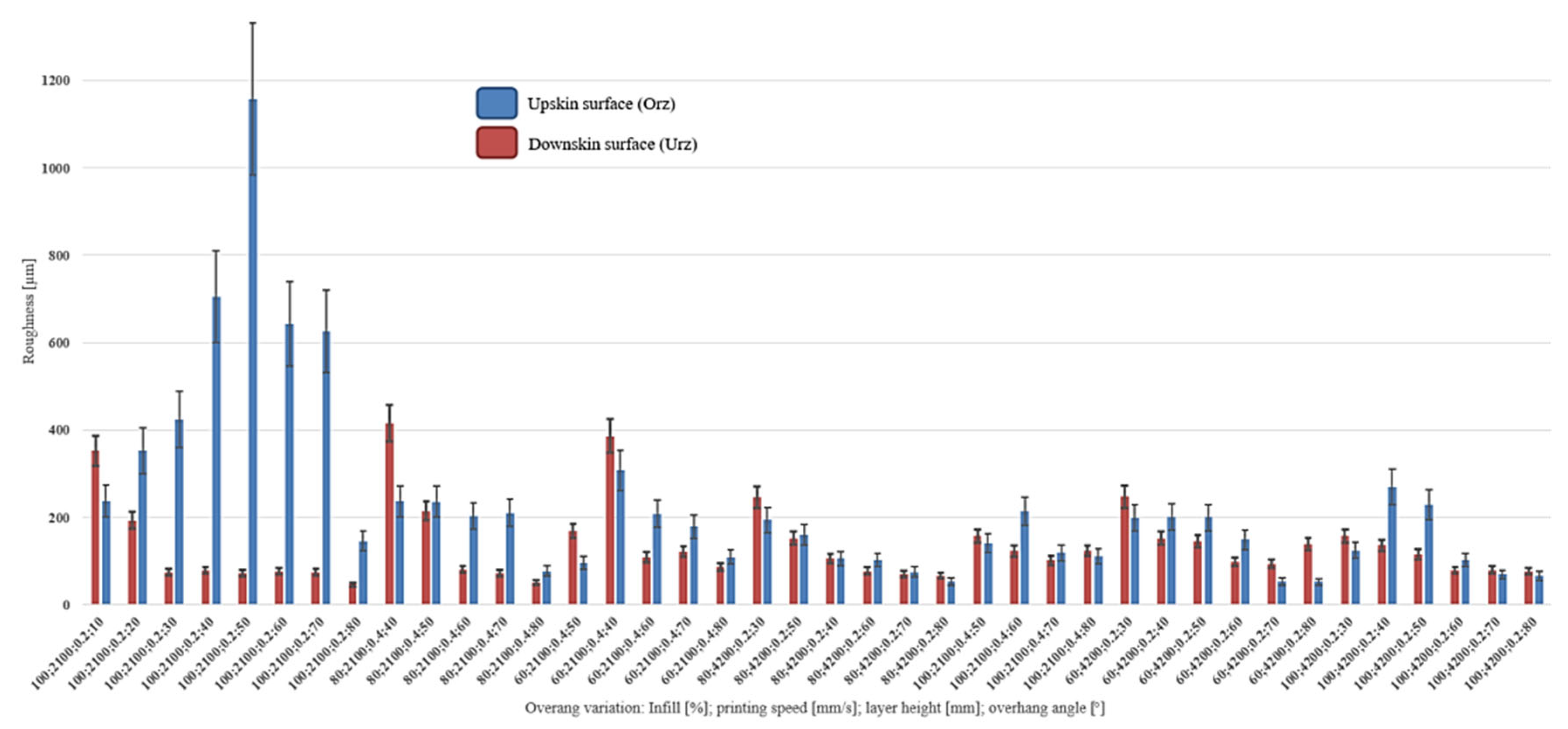

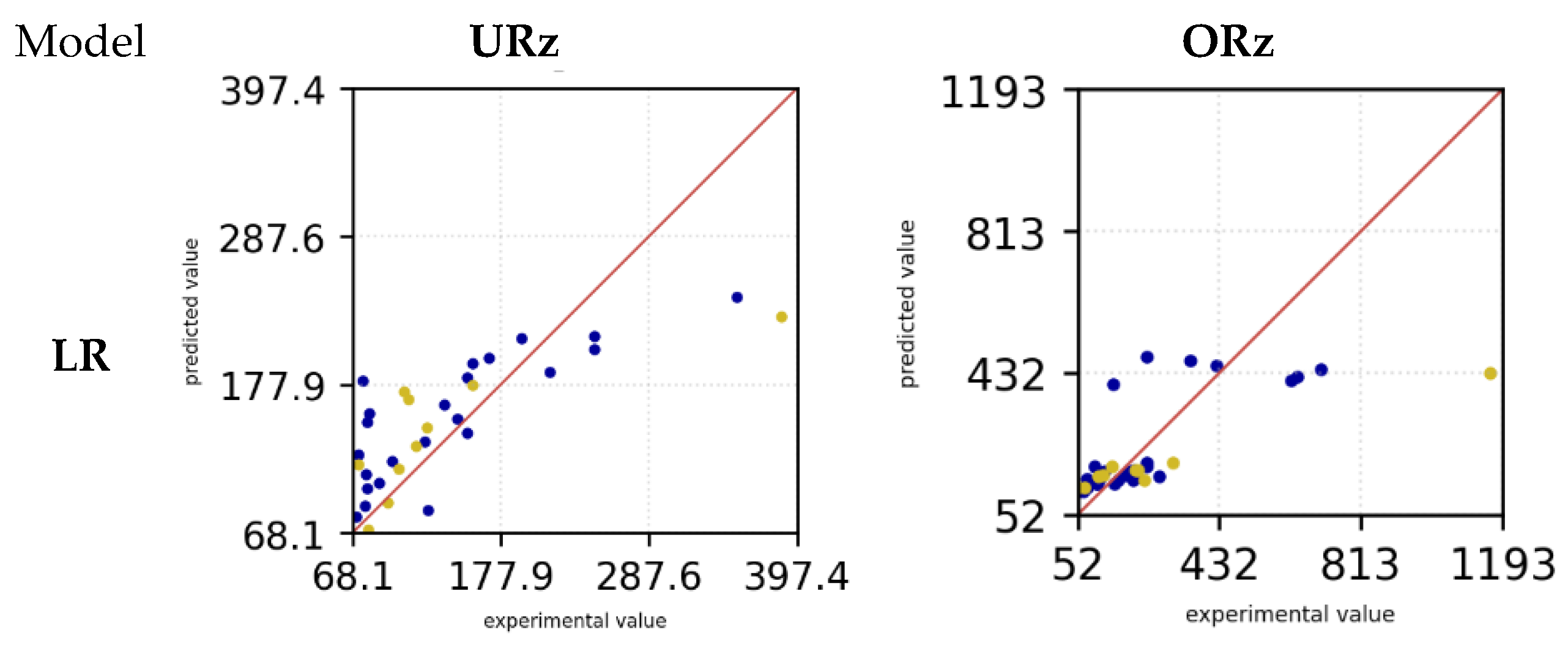

The ML models LR, RFR, SVM, kNN, MLP and Bag were trained and evaluated with hyperparameter tuning. Subsequently, the R² value and the RMSE for the downskin and upskin angle of the experimental data were evaluated (compare Figure 3).

Table 5 shows the respective values of each model. In the training data set, the MLP model has the highest R² value for URz (0.95). RFR and Bag also show high values of 0.86 and 0.90, respectively. These models likewise have low RMSE values for the training data (MLP 18.76 µm, RFR 32.04 µm and Bag 27.15 µm). A similar observation can be made in the training data set for ORz, in which MLP (0.99) also has the highest R² value, followed by RFR (0.89) and Bag (0.75). SVM has the lowest R² value in the training data set for URz with 0.44, followed by LR with 0.55; their RMSE values are the highest, at 63.79 µm (SVM) and 57.44 µm (LR). For ORz, the R² value is also lowest for SVM with 0.26, followed by LR with 0.58. Here the RMSE is 144.90 µm (SVM) and 109.34 µm (LR). In the test data set, the RFR model achieved the highest R² of 0.70 for the target variable URz, while the kNN model attained 0.65. The corresponding RMSE values were 47.51 µm and 51.27 µm, respectively. For the target variable ORz, the kNN model recorded the highest R² value of 0.69, with an RMSE of 170.96 µm.
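The evaluation described above can be sketched as follows. This is only an illustration under stated assumptions: the data are synthetic (`make_regression`), the model settings are placeholders rather than the tuned hyperparameters of the study, and the helper name `evaluate` is hypothetical.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.ensemble import BaggingRegressor, RandomForestRegressor
from sklearn.linear_model import LinearRegression
from sklearn.metrics import mean_squared_error, r2_score
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsRegressor
from sklearn.neural_network import MLPRegressor
from sklearn.svm import SVR

# Synthetic placeholder data; the study's process parameters and roughness
# measurements are not reproduced here.
X, y = make_regression(n_samples=200, n_features=4, noise=10.0, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=0
)

# Placeholder settings, not the tuned hyperparameters of the study.
models = {
    "LR": LinearRegression(),
    "RFR": RandomForestRegressor(n_estimators=50, random_state=0),
    "SVM": SVR(),
    "kNN": KNeighborsRegressor(),
    "MLP": MLPRegressor(hidden_layer_sizes=(10,), solver="lbfgs",
                        max_iter=500, random_state=0),
    "Bag": BaggingRegressor(n_estimators=10, random_state=0),
}


def evaluate(model, X_tr, y_tr, X_te, y_te):
    """Fit the model and return R² and RMSE for the training and test split."""
    model.fit(X_tr, y_tr)
    scores = {}
    for split, (X_s, y_s) in {"train": (X_tr, y_tr), "test": (X_te, y_te)}.items():
        pred = model.predict(X_s)
        scores[split] = {
            "r2": float(r2_score(y_s, pred)),
            "rmse": float(np.sqrt(mean_squared_error(y_s, pred))),
        }
    return scores


results = {
    name: evaluate(model, X_train, y_train, X_test, y_test)
    for name, model in models.items()
}
for name, s in results.items():
    print(f"{name}: R2 train={s['train']['r2']:.2f}, test={s['test']['r2']:.2f}; "
          f"RMSE train={s['train']['rmse']:.2f}, test={s['test']['rmse']:.2f}")
```

Reporting both metrics for both splits, as in Table 5, makes it possible to compare the fit on seen and unseen data for each model.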

Table 5.

R² value and RMSE of URz and ORz in training and test case for all ML models.

| Model | Target value | Indicator | Training | Test |
|---|---|---|---|---|
| LR | URz | R² | 0.55 | 0.51 |
| LR | URz | RMSE [µm] | 57.44 | 60.21 |
| LR | ORz | R² | 0.58 | 0.41 |
| LR | ORz | RMSE [µm] | 109.34 | 237.45 |
| RFR | URz | R² | 0.86 | 0.70 |
| RFR | URz | RMSE [µm] | 32.04 | 47.51 |
| RFR | ORz | R² | 0.89 | 0.31 |
| RFR | ORz | RMSE [µm] | 56.79 | 255.7 |
| SVM | URz | R² | 0.44 | 0.39 |
| SVM | URz | RMSE [µm] | 63.79 | 67.60 |
| SVM | ORz | R² | 0.26 | 0.1 |
| SVM | ORz | RMSE [µm] | 144.90 | 293.12 |
| kNN | URz | R² | 0.66 | 0.65 |
| kNN | URz | RMSE [µm] | 49.80 | 51.27 |
| kNN | ORz | R² | 0.73 | 0.69 |
| kNN | ORz | RMSE [µm] | 87.98 | 170.96 |
| MLP | URz | R² | 0.95 | 0.26 |
| MLP | URz | RMSE [µm] | 18.76 | 74.66 |
| MLP | ORz | R² | 0.99 | 0.13 |
| MLP | ORz | RMSE [µm] | 13.71 | 288.44 |
| Bag | URz | R² | 0.90 | 0.53 |
| Bag | URz | RMSE [µm] | 27.15 | 59.10 |
| Bag | ORz | R² | 0.75 | 0.60 |
| Bag | ORz | RMSE [µm] | 84.63 | 194.01 |

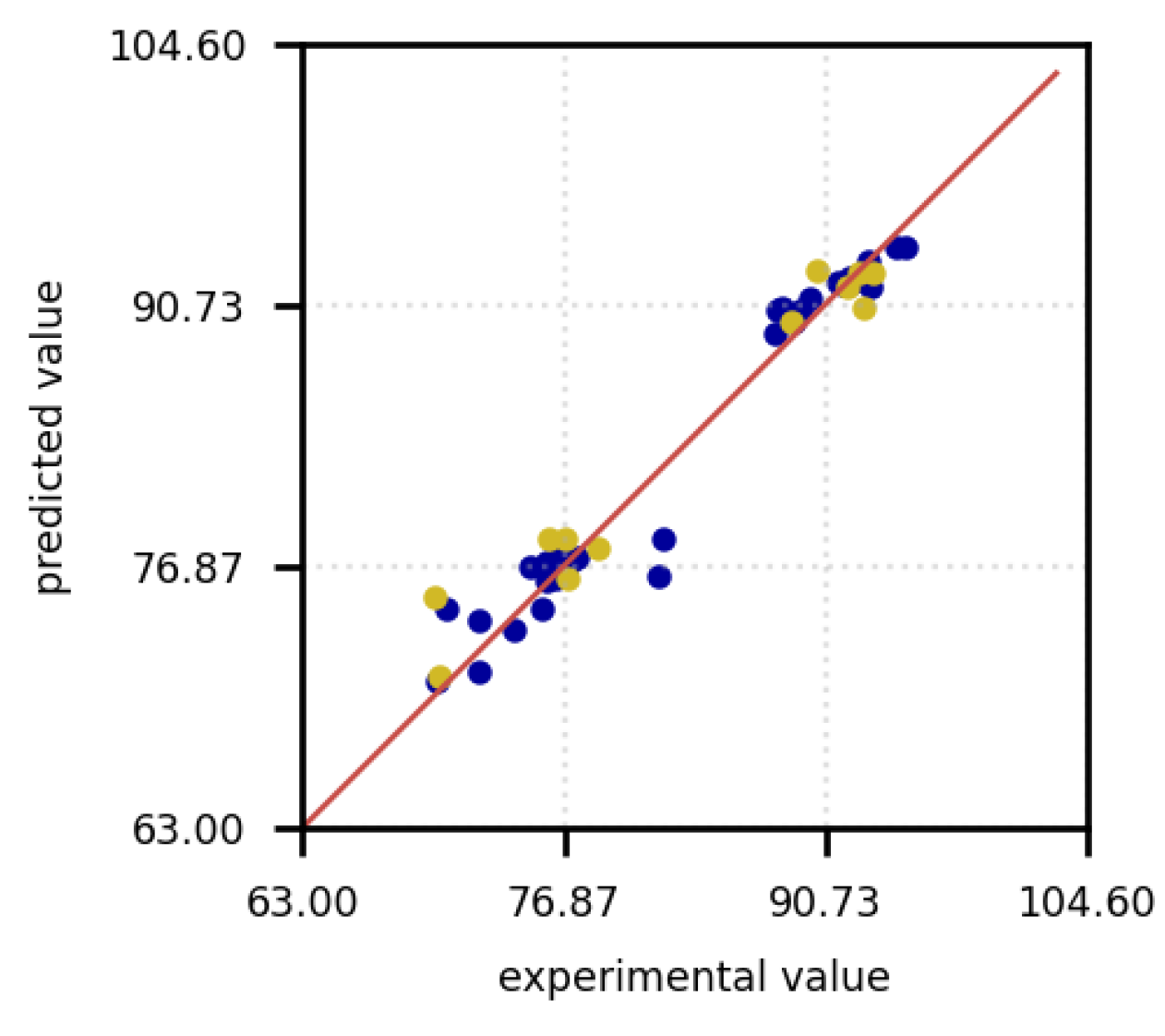

Additionally, diagrams of the respective models were generated, illustrating the predictions compared to the experimental data. The red line indicates a perfect prediction. The farther the points are from the line, the less accurate the predictions are.

Figure 6 presents the prediction diagrams of the models under examination, facilitating an initial selection of the models in question. The blue dots depict the training data, while the yellow dots represent the test data. For the LR model, it is observed that both the test and training data predictions deviate from the experimental data. This phenomenon is observable for both the downskin angle URz and the upskin angle ORz. Only for small overhang angles do the predictions appear to align more closely with the experimental data, resulting in smaller deviations for both URz and ORz. The SVM model exhibits a behavior similar to that of the LR model's predictions. In this instance as well, the predictions significantly deviate from the actual values. Both the LR and SVM models tend to underestimate the actual surface roughness for URz and ORz. In contrast, the RFR model demonstrates higher accuracy in predicting the training data with larger URz values, a trend that is also observed in the test data. Nonetheless, in both scenarios, the roughness prediction is underestimated.

Figure 6.

Surface roughness prediction versus experimental measurements in [µm] for the training (blue) and test data (yellow) of all investigated ML models.

The kNN model achieves more accurate predictions for low URz and ORz values. For higher roughness, however, the predictions deviate from the experimental data: in both the training and test data sets, the model underestimates the actual values for larger roughness values (> 200 µm). The Bag model exhibited a high level of predictive accuracy for URz in the training data set, including for large roughness values. Nevertheless, for small roughness values, the model tended to overestimate in the test data set, whereas for large roughness values it occasionally underestimated. In the case of ORz, the training predictions are even more accurate, although small roughness values tend to be underestimated. The test predictions generally overestimate the experimental data; for large roughness values, however, they tend to be too low.

In the training data set, the MLP model shows the smallest deviations in the predicted values for both ORz and URz. Only in the test data are small roughness values overestimated and larger roughness values underestimated.

Since the training predictions of MLP and Bag are closely aligned with the experimental data, while the test predictions fluctuate more around the exact prediction, these machine learning models appear to be overfitted. For URz and ORz, the MLP model appears to perform best despite signs of overfitting and is therefore selected for further parameter optimization. The other algorithms are not investigated further, as the MLP seems to be the most promising algorithm for this data set.
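One simple way to quantify the overfitting argument is the difference between training and test R². The sketch below applies this to the URz values from Table 5. The gap is used here only as an illustrative heuristic, not as the selection criterion of the study, and the function name `generalization_gap` is hypothetical.

```python
# R² values for URz copied from Table 5: (training, test).
r2_urz = {
    "LR": (0.55, 0.51),
    "RFR": (0.86, 0.70),
    "SVM": (0.44, 0.39),
    "kNN": (0.66, 0.65),
    "MLP": (0.95, 0.26),
    "Bag": (0.90, 0.53),
}


def generalization_gap(scores):
    """Return the train-minus-test R² gap per model, rounded to two decimals."""
    return {name: round(train - test, 2) for name, (train, test) in scores.items()}


gaps = generalization_gap(r2_urz)

# List the models from the largest to the smallest gap.
for name, gap in sorted(gaps.items(), key=lambda item: item[1], reverse=True):
    print(f"{name}: gap = {gap:.2f}")
```

With these numbers, MLP and Bag show the two largest gaps, which matches the observation that their training predictions follow the experimental data far more closely than their test predictions.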

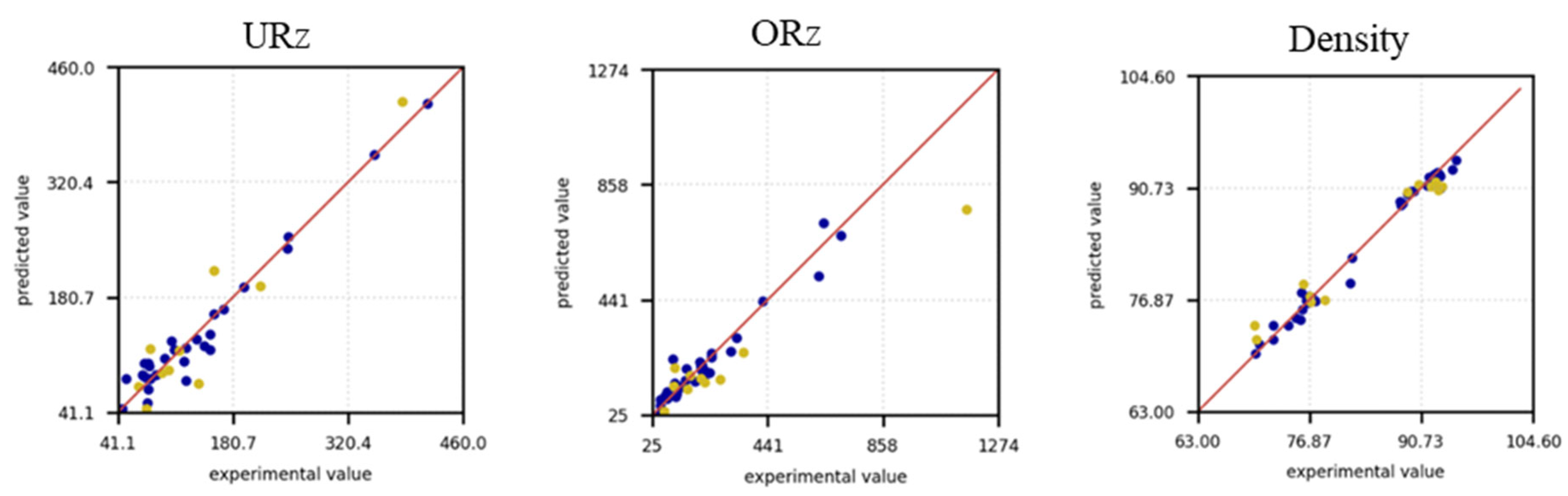

The same approach was applied to the target variable density, where, with parameter optimization using polynomial feature importances and the SelectKBest method, the Bag model appears to be the best starting point. The prediction diagram is shown in Figure 7. It can be observed that the predictions for both the training data and the test data are located close to the actual values. Occasionally, the predictions for the training data are underestimated, which may indicate that overfitting is not occurring. Both training and test data oscillate within a similar range around the optimal axis, which means that a good generalization of the model can be assumed. In the following, only the Bag model is investigated further for the density prediction.

Figure 7.

Density prediction versus experimental measurements in % points for the training (blue) and test data (yellow).

The hyperparameter optimization over 10 runs using polynomial feature importances and SelectKBest led to the following results in terms of the R² value and RMSE for URz, ORz and density (see Table 6). A direct comparison with Table 5 for the URz MLP model shows a significant increase in the test R² value from 0.26 to approximately 0.71. Additionally, the test RMSE was reduced by more than 25 µm to around 49.27 µm. The R² value in the training data set decreased to 0.83 and the RMSE increased to 34.82 µm; since the model initially seemed to be overfitted, the optimization counteracted this trend. The standard deviation of the R² value for URz is 0.11 for the training data and 0.3 for the test data, so the variability is smaller on the training data than on the test data. This suggests variability in the model’s performance and indicates the need for further iterations to achieve a more robust R² value. The RMSE for both the training data and test data also exhibits significant variability, making it difficult to draw definitive conclusions about the mean error after 10 iterations. This variability suggests that the MLP model is sensitive to the hyperparameters, necessitating further optimization iterations to achieve greater robustness.

A similar observation can be made for the ORz MLP model. Here, compared with the initial iteration step (compare Table 5), the R² value in the training data set decreases from 0.99 to 0.72 and the RMSE increases from 13.71 µm to approximately 90.8 µm. The R² value for the test data, on the other hand, increases from 0.13 to around 0.46 and the RMSE is reduced to 222.92 µm. The reduction of the R² value in the training data is an indication that overfitting is counteracted, but the high standard deviation of R² and RMSE indicates a low robustness of the model.

The Bag model was used for the density forecast. In the training data set as well as in the test data set, the R² value is already high at 0.96 and 0.91, so that the dependent variable can be well described by the independent variables. The standard deviation is also much smaller than for the two MLP models, which means that the model can be expected to be more robust. The same applies to the RMSE, which is small at 1.64 % (training) and 2.53 % (test) and also has a standard deviation of less than 0.55 %.
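The mean ± standard deviation values discussed above can be obtained by repeating the fit several times and aggregating the metrics. The following is a minimal sketch, assuming that each run uses a different random train/test split (the source does not state how the 10 runs differ); the data are synthetic and the helper `repeated_scores` is hypothetical.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.metrics import mean_squared_error, r2_score
from sklearn.model_selection import train_test_split
from sklearn.neural_network import MLPRegressor
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import MinMaxScaler

# Synthetic placeholder data instead of the experimental measurements.
X, y = make_regression(n_samples=150, n_features=4, noise=15.0, random_state=1)


def repeated_scores(X, y, n_runs=10):
    """Refit on n_runs random splits and collect train/test R² and RMSE."""
    rows = []
    for seed in range(n_runs):
        X_tr, X_te, y_tr, y_te = train_test_split(
            X, y, test_size=0.2, random_state=seed
        )
        model = make_pipeline(
            MinMaxScaler(),
            MLPRegressor(hidden_layer_sizes=(10,), solver="lbfgs",
                         max_iter=500, random_state=seed),
        )
        model.fit(X_tr, y_tr)
        row = []
        for X_s, y_s in ((X_tr, y_tr), (X_te, y_te)):
            pred = model.predict(X_s)
            row += [r2_score(y_s, pred), np.sqrt(mean_squared_error(y_s, pred))]
        rows.append(row)
    # Columns: train R², train RMSE, test R², test RMSE.
    return np.array(rows)


scores = repeated_scores(X, y)
mean = scores.mean(axis=0)
std = scores.std(axis=0, ddof=1)  # sample standard deviation over the runs
for label, m, s in zip(("train R2", "train RMSE", "test R2", "test RMSE"), mean, std):
    print(f"{label}: {m:.2f} ± {s:.2f}")
```

A large standard deviation relative to the mean, as reported for the MLP models, signals that a single run is not representative and that more repetitions are needed.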

Table 6.

Hyperparameter optimization after 10 iterations for the MLP and Bag models.

| | Value | URz (MLP) | ORz (MLP) | Density (Bag) |
|---|---|---|---|---|
| Train | R² | 0.83 ± 0.11 | 0.72 ± 0.12 | 0.96 ± 0.02 |
| Train | RMSE | 34.82 ± 13.82 [µm] | 90.8 ± 34.7 [µm] | 1.64 ± 0.34 [%] |
| Test | R² | 0.71 ± 0.3 | 0.46 ± 0.15 | 0.91 ± 0.04 |
| Test | RMSE | 49.27 ± 18.95 [µm] | 222.92 ± 41.41 [µm] | 2.53 ± 0.53 [%] |

After completing 10 iterations, the optimal hyperparameters were determined by maximizing the coefficient of determination and minimizing the root mean square error. Table 7 shows the values with the optimized hyperparameters. The selected hyperparameters for the MLP model are listed in Table 8 and for the Bag model in Table 9.
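A hyperparameter search that tracks both R² and RMSE could look like the sketch below. It uses scikit-learn's `GridSearchCV` with two scorers as one possible realization; the search procedure, the grid and the cross-validation setup of the study are not specified in the text, so the grid values and the 5-fold setting are assumptions, and the data are synthetic.

```python
from sklearn.datasets import make_regression
from sklearn.model_selection import GridSearchCV
from sklearn.neural_network import MLPRegressor
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import MinMaxScaler

# Synthetic placeholder data instead of the experimental measurements.
X, y = make_regression(n_samples=150, n_features=4, noise=15.0, random_state=2)

pipe = Pipeline([
    ("scaler", MinMaxScaler()),
    ("mlp", MLPRegressor(solver="lbfgs", max_iter=500, random_state=0)),
])

# Hypothetical search grid; the grid used in the study is not given.
param_grid = {
    "mlp__hidden_layer_sizes": [(5,), (10,)],
    "mlp__alpha": [0.0001, 0.01],
}

search = GridSearchCV(
    pipe,
    param_grid,
    scoring={"r2": "r2", "rmse": "neg_root_mean_squared_error"},
    refit="r2",  # the final model is the candidate with the best mean R²
    cv=5,
)
search.fit(X, y)

best = search.best_index_
best_r2 = search.cv_results_["mean_test_r2"][best]
best_rmse = -search.cv_results_["mean_test_rmse"][best]  # scorer is negated
print("best parameters:", search.best_params_)
print(f"CV R2: {best_r2:.3f}, CV RMSE: {best_rmse:.3f}")
```

Because scikit-learn maximizes scores, the RMSE scorer is negated; selecting on R² while also recording RMSE keeps both criteria visible for each candidate.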

An R² of 0.96 shows that the model can explain 96 % of the variance of the surface roughness (URz) in the training data. The RMSE is also significantly lower here at 16.04 µm. In the test data set, the R² value increased to 0.88, with a low RMSE of 32.11 µm. The RMSE on the test data is nevertheless about twice that on the training data, which indicates that the model has difficulties predicting the test data with the same precision as the training data; a slight overfitting effect is possible in this case. For ORz, the R² value is 0.91 for both the training and the test data. A constant R² value indicates that the model has learned the patterns in the data well and also transfers them to the test data. However, the RMSE increase from 51.29 µm (training) to 91.89 µm (test) strongly indicates overfitting. Regularization, optimized data processing and model adjustments can improve the generalization and reduce the test error. For the Bag model for density, the R² value is also high at 0.98 (training) and 0.95 (test). The RMSE is lower in training (1.12 %) than in the test (1.97 %). Further optimization iterations should reduce these differences.

Table 7.

Parameter optimization (best models).

| | Value | URz (MLP) | ORz (MLP) | Density (Bag) |
|---|---|---|---|---|
| Train | R² | 0.96 | 0.91 | 0.98 |
| Train | RMSE | 16.04 [µm] | 51.29 [µm] | 1.12 [%] |
| Test | R² | 0.88 | 0.91 | 0.95 |
| Test | RMSE | 32.11 [µm] | 91.89 [µm] | 1.97 [%] |

Table 8.

Parameter optimization (selected hyperparameter) – Urz, Orz.

| Algorithm | Parameter | URz | ORz |
|---|---|---|---|
| polynomial features | activated | False | True |
| Kbest | K | - | 10 |
| scaler | scaler | MinMaxScaler | MinMaxScaler |
| estimator | model | MLP | MLP |
| hyperparameters | hidden_layer_sizes | (10,) | (10,) |
| hyperparameters | alpha | 0.01 | 0.01 |
| hyperparameters | activation | relu | relu |
| hyperparameters | solver | lbfgs | lbfgs |
| hyperparameters | learning_rate | adaptive | adaptive |
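The configuration in Table 8 can be expressed as a scikit-learn pipeline, sketched below. Several details are assumptions because the table does not state them: the order of the steps, the polynomial degree (2), the scoring function for SelectKBest (`f_regression`), `max_iter`, and the synthetic data. The helper `build_mlp_pipeline` is hypothetical.

```python
from sklearn.datasets import make_regression
from sklearn.feature_selection import SelectKBest, f_regression
from sklearn.neural_network import MLPRegressor
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import MinMaxScaler, PolynomialFeatures


def build_mlp_pipeline(use_polynomial, k_best=None):
    """Assemble the preprocessing steps and the MLP listed in Table 8."""
    steps = []
    if use_polynomial:
        # Assumption: degree 2 without the constant bias column.
        steps.append(("poly", PolynomialFeatures(degree=2, include_bias=False)))
    if k_best is not None:
        # Assumption: univariate F-test as the SelectKBest score function.
        steps.append(("kbest", SelectKBest(score_func=f_regression, k=k_best)))
    steps.append(("scaler", MinMaxScaler()))
    steps.append(("mlp", MLPRegressor(
        hidden_layer_sizes=(10,),
        alpha=0.01,
        activation="relu",
        solver="lbfgs",
        # scikit-learn only uses learning_rate when solver="sgd";
        # with lbfgs this setting has no effect.
        learning_rate="adaptive",
        max_iter=1000,
        random_state=0,
    )))
    return Pipeline(steps)


urz_model = build_mlp_pipeline(use_polynomial=False)             # URz column
orz_model = build_mlp_pipeline(use_polynomial=True, k_best=10)   # ORz column

# Synthetic placeholder data with four input features.
X, y = make_regression(n_samples=120, n_features=4, noise=5.0, random_state=0)
urz_model.fit(X, y)
orz_model.fit(X, y)

n_selected = int(orz_model.named_steps["kbest"].get_support().sum())
print("features kept for ORz:", n_selected)
```

Wrapping the preprocessing and the estimator in one pipeline ensures that scaling and feature selection are fitted on the training data only when the pipeline is used inside a train/test or cross-validation loop.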

Table 9.

Parameter optimization (selected hyperparameter) – density.

| Algorithm | Parameter | Density |
|---|---|---|
| polynomial features | activated | True |
| Kbest | K | 15 |
| scaler | scaler | StandardScaler |
| bag | estimator | DecisionTreeRegressor |
| bag | n_estimators | 10 |
| bag | max_samples | 0.7 |
| bag | bootstrap | False |
| bag | bootstrap_features | True |
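The density configuration in Table 9 maps onto scikit-learn's `BaggingRegressor` as sketched below. With `bootstrap=False` and `max_samples=0.7`, each tree is trained on a 70 % subsample drawn without replacement, while `bootstrap_features=True` resamples the features with replacement. The step order, the polynomial degree, the SelectKBest score function and the synthetic data are assumptions.

```python
from sklearn.datasets import make_regression
from sklearn.ensemble import BaggingRegressor
from sklearn.feature_selection import SelectKBest, f_regression
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import PolynomialFeatures, StandardScaler
from sklearn.tree import DecisionTreeRegressor

density_model = Pipeline([
    # Assumption: degree 2 without the constant bias column.
    ("poly", PolynomialFeatures(degree=2, include_bias=False)),
    # Assumption: univariate F-test as the SelectKBest score function.
    ("kbest", SelectKBest(score_func=f_regression, k=15)),
    ("scaler", StandardScaler()),
    ("bag", BaggingRegressor(
        DecisionTreeRegressor(random_state=0),  # base estimator from Table 9
        n_estimators=10,
        max_samples=0.7,          # each tree sees 70 % of the training rows
        bootstrap=False,          # rows are drawn without replacement
        bootstrap_features=True,  # features are drawn with replacement
        random_state=0,
    )),
])

# Synthetic placeholder data; five inputs give 20 polynomial features,
# enough for k=15.
X, y = make_regression(n_samples=100, n_features=5, noise=5.0, random_state=0)
density_model.fit(X, y)

bag = density_model.named_steps["bag"]
first_tree_rows = bag.estimators_samples_[0]
print("trees:", len(bag.estimators_), "rows used by first tree:", len(first_tree_rows))
```

Drawing rows without replacement means no training sample appears twice within one tree, which is the main practical difference from classical bootstrap bagging.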

Table 10 reports the evaluation metrics averaged over 50 iterations with the optimized hyperparameters. For URz, the R² value is 0.97 ± 0.01 in the training data set and 0.85 ± 0.06 in the test data set. This discrepancy indicates that the model performs better on the training set, which could suggest some degree of overfitting. However, the low standard deviation in both data sets demonstrates a high level of robustness and consistency in the model’s predictions. For ORz, the R² value is 0.94 in the training data set and 0.73 in the test data set, with a standard deviation of 0.07 on the test data. Although the performance on the test data is lower, the small standard deviation shows that the model is stable across samples and delivers similar results. Similarly, for density, the R² value is 0.98 on the training set with a standard deviation of 0.003, and 0.92 on the test set with a standard deviation of 0.02. This consistency, coupled with the relatively small deviations, reinforces the robustness of the model, even if the performance on the test data does not reach the same level as on the training data. Overall, while the models perform better on the training data, the low standard deviations suggest that the predictions are reliable and not overly sensitive to variations within the data sets.

For the RMSE, the error for URz during training is 14.32 µm with a standard deviation of 3.27 µm, an improvement over the results obtained after hyperparameter optimization with 10 iterations. Furthermore, the error in the test set was reduced to 34.93 µm, with a standard deviation of 6.28 µm. These results indicate that the optimization process enhanced both the accuracy and stability of the predictions for URz.

For ORz, the RMSE during training is 47.67 µm with a standard deviation of 16.45 µm. However, the test RMSE is significantly higher at 155.63 µm, with a standard deviation of 20.47 µm. This suggests that, while the model achieved reasonable performance in training, its ability to generalize to test data is more limited for ORz. Additionally, both the roughness and the deviations are notably higher for ORz than for URz.

Importantly, despite the higher error and variability in the predictions for ORz, the standard deviation decreased for both the training and test data sets compared with the results obtained after 10 iterations of hyperparameter optimization (see Table 6). This improvement in stability indicates that the optimization process reduced the variability of the predictions, even if the overall performance still shows room for improvement. For density, the RMSE in training is 1.06 % with a standard deviation of 0.11 %, while in the test data set it increases to 2.51 % with a standard deviation of 0.29 %. This indicates a noticeable performance drop when transitioning from training to test data. Compared with the results after 10 iterations of hyperparameter optimization (2.53 ± 0.53 %, Table 6), the test RMSE is practically unchanged and its standard deviation is smaller; it is, however, higher than the 1.97 % of the best model in Table 7.

Table 10.

Model training with best parameters (50 runs) – MLP, MLP and Bagging.

| | Value | URz (MLP) | ORz (MLP) | Density (Bag) |
|---|---|---|---|---|
| Train | R² | 0.97 ± 0.01 | 0.94 ± 0.06 | 0.98 ± 0.003 |
| Train | RMSE | 14.32 ± 3.27 [µm] | 47.67 ± 16.45 [µm] | 1.06 ± 0.11 [%] |
| Test | R² | 0.85 ± 0.06 | 0.73 ± 0.07 | 0.92 ± 0.02 |
| Test | RMSE | 34.93 ± 6.28 [µm] | 155.63 ± 20.47 [µm] | 2.51 ± 0.29 [%] |

Using the selected hyperparameters from Table 8 and Table 9 for the MLP and Bag models, the best results for RMSE and R² are presented in Table 11. These results offer insights into how the optimized hyperparameters affect model performance in terms of accuracy and explanatory power. For URz, the model demonstrated a strong fit with an R² of 0.96 in the training set, indicating that 96 % of the variance in the data was explained. However, the test set R² decreased to 0.87, and the RMSE increased from 17.38 µm in training to 32.93 µm in testing, suggesting some overfitting. The ORz results showed a similar trend, with a high R² of 0.94 in the training set but a significant drop to 0.79 in the test set. Additionally, the RMSE for ORz was substantially higher, rising from 41.58 µm in training to 138.99 µm in testing, indicating a severe generalization issue. In contrast, the model performed exceptionally well for density, achieving an R² of 0.99 in the training set and 0.95 in the test set, with an RMSE of 0.95 % and 1.88 %, respectively. The small difference between training and test RMSE for density suggests that the model generalizes well to unseen data. Overall, the results highlight that while the model is highly effective for density, additional improvements in feature selection and regularization are needed to enhance the generalization performance for URz and especially ORz, where overfitting is more pronounced.

Table 11.

Model training with best models (best models).

| | Value | URz (MLP) | ORz (MLP) | Density (Bag) |
|---|---|---|---|---|
| Train | R² | 0.96 | 0.94 | 0.99 |
| Train | RMSE | 17.38 [µm] | 41.58 [µm] | 0.95 [%] |
| Test | R² | 0.87 | 0.79 | 0.95 |
| Test | RMSE | 32.93 [µm] | 138.99 [µm] | 1.88 [%] |

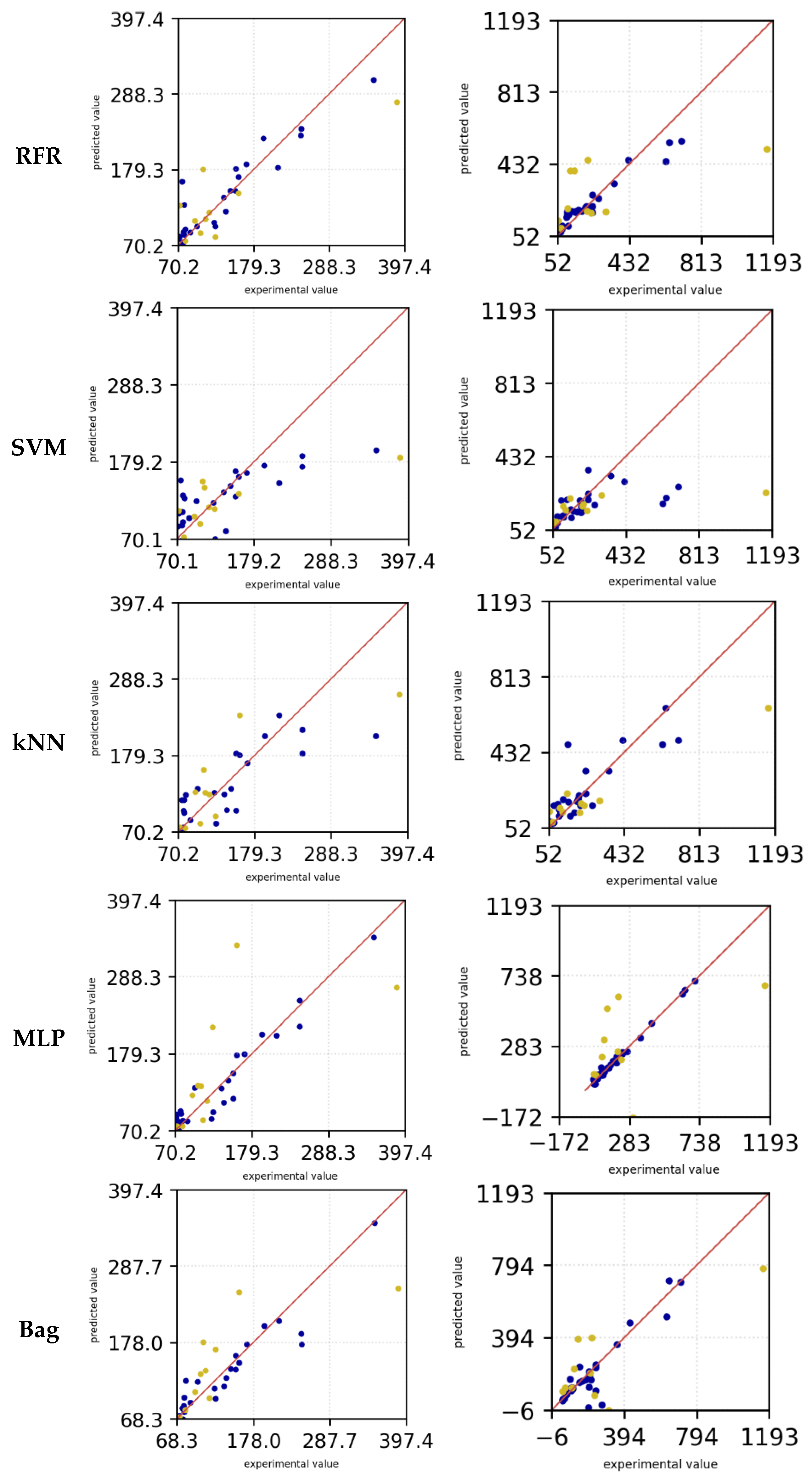

Figure 8 shows the predictions against the experimental values as a further evaluation criterion. Here too, the blue dots are the training data and the yellow dots are the test data. For URz (left), the training predictions deviate from the exact values for small roughness values and become more precise with increasing roughness. The scatter for small roughness values can also be observed in the test data. With increasing roughness, a deviation from the experimental data can still be observed, but this deviation remains constant.

Figure 8.

Surface roughness prediction versus experimental measurements for the training (blue) and test data (yellow) of all investigated ML models for URz (left) in µm, ORz (center) in µm, and density (right) in % points.

For ORz (Figure 8, center), the model demonstrates superior predictive accuracy for small roughness values (< 441 µm), as evidenced by better alignment with the training data and reduced scatter in the predictions. This indicates that the model effectively captures the underlying patterns within this range, likely due to a higher density of data points or less variability in the observed values. However, as roughness increases beyond this threshold, the accuracy of the predictions deteriorates, accompanied by a noticeable increase in scatter. This decline suggests that the model struggles to generalize to larger roughness values, potentially due to a lack of sufficient data or increased complexity in the relationships governing higher roughness levels. Addressing this issue may require additional data for large roughness values or the inclusion of features that better capture the variability in this range. The situation is the same for the test data of ORz, although the deviation of the predictions is generally significantly larger.

In the density prediction (Figure 8, right), the training predictions match the experimental data well, and an accurate prediction with few outliers is made for both low and high density values. For the test data, the predictions for low density values tend to be overestimated with a few outliers, while for high density values they are primarily underestimated.
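The over- and underestimation patterns read from the prediction diagrams can also be checked numerically by averaging the signed error within ranges of the measured value. The sketch below is an illustration only: the function `binned_bias` is hypothetical, the quantile binning is an assumption, and the data are synthetic, constructed so that low values are overestimated and high values underestimated.

```python
import numpy as np


def binned_bias(y_true, y_pred, n_bins=4):
    """Mean signed error (prediction minus measurement) per quantile bin of y_true.

    Returns a list of (lower_edge, upper_edge, mean_error) tuples.
    """
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    edges = np.quantile(y_true, np.linspace(0.0, 1.0, n_bins + 1))
    # Assign each measurement to a bin; clip so the maximum lands in the last bin.
    idx = np.clip(np.searchsorted(edges, y_true, side="right") - 1, 0, n_bins - 1)
    errors = y_pred - y_true
    return [
        (float(edges[b]), float(edges[b + 1]), float(errors[idx == b].mean()))
        for b in range(n_bins)
    ]


# Synthetic example: predictions shrink toward the middle of the range,
# so small values are overestimated and large values underestimated.
measured = np.linspace(50.0, 600.0, 40)
predicted = 0.7 * measured + 80.0

bias = binned_bias(measured, predicted)
for low, high, err in bias:
    direction = "overestimated" if err > 0 else "underestimated"
    print(f"{low:6.1f} to {high:6.1f}: mean error {err:+.1f} ({direction})")
```

A positive mean error in the lowest bin and a negative one in the highest bin corresponds to the pattern described for the test data, where low values are overestimated and high values underestimated.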