Submitted: 25 February 2025

Posted: 26 February 2025

Abstract

Keywords:

1. Introduction

- This work presents a hybrid baseline model (HBM) that combines a Transformer with an LSTM and exposes its hyper-parameters as variables so that the architecture can be tuned.

- It introduces a novel cost function and uses a Genetic Algorithm (GA) to optimize the discrete design space (DDS) defined by the model hyper-parameters.

- It incorporates hardware-specific performance evaluation at each optimization step, so that the selected model can be deployed effectively on the target hardware.

2. Proposed Methodology

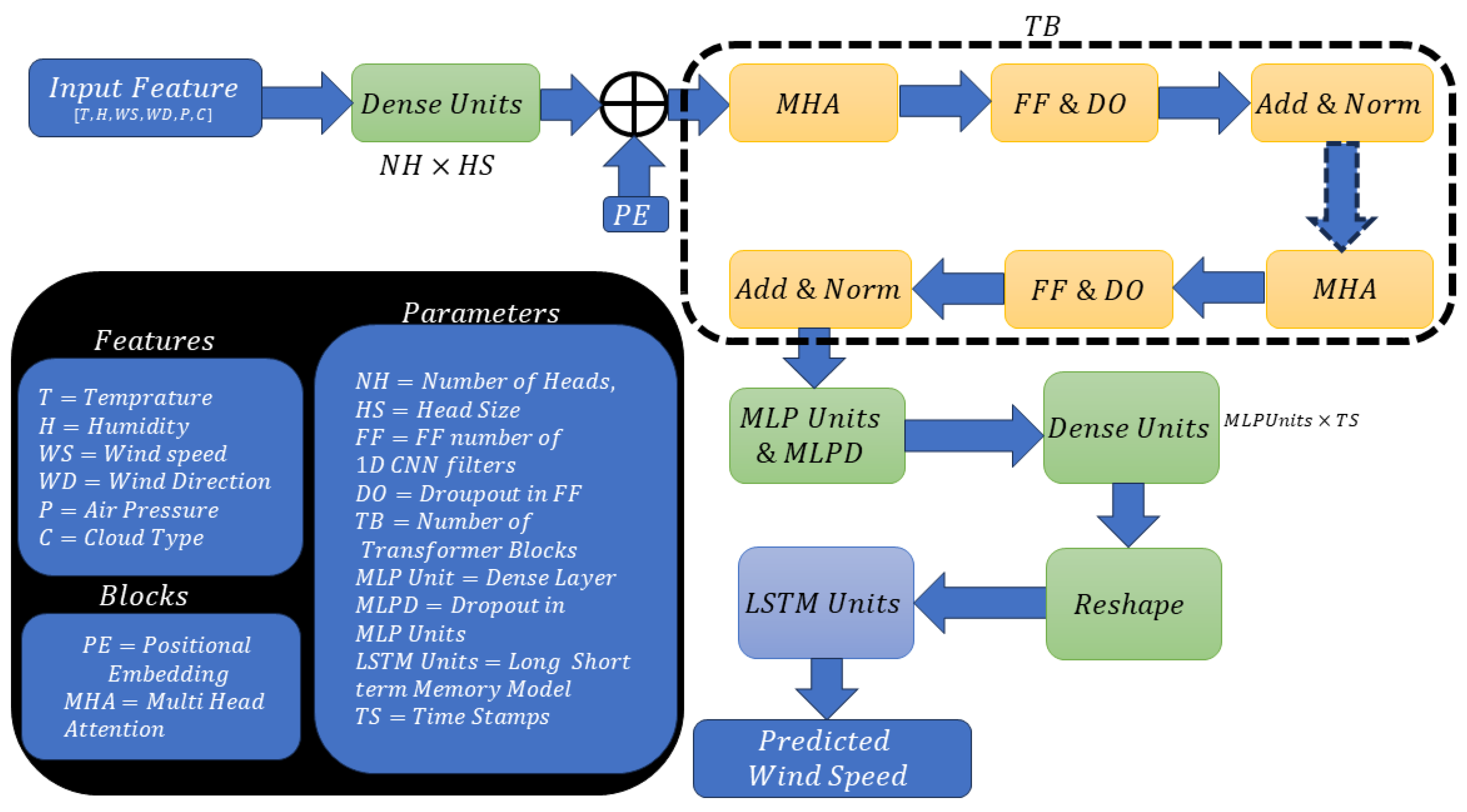

2.1. Baseline Architecture

2.2. Model Performance Metrics

2.3. Cost and Fitness Functions

- Head Size (): Influences the granularity of attention and overall model capacity.

- Number of Heads (): Enables concurrent attention mechanisms, facilitating the model’s understanding of diverse data facets.

- Feed-Forward Dimension (): Signifies the inner processing capability of the feed-forward network within the Transformer.

- Number of Transformer Blocks (): Multiple blocks augment the model’s depth, enhancing its ability to discern complex patterns.

- MLP Units (): Governs the capacity of the dense layers within the model.

- Dropout (): Acts as a regularization knob in the transformer encoder section.

- MLP Dropout (): Acts as a regularization knob in the dense layer section, mitigating the risk of overfitting.

- LSTM Units (): Determines the temporal processing power of the LSTM layers.
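To make the design space concrete, the eight hyper-parameters listed above can be encoded as a single chromosome drawn from a discrete grid. The sketch below is illustrative only: the field names and the candidate values in `DESIGN_SPACE` are assumptions chosen for the example, not the ranges used in this work.

```python
import random
from dataclasses import dataclass, fields

# Hypothetical candidate values per gene (assumed for illustration,
# not the ranges used in the paper).
DESIGN_SPACE = {
    "head_size": [8, 16, 32, 64],
    "num_heads": [1, 2, 4, 8],
    "ff_dim": [16, 32, 64, 128],
    "num_transformer_blocks": [1, 2, 3, 4],
    "mlp_units": [16, 32, 64, 128],
    "dropout": [0.0, 0.1, 0.2, 0.3],
    "mlp_dropout": [0.0, 0.1, 0.2, 0.3],
    "lstm_units": [8, 16, 32, 64],
}


@dataclass(frozen=True)
class Chromosome:
    """One candidate model: one gene per hyper-parameter."""
    head_size: int
    num_heads: int
    ff_dim: int
    num_transformer_blocks: int
    mlp_units: int
    dropout: float
    mlp_dropout: float
    lstm_units: int


def random_chromosome(rng: random.Random) -> Chromosome:
    """Draw one value per gene, uniformly from its candidate list."""
    return Chromosome(**{name: rng.choice(values)
                         for name, values in DESIGN_SPACE.items()})


def is_valid(c: Chromosome) -> bool:
    """Check that every gene lies inside its permitted set."""
    return all(getattr(c, f.name) in DESIGN_SPACE[f.name] for f in fields(c))


rng = random.Random(0)
candidate = random_chromosome(rng)
print(candidate)
```

Keeping the permitted values in one dictionary means the same table can drive both random initialization and the later constraint check.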

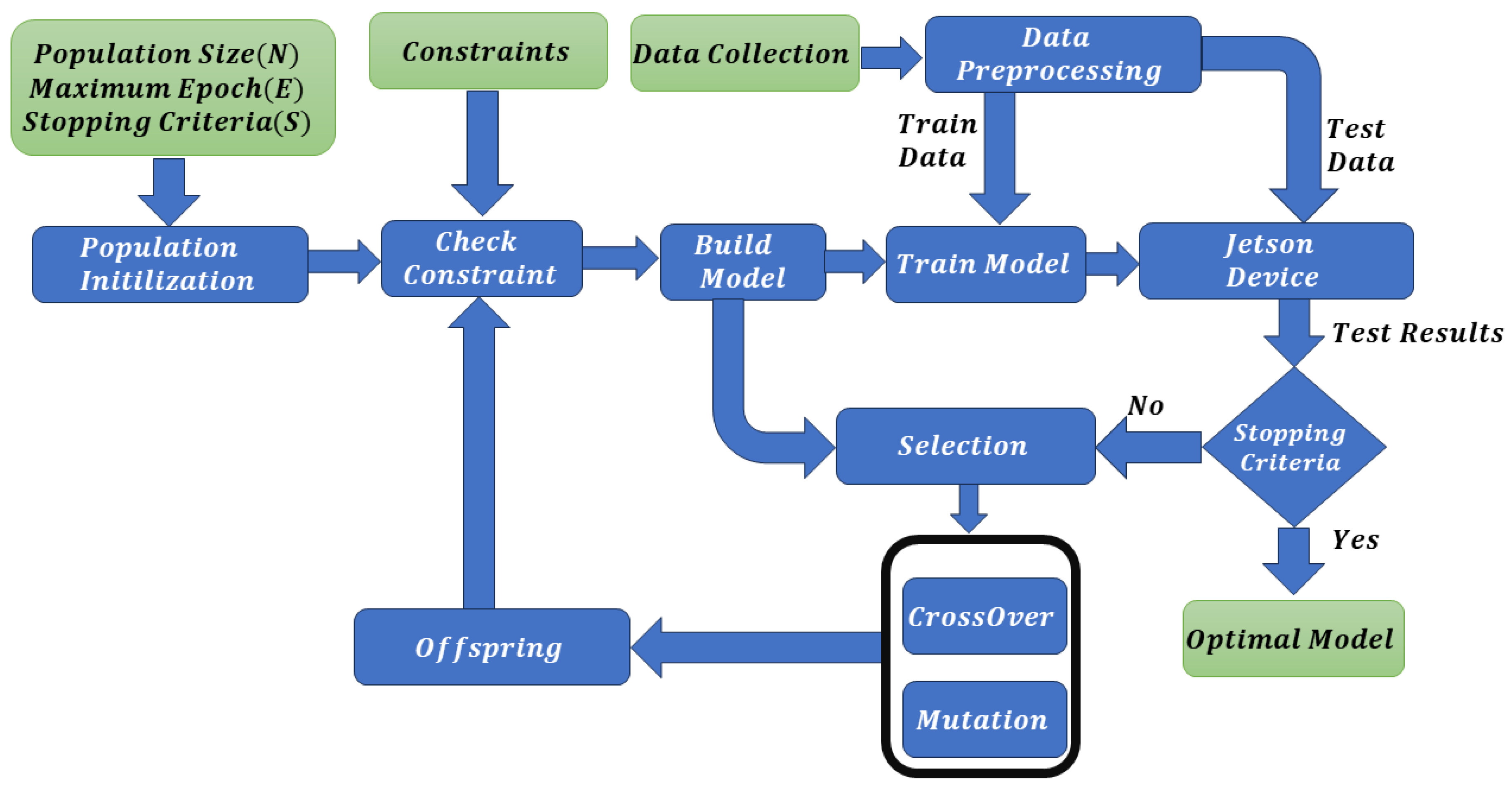

2.4. Hardware-Centric DDS Optimization Scheme

- The Genetic Algorithm (GA) starts from a randomly generated population of models. Each gene represents one aspect of the model, and a complete chromosome is given as X in equation 16. The generated models are then passed through a constraint check.

- The models that pass are built and trained for a limited duration of 25 epochs. After training, they are deployed on a memory-constrained device, where their performance is evaluated on the test data.

- The fitness of each model is calculated from the test results using equation 18, which includes a Lagrange multiplier that penalizes models whose R² score is still negative after 25 epochs.

- The GA generates new models through selection, mutation, and crossover, according to the following rules:

  - If performance has improved over the last three generations, the two best models (those with the minimum fitness value) are selected for mutation and crossover.

  - If performance has not improved, the best parent and a second, randomly selected gene are used for mutation and crossover.

  - During mutation or crossover, if any gene exceeds its predefined constraint limits, it is reassigned a random value within the permissible range so that the model size (MS) stays within the required memory space.

- The process returns to step 2 unless a stopping criterion is met.

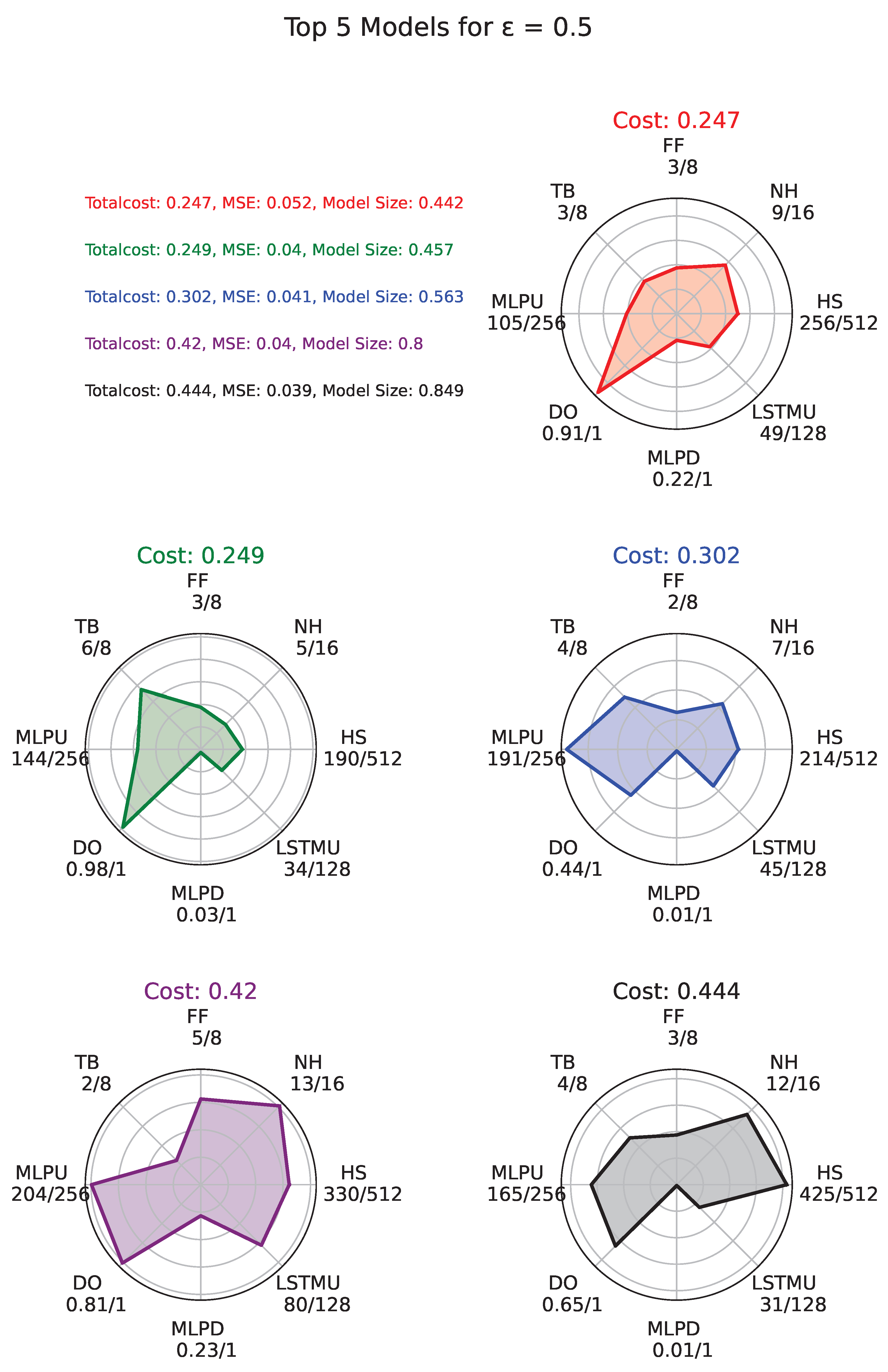

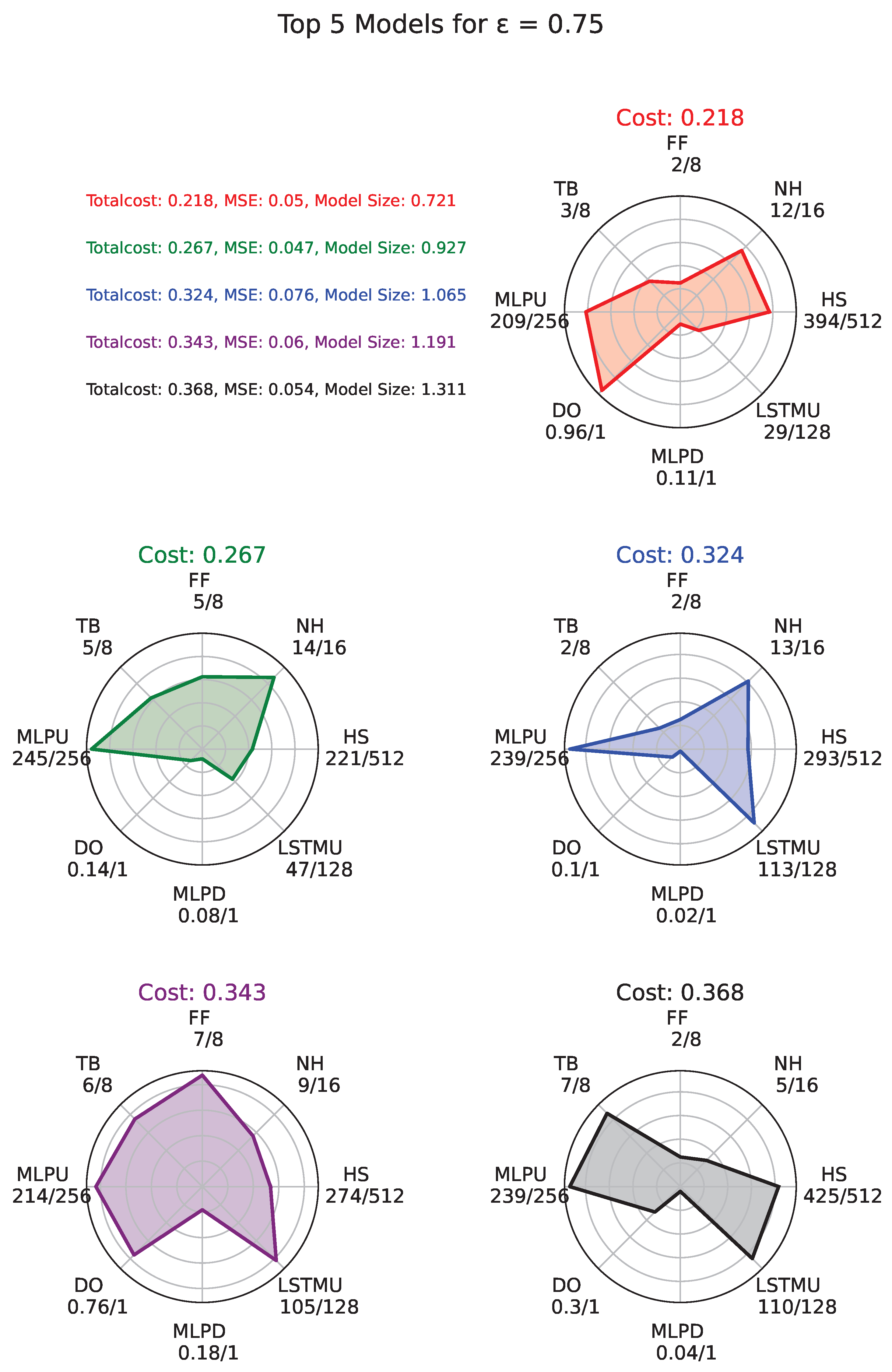

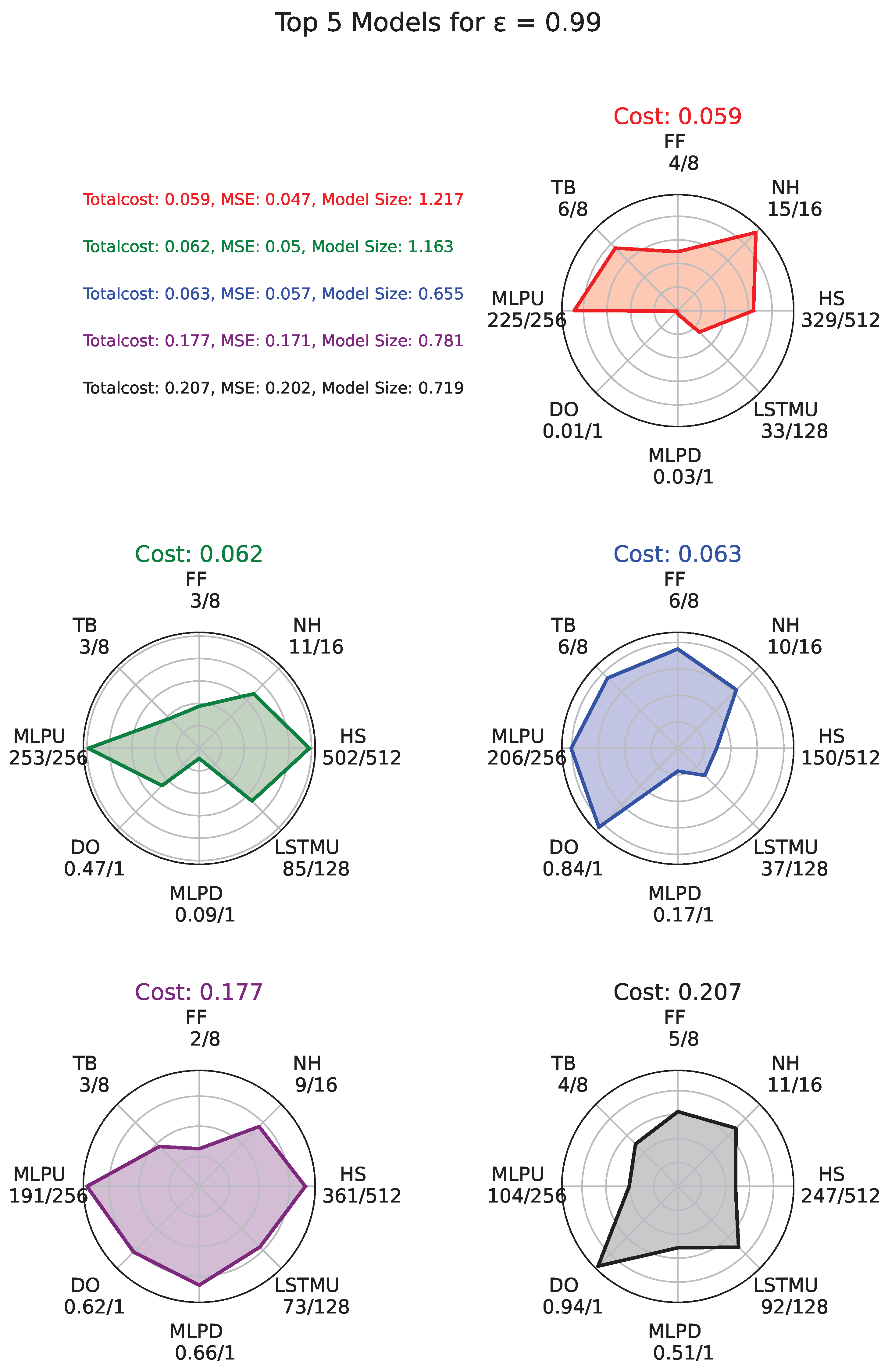

- Finally, the top 5 models from the experiment are selected for further training over an extended number of epochs. They are then re-evaluated on the memory-constrained device to ascertain their final performance metrics.
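The steps above can be sketched as a small GA loop. This is a minimal illustration under stated assumptions, not the authors' implementation: `SPACE`, `PENALTY`, the toy `evaluate` function (a stand-in for training for 25 epochs and testing on the device), the weighted form of `fitness` (a stand-in for equation 18), the elitism step, and the fixed generation count used as the stopping criterion are all hypothetical. "Improvement over the last three generations" is read here as the best fitness being lower than it was three generations earlier, and the second parent in the no-improvement case is taken to be a randomly chosen model.

```python
import random

# Hypothetical discrete design space: gene -> permitted values.
SPACE = {
    "num_heads": [1, 2, 4, 8],
    "ff_dim": [16, 32, 64, 128],
    "lstm_units": [8, 16, 32, 64],
}
PENALTY = 10.0  # assumed stand-in for the Lagrange multiplier


def random_model(rng):
    return {g: rng.choice(v) for g, v in SPACE.items()}


def evaluate(model):
    """Toy placeholder for 'train, deploy, test'. Returns (mse, r2, size_mb)."""
    size_mb = 0.001 * model["ff_dim"] * model["num_heads"] + 0.002 * model["lstm_units"]
    mse = 1.0 / (1.0 + model["ff_dim"] / 16 + model["lstm_units"] / 8)
    r2 = 1.0 - 4.0 * mse
    return mse, r2, size_mb


def fitness(model, weight=0.5):
    """Assumed cost: weighted error and size, plus a penalty if R2 < 0. Lower is better."""
    mse, r2, size_mb = evaluate(model)
    cost = weight * mse + (1.0 - weight) * size_mb
    return cost + (PENALTY if r2 < 0 else 0.0)


def crossover(a, b, rng):
    """Uniform crossover: each gene comes from one of the two parents."""
    return {g: rng.choice([a[g], b[g]]) for g in SPACE}


def mutate(model, rng, rate=0.2):
    """Double a gene with probability `rate`; the result may leave the permitted set."""
    child = dict(model)
    for g in SPACE:
        if rng.random() < rate:
            child[g] = child[g] * 2
    return child


def repair(model, rng):
    """Reassign any out-of-range gene to a random permitted value."""
    return {g: (v if v in SPACE[g] else rng.choice(SPACE[g])) for g, v in model.items()}


def select_parents(ranked, history, rng):
    """Two best models if the best fitness improved, else the best plus a random one."""
    improved = len(history) < 4 or history[-1] < history[-4]
    if improved:
        return ranked[0], ranked[1]
    return ranked[0], rng.choice(ranked[1:])


def run_ga(generations=10, pop_size=8, seed=0):
    rng = random.Random(seed)
    population = [random_model(rng) for _ in range(pop_size)]
    history = []  # best fitness per generation
    for _ in range(generations):
        ranked = sorted(population, key=fitness)
        history.append(fitness(ranked[0]))
        p1, p2 = select_parents(ranked, history, rng)
        children = [repair(mutate(crossover(p1, p2, rng), rng), rng)
                    for _ in range(pop_size - 2)]
        population = [ranked[0], ranked[1]] + children  # elitism (an assumption)
    return sorted(population, key=fitness)[:5], history


top5, history = run_ga()
print(top5[0], history[-1])
```

In a real run, `evaluate` would be replaced by the build, train, deploy, and test cycle, and the five models returned would be the ones retrained for more epochs.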

Algorithm 1: Hardware-Centric DDS Optimization Algorithm

3. Results and Comparative Analysis

3.1. Datasets and Parameter Configurations

3.2. Results

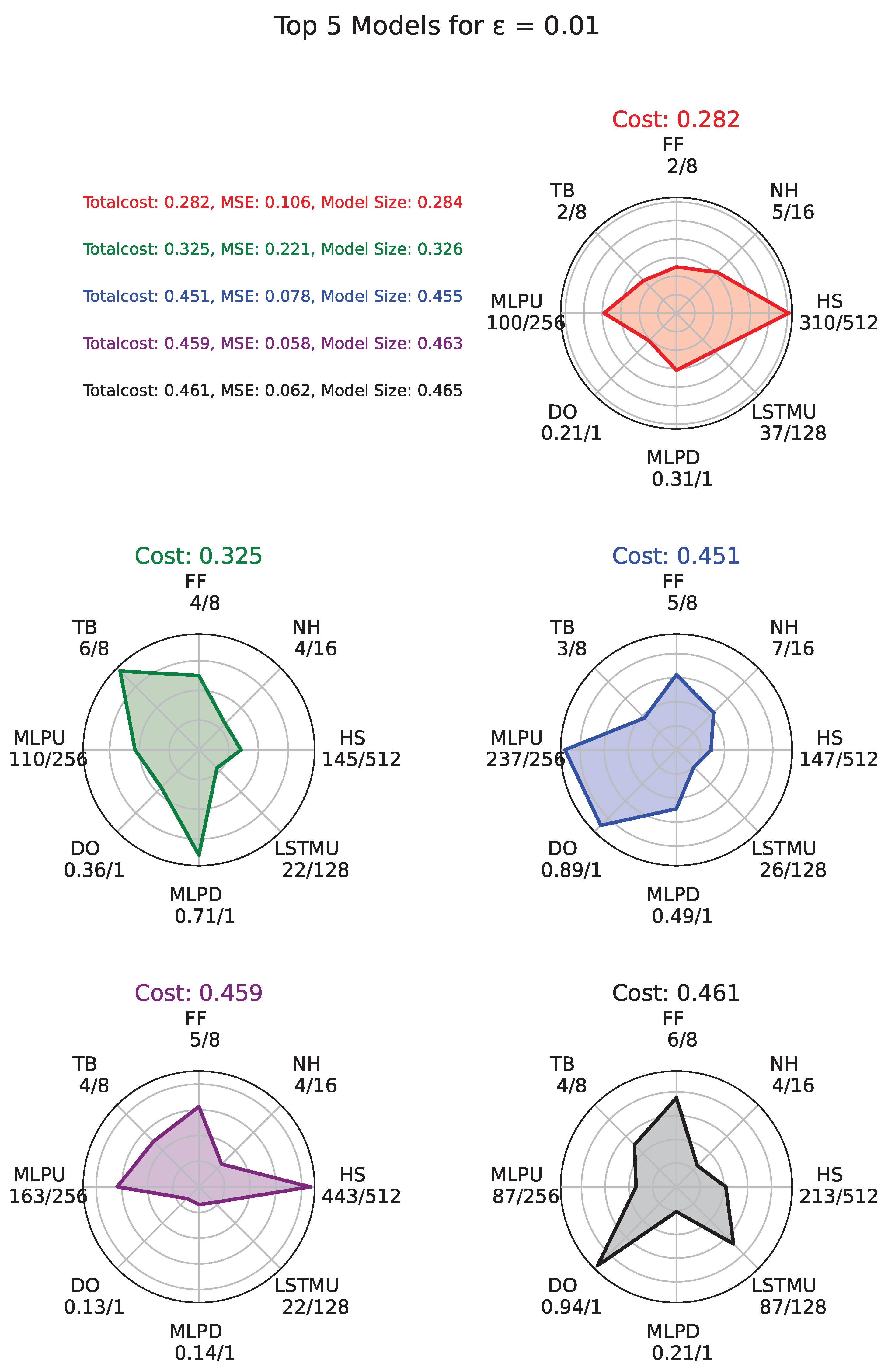

3.2.1. Experiment with

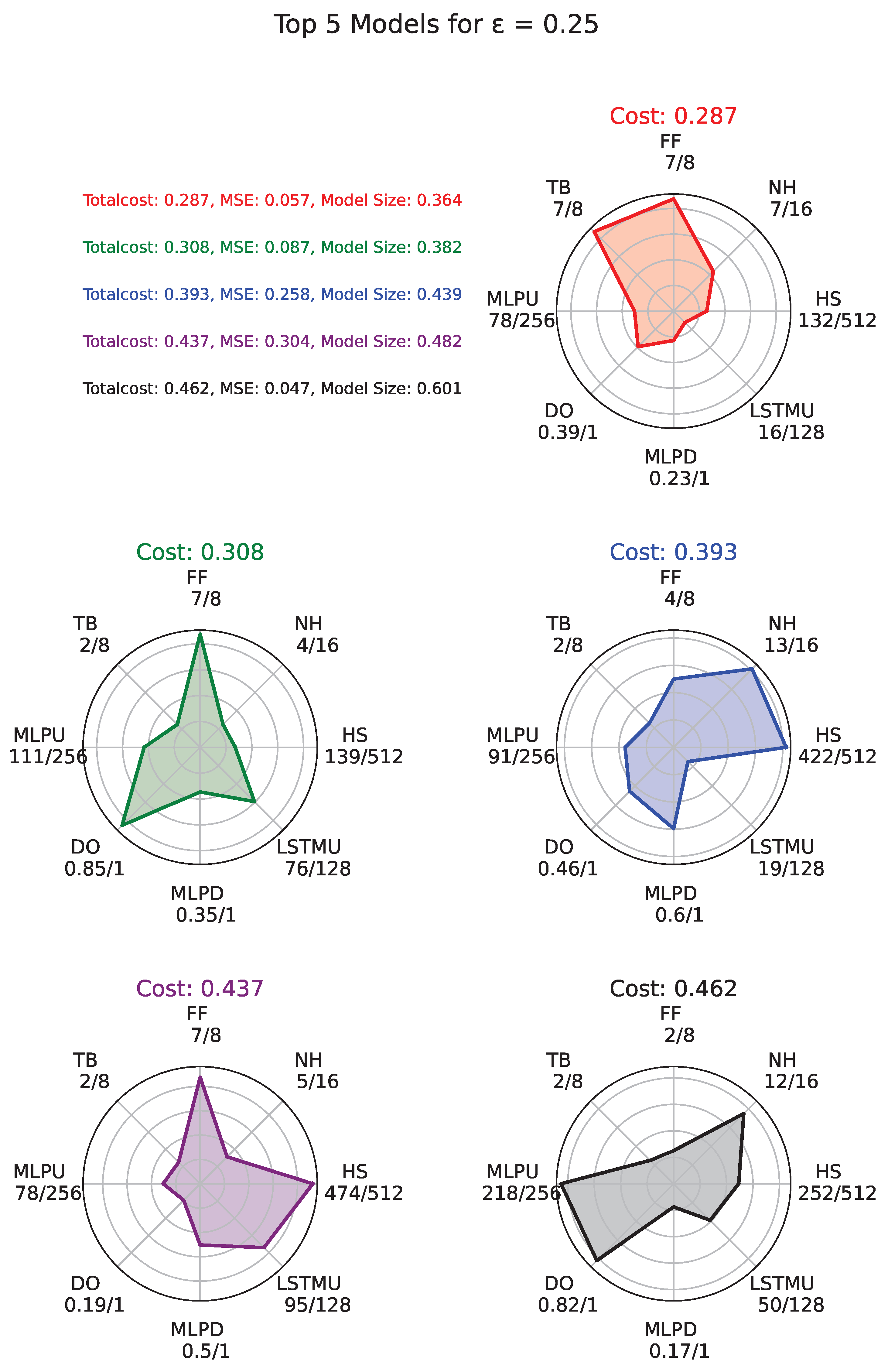

3.2.2. Experiment with

3.2.3. Experiment with

3.2.4. Experiment with

3.2.5. Experiment with

3.3. Optimal Models Performance Across Datasets

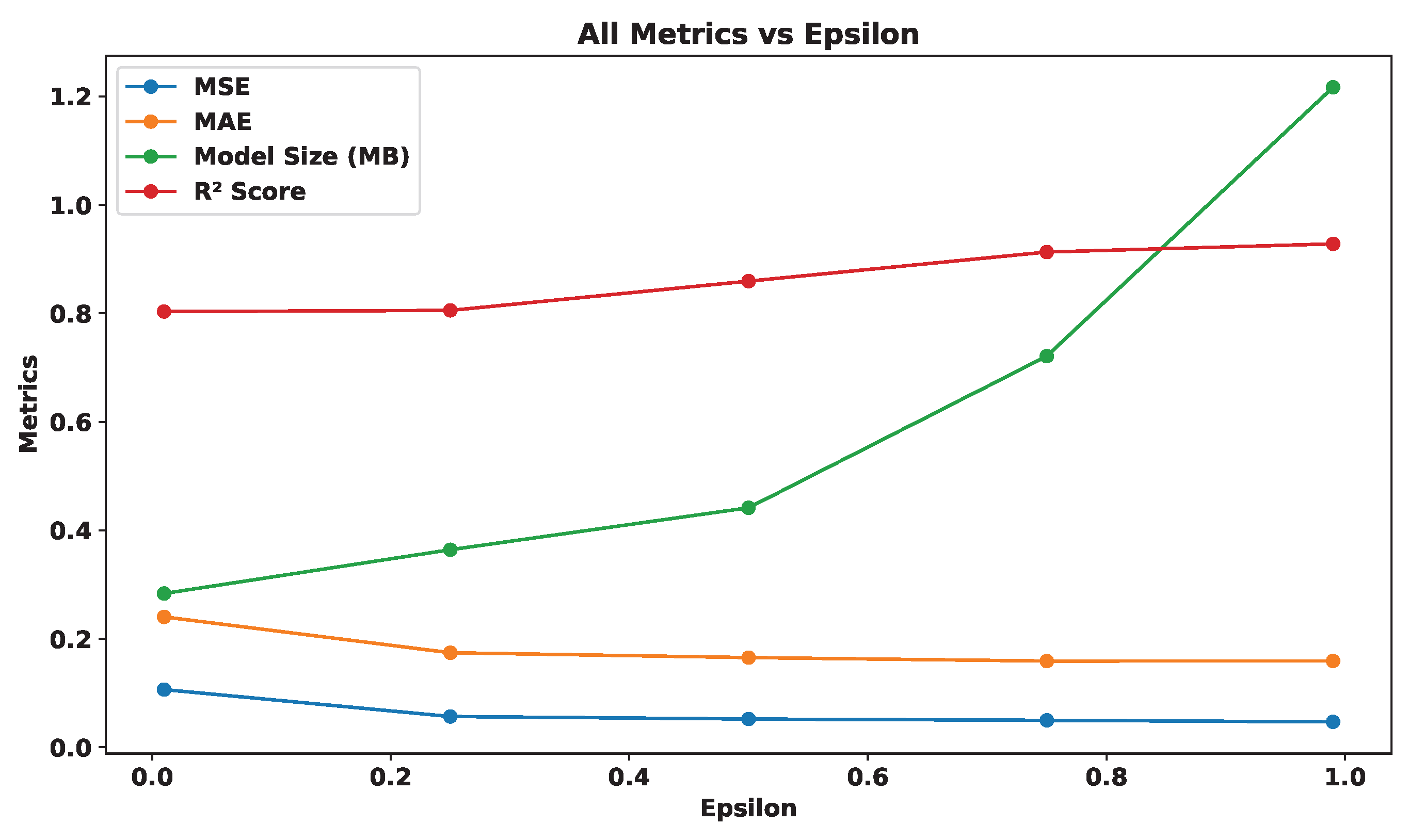

3.4. Analyzing the Influence of on Model Metrics

3.5. Comparison

4. Conclusion

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

1. Lee, J.; Zhao, F. Global Wind Report 2022, 2022.

2. Brabec, M.; Craciun, A.; Dumitrescu, A. Hybrid numerical models for wind speed forecasting. Journal of Atmospheric and Solar-Terrestrial Physics 2021, 220, 105669.

3. Moreno, S.; Mariani, V.; dos Santos Coelho, L. Hybrid multi-stage decomposition with parametric model applied to wind speed forecasting in Brazilian northeast. Renewable Energy 2021, 164, 1508–1526.

4. Nascimento, E.G.S.; de Melo, T.A.; Moreira, D.M. A transformer-based deep neural network with wavelet transform for forecasting wind speed and wind energy. Energy 2023, 278, 127678.

5. Huang, H.; Chen, J.; Sun, R.; Wang, S. Short-term traffic prediction based on time series decomposition. Physica A 2022, 585, 126441.

6. Torres, J.; et al. Forecast of hourly average wind speed with ARMA models in Navarre (Spain). Solar Energy 2005, 79, 65–77.

7. Li, L.; et al. ARMA model-based wind speed prediction for large radio telescope. Acta Astronomica Sinica 2022, 63, 70.

8. Moreno, S.; dos Santos Coelho, L. Wind speed forecasting approach based on singular spectrum analysis and adaptive neuro fuzzy inference system. Renewable Energy 2018, 126, 736–754.

9. Bechrakis, D.; Sparis, P. Wind speed prediction using artificial neural networks. Wind Engineering 1998, 287–295.

10. Mohandes, M.A.; Halawani, T.O.; Rehman, S.; Hussain, A.A. Support vector machines for wind speed prediction. Renewable Energy 2004, 29, 939–947.

11. Geng, D.; Zhang, H.; Wu, H. Short-term wind speed prediction based on principal component analysis and LSTM. Applied Sciences 2020, 10, 4416.

12. Cai, R.; Xie, S.; Wang, B.; Yang, R.; Xu, D.; He, Y. Wind speed forecasting based on extreme gradient boosting. IEEE Access 2020, 8, 175063–175069.

13. Aslam, L.; Zou, R.; Awan, E.; Butt, S.A. Integrating Physics-Informed Vectors for Improved Wind Speed Forecasting with Neural Networks. In Proceedings of the Asian Control Conference, July 2024.

14. Liu, H.; Tian, H.; Li, Y. Comparison of two new ARIMA-ANN and ARIMA-Kalman hybrid methods for wind speed prediction. Applied Energy 2012, 98, 415–424.

15. Zhang, Y.; Le, J.; Liao, X.; Zheng, F.; Li, Y. A novel combination forecasting model for wind power integrating least square support vector machine, deep belief network, singular spectrum analysis and locality-sensitive hashing. Energy 2019, 168, 558–572.

16. Wan, A.; Chang, Q.; et al. Short-term power load forecasting for combined heat and power using CNN-LSTM enhanced by attention mechanism. Energy 2023, 282, 128274.

17. Pan, S.; et al. Oil well production prediction based on CNN-LSTM model with self-attention mechanism. Energy 2023, 284, 128701.

18. Li, W.; Li, Y.; Garg, A.; Gao, L. Enhancing real-time degradation prediction of lithium-ion battery: A digital twin framework with CNN-LSTM-attention model. Energy 2024, 286, 129681.

19. Ata Teneler, A.; Hassoy, H. Health effects of wind turbines: a review of the literature between 2010-2020. International Journal of Environmental Health Research 2023, 33, 143–157.

20. Gkeka-Serpetsidaki, P.; Papadopoulos, S.; Tsoutsos, T. Assessment of the visual impact of offshore wind farms. Renewable Energy 2022, 190, 358–370.

21. Bilgili, M.; Alphan, H. Visual impact and potential visibility assessment of wind turbines installed in Turkey. Gazi University Journal of Science 2022, 35, 198–217.

22. Lehnardt, Y.; Barber, J.R.; Berger-Tal, O. Effects of wind turbine noise on songbird behavior during nonbreeding season. Conservation Biology 2024, 38, e14188.

23. Teff-Seker, Y.; Berger-Tal, O.; Lehnardt, Y.; Teschner, N. Noise pollution from wind turbines and its effects on wildlife: A cross-national analysis of current policies and planning regulations. Renewable and Sustainable Energy Reviews 2022, 168, 112801.

24. Zolotoff-Pallais, J.M.; Perez, A.M.; Donaire, R.M. General Comparative Analysis of Bird-Bat Collisions at a Wind Power Plant in the Department of Rivas, Nicaragua, between 2014 and 2022. European Journal of Biology and Biotechnology 2024, 5, 1–7.

25. Richardson, S.M.; Lintott, P.R.; Hosken, D.J.; Economou, T.; Mathews, F. Peaks in bat activity at turbines and the implications for mitigating the impact of wind energy developments on bats. Scientific Reports 2021, 11, 3636.

26. Choi, D.Y.; Wittig, T.W.; Kluever, B.M. An evaluation of bird and bat mortality at wind turbines in the Northeastern United States. PLoS One 2020, 15, e0238034.

27. Barter, G.E.; Sethuraman, L.; Bortolotti, P.; Keller, J.; Torrey, D.A. Beyond 15 MW: A cost of energy perspective on the next generation of drivetrain technologies for offshore wind turbines. Applied Energy 2023, 344, 121272.

28. Wiser, R.; Millstein, D.; Bolinger, M.; Jeong, S.; Mills, A. The hidden value of large-rotor, tall-tower wind turbines in the United States. Wind Engineering 2021, 45, 857–871.

29. Turc Castellà, F.X. Operations and maintenance costs for offshore wind farm. Analysis and strategies to reduce O&M costs. Master’s thesis, Universitat Politècnica de Catalunya, 2020.

30. Abdelateef Mostafa, M.; El-Hay, E.A.; ELkholy, M.M. Recent trends in wind energy conversion system with grid integration based on soft computing methods: comprehensive review, comparisons and insights. Archives of Computational Methods in Engineering 2023, 30, 1439–1478.

31. Ahmed, S.D.; Al-Ismail, F.S.; Shafiullah, M.; Al-Sulaiman, F.A.; El-Amin, I.M. Grid integration challenges of wind energy: A review. IEEE Access 2020, 8, 10857–10878.

32. Suo, L.; Peng, T.; Song, S.; Zhang, C.; Wang, Y.; Fu, Y.; Nazir, M.S. Wind speed prediction by a swarm intelligence based deep learning model via signal decomposition and parameter optimization using improved chimp optimization algorithm. Energy 2023, 276, 127526.

33. Saini, V.K.; Kumar, R.; Al-Sumaiti, A.S.; Sujil, A.; Heydarian-Forushani, E. Learning based short term wind speed forecasting models for smart grid applications: An extensive review and case study. Electric Power Systems Research 2023, 222, 109502.

34. Zhang, Y.; Pan, G.; Chen, B.; Han, J.; Zhao, Y.; Zhang, C. Short-term wind speed prediction model based on GA-ANN improved by VMD. Renewable Energy 2020, 156, 1373–1388.

35. Salinas, D.; Flunkert, V.; Gasthaus, J.; Januschowski, T. DeepAR: Probabilistic forecasting with autoregressive recurrent networks. International Journal of Forecasting 2020, 36, 81–91.

36. Chen, Y.; Kang, Y.; Chen, Y.; Wang, Z. Probabilistic forecasting with temporal convolutional neural network. Neurocomputing 2020, 399, 491–501.

37. Wu, Q.; Guan, F.; Lv, C.; Huang, Y. Ultra-short-term multi-step wind power forecasting based on CNN-LSTM. IET Renewable Power Generation 2021, 15, 1019–1029.

| Dataset | | Size (MB) | MSE (m²/s²) | MAE (m/s) | R² | Latency (s) |
|---|---|---|---|---|---|---|
| Jhimpir | 0.01 | 0.2862 | 0.0848 | 0.2180 | 0.9732 | 0.0848 |
| | 0.25 | 0.3812 | 0.0932 | 0.2284 | 0.9706 | 0.0341 |
| | 0.50 | 0.4587 | 0.0442 | 0.1573 | 0.9860 | 0.1557 |
| | 0.75 | 0.7232 | 0.0442 | 0.1573 | 0.9860 | 0.1620 |
| | 0.99 | 1.2104 | 0.0451 | 0.1583 | 0.9858 | 0.6929 |
| Gwadar | 0.01 | 0.2836 | 0.1065 | 0.2404 | 0.9600 | 0.0915 |
| | 0.25 | 0.3643 | 0.0568 | 0.1744 | 0.9786 | 0.1992 |
| | 0.50 | 0.4416 | 0.0522 | 0.1654 | 0.9804 | 0.1773 |
| | 0.75 | 0.7211 | 0.0498 | 0.1591 | 0.9850 | 0.3022 |
| | 0.99 | 1.2168 | 0.0470 | 0.1592 | 0.9823 | 0.6224 |
| Pasni | 0.01 | 0.2849 | 0.5513 | 0.5620 | 0.7900 | 0.0876 |
| | 0.25 | 0.3829 | 0.6610 | 0.6160 | 0.7514 | 0.0311 |
| | 0.50 | 0.4413 | 0.5412 | 0.5892 | 0.7964 | 0.1610 |
| | 0.75 | 0.7233 | 0.3263 | 0.4346 | 0.8772 | 0.2982 |
| | 0.99 | 1.2107 | 0.2856 | 0.4065 | 0.8925 | 0.6639 |

| Dataset | Scheme | Size (MB) | MSE (m²/s²) | MAE (m/s) | R² |
|---|---|---|---|---|---|
| Jhimpir | DeepAR [35] | 100.3615 | 0.0921 | 0.2321 | 0.8974 |
| | DeepTCN [36] | 15.6832 | 0.0751 | 0.2075 | 0.9332 |
| | CNN-LSTM [37] | 12.6971 | 0.0793 | 0.2251 | 0.9162 |
| | Proposed Scheme | 1.2104 | 0.0451 | 0.1583 | 0.9858 |
| Gwadar | DeepAR [35] | 100.3628 | 0.0903 | 0.2561 | 0.9600 |
| | DeepTCN [36] | 15.6756 | 0.0698 | 0.1945 | 0.9489 |
| | CNN-LSTM [37] | 12.6348 | 0.0815 | 0.2678 | 0.8756 |
| | Proposed Scheme | 1.2168 | 0.0470 | 0.1592 | 0.9823 |
| Pasni | DeepAR [35] | 100.5483 | 0.4361 | 0.5620 | 0.7900 |
| | DeepTCN [36] | 15.6540 | 0.4275 | 0.4598 | 0.8219 |
| | CNN-LSTM [37] | 12.3256 | 0.5017 | 0.5321 | 0.7545 |
| | Proposed Scheme | 1.2107 | 0.2856 | 0.4065 | 0.8925 |
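As a quick reading aid for the comparison table above, the short script below computes how much smaller the proposed model is than each baseline and the relative reduction in MSE, using the Jhimpir rows. The numbers are copied from the table; the helper name `compare` and the choice of these two derived quantities are illustrative assumptions rather than metrics defined in this work.

```python
# (size in MB, MSE) copied from the Jhimpir rows of the comparison table.
baselines = {
    "DeepAR": (100.3615, 0.0921),
    "DeepTCN": (15.6832, 0.0751),
    "CNN-LSTM": (12.6971, 0.0793),
}
proposed = (1.2104, 0.0451)


def compare(baseline, proposed):
    """Return (size ratio, percentage reduction in MSE) of proposed vs. baseline."""
    size_ratio = baseline[0] / proposed[0]
    mse_reduction = 100.0 * (baseline[1] - proposed[1]) / baseline[1]
    return size_ratio, mse_reduction


for name, values in baselines.items():
    ratio, reduction = compare(values, proposed)
    print(f"{name}: {ratio:.1f}x larger than proposed, {reduction:.1f}% higher-MSE gap closed")
```

The same function can be applied to the Gwadar and Pasni rows by swapping in their values.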

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).