Submitted: 18 February 2025

Posted: 19 February 2025

Abstract

Keywords:

1. Introduction and Motivation

2. Related Work

2.1. Correlation-Based Methods

2.2. Learning-Based Methods

2.3. Rendering-Based Methods

2.4. Additional Methods

3. Proposed Approach

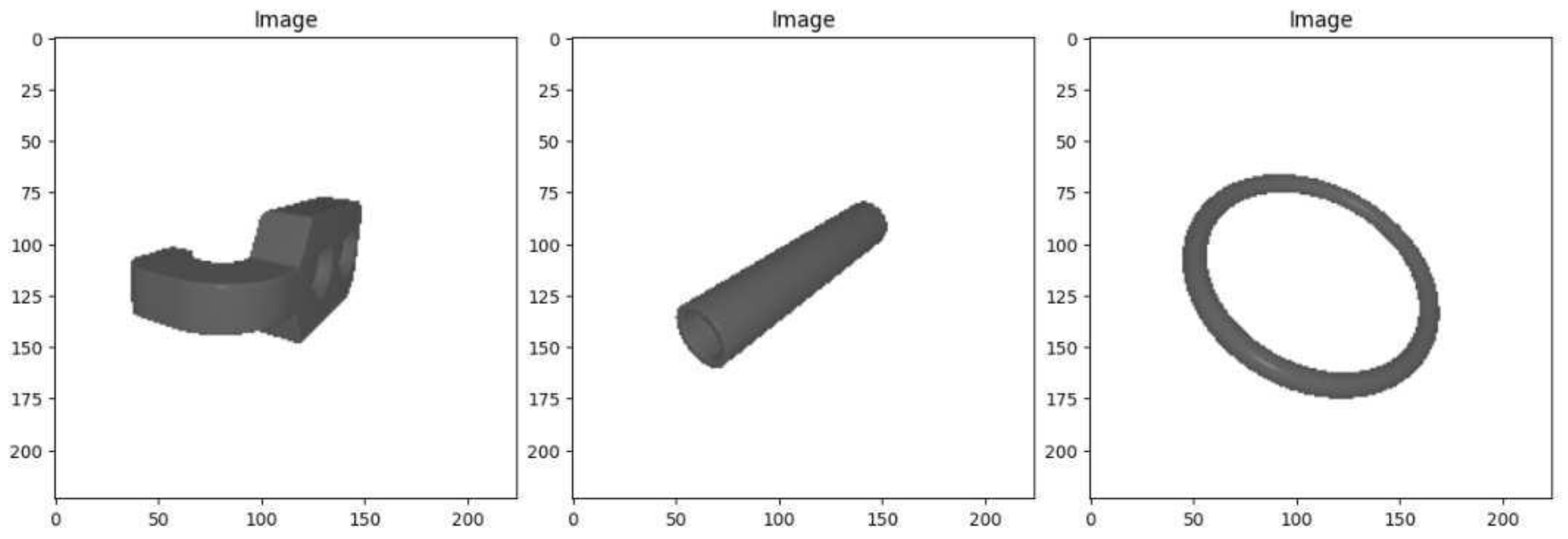

3.1. Dataset Creation

3.2. Training Workflow and Hardware

3.3. Regression Network Approach

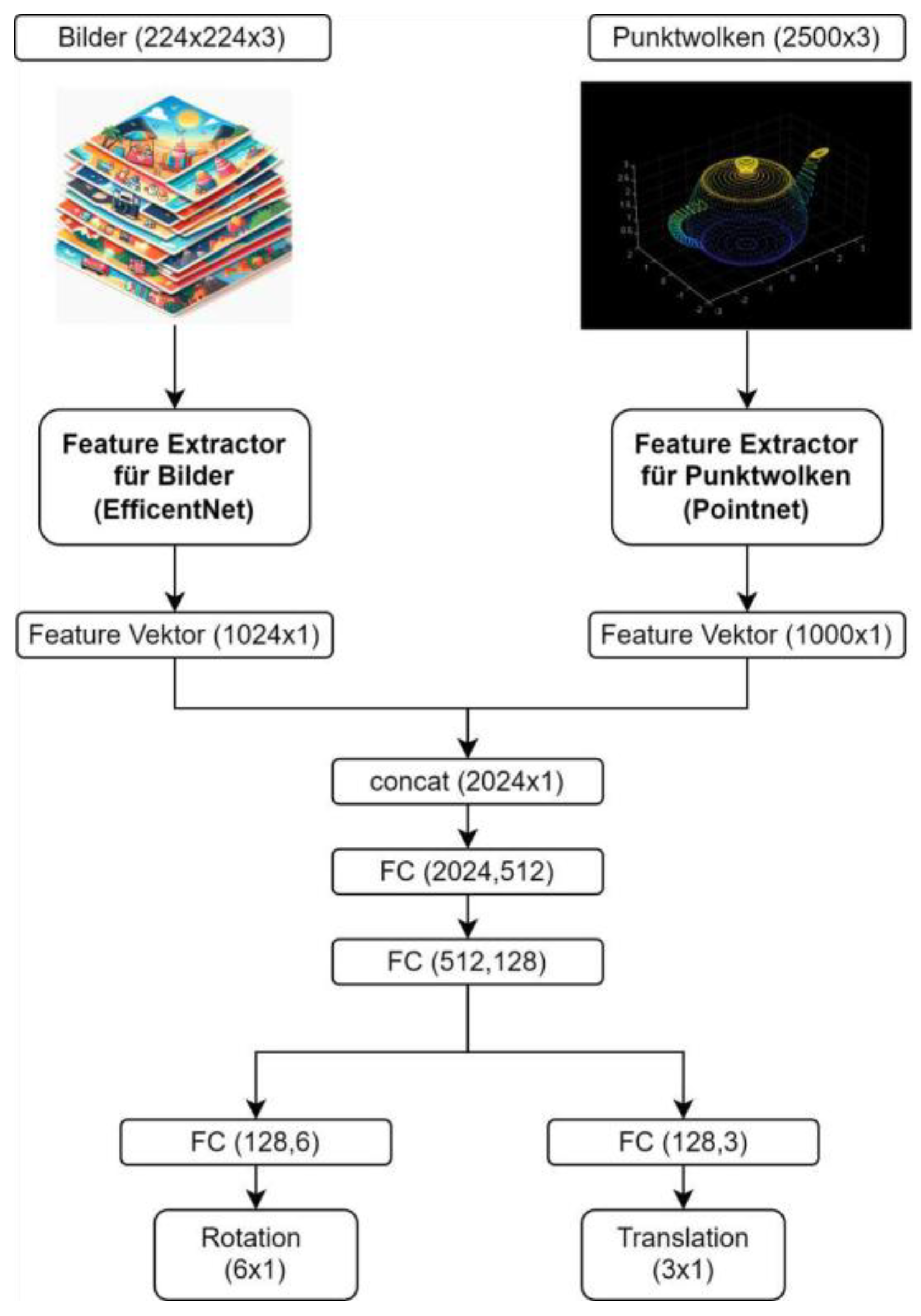

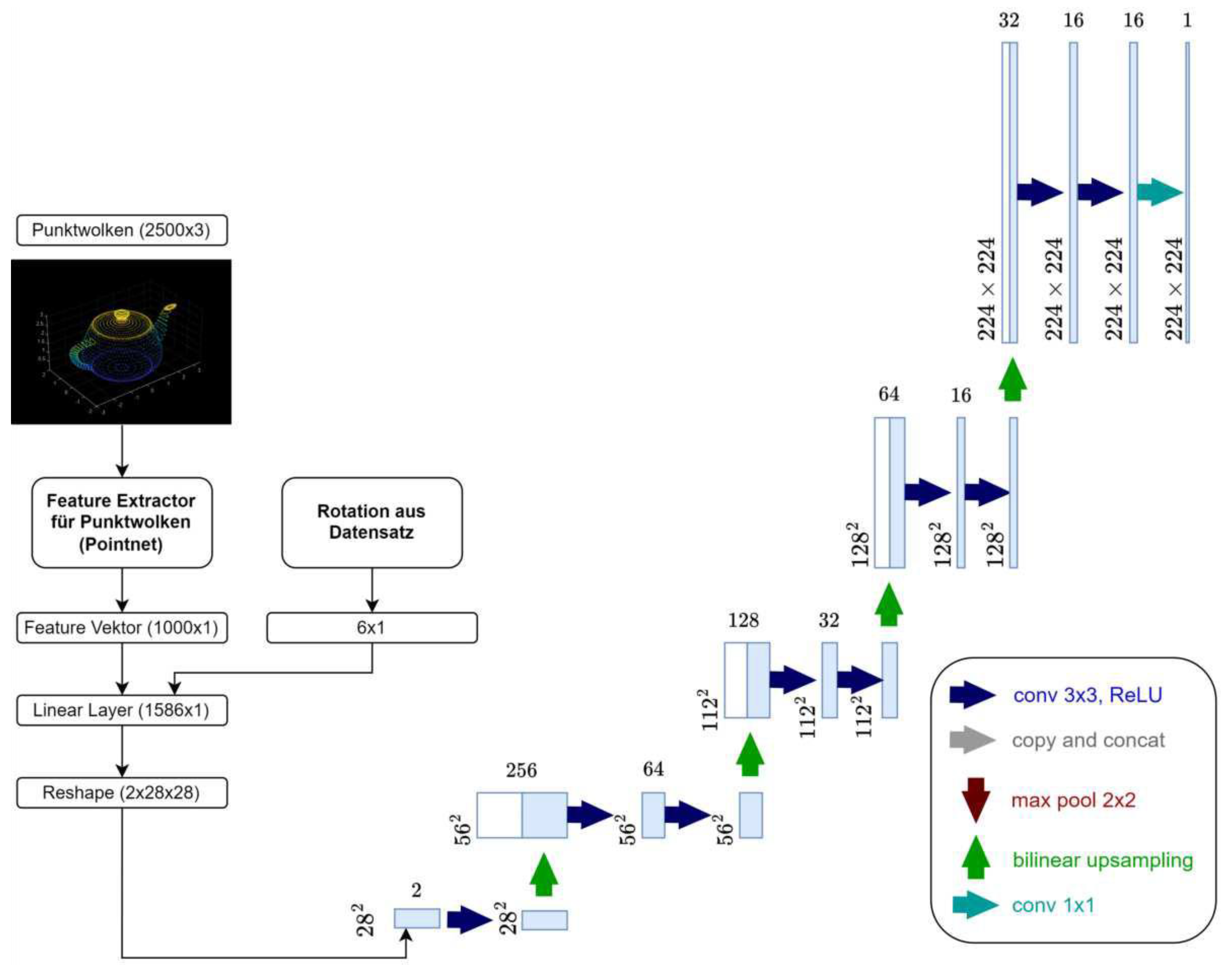

3.3.1. Architecture

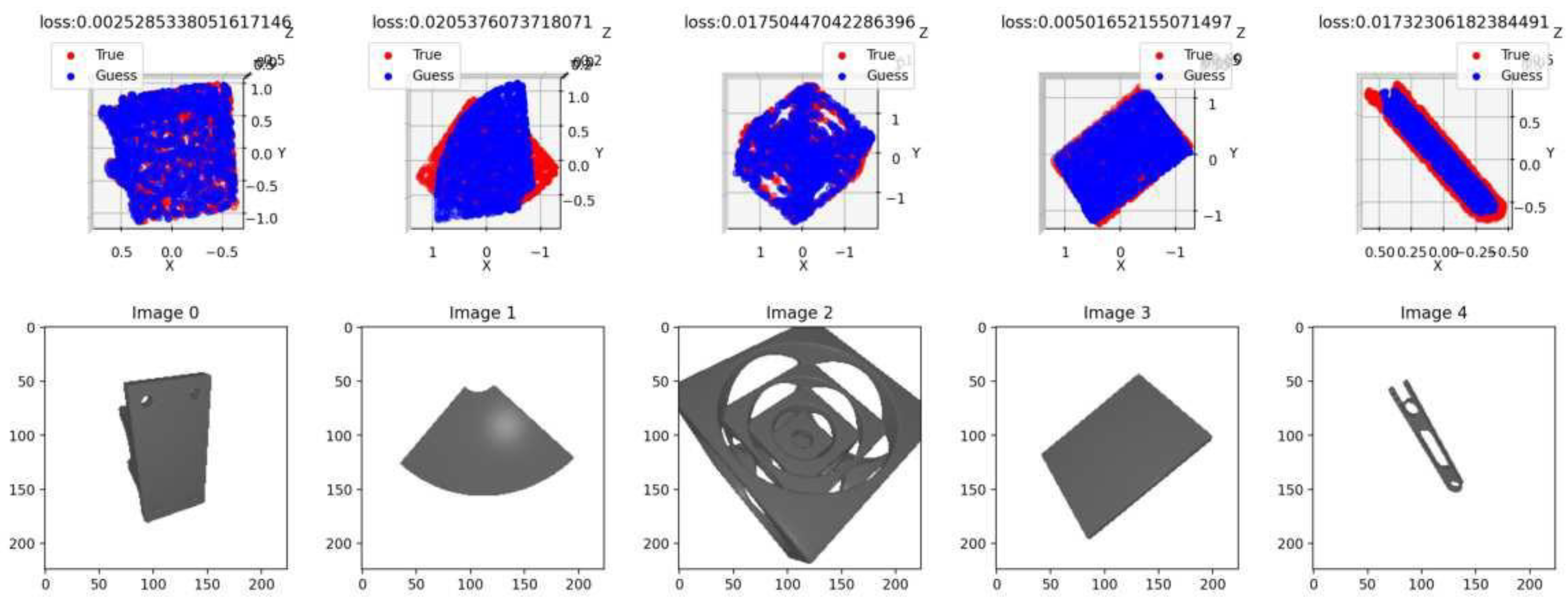

3.3.2. Evaluation

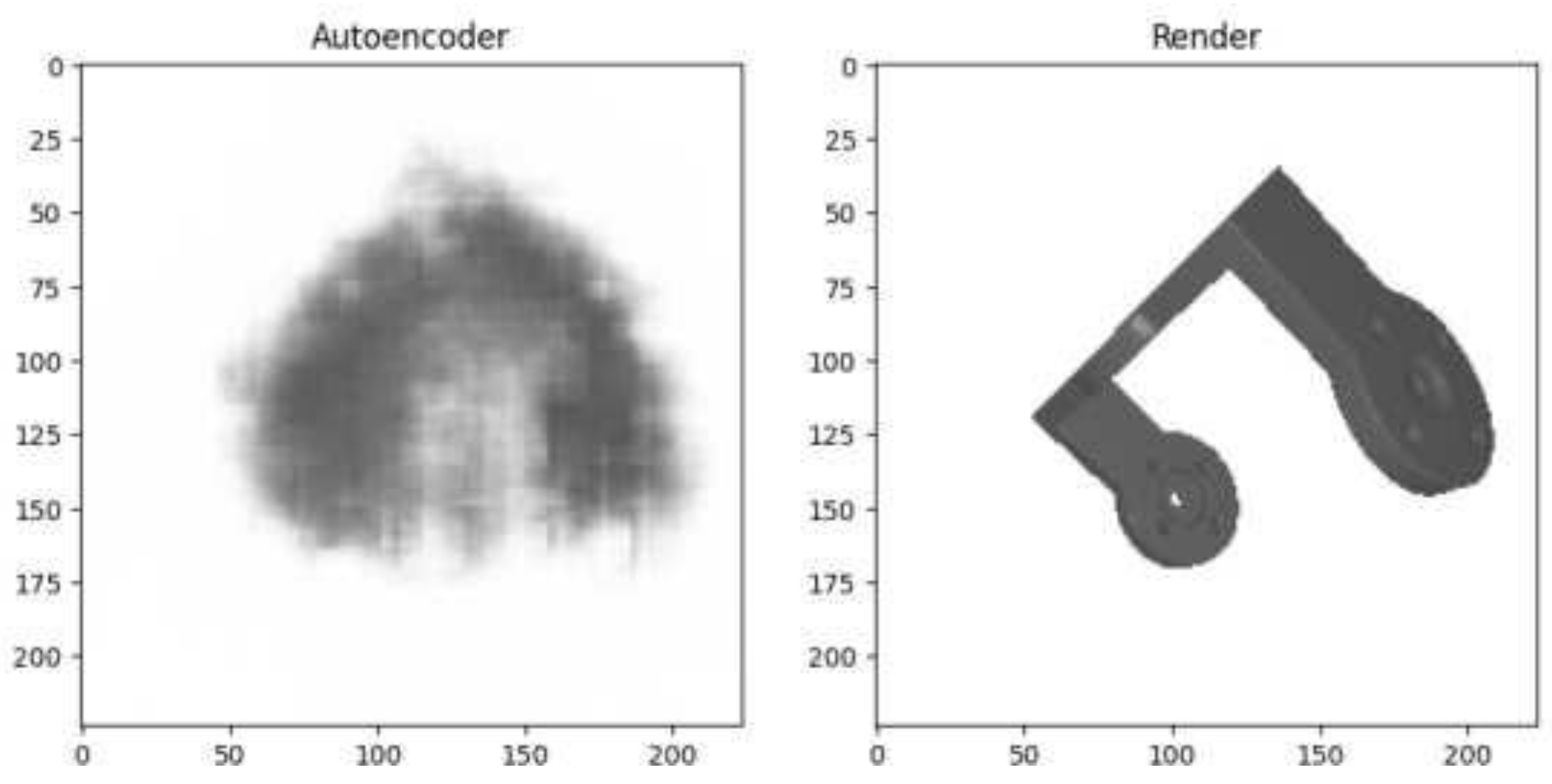

3.4. Rendering Approximator Autoencoder

3.4.1. Point Net Architecture

3.4.2. Evaluation

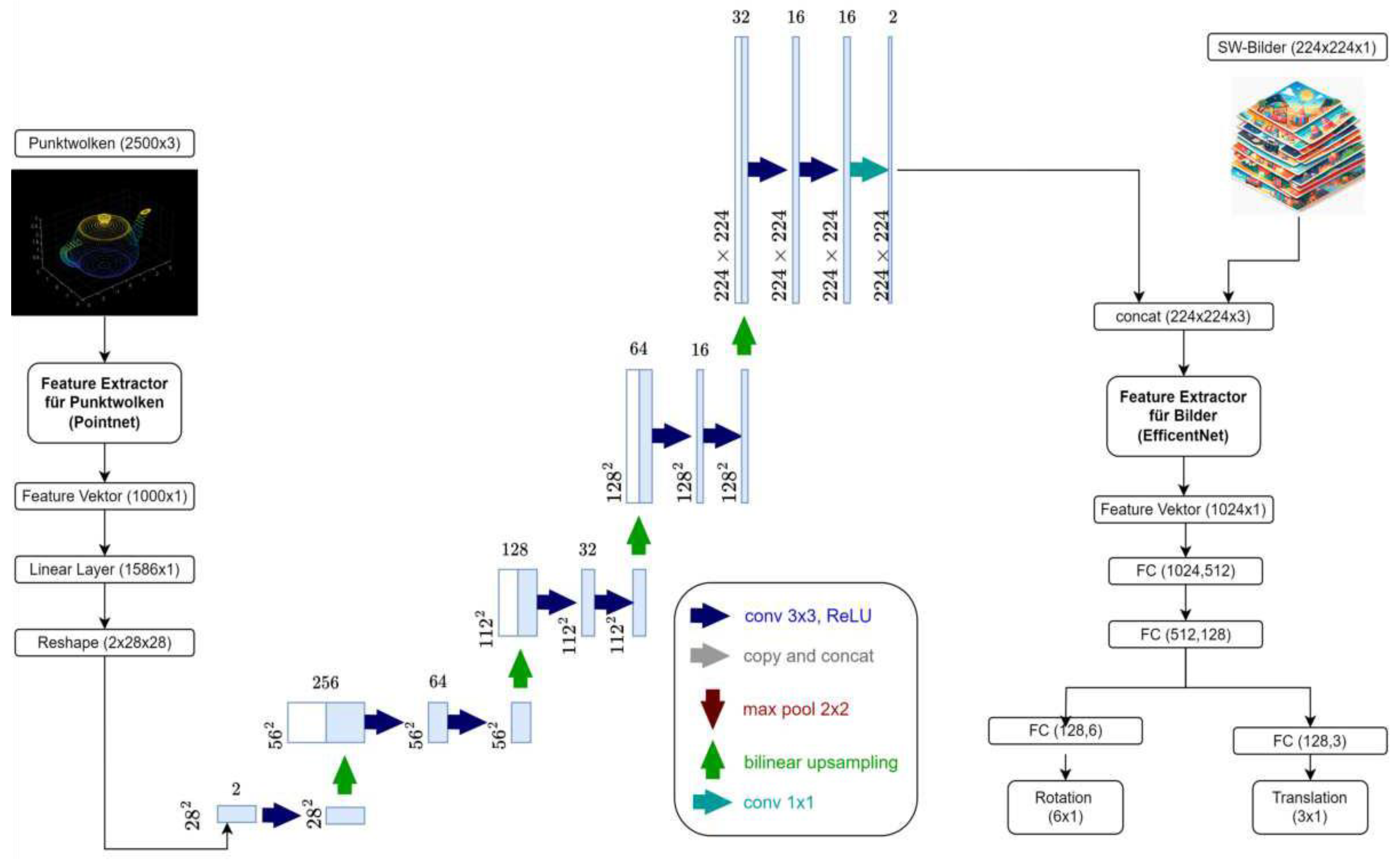

3.5. Autoencoder Regression Network

- It aims to identify more precise correspondences between images and point clouds that have been converted into image format. The assumption is that passing both sources of information through the convolutional layers together allows them to be compared more frequently.

- Another advantage lies in the previously mentioned ease of implementation within existing architectures.

3.5.1. Architecture

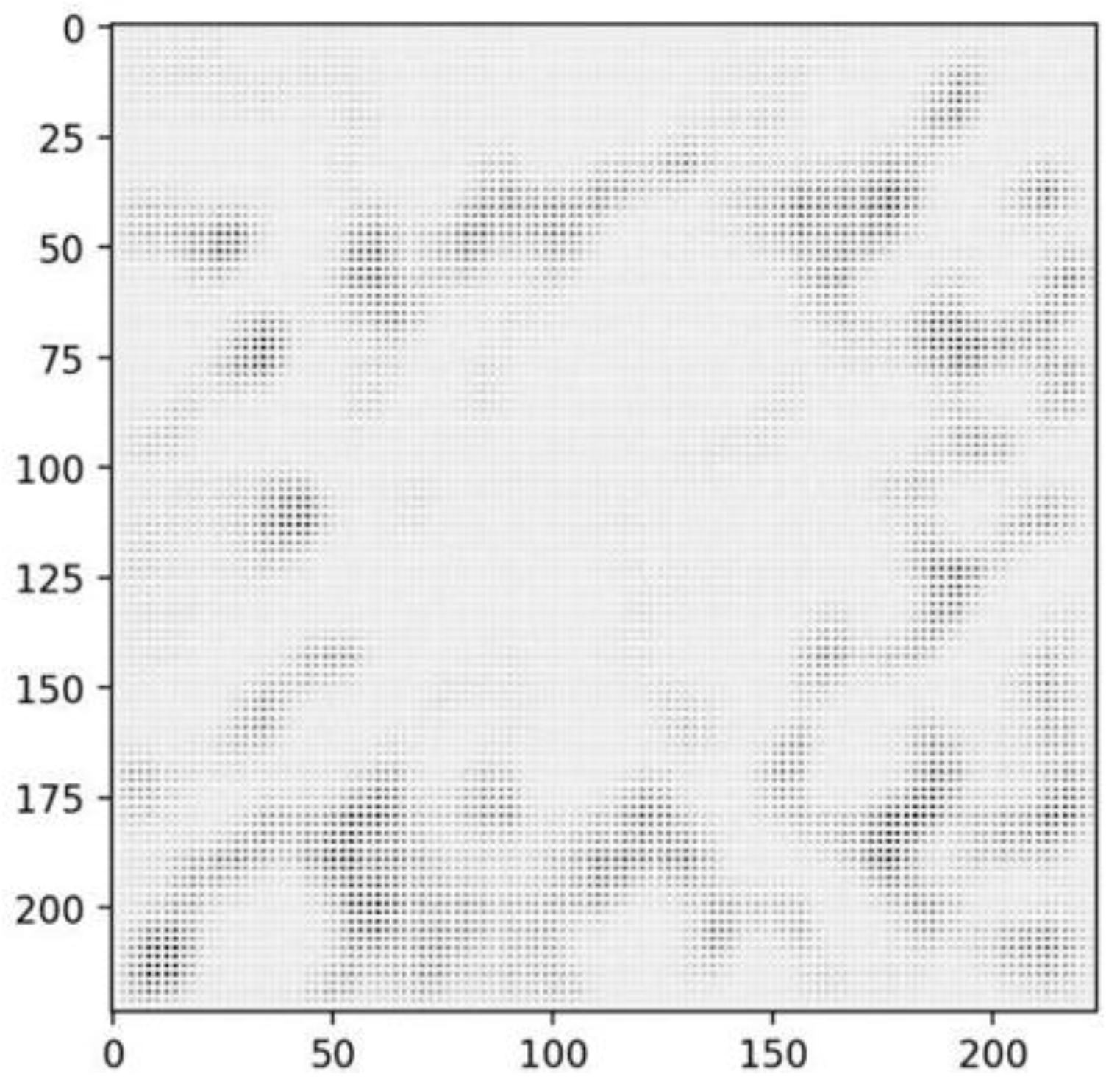

3.5.2. Evaluation

- The system converges at higher endpoint values.

- The learning rate must be very low to achieve these values (AdamW optimizer with a learning rate of , compared to in the previous experiment).

- Training is time-intensive, with the computation time per sample being twice as high as in the previous approach.

3.7. Direct Regression with PointEncoder

3.7.1. Point Cloud Encoder Architecture

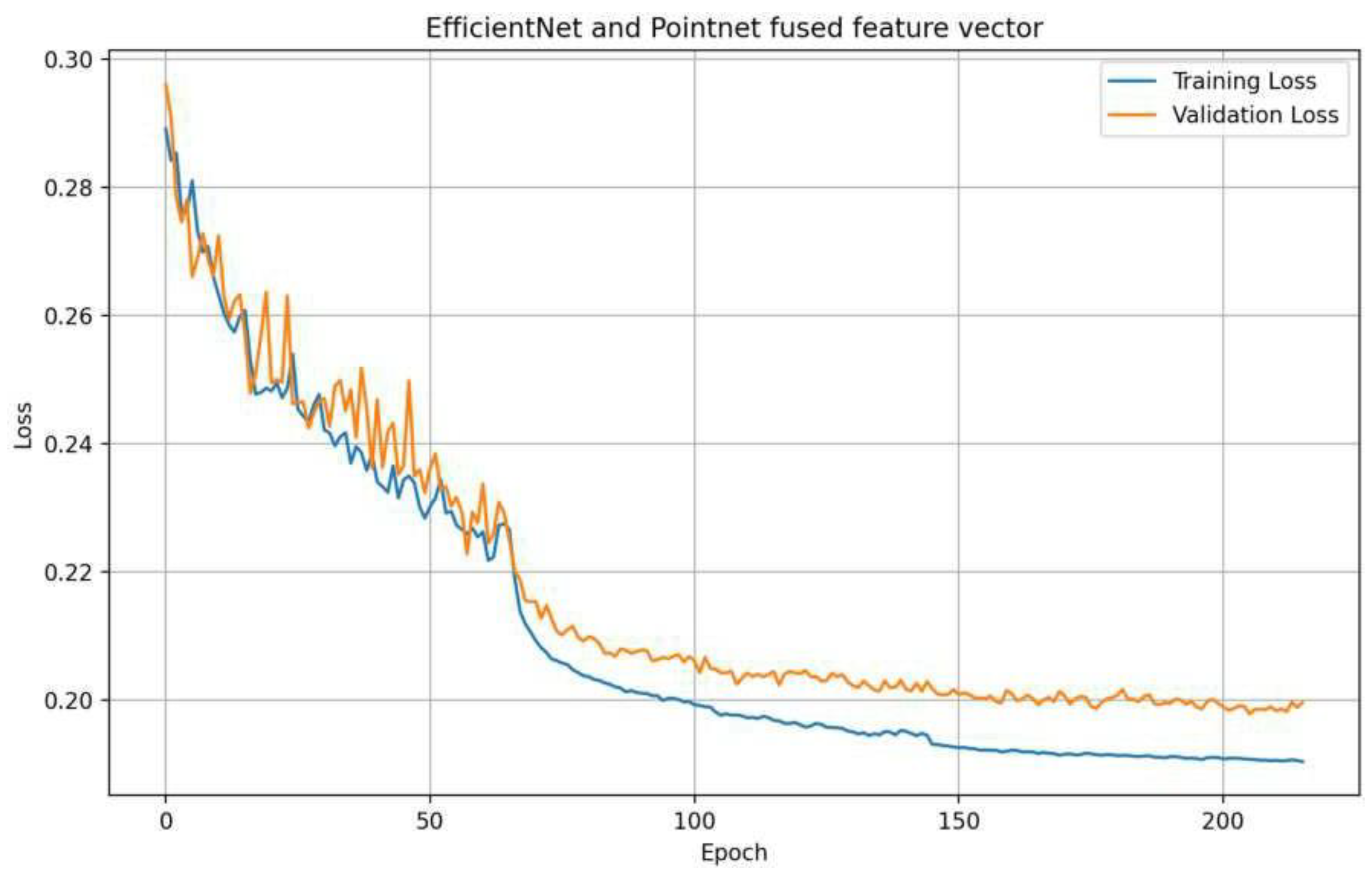

3.7.2. Evaluation and Improvements

- The network converges more quickly compared to using PointNet.

- The loss achieved is lower.

- The network generalizes better across various data.

3.8. Results and Insights for the Comparison

4. Implementation

4.1. Enhanced Synthetic Dataset

4.1.2. Dataset Adaptation

4.2. Regression with DETR

4.2.1. Modification of the Architecture

- Bounding boxes

- Class labels

- Projected circumcircles of the point clouds

- 6D rotations

- A first MLP determines the class (binary: 0 or 1) and the bounding box.

- A second MLP estimates the center and radius of the projected bounding sphere.

- A third network is dedicated to estimating the rotation.
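The "6D rotations" output listed above can be illustrated with a minimal sketch. It assumes that the term refers to the widely used continuous 6D rotation representation, in which two raw 3D vectors are orthonormalized with a Gram-Schmidt step and completed by a cross product; the section itself does not confirm this. The function name `rotation_from_6d` and its helpers are hypothetical and are not taken from the authors' implementation.

```python
import math


def normalize(v):
    """Scale a 3D vector to unit length."""
    norm = math.sqrt(sum(x * x for x in v))
    if norm == 0.0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in v]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def cross(a, b):
    return [
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    ]


def rotation_from_6d(r6):
    """Map six raw numbers to a 3x3 rotation matrix (assumed convention).

    The first three values give the direction of the first column, the
    last three are orthogonalized against it to give the second column,
    and the third column is their cross product.
    """
    a1, a2 = list(r6[:3]), list(r6[3:])
    b1 = normalize(a1)
    # Remove the component of a2 along b1, then normalize the remainder.
    # Parallel inputs leave a zero remainder and raise ValueError.
    proj = dot(b1, a2)
    b2 = normalize([y - proj * x for x, y in zip(b1, a2)])
    b3 = cross(b1, b2)
    # b1, b2 and b3 are the columns of the rotation matrix.
    return [[b1[i], b2[i], b3[i]] for i in range(3)]
```

For example, `rotation_from_6d([2.0, 0.0, 0.0, 1.0, 3.0, 0.0])` yields the identity matrix, because the two input vectors already span the x-y plane. Under this assumption, the rotation head can emit six unconstrained values and still always produce a valid rotation matrix.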

4.2.2. Design of Loss Functions

- 3D Nearest-Neighbor Loss (3D NN Loss): Measures the nearest-neighbor distance between the predicted and actual 3D point clouds.

- 3D Correspondence Loss: Computes the Euclidean distance between corresponding points in the predicted and actual 3D point clouds.

- 2D Nearest-Neighbor Loss (2D NN Loss): Similar to the 3D NN Loss but applied to the projected 2D point clouds.

- 2D Correspondence Loss: Analogous to the 3D Correspondence Loss but applied to projected 2D points.

- Rotation Loss: Captures the deviation between the predicted and actual rotations.

- Circle Regression Loss: Measures the L1 deviation of the predicted circle center and radius of the bounding sphere from the actual values.

- Circle IoU: A custom implementation of the Intersection over Union specifically for circular shapes.
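The difference between the correspondence and nearest-neighbor losses, and the circle-based Intersection over Union, can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions: the section does not specify whether the authors average or sum distances, use squared distances, or make the nearest-neighbor term symmetric, so the mean, one-directional variants below are assumptions. All function names are hypothetical. The same functions work for 3D points and for projected 2D points.

```python
import math


def correspondence_loss(pred, target):
    """Mean Euclidean distance between points paired by index."""
    if len(pred) != len(target):
        raise ValueError("point sets must have the same length")
    return sum(math.dist(p, t) for p, t in zip(pred, target)) / len(pred)


def nearest_neighbor_loss(pred, target):
    """Mean distance from each predicted point to its closest target point.

    One-directional (pred -> target); a symmetric variant is equally
    plausible and is not ruled out by the text.
    """
    return sum(min(math.dist(p, t) for t in target) for p in pred) / len(pred)


def circle_iou(c1, r1, c2, r2):
    """Intersection over Union of two circles given centers and radii."""
    d = math.dist(c1, c2)
    area1, area2 = math.pi * r1 ** 2, math.pi * r2 ** 2
    if d >= r1 + r2:
        inter = 0.0  # circles do not overlap
    elif d <= abs(r1 - r2):
        inter = min(area1, area2)  # one circle lies inside the other
    else:
        # Lens area: sum of the two circular segments cut by the chord.
        alpha = math.acos((d * d + r1 * r1 - r2 * r2) / (2 * d * r1))
        beta = math.acos((d * d + r2 * r2 - r1 * r1) / (2 * d * r2))
        inter = (r1 * r1 * (alpha - math.sin(2 * alpha) / 2)
                 + r2 * r2 * (beta - math.sin(2 * beta) / 2))
    union = area1 + area2 - inter
    return inter / union if union > 0 else 0.0
```

If the same two points are listed in a different order, `correspondence_loss` reports a non-zero error while `nearest_neighbor_loss` reports zero. This is why a nearest-neighbor term is more forgiving for symmetric objects, whereas a correspondence term penalizes any pose that does not match point for point.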

4.3. Training

4.4. Evaluation

4.4.1. Evaluation for 2D Detection

4.4.2. Evaluation with Rotation

4.4.3. Limitations

5. Conclusions and Future Work

Author Contributions

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

| | PointNet | Autoencoder | Point Embedding |
| --- | --- | --- | --- |
| Training | 0.19 | 0.205 | 0.175 |
| Validation | 0.2 | 0.22 | 0.182 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).