3. Dataset and System Model

This study uses an experimental dataset dedicated to UAV audio signal detection. A review of the relevant literature shows that the dataset, as proposed by its authors, is suitable for this study. The dataset contains more than 1300 segments of UAV audio data intended to simulate complex real-life scenarios. To improve its versatility and adaptability, the authors also added noise segments from publicly available noise datasets, which makes it possible to examine the robustness and detection ability of a model in noisy environments.

The dataset covers the audio signals of two different UAV models, namely Bebop and Mambo. The audio recording of each UAV has a duration of 11 minutes and 6 seconds and is mono, with a sampling rate of 16 kHz and a maximum audio bit rate of 64 kbps. All audio files are stored in the MPEG-4 audio format, which allows efficient storage and transmission of the data.

To meet the feature-learning requirements of deep learning models, the audio files are divided into shorter segments; experiments showed that a segment length of 1 second works best. After this processing, the audio data retains its temporal characteristics while allowing efficient training with limited computational resources.
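The segmentation step can be sketched as follows. This is a minimal illustration, not the authors' code: the function name `split_into_segments`, the use of non-overlapping segments, and the dropping of any trailing remainder are assumptions. At 16 kHz, a recording of 11 minutes and 6 seconds (666 s) yields 666 one-second segments, so two recordings give 1332 segments, consistent with the "more than 1300" figure above.

```python
import numpy as np

SAMPLE_RATE = 16_000      # Hz, as stated for the recordings
SEGMENT_SECONDS = 1       # segment length selected in the study

def split_into_segments(waveform, sample_rate=SAMPLE_RATE, seconds=SEGMENT_SECONDS):
    """Split a mono waveform into non-overlapping fixed-length segments.

    Trailing samples that do not fill a whole segment are dropped
    (an assumption; the source does not say how remainders are handled).
    """
    segment_len = sample_rate * seconds
    n_segments = len(waveform) // segment_len
    trimmed = np.asarray(waveform)[: n_segments * segment_len]
    return trimmed.reshape(n_segments, segment_len)

# Synthetic stand-in for one 11 min 6 s recording (666 s of audio).
recording = np.zeros(666 * SAMPLE_RATE, dtype=np.float32)
segments = split_into_segments(recording)
print(segments.shape)  # (666, 16000)
```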

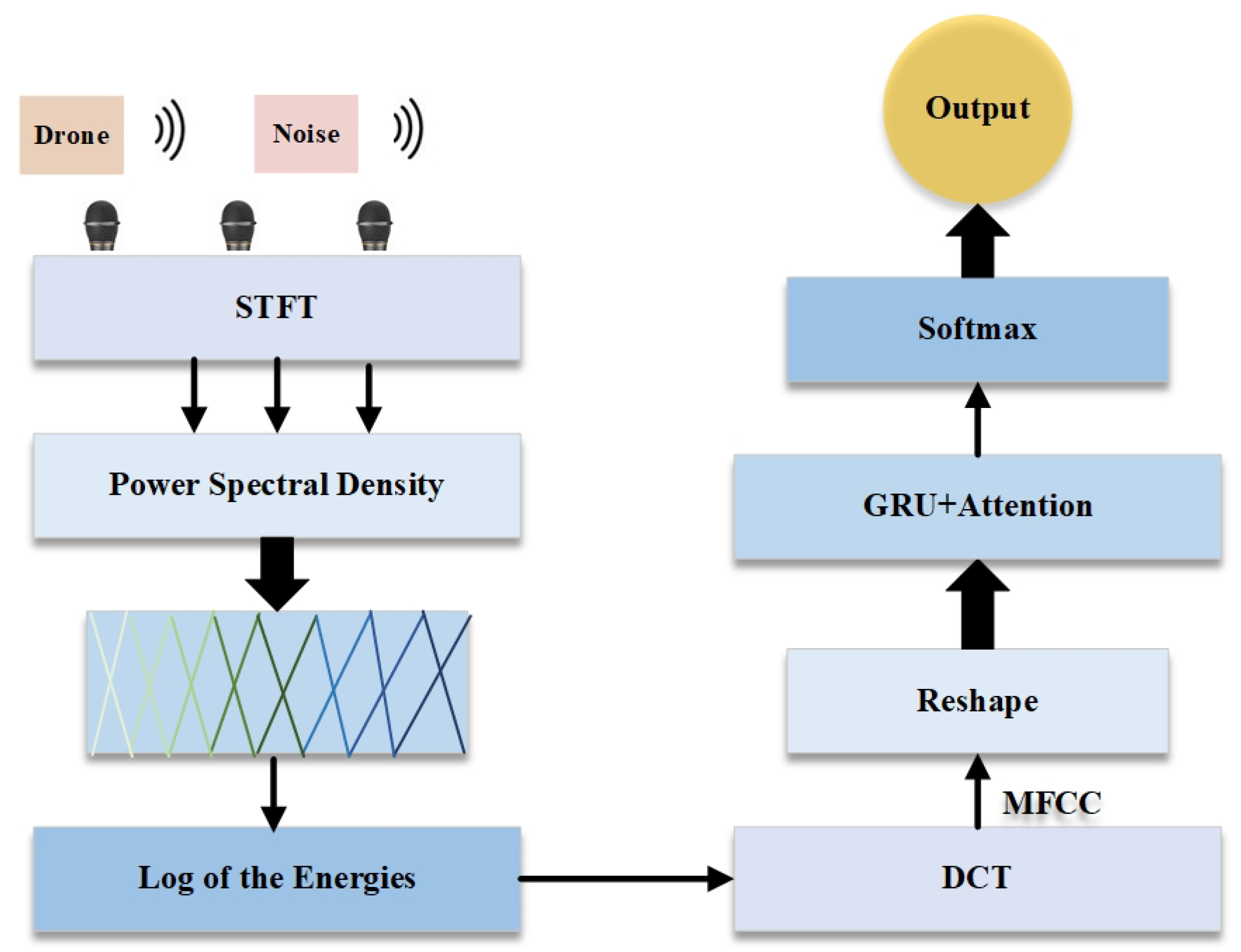

This study can be roughly divided into three steps: audio feature extraction, feature processing and modeling, and classification prediction. First, spectrogram features are extracted from the audio data in the dataset, which converts the one-dimensional waveform into a two-dimensional spectrogram feature space. Next, the extracted spectrogram features are reshaped, and global features of the time series are extracted by a two-layer gated recurrent unit (GRU) network. The resulting high-dimensional features are reduced in dimension by a fully connected layer and then regularized by a Dropout layer to reduce the risk of overfitting. Finally, a Softmax output layer generates the UAV audio detection and classification results. The overall workflow is shown in Figure 1.

Features extracted from audio signals mainly include time-domain features and frequency-domain features. Time-domain features are the simplest and most direct: they are extracted from the sampled signal with time as the independent variable. However, time-domain analysis generally reveals only how the power of the acoustic signal changes over time; it cannot identify the frequency components the signal contains, so frequency-domain features are also needed. The time-domain acoustic signal can be converted to the frequency domain by the Fourier transform to obtain the corresponding phase spectrum and amplitude spectrum. The amplitude spectrum can then be used to analyze the frequency components of the signal and the energy distribution of those components over time, from which the frequency-domain features of the sound signal are extracted. Frequency-domain features include Mel-frequency cepstral coefficients (MFCC), log-magnitude spectra, log Mel filter bank energies, perceptual linear prediction (PLP) features, Gammatone frequency cepstral coefficients (GFCC), and power-normalized cepstral coefficients (PNCC).

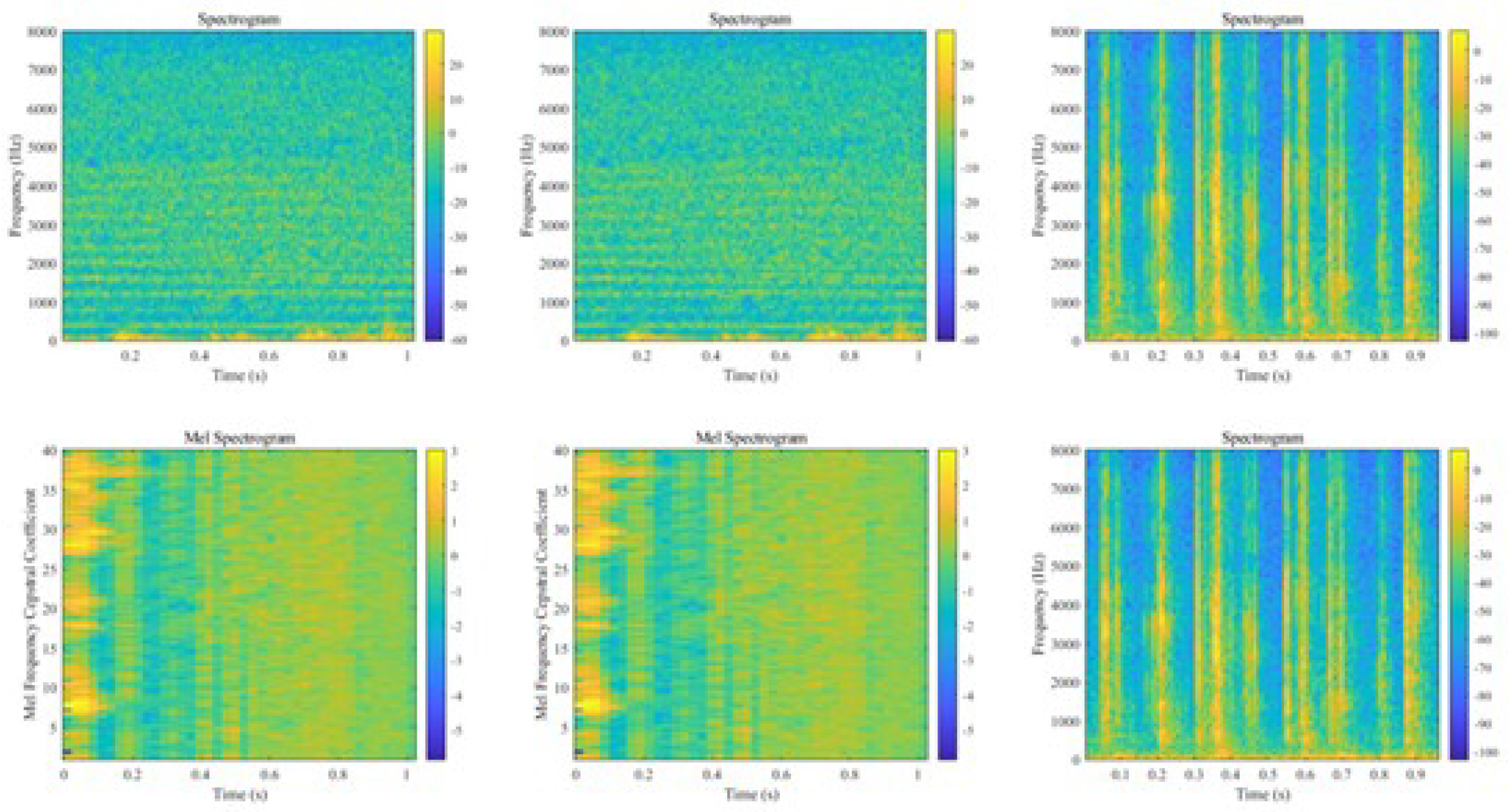

Linear predictive cepstral coefficients (LPCC) and Mel-frequency cepstral coefficients (MFCC) are two common feature extraction techniques for sound signals. MFCC is a speech analysis method based on the human hearing mechanism: it analyzes the spectrum by simulating how the human ear perceives sound. The computation of MFCC consists of the following steps. First, the time-domain signal is segmented into frames and converted to the frequency domain by the Short-Time Fourier Transform (STFT). Next, a Mel filter bank, a set of triangular filters, extracts the frequency components on the Mel frequency scale. The logarithm of the filtered energy is then taken to emphasize small signal variations. Finally, the Discrete Cosine Transform (DCT) converts the logarithmic energy spectrum into cepstral coefficients, which reduces redundant information and retains the main energy. LPCC is a cepstral feature extraction method based on linear predictive analysis, which analyzes the spectral structure of audio signals by predicting the current signal value from past signal values. However, LPCC tends to retain redundant information that is unrelated to the signal, which degrades classification performance, so MFCC is chosen as the main feature extraction method in this study. The spectrograms of some drone and noise samples, together with their Mel-frequency cepstra, are shown in Figure 2.

Assume that the input UAV audio signal is $x(n)$, the frame length is $L$, and the frame shift is $S$. Framing divides the time-domain signal into multiple short frames, and the signal in the $k$-th frame is:

$$x_k(n) = x(n + kS), \quad 0 \le n \le L - 1$$

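To minimize spectral leakage, the signal is windowed. For each frame $x_k(n)$, the window function $w(n)$ is applied:

$$x_k^{w}(n) = x_k(n)\, w(n)$$

The Hamming window used in this study is:

$$w(n) = 0.54 - 0.46 \cos\left(\frac{2\pi n}{L - 1}\right), \quad 0 \le n \le L - 1$$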
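Framing and Hamming windowing can be sketched as follows. This is an illustrative sketch only: the helper names `frame_signal` and `hamming` and the toy frame length and frame shift are assumptions, and the input is assumed to be at least one frame long. The Hamming coefficients 0.54 and 0.46 are the standard ones.

```python
import numpy as np

def frame_signal(x, frame_len, frame_shift):
    """Return frames of shape (num_frames, frame_len), frame k = x[k*shift : k*shift + frame_len]."""
    x = np.asarray(x, dtype=float)
    num_frames = 1 + (len(x) - frame_len) // frame_shift
    # Row k holds the sample indices n + k * frame_shift for n = 0..frame_len-1.
    idx = np.arange(frame_len)[None, :] + frame_shift * np.arange(num_frames)[:, None]
    return x[idx]

def hamming(frame_len):
    """Hamming window w(n) = 0.54 - 0.46 * cos(2*pi*n / (frame_len - 1))."""
    n = np.arange(frame_len)
    return 0.54 - 0.46 * np.cos(2 * np.pi * n / (frame_len - 1))

# Toy signal so the frame contents are easy to inspect.
x = np.arange(10)
frames = frame_signal(x, frame_len=4, frame_shift=2)
windowed = frames * hamming(4)   # window applied to every frame
```

With a frame shift smaller than the frame length, as here, consecutive frames overlap, which is the usual choice for short-time analysis.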

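The windowed time-domain signal $x_k^{w}(n)$ is projected into the time-frequency domain by the Short-Time Fourier Transform (STFT) to obtain the frequency-domain representation of the signal:

$$X_k(\omega) = \sum_{n=0}^{L-1} x_k^{w}(n)\, e^{-j\omega n}$$

where $X_k(\omega)$ is the frequency-domain representation of the $k$-th frame, $\omega$ denotes the frequency variable, and $x_k^{w}(n)$ denotes the $k$-th frame after windowing. The output of the STFT contains the magnitude and phase spectra.

The magnitude spectrum provides the energy distribution of the signal:

$$|X_k(\omega)| = \sqrt{\operatorname{Re}\big(X_k(\omega)\big)^2 + \operatorname{Im}\big(X_k(\omega)\big)^2}$$

The phase spectrum provides the phase information of the signal:

$$\varphi_k(\omega) = \arctan\left(\frac{\operatorname{Im}\big(X_k(\omega)\big)}{\operatorname{Re}\big(X_k(\omega)\big)}\right)$$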
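As a hedged illustration of the magnitude and phase spectra, the sketch below applies a one-sided discrete Fourier transform to a single windowed frame. The helper `stft_frames`, the 512-sample frame, and the 1 kHz test tone are assumptions chosen for the example, not settings taken from the study.

```python
import numpy as np

def stft_frames(windowed_frames, n_fft=None):
    """One-sided DFT of each windowed frame; returns (magnitude, phase)."""
    spectrum = np.fft.rfft(windowed_frames, n=n_fft, axis=-1)
    magnitude = np.abs(spectrum)   # sqrt(Re^2 + Im^2)
    phase = np.angle(spectrum)     # four-quadrant arctangent of Im / Re
    return magnitude, phase

# Example: a 1 kHz tone sampled at 16 kHz, one 512-sample Hamming-windowed frame.
sr, frame_len = 16_000, 512
n = np.arange(frame_len)
frame = np.sin(2 * np.pi * 1000 * n / sr) * np.hamming(frame_len)
mag, phase = stft_frames(frame[None, :])
peak_bin = int(np.argmax(mag[0]))
peak_hz = peak_bin * sr / frame_len   # frequency of the strongest bin
```

The strongest bin lands at 1000 Hz because the tone falls exactly on a bin center (bin spacing is 16000 / 512 = 31.25 Hz).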

The Mel filter bank is used to simulate the auditory properties of the human ear: it emphasizes the frequency range to which the ear is most sensitive (e.g., 300 to 4000 Hz) and converts the frequency axis to the Mel scale. A set of Mel filters is applied to the spectrum to extract the energy information on the Mel frequency scale. The relationship between the frequency $f$ (in Hz) and the Mel frequency $f_{\text{mel}}$ is:

$$f_{\text{mel}} = 2595 \log_{10}\left(1 + \frac{f}{700}\right)$$

Given the magnitude spectrum $|X_k(\omega)|$ produced by the STFT, the output of the Mel filter bank is a weighted sum over a set of triangular filters:

$$E_m = \sum_{\omega} |X_k(\omega)|^2\, H_m(\omega), \quad m = 1, 2, \ldots, M$$

where $E_m$ denotes the output energy of the $m$-th Mel filter, $H_m(\omega)$ denotes the weight of the $m$-th Mel filter, and $M$ is the number of Mel filters. Each filter $H_m(\omega)$ is triangular in shape and peaks at the center frequency corresponding to its Mel frequency.
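The Mel mapping and a triangular filter bank can be sketched as below. The constants 2595 and 700 are the standard Hz-to-Mel mapping; everything else is an assumption made for illustration: the helper names, the choice of 26 filters, the 512-point FFT, and the decision to weight a power spectrum rather than a magnitude spectrum.

```python
import numpy as np

def hz_to_mel(f):
    return 2595.0 * np.log10(1.0 + np.asarray(f, dtype=float) / 700.0)

def mel_to_hz(m):
    return 700.0 * (10.0 ** (np.asarray(m, dtype=float) / 2595.0) - 1.0)

def mel_filter_bank(num_filters, n_fft, sample_rate):
    """Triangular filters, shape (num_filters, n_fft // 2 + 1)."""
    num_bins = n_fft // 2 + 1
    bin_freqs = np.linspace(0.0, sample_rate / 2, num_bins)
    # num_filters + 2 edge points, equally spaced on the Mel scale.
    edges = mel_to_hz(np.linspace(0.0, hz_to_mel(sample_rate / 2), num_filters + 2))
    bank = np.zeros((num_filters, num_bins))
    for m in range(num_filters):
        left, center, right = edges[m], edges[m + 1], edges[m + 2]
        rising = (bin_freqs - left) / (center - left)
        falling = (right - bin_freqs) / (right - center)
        # Triangle: rises from left to center, falls to right, zero elsewhere.
        bank[m] = np.clip(np.minimum(rising, falling), 0.0, None)
    return bank

bank = mel_filter_bank(num_filters=26, n_fft=512, sample_rate=16_000)
power = np.ones(257)          # flat power spectrum as a stand-in for one frame
mel_energies = bank @ power   # one energy value per Mel filter
```

Because the edges are equally spaced in Mel rather than in Hz, the filters are narrow at low frequencies and wide at high frequencies.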

Taking the logarithm of the output $E_m$ of each Mel filter emphasizes the variation of low-energy signals:

$$\tilde{E}_m = \log(E_m + \epsilon)$$

where $\epsilon$ denotes a very small positive number that prevents the logarithm from diverging when $E_m = 0$.

The Discrete Cosine Transform (DCT) converts the logarithmic energies $\tilde{E}_m = \log(E_m + \epsilon)$ into cepstral coefficients and removes redundant information from the features, yielding the Mel-frequency cepstral coefficients (MFCC):

$$c_n = \sum_{m=1}^{M} \tilde{E}_m \cos\left(\frac{\pi n (m - 0.5)}{M}\right), \quad n = 1, 2, \ldots, N$$

where $c_n$ denotes the $n$-th Mel-frequency cepstral coefficient, $M$ denotes the number of Mel filters, and $N$ denotes the number of retained Mel-frequency cepstral coefficients.
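The logarithm and DCT steps can be illustrated with a short sketch. It assumes an unnormalized DCT-II with coefficient indices starting at 1; the function name `mfcc_from_mel_energies`, the value of `eps`, and the sizes (26 filters, 13 coefficients) are hypothetical choices for the example.

```python
import numpy as np

def mfcc_from_mel_energies(mel_energies, num_coeffs, eps=1e-10):
    """Log-compress Mel energies, then apply an unnormalized DCT-II (n = 1..num_coeffs)."""
    mel_energies = np.asarray(mel_energies, dtype=float)
    num_filters = mel_energies.shape[-1]
    log_e = np.log(mel_energies + eps)              # eps keeps log finite at zero energy
    m = np.arange(1, num_filters + 1)               # filter index 1..M
    n = np.arange(1, num_coeffs + 1)                # coefficient index 1..N
    basis = np.cos(np.pi * np.outer(n, m - 0.5) / num_filters)   # shape (N, M)
    return log_e @ basis.T

# Constant energies carry no spectral shape, so every coefficient is close to zero.
flat = np.full(26, 2.0)
coeffs = mfcc_from_mel_energies(flat, num_coeffs=13)
```

The function also accepts a `(frames, filters)` array and then returns one coefficient vector per frame.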

The features obtained from the STFT form a two-dimensional array containing time and frequency information. Because the GRU network expects three-dimensional time-series input (batch size, number of time steps, and feature size per time step), the two-dimensional spectrogram features are reshaped to fit the input requirements of the GRU network:

$$X_{\text{input}} \in \mathbb{R}^{B \times T \times F}$$

where $B$ is the batch size, $T$ is the number of time steps, and $F$ is the feature size of each time step.
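The reshaping step amounts to arranging the per-clip features in (batch, time step, feature) order. The sizes below (8 clips, 124 time frames, 129 frequency bins) are hypothetical and serve only to make the shapes concrete.

```python
import numpy as np

# Hypothetical sizes: 8 clips, each with 124 time frames and 129 frequency bins.
batch = [np.random.rand(124, 129).astype(np.float32) for _ in range(8)]

# Stack (time, feature) arrays into (batch, time, feature).
x_input = np.stack(batch, axis=0)
print(x_input.shape)  # (8, 124, 129)

# A spectrogram stored as (frequency, time) must be transposed first.
freq_major = np.random.rand(129, 124)
single = freq_major.T[np.newaxis, ...]   # (1, 124, 129)
```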

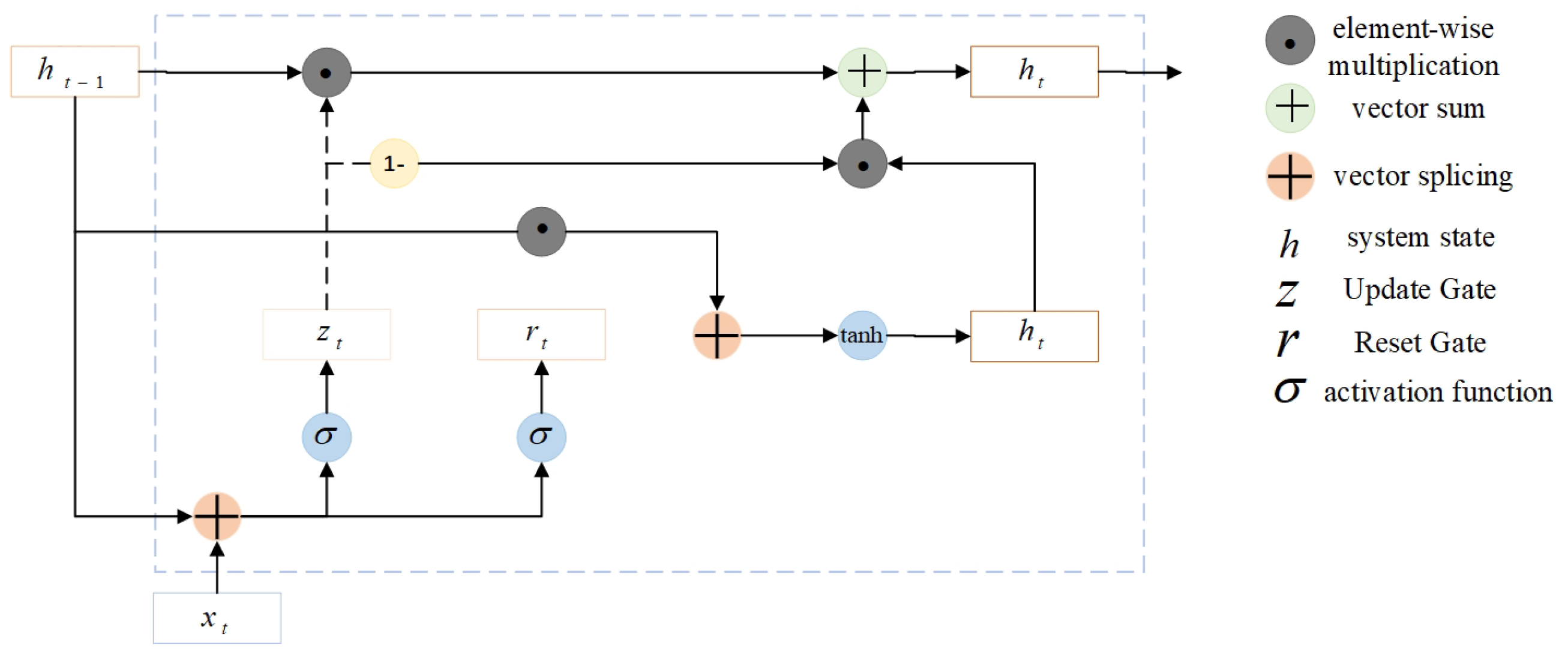

In the global feature extraction part, a two-layer GRU network extracts the temporal information of the audio features and captures long-term dependencies in the audio signal. The first GRU layer returns the features at every time step, extracting coarse-grained sequence features that are passed to the second GRU layer. The second layer does not return the full sequence; it outputs only the final high-level features for subsequent processing. Internally, a GRU contains two gates: the update gate and the reset gate. The GRU model is shown in Figure 3.

The update gate $z_t$ determines how much of the hidden state is updated at the current time step:

$$z_t = \sigma(W_z x_t + U_z h_{t-1} + b_z)$$

The reset gate $r_t$ controls the effect of the previous hidden state on the current computation:

$$r_t = \sigma(W_r x_t + U_r h_{t-1} + b_r)$$

The candidate hidden state $\tilde{h}_t$ is the candidate value for the current hidden state:

$$\tilde{h}_t = \tanh\big(W_h x_t + U_h (r_t \odot h_{t-1}) + b_h\big)$$

The hidden state $h_t$ is a weighted combination, controlled by the update gate, of the previous hidden state and the new candidate hidden state:

$$h_t = (1 - z_t) \odot h_{t-1} + z_t \odot \tilde{h}_t$$

where $x_t$ denotes the input of the current time step, $h_t$ denotes the hidden state of the current time step, $h_{t-1}$ denotes the hidden state of the previous time step, and $z_t$ and $r_t$ represent the update gate and reset gate, respectively. $W$, $U$, and $b$ represent the input-to-hidden weight matrix, the hidden-to-hidden weight matrix, and the bias, respectively; $\sigma$ denotes the sigmoid activation function, $\tanh$ denotes the hyperbolic tangent activation function, and $\odot$ denotes element-wise multiplication.
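A single GRU time step can be written out directly from the gate definitions. The sketch below is illustrative only: it uses the convention in which the update gate weights the candidate state (some libraries swap the roles of the gate and its complement), and the parameter dictionary, random weights, and sizes are all hypothetical.

```python
import numpy as np

def sigmoid(a):
    return 1.0 / (1.0 + np.exp(-a))

def gru_step(x_t, h_prev, p):
    """One GRU time step; p holds W_*, U_*, b_* for gates z, r and candidate h."""
    z = sigmoid(p["W_z"] @ x_t + p["U_z"] @ h_prev + p["b_z"])            # update gate
    r = sigmoid(p["W_r"] @ x_t + p["U_r"] @ h_prev + p["b_r"])            # reset gate
    h_cand = np.tanh(p["W_h"] @ x_t + p["U_h"] @ (r * h_prev) + p["b_h"])  # candidate
    return (1.0 - z) * h_prev + z * h_cand

def gru_forward(x_seq, p, hidden_size):
    """Run the cell over a (T, F) sequence and return all hidden states, shape (T, H)."""
    h = np.zeros(hidden_size)
    states = []
    for x_t in x_seq:
        h = gru_step(x_t, h, p)
        states.append(h)
    return np.stack(states)

rng = np.random.default_rng(0)
F, H, T = 5, 3, 4   # hypothetical feature size, hidden size, sequence length
params = {}
for g in "zrh":
    params[f"W_{g}"] = rng.normal(size=(H, F))
    params[f"U_{g}"] = rng.normal(size=(H, H))
    params[f"b_{g}"] = np.zeros(H)
states = gru_forward(rng.normal(size=(T, F)), params, H)
```

Returning every hidden state corresponds to a layer that keeps the full sequence; taking only `states[-1]` corresponds to a layer that outputs the final state.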

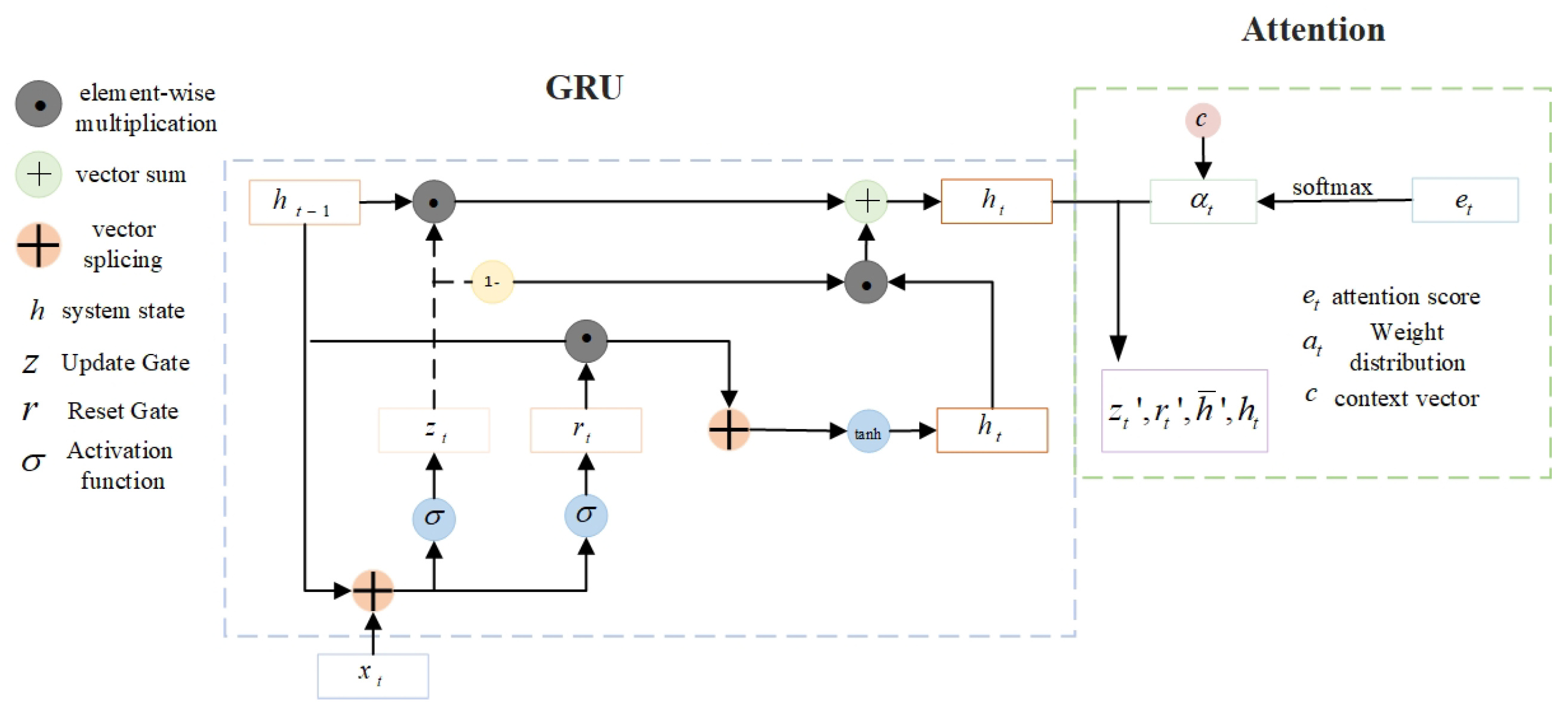

For the hidden state sequence produced by the GRU, the attention mechanism first calculates an attention score for each time step, which indicates how much that time step of the input sequence contributes to the output:

$$e_t = \tanh(W_a h_t + b_a)$$

where $W_a$ and $b_a$ are the learnable weight matrix and bias.

The attention scores are converted to a weight distribution by the softmax activation function:

$$\alpha_t = \frac{\exp(e_t)}{\sum_{k=1}^{T} \exp(e_k)}$$

where $\alpha_t$ denotes the attention weight of time step $t$, which satisfies $\sum_{t=1}^{T} \alpha_t = 1$, with $T$ representing the total number of time steps.

The context vector $c$ is the weighted sum of the input hidden states and represents the global information attended to by the model:

$$c = \sum_{t=1}^{T} \alpha_t h_t$$

where $\alpha_t$ denotes the attention weight and $h_t$ denotes the input hidden state. The context vector is then incorporated into the candidate hidden state $\tilde{h}_t$ of the GRU hidden state update for further feature extraction and classification by the subsequent network. In that update, $x_t$ denotes the input of the current time step, $h_{t-1}$ denotes the hidden state of the previous time step, $W$ denotes the weight matrix from the input to the corresponding gate, $U$ denotes the weight matrix from the previous hidden state to the corresponding gate, and $b$ denotes the bias term of the corresponding gate. The overall architecture is shown in Figure 4.
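The attention scoring, softmax weighting, and context vector can be sketched as a small pooling function over GRU hidden states. This is a sketch under stated assumptions: the score is reduced to one scalar per time step by a learnable vector `w_a` (the source describes a weight matrix), and it does not show how the context vector is fed back into the GRU update. All names and sizes are hypothetical.

```python
import numpy as np

def attention_pool(hidden_states, w_a, b_a):
    """hidden_states: (T, H); w_a: (H,); b_a: scalar. Returns (context, weights)."""
    scores = np.tanh(hidden_states @ w_a + b_a)   # one scalar score per time step
    exp = np.exp(scores - scores.max())           # shift for numerical stability
    weights = exp / exp.sum()                     # softmax over time, sums to 1
    context = weights @ hidden_states             # weighted sum of hidden states
    return context, weights

rng = np.random.default_rng(1)
h_seq = rng.normal(size=(6, 4))                   # hypothetical T = 6 steps, H = 4 units
context, alpha = attention_pool(h_seq, rng.normal(size=4), 0.0)

# With zero scoring weights every step gets the same weight, so the
# context vector reduces to the plain mean of the hidden states.
mean_context, uniform = attention_pool(h_seq, np.zeros(4), 0.0)
```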

The high-dimensional features are reduced in dimension and mapped to a low-dimensional space by a Dense (fully connected) layer, expressed as:

$$y = f(W h + b)$$

where $W$ denotes the weight matrix, $h$ denotes the hidden state, $b$ denotes the bias, and $f$ denotes the activation function used in this study (typically, $f$ could be a function such as sigmoid or ReLU).

The Dropout layer randomly discards some neurons during training to avoid overfitting the model to the training data:

$$\tilde{h} = \frac{m \odot h}{p}$$

where $p$ is the retention probability, $m$ is the randomly generated binary mask used to discard neurons, $h$ is the original feature vector, and $\tilde{h}$ is the feature vector after dropout.
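The dense projection and dropout can be sketched together. The 64-unit ReLU layer follows Algorithm 1; the input size of 128, the weight scale, and the retention probability of 0.5 are illustrative assumptions. The dropout shown is the common "inverted" form, which rescales the kept units by the inverse of the retention probability and is disabled at inference time.

```python
import numpy as np

def dense_relu(h, W, b):
    """Fully connected layer with ReLU activation."""
    return np.maximum(0.0, W @ h + b)

def dropout(h, keep_prob, rng, training=True):
    """Inverted dropout: zero units with probability 1 - keep_prob, rescale the rest."""
    if not training:
        return h
    mask = rng.random(h.shape) < keep_prob   # binary mask, True = keep the unit
    return (mask * h) / keep_prob

rng = np.random.default_rng(0)
h = rng.normal(size=128)                          # hypothetical GRU output size
W, b = rng.normal(size=(64, 128)) * 0.1, np.zeros(64)
y = dense_relu(h, W, b)                           # 64 units, as in Algorithm 1
y_train = dropout(y, keep_prob=0.5, rng=rng)      # keep_prob = 0.5 is illustrative
y_eval = dropout(y, keep_prob=0.5, rng=rng, training=False)
```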

After the features are processed by the GRU and fully connected layers, the Softmax layer generates the output probability distribution, i.e., the probability that the audio data belongs to each category. The final classification result is the category with the highest output probability:

$$P(y = k \mid x) = \frac{e^{z_k}}{\sum_{j=1}^{K} e^{z_j}}$$

where $z_k$ denotes the output score of the $k$-th category, $K$ denotes the total number of categories, and $P(y = k \mid x)$ denotes the probability that the input belongs to the $k$-th category.
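A minimal softmax sketch follows, with hypothetical scores for three classes. Subtracting the maximum score before exponentiating is a standard numerical safeguard and does not change the result.

```python
import numpy as np

def softmax(z):
    z = np.asarray(z, dtype=float)
    shifted = z - z.max(axis=-1, keepdims=True)   # avoids overflow; result unchanged
    exp = np.exp(shifted)
    return exp / exp.sum(axis=-1, keepdims=True)

scores = np.array([2.0, 0.5, -1.0])       # hypothetical scores for K = 3 classes
probs = softmax(scores)
predicted_class = int(np.argmax(probs))   # index of the most probable class
```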

Accuracy is the proportion of samples that the model predicts correctly out of all samples:

$$\text{Accuracy} = \frac{TP + TN}{TP + TN + FP + FN}$$

where $TP$ (true positive) denotes that the true label is positive and the prediction is also positive; $FP$ (false positive) denotes that the true label is negative but the prediction is positive; $TN$ (true negative) denotes that the true label is negative and the prediction is also negative; and $FN$ (false negative) denotes that the true label is positive but the prediction is negative.

The sparse categorical cross-entropy loss function is suitable for multi-class classification tasks in which the labels are integer coded:

$$\mathcal{L} = -\frac{1}{N} \sum_{i=1}^{N} \log\big(p_{i, y_i}\big)$$

where $N$ denotes the number of samples, $y_i$ denotes the true label of the $i$-th sample, and $p_{i, y_i}$ denotes the probability that the model assigns to the true category of the $i$-th sample.
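The loss can be computed by picking, for each sample, the predicted probability of its integer label. The function name, the small `eps` guard, and the toy probabilities below are assumptions made for the sketch.

```python
import numpy as np

def sparse_categorical_crossentropy(probs, labels, eps=1e-12):
    """Mean negative log-probability of the true class; labels are integer class ids."""
    probs = np.asarray(probs, dtype=float)
    labels = np.asarray(labels, dtype=int)
    true_class_probs = probs[np.arange(len(labels)), labels]
    return float(-np.mean(np.log(true_class_probs + eps)))   # eps guards against log(0)

# Toy predictions for three samples and two classes.
probs = np.array([[0.9, 0.1], [0.2, 0.8], [0.5, 0.5]])
labels = np.array([0, 1, 1])
loss = sparse_categorical_crossentropy(probs, labels)
```

Integer labels avoid building one-hot vectors, which is why this form is convenient when classes are stored as indices.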

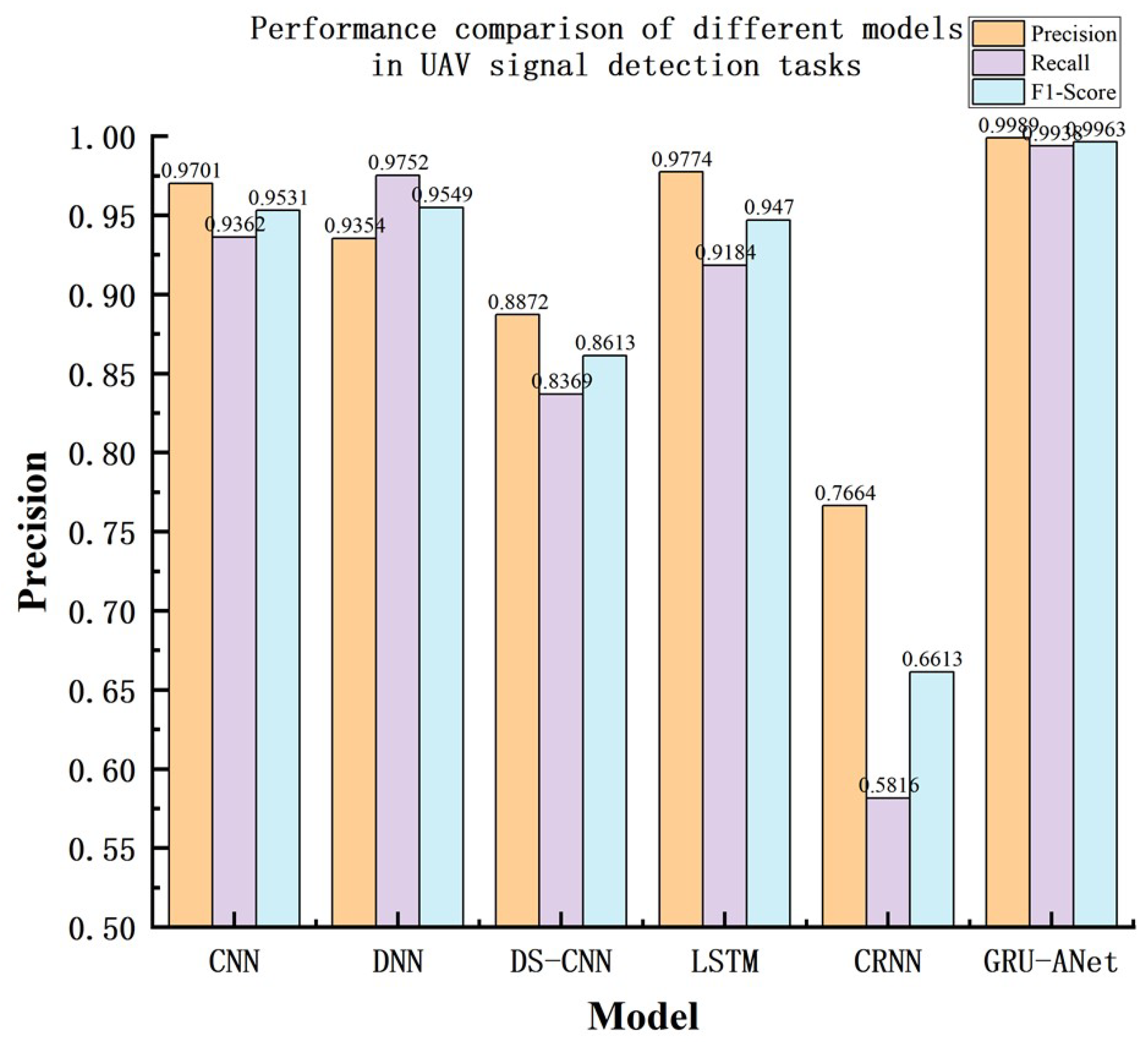

Precision measures how many of the samples predicted as positive are true positives ($TP$) rather than false positives ($FP$):

$$\text{Precision} = \frac{TP}{TP + FP}$$

Recall measures how many of all actual positive samples are correctly predicted as positive, i.e., are not false negatives ($FN$):

$$\text{Recall} = \frac{TP}{TP + FN}$$

The F1-score is the harmonic mean of precision and recall, combining both measures into a single value:

$$F_1 = \frac{2 \times \text{Precision} \times \text{Recall}}{\text{Precision} + \text{Recall}}$$

The F1-score is high only when both precision and recall are high; if either one is very low, the F1-score drops markedly. In summary, the proposed method for UAV audio signal detection based on GRU with an attention mechanism, GRU-ANET, is summarized in Algorithm 1.
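The accuracy, precision, recall, and F1 definitions can be checked on a small example. The function name `binary_metrics`, the convention of returning 0.0 when a denominator is zero, and the toy labels are assumptions of this sketch.

```python
def binary_metrics(y_true, y_pred, positive=1):
    """Accuracy, precision, recall, and F1 from confusion-matrix counts."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    tn = sum(t != positive and p != positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    accuracy = (tp + tn) / len(pairs)
    # Return 0.0 when a denominator is zero (a convention chosen for this sketch).
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}

# Toy labels: 1 = drone, 0 = non-drone. Counts: TP = 3, TN = 3, FP = 1, FN = 1.
y_true = [1, 1, 1, 0, 0, 0, 1, 0]
y_pred = [1, 1, 0, 0, 1, 0, 1, 0]
metrics = binary_metrics(y_true, y_pred)
```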

|

Algorithm 1: GRU-Based Drone Audio Detection Framework |

- 1:

Input: - 2:

Audio dataset D: Labeled audio files for drone and non-drone sounds. - 3:

Preprocessing parameters: Frame length l, Frame step s. - 4:

Model architecture parameters: GRU layers ,Dropout rate r ,Epochs e , Batch size b . - 5:

Output: Trained GRU model . Predictions for test audio samples. - 6:

Initialize: - 7:

Split D into training () and validation () datasets using an 80:20 ratio. - 8:

Define preprocessing pipeline for converting audio waveforms to spectrograms. - 9:

Preprocess Data: - 10:

For each audio file : Apply Short-Time Fourier Transform (STFT) to generate spectrogram S and normalize S to ensure consistent input features. - 11:

Model Construction: Initialize sequential GRU-based neural network: - 12:

Input layer: Accepts spectrogram S of shape where t is time frames and f is frequency bins. - 13:

GRU layers: Stack two GRU layers with hidden sizes and . Fully connected layers: - 14:

Dense layer with 64 units and ReLU activation. - 15:

Dropout layer with rate . - 16:

Output layer: Softmax activation for class probabilities. - 17:

Train the Model: - 18:

Compile : - 19:

Loss: Sparse Categorical Crossentropy. - 20:

Optimizer: Adam. - 21:

Metric: Accuracy. - 22:

Train on for epochs, with batch size . - 23:

Validate the model on . Apply early stopping with patience . - 24:

Evaluate: - 25:

Compute evaluation metrics: Accuracy, Precision, Recall, F1-score. - 26:

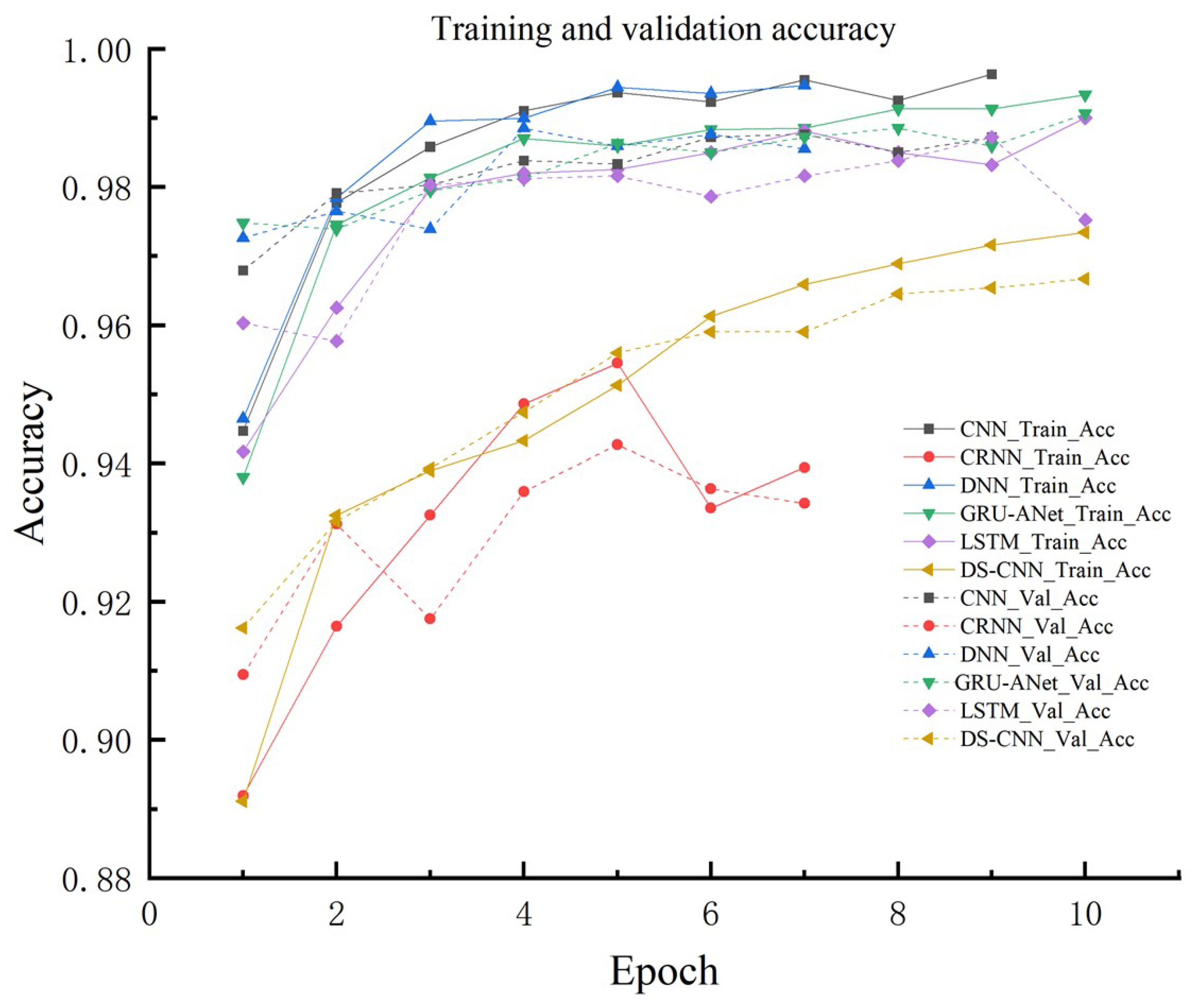

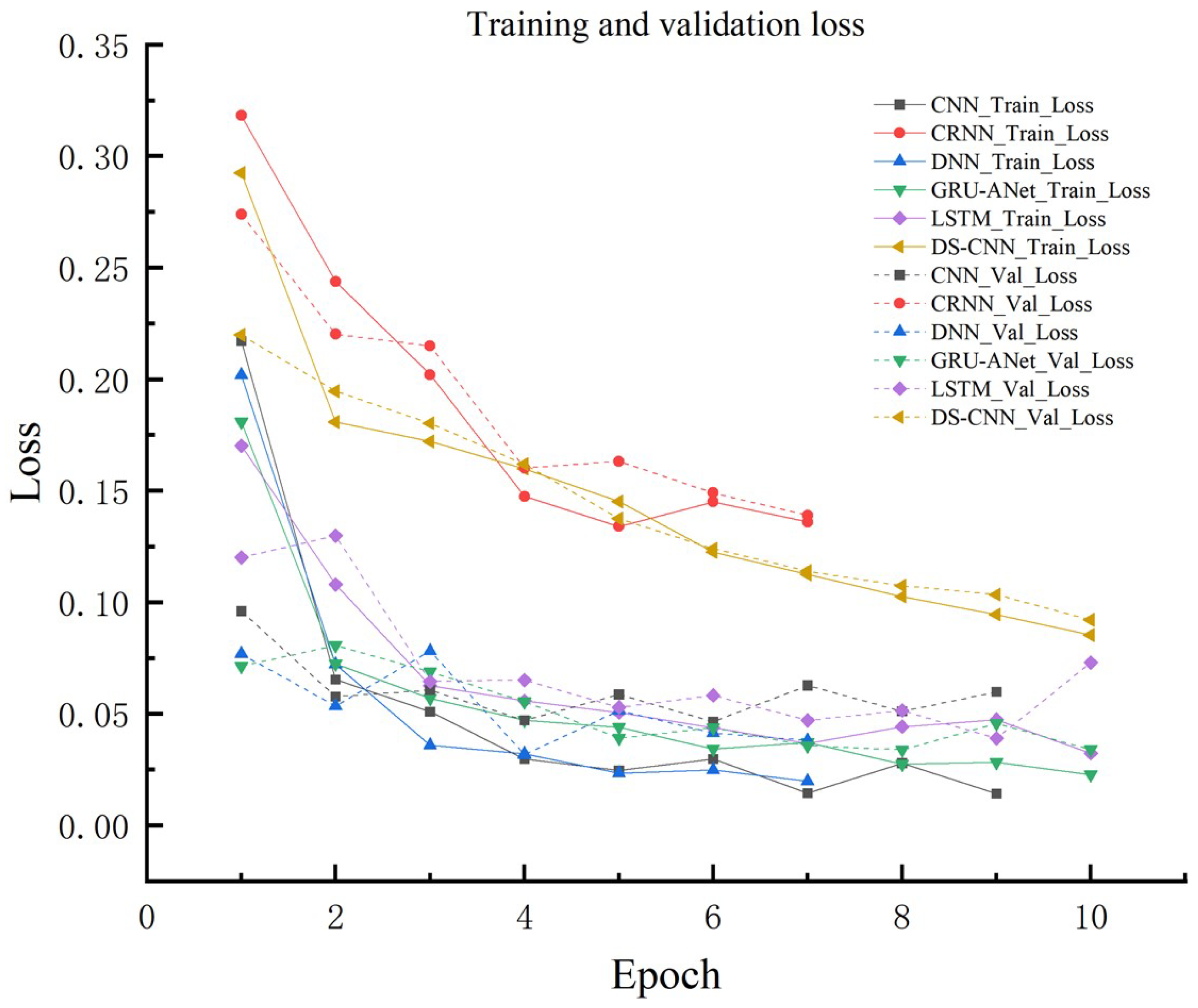

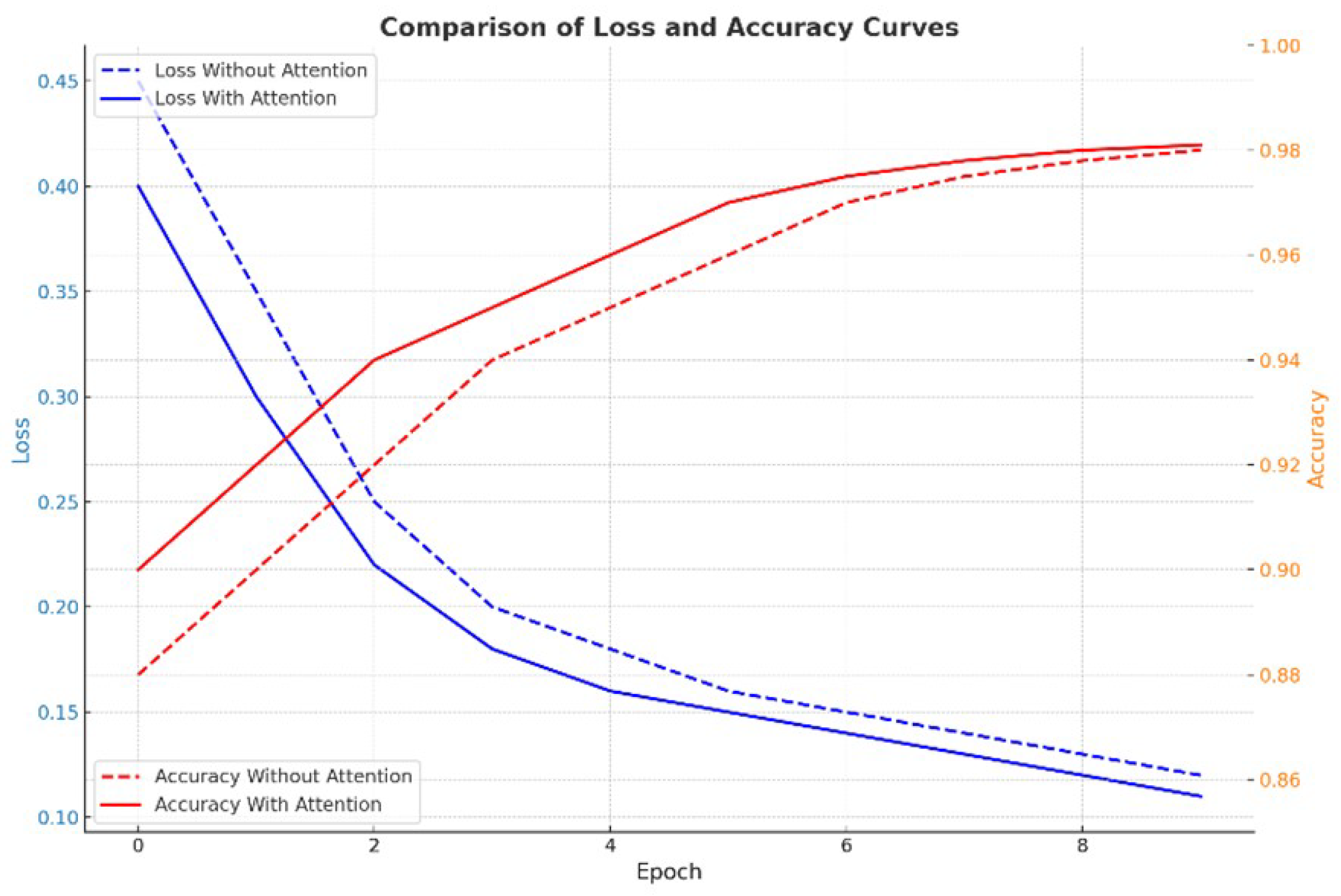

Plot training and validation loss and accuracy curves over epochs. - 27:

Train the Model: Predict: - 28:

For a test audio file : - 29:

Preprocess into a spectrogram . - 30:

Predict class probabilities . - 31:

Output: - 32:

Save the trained model for future use. - 33:

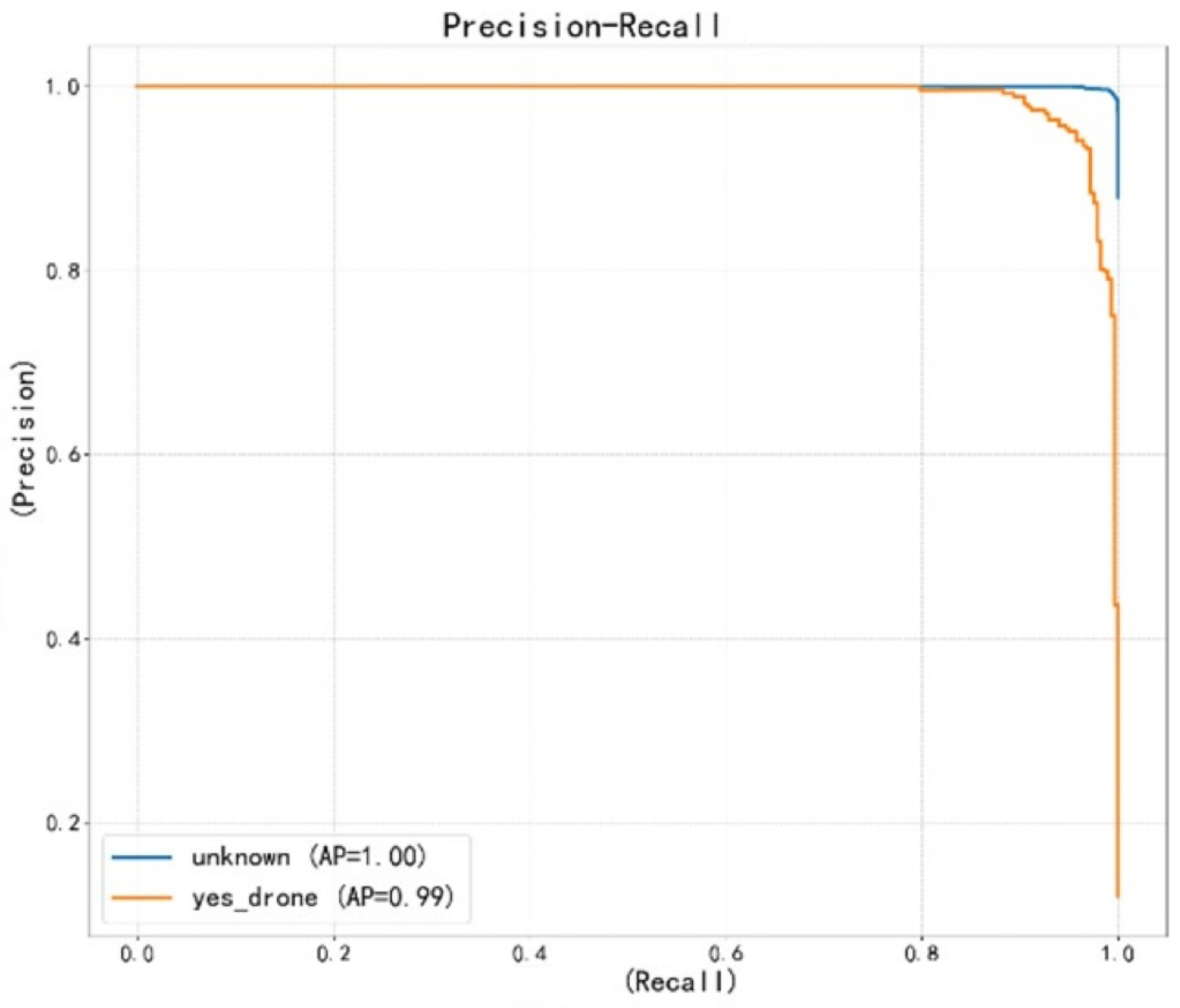

Generate classification reports, confusion matrix, and precision-recall curves. |