Submitted:

17 January 2025

Posted:

17 January 2025


Abstract

Keywords:

1. Introduction

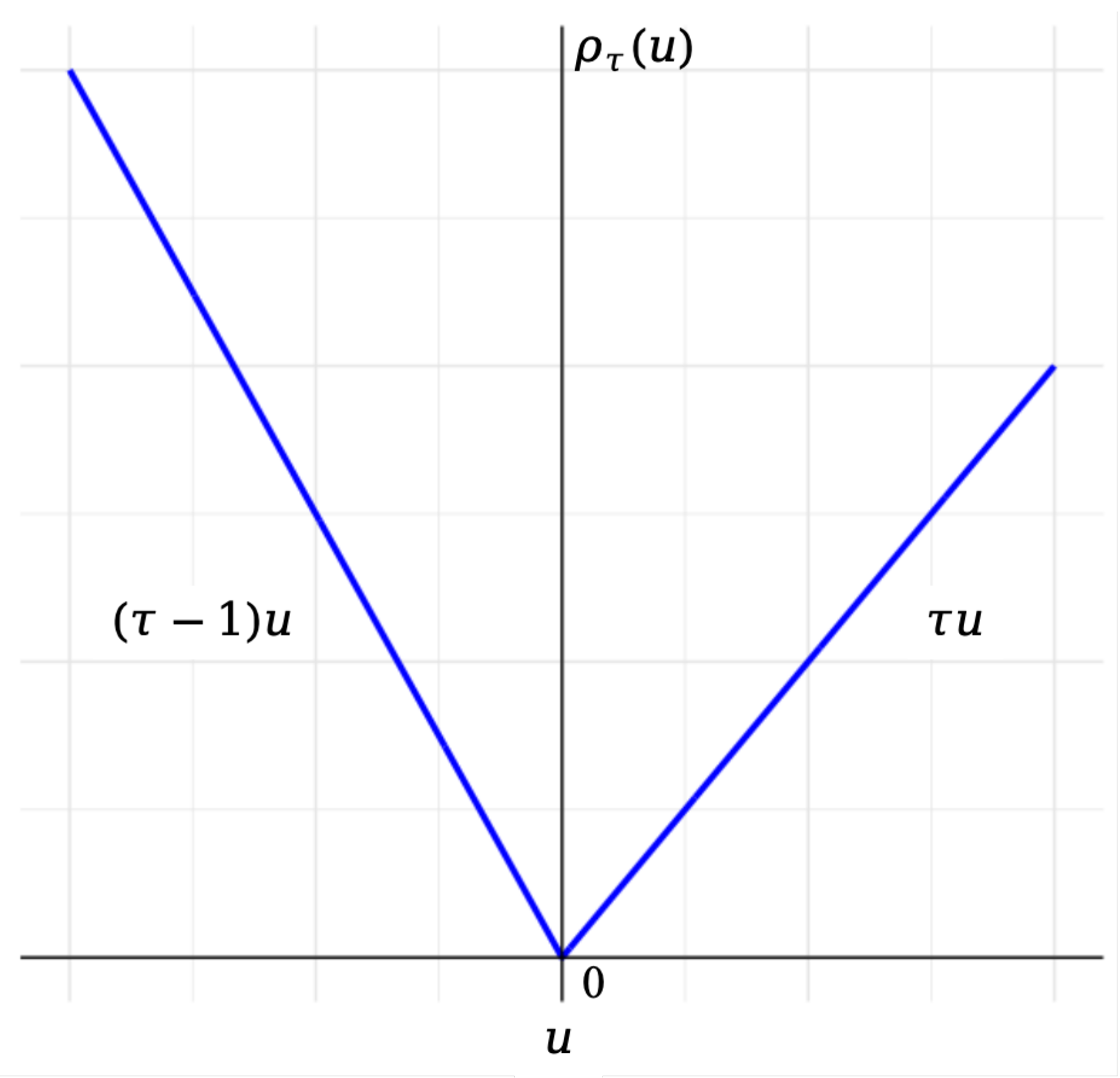

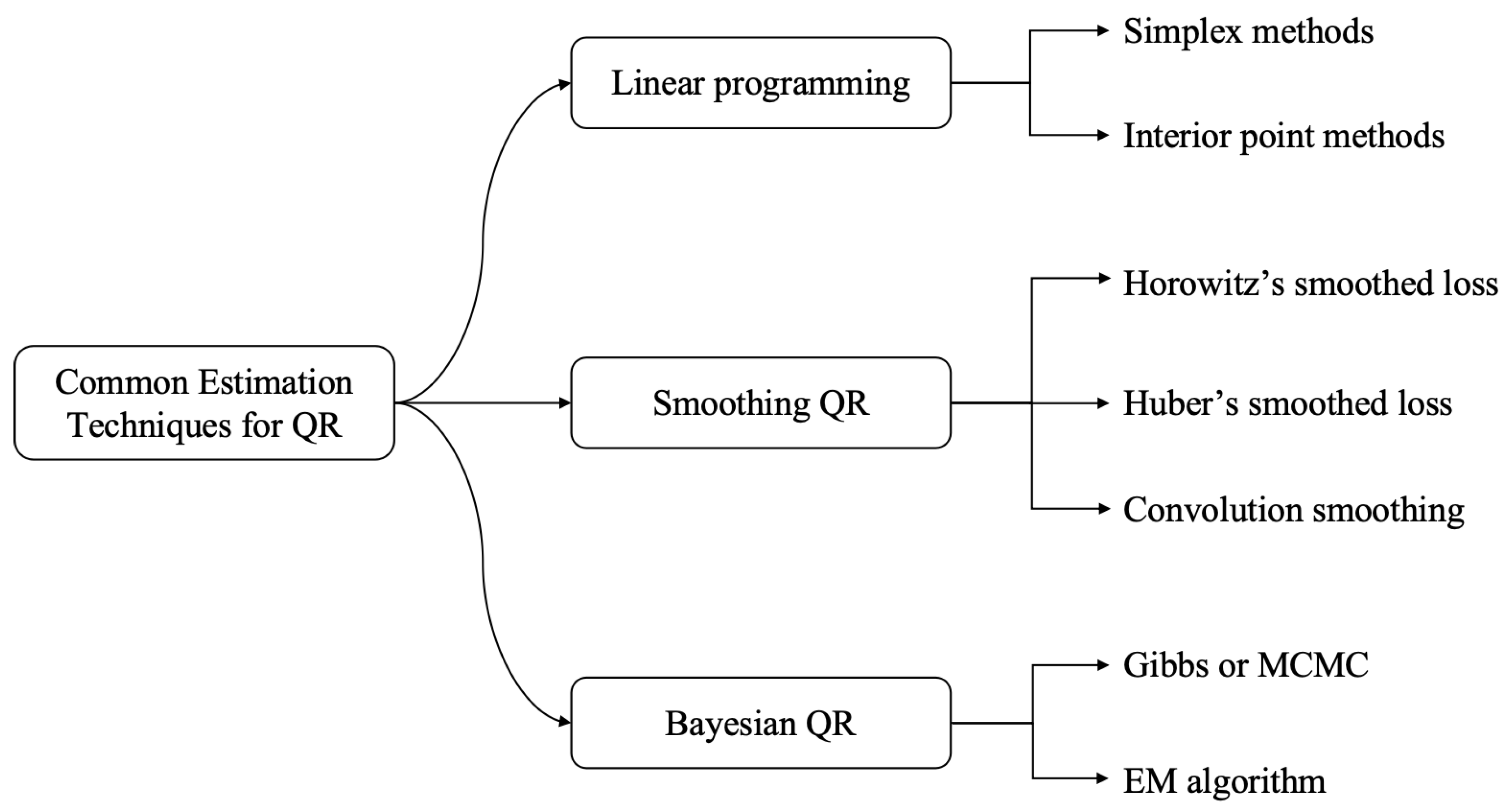

- Overcoming the non-differentiability of the QR check function. We introduce common techniques for smoothing the QR loss function or replacing it with a surrogate.

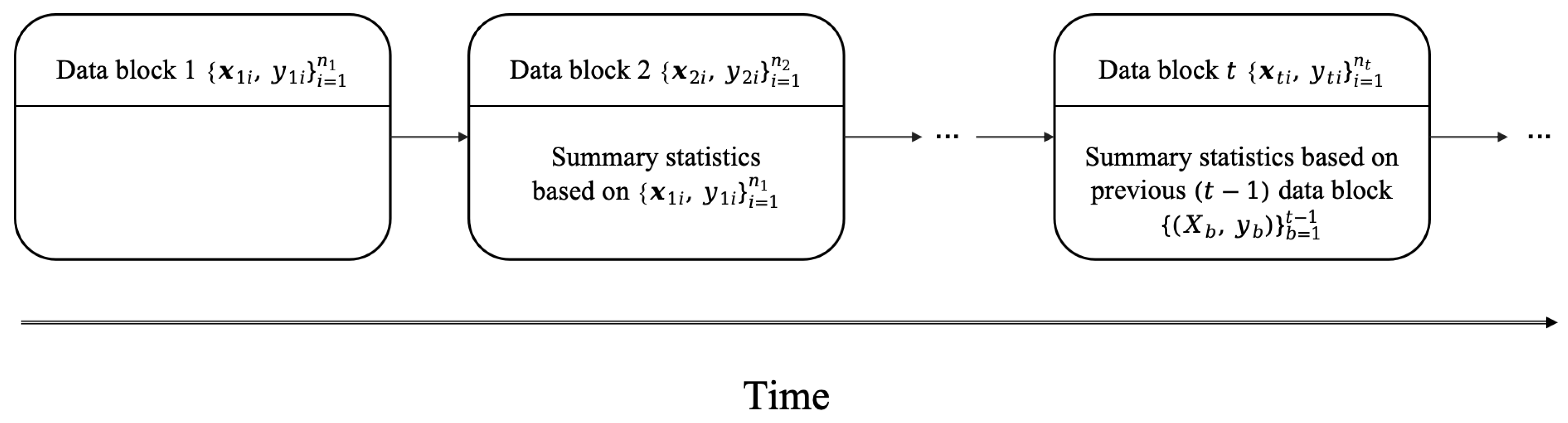

- Parameter estimation in distributed and streaming settings. We systematically review methods for efficiently computing and aggregating QR estimates when large-scale data are stored across multiple machines or on a single machine. We then summarize recent progress on renewable estimation for QR when data arrive sequentially.

- Statistical inference for large-scale QR. We briefly introduce the statistical theory of QR estimators for large-scale data, such as their asymptotic distributions and techniques for deriving empirical variance estimates.

- Applications. We present applications in meteorological forecasting, demand and price forecasting, geographical distribution data, and high-frequency data forecasting.

- Potential research directions. We outline several research directions that could advance this field.
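To make the first point concrete, the following minimal sketch compares the check function with one possible smooth surrogate. It assumes Gaussian-kernel convolution smoothing with a bandwidth `h`; the function names and the choice of kernel are illustrative assumptions, not taken from the paper.

```python
import math


def check_loss(u: float, tau: float) -> float:
    """QR check function rho_tau(u) = u * (tau - 1{u < 0}); it has a kink at u = 0."""
    return u * (tau - (1.0 if u < 0 else 0.0))


def std_normal_cdf(x: float) -> float:
    """Standard normal CDF written with the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def smoothed_check_loss(u: float, tau: float, h: float) -> float:
    """Convolution of rho_tau with a N(0, h^2) kernel, i.e. E[rho_tau(u + h Z)].

    Uses rho_tau(u) = |u| / 2 + (tau - 1/2) * u and the folded-normal mean of |u + h Z|.
    """
    x = u / h
    folded_mean = (math.sqrt(2.0 / math.pi) * math.exp(-0.5 * x * x)
                   + x * (1.0 - 2.0 * std_normal_cdf(-x)))
    return 0.5 * h * folded_mean + (tau - 0.5) * u


def smoothed_check_grad(u: float, tau: float, h: float) -> float:
    """Derivative of the smoothed loss in u; continuous everywhere, unlike the original."""
    return tau - std_normal_cdf(-u / h)
```

As `h` shrinks, the surrogate approaches the check function, while for any `h > 0` it is differentiable at zero, which is what gradient-based and Newton-type algorithms need.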

2. Foundations of Quantile Regression

2.1. Definition and Mathematical Framework of QR

2.2. Common Estimation Techniques

2.3. Comparison with Mean Regression: Key Differences and Applications

3. Advances in QR for Massive Data

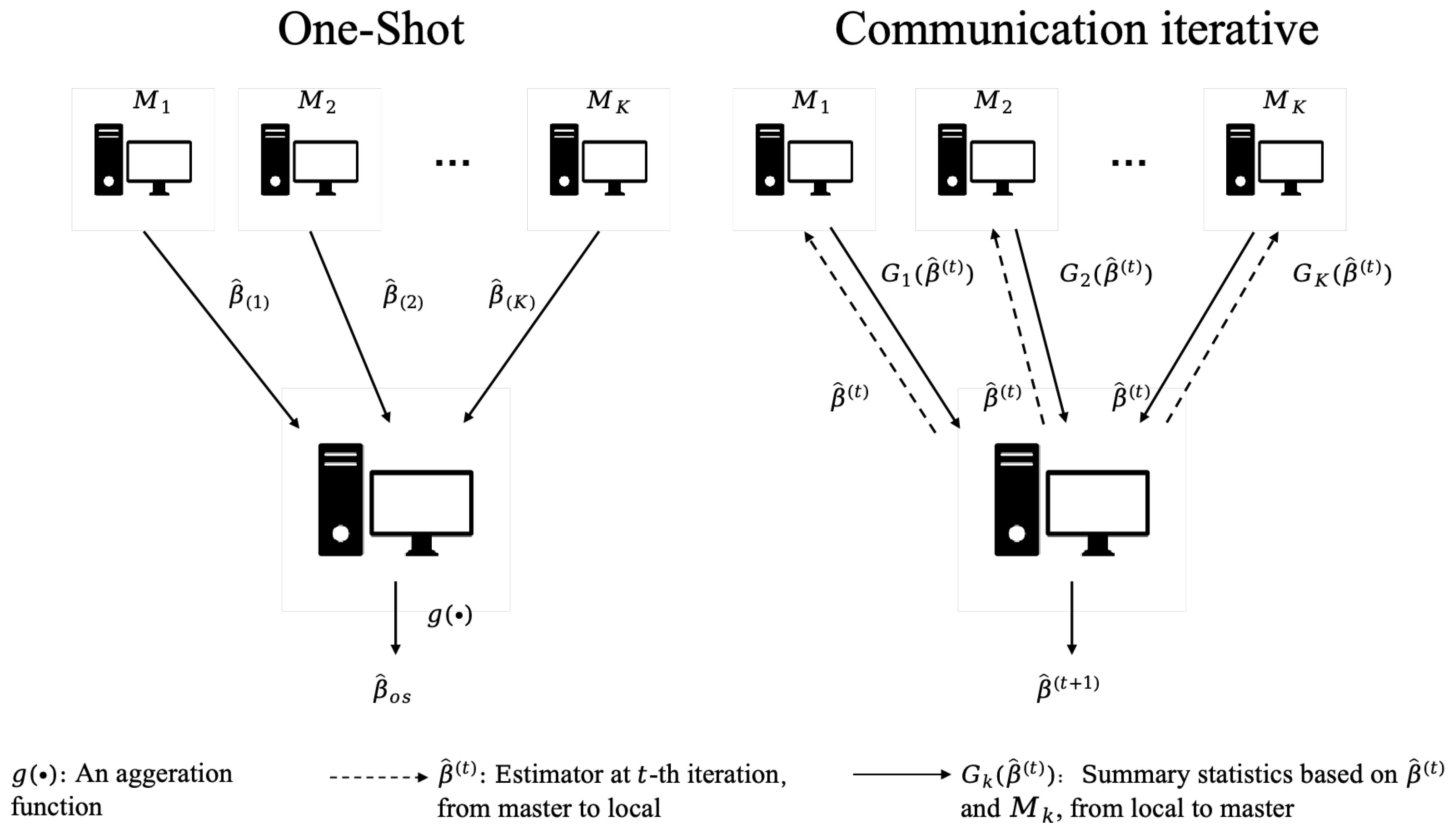

3.1. Distributed Computing

3.1.1. One-Shot Method and Its Variants

3.1.2. Communication Iterative Computation

3.1.3. Distributed High-Dimensional QR

3.1.4. Distributed Statistical Inference for QR

3.2. Subsampling-Based Method for QR

4. Advances in Quantile Regression for Streaming Data

4.1. OS-DC Methods and Their Variants

4.2. SQR Algorithm

4.3. CEE-Taylor-Based Renewable Estimation

4.4. Renewable Estimation for Streaming Quantile Regression Under Heterogeneity Scenario

5. Applications

5.1. Meteorological Forecasting

5.2. Demand and Price Forecasting

5.3. Geographical Distribution Data

5.4. High-Frequency Data Forecasting

6. Concluding Remarks and Future Research

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| QR | Quantile Regression |
| ALD | Asymmetric Laplace Distribution |
| DC | Distributed Computing |
| OS | One-Shot |

References

- Fan, J.; Han, F.; Liu, H. Challenges of big data analysis. National science review 2014, 1, 293–314.

- Worthey, E.A.; Mayer, A.N.; Syverson, G.D.; Helbling, D.; Bonacci, B.B.; Decker, B.; Serpe, J.M.; Dasu, T.; Tschannen, M.R.; Veith, R.L.; et al. Making a definitive diagnosis: successful clinical application of whole exome sequencing in a child with intractable inflammatory bowel disease. Genetics in Medicine 2011, 13, 255–262.

- Chen, R.; Mias, G.I.; Li-Pook-Than, J.; Jiang, L.; Lam, H.Y.; Chen, R.; Miriami, E.; Karczewski, K.J.; Hariharan, M.; Dewey, F.E.; et al. Personal omics profiling reveals dynamic molecular and medical phenotypes. Cell 2012, 148, 1293–1307.

- Aramaki, E.; Maskawa, S.; Morita, M. Twitter catches the flu: detecting influenza epidemics using Twitter. In Proceedings of the 2011 Conference on empirical methods in natural language processing, 2011, pp. 1568–1576.

- Bollen, J.; Mao, H.; Zeng, X. Twitter mood predicts the stock market. Journal of computational science 2011, 2, 1–8.

- Asur, S. Predicting the Future with Social Media. arXiv 2010, arXiv:1003.5699.

- Chen, L.; Zhou, Y. Quantile regression in big data: A divide and conquer based strategy. Computational Statistics & Data Analysis 2020, 144, 106892.

- Chen, Y.; Dong, G.; Han, J.; Wah, B.W.; Wang, J. Multi-Dimensional Regression Analysis of Time-Series Data Streams. In VLDB ’02: Proceedings of the 28th International Conference on Very Large Databases; Morgan Kaufmann: San Francisco, 2002; pp. 323–334.

- Han, J.; Chen, Y.; Dong, G.; Pei, J.; Wah, B.W.; Wang, J.; Cai, Y.D. Stream Cube: An Architecture for Multi-Dimensional Analysis of Data Streams. Distributed and Parallel Databases 2005, 18, 173–197.

- Xi, R.; Lin, N.; Chen, Y. Compression and Aggregation for Logistic Regression Analysis in Data Cubes. IEEE Transactions on Knowledge and Data Engineering 2009, 21, 479–492.

- Wang, K.; Wang, H.; Li, S. Renewable quantile regression for streaming datasets. Knowledge-Based Systems 2022, 235, 107675.

- Fan, Y.; Lin, N. Sequential Quantile Regression for Stream Data by Least Squares. Journal of Econometrics 2024, 105791.

- Chen, X.; Yuan, S. Renewable Quantile Regression with Heterogeneous Streaming Datasets. Journal of Computational and Graphical Statistics 2024, 1–17.

- Gama, J.; Sebastiao, R.; Rodrigues, P.P. On evaluating stream learning algorithms. Machine learning 2013, 90, 317–346.

- Lin, N.; Xi, R. Aggregated estimating equation estimation. Statistics and its Interface 2011, 4, 73–83.

- Schifano, E.D.; Wu, J.; Wang, C.; Yan, J.; Chen, M.H. Online updating of statistical inference in the big data setting. Technometrics 2016, 58, 393–403.

- Chen, X.; Lee, J.D.; Tong, X.T.; Zhang, Y. Statistical inference for model parameters in stochastic gradient descent. The Annals of Statistics 2020, 48, 251–273.

- Zhu, W.; Chen, X.; Wu, W.B. Online Covariance Matrix Estimation in Stochastic Gradient Descent. Journal of the American Statistical Association 2023, 118, 393–404.

- Luo, L.; Song, P.X.K. Renewable estimation and incremental inference in generalized linear models with streaming data sets. Journal of the Royal Statistical Society Series B: Statistical Methodology 2020, 82, 69–97.

- Luo, L.; Song, P.X.K. Renewable Estimation and Incremental Inference in Generalized Linear Models with Streaming Data Sets. Journal of the Royal Statistical Society Series B: Statistical Methodology 2019, 82, 69–97.

- Fan, Y.; Liu, Y.; Zhu, L. Optimal Subsampling for Linear Quantile Regression Models. Canadian Journal of Statistics 2021, 49, 1039–1057.

- Chen, X.; Xie, M.g. A split-and-conquer approach for analysis of extraordinarily large data. Statistica Sinica 2014, 1655–1684.

- Zhang, Y.; Wainwright, M.J.; Duchi, J.C. Communication-efficient algorithms for statistical optimization. Advances in neural information processing systems 2012, 25.

- Koenker, R.; Bassett, G. Regression quantiles. Econometrica 1978, 46, 33–50.

- Koenker, R. Quantile regression; Cambridge University Press, 2005.

- Xu, Z.; Kim, S.; Zhao, Z. Locally stationary quantile regression for inflation and interest rates. Journal of Business & Economic Statistics 2022, 40, 838–851.

- Liu, Y.; Xia, L.; Hu, F. Testing heterogeneous treatment effect with quantile regression under covariate-adaptive randomization. Journal of Econometrics 2024, 105808.

- Ishihara, T. Panel data quantile regression for treatment effect models. Journal of Business & Economic Statistics 2023, 41, 720–736.

- Yang, X.; Narisetty, N.N.; He, X. A New Approach to Censored Quantile Regression Estimation. Journal of Computational and Graphical Statistics 2018, 27, 417–425.

- Narisetty, N.; Koenker, R. Censored quantile regression survival models with a cure proportion. Journal of Econometrics 2022, 226, 192–203.

- Wu, C.; Ling, N.; Vieu, P.; Liang, W. Partially functional linear quantile regression model and variable selection with censoring indicators. Journal of Multivariate Analysis 2023, 197, 105189.

- Xu, X.; Wang, W.; Shin, Y.; Zheng, C. Dynamic network quantile regression model. Journal of Business & Economic Statistics 2024, 42, 407–421.

- Zhong, Q.; Wang, J.L. Neural networks for partially linear quantile regression. Journal of Business & Economic Statistics 2024, 42, 603–614.

- Stengel, R.F. Optimal control and estimation; Courier Corporation, 1994.

- Wu, J.; Chen, M.H.; Schifano, E.D.; Yan, J. Online updating of survival analysis. Journal of Computational and Graphical Statistics 2021, 30, 1209–1223.

- Davino, C.; Furno, M.; Vistocco, D. Quantile regression: theory and applications; Vol. 988, John Wiley & Sons, 2013.

- Lange, K. Numerical analysis for statisticians; Vol. 1, Springer: New York, 2000.

- Koenker, R.; d’Orey, V. Remark AS R92: A remark on algorithm AS 229: Computing dual regression quantiles and regression rank scores. Journal of the Royal Statistical Society. Series C (Applied Statistics) 1994, 43, 410–414.

- Karmarkar, N. A new polynomial-time algorithm for linear programming. In Proceedings of the sixteenth annual ACM symposium on Theory of computing, 1984, pp. 302–311.

- Horowitz, J.L. Bootstrap methods for median regression models. Econometrica 1998, 66, 1327–1351.

- Chen, X.; Liu, W.; Zhang, Y. Quantile regression under memory constraint. The Annals of Statistics 2019, 47.

- Chen, C. A finite smoothing algorithm for quantile regression. Journal of Computational and Graphical Statistics 2007, 16, 136–164.

- Fernandes, M.; Guerre, E.; Horta, E. Smoothing Quantile Regressions. Journal of Business & Economic Statistics 2021, 39, 338–357.

- He, X.; Pan, X.; Tan, K.M.; Zhou, W.X. Smoothed Quantile Regression with Large-Scale Inference. Journal of Econometrics 2023, 232, 367–388.

- Tan, K.M.; Battey, H.; Zhou, W. Communication-constrained distributed quantile regression with optimal statistical guarantees. Journal of machine learning research 2022, 23, 1–61.

- Tan, K.M.; Wang, L.; Zhou, W. High-dimensional quantile regression: Convolution smoothing and concave regularization. Journal of the Royal Statistical Society Series B: Statistical Methodology 2022, 84, 205–233.

- Yu, K.; Moyeed, R.A. Bayesian quantile regression. Statistics & Probability Letters 2001, 54, 437–447.

- Kozumi, H.; Kobayashi, G. Gibbs sampling methods for Bayesian quantile regression. Journal of statistical computation and simulation 2011, 81, 1565–1578.

- Staffa, S.J.; Kohane, D.S.; Zurakowski, D. Quantile regression and its applications: a primer for anesthesiologists. Anesthesia & Analgesia 2019, 128, 820–830.

- Baur, D.G.; Dimpfl, T.; Jung, R.C. Stock return autocorrelations revisited: A quantile regression approach. Journal of Empirical Finance 2012, 19, 254–265.

- Baur, D.G. The structure and degree of dependence: A quantile regression approach. Journal of Banking & Finance 2013, 37, 786–798.

- Waldmann, E. Quantile regression: a short story on how and why. Statistical Modelling 2018, 18, 203–218.

- Cole, T.J. Fitting smoothed centile curves to reference data. Journal of the Royal Statistical Society Series A: Statistics in Society 1988, 151, 385–406.

- Dunham, J.B.; Cade, B.S.; Terrell, J.W. Influences of spatial and temporal variation on fish-habitat relationships defined by regression quantiles. Transactions of the American Fisheries Society 2002, 131, 86–98.

- Cade, B.S.; Noon, B.R. A gentle introduction to quantile regression for ecologists. Frontiers in Ecology and the Environment 2003, 1, 412–420.

- Yang, S. Censored median regression using weighted empirical survival and hazard functions. Journal of the American Statistical Association 1999, 94, 137–145.

- Cade, B.S. Quantile regression models of animal habitat relationships; Colorado State University, 2003.

- Li, R.; Lin, D.K.; Li, B. Statistical inference in massive data sets. Applied Stochastic Models in Business and Industry 2013, 29, 399–409.

- Jordan, M.I. On statistics, computation and scalability. Bernoulli 2013, 19, 1378–1390.

- Zhang, Y.; Duchi, J.; Wainwright, M. Divide and conquer kernel ridge regression. In Proceedings of the Conference on learning theory. PMLR; 2013; pp. 592–617.

- Mackey, L.; Jordan, M.; Talwalkar, A. Divide-and-conquer matrix factorization. Advances in neural information processing systems 2011, 24.

- Zhang, Y.; Duchi, J.; Wainwright, M. Divide and conquer kernel ridge regression: A distributed algorithm with minimax optimal rates. The Journal of Machine Learning Research 2015, 16, 3299–3340.

- Xu, Q.; Cai, C.; Jiang, C.; Sun, F.; Huang, X. Block average quantile regression for massive dataset. Statistical Papers 2020, 61, 141–165.

- Lee, J.D.; Sun, Y.; Liu, Q.; Taylor, J.E. Communication-efficient sparse regression: a one-shot approach. arXiv 2015, arXiv:1503.04337.

- Jordan, M.I.; Lee, J.D.; Yang, Y. Communication-efficient distributed statistical inference. Journal of the American Statistical Association 2019, 114, 668–681.

- Wang, J.; Kolar, M.; Srebro, N.; Zhang, T. Efficient distributed learning with sparsity. In Proceedings of the International conference on machine learning. PMLR; 2017; pp. 3636–3645.

- Volgushev, S.; Chao, S.K.; Cheng, G. Distributed Inference for Quantile Regression Processes. The Annals of Statistics 2019, 47, 1634–1662.

- Pan, R.; Ren, T.; Guo, B.; Li, F.; Li, G.; Wang, H. A note on distributed quantile regression by pilot sampling and one-step updating. Journal of Business & Economic Statistics 2022, 40, 1691–1700.

- Hu, A.; Jiao, Y.; Liu, Y.; Shi, Y.; Wu, Y. Distributed Quantile Regression for Massive Heterogeneous Data. Neurocomputing 2021, 448, 249–262.

- Jiang, R.; Yu, K. Smoothing Quantile Regression for a Distributed System. Neurocomputing 2021, 466, 311–326.

- Zhao, W.; Zhang, F.; Lian, H. Debiasing and distributed estimation for high-dimensional quantile regression. IEEE transactions on neural networks and learning systems 2019, 31, 2569–2577.

- Wang, L.; Lian, H. Communication-efficient estimation of high-dimensional quantile regression. Analysis and Applications 2020, 18, 1057–1075.

- Chen, X.; Liu, W.; Mao, X.; Yang, Z. Distributed high-dimensional regression under a quantile loss function. Journal of Machine Learning Research 2020, 21, 1–43.

- Wang, C.; Shen, Z. Distributed High-Dimensional Quantile Regression: Estimation Efficiency and Support Recovery. arXiv 2024, arXiv:2405.07552.

- Van de Geer, S.; Bühlmann, P.; Ritov, Y.; Dezeure, R. On asymptotically optimal confidence regions and tests for high-dimensional models. The Annals of Statistics 2014, 42, 1166–1202.

- Tibshirani, R. Regression shrinkage and selection via the lasso. Journal of the Royal Statistical Society Series B: Statistical Methodology 1996, 58, 267–288.

- Fan, J.; Li, R. Variable selection via nonconcave penalized likelihood and its oracle properties. Journal of the American statistical Association 2001, 96, 1348–1360.

- Xu, G.; Shang, Z.; Cheng, G. Optimal tuning for divide-and-conquer kernel ridge regression with massive data. In Proceedings of the International Conference on Machine Learning. PMLR; 2018; pp. 5483–5491.

- Lee, S.; Liao, Y.; Seo, M.H.; Shin, Y. Fast inference for quantile regression with tens of millions of observations. Journal of Econometrics 2024, 105673.

- Drineas, P.; Mahoney, M.W.; Muthukrishnan, S. Sampling algorithms for l2 regression and applications. In Proceedings of the seventeenth annual ACM-SIAM symposium on Discrete algorithm, 2006, pp. 1127–1136.

- Ma, P.; Sun, X. Leveraging for big data regression. Wiley Interdisciplinary Reviews: Computational Statistics 2015, 7, 70–76.

- Wang, H.; Yang, M.; Stufken, J. Information-based optimal subdata selection for big data linear regression. Journal of the American Statistical Association 2019, 114, 393–405.

- Fithian, W.; Hastie, T. Local case-control sampling: Efficient subsampling in imbalanced data sets. Annals of statistics 2014, 42, 1693–1724.

- Wang, H.; Zhu, R.; Ma, P. Optimal subsampling for large sample logistic regression. Journal of the American Statistical Association 2018, 113, 829–844.

- Ai, M.; Yu, J.; Zhang, H.; Wang, H. Optimal subsampling algorithms for big data generalized linear models. arXiv 2018, arXiv:1806.06761.

- Ai, M.; Yu, J.; Zhang, H.; Wang, H. Optimal subsampling algorithms for big data regressions. Statistica Sinica 2021, 31, 749–772.

- Wang, H.; Ma, Y. Optimal subsampling for quantile regression in big data. Biometrika 2021, 108, 99–112.

- Chao, Y.; Ma, X.; Zhu, B. Distributed Optimal Subsampling for Quantile Regression with Massive Data. Journal of Statistical Planning and Inference 2024, 233, 106186.

- Shao, L.; Song, S.; Zhou, Y. Optimal Subsampling for Large-sample Quantile Regression with Massive Data. Canadian Journal of Statistics 2023, 51, 420–443.

- Tian, Z.; Xie, X.; Shi, J. Bayesian quantile regression for streaming data. AIMS Mathematics 2024, 9, 26114–26138.

- Jiang, R.; Yu, K. Renewable Quantile Regression for Streaming Data Sets. Neurocomputing 2022, 508, 208–224.

- Sun, X.; Wang, H.; Cai, C.; Yao, M.; Wang, K. Online Renewable Smooth Quantile Regression. Computational Statistics & Data Analysis 2023, 185, 107781.

- Xie, J.; Yan, X.; Jiang, B.; Kong, L. Statistical Inference for Smoothed Quantile Regression with Streaming Data. Journal of Econometrics 2024, 105924.

- Peng, Y.; Wang, L. Two-stage online debiased lasso estimation and inference for high-dimensional quantile regression with streaming data. Journal of Systems Science and Complexity 2024, 37, 1251–1270.

- Chen, Y.; Fang, S.; Lin, L. Renewable composite quantile method and algorithm for nonparametric models with streaming data. Statistics and Computing 2024, 34, 34–43.

- Song, S.; Lin, Y.; Zhou, Y. Linear expectile regression under massive data. Fundamental Research 2021, 1, 574–585.

- Tao, C.; Wang, S. Online updating Huber robust regression for big data streams. Statistics 2024, 58, 1197–1223.

- Jiang, R.; Liang, L.; Yu, K. Renewable Huber estimation method for streaming datasets. Electronic Journal of Statistics 2024, 18, 674–705.

- Jiang, R.; Yu, K. Unconditional Quantile Regression for Streaming Datasets. Journal of Business & Economic Statistics 2024, 42, 1143–1154.

- Zeng, D.; Lin, D. Efficient resampling methods for nonsmooth estimating functions. Biostatistics 2008, 9, 355–363.

- van Wieringen, W.N.; Binder, H. Sequential learning of regression models by penalized estimation. Journal of Computational and Graphical Statistics 2022, 31, 877–886.

- Zou, H.; Yuan, M. Composite quantile regression and the oracle model selection theory. The Annals of Statistics 2008, 36, 1108–1126.

- Luo, L.; Song, P.X.K. Multivariate online regression analysis with heterogeneous streaming data. Canadian Journal of Statistics 2023, 51, 111–133.

- Wei, J.; Yang, J.; Cheng, X.; Ding, J.; Li, S. Adaptive regression analysis of heterogeneous data streams via models with dynamic effects. Mathematics 2023, 11, 4899.

- Ding, J.; Li, J.; Wang, X. Renewable risk assessment of heterogeneous streaming time-to-event cohorts. Statistics in Medicine 2024, 43, 3761–3777.

- Huang, L.; Wei, X.; Zhu, P.; Gao, Y.; Chen, M.; Kang, B. Federated Quantile Regression over Networks. In Proceedings of the 2020 International Wireless Communications and Mobile Computing (IWCMC). IEEE; 2020; pp. 57–62.

- Elgabli, A.; Park, J.; Bedi, A.S.; Bennis, M.; Aggarwal, V. GADMM: Fast and communication efficient framework for distributed machine learning. Journal of Machine Learning Research 2020, 21, 1–39.

- Wang, X.; Dunson, D.B.; Leng, C. DECOrrelated feature space partitioning for distributed sparse regression. Advances in neural information processing systems 2016, 29.

- Jin, J.; Yan, J.; Aseltine, R.H.; Chen, K. Transfer learning with large-scale quantile regression. Technometrics 2024, 1–13.

- Wang, C.; Li, T.; Zhang, X.; Feng, X.; He, X. Communication-Efficient Nonparametric Quantile Regression via Random Features. Journal of Computational and Graphical Statistics 2024, 33, 1175–1184.

- Sit, T.; Xing, Y. Distributed Censored Quantile Regression. Journal of Computational and Graphical Statistics 2023, 1–13.

| Large-scale data | Data storage | Methodology | Brief description |
|---|---|---|---|
| Massive data | Distributed storage | One-shot | Compute an estimator on each local machine from the locally stored data, in a fully parallel manner. Then transmit the local estimators to a central machine, where they are combined to form the final estimator. |
| Massive data | Distributed storage | Iterative | Compute some statistics on each local machine from the local data. Transmit them to the central machine to update the global estimator, and repeat the process until convergence. |
| Massive data | Stored on a single machine but too large to be fully loaded into the computer’s memory at once | Subsampling | Repeatedly generate small subsamples for parameter estimation. |
| Streaming data | Summary statistics based on historical data and the current data | Renewable | The estimator is renewed using the current data and summary statistics of the historical data, without access to the historical data themselves. |
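
The one-shot row above can be illustrated with a minimal sketch. It assumes the simplest possible QR model, an intercept-only model in which the estimand is the τ-th quantile itself, and equal-sized blocks combined by a plain average; the function names and toy data are hypothetical and chosen only for illustration.

```python
import math


def local_quantile(ys, tau):
    """Local QR estimate for an intercept-only model.

    The order statistic at position ceil(n * tau) minimizes the empirical check loss.
    """
    s = sorted(ys)
    idx = max(0, math.ceil(len(s) * tau) - 1)
    return s[idx]


def one_shot_quantile(blocks, tau):
    """One-shot estimator: each block is processed independently, then averaged.

    In a real deployment each call to local_quantile would run on a different machine
    and only the resulting scalar would be sent to the central machine.
    """
    local_estimates = [local_quantile(block, tau) for block in blocks]
    return sum(local_estimates) / len(local_estimates)


# Toy data: the integers 1..100 spread evenly over four "machines".
data = list(range(1, 101))
blocks = [data[k::4] for k in range(4)]
estimate = one_shot_quantile(blocks, 0.5)
```

For this toy input the four local medians are 49, 50, 51 and 52, so the combined estimate is 50.5, close to the full-sample value of 50. The equal-weight average is only appropriate when blocks have equal size and similar distributions, which matches the heterogeneity caveat usually attached to one-shot methods.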

| Methodology | Applicable scenario | Merits and drawbacks |
|---|---|---|
| One-shot | · Data distributed across multiple nodes · Low computation cost required · Data uniformly distributed, with minimal heterogeneity between batches | · Merits: high communication efficiency, simple to implement · Drawbacks: sensitive to data heterogeneity, accuracy may be limited |
| Iterative | · Data distributed across multiple nodes · High estimation accuracy required · Strong heterogeneity between batches | · Merits: high accuracy, strong adaptability, theoretical guarantees · Drawbacks: high communication cost, complex implementation |
| Subsampling | · Massive dataset in which a sample is sufficient to achieve a good estimate · Low computation cost required · Uniform data distribution or representative samples available | · Merits: high computational efficiency, simple to implement · Drawbacks: sensitive to data distribution, high randomness |
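
The trade-off between communication cost and accuracy in the iterative row can be sketched as follows. This is a minimal illustration under stated assumptions, not the procedure of any cited paper: an intercept-only model, a Gaussian-kernel smoothed check loss with bandwidth `H`, and a Newton-type update in which every machine sends two scalars (a score and a curvature) per round. All names and constants are hypothetical.

```python
import math
import random


def norm_cdf(x):
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def norm_pdf(x):
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def local_statistics(ys, theta, tau, h):
    """Score and curvature of the smoothed loss on one machine at the current theta."""
    score = sum(tau - norm_cdf((theta - y) / h) for y in ys)
    curvature = sum(norm_pdf((theta - y) / h) / h for y in ys)
    return score, curvature


def iterative_quantile(blocks, tau, h, theta0, max_rounds=50, tol=1e-10):
    """Repeat: machines send (score, curvature); the centre takes one Newton step."""
    theta = theta0
    rounds = 0
    for rounds in range(1, max_rounds + 1):
        stats = [local_statistics(b, theta, tau, h) for b in blocks]  # one communication round
        total_score = sum(s for s, _ in stats)
        total_curvature = sum(c for _, c in stats)
        step = total_score / total_curvature
        theta += step
        if abs(step) < tol:  # stop once the update is negligible
            break
    return theta, rounds


TAU, H = 0.75, 0.5
rng = random.Random(0)
data = [rng.gauss(0.0, 1.0) for _ in range(2000)]
blocks = [data[k::4] for k in range(4)]

theta_dist, rounds_dist = iterative_quantile(blocks, TAU, H, theta0=0.0)
theta_pool, _ = iterative_quantile([data], TAU, H, theta0=0.0)
```

Because the aggregated score and curvature equal their pooled-data counterparts, `theta_dist` agrees with `theta_pool` up to floating-point error; the price is one round of communication per Newton step, whereas a one-shot method communicates only once.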

| Methodology | Form of solution | Brief description |
|---|---|---|
| OS-DC and its variants [7,11,23,41] | Closed-form | Stream data are viewed as data blocks partitioned over the time domain. Only the estimate or summary statistics of each block are retained, and the renewable estimate is obtained by aggregating these statistics across blocks. |
| SQR algorithm [12,90] | Iterative | Motivated by Bayesian QR: sequential updates occur naturally under the Bayesian setup, i.e., the posterior distribution obtained from the past analysis is used as the prior information for later analysis. |
| CEE-Taylor-based [91,92,93,94,95] | Iterative | The loss function is reformulated using a Taylor expansion and the Newton algorithm is applied for estimation, incorporating various smoothing techniques. |
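
The Taylor-expansion-plus-Newton idea in the last row can be sketched for streaming batches. The code below is a simplified illustration, not the algorithm of any cited reference: it assumes an intercept-only model, a Gaussian-kernel smoothed check loss with bandwidth `h`, and a single Newton-type step per batch. The only quantities carried forward are the current estimate and the accumulated curvature; past batches are never revisited. All names and constants are hypothetical.

```python
import math
import random


def norm_cdf(x):
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def norm_pdf(x):
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def batch_score(ys, theta, tau, h):
    """Negative gradient of the smoothed loss on the current batch."""
    return sum(tau - norm_cdf((theta - y) / h) for y in ys)


def batch_curvature(ys, theta, h):
    """Second derivative of the smoothed loss on the current batch."""
    return sum(norm_pdf((theta - y) / h) / h for y in ys)


def renewable_quantile(batches, tau, h):
    """Renew the estimate batch by batch using only (theta, cumulative curvature)."""
    theta = None
    cum_curvature = 0.0
    for ys in batches:
        if theta is None:
            # First batch: start from the ordinary sample quantile.
            s = sorted(ys)
            theta = s[max(0, math.ceil(len(s) * tau) - 1)]
            cum_curvature = batch_curvature(ys, theta, h)
        else:
            # Later batches: history enters only through cum_curvature and theta.
            score = batch_score(ys, theta, tau, h)
            cum_curvature += batch_curvature(ys, theta, h)
            theta += score / cum_curvature
    return theta


rng = random.Random(1)
# A generator mimics a stream: each batch is seen once and then discarded.
stream = ([rng.gauss(0.0, 1.0) for _ in range(500)] for _ in range(20))
theta_stream = renewable_quantile(stream, tau=0.9, h=0.1)
```

With standard normal data the target is the 0.9 quantile, roughly 1.28, and the streamed estimate should land near it. Memory use stays constant in the number of batches, which is the defining property of renewable estimation.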

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).